Abstract

Platforms govern users, and the way that platforms govern matters. In this article, I propose that the legitimacy of governance of users by platforms should be evaluated against the values of the rule of law. In particular, I suggest that we should care deeply about the extent to which private governance is consensual, transparent, equally applied and relatively stable, and fairly enforced. These are the core values of good governance, but are alien to the systems of contract law that currently underpin relationships between platforms and their users. Through an analysis of the contractual Terms of Service of 14 major social media platforms, I show how these values can be applied to evaluate governance, and how poorly platforms perform on these criteria. I argue that the values of the rule of law provide a language to name and work through contested concerns about the relationship between platforms and their users. This is an increasingly urgent task. Finding a way to apply these values to articulate a set of desirable restraints on the exercise of power in the digital age is the key challenge and opportunity of the project of digital constitutionalism.

Introduction

In 2009, Facebook suffered a backlash for proposing to change its Terms of Service without adequately consulting its community. Mark Zuckerberg (2009) pledged that from then on, Facebook users would have direct input on the site’s Terms:

“Our terms aren’t just a document that protect our rights; it’s the governing document for how the service is used by everyone across the world. Given its importance, we need to make sure the terms reflect the principles and values of the people using the service. Since this will be the governing document that we’ll all live by, Facebook users will have a lot of input in crafting these terms.”

In May 2009, Facebook renamed its “Terms of Use” to a “Statement of Rights and Responsibilities” that included a mechanism for Facebook’s users to vote on proposed changes to the Terms of Service. The vote would be binding on Facebook if more than 30% of its active userbase participated. This turned out to be an unrealistically high threshold. When Facebook later introduced controversial changes to its privacy policy, the changes were opposed by 88% of the 668,872 people who voted, a group that represented less than 1% of the more than 1 billion registered users at the time (Schrage, 2012) and fell far short of the 30% required. By the end of 2012, Facebook had rolled back its commitment to binding votes. Sometime after October 2014, an edit was made to Facebook’s blog, and Zuckerberg’s earlier comments 1 were disavowed, attributed instead to a former Facebook employee who left the firm in 2010. 2

A great deal of work remains to be done to ensure that online governance is legitimate and fair. Facebook’s limited experiment with voting reflected an early unease over the governance of our shared online social spaces. Unfortunately, Facebook treated this process as a failure and never again seriously experimented with better mechanisms for seeking user input and consensus on its governance processes. In the time since then, concerns over governance have multiplied and intensified. Some of the most visible controversies revolve around privacy and the extent to which users consent to the sharing of detailed data about their lives and activities with both advertisers and nation states. Others focus on the visibility of content, as platforms seek to rank and order information on the basis of individual relevance, on criteria that are generally not well explained, and that sometimes appear to be deeply biased. And in recent times, calls have markedly increased for platforms to be more accountable for the way that they moderate speech, both from groups seeking enhanced responsibility of platforms to tackle abuse and from groups seeking strong restrictions on the extent to which platforms censor speech. As software continues to “eat the world” (Andreessen, 2011), these issues extend beyond communications platforms to massive e-commerce marketplaces and the rapidly emerging peer economy platforms that use digital networks to coordinate the provision of goods and services across a broad range of social life. The role of digital platforms as “architects of public spaces” (Gillespie, 2017, p. 25) suggests that they ought to be more accountable to the public for the ways they create and enforce the rules that govern our interactions.

In this article, I argue that the governance of platforms raises fundamental constitutional concerns, in the sense of legal and social responsibilities about how these social spaces are constituted and how the exercise of power ought to be constrained. In the first section, I show how these concerns are emerging in a disparate set of controversies across a range of different platforms involving diverse groups of stakeholders, including users, business, governments, and civil society. I analyze these concerns from the perspective of the common law systems (particularly that of the United States) by which the major social media platforms are most directly regulated. In the section titled “The Principles of the Rule of Law,” I propose a framework for evaluating the legitimacy of governance of platforms based on the values of the rule of law. The rule of law is a well-established concept in western liberal theory that anchors the legitimacy of governance in legality. The rule of law requires that decisions of those who have power over us are made according to law, defined in opposition to the arbitrary or capricious exercise of human discretion. The values of the rule of law (consent, predictability, and procedural fairness) are core liberal values of good governance. While these have historically been limited to the public sphere, I argue that these values provide a useful guide to understanding the role that private online platforms play in governing social life. By examining the contractual Terms of Service of 14 major platforms, I show how a rule of law framework provides the analytical tools and the language to conceptualize what is at stake in the governance of platforms. I argue that these constitutional values help to make explicit the core concern of online governance: that control over behavior is exercised in a way that is not accountable to the people who are affected.

The rule of law framework provides a lens through which to evaluate the legitimacy of online governance and therefore to begin to articulate what limits societies should impose on the autonomy of platforms. For the governance of platforms to be legitimate according to rule of law values, we should expect certain basic procedural safeguards. First, decisions must be made according to a set of rules, and not in a way that is arbitrary or capricious. Second, these rules must be clear, well-understood, and relatively stable, and they must be applied equally and consistently. Third, there must be adequate due process safeguards, including an explanation of why a particular decision was made and some form of an appeals process that allows for the independent review and fair resolution of disputes. These are the fundamental minimum procedural standards for a system of governance to be legitimate, and platforms currently perform very poorly on these measures. I argue that the extent of influence that major platforms and other digital intermediaries have over social life implies that we should seek to hold them to account against these values. This is not to suggest the necessity of any particular mechanism of ensuring procedural legitimacy; we should not expect platforms to adopt the heavy standards of a constitutional democracy, for example. Holding platforms to account against these values instead suggests the need for an ongoing process of monitoring, justification, and improvement for the systems that platforms implement to regulate the behavior of their users. I conclude by arguing that the linked concepts of rights of users and responsibilities of platforms provide a useful way of making explicit concerns over the constitution of our shared online social spaces. The values of the rule of law provide the language that is needed to express these concerns and progress the project of “digital constitutionalism” that seeks to articulate and realize appropriate standards of legitimacy for governance in the digital age.

The Governance of Platforms

The ways in which platforms are governed matter. Platforms mediate the way people communicate, and the decisions they make have a real impact on public culture and the social and political lives of their users (DeNardis & Hackl, 2015; Gillespie, 2015). Taking a definition of governance that includes all “organized efforts to manage the course of events in a social system” (Burris, Kempa, & Shearing, 2008, p. 3), it is clear that private actors often play a very significant role in regulating social behavior. A growing body of literature now seeks to reconceptualize the role of the state in a “decentralized” (Black, 2001), “pluralized” (Parker, 2008), or networked (Burris, Drahos, & Shearing, 2005; A. Crawford, 2006; Shearing & Wood, 2003) regulatory environment. In the context of digital media platforms, widening the governance lens beyond governments requires “[accounting] for a diverse, contested environment of agents with differing levels of power and visibility: users, algorithms, platforms, industries and governments” (K. Crawford & Lumby, 2013, p. 9).

Facebook’s experiment with democratic ideals neatly illustrates the disconnect between the social values at stake and the hard legal realities. At law, Terms of Service are contractual documents that set up a simple consumer transaction: in exchange for access to the platform, users agree to be bound by the terms and conditions set out (Jankowich, 2006). The legal relationship of providers to users is one of firm to consumer, not sovereign to citizen (Balkin, 2004; Grimmelmann, 2009). In legal terms, it makes little sense to talk of “rights” in these consumer transactions unless they are explicitly bargained for (Elkin-Koren, 1997; Radin, 2004). Zuckerberg’s proclamation recognizes a truth that the law does not: contractual Terms of Service play an important constitutional role in the governance of everyday life. They are constitutional documents in that they are integral to the way our shared social spaces are constituted and governed.

Terms of Service documents allocate a great deal of power to the operators. Particularly for large, corporate platforms, these Terms of Service are generally written in a way that is designed to safeguard the commercial interests of platform providers. Earlier studies have shown how ISP contracts explicitly forbid constitutionally protected speech and reject constitutional standards of due process for enforcement of the rules (Braman & Roberts, 2003). In the United States, the language of constitutional rights has almost no application in the “private” sphere; constitutional law applies primarily to the “public” actions of state actors and organizations in which the state is directly involved (Berman, 2000). This means that constitutional rights—freedom of speech and association, requirements of due process, rights to participate in the democratic process—where they exist, all apply only against the state, not private actors. While some scholars have suggested that constitutional rules may apply to platforms that can be thought of as quasi-public fora (Balkin, 2004; Netanel, 2000; Nunziato, 2005), the law has not yet developed in this way.

The result is that users have very little legal redress for complaints about how platforms are governed. Users are thought of as consumers who have voluntarily accepted the terms of participation in private networks. Having accepted and adopted these terms, users are legally bound by them. The legal answer to concerns users have about the governance of platforms is, largely: if you don’t like it, leave.

Platforms also work hard to avoid any perception that they are in any sense responsible to third parties for what their users do on their networks. They do this primarily by limiting the extent to which they are seen to be governing their users. By presenting themselves as neutral intermediaries, mere carriers of content and facilitators of conversations, platforms seek to avoid the implication that they may be responsible for how their users behave or how their systems are designed and deployed (Gillespie, 2010). At the same time, platforms have strong incentives to shape the way their users act and interact in order to satisfy the varied and often conflicting demands from and within different user communities, civil society groups, advertisers, businesses, and governments. This is a delicate balancing act: platforms at once express their neutrality and their absolute discretion, as businesses and owners of private property, to manage their affairs and control their networks.

Platforms, of course, are not neutral. Their architecture (Lessig, 2006) and algorithms (Gillespie, 2014) shape how people communicate and what information is presented to participants. Their policies and terms of use are expressed in formally neutral terms, but the powers they provide are carefully wielded and selectively enforced (Humphreys, 2007). Their ongoing governance processes are shaped by complex socio-economic (van Dijck & Poell, 2013) and socio-technical (K. Crawford & Gillespie, 2014) structures, and the interplay of emergent social norms (Taylor, 2006). As systems that mediate between users, platforms can never be neutral in any real sense of the word: “platforms intervene” (Gillespie, 2015).

The contractual Terms of Service documents must accordingly do double duty. For users, the subjects of regulation, they reserve to the platform complete discretion to control how the network works and how it is used. For those who would ask that platforms exercise their power to control behavior for other ends—including users themselves, copyright owners and other third parties with grievances, and governments who seek to surveil users or censor content—the Terms are structured to disclaim liability or responsibility for how autonomous users act. This duality can only be maintained to the extent that it is accepted that platforms are inherently private spaces. It rests on the assertion of a fundamental distinction: platforms have the technical ability and the legal right to control how their systems are used, but do not bear the moral or legal responsibility for what users do. This distinction works on the basis that users are fully autonomous, rational actors in a liberal marketplace. They consent to the platform’s control as the price of entry, but they retain personal responsibility for their actions. It is precisely this assertion that digital media platforms are fully private that is coming under increasing strain as it becomes more clear how much of a role platforms play in governing everyday life (Gillespie, 2018; Lastowka, 2010).

There is a growing global unease about how the rights of individuals can be protected online (Pettrachin, 2018). Sir Tim Berners-Lee, for example, has recently called for a “Magna Carta for the Web” (Kiss, 2014) to protect the rights of individuals, and the W3C’s “Web We Want” initiative has taken up the campaign. This initiative builds on others before it, like the Internet Rights & Principles Coalition’s (IRPC, 2014) charter and the work started by the Global Network Initiative’s (2012) principles. Many other declarations and campaigns from civil society groups, nation states, and supranational bodies have echoed these calls, which are fundamentally clustered along classic liberal priorities: decentralized power, formal equality (in the form of network neutrality), freedom of expression, and privacy rights (Redeker, Gill, & Gasser, 2018). These initiatives are generally positioned in opposition to interference from state actors: demands from various governments to collect and disclose information on the activities of individuals, to remove or block access to prohibited information, and to engineer networks and technologies in ways that facilitate surveillance and law enforcement.

By contrast, the internal “self-governance” practices of intermediaries are where pressure for better governance is most dispersed and least visible. As a rule, intermediaries are secretive about their own practices in policing content and enforcing their terms of service (MacKinnon, Hickock, Bar, & Lim, 2014). Only some of the major declarations of rights and the institutions that have developed to hold internet intermediaries to account focus on the procedural legitimacy of their internal policies and procedures, and even these primarily focus on freedom of expression and privacy rights (Suzor, Van Geelen, & West, 2018). Unlike well-funded lobby groups and powerful state governments, the users who care deeply about how content is regulated are neither well organized nor influential on the policies of platforms. The problem is exacerbated by a fundamental conflict and uncertainty within civil society, where there is no consensus about the extent to which users need protection from the governance decisions of platforms themselves. The lack of a language of rights and clearly articulated concepts of the responsibility of platforms makes it difficult to even discuss the legitimate concerns that both users and platforms have (Suzor, 2010).

The need for a language of users’ rights is becoming increasingly pressing. If digital media platforms are “the new governors” (Klonick, 2017) of our shared social spaces, there is an ongoing challenge to articulate what rights their users ought to have, and how these rights can be protected. In some ways, online social spaces can be experienced as quasi-public spaces, where the longstanding liberal distinction between public and private spaces is insufficient to adequately understand the relationships and embodied experiences of users (Cohen, 2012; Santaniello, Palladino, Catone, & Diana, 2018). There is no efficient market for terms of service, and platforms wield power so disproportionate that the agency of users to negotiate terms is extremely limited (Suzor, 2010). Even if a market for rulesets existed, to the extent that private governance deals with issues of fundamental human rights, these values are too important to be left to contractual negotiation. Tensions over private governance are continually emerging in diverse and very loosely organized ways, as users seek to renegotiate the social contract that sets out their relationships to platforms. These struggles sometimes manifest as high-profile controversies over how power is exercised—including, for example, questions of censorship, bias in algorithmic content selection and curation, and responses to abuse and harassment perpetrated through social media (Nahon, 2016). Sometimes, organized and sustained actions by groups of users have been effective enough to pressure platform owners to change the Terms of Service (e.g., Matias et al., 2015). But without any consensus over whether users even have interests that can be expressed as “rights” that apply against platforms in a way that overrides the contractual terms of service, these struggles only ever proceed slowly and in isolation.

This is the project of digital constitutionalism: to rethink how the exercise of power ought to be limited (made legitimate) in the digital age (Fitzgerald, 2000). The core ideal of the rule of law is that the exercise of power is limited by rules. Constitutionalism is the political project of defining these limits. “Digital constitutionalism,” then, is the work of articulating limits on the exercise of power in a networked society (Padovani & Santaniello, 2018). The key challenge of digital constitutionalism is to identify how values of good governance can be protected in the digital age.

The task of identifying and developing social, technical, and legal approaches that can improve the legitimacy of online governance is an increasingly pressing issue (Brown & Marsden, 2013; DeNardis, 2014; Mansell, 2012). The protection of the constitutional rights of users of telecommunications has been at issue for a long time now (e.g., Pool, 1983), but has become more pressing with an increasing recognition of the important role that platforms play in mediating communication (Gillespie, 2015). The strong division between public and private in constitutional law and theory becomes deeply problematic once it is clear that regulation is not only, or even not primarily, done by the state (Black, 2001; Burris et al., 2005; Grabosky, 1994). The rules of online social spaces and the ways they are enforced have real impact on the human rights of users (Council of Europe, 2012; Kaye, 2016). Recognition of this point has led to increasing calls for a new way of thinking about governance by platforms and a more substantive understanding of constitutional rights in online governance (K. Crawford & Lumby, 2013; Langlois, 2013). There is now a clear need to further explore how constitutional values and rights can be protected once the idea of governance is decentralized (Black, 2008; Grabosky, 2013; Morgan, 2007).

The Principles of the Rule of Law

The rule of law provides a way to evaluate the legitimacy of governance in a normative sense. The core of the idea of the rule of law is that governments ought to wield their power in a way that is authorized and subject to the law (Raz, 1977). The way that these principles have historically been applied has been state-centric. This article argues that these values can be usefully applied to assess the governance of digital media, paying particular attention to the role of platforms as writers of the rules of participation; designers of technology that enables communication and constrains action; developers of algorithms that sort, organize, highlight, and suppress content; and employers of human moderators who enforce rules on acceptable content and behavior.

It is important to note at the outset that constitutionalism implicates both procedural and substantive concerns. In this article, I focus on the procedural issues—the baseline set of protections for due process that are almost universally accepted as a fundamental requirement of the rule of law. Importantly, however, we should not simply transpose the heavy expectations that characterize legitimacy in governance by states to apply to platforms. We must instead pay attention to a set of core governance concerns that might apply, without assuming the necessity of any of them in any particular context. This is a largely political project; it requires difficult negotiations about the types of communities and platforms we want to create and the legal and social norms that are appropriate to achieving those goals.

As an initial starting point, I categorize the procedural values of the rule of law into three sets of concerns: meaningful consent, equality and predictability, and due process. In the sections that follow, I will explain each of these requirements in turn. In order to contextualize and illustrate the analysis of the legitimacy of contractual governance documents, I examine the legal terms and conditions of the largest English-language social media platforms against each of these sets of concerns. I selected the 14 largest platforms by traffic, 3 on the basis that the largest platforms are those that are likely to have the most significant impact on the civil and political rights of their users. Note that this sample is highly western- and US-centric; it omits most major non-English language social media platforms. For this initial stage, it was useful to constrain analysis to contracts governed by common law systems in general and US law in particular, but future work should extend this further. I apply the rule of law framework here to large social media platforms, but it could also be extended in the future to other platforms that govern important aspects of social life. Each contractual document was analyzed to identify the extent to which it provided protections for the procedural interests of users. Note that the analysis was carried out in June of 2015 as the documents then stood and does not account for any changes after that date. (For a similar study carried out concurrently and focusing on human rights in terms of service documents, see Venturini et al., 2016.) The core limitation of this methodological approach is that it examines only formal documents, which are often drafted to protect the interests of platforms to the fullest extent permissible under law. It does not include the documents that are designed to be more accessible to users, like community guidelines or other explanatory documents, but that are usually not expressed to be legally binding. Neither does it include the hidden documents that are used to train and guide moderation teams or the training sets of machine learning algorithms that might be said to more accurately represent the actual rules as routinely enforced. As such, the documents analyzed here represent the outer legal bounds only of platform governance, and not governance in practice. These outer bounds are still important to study: they are the points at which disputes can be resolved by the legal system, and the only meaningful legal limits on power. But because these bounds are so expansive, in future work, it will be important to study how governance operates in practice, and how it is limited by non-legal pressure from different stakeholders.

Meaningful Consent: Governance Limited by Law

Legitimacy, at its core, depends upon some consensus that the regulator has a right to govern in the way that it does (Black, 2008). For a system of governance to be legitimate, there must be some consensus that social rules represent some defensible vision of the common good (Allan, 2001). At a minimum, the consent of the governed requires that governance power is exercised in a way that is limited by rules—not arbitrarily (Dicey, 1959). This is ultimately the most basic value of the rule of law—that power is wielded in a way that is accountable, that those in positions of power abide by the rules, and that those rules should only be changed by appropriate procedures within appropriate limits. In this limited sense, there is good reason to believe that the rule of law is a universal human good—that all societies benefit from restraints on the arbitrary or malicious exercise of power (Tamanaha, 2004, p. 137; Thompson, 1990, p. 266).

This prohibition on the arbitrary exercise of power provides a very useful criterion through which to measure the legitimacy of the governance of platforms. One of the most concerning characteristics of private governance is that it is very seldom transparent, clear, or predictable, and providers often purport to have absolute discretion over the exercise of their power to eject under both contract and property law. Essentially, providers have control over the code that creates the platform, allowing them to exercise absolute power within the community itself. The exercise of this power is limited by the market and by emergent social norms, but it is barely limited by law. Take, for example, the Facebook Terms of Service, as they were before they were updated due to user protest in May 2009, which provided that Facebook “may terminate your membership, delete your profile and any content or information that you have posted on the Site [. . .] and/or prohibit you from using or accessing the Service or the Site [. . .] for any reason, or no reason, at any time in its sole discretion, with or without notice[.]”

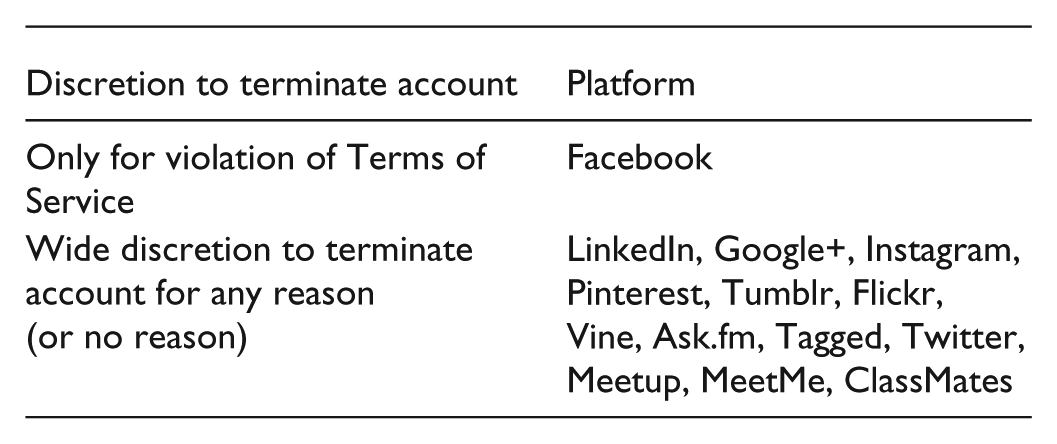

Most Terms of Service documents in our sample use similar language that very clearly reserves to the platform the right to terminate user accounts at any time. Most also include an explicit extension that termination can be for any or no reason, at the service provider’s sole discretion.

Importantly, Facebook has replaced its previous Terms with a more accessible “Statement of Rights and Responsibilities.” In this process, the equivalent language was significantly watered down to read: “If you violate the letter or spirit of this Statement, or otherwise create risk or possible legal exposure for us, we can stop providing all or part of Facebook to you.”

Even though this clause was the most generous to users from our sample, it is still broad enough to provide the platform with almost complete control over the relationship. While Facebook’s Terms became more readable, this change has no real binding effect.

In all cases examined, the Terms of Service provided broad, unfettered discretion to platform owners. The core value of the rule of law, as a prohibition on the arbitrary exercise of power, provides a simple and powerful framework through which to articulate why these clauses are so concerning. Like constitutional documents, Terms of Service grant powers, but unlike constitutions, they rarely limit those powers or regulate the ways they are exercised. Throughout recorded history, this basic conception of the rule of law has been seen as important to help ward off tyrannical governance (Tamanaha, 2004, pp. 138–139). By explicitly allocating broad discretion to platforms to terminate access on any grounds, these terms seek to keep the political processes of governance firmly outside of any legal standards of review. These terms represent a claim by platforms that public governance values do not apply to disputes over access to the platform. Where platforms can exercise control over access, by extension, they are able to make access conditional upon accepting any other written or unwritten rule. These clauses are the legal lynchpin of a governance strategy under which participants must submit to the authority of the platform in order to gain access (“take it or leave it”).

This structural framing of relationships between users and platforms firmly positions ongoing debates about platform governance as an issue to be negotiated with the platform operators, rather than as a public political question. This is deeply problematic, particularly since users are constrained in their power to negotiate with platforms or exit established networks (Centre for International Governance Innovation, 2016). On utilitarian grounds, these contracts are simply unlikely to ever reflect an optimal bargain. Since these contracts are not effectively bargained for, it can hardly be said that the interests of users are well represented. The fine print of standard form contracts cannot be said to be assented to in any real sense (Llewellyn, 1960; Radin, 2005).

This analysis suggests that where platforms play a central role in public communication, we ought to be concerned about the arbitrary or capricious exercise of power by providers and their delegates. All of the other interests that users might have in participating in online social spaces hinge, ultimately, on access. Clearly platforms have a legitimate interest in being able to determine membership—much of the character of shared social spaces has to do with the participants who make up the community or who have access to the platform. Without making any claims about the substantive rules at stake, about who may join and continue to participate, we can at least suggest that a fundamental requirement of legitimate governance is that if a participant is denied access, that should be done according to rules that can reasonably be said to have the consensual support of the participants. The extensive powers that platforms reserve to themselves to unilaterally determine continued access are deeply problematic because they directly reject the fundamental proposition that their governance power ought to be limited and not arbitrary.

Formal Legality: Equality and Predictability

Beyond the general prohibition on arbitrary punishment, the most commonly agreed principles of the rule of law are procedural protections. These essentially require that rules are applied equally and predictably (Tamanaha, 2004, p. 114). At a minimum, this means that users should be aware of the rules and the reasons upon which decisions that affect them are made (Kingsbury, Krisch, & Stewart, 2005). It also implies that rules should be equally enforced and should be stable enough to guide behavior (Trebilcock & Daniels, 2008). This is often the aspect of legitimacy that is most at play in contests over how platforms govern their networks. Many of the major controversies over governance in recent years are rooted in disagreements over perceptions that a platform is biased or discriminating against some group of users or promoting some opinions over others, particularly but not exclusively on the basis of race, gender, sexuality, and political speech.

Terms of Service present an immediate problem of clarity. Many of the documents I examined were written in a style that was not designed to be read or understood by users. A recent experimental study confirms what is generally assumed: users of social networking sites overwhelmingly do not read the Terms of Service (Obar & Oeldorf-Hirsch, 2016). In recent times, some sites have worked to simplify their Terms of Service. More than half of the documents in the sample used more accessible, “plain-English” drafting, which reflects a major change over the last decade. Many of the documents, though, are still too long and too complex to be easily understood by a lay audience. A handful of platforms (Twitter, Pinterest, Tumblr, Tagged) use simple annotations in their terms. This too is a recent change; most of these annotations began to appear over the last 3–4 years. 4 This is a very important move that should be applauded; the annotated versions are substantially easier to read than other contractual documents. Other platforms create more accessible versions of the important rules that users are expected to follow as “community guidelines” or similar documents that are simpler to understand. While these set out the rules that users are expected to follow, they often have little legal weight. The Terms of Service documents themselves may refer to these guidelines, but they are never expressed to be exclusive. That is, platforms reserve the discretion to enforce different, as-yet-unwritten rules, should the need arise. For many platforms, the Terms of Service are made significantly more complicated by referring to and incorporating other documents like these, including guidelines, privacy policies, advertising policies, and more.

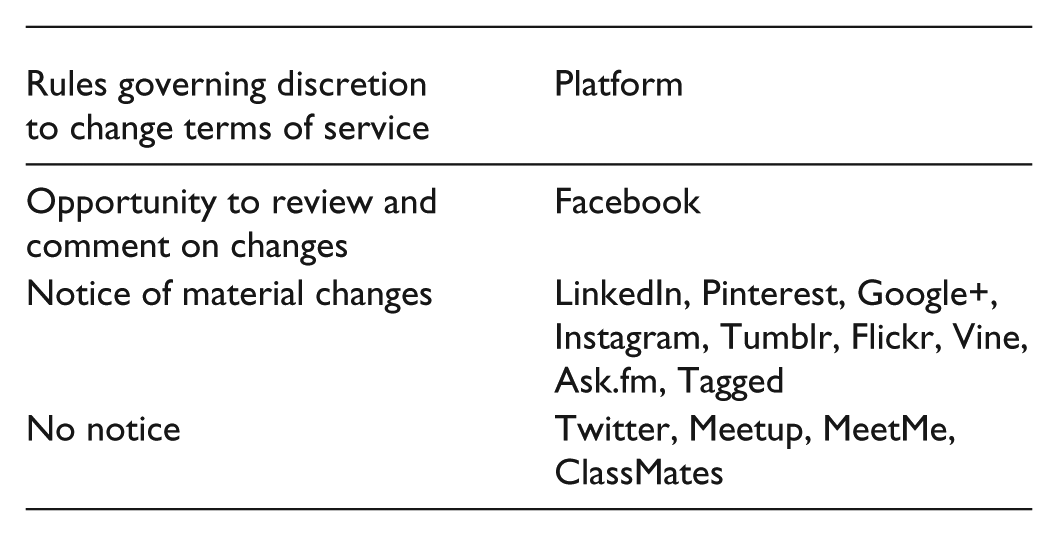

Not only are Terms of Service often unclear, but they are all able to be changed by the unilateral decision of the platform. Approximately half of the platforms committed to some responsibility to inform users that their terms had changed, either through email or a notice posted on the site itself. Only Facebook commits to providing users with an opportunity to review and comment on changes before they come into force, but its commitment to a democratic voting mechanism has been removed. Remarkably, some platforms do not commit to giving any notice of changes, instead requiring users to bear responsibility for continuously checking the legal terms to see whether they may have changed.

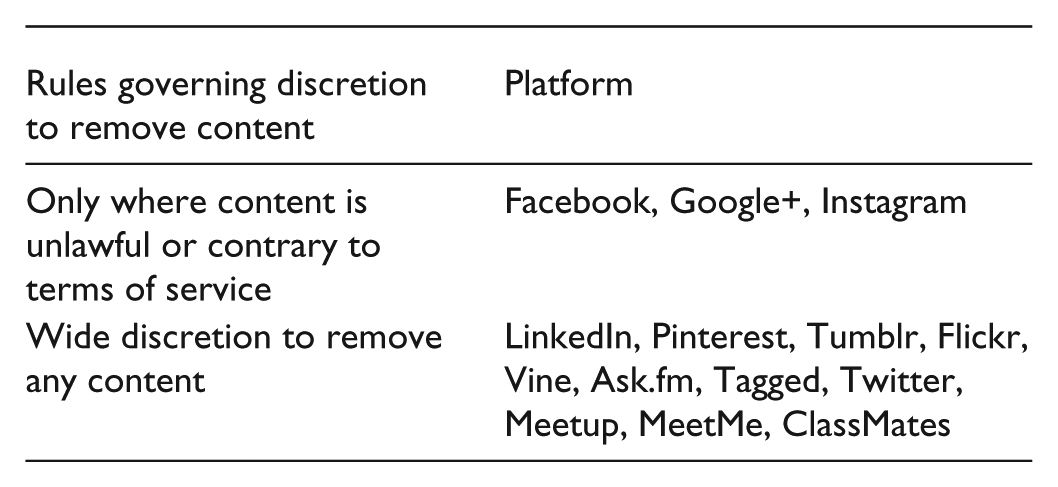

Apart from changes in policy, the arbitrariness, or perceived arbitrariness, of the way that platforms make decisions is a key source of anxiety around governance. In addition to allowing platforms to terminate entire user accounts, all of the Terms of Service studied reserve power to the platform to remove any content that users post to the sites. Most Terms of Service express this in quite broad terms—that platforms can remove content at their “sole discretion” or “belief” that it violates their policies. Some platforms go even further, expressly reserving the right to remove “any” content at any time; LinkedIn’s terms, for example, state that “We are not obligated to publish any information or content on our Service and can remove it in our sole discretion, with or without notice.”

Even for the limited subset of platforms that commit to only removing content that violates explicitly stated rules, there is a great deal of uncertainty about how those rules will be interpreted. As one example, mothers whose breastfeeding photos have been removed have been led to wonder how exactly Facebook’s complaints team enforces its rule against “pornography” in a way that distinguishes, in Facebook’s explanation, between mothers genuinely sharing their experiences and “pictures of naked women who happen to be holding a baby” (Facebook spokesman Barry Schnitt, quoted in Ingram, 2011). Over years of complaints by mothers and advocacy groups (Ibrahim, 2010), Facebook has clarified its position somewhat, noting that it will respond to complaints and remove “Photos that show a fully exposed breast where the child is not actively engaged in nursing” (Facebook, n.d.). What exactly “actively engaged” means remains contested.

This example is only one among many. It is clear that platforms are developing and refining rules in an ad hoc way, as circumstances require (Buni & Chemaly, 2016). To an extent, this is understandable: in such rapidly developing environments with emergent social uses, it is almost impossible to lay out all the rules in advance. These increasingly frequent disputes over the interpretation and enforcement of rules, however, show that platforms face a difficult challenge not only in setting rules but also in communicating those rules clearly to users and in managing changes in a way that maintains the ongoing consent of the governed.

Due Process

The final procedural component of the rule of law is that there is some mechanism to resolve disputes. Policies and rules are always imprecisely interpreted and applied; the very fact that they are expressed in language, which can only imperfectly describe practice, means that there will always be some degree of uncertainty (Hart, 1961). The way that legal systems deal with this uncertainty is to develop procedural safeguards that ensure, as far as practicable, that decision makers are impartial, that the reasons upon which they make decisions are transparent, that the discretion they exercise is curtailed within defined bounds, and, if something goes wrong, that there are procedures to appeal the decision to an independent body. This is one of the basic tenets of procedural fairness or due process, a fundamental component of the rule of law (Fuller, 1969). These requirements of due process are generally accepted as “a necessary, albeit not sufficient condition for the realization of almost any defensible conception of the rule of law” (Trebilcock & Daniels, 2008, p. 30).

As minimally applied to governance by platforms, we might expect due process to have two main components. First, a regulatory decision should be made according to valid criteria and processes. Second, once a decision has been made, users who are adversely affected should have some avenue of appeal and independent review. Formal Terms of Service provide little reassurance to users on either count. The criteria that platform moderation teams use to evaluate decisions are secret, and none of the Terms examined made any commitment to transparency here. Even for the subset of platforms that promise only to remove content that violates their Terms of Service, users have only a slim possibility of appealing decisions they disagree with. None of the Terms establish any formal internal dispute resolution mechanism, but users can seek to challenge enforcement of the Terms in court or through arbitration. Some of the platforms studied have internal appeals processes for challenging decisions, but these are not particularized or expressed to be binding in the contractual documents. In practice, these processes are generally poorly understood and not particularly reliable (Urban, Karaganis, & Schofield, 2016; West, 2018).

Six of the Terms in my sample required users to submit to binding arbitration, although for three of those, the platform agreed to pay the costs. Arbitration can reduce the costs of hearing disputes, but arbitration proceedings tend to favor the large repeat players over individual consumers if they are not carefully designed to promote consumer rights (Wilson, 2016). Almost all Terms required users to resolve disputes in the platform’s home jurisdiction. This is particularly problematic, since it imposes a heavy cost on users to travel in order to bring a claim. Most of the platforms that require binding arbitration also prohibit users from bringing class actions to reduce the individual costs of bringing many similar claims. Even if a claim is successfully brought, all platforms limit their potential liability for any wrongdoing. Because rules about limitations of liability differ in different jurisdictions, this is usually expressed as a complete limitation or, alternatively, limited to a small monetary maximum of USD$100 or similar (LinkedIn is an exception, limiting liability up to USD$1,000 for paying users, still a sum small enough to discourage users from filing disputes). The cumulative effect of all this drafting is that generally, users have no realistic chance of challenging decisions made by platforms in any formal legal process.

Rule of Law, Not of Individuals

The implications of this analysis are that consumer contracts are poor ways to articulate the rights of users and the responsibilities of platforms. In purely formal terms, the Terms of Service of major platforms are almost universally designed to maximize their discretionary power and minimize their accountability. Through the lens of the rule of law, we can see how this is immediately problematic. At a general level, Terms of Service documents of platforms fall well short of accepted standards of good governance because they do almost nothing to restrain the platform’s exercise of power. As constitutional documents, Terms of Service fail to provide meaningful safeguards against arbitrary or capricious decisions. In procedural terms, they generally struggle to provide the clarity that is required to guide behavior, they provide no protection from unilateral changes in rules, do nothing to ensure that decisions are made according to the rules, and present no meaningful avenues for appeal.

The values of the rule of law provide a way to talk and think about the growing but amorphous set of concerns about the appropriate normative limits of the power of platforms. The rule of law, as an ideal, is a vision that “to live under the rule of law is not to be subject to the unpredictable vagaries of other individuals—whether monarchs, judges, government officials, or fellow citizens. It is to be shielded from the familiar human weaknesses of bias, passion, prejudice, error, ignorance, cupidity, or whim” (Tamanaha, 2004, p. 122).

From this ideal, it becomes possible to articulate with some greater precision what is at stake when platforms govern our shared online social spaces. Constitutionalism is fundamentally about the limitation of governance power; “digital constitutionalism” requires a very messy contestation of the appropriate ways in which the power of platforms ought to be limited. This is an inherently political task, and there can be no common agreement on the exact shape of either substantive or procedural limitations on the power of platforms.

Constitutionalism does not, however, depend upon a full overarching political framework (Huggins, 2017). So-called “thin constitutionalism” provides a way to focus on legitimacy in discrete contexts. It is possible, for example, to talk about how decisions are made and reviewed without having all of the structures of constitutional government (Klabbers, 2004). The core principles of the rule of law provide the language that is needed to engage in the ongoing and deeply contested political discussion about how we imagine the future of our shared online social spaces. In this sense, the values of the rule of law are not prescriptive—we would not want to envisage a future where all platforms are held to the same standards of legitimacy as territorial states (Suzor, 2012). But they do provide a language to name and work through the loose set of often inchoate concerns about the relationship between platforms and their users. The next steps for scholars and advocates who care about platform governance will be to continue, with more precision, to press the political discussion about what responsibility platforms should have to protect the rights of users and how these responsibilities might be enforced.

The messy work of articulating limits on governance power is becoming an increasingly urgent task. Platforms play a vital role in governing important parts of the daily lives of billions of individuals. The legal mechanisms that we have for protecting civil and political rights do not translate well to governance by platforms. The law of contract, which currently regulates these relationships, does not address these governance concerns. In this gap, we have an opportunity to develop a normative understanding of the responsibilities of platforms. This is an opportunity to set out the constitutional principles that we collectively believe ought to underpin our shared social spaces in the digital age. The values of the rule of law, which are values of good governance, provide a way to conceptualize governance by platforms in constitutional terms. At a minimum, for a system of governance to be legitimate, decisions must be made according to a set of clear and well-understood rules, in ways that are equal and consistent, with fair opportunities for due process and independent review. These are the basic procedural values against which the legitimacy of platform governance ought to be measured. Not all of these values should apply to all platforms or in all circumstances, but they provide an established language through which to evaluate systems of governance. The task of holding platforms to account against these values is necessarily contested, inherently political, and complicated by different contextual and cultural considerations. But it can only be progressed with a clearer understanding of the values that are at stake. Finding ways to improve the legitimacy of platform governance, through both legal rules and social obligations, is the key challenge and opportunity of digital constitutionalism.

Footnotes

Acknowledgements

Many thanks to Tess Van Geelen for excellent research assistance, and to Lucy Cradduck, Angela Daly, Anna Huggins, Brendan Keogh, Carmel O’Sullivan, Kylie Pappalardo, Matthew Rimmer, Emily Van Der Nagel, the anonymous reviewers, and participants at IPP 2016 and AoIR 2016 for helpful comments and feedback.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: A/Prof. Suzor is the recipient of an Australian Research Council DECRA Fellowship (project number DE160101542).