Abstract

Introduction

Over recent years, task-oriented training has emerged as a dominant approach in neurorehabilitation. This article presents a novel, sensor-based system for independent task-oriented assessment and rehabilitation (SITAR) of the upper limb.

Methods

The SITAR is an ecosystem of interactive devices including a touch and force–sensitive tabletop and a set of intelligent objects enabling functional interaction. In contrast to most existing sensor-based systems, SITAR provides natural training of visuomotor coordination through collocated visual and haptic workspaces alongside multimodal feedback, facilitating learning and its transfer to real tasks. We illustrate the possibilities offered by the SITAR for sensorimotor assessment and therapy through pilot assessment and usability studies.

Results

The pilot data from the assessment study demonstrate how the system can be used to assess different aspects of upper limb reaching, pick-and-place and sensory tactile resolution tasks. The pilot usability study indicates that patients were able to train arm-reaching movements independently using the SITAR with minimal involvement of the therapist and that they were motivated to pursue the SITAR-based therapy.

Conclusion

SITAR is a versatile, non-robotic tool that can be used to implement a range of therapeutic exercises and assessments for different types of patients, and it is particularly well-suited for task-oriented training.

Keywords

Background

The increasing demand for intense, task-specific neurorehabilitation following neurological conditions such as stroke and spinal cord injury has stimulated extensive research into rehabilitation technology over the last two decades.1,2 In particular, robotic devices have been developed to deliver a high dose of engaging repetitive therapy in a controlled manner, decrease the therapist’s workload and facilitate learning. Current evidence from clinical interventions using these rehabilitation robots generally shows results comparable to intensity-matched, conventional, one-to-one training with a therapist.3–5 Assuming the correct movements are being trained, the primary factor driving this recovery appears to be the intensity of voluntary practice during robotic therapy rather than any other factor such as the physical assistance required.6,7 Moreover, most existing robotic devices to train the upper limb (UL) tend to be bulky and expensive, raising further questions on the use of complex, motorised systems for neurorehabilitation.

Recently, simpler, non-actuated devices, equipped with sensors to measure patients’ movement or interaction, have been designed to provide performance feedback, motivation and coaching during training.8–12 Research in haptics13,14 and human motor control15,16 has shown how visual, auditory and haptic feedback can be used to induce learning of a skill in a virtual or real dynamic environment. For example, simple force sensors (or even electromyography) can be used to infer motion control 17 and provide feedback on the required and actual performances, which can allow subjects to learn a desired task. Therefore, an appropriate therapy regime using passive devices that provide essential and engaging feedback can enhance learning of improved arm and hand use.

Such passive sensor-based systems can be used for both impairment-based training (e.g. gripAble18) and task-oriented training (ToT) (e.g. AutoCITE8,9, ReJoyce11). ToT views the patient as an active problem-solver, focusing rehabilitation on the acquisition of skills for performance of meaningful and relevant tasks rather than on isolated remediation of impairments.19,20 ToT has proven to be beneficial for participants and is currently considered a dominant and effective approach for training.20,21

Sensor-based systems are ideal for delivering task-oriented therapy in an automated and engaging fashion. For instance, the AutoCITE system is a workstation containing various instrumented devices for training some of the tasks used in constraint-induced movement therapy.8 The ReJoyce uses a passive manipulandum with a composite instrumented object having various functionally shaped components to allow sensing and training of gross and fine hand functions.11 Timmermans et al.22 reported how stroke survivors can carry out ToT by using objects on a tabletop with inertial measurement units (IMU) to record their movement. However, this system does not include force sensors, which are critical in assessing motor function.

In all these systems, subjects perform tasks such as reach or object manipulation at the tabletop level, while receiving visual feedback from a monitor placed in front of them. This dislocation of the visual and haptic workspaces may affect the transfer of skills learned in this virtual environment to real-world tasks. Furthermore, there is little work on using these systems for the quantitative task-oriented assessment of functional tasks. One exception to this is the ReJoyce arm and hand function test (RAHFT) 23 to quantitatively assess arm and hand function. However, the RAHFT primarily focuses on range-of-movement in different arm and hand functions and does not assess the movement quality, which is essential for skilled action.24–28

To address these limitations, this article introduces a novel, sensor-based System for Independent Task-Oriented Assessment and Rehabilitation (SITAR). The SITAR consists of an ecosystem of different modular devices capable of interacting with each other to provide an engaging interface with appropriate real-world context for both training and assessment of UL. The current realisation of the SITAR is an interactive tabletop with visual display as well as touch and force sensing capabilities and a set of intelligent objects. This system provides direct interaction with collocation of visual and haptic workspaces and a rich multisensory feedback through a mixed reality environment for neurorehabilitation.

The primary aim of this study is to present the SITAR concept, the current realisation of the system, together with preliminary data demonstrating the SITAR’s capabilities for UL assessment and training. The following section introduces the SITAR concept, providing the motivation and rationale for its design and specifications. Subsequently, we describe the current realisation of the SITAR, its different components and their capabilities. Finally, preliminary data from two pilot clinical studies are presented, which demonstrate the SITAR’s functionalities for ToT and assessment of the UL.

Methods

The SITAR concept

A typical occupational therapy or assessment session may involve patients carrying out different activities of daily living on a tabletop. For example, this could involve simple reaching tasks, transferring wooden blocks from one place to another, peg removal and insertion, etc. The SITAR concept is based on the idea of instrumenting this setup to measure patients’ movement and interaction to provide feedback, gamification for active patient participation and assessment of patients' sensorimotor ability in a natural context. The SITAR concept consists of a combination of the following components:

An interactive force–sensitive tabletop. A large proportion of our daily activities involving the UL are carried out on a tabletop. Thus, having an interactive tabletop that can sense activities performed on it (i.e. touch and placement of objects) and can provide visual and audio feedback will serve as an excellent platform for designing an engaging system for training. Note that the ability to sense interaction force at the table surface enables a sensitive and accurate characterisation of the motor behaviour; for example, the impact force of pick-and-place tasks can be a useful indicator of motor ability.29

An ecosystem of intelligent objects capable of both sensing and providing haptic, visual and auditory feedback directly from the object. These intelligent objects, which abstract the functional shapes and capabilities of real-world objects, can be used as separate tools or along with the interactive tabletop for training and assessing different UL tasks. They would be capable of sensing the patient’s interaction, such as touch, interaction force and translational/rotational movements, and of providing appropriate multisensory visual, audio and vibratory feedback.

Natural sensorimotor context. In most existing systems, the visual and haptic workspaces are dislocated, i.e. a patient works or interacts physically on a tabletop and receives visual and audio feedback from a computer monitor located in front of the head. In contrast, the SITAR provides collocated haptic and visual workspaces with natural sensorimotor interaction for patients to perform and train tasks, which provides a more natural context for interaction. This may potentially enhance transfer to equivalent real-world tasks.

Modular architecture. The system would have a modular architecture that enables new tools (a new object, an additional table, etc.) to be easily integrated into the system. Moreover, each of these tools would be suitable for use on its own, without the need for any of the other system components. In particular, an intelligent object can be used either with or without the tabletop or the other objects. A suitably designed game using the modular system architecture would allow a subject to simultaneously interact with multiple objects without any confusion. Moreover, the system would also allow the use of other external sensing or assistive devices that extend the SITAR’s capabilities; for example, 3D vision–based motion tracking of the UL kinematics, an arm support system, a wearable robotic device or a functional electrical stimulation system for hand assistance.

The SITAR with these different features would act as a natural, interactive and quantitative tool for training and sensing UL tasks that are relevant to the patient. It would also facilitate the development of engaging mixed reality environments for neurorehabilitation by (a) integrating different intelligent objects and (b) providing clear instructions and performance feedback to train patients with minimal supervision from a therapist.

SITAR’s components

Multimodal Interactive Motor Assessment and Training Environment (MIMATE)

The SITAR’s interactive tabletop and intelligent objects were developed using a common platform that can (a) collect data from the different sensors in the table, objects, etc.; (b) provide some preliminary processing of sensor data (e.g. orientation estimation using IMU); (c) provide multimodal (e.g. audio, visual and vibratory) feedback and (d) communicate bi-directionally with a remote workstation (e.g. a PC). This common platform, called the MIMATE (Multimodal Interactive Motor Assessment and Training Environment), is a versatile, wireless embedded platform for developing interactive devices for a variety of healthcare applications. It has been previously used for training, teaching and designing intelligent objects.30 In the SITAR, the MIMATE serves as an integral part of all its components for collecting, processing and communicating data to a remote workstation, where all the information is fully processed for providing feedback to the subject. A detailed description of the MIMATE was given previously by Hussain et al.31 Embodiments of the SITAR can be implemented with other commercially available platforms as well; however, the MIMATE was custom-made for use in applications involving human interaction in motor control, learning and neurorehabilitation.

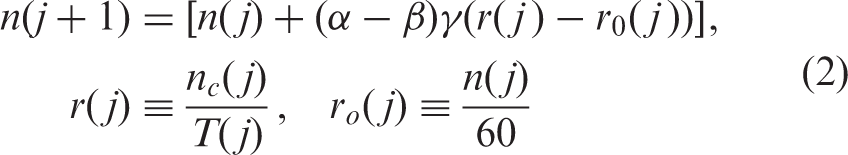

Interactive force and touch–sensitive tabletop

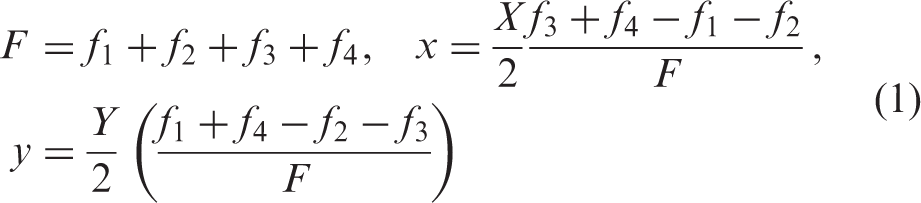

The SITAR tabletop is a toughened glass surface supported on a custom-built, aluminium, table-like structure with a 42-in. liquid crystal display television situated directly below the glass surface (Figure 1(a)). The glass is supported on four load cells (CZL635 Micro Load Cell (0–20 kg), Phidgets Inc.) placed on the aluminium frame at the four corners of the table. The four load cells are individually preamplified and connected to a MIMATE module, which samples the data from these sensors at 100 Hz and wirelessly transmits them to the workstation. The glass surface acts similarly to a force plate used in gait analysis for detecting ground reaction force and its centre-of-pressure (COP). The glass surface, along with the television underneath, thus behaves like a simple, cost-effective and large touchscreen capable of detecting a single touch and its associated force.
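The force-plate analogy can be made concrete with a minimal sketch, assuming four load cells at the corners of a rectangle and already calibrated readings in newtons. The total normal force F is taken as the sum of the four readings and the COP (x, y) as their force-weighted mean position. The corner coordinates, the function name and the `threshold` guard are illustrative assumptions, not the values or code used in the SITAR.

```python
# Hypothetical corner positions (x, y) of the four load cells, in metres.
# Illustrative only: the real table geometry is not specified here.
CELL_POSITIONS = [(0.0, 0.0), (0.9, 0.0), (0.9, 0.5), (0.0, 0.5)]


def touch_from_load_cells(forces, positions=CELL_POSITIONS, threshold=1.0):
    """Estimate (F, x, y) of a single touch from four load cell forces.

    forces    -- calibrated normal forces in newtons, one per load cell
    positions -- (x, y) location of each load cell, same order as forces
    threshold -- assumed minimum total force (N) for a touch to register

    Returns None when the total force is below the threshold, which also
    avoids dividing by a near-zero force.
    """
    total = sum(forces)
    if total < threshold:
        return None
    # Centre of pressure: force-weighted mean of the sensor positions.
    x = sum(f * px for f, (px, _) in zip(forces, positions)) / total
    y = sum(f * py for f, (_, py) in zip(forces, positions)) / total
    return total, x, y
```

With equal readings on all four cells the estimate falls at the centre of the rectangle, while a load carried by a single cell is located at that corner.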

The SITAR concept with (a) the interactive table-top alongside some examples of intelligent objects developed including (b) iJar to train bimanual control, (c) iPen for drawing, and (d) iBox for manipulation and pick-and-place.

By measuring the load cell forces, we can determine the downward component of the total force F acting on the glass surface and the COP of this applied force (x, y). The total touch force F and position

Table calibration

Individual calibration of each load cell and linear calibration of the normal force and (x, y) coordinates of the touch position associated with the interactive table were performed prior to its use. This was achieved using a least squares fit to data spanning a range of ‘typical interaction’ values arranged in a

Figure 2 shows the mean root mean square (RMS) error of the touch force averaged over the entire workspace with error bars indicating the average 3 standard deviation measurement error of the force during a single four-second touch. The left subplot shows the absolute errors, while the right plot shows the error as a percentage of the force specified. These plots highlight that the average RMS error increases with elevated force but at a much slower rate than the force itself. Conversely, the time-dependent error is fixed as it is predominantly due to the individual measurement noise associated with each load cell.
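As a rough illustration of the two error measures discussed here, the sketch below computes an RMS error of the per-location force bias and an average three-standard-deviation noise estimate from a set of touch trials. This is one plausible reading of the description, not the authors' analysis code; the function name and the data layout (one list of force samples per spatial location) are assumptions.

```python
import math
import statistics


def force_error_summary(trials, reference_force):
    """Summarise force errors for one reference force level.

    trials          -- list of trials, one per spatial location; each trial
                       is a list of measured force samples (N) from a touch
    reference_force -- the force actually applied (N)

    Returns (rms_bias, noise_3sd):
      rms_bias  -- RMS over locations of (mean measured force - reference)
      noise_3sd -- mean over locations of 3 x sample standard deviation,
                   i.e. the time-dependent measurement error
    """
    biases = [statistics.fmean(trial) - reference_force for trial in trials]
    rms_bias = math.sqrt(statistics.fmean([b * b for b in biases]))
    noise_3sd = statistics.fmean([3.0 * statistics.stdev(trial) for trial in trials])
    return rms_bias, noise_3sd
```

Separating the two quantities in this way mirrors the distinction drawn in the text: the bias term can grow with the applied force, whereas the noise term depends mainly on the load cell measurement noise.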

Table force errors against touch forces (temporally and spatially averaged) with bars showing average RMS errors (biases) and error bars indicative of the time-dependent error (i.e. as three standard deviations calculated from each four second trial averaged over all nine spatial locations). The plots show (a) the absolute errors and (b) the errors normalised by the force-level as a percentage.

Figure 3 shows the positional errors at the nine (x, y) locations for the four F levels tested. At low touch forces, the position estimation becomes erratic. This can be seen in both the 0.49 N (50 g) and 0.98 N (100 g) plots, where the positional errors (both the RMS and measurement noise) are in the centimetre range. At larger touch forces, these errors reduce to the millimetre scale, as highlighted in Table 1. Therefore, a touch threshold of approximately 1 N (100 g) has been set, below which no touch is registered by the system. This threshold does not affect the detection of the typical therapy objects used (see section ‘Intelligent objects’), which generally have masses of over 200 g.

Table positional errors for four different touch force values ((a) F = 0.49 N, (b) F = 0.98 N, (c) F = 2.45 N and (d) F = 4.91 N). Target (reference) locations (red plus) are shown alongside (blue) ellipses with the centre and principal axes indicative of the mean touch location and ±3 SD measurement error in the x and y directions.

Average (F, x, y) RMS errors and time-dependent measurement noise for different touch force values. RMS: root mean square.

Detecting objects on the table

To enhance the functionality of the interactive table, a special algorithm has been developed permitting single-touch interaction with or without objects placed on the table surface. To achieve this, it is necessary to differentiate the sensor data from the four load cells during (and just following) object placement from (both static and dynamic) human interaction. This is possible due to the observation that during human interaction, there is always increased variability in the force data due to either movement (dynamic interaction) or physiological tremor (static interaction). Therefore, by thresholding the variance of F, in both amplitude and time, it is possible to robustly detect when any object is placed on the surface. When an object has been detected, its weight can be used for identification while its weight contribution can be compensated for, allowing additional objects to be placed on the surface and/or concurrent single-touch interaction to occur as usual. Once an object has been detected, it is added to a virtual object list so that when its removal is detected (i.e. due to a sudden drop in F), the appropriate object can be selected and removed from the list based on this change.
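The variance-thresholding idea can be sketched as follows. This is a simplified illustration rather than the algorithm used in the SITAR: it treats a full window of low-variance samples as a static load, registers a new object when the steady force rises above the known load, and removes the closest-matching object when the steady force falls. The class name, window length and thresholds are all hypothetical.

```python
import statistics
from collections import deque


class ObjectDetector:
    """Track objects on the table from the total normal force F (N)."""

    def __init__(self, window=50, var_threshold=1e-3, min_weight=1.0):
        self.samples = deque(maxlen=window)  # time threshold, in samples
        self.var_threshold = var_threshold   # amplitude threshold on variance (N^2)
        self.min_weight = min_weight         # smallest weight change treated as an object (N)
        self.objects = []                    # weights (N) of objects currently on the table

    def update(self, total_force):
        """Feed one force sample; return ('placed', w), ('removed', w) or None."""
        self.samples.append(total_force)
        if len(self.samples) < self.samples.maxlen:
            return None
        window = list(self.samples)
        # High variance suggests human interaction (movement or tremor)
        # or a transient, so no object decision is made.
        if statistics.pvariance(window) > self.var_threshold:
            return None
        change = statistics.fmean(window) - sum(self.objects)
        if change > self.min_weight:
            self.objects.append(change)
            return ("placed", change)
        if change < -self.min_weight and self.objects:
            # Remove the listed object whose weight best matches the drop.
            weight = min(self.objects, key=lambda w: abs(w + change))
            self.objects.remove(weight)
            return ("removed", weight)
        return None

    def touch_force(self, total_force):
        """Force attributable to touch after compensating for known objects."""
        return total_force - sum(self.objects)
```

Feeding the detector a stream sampled at 100 Hz, a window of 50 samples would correspond to requiring half a second of steady force before an object is registered; a touch with tremor-like fluctuation on top of a placed object leaves the object list unchanged.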

Intelligent objects

The intelligent objects are a set of compact, instrumented and functionally shaped devices. They are designed to enable natural interaction and sensing during assessment and rehabilitation of common day-to-day activities such as pick-and-place, can-opening, jar manipulation, key manipulation and writing. The developed objects are abstracted from the shape and basic functionality of common everyday objects making training and assessment using these objects similar to real-world tasks. So far, five different intelligent objects have been designed and implemented, namely iCan (for grasping and opening), iJar (bimanual grasping and twisting), iKey (fine manipulation and turning), iBox (for grasping and transportation) and iPen (grasping and drawing). We have previously published the design details of some of the intelligent objects and their use for assessing sensorimotor function:31–33 (a) The study by Hussain et al. 31 presents the design details of the iCan and iKey objects; (b) the study by Jarrassé et al. 32 presents preliminary design details of the iBox and its use for studying grasping strategies in healthy and hemiparetic patients and (c) the study by Hussain et al. 33 presents the use of iKey for assessing fine manipulation in patients with stroke. Here, we briefly describe three of the intelligent objects, namely iBox, iJar and iPen. The iBox is currently used as part of a UL assessment protocol using the SITAR. The iJar and iPen are currently not part of any training or assessment studies with the SITAR. However, we present their design here as they are additional objects that will become part of the SITAR ecosystem for future training and assessment studies.

iBox

The iBox is an object designed for accurately measuring and analysing grasping strategies during manipulation tasks.32 It comes in the form of a cuboid (see Figure 1(d)) with dimensions

iJar

The iJar is a tool for measuring hand coordination during an asymmetric bimanual task similar to unscrewing the lid of a jar. It consists of a stabilising handle that measures the grasp force (up to 20 N) during a cylindrical grip and can be grasped with either hand (see Figure 1(b)). This is connected to a second rotating handle through a torsional spring mechanism with an off-centred, bidirectional force sensor, enabling torque (or moment) to be measured during rotation. Two rotational springs are connected in series, enabling (a) bidirectional movement to be performed, (b) removal of any play in the system due to each spring pre-straining the other and (c) the changing of the torque-extension profile by adjusting both the spring constants and the amount of pre-straining. Due to size and weight constraints, the second handle does not measure grip force but does allow for either a cylindrical (medium wrap) or a circular grasp shape depending on the orientation of the object within the hand. The iJar elicits different types of movement/interaction, including (a) coordinated activity from both hands, measured through the isometric grasp forces on the top and bottom parts of the object and (b) wrist movements (pronation/supination and/or flexion/extension) measured from the rotation of the top and bottom parts of the object. This measured interaction will be analysed to infer specifics about the bimanual motor behaviour. As with the iBox, a MIMATE is used for data collection and measures translational accelerations, rotational velocities and orientations during manipulation, along with values associated with the grip force and torque measurements. The dimensions of the current iJar design are approximately 220 × 60 mm with a weight of

iPen

Handwriting is an essential skill which, beyond its utilitarian purposes, offers an opportunity to train the entire UL. For patients with high-level stroke, training with a writing system is a useful opportunity to exercise meaningful and challenging motor skills. The intelligent pen (iPen) was conceived to enable these training opportunities. The iPen, shaped like a thick whiteboard marker, can measure interactive forces and inertial data during writing (see Figure 1(c)). Three 3D printed semi-cylindrical shells (with 36-mm outer diameter, 3-mm thickness, subtending 115°) are linked to a core, each via a single-axis load cell (SMD2551-002 miniature beam load cells, Strain Measurement Devices, Bury St Edmunds, UK) to measure grip force. The core serves as the mounting point for the load cells and, by extension, the grip plates. The wire conduit atop the core provides a convenient and axially centred position for the IMU (Analog Devices ADXL345, InvenSense ITG-3200 and a Honeywell HMC5843), which is secured with a nylon screw. The writing tip uses a button-type axial compression load cell (FC22 load cell, Measurement Specialties) and a floating stylus point to measure contact force with a table or surface.

Results

SITAR for UL assessment

SITAR is an ideal platform to carry out quantitative task-oriented assessment of the UL in a more natural manner compared to conventional modes of quantitative assessment. This section will illustrate some of the possibilities offered by the SITAR, in the context of an ongoing, multicentre, assessment study. Ethical approval for the study was granted by the Proportionate Review Sub-committee of the London Dulwich Ethics Committee (REC reference: 11/LO/1818; IRAS project ID: 88134). Here, we only present preliminary results of selected tasks to illustrate the assessment possibilities of the SITAR. Participants provided informed consent prior to beginning the experiment.

Inclusion and exclusion criteria

Patients with stroke, of age greater than 18 years, with UL impairment who are able to initiate a forward reach (grade 2 on Medical Research Council (MRC) at shoulder and elbow) and cognitively able to understand and concentrate adequately for performing the task were included in the study. On the other hand, patients with no UL deficit following stroke or with severe comorbidity including severe osteoarthritis, rheumatoid arthritis, significant UL trauma (e.g. fracture) or peripheral neuropathy were excluded from the study. People with severe neglect (star cancellation test and line bisection test) or cognitive impairment (Mini Mental State Examination) were also excluded.

Participants

Demographics of the participating patients in the assessment and usability studies.

FMA: Fugl–Meyer assessment.

Procedure

Participants were seated on a chair fitted with a back support in front of the SITAR table. The participant’s feet were flat on the floor, with the hips and knees flexed at approximately 90°. We present three important sensorimotor abilities assessed through this protocol: workspace estimate, pick-and-place and tactile resolution. The following subsections present the details of how these abilities were assessed along with the preliminary results from patients with stroke and healthy subjects.

Workspace estimate

To capture their workspace, participants are seated in front of the SITAR table and asked to reach as far as possible in five different directions, at 0°, 45°, 90°, 135° and 180° (with 90° representing the forward direction). In each trial, the subject starts from the resting position (bee-hive shown in Figure 4(a)) on the tabletop and tries to reach the maximum distance possible along the green patch of grass displayed. Three trials are recorded in each direction, with and without trunk restraint, to assess the difference between non-compensatory and compensatory range of motion, respectively.

Workspace assessment: (a) Visuals of the Bee game presenting five movement options away from the body. Subjects were asked to reach as far as possible on the displayed green paths. (b) Polar plots show a typical decrease in range of motion with functional impairment.

Figure 4 shows the normalised reaching distance of two representative participants with stroke (subject-1: age = 60 years, Fugl–Meyer assessment (FMA) = 10; subject-2: age = 52 years, FMA = 42). Here, the normalised reaching distance is defined as the displacement from the start (bee-hive) to the final position (farthest touch point on the grass patch from the bee-hive) in each direction divided by the length of the completely stretched arm. The arm length was measured from the acromion to the tip of the digitus medius. The results show differences in the average range of motion for different directions within the control population and the two chronic stroke survivors. Control participants had the highest average range of motion, while of the two stroke participants presented, the participant with the higher FMA had a larger workspace than the more severely impaired participant.
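The definition of the normalised reaching distance translates directly into a short computation. The sketch below assumes touch positions and the start position are given as (x, y) coordinates in the same length unit as the arm length; the function name and argument layout are illustrative.

```python
import math


def normalised_reach(start, touches, arm_length):
    """Normalised reaching distance for one direction.

    start      -- (x, y) of the resting position (the bee-hive)
    touches    -- (x, y) touch points recorded along the grass patch
    arm_length -- acromion to tip of the middle finger, same unit as positions

    Returns the distance from start to the farthest touch point divided by
    the arm length, so 1.0 corresponds to a reach of one full arm length.
    """
    farthest = max(math.dist(start, point) for point in touches)
    return farthest / arm_length
```

Normalising by arm length makes the measure comparable across participants of different stature, which is what allows the polar plots of patients and controls to be overlaid.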

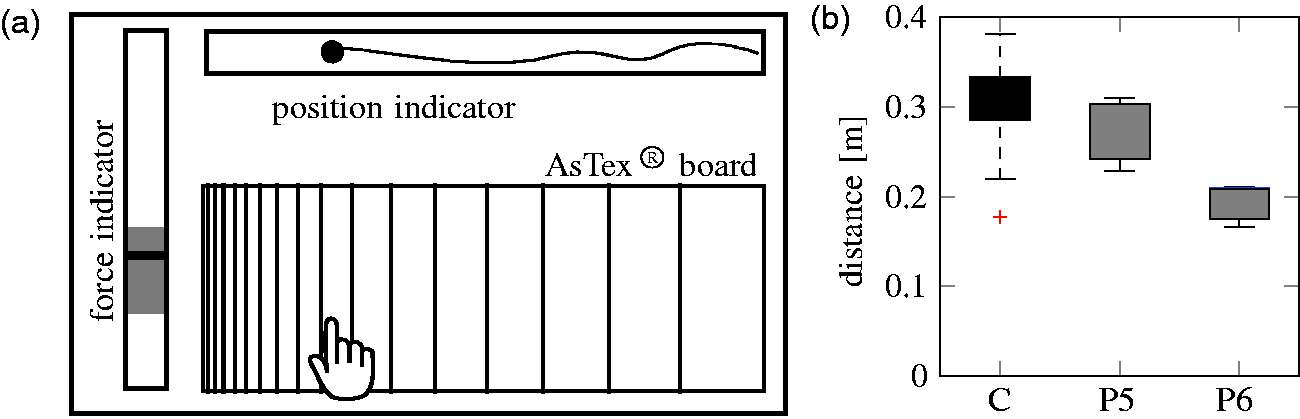

Pick and place

To assess the picking and placing of objects, subjects are seated in front of the table (without trunk restraint) and asked to reach for the iBox placed by the therapist. The iBox is initially positioned at 80% of the participant’s workspace as calculated during the workspace estimate assessment (without trunk restraint) described in the previous section. Subjects are asked to reach for the iBox, grasp it and then transfer it to the target location (Figure 5). The target is set away from the body’s midline at an angle of 45°, i.e. if the left arm is to be evaluated, the target position is located in the 135° direction as shown in Figure 5.

Pick-and-place task: (a) Schematic overview of the pick-and-place task alongside illustrative results showing (b) grasping time and (c) peak force of two stroke-affected patients (P3, P4) compared to healthy control subjects (C) (The red plus signs in the boxplots are the outliers in the data that fall beyond the boxplot’s whiskers).

The results of two preliminary metrics for the assessment of the performance of two representative participants with stroke (subject-3: age = 54 years, FMA = 35; subject-4: age = 52 years, FMA = 42) are shown in Figure 5. This figure shows that the grasping time, defined as the time between the first contact with the iBox and the time when it is lifted off the table, increases with impairment. Similarly, the peak force applied on the iBox during its transport to the target location also changes as a result of impairment.
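The two metrics can be illustrated with a minimal sketch that assumes synchronised time series of the grip force on the iBox and of the object load measured by the table. First contact is taken as the grip force exceeding a small threshold, lift-off as the table load dropping below a threshold, and the transport phase as ending when the load returns to the table. The thresholds, signal names and function name are hypothetical and are not taken from the study protocol.

```python
def pick_and_place_metrics(t, grip_force, table_load,
                           contact_threshold=0.2, load_threshold=0.5):
    """Return (grasping_time, peak_force) for one pick-and-place trial.

    t          -- sample times in seconds
    grip_force -- force applied on the iBox at each sample (N)
    table_load -- load of the iBox sensed by the table at each sample (N)

    Raises StopIteration if no contact or no lift-off is found.
    """
    n = len(t)
    # First contact: grip force rises above the contact threshold.
    contact = next(i for i in range(n) if grip_force[i] > contact_threshold)
    # Lift-off: the table no longer carries the object's weight.
    lift = next(i for i in range(contact, n) if table_load[i] < load_threshold)
    # End of transport: the load returns to the table (or the recording ends).
    place = next((i for i in range(lift + 1, n)
                  if table_load[i] >= load_threshold), n)
    grasping_time = t[lift] - t[contact]
    peak_force = max(grip_force[lift:place])
    return grasping_time, peak_force
```

Combining the object's own force sensing with the table's load sensing in this way is one example of how the modular components could complement each other during assessment.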

Tactile resolution

The sensory assessment of tactile resolution uses the AsTex® clinical tool for quick and accurate quantification of sensory impairment.34 The AsTex® is a rectangular plastic board for measuring edge detection capabilities, with parallel vertical ridges and grooves that logarithmically reduce in width and are printed on a specific test area laterally across the board. The errors that can occur due to changes in the force applied by the index finger on the board or the velocity with which the finger is moved 35 were overcome by placing the AsTex® board on the SITAR table, which can sense the touch force and position on the AsTex® board. To assess the tactile resolution, participants placed their index finger on the rough end of the AsTex® board, and a therapist slid the finger slowly along the board until the point where the surface started to feel smooth to the subject.34 The therapist had feedback of the force applied by the finger, which ensured a relatively constant force was maintained during the assessment (Figure 6). The position where patients perceive the board to be smooth provides a measure of their tactile resolution capability. Using the AsTex® board with the SITAR allows automatic logging of all the associated force and position information during the assessment.

Assessment of tactile resolution: (a) By placing the AsTex® board on the interactive table, one can control the force and measure the position; (b) shows where representative stroke survivors (P5, P6) and healthy control subjects (C) stop when they feel a smooth surface, which corresponds to their tactile resolution (The red plus signs in the boxplots are the outliers in the data that fall beyond the boxplot’s whiskers).

Figure 6 shows the results of the tactile resolution assessed with two representative stroke survivors (subject-5: age = 35 years, FMA = 23 and subject-6: age = 66 years, FMA = 21). Subjects were asked to wear a blindfold, and a therapist guided their index finger across the marked indentations from coarse to fine grooves while ensuring a nearly constant force level (by keeping track of on-screen visual feedback of the force). The process was repeated three times, with the results indicating a decrease in tactile resolution with impairment and healthy controls having the highest tactile resolution. The current protocol used only the rough-to-smooth direction for the finger to slide. It is possible that the results of the reverse direction (smooth to rough) might be different, and this could be assessed in future studies.

SITAR for upper-extremity therapy

Apart from being an assessment tool, the SITAR also allows one to implement interactive, engaging, task-oriented UL therapy. This section describes a pilot usability study based on two therapeutic games illustrated in Figure 7 for training arm movements and memory.

Screenshots of two therapeutic games that have been developed for the SITAR system, namely (a) the heap game and (b) the memory game.

Usability study

The usability of the SITAR with the two aforementioned adaptive therapy games for independent UL rehabilitation was evaluated in a pilot study at the Rehabilitation Institute of Christian Medical College (CMC) Vellore, India. This pilot clinical trial, approved by the Institutional Review Board of CMC Vellore (meeting held on 3 March 2015; IRB number: 9382), was conducted on patients with UL paresis resulting from stroke or brain injury.

Inclusion and exclusion criteria and participants

The inclusion criteria were the ability to (a) initiate a forward reach, (b) understand the therapy task and games and (c) give informed consent. Patients with no UL deficit or with comorbidity including severe osteoarthritis, rheumatoid arthritis, significant UL trauma (e.g. fracture), peripheral neuropathy, severe neglect or cognitive impairment were excluded from the study. The study recruited five patients with UL impairments to participate in the week-long pilot usability study; their biographical information is given in Table 2.

Intervention

Five patients underwent therapy for about 20–30 min per session with the SITAR for five therapy sessions on consecutive days except Sundays. The first session (lasting approximately 30 min) was used to familiarise the patient with the therapy setup, the SITAR and the games. Following this, the patients played the games by themselves without the constant presence of the therapist or the engineer in the room. A caregiver was allowed to stay with patients who required their presence. However, the caregiver was instructed not to interfere with the training. In each session, the patient played at least six trials of the heap game (HG) and four trials of the memory game (MG). Additional trials of these games were included in a session if the patient completed these games in under 20 min and requested more game time. Patients took short breaks between game trials. If a patient required any assistance during a therapy session, they could call for a therapist or an engineer present in the adjacent room.

Outcomes

Questionnaire and patient responses in the range {−2, −1, 0, 1, 2}.

SITAR: system for independent task-oriented assessment and rehabilitation.

Heap game

The HG is an adaptive computerised version of the classic ‘Pick-up sticks’ game. It is commonly used as a therapy game, especially for children with hemiplegia, hemiparesis or cognitive/behavioural disorders. The game presents a heap of pencils lying on top of each other, and the task for the patient is to clear all pencils sequentially in one minute. The pencils can be cleared one-by-one by touching the topmost pencil in the heap (shown in Figure 7(a)). The primary aim of this game is to encourage and train patients to reach out and touch the SITAR tabletop at different points in the workspace with the paretic limb. Additionally, playing the game requires good visual perception to identify the topmost pencil, and this cognitive ability will also be trained while playing the HG.

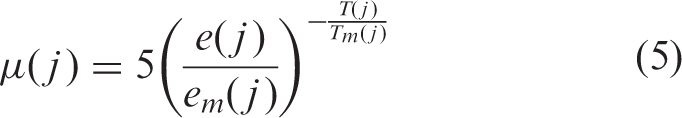

Motor recovery generally increases with training intensity.36,37 To engage a patient in training intensively, the therapeutic game should be challenging but achievable.38 Therefore, the difficulty of a rehabilitative game should adapt to the motor condition of each subject. In the HG, this is done by modifying the number of pencils to be cleared and the distribution of the pencils in the workspace for the next game trial according to the performance in previous trials. The number of pencils for the

The workspace, formed of discrete points described in polar coordinates

Memory game

The MG illustrated in Figure 7(b) was implemented to explore the possibility of using SITAR for cognitive training alongside arm rehabilitation. This game presents patients with pairs of distinct pictures placed at random locations in a rectangular grid. At the start of the game, the patient is shown the entire grid of pictures, for a small duration proportional to the size of the grid

The difficulty of the game increases with the number of image pairs to be identified. This number n is modified on a trial-by-trial basis depending on the performance history of the patient on the previous trials:

Usability study results

The usability of the SITAR and the two therapy games was analysed using (a) the patients’ responses to the questionnaire, (b) the record of the assistance requested by patients during the SITAR therapy and (c) the adaptation of the two therapy games to the patients’ performance. The summary of patient responses to the questionnaire in Table 3 shows a positive median score over the five patients for all questions. Four of the five patients had English literacy and were able to respond to the questionnaire without any assistance; for one of the patients, SB verbally translated the questionnaire into Hindi, which he can fluently read, write and speak.

In general, patients were satisfied with the SITAR training and found it easy to use the system. They also indicated an interest in using SITAR as part of their regular therapy sessions and also in recommending it to other patients with similar sensorimotor problems. Informal discussion with the patients indicated that they would like to have many more games than just the two games tested as part of this study. The lower score in MG relative to HG is probably due to the larger cognitive requirements of this game.

All patients except P11 required only intermittent assistance from the engineer over the course of the therapy. The engineer was with the patients to instruct them during the first session. In the following sessions, the presence of the engineer was required only intermittently. The most common reasons for the engineer to intervene during a therapy session were to change the game played by the patient or to motivate the patient to play (or sometimes a technical issue, e.g. a faulty load cell in the SITAR system).

Summary of the assistance requested by five patients during their therapy sessions.

AP: always present; E (encouragement and motivation): encouraging and motivating the patient to play and do well in the therapy games; GC (game change): the patient wanted to skip a particular game and move on to the next game, either because they were bored with the current game or because the difficulty level had become too high due to fatigue, etc.; TE (technical error): including issues with the calibration or with the patient resting his/her forearm on the tabletop.

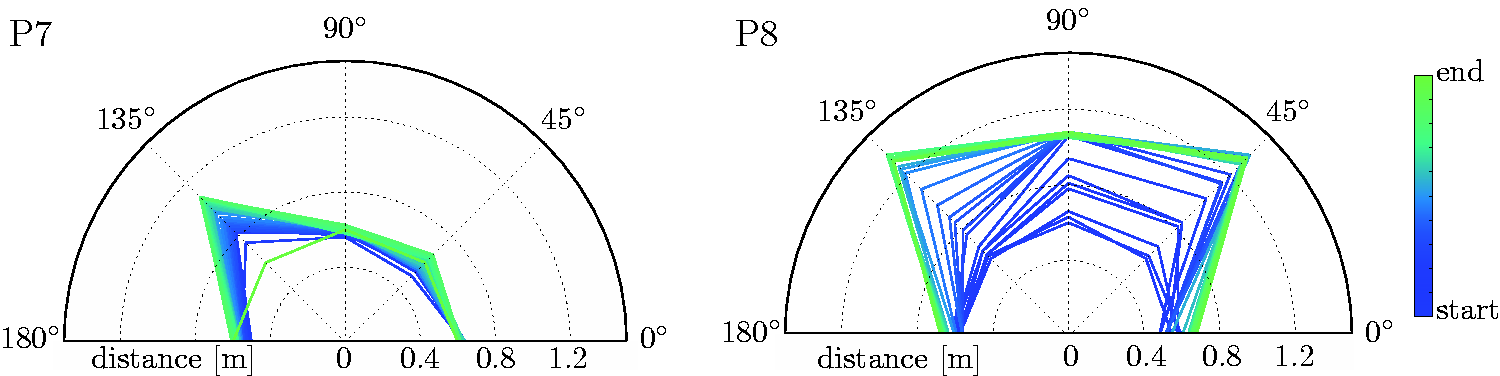

The two games adapted well to the abilities of each of the five patients who participated in the study. In HG, the workspace estimates starting from a default value of

Illustrative results showing the adaptation of the workspace over the course of a trial for two different patients (P7, P8) while playing the heap game.

The performance of a patient in MG was evaluated by the number of exposures taken to find a pair of images correctly; this performance was a measure of their visuospatial and working memory. When a patient completes a trial, the number of image pairs that were cleared in one, two, or more exposures can be determined. This is graphically represented in Figure 9(b) which shows the performance and progress of two representative subjects in the MG over the course of the study. All the games started with two pairs of images, with patient P10 (left plot) advancing to ultimately play a game with 21 image pairs, while patient P9 was playing the game with seven pairs by the end of his/her therapy sessions. In the stack plot shown, the colours represent the number of exposures, and their height indicates the number of image pairs that were identified with that many exposures. For example, in the sixth trial of MG for patient P10, there were eight pairs of images to be identified, out of which the patient identified three with a single exposure, four with two exposures and one with three exposures.
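The stack-plot bookkeeping described above can be sketched in a few lines of Python. This is only an illustration and not the code used in the SITAR: the function name `exposure_histogram` and the per-pair ordering of the example data are assumptions, and only the totals (three, four and one pairs for one, two and three exposures) come from the trial described in the text.

```python
from collections import Counter


def exposure_histogram(exposures_per_pair):
    """Count how many image pairs were identified with 1, 2, 3, ... exposures.

    exposures_per_pair: one integer per image pair, giving the number of
    exposures the patient needed before identifying that pair correctly.
    Returns a dict mapping number of exposures -> number of pairs.
    """
    counts = Counter(exposures_per_pair)
    return dict(sorted(counts.items()))


# Sixth MG trial of patient P10 as described in the text: eight pairs,
# three found with one exposure, four with two and one with three.
# The order of the entries is hypothetical; only the totals are from the text.
trial = [1, 1, 1, 2, 2, 2, 2, 3]
print(exposure_histogram(trial))  # {1: 3, 2: 4, 3: 1}
```

Each key of the returned dictionary corresponds to one colour in the stack plot, and its value to the height of that colour for the trial.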

Illustrative results showing the performance of two patients (P9, P10) while they played the memory game. In general, as patients progress, the game becomes more challenging.

Discussion

Innovative task-oriented rehabilitation

Three primary factors make the SITAR unique compared to the existing sensor-based systems for neurorehabilitation,8,9,11,12 namely the interactive tabletop, the collocation of visual and haptic workspaces and the modular components capable of sensing and reacting to a patient’s interaction.

The interactive tabletop can sense the position and force of a touch and is capable of providing visual and audio feedback. Apart from providing a workspace for carrying out different UL tasks, its sensing and feedback capabilities can make the patient’s interaction engaging and game-like. The usefulness of such an interactive tabletop for neurorehabilitation has prompted some of the recent commercial developments such as the ReTouch (RehabTronics Inc.) and the Myro (Tyromotion Ltd), with the latter developed based on the interactive table described in this article. The table can be used in conjunction with other devices such as a mobile arm support or a device that helps to open the hand, so that a larger proportion of patients can use it for training.

The second important feature is the collocation of the visual and haptic workspaces. This is an important feature for enabling natural interaction during training and its possible transfer to real-world tasks. Most existing sensor-based systems8,9,11 and robotic systems1,2 present an interface with dislocated visual and haptic workspaces. Patients interact and train with objects at the level of a tabletop while they receive visual feedback from a computer monitor that is placed in front of them. When training with the SITAR table or the intelligent objects, a patient’s visual attention remains in and around the workspace where they are physically interacting.

The third important feature of the SITAR is its fully modular architecture, which allows its different components to act with some level of autonomy when sensing and reacting to a patient’s interaction. This feature makes the system very versatile, enabling the different SITAR components to be used either separately or together and, thus, gives a clinician the freedom to implement different types of therapeutic programs. For example, a simple impairment-based therapy program for training grip strength can be implemented using just the iBox, which can also provide autonomous feedback to make the training interesting for the patients. Moreover, from a technical point of view, when two or more SITAR components are used together, they act as independent sources of information about a patient’s interaction with the system; these multiple sources can be fused to obtain more accurate information. For instance, short-duration arm reaching movements between two successive touches on the SITAR table can be reconstructed using information from an IMU worn on a subject’s wrist and the touch position data from the SITAR table. Whenever a subject touches the SITAR table, a zero-velocity update 39 can be carried out by incorporating the position information from the table to recalibrate the IMU and thus minimise integration drift. This design approach makes SITAR an ideal tool for quantifying natural interaction of a patient with the system.
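The fusion idea described above can be illustrated with a minimal Python sketch. It is not the SITAR implementation and makes several assumptions: the IMU acceleration is taken to be already gravity-compensated and expressed in the table frame, the hand is taken to be at rest at both touches, and the residual drift is removed with simple linear ramps. The function name `reconstruct_reach` and its parameters are hypothetical.

```python
import numpy as np


def reconstruct_reach(acc, dt, p_start, p_end):
    """Reconstruct a short reach between two successive table touches.

    acc     : (N, 2) gravity-compensated acceleration in the table frame
              (m/s^2), sampled every dt seconds from first to second touch.
    p_start : touch position reported by the table at the first touch (m).
    p_end   : touch position reported by the table at the second touch (m).
    Returns (pos, vel), each of shape (N, 2).
    """
    acc = np.asarray(acc, dtype=float)
    n = acc.shape[0]
    ramp = np.linspace(0.0, 1.0, n)[:, None]

    # Integrate acceleration to velocity (trapezoidal rule), starting at rest.
    vel = np.zeros_like(acc)
    vel[1:] = np.cumsum(0.5 * (acc[1:] + acc[:-1]) * dt, axis=0)

    # Zero-velocity update: the hand is assumed stationary at the second
    # touch, so any remaining end velocity is treated as drift and removed
    # as a linear ramp over the movement.
    vel -= ramp * vel[-1]

    # Integrate velocity to position, starting from the first touch point.
    pos = np.zeros_like(vel)
    pos[1:] = np.cumsum(0.5 * (vel[1:] + vel[:-1]) * dt, axis=0)
    pos += np.asarray(p_start, dtype=float)

    # Position update: spread the remaining end-point error linearly so the
    # trajectory ends exactly at the second touch reported by the table.
    pos += ramp * (np.asarray(p_end, dtype=float) - pos[-1])
    return pos, vel
```

Under these assumptions, a constant accelerometer bias produces a velocity error that grows linearly in time, which the linear ramp removes exactly; more realistic drift would call for a proper filter (for example a Kalman filter with the touches as measurements).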

The versatile architecture and the possibility of varied form factors make the SITAR an excellent candidate for both clinic- and home-based deployment and to train a variety of patients. A full set of components (the large SITAR table and all intelligent objects) would be ideal for a hospital-based setup. On the other hand, a smaller SITAR table, along with one or two selected objects can be used at patients’ homes. The SITAR would also be suitable for use with children although some of the objects would need to be miniaturised.

The current SITAR can be extended in the following ways. The current interactive table only detects the COP of the touch; thus, multi-touches cannot be detected directly. One option is to use technology that supports multi-touch, similar to Tyromotion’s Myro. Alternatively, the force-sensing capabilities of some of the intelligent objects can be used to resolve the ambiguity of multi-touches in an economical way. Furthermore, 3D vision technologies such as the Kinect, together with IMUs, can be used to monitor arm movements that do not involve interaction with an object or the table, as well as compensatory arm movements, thus greatly complementing the current system.

Clinical feasibility

The SITAR can be used for the assessment of a patient’s sensorimotor impairments and also their ability to perform complex sensorimotor tasks related to activities of daily living. Some of the previous work with the iKey 33 and the iBox 32 demonstrated how assessment protocols can be implemented, with the different SITAR components used individually. In this article, we presented preliminary data on the use of SITAR for the assessment of workspace with the interactive tabletop, pick-and-place of objects (with the iBox) and tactile assessment (with the AsTex® board), further illustrating some of the possibilities of SITAR as an assessment tool. The SITAR can be used to implement simple, quick and useful measures of sensorimotor ability, as was illustrated by the workspace estimate. It can be used to analyse complex sensorimotor tasks by breaking them down into simpler and specific sub-tasks, as was demonstrated by the pick-and-place task. Appropriate external tools can be easily interfaced with the SITAR to quantify existing measures of sensorimotor performance, as was illustrated with the AsTex® board. Other possible extensions include the use of the SITAR table for quantifying traditional box and block tests 40 or the Action Research Arm Test (ARAT), 41 by placing the specific test objects on the table, thus complementing the scores provided by the therapist with accurate quantitative (e.g. force and task timing) data. The SITAR provides a rich framework for supporting interactive strategies for neurorehabilitation of the UL. To optimally develop some of its features, we are currently focusing on extracting useful information from the large amounts of data generated by the system and identifying information with maximum clinical relevance.

Gamification of therapy is an important requirement for engaging patients in training, as higher motivation can help deliver an increased dosage of movement training to promote recovery. The results of the pilot usability study showed that patients enjoyed playing the two adaptive rehabilitation games implemented on the SITAR, as was reflected in their responses to the questionnaire. Patients were able to use the SITAR with little supervision or help over the course of the study. The record of the assistance required by the patients during therapy indicates that, in general, the assistance required decreased over the therapy sessions as patients learned to use the system better. Apart from a technical issue with the SITAR table, there were no major issues that hindered patients from using the system on their own. However, there are two important aspects of independent training that the current system does not address sufficiently: (a) The current system falls significantly short in its capacity for social interaction to encourage and coach patients. This was an issue with one of the patients in the usability study, who required the therapist in one of the sessions to keep him/her engaged and motivated to train; (b) The absence of a therapist can lead to patients using undesirable compensatory strategies to play the therapy games, which can have deleterious long-term effects. Addressing these aspects will require further work and will be part of our future activities with the SITAR.

The two games tested illustrate how the SITAR can be used to train arm-reaching movements along with other cognitive abilities such as visual perception and visuospatial memory. However, based on feedback from patients and clinicians, we are currently working on developing a larger set of games to ensure longer engagement of patients during this therapy. Furthermore, tasks involving some of the intelligent objects in the assessment study can be used for implementing both impairment-based training (e.g. training with the iBox for improving grip strength control) and ToT of activities of daily living. In this context, the use of a mobile arm support and a device to assist hand opening/closing will enable lower baseline patients to engage with the SITAR system. In addition to training UL tasks, it is also important to monitor and discourage compensatory trunk movements, which were observed in patients participating in the usability study. Trunk restraints during training have been found to have a moderate effect in reducing sensorimotor impairments of the upper extremity as measured by the FMA 42 and thus would be a useful addition to the SITAR system. We note that the data presented here serve only to illustrate the system capabilities and do not represent a complete study.

Conclusion

This article introduced the SITAR – a novel concept for an interactive UL workstation for task-oriented neurorehabilitation. It presented the details of the current realisation of the SITAR, along with preliminary data demonstrating the capability of the system for assessment and rehabilitation in a naturalistic context. The SITAR is a versatile tool that can be used to implement a range of therapeutic exercises for different types of patients.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the European Commission grants EU-FP7 HUMOUR (ICT 231554), CONTEST (ITN 317488), EU-H2020 COGIMON (ICT 644727), COST ACTION TD1006 European Network on Robotics for NeuroRehabilitation and by a UK-UKIERI grant between Imperial and CMC Vellore.

Guarantor

EB

Contributorship

AH, SB, NR, JK, NJ, MM and EB designed the SITAR. The assessment study was conceived and carried out by AH, SB, SG and EB. The rehabilitation usability study was conceived and carried out by SB and AD. The manuscript was written by AH, SB, AD, MM and EB, with all authors reading and approving the final manuscript. AH and SB contributed equally to this study.