Abstract

The successful implementation of digital health and artificial intelligence (AI) innovations in the National Health Service (NHS) requires more than technical development. Navigating regulation, generating decision-grade evidence, and meeting clinical safety, information-governance, and interoperability standards are critical steps that frequently delay or prevent adoption. This article presents a practical, implementation-focused roadmap designed to help clinicians, innovators, and healthcare leaders translate policy requirements into real-world NHS deployment. Drawing on guidance from the Medicines and Healthcare products Regulatory Agency (MHRA), the National Institute for Health and Care Excellence (NICE), and NHS Digital, we outline an eight-step pathway covering medical-device classification, value-proposition development, intended-purpose definition, regulatory approval, evidence generation, algorithmic fairness and generalisability, interoperability and information governance, and post-market surveillance. Unlike high-level digital health frameworks, the roadmap specifies minimum artefacts, typical ownership and sign-off responsibilities, and decision points aligned with NHS procurement and clinical governance processes. The roadmap is illustrated through a detailed case study of a UK-deployed AI stroke imaging decision-support software. Its progression from academic development to multi-site NHS deployment demonstrates how early regulatory engagement, robust real-world evaluation, and sustained clinical collaboration can support safe scaling and measurable service improvements, including increased access to reperfusion therapies and reduced inter-hospital transfer times. By distilling complex regulatory and evidence requirements into executable steps, this guide offers a clear route from idea to adoption. 
It emphasises that aligning regulation, evidence generation, bias mitigation, and interoperability from the outset is essential to sustainable digital health integration within the NHS.

Keywords

1. Why read this guide?

Digital health and AI tools promise faster diagnosis, more personalised care and lighter clinical workloads, but the NHS rightly insists on evidence of safety, effectiveness and sound data governance before innovation reaches patients.1–4 This concise guide keeps every critical regulation in mind but tells the story in plain language so you can move from idea to deployment without wading through pages of legal jargon.

2. Evidence first

Well-designed digital programmes consistently cut costs and improve care quality. 5 National reviews still spotlight two stubborn gaps: many staff lack digital confidence, and too few products land with robust real-world evidence.6–8 If you plan training and evidence collection from the outset, the rest of the journey becomes easier.

3. An eight-step roadmap

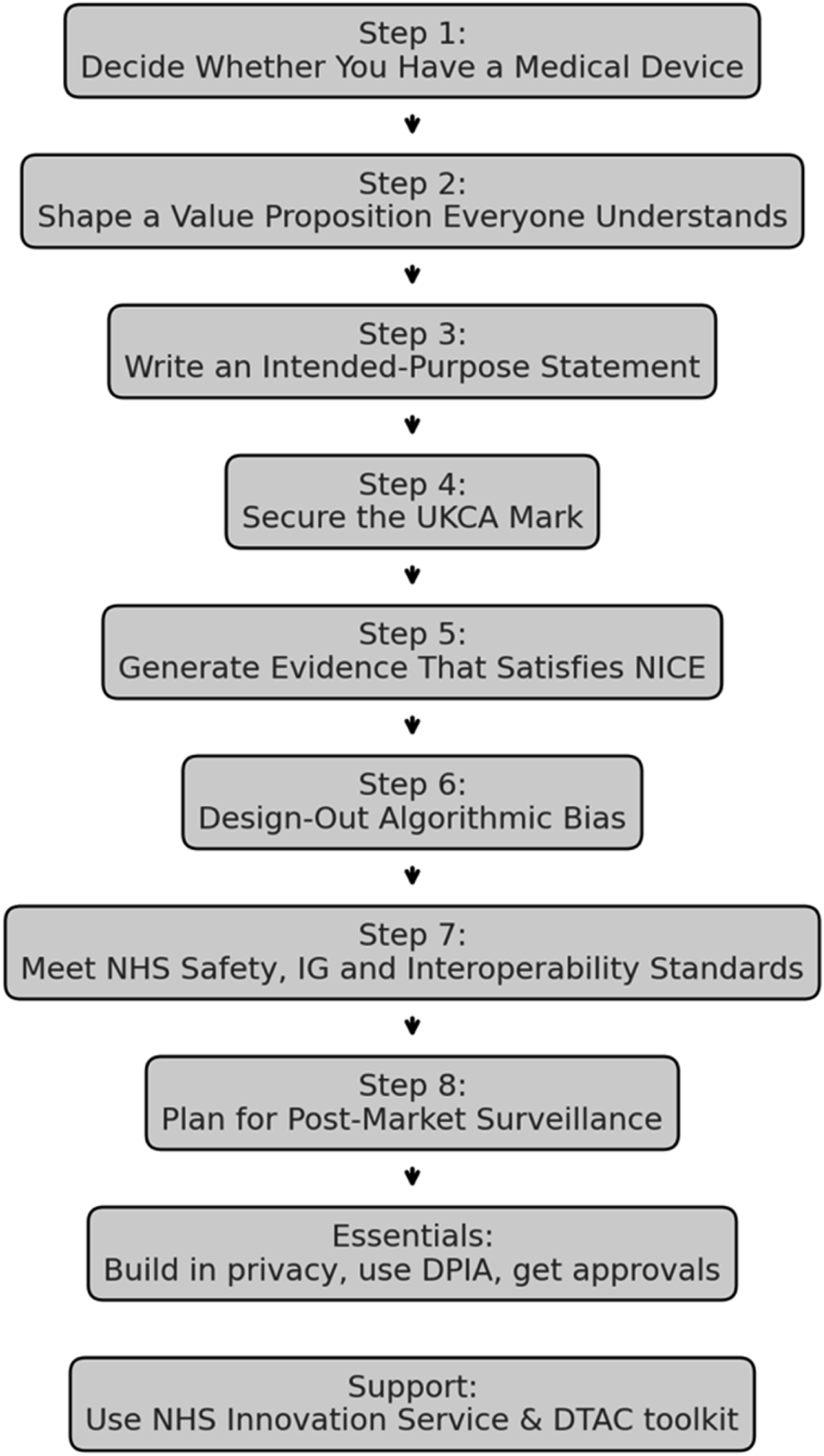

These steps weave together MHRA, NICE and NHS Digital guidance with lessons from real NHS implementations of digital therapeutics, community-based diagnostics, AI-enabled rehabilitation support, and imaging decision-support software. 1

The eight-step process is illustrated schematically in Figure 1. Unlike high-level digital health frameworks, this roadmap is designed to be executable: it specifies minimum artefacts, typical ownership/sign-off responsibilities, and decision points aligned to NHS procurement, deployment, and post-market monitoring.

Figure 1. Flowchart to illustrate steps required for approval of digital health innovation.

3.1. Step 1: Decide whether you have a medical device

If your software influences a clinical decision, the UK Medical Devices Regulations 2002 (UK MDR 2002) apply. 9 Classify it honestly (Class I–III); under-classifying only postpones pain. 10

The regulation groups devices by risk, from Class I (low risk) to Class III (highest risk). Correct classification is critical: under-classifying to “keep things simple” may speed early development, but regulators will force a costly re-route later. Annex IX of Directive 93/42/EEC lays out the decision rules, while MHRA “borderline” guidance helps where categories overlap with medicines, cosmetics or wellness products.10,11

A NICE-assessed digital CBT-I platform for insomnia illustrates how correct Class I classification can streamline the regulatory pathway. 12 If borderline, document the rationale and seek MHRA borderline advice early to avoid reclassification late in procurement.

3.2. Step 2: Shape a value proposition everyone understands

Clinicians care about clinical benefit, commissioners about cost or time savings, and patients about outcomes. Frame your claims against the NICE Evidence Standards Framework (ESF) to satisfy all three audiences. 4

A community-based cardiovascular risk screening platform illustrates how clearly articulated clinical and service value can support adoption, including earlier identification of cardiovascular risk and potential reduction in reliance on traditional GP-led testing pathways. 13

The

3.3. Step 3: Write an intended-purpose statement

Regulators expect a concise statement that specifies

3.4. Step 4: Secure the UKCA mark

Once you have confirmed the risk class, the hard regulatory work begins. Every device that reaches NHS patients must comply with 1. 2. 3.

3.4.1. One last checkpoint: Will you need a clinical investigation?

If your device cannot rely on published data or evidence from an equivalent UK-approved comparator, you must generate fresh clinical data through a prospective

Register the project on the

3.5. Step 5: Generate evidence that satisfies NICE

NICE advice hinges on two questions:

Once your technical file is in hand, turn your attention to the

3.5.1. How topics reach NICE

Digital, AI and other medical technologies flow into NICE through the HealthTech Programme. A prioritisation board short-lists topics that align with national health priorities; eligible products usually have a UKCA (or CE) mark, sit in Tier C of the Evidence Standards Framework (ESF), and already carry preliminary data to support their value proposition.

Routes for NICE assessment of medical technologies.

Registration on the NHS Innovation Service portal is the quickest way to check eligibility and flag a new topic for consideration.

3.5.2. Design studies that answer NICE’s questions

Remember the distinction between regulatory and HTA evidence: • •

Practical implications:
• Recruit populations that look like real NHS users.
• Pick outcomes that matter to patients (symptom relief, function, survival), not just process metrics.
• Follow up for long enough to capture downstream benefits or harms.
• Pre-register protocols and use accepted risk-of-bias tools.

For digital tools with modest clinical risk but large workflow impact, well-designed service evaluations can suffice. NICE says so explicitly in its

Economic evidence must match the clinical story: • •

3.5.3. Use NICE advice to de-risk the journey

If you are unsure whether your draft protocol will satisfy the committee, book an appointment with

3.5.4. Pack the perfect submission

By submission day aim to hand NICE a dossier that: 1. Demonstrates 2. Confirms 3. Quantifies

Digital CBT provides a model. The digital cognitive behavioural therapy for insomnia (CBT-I) platform mentioned above secured a positive Medtech Innovation Briefing by publishing peer-reviewed data showing equivalence to face-to-face therapy and an acceptable cost-effectiveness ratio. 12
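As a reminder of what a cost-effectiveness ratio quantifies, the conventional incremental cost-effectiveness ratio (ICER) can be written as below. This assumes the ratio referred to above is the standard ICER; the symbols are illustrative notation rather than anything taken from the cited briefing: C denotes mean cost per patient and E denotes mean health effect per patient (often measured in quality-adjusted life years), for the new technology and its comparator.

```latex
\[
\mathrm{ICER} \;=\; \frac{C_{\text{new}} - C_{\text{comparator}}}{E_{\text{new}} - E_{\text{comparator}}}
\]
```

A lower ICER means less additional spending per unit of additional health gained. When the new technology is both cheaper and at least as effective, it is usually described as dominant and the ratio itself is not reported.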

3.6. Step 6: Design-out algorithmic bias and plan for drift

Adopters will judge an AI tool’s trustworthiness primarily by the quality, representativeness and generalisability of the data used for training, testing and validation. Data should reflect: (i) the target population, (ii) the intended clinical setting and workflow, and (iii) technical quality (e.g., imaging acquisition quality and device/software heterogeneity). Inadequate representation (including “marginal cases”) risks unstable models and uncertain performance estimates in under-represented groups.
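One way to make representativeness concrete is to compare the subgroup mix of a development dataset with the mix expected in the target population. The sketch below is illustrative only: the function name `representation_gaps`, the age bands, the reference shares and the 0.8 ratio threshold are all hypothetical choices, not values prescribed by MHRA, NICE or the NHS.

```python
from collections import Counter


def representation_gaps(dataset_groups, reference_shares, min_ratio=0.8):
    """Compare observed subgroup shares in a dataset with reference shares.

    dataset_groups: one subgroup label per record in the dataset.
    reference_shares: dict mapping subgroup label to its expected share
        in the target population (values should sum to roughly 1.0).
    min_ratio: hypothetical threshold; a subgroup is flagged when
        observed_share / reference_share falls below it.
    """
    counts = Counter(dataset_groups)
    total = sum(counts.values())
    gaps = {}
    for group, ref_share in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        ratio = observed / ref_share if ref_share else None
        gaps[group] = {
            "observed_share": observed,
            "reference_share": ref_share,
            "ratio": ratio,
            "under_represented": ratio is not None and ratio < min_ratio,
        }
    return gaps


# Made-up example: a training set skewed towards younger patients.
dataset_groups = ["18-64"] * 70 + ["65-79"] * 25 + ["80+"] * 5
reference_shares = {"18-64": 0.40, "65-79": 0.35, "80+": 0.25}
gaps = representation_gaps(dataset_groups, reference_shares)

for group, row in gaps.items():
    print(group, round(row["ratio"], 2), row["under_represented"])
```

A check of this kind only covers sampling. It says nothing about measurement or label bias, so it complements subgroup performance reporting rather than replacing it.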

3.6.1. Minimum artefacts (attach to the technical file/evidence pack)

1. 2. 3.

3.6.2. Minimum metrics to report (tailor to task)

Report discrimination and error within each contextualised group (e.g., AUROC/AUPRC for classification; sensitivity/specificity; PPV/NPV; calibration slope/intercept; and clinically relevant error trade-offs). Where disparities exist, document the likely mechanism (sampling, measurement, aggregation, label bias), the mitigation applied, and residual risk accepted by governance. 4. 5.
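The per-group reporting described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: the record layout `(group, y_true, score)`, the function names, the 0.5 decision threshold and the toy data are hypothetical, and calibration slope/intercept are left out to keep the example short.

```python
from collections import defaultdict


def safe_div(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def auroc(y_true, scores):
    """AUROC as the probability that a random positive outranks a random
    negative, counting ties as 0.5. Returns None if a class is absent."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        return None
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def subgroup_report(records, threshold=0.5):
    """records: iterable of (group, y_true, score) with y_true in {0, 1}."""
    by_group = defaultdict(list)
    for group, y, score in records:
        by_group[group].append((y, score))

    report = {}
    for group, rows in by_group.items():
        y_true = [y for y, _ in rows]
        scores = [s for _, s in rows]
        y_pred = [1 if s >= threshold else 0 for s in scores]
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
        tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
        report[group] = {
            "n": len(rows),
            "sensitivity": safe_div(tp, tp + fn),
            "specificity": safe_div(tn, tn + fp),
            "ppv": safe_div(tp, tp + fp),
            "npv": safe_div(tn, tn + fn),
            "auroc": auroc(y_true, scores),
        }
    return report


# Toy data for two hypothetical sites (not real results).
records = [
    ("site_A", 1, 0.9), ("site_A", 1, 0.7), ("site_A", 0, 0.2), ("site_A", 0, 0.6),
    ("site_B", 1, 0.4), ("site_B", 1, 0.8), ("site_B", 0, 0.1), ("site_B", 0, 0.3),
]
report = subgroup_report(records)
for group, metrics in report.items():
    print(group, metrics)
```

In the toy data both sites have the same AUROC, yet sensitivity differs at the fixed threshold. That is the kind of disparity the text asks teams to explain and mitigate. Real subgroups will usually need confidence intervals as well, because small groups give unstable estimates.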

Minimum artefacts and typical ownership/sign-off.

3.7. Step 7: Meet NHS safety, IG and interoperability standards

Long before an NHS Trust plugs your app into its network, its clinical-safety lead will open two documents: • •

3.7.1. What DCB0129 actually demands

As the developer you must put in place a formal • appointing named clinical-safety officers and documenting their governance arrangements; • running structured hazard analyses throughout the build, not just at the end; • recording evidence that team members have the right safety training and competence.

Risk management does not stop at release. The standard expects you to plan for

NHS Digital provides templates that map directly to the standard; use them. If you cannot show a complete, traceable safety case, an adopter simply cannot switch you on—DCB0160 forbids it.

3.7.2. The DTAC: Baseline passport to procurement

Clinical-risk governance is only one slice of the approval pie. During procurement most NHS organisations now apply the

Meeting DTAC is not optional; it is the new baseline. The form will ask for: • proof of • a data-protection-impact assessment • evidence that you exchange data via open standards such as • accessibility testing against WCAG and use of the NHS Design System for consistent look-and-feel.

Because DTAC echoes legal obligations you already face—UK GDPR, medical-device law, cyber-security essentials—building its requirements into your design phase saves painful retro-fits later.

3.7.3. Medical devices add ISO 14971 to the mix

If the product is a medical device, the safety bar rises again. You must also comply with

3.7.4. Best-practice accelerators

• • •

3.8. Step 8: Plan for post-market surveillance

Deployment is the start of a new phase, not a finish line. The NHS expects ongoing surveillance: track adverse events, monitor key performance indicators and collect user feedback. Push software updates promptly when safety signals emerge and remember that high-risk services may need Care Quality Commission registration. A transparent improvement cycle sustains patient safety and clinician trust. 20
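Monitoring a key performance indicator can be as simple as comparing each reporting period against control limits derived from a validated baseline. The sketch below uses a statistical process control p-chart with three-sigma limits. This is one common approach chosen for illustration, not a method the guidance mandates, and the function names, baseline rate and weekly counts are hypothetical.

```python
import math


def p_chart_limits(baseline_rate, n, sigmas=3.0):
    """Lower and upper control limits for a proportion observed over n cases."""
    se = math.sqrt(baseline_rate * (1 - baseline_rate) / n)
    lower = max(0.0, baseline_rate - sigmas * se)
    upper = min(1.0, baseline_rate + sigmas * se)
    return lower, upper


def flag_periods(baseline_rate, periods, sigmas=3.0):
    """Return periods whose observed rate falls outside the control limits.

    periods: list of (label, successes, total), e.g. correctly flagged
    cases out of all confirmed cases in that period.
    """
    flagged = []
    for label, successes, total in periods:
        if total == 0:
            continue  # nothing to assess in an empty period
        lower, upper = p_chart_limits(baseline_rate, total, sigmas)
        rate = successes / total
        if rate < lower or rate > upper:
            flagged.append((label, rate, lower, upper))
    return flagged


# Made-up example: baseline sensitivity of 0.90 from validation,
# followed by three weekly audits of 100 confirmed cases each.
periods = [("week_1", 92, 100), ("week_2", 88, 100), ("week_3", 78, 100)]
flagged = flag_periods(0.90, periods)

for label, rate, lower, upper in flagged:
    print(f"{label}: rate={rate:.2f} outside [{lower:.2f}, {upper:.2f}]")
```

A flagged period is a prompt for investigation through the clinical-safety process, not proof of model drift. Case mix, scanner changes or labelling delays can produce the same signal.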

4. Data & information-governance essentials

Apply “data protection by design” at every phase. Prototype with anonymous or synthetic data where possible; where personal data is essential, complete a DPIA and document Article 6 and 9 lawful bases.21–23 Research studies or un-marked device investigations need MHRA “no-objection” and HRA/REC clearance via IRAS.

5. Getting help faster

The NHS Innovation Service portal offers one-door sign-posting to regulatory support and NICE topic selection, and even links to MHRA advice on tricky borderline-device questions.11,15 Re-using components from the NHS Digital Service Manual can instantly satisfy parts of DTAC.

6. In a nutshell

Start with classification, value proposition and intended purpose; build your technical file alongside your code; collect evidence that satisfies both UKCA and NICE; design for fairness, interoperability and solid IG up-front; and treat deployment as day one of monitoring and improvement. Follow this path and your innovation will reach clinicians and patients more quickly and stay there.

7. Case study – UK-deployed AI stroke imaging decision-support software

The software is an AI decision-support platform that automatically analyses CT/MRI brain scans to identify stroke patients who could benefit from reperfusion therapies such as thrombectomy. Deployed across multiple NHS trusts, it illustrates the roadmap in action. • • • • • • • • •

7.1. Behind-the-scenes insights

The software spun out of the University of Oxford in 2012, but in its first two years the founders discovered that capital and talent were scarcer than algorithms. A 2014 £1.35 million seed round from the Oxford Innovation Fund, topped up by Innovate UK grants, finally let the team grow beyond a handful of academics. 27

Turning research code into a clinical product brought two early pitfalls. First, robust evidence takes longer than code: the company had to generate multiple peer-reviewed validation studies before stroke physicians were comfortable relying on AI decisions. 26 Second, regulations kept moving: the software secured a Class IIa CE-mark in 2017 but still had to re-tool documentation for the tougher EU MDR and prepare for UKCA marking, all while pursuing FDA clearances for other markets.

Rolling the software out to NHS trusts surfaced very human hurdles. Integration teams found that “plug-and-play” often meant weeks of firewall tweaks and PACS scripting, so the company now insists on an up-front responsibilities matrix with each local IT department. Clinician buy-in followed a familiar curve: initial scepticism gave way to advocacy once real cases showed faster, safer transfers for thrombectomy. Identifying a local clinical champion helped, but programmes learned not to rely on one enthusiast; shared ownership and regular cross-site user forums sustain momentum. 28

With clinical, economic and regulatory boxes ticked, the focus has shifted to scaling: an NHS-led business-case template and the AI in Health & Care Award funding now help new trusts procure the software under standard tariffs. 29

7.2. Key lessons

• Secure milestone-linked funding early; AI validation is a multi-year marathon.
• Publish evidence continuously; credibility trumps hype.
• Treat regulatory engagement as iterative, not a one-off hurdle.
• Agree an IT responsibilities matrix before installation; it halves deployment time.
• Cultivate multiple clinical champions and schedule refresher training to embed use.

8. Limitations and future work

This roadmap is intentionally UK-centred, reflecting current MHRA, NICE, and NHS Digital requirements. While the principles generalise, artefacts and approval routes vary internationally. The guide focuses on software-led digital health (including AI decision support) rather than hardware devices or consumer wellness applications, and it is not a substitute for formal regulatory, legal, or information-governance advice for higher-risk deployments. Future work should produce openly accessible templates for key artefacts (e.g., intended-purpose statements, DCB0129 safety cases, DTAC evidence packs, and post-market surveillance plans), specialty-specific extensions, and prospective evaluation of roadmap-guided implementations compared with ad hoc approaches.

9. Conclusion

Digital health adoption in the NHS rarely fails because of weak algorithms; it fails when regulation, evidence generation, information governance, and workflow integration are treated as late-stage hurdles rather than design requirements. This roadmap translates MHRA, NICE, and NHS Digital expectations into executable steps, helping teams define intended use, build the correct regulatory artefacts, generate decision-grade evidence, and plan monitoring from day one. The overarching principle is practical: align regulation, evidence, and clinical workflow continuously through the product lifecycle to achieve safe scaling rather than perpetual piloting.

Footnotes

Acknowledgements

The authors thank colleagues in digital health regulation, NHS clinical governance, and translational research environments for informal discussions that informed the development of this roadmap.

Ethical considerations

This manuscript does not involve primary data collection, human participants, or patient-level data analysis. Therefore, ethical approval and participant consent were not required.

Author contributions

AM (Anurup Mukherjee) conceptualised the manuscript, conducted the literature synthesis, developed the implementation roadmap, and drafted the manuscript.

SS (Sukhi Shergill) provided senior academic oversight, contributed to critical review of the manuscript, and advised on research positioning and regulatory framing.

CA (Chee Ang) contributed expertise in digital systems and human–computer interaction and critically reviewed the manuscript with respect to implementation and technical governance considerations.

All authors reviewed, revised, and approved the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

No datasets were generated or analysed during the current study.

AI declaration

Generative AI tools were used to assist with language refinement and structural editing. All content was critically reviewed and approved by the authors.

Guarantor

Anurup Mukherjee accepts full responsibility for the integrity of the manuscript and the accuracy of the content.