Abstract

Objective

Short-video platforms have become major sources of rheumatoid arthritis (RA) information for the public. The quality and reliability of such content remain uncertain.

Methods

We conducted a cross-sectional study in October 2025. Using the Chinese keyword for “rheumatoid arthritis,” we screened top-ranked videos on TikTok and Bilibili and included eligible, non-advertising videos that addressed RA. To reduce personalization, searches were performed with newly registered accounts in private-browsing mode. Two trained raters assessed overall quality using the Global Quality Score and reliability using a modified DISCERN instrument. Uploaders were classified as rheumatology professionals, other medical professionals, patients, or science communicators. Inter-rater agreement was quantified using Cohen's kappa. Nonparametric tests compared platforms and sources. Spearman correlations examined associations between engagement metrics (likes, collects, comments, shares), video duration, and quality scores.

Results

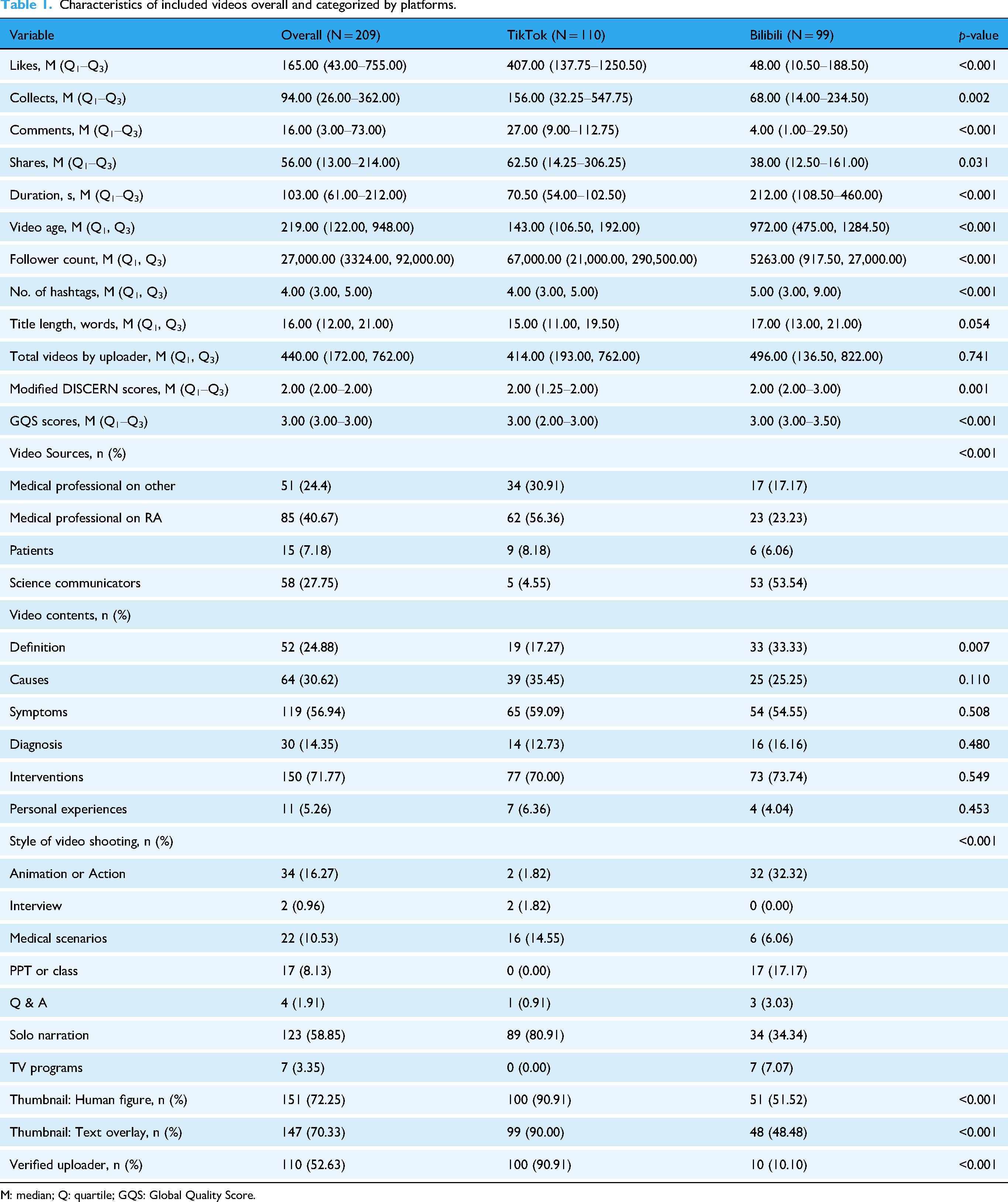

We included 209 videos (TikTok n = 110; Bilibili n = 99). Engagement was higher on TikTok (median likes, collects, comments, shares all greater; all p < 0.05), whereas Bilibili videos were longer (median 212 s vs 70.5 s; p < 0.001) and achieved higher Global Quality Score and modified DISCERN scores (both p ≤ 0.001). Uploader distributions differed by platform: TikTok was dominated by medical professionals; Bilibili had more science communicators. Video source was strongly associated with quality and reliability: rheumatology specialists and other medical professionals scored highest; patient-generated content scored lowest. Engagement metrics were strongly inter-correlated but showed no meaningful correlation with Global Quality Score or modified DISCERN. Longer duration correlated positively with quality.

Conclusion

RA-related short videos on TikTok and Bilibili show variable quality and reliability. Bilibili tends to provide higher-quality and more reliable information, and clinician-produced content scores highest, whereas engagement does not indicate trustworthiness. Platform-level quality signals and greater clinician participation may improve the reliability of RA information online.

Keywords

Introduction

Rheumatoid arthritis (RA) is a chronic, systemic autoimmune disease marked by persistent synovitis and progressive destruction of cartilage and bone, which may result in joint deformity and functional loss. 1 Its global prevalence is approximately 0.5–1%, with regional and ethnic variation and clear sex and age differences. 2 RA may occur at any age but is most prevalent between the ages of 30 and 50 years, and women are two to three times as likely to be affected as men. Both genetic predisposition and environmental factors contribute to its development. 3 The effects of RA are not limited to the joints: it is a major cause of disability and work loss and is associated with several extra-articular comorbidities, such as elevated cardiovascular risk, pulmonary involvement, osteoporosis, and depression. 1 These complications substantially impair quality of life, mental health, and socioeconomic outcomes. Improving public awareness, promoting timely diagnosis and treatment, and ensuring guideline-based care are therefore fundamental public health priorities.

The digital health era has transformed how people access medical information. Approximately half of the adult population consults the internet for health-related information. 4 Short-video platforms are increasingly used for rapid, interactive health communication, but videos are not purely verbal and often contain written information, which raises health literacy and readability concerns. Guidance from major organizations has emphasized that patient education materials should be written at approximately a sixth-grade reading level to maximize comprehension. 5 In this evolving information environment, TikTok and Bilibili are emerging as key sources of health information for the public because of their easy-to-use format, rapid dissemination, and high interactivity.6,7 These platforms lower barriers to medical knowledge and offer convenience and immediacy, with significant potential to increase disease awareness among users. Their open creation models and limited pre-publication scrutiny are, however, a double-edged sword. These platforms may also facilitate the spread of health misinformation, which can mislead users and may contribute to inappropriate health decisions or delayed care.8,9 Moreover, algorithmic recommendation systems can generate echo chambers, repeatedly exposing users to biased or inaccurate opinions. 10

A growing body of work has assessed the quality and reliability of short-video health information across specific diseases, including diabetes, 11 cervical cancer, 12 and Hashimoto's thyroiditis. 13 Similar evaluation frameworks have also been applied in rheumatology, including recent assessments of YouTube exercise videos for fibromyalgia syndrome using comparable quality and reliability instruments. 14 Yet evidence focusing on RA remains limited. This gap matters because RA management often relies on long-term patient education and self-management, and patients who have knowledge about the causes, pathophysiology, treatment and prevention of a disease may be better able to participate and comply during disease prevention or treatment procedures. 15

To fill this gap, we conducted a cross-sectional analysis of RA-related short videos on Bilibili and TikTok. These are two influential Chinese-language short-video platforms with distinct content ecosystems and recommendation/interaction design features, which allows a pragmatic comparison of the quality and reliability of RA-related information within the same language context. We measured overall quality with the Global Quality Score (GQS) and reliability with a modified DISCERN instrument, and we tested whether engagement measures correlate with these two scores.

Methods

Search strategy

We conducted a cross-sectional study to evaluate the quality and reliability of RA-related short videos on two Chinese platforms, Bilibili and TikTok. Data collection took place in October 2025 using each platform's default “comprehensive ranking” to approximate what a non-logged-in user would see. To reduce personalization and recommendation bias, searches were performed with newly registered accounts and in private-browsing mode where possible. Before every search, browsing history and cache were cleared, and no likes, follows, comments, or watch history were allowed to accrue during data collection. We searched both platforms through their built-in search feature using the standardized Chinese keyword “类风湿性关节炎” (“rheumatoid arthritis”) and recorded results in the order in which they were ranked at the time of search. From each platform, we retrieved the top 120 results.

Inclusion criteria were publicly accessible, non-advertising videos that directly addressed RA (definition, causes, symptoms, diagnosis, treatment, or prevention). Exclusion criteria were duplicates, RA-irrelevant content, and videos uploaded within the past week. In total, 209 videos were included (TikTok n = 110; Bilibili n = 99). Publicly available metrics (likes, collects, comments, shares, duration) were extracted into a standardized spreadsheet for analysis; baseline characteristics are summarized in Table 1.
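The eligibility rules above can be illustrated with a minimal Python sketch. This is not the authors' screening tool: the `Video` record, its field names, and the `select_eligible` function are hypothetical, the judgment of whether a video addresses RA is assumed to have been made by a human reviewer, and "uploaded within the past week" is interpreted here as an upload date less than 7 days before the search date.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Video:
    video_id: str           # hypothetical unique identifier on the platform
    is_public: bool         # publicly accessible at the time of search
    is_advertisement: bool  # flagged as advertising by a reviewer
    addresses_ra: bool      # reviewer judged that the video directly addresses RA
    upload_date: date


def select_eligible(videos, search_date, min_age_days=7):
    """Apply the inclusion/exclusion rules, preserving search-rank order."""
    cutoff = search_date - timedelta(days=min_age_days)
    seen_ids = set()
    eligible = []
    for video in videos:
        if video.video_id in seen_ids:
            continue  # exclusion: duplicate of an earlier result
        seen_ids.add(video.video_id)
        if not video.is_public or video.is_advertisement:
            continue  # inclusion requires public, non-advertising videos
        if not video.addresses_ra:
            continue  # exclusion: RA-irrelevant content
        if video.upload_date > cutoff:
            continue  # exclusion: uploaded within the past week (assumed reading)
        eligible.append(video)
    return eligible
```

Keeping the input order means the retained list still reflects the ranking recorded at the time of search.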

Characteristics of included videos overall and categorized by platforms.

M: median; Q: quartile; GQS: Global Quality Score.

Video variables and content coding

Uploaders (“video sources”) were categorized using a predefined coding manual developed by the authors based on publicly available account information and commonly used source categories in prior social-media health information evaluations. Classification was determined from the uploader's profile and verification status (when available), stated professional credentials, institutional affiliation, and the video content itself. “Medical professional on RA” referred to uploaders who self-identified as rheumatologists (or RA-focused clinicians) and/or whose profile indicated RA-related clinical specialization. “Medical professional on other” referred to licensed health professionals whose stated specialty was not rheumatology/RA (e.g. orthopedics, rehabilitation, general internal medicine, pharmacy, nursing). “Patients” referred to uploaders who self-identified as people living with RA (or caregivers) and primarily shared personal experiences without professional medical credentials. “Science communicators” referred to non-clinician individuals or organizations producing health-science educational content (e.g. popular science creators, media accounts) without claiming licensed clinical credentials. Content themes covered definition, causes, symptoms, diagnosis, interventions, and personal experiences. Filming styles included animation or dramatized action, interview, medical scenarios, PPT/classroom talk, Q&A, and solo narration. In addition, to explore potential drivers of video visibility beyond content accuracy, we extracted platform-available metadata and creator-level features for each video, including upload date (used to calculate video age at the time of data collection), uploader follower count, total number of videos posted by the uploader, number of hashtags, and title length. We also coded thumbnail presentation cues (presence of a human figure and/or text overlay) and recorded whether the uploader was a verified account (when applicable). 
Two researchers independently completed all classifications; disagreements were resolved by discussion or third-party arbitration.

Video review and classification

Two trained reviewers (Fangjun Xiao, Yifei Liufu) with RA expertise independently screened the initially retrieved videos using predefined inclusion and exclusion criteria, then labeled source category, content theme, and filming style as defined in section “Video variables and content coding.” Inter-rater agreement for categorical labels was assessed using Cohen's kappa. Disagreements were resolved by consensus; unresolved cases were adjudicated by a senior reviewer (Junxing Yang).
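For readers unfamiliar with the statistic, Cohen's kappa compares the observed proportion of agreement between two raters with the agreement expected by chance from each rater's category frequencies. The following is a minimal sketch in Python, not the software used in this study; the function name and the example labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return float("nan")  # kappa is undefined when both raters use one identical category
    return (observed - expected) / (1.0 - expected)
```

For example, with six videos labeled `["pro", "pro", "patient", "sci", "sci", "pro"]` by one rater and `["pro", "pro", "patient", "sci", "pro", "pro"]` by the other, observed agreement is 5/6, chance agreement is 15/36, and kappa is 5/7 (about 0.71).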

Video quality and reliability assessment

Video quality was assessed using the GQS (5-point Likert scale from 1 = poor to 5 = excellent), covering professionalism, completeness, clarity, and usefulness, and reliability was assessed using the modified DISCERN instrument (five yes/no items; total score 0–5, higher scores indicate greater reliability).16,17 Detailed scale descriptions are provided in Supplemental Material 1. Two trained raters, blinded to engagement data, scored all videos independently.
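As an illustration only, the scoring rules described above can be sketched in Python. The function names are hypothetical, and the wording of the five modified DISCERN items (given in Supplemental Material 1) is not reproduced here; the sketch assumes a rater has already answered each item yes or no.

```python
def score_modified_discern(answers):
    """Return the modified DISCERN total (0-5) from five yes/no answers."""
    answers = list(answers)
    if len(answers) != 5:
        raise ValueError("modified DISCERN expects exactly five yes/no items")
    if not all(isinstance(answer, bool) for answer in answers):
        raise TypeError("each item must be answered True (yes) or False (no)")
    return sum(answers)  # one point per 'yes'


def validate_gqs(score):
    """Check that a GQS rating is an integer on the 1-5 Likert scale."""
    is_integer = isinstance(score, int) and not isinstance(score, bool)
    if not is_integer or not 1 <= score <= 5:
        raise ValueError("GQS must be an integer from 1 (poor) to 5 (excellent)")
    return score
```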

Statistical analysis

Analyses were conducted in IBM SPSS Statistics 27.0. Continuous variables (e.g. engagement metrics, quality scores) were non-normally distributed by Shapiro–Wilk testing; they are presented as median (interquartile range) and compared using the Mann–Whitney U-test (two groups) or Kruskal–Wallis H-test (≥3 groups). Categorical variables are shown as counts and percentages and compared using the chi-square test or Fisher's exact test. Spearman's rank correlation examined associations between quality scores and engagement/duration. Inter-rater agreement for GQS and modified DISCERN was evaluated with Cohen's kappa. Statistical significance was set at p < 0.05.
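The two-group comparison described above can be sketched with the Python standard library. This is an illustrative re-implementation, not the SPSS procedure used in the study: it assumes a two-sided test based on the tie-corrected normal approximation without continuity correction, and the quartile convention (`method="inclusive"`) is an assumption that may not match the quartiles reported by other software. All function names are hypothetical.

```python
import math
from collections import Counter
from statistics import median, quantiles


def median_iqr(values):
    """Return (median, Q1, Q3); needs at least two values."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return median(values), q1, q3


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # mean of 1-based positions start+1..end+1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def mann_whitney_u(x, y):
    """Return (U, p): the smaller U statistic and a two-sided approximate p-value."""
    n1, n2 = len(x), len(y)
    if n1 == 0 or n2 == 0:
        raise ValueError("both groups must be non-empty")
    combined = list(x) + list(y)
    ranks = average_ranks(combined)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u2 = n1 * n2 - u1
    n = n1 + n2
    # Tie correction: each group of t tied values reduces the variance of U.
    tie_term = sum(t ** 3 - t for t in Counter(combined).values())
    variance = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if variance <= 0:
        return min(u1, u2), 1.0  # all observations identical: no evidence of a difference
    z = (u1 - n1 * n2 / 2) / math.sqrt(variance)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return min(u1, u2), p_value
```

With samples as large as those in this study (110 and 99 videos), the normal approximation is the usual choice; for very small groups an exact test would be preferable.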

Results

Video characteristics and platform comparison

We analyzed 209 RA-related short videos (TikTok n = 110; Bilibili n = 99), and the selection process is shown in Figure 1. Overall video characteristics by platform are summarized in Table 1. TikTok videos attracted substantially higher engagement than Bilibili (likes, collects, comments, and shares; all p < 0.05). In contrast, Bilibili videos were markedly longer (median 212.0 s vs 70.5 s; p < 0.001) and were generally older (median video age 972 days vs 143 days; p < 0.001). Despite lower engagement, Bilibili achieved higher information quality and reliability, with higher GQS and modified DISCERN scores (both p ≤ 0.001). Platform-available metadata also differed. TikTok videos were associated with higher uploader follower counts and higher rates of verified accounts, and were more likely to use thumbnails featuring a human figure and text overlay (all p < 0.001). Bilibili videos used more hashtags (p < 0.001). Uploader (“video source”) composition differed significantly between platforms (p < 0.001): TikTok was dominated by medical professionals (RA-focused 56.36%; other medical professionals 30.91%), whereas Bilibili included a larger proportion of science communicators (53.54%). Content themes were broadly similar, with “Interventions” most common on both platforms (TikTok 70.00%; Bilibili 73.74%; p = 0.549). Filming styles differed strikingly (p < 0.001): TikTok mainly used solo narration (80.91%), while Bilibili more often adopted animation/action (32.32%) and PPT/class formats (17.17%). These patterns are displayed in Figures 2 and 3.

Video search strategy for rheumatoid arthritis.

Percentage of RA videos by sources and style of video shooting on TikTok and Bilibili.

Percentage of RA videos by content on TikTok and Bilibili.

Within-platform stratified analyses further revealed internal differences (Tables 2 and 3). On TikTok, videos uploaded by patients received the highest engagement across likes, collects, comments, and shares, yet their content focused predominantly on personal experiences (77.78%). On Bilibili, science communicators not only constituted the largest uploader group but also demonstrated greater content completeness, with higher coverage of “Definition” (43.40%), “Causes” (37.74%), and “Interventions” (81.13%).

Characteristics of included videos on TikTok, stratified by video source.

M: median; Q: quartile; GQS: Global Quality Score.

Characteristics of included videos on Bilibili, stratified by video source.

M: median; Q: quartile; GQS: Global Quality Score.

Quality and reliability by video source

Two reviewers showed high agreement on video ratings. Inter-rater reliability was excellent for both instruments (GQS: Cohen's κ = 0.816; modified DISCERN: κ = 0.951; Supplemental Material 2).

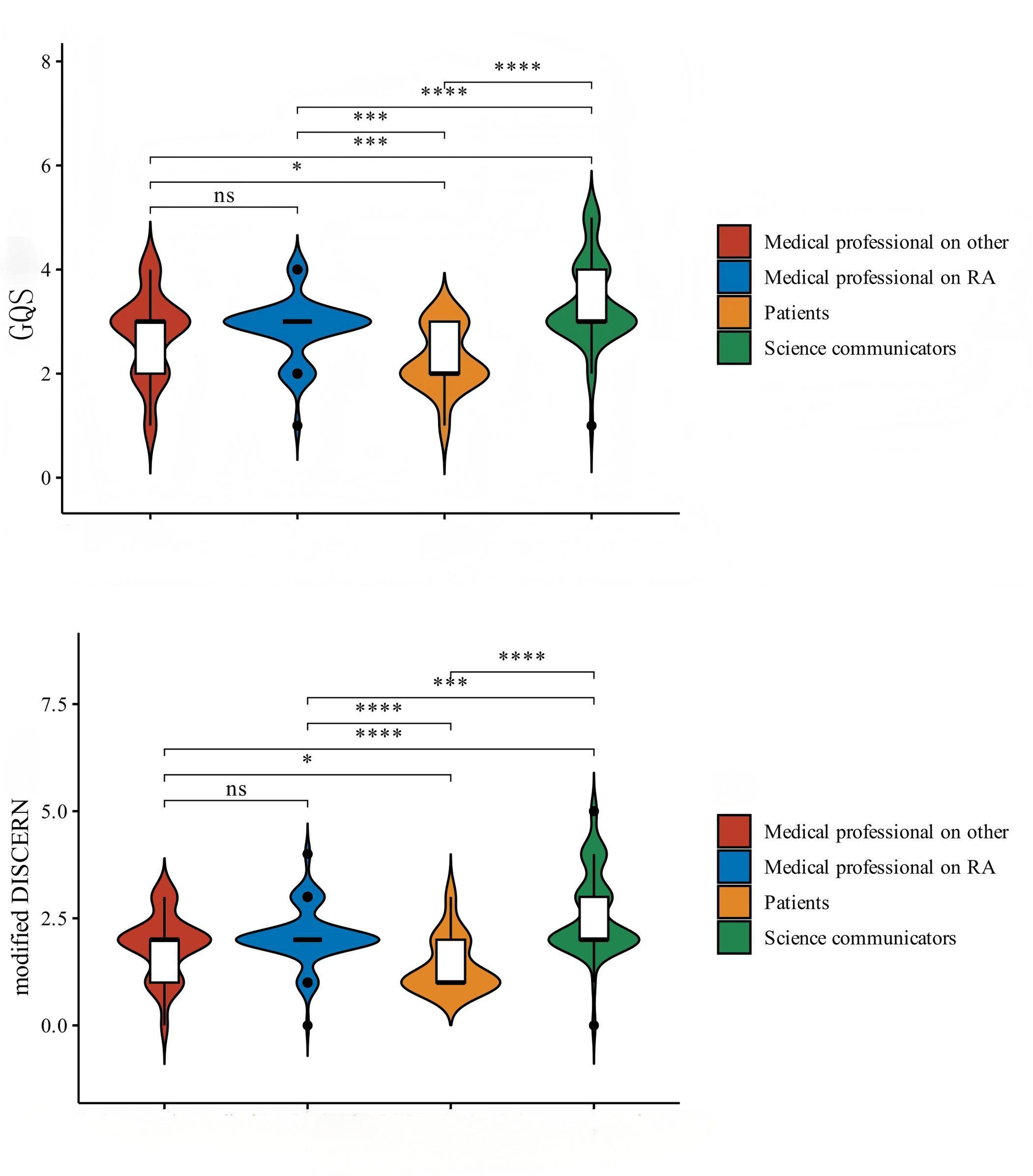

Overall, Bilibili scored higher than TikTok on both quality and reliability measures (Table 1; Figure 4). The median GQS on Bilibili was 3.00 (3.00–3.50), compared with 3.00 (2.00–3.00) on TikTok (p < 0.001). The median modified DISCERN score was also higher on Bilibili [2.00 (2.00–3.00)] than on TikTok [2.00 (1.25–2.00)] (p = 0.001).

Comparison of video quality scores between TikTok and Bilibili.

When stratified by video source, score distributions differed across uploader categories on both platforms (Figures 5 and 6). On TikTok, videos uploaded by rheumatology professionals had the highest scores (median GQS: 3.00; modified DISCERN: 2.00). Patient-uploaded videos had the lowest modified DISCERN scores on both platforms (TikTok: 1.00; Bilibili: 1.50). On Bilibili, science communicator videos showed comparable scores to professional groups (median GQS: 3.00; modified DISCERN: 2.00).

Video quality scores by sources on TikTok and Bilibili.

Video quality by sources across platforms (pooled TikTok and Bilibili).

Platform-specific summaries are provided in Tables 2 and 3. On TikTok, science communicator videos accounted for 4.55% of the sample and had median scores of GQS 3.00 and modified DISCERN 2.00. On Bilibili, science communicator videos accounted for 53.54% of the sample, and 25% achieved GQS ≥ 4 (Tables 2 and 3).

Correlations between scores and engagement

Spearman correlation matrices for TikTok and Bilibili are shown in Figure 7. On both platforms, the engagement indicators (likes, collects, comments, and shares) were strongly and positively correlated with one another. In contrast, no significant correlations were observed between video quality/reliability scores (GQS and modified DISCERN) and engagement indicators on either platform. Video duration showed positive correlations with GQS and modified DISCERN on both platforms. Associations between video duration and engagement indicators were weak overall and were not consistently significant across platforms. Regarding creator- and post-level metadata, follower count, number of hashtags, title length, total videos by uploader, verification status, and thumbnail features (human figure and text overlay) showed variable correlation patterns with engagement indicators and with GQS/modified DISCERN across the two platforms, with most correlations being weak and not statistically significant. Video age also showed weak and variable correlations with engagement indicators and with GQS/modified DISCERN across platforms.
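Spearman's coefficient is the Pearson correlation computed on ranks, which makes it suitable for skewed engagement counts and ordinal scores with many ties. A minimal standard-library sketch follows; it is illustrative only, the function names are hypothetical, and significance testing is omitted.

```python
import math


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # mean of 1-based positions start+1..end+1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman rank correlation, computed as Pearson's r on average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rank_x = average_ranks(list(x))
    rank_y = average_ranks(list(y))
    mean_x = sum(rank_x) / len(rank_x)
    mean_y = sum(rank_y) / len(rank_y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(rank_x, rank_y))
    var_x = sum((a - mean_x) ** 2 for a in rank_x)
    var_y = sum((b - mean_y) ** 2 for b in rank_y)
    if var_x == 0 or var_y == 0:
        return float("nan")  # undefined when one variable is constant
    return covariance / math.sqrt(var_x * var_y)
```

Because GQS and modified DISCERN take only a few distinct values, ties are common, which is why the sketch uses average ranks rather than the shortcut formula that assumes no ties.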

Correlation matrix of video engagement metrics and quality scores on TikTok and Bilibili.

Discussion

In the current era of digital health communication, short-video platforms such as TikTok and Bilibili have emerged as significant sources of RA information for the public. Although RA predominantly affects middle-aged adults, these platforms remain relevant because their user base skews younger, resulting in high exposure to digital health content. 18 Younger users may encounter RA information for general health learning or when seeking information for family members, rather than because RA is more prevalent in their age group. Accordingly, our discussion of TikTok and Bilibili reflects population-level information exposure and dissemination dynamics rather than the epidemiological age distribution of RA. Within this broader context, access to short-form digital content may influence how patients and the public seek information and engage in empowerment and self-management. 19

In this cross-sectional study, we compared RA-related content across TikTok and Bilibili and observed a platform contrast: Bilibili videos had higher quality and reliability scores, whereas TikTok videos showed higher engagement. This difference may relate to platform-specific content ecosystems. Bilibili supports longer videos and often facilitates more structured, educational presentations, which may be better suited for explaining complex topics such as RA definitions and pathophysiology. In our sample, science communicators represented a substantial proportion of Bilibili uploaders and achieved quality/reliability scores comparable to professional groups, consistent with their potential role in translating specialist knowledge into accessible communication. 20 In comparison, TikTok, as a typical short-form platform, is geared toward capturing maximal attention and immediate user response. 21 Its content is therefore often optimized for brevity, which may favor simplified framing and personal narratives. Consistent with studies in other disease domains,22,23 we found that this format concentrates content on treatment interventions and personal experiences of illness. However, as shown in our correlation analyses, engagement metrics were not significantly associated with quality or reliability scores on either platform, indicating that highly engaged videos do not necessarily provide more rigorous or reliable information.

Scientific rigor varied with the source of the video. Rheumatology professionals achieved the highest quality scores, which is consistent with the established influence of professional authority in medical communication. 24 Patient-uploaded videos showed the lowest reliability scores on both platforms; although they may reflect lived experiences, their content may be less likely to provide comprehensive, evidence-based information. More importantly, we found no significant positive relationship between user engagement metrics and quality ratings, and on TikTok the tendency was even negative. This pattern is consistent with prior research on online patient education materials in musculoskeletal conditions, which has highlighted substantial variability in information quality and the need for cautious interpretation of online health materials. 25 Given that engagement is time-dependent and Bilibili videos were generally older than TikTok videos, video age should also be considered when interpreting engagement differences. In addition, our exploratory analyses incorporating creator- and post-level metadata showed mostly weak and nonsignificant associations with engagement and with quality/reliability scores. Explaining why certain content attains high visibility would likely require platform-level recommendation data (e.g. impressions and watch-time) that were not accessible in this study.

Our cross-platform findings illustrate that reach and trustworthiness can diverge across platforms, and a communication perspective offers a possible contextual explanation. The health information ecosystem around short videos is a complex adaptive system shaped by platform features, creator incentives, and user actions. 26 By cultivating a “knowledge” subculture, Bilibili creates an environment conducive to depth, in which users show a greater desire to learn and high-quality content is positively reinforced. 27 The algorithm-driven model of TikTok can create “information cocoons,” in which users are repeatedly exposed to similar (sometimes partial or incorrect) messages that reinforce previously held biases. 28 Furthermore, RA is a chronic disease whose management depends on sustained patient education and shared decision making. Online information that is wrong or one-sided (e.g. emphasis on a “special” medicine) may delay guideline-based treatment and increase anxiety or financial burden.29,30

To situate these findings within the wider online information landscape, it is also important to consider the other contemporary channels through which RA information is accessed, including traditional web-based materials, long-form video platforms such as YouTube, and rapidly expanding AI-assisted question-answering tools. Compared with short-video platforms, web-based patient education materials and many disease-specific online resources are typically organized in a more text-centered format, where readability and completeness become major determinants of usability. 31 YouTube, as a long-form video platform, often enables more extended explanations but similarly shows heterogeneity in information quality across medical topics. 32 More recently, AI-generated responses (e.g. ChatGPT-like systems) have been evaluated for readability, reliability, and quality in pain-related queries, underscoring both the potential and the uncertainty of AI-mediated health information. 33 Thus, our results should be regarded as specific to China-based short-video ecosystems, and further research could compare the quality of RA information across these newer and more established channels using harmonized evaluation frameworks.

These findings carry clear practical and policy implications for multiple stakeholders. For the public and patients, the study underscores the urgency of developing digital health literacy, that is, the ability to critically appraise online health information. 34 Users viewing videos should assess uploader credentials and beware of overreaching claims, lack of citations, or content that appeals mainly to emotion. Health care professionals and institutions need to take a more proactive role in creating authoritative, accessible short-video content that corrects misinformation. In clinical practice, clinicians should explicitly ask about patients' sources of information and clarify misconceptions as part of the office visit. 35 For platforms, our results suggest that greater social responsibility is needed: improving the review of health content, providing readily visible verification badges for certified professionals and institutions, and considering the inclusion of quality assessment in recommendation algorithms (for example, an “information quality score”) to balance popularity against scientific rigor and guide the ecosystem toward beneficial outcomes. Regulators might consider issuing guidelines to promote graded management of health care content and effective means for reporting and correcting misinformation. 36

This study has several limitations. The design is cross-sectional and captures associations rather than causal effects, and the sampling reflects only one time point in a dynamic recommendation ecosystem. Only two Chinese-language platforms were examined, which limits generalizability to other cultures, languages, and product characteristics. The search terms and eligibility rules were predetermined, but the tagging of short videos and the rapid turnover of content leave room for selection bias. In addition, engagement metrics accumulate over time; we included video age in our exploratory analyses, but time since upload can still confound comparisons between platforms. We also relied on platform-visible data (e.g. likes, collects, comments, shares, and selected metadata) and did not have access to platform-level distribution and recommendation data (e.g. impressions, watch-time, or recommendation pathways), which would have allowed a more mechanistic understanding of how and why some videos achieve high visibility. Future research should consider longitudinal sampling at multiple time points, RA-specific assessment checklists, and, where feasible, statistical adjustment for video age at retrieval in analyses of engagement.

To our knowledge, this is one of the first studies to assess the overall quality and reliability of RA-related short-video information on two major Chinese-language platforms. A major strength is its real-world relevance: the analysis reflects what the public can readily access and consume during everyday information searches. Our comparison of platforms with different content ecosystems reveals a structural dissociation between reach and trustworthiness, which can inform patient education, clinician engagement, and platform-specific quality governance. It also provides a starting point for later cross-disease and cross-platform comparisons.

In conclusion, RA short-video content shows a clear divide: one platform reaches more people, while the other is generally more reliable. Video quality depends most on who made it and how it is presented, and likes and other engagement metrics are not good indicators of trustworthiness. A practical way forward is to educate viewers, involve more clinicians and skilled science communicators, and build simple reliability signals into how videos are recommended. These steps can help keep information both easy to access and accurate, supporting better learning, decisions, and self-management for patients.

Conclusion

This cross-sectional investigation demonstrates that the quality of RA videos on short-video platforms is uneven: Bilibili tends to have longer and higher-quality content, whereas TikTok achieves greater reach but not necessarily greater accuracy. Uploader identity is the most important driver of scores: content from medical professionals scores highest, and patient-generated content is the least reliable. Engagement metrics do not reflect quality, so popularity is a poor measure of reliability. In practice, platforms should highlight verified medical creators and provide citation/labeling capabilities, clinicians should contribute proactively, and viewers should be given specific digital-health-literacy signals.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076261435374 - Supplemental material for The quality and reliability of short videos about rheumatoid arthritis on Bilibili and TikTok: Cross-sectional study

Supplemental material, sj-docx-1-dhj-10.1177_20552076261435374 for The quality and reliability of short videos about rheumatoid arthritis on Bilibili and TikTok: Cross-sectional study by Junpeng Qiu, Muyuan Hou, Yifei Liufu, Junxing Yang and Fangjun Xiao in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076261435374 - Supplemental material for The quality and reliability of short videos about rheumatoid arthritis on Bilibili and TikTok: Cross-sectional study

Supplemental material, sj-docx-2-dhj-10.1177_20552076261435374 for The quality and reliability of short videos about rheumatoid arthritis on Bilibili and TikTok: Cross-sectional study by Junpeng Qiu, Muyuan Hou, Yifei Liufu, Junxing Yang and Fangjun Xiao in DIGITAL HEALTH

Footnotes

Acknowledgements

This work was supported by Shenzhen Hospital (Futian) of Guangzhou University of Chinese Medicine Research Project (No. GZYSY2024018) and Shenzhen Society of Traditional Chinese Medicine Scientific Research Project (No. 2024099F).

Ethical approval

The data used in this study were sourced from publicly available video content published on platforms such as Bilibili and TikTok. These videos are publicly accessible, and no personal privacy information was involved during the data collection process. All analyzed content was publicly available, and the study did not involve the collection or processing of users’ private information. In accordance with relevant ethical review guidelines, ethical approval for this study was not required.

Author contributions

Conceptualization: Fangjun Xiao. Data curation: Junpeng Qiu, Muyuan Hou. Formal analysis: Junpeng Qiu. Methodology: Junpeng Qiu, Fangjun Xiao. Resources: Junpeng Qiu, Muyuan Hou. Software: Junpeng Qiu, Muyuan Hou, Fangjun Xiao. Validation: Junpeng Qiu, Muyuan Hou. Visualization: Junpeng Qiu, Yifei Liufu, Junxing Yang. Writing – original draft: Junpeng Qiu. Writing – review & editing: Muyuan Hou, Yifei Liufu, Junxing Yang, Fangjun Xiao. All authors contributed to manuscript writing and editing and approved the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Shenzhen Hospital (Futian) of Guangzhou University of Chinese Medicine Research Project (No.GZYSY2024018) and Shenzhen Society of Traditional Chinese Medicine Scientific Research Project (No.2024099F).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

Generative AI and AI-assisted technologies

The authors declare that no AI tools were used in the development or editing of this manuscript.

Guarantor

Fangjun Xiao.

Supplemental material

Supplemental material for this article is available online.