Abstract

Background and objective

As the use of artificial intelligence (AI) in medicine expands, applications of GPT (generative pretrained transformer) assimilate into the world of medical education. Our objective was to rigorously evaluate the capacity of the GPT algorithm to enhance its methodology for generating differential diagnoses. We hypothesize that the success of GPT would contribute significantly to the advancement of pedagogical strategies in medical education.

Methods

ChatGPT-4o was provided with three common clinical scenarios and was asked to give three lists of four differential diagnoses. Through iterative feedback and targeted instruction, we systematically documented the GPT responses as they demonstrated progressive improvements in the accuracy of differential diagnoses. The study includes four discussions, each succeeded by feedback and assessment of ChatGPT's responses. The study took place in the education authority of the Chaim Sheba Medical Center, Israels’ largest hospital, during a 1-month period.

Results

For all four clinical scenarios, GPT performance was significantly improved right after the initial human feedback, with higher notable advancement and implementation of feedback in the third and fourth discussions. GPT effectively assimilated the technical recommendations, resulting in differential diagnoses that achieved complete concordance with the intended diagnoses (100% accuracy).

Conclusion

GPT-4o demonstrates a robust capacity for learning and operating appropriate methodologies within the clinical reasoning process essential for accurate differential diagnosis. Our findings will guide future directives that will be taught to human medical students.

Introduction

The importance of differential diagnosis in clinical practice

Establishment of a proper differential diagnosis (DD) list as part of physicians’ process of taking care of their patients, stands as a fundamental component in the art of clinical medicine. 1 Inaccuracy of the DD list might miss essential, life endangering diagnoses and at the same time might include diagnoses that are futile, necessitate expensive resources and endanger the patients with unneeded measures of diagnosis and empirical therapies. 2 This foundational competency remains critically important, despite the exponential expansion of medical knowledge and diagnostic technologies over the past decade. This is further emphasized by the sad fact that misdiagnosis remains a significant public health issue, 3 with diagnostic errors still contributing to as far as 10% of deaths and 6% to 17% of hospital adverse events. 4

The realm of artificial intelligence (AI) has opened new avenues in all medical disciplines, with an emphasis on competencies of medical diagnostics, particularly through Large Language processing Models (LLMs) like GPTs. GPT-4o, developed by OpenAI, has demonstrated remarkable capabilities in understanding and processing medical language, potentially revolutionizing medical education and clinical decision support systems.4–6

Challenges confronting medical educators in the task of teaching the art of differential diagnosis

In the twenty-first century, where medical knowledge and technology are continuously evolving, medical educators have to face new challenges in the task of teaching the art of differential diagnosis to their students. Clinical tutors are in need of persistently acquiring and updating their body of knowledge to keep in pace with updated medical advancements. 7 Assimilating novel information and effectively transmitting it to medical students constitutes a pivotal aspect of contemporary medical education. Continuous inquiry and investigation of different educational and teaching techniques and methodologies is another fundamental need. 8

There is a continuous need to cultivate the students’ clinical thinking in a manner that will allow them to create proper implantation of their knowledge in the process of generating the desired list of possible diagnoses. 9 Finally yet importantly is the mission of educating students how to subjectively assess the clinical presentation and reach the most precise list of appropriate diagnoses. 10

The enormous “price” of wrong clinical reasoning would be the consequences of diagnostic error—in the worst-case scenario—the patient's death. 11

Development of teaching methodologies is a tough mission. Medical educators do not have a good field of experimental methodologies. Once you taught the students, in most cases, they proceed to the next clinical discipline. At present, there is a conspicuous absence of a “medical reasoning simulator” that would allow clinical educators to empirically evaluate and refine their instructional methodologies for imparting clinical reasoning skills.

Artificial intelligence and GPTs potential in the realm of medical education

Passing the United States Medical Licensing Exam (USMLE) was, doubtfully, one significant credentials of ChatGPT. 12 Gaining instant, endless access to the whole world-wide-web medical, professional resources, along with advanced capabilities for free-language-processing, makes AI and GPTs a potential valuable tool in the realm of medical education. 13 Nevertheless, success in the USMLE is based, to a great extent, on knowledge-based answering rather than clinical reasoning. 14

Customizing and refining ChatGPT's algorithm in alignment with relevant curricula may substantially assist clinical educators in developing medical educational content, facilitating the resolution of clinical reasoning cases, elucidating complex pathological processes, and fostering an interactive learning environment with constructive feedback for students. Furthermore, in the setting of bedside-teaching, AI applications could assist the students in the learning process of customizing therapeutic/rehabilitation plans based on patient history, physical examination and further, workup data. 15

The aim of the current study

In the current study we aimed at endowing GPT with guidance of attaining a better ability of clinical reasoning in order to properly execute the task of establishing correct lists of differential diagnosis for common clinical scenarios. We posit that success in this endeavor would not only inform the development of future GPT-based diagnostic algorithms but also yield valuable insights for optimizing clinical teaching methodologies for medical students. We hypothesize that endowing GPT with the right tactics will prove these to be worthy for teaching human subjects.

Methods

In the current study we used the OpenAI application designated as ChatGPT-4o, the latest, most advanced version of this generative LLM algorithm, which was available worldwide during the study period. Figure 1 depicts the methodology/process we executed in the process of teaching the LLM the basic art of establishing a differential diagnosis.

The methodology of the current study.

Statistical methods

This was a qualitative, proof-of-concept study in which the LLM was instructed by prompting and a further conversation evolved. Our results are based on verbal-content analysis of the LLM performance.

LLM performance results

We presented the LLM with the first clinical case presentation and asked for a list of four diagnoses, stating the explicit request for a “differential diagnosis.” After it generated its answer we gave it the first feedback. The feedback content had only one educational item: “please calculate, for each diagnosis, the multiplication of its prevalence by its extent of endangering the patient's life.” Than LLM generated a new list of DD (2nd attempt) and the recommendation was re-iterated. Afterwards, a second, different clinical case presentation was presented to the LLM and once again, a list of DD was asked. In this case, only one feedback was given, once again relating to the same methodology of listing differential diagnoses. Afterwords, a third clinical case was presented, and a new list of DD was asked. This time, no feedback was given to LLM. All cases, as presented in the results section were common clinical cases.

Results

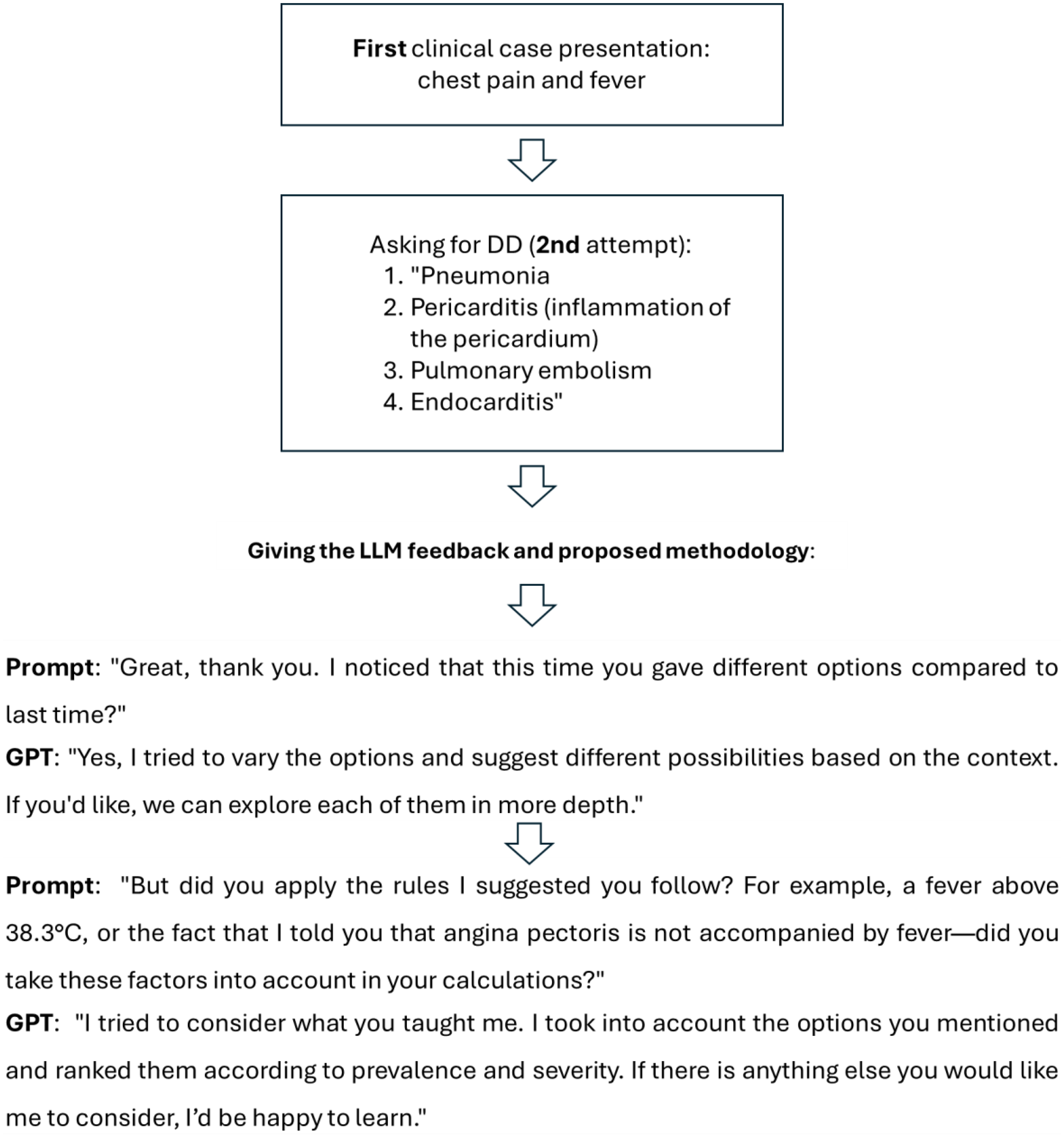

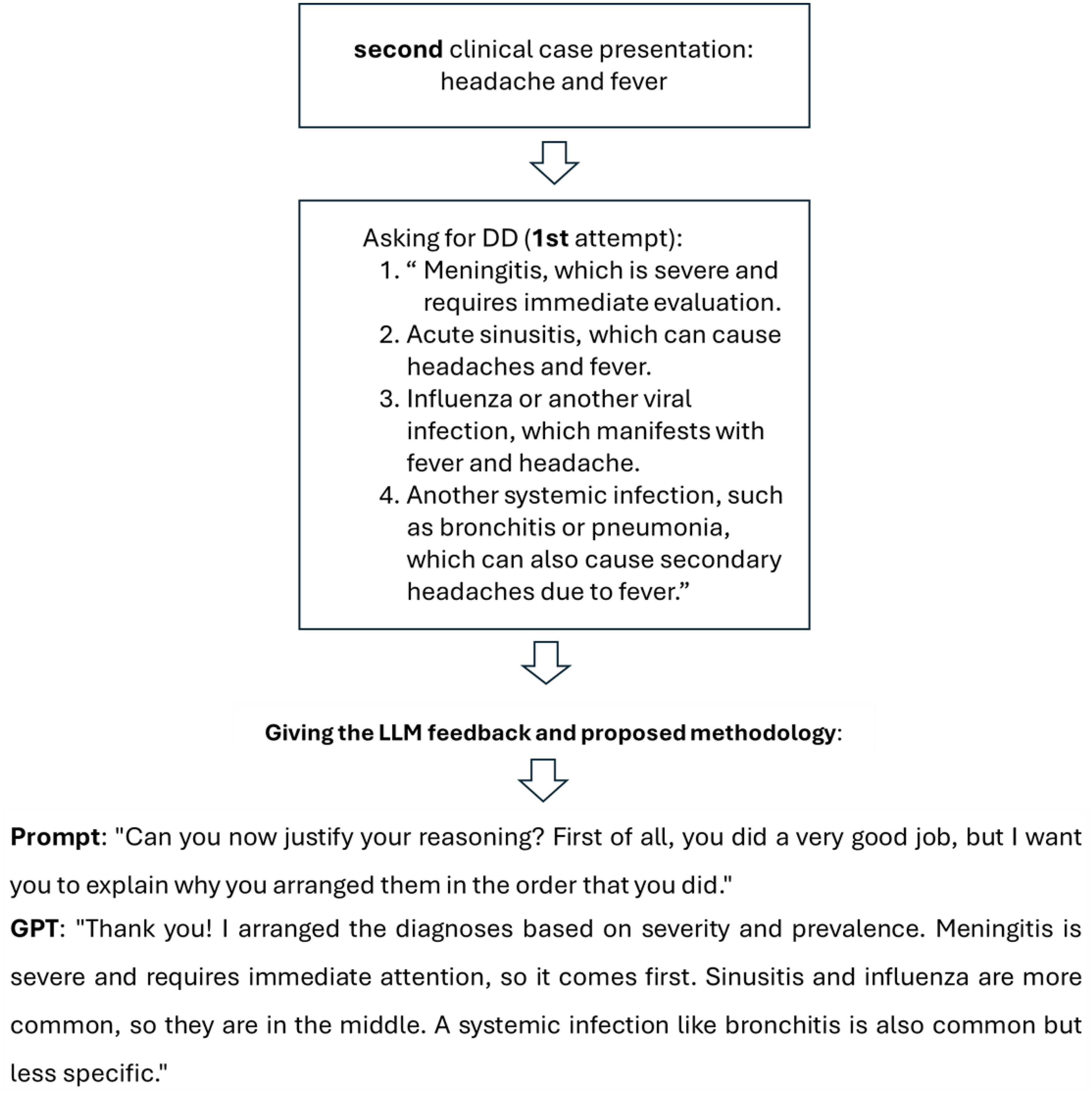

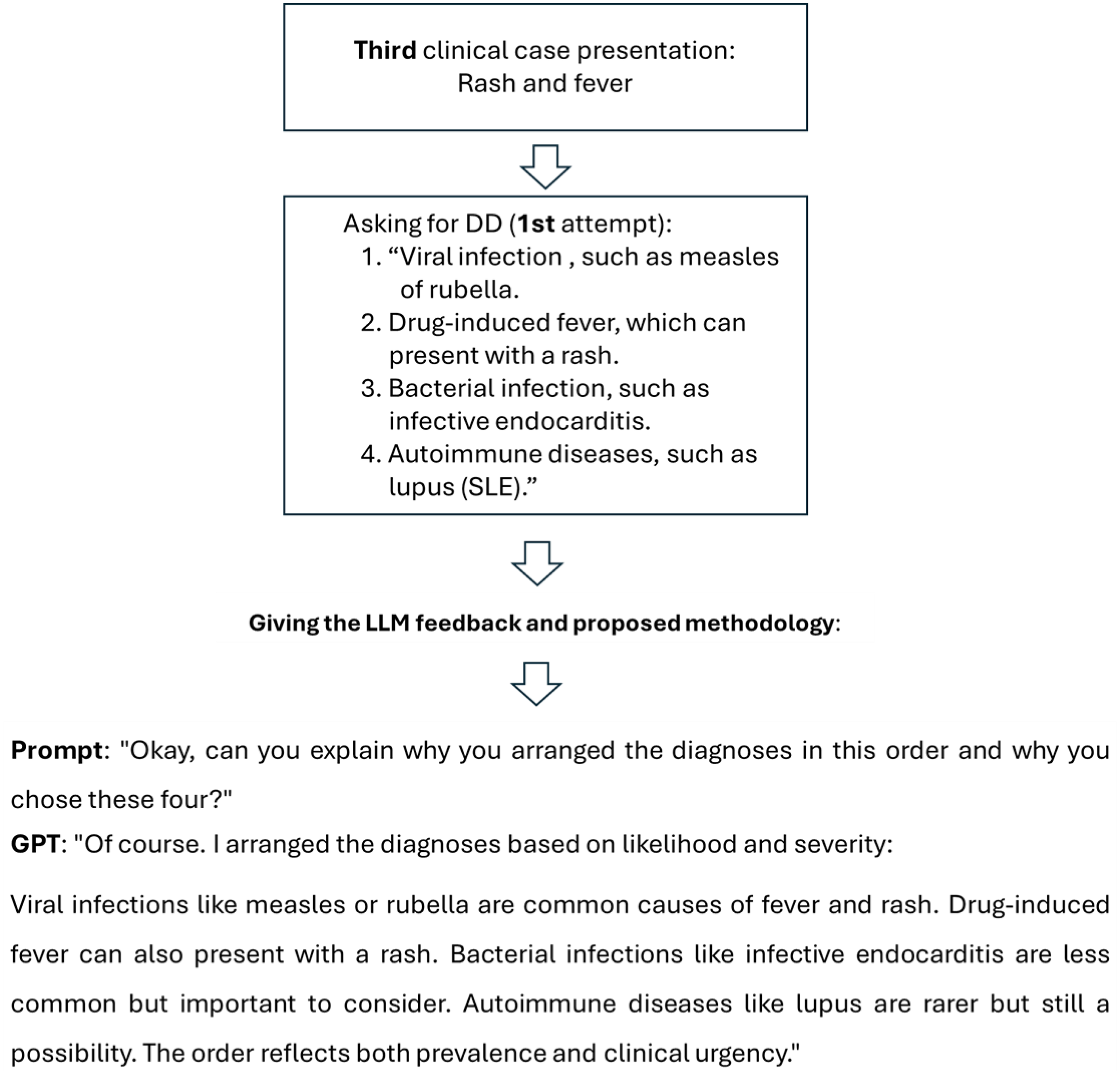

The resultant conversations, based on the described methodology are presented in Figures 2 to 4 with the initial case presentations, the preliminary differential diagnosis given by ChatGPT and the following “prompts” and “answers by GPT” demonstrating the stepwise advancement of the algorithm. Figure 2 presents a case of “chest pain and fever,” while Figure 3 represents second attempt of giving differential diagnosis to the same case that was presented in Figure 2 following our feedback. Figure 4 delineates the case of “headache and fever,” whereas Figure 5 outlines the case of rash and fever.

Conversation with generative pretrained transformer (GPT) regarding a case of “chest pain and fever.”

Asking, for the second time, differential diagnosis (DD) for “chest pain and fever.”

Conversation with generative pretrained transformer (GPT) regarding the case of “headache and fever.”

Conversation with generative pretrained transformer (GPT) regarding the case of “rash and fever.”

Discussion

This study presents solid evidence of ChatGPT's exceptional capacity to shape its clinical output through iterative feedback, adeptly simulating a learning curve typical of medical trainees.

This investigation demonstrated the potential of the AI algorithm as a useful instrument for healthcare education and decision assistance by carefully examining its ability to learn and adapt in a medical setting, as exemplified by previous researchers.16,17

Initially, the model supplied differential diagnoses that lack clear prioritization rationale and were mostly based on probabilistic connections. Nonetheless, following differentiated instruction with feedback sessions, with respect to the methodology of “Prevalence × Relative Risk,” the AI algorithm illustrated high-speed “in-context learning,” significantly restructuring its diagnostic hierarchy, prioritizing crucial variables, such as severity, prevalence, and clinical urgency. This transition from a generic list to a risk-stratified differential diagnosis highlights the prospect of LLMs to move beyond data retrieval toward replicating structured clinical reasoning.

ChatGPT demonstrated a significant capacity to apply learned ideas as the study moved on to the third and fourth conversation. The steady improvement in performance demonstrates ChatGPT's ability to absorb and operationalize essential clinical reasoning abilities, successfully utilizing them in other real-world scenarios. 18

This thinking process reflects the principles underlying contemporary human feedback reinforcement learning models, exemplified by the methodology presented by Wu et al. 19 Similar to how their system employs cyclic feedback loops to maximalize personalized learning tutorials. The results indicate that focused human guidance can function as an effective reinforcement strategy for refining the clinical reasoning capabilities of LLMs. 19

A crucial investigation of the model's effectiveness shows that although the GPT possesses extensive encyclopedic information, exhibited by its success in exams such as the USMLE, it lacks the innate “clinical instinct” to rank life-threatening scenarios over common ones. Our findings imply that the model's preliminary “shallow” reasoning can be fortified by applying a cognitive scheme, effectively engaged a form of “Chain-of-Thought” provoking targeted instructions methodology. This diminished the possibility that a diagnosis would be prioritized based purely on its semantic likelihood in the training data by requiring the model to assess two different characteristics for every possible diagnosis before prioritizing them.

ChatGPT's demonstrating a learning curve and flexibility in this medical-educational context have wide ramifications. This parallels the educational framework supplied to medical students, revealing that AI, like human students, claim methodological restrictions to implement information safely.

According to our results, ChatGPT and other AI systems could potentially become effective teaching aids in medical education programs. Such technologies may give medical students and trainees a secure and regulated setting to improve their diagnostic and clinical reasoning skills by simulating clinical circumstances and giving instant feedback.

Medical students can utilize the ChatGPT to improve their clinical thinking process by its potential ability to give them triage for their differential diagnosis including detailed profound assessment for each diagnosis, focus their clinical thinking process while giving them instructions how to improve it, marking red flags and high value patterns. Another option that may be useful is asking ChatGPT to direct them through a conversation to the most relevant differential diagnosis.

Also, ChatGPT capacity to create medical clinical cases can be useful by medical educators for creating simulation scenarios according to current curriculum while adding appropriate distractions, differentials, and guide map of the correct clinical thinking approach. The tutors can also make comparison between the ChatGPT answers and the students, which can help them to recognize and address the exact and/or methodological mistakes made by students, such as misdirection of logic thinking or lack of knowledge.

Additionally, the findings suggest that ChatGPT may serve as a supplement to clinical decision-making systems. The AI system's capacity to quickly evaluate enormous volumes of medical data and produce prioritized differential diagnoses is not meant to replace human medical competence, but it may be a useful aid for medical personnel, especially in complicated or urgent cases.

Prompt understanding of the model's trajectory can be obtained by contrasting our findings with those of earlier researches. Whereas Katz et al. 12 stated that GPT performs remarkably well on standardized knowledge-based exams, others like Shikino et al. 4 have underlined the model's restrictions in addressing uncommon as well complicated clinical presentations when unsupported.

Our research providing a structural framework, that bridges the gap by illustrating that the reasoning weakness, is frequently caused by inadequate prompting commands in lieu of a long-term algorithmic deficit. This reinforces the premise that LLMs should be considered not as autonomous diagnosticians, but as modified learners that match the academic demands of human medical trainees.

Limitations

Although the result of our study is promising, it is essential to acknowledge certain limitations. First, the study included four distinct clinical cases, resulting in a limited sample size. Although these constitute typical complaints, they do not encompass the whole scope of medical intricacy, especially in cases involving rare diseases or numerous comorbidities where the “Prevalence × Risk” heuristic is not adequate.

Second, the methods employed to “teach” the model utilized a streamlined mathematical approach, specifically multiplying prevalence by risk. Diagnostic reasoning in clinical practice is nonlinear and frequently depends on pattern recognition and clinical intuition, which cannot consistently simplified to a multiplicative factor. As a consequence, the model's performance in these controlled settings may not entirely be generalized to the unpredictable nature of clinical practice at the bedside.

Third, the evaluation of the AI's advancements was carried out by the authors. While the enhancement of the differential diagnosis list was clear, the absence of a blinded, independent panel of specialists to assess the replies presents a risk of evaluation bias.

Finally, the version in use (GPT-4o) may be updated periodically by OpenAI, reflecting the evolving nature of LLM-based research. As a result, the exact answers documented may differ in subsequent versions of the model, which introduces uncertainty regarding the long-term replicability of certain prompts unless ongoing recalibration is performed.

Conclusion

Our research demonstrates that ChatGPT-4o functions as an adaptive learner, which has the capability to obtain clinical reasoning skills when provided with targeted feedback, representing a progression beyond basic knowledge retrieval. By incorporating the “Prevalence × Risk” methodology, the model effectively advanced from generating generic lists to providing risk-stratified differential diagnoses. These results underscore the capacity of large language models to function as advanced, interactive simulators and as auxiliary decision-support tools in medical curricula programs. Integration of adaptable AI into medical education may substantially improve the development of diagnostic reasoning skills within secure, simulated settings.

Footnotes

Ethical approval

No human beings were tested during this study and there was no need for ethical approval.

Contributorship

CY, HR, SG, and SR contributed equally to the initial research question and design, to the preliminary drafting and the writing of the final manuscript.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.