Abstract

Aims

This study combines bibliometric and structured analyses to comprehensively examine the development, methodological characteristics, and application trends of multimodal artificial intelligence (AI) in Alzheimer’s disease (AD) diagnosis.

Materials and Methods

Literature from January 1, 2017 to December 31, 2024, was retrieved from the Web of Science Core Collection. Retrospective bibliometric and visual analyses were conducted using VOSviewer, CiteSpace, and the Bibliometrix R package.

Results

A total of 234 papers were identified, showing a continuous increase in publication volume, with the United States and China as dominant contributors. The analysis focused on data modalities, fusion architectures, and clinical applications. Data trends highlight the fusion of imaging data with genetics, biomarkers, and clinical data. Methodologically, five fusion approaches were categorized, with intermediate fusion being the most widely used strategy for its ability to balance heterogeneous data integration. In application, multimodal AI demonstrated clear advantages in early diagnosis, disease classification, and progression prediction.

Conclusion

Research on multimodal AI for AD has gained global attention and remains a key direction for diagnostic innovation. By synthesizing bibliometric insights with structured analyses of modalities and fusion strategies, this study offers a systematic understanding of current progress and provides valuable guidance for future methodological and translational research.

Keywords

Introduction

Background

Alzheimer’s disease (AD) is a common, irreversible neurodegenerative disorder and the leading cause of dementia among the elderly, posing a significant challenge to global public health. According to the latest epidemiological data,

Currently, the diagnosis of AD primarily relies on unimodal technologies, including imaging data, genetic data, biomarkers, and clinical data. Although these unimodal methods offer certain diagnostic value, they present notable limitations. For instance, the subtle pathological changes associated with early-stage AD often exhibit low signal contrast in imaging, resulting in insufficient specificity. 4 Genetic testing, while useful for assessing disease risk, cannot provide a comprehensive prediction of an individual’s likelihood of developing AD, and it may impose psychological stress and the risk of social discrimination on patients. 5 Cerebrospinal fluid (CSF) analysis, a key source of biomarkers, requires an invasive collection process that increases patient discomfort and risk. Additionally, its diagnostic accuracy is constrained by sampling conditions and technical expertise. 6 Cognitive assessments can effectively evaluate cognitive impairment but are costly, time-consuming, and reliant on specialized personnel.7,8 In contrast, multimodal data fusion leverages the complementary strengths of these various data sources, facilitating the construction of a more comprehensive disease profile. This integrative approach enhances the accuracy of early detection and diagnosis while providing more precise disease progression predictions.9,10

Multimodal AI technology plays a critical role in applying multimodal approaches. Machine learning models can automatically learn features from various modalities to construct robust predictive models. For example, Ran et al. 11 applied a multi-kernel regularized label relaxation linear regression model to integrate multimodal information, including magnetic resonance imaging (MRI), genetic data, and clinical cognitive behavioral test results, significantly improving diagnostic accuracy. Deep learning models excel at automatically extracting complex features from different modalities and integrating information at multiple levels to uncover potential pathological changes and disease biomarkers. Convolutional neural networks (CNNs), for instance, are extensively used to analyze MRI and positron emission tomography (PET) imaging data, effectively capturing structural and functional alterations in the brain. 12 Recurrent neural networks (RNNs) are particularly suited for processing sequential data, enabling the analysis of temporal patterns in cognitive test results or physiological signals. 13 By combining imaging data, genetic data, clinical data, and biomarkers, multimodal AI enables comprehensive analysis and enhances the accuracy of early prediction and diagnosis of AD. 14

In 2020, Lazli et al. 15 highlighted the limitations of using unimodal MRI for AD diagnosis, citing issues such as the large volume of data and low signal-to-noise ratio. They pointed out that integrating multiple imaging modalities, including structural MRI, functional MRI, CT, and PET, could enhance diagnostic accuracy and improve disease prediction capabilities. This study underscored the importance of multimodal fusion using imaging data for AD diagnosis, laying a solid foundation for future applications of multimodal data in Alzheimer’s research. In 2021, Grueso et al. 16 summarized the application of multimodal data, including imaging, clinical, and genetic data, in AD, further promoting the use of multimodal data fusion and machine learning in the early diagnosis of AD. Compared with the 2020 study, this review not only addressed the necessity of imaging data fusion but also explicitly highlighted the importance of integrating other modalities and deep learning models to further improve diagnostic accuracy. In 2022, Qiu et al. 17 explored a fusion model combining MRI and clinical data, extending its application to other types of dementia. The study emphasized model interpretability, representing a significant advancement in multimodal data fusion and deep learning applications. Compared with the 2021 review, this research provided a more comprehensive perspective and greater insight into model explainability. In 2024, Teoh et al. 18 systematically reviewed various multimodal data fusion methods, including early, intermediate, and late fusion. Unlike the 2022 study, the 2024 review provided a more structured and detailed analysis of specific fusion techniques, expanded the scope to cover a broader range of diseases, and emphasized addressing challenges related to data missingness, computational complexity, and model interpretability.

Motivation for this article

Although several studies have summarized the progress of AI and multimodal data applications in AD research, there remains a lack of bibliometric analyses that quantitatively reveal the evolution of research hotspots and thematic trends in this field, as well as a lack of systematic examinations of multimodal AI development from three complementary dimensions—data modalities, fusion mechanisms, and clinical applications.

To address these gaps, this study combines bibliometric analysis with a systematic structured analysis to investigate the development of multimodal AI in AD. The main contributions of this article are as follows:

1. Introducing a unified framework of five data fusion architectures. Building upon recent advances in multimodal learning, this study systematically categorizes and summarizes five representative fusion architectures—early fusion, intermediate fusion, late fusion, adaptive fusion, and hybrid fusion—that characterize how heterogeneous data are integrated in multimodal AI systems.

Each architecture represents a specific mechanism of information interaction: early fusion emphasizes direct feature-level integration; intermediate fusion combines learned representations; late fusion merges decisions at the output level; adaptive fusion dynamically adjusts strategies based on data reliability and task relevance; and hybrid fusion flexibly combines multiple fusion levels.

By systematically clarifying these architectures, this article establishes a more comprehensive perspective on how multimodal AI models process, integrate, and interpret diverse data sources in AD research.

2. Employing bibliometric and visualization analyses to reveal research evolution and hotspots. Using tools such as VOSviewer, CiteSpace, and Bibliometrix, this study quantitatively analyzes the literature from 2017 to 2024 to identify publication trends, collaboration networks, and thematic clusters. This bibliometric evidence enables the tracking of topic evolution and the prediction of emerging hotspots, providing a data-driven understanding of how multimodal AI has developed in the context of AD research.

By combining bibliometric mapping with literature analysis, this study bridges quantitative evidence with thematic insights, allowing a more comprehensive understanding of research evolution in multimodal AI for AD.

3. Establishing a three-dimensional (3D) analytical framework. To interpret the bibliometric findings and provide a holistic understanding, this article analyzes the multimodal AI literature across three complementary dimensions:

(1) Data modality dimension: Summarizes and compares the use of imaging, genetic, biomarker, and clinical data, revealing their complementarity and distinct diagnostic roles.

(2) Fusion architecture dimension: Summarizes the five representative data fusion architectures—early fusion, intermediate fusion, late fusion, adaptive fusion, and hybrid fusion—and outlines their core mechanisms and roles in multimodal integration.

(3) Clinical application dimension: Reviews the major application areas of multimodal AI in AD research, including early diagnosis, disease staging, progression prediction, biomarker identification, and other research.

Through this 3D analysis, the article outlines the relationships among data, fusion networks, and application domains, and reveals how multimodal AI research has evolved to address the heterogeneity of data sources and the methodological challenges in AD diagnosis, staging, and prognosis.

This integrated perspective, combining bibliometric analysis with structured analysis of data fusion methods and application domains, provides a comprehensive overview and useful reference for future research on multimodal AI in AD.

Materials and methods

Systematic search strategy

This study comprehensively analyzed existing literature in the Web of Science (WOS) database, focusing on the application of multimodal technologies in AD. A systematic search was performed on the WOS Core Collection, covering publications from 1 January 2017 to 31 December 2024. All relevant records were retrieved and downloaded within a single day (10 January 2025) to minimize potential biases and streamline the retrieval process.

The search strategy was developed using the following query:

TS = (("Artificial Intelligence" OR "Deep Learning" OR "Machine Learning" OR "Support Vector Machine" OR "Linear Regression" OR "Logistic Regression" OR "Decision Tree" OR "Random Forest" OR "K-Nearest Neighbors" OR "Naive Bayes" OR "Naive Bayes Model" OR "Convolutional Neural Network" OR "Recurrent Neural Network" OR "Fully Convolutional Network" OR "Generative Adversarial Network" OR "Reinforcement Learning" OR "Back Propagation" OR "Fully Neural Network" OR "Recursive Neural Network" OR "Autoencoder" OR "Deep Belief Network" OR "Restricted Boltzmann Machine" OR "Transformers" OR "Graph Convolution Networks" OR "K-Means" OR "AdaBoost" OR "Markov Chain" OR "Natural Language Processing" OR "Generative Pre-trained Transformer" OR "Bidirectional Encoder Representations from Transformers") AND ("Multimodal" OR "Fusion" OR "Data Fusion" OR "Combined Imaging" OR "Multi-Modality" OR "Multimodal Imaging Fusion" OR "Multimodal Learning") AND ("Alzheimer" OR "Alzheimer’s Disease")).

This search strategy ensured comprehensive coverage of AI models, multimodal fusion techniques, and AD research, providing a robust foundation for subsequent bibliometric analysis.
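The query above has a simple structure: three groups of terms (AI methods, fusion terms, and disease terms), joined by OR within a group and by AND between groups. As a minimal sketch of how such a query string could be assembled programmatically, the hypothetical helper `build_ts_query` below reproduces that structure from abbreviated term lists. The function name and the shortened lists are illustrative assumptions, not part of the original search workflow.

```python
def build_ts_query(*groups):
    """Join terms within a group with OR and join the groups with AND."""
    clauses = []
    for terms in groups:
        quoted = " OR ".join(f'"{term}"' for term in terms)
        clauses.append(f"({quoted})")
    return "TS = (" + " AND ".join(clauses) + ")"


# Abbreviated term lists for illustration; the full lists appear in the query above.
ai_terms = ["Artificial Intelligence", "Deep Learning", "Machine Learning"]
fusion_terms = ["Multimodal", "Fusion", "Data Fusion"]
ad_terms = ["Alzheimer", "Alzheimer's Disease"]

query = build_ts_query(ai_terms, fusion_terms, ad_terms)
print(query)
```

Keeping the term groups as separate lists makes it easier to audit which synonyms were searched and to rerun the search with an extended vocabulary.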

Data inclusion criteria

The inclusion criteria for studies in the database were defined as follows: (1) published in English; (2) categorized as original research articles or review articles (including systematic reviews); (3) published on or before 31 December 2024; and (4) focused on multimodal AI research in the context of AD. Studies meeting all these criteria were included in the analysis, while those failing to meet any were excluded, as illustrated in Figure 1.

Criteria and flowchart for inclusion and exclusion.

A total of six papers were excluded for not being classified as articles or reviews, and 41 were excluded for not being indexed in SCI or SSCI. Additionally, 330 papers were excluded for being irrelevant to the research topic, based on the following predefined exclusion criteria:

(1) Non-multimodal studies: Excluded if they utilized only a single data modality (e.g. MRI alone, genetic data alone, or linguistic data alone) without explicit descriptions of “modality fusion,” “multimodal integration,” or equivalent operations. This also included studies applying multiple analytical methods to a single modality (e.g. using different algorithms to analyze the same MRI dataset).

(2) Non-core AD studies: Excluded if the primary research focus was not on AD, such as studies in which AD appeared only as an auxiliary or secondary validation example rather than the main subject of investigation.

(3) Non-AI-driven studies: Excluded if no AI methods—such as machine learning or deep learning—were used at any stage of the analytical workflow. That is, studies were excluded when all key processes (e.g. feature extraction, modality fusion, and decision modeling) were performed solely through manual rules or traditional statistical techniques without any learning-based algorithms.

To enhance the objectivity and consistency of screening, two researchers independently evaluated each article against the predefined criteria. Discrepancies were resolved through discussion with a third researcher, ensuring consensus. This process guaranteed the reproducibility and transparency of the screening workflow.

Ultimately, 234 records were extracted, each containing the abstract, title, authors, keywords, country/region, institution, journal, and references. The data from these 234 publications were exported as “plain text files” for bibliometric analysis using VOSviewer (version 1.6.19), CiteSpace (version 4.3.R1), and the bibliometrix package (version 4.3.0) in R (version 4.3.3). The study examined the following publication characteristics: countries/regions, journals, institutions, references, and keywords. Additionally, the h-index, a widely recognized metric for assessing the research productivity and academic impact of scholars, 19 was used to analyze both research productivity and academic influence in this study.
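For readers unfamiliar with the h-index, its standard definition is the largest number h such that h publications have each received at least h citations. The short sketch below implements that definition. The citation counts are made-up values for illustration and do not come from the dataset analyzed here.

```python
def h_index(citations):
    """Return the largest h such that at least h papers have >= h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


# Made-up citation counts for one hypothetical journal or institution.
example_counts = [120, 45, 30, 9, 7, 6, 2, 0]
print(h_index(example_counts))  # 6
```

Because the measure depends on both the number of papers and their citations, a source with few but highly cited papers can have a lower h-index than its total citation count might suggest.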

Bibliometric data analysis

VOSviewer is a software tool that visualizes co-occurrence relationships among authors, keywords, citations, and other bibliometric elements. In VOSviewer visualizations, terms closer to each other are categorized into the same cluster. The size of a node represents the frequency of term occurrence, while the distance between nodes indicates the strength of their association. 20 This study used VOSviewer (version 1.6.19) to cluster keywords for further analysis.
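The raw input to a keyword co-occurrence map is a count of how often each keyword appears and how often each pair of keywords appears in the same paper. The sketch below computes these two quantities from toy records. It is only an illustration of the counting step: VOSviewer additionally normalizes link strengths, lays out the map, and assigns clusters, none of which is reproduced here, and the function name and records are assumptions.

```python
from collections import Counter
from itertools import combinations


def keyword_cooccurrence(records):
    """Count keyword frequencies and how often each keyword pair shares a paper."""
    occurrences = Counter()
    links = Counter()
    for keywords in records:
        # Normalize case and drop duplicates so each paper counts a keyword once.
        unique = sorted({k.strip().lower() for k in keywords if k.strip()})
        occurrences.update(unique)
        links.update(combinations(unique, 2))
    return occurrences, links


# Toy keyword lists standing in for three papers (not real records).
toy_records = [
    ["Deep Learning", "MRI", "Classification"],
    ["MRI", "PET", "deep learning"],
    ["Machine Learning", "MRI"],
]
occurrences, links = keyword_cooccurrence(toy_records)
print(occurrences["mri"])                # 3
print(links[("deep learning", "mri")])   # 2
```

In the resulting map, the occurrence count would drive node size and the pair count would drive link strength.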

The bibliometrix package in RStudio enables comprehensive bibliometric analysis. By examining aspects such as the annual publication volume, the number of publications from various countries and institutions, and journal distribution, bibliometrix reveals key research hotspots, developmental trends, and leading contributors in multimodal technologies for AD. These insights provide a robust foundation for understanding the knowledge structure of this research domain and identifying future directions.

CiteSpace utilizes co-citation analysis and pathfinding algorithms to represent the evolution of a specific knowledge domain visually. By constructing a scientific knowledge map, it highlights relationships among publications and illustrates the historical research trajectory, current research landscape, and emerging topics in the field. This study applied CiteSpace for burst detection analysis of keywords to identify research hotspots and trending topics across different time periods, thereby facilitating a comprehensive analysis of research trends. 21

Results

Annual publication volume analysis

As shown in Figure 2(a), the annual publication volume on the application of multimodal AI in AD research has followed a significant upward trend since 2017. Between 2017 and 2019, the number of publications remained relatively low, indicating that the field was in its early stages. From 2020 onward, growth in publication volume accelerated, with notable increases in 2022 and 2023. This growth reflects the continuous development of multimodal AI technologies and their expanding applications in the AD domain. The increasing academic interest in this technology suggests that research output in this field is likely to grow further in the coming years, potentially driving breakthroughs in AD diagnosis, classification, disease progression prediction, and biomarker discovery.

Trends and characteristics of multimodal artificial intelligence (AI) research in Alzheimer’s disease from 2017 to 2024: (a) annual scientific production; (b) modal combination types; (c) fusion network architecture designs; and (d) clinical application scenarios.

Analysis of national scientific output and collaboration networks

According to the visualization of the bibliometric data (Figure 3), research on multimodal AI in AD is strongly concentrated in a few regions. China and the United States dominate publication volume, with noticeably deeper color markers on the map than other countries, indicating the highest research output in these two nations. South Korea, Spain, the United Kingdom, India, and Egypt follow closely, with progressively lighter shading reflecting a sequential decrease in research productivity. Regarding international collaboration, China and the United States maintain relatively strong cooperation in this field, with 10 recorded collaborations. China and the United Kingdom have collaborated eight times, as have South Korea and Egypt, while Spain and Egypt have collaborated six times. These relationships illustrate the interactive and cooperative efforts of the global scientific community in advancing AD research through multimodal AI.

Distribution of publications by countries and regions.

Journal analysis

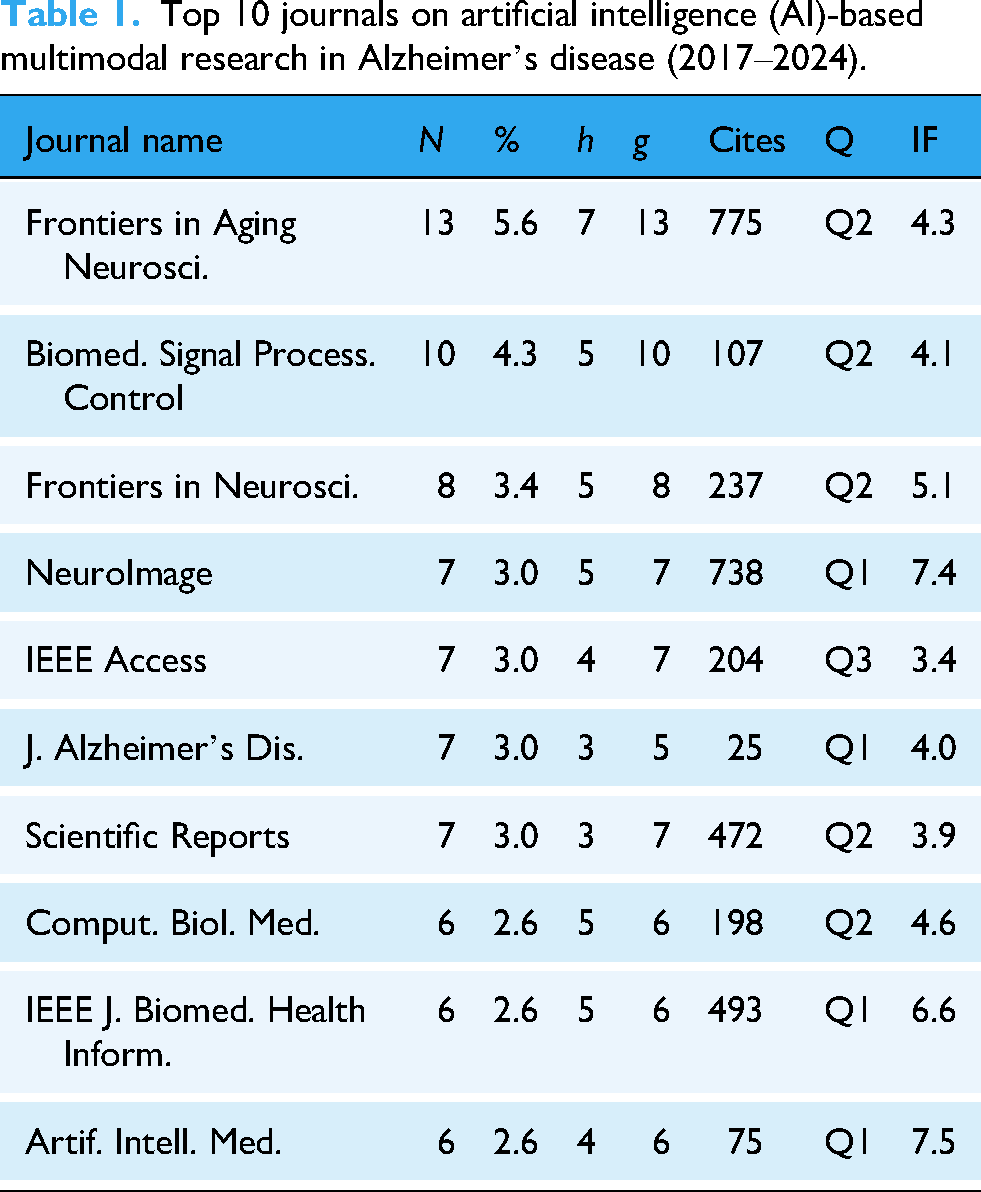

Based on the data presented in Table 1, it is evident that the core journals in the field of AD research using multimodal AI exhibit a strong interdisciplinary nature. Frontiers in Aging Neuroscience leads with 13 publications (5.6% of the total) and has been cited 775 times (h-index = 7), highlighting its prominent role in neurodegenerative disease research. Its high publication and citation rates reflect the impact of the open-access model in promoting multidisciplinary research. In contrast, NeuroImage has published only seven papers (3.0% of the total) but accumulated 738 citations (h-index = 5), underscoring its significant influence in the intersection of brain imaging and AI. Neuroscience journals such as Frontiers in Neuroscience and the Journal of Alzheimer’s Disease primarily focus on the clinical translation of multimodal data. Meanwhile, engineering and information science journals, including the IEEE Journal of Biomedical and Health Informatics and Artificial Intelligence in Medicine, emphasize algorithmic innovations in AI, representing the high academic barriers in technology-driven research. Notably, Biomedical Signal Processing and Control and Computers in Biology and Medicine, as leading journals in the biomedical engineering field, demonstrate a moderate publication volume and citation count. This reflects the practical orientation of signal processing technologies in facilitating the early diagnosis of AD.

Top 10 journals on artificial intelligence (AI)-based multimodal research in Alzheimer’s disease (2017–2024).

Institutional analysis

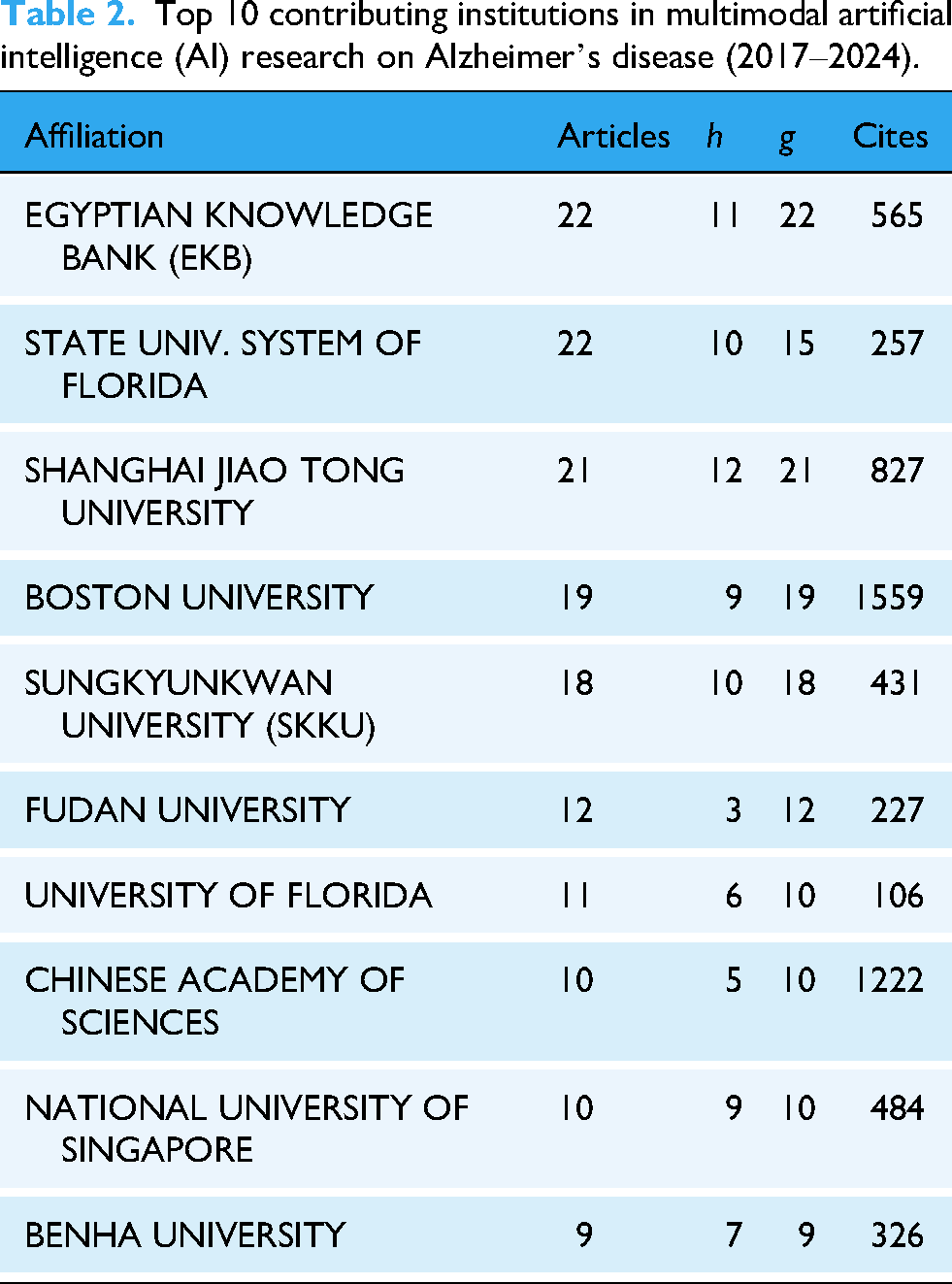

As shown in Table 2, the top 10 institutions have made significant academic contributions to the field of multimodal AI research on AD, as revealed by the bibliometric analysis. The Egyptian Knowledge Bank (EKB) and the Florida State University System lead in the number of publications. At the same time, Shanghai Jiao Tong University has shown remarkable performance in citations and academic impact, underscoring its significant position in this field. With its substantial total citations, Boston University has emerged as one of the most academically influential institutions. Although Sungkyunkwan University (SKKU) and Fudan University have relatively fewer publications, they maintain strong citation records, indicating the sustained impact of their research. Overall, these institutions’ research output and citation performance reflect their extensive participation and significant contributions to the advancement of multimodal AI research in AD, particularly in driving academic progress and enhancing the field’s influence.

Top 10 contributing institutions in multimodal artificial intelligence (AI) research on Alzheimer’s disease (2017–2024).

Citation analysis

The analyzed papers in this study have been cited 7405 times, with the top 10 most cited references listed as shown in Table 3. The most cited paper, “Multimodal Classification of Alzheimer’s Disease and Mild Cognitive Impairment,” 22 has received 60 citations. It proposed a multimodal classification approach integrating MRI, FDG-PET, and CSF biomarkers to enhance the early diagnosis of AD and mild cognitive impairment (MCI). An early fusion strategy was adopted to merge features from the three modalities, followed by classification using a kernel-combined multimodal method. Support vector machine (SVM) served as the classifier, with performance evaluated via 10-fold cross-validation. The results showed that the proposed method achieved a classification accuracy of 93.2% for distinguishing AD patients from healthy controls, outperforming the best single-modality result of 86.5%. For MCI versus healthy controls, the multimodal approach reached an accuracy of 76.4%, again significantly higher than single-modality methods. This study highlighted the advantages of multimodal data fusion in the early diagnosis of AD and MCI, particularly in enhancing sensitivity and specificity. The proposed multimodal approach demonstrated excellent performance, illustrating that the combination of MRI, PET, and CSF biomarkers provides complementary information, offering a more precise diagnostic tool for early detection.
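To make the idea of a kernel-combined multimodal classifier more concrete, the sketch below builds one kernel (Gram) matrix per modality and merges them into a single kernel by a weighted sum. This is an assumption about the general form of kernel combination, not a reproduction of the cited study: the linear kernel, the weights, the feature dimensions, and the random data are all illustrative.

```python
import numpy as np


def linear_kernel(features):
    """Gram matrix of inner products between subjects for one modality."""
    return features @ features.T


def combine_kernels(kernels, weights):
    """Weighted sum of per-modality kernels; weights are non-negative and sum to 1."""
    weights = np.asarray(weights, dtype=float)
    if np.any(weights < 0) or not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights must be non-negative and sum to 1")
    return sum(w * k for w, k in zip(weights, kernels))


rng = np.random.default_rng(0)
n_subjects = 20
# Synthetic stand-ins for MRI, FDG-PET, and CSF feature matrices (not real data).
mri = rng.normal(size=(n_subjects, 10))
pet = rng.normal(size=(n_subjects, 10))
csf = rng.normal(size=(n_subjects, 3))

kernels = [linear_kernel(x) for x in (mri, pet, csf)]
combined = combine_kernels(kernels, weights=[0.4, 0.4, 0.2])
print(combined.shape)  # (20, 20)
```

A combined matrix of this kind can be passed to an SVM that accepts precomputed kernels (for example, scikit-learn's `SVC(kernel="precomputed")`), with the modality weights tuned inside the cross-validation loop.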

Top 10 most locally cited references in multimodal artificial intelligence (AI) research on Alzheimer’s disease (2017–2024).

Keyword co-occurrence network analysis

The existing literature contains 1026 keywords. The selected keywords, drawn from the abstracts, titles, and keyword fields of the papers retrieved from the WOS database, were analyzed with VOSviewer, which grouped them into four distinct clusters. The keyword co-occurrence relationships in multimodal AI research on AD are visualized in Figure 4.

Keywords co-occurrence overlay visualization map.

Cluster 1. Clinical application scenarios (green): This cluster represents the application domains of research, with core keywords including “classification,” “diagnosis,” “prediction,” and “mild cognitive impairment (MCI).” It indicates that the primary focus of current studies is on early diagnosis, disease classification, and prediction using AI technologies.

Cluster 2. Multimodal AI methods (deep learning; blue): Keywords such as “deep learning,” “CNN,” and “feature representation” are prominent in this cluster, emphasizing the central role of deep learning techniques in disease classification.

Cluster 3. Multimodal AI methods (machine learning; red): This cluster highlights the application of traditional machine learning methods, with core keywords including “machine learning” and “feature fusion.” Despite the widespread adoption of deep learning, conventional machine learning algorithms like SVMs and random forests (RFs) still play a significant role in disease diagnosis.

Cluster 4. Multimodal data (yellow): The yellow region focuses on the various modalities relevant to multimodal AI, particularly “MRI,” “FDG-PET,” and “structural MRI.” Additionally, terms like “multimodal classification” are frequently observed, indicating the application of these modalities in disease diagnosis. This cluster also incorporates keywords related to integrating multimodal data with AI techniques, including CNNs and SVMs, showcasing the critical role of multimodal AI in processing and analyzing diverse data sources.

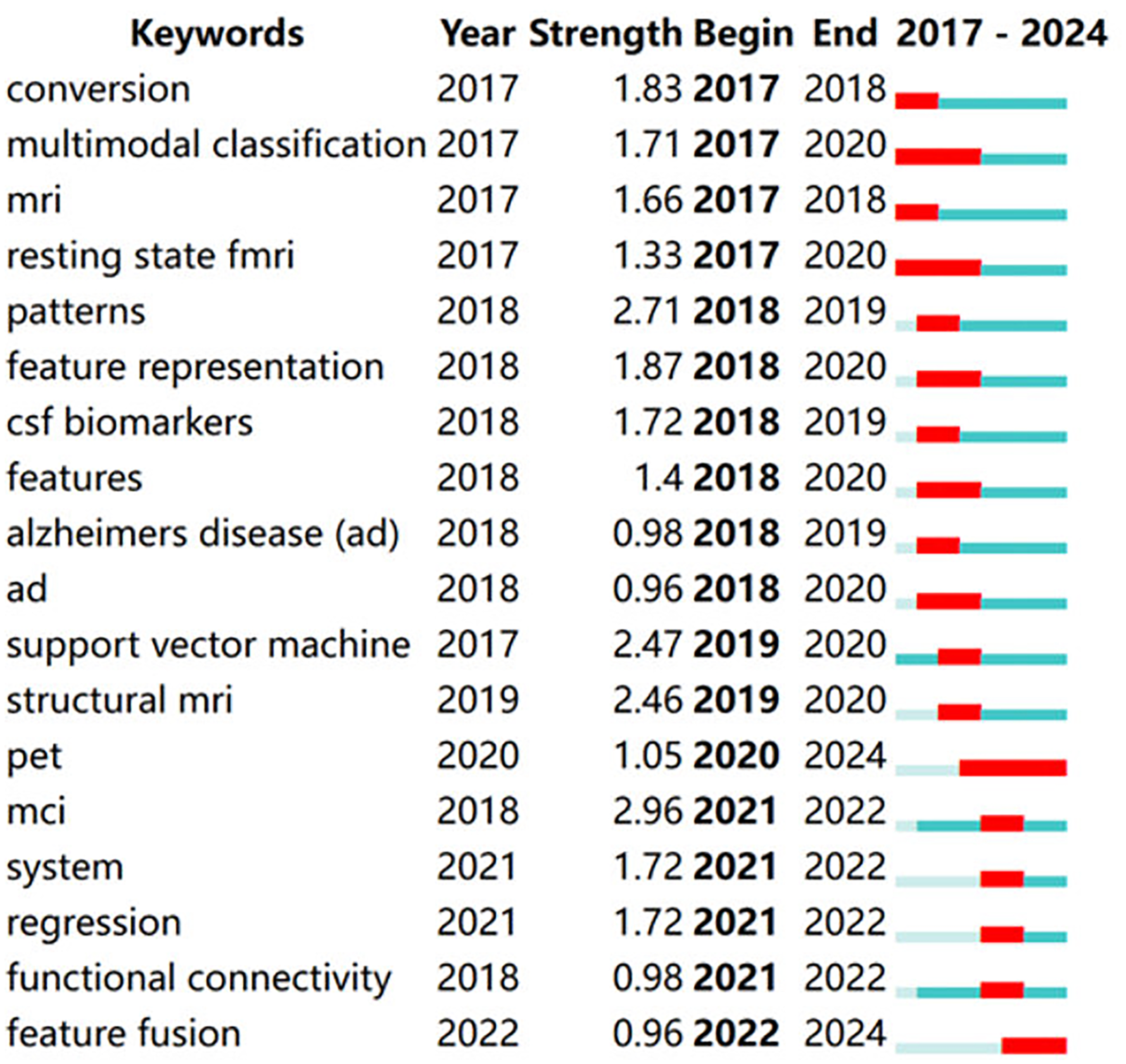

Emerging keyword analysis

Although VOSviewer effectively visualizes keyword co-occurrence, it is limited in showing how keyword prominence changes over time: it does not indicate when a keyword emerges, when it fades, or when it bursts. To address this limitation, we used CiteSpace to extract all keywords and focused on the 18 keywords with the strongest citation bursts (Figure 5) to uncover research trends and emerging hotspots in this field. Keywords such as “random forest,” “neural network,” “tau,” “progression,” “explainable AI,” “multimodal learning,” and “cerebrospinal fluid” have experienced citation bursts at different periods. This pattern reflects the increasing application of machine learning and deep learning techniques, such as RF and neural networks, in AD research in recent years. Additionally, there has been a growing emphasis on studying biomarkers like tau proteins and disease progression. The rise of explainable AI and multimodal learning indicates that researchers are focused not only on model performance but also on model interpretability and on integrating multimodal data to enhance the diagnosis of AD and predict disease progression.

Top 18 keywords with the strongest citation bursts.

Multimodal data characteristics

Multimodal data fusion has emerged as a crucial research direction, offering a more comprehensive understanding of disease mechanisms, predicting disease progression, and formulating personalized treatment plans by integrating different data types. For instance, combining genetic data with imaging data can reveal how genetic variations influence brain structure while integrating biomarkers with cognitive data can help assess early manifestations of the disease. This multimodal approach significantly enhances diagnostic accuracy and treatment effectiveness. The commonly used data modalities mainly include the following:

Clinical application scenarios

Multimodal AI in AD research can be applied to several key clinical scenarios:

Fusion network architectures

This study involves five types of multimodal fusion architectures: early fusion, intermediate fusion, late fusion, adaptive fusion, and hybrid fusion. Only original studies that explicitly implemented multimodal fusion models were analyzed when classifying these architectures; review papers were not included in this methodological categorization. The structures and characteristics of these fusion methods are shown in Table 4.

Comparison of fusion strategies in multimodal artificial intelligence (AI) for Alzheimer’s disease.

Early fusion

The architecture of early fusion is illustrated in Figure 6(a). In early fusion, multimodal data is integrated before the feature extraction layer, mapping heterogeneous data into a unified feature space for model processing. The core methods of early fusion include the following categories: (1) Concatenation23–35: This method directly concatenates data from different modalities along the feature dimension to generate a unified input vector. Its simplicity and efficiency make it advantageous while preserving the completeness of the original information. (2) Weighted averaging23,26,27,29,31,33,36,37: This approach assigns static weights to each modality to create a fused feature representation, highlighting the contributions of key modalities. (3) Tensor fusion (Liu et al., 38 Bi et al., 39 Odusami et al., 40 and Bi et al. 41 ): Tensor fusion methods use high-order tensor operations (such as outer products and Kronecker products) to model fine-grained interactions between modalities. This strategy effectively captures non-linear interactions and enhances the representation capacity of the features.
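The three early fusion operations described above can be sketched in a few lines of NumPy. The example is illustrative only: the feature matrices are random stand-ins, the function names are hypothetical, and appending a constant 1 before the outer product (so that unimodal terms are retained alongside pairwise products) is one common design choice rather than something specified by the reviewed studies.

```python
import numpy as np


def concat_fusion(modalities):
    """Concatenate per-modality feature vectors along the feature axis."""
    return np.concatenate(modalities, axis=-1)


def weighted_average_fusion(modalities, weights):
    """Static weighted average; all modalities must share one feature dimension."""
    weights = np.asarray(weights, dtype=float)
    weights = weights / weights.sum()
    stacked = np.stack(modalities, axis=0)         # (n_modalities, n_samples, d)
    return np.tensordot(weights, stacked, axes=1)  # (n_samples, d)


def tensor_fusion(a, b):
    """Per-sample outer product of two modalities, flattened to one vector."""
    # Assumption: a constant 1 is appended so unimodal terms survive the product.
    a1 = np.concatenate([a, np.ones((a.shape[0], 1))], axis=1)
    b1 = np.concatenate([b, np.ones((b.shape[0], 1))], axis=1)
    outer = np.einsum("ni,nj->nij", a1, b1)
    return outer.reshape(a.shape[0], -1)


rng = np.random.default_rng(0)
mri = rng.normal(size=(4, 8))  # synthetic features for 4 subjects
pet = rng.normal(size=(4, 8))

print(concat_fusion([mri, pet]).shape)                         # (4, 16)
print(weighted_average_fusion([mri, pet], [0.7, 0.3]).shape)   # (4, 8)
print(tensor_fusion(mri, pet).shape)                           # (4, 81)
```

The output shapes show the trade-off: concatenation grows linearly with the number of features, weighted averaging keeps the dimension fixed, and tensor fusion grows multiplicatively, which is why it usually needs dimensionality reduction first.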

Fusion network architectures for Alzheimer’s disease multimodal data integration: (a) early fusion; (b) intermediate fusion; (c) late fusion; (d) adaptive fusion; and (e) hybrid fusion.

From the perspective of data modalities, studies24–27,31,33,35,36,38,40,42–51 have utilized imaging data (e.g. MRI and PET) for multimodal fusion. Among these, studies24–26,36,38,40,42–45,47–50 used network-based approaches for fusion, employing CNNs and their variants, such as Inception-ResNet, ResNet-50, and mobile vision transformer. For example, ResNet-50, with its deep network architecture, can extract higher-level and more abstract features from imaging data, providing valuable insights for AD diagnosis and analysis. Some studies (Hojjati et al., 27 Dachena et al., 29 Gullett et al., 31 Chen et al., 33 Nan et al., 35 Bi et al., 41 Ismail et al., 46 and Shukla et al. 51) also applied network-based fusion but introduced innovations and adjustments in their network architecture and fusion methods based on their specific research objectives. For instance, some employed ensemble models to integrate multiple submodels, leveraging their advantages. Others used logistic regression (LR) models to further optimize classification and prediction based on fused data. Additionally, studies23,28–30,32,34,37,39,41,52–57 fused imaging data with other modalities, such as biomarkers, clinical data, and cognitive and behavioral test data. For example, combining biomarker data (e.g. blood or CSF markers) with imaging data reflecting structural and functional brain changes can provide comprehensive insights into AD diagnosis and progression. The most commonly used models in these studies include CNNs, RNNs, and their variants (e.g. gated recurrent units (GRUs), long short-term memory (LSTM)), SVMs, and RF. CNNs are effective for feature extraction from imaging data, RNNs excel in analyzing temporal patterns from behavioral and cognitive test data, and traditional machine learning algorithms like SVMs and RF are used for classification and prediction based on fused data. Some studies (Jemimah et al. 58 and Zuo et al. 59) focused on modalities other than imaging data. For instance, Jemimah et al. 58 analyzed other types of data, such as motion data from depth cameras, force plates, and interface boards, to identify AD-related patterns. Zuo et al. 59 utilized genetic data for model construction, investigating the genetic mechanisms and prediction methods for AD.

Regarding learning methods, studies27–31,33–35,37,39,41,51–55,57 applied traditional machine learning algorithms, including SVMs, RF, decision trees (DTs), LR, and k-nearest neighbors (KNN). Various feature selection techniques, such as Fisher score, greedy search, and genetic algorithms, were used to select informative features and enhance model performance. Multimodal time-series analysis, radiomics, and genetic-imaging data fusion were also commonly applied. In contrast, studies23–26,32,36,38,40,42–50,55,56 employed deep learning methods, including CNNs, RNNs, GRUs, deep belief networks (DBNs), and LSTMs. Advanced multimodal fusion techniques such as image fusion (MRI-PET), feature concatenation, and transfer learning were also adopted to improve model performance. Some studies further applied techniques like quantum dynamic optimization, wavelet transform, and ResNet architectures to enhance accuracy and robustness. Jemimah et al. 58 integrated machine learning with deep learning by combining a constrained deep learning model (c-Diadem) with KEGG pathway constraints while also using SHAP to interpret feature importance, offering novel approaches for AD research.

In terms of clinical applications, studies23–30,32–35,37–39,41,44–49,51–57 focused on early diagnosis of AD, using multimodal data fusion and advanced learning methods to detect symptoms at an early stage for timely treatment and intervention. Studies23,27,28,30–32,34,36,37,41,44–46,55,56,58 addressed the prediction of AD progression, aiming to predict disease progression trends through multimodal data analysis to support personalized treatment planning. Studies40,42,47,48,50,53,55 focused on the classification of AD stages, dividing AD into different stages to aid physicians in tailoring treatment plans. Other studies30,38–40,42,43,48,50,55 were dedicated to the diagnosis of AD, enhancing diagnostic accuracy through multimodal data and machine learning models. Furthermore, studies34,54,55,58 focused on biomarker research, identifying biomarkers indicative of AD progression to support diagnosis and monitoring. Bi et al. 41 and Jemimah et al. 58 contributed to pathological mechanism research, exploring the molecular and cellular mechanisms of AD to establish a theoretical basis for therapeutic development. Hall et al. 57 investigated personalized interventions, aiming to develop individualized treatment and intervention plans using multimodal data, thereby improving therapeutic outcomes and quality of life for patients.

Despite its advantages, early fusion still faces challenges when dealing with high-dimensional data, redundancy, and data quality issues. To enhance the effectiveness of early fusion, careful design in feature selection and dimensionality reduction is necessary to ensure that the fused data sufficiently represents the key information from each modality. In AD research and applications, early fusion has demonstrated significant value in supporting diagnosis and prediction, especially when integrating imaging data, clinical data, and biomarkers.
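As a concrete illustration of the feature-level concatenation that early fusion relies on, the following minimal Python sketch standardizes each modality and joins the per-subject vectors into one input. The function names, the toy values, and the choice of z-score scaling are assumptions made for illustration and are not taken from any reviewed study; feature selection and dimensionality reduction, which the text above identifies as necessary, are deliberately left out.

```python
from statistics import mean, pstdev


def zscore_columns(rows):
    """Standardize each feature column to zero mean and unit variance."""
    cols = list(zip(*rows))
    # A constant column has zero spread; fall back to 1.0 to avoid division by zero.
    stats = [(mean(c), pstdev(c) or 1.0) for c in cols]
    return [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in rows]


def early_fusion(*modalities):
    """Concatenate standardized per-subject feature vectors from all modalities."""
    scaled = [zscore_columns(m) for m in modalities]
    n = len(scaled[0])
    if any(len(m) != n for m in scaled):
        raise ValueError("all modalities must cover the same subjects")
    return [[v for m in scaled for v in m[i]] for i in range(n)]


# Hypothetical toy data: 3 subjects, 2 imaging features and 1 clinical score.
imaging = [[1200.0, 0.8], [1100.0, 0.6], [1000.0, 0.4]]
clinical = [[29.0], [25.0], [21.0]]
fused = early_fusion(imaging, clinical)
print(len(fused), len(fused[0]))
```

Scaling before concatenation keeps a modality with large raw values (for example, volumes in cubic millimetres) from dominating one with small values, which is one simple way of addressing the data quality concern raised above.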

Intermediate fusion

The architecture of intermediate fusion, as shown in Figure 6(b), involves merging data after feature extraction but before the decision layer. In this approach, different modalities interact and exchange information within the intermediate layers of the model. The main strategies for intermediate fusion include: (1) Joint representation learning methods59–63: These methods generate unified cross-modal representations through shared parameters or adversarial learning, creating low-dimensional, compact embeddings that reduce heterogeneity and enhance model robustness. (2) Cross-modal attention mechanisms64–69: By dynamically adjusting attention weights, these methods capture dependencies between modalities, adaptively focusing on key cross-modal relationships while preserving modality-specific information. (3) Multimodal recurrent network methods13,70–72: These methods apply temporal models to process dynamic multimodal data, fusing features within recurrent units. They are particularly effective for capturing disease progression trajectories in longitudinal studies. (4) Hybrid architecture fusion methods73–77: Combining heterogeneous networks such as CNNs and transformers, these approaches integrate multi-level features by leveraging complementary strengths, achieving more comprehensive feature fusion. These approaches effectively retain the individual characteristics of each modality while ensuring meaningful information exchange.
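To make the cross-modal attention strategy more tangible, the sketch below applies scaled dot-product attention so that feature tokens from one modality attend over tokens from another, after which the attended features are concatenated with the original ones. It is a simplified illustration under stated assumptions: the token values are invented, the names `cross_attention` and `intermediate_fusion` are hypothetical, and the learned query, key, and value projections used in practice are omitted.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product attention: each query attends over the other modality."""
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append(
            [sum(wi * v[j] for wi, v in zip(w, values)) for j in range(len(values[0]))]
        )
    return outputs, weights


def intermediate_fusion(mri_tokens, pet_tokens):
    """Fuse after feature extraction: MRI tokens query PET tokens."""
    attended, weights = cross_attention(mri_tokens, pet_tokens, pet_tokens)
    # List concatenation keeps the modality-specific MRI features next to
    # the PET information selected by the attention weights.
    fused = [m + a for m, a in zip(mri_tokens, attended)]
    return fused, weights


# Hypothetical extracted features: 2 MRI tokens and 3 PET tokens, each of size 2.
mri_tokens = [[1.0, 0.0], [0.0, 1.0]]
pet_tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
fused, weights = intermediate_fusion(mri_tokens, pet_tokens)
```

Each row of `weights` sums to one and indicates how strongly a given MRI token draws on each PET token, which is the kind of quantity attention-based studies inspect when discussing interpretability.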

Based on the data modalities used in the studies, research works12,59–62,66,67,71,74,76,78–103 utilized multimodal imaging data such as MRI, PET, and DTI as input, employing networks like 3D-CNN, ResNet, and vision transformer to extract high-order features. For instance, Zuo et al. 59 proposed the CT-GAN model, which integrates brain network features from fMRI and DTI using a swapping bi-attention mechanism. Additionally, Lu et al. 91 utilized a multimodal and multiscale deep neural network to extract structural and functional features of the brain and their associations from MRI and FDG-PET imaging modalities. Studies13,14,63,64,68,69,72,73,75,77,79,99,104–143 integrated imaging data with other modalities such as genetic, clinical, and biomarker data. Notable examples include Qiang et al., 104 who constructed a dual attention network (DANMLP). This model used Patch-CNN to extract sMRI features, an MLP to encode APOE gene data, and positional and channel-wise self-attention for cross-modal alignment. El-Sappagh et al. 70 applied CNNs to process MRI data and BiLSTMs to model MMSE score sequences using multitask learning for AD progression prediction. In addition, Tabarestani et al. 116 proposed the kernelized tensorized multitask network (KTMnet) to simultaneously process MRI, PET, CSF, and cognitive scores, employing kernel mapping and tensor decomposition to achieve high-order feature interactions. Studies129,132,144–149 explored non-imaging modalities such as biomarkers, speech, and eye-tracking data. For instance, Ilias and Askounis 149 proposed a method that combines BERT for text processing and vision transformer (ViT) for analyzing speech spectrograms, capturing the associations between linguistic and acoustic features through co-attention. Yin et al. 144 designed an Internet of Things (IoT)-based architecture that utilizes eye-tracking nodes and machine learning algorithms on a cloud platform to achieve early screening of AD.

Based on the applied learning methods, studies14,83–86,88,97,99,100,102,107,108,112–114,120,122,123,125,130,135–137,140,142,144 employed traditional machine learning approaches. For instance, Castellazzi et al., 83 Kim and Lee, 107 and Mehdipour Ghazi et al. 112 utilized shallow models such as SVM, RF, and extreme learning machines (ELMs). Specifically, Kim and Lee 107 proposed a multi-scale hierarchical extreme learning machine (MSH-ELM) that integrates sMRI, FDG-PET, and CSF features. AlMohimeed et al. 140 applied particle swarm optimization (PSO) to select cognitive sub-scores and improved classification performance using a multi-level stacked ensemble model.

On the other hand, studies12–14 employed deep learning methods, including CNN, RNN, GRU, DBN, and LSTM networks. These studies also applied multimodal fusion techniques such as image fusion (e.g. MRI-PET), feature concatenation, and transfer learning to enhance the models’ capability in processing multimodal data. Optimization techniques like quantum dynamic optimization, wavelet transform, and ResNet architecture were introduced to improve model performance and accuracy. Morar et al. 13 proposed a highly accurate predictive model by integrating multimodal data and deep learning techniques, which can effectively predict cognitive test scores for AD patients. Miao et al. 74 combined machine learning and deep learning to propose a multimodal multiscale transformer fusion network for computer-aided diagnosis of AD. This model not only efficiently extracts local abnormal information related to AD but also learns the underlying representations of multimodal data, thereby significantly improving diagnostic accuracy.

In terms of clinical applications, studies64,65,67–69,72,76–79,81,82,87–91,93,95–97,100,101,104–109,111,112,115,120,121,123–129,132–134,136–145,147–149 focused on early diagnosis of AD using intermediate fusion methods. By integrating various data sources and fusion strategies, these studies aimed to detect potential pathological features at an early stage, providing a scientific basis for early intervention and treatment. Studies12–14,68,70,72,75,77,79,83,94,102,108,109,112–116,118,120,122,127,131,134,138,140,148 focused on AD progression prediction, employing multimodal data and appropriate models to capture dynamic changes during disease development. The goal was to support the development of personalized treatment plans and disease management strategies. Research works12,61,63,66,67,76,78,80–86,90,91,93,95,97,98,101,102,112,117,123,130,132,135–137,139,142,143,146 were dedicated to AD stage classification. By analyzing and extracting features of different disease stages, these studies accurately classified various stages of AD, providing deeper insights into disease progression. In the field of AD diagnosis, studies63,69,71,73,74,94,96,101,110,117,119,122,128,129,133,137,146,147 adopted comprehensive approaches that combined multiple data sources and fusion strategies. This facilitated improved diagnostic accuracy and reliability. Furthermore, studies13,64,73,95,105,107,111–113,116,120,122,127,134,135 concentrated on biomarker research. By integrating and analyzing multimodal data, these studies aimed to identify biomarkers associated with AD, providing novel biological targets for diagnosis, treatment, and further research.

Intermediate fusion methods utilize multi-level and dynamic feature interaction mechanisms, effectively preserving the unique characteristics of each modality (e.g. structural information from MRI and metabolic features from PET) while enabling in-depth exploration of cross-modal semantic relationships. Compared to early fusion, which involves simple feature concatenation, and late fusion, which relies on independent decisions, intermediate fusion achieves a more refined balance at the feature representation level. This approach provides a robust and interpretable solution for multimodal diagnosis of AD.

Late fusion

The architecture of late fusion is illustrated in Figure 6(c). Late fusion is a strategy that integrates multimodal information during the prediction phase of a model. Its core concept is to independently process each modality’s data and combine the results using flexible decision-making strategies. Compared to early and intermediate fusion, late fusion effectively preserves modality-specific information and reduces the complexity of aligning heterogeneous data. Based on different fusion strategies, late fusion can be categorized into the following approaches: (1) Voting mechanism150–153: This approach aggregates predictions from multiple unimodal models using majority voting or weighted voting strategies to generate the final decision. By independently training classifiers for each modality, it maximizes the retention of unique modality features and mitigates the impact of noise or misclassification from a single modality, thereby enhancing decision robustness. (2) Multiple kernel learning (MKL)11,154: In this method, multiple kernel functions are constructed to learn features from different modalities. By performing feature fusion in various kernel spaces, MKL improves the overall classification or prediction performance. (3) Stacking ensemble 155 : Utilizing the ensemble integration (EI) framework, local predictive models are trained separately from each modality of data, and the complementary and consensus information from multimodal data are integrated to predict the likelihood of patients with MCI progressing to dementia in the future.
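The voting mechanism described above can be sketched in a few lines of Python. The example assumes that each unimodal classifier has already produced class probabilities for one subject; the modality names, the probability values, and the modality weights are hypothetical and chosen only to show how majority voting and weighted voting can disagree.

```python
from collections import Counter


def majority_vote(labels):
    """Return the most common label; ties resolve to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


def weighted_soft_vote(probabilities, weights):
    """Average per-modality class probabilities using modality weights."""
    total = sum(weights.values())
    classes = list(next(iter(probabilities.values())))
    fused = {
        c: sum(weights[m] * p[c] for m, p in probabilities.items()) / total
        for c in classes
    }
    return max(fused, key=fused.get), fused


# Hypothetical outputs of three independently trained unimodal classifiers.
probabilities = {
    "mri": {"CN": 0.2, "MCI": 0.5, "AD": 0.3},
    "pet": {"CN": 0.1, "MCI": 0.3, "AD": 0.6},
    "clinical": {"CN": 0.3, "MCI": 0.4, "AD": 0.3},
}
hard_labels = [max(p, key=p.get) for p in probabilities.values()]
majority_label = majority_vote(hard_labels)

# Assumed weights, e.g. derived from each model's validation performance.
modality_weights = {"mri": 1.0, "pet": 2.0, "clinical": 1.0}
weighted_label, fused_probs = weighted_soft_vote(probabilities, modality_weights)
print(majority_label, weighted_label)
```

In this toy case, majority voting selects MCI because two of three models prefer it, whereas weighted voting selects AD because the more heavily weighted PET model is confident in that class. How the weights are set is therefore a substantive design decision rather than a detail.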

In terms of the data modalities used in the studies, research works11,150,154,156–159 primarily focused on multimodal fusion using imaging data, integrating sMRI, fMRI, and PET imaging, or incorporating MEG to enhance spatiotemporal resolution. Common fusion strategies include feature-level fusion (e.g. kernel canonical correlation analysis (KCCA) and fractal dimension analysis) and decision-level fusion (e.g. majority voting and multi-kernel SVM), with an emphasis on capturing the complementary information between imaging modalities. For example, Wang et al. 156 proposed a fusion strategy using KCCA to integrate sMRI and fMRI features. On the other hand, studies151,152,155,160–165 combined imaging data with non-imaging modalities, such as biomarkers (e.g. CSF), behavioral scales (e.g. MMSE), genetic information (e.g. ApoE4), or sociodemographic data, to enhance the clinical interpretability of models. For instance, Zhang et al. 163 introduced an FCRN network to extract imaging features from MRI/PET, which were then fused with clinical data processed using an MLP at the decision level. Zhang et al. 160 constructed a graph neural network (GNN) to combine sMRI/PET imaging features with phenotypic data, achieving personalized early diagnosis of AD. Meanwhile, studies153,166,167 explored non-imaging approaches, relying primarily on physiological signals (e.g. EEG and HRV), behavioral data (e.g. movement and speech), and biomarkers, focusing on the temporal dynamics or behavioral patterns. These studies can be broadly classified into spatiotemporal feature fusion and biomarker-driven approaches. In the spatiotemporal feature fusion category, Boudaya et al. 151 successfully implemented a hybrid machine learning model to integrate the time–frequency features of EEG and HRV, achieving a classification accuracy of 93.86% for MCI. Mohan Gowda et al. 153 combined a long-term recurrent convolutional network (LRCN) with an RF classifier to fuse video and sensor data for fall detection.

In terms of learning methods, studies11,152,155,157,159,165,168 primarily applied machine learning techniques, including ensemble learning, SVM, and traditional regression models, which are widely used in small-sample and heterogeneous data scenarios. For example, Cirincione et al. 155 employed an EI framework to fuse MRI, PET, and clinical data for predicting the conversion of MCI, achieving an AUC of 0.81. Similarly, in the context of deep learning, studies150,151,153,154,156,158,160,161,163,165,169 adopted advanced methods such as 3D CNN, GNNs, and federated learning to enhance the modeling of complex modalities. For instance, Zhang et al. 160 constructed a multimodal GNN that integrated graph structures from sMRI/PET with phenotypic information. Lakhan et al. 165 proposed the FDCNN-AS framework, utilizing federated learning to enable cross-institutional multimodal data fusion while ensuring data privacy. Additionally, Cheng et al. 161 converted one-dimensional fNIRS signals into two-dimensional Gramian angular fields (GAFs) and applied CNNs for end-to-end feature learning.
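The Gramian angular field transformation mentioned above converts a one-dimensional signal into a two-dimensional image that a CNN can process. The sketch below implements the commonly used summation variant: the signal is rescaled to [-1, 1], each value is mapped to an angle through the arccosine, and the image entry for a pair of time points is the cosine of the sum of their angles. Whether Cheng et al. 161 used the summation or the difference variant is not stated in this review, so the variant, the function name, and the toy signal are assumptions.

```python
import math


def gramian_angular_field(signal):
    """Summation-type Gramian angular field of a 1D signal."""
    lo, hi = min(signal), max(signal)
    if hi == lo:
        raise ValueError("signal must not be constant")
    # Rescale to [-1, 1]; clamp to guard against floating-point overshoot.
    scaled = [max(-1.0, min(1.0, 2.0 * (x - lo) / (hi - lo) - 1.0)) for x in signal]
    angles = [math.acos(v) for v in scaled]
    return [[math.cos(a + b) for b in angles] for a in angles]


# Hypothetical four-sample signal standing in for one fNIRS channel.
toy_signal = [0.0, 1.0, 2.0, 1.0]
gaf = gramian_angular_field(toy_signal)
for row in gaf:
    print([round(v, 3) for v in row])
```

The resulting matrix is symmetric and has one row and one column per time point, so temporal order is preserved along the diagonal while off-diagonal entries encode relationships between pairs of time points.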

In clinical applications, studies11,150,154,155,157–165 were primarily focused on the early diagnosis of AD, leveraging the complementary nature of imaging data and biomarkers. For example, El-Gamal et al. 158 combined structural MRI and 11C PiB PET for regional diagnosis, achieving a sensitivity of 96.77%. In the context of AD progression prediction, studies152–154,157,161,166,170 emphasized dynamic functional connectivity and the temporal changes of biomarkers. Chen et al. 159 demonstrated the predictive value of both static and dynamic features from resting-state fMRI for monitoring MCI conversion. Alty et al. 166 developed a smartphone application called TapTalk, which assesses AD risk by analyzing hand movement and speech patterns. Regarding AD stage classification, studies11,151,156 integrated neuroimaging and cognitive assessments. For instance, Wang et al. 156 applied KCCA to fuse sMRI and fMRI features for AD staging. Studies150,162,167 addressed the diagnosis of AD, while study 152 specifically investigated biomarkers. Additionally, Mohan Gowda et al. 153 applied multimodal techniques in the caregiving domain, introducing a multimodal fall detection system that combined visual and sensor data to enhance caregiving efficiency.

By integrating independent modeling with flexible decision-making, late fusion preserves modality-specific characteristics while balancing inter-modality complementarity. The voting mechanism is widely applied for its simplicity and efficiency, multi-kernel learning demonstrates advantages in handling heterogeneous data, and stacking ensemble methods leverage meta-learning to achieve high-level feature interactions. These approaches have shown significant advantages in improving the accuracy of AD diagnosis, advancing the understanding of pathological mechanisms, and optimizing caregiving practices. Future research should further explore integrating federated learning and explainability-enhancement techniques to accelerate the translation of multimodal AI into clinical diagnostic applications.

Adaptive fusion

The architecture of adaptive fusion is shown in Figure 6(d). Adaptive fusion allows models to achieve optimal integration based on information from different modalities. Its core concept involves adaptive weight adjustment or domain adaptation to optimize the fusion process. It enables the model to automatically learn which modalities or features are critical for the final prediction. Adaptive fusion strategies can be categorized into the following approaches: (1) Dynamic weight adjustment168–171: Methods such as attention mechanisms,168,171 Adaboost, 172 and boosting ensemble approaches 169 are used to dynamically allocate modality weights, optimizing feature contributions. This strategy offers considerable flexibility and robustness. (2) Cross-modal generation and imputation172–181: GANs and RNNs are employed to generate missing data dynamically, enhancing the completeness of multimodal data. This method effectively addresses the problem of modality missingness in clinical data collection, expanding the applicability of models. (3) Self-supervised learning and mutual information maximization (Fedorov et al. 182 ): Contrastive learning or maximizing mutual information can be applied to discover cross-modal consistency without requiring labeled data. This method enhances the model’s generalization ability and improves cross-modal consistency in learning.
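Dynamic weight adjustment can be illustrated with a small gating sketch in which each available modality receives a softmax weight and missing modalities are simply masked out. In a trained model the relevance scores would come from a learned gating or attention network; here they are supplied by hand, and the function name, modality names, and values are hypothetical. Unlike the generative approaches listed under (2), this sketch tolerates a missing modality by renormalizing the weights rather than by imputing the missing data.

```python
import math


def adaptive_fuse(embeddings, scores):
    """Weight available modality embeddings by a softmax over relevance scores.

    embeddings: dict mapping modality name to a feature vector, or None if missing.
    scores: dict mapping modality name to an unnormalized relevance score.
    """
    available = {m: e for m, e in embeddings.items() if e is not None}
    if not available:
        raise ValueError("at least one modality must be present")
    top = max(scores[m] for m in available)
    exps = {m: math.exp(scores[m] - top) for m in available}
    norm = sum(exps.values())
    weights = {m: exps[m] / norm for m in available}
    dim = len(next(iter(available.values())))
    fused = [sum(weights[m] * available[m][j] for m in available) for j in range(dim)]
    return fused, weights


# Hypothetical subject whose PET scan was not acquired.
embeddings = {"mri": [1.0, 0.0], "pet": None, "clinical": [0.0, 1.0]}
scores = {"mri": 0.0, "pet": 0.0, "clinical": 0.0}
fused, weights = adaptive_fuse(embeddings, scores)
print(fused, weights)
```

Because the weights are renormalized over the modalities that are actually present, the same fusion step can be applied to subjects with complete and incomplete data, which reflects the applicability argument made above for clinical data collection.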

From the perspective of the data modalities used, studies168,170,171,176–184 applied multimodal fusion using only imaging data, with a particular emphasis on addressing data missingness through GANs.172–174,180,181 These approaches effectively resolve data gaps by generating missing modalities. Additionally, studies168,171,176 utilized attention mechanisms to dynamically allocate weights for imaging data fusion, enhancing model interpretability and performance. Studies169,180,181,183,185 integrated imaging data with other modalities, such as biomarkers and clinical data. Specifically, Zhang et al. 169 and Hwang et al. 180 combined imaging and clinical data using feature augmentation strategies, employing various dimensional models or generative models to strengthen feature fusion. In contrast, Nguyen et al. 181 integrated imaging data, clinical data, and time-series data, leveraging the minimal RNN to accommodate missing data and to learn the relationships between modalities across different time points. Moreover, Chandrashekar et al. 186 did not use imaging data but instead leveraged genetic data and other modalities. They introduced the DeepGAMI model, which utilizes functional genomic interaction networks to connect data from different sources and integrates these modalities for comprehensive analysis through feature imputation and adaptive fusion.

In terms of learning methods, studies168,171–177,183–189 primarily applied deep learning approaches, utilizing architectures such as RNNs, CNNs, GANs, self-supervised learning, and deep generative models for multimodal data fusion. These methods emphasize end-to-end learning, enabling the automatic extraction of high-level cross-modal features while reducing reliance on manual feature engineering. Attention mechanisms, as demonstrated in studies,168,171,172 and shared latent spaces, as in the study by Martí-Juan et al., 183 were used to adaptively allocate the importance of different modalities. Meanwhile, studies169,170,178 integrated machine learning and deep learning techniques, employing deep learning models for feature extraction and generation and applying machine learning classifiers for decision-making. This hybrid approach formed an ensemble classification network, enhancing overall model robustness and accuracy.

In the context of clinical applications, studies151,153,155–157,162,164,165,169,170 focused on early diagnosis of AD, employing adaptive fusion strategies to effectively integrate data from different modalities. This approach significantly supports the early identification of AD, facilitating timely interventions. Regarding the classification of AD stages, studies168,170,172,182,186 applied adaptive fusion techniques to combine multiple modalities, achieving more accurate differentiation between various stages of AD. This enables the development of more targeted treatment plans. For the prediction of AD progression, studies177,180,181,183,186 utilized adaptive fusion strategies to dynamically capture the temporal relationships among multimodal data. This enhanced the accuracy of predicting disease progression, providing valuable insights for disease management. For the diagnosis of AD, studies170,171,173,175,178,179,185 employed adaptive fusion methods, which facilitated comprehensive information extraction from different modalities. This improved the accuracy and reliability of AD diagnosis. In the domain of biomarker research, Liu et al. 175 integrated multimodal data to identify potential biomarkers associated with AD. This approach offers new therapeutic targets and advances the understanding of the disease’s underlying mechanisms.

Adaptive fusion optimizes the multimodal data fusion process by automatically adjusting the weights of different modalities or using generative models to fill in missing data. This strategy demonstrates significant flexibility and robustness, enabling the model to autonomously learn the most suitable fusion approach based on the characteristics of the data. Through methods such as dynamic weight adjustment, cross-modal generation and imputation, joint learning, and multi-task optimization, adaptive fusion has shown remarkable application potential in AD research. It exhibits exceptional adaptability and practicality in scenarios involving missing or heterogeneous data.

Hybrid fusion

The architecture of hybrid fusion is illustrated in Figure 6(e). Hybrid fusion leverages the advantages of early, intermediate, and late fusion strategies to achieve complementary and synergistic multimodal data integration at different levels. The core architecture, as illustrated in the figure, primarily includes the following four modes: (1) Early + Intermediate fusion187,188: This approach integrates raw data during the low-level feature extraction phase (early fusion) while enhancing feature representation through cross-modal interactions at the intermediate level (intermediate fusion). By retaining fine-grained information from the original modalities and capturing complex dependencies through dynamic intermediate-layer interactions, this method significantly enhances model robustness. (2) Early + Late fusion 190 : In this approach, low-level features are fused early to preserve detailed information, followed by integrating multimodal classification results at the decision layer (late fusion). This strategy effectively balances local feature preservation with global decision optimization, offering strong biological interpretability. (3) Intermediate + Late fusion 191 : This method generates joint feature representations at the intermediate layer, while the late fusion stage employs generative models to address modality missingness. The key advantage of this approach lies in its end-to-end optimization of both the generation and classification processes, thereby enhancing clinical applicability. (4) Comprehensive hybrid fusion 192 : Characterized by its application of multi-stage strategies combining early, intermediate, and late fusion, this approach is well-suited for complex data scenarios. It flexibly handles heterogeneous data while ensuring feature complementarity and model generalization capability.

From the perspective of the data modalities used in the studies, approaches employing imaging and non-imaging multimodal data187–191 integrated MRI/PET with clinical, genetic, and cognitive score data. For instance, Rahim et al. 187 integrated 3D MRI imaging data, cognitive scores, and biomarker modalities using a hybrid architecture comprising 3D CNNs and bidirectional RNNs (BRNNs) to efficiently predict the progression of AD. In contrast, non-imaging multimodal studies 192 relied on longitudinal clinical data (e.g. MMSE and RAVLT) and demographic features. Through LSTM-based temporal modeling and ensemble learning, these approaches optimized predictive stability.

From the perspective of learning methods, studies188,190 integrated machine learning and deep learning approaches, using artificial neural networks (ANNs) or SVM to enhance feature interpretability while employing CNN to process high-dimensional imaging data, thereby balancing performance and clinical applicability. For instance, Tu et al. 188 expanded clinical features using geometric algebra, fused them with CNN-derived imaging features, and then input the results into an ANN for classification. Studies187,191–193 applied deep learning methods, utilizing end-to-end architectures such as transformers and LSTM-CNNs to automate feature extraction and fusion. Notably, Nguyen et al. 181 used a 3D-CNN combined with a BRNN to integrate longitudinal MRI data with baseline biomarkers, achieving an AUC of 0.96.

In clinical applications, hybrid fusion strategies have been widely utilized in various aspects of AD research. Studies187,188,194,195 focused on the early diagnosis of AD. By integrating multimodal data through hybrid fusion, these studies provided robust support for the early detection of AD, facilitating timely intervention and treatment during the initial stages of the disease. Studies187,190–192 applied hybrid fusion to predict AD progression. Leveraging this strategy, these studies captured disease progression trends more accurately, offering valuable insights for developing personalized treatment plans and disease management strategies. Balaji et al. 193 addressed the classification of AD stages. By fusing multimodal data through hybrid fusion, this study achieved precise classification of different AD stages, aiding clinicians in better understanding the disease’s progression and formulating targeted treatment plans.

Hybrid fusion leverages the advantages of early, intermediate, and late fusion strategies to achieve complementary and synergistic integration of multimodal data across multiple levels. This approach effectively preserves the detailed features of each modality while enhancing model robustness and performance through fusion at different stages, thereby improving the model’s adaptability to complex data patterns.

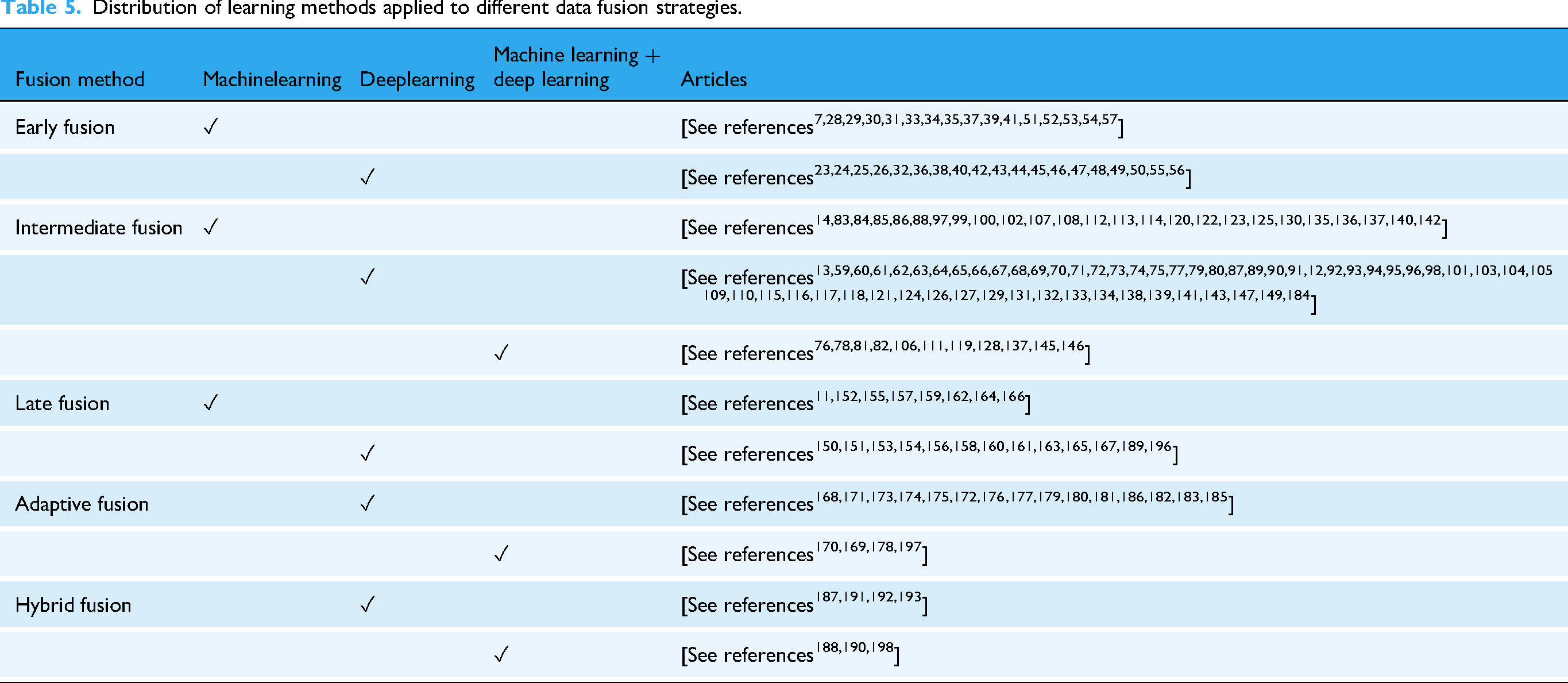

To better understand the distribution of fusion strategies and their associated learning methods, Table 5 categorizes the reviewed studies according to fusion type (early, intermediate, late, adaptive, and hybrid) and whether they employ machine learning, deep learning, or a combination of both.

Table 5. Distribution of learning methods applied to different data fusion strategies.

Discussion

Summary of current research status

This section provides an in-depth analysis of the current state of multimodal AI applications in AD research from three perspectives: data modalities used, fusion network architectures, and clinical application scenarios. Only original research articles that proposed or implemented multimodal AI models were included in this analytical section; review papers were not considered in these methodological and application-oriented analyses.

The characteristics of multimodal data are illustrated in Figure 2(b); integrating imaging data with other data types has increasingly become a mainstream approach. Specifically, the combination of multiple imaging modalities remains predominant, highlighting the significant role of imaging data in AD research. However, studies integrating imaging data with other data modalities, such as biomarkers, clinical data, and genetic data, are more prevalent. This reflects a growing trend towards multimodal fusion, which enables a more comprehensive extraction of disease-related information, thereby enhancing diagnostic accuracy and disease prediction capabilities. In contrast, the combination of non-imaging data remains relatively limited, potentially due to factors such as data heterogeneity and insufficient sample sizes. Overall, future research is expected to focus more on optimizing fusion strategies that integrate imaging and non-imaging data. For instance, combining imaging modalities with biomarker data could further improve model performance and enhance its clinical applicability.

The design of fusion network architectures is illustrated in Figure 2(c); intermediate fusion is the most widely applied fusion network architecture, primarily because it effectively captures cross-modal semantic associations while preserving the distinct characteristics of each modality. This approach provides more robust and interpretable solutions for the diagnosis and prediction of AD. Although early fusion offers advantages such as streamlined processes, it faces challenges in handling high-dimensional data, information redundancy, and data quality issues. Late fusion, on the other hand, focuses on integrating information at the decision-making stage, resulting in limited cross-modal interaction. Adaptive fusion and hybrid fusion demonstrate significant potential due to their capacity for dynamic integration and enhanced performance. However, their relatively limited application can be attributed to the high model complexity. With advancements in computational power and algorithm optimization, these fusion strategies are expected to gain broader adoption in the future.

Clinical application scenarios are illustrated in Figure 2(d); early diagnosis has garnered the most attention, with numerous studies focusing on detecting signs of AD before symptoms become apparent. This emphasis reflects the critical importance of early intervention in improving patient outcomes. MCI represents a key stage in the early diagnosis of AD, while CD is typically regarded as an earlier prodromal manifestation that precedes MCI. 194 As such, CD is implicitly encompassed within the AD progression continuum rather than analyzed as a separate category in our framework. In many of the included studies, multimodal AI models involved participants with early CD or MCI, allowing these models to capture preclinical cognitive changes that occur prior to a formal AD diagnosis. Accordingly, the integrated use of multimodal AI can effectively identify cognitive alterations that precede the clinical onset of AD, demonstrating its relevance across the continuum from early CD to overt AD. Beyond early diagnosis, research on AD progression prediction and stage classification is also substantial, contributing to the development of personalized treatment plans and enhancing therapeutic effectiveness. While studies on biomarkers are relatively fewer in number, they hold significant value, as identifying more effective biomarkers is crucial for early diagnosis, disease monitoring, and drug development. Other research areas, such as pathological mechanism exploration and personalized interventions, remain limited in the number of studies. However, they are of great importance for gaining a deeper understanding of AD pathogenesis and enhancing the precision of treatment strategies.

Limitations and challenges of existing research

Although multimodal AI has made significant progress in AD research, several limitations and challenges remain:

In terms of multimodal data characteristics, issues related to data quality and consistency are particularly prominent. Multimodal data from different sources often exhibit variations in acquisition equipment, processing methods, and annotation standards, leading to increased noise and bias that can compromise model performance and generalization capability. For instance, MRI devices from different hospitals may have varying parameters, resulting in inconsistent image quality, which hinders the model’s ability to accurately extract features during the learning process. Additionally, the lack of sufficient data poses a significant challenge, especially for rare diseases or specific AD subtypes where data scarcity limits model training and validation. This data insufficiency prevents the model from fully capturing the complex characteristics and underlying patterns of the disease.

From the perspective of fusion network architectures, each fusion approach has its own limitations. Early fusion requires extensive data preprocessing and struggles to handle high-dimensional data and information redundancy. Intermediate fusion, while capable of capturing cross-modal interactions, often involves high model complexity, prolonged training time, and strict requirements for modality alignment. Late fusion retains modality-specific information but lacks sufficient cross-modal interactions, limiting its ability to fully leverage complementary information. Although adaptive and hybrid fusion methods offer notable advantages, their complex model structures pose significant training challenges, requiring substantial computational resources and domain expertise. Additionally, in practical applications, the interpretability of these models remains a concern, making it difficult to intuitively understand the rationale behind model decisions.
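The structural difference between early, intermediate, and late fusion can be sketched in a few lines. The example below is a schematic under simplifying assumptions, not an implementation drawn from any included study: the function names are hypothetical, the modality-specific encoders of intermediate fusion are reduced to fixed linear projections followed by a ReLU, and late fusion is reduced to a weighted average of per-modality predicted probabilities.

```python
import numpy as np

def early_fusion(modalities):
    """Early fusion: concatenate raw feature matrices along the feature axis.

    modalities: list of arrays, each of shape (n_subjects, n_features_m)
    """
    return np.concatenate(modalities, axis=1)

def intermediate_fusion(modalities, projections):
    """Intermediate fusion: encode each modality separately, then concatenate.

    projections: list of weight matrices, one per modality, standing in for
    learned modality-specific encoders (an assumption of this sketch).
    """
    embeddings = [np.maximum(x @ w, 0.0) for x, w in zip(modalities, projections)]
    return np.concatenate(embeddings, axis=1)

def late_fusion(probabilities, weights=None):
    """Late fusion: weighted average of per-modality predicted probabilities.

    probabilities: list of arrays, each of shape (n_subjects,)
    weights: optional per-modality weights; normalized to sum to 1
    """
    probs = np.stack(probabilities, axis=0)  # (n_modalities, n_subjects)
    if weights is None:
        weights = np.ones(len(probabilities))
    weights = np.asarray(weights, dtype=float)
    weights = weights / weights.sum()
    return np.tensordot(weights, probs, axes=1)  # (n_subjects,)
```

In this sketch, early fusion passes every raw feature to a single downstream model, which is why dimensionality and redundancy grow quickly. Late fusion never lets the modalities interact before the decision. Intermediate fusion sits between the two, at the cost of one encoder per modality and subject-aligned inputs.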

Furthermore, the clinical translation of multimodal AI in AD research faces significant challenges. Most current studies remain at the laboratory stage, with a considerable gap between research findings and practical clinical applications. Clinicians often have limited understanding and acceptance of complex AI models, and the reliability and safety of these models require further validation in real-world clinical settings. For instance, the model’s performance may vary across different ethnicities and age groups. Ensuring that the model functions reliably and accurately across diverse clinical scenarios remains a critical issue that needs to be addressed.
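One simple way to examine whether performance varies across demographic groups is to report a metric separately for each group. The sketch below computes per-group accuracy; the function name `accuracy_by_group` and the group labels are hypothetical, and accuracy is used only as an example metric.

```python
from collections import defaultdict

def accuracy_by_group(y_true, y_pred, groups):
    """Return a dict mapping each group label to the accuracy within it.

    y_true, y_pred, groups: equal-length sequences, one entry per subject.
    Hypothetical helper for illustration; in practice sensitivity and
    specificity per group are often more informative than accuracy.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}
```

A large gap between groups in such a breakdown would indicate that a single pooled performance figure overstates how reliably the model behaves for some patients.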

At the same time, ethical concerns cannot be overlooked. The collection and utilization of multimodal data involve issues related to patient privacy protection and data security. For example, the use of genetic data may pose privacy risks, and improper access or misuse of such data could lead to severe social and psychological consequences for patients. Additionally, AI models may exhibit decision-making biases. Ensuring model fairness and impartiality to prevent adverse impacts on specific demographic groups is a critical issue that requires careful consideration.

Future research directions

Future research will focus on further optimizing multimodal fusion strategies, particularly the integration of imaging and non-imaging data. Non-imaging modalities, such as biomarker, clinical, and genetic data, offer complementary information that can enrich imaging-based analysis. Their integration is expected to strengthen AI applications across key clinical domains of AD research, including early diagnosis, prediction of disease progression, classification of disease stages, clinical diagnosis, and biomarker discovery. Although current studies are most concentrated in early diagnosis, expanding multimodal integration to these diverse application areas will help establish a more comprehensive understanding of AD pathophysiology and improve translational potential. With technological advances, the seamless combination of heterogeneous data will enable more accurate and generalizable predictive models, providing strong support for disease monitoring, treatment optimization, and personalized intervention frameworks. In terms of fusion network architecture design, although intermediate fusion currently dominates research, adaptive fusion and hybrid fusion strategies are likely to become prominent research focuses in the future, driven by advances in computational power and algorithm optimization. These methods offer greater flexibility and robustness, enabling dynamic adjustment of fusion strategies based on data characteristics and making them particularly suitable for handling heterogeneous data and addressing data missingness issues. Furthermore, with the continuous development of deep learning and self-supervised learning techniques, future research should also prioritize enhancing model interpretability and practicality to better support clinical decision-making and implementation. From the perspective of clinical applications, multimodal AI will continue to drive innovations across multiple domains of AD research.
Current studies are predominantly concentrated on early diagnosis, which remains the most active and impactful application area, followed by prediction of disease progression, classification of AD stages, and clinical diagnosis, reflecting growing efforts to enhance disease stratification and monitoring accuracy. In addition, biomarker-driven research—though relatively limited in number—has demonstrated strong potential for elucidating disease mechanisms and identifying therapeutic targets, thereby supporting individualized treatment optimization. By integrating diverse data sources, including imaging, biomarker, clinical, and genetic information, multimodal AI systems can provide more comprehensive assessments of disease trajectories and enable tailored intervention strategies. Notably, recent research 195 has confirmed that single-modal AI has achieved robust performance in early CD diagnosis, while also highlighting the potential of multimodal integration—such as combining MRI with clinical or speech-based data—to further enhance diagnostic efficacy in clinical settings. Building on these findings, and considering the close clinical and pathological continuum between CD and AD, future research should extend the application of multimodal AI from AD to the broader CD spectrum, thereby improving its generalizability and translational value across related cognitive disorders.

Overall, future research on multimodal AI in AD should prioritize the refinement of data fusion methodologies, the optimization of fusion architectures, and the advancement of clinical translation. To ensure reproducibility and clinical reliability, efforts should focus on standardization and on addressing sample imbalance and missing modalities. In addition, enhancing model interpretability and transparency will be essential for establishing clinician trust and facilitating regulatory acceptance. By bridging methodological innovation with clinical applicability, multimodal AI has the potential to provide robust tools for early diagnosis, disease monitoring, and personalized treatment in AD and related cognitive disorders.
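To make the missing-modality problem concrete, the sketch below fuses per-modality predictions while skipping modalities that are unavailable for a given subject. It is a minimal illustration under stated assumptions rather than a method taken from the reviewed literature: missing predictions are encoded as NaN, available modalities are weighted equally, and the function name `fuse_available` is hypothetical.

```python
import numpy as np

def fuse_available(probabilities):
    """Average per-modality probabilities over the modalities that are present.

    probabilities: array of shape (n_subjects, n_modalities), with NaN
    marking a modality that was not acquired for that subject.
    Returns an array of shape (n_subjects,); subjects with no available
    modality receive NaN.
    """
    probs = np.asarray(probabilities, dtype=float)
    available = ~np.isnan(probs)
    counts = available.sum(axis=1)
    sums = np.where(available, probs, 0.0).sum(axis=1)
    fused = np.full(probs.shape[0], np.nan)
    has_any = counts > 0
    fused[has_any] = sums[has_any] / counts[has_any]
    return fused
```

Because the average is taken only over observed modalities, a subject with an MRI-based prediction but no CSF-based prediction still receives an output, which avoids discarding incomplete cases. Adaptive fusion methods go further by learning the weights instead of fixing them.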

Analysis and conclusion

This study conducted a bibliometric and visual analysis of the applications of multimodal AI in AD. Through the analysis of existing literature, it was observed that multimodal AI technologies based on imaging modalities demonstrate significant application value in early diagnosis, disease prediction, and stage classification. Fusion strategies such as the widely used intermediate fusion and the emerging adaptive fusion have proven more effective in handling complex multimodal data, thereby enhancing model performance and robustness.

Despite the remarkable progress made by multimodal AI in AD research, challenges remain, including data heterogeneity, computational complexity, and model interpretability. Future research should focus on optimizing multimodal data fusion strategies, improving model interpretability, and leveraging generative models and adaptive learning methods to address issues related to data scarcity and noise. With continuous technological advancements, multimodal AI is expected to play an increasingly pivotal role in early diagnosis, disease progression prediction, and personalized interventions for AD. This will further drive the development of precision medicine and provide more scientifically informed decision support for clinical practice.

Footnotes

Ethical approval

No patients were involved in this research, so ethical approval was not required. The data sets analyzed during this study are available in the Web of Science Core Collection.

Author contributions

Wenhui Zhou: conceptualization, methodology, software, and writing–original draft. Yanhua Wang: software and writing–review. Yudong Wu: visualization and investigation. Xin Li: supervision. Hong Liu: software. Hailing Wang: validation. Zhichang Zhang: conceptualization and validation. He Huang: writing–review and editing.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the International Industrial Technology Research and Development Project of the Liaoning Provincial Department of Science and Technology (No. 2025JH2/101900023); the Liaoning Provincial Department of Education (Nos. LJ222410164024 and LJ222410164030); the Liaoning Province Science and Technology Joint Plan (Technology Research and Development Program Project, No. 2024JH2/102600237); the Shenyang Medical College Horizontal Research Projects (Nos. SYKT2025002, SYKT2025005, and SYKT2025007); and the Liaoning Provincial Department of Education Project “Research and Exploration on Olfactory Training Methods and Devices for Anti-Drug Squirrels” (Grant No. SYYX201909).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.