Abstract

Background

As the world population continues to age, the prevalence of neurological diseases, such as dementia, poses a significant challenge to society. Detecting cognitive impairment at an early stage is vital in preserving and enhancing cognitive function. Digital tools, particularly mHealth, offer a practical solution for large-scale population screening and prompt follow-up assessments of cognitive function, thus overcoming economic and time limitations.

Objective

In this work, two versions of a digital solution called Guttmann Cognitest® were tested.

Methods

Two hundred and one middle-aged adults used the first version (Group A), while 132 used the second one, which included improved tutorials and practice screens (Group B). The first version was also tested in an older age group (Group C).

Results

This digital solution was found to be highly satisfactory in terms of usability and feasibility, with good acceptability among all three groups. Specifically for Group B, the system usability scale score obtained classifies the solution as the best imaginable in terms of usability.

Conclusions

Guttmann Cognitest® has been shown to be effective and well-perceived, with a high potential for sustained engagement in tracking changes in cognitive function.

Introduction

The world's population is getting older and age is the strongest known risk factor for cognitive decline.1 While this population aging can undoubtedly be seen as a success story, it also brings healthcare and economic challenges. For example, neurological disorders, including dementia, are the second leading cause of death and the leading cause of disability worldwide. Given the strong association between aging and the incidence of these disorders, estimates suggest that they will account for over half of the economic impact of disability by 2050.2

In this context, according to Owens et al.,3 early detection of cognitive impairment opens the door to early interventions that could help to maintain and even improve cognitive functioning.4 In recent years, an increasing number of studies have tried to find effective strategies to prevent the development of cognitive impairment, Alzheimer's disease, or any other kind of dementia.5–7

Neuropsychological assessment provides a reliable method to quantify cognitive functioning and may have a better predictive value and sensitivity in preclinical dementia than imaging techniques.8,9 However, even though neuropsychological assessment provides highly valuable information through an easy and reliable methodology,10 it also has certain important limitations. Classical neuropsychological testing has some specific requirements that make it expensive and time-consuming: a trained neuropsychologist carries out the evaluation in person, in a quiet and bright room, usually requires at least one hour to complete testing sessions, tests need to be corrected and scored manually, and subjects must move to the center or clinical facility.11 All these aspects make these traditional face-to-face procedures not scalable for efficient assessments in large samples. On the other hand, quick screening assessment tools such as the Mini-Mental State Exam (MMSE12) or the Montreal Cognitive Assessment (MoCA13) are not the best alternative for effectively assessing and monitoring subtle changes over time.11,14

In this context, digital solutions, and specifically mHealth, are promising ways to overcome these barriers by allowing large-scale population screening and repeated assessments of cognitive functions in an efficient manner, not only in the older population but starting from middle adulthood. Moreover, the COVID-19 pandemic has shown the need to speed up alternative ways to explore people's cognitive functioning, reducing mobility and physical contact between clinicians and patients to lower diagnosis barriers.

Furthermore, technology-based assessments have specific features of great value by providing better precision of measurement and scoring; offering instant automated feedback and results; allowing high standardized administration with objective data gathering and automated correction and scoring; minimizing the possible professional expertise bias and reducing human error; allowing the implementation of multiple alternate forms to minimize learning effects; and having the potential to use sophisticated algorithms to analyze other information rather than just the final score.15–18

Digital solutions in general, and mobile apps in particular, allow the administration of this kind of clinical service by the use of a smartphone or any other mobile device. This helps to reduce time, frequency, and geographical barriers to cognitive assessment, allowing efficient and long-term monitoring through repeated sessions to detect early, subtle signs of cognitive decline. In the top 10 developed countries, more than 70% of people own a smartphone. Particularly, in Spain, 74.3% of the population has a smartphone.19 Increased use of mobile technology in older adults also provides the opportunity to deliver convenient, cost-effective assessments for earlier detection of impairment, likewise bringing on more engagement in cognitive screening and monitoring, both inside and outside clinical facilities. Furthermore, thanks to the objective and rapid data transfer to healthcare providers,20 these kinds of innovative solutions have a great potential to support clinical decisions in a cost-effective manner.

Few mobile apps have been developed to assist individuals and medical professionals in screening these conditions. We can find computerized versions of existing neuropsychological tests, and new cognitive tests developed specifically for mobile platforms.21 Some of them are screening tests that require a relatively short time for completion and are focused on general cognitive function or a few specific domains. For example, eSage20 is the mobile version of the paper-based Self-Administered Gerocognitive Examination (SAGE), assessing orientation, memory, language, calculation, visuospatial, abstraction, and executive functions. e-MOCA is an electronic version of the standard paper-based MoCA22 to detect mild cognitive impairment. The Cognitive Assessment for Dementia (CADi23) and CADi2 (improved version24) are also screening tests with an iPad version developed for mass screening for dementia. Another example is Mindmore™, a digital solution consisting of traditional cognitive tests adapted for self-administration through a digital platform.25 It covers five cognitive domains (attention and processing speed, memory, language, visuospatial functions, and executive functions) and can be arranged in different test batteries depending on the purpose of the assessment. Test batteries, on the other hand, are longer and assess both overall cognition and specific domains. Examples include the Toronto Cognitive Assessment, created with the aim of producing a test that is more comprehensive than screening tests but shorter than a neuropsychological battery, and CogState,26 a brief battery with four tests to evaluate processing speed, attention, visual learning, and working memory.

In this line, “Guttmann Cognitest®”27 has been designed, developed, and initially validated as a digital solution for cognitive assessment, specifically designed for mobile devices (mobile first design), with the final aim of being integrated as an assessment module of the “Guttmann, NeuroPersonalTrainer®,”28 a tele-cognitive rehabilitation platform. The current version of “Guttmann Cognitest®” consists of seven tasks specifically designed to assess main cognitive functions, requiring approximately 20 min to be completed. Following an iterative process, after the initial technical and usability validation of the first version, a second one was developed, improving task instructions and tutorials and adding a practice trial prior to each task to facilitate comprehension.

The study presented in this paper aims to evaluate the feasibility and usability of “Guttmann Cognitest®,” comparing the two developed versions of the digital solution, in a sample of healthy adults from the Barcelona Brain Health Initiative (BBHI6) and in a sample of older adults participating in the APTITUDE project.29,30

Materials and methods

Participants

Three hundred and thirty-three healthy subjects (42–66 years old) from the BBHI6 participated in this study. Two hundred and one participants completed the first version of the digital solution (Group A), while 132 completed the latest version, with practice trials included (Group B). This latest version is the one validated by Cattaneo et al.31

For these two groups (A and B), we excluded participants with a history or current diagnosis of neurological or psychiatric disorders, traumatic brain injury with loss of consciousness, substance abuse/dependence, treatment with psychopharmacological drugs, or visual impairments. Prior to study initiation, participants provided explicit written informed consent, and the protocol was approved by the Ethics and Clinical Research Committee of the Catalan Hospitals Union (Comité d’Ètica I Investigació Clínica de la Unió Catalana Hospitals).

The application was also administered to 30 older adults (Group C; age range 72–87), some of them possibly presenting cognitive decline following MMSE or MoCA scores.

Procedures

In this cross-sectional prospective observational study participants were administered the “Guttmann Cognitest®” and completed the system usability scale (SUS32) on the same day, and a few minutes apart. The time needed to complete the full assessment is approximately 30 min.

We conducted a two-step validation analysis to assess the feasibility and usability of “Guttmann Cognitest®” in both healthy adults and older adults.

Groups A and B cognitive testing took place in Institut Guttmann Barcelona facilities and was partially supervised by a technician, who provided the mobile device to the participant, explained the purpose of the activity, and was available for any request during the assessment.

The Group C assessment, instead, took place in Andorra (Sant Julià de Lòria) and was supervised by the Ageing and Health team of the Andorran Healthcare system, using the first version of the application. In this case, the technician was available during all the sessions in order to help and provide more accurate information, as we expected older adults to have more difficulties due to lower technology literacy.

The questionnaires utilized in this study are either ad hoc or open-access tools exclusively. Therefore, specific permissions from copyright holders were not required.

“Guttmann Cognitest®”

“Guttmann Cognitest®”31 is a digital solution specifically designed for smartphones and other mobile devices with seven tasks to evaluate the main cognitive functions: memory, executive functions, and visuospatial abilities.

All tasks are preceded by a written description, followed by a set of screens with more detailed instructions and a video tutorial. In the second version, a very simple practice trial is presented after the tutorial and prior to the task, to ensure that the user has understood the rationale and objective of the task. If the user is not able to successfully complete the practice on the first or second attempt, whatever the reason, it is inferred that the user is not capable of successfully performing the task, and the application continues with the next one. This avoids generating results that reflect comprehension difficulties rather than cognitive deficits (Figure 1).
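The practice-trial rule described above can be sketched in a few lines. This is only an illustrative sketch, not the application's actual code: the function name `run_task_with_practice`, the two callables, and the use of `None` to mark a skipped task are all assumptions introduced here.

```python
from typing import Callable, Optional

# "On the first or second attempt" in the text: at most two practice attempts.
MAX_PRACTICE_ATTEMPTS = 2


def run_task_with_practice(
    practice: Callable[[], bool],
    task: Callable[[], dict],
) -> Optional[dict]:
    """Run the scored task only if the practice trial is passed.

    `practice` (hypothetical) returns True when the practice trial is
    completed successfully; `task` (hypothetical) runs the scored task
    and returns its results. None means the task was skipped.
    """
    for _ in range(MAX_PRACTICE_ATTEMPTS):
        if practice():
            return task()
    # Both practice attempts failed: skip the task so that comprehension
    # problems are not recorded as a cognitive result.
    return None
```

Returning no result at all, rather than a score of zero, keeps a skipped task distinguishable from a genuinely poor performance in later analyses.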

Screenshot of the video tutorial and instructions for the circle tapping task. Users can navigate forward (Siguiente) and backward (Anterior) if needed.

After completing all tasks included in the session, a final questionnaire is presented asking (a) whether the tasks were performed in a quiet place, (b) whether the participant was able to concentrate or whether any disruption or technical issue occurred, (c) whether the instructions for every task were clear enough, and (d) whether the difficulty of any task was so high that the participant gave up.

At the end of the questionnaire, the application shows a global summary of results, briefly explaining the primary cognitive function addressed in each one.

Task 1: Visual span backward

This task, designed to assess working memory, is based on the visual span backward paradigm.

A grey grid appears on the screen. Every second a square of the grid is highlighted in green. Participants are instructed to memorize the sequence and then reproduce it in backward order. The number of highlighted elements increases by one after each correct execution. If the user fails, another sequence with the same length is presented one more time. After two consecutive errors the task ends.

The final score is the longest sequence correctly repeated (Figure 2).
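The stopping and scoring rule of this task can be illustrated with a short simulation. It is a sketch under stated assumptions: the grid size, starting length, and maximum length are hypothetical values not given in the text, squares are assumed not to repeat within a sequence, and `respond` stands in for the participant's taps.

```python
import random


def visual_span_backward(respond, grid_size=9, start_length=2,
                         max_length=9, rng=None):
    """Return the longest sequence length reproduced in backward order.

    `respond(sequence)` (hypothetical) returns the participant's answer
    as a list of square indices. grid_size, start_length and max_length
    are illustrative assumptions, not values from the study.
    """
    rng = rng or random.Random()
    length = start_length
    best = 0
    consecutive_errors = 0
    while length <= max_length and consecutive_errors < 2:
        # Draw distinct squares to highlight one after another.
        sequence = rng.sample(range(grid_size), length)
        if list(respond(sequence)) == list(reversed(sequence)):
            best = length            # longest correct sequence so far
            length += 1              # next sequence is one element longer
            consecutive_errors = 0
        else:
            consecutive_errors += 1  # same length is presented once more
    return best
```

For instance, a simulated participant who reverses sequences correctly only up to four elements ends the task with a score of 4, after failing twice in a row at length five.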

Screenshot example of the visual span backward task.

Task 2: Free and cued image–number associations

In this associative memory task, six pairs of two-digit numbers and images are presented one by one for 2 seconds each. The instructions ask the participant to remember the number associated with each image. When all six pairs have been presented, the images are presented alone in a different order from the first presentation, and the participant is asked to recall the number. Items that are not correctly remembered are presented again with a cue, consisting of two possible numbers associated with the image, from which the subject must select the correct one. The whole procedure is repeated three times.

The total score for free recall is the number of successful associations without a cue, and the recognition score is the number of correct associations the participant has been able to recognize (Figure 3).

Screenshot example of a free and cued image-number task.

Task 3: Logic sequences

Twelve logic sequences were designed to evaluate fluid intelligence and logical reasoning. Each series is composed of a 3 × 3 matrix of elements with a missing element. The participant is asked to select the correct element among a set of four possible options to complete the sequence. The maximum response time available is 90 s and participants are informed by a message on the screen when there are 10 s left.

The score is the number of sequences completed correctly (Figure 4).

Screenshot example of the logic sequences task.

Task 4: Cancellation

The symbol cancellation test was designed to assess visuospatial searching and selective attention. The task asks the participant to scan a structured arrangement of figures and mark target figures with specific shapes and colors as quickly as they can, with a time limit of 45 s. The figures are arranged in a matrix of 135 elements (9 × 5 on each of three consecutive screens). Distractors can be different shapes of the same color as the target or the same shape in a different color. The task is divided into two parts: in the first, the target is a single colored shape (15 targets in total), while in the second, there are two colored shape targets to search for (30 targets in total).

The total score is the sum of symbols correctly selected in the two parts of the task (Figure 5).

Screenshot example of the two-target cancellation task.

Task 5: Circle tapping

This task was designed to measure visuomotor speed and sustained attention.

Six grey circles appear on the screen and, at random, one of them is highlighted in red or blue. The user has to tap the red circles as fast as possible and ignore the blue ones, inhibiting the response. The task lasts 2 min.

This task yields two measures. Correct detections are the number of times the user taps the target stimulus, and reaction time is the mean time elapsed between the presentation of the stimulus and the user's correct responses (Figure 6).
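As a minimal sketch of how these two measures could be derived from a trial log, assuming each trial is stored as a pair (whether the circle was a red target, and the reaction time in milliseconds or `None` when the user did not tap). The data layout and the function name are hypothetical, not taken from the application.

```python
from statistics import mean


def circle_tapping_metrics(trials):
    """Compute correct detections and mean reaction time.

    `trials` is a list of (is_target, reaction_time_ms) tuples, where
    reaction_time_ms is None if the user did not tap (assumed layout).
    Taps on blue (non-target) circles are ignored by both measures.
    """
    correct_rts = [rt for is_target, rt in trials
                   if is_target and rt is not None]
    return {
        "correct_detections": len(correct_rts),
        "mean_reaction_time_ms": mean(correct_rts) if correct_rts else None,
    }


example = [(True, 400), (True, None), (False, 350), (True, 600)]
print(circle_tapping_metrics(example))
```

In this example only two of the three red targets were tapped, so the mean reaction time is computed over those two correct responses alone.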

Screenshot of the red target in the circle tapping task.

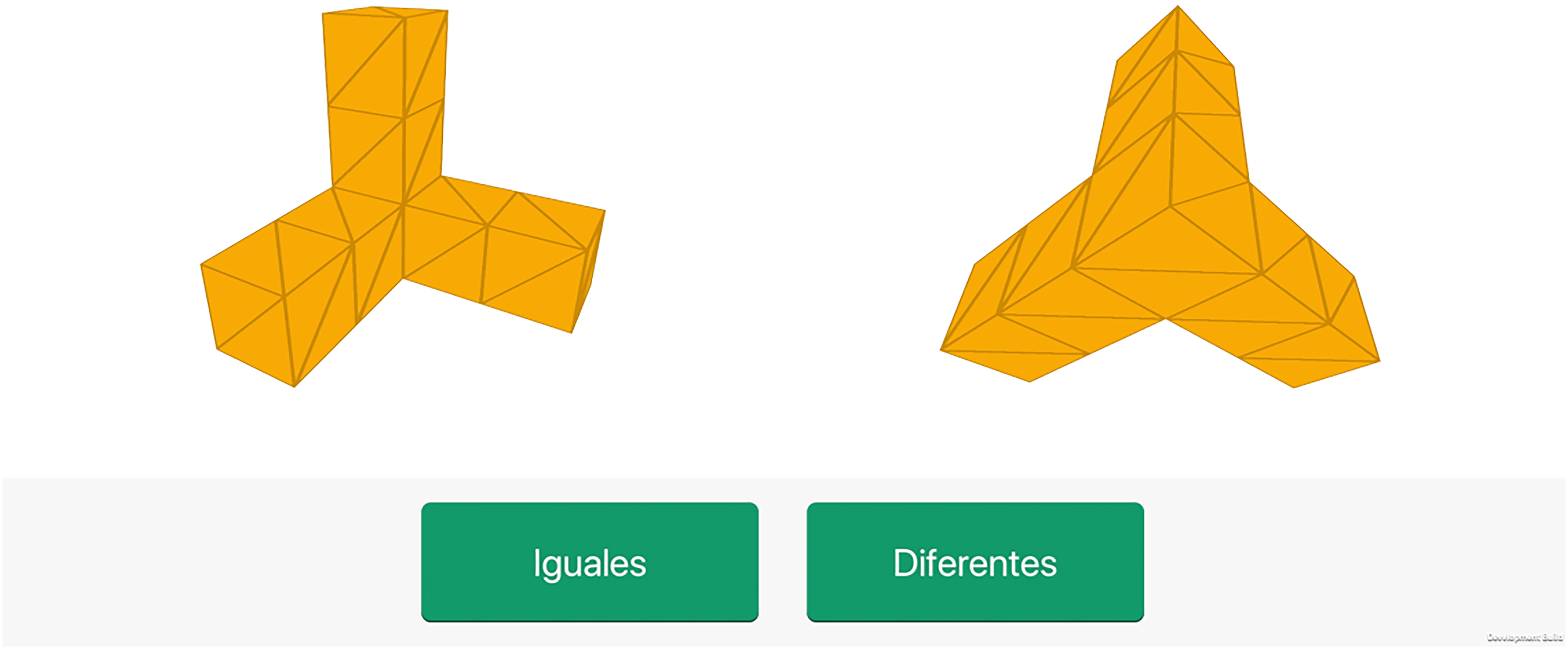

Task 6: Mental rotation

This task, based on the mental rotation paradigm33 was designed to evaluate visuo-spatial abilities.

Pairs of 3D figures are shown with different orientations and the participant is asked to indicate whether they are identical or not, regardless of their orientation. There are 12 pairs of 3D figures and the time to respond is 10 seconds.

The score is the total number of correct responses (Figure 7).

Screenshot example of the mental rotation task.

Task 7: Delayed images and numbers association recall

To explore the subject's retention capacity over time, the final task consists of presenting the same six pictures previously shown in the memory task (Task 2). Images are presented one by one, and the participant is asked to recall the associated number. As in the short-term task, a free recall mode comes first, while a cued mode is presented if the participant gives the wrong answer.

The number of correctly remembered associations represents the total free recall long-term score.

System usability scale (SUS)

SUS is a quick, easy, and simple scale-based questionnaire widely used to evaluate usability, understood in terms of the effectiveness, efficiency, and user satisfaction of a product, system, or service in a specific context.34 It has become a popular questionnaire for end-of-test subjective assessments of usability, as it has been shown to discriminate between systems that have poor usability and those that are considered usable. It is suitable even when applied to small samples (N < 14), has excellent reliability, and has been translated and validated in several languages, including Spanish.35

It consists of 10 items with a five-point Likert response scale ranging from “strongly agree” to “strongly disagree.” A final score is calculated by summing up all the items, and a higher SUS score indicates better product usability. Based on previous research, a SUS score above 68 would be considered above average and anything below 68 is below average.36,37 Its results can be easily interpreted in terms of acceptance and net promoter score (NPS), a business metric used to estimate the likelihood that customers or users will recommend a product or service to others and/or remain loyal to it.

In SUS, the score for odd-numbered questions is calculated as “Response − 1,” whereas the score for even-numbered questions is calculated as “5 − Response.” Therefore, each question score ranges from 0 to 4. The final SUS score is calculated by adding all the question scores and multiplying the sum by 2.5, which gives a value between 0 and 100.36,37
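The scoring rule just described can be written as a small function. This is a sketch, assuming responses are coded from 1 (“strongly disagree”) to 5 (“strongly agree”) and listed in questionnaire order; the function name `sus_score` is introduced here for illustration.

```python
def sus_score(responses):
    """Compute the SUS score (0-100) from ten responses coded 1-5.

    Assumes 1 = "strongly disagree" and 5 = "strongly agree", with
    items given in questionnaire order (item 1 first).
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if not 1 <= response <= 5:
            raise ValueError("responses must be between 1 and 5")
        if item_number % 2 == 1:
            total += response - 1   # odd items: Response - 1
        else:
            total += 5 - response   # even items: 5 - Response
    return total * 2.5              # scale the 0-40 sum to 0-100


print(sus_score([5, 1, 5, 1, 5, 1, 5, 1, 5, 1]))  # most favorable answers
print(sus_score([3] * 10))                        # neutral answers
```

The most favorable pattern (full agreement with odd items, full disagreement with even items) gives 100, while answering the midpoint on every item gives 50, which is below the 68 reference value mentioned above.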

SUS was collected anonymously. In addition to the SUS, a brief ad hoc questionnaire was designed to gather extra information about clarity, comprehension, and technical issues. The questionnaires used can be found in the Supplemental material (Files S1 and S2).

Results

System usability scale (SUS)

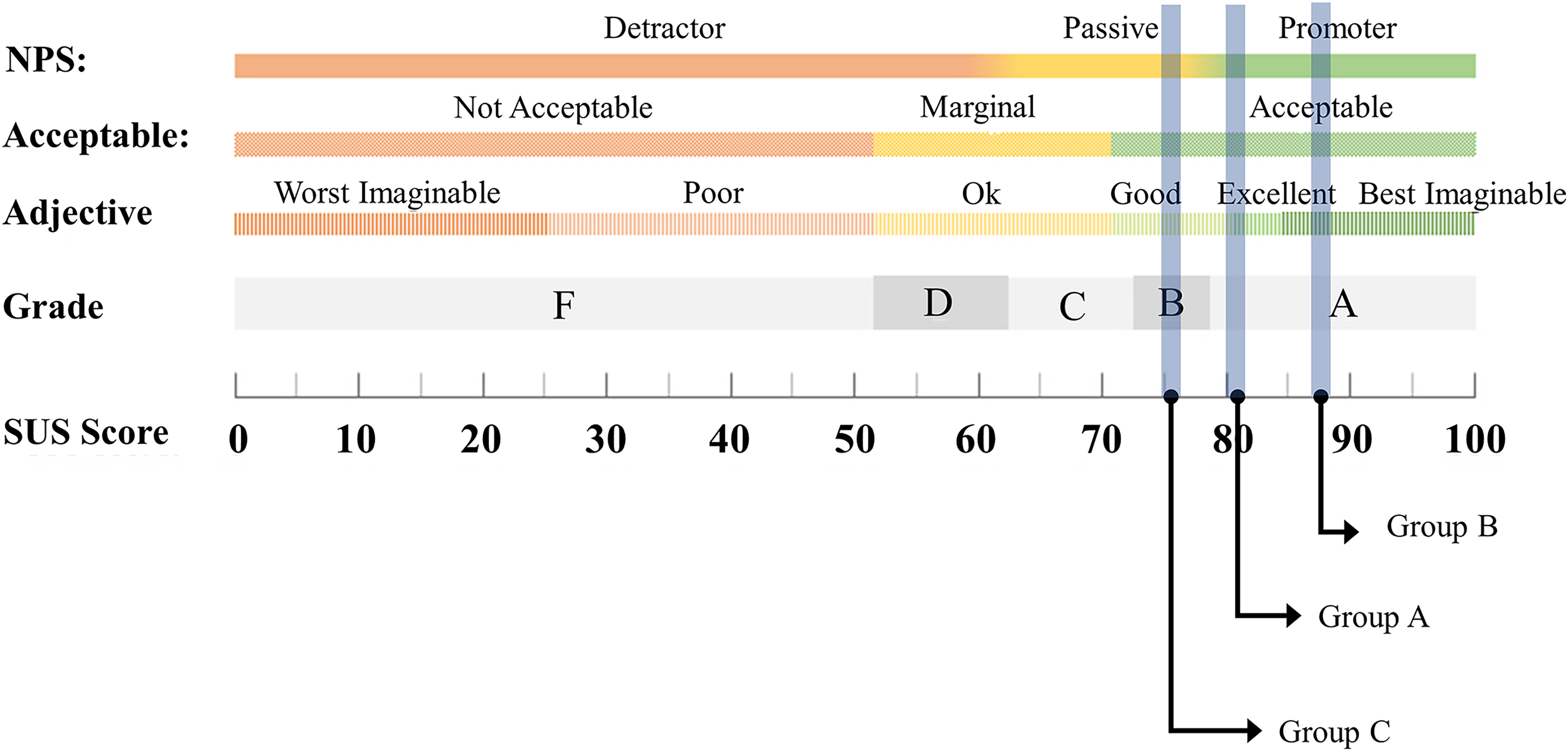

Analyses showed significant differences between groups in terms of usability (F = 15.69, p < .001, η2 = 0.08). Post hoc analyses showed that Group B reported higher usability levels (mean = 87.7, SD = 10.63) compared with both Group A (mean = 81.41, SD = 13.32; p < .001) and Group C (mean = 75.83, SD = 14.61; p < .001). Also, Group A showed higher usability than Group C (p = .021; see Table 1 for a summary of the results and Figures 8 and 9 to see the results and difference between groups).
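As a consistency check on the reported effect size, η2 can be recovered from the F statistic and its degrees of freedom. The sketch below assumes a one-way ANOVA with three groups and all 363 participants (201 + 132 + 30), so 2 and 360 degrees of freedom; the text does not report the degrees of freedom, so these are assumptions.

```python
def eta_squared_from_f(f_value, df_between, df_within):
    """Eta squared for a one-way ANOVA: SS_between / SS_total,
    expressed through F and its degrees of freedom."""
    return (f_value * df_between) / (f_value * df_between + df_within)


# Assumed design: three groups, every participant included.
n_total = 201 + 132 + 30          # Groups A, B and C
n_groups = 3
eta_sq = eta_squared_from_f(15.69, n_groups - 1, n_total - n_groups)
print(round(eta_sq, 2))
```

Under these assumptions the computed value rounds to 0.08, in line with the reported η2.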

Detailed score of each question on system usability scale (SUS) in Groups A and B (different versions). The line shows the difference between both versions.

Detailed score of each question on system usability scale (SUS) in Groups A and C. The line shows the difference between both versions. On the right axis, the items in negative mean Group C scored better for those questions.

Mean scores and percentages of responses to the system usability scale (SUS) usability questionnaire items of the three groups.

Figure 10 shows the NPS grade. Group B score corresponds to an A+ grade, which is regarded as the highest possible grade considered best imaginable. The outcomes of Group A are also deemed promoters in NPS with the term excellent. In contrast, Group C has a grade of B and is rated as good usability with a passive NPS.
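The grades above can be reproduced from the group means with a simple lookup. The cut-offs below follow the curved grading scale commonly attributed to Sauro and Lewis; they are not listed in this paper and are included here as an assumption, so they should be checked against the original source before reuse.

```python
# Lower bounds of each grade on the Sauro-Lewis curved grading scale
# (assumed values, not taken from this article).
GRADE_FLOORS = [
    (84.1, "A+"), (80.8, "A"), (78.9, "A-"),
    (77.2, "B+"), (74.1, "B"), (72.6, "B-"),
    (71.1, "C+"), (65.0, "C"), (62.7, "C-"),
    (51.7, "D"),
]


def sus_grade(score):
    """Map a SUS score (0-100) to a letter grade."""
    for floor, grade in GRADE_FLOORS:
        if score >= floor:
            return grade
    return "F"


for group, mean_sus in [("A", 81.41), ("B", 87.7), ("C", 75.83)]:
    print(group, sus_grade(mean_sus))
```

With these cut-offs, the mean scores of Groups A, B, and C map to grades A, A+, and B respectively.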

Grades, adjectives, acceptability, and NPS categories associated with raw SUS scores for each group. Note: Image adapted from Sauro.35

Specific ad hoc final questionnaire

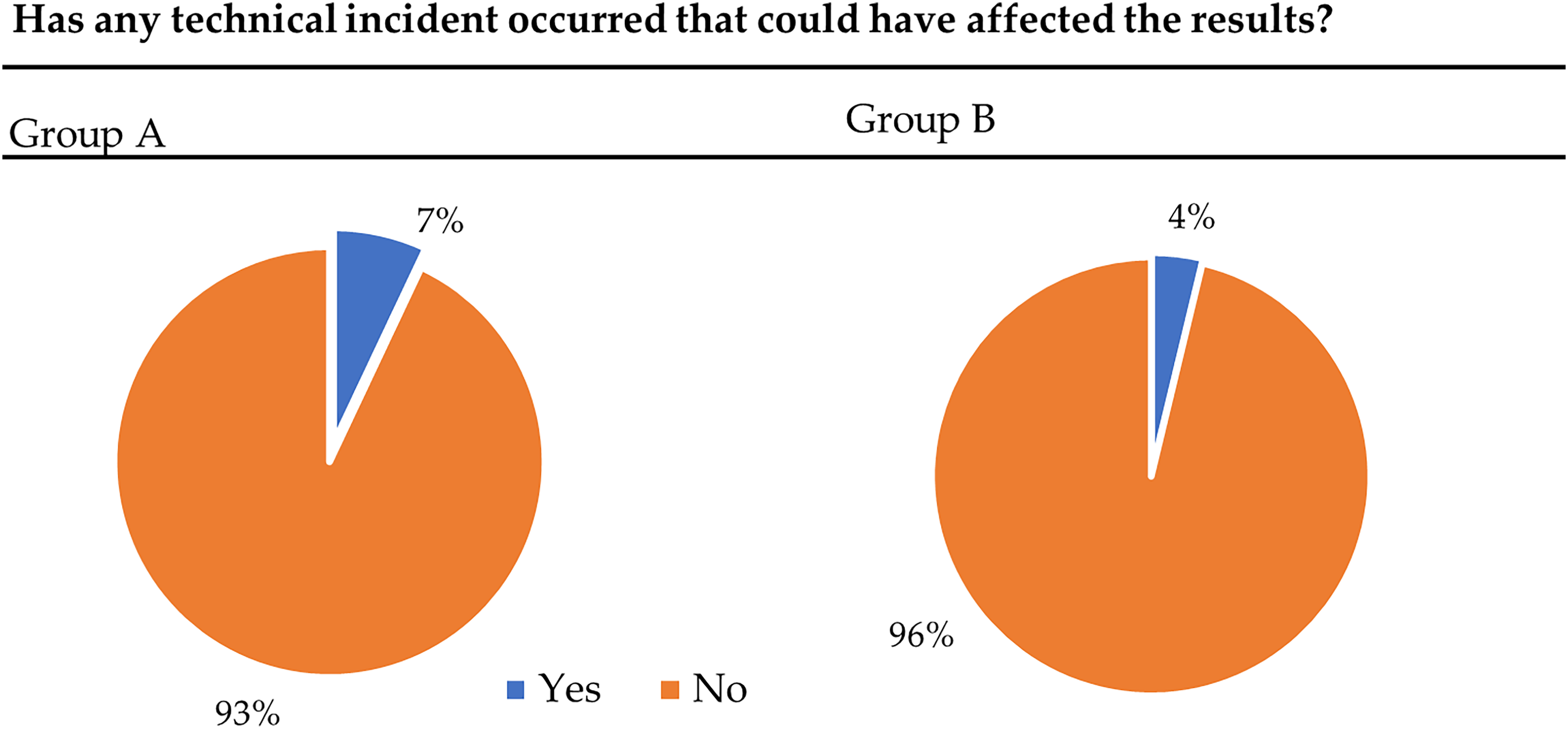

To assess the improvements introduced in the second version of the solution, the results from the specific ad hoc final questionnaire are plotted in the following figures (the questionnaire can be found in File S2 of the Supplemental material). The first question evaluates the stability of the application and asks whether any incident occurred. As we can see in Figure 11, in more than 90% of the cases no incident occurred. Group B had 3% fewer incidents than Group A.

Representation of the technical incidents that occurred during the assessment using the cognitest app perceived by the users and extracted from the last questions of the test from Groups A and B.

Figure 12 shows whether the participants understood the tasks in the application. In Group A, 85% understood all the tasks, 12% did not understand one task, and 3% did not understand more than one task. In contrast, 95% of Group B understood all the tasks, 4% did not understand one task, and just 1% did not understand more than one task. The difference between the two groups in full comprehension is therefore about 10 percentage points.

Difference between Group A and Group B in the understanding of all tasks and instructions.

The last question asks whether any task was so difficult or confusing that the participant had to give up. Seventy-seven percent of Group A reported that all the tasks were clear enough and not too difficult to complete. Sixteen percent perceived that one task was difficult or confusing, and 7% that more than one task was too difficult or confusing. In Group B, 9% more of the participants did not perceive the tasks as too difficult, and 14% thought that one or more tasks were too difficult or confusing.

Discussion

The purpose of this study was to present the feasibility and usability pilot study of “Guttmann Cognitest®,” a digital solution to perform a brief cognitive assessment. Seven tasks were designed to study the main cognitive abilities such as memory, executive function, and visual speed. The study of feasibility and usability is crucial when evaluating the use of any digital solution to conduct an initial cognitive assessment. Applications that measure speed and performance must give users enough confidence to keep using them and to attribute failures and successes to themselves rather than to the device.

After the feasibility and usability pilot, the results obtained are very promising with a good acceptability for all assessed groups. Considering the usability evaluation in the healthy adult sample (Groups A and B), the SUS score obtained reveals that users perceive the system to be useful, usable, satisfying, and easy to learn.

Most of the sample were able to understand and adhere to all task requirements and testing could be conducted with minimal supervision of individuals, even in the group with an older range of age (Group C).

As expected, the inclusion of practice trials in the second version of the solution (Group B) improved the usability perceived by users in all the aspects evaluated by SUS, as well as understanding and perceived difficulty. The changes increased the perception of integrity, consistency, and confidence in the application. As we can see in the final specific ad hoc questionnaire, these changes also reduced perceived difficulty and confusion, increasing the level of understanding. The percentage of technical issues was not high in the first version, but it was further reduced in the new version, giving a sense of greater stability.

The results are also promising for the group with an older age range (Group C). The results of this group are of particular interest, as it is one of the main target groups of this kind of application, which aims to assess cognitive changes and deficits. The development of applications directed toward this population needs to take into consideration features such as text size, tiny targets, speed of stimuli, or startling sounds. Although senior adults are expected to have more difficulties with technology, people born between 1946 and 1964 (the Baby Boomer generation) are those now reaching retirement age. This generation is far more likely than past generations to interact with Information and Communication Technologies (ICT), as they have had more substantial experience; many of them used computers and the internet at work for years before retiring. We asked this group about its technology literacy, and most subjects rated their familiarity with the use of technology and mobile devices as moderate to high. Just 27% of them had low or very low knowledge. Scores on the SUS items regarding complexity, ease of use, and confidence were favorable and above the mean, as was the item on the need for technical help, for which the majority did not feel it necessary to receive support.

Looking at NPS grades and percentiles, the three groups had results ranging from good to best imaginable. As we hypothesized, Group C had the lowest results; nonetheless, its results are acceptable and are considered good. As already stated, it is crucial to assess the usability and feasibility of this kind of digital solution in older adults, as they are the group with the most vulnerability and social isolation and are more likely to be excluded from the benefits of ICT-based solutions.38

Beyond the significance of these findings, it is important to consider certain limitations of the present study. Firstly, the variation in sample size results from limited access and resources for assessing older adults (Group C). Additionally, due to external time constraints and limited access to the older adult group, it was not possible to administer the improved second version to this group, so only the initial version could be analyzed. Further analysis is needed to validate the feasibility and usability of the second version among older adults. Moreover, an in-depth analysis of participant characteristics, such as educational background, cognitive levels, or socioeconomic factors, as well as the support received during the test administration, could provide valuable insights into the feasibility and usability of the test. Unfortunately, due to participant anonymity in this study, such analysis was not feasible, but it should be considered in future similar works to strengthen the conclusions presented here. Finally, in this study we did not exclude older adults with cognitive impairments, which could represent a potential confounder in the interpretation of the data.

Conclusions

This paper presents the feasibility and usability validation of “Guttmann Cognitest®,” a digital solution for cognitive assessment. In this study, two developed versions have been compared in a sample of healthy adults from the BBHI. In addition, the first version has been evaluated in terms of usability in a specific sample of older adults.

This solution has not only shown good usability and feasibility, but has also been initially validated against standard and extensive in-person neuropsychological assessments in the context of the BBHI cohort study.31 Performing a cognitive assessment with this kind of digital solution has been shown to be feasible and useful for gathering information about cognitive functioning in clinical and experimental settings, with great potential for cognitive assessment in large-scale samples. Even among older adults, the acceptability of this digital solution was good, and they indicated that they would like to continue using it, which can foster sustained engagement to track changes in cognitive function over time.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076231224246 - Supplemental material for “Guttmann Cognitest®,” a digital solution for assessing cognitive performance in adult population: A feasibility and usability pilot study

Supplemental material, sj-docx-1-dhj-10.1177_20552076231224246 for “Guttmann Cognitest®,” a digital solution for assessing cognitive performance in adult population: A feasibility and usability pilot study by Alba Roca-Ventura, Javier Solana-Sánchez, Eva Heras, Maria Anglada, Jan Missé, Encarnació Ulloa, Alberto García-Molina, Eloy Opisso, David Bartrés-Faz, Alvaro Pascual-Leone, Josep M. Tormos-Muñoz and Gabriele Cattaneo in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors thank the volunteers for their participation and invaluable collaboration in this pilot. They would also like to thank the research assistants (Selma Delgado, Goretti España, María Redondo, Vanessa Alviárez, and Rubén Romero) for their help and participation in the administration of the tasks. Finally, thanks to Jaume López and Marc Navarro for their help and support in the development of the solution. No companies were involved in any step of the study, from design to data collection and analysis, data interpretation, the writing of the article, or the decision to submit it for publication.

Contributorship

GC, JSS, AGM, EO, and JTM participated in the initial conception of the design of the “Guttmann Cognitest®” digital solution. AR, JSS, EH, MA, EU, JM, and GC participated actively in the data collection and analysis. AGM, EO, DBF, APL, and JTM contributed to the interpretation of the results. AR and JSS drafted the article and all other authors made critical revisions, introducing important intellectual content. All authors have read and agreed to the published version of the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

The study was conducted in accordance with the Declaration of Helsinki, and approved by the Ethics and Clinical Research Committee of the Catalan Hospitals Union (Comité d’Ètica I Investigació Clínica de la Unió Catalana Hospitals), CEIC18/07.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: DBF was funded by the Spanish Ministry of Science, Innovation, Universities (RTI2018-095181-B-C21) and ICREA Academia 2019 award research grants. JTM was partly supported by Fundació Joan Ribas Araquistain_Fjra, AGAUR, Agència de Gestió d’Ajuts Universitaris i de Recerca (2018 PROD 00172), Fundació La Marató De TV3 (201735.10), and the European Commission (Call H2020-SC1-2016-2017_RIA_777107).

Guarantor

JSS

Informed consent statement

Written informed consent was obtained from all subjects involved in the study.

Supplemental material

Supplemental material for this article is available online.
