Abstract

Background

Otitis media remains a significant global health concern, particularly in resource-limited settings where timely diagnosis is challenging. Artificial intelligence (AI) offers promising solutions to enhance diagnostic accuracy in mobile health applications.

Objective

This study introduces a hybrid AI framework that integrates convolutional neural networks (CNNs) for image classification with large language models (LLMs) for clinical reasoning, enabling real-time otoscopic diagnosis.

Methods

We developed a dual-path system combining CNN-based feature extraction with LLM-supported interpretation. The framework was optimized for mobile deployment, with lightweight models operating on-device and advanced reasoning performed via secure cloud APIs. A dataset of 10,465 otoendoscopic images (expanded from 2820 original clinical images through data augmentation) across 10 middle-ear conditions was used for training and validation. Diagnostic performance was benchmarked against clinicians of varying expertise.

Results

The hybrid CNN–LLM system achieved an overall diagnostic accuracy of 97.6%, demonstrating the synergistic benefit of combining CNN-driven visual analysis with LLM-based clinical reasoning. The system delivered sub-200 ms feedback and achieved specialist-level performance in identifying common ear pathologies.

Conclusions

This hybrid AI framework substantially improves diagnostic precision and responsiveness in otoscopic evaluation. Its mobile-friendly design supports scalable deployment in telemedicine and primary care, offering a practical solution to enhance ear disease diagnosis in underserved regions.

Introduction

Otitis media (OM) remains a major global health issue, affecting approximately 10.85% of the population annually, with 51% of cases occurring in children under five. 1 The financial impact is considerable, as direct costs per episode range from $122.64 to $633.6, with total annual expenditures in the USA reaching up to $5 billion. 2 OM is associated with hearing impairment and other potentially life-threatening complications. 1 Symptomatic management and watchful waiting are often adopted, with antibiotics typically reserved for more severe cases. 3 In addition, recent therapeutic advancements (such as suspension gels, transcutaneous immunization, and intranasal drug delivery systems) aim to overcome challenges like biofilm formation and ensure effective drug concentrations in the middle ear. 4 Despite the advent of pneumococcal conjugate vaccines, OM continues to drive high rates of medical consultations and antibiotic prescriptions worldwide. 3

Recent studies have highlighted the promise of artificial intelligence (AI) in otoscopic diagnosis. For example, Chen et al. 5 developed a smartphone-based AI system that outperformed general physicians in detecting middle-ear diseases, 6 while Song et al. 7 reported an average diagnostic accuracy of 86% across various AI approaches in a systematic review. 8 Chawdhary and Shoman further emphasized AI's potential in otological imaging—including automated diagnosis and image segmentation for surgical planning—observing that in some instances, AI performance is comparable to or even exceeds that of human experts. 9 Similarly, Canares et al. underscored AI's superior capabilities in otoscopic image recognition relative to human evaluation.10,11 Collectively, these findings suggest that AI-powered otoscopic diagnosis can significantly improve diagnostic accuracy and support telemedicine in otolaryngology. However, recent work has highlighted persistent concerns regarding dataset bias and limited generalizability in otoscopic AI systems, 5 indicating that further research is needed to resolve these challenges and optimize patient outcomes.

Concurrently, the advent of large language models (LLMs) in healthcare has demonstrated their utility in enhancing diagnostic accuracy, facilitating patient communication, and bolstering clinical decision-making when combined with human oversight.12,13 LLMs also offer benefits in medical education, research support, and workflow automation.14,15 Nevertheless, challenges such as limited contextual understanding, potential training data biases, and ethical concerns persist, necessitating ongoing optimization, standardized evaluation, and robust ethical oversight prior to full clinical integration.12,14 Future directions may include exploring multimodal LLMs16,17 for diverse medical data processing, developing autonomous LLM-powered agents for personalized care, and ensuring responsible implementation.

Our research addresses these multifaceted challenges through a comprehensive investigation of AI-assisted otoscopic diagnosis. Specifically, we compare the efficacy of direct vision-based LLM (VLLM) inference with a convolutional neural network (CNN)–LLM hybrid approach, assess the feasibility of mobile edge computing deployment, and evaluate diagnostic support across varying levels of clinical expertise. Additionally, we refine system prompts to further enhance diagnostic accuracy and clinical relevance. By introducing a novel dual-path diagnostic framework that combines CNN-based pattern recognition with LLM-enhanced medical reasoning—realized through a hybrid deployment architecture—our approach aims to deliver accessible, reliable diagnostic support while maintaining strict privacy and efficiency standards. Ultimately, this method has the potential not only to improve diagnostic precision but also to address broader challenges in healthcare accessibility and resource optimization.

Methods

Study design and ethical considerations

We conducted a retrospective study at Taipei Veterans General Hospital in Taiwan using the same dataset as our previous publication. 6 The otoendoscopic images were collected between January 2011 and December 2019 under IRB approval (IRB No. 2025-01-027CC) and in accordance with the Declaration of Helsinki. No additional images were collected for this study; the present work builds upon the previously validated CNN backbone.

Data collection and preprocessing

As described in our prior work, 6 quality screening (removal of blurred images, duplicates, inappropriate views, and records without clinical confirmation) yielded 2820 clinical otoendoscopic eardrum images, which were categorized into 10 diagnostic classes of middle-ear conditions; 2161 of these images were retained for CNN training after preprocessing. To improve class balance and model performance, we applied data augmentation, expanding the dataset to 10,465 images in total (per-class counts detailed in Supplementary Table 1 of the previous study). Images were screened and annotated via a multistep process involving initial research staff review, independent verification by two otolaryngologists, and consensus adjudication for discrepant cases. As in our previous study, 6 six practitioners (two general physicians, two residents, and two specialists) participated in labeling and validation.
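The augmentation step can be illustrated with a minimal sketch. The specific transforms used in the study are not stated here, so the flip, rotation, and brightness operations below are assumptions chosen only for illustration, as are the function names and the toy nested-list image representation. The helper `copies_per_image` shows one hypothetical way to decide how many augmented variants each original needs so that an under-represented class reaches a target size.

```python
import math
from typing import List

Image = List[List[int]]  # toy grayscale image: rows of 0-255 pixel values


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in img]


def rotate_90_clockwise(img: Image) -> Image:
    """Rotate the image by 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def adjust_brightness(img: Image, delta: int) -> Image:
    """Shift every pixel by `delta`, clamped to the 0-255 range."""
    return [[max(0, min(255, p + delta)) for p in row] for row in img]


def augment(img: Image) -> List[Image]:
    """Return the original plus three illustrative variants (assumed transforms)."""
    return [
        img,
        flip_horizontal(img),
        rotate_90_clockwise(img),
        adjust_brightness(img, 20),
    ]


def copies_per_image(class_count: int, target: int) -> int:
    """Augmented variants needed per original so the class reaches at least `target`."""
    return max(0, math.ceil(target / class_count) - 1)
```

Under this scheme a class with 100 originals and a target of 1000 images would need 9 variants per original, whereas a class already above the target would need none.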

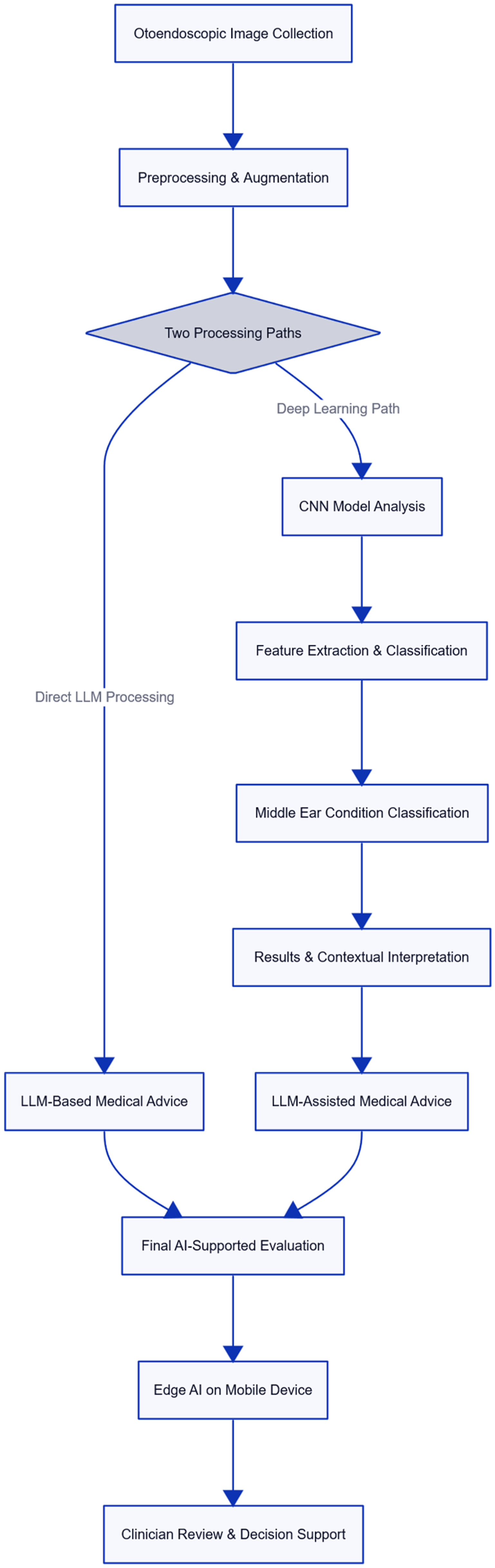

AI architecture and implementation

We developed a novel dual-path AI framework that integrates traditional computer vision methods with advanced language model capabilities. One processing pathway utilizes direct VLLM inference for image interpretation, while the other employs CNNs for feature extraction and classification. This integrated approach enables a comprehensive evaluation of middle-ear conditions by combining the strengths of both pattern recognition and medical reasoning. The CNN backbone and training procedures followed our previous work, 6 in which six different CNN architectures (Xception, ResNet50, NASNetLarge, VGG16, VGG19, and InceptionV3) were evaluated.18,19 InceptionV3 achieved the highest F1-score (98%) and was therefore adopted for transfer learning. The dual-path framework was implemented as a sequential pipeline: the CNN generated diagnostic outputs, which were then provided to the LLM for contextual interpretation and explanation. The LLM did not override CNN predictions.
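The sequential pipeline described above can be sketched as follows. This is an illustrative outline rather than the deployed implementation: the names `CnnResult`, `Report`, `build_prompt`, and `interpret` are hypothetical, the prompt wording is an assumption, and the LLM is represented by any callable that maps a prompt string to a text response. The point of the sketch is the constraint that the reported diagnosis is always taken from the CNN, whatever the LLM returns.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CnnResult:
    label: str         # class predicted by the CNN
    confidence: float  # probability assigned to that class


@dataclass(frozen=True)
class Report:
    diagnosis: str     # always copied from the CNN result
    confidence: float
    explanation: str   # free-text interpretation from the LLM


def build_prompt(result: CnnResult) -> str:
    """Compose an assumed prompt that passes the CNN output to the LLM."""
    return (
        "An image classifier labeled an otoendoscopic image as "
        f"'{result.label}' (confidence {result.confidence:.2f}). "
        "Explain the typical findings and suggest next steps. "
        "Do not change the classifier's label."
    )


def interpret(result: CnnResult, llm: Callable[[str], str]) -> Report:
    """Run the LLM for explanation only; the CNN label is never overridden."""
    explanation = llm(build_prompt(result))
    return Report(result.label, result.confidence, explanation)
```

Because `Report.diagnosis` is filled from `result.label` and not parsed from the LLM text, a response that suggests a different condition changes only the explanation field.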

Mobile platform optimization

To support mobile integration, the system was optimized to allow partial on-device execution through lightweight model compression and modular processing pipelines. Specifically, initial image preprocessing and CNN-based feature extraction were deployed locally, while high-complexity components such as the large classification models and LLM-based reasoning modules were executed through secure cloud APIs. This hybrid setup, informed by iterative clinical feedback, enabled responsive diagnostic interaction with sub-200 ms feedback for preliminary analysis, while preserving scalability and performance.
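A minimal sketch of this hybrid execution pattern is given below. It is an assumption-laden illustration: `run_hybrid`, `local_model`, and `cloud_client` are hypothetical names, the 200 ms budget is taken from the text, and the fallback to a preliminary-only result when the network is unavailable is an assumed design choice that the study does not describe.

```python
import time
from typing import Any, Callable, Dict, Optional


def run_hybrid(
    image: Any,
    local_model: Callable[[Any], str],
    cloud_client: Callable[[Any, str], str],
    budget_ms: float = 200.0,
) -> Dict[str, Any]:
    """Run the on-device step first, then attempt the cloud step."""
    # Step 1: on-device preliminary analysis, timed against the latency budget.
    start = time.perf_counter()
    preliminary = local_model(image)
    local_ms = (time.perf_counter() - start) * 1000.0

    # Step 2: cloud-based reasoning; assumed to degrade gracefully when offline.
    detailed: Optional[str]
    try:
        detailed = cloud_client(image, preliminary)
    except ConnectionError:
        detailed = None

    return {
        "preliminary": preliminary,
        "local_ms": local_ms,
        "within_budget": local_ms <= budget_ms,
        "detailed": detailed,
    }
```

Separating the two steps in this way lets the interface display the preliminary result immediately, while the cloud response is attached when it becomes available.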

System validation and performance evaluation

The model was trained using an 80/20 split of the dataset for training and validation, and its robustness was further confirmed through five-fold cross-validation. All 10 diagnostic categories were represented in the validation sets, though their proportions reflected real-world clinical frequencies and were therefore not strictly balanced (see Supplementary Table 1 in Chen et al. 6 ). An external test set was used to assess the generalizability of the system. Statistical analyses were conducted using Python (v3.9) and R (v4.0.2) to evaluate diagnostic accuracy and clinical utility across all diagnostic categories. Performance was also benchmarked against assessments from human practitioners with varying levels of clinical expertise.
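For illustration, the fold construction can be sketched in plain Python. With five folds, each round holds out roughly 20% of the images for validation and trains on the remaining 80%. Stratifying by class is an assumption made here (the text states only that all 10 categories were represented in the validation sets), and the function names are hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, Iterator, List, Sequence, Tuple


def stratified_folds(labels: Sequence[str], k: int = 5, seed: int = 0) -> List[List[int]]:
    """Assign sample indices to k folds, spreading each class across the folds."""
    by_class: Dict[str, List[int]] = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of this class round-robin into the folds.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


def train_val_splits(
    labels: Sequence[str], k: int = 5, seed: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train, validation) index lists, one pair per fold."""
    folds = stratified_folds(labels, k, seed)
    for i in range(k):
        val = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, val
```

Any class with at least `k` images appears in every validation fold under this scheme, although class proportions remain as imbalanced as in the underlying data.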

Results

Integration of the dual-path AI framework

Our dual-path AI system successfully combined CNN-based deep learning with direct VLLM inference to provide a comprehensive diagnostic evaluation of middle-ear conditions. Figure 1 illustrates the system architecture, 20 demonstrating how the integration of traditional machine learning with advanced language model capabilities yields complementary diagnostic insights.

Figure 1. Dual-path hybrid AI framework for otoscopic diagnosis, integrating CNN-based image classification with LLM-driven clinical reasoning via cloud-assisted architecture. AI: artificial intelligence; CNN: convolutional neural network; LLM: large language model.

Comparative analysis of AI approaches

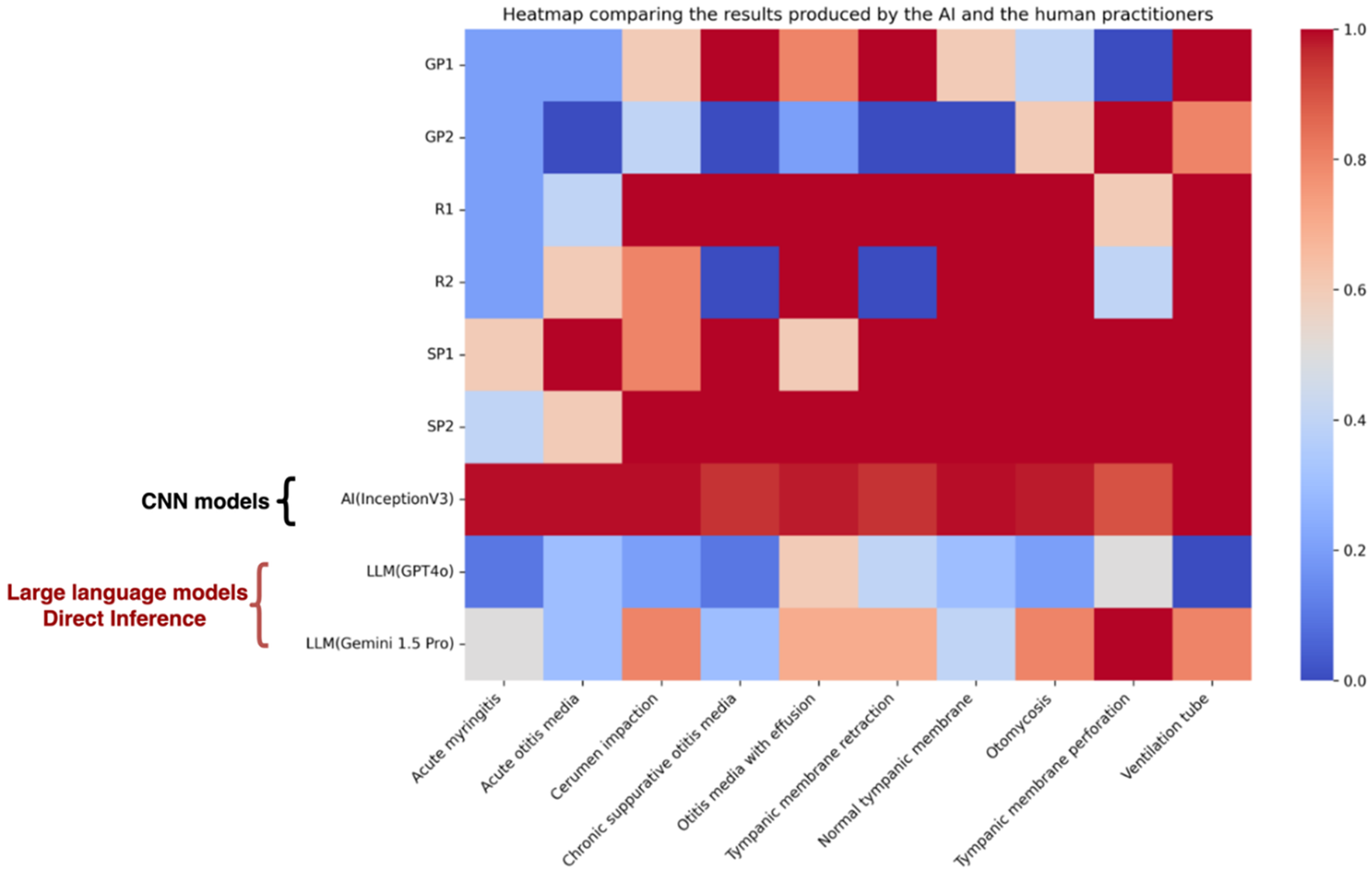

The CNN backbone used in this study was trained and validated in our previous work, where the performance metrics (accuracy, precision, recall, F1-score, and area under the curve (AUC)) were reported. The corresponding results from the conference study are presented in Figure 2. 21

Figure 2. Heatmap of diagnostic accuracy across 10 middle-ear conditions, comparing human practitioners, standalone models, and the proposed hybrid CNN–LLM system. The hybrid approach demonstrates superior performance, particularly in acute and structural pathologies. CNN: convolutional neural network; LLM: large language model. Adapted from Chu Y-C, Lin K-H, Chen Y-C et al. ‘Enhancing Clinical Accuracy in Middle Ear Disease Diagnosis with a Cloud-Based AI System Integrating CNNs and LLMs,’ © 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG. Reproduced with permission from Springer Nature (License No. 6137560845503). 21

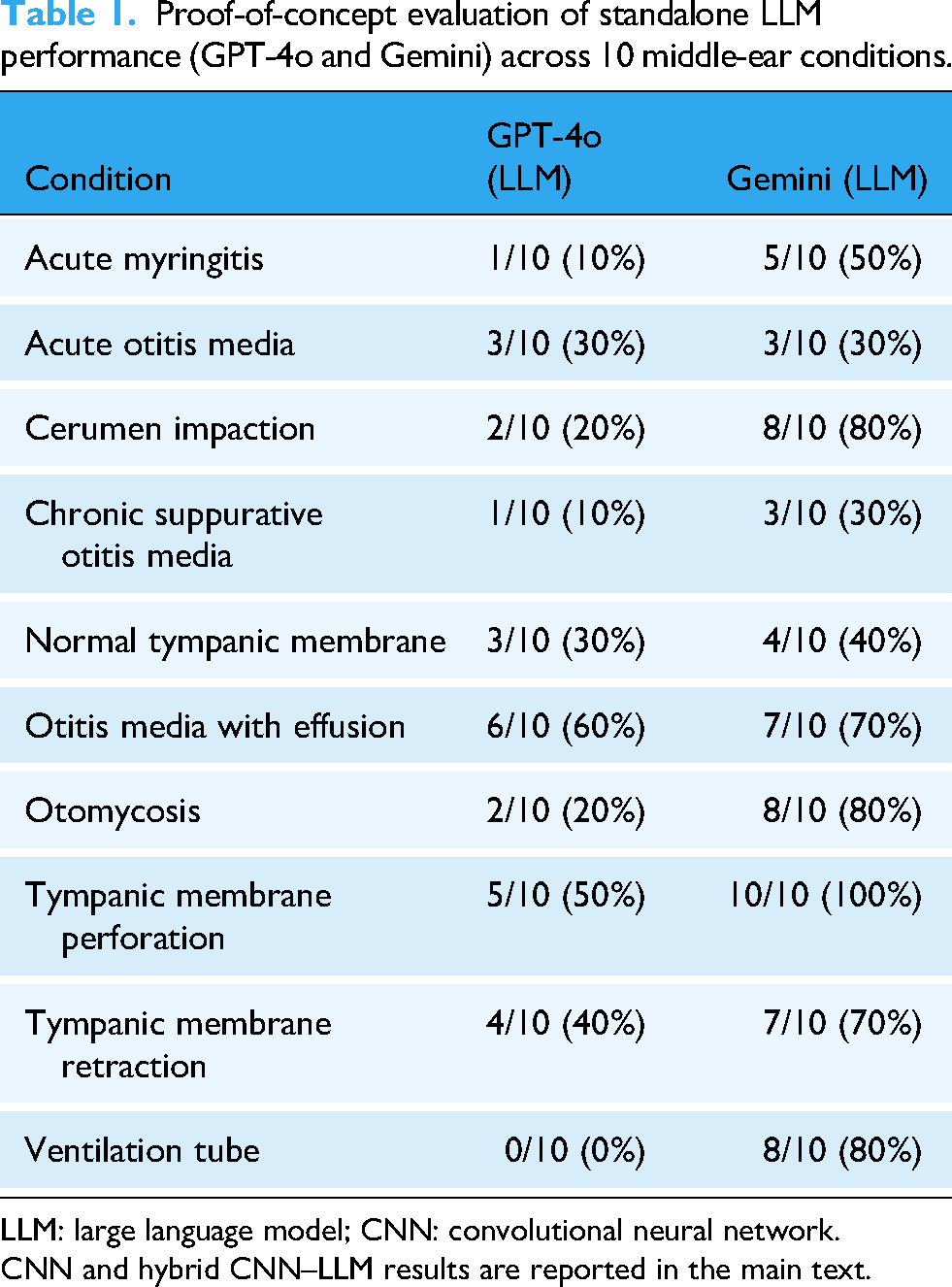

Table 1 provides a proof-of-concept evaluation of standalone LLMs (GPT-4o, Gemini 1.5 Pro) using 10 images per disease category. This experiment was exploratory and intended to assess whether LLMs could independently recognize otoscopic findings, rather than to serve as a performance benchmark.

Table 1. Proof-of-concept evaluation of standalone LLM performance (GPT-4o and Gemini) across 10 middle-ear conditions.

LLM: large language model; CNN: convolutional neural network.

CNN and hybrid CNN–LLM results are reported in the main text.

In the integrated framework, however, CNN remained the sole diagnostic classifier, while LLM provided contextual interpretation and clinical advice. Thus, traditional classification metrics (receiver operating characteristic, AUC, accuracy) were applied only to the CNN backbone, not to the LLM outputs. Representative examples of CNN outputs and corresponding LLM interpretations are provided in Supplemental Table 1.
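The classification metrics applied to the CNN backbone follow their standard definitions, which can be sketched in a few lines. The function names and the toy labels in the usage note are illustrative only and are not drawn from the study data.

```python
from typing import Dict, Sequence


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of images whose predicted class matches the reference label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_prf(
    y_true: Sequence[str], y_pred: Sequence[str], label: str
) -> Dict[str, float]:
    """One-vs-rest precision, recall, and F1-score for a single class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == label and p == label for t, p in pairs)
    fp = sum(t != label and p == label for t, p in pairs)
    fn = sum(t == label and p != label for t, p in pairs)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}
```

For example, with reference labels `["normal", "normal", "aom", "aom"]` and predictions `["normal", "aom", "aom", "aom"]`, accuracy is 0.75, and the `"aom"` class has a precision of 2/3, a recall of 1.0, and an F1-score of 0.8.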

Mobile platform implementation

The transformer-based encoder–decoder architecture was adapted for mobile responsiveness, enabling partial on-device execution with optimized local modules. As illustrated in Figure 3, the mobile application interface provided sub-200 ms feedback for preliminary image analysis using locally deployed CNN models. The complete diagnostic process, which integrates cloud-based reasoning via large classification models and LLMs, maintained high overall accuracy and user responsiveness. As shown in Figure 4, the two-step workflow achieved 99% accuracy in identifying normal tympanic membranes, ensuring reliable and timely clinical decision support.

Figure 3. Mobile application interface demonstrating sub-200 ms on-device CNN feedback for initial screening, followed by cloud-based disease classification and LLM-driven clinical reasoning. CNN: convolutional neural network; LLM: large language model. Adapted from Chu Y-C, Lin K-H, Chen Y-C et al. ‘Enhancing Clinical Accuracy in Middle Ear Disease Diagnosis with a Cloud-Based AI System Integrating CNNs and LLMs,’ © 2025 The Author(s), under exclusive license to Springer Nature Switzerland AG. Reproduced with permission from Springer Nature (License No. 6137560845503). 21

Figure 4. Two-step diagnostic workflow in the mobile application: (1) CNN-based tympanic membrane classification on-device, followed by (2) LLM-driven clinical reasoning via secure cloud APIs. CNN: convolutional neural network; LLM: large language model.

Validation and clinical integration

Comprehensive validation using five-fold cross-validation and external dataset verification (Supplemental Figure 1) confirmed stable performance across diverse patient cohorts. Detailed training dynamics analyses indicated robust and stable convergence across the different model architectures. Clinically, the integrated system delivered diagnostic performance comparable to specialist-level assessments, underscoring its potential to provide expert-level diagnostic support 22 in resource-constrained settings and enhance overall healthcare quality.

Discussion

Advancement in AI integration for otoscopic diagnosis

Our study introduces a novel dual-path architecture that combines CNN and LLM capabilities to enhance otoscopic diagnosis. This integrated approach not only improves diagnostic accuracy but also underscores the potential of blending traditional image analysis with advanced language processing for more comprehensive clinical decision support.

Unlike many contemporary studies that primarily focus on either multimodal data fusion to achieve incremental accuracy gains or leveraging LLMs to generate complex differential diagnoses from clinical notes,23,24 our framework addresses a different yet equally critical clinical need: delivering real-time explainability to a state-of-the-art visual classifier without compromising its performance.

Architecturally, our design avoids complex deep fusion mechanisms and instead implements a streamlined dual-path system. The CNN functions as a highly accurate “perception engine” (achieving 97.6% accuracy), while the LLM serves as a “reasoning engine.” This clear division of labor distinguishes our work from existing approaches. The primary innovation lies not in marginally improving CNN accuracy, but in augmenting high-precision image classification with instantaneous, clinically coherent reasoning. This design makes the system particularly well-suited for telemedicine and mobile health applications, where rapid feedback and transparent, interpretable decision-making are essential.

Comparative analysis of AI methodologies

Our findings align with previous research demonstrating the efficacy of LLMs in medical diagnosis, particularly when integrated with human expertise. For instance, hybrid systems that merge LLMs with clinician input have been shown to outperform both individual practitioners and standalone LLM ensembles in generating accurate differential diagnoses. 23 Similarly, a novel LLM-based hybrid-transformer network has exhibited superior performance in brain tumor diagnosis compared to traditional methods. 3 Moreover, structured clinical reasoning prompts have been shown to significantly enhance LLM diagnostic capabilities, with a two-step approach yielding markedly improved accuracy over conventional methods. 25 An LLM optimized for diagnostic reasoning has also demonstrated superior standalone performance and the ability to augment clinicians’ accuracy when used as an assistive tool.24,26 These findings suggest that integrating LLMs with clinical expertise and structured reasoning may offer the optimal balance between computational efficiency and clinical utility.

Role of medical knowledge engineering

Our analysis highlights the critical role of structured medical knowledge in enhancing AI-driven diagnostic reasoning. By refining prompts to incorporate precise medical terminology and anatomical context, the system produced markedly more accurate and clinically coherent interpretations. This improvement underscores that advanced AI systems cannot operate optimally without domain-specific guidance, reinforcing the importance of medical knowledge engineering in the development of reliable and explainable healthcare AI solutions.
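To make the idea of prompt refinement concrete, the sketch below assembles a structured prompt that names standard tympanic membrane landmarks explicitly. The actual prompts used in the study are not reproduced here; the template wording, the `LANDMARKS` list, and the function name `structured_prompt` are assumptions for illustration only.

```python
from typing import Sequence

# Commonly described otoscopic landmarks; this list is an illustrative assumption.
LANDMARKS = (
    "pars tensa",
    "pars flaccida",
    "handle of malleus",
    "umbo",
    "light reflex",
)


def structured_prompt(label: str, landmarks: Sequence[str] = LANDMARKS) -> str:
    """Build a prompt that anchors the LLM to anatomy and to the classifier label."""
    lines = [
        "Role: otolaryngology assistant supporting a clinician.",
        f"Classifier finding: {label}.",
        "For each landmark, state the expected appearance for this finding:",
    ]
    lines += [f"- {name}" for name in landmarks]
    lines.append(
        "Then list recommended next steps. Do not propose a different diagnosis."
    )
    return "\n".join(lines)
```

Compared with a free-form request such as "describe this ear image", a template of this kind constrains the response to a fixed anatomical checklist, which is one plausible mechanism for the more coherent interpretations reported above.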

Technical implementation and hybrid deployment

The system was designed with a hybrid architecture that balances on-device responsiveness and cloud-based scalability. Lightweight CNN modules deployed locally on mobile devices enable preliminary image analysis within sub-200 ms, enhancing user interactivity and reducing latency. Meanwhile, large-scale reasoning tasks—such as diagnostic classification using LLMs—are performed through secure cloud APIs. While full edge deployment remains a future goal, this hybrid model effectively addresses performance, privacy, and accessibility concerns 27 in real-world settings, particularly where computational constraints or connectivity limitations are present. 28

Clinical integration and performance analysis

Clinically, our dual-path system demonstrated diagnostic performance comparable to that of specialist-level assessments. The consistency of results across different levels of clinical expertise highlights its potential as a supportive tool in both primary care and educational settings. Importantly, our system is designed to assist rather than replace clinical judgment, 29 ensuring that final diagnostic decisions remain under the control of healthcare professionals.

Limitations and future directions

Despite the robust performance in diagnosing common middle-ear conditions, our system's effectiveness in identifying rare pathologies and edge cases requires further validation. The current reliance on structured prompt engineering also suggests limitations in adapting to novel clinical presentations. Future research should focus on expanding system capabilities to cover rare conditions, developing adaptive prompt mechanisms, exploring educational applications, and conducting longitudinal studies to evaluate the long-term clinical impact.

Conclusion

This study presents the development and validation of a hybrid AI system for otoscopic diagnosis that integrates CNN and LLM technologies through a dual-path framework. The system combines partial on-device execution with cloud-based reasoning, achieving high diagnostic accuracy and rapid feedback suitable for mobile health deployment.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251395449 - Supplemental material for Hybrid artificial intelligence frameworks for otoscopic diagnosis: Integrating convolutional neural networks and large language models toward real-time mobile health

Supplemental material, sj-docx-1-dhj-10.1177_20552076251395449 for Hybrid artificial intelligence frameworks for otoscopic diagnosis: Integrating convolutional neural networks and large language models toward real-time mobile health by Yuan-Chia Chu, Yen-Chi Chen, Chien-Yeh Hsu, Chen-Tsung Kuo, Yen-Fu Cheng, Kuan-Hsun Lin and Wen-Huei Liao in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076251395449 - Supplemental material for Hybrid artificial intelligence frameworks for otoscopic diagnosis: Integrating convolutional neural networks and large language models toward real-time mobile health

Supplemental material, sj-docx-2-dhj-10.1177_20552076251395449 for Hybrid artificial intelligence frameworks for otoscopic diagnosis: Integrating convolutional neural networks and large language models toward real-time mobile health by Yuan-Chia Chu, Yen-Chi Chen, Chien-Yeh Hsu, Chen-Tsung Kuo, Yen-Fu Cheng, Kuan-Hsun Lin and Wen-Huei Liao in DIGITAL HEALTH

Footnotes

Acknowledgments

The authors express their sincere appreciation to the Big Data Center at Taipei Veterans General Hospital for their significant contribution to data processing and to Dr. Shang-Liang Wu for their impactful recommendations which have significantly enriched this study. We also appreciate the computational resources provided by TVGH Cloud 1. The funders had no involvement in the study design, data collection and analysis, interpretation of the results, manuscript preparation, or the decision to publish. Some graphical elements in the figures were created or adapted using publicly available design resources.

Ethics approval

This study was conducted in accordance with the Declaration of Helsinki and approved by the Institutional Review Board of Taipei Veterans General Hospital (IRB No. 2025-01-027CC). The study protocol adhered to all relevant institutional and national research guidelines.

Consent to participate

The requirement for individual informed consent was waived by the ethics committee due to the retrospective nature of the study and the use of de-identified otoscopic images. All patient data were anonymized prior to analysis, and no personal identifying information was included in the study database.

Author contributions

Y-C Chu and K-H Lin contributed equally to this work. Y-C Chu conceptualized the study, supervised the overall project, and contributed to manuscript writing and revisions. K-H Lin led the AI system design and model implementation, and contributed to manuscript preparation. Y-C Chen was responsible for clinical interpretation of otoscopic images and validation of diagnostic relevance. C-Y Hsu conducted data preprocessing and statistical analysis. C-T Kuo contributed to mobile platform optimization and technical integration. Y-F Cheng provided clinical oversight, verified otologic diagnoses, and reviewed the clinical utility aspects. W-H Liao coordinated clinical data acquisition and contributed to the evaluation of system usability in medical workflows. All authors reviewed and approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Science and Technology Council through grants NSTC113-2320-B-075-010 and NSTC114-2320-B075-009, with additional funding from Taipei Veterans General Hospital through grants V114C-149 and V114E-006-2. The funding organizations provided financial support only and had no involvement in the study design, data collection, analysis, interpretation of results, manuscript preparation, or the decision to publish. All authors maintained complete independence in research conduct and publication decisions.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The clinical otoendoscopic image dataset analyzed in this study was collected under institutional IRB approval and contains patient-related information. Due to privacy restrictions, the full dataset cannot be made publicly available. However, anonymized sample images, metadata descriptions, and evaluation scripts are available from the corresponding authors, Dr. Wen-Huei Liao (whliao@vghtpe.gov.tw) and Kuan-Hsun Lin, upon reasonable request and subject to institutional approval.

Data protection and privacy

All data processing and storage procedures complied with institutional privacy policies and relevant data protection regulations. The implementation of edge computing technology ensured that patient data remained secure through local processing, eliminating the need for external data transmission. Access to the research database was strictly controlled and limited to authorized research team members.

Supplemental material

Supplemental material for this article is available online.

References
