Abstract

Background

Colorectal cancer (CRC) represents a substantial global burden, particularly in China. Patients have limited awareness of the importance of postoperative follow-up examinations and insufficient knowledge about review, which ultimately leads to poor long-term prognosis. The advent of artificial intelligence tools such as chat-generating pre-trained transformer (ChatGPT) in the healthcare sector is poised to transform patient management strategies.

Objective

The objective of this study was to evaluate the effectiveness of ChatGPT in responding to patients’ inquiries regarding postoperative follow-up of CRC. The overarching objective is to enhance patients’ awareness of, and compliance with, postoperative follow-up examinations.

Methods

A set of 10 questions concerning postoperative review of CRC was posed to ChatGPT4.5. The responses to these inquiries were evaluated in three domains (accuracy, completeness, and comprehensibility) by five anorectal specialists in Zhejiang Province, in conjunction with 100 inpatients in three domains (completeness, comprehensibility, and trustability).

Result

The accuracy scale (scoring from 1 to 6) received an average score of 4.6 ± 0.7. The completeness (scoring from 1 to 3) and comprehensibility (scoring from 1 to 3) scales received average scores of 2.2 ± 0.5 and 2.5 ± 0.5, respectively. Cronbach's α analyses indicated good reliability for the accuracy and completeness scales (α = 0.85) and excellent reliability for the comprehensibility scale (α = 0.93). However, they also suggested possible redundancy issues. Patient feedback was positive, with 98% to 100% rating all questions as completeness, comprehensibility, and trustability.

Conclusion

ChatGPT has been demonstrated to possess the capacity to formulate satisfactory responses to inquiries concerning CRC postoperative review. Furthermore, it has the potential to enhance patient awareness and knowledge, which may consequently lead to an improvement in long-term outcomes.

Introduction

Colorectal cancer (CRC) is the third most prevalent cancer worldwide and the second leading cause of death from malignant tumours. 1 In recent years, the incidence and mortality of CRC have increased significantly, posing a substantial threat to public health. The most widely employed prognostic assessment instrument in clinical practice to date is the tumour node metastasis (TNM) staging. For CRC without distant metastases (Union Internationale Contre le Cancer/American Joint Committee on Cancer TNM stage I to III), the guidelines advocate radical surgical resection.2–4 The development of postoperative treatment and follow-up protocols is contingent on the evaluation of the patient's risk of postoperative recurrence.5–7 Nevertheless, even when patients present with the same stage, there may be considerable variation in their prognoses. This may be attributable to a number of factors, including limited awareness of postoperative review, inadequate knowledge of different review techniques, or a lack of self-monitoring capabilities. 8

Chat-generating pre-trained transformer (ChatGPT), an artificial intelligence (AI)-powered chatbot, has been demonstrated to deliver effective personalised support and education to individuals, and it is emerging as a revolutionary tool to disrupt the process of accessing healthcare, with its use now extending to various areas of medicine. 8

A recent study evaluated the performance of ChatGPT in providing answers to patients with non-alcoholic fatty liver disease, demonstrating high accuracy, completeness and ease of comprehensibility. 9 A number of studies have been conducted in the field of gastroenterology, with a particular focus on acute pancreatitis and Helicobacter pylori.10,11 Conventional academic studies have exclusively evaluated the quality of ChatGPT responses, yet these assessments have been limited in scope and repetitive in nature.

In light of the preceding studies, the present study incorporated the list of questions from prior research, formulating a dual perspective evaluation that encompassed the perspectives of both physicians and patients. This evaluation sought to interrogate ChatGPT in five domains: its capacity to capture information, its capacity to distil information, its quality and completeness control, its tracking of cutting-edge technology, and its optimisation of patient-centred experience. The objective of this study was to evaluate the efficacy of ChatGPT in responding to patients’ inquiries regarding postoperative CRC review. The overarching ambition of this study is to enhance patient awareness and engagement in global national screening programmes.

Methods

Study design

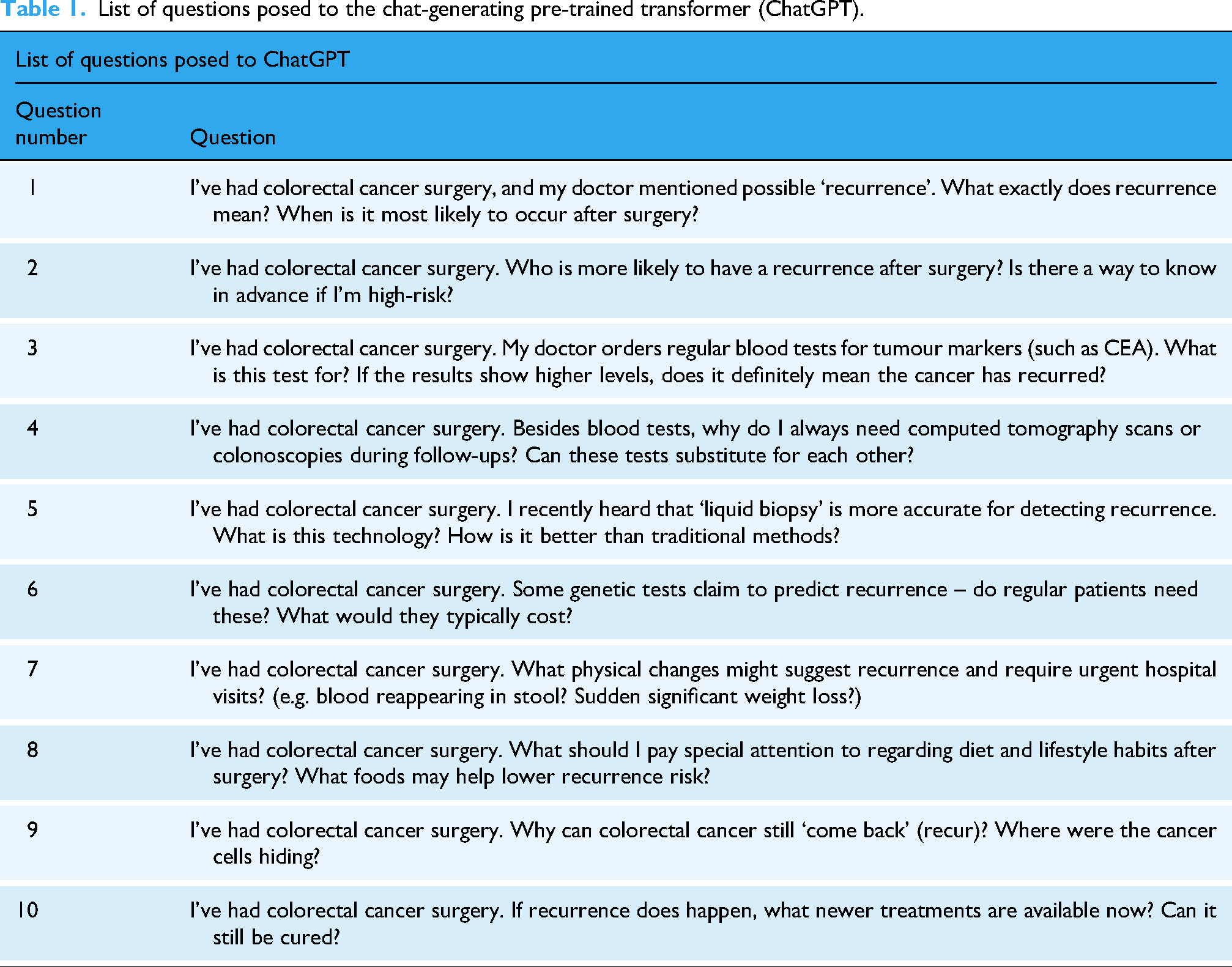

The present study was conducted from March to June 2025 at Ningbo No. 2 Hospital. This study was approved by the Institutional Review Board (IRB) of Ningbo No. 2 Hospital (Approval No. PJ-NBEY-KY-2024-203-01). The IRB waived the requirement for informed consent due to the study's low risk and the use of fully anonymised retrospective data. The questionnaire comprising 10 questions was developed based on a comprehensive review of clinical experience, current practice guidelines, and international literature on patient education in oncology (Table 1). These questions were deliberately categorised into five thematic areas to ensure a structured and multidimensional assessment of patient understanding and needs. Although only 10 questions were included, grouping them into five domains allows for a more nuanced analysis of different aspects of patient education, such as basic knowledge, monitoring methods, updates in care, self-management, and pathological mechanisms. This approach ensures that, despite the limited number of items, the questionnaire covers a broad spectrum of clinically relevant topics.8–12

List of questions posed to the chat-generating pre-trained transformer (ChatGPT).

Population and sample size

The study comprised a total of 100 participants, including 37 females and 63 males. The age of the participants ranged from 30 to 63 years, with the youngest being 30 and the oldest 63. The present study examined a cohort of patients diagnosed with postoperative CRC, who were reviewed at the outpatient anorectal clinic of Ningbo No. 2 Hospital between March and June 2025. The patients were invited to rate each response binary (yes/no) in terms of its completeness, comprehensibility, and trustability.

Data collection and scoring

The 10 questions have been categorised into five main areas: (1) Questions 1 and 2 (Q1 and Q2) describe the basic concepts of postoperative recurrence of CRC, so that the patients can have a clearer understanding of their own situation; (2) questions 3 and 4 (Q3 and Q4) contain the existing and common methods of reviewing their condition; (3) questions 5 and 6 (Q5 and Q6) contain the latest information about CRC recurrence; (4) questions 7 and 8 (Q7 and Q8) cover the key points of self-monitoring, including factors for deterioration or improvement; and (5) questions 9 and 10 (Q9 and Q10) relate to the pathomechanisms of CRC recurrence and the need for further treatment if CRC recurs. Each question was processed using ChatGPT (version GPT-4.5), and its responses were recorded. A panel of five anorectal specialists evaluated each response using a Likert scale across three domains: accuracy (scored 1–6), completeness (1–3), and comprehensibility (1–3). The use of different scales reflects the varying levels of granularity needed for each dimension. Accuracy was graded on a wider scale (1–6) to capture subtle differences in correctness, which is critical in medical contexts. In contrast, completeness and comprehensibility were assessed on a narrower scale (1–3) as they represent more holistic attributes that can be reliably categorised into low, medium, and high levels without requiring excessive differentiation.

Validation

The responses were validated by a group of five anorectal specialists who evaluated each response using a Likert scale based on accuracy, completeness, and comprehensibility. Additionally, a study was conducted on the cohort of 100 postoperative CRC patients who were reviewed at the outpatient anorectal clinic of Ningbo No. 2 Hospital. These patients were invited to rate each response binary (yes/no) in terms of its completeness, comprehensibility, and trustability.

Statistical analysis

The results were analysed with means (standard deviations) reported for continuous variables and frequencies and percentages for categorical variables. The internal consistency of the scale was assessed using the Cronbach's alpha coefficient, with thresholds of <50%, 51%–60%, 61%–70%, 71%–80%, 81%–90%, and >90% considered to be unacceptable, poor, dubious, acceptable, good, and very good, respectively. 13

All statistical analyses were performed using Origin 2024.

Results

Expert assessment

A systematic quantitative analysis was conducted across three dimensions – accuracy, completeness and comprehensibility – to evaluate the responses generated by ChatGPT concerning CRC. The evaluation was conducted by five experts in the field. All scores are reported as mean (standard deviation), with the proportion of questions meeting specific score thresholds statistically quantified.

With regard to the question of accuracy, the mean score was found to be 4.6 out of a possible 6 points, with a standard deviation of 0.7. Specifically, Q2 and Q3 achieved the highest scores, both at 5.2 (standard deviations of 0.4 and 0.8, respectively), while Q7 scored lowest at 3.2 (standard deviation: 0.8). It is evident that Q2, Q3 and Q4 have all achieved an average score of 5 or above, thus indicating that they are ‘almost entirely correct’. It is noteworthy that these three questions account for 30% of the total. The proportion of questions that received a score of 5 or above ranged from 0% (Q7) to 100% (Q2) (Table 2 and Figure 1).

Box plots showing the rating of chat-generating pre-trained transformer (ChatGPT) answers by experts (accuracy).

Assessment of the chat-generating pre-trained transformer (ChatGPT) answers by the five experts.

With regard to the question of completeness (scoring range: 1–3 points), the mean average was found to be 2.2 (standard deviation: 0.5). Q2 and Q3 achieved the highest completeness scores at 2.6 (standard deviation: 0.5), while Q5 and Q6 scored lowest at 1.8 (standard deviations of 0.8 and 0.4, respectively). Of the 10 questions, eight (Q1–Q4, Q7–Q10) achieved an average score of at least 2 points (representing ‘sufficient judgement’), accounting for 80%. The proportion of questions receiving the maximum of three points ranged from 0% (Q6, Q7) to 60% (Q2, Q3) (Figure 2).

Box plots showing the rating of chat-generating pre-trained transformer (ChatGPT) answers by experts (completeness).

With regard to comprehensibility (scoring range: 1–3 points), the mean overall score was 2.5 (standard deviation: 0.6). Q3 and Q8 demonstrated the highest mean scores of 2.8 (standard deviation: 0.4), while Q7 exhibited the lowest mean score of 2.2 (standard deviation: 0.2). All questions received comprehension ratings of no less than 2 points (indicating ‘understandable’), with 100% of respondents achieving this threshold or higher. The proportion of full marks (3 points) varied by question, yet overall performance remained consistent (Figure 3).

Box plots showing the rating of chat-generating pre-trained transformer (ChatGPT) answers by experts (comprehensibility).

Furthermore, Cronbach's alpha coefficient was utilised to evaluate the reliability of expert ratings. The internal consistency of both the accuracy (α = 0.85) and completeness (α = 0.85) scales was found to be satisfactory, with the internal consistency of the understandability scale (α = 0.93) demonstrating excellent reliability. However, it was also observed that the items on the understandability scale exhibited signs of redundancy.

Patient assessment

A subsequent study was conducted in which a questionnaire survey was administered to 100 patients undergoing CRC follow-up examinations at Ningbo Second Outpatient Hospital (aged 50–90 years). The objective of this survey was to assess patient feedback on responses generated by ChatGPT.

With regard to the comprehensibility of the responses, the proportion of patients who found them ‘comprehensible’ (answering ‘yes’) ranged from 98% to 100%. It was found that six of the questions (Q3, Q4, Q6, Q7, Q8, Q10) were comprehensible to all patients.

With regard to the question of completeness, the proportion of affirmative responses (‘complete’) ranged from 99% to 100%. It was determined that six of the questions (Q1, Q2, Q3, Q6, Q8, Q10) were to be considered complete by all patients.

With regard to the issue of trustability, the proportion of responses that were evaluated as ‘reliable’ by patients ranged from 99% to 100%. It is notable that eight questions (Q1–Q6, Q8, Q10) received a 100% trust rating (Table 3).

Assessment of the chat-generating pre-trained transformer (ChatGPT) answers by the 100 patients.

Discussion

As indicated by previous literature, social media has been identified as a popular resource for patients seeking surgery-related information from sources other than healthcare providers.14,15 However, it is important to note the limitations inherent to online sources, particularly social media, with regard to the quality and reliability of information concerning surgical procedures. 16 While ChatGPT has been demonstrated to generate fluent dialogue, it exhibits limitations when confronted with complex medical queries. 17 In light of this, an evaluation was conducted of ChatGPT's capability and potential value in providing information regarding postoperative follow-up for CRC. The findings indicate that ChatGPT performs admirably in this domain, with its generated responses aiding patients’ comprehension of the postoperative review process and its significance. This has the effect of enhancing patients’ awareness of the necessity for follow-up care following CRC surgery.

The independent assessments conducted by five domain experts corroborated the quality of ChatGPT's responses. The consensus among experts was unanimous in their assessment of ChatGPT's responses, which they found to be both accurate and comprehensive in their content, and easily comprehensible.

The average accuracy scores of ChatGPT for responses pertaining to CRC recurrence, encompassing pertinent concepts, extant follow-up screening methodologies, mechanisms of recurrence, and treatment approaches, ranged from 4.8 to 5.2. This exemplifies ChatGPT's advanced retrieval capabilities and its aptitude for synthesising structured knowledge from authoritative guidelines. The platform has been found to consistently produce content that is based on consensus, while also maintaining stringent data quality control measures. 18 However, its performance in tracking cutting-edge technologies and delivering health education for patient self-screening is suboptimal. The key issues identified include outdated data, misleading content, and misplaced emphasis. For instance, while ChatGPT provided accurate responses to specific queries (Q7, concerning warning symptom recognition), such as stating ‘haematochezia is a significant warning sign’, its characterisation of other symptoms (e.g. unexplained weight loss, altered bowel habits, fatigue or weakness) proved contentious. Historically, these symptoms were often regarded as effective indicators for self-monitoring. However, recent observations have indicated a shift in the disease's progression, with frequent suggestions that it may have advanced to an intermediate or advanced stage. 2 It is evident that ChatGPT's responses do not adequately underscore the significance of regular, standardised medical follow-up examinations, encompassing professional imaging and laboratory tests, irrespective of the manifestation of symptoms. This is due to the fact that routine follow-up remains the most reliable method for detecting potential recurrence or metastasis at an early stage, and should not be contingent upon the appearance of symptoms.

With regard to the question of completeness, experts consider ChatGPT's responses to be relatively brief, with incomplete data coverage and insufficient depth of analysis in specialised fields. ChatGPT is trained using extensive databases, including web content (e.g. blogs, news articles, and websites), e-books, scientific papers, and clinical guidelines and recommendations. Incomplete responses generated by ChatGPT may be attributable to a number of factors, including, but not limited to: insufficient or biased training data, misunderstandings, technical limitations, reliance on unreliable or outdated sources, lack of integration with healthcare expertise, and errors in programming or maintenance processes. 19 ChatGPT is only capable of analysing input data. 20 The siloed nature of medical information, privacy barriers, and unstructured characteristics collectively create obstacles to data acquisition.

In terms of comprehensibility, the overall assessment is favourable. This is primarily attributable to its robust information processing capabilities, which enable it to accurately distil the core elements of complex information. It then organises this information into a cognitively accessible format through clear, concise language, logical structuring, appropriate connectives, and potential visualisation suggestions. Simultaneously, it optimises expression in real-time based on context and interaction, significantly reducing cognitive load for users and substantially enhancing comprehension efficiency. 21 Patients’ comprehension of information generated by ChatGPT is crucial for making informed decisions, enhancing knowledge, self-management, adherence, and engagement in treatment. 22 Poorly readable text may pose challenges for patients, potentially leading to misinformation and worsened health outcomes.

It is acknowledged that patients generally lack the expertise to evaluate the accuracy of medical information. For this reason, this study did not require patients to assess this dimension. However, when asked to rate the completeness, comprehensibility, and credibility of the responses, patients provided highly positive feedback. The content was found to be comprehensive and accessible, notwithstanding the utilisation of medical terminology. This finding suggests that ChatGPT is capable of efficiently organising and presenting information in patient communications.

A particularly noteworthy finding was that almost all patients participating in the assessment expressed a high degree of trust in the responses given by ChatGPT, thereby developing confidence in the model itself. This finding is of considerable significance, as generative language models such as ChatGPT are fundamentally based on statistical patterns. The design of these systems does not prioritise absolute accuracy; rather, it aims to appear credible by mimicking human linguistic patterns. 23 The high level of trust exhibited by patients in the aforementioned tools is indicative of the substantial influence that such tools wield in the dissemination of health information. However, this influence is accompanied by inherent risks.

A salient benefit of ChatGPT's capacity to generate pertinent information is its incorporation of disclaimers concerning health-related counsel. It has been observed that between 70% and 100% of responses from ChatGPT include disclaimers such as: It is imperative to acknowledge that the provided information is not intended to supersede the expertise of a qualified medical professional. It is imperative to consult a healthcare provider for guidance on matters pertaining to one's specific health condition. These disclaimers are of paramount importance given that the information provided by chatbots is generally generalised, not always reliable, and fails to account for the individual variations present in each patient. 24

The present study posed a series of questions to ChatGPT across five domains, encompassing information gathering and refinement, quality and completeness control, tracking of cutting-edge technologies, and optimisation of patient-centred experiences. However, the research is not without its limitations. Firstly, the evaluation was confined to five predefined domains and 10 specific questions; consequently, the findings cannot be extrapolated to ChatGPT's capacity for addressing other CRC-related queries or broader medical topics. Furthermore, the evaluation is constrained by the perspectives of specific assessors (in this case, experts and patients), and due to the inherent nature of generative language models, outputs fluctuate with variations in input prompts (wording and context). This renders it challenging to obtain identical high-quality responses at different times or with slightly altered phrasing, presenting issues of reproducibility. The present study's objective was to provide an overall evaluation of ChatGPT, without considering specific patient characteristics such as age, gender, physical condition, or comorbidities. It is recommended that future research incorporate these variables in order to facilitate more personalised assessments of the chatbot's responses. Moreover, the questions employed in this study were drawn from prior research, which may have led to the adoption of a somewhat dated perspective. In practice, questions posed by patients may be more fragmented, colloquial, or address different concerns, potentially affecting ChatGPT's capacity to provide optimal responses. Furthermore, the distribution of the patient questionnaire was exclusive to Ningbo Second Hospital, resulting in a relatively homogeneous sample source. This limits the external validity of the findings across broader geographical areas, diverse healthcare settings, and varied populations.

Conclusion

In summary, the present study provides substantial evidence that ChatGPT is capable of effectively answering specific postoperative review questions concerning CRC. It has the capacity to function as an ancillary instrument to enhance the level of public awareness concerning postoperative management of CRC, with the potential to exert a favourable influence on promoting patient adherence and optimising long-term prognosis. However, it is imperative to exercise the utmost caution when interpreting these findings, as the model's exceptional performance is confined to the specific inquiries and evaluation contexts delineated in this study. Consequently, its performance cannot be extrapolated to represent the model's general proficiency in all medical consultation scenarios. The discrepancy between the elevated level of patient trust and their actual discriminative ability, in conjunction with the intrinsic reproducibility challenges and the prospect of ‘hallucination’ in the model, necessitates the maintenance of critical thinking on the part of the user.

The potential for generative language modelling in healthcare is considerable; however, it is imperative that the technology is refined. The development of specialised versions (or models) designed specifically for healthcare scenarios, trained and fine-tuned strictly on the basis of the latest scientific evidence and current clinical guidelines, and the establishment of effective fact-checking and risk-alerting mechanisms, will be a key direction to enhance their reliability, safety and usefulness. The ultimate objective should be for AI to become a useful addition that enhances communication between patients and doctors and improves health literacy, rather than a substitute for professional medical care.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251393297 - Supplemental material for Multidimensional assessment of ChatGPT in colorectal cancer postoperative consultations: Analysing response variations across critical clinical domains

Supplemental material, sj-docx-1-dhj-10.1177_20552076251393297 for Multidimensional assessment of ChatGPT in colorectal cancer postoperative consultations: Analysing response variations across critical clinical domains by Yitong Hu, Shisong Wang, Ping Cai and in DIGITAL HEALTH

Supplemental Material

sj-xlsx-2-dhj-10.1177_20552076251393297 - Supplemental material for Multidimensional assessment of ChatGPT in colorectal cancer postoperative consultations: Analysing response variations across critical clinical domains

Supplemental material, sj-xlsx-2-dhj-10.1177_20552076251393297 for Multidimensional assessment of ChatGPT in colorectal cancer postoperative consultations: Analysing response variations across critical clinical domains by Yitong Hu, Shisong Wang, Ping Cai and in DIGITAL HEALTH

Footnotes

Acknowledgements

We thank Ping Cai for his guidance. The members of The Artificial Intelligence Colorectal Cancer Research (AI-CORE) Working Group are: Xiaoyu Dai1, Jianjiong Li2, Zhou Wu3, Boxu Chen3 and Haoxun Mao3.

1Shaoxing University, Shaoxing, China; 2Health Science Center, Ningbo University, Ningbo, China; and 3Ningbo No. 2 Hospital, Ningbo, China.

Ethical approval

This study was approved by the IRB of Ningbo No. 2 Hospital (Approval No. PJ-NBEY-KY-2024-203-01). The IRB waived the requirement for informed consent due to the study's low risk and the use of anonymised data.

Consent for publication

All authors consent to publication.

Author contributions

HYT and CP contributed to the study concepts and study design and helped in revising the manuscript. HYT and WSS supervised the research and wrote the manuscript. All authors read and approved the final manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Zhu Xiushan Talent Award Fund (2021hmyq10); Hwa Mei Key Research Fund (2021HMZD02), Medical Scientific Research Foundation of Zhejiang Province, China (2024KY1551, 2025KY1386, 2025KY1503) and the Science and Technology Innovation Yongjiang 2035 Key Research and Development Project of Ningbo (2024Z229).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

Not applicable.

Guarantor

The guarantor of this study is Yitong Hu, who takes overall responsibility for the integrity of the research and the accuracy of the data.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.