Abstract

Background

This study aimed to evaluate the readability of COVID-19 health science information released by China's Provincial Health Commissions, identify problems in current health information webpages, and suggest directions for developing highly readable health information on public health events.

Methods

The main research data were health articles on COVID-19 published on the websites of 27 Provincial Health Commissions of China. Two researchers independently evaluated the readability of the webpages across seven dimensions using the Online Health Information Readability Evaluation Tool (OHIRET). We also applied linguistic analysis techniques to assess textual readability factors.

Results

Readability scores of the 92 included articles ranged from 0.49 to 0.98, with a mean of 0.78 ± 0.10. Among the evaluation dimensions, organizational layout obtained the highest score, whereas visual assistance received the lowest. No statistically significant differences in readability were observed across regions or information topics (p > 0.05). Regarding textual factors, the mean difficulty coefficient (on a 1–200 scale, where values greater than 30 indicate relatively high reading difficulty) was 63.56 ± 10.79, suggesting that the articles were challenging to read. The correlation coefficient between the webpage readability score and the text difficulty coefficient was −0.535 (p < 0.001).

Conclusions

The overall readability of the COVID-19 health information published by China's Provincial Health Commissions is acceptable, but measures to improve public understanding are needed. Health information producers should better match the literacy level of the general public by using shorter sentences, providing specific behavioral guidance with clear visual aids, and adopting question-and-answer formats, which would help enhance the dissemination of COVID-19 health information in China.

Background

The COVID-19 pandemic, which broke out in December 2019, posed a severe threat to global public health security, according to the World Health Organization (WHO). 1 Research indicates a high public demand for health information on COVID-19 diagnosis, prevention, and related knowledge. 2 When it meets the public's information needs, COVID-19 health information can help control the spread of infection and reduce incidence and mortality rates. With the development of information technology, the Internet has become an important source of health-related information 3 and has played a significant role in COVID-19 prevention and control. 4 However, for individuals without a medical background, the Internet is filled with information of mixed quality, making it difficult to judge the reliability of online health information. In public health emergencies, the public needs authoritative, accurate, and reliable pandemic information from government institutions.5,6

In China, the National Health Commission (NHC) serves as the central public health authority, with responsibilities akin to those of the WHO, including oversight of regional healthcare services, residents' well-being, and epidemic prevention and control. 7 As China's leading public health department, the NHC plays a pivotal role in guiding the provincial health commissions, which are the key public health administrative units in China's 31 provincial-level administrative regions (22 provinces, 5 autonomous regions, and 4 municipalities). These commissions coordinate with China's Centers for Disease Control and Prevention (CDC) and other healthcare institutions to implement public health measures effectively. The NHC also uses its official channels and government-backed new media platforms to disseminate scientific health information, making them a crucial source of knowledge for the public during events such as the COVID-19 pandemic. 8

The official websites of provincial health commissions in China typically publish various types of health information for both the general public and healthcare professionals (details are provided in the Appendix). This information usually falls into the following categories: (1) public health alerts and announcements: displayed on the homepage, these provide timely updates on disease outbreaks and other urgent public health incidents; (2) health education information: organized into sections such as disease prevention, healthy living tips, and nutrition advice; (3) health services and facilities: information on medical institutions, medical service guidelines, and online service portals, accessible through a dedicated “Health Services” or “Facilities” section; and (4) regulations and policies: usually presented in a structured manner, with links to detailed documents and guidelines. In this study, scientific health information refers to evidence-based, publicly accessible content that delivers accurate and reliable data, clear explanations, and actionable recommendations on public health events, with the aim of educating the general public. This includes articles intended to educate and inform the public about COVID-19 prevention, treatment, and public health measures.

Against this backdrop, readability, defined as the degree to which health information is easily understood, 9 plays a pivotal role in effective health communication. From the perspective of writing style, Fry 10 defined readability as the degree of reading difficulty caused by the way words and sentences are constructed. Benjamin 11 argued that readability research should measure the complexity of words, the style of the text, and the characteristics of sentences. Other studies have pointed out that readability covers all the elements of a reading material that affect how effectively readers can use it, as well as the interactions among these elements.12,13 These studies also analyzed how internal language, external layout, and illustrations influence the difficulty of reading and comprehension. In our research, we define the readability of online health information as the degree to which its textual and nontextual factors make it easy for readers to read and understand. Textual factors primarily involve the characteristics of the health information itself, such as text expression, numerical application, content design, and behavior operability, whereas nontextual factors concern its presentation, such as organizational layout, layout design, and visual assistance.

High readability of online health information can enhance public knowledge acquisition and self-management abilities, thereby supporting COVID-19 prevention and control efforts. 14 Conversely, low readability may lead to misinterpretation, confusion, or even anxiety among the public regarding disease prognosis or preventive measures. 15 Thus, rigorous readability assessment is essential during the design, compilation, and revision of online health information to ensure alignment with the reading and comprehension abilities of target audiences.

Several studies 16–18 have assessed the readability of COVID-19 health information on public health organization websites and found that the available information had poor readability and was challenging for the public. Worrall et al. 16 used Google to search for COVID-19-related health information and examined the first 20 webpages returned. Among the different website sources, webpages published by governments and public health organizations had the highest readability; however, even these exceeded the American Medical Association's recommendation that patient information materials be written at grade levels 3–7. Mani et al. 17 used the Flesch–Kincaid Grade Level (FKGL) to analyze 42 COVID-19 prevention materials published on the websites of public health departments in ten US states and found that 45% of the materials were written above a Grade 7 reading level and were considered “difficult” to read, leaving room for improvement. However, the readability of COVID-19 information released by government agencies in China remains unknown, and it is unclear whether the Chinese public finds this information difficult to read and understand.

Although various readability assessment tools have been developed, their applicability to Chinese online health information remains limited. The most commonly used tools for assessing textual factors are readability formulas, such as the Flesch Reading Ease Score, FKGL, Simple Measure of Gobbledygook (SMOG), and Gunning Fog Index.19,20 These formulas infer linguistic complexity and reading effort mainly from counts of words, syllables, and sentences, but these counts do not transfer directly to written Chinese. In particular, Chinese is a logographic language that does not use spaces between words, and its sentence structure, word frequency distribution, and character-based semantics differ significantly from those of English. Because the validity of textual-factor assessment varies across languages with their linguistic peculiarities, such tools should be developed based on the linguistic structures and properties of the specific language. For nontextual factors, the most commonly used assessment tools include the Suitability Assessment of Materials (SAM), the Patient Education Materials Assessment Tool (PEMAT), and the CDC Clear Communication Index.21,22 Most of these were developed for printed materials and do not fully capture the unique characteristics of online media, such as diverse formats and interconnected content.

In China, researchers have attempted to apply Chinese-specific tools. For instance, Li et al. used DISCERN and the Chinese Readability Index Explorer to evaluate selected webpages on breast cancer and found that the quality and readability of Chinese breast cancer websites were unsatisfactory and varied across website producer categories. 23 Similarly, Chu et al. used DISCERN and Chinese Text Reading Difficulty Grading to assess the readability of online information about Alzheimer's disease in China. 24 Current readability assessment tools for Chinese web pages focus primarily on textual factors. Beyond textual factors, researchers typically rely solely on DISCERN to assess the information quality of web pages and neglect nontextual factors such as page layout and graphic design. For nontextual factors, secondary evaluation with scale-based tools such as the SAM remains the mainstream approach. This separate evaluation of textual and nontextual factors limits comprehensive assessment of the readability of health information websites.

To bridge this gap in evaluating the readability of Chinese online health information, our team began developing the Online Health Information Readability Evaluation Tool (OHIRET) 25 in 2018. The tool can evaluate both textual (e.g., Chinese character complexity, semantic ambiguity index) and nontextual (e.g., clarity of visual charts, accessibility of policy links) factors of online health information simultaneously and is suited to the Chinese cultural context. It has been used to evaluate the readability of Chinese online health information related to diabetes. 26

However, the quality and readability of Chinese COVID-19 health information have not yet been investigated. The Provincial Health Commissions of China are important platforms through which the government releases public health information, yet the readability of the COVID-19 health information they publish remains unclear. Accordingly, this study addresses the following research questions: (1) What is the readability of the online COVID-19 health information released by the Provincial Health Commissions, as assessed with OHIRET? (2) Where readability is inadequate, what actions should the Provincial Health Commissions take?

Methods

Design and data collection

The sample selection process consisted of two steps: screening the websites of the Provincial Health Commissions of China and extracting health science information. The screening process is illustrated in Figure 1.

Figure 1. Flow diagram of the selection of Provincial Health Commission websites and health articles.

Baidu (www.baidu.com), which had the second-highest annual market share among search engines in China, was selected as the search engine for this study. Although Bing (www.bing.com), owing to its bundling with the Windows operating system and the Edge browser, had become the top-ranked search engine in China, it was less suitable than Baidu for retrieving the research samples in this study because its coverage of certain domestic Chinese information was limited by the local network environment and relevant policies. In August 2023, one researcher (YZ) searched Baidu using “Provincial Health Commission” as the search term and preliminarily identified 32 candidate official Provincial Health Commission websites (Xinjiang has two official Health Commission websites). YZ then identified 27 eligible websites after applying the following exclusion criteria: (a) no updates for more than one year; (b) absence of COVID-19-related health information; and (c) duplication.

During the epidemic, all official Provincial Health Commission websites set up dedicated COVID-19 sections. Because internet users generally tend to choose from the first five search results when seeking information online, 27 YZ browsed the dedicated section of each website and selected the five most recently published COVID-19 health science articles, yielding 135 articles. Articles were excluded if they (a) repeated information; (b) were news reports or notices; (c) consisted only of videos or pictures; or (d) were not intended for public reading (meeting minutes, budget reports, and operational guidelines). Finally, 92 articles were included in the analysis. Based on the classification of COVID-19 health information, the 92 articles were divided into two topics: “COVID-19 prevention knowledge” and “COVID-19 vaccination knowledge.” Articles whose content concerned only COVID-19 vaccines (e.g., vaccine types, vaccination status, and precautions) were classified as “COVID-19 vaccination knowledge”; all others were classified as “COVID-19 prevention knowledge.”

Online Health Information Readability Evaluation Tool—Primary readability evaluation tool

The OHIRET, which our research team has been developing since 2018, is the primary readability evaluation tool used in this study. We searched databases such as PubMed 28 and Embase 29 for Chinese and international literature on the readability evaluation of online health information. Through this literature review, we identified the types of readability assessment tools and the methods used to develop representative tools (e.g., SAM 30 and PEMAT 31). We systematically summarized the evaluation indicators of textual and nontextual factors related to the readability of online health information reported in the literature and preliminarily established an evaluation system consisting of 7 dimensions and 55 items. We then conducted three rounds of Delphi expert consultation, performed internal consistency tests, and assessed interrater reliability, deleting or revising inappropriate items accordingly. The final OHIRET evaluation system consisted of 7 dimensions and 33 items. The Cronbach's α coefficient was 0.704, and Cohen's kappa coefficients ranged from 0.6242 to 1. We then conducted reader testing and used a readability formula to evaluate the structural validity of the tool. The correlation coefficients between the readability evaluation results and the rate of correct knowledge answers among readers with high and low health literacy were 0.511 and 0.424, respectively (p < 0.05). The correlation coefficient between the readability evaluation results and the results calculated with the Chinese readability formula (linguistic analysis techniques 32) was −0.369 (p < 0.05). These results indicated that the reliability and validity of the index system were good. 25
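For readers less familiar with the internal-consistency statistic reported above, the sketch below computes Cronbach's α with the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores), using sample variances throughout. The function name and the small rating matrix are hypothetical illustrations, not the study's data or code.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Cronbach's alpha for a matrix with one row per respondent, one column per item.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores)),
    using sample variances (n - 1 denominator) throughout.
    """
    n_items = len(scores[0]) if scores else 0
    if n_items < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two respondents")
    # Variance of each item (column) across respondents.
    item_vars = [variance([row[j] for row in scores]) for j in range(n_items)]
    # Variance of each respondent's total score across the k items.
    total_var = variance([sum(row) for row in scores])
    return n_items / (n_items - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical ratings: 4 respondents x 3 items (not the study's data).
example = [[3, 4, 3], [4, 5, 4], [2, 3, 3], [5, 5, 4]]
print(round(cronbach_alpha(example), 3))  # 0.923
```

When every item produces identical scores the statistic reaches 1, and it falls as items vary independently of one another.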

To help evaluators use the index system more effectively, the research group compiled a “User's Guide,” which includes definitions, explanations, and scoring standards for the 33 items. To ensure that target users could readily accept and use the tool, we also studied its applicability among nurses. 26 After three rounds of applicability testing, the research group modified the definitions, explanations, and scoring criteria of some items and deleted one indicator. The interrater reliability of each item ranged from 0.70 to 1, and the correlation coefficient between the nurses' readability evaluations of the web pages and the results calculated with the readability formula was −0.67 (p < 0.05). Subsequently, the analytic hierarchy process was used to calculate the weight coefficients of the items.
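The analytic hierarchy process step can be illustrated with the sketch below. It derives weights from a pairwise comparison matrix using normalized row geometric means, a common approximation to Saaty's principal-eigenvector method, and reports a consistency ratio based on Saaty's random index values. This is an assumption-laden illustration: the study does not report its judgement matrices or which AHP computation variant it used, and the matrix below is hypothetical.

```python
import math

# Saaty's random consistency index (RI) for matrix orders 1 to 10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def ahp_weights(matrix: list[list[float]]) -> tuple[list[float], float]:
    """Weights and consistency ratio (CR) for a reciprocal comparison matrix.

    Weights: normalized row geometric means (an approximation to the
    principal eigenvector). lambda_max is estimated as mean((A w)_i / w_i).
    Only orders 1 to 10 are supported by the RI table above.
    """
    n = len(matrix)
    if n not in RANDOM_INDEX:
        raise ValueError("matrix order must be between 1 and 10")
    geo = [math.prod(row) ** (1 / n) for row in matrix]
    weights = [g / sum(geo) for g in geo]
    # Estimate the principal eigenvalue from the product A w.
    aw = [sum(a * w for a, w in zip(row, weights)) for row in matrix]
    lambda_max = sum(x / w for x, w in zip(aw, weights)) / n
    ci = (lambda_max - n) / (n - 1) if n > 1 else 0.0
    ri = RANDOM_INDEX[n]
    cr = ci / ri if ri > 0 else 0.0
    return weights, cr


# Hypothetical 3 x 3 judgements (not the study's matrices); CR < 0.10 is
# conventionally taken as acceptable consistency.
judgments = [[1, 3, 5], [1 / 3, 1, 2], [1 / 5, 1 / 2, 1]]
w, cr = ahp_weights(judgments)
print([round(x, 3) for x in w], round(cr, 3))
```

For a perfectly consistent matrix (each entry equal to w_i / w_j) the geometric-mean weights recover w exactly and the consistency ratio is zero.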

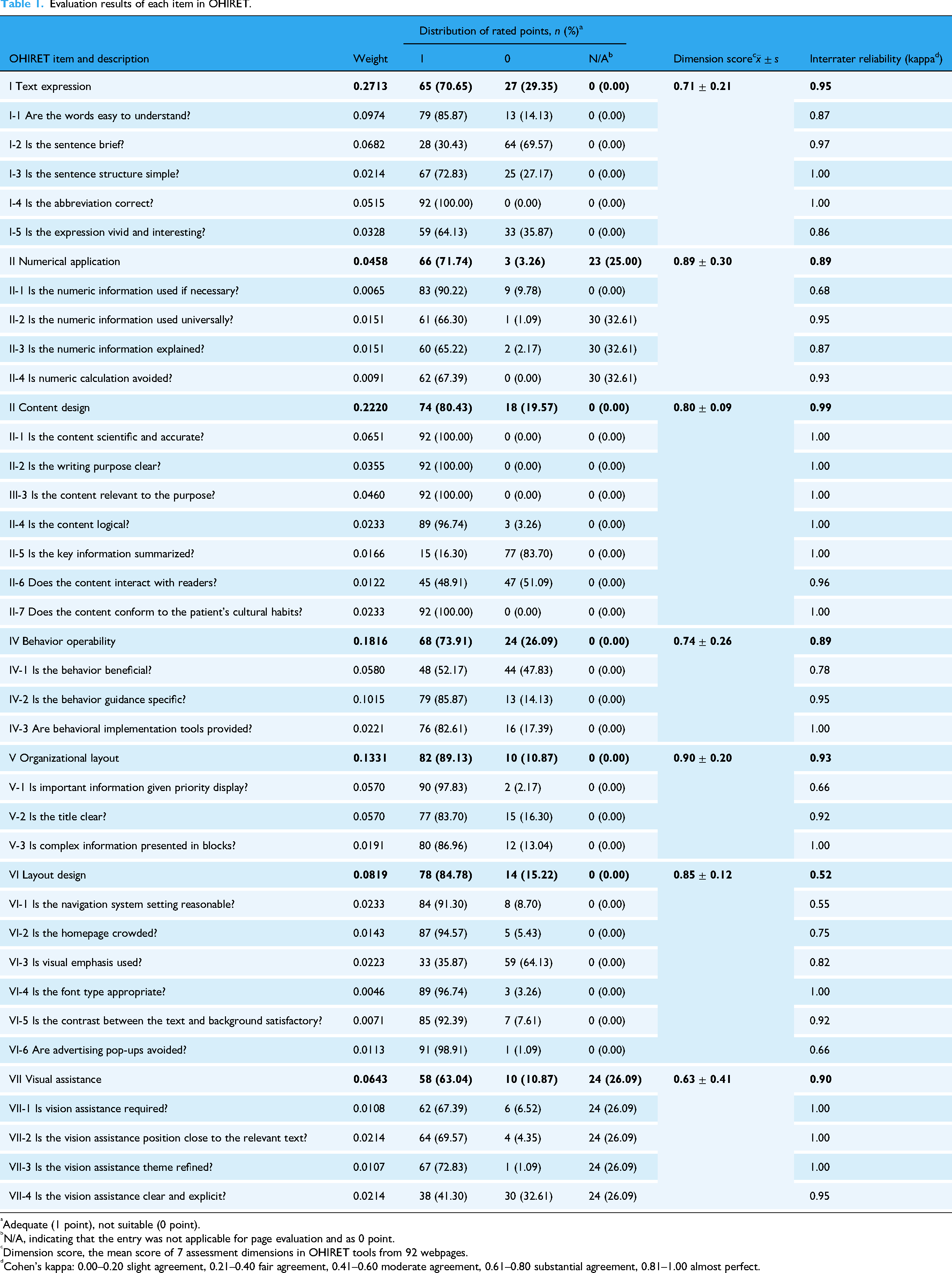

The OHIRET is mainly used to evaluate website-based health information that consists primarily of text supplemented by other forms of presentation. The final evaluation index system comprises 32 items across 7 evaluation dimensions: (1) textual factors: text expression (5 items, weight 0.2713), numerical application (4 items, weight 0.0458), content design (7 items, weight 0.2220), and behavior operability (3 items, weight 0.1816); and (2) nontextual factors: organizational layout (3 items, weight 0.1331), layout design (6 items, weight 0.0819), and visual assistance (4 items, weight 0.0643). The seven dimension weights sum to 1. Details are shown in Table 1.

Table 1. Evaluation results of each item in OHIRET.

Adequate (1 point); not suitable (0 points).

N/A: the item was not applicable to the page and was scored as 0 points.

Dimension score: the mean score of each of the 7 OHIRET assessment dimensions across the 92 webpages.

Cohen's kappa: 0.00–0.20, slight agreement; 0.21–0.40, fair agreement; 0.41–0.60, moderate agreement; 0.61–0.80, substantial agreement; 0.81–1.00, almost perfect agreement.

The User's Guide includes detailed explanations of each indicator, scoring criteria for each level, and evaluation examples to ensure that different evaluators interpret each item consistently. Each item is scored dichotomously as 1 (fit) or 0 (unfit). An item is rated “fit” when more than 80% of the content of a web page matches the characteristics of that item. Some items may be rated “N/A,” meaning that the item is not applicable to the page; “N/A” items contribute no points. Because the scoring is dichotomous, the corresponding calculation formulas are as follows:

$$\text{Dimension score} = \frac{1}{j}\sum_{k=1}^{j}\frac{\mathit{num}_k}{\mathit{sample}}$$

$$\text{Readability} = \sum_{i=1}^{32}\text{Item}(i)\times W(i)$$

where Dimension score is the average score for the corresponding dimension across all evaluated webpages; j is the total number of items in the corresponding assessment dimension; num_k is the number of webpages scoring 1 on item k; sample is the total number of evaluated webpages; Readability is the total readability score of an evaluated article; Item(i) is the score of item i in the 32-item OHIRET evaluation system, where i ranges from 1 to 32 (i.e., I-1 to VII-4 in Table 1); and W(i) is the corresponding item weight. An article is considered to have acceptable readability when Readability ≥ 0.7.
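The scoring defined above (a weighted sum of item scores for each article, and, for each dimension, the share of pages rated 1 on each item averaged over that dimension's items) can be sketched as follows. This is a minimal illustration under stated assumptions: scores are coded 1 (fit), 0 (unfit), or None (N/A, counted as 0 as in the Table 1 notes), and the per-item weights W(i) are hypothetical. Because the AHP item weights are not listed here, the sketch splits each published dimension weight equally across that dimension's items.

```python
from typing import Optional

Score = Optional[int]  # 1 = fit, 0 = unfit, None = N/A (contributes 0)

# Dimension -> (number of items, dimension weight), in Table 1 order (I to VII).
DIMENSIONS = {
    "text expression": (5, 0.2713),
    "numerical application": (4, 0.0458),
    "content design": (7, 0.2220),
    "behavior operability": (3, 0.1816),
    "organizational layout": (3, 0.1331),
    "layout design": (6, 0.0819),
    "visual assistance": (4, 0.0643),
}


def equal_split_weights() -> list[float]:
    """HYPOTHETICAL W(i): each dimension weight split equally over its items."""
    weights: list[float] = []
    for n_items, dim_weight in DIMENSIONS.values():
        weights.extend([dim_weight / n_items] * n_items)
    return weights


def readability(items: list[Score], weights: list[float]) -> float:
    """Readability = sum over i of Item(i) * W(i); N/A counts as 0."""
    if len(items) != len(weights):
        raise ValueError("need exactly one score per weighted item")
    return sum((score or 0) * w for score, w in zip(items, weights))


def dimension_score(pages: list[list[Score]]) -> float:
    """(1/j) * sum over k of num_k / sample, for the items of ONE dimension.

    pages: one list of item scores per evaluated webpage.
    """
    sample, j = len(pages), len(pages[0])
    num = [sum(1 for page in pages if page[k] == 1) for k in range(j)]
    return sum(num) / (j * sample)


weights = equal_split_weights()
# A page rated "fit" on every item except the four visual assistance items.
page = [1] * 28 + [0] * 4
score = readability(page, weights)
print(round(score, 4), "acceptable" if score >= 0.7 else "not acceptable")
```

Because the dimension weights sum to 1, a page rated "fit" on every item scores 1, and the example page loses exactly the visual assistance weight (0.0643), leaving 0.9357.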

The readability evaluation was conducted by two researchers (YZ and JL) who were proficient in the use of the relevant assessment tools. Before the formal evaluation, the two researchers applied the tool to five randomly selected web pages in a pre-evaluation exercise to ensure that they understood the tool at a similar level. Interrater reliability, that is, the degree of agreement when different raters rate the same set of objects, was tested with Cohen's kappa coefficient and interpreted according to the benchmarks proposed by Landis and Koch. 33 For items with a coefficient lower than 0.4, the two researchers held in-depth discussions; where an evaluation criterion was ambiguous, they discussed specific examples and agreed on a common interpretation. If they still could not agree on a score after discussion, a third researcher was consulted, and that opinion was used to make the final determination.
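As a minimal sketch of the agreement statistic, the function below computes Cohen's kappa for two raters' item scores, κ = (p_o − p_e)/(1 − p_e), where p_o is observed agreement and p_e is chance agreement from each rater's label frequencies, and maps the value onto the Landis and Koch bands listed in the Table 1 notes. Treating N/A as its own label, if it occurs, is an assumption of this sketch; the ratings shown are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and paired")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_e == 1:
        # Both raters used one identical label throughout: kappa is 0/0,
        # and this sketch returns 1.0 by convention.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


def landis_koch(kappa: float) -> str:
    """Landis and Koch label, using the cut-offs in the Table 1 notes."""
    if kappa < 0:
        return "poor agreement"
    for upper, label in [(0.20, "slight"), (0.40, "fair"),
                         (0.60, "moderate"), (0.80, "substantial")]:
        if kappa <= upper:
            return f"{label} agreement"
    return "almost perfect agreement"


# Hypothetical 1/0 scores from two raters on ten items.
a = [1, 1, 0, 0, 1, 0, 1, 1, 0, 1]
b = [1, 1, 0, 1, 1, 0, 1, 0, 0, 1]
k = cohens_kappa(a, b)
print(round(k, 3), landis_koch(k))  # 0.583 moderate agreement
```

Here the raters agree on 8 of 10 items (p_o = 0.8), but because both rate 60% of items as 1, chance agreement is already 0.52, so kappa is only about 0.58.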

Pure text readability assessment

To evaluate the complexity of textual factors objectively, we also performed a systematic text analysis. Because English-language readability formulas (e.g., Flesch–Kincaid, SMOG) are generally not applicable to Chinese text, we adopted linguistic analysis techniques that are better suited to Chinese to assess textual readability factors. 32 The analysis steps were as follows:

First, all articles were imported into Microsoft Word, and the main text was preprocessed by removing non-Chinese characters, including Latin letters, Arabic numerals, and punctuation marks, so that only Chinese characters remained for analysis. Second, we performed word segmentation using Jieba (https://github.com/fxsjy/jieba), a widely adopted open-source Python library for Chinese text analysis.32,34 Jieba combines dictionary-based methods with a Hidden Markov Model to segment Chinese text into words and supports part-of-speech tagging and named entity recognition, which are helpful for linguistic feature extraction. Third, we used the official reference vocabulary from the Syllabus of Graded Words and Characters for Chinese Proficiency to classify words into five levels of language difficulty: (1) Grade A words: the most frequently used and most basic Chinese vocabulary; (2) Grade B words: commonly used words of intermediate difficulty; (3) Grade C words: low-frequency or advanced vocabulary; (4) Grade D words: rare, technical, or classical Chinese terms; and (5) superclass words: vocabulary beyond Grade D, including newly coined terms, dialectal words, and foreign transliterations. These categories allowed us to quantify the lexical complexity of the texts; Grade C, D, and superclass words were considered difficult words. Fourth, the total number of sentences in each article was obtained by counting punctuation marks that typically indicate sentence boundaries in Chinese text, such as “.”, “!”, “?”, and “……”. Finally, we calculated a series of textual factors to evaluate readability using the following formulas 32:

$$\mathrm{ASL} = \frac{\mathrm{word\_num}}{\mathrm{sen\_num}}$$

$$\mathrm{NS\_100} = \frac{\mathrm{sen\_num}}{\mathrm{word\_num}} \times 100$$

$$\mathrm{NDW\_100} = \frac{\mathrm{dif\_word\_num}}{\mathrm{word\_num}} \times 100$$

where ASL is the average sentence length; word_num is the total number of words; sen_num is the total number of sentences; NS_100 is the average number of sentences per 100 words; dif_word_num is the total number of difficult words; NDW_100 is the number of difficult words per 100 words; and Difficulty is the difficulty coefficient of the text. These formulas were developed based on established principles in Chinese readability studies and adapted to accommodate Chinese-specific linguistic structures, such as word segmentation dependency and character-based complexity. 32 In general, a text is considered to have low readability if ASL > 16.7 and NS_100 < 6, or if Difficulty > 30.32,34
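The preprocessing and ratio calculations described above can be sketched as follows. Several assumptions are built in and should be read as such. Sentences are counted on the raw text before punctuation is stripped, using the full-width marks 。！？ and the ellipsis ……. "Chinese characters" are approximated by the CJK Unified Ideographs block U+4E00 to U+9FFF. Segmentation calls `jieba.lcut`, which requires the third-party jieba package. The graded-vocabulary lookup is a hypothetical dictionary in which words absent from the syllabus count as superclass. The sketch stops at ASL, NS_100, and NDW_100 and does not attempt the Difficulty coefficient.

```python
import re

# Assumed sentence-final marks: full-width 。！？ plus the ellipsis (……),
# where a run of ellipsis characters counts as one boundary.
SENTENCE_END = re.compile(r"[。！？]|…+")
# Approximation of "Chinese characters": CJK Unified Ideographs U+4E00-U+9FFF.
NON_CHINESE = re.compile(r"[^\u4e00-\u9fff]")
DIFFICULT_GRADES = {"C", "D", "superclass"}


def count_sentences(raw_text: str) -> int:
    """Count sentence boundaries on the raw text, before punctuation is removed."""
    return len(SENTENCE_END.findall(raw_text))


def chinese_only(raw_text: str) -> str:
    """Remove Latin letters, digits, punctuation, and other non-Chinese symbols."""
    return NON_CHINESE.sub("", raw_text)


def segment(text: str) -> list[str]:
    """Word segmentation with Jieba (third-party package: pip install jieba)."""
    import jieba  # imported lazily so the other helpers work without it
    return jieba.lcut(text)


def text_metrics(words: list[str], sen_num: int,
                 grade_of: dict[str, str]) -> dict[str, float]:
    """ASL, NS_100, and NDW_100 for one article.

    grade_of is a HYPOTHETICAL lookup from word to "A", "B", "C", or "D";
    words missing from it are treated as superclass, i.e. difficult.
    """
    word_num = len(words)
    dif_word_num = sum(grade_of.get(w, "superclass") in DIFFICULT_GRADES
                       for w in words)
    return {
        "ASL": word_num / sen_num,
        "NS_100": 100 * sen_num / word_num,
        "NDW_100": 100 * dif_word_num / word_num,
    }


def long_sentence_flag(m: dict[str, float]) -> bool:
    """Sentence-length criterion from the text: ASL > 16.7 and NS_100 < 6."""
    return m["ASL"] > 16.7 and m["NS_100"] < 6
```

In use, an article's raw text would go to `count_sentences`, then through `chinese_only` and `segment`, and the resulting word list and sentence count would be passed to `text_metrics`. Counting sentences before stripping is a design choice of this sketch: once punctuation is removed, sentence boundaries can no longer be recovered.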

Statistical analysis

Data analysis was performed using Excel 2019 and IBM SPSS 25.0. Categorical data were summarized as frequencies and percentages, and continuous data were described as means with standard deviations. Cohen's kappa coefficient was used to express the interrater reliability of the OHIRET. Readability scores across regions and topics were compared using rank sum tests. Spearman's correlation analysis was used to examine the association between the webpage readability score and the text difficulty coefficient. Differences were considered statistically significant at p < 0.05.
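The Spearman correlation analysis described above can be illustrated with the pure-Python sketch below. It ranks both variables, giving tied values the average of their ranks, and then computes the Pearson correlation of those ranks. This is an illustration only: the study used SPSS, and the function names and example values here are hypothetical.

```python
import math


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j are ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return cov / (sx * sy)


# Hypothetical page scores vs. difficulty coefficients (not study data).
scores = [0.92, 0.85, 0.78, 0.70, 0.61]
difficulty = [48.0, 55.5, 60.2, 71.3, 69.9]
print(round(spearman_rho(scores, difficulty), 2))  # -0.9
```

A negative rho, as in this toy example, means that pages with higher readability scores tend to have lower text difficulty coefficients.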

Results

Online Health Information Readability Evaluation Tool scores

In total, 92 articles were included. Their readability scores ranged from 0.49 to 0.98, with a mean of 0.78 ± 0.10. The overall interrater reliability (Cohen's kappa) across the 92 articles was 0.94, and Cohen's kappa coefficients for individual dimensions ranged from 0.52 to 1. The evaluation results for each OHIRET item are shown in Table 1.

Textual factors were evaluated across four dimensions (Table 1). In the text expression dimension, 28 articles (30.43%) used short sentences with an average sentence length of less than 40 characters, and 59 articles (64.13%) used vivid expressions. In the numerical application dimension, most articles (90.22%) used numerical information when necessary, 61 articles (66.30%) used general numerical and unit information, and 60 articles (65.22%) explained the numerical information. In the content design dimension, 15 articles (16.30%) reviewed the key information of the full text in a summary, and 45 articles (48.91%) provided interactive features for readers to obtain more information. In the behavior operability dimension, 48 articles (52.17%) analyzed the pros and cons of the recommended behaviors when introducing behavioral information.

Among the nontextual factors, organizational layout had the highest average score of all dimensions (0.90 ± 0.20): 90 articles (97.83%) presented the most important information at the beginning of sections or used lists to attract readers' attention; 80 articles (86.96%) used bullets or numbering to break long, complex content into smaller parts that are easier to understand; and 77 articles (83.70%) used clear and concise titles or subtitles that summarized the main points and distinguished different topics. In the layout design dimension, 33 articles (35.87%) used visual cues (such as arrows, bold, large font, and highlights) to emphasize key information. The visual assistance dimension had the lowest average score (0.63 ± 0.41); only 38 articles (41.30%) used visual aids with corresponding titles and high clarity.

Classification comparison of readability evaluation

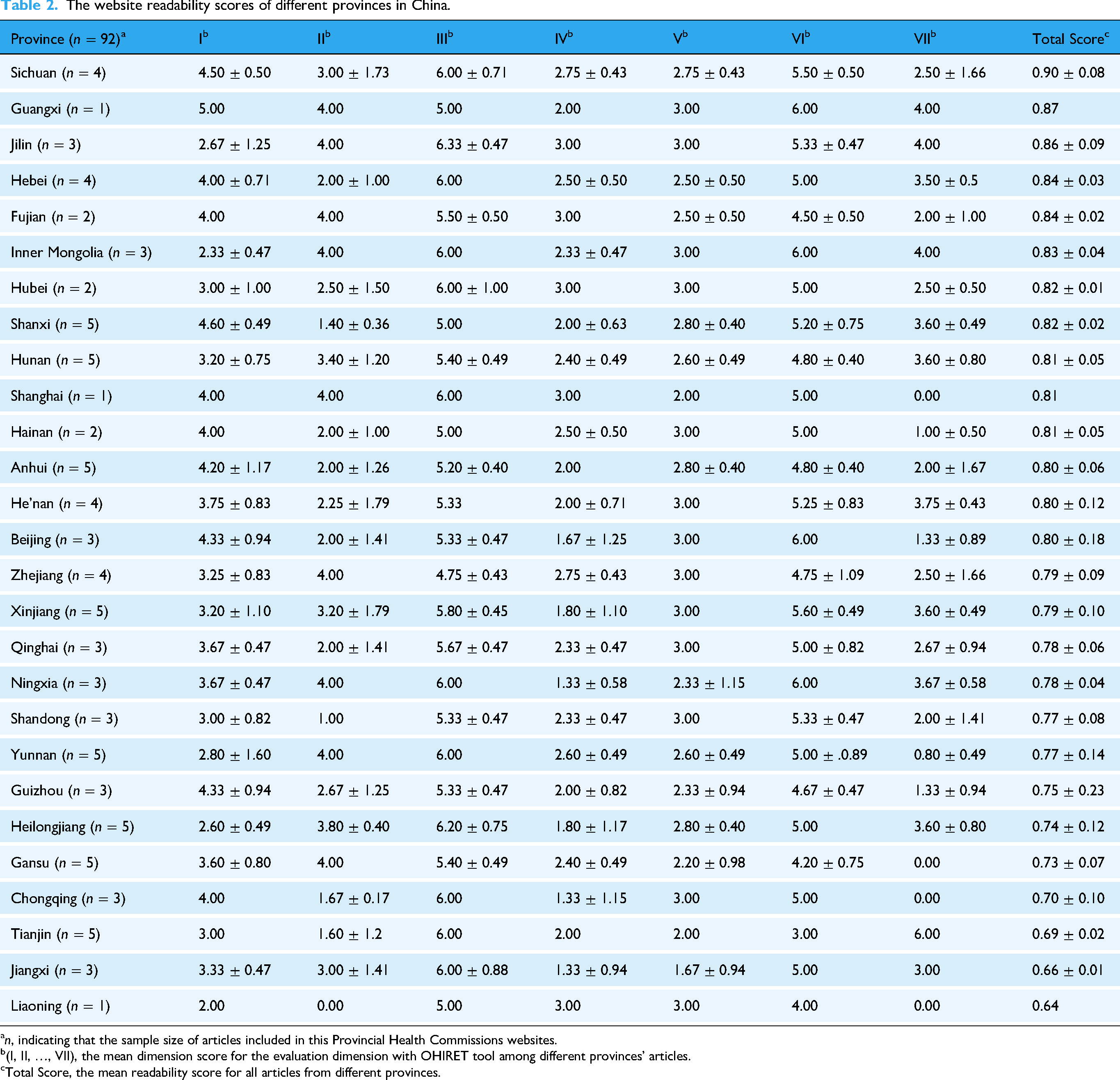

We grouped the 92 articles by source province and analyzed the mean readability score of each Provincial Health Commission website (Table 2). Among the 27 websites, Sichuan Province had the highest readability score (0.90 ± 0.08), and Liaoning Province had the lowest (0.64). Only three provincial-level regions (Liaoning, Jiangxi, and Tianjin) did not reach an acceptable level of readability, and there was no statistically significant difference in readability scores among regions (F = 4.404, p = 0.178).

Table 2. Website readability scores of different provinces in China.

n: number of articles included from each Provincial Health Commission website.

I, II, …, VII: mean score of each OHIRET evaluation dimension for the articles from each province.

Total Score: mean readability score of all articles from each province.

The readability scores of articles on different topics were also evaluated (Table 3). Of the 92 articles, 81.52% concerned prevention knowledge and 18.48% concerned vaccination knowledge. The readability score of COVID-19 prevention knowledge articles was higher than that of COVID-19 vaccination knowledge articles, but the difference was not statistically significant (F = 4.566, p = 0.992).

Table 3. Website readability scores by information topic.

n: number of articles included in each information topic group.

I, II, …, VII: mean score of each OHIRET evaluation dimension for the articles in each information topic group.

Total Score: mean readability score of all articles in each information topic group.

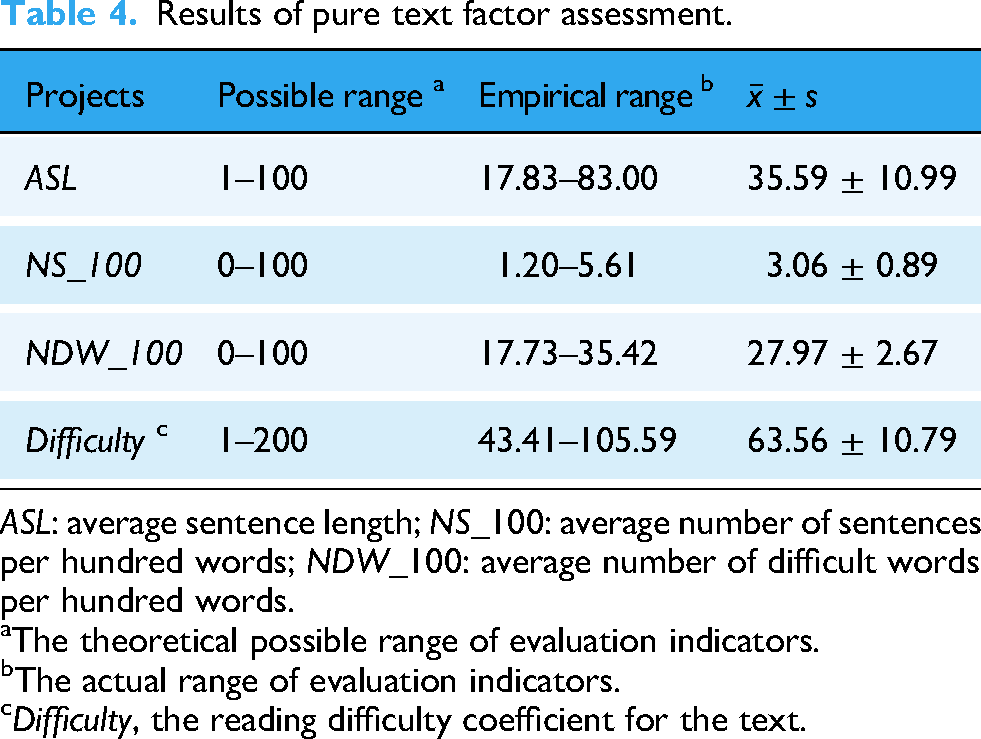

Pure text readability assessment results

The results of the pure text factor assessment are shown in Table 4. The average sentence length of the COVID-19 articles was 35.59 ± 10.99, the average number of sentences per hundred words was 3.06 ± 0.89, and the mean difficulty coefficient was 63.56 ± 10.79. Both the mean ASL and the mean NS_100 fall on the low-readability side of the thresholds described in the Methods (ASL > 16.7 and NS_100 < 6), and the mean difficulty coefficient exceeds 30, indicating that almost all articles were relatively difficult to read. The correlation coefficient between the web page readability score and the text difficulty coefficient was −0.535 (p < 0.001).

Table 4. Results of the pure text factor assessment.

ASL: average sentence length; NS_100: average number of sentences per hundred words; NDW_100: average number of difficult words per hundred words.

The theoretically possible range of each evaluation indicator.

The observed range of each evaluation indicator.

Difficulty: the reading difficulty coefficient of the text.

Discussion

In recent years, the authenticity and readability of COVID-19 information have become key to pandemic prevention and control. 35 During this public health event, health information emerged in rapid succession, and the public inevitably faced conflicting information with no way to verify its authenticity, leading to an “information epidemic.” 36 As public health authorities, the health commissions are an important source of credible information and reliable guidance for the public. To our knowledge, this is the first study to evaluate the readability of COVID-19 health information on Chinese Provincial Health Commission websites from the perspectives of both textual and nontextual factors.

Our results showed that the overall readability of COVID-19 health information met the acceptable standard, but almost all articles were relatively difficult to read according to the text difficulty coefficient and therefore did not meet the recommended reading level.37,38 Similar results have been reported elsewhere: the reading level of most COVID-19 health information released by WHO, the US CDC, and other health organizations is well above the recommended sixth-grade level for patient education materials.35,38 This suggests that health departments did not adequately consider the comprehension capacity of the general public when disseminating COVID-19 prevention and control knowledge, which is likely to diminish the effectiveness of health education.

In terms of textual factors, the text expression dimension scored 0.71 ± 0.21, only just meeting the acceptable standard. Most of the shortcomings in this dimension can be attributed to the 69.57% of articles that used long sentences with only a single punctuation mark at the end, which is consistent with the results of the text factor assessment. Sentences in online health information should be short and well punctuated to help the public understand them. Additionally, most articles presented difficult medical information, such as the definition, etiology, and pathogenesis of COVID-19, which is not conducive to public understanding; the large number of professional medical terms is also one reason the Chinese materials were rated as difficult in the text factor assessment. Furthermore, 32.61% of the articles did not use any numerical information, making their content abstract and unclear to readers. When comparing readability scores across provinces, we also found that none of the articles published in Liaoning Province contained numerical information.

Although it was commendable that all articles in our study were scientifically accurate, 83.70% did not review or summarize the key information, which may cause readers to forget important points. Reviewing key information from previous sections and using examples and pictures to summarize content can strengthen readers' memory of key information. 39 Meanwhile, 51.09% of the webpages did not contain any interactive content. Gemma et al. suggested that increasing interaction between readers and content can motivate readers to change their behavior and participate in health decision-making 40 and can improve their sense of participation and learning motivation. 41

In addition, the behavior operability dimension scored 0.74 ± 0.26, which did not reach the standard of excellence. The main problem was that some articles did not offer helpful guidance for readers. Studies have shown that clear and beneficial behavioral guidance can improve patients' self-efficacy and encourage them to take effective measures to improve their health. 42 When introducing behavioral information, health information providers should analyze the pros and cons of the behavior and describe specific steps, including how often the behavior should be performed and how long it takes.

In terms of nontextual factors, the organizational layout dimension scored 0.90 ± 0.20, indicating that most articles placed important information at the beginning, allowing readers to quickly grasp the main points. However, this dimension still had some problems, such as the lack of subheadings to guide readers through complex content. Complex content can be broken down into bullet points, different topics should be distinguished by clear titles, and titles should be objective and attractive to increase readers' interest. For example, the online educational materials for psoriasis patients from the American Academy of Dermatology were well organized, presenting information in a logical sequence with clear headings and no distractions, which can help readers identify and remember key information.43 Compared with printed materials, text presented in electronic format allows more flexible layouts that can be adapted to different users' needs,44 which also largely explains the high score in the organizational layout dimension. We found that the greatest advantage of the Health Commission websites was the absence of advertisements and pop-up windows, together with clear navigation, which can help readers find the information they need faster. However, 64.13% of the webpages lacked visual emphasis (such as bold text and highlighting), making it difficult for readers to locate important information quickly. The visual assistance dimension received the lowest score, owing to low image clarity, a lack of explanations for tables, and the absence of captions and titles for graphics. The most prominent problem with the COVID-19 health information released by four Provincial Health Commissions (Shanghai, Gansu, Chongqing, and Liaoning) was that none of their articles used any visual assistance. Studies have shown that prolonged reading of text-only information can impair readers' attention and executive function45,46 and may even cause reading fatigue.47

Limitations

The current study has some limitations. First, the findings pertain only to COVID-19 information available on the websites before August 2023. Because websites are dynamic, search results change as the pandemic evolves and as the Health Commissions update their COVID-19-related information. Second, the OHIRET is specifically designed to evaluate textual health information supplemented by other forms of presentation; therefore, video-based health information was not included in our study. Our team has developed a quality evaluation index system for short health science popularization videos, and we plan to assess the quality of COVID-19 popular science videos in future work. Third, metadata and machine readability, which are also important for evaluating digital health information, were not assessed in this study. These factors directly affect how easily users can access or retrieve information and thus substantially influence the accessibility of health information.

Conclusion

In this paper, we used the OHIRET to evaluate the readability of COVID-19 health science information on the official websites of 27 Provincial Health Commissions in China from the perspectives of textual and nontextual factors. Although the overall readability was acceptable, the visual assistance dimension had the lowest score and was a common deficiency across these official websites, and the text factor assessment indicated that almost all articles had a relatively high reading difficulty coefficient. Therefore, the readability of COVID-19 health science information released by China's Provincial Health Commissions needs further improvement in both textual and nontextual aspects. First, the Provincial Health Commissions could provide professional training for health information providers, with reference to the User's Guide of the OHIRET,48 to strengthen their capacity to improve readability, for example by using short sentences with punctuation, providing specific behavioral guidance with clear visual assistance, and using question-and-answer formats. Second, an easy-to-use online plain language tool for written Chinese health information should be developed to help users simplify health information and apply health literacy guidelines, similar to the Sydney Health Literacy Lab Health Literacy Editor, which has been reported for English health information.49 These targeted approaches could further enhance the effectiveness of health communication and help bridge the gap between expert knowledge and public understanding.

Footnotes

Acknowledgements

The authors gratefully acknowledge all the participants for their contributions to this paper.

Informed consent

All participants provided written informed consent prior to enrolment in the study.

Contributorship

YZ led the analysis and writing of this paper. YZ and JL led data collection and data analysis. LJ and FL participated in revising the manuscript. QC, CC, and SL provided subject matter expertise. LX led the conceptual development of the study, provided subject matter expertise, and was heavily involved in revising the manuscript. All authors reviewed and approved the final manuscript for publication.

Funding

This work was supported by the Key Projects of Natural Science Research in Colleges and Universities in Anhui Province (KJ2021A0255) and Anhui Medical University School of the 2024 Nursing Postgraduate Youth Program Cultivation Project (hlqm12024017) and Provincial Innovation and Entrepreneurship Training Program for College Students (S202410366075).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Availability of data and materials

The datasets supporting the findings of this article are available from the first author Yuxi Zhang.

Appendix

Because the content and layout of the Provincial Health Commission websites change frequently, the interfaces shown here may differ from those currently displayed. We therefore use the official website of the Shanghai Health Commission to illustrate the content and layout; the retrieval date was 5 September 2025.

The COVID-19 articles on the Shanghai Health Commission website were located under “Convenient services/Disease prevention and control/Infectious disease prevention and control.” From the official website's homepage (https://wsjkw.sh.gov.cn/) (Figure 2), users can reach the COVID-19 information articles by clicking, in sequence, the “Convenient services” section, then the “Disease prevention and control” section (Figure 3), and finally the “Infectious disease prevention and control” section (Figure 4).