Abstract

Objective

This scoping review reports on findings of recent studies which assess the methodologies, significant features, and machine learning (ML) models employed in wearable-based panic attack (PA) research.

Background

PAs affect a significant percentage of people worldwide and can induce rapid heartbeat, sweating, and trembling in affected individuals. They are often unpredictable and can seriously affect day-to-day activities and functioning. The integration of wearable technology with advanced ML methods has been successfully used in identifying and managing several health conditions. Despite significant advancements, the predictive capabilities of these tools to detect PAs remain less understood.

Method

A comprehensive search of databases including PubMed, PsycINFO, Embase, and Google Scholar identified seven studies focusing on PA prediction using wearable devices.

Results

These studies employed a range of ML models, such as supervised anomaly detection, deep learning (e.g. LSTM, RNN), random forests, and mixed regression models. The studies analyzed physiological metrics like heart rate (HR) variability, respiratory rate, and activity levels. Accuracy rates varied, with models achieving between 67.4% and 94.8% predictive accuracy.

Conclusion

Findings show the utility of combining psychological, physiological, and environmental data for improved predictions, and highlight the key data features, such as resting HR, heart rate variability, and certain sleep metrics, that may help predict the onset of PAs. However, most of these studies used prediction time frames that are impractical for timely intervention, with limited evidence of successful near-real-time prediction, highlighting the need for further research to predict the onset of PAs in real time.

Introduction

The World Health Organization estimates that 1 in 8 people worldwide suffer from a mental disorder, making mental health a major global concern. 1 Specifically, panic attacks (PAs), which are a form of “sudden, intense feelings of fear,” affect around 2–3% of the population in the US, for example, and up to 35% of the population are likely to experience one at some point in their lives. 2 These unexpected, strong episodes of anxiety or discomfort frequently manifest as heart palpitations, sweating, dizziness, or shortness of breath. 2 If left unchecked, they can cause social distancing, fear of certain places, and, in extreme situations, agoraphobia, which can seriously affect day-to-day activities and functioning.3,4 Usually, medication, cognitive-behavioral therapy, and lifestyle changes are used to treat PAs after they occur, but anticipating the occurrence of these PAs may help patients better plan their daily activities and avoid certain PA triggers when at risk. However, predicting these panic episodes has not yet been adequately validated with the current technology available.5,6

Wearable devices have advanced significantly in recent years, allowing the detection and real-time monitoring of various health conditions such as hypoglycemia and hypertension.7,8 Devices such as smartwatches and fitness trackers are capable of monitoring changes in physiologic metrics such as skin conductance, heart rate (HR), physical activity levels, and sleep patterns. 9 These devices offer several advantages over traditional laboratory-based assessments. Unlike stationary laboratory systems that restrict mobility, wearables enable unobtrusive collection of behavioral and physiological data in the participants’ natural environments, enhancing real-world validity. 10 This is particularly important for panic disorder (PD) research, as PAs often occur spontaneously outside of controlled laboratory settings, 11 typically at home, especially during sleep or rest, in public places, at work, or during physical activity. 12 Wearable devices also facilitate remote monitoring and longitudinal study designs, reducing participant burden while allowing continuous data collection across weeks or years.13,14 Additionally, they are more cost-effective than traditional laboratory systems, enabling scalability without the need for costly facilities or staff supervision. 15 Recent research has explored the potential of wearable technology to monitor mental health and alleviate psychological distress. In their evaluation of the use of wearable sensors for mental health monitoring, Sadeghi 16 demonstrated how these devices can effectively detect PTSD-related hyperarousal events in real-world settings. Similarly, wearables were shown to promote mental health by lowering psychological discomfort through encouraging increased physical activity, better self-care, and improved health perception. 9 These results point to the growing importance of wearable technology in treating mental health issues, and open new avenues to investigate how well wearables can inform their users of the onset of PAs.

Despite increasing research on the utility of wearable technology in the healthcare field and recent developments in machine learning (ML) and wearable technologies, to our knowledge, there are no published reviews on the use of these wearables to predict PAs. Therefore, we conducted a scoping review of the current research approaches to address this gap and propose a practical framework for PA prediction. The review specifically aimed to answer the following questions:

- What data features predict a PA?
- How effective are wearable devices in predicting PAs using physiological data?
- What are the common ML models employed for PA prediction?
- What challenges exist in using wearables to predict the onset of PAs?

Methods

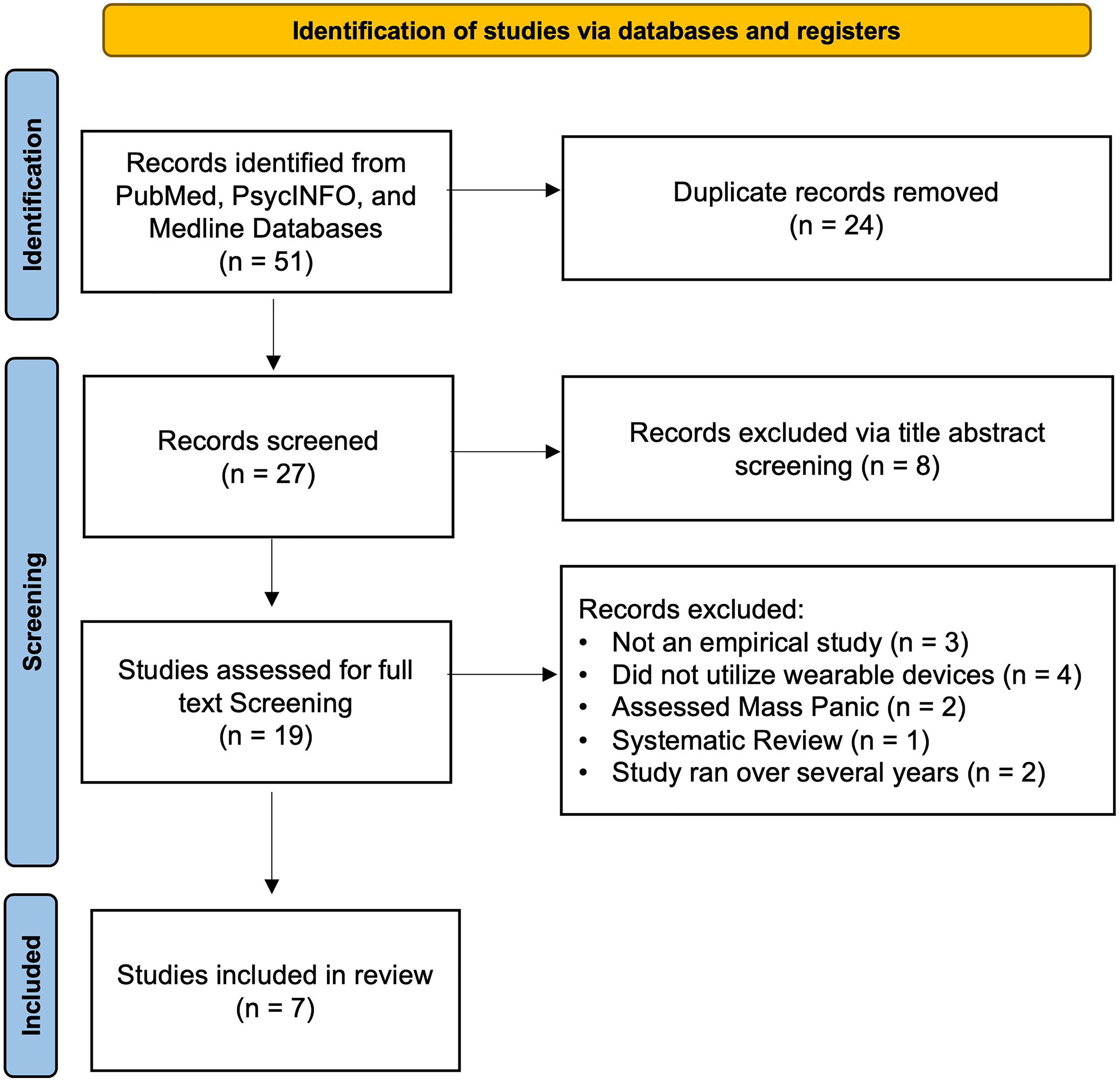

The research team followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines, as represented in Figure 1. 17

PRISMA diagram.

Literature search and inclusion criteria

This review, conducted between September and December 2024, assessed studies that focused on the prediction of PAs using wearable devices and ML. The methodology utilized three databases (PubMed, PsycINFO, and Medline), with no restriction on the publication date. Two researchers collaborated on the screening process, guided by predefined inclusion and exclusion criteria.

The inclusion criteria were as follows:

- Empirical studies published in English.
- Studies focusing specifically on PAs.
- Studies utilizing wearable devices (e.g. smartwatches, fitness trackers) for data collection.
- Research that used ML or deep learning models to predict PAs.

The exclusion criteria were as follows:

- Review articles.
- Abstracts, editorials, or other partial academic articles.

The following keywords were used in the search: (“Panic attack prediction” OR “panic attacks” OR “anxiety attack prediction”) AND (“wearable devices” OR “wearable technology” OR “smartwatches”) AND (“machine learning” OR “deep learning” OR “predictive modeling”). In the search process, Boolean operators and quotation marks were employed to capture variations in terminology and ensure the scope was sufficiently broad to include all relevant studies.

Screening procedures and extracting themes

The search yielded 51 studies across the four databases. After removing duplicates (n = 24), 27 articles remained for screening. Using Rayyan.ai, a web-based systematic review tool, two researchers (KA and JH) independently performed the title and abstract screening, guided by the inclusion and exclusion criteria mentioned earlier. Cohen's kappa was calculated after the initial screening to assess inter-rater reliability and was 0.59, indicating moderate agreement. Discrepancies between the reviewers were resolved by a third reviewer (KZ).
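As an illustration of how the inter-rater statistic reported above is obtained, the following is a minimal sketch of Cohen's kappa for two screeners. The function name `cohens_kappa` and the include/exclude decisions are hypothetical and are not the review's screening data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters gave the same label.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the two raters' marginal label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_chance == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_chance) / (1.0 - p_chance)


# Hypothetical include/exclude decisions for 10 abstracts (illustrative only).
reviewer_1 = ["in", "in", "out", "out", "in", "out", "out", "in", "out", "out"]
reviewer_2 = ["in", "out", "out", "out", "in", "out", "in", "in", "out", "out"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 3))
```

In this toy example the raters agree on 8 of 10 abstracts, but because half of that agreement is expected by chance, kappa is noticeably lower than the raw 80% agreement.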

Based on the title and abstract screening, 10 articles were excluded for not meeting the inclusion criteria. The remaining 19 articles moved on to the full-text screening phase, during which both researchers read and assessed the articles to ensure that they met the inclusion and exclusion criteria. After full-text screening, seven articles were included in the final review. These were assessed to extract the data features analyzed, the ML algorithms employed and their corresponding accuracy, and the devices used, and to thematically code any issues common across the reviewed studies.

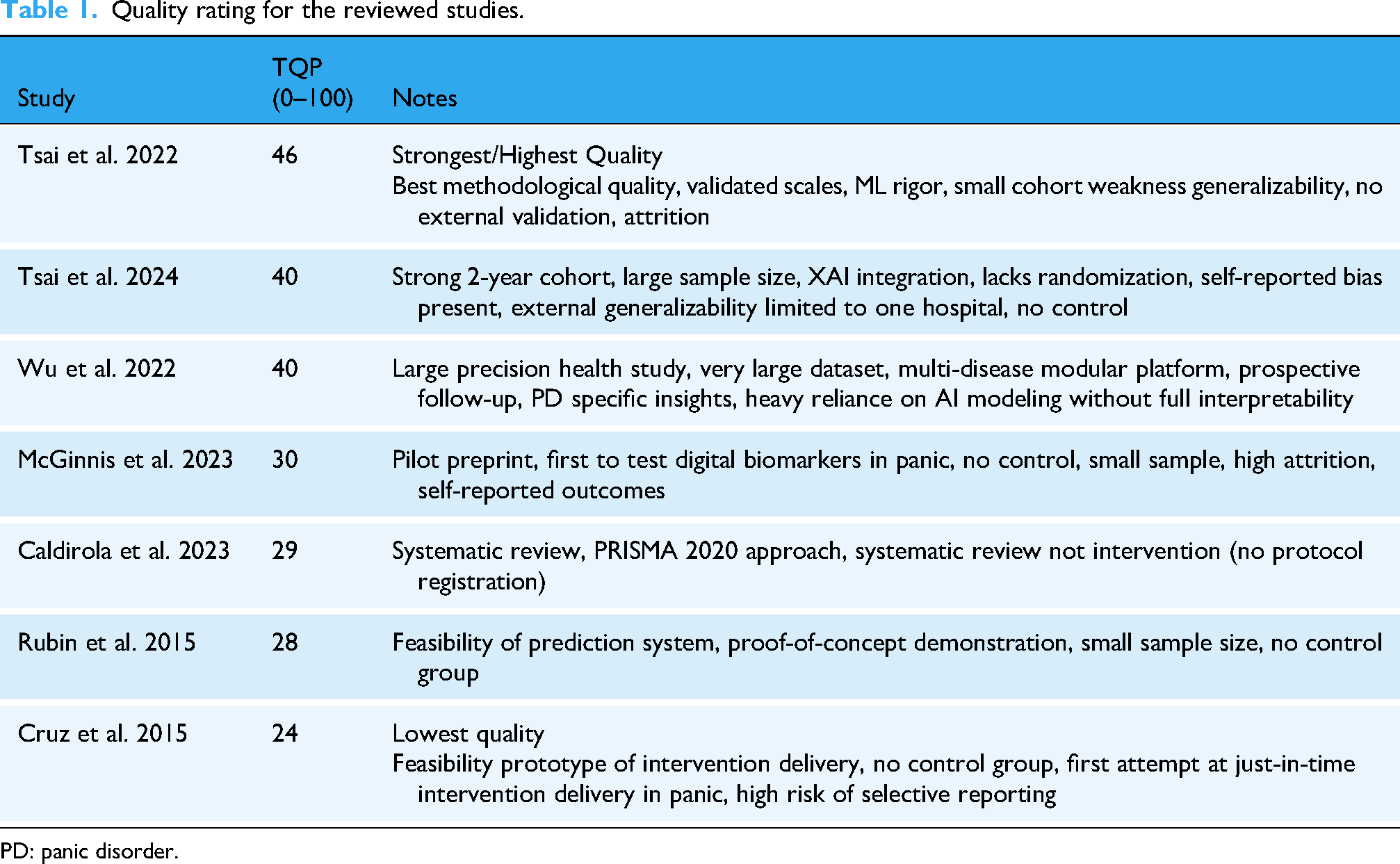

After finalizing which studies were included in the review, those studies were assessed for quality based on the Quality of Study Rating Form (QSRF), a universally standardized instrument commonly applied in evaluation research to synthesize and assess the studies’ quality. The QSRF enables systematic rating of key features such as treatment effect size, characteristics of interventions, and participant profiles. 18 It facilitates consistent evaluation of methodological rigor and the identification of important study parameters across multiple studies as seen in Table 1.

Quality rating for the reviewed studies.

PD: panic disorder.

Results

The results were split into three themes revolving around data features collected and devices, ML models employed, and participant issues and challenges.

Devices and data features

Across recent efforts to predict PAs using wearable technology, a range of devices and data features were utilized. Both Cruz et al. 19 and Rubin et al. 11 used the Zephyr BioPatch (Zephyr Technology – Annapolis, Maryland), a chest-worn device, to anticipate panic episodes in real time and offer prompt treatments, such as guided breathing exercises. The device collects key physiological features including HR, respiratory rate (RR), heart rate variability (HRV), core body temperature, and physical activity. These raw physiological signals were analyzed and processed into engineered features such as mean, standard deviation, and change points. Pre-panic periods were generally characterized by lower HRV and higher HR, breathing rate (BR), and temperature, making them the most informative features with 93.8% precision. However, the study was conducted on a small sample size (n = 7), and no follow-up was performed to validate these initial findings on real-time prediction.

Similarly, Caldirola et al. 10 utilized the Zephyr BioPatch in their pilot study to evaluate its accuracy in PA assessment by comparing its measurements of HR and BR against those obtained from a gold-standard system (Quark-b2). The BioPatch demonstrated promising but inconsistent accuracy, particularly for BR measurements. The study underscored challenges in using wearable devices for respiratory assessment and highlighted the need for methodological precision when deploying such tools outside lab environments.

McGinnis et al. 20 leveraged the Apple Watch to passively collect physiological and environmental data including resting heart rate (RHR), HRV, RR, ambient noise level, and physical activity (steps, distance, and stair flights, all combined into an “Activity” score). PAs were captured using an Ecological Momentary Assessment (EMA) style of reporting, whereby participants report the occurrence of PAs or log their symptoms in real time via a phone app or wearables. RHR and ambient noise showed statistically significant associations with increased likelihood of next-day PAs. The study found that both a 1 Beat Per Minute (BPM) increase and a 5 BPM decrease in RHR from an individual's average more than doubled the risk of a next-day PA, from 9% to 23% and from 9% to 19%, respectively. Additionally, elevated ambient noise levels were associated with an 80% increase in risk of a PA.

Next, Tsai et al.13,14 conducted a longitudinal study using the Garmin Vivosmart 4 smart watch (Garmin – Olathe, Kansas, USA), selected for its capacity to capture continuous HR metrics, HRV, physical activity, and sleep metrics including total sleep duration and general sleep patterns in naturalistic settings. These were combined with environmental factors such as air quality index (AQI) and psychological questionnaires, namely the Beck Anxiety Inventory (BAI), 21 the Beck Depression Inventory (BDI), 22 and the State-Trait Anxiety Inventory (STAI). 23 Prediction accuracy was 77.1% using questionnaires alone and only 67.4% using lifestyle and environmental data alone, rising to 81.3% when all factors were combined. Additionally, the study highlighted that adequate sleep and physical activity provide a protective effect against PAs.

The finding that combining data sources improves accuracy is consistent with Wu et al., 24 who used a modular system that integrated data across multiple commercial devices including Fitbit (Fitbit, now owned by Google – Mountain View, California, USA), Garmin, Apple, Oura Ring (Oura Health – Oulu, Finland), and Asus (ASUS – Taipei, Taiwan). From these wearables, they collected diverse physiological features including HR, SpO2, HRV, sleep stages, and physical activity (steps, floors climbed). Environmental data, including AQI, were obtained via open APIs. Additionally, psychological questionnaires such as BDI, BAI, STAI, PD severity scale 25 and MINI 26 were utilized to collect psychological features. The prediction model achieved its highest performance when all features were integrated, with accuracy rising from 83.1% using only psychological state and sleep metrics to 88.5% when all features were combined.

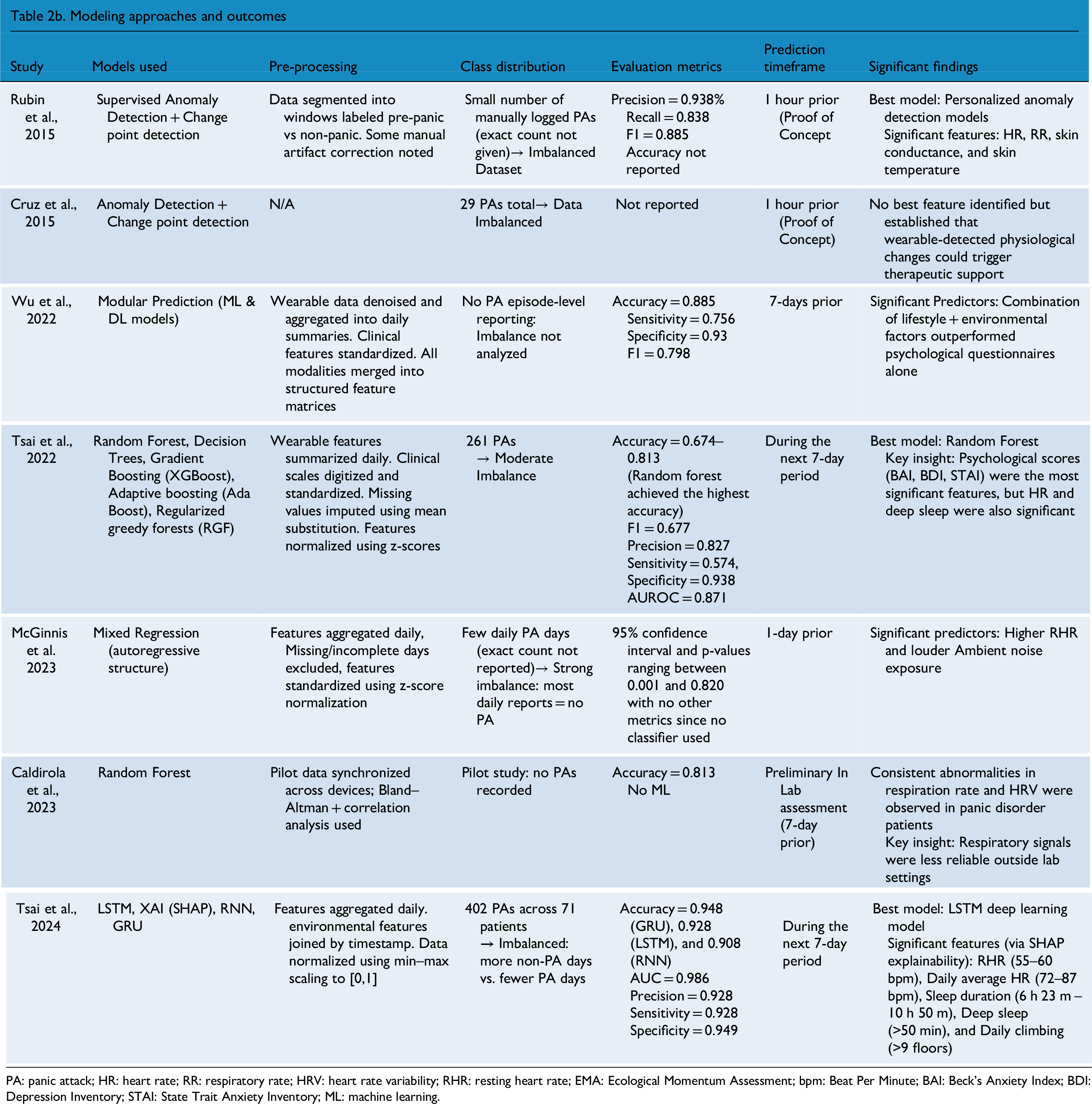

A summary of the key findings from each study can be found in Tables 2a and 2b, which cover Study Characteristics and Modeling Approaches & Outcomes, respectively. Additionally, a summary of the devices used and their benefits can be found in Table 3.

Key findings from each study included in the review.

PA: panic attack; HR: heart rate; RR: respiratory rate; HRV: heart rate variability; RHR: resting heart rate; EMA: Ecological Momentary Assessment; BPM: Beat Per Minute; BAI: Beck Anxiety Inventory; BDI: Beck Depression Inventory; STAI: State-Trait Anxiety Inventory; ML: machine learning.

Comparison of device characteristics across studies.

Most studies reported data at an aggregated level, whereas Caldirola et al. (2023), Rubin et al. (2015), and Cruz et al. (2015) employed continuous raw data, preserving the full signal.

HR: heart rate; RR: respiratory rate; HRV: heart rate variability; RHR: resting heart rate.

Machine learning models

Across six out of seven reviewed studies, both classical ML and deep learning (DL) approaches were employed to predict PAs using physiological, behavioral, and environmental data collected via wearable devices and psychological questionnaires.

First, Cruz et al. 19 and Rubin et al. 11 both built personalized models using wearable data, relying on supervised anomaly detection via Gaussian probability models tailored to each subject. These models used Gaussian probability distributions to create personalized profiles, fitting the density to non-panic windows and classifying outliers below a learned threshold as pre-panic, and were designed to trigger in-the-moment mobile interventions. Additionally, they adjusted leave-k-out evaluation to ensure every positive instance was tested and reported macro averages alongside micro averages to avoid large-class dominance at the evaluation stage. They achieved an accuracy of 0.938, a precision of 0.938, a recall of 0.838, and an F1 score of 0.885, demonstrated strength in handling data imbalance (17 pre-panic and 280 non-panic windows), and emphasized early-stage feasibility.
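The density-based approach described above can be sketched as follows. This is a simplified illustration, not the implementation used by Cruz et al. or Rubin et al.: it assumes independent (diagonal) Gaussians per feature, a hand-picked threshold rule, and hypothetical feature values.

```python
import math
from statistics import mean, pstdev


def fit_gaussian(windows):
    """Fit an independent (diagonal) Gaussian to each feature column."""
    columns = list(zip(*windows))
    mus = [mean(col) for col in columns]
    # Floor the standard deviation to avoid division by zero on constant features.
    sigmas = [max(pstdev(col), 1e-6) for col in columns]
    return mus, sigmas


def log_density(window, mus, sigmas):
    """Log probability density of one feature window under the fitted model."""
    return sum(
        -0.5 * math.log(2 * math.pi * s * s) - ((x - m) ** 2) / (2 * s * s)
        for x, m, s in zip(window, mus, sigmas)
    )


def flag_pre_panic(window, mus, sigmas, threshold):
    """Flag a window as pre-panic when its density falls below the threshold."""
    return log_density(window, mus, sigmas) < threshold


# Hypothetical per-subject windows: (mean HR in bpm, HRV in ms, breaths per minute).
non_panic = [(68, 55, 14), (72, 50, 15), (70, 58, 13), (66, 60, 14), (74, 48, 16)]
mus, sigmas = fit_gaussian(non_panic)

# Assumed threshold rule for this sketch: slightly below the lowest density
# observed on the subject's own non-panic windows.
threshold = min(log_density(w, mus, sigmas) for w in non_panic) - 1.0

calm_window = (70, 54, 14)
aroused_window = (96, 28, 22)  # higher HR and BR, lower HRV than baseline
print(flag_pre_panic(calm_window, mus, sigmas, threshold))
print(flag_pre_panic(aroused_window, mus, sigmas, threshold))
```

Because the model is fitted only to each subject's own non-panic data, it needs very few positive examples, which is one reason this family of methods suits heavily imbalanced pre-panic datasets.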

Next, McGinnis et al. 20 employed a per-feature mixed regression model with an autoregressive covariance structure, using physiological data (e.g. HRV, RHR, and RR) to predict the likelihood of next-day PAs. The model adjusted physiological parameters relative to each participant's unique baseline, effectively capturing day-to-day variations in PA risk. They faced moderate class imbalance, with between 16% and 24% panic days, and reported parameter estimates with 95% confidence intervals and p-values ranging between 0.001 and 0.820; AUC, F1, precision, and recall were not calculated since they did not use a classifier.
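The baseline adjustment mentioned above can be illustrated with a small sketch of person-mean centering, in which each daily value is expressed as a deviation from that participant's own average. This shows only the centering step under assumed data, not the mixed regression model itself; the function name and values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def center_on_personal_baseline(records):
    """Return (participant, day, deviation from that participant's own mean)."""
    by_participant = defaultdict(list)
    for participant, _day, value in records:
        by_participant[participant].append(value)
    baselines = {p: mean(values) for p, values in by_participant.items()}
    return [(p, day, value - baselines[p]) for p, day, value in records]


# Hypothetical daily resting heart rate (bpm) for two participants.
daily_rhr = [
    ("p01", 1, 60), ("p01", 2, 62), ("p01", 3, 64),
    ("p02", 1, 78), ("p02", 2, 80), ("p02", 3, 82),
]
centered = center_on_personal_baseline(daily_rhr)
deviations = [deviation for _p, _day, deviation in centered]
print(deviations)
```

After centering, the two participants show the same pattern of deviations even though their absolute RHR differs by 18 bpm, which is what allows a single model to relate within-person changes to next-day risk.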

Third, Wu et al. 24 employed modular prediction models that incorporated both ML and DL techniques including decision trees, random forests, and deep neural networks. Collectively, these models achieved an overall accuracy of 0.885, a sensitivity of 0.756, a specificity of 0.93, and an F1 score of 0.798 in predicting acute exacerbations. Additionally, they passed external validation. These models, applied to various chronic conditions including PD, were trained on multimodal data from wearable sensors, lifestyle factors, and environmental inputs, reinforcing the value of multimodal lifestyle-environmental integration. The model handled class imbalance (386 out of the 1667 detected as abnormal episodes) by upweighting the minority class using Keras class weights to increase sensitivity. Additionally, re-sampling was done to mitigate a “disparate ratio of abnormal events” without reporting exact per-class counts for the dataset.

Similarly, Tsai et al. 14 used six ML models: Random Forest, Decision Trees, linear discriminant analysis (LDA), AdaBoost, XGBoost, and Regularized Greedy Forests. They achieved the highest accuracy with Random Forest at 81.3%, with an F1 score of 0.677, a precision of 0.827, a sensitivity of 0.574, a specificity of 0.938, and an AUROC of 0.871, using features such as HR, sleep duration, and standardized anxiety scores from BAI, BDI, and STAI. They also reported a moderate class imbalance, with 35.1% PA vs 64.9% non-PA in the training set and 34.2% vs 65.8% in the testing set. Seven days of physiological data were backfilled to align with participants’ weekly label, and models were trained to predict whether a PA would happen in the upcoming week. Their later study, Tsai et al., 13 applied LSTM, RNN, and GRU models. LSTM achieved an accuracy of 92.8% for a 7-day prediction window with an AUC of 0.986, a precision of 0.928, a sensitivity of 0.928, and a specificity of 0.949. Six days of time-series data were used to predict the label on the 7th day. However, this 7-day label still reflected whether a PA had occurred at any point during the week. Hence, the models signaled risk within the next 7 days, without specifying whether the attack would happen the following day, 3 days later, or exactly on the 7th day. Similarly, they reported a moderate class imbalance for the PA label, with about 37% positive in both the training and testing sets. Evaluating F1, recall, precision, and AUC alongside accuracy reduces bias from class imbalance. They also integrated SHAP to elucidate the most influential features, identifying optimal sleep and activity thresholds to mitigate PA risk. A summary of the accuracy of some of these models mentioned in the studies reviewed can be found in Figure 2 below.

Panic attack prediction accuracy across various ML models.
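To make concrete why accuracy should be read alongside recall, precision, and F1 under class imbalance, the following minimal sketch computes these metrics from confusion-matrix counts. It uses the 17 pre-panic and 280 non-panic window counts reported for Cruz et al. and Rubin et al., but the "never predicts panic" model is a hypothetical baseline introduced here for illustration, and the function name is an assumption.

```python
def binary_metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall, specificity, and F1 from confusion-matrix counts."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }


# Hypothetical degenerate model that labels every window as non-panic,
# evaluated on 17 pre-panic and 280 non-panic windows.
always_negative = binary_metrics(tp=0, fp=0, fn=17, tn=280)
print(always_negative)

# Consistency check on reported figures: F1 is the harmonic mean of
# precision (0.938) and recall (0.838).
reported_f1 = 2 * 0.938 * 0.838 / (0.938 + 0.838)
print(round(reported_f1, 3))
```

The degenerate model reaches roughly 94% accuracy while detecting no pre-panic windows at all, which is why recall and F1 are the more informative figures in this setting.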

Participant issues

Gender imbalance and dropout rates were a common issue across most studies dealing with PAs. Caldirola et al. 10 reviewed seven different studies before conducting their experiment and found varying participant demographics. For instance, one study they mentioned recruited 26 healthy controls and 26 patients with PD, with women constituting 85% of the PD group and 77% of the healthy controls. Across all the studies they reviewed, women were the majority, constituting 59% to 85% of the samples. Tsai et al. 14 recruited 59 participants with a primary diagnosis of PD, 61% of whom were female, but excluded 974 data points due to missing environmental or physiological data. They later conducted a follow-up study in 2024, starting with 114 enrolled participants, of whom only 99 completed the study (58.6% were female).

Wu et al. 24 involved 1667 participants with various chronic diseases including PD. Over a 24-month follow-up, 386 abnormal episodes were explicitly identified. McGinnis et al. 20 started with 107 participants; however, only 87 completed the daily survey, and only 38 uploaded Apple Watch data, 79% of whom were female. Moreover, high dropout rates were noted: 35 participants were withdrawn after the first week, 25 after the second week, and 1 after the third week. Finally, Cruz et al. 19 and Rubin et al. 11 both included 10 individuals who suffer from PD. In the study by Rubin et al., 11 7 active participants remained after excluding those who did not log any physiological data (Initial cohort included: 5 females, 1 male and 1 trans-male).

Discussion

Given that no reviews have been published on the use of wearable devices to predict PAs, this manuscript synthesizes the key findings from studies attempting the prediction of PAs using wearables. Below, we comment on the data features, devices, and ML algorithms employed to serve this purpose, while addressing the challenges, implications, and ethical issues pertinent to these interventions.

Data features and devices

Conducting research with wearables requires careful attention to several important factors, given the variety of devices utilized in literature and on the market. In fact, the selection of a device is critical since it affects what data features are analyzed, the data's accuracy, as well as the user experience with the wearable. Although device choice and features varied, several insights emerged.

Among the reviewed devices, the Apple Watch and Garmin Vivosmart 4 offered strong usability and reliable tracking of behavioral and physiological trends such as RHR, sleep patterns, and ambient noise.32,33 However, both lacked ECG-grade precision, with the absence of measurements of HRV during the day, and low accuracy in inferring what sleep stage a person is in. In contrast, the Zephyr BioPatch delivered the most physiologically precise data particularly for HR, HRV, and RR due to its chest-worn ECG-grade sensors. 34 However, its bulky design, susceptibility to motion artifacts, and lower comfort and wearability may limit scalability and compliance with the study. 35 Overall, consumer-grade wrist-worn devices may provide greater comfort and user adherence, while clinical-grade chest sensors offer greater depth at the expense of long-term feasibility.

Accordingly, we propose that the Garmin Vivosmart 4 seems to offer an optimal balance for PA prediction, as it combines acceptable physiological tracking with strong usability in real-world settings. Our findings suggest its predictive performance improves when integrated with psychological and environmental data. 6 Additionally, it stands out as the most affordable device among those evaluated. 36 In terms of battery life, we note that the Garmin Vivosmart 4 supports up to 7 days of continuous use, making it particularly suitable for long-term monitoring. 36 The Zephyr BioPatch supports short-term high-fidelity assessments for up to 36 h of use but requires more frequent charging. 37 The Apple Watch SE provides the shortest battery life, up to 18 h, which may limit its utility for continuous tracking. 38 Researchers must weigh the different limitations and benefits of each device as they choose the optimal one for their study. Other devices can also be validated in terms of PA prediction, such as the Fitbit, which is affordable, supports a long battery life, and can be considered an everyday smartwatch.

Regardless of the device used, our results indicate that key predictive features included HRV, RHR, sleep quality, and scores from validated psychological scales. Even though HRV proved to be the most informative physiological feature, as it is sensitive to stress-induced shifts preceding PAs,22,23 HRV alone did not outperform psychological features as a standalone predictor. Most watches are unable to measure HRV during the day and only provide this feature during sleep. Alternatively, sleep quality seems to be an interesting feature to further investigate due to the correlation between sleep stages and next-day PA vulnerability. 39

Meanwhile, data from psychological questionnaires (BAI, BDI and STAI) emerged as the strongest standalone predictors of a PA 1 week in advance, as they captured subjective emotional states closely aligned with panic vulnerability. However, seeing that psychological questionnaires require frequent user engagement which can be perceived as intrusive, we propose that models integrate multimodal data streams (physiological, psychological, and environmental). This approach can mitigate participant burden by increasing passive monitoring and enhancing overall robustness and accuracy as compared to single-feature models. Additionally, by leveraging personalization and adaptive sampling, models can learn individual patterns over time, increasing accuracy and ultimately reducing participant burden. 40

These key predictive features can be clinically contextualized to guide interventions. Reduced HRV and elevated RHR signify autonomic dysregulation, a state linked to heightened vulnerability to PAs.10,13 Interventions such as HRV biofeedback and paced breathing have shown efficacy in improving autonomic balance and reducing anxiety symptoms in other studies. 41 Poor sleep quality, indicated by fragmentation or reduced duration, reflects impaired recovery and greater next-day panic risk. 13 Cognitive-behavioral therapy for insomnia (CBT-I) and structured sleep-hygiene strategies are effective treatments that also mitigate anxiety. 42 Moreover, psychological burden scores (BDI, BAI, STAI) support stepped-care interventions including CBT, psychoeducation, and pharmacotherapy. 14 Together, these insights may illustrate how wearable-derived metrics can be translated into actionable interventions for panic prediction and prevention.

Machine learning

The LSTM deep learning model delivered the highest performance for predicting PAs over a short-term 7-day window. It captured temporal patterns and handled sequential physiological data well. However, it required larger datasets and external tools like SHAP for interpretability. Random Forest was the second-best performer in short-term PA prediction and the top-performing traditional ML model, achieving high accuracy using physiological and psychological time-series data. It demonstrated high interpretability and robustness to overfitting, although some models showed limited sensitivity.

The modular prediction model bridged the gap between performance and practicality by combining the strengths of deep learning and ML in an explainable, multi-source architecture. It delivered high accuracy using only a few selected features, making it highly suitable for real-world deployment and personalized health monitoring. While it does not quite match the peak accuracy of LSTM, it stands out as the most well-rounded and scalable approach for PA prediction.

As such, we recommend using LSTM models when the primary objective is to maximize performance, particularly when rich sequential physiological data are available. However, for real-world deployment scenarios that require interpretability and integration of diverse data types (e.g. physiological, environmental), we suggest using modular prediction models, which offer an optimal balance between accuracy, usability, and explainability. Meanwhile, random forest serves as a strong traditional ML baseline offering computational simplicity, robustness, and interpretability with reasonable predictive performance.

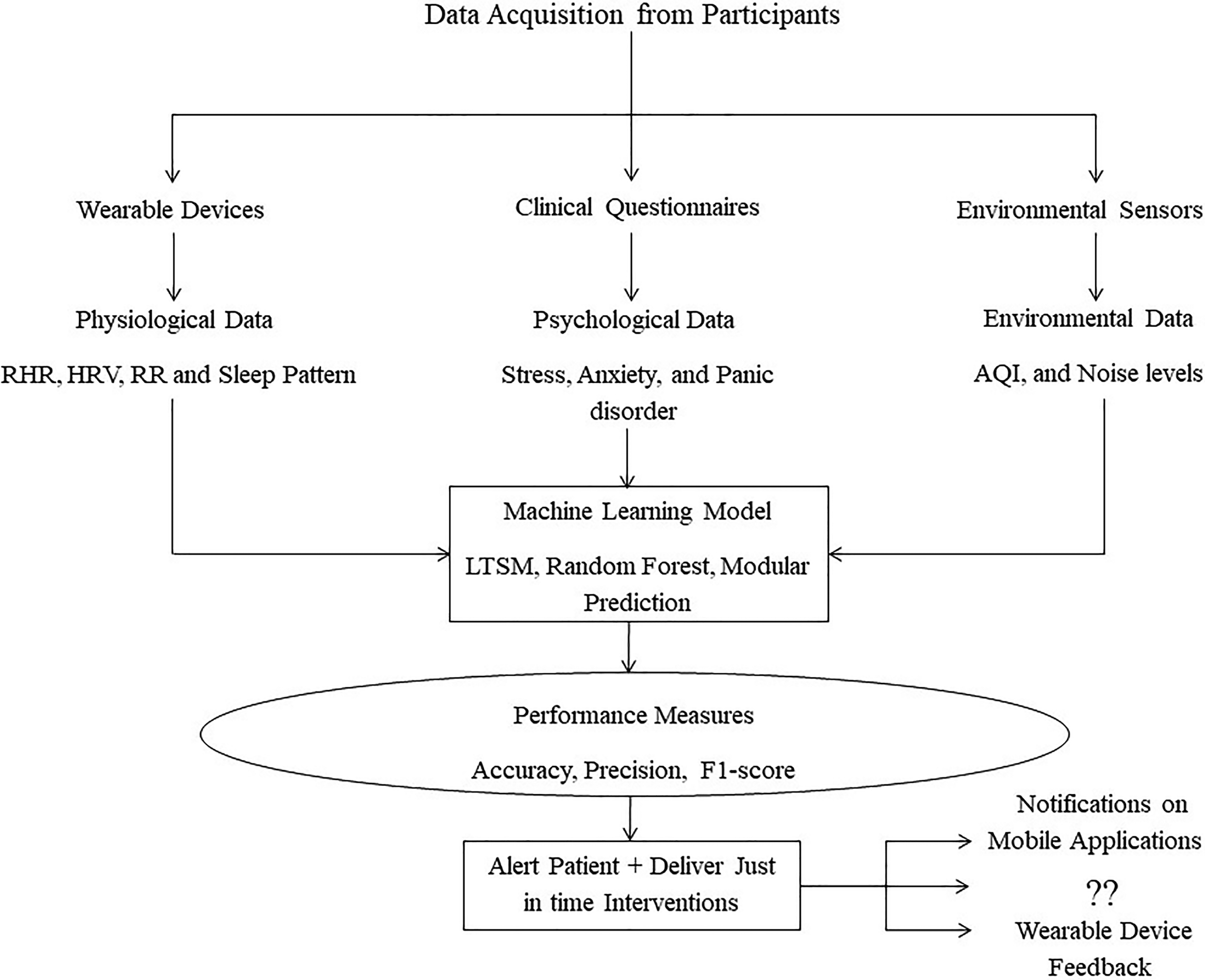

Based on these findings on data features, devices, and ML models, we propose a framework to aid in the design of a PA prediction study in Figure 3. The framework integrates multimodal data acquisition from participants through three main modalities: wearable devices, psychological questionnaires, and environmental sensors. Wearables provide continuous physiological data such as RHR, HRV, RR, and sleep patterns. Psychological questionnaires capture assessments related to stress, anxiety, and PD symptoms, while environmental sensors collect contextual data including AQI and noise levels. These data streams feed into an ML model tasked with predicting impending PAs, potentially an LSTM, Random Forest, or modular ensemble, as these demonstrated strong predictive performance in our findings. Model performance is evaluated using standard performance metrics such as precision, accuracy, and F1-scores. If thresholds are met, the system proceeds to alert the user and deliver just-in-time interventions, either via notifications on mobile applications or by delivering feedback directly through wearable devices. The framework also opens the door to broader possibilities, encouraging the exploration of other novel feedback mechanisms and inviting researchers and practitioners to envision how such interventions could evolve.

Proposed framework.

Predictive Timeframe: Weekly risk prediction in PAs, as seen in Tsai et al.13,14, offers clinical utility through closer monitoring and preventive strategies, aligning with real-world aggregated reporting and reducing daily fluctuation noise. However, this method sacrifices temporal precision: it fails to pinpoint the exact day of an attack within the week, which leads to ambiguous early warnings and potentially inflated performance metrics. This contrasts with other studies aiming to achieve more fine-grained predictions, such as 1 hour or 1 day in advance.11,20 The lack of temporal precision limits personalized interventions, as precise critical periods within the week cannot be identified. Future research should focus on developing models capable of daily or at least near-real-time predictions for more actionable, just-in-time interventions.
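The loss of temporal precision described above can be seen in a small sketch of how daily attack logs collapse into a weekly "any attack" label. The function name and the daily logs are hypothetical, and the labeling rule is a simplified reading of the weekly labels discussed in this review rather than the exact pipeline of any included study.

```python
def weekly_any_attack_labels(daily_attacks, week_length=7):
    """Collapse daily 0/1 attack flags into one 'any attack' label per full week."""
    full_weeks = len(daily_attacks) // week_length
    return [
        int(any(daily_attacks[w * week_length:(w + 1) * week_length]))
        for w in range(full_weeks)
    ]


# Hypothetical logs: an attack on the first day of week A and on the last day of week B.
week_a = [1, 0, 0, 0, 0, 0, 0]
week_b = [0, 0, 0, 0, 0, 0, 1]
week_c = [0, 0, 0, 0, 0, 0, 0]
labels = weekly_any_attack_labels(week_a + week_b + week_c)
print(labels)
```

Weeks A and B receive the same positive label even though the attacks are six days apart within their respective weeks, so a model trained on such labels cannot tell a user which day carries the risk.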

Common study issues

Attrition

Research on wearable technology and PAs should address recruitment and dropout challenges and ensure adequate sample sizes for robust findings. Dropout rates in many studies ranged from 20% to 50%, influenced by a variety of factors. These may include the sensitive nature of the study population, technology-related issues, the hassle of completing frequent surveys, and the longitudinal nature of the study.10,13

These concerns highlight the need for better study design that accounts for the sensitive nature of participants and the need for better retention and recruitment tactics. First, how participants are referred to the study plays a big role in how serious they may be in completing the study. 43 Researchers must utilize various forms of recruitment (e.g. posters, direct email, physician referrals, counseling, or community centers) to attempt to recruit as many serious participants as possible. Researchers must also be understanding of the PA population, their concerns over participating in such a study, and attempt to address any barriers the participants may have on remaining in the study for the required period of time. Additionally, emphasizing the benefits of participating as well as scheduling regular reminders on the required tasks, and including elements of gamification and goal setting have been found to be important motivators to keep participants engaged and dedicated.44,45 Finally, early prediction of dropout using passive behavioral data such as time spent on tasks or device usage combined with self-reported feedback may enable the timely identification of at-risk users. 46 This enables researchers to customize support, such as reminders, nudges, or light coaching to stop disengagement before it starts.

Gender imbalance

In most studies reviewed in this paper, the majority of participants were female, primarily because females are more likely to experience forms of anxiety such as PAs. 47 This indicates a need for higher representation of males in study samples to better understand how males experience and manage their PAs. However, it seems that males are also more hesitant to participate or stay engaged in mental health studies, and this may be influenced especially by cultural and demographic factors.48,49 Recruitment can attempt to target more male participants by tailoring the recruitment material to males and incorporating gamification elements that align with male interests, such as sports themes and goal-oriented progression, to further enhance engagement among men. Male participants enrolled in the study may also help in a snowball approach to recruit additional male acquaintances into the study.

Outcome measurement and labeling

Most studies relied on self-reported PAs via EMA reporting of PA onset or symptoms in either real time or at fixed times, which is prone to recall bias, inaccurate timing, and inconsistent compliance.11,20 Participants may miss or misreport episodes, especially during distress, and even with real-time widgets, 19 alignment accuracy is limited by compliance and delays. Subjective interpretation further introduces inconsistent labeling, adding noise that undermines predictive validity in longitudinal studies.13,14
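The effect of reporting delay on labels can be illustrated with a minimal sketch. It assumes sensor data are cut into fixed-length windows and that each self-report carries a timestamp in seconds; the function name `label_windows` and the parameter `max_delay_s` are hypothetical and are not taken from any of the reviewed studies.

```python
def label_windows(window_starts, window_len_s, report_times, max_delay_s=0):
    """Label each sensor window 1 if a self-report plausibly refers to it, else 0.

    A report at time t is attached to a window [start, start + window_len_s)
    when start <= t < start + window_len_s + max_delay_s. In other words, a
    report logged up to max_delay_s after the window ended still counts,
    which is an assumed way of tolerating late reporting.
    """
    labels = []
    for start in window_starts:
        latest_allowed = start + window_len_s + max_delay_s
        hit = any(start <= t < latest_allowed for t in report_times)
        labels.append(1 if hit else 0)
    return labels


# Three 5-minute windows and one report logged at t = 650 s.
starts = [0, 300, 600]
strict = label_windows(starts, 300, [650])                    # no delay tolerance
lenient = label_windows(starts, 300, [650], max_delay_s=120)  # 2-minute tolerance
```

With no tolerance only the window containing the report is labeled positive. With a 2-minute tolerance the preceding window is labeled positive as well, which shows how accommodating late reports trades missed episodes for less precise onset timing.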

Study design and cohort size

Most studies were short-term pilots with small cohorts,10,11,19,20 which limits power and reproducibility. Even larger efforts, such as those by Tsai et al.,13,14 remain modest for ML, yielding proof-of-concept value but possibly insufficient scale for robust, externally validated models.50,51 Recruitment challenges and the difficulties of conducting a longitudinal study partly explain these tradeoffs. Additionally, both studies by Tsai et al.13,14 lacked clarity in how the data was split. The first study used a temporal split without confirming participant separation, risking leakage, while the second study applied an 80:20 random split without maintaining chronological integrity. Rigorous time-aware methods may be essential to avoid inflated performance estimates.
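As an illustration of what a time-aware, leakage-conscious split could look like, the following is a minimal sketch and not the procedure used in any reviewed study. It assumes each record is a dictionary with hypothetical `participant_id` and `day` fields; both function names are likewise illustrative.

```python
from collections import defaultdict


def chronological_split_per_participant(records, test_fraction=0.2):
    """Within each participant, train on the earliest days and test on the latest.

    This keeps chronological order, so no test record precedes a training
    record from the same participant. A participant with a single record
    contributes it to the test set only.
    """
    by_participant = defaultdict(list)
    for rec in records:
        by_participant[rec["participant_id"]].append(rec)

    train, test = [], []
    for recs in by_participant.values():
        ordered = sorted(recs, key=lambda r: r["day"])
        n_test = max(1, round(len(ordered) * test_fraction))
        cut = len(ordered) - n_test
        train.extend(ordered[:cut])
        test.extend(ordered[cut:])
    return train, test


def held_out_participant_split(records, test_participants):
    """Keep every record of the listed participants out of the training set."""
    test_ids = set(test_participants)
    train = [r for r in records if r["participant_id"] not in test_ids]
    test = [r for r in records if r["participant_id"] in test_ids]
    return train, test
```

The first function estimates how well a model forecasts future days for people it has already seen, while the second estimates how well it transfers to unseen people. Reporting which of the two was used would remove the ambiguity described above.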

Methodological and generalizability limitations

Several of the reviewed studies face methodological, ecological, and generalizability limitations. Many lacked transparency in pre-processing and artifact handling, hindering replication.14,19,24 Ecological validity was also limited by controlled settings or healthy volunteers rather than clinical populations. 10 Generalizability is further constrained by single-country, outpatient cohorts, often in Taiwan or specific U.S. samples.13,14,20,24 These issues underscore the need for transparent reporting, ecologically valid real-world data, and multi-site studies to improve external validity.

Ethical issues

The chosen articles addressed various ethical issues related to data privacy, digital literacy barriers, and predictive reliability that are discussed below.

Data privacy and confidentiality

Caldirola et al. 10 and Wu et al. 24 highlighted the importance of privacy when it comes to handling sensitive patient data. Wearable devices and mobile systems continuously collect and transmit environmental data, physiological data, and physical activity data, as well as patient location. For this reason, Tsai et al. 14 emphasized the necessity of implementing strict data protection guidelines that guarantee confidentiality of patient information during collection and analysis to prevent it from being accessed by unauthorized parties. Privacy concerns must be handled seriously since they may affect recruitment and retention, especially in cultures where mental health stigma is particularly high. 52

Socioeconomic and digital literacy barriers

Aside from privacy, Wu et al. 24 stated that despite the promising potential of such technologies, not everyone would benefit from them equally. Wearable technologies such as smart watches may be expensive, thus preventing low-income population segments from accessing these devices. Additionally, digital literacy may hinder certain individuals, such as elderly people or underserved communities, from using such devices effectively, further widening the gap of healthcare accessibility. Communities interested in improving the management of PAs may subsidize and integrate wearable devices with their local services offered to individuals who may not be able to afford them, push insurance agencies to help cover costs of these wearables, and train affected individuals on using these devices effectively to manage their condition.34,35

Challenges in data uncertainty and predictive reliability

One of the articles reviewed posed another ethical concern revolving around the validity and accuracy of information gathered from wearables in real-world settings. 10 Compared to more controlled and stationary systems, the accuracy of commercial wearables’ physiological data, such as stages of sleep, HR, and BR, may sometimes be low, leading to incorrect interpretations and decisions. 53 Therefore, care must be taken when such algorithms are applied, as errors may affect the quality of care provided and hamper people's trust in such technology.

Merits and advancements of reviewed studies

Across these studies, the main merit lies in advancing the conceptualization of PAs from unpredictable and spontaneous mental health events to conditions with identifiable physiological, behavioral, and environmental precursors. Feasibility studies by Rubin et al. 11 and Cruz et al. 9 were the first to demonstrate that wearable devices may predict PA episodes up to an hour in advance and also deliver in-the-moment interventions, reframing panic management from reactive treatment to proactive support. Later, McGinnis et al. 20 conducted a fully remote, real-world feasibility study showing that consumer wearables can yield digital biomarkers, such as RHR and ambient noise, that prospectively signal next-day panic risk.

Meanwhile, Tsai et al. 14 added the critical contribution of multimodal modeling, showing that combining environmental, physiological, and psychological data enriches predictive capacity and reframes PAs as outcomes of complex biopsychosocial interactions. Wu et al. 24 pushed this research further by embedding panic prediction within a precision health service, contributing a systems-level view that integrates panic management into chronic disease care. Tsai et al. 13 then extended this work by demonstrating that panic recurrence is not only predictable with high accuracy but may also be impacted by daily behaviors like sleep and physical activity, situating PAs within a lifestyle framework. Collectively, these contributions have shifted the field toward viewing PAs as predictable, preventable, and digitally actionable, strengthening the foundation for precision psychiatry and early intervention.

Future work

While the potential of wearables to track physiological markers such as HRV, HR, sleep data, or BR has been shown in numerous studies, models that can reliably predict PAs before they happen are still in the early phases of development. First, the prediction timeframe of these PAs is still not well validated as mentioned earlier, and more research is warranted on defining what constitutes a practical prediction time frame within a naturalistic setting.

Second, phenotyping patients based on symptoms and demographics may be a necessary approach to incorporate when attempting to predict PA onset, yet this has not been incorporated in the studies we reviewed. Ohst & Tuschen-Caffier 54 noted that while individuals with PD often show heightened interoception and exaggerated physiological responses, this sensitivity varies widely among individuals and does not consistently predict the onset of PAs. Additionally, Jang et al. 55 emphasized that tailoring predictions to individual baselines and accounting for inter-individual variability in panic symptom patterns is essential for achieving optimal performance. Therefore, personalizing the prediction model by merging advanced ML models with a patient's profile and symptoms may ensure a more realistic prediction model.
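One simple way to tailor a signal to an individual baseline is to express each new reading as a z-score relative to that person's own earlier readings. The sketch below is illustrative only and is not the method of Jang et al.; the function name `baseline_z_scores`, the `baseline_n` parameter, and the example heart rate values are assumptions made for demonstration.

```python
from statistics import mean, stdev


def baseline_z_scores(values, baseline_n):
    """Express later readings as deviations from a personal baseline.

    The first baseline_n readings define the person's own mean and sample
    standard deviation; each remaining reading is converted to a z-score.
    """
    baseline = values[:baseline_n]
    mu = mean(baseline)
    sd = stdev(baseline)  # requires at least two baseline readings
    if sd == 0:
        raise ValueError("baseline has no variability; z-score is undefined")
    return [(v - mu) / sd for v in values[baseline_n:]]


# Hypothetical resting HR readings (bpm); the final value is the new reading.
person_a = [60, 62, 64, 70]
person_b = [80, 82, 84, 70]
z_a = baseline_z_scores(person_a, baseline_n=3)
z_b = baseline_z_scores(person_b, baseline_n=3)
```

In this example the same reading of 70 bpm is well above the first person's baseline and well below the second person's, which is the kind of inter-individual difference that a single population-level threshold would miss.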

Third, once prediction has achieved adequate accuracy and a practical time frame, a need will arise to provide patient-friendly interventions. This would require an understanding of how the wearable technology might be used in conjunction with proactive intervention measures, such as automated breathing exercises or relaxation tasks, to help patients better manage the onset of panic episodes. 56 Work is in progress to interview physicians and PA patients to understand how to design such an intervention in conjunction with PA prediction via a commercial smartwatch.

Limitations

Our review had a few limitations, mainly because of our focus on the prediction of PAs using wearable devices. First, this focus led to a limited number of articles (n = 7) being included in the full review and possibly to the exclusion of articles that do not use a wearable device or are not solely focused on PAs. This reflects the fact that wearable-based research on PAs is still in its infancy, with very few studies achieving adequate sample size and monitoring patient condition longitudinally. The limited number of studies across a variety of devices also limits our ability to conduct a systematic review of the existing literature to provide more generalizable findings. Second, we only utilized three common research databases, namely PubMed, PsycINFO, and Medline. Third, we utilized specific keywords and search terms in the search and may have missed other terminology used in the literature. Lastly, the review only included articles published in English and did not critically assess the quality of the publications. Given the limited research in this field, conducting a review at this stage was critical, as it enables the synthesis of emerging findings and the identification of consistent features that may serve as preliminary indicators to guide hypothesis generation and help lay the groundwork for future large-scale research.

Conclusion

This paper highlighted the various devices and data features used to predict PAs with different levels of accuracy and prediction time frames. Our study also provided a comprehensive framework for conducting a study to predict the onset of a PA by highlighting the ML models, wearable devices, and data features recommended. Given the limited research in this area, future work should investigate phenotyping participants to improve prediction efforts, validate a near-real-time prediction algorithm, and implement strategies to reduce participant attrition and ensure balanced gender representation. Additionally, studies should further investigate the correlation between sleep stages and next-day PA vulnerability, identify the ideal intervention to provide upon the detection of such a PA, and identify an approach that balances device wearability with the ability to record HRV during the day, which is deemed critical for near-real-time PA detection.

Footnotes

Acknowledgments

The team would like to thank Samir Abdul Aal for his help in final editing and proofreading of this manuscript.

Ethical considerations

Not required.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Author contributions

KA and JH contributed to data curation, formal analysis, and writing—original draft; YH contributed to formal analysis, investigation, and writing—original draft; KZ contributed to methodology, project administration, conceptualization, supervision, and writing; HG contributed to supervision and writing—review & editing.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Vertically Integrated Project Program at the Maroun Semaan Faculty of Engineering & Architecture at the American University of Beirut.

Declaration of conflicting interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

N/A.

Guarantor

KZ.