Abstract

Objective:

This research aims to address the challenges of just-in-time adaptive interventions (JITAIs) in behaviour change by introducing an architecture that integrates both the tailoring of the message to the user profile and context, and the timing of the intervention by detecting the trigger of the behaviour.

Methods:

We designed a system that integrates trigger detection to determine optimal intervention moments and uses prompt engineering on a large language model (LLM) to give personalised support based on the detected trigger, the context, and personal information of the person. As a proof of concept, we applied this intervention to the domain of smoking cessation. We conducted an in-depth semi-structured interview with a domain expert to evaluate the correctness, relevancy and personalisation of the chatbot’s responses.

Results:

An expert indicated that the support given by the chatbot is correct, personal, and tailored to the trigger and circumstances. While some suggestions were provided to further enhance the chatbot, its current capabilities were deemed effective and acceptable as a supportive tool for smoking cessation.

Conclusions:

An LLM with prompt engineering can be used to create a chatbot that can react to a trigger in a personalised way. Integrating both trigger detection and a generative chatbot into a JITAI is possible while ensuring privacy of the individual’s personal information and circumstances.

Keywords

Introduction

The advent of large language models (LLMs) in generative artificial intelligence has opened up new possibilities for integrating LLMs into chatbot interfaces. In the field of behavioural change interventions, recent research has shown that the incorporation of LLMs can positively influence engagement and provide better social support. 1 Bak and Chin 2 found that LLM-based chatbots effectively motivate users who are preparing for change and support those already in the process of implementing it. However, their ability to persuade users to implement the change was still insufficient. In addition, LLM-based chatbots supporting physical behaviour change have also been developed and show promising potential.3,4

Digital health behavioural change interventions (DBCIs) often lack sustained engagement and, thus, long-term behavioural change effects. This is due to a lack of personalisation at two levels: time and type of interaction. 5 Just-in-time adaptive interventions (JITAIs) incorporate these two conditions. They deliver the right support at the right time according to the current circumstances of the individual. 6 However, this approach still comes with two big challenges.

The first challenge is that notifications at inconvenient and irrelevant times trigger irritation and loss of attention. 7 Moreover, users are often overwhelmed with notifications. 8 As receptivity depends on context, letting users define timings upfront is difficult and an unnecessary burden. There is thus a need to detect naturally occurring conditions and circumstances in which a particular user is motivated to adapt their lifestyle and form new habits, as these are expected to be the optimal time points to engage the user. Moreover, the data needed to detect these moments should be gathered unobtrusively to lessen user burden.

The second challenge is that it has been demonstrated that DBCIs have higher effectiveness when data insights, social support, challenges and motivational messages are tailored to the profile of the user. 9 Evidence-based methodologies recommend tailoring engagement and feedback by considering (a) physiological data, for example, heart rate, (b) context data, for example, time of day, current activity, (c) profile data and personality traits, for example, obtained through questionnaires, (d) type of motivation that is driving the user, and (e) the stage of behavioural change the person is in.

Since individuals may respond in various ways, abruptly change the subject, or give unexpected answers, handling certain conversation flows can be challenging. To move away from the confines of scripted conversation flows, research is being done on using LLMs in the healthcare domain. 10 In a few medical use cases, these LLMs are used as-is, without any prompt engineering, in-context learning or fine tuning.11,12 With the use of prompt engineering, more context can be supplied such as conversation examples or profile data. On a conversational basis, more information such as the current circumstances and recent physiological data can be incorporated, resulting in a more personalised approach.

The just-in-time aspect of JITAIs increases the efficacy of the intervention, especially in problem domains that have triggers as antecedent to the behaviour. 13 In behaviour change interventions, a trigger is defined as the specific circumstances that precede the onset of the behaviour targeted for change. In stress interventions, the number of hours of sleep can act as a trigger for stress, such as when insufficient sleep leads to heightened stress levels. 14 Similarly, a combination of recorded food intake and self-imposed dietary goals can serve as a trigger for promoting healthy eating, for example, when exceeding a calorie limit prompts behaviour adjustments. 15 Likewise, location can influence mood shifts, such as experiencing a change in mood upon arriving at work. 16

In summary, various clear issues exist in the current implementations of JITAIs. To address these challenges, we present JITAIs that use contextual information unobtrusively derived from both smartphone and wearable data to determine optimal intervention moments. In this way, we can facilitate the generation of context-sensitive prompts, personalising the support to the user’s needs and circumstances. The proposed intervention will focus on smoking cessation, as smokers face a diverse range of triggers that vary from person to person. Without considering the individual context, only generic support can be provided. Therefore, this scenario is well-suited for the introduction of trigger detection in JITAIs.

The remainder of this article is organised as follows. The ‘Related work’ section gives an overview of context and timing of chatbots in JITAIs for behaviour change interventions. The ‘Methods’ section explains the architecture of the JITAI and the design of the chatbot. The ‘Implementation details of the smoking cessation use case’ section contains the implementation of the architecture and the chatbot in the domain of smoking cessation. To evaluate the chatbot, the ‘Evaluation set-up’ section gives the characteristics of the expert interview. The ‘Results’ section discusses the findings of the expert evaluation. Next, observations and suggestions are given in the ‘Discussion’ section. The limitations of the proposed approach are discussed in the ‘Limitations’ section. Finally, the findings are summarised in the ‘Conclusion’ section.

Related work

The integration of chatbots in DBCIs promotes engagement with the intervention due to the aspect of social accountability, as was put forth in the model of supportive accountability. 17 A review on artificial intelligence-based chatbots shows that chatbots are already used in different behavioural change studies, successfully introducing improvement in the desired behavioural change. 18

However, the integration of context in these chatbots is sub-par. The context is often collected through questionnaires,19–23 in person assessments,22,24 wearable activity trackers,22,24 existing health records, 25 behaviour event logging,21,22,24 user feedback of messages,24,25 context in the conversation (intents),23,26,27 and sensors from phones and wearables. 27 The integration of this context into the chatbot was sometimes not explained,19,20,27 happened through placeholders in scripted dialogue options (intents)21–25 or through states that kept track of both dialogue and data connected to the state, 26 or was used to refine a message recommender system.24,25 Thus, none of these chatbots can integrate context without dialogue design.

The timing of when a chatbot stages an intervention in these studies depends on general19,27 or individual20,21,25 scheduled fixed notifications, on a recommender system based on the user rating of messages, 24 or on a recognised pattern in the behaviour by analysing user-given behaviour events. 26 Some of them do not even stage an intervention and the user is required to initiate the conversation themselves.22,23 These interventions do not happen on time, are inflexible, or require consistent user input, resulting in them being insufficient for an adequate JITAI.

A study by Yang et al. 28 investigated JITAIs to identify smoking circumstances and high stress levels to determine the appropriate timing for sending supportive messages to smokers in challenging situations. The study found that while their strategies to improve the perceived helpfulness of their support were feasible, the intervention’s timing could be improved due to inaccuracies in detecting the optimal message delivery time. There were two types of notifications: the first one sent out notifications at random in four-hour blocks, and the second one was dependent on their smoking and stress algorithm. Due to faults in these algorithms and sensor inaccuracies, hardly any smoke or stress moments were detected, and thus almost no just-in-time notifications were sent. Moreover, the messages sent to smokers lacked personalisation and were randomly selected, failing to address the smoker’s specific circumstances at the time. Another study tried to address this issue and sent different messages according to the urge to smoke, cigarette availability and stress levels of the smoker, which resulted in a greater reduction in smoking urge compared to general messages. 29 However, the participants had to fill in five ecological momentary assessments per day to calculate their current risk and to decide which message to send. Whether a message was sent in the first place also depended on this calculation.

Several chatbots have been developed for the healthcare sector with the aim of optimising the timing of interventions. They do this by identifying the trigger that precedes the behaviour and alerting the person when the trigger is detected. One such example is Foodbot, which concentrates on promoting healthy eating habits by issuing notifications to individuals when they deviate from their self-set goals. 15 The Foodbot chatbot recommends food based on the participant’s previous food intake. The trigger in this case is the user not achieving their self-set goal. Another chatbot, EMMA, detects mood based on smartphone sensor data and reacts appropriately.16,30 Although both the Foodbot and EMMA chatbots react at the right time to the trigger, both are scripted, resulting in structured, predictable, and controlled conversations.

In the domain of smoking cessation, a few attempts have been made to create chatbots for DBCIs since technology-based interventions can bring accessible support to smokers in low socioeconomic circumstances, often a disproportionately high portion of the overall smoking population. 31 However, the review by Whittaker et al. 32 highlights that these studies had methodological issues, generally resulting in low quality, and are limited to using rule-based, mainly scripted chatbots with a few components of natural language processing such as natural language understanding and sentiment analysis.33–35

By contrast, the hybrid long-term engaging controlled conversational agents (HyLECA) framework proposes a combination of both a dialogue manager and LLMs to achieve a hybrid architecture. 36 The LLMs are used for natural language generation. Although this chatbot achieves promising preliminary results, this framework and chatbot still ask for conversation flow design to manage the dialogue, requiring many iterations on conversation design with challenges such as participant recruitment and collecting specific structured feedback. 37

To narrow down the timing of the intervention, trigger detection models and algorithms are needed. In the literature, certain triggers can be detected by observing passively collected data. Some examples of these triggers are stress,14,38,39 mood,16,40 emotions,41–43 location,44,45 fatigue 14 or alcohol.46,47 Some need wearable data such as accelerometer,14,38 heart rate,38,43,47 skin temperature,14,38,47 galvanic skin conductance14,38 or photoplethysmograph, 38 while others rely on phone data such as app usage,39–42 on/off screen,42,46 timing and content of keypresses, 46 incoming and outgoing calls and messages,39,40,42,46 number of correspondents, 46 email messages, 40 web browsing history, 40 battery, 46 global positioning system (GPS) coordinates,16,40–42,44–46 activities,14,16,39,46 sleep,14,39 sound, 42 light, 42 Wi-Fi, 42 gyroscope, 42 and accelerometer.14,42,46 Other information such as time of the day or day of the week is also used.41,46

In some studies, motivational interviewing (MI) is applied to behavioural change interventions for smoking cessation.33,34,48 MI tries to enhance the intrinsic motivation of the user to change their behaviour. Although MI was originally developed for interventions targeting alcohol addiction, 49 extensive research has also explored its potential influence in the field of smoking cessation. 50 Brown et al. 48 report a positive impact on readiness to quit smoking after conversing with their MI chatbot for one week. Since chatbots for JITAIs interact with smokers in difficult situations, on the edge of relapsing, incorporating MI in the chatbot can further motivate the smoker to avoid smoking.

Methods

The intervention is designed to help prevent a certain behaviour or consequence from happening by detecting relevant triggers and responding appropriately. These triggers can vary widely and may include unmet goals, 15 declines in mood, 16 headache triggers, 51 or stress. 13 Corresponding interventions include promoting healthy eating, supporting emotional well-being, monitoring headaches, and managing stress. Since an appropriate response depends on the specific trigger and the personal circumstances of the individual, interventions need to be adaptive and context-aware to maximise their effectiveness.

As can be seen in Figure 1, the intervention can be divided into two parts: an intervention with a physical and digital coach. The physical coach handles the

Figure 1. Design of the intervention.

The digital coach starts with the

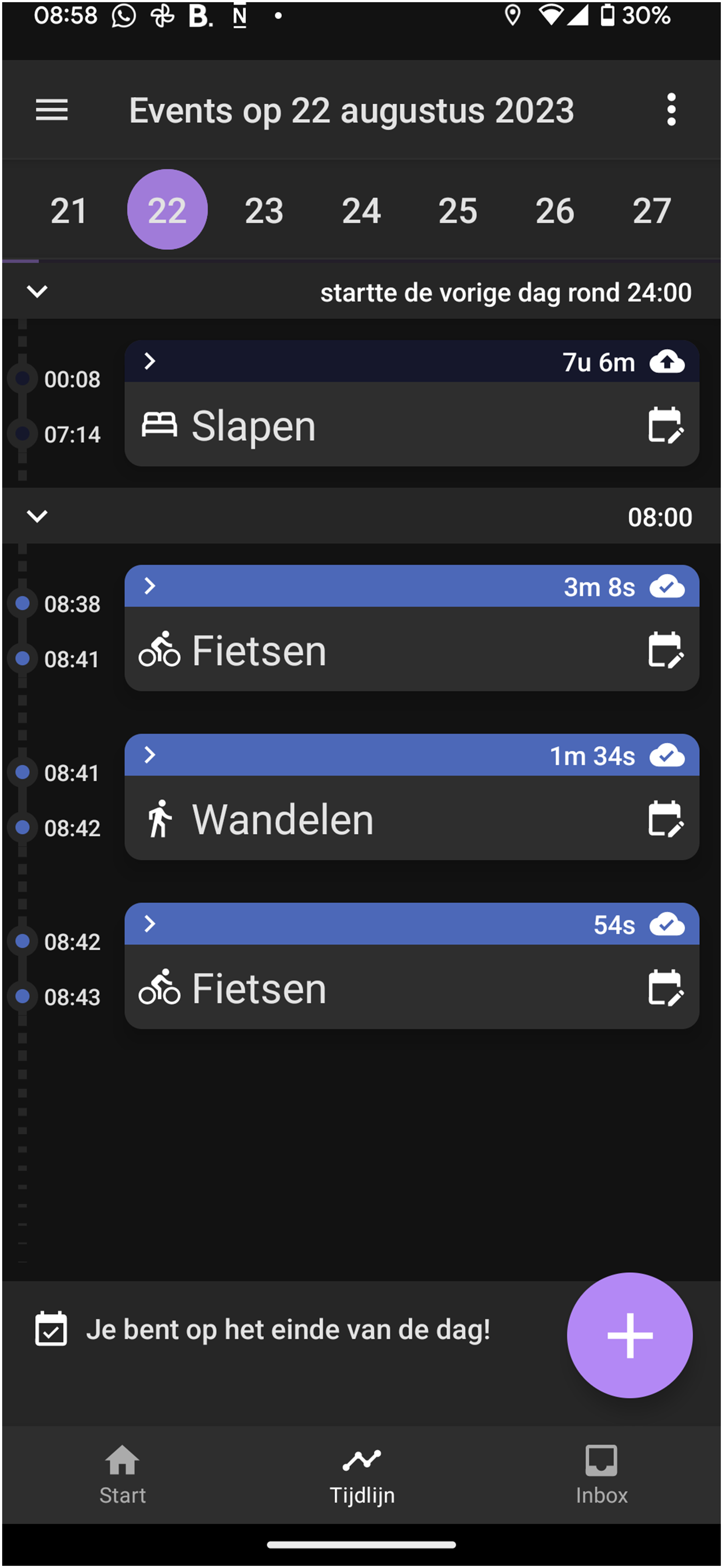

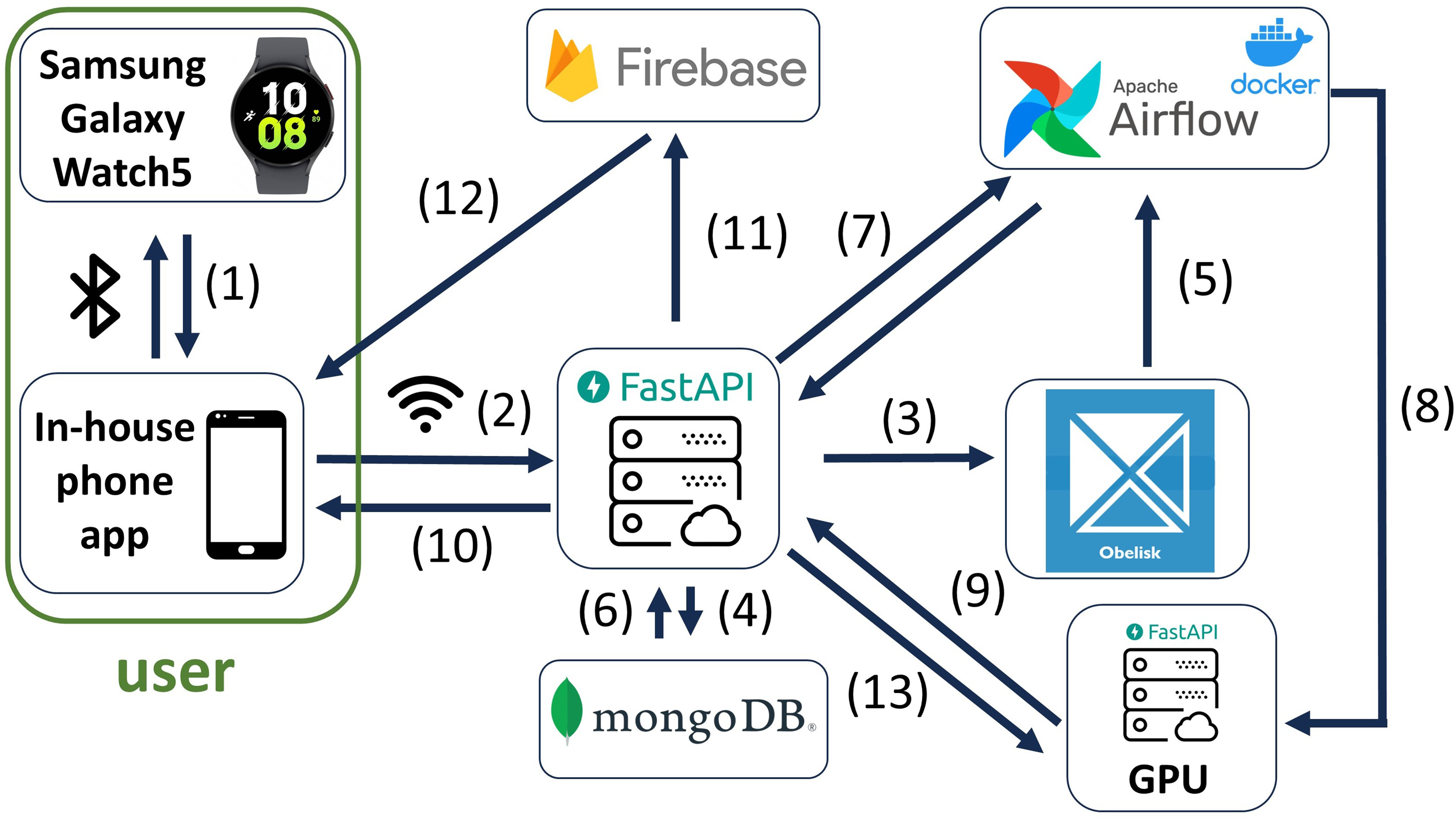

The architectural design of the digital coach and the digital tool can be found in Figure 2. As an example of a digital tool, we showcase our in-house app, which is used to gather contextual data for our studies. 52 A screenshot of the app is shown in Figure 3.

Figure 2. Architectural design.

Figure 3. Screenshot of the timeline in our in-house app.

Trigger detection

Each trigger requires a different set of data streams to be detected. These different data streams are combined and collected from an app on the phone and wearable. These data are stored, processed and directed towards the right trigger detection model.

Behavioural change application

Not all aspects of our in-house app need to be implemented for this architecture. First, the app needs to implement questionnaires that gather profile information. Second, the app requires a chat window that serves as the interface with the chatbot. Third, the app should enable and integrate push notifications so that the chatbot can intervene when it detects a trigger and provide support. Lastly, the app should collect the data that is needed to detect the trigger.

Our app collects the following context data: location, screen status, notifications, application usage, keyboard, activity recognition, light, accelerometer, gyroscope, magnetometer, rotation, linear accelerometer, proximity, gravity, steps, sleep begin and end timestamp. Depending on which trigger we aim to detect, we enable or disable specific data streams.
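As a minimal sketch of this per-trigger selection, the set of streams to enable can be derived from the triggers that are active for a user. The trigger names and the pairing of triggers with streams below are illustrative assumptions, not the mapping used in our app.

```python
# Hypothetical mapping from a trigger to the data streams its detector needs.
# Stream names follow the list above; the pairing itself is an assumption.
REQUIRED_STREAMS = {
    "location": {"location"},
    "fatigue": {"sleep", "steps", "activity recognition"},
    "stress": {"accelerometer", "screen status", "application usage"},
}


def streams_to_enable(active_triggers):
    """Return the union of the streams needed by the active triggers."""
    enabled = set()
    for trigger in active_triggers:
        enabled |= REQUIRED_STREAMS[trigger]
    return enabled
```

Any stream that is not in the returned set can stay disabled, which limits both battery use and the amount of personal data collected.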

Wearable integration

When collecting wearable data that is necessary for trigger detection, using smartwatches as the wearable can offer many optional features. One such feature is enabling push notifications to appear on the wearable, increasing the chance that the user notices the intervention. However, the most important consideration is which data streams we can collect from the wearable. Therefore, an essential aspect of this integration is choosing the most suitable wearable for the use case and transmitting the data from the device to the user’s phone.

Our in-house app already collects the following context data: light, accelerometer, gyroscope, magnetometer, rotation, linear accelerometer, gravity, heart beat, steps, photoplethysmogram (ppg) green, heart beat interval, distance, floors, calories, location, and skin temperature.

Data storage

As can be seen in Figure 2, the wearable sensor data is sent from the wearable via Bluetooth to the phone (1). All the context data is stored on the phone as long as the user has no internet access. When the user has internet access, the data is sent to the back-end (2). Time series raw data is sent to a time series database (3). The questionnaire answers are sent to a NoSQL database and are stored as documents (4).
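The store-and-forward behaviour and the split between the two databases can be sketched as follows. This is a simplified illustration with in-memory lists standing in for the databases; the class names and the `kind` field are hypothetical and not part of our implementation.

```python
from collections import deque


class Backend:
    """Routes incoming records to the matching store (steps 3 and 4)."""

    def __init__(self):
        self.time_series = []  # stand-in for the time series database
        self.documents = []    # stand-in for the NoSQL document store

    def ingest(self, record):
        if record["kind"] == "sensor":
            self.time_series.append(record)
        elif record["kind"] == "questionnaire":
            self.documents.append(record)
        else:
            raise ValueError(f"unknown record kind: {record['kind']}")


class PhoneBuffer:
    """Keeps records on the phone until an internet connection is available."""

    def __init__(self):
        self._pending = deque()

    def add(self, record):
        self._pending.append(record)

    def flush(self, online, backend):
        """Send all pending records if online; return how many were sent."""
        if not online:
            return 0
        sent = 0
        while self._pending:
            backend.ingest(self._pending.popleft())
            sent += 1
        return sent
```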

Trigger detection with machine learning (ML)

The ML models in this architecture run on a docker-supporting platform in docker containers. As can be seen in Figure 2, the ML models retrieve the raw data directly from the time series database (5) and the events and questionnaire answers from the NoSQL database (6 and 7). Here, some models are not trigger detection models, but models that provide more context, for example, activity recognition, 53 sleep detection, 14 and stress detection. 14 This context can then be given to the trigger detection models and generative chatbot. For this research, the trigger detection models are the most relevant ML models that run on the docker-supporting platform. For every trigger, there is an ML model that detects this trigger. Depending on which triggers the user indicated during the on-boarding, those ML models will be running in separate docker containers. When one of these ML models detects a trigger, the detected trigger will be sent to the generative chatbot (8).
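The selection of detectors per user and the forwarding of a detected trigger (step 8) can be illustrated with a short sketch. Here each detector is reduced to a plain function over a context dictionary, and `notify` is a hypothetical callback that stands in for the call to the generative chatbot; in the architecture itself each detector is an ML model in its own docker container.

```python
def run_detectors(user_triggers, detectors, context, notify):
    """Run only the detectors the user selected and forward any detections.

    user_triggers: trigger names the user indicated during on-boarding
    detectors: dict mapping a trigger name to a function(context) -> bool
    notify: callback that receives the detected trigger and its context
    """
    fired = []
    for trigger in user_triggers:
        detector = detectors.get(trigger)
        if detector is None:
            continue  # no model is available for this trigger
        if detector(context):
            notify({"trigger": trigger, "context": context})
            fired.append(trigger)
    return fired
```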

Running multiple models at the same time requires careful scheduling. Because the platform can schedule and run docker containers, the recurring executions of the models can be spaced in time to distribute the load more evenly. Still, a situation can arise in which many users connect to the internet at the same time. The models then have to process much more data at once, which can overload the system. To solve this, the docker-supporting platform can be run on a Kubernetes cluster so that it can dynamically assign more resources when the demands of the algorithms temporarily increase.
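One simple way to space recurring executions is to give every model a fixed start offset within the scheduling period. The function below is a plain-Python sketch of that idea under the assumption that all models share the same period; it does not use the API of any particular scheduler, and the model names in the usage note are illustrative.

```python
def staggered_offsets(model_names, period_seconds):
    """Spread model start times evenly across one scheduling period.

    Returns a dict mapping each model name to its start offset in seconds.
    Names are sorted so that the assignment is stable between runs.
    """
    if not model_names:
        return {}
    step = period_seconds / len(model_names)
    return {name: round(i * step) for i, name in enumerate(sorted(model_names))}
```

With three models and a 15-minute period, `staggered_offsets(["stress", "location", "fatigue"], 900)` assigns the offsets 0, 300 and 600 seconds, so no two models start at the same moment.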

Generative chatbot

Generative chatbots are conversational agents that are open-domain, data-driven and generate responses from scratch. 54 To create a generative chatbot to converse with, LLMs are used. This can be done in several ways, either by fine-tuning the LLM to a chatbot use case, or by using prompt engineering. 55 In the case of fine-tuning, more conversation data between a coach and a user is needed to further train the model until the chatbot behaves in the desired manner, resembling a coach. The amount of data needed differs from use case to use case. When it comes to prompt engineering, no data samples or only a few are needed. The chatbot is instructed on what to do in a well-defined prompt. A combination of fine-tuning and prompt engineering can lead to even better results. To ensure privacy, open source and locally running LLMs are preferred. In the case of an open source LLM, the source of the training data can be examined. Moreover, such models can be run on dedicated private servers, ensuring that the sensitive, personal data of the participants stays in a controlled, enclosed environment. It is also essential to select a specific LLM that best aligns with the requirements of the intended use case.

LLM framework

For prompt engineering, LangChain 56 provides a framework for creating agents that have memory and have a standard interface for all kinds of LLM providers (OpenAI, Hugging Face, etc.). An agent is a reasoning engine for choosing certain actions and the exact order these actions should be taken. This chain of actions is the reasoning process that the agent goes through when it receives a message and constructs a response.

The LangChain framework 56 can be used to construct the agents and to give the chatbot memory. The system prompt, instruction, chat history, user profile, and input are given to the LangChain agent so that it can react appropriately. LangChain has multiple ways to store the chat history of a conversation. One such way is to store a defined number of the latest messages of the conversation and discard the rest. Another way is to summarise the conversation with an LLM and keep that summary in memory. One can also combine these two options, keeping a few of the latest messages in memory while summarising all earlier ones. Since LLMs have a token limit (i.e. a maximum amount of text they can process at once), managing memory efficiently is crucial. Combining the two options optimises the use of the available tokens: the chatbot can respond naturally to ongoing conversations because the most recent context is available, whereas older information is retained in a condensed form to preserve past details without using too many tokens.
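The combined strategy can be sketched in plain Python, independent of any framework. The sketch below does not use LangChain's memory classes; `naive_summarise` is a hypothetical placeholder for the LLM call that would produce the summary, and the `User:`/`Coach:` prefixes are illustrative.

```python
def build_memory(messages, keep_last, summarise):
    """Keep the latest messages verbatim and condense everything older.

    Returns (summary, recent), where summary is an empty string when
    there is nothing older than the retained window.
    """
    if keep_last <= 0:
        raise ValueError("keep_last must be positive")
    older, recent = messages[:-keep_last], messages[-keep_last:]
    summary = summarise(older) if older else ""
    return summary, recent


def naive_summarise(messages):
    """Placeholder for an LLM summary: keeps the first four words of each message."""
    return " | ".join(" ".join(m.split()[:4]) for m in messages)
```

The summary and the recent messages would then be placed in the prompt, so that the number of tokens spent on history stays bounded regardless of how long the conversation becomes.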

LangChain also provides tools that help developers create custom parsers that process the LLM’s answer. The parser can handle structured responses and multi-step tasks, so the right information can be obtained to perform an action and directly query the LLM for the next step. An example of the use of a parser is when an LLM mistakenly generates both perspectives of a conversation, producing messages for both the coach and user roles. Such responses need to be stripped of all user-related content. To avoid confusion when such mistakes happen, the parser filters out all text after the first encountered user tag, ensuring only the chatbot’s answer is retained.
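The filtering step itself is small. The following sketch shows the truncation logic as a standalone function rather than as a LangChain parser class; the tag strings `User:` and `Coach:` are assumptions, since the actual role tags depend on the LLM and the prompt.

```python
def keep_coach_reply(raw_output, user_tag="User:", coach_tag="Coach:"):
    """Return only the coach's text that precedes the first user tag."""
    # Drop everything from the first user tag onwards.
    text = raw_output.split(user_tag, 1)[0].strip()
    # Remove a leading coach tag if the model echoed it.
    if text.startswith(coach_tag):
        text = text[len(coach_tag):]
    return text.strip()
```

For example, the output `"Coach: Take a short walk.\nUser: Okay, thanks!\nCoach: Great."` is reduced to `"Take a short walk."`.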

Conversational agents

Figure 4 shows the generative chatbot module. The module contains two agents: the trigger and chat agent. The trigger agent receives the detected trigger for the user along with their user profile and context information. The chat agent is only initiated when the user responds to the trigger agent. It receives the same information as the trigger agent, but also receives the conversation history since the chat agent manages the chat session.

Figure 4. Generative chatbot module.

When a trigger is detected by the trigger detection model running in a docker container in Figure 2, the necessary data for the trigger agent is sent from the docker container to the server with a GPU (8). With prompt engineering, the agent is instructed on what it should and should not say, that it should first greet the user and that it should give a tip related to the trigger that is given to the agent. Moreover, it receives user profile knowledge to incorporate demographic information of the user. It receives context, such as location, date and time to frame the current circumstances.

The chatbot sends its answer to the user, as can be seen in Figure 2 (9 and 10). Additionally, to achieve the just-in-time aspect of the intervention, the chatbot sends a push notification to alert the user of the trigger and the chatbot’s message (11 and 12). Next, the user’s answer is sent to the chat agent (2 and 13). This agent’s instructions are geared towards holding a conversation rather than giving a single appropriate tip. The chat agent receives the conversation history between the user and the chatbot, and reacts accordingly. Depending on the use case, the decision can be made to send another notification to alert the user of the new message from the chatbot.

Trigger agent

The full prompt that is given to the trigger agent is provided in Listing 1. The 26 general guiding principles for querying and prompting an LLM were followed for this prompt to make full use of an LLM’s capabilities. 57 To apply this prompt to any intervention, the parts between brackets (and indicated in red) should be filled in. The behavioural change goal is the goal we want to achieve by applying the intervention, for example, avoiding stress, promoting exercise or eating healthily. The behaviour is the behaviour we want to change, for example, partaking in stressful situations, not moving much all day, or eating unhealthily. The behavioural change profile information is the user profile information that is needed to personalise the intervention to the user, for example, personal stress factors, preferred exercise methods, or personal unhealthy eating habits.

Every LLM has special tokens to indicate to the trained model where the text, system prompt, instructions and role start and end. In Listing 1, the placement of these special tokens is shown between < and > (and indicated in magenta). Not all LLMs have the full set of special tokens, but in these cases, the tokens can just be dropped in the given prompt.
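Filling in such a template can be sketched as a single substitution pass over the prompt. The placeholder syntax (`[name]` for intervention-specific parts, `<name>` for special tokens) mirrors the description above, but the token names and token strings in the usage note are purely illustrative assumptions and do not reproduce Listing 1.

```python
import re

# One pass over the template, so inserted values are never re-scanned.
_PATTERN = re.compile(r"\[([^\[\]]+)\]|<([a-z_]+)>")


def fill_prompt(template, values, special_tokens):
    """Fill [placeholders] from values and <tokens> from special_tokens.

    Special tokens that the chosen LLM does not define are dropped.
    A missing placeholder value raises KeyError.
    """
    def replace(match):
        placeholder, token = match.group(1), match.group(2)
        if placeholder is not None:
            return values[placeholder]
        return special_tokens.get(token, "")

    return _PATTERN.sub(replace, template)
```

With the template `"<sys_start>You help users with [behavioural change goal].<sys_end>"`, the value `"avoiding stress"` and an empty token dictionary, the result is `"You help users with avoiding stress."`. Passing a dictionary of model-specific token strings instead keeps the same template usable across LLMs.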

In Listing 1, the agent is first told in lines 2 and 3 how the chatbot should behave and that it should cause no harm. Then, it is told in line 4 how it should behave in certain situations, especially what to do if the user wants to partake in the behaviour. It is also forbidden from recommending medicine in line 5. To prevent the chatbot from going on long monologues, the chatbot is asked to give short answers. The chatbot is instructed on line 6 to give the user only one single tip regarding the trigger the user is experiencing, rather than giving a list of tips. This choice aligns with research indicating that users with a level of education below higher education tend to prefer concise messages over lengthy ones. 58

Based on interviews with behavioural change experts in the domain of smoking, we learned that coaches often offer different methods to distract the individual from the urge to partake in the behaviour. 59 Therefore, in lines 9 and 10 a list of distraction methods is given for the trigger agent to choose from. This results in more variation in the tips that the trigger agent gives the individual. Moreover, we also learned from the interviews that coaches first inquire about the person’s personal distraction methods to reiterate these later with them if they have difficulties. In our architecture, these personal distraction methods are asked in the questionnaires. Lines 12 and 13 show the location where this data is added after the chatbot requests it from the NoSQL database.

However, more personalisation can be added by including a user profile. On lines 18 and 19, the profile of the user is stated as simple sentences. Moreover, the profile information that is relevant to the behavioural change intervention is also integrated here. Again, the necessary data to fill these in is requested from the NoSQL database. This information was gathered from the questionnaires.

The conversation log for every user is stored in the NoSQL database. Thus, optionally, one can add the summary of the chat history and the recent chat messages to the system prompt on lines 22 and 23. This would also cover the situation where the chatbot and the user are talking when a trigger is detected and the chatbot has to interrupt the conversation. This way, the chatbot can adjust its tip according to what was being discussed to minimise the interruption. However, this can also leave the chatbot confused about whether it should put more emphasis on the earlier conversation or on the new trigger.

To further optimise the agent towards the use case, few-shot learning is used in lines 15 and 16, so only a few examples need to be added to the prompt, in contrast to the larger amount of data needed to fine-tune the LLM. Listing 4 shows the template of these examples, constructed as first stating the trigger, then the context and then, last of all, the answer that is expected from the coach. Experts in the field or physical coaches should be consulted to construct these examples.

To prevent the chatbot from forgetting the most important part, an instruction is added to every input to reiterate the goal. This is shown in lines 26 and 27 in Listing 1. The coach greets the user first and then gives a tip according to the trigger that was detected and is given to the chatbot. The input in line 28 contains the information about the detected trigger. For this agent, no message from the user is needed to formulate a response. Since the input is not a message and thus does not have a role tag to precede it, the input seems to belong in the instruction too. However, better results can be obtained by putting the input in the message itself, just before the coach tag, but without a user tag preceding it. In our experimentation phase, we observed that if the input is put into the instruction, the chatbot sometimes ignores the trigger and gives general advice regarding all types of triggers.

Finally, in the instruction on line 27, the context is also stated, with a structure similar to the examples in Listing 2. This context contains fast-changing variables that are relevant to how the chatbot should react, for example, date, time, or location. If these variables were put directly into the system prompt instead of the instruction, the agent could sometimes ignore them. This results in hallucinations, such as the chatbot believing it is evening instead of morning. Thus, facts that are fast-changing, relevant to every interaction and must not be ignored under any circumstances are better put into the instruction. User profile information is also very important and should not be ignored, but since we do not want the chatbot to reiterate specific pieces of information about the individual, such as their age, during every interaction, it is better put into the system prompt.
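The placement described in the two paragraphs above can be summarised in a small sketch: the context travels with the instruction, while the trigger is appended as untagged input directly before the coach tag. The labels `Instruction:`, `Context:` and `Coach:` and the line layout are assumptions made for illustration and do not reproduce Listing 1.

```python
def build_trigger_turn(instruction, context, trigger, coach_tag="Coach:"):
    """Assemble the final part of the trigger agent's prompt.

    instruction: the goal that is reiterated on every input
    context: dict of fast-changing variables such as time or location
    trigger: description of the detected trigger, added without a user tag
    """
    context_line = ", ".join(f"{key}: {value}" for key, value in context.items())
    return (
        f"Instruction: {instruction} Context: {context_line}\n"
        f"{trigger}\n"
        f"{coach_tag}"
    )
```

Because the prompt ends on the coach tag, the LLM continues with the coach's message, and the trigger is the last piece of information it reads before generating.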

Chat agent

The system prompt of the chat agent in Listing 3 is much longer since it has to account for far more situations. Different instructions are needed to deal with the user’s answers, such as in line 10, which tells the agent not to insist on talking when the user does not want to. Moreover, in line 6 the agent is told not to give elaborate answers unless the user asks for them. Additionally, it is instructed to pose one question at a time and to talk about one subject at a time. Since the input now originates from the user, the input message in line 30 represents user communication, with the user tag added to explicitly indicate to the agent that this content was provided by the user.

The biggest difference between the trigger and chat agent is the instruction. Since the chat agent’s primary function is chatting with the user, certain instructions are needed to ensure that the conversation flow goes smoothly (lines 25–29). This involves providing additional instructions, such as imposing penalties for repeating the same line or question (line 27), and for veering off-topic during the conversation (line 28). The chatbot needs to deal with the trigger first before moving on to other behaviour change-related subjects (line 29). Moreover, if the individual asks for advice, the chatbot should give advice rather than repeatedly asking questions (line 26). Without these instructions, the chatbot often makes these mistakes.

In the case of the trigger agent, conversation history is optional for the prompt since the trigger agent starts the conversation as can be seen in Figure 4. For the chat agent, however, the conversation history is essential for the prompt (line 22). If the conversation goes on for too many turns, the earliest exchanges are summarised to preserve the conversation’s essence, while preventing the chat history from using all available tokens in the prompt (line 21). This ensures that the chatbot can keep track of the conversation.

Implementation details of the smoking cessation use case

We selected smoking cessation as a specific use case to evaluate the presented intervention method. Smokers often have various triggers that lead them to smoking. If these triggers can be detected using our context-monitoring, a chatbot can intervene and help the user avoid smoking by providing helpful and timely tips.

Figure 5 shows our implementation of the architecture for this smoking cessation use case. We used the commercially available Samsung Galaxy Watch5 as the wearable device and our proprietary in-house mobile app for data collection. Push notifications are handled by Firebase. Our two databases are MongoDB for the NoSQL database and ObeliskHFS for the time series database. The interface of the backend towards the app is handled by FastAPI, as is the interface to the GPU. For scheduling and running Docker containers, we rely on Apache Airflow.

Implementation of architectural design.

Trigger detection

Smoking triggers vary significantly from person to person. Some triggers appear more often in the smoking population and are more likely to cause a relapse.60,61 From these common triggers, we selected those for which sufficiently accurate detection algorithms exist for the available modalities (smartphone and wearable sensors), that is, stress, negative and positive emotions, location, social situations, fatigue, alcohol, and time-based triggers. Supplemental File 1 lists these smoking triggers together with the situations, places or detailed feelings that fall under each of them. Table 1 shows which input parameters and algorithms are required to detect the triggers. More information on the modalities (e.g. types of sensor data) and algorithms (e.g. ML) that can be used to detect these triggers can be found in the ‘Related work’ section, such as for stress detection,14,38,39 emotions,41–43 location,44,45 fatigue, 14 and alcohol.46,47 From these algorithms, we selected the ones that detect a trigger with sufficient accuracy using the data obtained through the app. Because of the modular set-up of our architecture, a detection algorithm can easily be swapped for another when that is more fitting, for example, when other data modalities are used or a different detection algorithm is preferred. Changing the detection algorithm only influences the correctness of the detection, not the overall architecture of the chatbot intervention.

Trigger detection.

GPS: global positioning system.

The prompt in Listing 1 requires an input on line 28, and this input differs for every smoking trigger. The exact instruction for every trigger is given in Table 2. Moreover, after every trigger, the sentence ‘Because of this trigger, the User wants to smoke now. The Coach gives a tip related to this trigger’ is added to ensure that the focus stays on the needed tip for the detected trigger.
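
How the input for a detected trigger could be assembled is sketched below. The fixed sentence is the one quoted above; the per-trigger wording in `TRIGGER_INSTRUCTIONS` is hypothetical and only stands in for the actual instructions of Table 2.

```python
# Sentence appended after every trigger instruction (quoted from the text above;
# the closing full stop is added here).
TRIGGER_SUFFIX = (
    "Because of this trigger, the User wants to smoke now. "
    "The Coach gives a tip related to this trigger."
)

# Hypothetical wording; the actual per-trigger instructions are given in Table 2.
TRIGGER_INSTRUCTIONS = {
    "stress": "The User is feeling stressed.",
    "fatigue": "The User is feeling tired.",
}


def build_trigger_input(trigger: str) -> str:
    """Combine the trigger-specific instruction with the fixed focus sentence."""
    return f"{TRIGGER_INSTRUCTIONS[trigger]} {TRIGGER_SUFFIX}"


stress_input = build_trigger_input("stress")
```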

Smoking triggers in the input of the prompt.

Generative chatbot

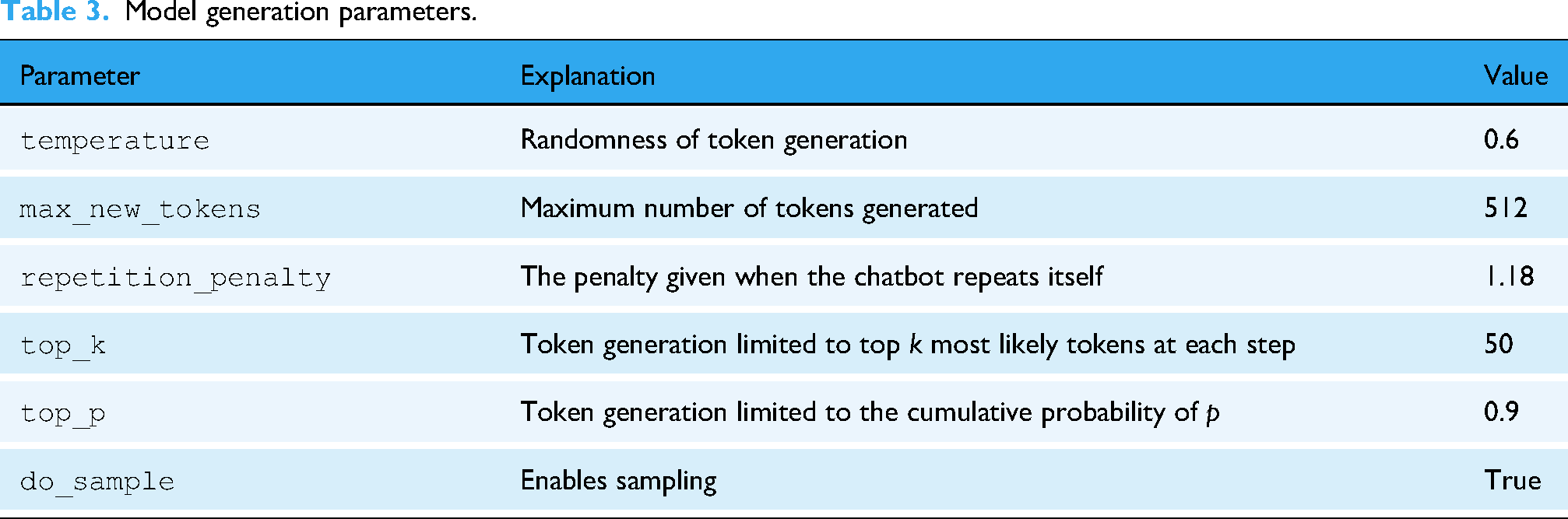

For this use case, LLM Meta AI 2 (LLaMA-2) 65 is used as the LLM due to its commercial license and fine-tuned version for chatbots. This LLM can be downloaded and run on our own servers. The specific model is

Model generation parameters.

Conversational agents

For both the trigger and chat agents, a few placeholders have to be filled in to adjust the general prompt to the smoking cessation use case. The behavioural change goal here is smoking cessation, and the behaviour we want to change is smoking. The profile information that is specific to smoking cessation is the age at which a person started to smoke and the number of times a person has tried to quit smoking. The text, system and instruction tags are filled in with LLaMA-2’s special tokens. This LLM does not have special tokens for roles, so these are removed from the prompt.
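
For reference, the publicly documented LLaMA-2 chat format wraps the system prompt in `<<SYS>>` markers and the whole turn in `[INST]` markers. The sketch below shows this format for a single turn; how exactly the tags of the general prompt map onto these tokens in Listings 5 and 6 is an assumption, and `llama2_prompt` is a hypothetical helper name.

```python
# Special tokens of the LLaMA-2 chat format.
B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def llama2_prompt(system: str, instruction: str) -> str:
    """Format one system prompt and one instruction as a single LLaMA-2 chat turn.

    The beginning-of-sequence token is left to the tokenizer.
    """
    return f"{B_INST} {B_SYS}{system}{E_SYS}{instruction} {E_INST}"


example_prompt = llama2_prompt(
    "You are a Coach who helps the User with smoking cessation.",
    "The User is feeling stressed.",
)
```

Since the format has no dedicated tokens for speaker roles, any role labels have to be expressed in plain text inside the system prompt or instruction, or left out.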

Trigger agent

For the trigger agent, we decided not to include the chat history so as not to dilute the tips for handling the smoking trigger. The example situations are based on interviews we conducted with smoking experts. 59 They were rewritten to fit the template shown in Listing 2. A few of these rewritten situations for smoking cessation are given in Listing 4. The adjusted prompt of the trigger agent is illustrated in Listing 5.

Chat agent

The full adjusted prompt for the chat agent can be found in Listing 6. Due to the success of MI in smoking cessation, we integrated MI into the chat agent (line 12). MI tries to enhance the intrinsic motivation of the user to change their behaviour. Since the chatbot interacts with smokers in difficult situations, on the verge of relapse, incorporating MI in the chat prompt can further motivate the smoker to avoid smoking. As the user has already received advice on handling the situation, the conversation can now guide them to realise on their own why they should avoid relapsing. To achieve this, the basic principles, skills and guidelines of MI as provided by Hall et al. 67 are incorporated into the prompt. Still, to ensure that the focus is on the trigger, this point is reiterated in the instruction.

Evaluation set-up

This architecture proposes chatbot prompts that can be used for JITAIs. Since these prompts contain several placeholders that still need to be filled in based on a specific behaviour, their evaluation is based on their implementation within a smoking cessation chatbot. This section first outlines the characteristics of an in-depth semi-structured interview with a smoking expert. Following this, we present the generated responses from both the trigger and chat agents.

Interview characteristics

The study involved a single subject, a smoking cessation domain expert, for whom specific inclusion and exclusion criteria were defined. The inclusion criteria required the expert to hold a senior leadership position at a national-level public health organisation focused on smoking cessation and to possess deep, demonstrable expertise in the psychological, behavioural, and social aspects of nicotine addiction. This was essential to ensure the expert could rigorously evaluate the correctness, relevance, and applicability of the chatbot’s responses. The sole exclusion criterion was involvement in the tobacco industry. The selected expert serves as the coordinator of Belgium’s national smoking cessation agency and thus meets these criteria.

Drawing on practices from existing literature,68,69 we conducted an in-depth semi-structured interview focusing on a selected set of system outputs: four single triggers, a combined trigger, a location-based trigger, and an extended chatting session. The entire set of system responses and the full interview transcript are available on our Open Science Framework (OSF) project page: https://osf.io/t64kc/. The responses were presented to the expert in the same order as in that document. The interview guide, along with the tailored questions for each trigger type, is also accessible on this OSF project. Given the flexible nature of semi-structured interviews, strict adherence to the interview guide was not necessary, allowing us to focus on areas the expert identified as most critical, while still providing a structured guide that ensured all important aspects were considered. The interview was conducted in March 2024 in Flanders, Belgium.

Generated responses

Before generating the responses, a fictional person (based on domain knowledge from interviews 59 ) was created to fill in the personal information, as shown in Table 4. The selected circumstances shown in Table 5 were based on the moment of generation.

User profile.

Context.

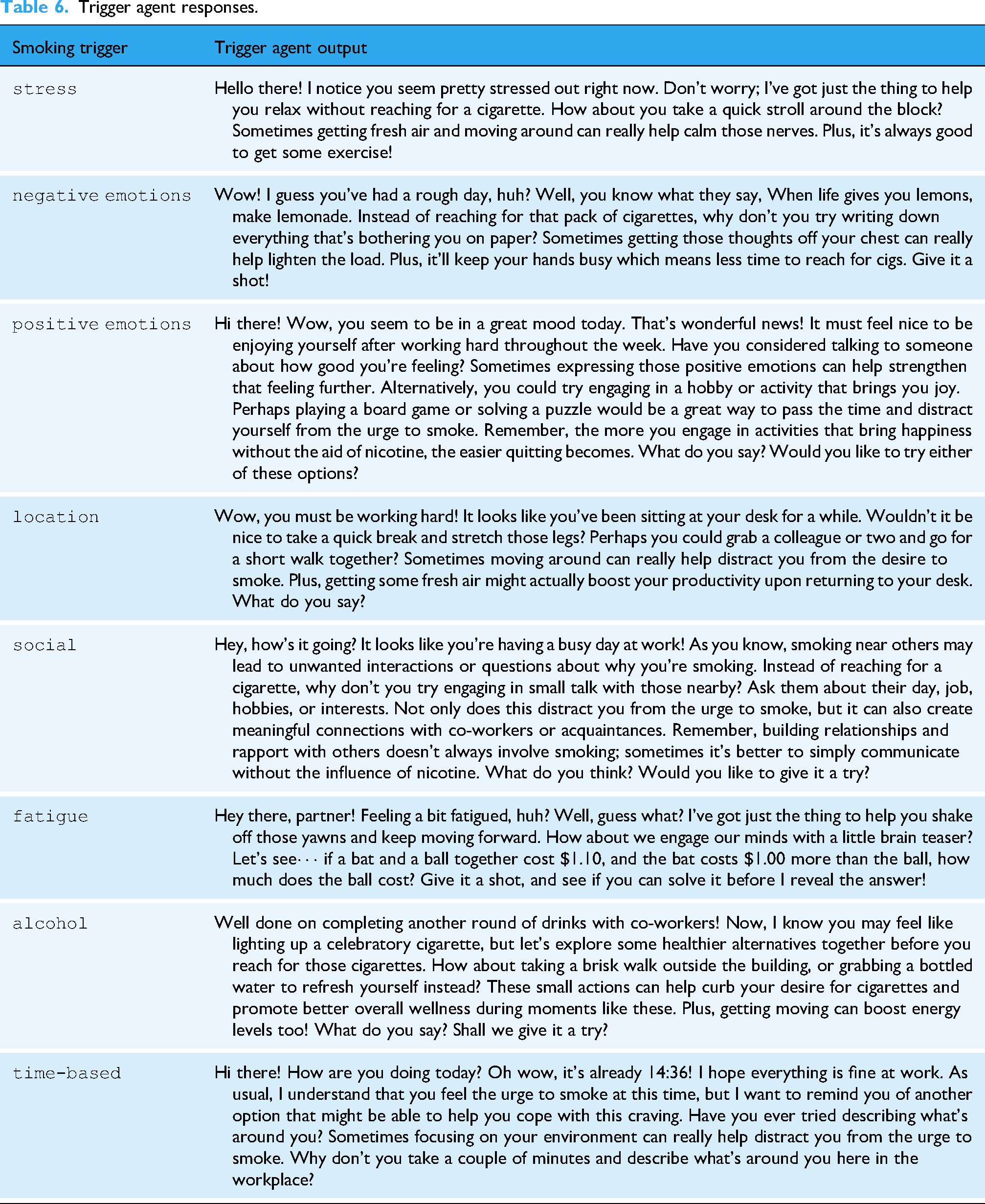

Table 6 presents a response from the trigger agent for each of the selected smoking triggers. Due to time constraints, only four triggers (stress, social, fatigue, and time-based) were selected for inclusion in the interview. Table 7 shows the trigger agent’s responses for multiple triggers, but for the same reason, only the combination of alcohol and social was presented in the interview.

Trigger agent responses.

Trigger agent responses for multiple triggers.

In Table 8, the user’s location is a variable that changes with time, making it the only case where the context differs slightly from the other examples. This approach was taken to assess how well the trigger agent adapts its response to the trigger location. For the interview, the location ‘in the car’ was chosen, not only because individuals cannot respond directly while driving but also because it represents a particularly challenging context for smoking cessation. This allowed us to both test the system’s adaptability and gather expert feedback on how best to handle such situations. While individuals may apply the advice afterward for their return trip or future car rides, the message could also be delivered via in-car assistance tools to provide real-time support in resisting the urge to smoke.

Trigger agent responses for different locations.

Listings 7 to 9 show chat sessions between the coach and the user, respectively for the triggers social, stress and negative emotions. Only the chat session for the trigger ‘negative emotions’ was chosen for the interview due to time constraints.

Results

The expert provided a comprehensive and nuanced evaluation of each of the system’s outputs, highlighting several strengths and areas for improvement in the conversations regarding smoking cessation. For brevity, our discussion will focus on the overarching insights from all outputs, though the full interview transcript is available on our OSF project page for those interested in deeper exploration and all details.

Overall, the expert’s review was favourable, commending the factual accuracy and completeness of both the trigger and chat agent. The expert confirmed that in the examples provided, the system reliably avoided hallucinating false or medically incorrect information that could potentially endanger users. The foundation of theoretical behavioural change principles embedded within the chatbot using system prompts was clearly reflected in the nuanced way responses were crafted, hinting at the successful integration of motivational interviewing. This was evident through the appropriate tone of the chatbot’s responses, which were in most cases neither condescending nor didactic. This approachable tone was valued for its potential to facilitate the acceptance of advice, ensuring that users maintain autonomy over their smoking cessation decisions.

Additionally, the evaluator was often pleasantly surprised by the originality and practicality of the coping strategies and tips provided for managing cravings, which surpassed expectations in terms of creativity. Most of the recommendations were praised for being accessible and involving low-barrier activities that can draw users both physically and mentally away from smoking triggers when faced with challenges. Moreover, the chatbot’s ability to tailor suggestions to the specific trigger provided, such as stress or a specific location, and to seamlessly incorporate these contextual details into its interactions was highlighted as a key strength. This adaptability should enhance the perceived relevance and personalisation of the support. The expert referred back to the user profile and context information (shown in Tables 4 and 5) on multiple occasions, confirming that the help and information provided were appropriate for the context of the user, such as age, location or day of the week.

However, the expert also identified key areas for improvement. The trigger agent’s responses were often seen as overly lengthy or excessively detailed, delving into the mechanisms and rationale behind recommending specific activities. While this approach is well-intentioned, aiming to thoroughly inform users about the reasons for undertaking certain actions, it was suggested that the ‘push’ messages from the trigger agent should be concise and direct, featuring clear calls to action. This approach aims to prevent overwhelming users with information, especially when their cognitive load is already high due to exposure to smoking triggers. Additionally, the expert recommended including options for further external support, such as contacting friends or family when possible.

Furthermore, the expert highlighted the need for a stronger focus on user autonomy and personalisation, to prevent the chatbot from being overly directive. Specifically, it was suggested that the chat agent should foster more interactive and engaging conversations, encouraging users to actively participate in their quitting journey by sharing insights into what strategies work best for them. This approach assumes that as users actively interact and converse with the chatbot, they have more time and mental capacity, or perhaps a need for distraction, which the chat agent could fulfil. Meanwhile, the agent could gather comprehensive data on the user’s preferred cessation techniques and coping strategies. This information would allow for tailoring based on user feedback, thereby boosting the chatbot’s relevance and effectiveness. Notably, the expert stressed the importance of a balanced interaction between the chatbot and the user, advocating for a conversational method where the chatbot, especially the chat agent, remembers and integrates users’ preferences into subsequent advice. It was also pointed out that in line with motivational interviewing theory, the notion of the chatbot as an ‘all-knowing’ agent, merely instructing users on actions to take, should be avoided. Moreover, it was mentioned that the chatbot sometimes places excessive emphasis on the physical aspects of smoking cessation, rather than offering in-depth psychological and emotional support, which necessitates more extended interactions. This highlights the importance for the chatbot to also tackle habitual behaviours with practical advice and emotional challenges with tailored psychological support.

Discussion

In this last section, we will discuss the expert’s recommendations regarding both the trigger and chat agents and how they can be incorporated into the system’s prompts. The following statements are intended as preliminary suggestions and should undergo further testing and refinement to enhance their effectiveness and achieve optimal outcomes.

Regarding the trigger agent, the expert recommended minimising the reasoning behind recommending a tip and shortening their length to prevent smokers from ignoring messages at crucial moments. This can be addressed by prompting the chatbot to avoid justifying its advice or, alternatively, allowing individuals to choose whether they prefer concise tips or more detailed explanations. The expert also noted that coping strategies suggested by the individuals themselves are often the most effective. However, in this use case – where the chatbot provides tips and ideas – an extra mechanism has to be put into place to prevent the individual from being overwhelmed with ineffective suggestions. To address this, the chatbot could periodically check whether the user has tried certain tips and whether they found them effective. Based on user feedback, ineffective tips could be deprioritised, while successful strategies could be reinforced through a tailored recommendation list in the system prompt. This is similar to what Carrasco-Hernandez et al. 24 and Stephens et al. 25 did through collecting user feedback, but instead of constructing a recommender system that recommends from a fixed list of messages, the expert now advised incorporating user preferences directly into the prompts. For example, the chatbot could adapt its recommendations based on whether the individual prefers mindfulness techniques, enjoys physical activity, is socially engaged, or has an affinity for mental challenges and puzzles. This level of customisation would align the tips more closely with the individual’s interests and lifestyle.
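
One way such a feedback mechanism could feed back into the system prompt is sketched below. This is an illustration of the idea only and not an implemented component: the scoring rule, the category names, the function names and the wording of the generated prompt lines are all assumptions.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple


def rank_strategies(feedback: Iterable[Tuple[str, bool]]) -> Tuple[List[str], List[str]]:
    """Score tip categories: +1 when a tip helped, -1 when it did not."""
    scores = defaultdict(int)
    for category, helped in feedback:
        scores[category] += 1 if helped else -1
    # Categories with a positive score are reinforced, best first.
    preferred = sorted(
        (c for c, s in scores.items() if s > 0), key=lambda c: (-scores[c], c)
    )
    # Categories with a negative score are deprioritised.
    avoid = sorted(c for c, s in scores.items() if s < 0)
    return preferred, avoid


def preference_lines(preferred: List[str], avoid: List[str]) -> str:
    """Turn the ranking into sentences that can be appended to the system prompt."""
    lines = []
    if preferred:
        lines.append("The User prefers tips about: " + ", ".join(preferred) + ".")
    if avoid:
        lines.append("Avoid tips about: " + ", ".join(avoid) + ".")
    return " ".join(lines)


preferred, avoid = rank_strategies([
    ("mindfulness", True),
    ("mindfulness", True),
    ("physical activity", True),
    ("puzzles", False),
])
prompt_addition = preference_lines(preferred, avoid)
```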

Moreover, the expert evaluation highlighted the need to move beyond physical coping strategies and deepen the chatbot’s emotional and psychological support. While our design incorporates MI principles to foster intrinsic motivation, future iterations must enhance this capability. To achieve this, prompt engineering will focus on refining the chat agent’s instructions to encourage more probing, open-ended questions that help users explore their own feelings and reasoning in greater depth. The goal is to evolve the chatbot from primarily an advice-giver to a more sophisticated, collaborative partner that helps users navigate the complex emotional landscape of behaviour change.

As for the chat agent, the expert provided some suggestions that would improve user autonomy in regard to conversations with the chatbot. Although the user can provide free-text responses and the chatbot adapts to the user input accordingly, improvements can be obtained by translating the expert suggestions into the prompt using the following statements:

If I propose a coping mechanism myself, instead of only telling me it’s a great way to distract myself, explain why this is a great coping mechanism. Attach more importance and go deeper into what I propose instead of proposing things yourself. Attach more importance to the psychological aspect of behavioural change. The reason for smoking is not always stress-related. Take into account that some smokers smoke out of habit. Thoroughly explain your reasoning for the coping mechanisms you give or why you are asking for certain information. Try to encourage me and ask me questions to make me explain my reasoning in depth.

Not all examples of the chatbot responses could be included in the expert evaluation, as responses can be generated infinitely. However, some recurring issues were identified. One such issue is that the chatbot sometimes prefaces responses with unnecessary details like the date, time, and location. For example, it states, ‘Today is Thursday, February 22, 2024, at 14:36 PM. Currently, you are located at work’. Further experimentation is required to find the optimal placement of the context in the prompt to mitigate this problem. Additionally, the chatbot frequently uses the word ‘wow’ in its greetings, which may need moderation. A more significant concern is the chatbot’s use of emoting, that is, actions denoted by asterisks such as *ahem*, *eye contact*, and *pausing dramatically*. Once this behaviour appears, it tends to persist throughout the conversation. While this style of communication may be acceptable for certain age groups, it might not be appropriate for the 48-year-old user used in our example, as it may come across as overly casual or theatrical. To address this sudden shift in tone, prompt instructions should either prohibit emoting altogether or define an appropriate tone. In our experience, simply disallowing asterisks and directing the chatbot to adjust its tone to the user’s age has proven insufficient to fully resolve these issues.

Importantly, chatbots should not use vulgar language or encourage harmful behaviours. The prompt contains instructions to prevent these problems. Two hundred messages were generated by the trigger agent and no issues regarding foul language and inappropriate responses were found, suggesting that this issue is unlikely to arise during routine use with the trigger agent. However, testing the chat agent poses greater challenges since users might pose provocative questions or create unforeseen scenarios that have not been previously assessed. Consequently, the possibility of encountering such problems with the chat agent cannot be entirely ruled out. Therefore, further testing is essential for chat agents, or alternative measures should be implemented to filter out inappropriate responses should they occur. One potential safeguard could be leveraging specialised models. For example, LLaMa-2 includes a guard model,

When designing a behavioural change chatbot, ethical considerations such as data privacy, data consent and user autonomy are important. By hosting the databases and the LLM on our private servers and developing our proprietary application, we eliminate the need to transmit sensitive data to third parties, thereby enhancing data security. However, if an LLM requiring third-party access were used, it would be crucial to investigate how the personal data is transmitted and stored to ensure the privacy and protection of users’ information. All users are informed during the account creation process that their data will be stored on secure, private servers, and they must explicitly consent to this before proceeding. No data is shared with third parties. Furthermore, users give explicit permission for each individual sensor modality (e.g. GPS and accelerometer), with clear communication about what data will be collected and for what purpose. Importantly, users retain full control and can opt out of any of these modalities at any time, although this may limit the system’s ability to detect certain contextual triggers; for example, location-based triggers cannot be detected if no consent is given to track the GPS. Future iterations of the system will explore two mechanisms to further enhance transparency, data privacy and user control. First, we will investigate processing the data as much as possible on the edge, that is, on the smartphone itself, so that, for example, sensitive GPS data does not leave the smartphone. Various architectures can be investigated, from performing some preliminary data processing on the smartphone (e.g. transforming the raw accelerometer data to detected activities, or transforming GPS data to a point of interest (POI) category) to running the complete processing and chatbot itself on the smartphone. It needs to be investigated what is feasible in terms of resource usage, battery drain and correctness of the solution; for example, detection algorithms might become less accurate because of the limited processing capabilities of smartphones, and the chatbot might become less accurate due to the use of smaller LLMs. Second, the use of Solid-based personal data stores is actively being researched. 71 This would allow data to be stored in a fully user-controlled environment, with explicit consent and access permissions defined at a granular level for each data parameter and intended use. The first proof-of-concept implementations of Solid for healthcare have emerged72,73 and are a promising avenue to overcome the ethical barriers towards behavioural change chatbots in terms of data consent and control.
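
The preliminary on-device processing mentioned above can be illustrated with a small sketch that maps raw GPS coordinates to a POI category, so that only the category would need to leave the smartphone. The POI list, its coordinates, the 100 m radius and the function names are hypothetical, and the sketch is written in Python for readability rather than as mobile app code.

```python
import math

# Hypothetical user-defined points of interest: (category, latitude, longitude).
POIS = [
    ("home", 51.0500, 3.7300),
    ("work", 51.0460, 3.7250),
]


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two coordinates."""
    r = 6371000.0  # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def to_poi_category(lat: float, lon: float, max_dist_m: float = 100.0) -> str:
    """Return the category of the nearest POI, or 'other' if none is close enough."""
    name, poi_lat, poi_lon = min(
        POIS, key=lambda p: haversine_m(lat, lon, p[1], p[2])
    )
    if haversine_m(lat, lon, poi_lat, poi_lon) <= max_dist_m:
        return name
    return "other"
```

With such a step, the backend would receive, for example, `"work"` instead of the coordinates themselves, at the cost of keeping the POI list on the device.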

While LLMs and chatbots offer a responsive, low-threshold and scalable approach towards behavioural change interventions, an important ethical consideration is the risk of user overreliance or dependency on automated systems for emotional regulation or decision-making. This may lead to reduced self-efficacy or avoidance of human support networks. As such, we do not advocate viewing this chatbot as a replacement for professional support by, for example, smoking cessation coaches or psychologists. Instead, we advocate a human-in-the-loop approach in which the chatbot is integrated into a hybrid care path where both professionals and chatbots play a role. This would include a form of human oversight, in which the chatbot can either automatically escalate a conversation to a professional when certain keywords, alarming signals or conversation types are detected, or where a professional can occasionally review conversations and intervene themselves if deemed necessary. This would reduce the potential harm that can be caused by the chatbot when inappropriate, overly confident, or context-insensitive responses are generated. Such hybrid models can help balance the scalability of automation with the nuance, empathy, and accountability that human involvement provides.
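
The keyword-based variant of such an escalation rule could look like the minimal sketch below. The alarm phrases and the function name are hypothetical examples; an actual list would have to be defined together with professionals, and keyword matching alone would miss many alarming signals.

```python
import re

# Hypothetical alarm phrases, for illustration only.
ALARM_PATTERNS = [
    r"\bhopeless\b",
    r"\bhurt myself\b",
    r"\bcan'?t go on\b",
]


def needs_escalation(message: str) -> bool:
    """Return True when a user message matches one of the alarm patterns."""
    text = message.lower()
    return any(re.search(pattern, text) for pattern in ALARM_PATTERNS)
```

A message for which `needs_escalation` returns `True` would then be routed to a professional instead of, or in addition to, being answered by the chat agent.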

As mentioned in the introduction, the two conditions for a JITAI are getting the support and the timing right. None of the chatbots in the ‘Related work’ section can integrate context without dialogue design. Although some of these chatbots fulfil the right support condition on some level,15,16,19–27,29 the human resources needed to construct these dialogues should not be underestimated. 37 By integrating the context into the chatbot through prompt engineering, as is done in our design, the construction of a right support chatbot becomes more feasible in practice. The second condition, intervening at the right time, is fulfilled by two chatbots.15,16 However, for the other chatbots that are used in interventions, the timing is wrong.19–21,24–29,33,34,48 Our approach to getting the timing right is through trigger detection. Thus, since our approach fulfils both conditions, it brings an adequate design of a JITAI to the table.

Limitations

Although an expert in the field evaluated the design of the intervention, the real-world usability and impact cannot be fully determined without direct input from the end users. While feedback from a smoking cessation expert provides valuable direction during early stage design of a behavioural change chatbot in terms of correctness, originality and practicality of the proposed interventions, it does not replace the need for empirical evidence from the target population. We feel the presented preliminary expert insights and lessons learned already provide valuable guidance towards other researchers in setting up user studies for trigger-based behavioural change chatbot interventions. Future work should include rigorous user-centred studies to assess the intervention’s acceptability, usability, and effectiveness across specific behavioural domains, such as smoking cessation, healthy eating, or stress management. Such a study would allow us to empirically measure the impact of message characteristics, like verbosity, on user engagement, response rates, and perceived helpfulness. Different prompt engineering techniques to alter the verbosity of the chatbot messages should be tested, for example, instructing the chatbot to be concise and/or direct or to avoid justifications for advice or enabling the user to indicate a preferred message style, for example, empathic versus task-oriented.

The smoking trigger detection algorithms mentioned in Table 1 were chosen based on a literature review to pinpoint, for each trigger, a detection algorithm with sufficient accuracy given the modalities that were available through the application. As such, the proof of concept might not always include the most accurate detection algorithm for each trigger. Consequently, the effectiveness of the trigger-based intervention chatbot is constrained by the accuracy of these detection algorithms. In other words, if the trigger is not detected, the chatbot will not be activated and no intervention will be executed. Conversely, if a trigger is wrongly detected, an unnecessary intervention might be launched. Elaborate empirical studies would need to be performed to study the impact of these undetected or wrongly detected triggers on the behavioural change of the end users. Moreover, although support is triggered whenever one of the algorithms detects a trigger, no extra rules are in place yet to determine whether support needs to be sent every time, which trigger takes precedence, or which triggers need to be combined when multiple triggers overlap. This overlap might also depend on whether the data for multiple algorithms overlap or whether one algorithm can cover multiple triggers at once and obtain a better result in behaviour change. This intricate relationship between the data, triggers and detection methods has to be examined in future work to optimise the trigger detection.

Furthermore, while the system was designed as a generalisable framework, its implementation and evaluation have so far been confined to the current smoking cessation use case. Future work is required to validate its effectiveness in other behavioural change domains. Similarly, the practical integration of the system with existing clinical support systems and workflows was not implemented. Although the modular architecture is designed to facilitate such connections, the challenges of interoperability remain to be tested. Finally, the adaptability of the intervention across diverse cultural contexts was not assessed in this study. The personalisation of the chatbot’s tone, language, and suggested strategies for different cultural populations is a critical area for future research to ensure broader applicability and acceptance.

Conclusion

In this article, we designed a system that utilises prompt engineering on an LLM to deliver the right support when a specific trigger is detected. This novel approach results in appropriate, personalised, relevant and accurate advice tailored to the type of trigger. Our proposed design is capable of automatically identifying a trigger at the right time and offering the right support for this trigger, personalised to the individual’s current circumstances, context, and profile information. Privacy and protection of sensitive data are ensured by hosting the databases and LLM on secured, private servers. This just-in-time intervention methodology can serve as a tool to change unhealthy behaviours such as smoking using the tailored support provided by the chatbot.

In the domain of smoking cessation, our expert evaluator highlights the importance of integrating contextual information and behavioural change practices into the chatbot to provide relevant support in moments of need. Key recommendations include simplification and directness of responses, activating individuals in sharing their quitting journey, and focusing more on psychological and emotional support to address habitual behaviours.

In the future, we aim to automatically detect an individual’s personal triggers without relying on a physical coach. This would address the issue of certain triggers going undetected by both the individual and the physical coach. Moreover, we plan to explore ways to optimise the coach’s advice to maximise user engagement and incentivise compliance with behaviour change strategies.

Supplemental Material

Supplemental material, sj-pdf-1-dhj-10.1177_20552076251381747 for Designing a just-in-time adaptive intervention with trigger detection and a generative chatbot: Smoking cessation use case by Kyana Bosschaerts, Arian Kashefi, Lieven De Marez, Peter Conradie, Sofie Van Hoecke and Femke Ongenae in DIGITAL HEALTH

Footnotes

Acknowledgements

This research was funded by the Research Foundation – Flanders (FWO) under the IMPERIO project (grant number: G0D5322N). We also extend our gratitude to the experts at Tabakstop for their valuable insights and contributions toward improving the chatbot.

Ethical approval

This project was approved by the Political and Social Sciences Ethics Committee of Ghent University (Ref. 2022-46) on 12 December 2022.

Consent for publication

Written informed consent was obtained from the smoking cessation expert with whom the interview was conducted.

Authors’ contributions

All authors collaboratively designed the intervention. KB configured the large language model setup, conducted prompt engineering for the chatbot and generated the responses. AK conducted the expert interview. KB and AK wrote the initial draft of the manuscript. All authors reviewed and edited the manuscript and approved the final version of the manuscript.

Funding

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Guarantor

FO is the guarantor who takes full responsibility for this article.

Supplemental material

Supplemental material for this article is available online.

References
