Abstract

Background

Online learning is an accessible and cost-effective solution for delivering training in healthcare. In-person training has long supported mental health practitioners in delivering cognitive health interventions; however, for many underserved populations, significant barriers to accessing standardized, evidence-based training for such treatments remain. The E-Cog platform aims to bridge this implementation gap by delivering accessible and engaging remote training that maintains the quality of in-person training.

Objectives

Describe the design, development, and implementation of E-Cog, an innovative online training platform for two cognitive health interventions, within the context of a Canadian multisite implementation trial for individuals diagnosed with psychosis. Additionally, examine the feasibility and acceptability of the ADDIE educational framework for e-learning development.

Methods

Following the five phases of the ADDIE model (analysis, design, development, implementation, and evaluation), we developed our training platform and its curriculum of two online certifications in cognitive health interventions: Action-Based Cognitive Remediation and Metacognitive Training. This protocol describes the first four phases of our work and reports on the feasibility and acceptability of the ADDIE model.

Conclusions

E-Cog has been implemented to deliver remote, asynchronous training that maintains in-person quality standards and shows potential for cost-effectiveness. Successful uptake by mental health practitioners (n = 28) was observed during the first two years of implementation. ADDIE was perceived as a feasible and acceptable framework for developing e-learning content, provided its structure could be flexibly adapted to research challenges and constraints. Next steps include a qualitative assessment of E-Cog's usability and impact on intervention delivery.

Keywords

Introduction

Cognitive health interventions in schizophrenia spectrum disorders

Severe mental illnesses such as schizophrenia spectrum disorders (SSD) impose an enormous burden on individuals, families, and communities.1,2 The course of SSD commonly involves symptom recurrence or relapse and variable degrees of deterioration in social and vocational functioning.3–5 A common feature of SSD is cognitive dysfunction, in the form of both impairments6,7 and biases.8,9 Cognitive impairments include difficulties in verbal memory, executive functions, attention, and social cognition, among other domains. Cognitive biases are distortions in the way information is collected, processed, remembered, and evaluated.10 These cognitive impairments and biases warrant greater clinical attention, as they adversely affect clinical trajectories and functioning.11,12 Hence, there is an important need to improve overall cognitive health in SSD as the most direct and accessible means of improving outcomes.

The last 25 years have witnessed significant, though often separate, advances in the development of cognitive health interventions targeting cognitive impairments and cognitive biases in SSD.13 Specifically, cognitive remediation (CR) is a treatment designed to improve cognitive impairments that involves drill practice and compensatory strategies.14 In SSD, multiple trials have shown that CR significantly improves cognitive capacity as well as functioning.15,16 Cognitive remediation also improves measures of brain structure and function in SSD, including increases in hippocampal volume17 and cortical thickness,18 and normalization of neural activity during cognitive tasks.19

Similarly, treatments have emerged that target specific cognitive biases underlying delusions, based on the theoretical foundations of cognitive models of SSD.20 One such treatment is metacognitive training (MCT). Multiple randomized controlled trials have confirmed the efficacy of MCT on several outcome measures, including delusions,21 cognitive biases,11 and insight.22 The results of these trials have been aggregated in recent meta-analyses, which report effects, including on functioning, comparable to effect sizes observed in the cognitive behavioral therapy (CBT) literature.23,24

Despite the proven efficacy of such interventions, a significant implementation gap persists, largely due to issues of accessibility and consistency, including those related to training. Populations such as individuals in remote areas, low-income communities, and BIPOC groups often have limited access to standardized treatment protocols, and the training resources used in clinical trials are rarely available in routine practice. This lack of accessibility may stem from the expense, logistical challenges, and time required for in-person training,25 along with a shortage of health professionals adequately trained in these methods.26 By offering flexible, cost-effective, and scalable options, online training programs have the potential to facilitate widespread adoption of evidence-based interventions, with greater logistical feasibility than in-person training.27 Furthermore, the shift toward digital solutions in healthcare since the COVID-19 pandemic suggests strong receptivity to remote, asynchronous training options that maintain in-person standards while reaching underserved populations more equitably.28–31

Context of the iCogCA project

The E-Cog platform was therefore developed in the context of iCogCA, an effectiveness-implementation trial of cognitive health interventions (CR and MCT) in Canada that aims to promote cognitive health in SSD. iCogCA is an entirely online hybrid trial across five Canadian sites (Douglas Institute in Montréal, Royal's Institute of Mental Health Research in Ottawa, Kingston Health Sciences Centre, Ontario Shores Centre for Mental Health Sciences in Toronto, and Vancouver Coastal Health/UBC). CR and MCT will be offered to a total of 390 individuals with SSD (78 per site) between 2023 and 2026. The study aims to determine the clinical effectiveness of the two online interventions on multiple dimensions of mental health and functioning, and to evaluate the implementation of these online interventions, and of the online training modality, across multiple care settings.32

The platform development detailed in this manuscript was not independently registered; however, the iCogCA implementation trial protocol was registered at ClinicalTrials.gov (NCT05661448) and has been published by Au-Yeung et al.32

The E-Cog platform

The name “E-Cog” was chosen to combine the terms “e-health” and “cognition.” The E-Cog platform was developed to provide online training in two specific interventions: action-based cognitive remediation (ABCR) and MCT. ABCR33,34 is a type of CR that involves web-based cognitive training; developing, monitoring, and flexibly adjusting problem-solving strategies (“strategic monitoring”); and transfer activities. Transfer involves discussing and role-playing how cognitive skills and strategies apply in everyday life, and teaches compensatory strategies for overcoming cognitive challenges. The MCT program8,9,20 targets specific cognitive biases underlying delusions. It involves learning activities that help participants recognize the cognitive biases involved in the formation and maintenance of psychotic symptoms and prompt them to reflect on, and change, their current problem-solving repertoire.

The E-Cog platform hosts four distinct modules that can be completed asynchronously. The first module trains learners on cognitive impairments (cognitive capacity, cognitive biases) in psychiatric disorders and their impacts. The second module provides training in digital mental health tools, such as remote cognitive assessment (used to quantify intervention effectiveness and to develop personalized care) and virtual technologies (e.g., Zoom Health). Finally, modules 3 and 4 offer training in delivering the core components of ABCR and MCT, respectively. The target learners are professional therapists in mental health, as well as students or trainees already working with individuals with SSD, such as those facilitating interventions in the iCogCA study.

The E-Cog platform was developed using the ADDIE model,27 a framework for Analyzing, Designing, Developing, Implementing, and Evaluating instructional content.27,35 To date, guidance on developing online training platforms for psychological interventions (including cognitive health interventions) has been limited. According to a recent meta-analysis of 23 distance education studies, the ADDIE framework offers a promising approach, providing best practices for creating effective educational experiences across diverse settings.36 Recognized as a valuable resource for building online education36 and supporting community-based interventions,27 ADDIE outlines a structured, linear approach that is well suited to projects requiring rigorous design and evaluation, while remaining adaptable to different learning environments.

A recent comparative analysis37 indicates that ADDIE performs particularly well in asynchronous learning contexts, whereas more iterative models such as the Successive Approximation Model (SAM) are better suited to synchronous formats requiring rapid prototyping and adaptability. Models such as the Six Steps in Quality Intervention Development (6SQUID),38 though effective for intervention development in health research, are not specifically intended for instructional design. Given our focus on creating training materials for a digital platform, ADDIE was more closely aligned with our goals than alternatives such as 6SQUID or SAM.

Recent studies suggest growing interest in applying ADDIE to mental health interventions. For instance, the ADDIE model guided the creation of e-learning modules for an evidence-based employment program for individuals with mental health conditions.39 Learners rated the modules positively (between 4.4 and 4.6 out of 5) and self-reported high knowledge acquisition; about half reported that they would change their practice after watching the modules. Another study40 describing a mental health app for trauma survivors created using the ADDIE model showed significant improvements in PTSD symptoms and stress levels. User experience (UX) was also assessed, with positive expert evaluations across six components: navigation (M = 4.32, SD = 0.73), content (M = 4.10, SD = 0.75), accessibility (M = 4.48, SD = 0.58), design and development (M = 4.34, SD = 0.71), understanding (M = 4.02, SD = 0.66), and satisfaction (M = 4.27, SD = 0.62).

However, research on ADDIE's feasibility and acceptability in the context of online training for psychological interventions remains scarce.27

Study objectives

The present protocol aims to (1) report on the development of an e-learning platform for training in cognitive health interventions using the ADDIE model and (2) discuss the feasibility and acceptability of the ADDIE model for developing online training for cognitive health interventions.

Methods

Overview of the ADDIE model

Our research and technical plan for E-Cog followed the five phases described in the ADDIE model27 (see Figure 1 for an overview). In the first phase, “Analysis,” the overall goal of the training was described, and the profiles and training needs of learners were investigated. Factors affecting implementation and potential constraints were also identified.

Figure 1. Overview of the ADDIE model (Gavarkovs et al.27).

The second phase, “Design,” was dedicated to developing learning objectives and aligned training activities. For each module, a general goal was identified and divided into learning units, each with a list of specific learning objectives. Learning activities were then designed to ensure that these goals and objectives were achieved. The teaching strategies, medium, length, and content of the activities were outlined during this step to create a coherent learning experience following the principles of constructive alignment and Bloom's revised taxonomy.41,42

More specifically, learning objectives, teaching strategies, and evaluation methods were aligned to ensure that learners are taught what will be evaluated in a way that adequately prepares them to successfully demonstrate their newly acquired skills during evaluation. For example, a lecture-based teaching strategy was used for theoretical knowledge acquisition, which was evaluated using multiple-choice questions. Complementarily, a scenario-based teaching strategy was preferred for practical and critical thinking skills development, which was then evaluated using process-based essay questions.
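The alignment rule above can be expressed as a simple consistency check. The sketch below is illustrative only: the objective texts, strategy labels, and the `ALIGNED_EVALUATIONS` table are hypothetical and encode just the two pairings named in the text (lecture-based teaching with multiple-choice questions, scenario-based teaching with process-based essay questions); it is not a tool the development team reported using.

```python
from dataclasses import dataclass

# Hypothetical alignment table built from the two examples in the text:
# lecture-based teaching -> multiple-choice questions,
# scenario-based teaching -> process-based essay questions.
ALIGNED_EVALUATIONS = {
    "lecture": {"multiple_choice"},
    "scenario": {"process_essay"},
}


@dataclass(frozen=True)
class Objective:
    text: str
    teaching_strategy: str
    evaluation_method: str


def misaligned(objectives):
    """Return objectives whose evaluation method does not match their teaching strategy."""
    return [
        o
        for o in objectives
        if o.evaluation_method not in ALIGNED_EVALUATIONS.get(o.teaching_strategy, set())
    ]


# Hypothetical objectives for illustration only.
objectives = [
    Objective("Define cognitive biases", "lecture", "multiple_choice"),
    Objective("Adapt a strategy to a client scenario", "scenario", "process_essay"),
    Objective("Describe transfer activities", "lecture", "process_essay"),
]

for o in misaligned(objectives):
    print("Review alignment:", o.text)
```

An objective flagged this way is not necessarily wrong; it signals that either the teaching strategy or the evaluation method should be revisited so that learners are assessed on what, and how, they were taught.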

The third phase of the ADDIE model, “Development,” was performed in parallel with the previous phases and represented a significant portion of the work. Both the learning material and the online platform were developed during this stage: the material for the learning activities (e.g., narrated PowerPoints) was created and the online platform was programmed.

The fourth and fifth phases of the ADDIE model, “Implementation” and “Evaluation,” involve an ongoing pilot study to assess the platform's performance and educational content. Usage data and feedback on learner experiences are being collected in accordance with the ADDIE framework. These data are used to inform iterative improvements to both the educational material and the platform (i.e., revisiting the design and development phases) and to evaluate participant experience, achievement of learning objectives, and engagement behaviors with the platform.

Finally, we evaluated our experience using the ADDIE model during the development and implementation of the E-Cog platform. This objective is in line with Gavarkovs et al.'s27 suggestion that future studies investigate the reach and effectiveness of this training development and evaluation approach, as a way to provide evidence for its use. In the present work, we report on the feasibility and acceptability of the ADDIE model as perceived by our development team. A future study will address the effectiveness of the ADDIE model in supporting the delivery of the cognitive health interventions included herein.

ADDIE phase 1—Analysis

Training needs analysis

The analysis phase of the ADDIE model is comparable to the needs assessment phase of intervention design.27 For E-Cog, the analysis phase took place between 2020 and 2021, in continuous iteration with the design phase. Authors with expertise in cognitive health and education (K.M.L., D.R.C., M.L., and G.S.) collaborated to answer the questions posed in each step of the analysis phase. This phase is detailed in Appendix A and summarized below.

Step 1. Overall goal of the training: Teach learners about cognitive health and promote the delivery of evidence-based online interventions (i.e., ABCR and/or MCT) in SSD.

Step 2. What learners need to do to achieve the goal(s) of the training: Learners are expected to understand the concepts of each module, apply the knowledge acquired in modules 2 and 3/4, prepare an intervention plan, and evaluate the intervention's effects.

Step 3. Who are the learners: Learner professions were identified based on those of practitioners receiving in-person training and currently implementing the interventions in academic and clinical settings. Five professions of potential learners were identified: 1) clinical psychologists and neuropsychologists; 2) social workers; 3) psychiatrists; 4) psychoeducators; and 5) occupational therapists. Profiles for each of these learners were developed by elaborating on their education, knowledge, attitudes, and behaviors. This was done by analyzing professional orders' requirements, university coursework, values promoted by each profession (available on professional orders' websites), and legally reserved acts and associated responsibilities (e.g., clinical notes, reports). The learner profiles are detailed in Appendix A (step 3).

Step 4. What the learners need to learn: A list of specific learning objectives for each module was determined based on the goals established in step 2 and formulated using Bloom's revised taxonomy of educational objectives.43 In E-Cog, learning objectives included defining basic concepts relevant to cognitive health; gaining knowledge of digital technologies (specifically the requirements and supporting evidence for delivering interventions online, a core component of the iCogCA implementation trial) and of each intervention; describing evidence on the prevalence and presentation of cognitive challenges in psychosis and their impacts on functioning; situating the tools and interventions available for improving cognitive health; integrating secure operations into the online delivery of interventions; resolving technology-related issues; and gaining a deep understanding of the theoretical basis, required skills, core elements, techniques, and delivery variations of each intervention. Importantly, learning objectives were defined to “fill in the gaps” in the knowledge learners were likely to already possess, rather than to offer redundant content. For example, since mental health practitioners were likely knowledgeable about the definition and manifestations of psychotic disorders, Module 1 did not include a review of the diagnosis. A comprehensive list of learning objectives can be found in Appendix A (step 4).

Step 5. Other factors (besides skills and knowledge) that could affect implementation and how they can be addressed through training: Gavarkovs et al.27 point out that successful implementation of an intervention requires more than knowledge and skill proficiency. Other factors can influence implementation, such as beliefs about the need for the intervention, beliefs in its effectiveness, and self-efficacy to implement it successfully. Factors believed to affect implementation were identified at the Beliefs, Benefits, and Self-Efficacy levels and informed adjustments in phase 3 (i.e., Development). For instance, addressing learners' belief in the intervention's relevance guided the selection of topics such as the prevalence and impacts of psychosis in Module 1. Intervention benefits were covered in Modules 3 and 4, including testimonials from patients and practitioners collected during unstructured feedback sessions. The complete list of “other factors” is available in Appendix A (step 5).

Step 6. Identify constraints (and possible solutions): Eight possible constraints were identified, including financial constraints, technological constraints (e.g., computer access, technical issues), and personal constraints (e.g., insufficient time to complete training, motivation). Possible solutions to each are included in Appendix A (step 6).
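The “fill in the gaps” principle from Step 4 can be read as a set operation: a candidate topic is dropped only if every target profile can be assumed to know it already. The sketch below is a hypothetical formalization; the prior-knowledge sets and topic labels are invented placeholders, not the contents of Appendix A, and only the five professions come from Step 3.

```python
from functools import reduce

# Hypothetical prior-knowledge sets; the real learner profiles are in Appendix A.
profile_knowledge = {
    "psychologist": {"psychosis diagnosis", "cognitive assessment"},
    "social worker": {"psychosis diagnosis"},
    "psychiatrist": {"psychosis diagnosis", "pharmacotherapy"},
    "psychoeducator": {"psychosis diagnosis"},
    "occupational therapist": {"psychosis diagnosis", "functional assessment"},
}

# Hypothetical candidate topics for a module.
candidate_topics = [
    "psychosis diagnosis",
    "cognitive assessment",
    "prevalence of cognitive challenges",
    "secure online delivery",
]


def topics_to_teach(candidates, profiles):
    """Keep topics that at least one target profile cannot be assumed to know."""
    shared = reduce(set.intersection, profiles.values())  # known by every profile
    return [t for t in candidates if t not in shared]


print(topics_to_teach(candidate_topics, profile_knowledge))
```

Intersecting rather than uniting the profiles is the conservative choice here: a topic familiar to psychologists but not to social workers stays in the curriculum, mirroring the text's example of omitting only the diagnosis review that all practitioners were likely to know.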

Online platform analysis

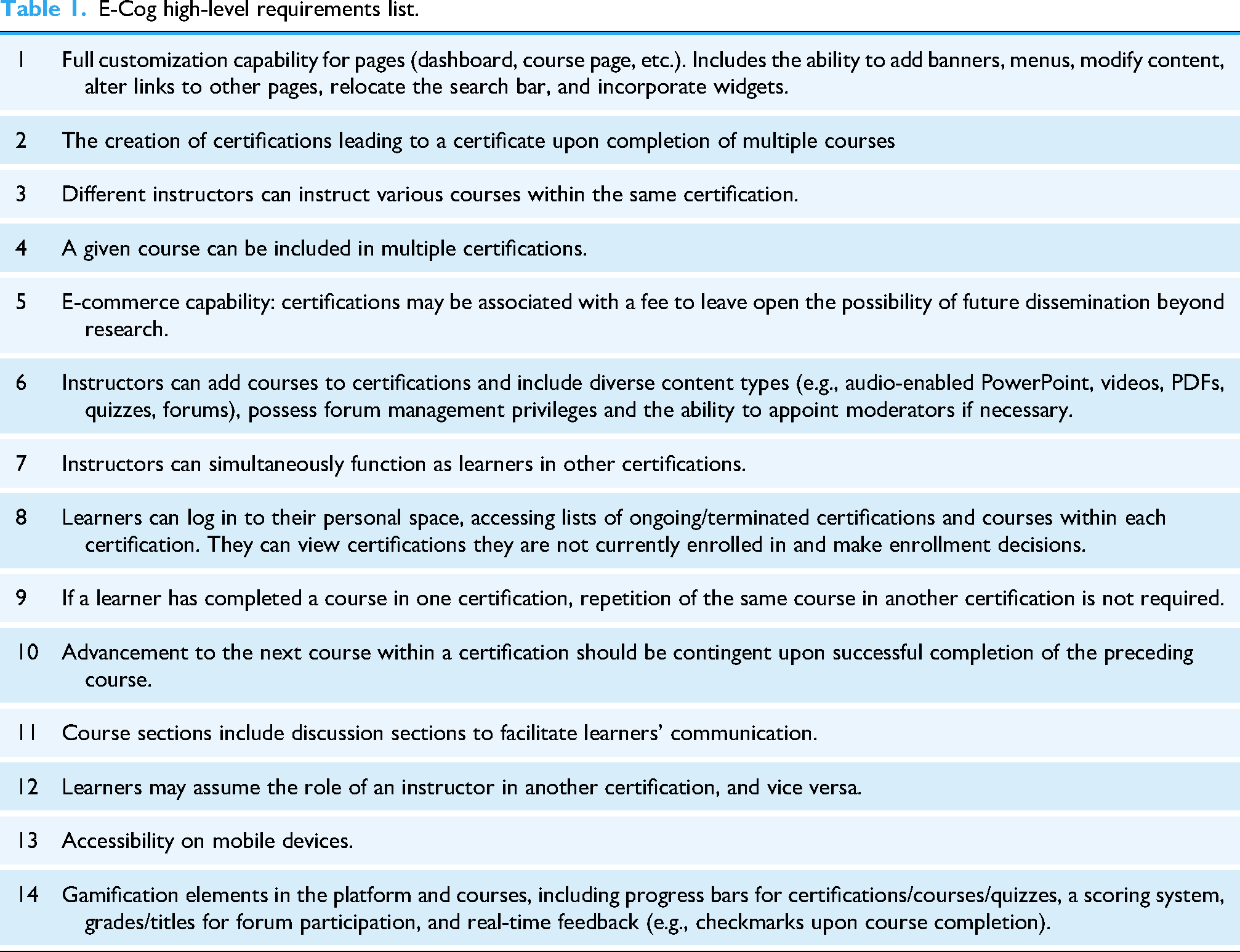

To establish the direction and vision of the platform, a high-level requirements list was generated (Table 1). This list informed the selection of a technological structure combining a Learning Management System (LMS) component and a Content Management System (CMS). The LMS comprised the core learning environment (i.e., content and learning progression) and the CMS hosted the static pages of the website (e.g., homepage, about us, and contact form).

Table 1. E-Cog high-level requirements list.

The LMS chosen was OpenEdx, offered by a private service provider (name omitted for neutrality). The decision to opt for a cloud-hosted rather than a self-hosted solution was made in light of the size of our IT team. Among the various OpenEdx providers, the one we chose stood out for offering the highest level of customization at a comparatively low cost. Provider selection was guided by implementation time, costs, usability (ease of using existing features, modifying the design, and implementing changes), support for multimedia content (e.g., videos, PDFs, quizzes, PowerPoint, forums, and Sharable Content Object Reference Model [SCORM] files, a standard packaging format for e-learning content that ensures its interoperability, accessibility, and reusability), and the ability to extend these features. A moderated forum and learning analytics tools (e.g., descriptive analytics, reporting) were also deemed essential. Technical prerequisites included support for a minimum of 200 users, scalability, compatibility across devices, and mobile learning capabilities, as well as sustainability, security (including updates, upgrades, data encryption, account management, backups, and secure payment processing), and ease of migration to another LMS or server.

Other desirable features included gamification tools (e.g., progress bars, scoring systems with grades, real-time feedback upon course completion), as well as the integration of supplementary tools such as e-commerce functionalities to facilitate paid certifications. OpenEdx, distinguished by a modern interface and extensibility through ad hoc tools, satisfactorily met the specified criteria.
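One way to make the provider screening described above explicit is to treat technical prerequisites as hard filters and then rank the remaining candidates by customization and cost. This is a hypothetical sketch: the provider names, capacities, customization ratings, and costs are invented, and only the 200-user minimum and the emphasis on customization at low cost come from the text.

```python
from dataclasses import dataclass

MIN_USERS = 200  # technical prerequisite stated in the text


@dataclass(frozen=True)
class Provider:
    name: str
    max_users: int
    mobile_support: bool
    scorm_support: bool
    customization: int   # hypothetical 1-5 rating
    annual_cost: float   # hypothetical cost, arbitrary currency


def shortlist(providers):
    """Drop providers that fail a hard prerequisite, then rank the rest."""
    eligible = [
        p
        for p in providers
        if p.max_users >= MIN_USERS and p.mobile_support and p.scorm_support
    ]
    # Highest customization first; lower cost breaks ties.
    return sorted(eligible, key=lambda p: (-p.customization, p.annual_cost))


# Hypothetical candidates for illustration only.
providers = [
    Provider("Provider A", 500, True, True, 3, 1000.0),
    Provider("Provider B", 1000, True, True, 5, 1500.0),
    Provider("Provider C", 150, True, True, 5, 500.0),   # below the 200-user minimum
    Provider("Provider D", 300, True, True, 5, 1200.0),
    Provider("Provider E", 800, False, True, 4, 900.0),  # no mobile learning
]

for p in shortlist(providers):
    print(p.name)
```

Treating prerequisites as filters rather than as weighted scores keeps an inexpensive provider that cannot host 200 users from ever outranking a compliant one.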

The LMS was integrated with the WordPress CMS, which was used to provide a set of supplementary general pages: a customizable homepage, an “about us” section, privacy policy pages, a contact form, an FAQ section, and other features inherent to the CMS.
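The division of labor between the two systems can be pictured as a routing rule: learning content and progression are served by the LMS, and every other page falls back to the CMS. The path sections below are hypothetical; the text does not describe the actual URL layout of e-cog.ca, and the sketch only models which component is responsible for a page.

```python
# Hypothetical top-level path sections assumed to belong to the LMS.
LMS_SECTIONS = {"dashboard", "courses", "certifications"}


def serving_component(path: str) -> str:
    """Return which system would serve a page under an LMS/CMS split."""
    first_segment = path.strip("/").split("/", 1)[0]
    if first_segment in LMS_SECTIONS:
        return "LMS"  # core learning environment: content and progression
    return "CMS"  # static pages such as the homepage, about us, and contact form


for p in ["/", "/about-us", "/courses/abcr/module-3", "/dashboard"]:
    print(p, "->", serving_component(p))
```

Matching on the first path segment rather than on a raw string prefix avoids accidentally routing a static page such as a hypothetical `/courses-faq` to the LMS.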

ADDIE phase 2—Design

Determining learning units and activities

Step 1. Determine learning units: Learning units were determined by grouping specific goals and objectives to support learning and continuity across topics, progressing from basic concepts (e.g., Module 1, Learning Unit 1: Definitions and concepts), to extending these concepts to real life with the latest research and concrete examples (e.g., Module 1, Learning Unit 2: Presentation and impacts), to real-life applications and solutions (e.g., Module 1, Learning Unit 3: Existing Solutions and Resources). A complete plan of the learning units at the design stage is available in Appendix B (step 1).

Step 2. Design learning activities: At the design stage, activities included narrated PowerPoint presentations, demonstration videos, and scientific articles to present theory; forums to provide learners with guidance and interaction; and online quizzes to provide feedback and assess learners' performance, knowledge, and acquired skills. Modules 3 and 4 were designed to include online Q&A seminars with experts and quality control of recorded intervention sessions. However, notable changes to learning activities were made during development, as described in the “Development” section. Learning objectives, strategies, medium, length, and content for each activity of each module are detailed in Appendix B (step 2).

Unlike the first three modules, which were developed for E-Cog from the outset, the MCT training module was adapted from an existing online training developed by the intervention's creators, Steffen Moritz, Todd S. Woodward, Marit Hauschildt, and the Metacognition Study Group at the University of Hamburg-Eppendorf (www.uke.de/e-mct). Modifications to the original online training in MCT for psychosis aimed to make the material visually and structurally consistent with the other modules.

Online platform design

Step 3. Design of the online platform: The requirements list for the online platform established during Phase 1 (Table 1) guided the creation of several documents to support the design process. We established the terminology to be used (i.e., “certifications,” “modules,” “lessons,” “quizzes,” and “final assessment”) and drafted an initial list of page names and their organizational structure (home page, login, dashboard, user profile, certification list, course page, lesson page, activity page). We then developed an initial site map based on this information (Figure 2). From there, wireframes for the most important pages were first sketched with pen and paper, then translated digitally (Figure 3), and further refined into visually realistic renditions (mockups) that inspired the final appearance of our website and learning environment (Figure 4).

Figure 2. Initial site map of the E-Cog platform.

Figure 3. E-Cog website digital wireframes.

Figure 4. E-Cog website screenshots.
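The page list drafted in Step 3 of the design phase naturally forms a tree, and a site map is a depth-first listing of that tree. The sketch below uses the page names from the text, but their parent/child nesting is an assumption made for illustration; the actual initial site map is the one shown in Figure 2.

```python
# Assumed parent/child nesting for illustration; Figure 2 shows the actual initial site map.
SITE_MAP = {
    "Home page": {
        "Login": {},
        "Dashboard": {
            "User profile": {},
            "Certification list": {
                "Course page": {
                    "Lesson page": {
                        "Activity page": {},
                    },
                },
            },
        },
    },
}


def breadcrumbs(tree, trail=()):
    """Yield the navigation path to every page, depth first."""
    for page, children in tree.items():
        path = trail + (page,)
        yield " > ".join(path)
        yield from breadcrumbs(children, path)


for crumb in breadcrumbs(SITE_MAP):
    print(crumb)
```

Listing every path this way makes it easy to check, before wireframing, that each page is reachable and that the depth of the learning pages (certification, course, lesson, activity) matches the terminology chosen for the platform.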

Learner access and navigation

Access to either certification was initially granted through an email invitation to create an account, log in, and access preauthorized content, as learners were preappointed by the sites participating in the iCogCA study.

To facilitate navigation, the homepage included a summary of the goal of the website and links to important pages and information (certification pages, about us page, contact page). To promote the understanding of the structure of the training and certification requirements, certification pages, individual course pages and a Q&A page were included on the website.

Certifications assessment

To obtain a certificate for each module included in the ABCR or MCT certification, learners had to pass that module's final assessment. These assessments included questions targeting the module's key learning objectives and incorporated interactive scenarios, case studies, and theory applied to real-life examples. This was to ensure that content was not only internalized but could be translated into the actionable skills required to deliver the interventions. The minimum passing score was 80%, and learners could retake the assessment as many times as needed to reach it.
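The passing rule above can be summarized in a few lines. In this sketch, the 80% threshold and unlimited retakes come from the text; the assumption that a certification is complete once every one of its modules is passed, the module list assigned to ABCR, and the scores are all hypothetical.

```python
PASS_THRESHOLD = 80  # minimum final-assessment score (%) stated in the text


def module_passed(attempt_scores):
    """A module is passed once any attempt reaches the threshold (retakes are unlimited)."""
    return any(score >= PASS_THRESHOLD for score in attempt_scores)


def certification_complete(attempts_by_module, required_modules):
    """Assumed rule: a certification is complete when every required module is passed."""
    return all(module_passed(attempts_by_module.get(m, [])) for m in required_modules)


# Hypothetical module composition and scores, for illustration only.
ABCR_MODULES = ["module_1", "module_2", "module_3"]
attempts = {
    "module_1": [72, 85],  # passed on the second attempt
    "module_2": [90],
    "module_3": [78],      # not yet passed; the learner may retake
}

print(certification_complete(attempts, ABCR_MODULES))
attempts["module_3"].append(88)
print(certification_complete(attempts, ABCR_MODULES))
```

Because retakes are unlimited, only the best attempt matters for passing; earlier failing scores remain available if one wants to study how many attempts learners needed.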

Booster content and supervision sessions

Opportunities for retraining were provided by giving learners access to training content throughout the period during which the intervention was delivered. Learners also had access to weekly group supervision sessions to support intervention implementation in the iCogCA study. Supervision sessions were offered by five clinicians with extensive experience delivering both the in-person and online modalities of the target interventions. MCT supervision was led by S.M. and two additional members of his team, while ABCR supervision was initially conducted by G.S. and later continued by a member of C.B.'s team.

Additionally, Q&A sessions with the creators of MCT (Dr Steffen Moritz) and ABCR (Dr Chris Bowie) were available, either live or as interactive videos. This content was developed exclusively for the E-Cog platform.

ADDIE phase 3—Development

In phase 3, the online training was built based on the detailed planning produced during the design phase. Although Gavarkovs et al.27 describe this phase of the ADDIE model as straightforward, our development process prompted important changes to decisions made in the previous phase. These changes were driven by pilot testing and by technical difficulties with the software and technologies used. Below, we describe the development process and the required adaptations.

Content creation

Step 1. Create pedagogical material for learning activities: Pedagogical content for each module was developed by 1) transforming each learning goal into a topic, grouping topics that covered the same theme when necessary; 2) describing each topic or selecting up-to-date research on it; 3) selecting the most relevant information from the reviewed literature, reformulating it in accessible language, and producing and reviewing a script; 4) using the script to guide the creation of visual content (e.g., Prezi presentations, Articulate slides); 5) recording the script in studio with each module instructor; 6) combining the visual presentation with instructor videos; and 7) adding animations, sound effects, and interactive components.

Additionally, the original manuals for both interventions were reviewed. The manual for in-person delivery of ABCR was adapted for remote delivery of the intervention and included among the required reading materials for this certification. Due to the remote-friendly nature of MCT (PowerPoint presentations for each session and group discussions), the original MCT manual was included, without the need for specific adaptation to the remote setting.

Feedback loop

The ADDIE model stresses that pilot testing and soliciting feedback are both critical to program development, and that deciding how much content to develop before requesting feedback can be a balancing act: developing too much before requesting feedback can require significant revisions, while having too little content may not give an accurate picture of what the training or platform will look like (Gagne et al.44 cited in Gavarkovs et al.27). Furthermore, the model allows design and development to occur concurrently, with feedback and pilot testing on a subset of learning activities informing future design decisions.

With that in mind, we requested feedback from people with different levels of expertise at different stages of development. For instance, during the content planning stages (i.e., transforming learning goals into topics, combining topics, describing topics, and selecting relevant literature), the topics were distributed among specialists in the field (coauthors), who took turns reviewing each other's work and providing feedback. The subsequent steps of producing a script and developing visual content were undertaken by a “content creation team” composed of some of the authors (A.E.S., C.D., C.A.Y.), with the help of graduate students familiar with the topics covered. Both the script and the visual content were, in turn, reviewed by at least two of the specialists involved in content planning, which ensured the accuracy of the translated research and accompanying visuals. Further changes were made to the script at the recording stage to better reflect natural speech and to match the instructor's style; these changes were then reflected in the visual content when necessary. As visual and audio content were combined, specialists reviewed each section once more as it was produced. At this stage, remaining inaccuracies were identified and corrected, and concepts were sometimes clarified or represented differently than planned when potential for confusion or ambiguity was detected.

Finally, all content produced was piloted by individuals uninvolved in the E-Cog planning and creation process who were representative of eligible learners. Their feedback was discussed internally, and further improvements were made to the content. Appendix C offers an overview of the content planning and creation process.
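The staged review process above behaves like a small state machine in which a content item can only move forward once enough distinct reviewers have signed off on its current stage. The sketch below is a hypothetical model, not software the team used: the stage names paraphrase the text, the requirement of two reviewers for the script and visual stages reflects “at least two specialists,” and the other review counts are assumptions.

```python
from dataclasses import dataclass, field

STAGES = ["planning", "script", "visuals", "recording", "assembly", "pilot"]

# Reviews required before leaving each stage. The value 2 for "script" and
# "visuals" reflects "at least two specialists" in the text; the other values
# are assumptions made for illustration.
REQUIRED_REVIEWS = {"planning": 1, "script": 2, "visuals": 2, "recording": 0, "assembly": 1}


@dataclass
class ContentItem:
    title: str
    stage: str = "planning"
    reviews: dict = field(default_factory=dict)  # stage -> set of reviewer names

    def add_review(self, reviewer: str) -> None:
        self.reviews.setdefault(self.stage, set()).add(reviewer)

    def advance(self) -> None:
        """Move to the next stage only if the current stage has enough distinct reviewers."""
        if self.stage == STAGES[-1]:
            raise ValueError(f"{self.title} is already at the final stage")
        needed = REQUIRED_REVIEWS[self.stage]
        got = len(self.reviews.get(self.stage, set()))
        if got < needed:
            raise ValueError(f"{self.title}: '{self.stage}' has {got}/{needed} reviews")
        self.stage = STAGES[STAGES.index(self.stage) + 1]


item = ContentItem("Module 1, Learning Unit 1")  # hypothetical content item
item.add_review("specialist A")
item.advance()                  # planning -> script
item.add_review("specialist B")
try:
    item.advance()              # blocked: only one script reviewer so far
except ValueError as err:
    print(err)
item.add_review("specialist C")
item.advance()                  # script -> visuals
print(item.stage)
```

Storing reviewers as a set per stage means a specialist who reviews the same script twice still counts once, which is the intent of requiring two different reviewers.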

Online platform: web development

Step 2. Code and program the technological architecture of the learning platform: After acquiring OpenEdx through a private service provider, purchasing our domain name (e-cog.ca), and obtaining WordPress through a private web hosting provider (name omitted for neutrality), we began development. The development process was somewhat unusual, as two platforms interacted: the WordPress CMS and the OpenEdx LMS. With the WordPress CMS, we had access to the files stored on the server and to the source code, which allowed greater customization through PHP and the flexibility to perform updates at our discretion. With the LMS, by contrast, we lacked server access but could still use JavaScript to make modifications.

The first step involved becoming familiar with the various applications and their initial setup. For the WordPress CMS, selecting the base theme was essential, and it needed to align closely with our visual preferences to minimize subsequent work. The theme that best matched our mockup was chosen, and a subtheme (child theme) was configured to provide a dedicated space for modifications, protecting them from being overwritten by each update of the base theme.
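The benefit of a subtheme can be illustrated with the lookup order WordPress applies to child themes: a template file present in the child theme takes precedence, and anything missing falls back to the parent theme, so replacing parent files during an update leaves customizations intact. The Python sketch below is a conceptual model of that fallback with hypothetical file names; it is not WordPress code, and real themes also include `functions.php`, which WordPress loads from both the child and the parent rather than substituting one for the other.

```python
import tempfile
from pathlib import Path


def locate_template(name, child_dir: Path, parent_dir: Path):
    """Return the child-theme copy of a file if present, else the parent-theme copy, else None."""
    for theme_dir in (child_dir, parent_dir):
        candidate = theme_dir / name
        if candidate.is_file():
            return candidate
    return None


with tempfile.TemporaryDirectory() as root:
    parent = Path(root) / "parent"
    child = Path(root) / "child"
    parent.mkdir()
    child.mkdir()
    (parent / "header.php").write_text("original header")
    (parent / "footer.php").write_text("original footer")
    (child / "header.php").write_text("customized header")

    # Simulate a parent-theme update: parent files are replaced, child files are untouched.
    (parent / "header.php").write_text("updated header")

    resolved = {}
    for name in ("header.php", "footer.php", "missing.php"):
        found = locate_template(name, child, parent)
        resolved[name] = found.parent.name if found else None
    header_text = locate_template("header.php", child, parent).read_text()

print(resolved)
print(header_text)
```

The customized header survives the simulated update, while the footer, which was never overridden, picks up whatever the parent theme ships.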

The appearance and UX (frontend) of the WordPress CMS theme were subsequently tailored to replicate the mockup. The integration of elements or features on the pages was executed via PHP coding within the subtheme, while styling adjustments were made through CSS coding. Additionally, the WordPress builder was employed to facilitate the modification of text.

The process was similar for the OpenEdx LMS. The main differences lay in the use of the base theme provided by our LMS provider and the need to code in Vanilla JavaScript. Elements and features were integrated into the pages using Vanilla JavaScript, and styling adjustments were made through CSS. Because fewer text modifications were needed on the LMS side, these were also implemented using Vanilla JavaScript.

We encountered limitations with the LMS provider during both development and use. Because the system was cloud-based, we were reliant on the LMS provider's service, and the company's updates disrupted some other functionalities. In addition, certain functionalities, although documented, were not fully implemented in practice or began to work unpredictably (e.g., creating certifications with courses, adding SCORM files, tracking course progress), which required additional development time.

Pilot testing and UX evaluation

Once the content for modules 1 and 2 was deemed ready for testing by the expert reviewers and published on the platform, a round of internal pilot testing was conducted for each module in the two certifications. Testers, who were students, research assistants, and therapists in our research group, were asked to complete all steps of the training as if they were real learners and to report any difficulties encountered while 1) navigating website pages, 2) navigating the learning platform, 3) completing training content, and 4) generating a certification. We also asked testers to estimate their completion time for each module.

Module 3 (ABCR training module) was pilot tested at a later stage by the content creation team and the experts involved in the review, rather than by students and staff, because of time constraints on releasing the content. Nevertheless, the piloting of the previous two modules informed the creation and testing of module 3, for which technical problems, usability issues, and content mistakes were less frequent.

As the training content for module 4 (MCT) was kept in its original form, pilot testing for this module covered only UX aspects such as navigability, visualization of all units, and users' ability to complete the final assessment. It was performed by two PhD students who completed the full training.

Testers used a shared Microsoft Excel document to report on their experience. Technical issues, bugs, and minor errors were fixed throughout pilot testing, while major proposed changes (e.g., additional instructions for navigating SCORM files, an introductory video on module navigation, page design modifications, and the creation of FAQ and Terms of Service pages) were discussed internally before being implemented in subsequent versions of the website and content.

Testers of modules 1 and 2 (n = 9) were also asked to fill out a feedback form (see E-Cog Piloting Feedback Form in Appendix D and responses in Appendix E). Mean scores on a 6-point scale indicated a generally positive perception of the platform's usability and design. Specifically, ease of use received a mean score of 4.7 and ease of navigation 4.6. The attractiveness of the platform was rated highly, with a mean of 5.8, and the design was perceived as clean and simple (5.7). Regarding content quality, pilot users gave the credibility and trustworthiness of the information the maximum score of 6 out of 6, reflecting strong confidence in the material. Additionally, 88% of pilot users would recommend the certification to others, and 100% would return to complete further certifications. Although based on a small pilot sample, these findings suggest strong learner satisfaction and high acceptability of both the content and the platform.
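The summary statistics above are per-item means on the 6-point scale plus simple percentages of yes/no answers. As a minimal sketch of that aggregation, assuming each item is rated from 1 to 6, the hypothetical function `summarize_feedback` below (with illustrative item names) computes both; the toy responses in the usage example are invented and are not the pilot data reported in Appendix E.

```python
from statistics import mean


def summarize_feedback(ratings: dict[str, list[int]], recommend: list[bool]) -> dict[str, float]:
    """Per-item means on an assumed 1-6 scale plus the percentage of 'yes' answers."""
    summary: dict[str, float] = {}
    for item, scores in ratings.items():
        # Reject values outside the assumed 1-6 response range.
        if any(s < 1 or s > 6 for s in scores):
            raise ValueError(f"{item}: ratings must be between 1 and 6")
        summary[item] = round(mean(scores), 1)
    summary["recommend_pct"] = round(100 * sum(recommend) / len(recommend), 1)
    return summary


# Invented responses from three hypothetical testers (not the pilot data).
example = summarize_feedback(
    {"ease_of_use": [5, 4, 5], "attractiveness": [6, 6, 5]},
    recommend=[True, True, False],
)
print(example)  # {'ease_of_use': 4.7, 'attractiveness': 5.7, 'recommend_pct': 66.7}
```

Rounding to one decimal mirrors how the means are reported in the text; percentages from a sample of this size move in large steps, so they are best read alongside the raw counts.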

In addition to the pilot user review, a UX evaluation was conducted by an intern enrolled in the Design and Cognitics Master's program at École Nationale Supérieure de Cognitique in France. This evaluation produced a table detailing the problems encountered on each page along with proposed solutions. Some of the proposed changes were implemented, while others were deemed not feasible given the advanced stage of the platform's development at the time.

ADDIE phase 4—Implementation

The E-Cog platform was officially released in March 2023, with both certifications (ABCR and MCT) available for therapists facilitating interventions within the iCogCA study. For a demo video of our platform, visit the link in Appendix F.

Therapists were recruited internally by the five sites involved in the iCogCA study and referred to our platform for training if they had no previous experience with the intervention they were expected to facilitate (i.e., ABCR or MCT). All therapists were mental health professionals with experience working with the SSD population. As of May 2025, 28 therapists had enrolled in at least one certification on our platform, and 25 of them had completed at least one certification, a completion rate of 89%. This suggests strong engagement as well as high acceptability of the content and platform among learners. As the iCogCA study is ongoing, we expect more therapists to enroll and train on our platform over the next year.
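The completion rate is the ratio of completers to enrollees. The short calculation below only makes the arithmetic and rounding explicit, using the counts reported as of May 2025; the function name is illustrative.

```python
def completion_rate(enrolled: int, completed: int) -> float:
    """Percentage of enrolled therapists who completed at least one certification."""
    if enrolled <= 0 or not 0 <= completed <= enrolled:
        raise ValueError("need enrolled > 0 and 0 <= completed <= enrolled")
    return 100 * completed / enrolled


# Counts reported as of May 2025: 28 enrolled, 25 completed.
rate = completion_rate(enrolled=28, completed=25)
print(f"{rate:.1f}% (reported as {rate:.0f}%)")  # 89.3% (reported as 89%)
```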

Challenges and adaptations

Originally, we intended users to view content in sequential order, with subsequent content unlocking only after the current content had been viewed. However, we encountered difficulties tracking users' progress through the module: content was not marked as completed after being viewed, which prevented users from advancing to the next content.
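The intended sequential-access rule can be stated compactly: the first unit is always available, and each later unit unlocks only once the preceding unit has been recorded as completed. The sketch below models this with a hypothetical `ModuleProgress` class (not an API of OpenEdx or LearnDash) and shows why a completion event that is never recorded leaves the next unit locked.

```python
from dataclasses import dataclass, field


@dataclass
class ModuleProgress:
    """Hypothetical model of a module whose units unlock one after another."""

    units: list[str]
    completed: set[str] = field(default_factory=set)
    free_navigation: bool = False  # temporary measure: disable locking entirely

    def is_unlocked(self, unit: str) -> bool:
        if self.free_navigation:
            return True
        # The first unit is always available; any later unit requires the
        # unit immediately before it to have been recorded as completed.
        idx = self.units.index(unit)
        return idx == 0 or self.units[idx - 1] in self.completed

    def mark_completed(self, unit: str) -> None:
        # In the intended design, viewing a unit triggers this call. If the
        # event is never recorded, the next unit stays locked.
        if not self.is_unlocked(unit):
            raise PermissionError(f"{unit} is still locked")
        self.completed.add(unit)


module = ModuleProgress(["intro", "lesson_1", "lesson_2", "quiz"])
print(module.is_unlocked("lesson_1"))  # False: "intro" not yet recorded
module.mark_completed("intro")
print(module.is_unlocked("lesson_1"))  # True
```

In this model, the temporary measures correspond to setting `free_navigation=True` and letting learners call `mark_completed` themselves (the self-completion button) instead of relying on an automatic viewing event.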

To address these issues, we implemented two temporary measures: offering free navigation (i.e., subsequent content is not locked) and adding a self-completion button for users to mark their progress manually. However, these measures meant that progress tracking depended on users' self-report and that users could access subsequent content without achieving a passing grade in the previous module. Although we could not verify in detail whether learners had completed every lesson while the temporary measures were in place, we manually reviewed their performance upon training completion to ensure they had achieved a passing grade in all modules before issuing a completion certificate. This approach helped confirm that learners had achieved a minimum level of knowledge acquisition.
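The manual review performed before issuing a certificate amounts to a simple rule: every required module must have a recorded final assessment score at or above the passing grade, regardless of self-reported completion. A minimal sketch, in which the function name, the module identifiers, and the 0.80 threshold are all hypothetical (the actual passing grade is not stated in this section):

```python
# Hypothetical passing threshold (fraction of the maximum score); not taken from the study.
PASSING_GRADE = 0.80


def eligible_for_certificate(
    final_scores: dict[str, float],
    required_modules: list[str],
    passing_grade: float = PASSING_GRADE,
) -> tuple[bool, list[str]]:
    """Check final assessment scores only; self-reported completion is ignored.

    Returns (eligible, modules_to_review), where modules_to_review lists the
    modules with no recorded score or a score below the passing grade.
    """
    to_review = [
        module
        for module in required_modules
        if final_scores.get(module) is None or final_scores[module] < passing_grade
    ]
    return (not to_review, to_review)


# Invented learner record: module 2 falls below the hypothetical threshold.
ok, to_review = eligible_for_certificate(
    {"module_1": 0.92, "module_2": 0.75}, ["module_1", "module_2"]
)
print(ok, to_review)  # False ['module_2']
```

Returning the modules to review, rather than only a yes/no answer, reflects the purpose of a manual check: the reviewer sees exactly which module needs attention before a certificate is issued.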

One year after the initial launch, we relaunched the platform with LearnDash, a different LMS solution, because of this and the other limitations outlined in the development phase (step 2). We chose an LMS that operates as a WordPress plugin and is self-contained within the WordPress CMS environment provided by our web hosting service. This approach gave us greater control over the timing of updates and customization options while allowing us to leverage the advantages of cloud hosting, such as cost savings in management and maintenance, automatic backups, and scalability. The migration required redesigning certain LMS webpages using CSS and recoding certain functionalities in PHP within WordPress.

Technical support and maintenance plan

Ongoing technical support has been available to users through the contact form on the website or directly by email, with a response time of 1–2 h during work hours. Platform maintenance includes fixing technical issues as users report them, as well as applying security updates as often as possible, and at least every 6 months, for the duration of the iCogCA implementation trial. A WordPress plugin was installed to identify vulnerabilities in software components, allowing updates to be prioritized and implemented quickly when a critical vulnerability is detected.
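The maintenance rule combines two triggers: a component is updated when a vulnerability is reported, and in any case no more than about 6 months after its last update, with critical issues handled first. The sketch below expresses that prioritization. The component names, the severity labels, and the 183-day approximation of 6 months are assumptions, and the sketch does not reproduce the logic of the WordPress plugin actually used.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumption: "no longer than every 6 months" approximated as 183 days.
MAX_UPDATE_INTERVAL = timedelta(days=183)

# Lower rank = handled first; components without a reported issue rank last.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Component:
    name: str
    last_updated: date
    vulnerability: Optional[str] = None  # severity reported by a scanner, if any


def needs_update(component: Component, today: date) -> bool:
    """A component is due if a vulnerability is reported or it is overdue."""
    overdue = today - component.last_updated > MAX_UPDATE_INTERVAL
    return overdue or component.vulnerability is not None


def update_queue(components: list[Component], today: date) -> list[str]:
    """Names of components due for update, most severe first, then oldest first."""
    due = [c for c in components if needs_update(c, today)]
    due.sort(
        key=lambda c: (SEVERITY_RANK.get(c.vulnerability, len(SEVERITY_RANK)), c.last_updated)
    )
    return [c.name for c in due]


# Hypothetical component inventory, evaluated on an arbitrary date.
queue = update_queue(
    [
        Component("theme", date(2024, 9, 1)),  # no report, but overdue
        Component("lms-plugin", date(2025, 4, 1), "critical"),
        Component("forms-plugin", date(2025, 3, 1)),  # recent, no report: not due
        Component("seo-plugin", date(2025, 1, 10), "medium"),
    ],
    today=date(2025, 5, 1),
)
print(queue)  # ['lms-plugin', 'seo-plugin', 'theme']
```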

ADDIE phase 5—Evaluation plan

The E-Cog platform evaluation plan includes the analysis of quantitative and qualitative data collected during the implementation years of the iCogCA implementation trial. Quantitative data to assess knowledge acquisition, training effectiveness, and users' attitudes toward digital tools include the number of users, average time of use and frequency of access, average final assessment scores, and data on subjective UX collected with the e-Therapy Attitudes and Process Questionnaire – Therapist Version (e-TAP-T; Clough et al.45), a self-report tool developed to evaluate therapists' perspectives, expectations, and willingness to engage in e-therapy.
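Several of the planned quantitative indicators (number of users, average time of use, frequency of access, and average final assessment score) can be derived from session logs and assessment records. A minimal sketch, assuming a hypothetical log format with one record per session (user identifier and duration in minutes); the data structures and field names are illustrative, not those of LearnDash or WordPress:

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    user_id: str
    minutes: float  # duration of one visit to the platform


def usage_metrics(sessions: list[Session], final_scores: dict[str, float]) -> dict[str, float]:
    """Aggregate per-user time and visit counts, then average across users.

    Assumes at least one session and one final score (statistics.mean
    raises StatisticsError on empty input).
    """
    minutes_per_user = defaultdict(float)
    visits_per_user = defaultdict(int)
    for s in sessions:
        minutes_per_user[s.user_id] += s.minutes
        visits_per_user[s.user_id] += 1
    return {
        "n_users": len(minutes_per_user),
        "mean_minutes_per_user": mean(list(minutes_per_user.values())),
        "mean_visits_per_user": mean(list(visits_per_user.values())),
        "mean_final_score": mean(list(final_scores.values())),
    }


# Invented log entries for two hypothetical therapists.
metrics = usage_metrics(
    [Session("t1", 30), Session("t1", 45), Session("t2", 60)],
    {"t1": 85, "t2": 90},
)
print(metrics)
```

Averaging per user first, rather than over raw sessions, keeps a few very active learners from dominating the "average time of use" figure.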

Qualitative data will be collected through semistructured interviews with a subsample of therapists (n = 12), inquiring about the learning experience of therapists who were given access to the platform and who completed, or did not complete, the certifications required to facilitate interventions as part of the iCogCA study (Appendix G, item 1). The interview includes questions on self-efficacy in delivering the interventions after E-Cog training (i.e., questions 1, 2, and 11).

To assess therapists’ competency in delivering the interventions posttraining, intervention fidelity will be monitored through audio recordings of a subsample of sessions. These recordings will be analyzed by an experienced therapist using established fidelity tools, including session-level checklists and treatment fidelity tracking measures (such as the Treatment Fidelity Checklist for ABCR/MCT, the Grid for assessing therapeutic integrity and monitoring CR strategies, and the Treatment Integrity Scale for MCT, adapted from Schneider and Brüne46). These tools, originally developed or adapted for in-person delivery of the interventions, are provided in Appendix G (items 2–6). Results pertaining to the feasibility, acceptability, and efficacy of the E-Cog platform, as well as the treatment fidelity of ABCR/MCT delivery by E-Cog-trained therapists, will be presented in a future publication.

Once we have developed and tested our training platform for ABCR and MCT, we will focus on activities pertaining to the dissemination step. Briefly, this will include i) an assessment of the potential market for such a training platform, ii) the elaboration of an expansion plan to develop and integrate within our platform additional mental health interventions that we and others have developed (e.g., cognitive behavioral therapy for social anxiety, and stigma intervention), and iii) evaluation of the optimal business model (startup versus licensing agreement).

Discussion

The present study aimed to report on the development of an e-learning platform for training in cognitive health interventions, and to assess the feasibility and acceptability of the ADDIE model for developing online training for such interventions. Over the course of four years, following the ADDIE model, we planned, developed, and implemented a novel online learning environment to train mental health experts in two cognitive health interventions aimed at improving cognitive difficulties in SSD. The E-Cog platform includes three newly created modules, one adapted module, and the technological structure (i.e., website and LMS) to host online certifications. Below, we discuss our experience as it relates to each of these goals.

Developing an e-learning platform for the delivery of training in cognitive health interventions

The E-Cog learning platform was developed by a multidisciplinary team composed of mental health researchers and clinicians with several years of experience training others in, and personally delivering, the mental health interventions for which we were developing online certifications, an experienced web developer, and several trainees and research staff within our research group. The planning of the pedagogical content, the selection of relevant literature, and the review process were facilitated by the expertise of these professionals, resulting in a product that is highly relevant to mental health professionals, based on the most up-to-date evidence in the field,15,16,23,33 and carefully tailored to the real-life needs of delivering such interventions. It is important to note that involving highly trained professionals in the feedback loop at several steps requires a considerable amount of time, which must be taken into consideration when devising a timeline for content planning and online platform creation. As we did not wish to compromise on the quality of our product, we opted to allow for an extended development time, which may not be realistic in other contexts.

However, we observed that this time flexibility made it possible to experiment with different tools and processes for content production and web development, and to learn the skills needed to use such tools (e.g., Prezi Video and Articulate 360 software, media recording, and video editing). The skills and knowledge acquired throughout this process helped our team develop the expertise to create and implement e-learning training more efficiently in the future. In fact, we observed that the creation and testing of each module improved the quality of the next, decreasing both the number of issues found by our reviewers and the time required to address them.

Additionally, enlisting trainees and staff of our research group at different stages of content and web development gave them the opportunity to learn skills that might otherwise not have been available in their training and, in exchange, offered us the fresh and diversified perspective that innovation requires throughout the creative process.

Other positive aspects of the development process were the monthly meetings with the expert team (authors) to address issues and speed up the feedback process, and the progressive adoption of new, more robust tools for developing online platforms and content (e.g., replacing PowerPoint and Prezi Video with Articulate, and replacing our LMS provider with LearnDash).

Based on our experience, we would prioritize the following aspects in our procedures when developing e-learning in the future:

Implementing interviews with potential learners early in the analysis/design stages to identify needs and current gaps in existing tools for similar purposes. While our team benefited from the advice of clinicians with vast experience in training mental health care professionals in person and in delivering interventions online, we did not interview users of online learning systems about the current state of the technology until after implementation. Although many of the features already included in our platform were mentioned by potential users (e.g., a pleasant and easy interface, gamification and progress tracking to support engagement, a real-life instructor, and access to experts for questions and clarifications), other aspects raised in those informal interviews could have been incorporated had they been identified earlier in our process.

Involving UX analysis early in the design stage. Our UX evaluation took place at the development stage; consequently, some of the considerations it raised could not be implemented at the time without significant delay to our timeline.

Carefully assessing the robustness and flexibility of tools for developing content and technological structure, and choosing reliable providers with efficient customer support. As mentioned before, we experienced significant delays in our online development due to unreliable provider updates and delayed customer support, which interfered with our implementation and ultimately led us to rebuild our online platform with a different provider, incurring additional, unplanned development time after implementation. Another option would be to opt for self-hosted platforms instead of adapting existing ones, if the priority is high customization and autonomy and the time and resources are available.

Planning for multilingual training at the analysis/design stage to facilitate later development (e.g., devising content examples and exercises that are easily adaptable to other languages; saving editable versions of scripts and visual material for future adaptation; verifying whether chosen tools support multilingual e-learning courses). This measure would respond to the needs of our bilingual environment and increase the global reach of our training.

Keeping a flexible mindset to allow for feature changes over the course of the project. Many of the features initially planned in our high-level list of requirements were implemented differently in the final version, either to respond to needs not identified at the analysis/design stage or to incorporate piloting and user feedback. Other features were added to improve the learning experience and navigability and to optimize data collection. A flexible and resilient web development and content creation team was essential for implementing and testing such adaptations throughout the process, which raised the quality of our final product beyond the initial prototypes.

The feasibility and acceptability of the ADDIE model

The acceptability of the ADDIE model was inferred from informal observations and subjective impressions gathered by the core members of the development team (C.D., A.E.S., and G.S.) throughout the design and implementation process. No standardized indicators or formal measurement tools were used specifically to assess the model's acceptability.

Nonetheless, qualitative insights collected during team meetings and collaborative sessions revealed a generally positive reception. The ADDIE model provided us with a valuable framework to develop our online training platform. Team members expressed enthusiasm upon being introduced to the ADDIE model, particularly appreciating its structured and comprehensive framework, which provided much-needed guidance given the team's limited prior experience with instructional design and e-learning.

The detailed specifications included in each phase allowed us to anticipate and plan for the required features of our online platform and to guide the development of our certification content. We observed substantial overlap among all phases of the model (not only between the analysis and design stages, as suggested in the initial model directives in Gavarkovs et al.27). Design and development of different aspects were done iteratively, with changes suggested by post-development piloting informing adaptations to the original design plan.

Furthermore, the modules of our certifications were developed independently, so different modules were sometimes at different stages at a given time (e.g., development of module 1 concurrent with design of modules 2 and 3). The website design and development process ran concurrently with content development and was informed by it: needs arising from the content prompted revisions to the design, and restrictions in the online platform prompted adaptations to the content. This observation suggests that larger projects may benefit from applying the ADDIE model flexibly to keep up with real development needs, including delivering the final product in a timely manner.

Despite technical challenges that led to timeline adjustments, the team consistently adhered to the model's phases and deliverables, which suggests not only good acceptability but also high perceived usefulness and practicality of the framework in our context.

We assessed the acceptability of the E-Cog platform and its training content through quantitative feedback collected from pilot testers using a customized questionnaire, as reported in the Pilot testing and UX evaluation section of this manuscript. The results indicate a generally positive perception of the platform's usability and design.

Our next steps involve completing the final stage of the ADDIE model and evaluating our platform's usability and its impact on cognitive health intervention delivery. Once validated as an effective tool for training in cognitive health interventions, we aim to expand access by developing a sustainable model for commercialization. This includes exploring funding mechanisms to support ongoing maintenance, updates, and user support beyond the trial. To promote international scalability, we plan to adapt the platform for multilingual use and assess its relevance across diverse health care contexts. Finally, future research will include long-term impact assessments to evaluate the platform's effectiveness in improving the delivery and accessibility of mental health interventions over time.

Limitations

The development of the E-Cog platform faced several limitations. First, technical issues such as tracking errors and occasional update bugs in the initial version of our LMS interfered with data collection, particularly in verifying whether participants completed all content as intended. As a result, we relied on a self-check measure, which may not have fully captured participant engagement or completion rates. Second, development was more time-consuming than anticipated, which reduced the timeframe for iterative design and limited broader testing of later-developed modules. Additionally, our evaluation of participants’ uptake did not include a pre/post assessment of learners’ competence, which limits our ability to assess the effectiveness of the online training.

Finally, although the instructional design was guided by the ADDIE model, there is limited empirical evidence supporting its effectiveness in digital cognitive training contexts. Our literature review found no studies that directly compare the effectiveness of ADDIE-designed interventions with non-ADDIE-designed interventions under comparable conditions. Instead, existing research primarily evaluates the effectiveness of the final applications developed using ADDIE, without isolating the model's specific impact on outcomes. As a result, it remains challenging to determine whether the observed effects are due to the ADDIE framework itself or to other factors, such as the quality of the pedagogical content.

Of note, evaluating the efficacy of the ADDIE framework itself was beyond the scope of our study. Isolating such an effect would require a comparative trial contrasting an intervention developed using ADDIE with one designed without such a structured instructional model. In our case, ADDIE was selected as a well-established and practical framework to guide the design, development, and implementation of the training content. Our goal is not to isolate the impact of ADDIE but rather to document its application in a real-world clinical training context and to assess the feasibility and acceptability of this approach as part of a large-scale implementation trial.

Conclusions

The E-Cog platform has been implemented as a tool to deliver engaging, asynchronous remote training while maintaining the quality of in-person training. We observed successful uptake among mental health practitioners during the first two years of implementation and addressed technical challenges to improve UX. As user numbers grow, the platform shows potential for long-term cost savings without requiring additional content development.

Next steps include completing the final phase of the ADDIE model and evaluating the platform's usability and impact on cognitive health intervention delivery. We aim to support broader dissemination by making the training modules commercially available, increasing access to mental health interventions in health care settings.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-docx-1-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-docx-2-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-docx-2-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-docx-3-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-docx-3-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-pdf-4-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-pdf-4-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-pdf-5-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-pdf-5-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-pdf-6-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-pdf-6-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Supplemental Material

sj-pdf-7-dhj-10.1177_20552076251381249 - Supplemental material for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model

Supplemental material, sj-pdf-7-dhj-10.1177_20552076251381249 for E-Cog: An online training platform for cognitive health interventions based on the ADDIE model by Ana Elisa Sousa, Caroline Dakoure, Christy Au-Yeung, Katie M. Lavigne, Delphine Raucher-Chéné, Christopher R. Bowie, Steffen Moritz, Martin Lepage and Geneviève Sauvé in DIGITAL HEALTH

Footnotes

Acknowledgements

A special thank you to the E-Cog development team and collaborators: Karyne Anselmo, Tammy Vanrooy, Alexandre Caron; to the students involved in content creation and review: Maiwen Maginet, Marianna Khalil, Jesse Rae, Helen Thai; and to our pilot testers: Samantha Aversa, Quinta Seon, Vanessa Valiquette, Marie-Christine Boulianne, Libby Lassman, Clayton Jeffrey, Crystal Yang, Jiaxuan Deng, and Danielle Penney.

Ethical approval

The E-Cog platform was included as part of the research study iCogCA: An innovative protocol promoting cognitive health through online interventions for persons living with schizophrenia-spectrum disorder, approved by the Research Ethics Board of the Integrated University Health and Social Services Centre (CIUSSS) of the West Island of Montreal, on August 23, 2023 (#2023-561).

Contributorship

AES, CAY, CD, KML, DRC, and ML contributed to the conceptualization and methodology of this study. Data curation and formal analysis of preliminary data were performed by AES and CD. The authors DRC, CRB, ML, KML, and GS contributed to funding acquisition. AES, CD, and CAY contributed to the investigation. Project administration and supervision were shared between GS, AES, CD, and ML; further mentorship was provided by KML, DRC, CRB, and SM as needed. Authors CRB and SM contributed resources for the creation of online training modules based on their respective interventions (ABCR and MCT). CD headed software development and implementation. AES, CD, and CAY contributed to data visualization/presentation and writing the original draft. All authors were involved in reviewing and/or editing the initial and subsequent drafts.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Douglas Foundation, the Canadian Institutes of Health Research (CIHR) iCogCA study grant (#180501) and by the Canada First Research Excellence Fund, awarded through the Healthy Brains, Healthy Lives initiative at McGill University.

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: AES, CD, CAY, KML, DRC, and GS declare no potential conflict of interest with respect to the research, authorship, and/or publication of this article. SM is the developer of the Metacognitive Training intervention. CRB is the developer of the Action-Based Cognitive Remediation training. ML reports grants from Roche Canada, grants from Otsuka Lundbeck Alliance, grants and personal fees from Janssen, and personal fees from Otsuka Canada, Lundbeck Canada, and Boehringer Ingelheim outside the submitted work.

Data availability

The data that support the findings of this study are available from the corresponding author, [ML], upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References

Supplementary Material


For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.