Abstract

Background

Spinal cord injury (SCI) severely affects patients’ quality of life. Short video platforms have become important sources of health information, yet few studies have assessed the quality of SCI-related content on these platforms.

Objective

This study aimed to analyze the content and quality of SCI-related videos on three major short video platforms.

Material and Methods

This study collected SCI-related short videos between 28 March and 10 April 2025 from three platforms: BiliBili, Kwai, and TikTok. After strict screening (removing advertisements, duplicates, and irrelevant content), 251 valid samples were included. To minimize the influence of platform recommendation algorithms, the study used newly registered accounts to conduct standardized searches with “spinal cord injury” as the uniform search term. Video quality was assessed using four instruments: the Journal of the American Medical Association (JAMA) benchmark criteria, the global quality scale (GQS), modified DISCERN, and the patient education materials assessment tool (PEMAT). Two staff members (Z-SH and Z-GF) independently scored all videos. When their ratings differed by more than 15%, an expert (TY) made the final decision.

Results

A total of 251 SCI-related videos were analyzed across BiliBili (n = 68), Kwai (n = 91), and TikTok (n = 92), revealing marked differences in content characteristics and quality. BiliBili featured the longest videos (median: 300 s) and the highest collection rate. It also achieved the highest JAMA (2.10 ± 0.85) and GQS (3.18 ± 0.91) scores. Kwai videos were the shortest (median: 15 s) but generated the most user interaction (likes, comments, and shares). However, it consistently scored lowest across all quality metrics (e.g. JAMA = 1.28 ± 0.42; GQS = 2.00 ± 0.83), with limited understandability (38 ± 24) and actionability (22 ± 24). TikTok content, primarily created by professionals, showed the highest modified DISCERN score (2.80 ± 0.78) and moderate practical value (understandability: 71 ± 21; actionability: 41 ± 29), though user engagement was relatively low. Quality indicators (JAMA, GQS, and DISCERN) were moderately correlated with follower count but weakly or negatively correlated with user engagement. Finally, understandability and actionability showed a moderate correlation (r = 0.49).

Conclusion

This cross-platform comparative study reveals significant disparities in content quality among SCI-related videos on three leading short video platforms. Despite diverse video formats, the overall quality and reliability remain suboptimal.

Introduction

Spinal cord injury (SCI), one of the most devastating traumatic injuries of the central nervous system, is characterized by high disability and mortality rates, severe life-threatening complications, and irreversible pathological mechanisms, profoundly impacting patients’ quality of life. The disease burden from SCI is concentrated in working-age adults (20–49 years), accounting for 65% to 70% of injury-related disability-adjusted life years (DALYs), with total DALYs projected to increase by 20% to 35% by 2050. 1 According to data released by the World Health Organization, over 15 million individuals globally were living with SCI by 2021, with the majority of cases attributed to traumatic causes such as falls, road traffic accidents, or violence. 2 Research indicates that the risk of lifelong paralysis is significantly increased in patients with complete SCI if no signs of neurological recovery emerge within 48 h after injury. 3 Prevention strategies, knowledge of complications, and rehabilitation training for SCI remain underemphasized. Thus, early identification, standardized treatment, and effective rehabilitation are critical for reducing long-term disability and enhancing patients’ quality of life. Equally important is ensuring patients have access to reliable information on lifestyle adjustments (e.g. increased physical activity, nutritional optimization, and regular health screenings), structured health management, and self-management training, which are pivotal in preventing the onset and progression of post-SCI complications.

The rapid development of the internet and mobile communication technologies has established social networks as primary channels for information dissemination. Short-form video platforms have gained immense popularity due to their efficiency in utilizing fragmented time. As of April 2024, TikTok reported 1.582 billion global monthly active users (MAUs), ranking as the fifth-largest social media platform worldwide. 4 Kwai Technology's Q4 2024 financial report revealed a domestic MAU surpassing 736 million for the first time, 5 while BiliBili's Q3 2024 report indicated a domestic MAU of 348 million. 6 On these platforms, users can freely access extensive health-related videos by searching keywords such as “spinal cord injury.” Notably, TikTok Health's 2024 Annual Health Report, released in March 2025, highlighted the addition of 3.7 million new science popularization videos in 2024, with medical videos accumulating 3 billion likes and 1.93 billion saves. 7

Despite the pivotal role of short-form video platforms in health communication, the lack of rigorous review mechanisms for SCI-related content raises concerns about inaccuracies that may mislead users and influence health decisions. To date, no systematic research has been conducted globally to evaluate the quality and reliability of SCI-related short videos. This study therefore aims to analyze the quality and credibility of SCI-related videos on mainstream platforms (TikTok, Kwai, and BiliBili), providing empirical evidence to optimize health content and guide evidence-based public health strategies.

We hypothesize that the quality and credibility of SCI-related videos across these platforms will vary significantly due to the lack of adequate review mechanisms, which results in substantial content differences. Given the absence of robust content review systems, it is essential to improve the accuracy, reliability, and standardization of health-related content to better meet users’ needs.

Methods

Ethical considerations

This study did not require ethical review, as the data were derived from publicly available content on short-form video platforms and involved no human experimentation. Additionally, no personally identifiable information (e.g. user IDs and portraits) was included in the analysis.

Search strategy and data collection

This study focused on analyzing health information related to SCI accessible to users on short-form video platforms, evaluating the potential misinformation and reliability of such content. Data were collected from 28 March to 10 April 2025. To mitigate bias from personalized recommendation algorithms, newly registered accounts were used to search for the keyword “spinal cord injury” on TikTok, Kwai, and BiliBili. Based on evidence that approximately 70% of health seekers only review the first two pages of search results 8 and that over 90% of clicks occur on the first search page (with a click-through rate of <2.5% for the second page), 9 we retrieved the top 100 ranked videos from each platform (a total of 300 videos). After excluding duplicates, advertisements, and irrelevant content, 251 valid videos remained (68 from BiliBili, 91 from Kwai, and 92 from TikTok).

Inclusion criteria

(1) Videos directly related to SCI content (including traumatic SCI, spinal cord disorders, paraplegia caused by SCI, etc.). (2) Videos in Chinese.

Exclusion criteria

(1) Duplicate content. (2) Commercial advertisements promoting products (e.g. medications and devices) or services (e.g. hospital promotions). (3) Irrelevant content (e.g. brain injury and peripheral nerve injury).

For included videos, the following metadata and characteristics were recorded: title, engagement metrics (likes, comments, saves, and shares), upload duration (days since posting), video length, source, uploader's follower count, presentation format (Vlog, Monologue, Dialogue, Animation, and News Broadcasting), content type (Patient Experience, Disease Knowledge, Outpatient Scenes, Diagnosis/Treatment Process, and News), and account verification type.

Personal verification confirms identity and reflects social influence, while institutional verification ensures organizational legitimacy. In this study, accounts were classified according to each platform's certification system. All three platforms (TikTok, BiliBili, and Kwai) use yellow V and blue V certifications, though the application varies slightly. The yellow V is granted to independent users with significant influence or following, indicating expertise or authority. The blue V is used for institutional accounts, such as media outlets or government bodies, ensuring their official status and credibility on the platform.

Video content and quality assessment

The Journal of the American Medical Association (JAMA) Benchmark Criteria, a validated tool for assessing the reliability of health information, was applied to evaluate four core dimensions: author credentials, citation of sources, disclosure of conflicts of interest, and content timeliness. 10 The global quality scale (GQS), a five-point Likert scale, subjectively assessed overall video quality, structure, and informational completeness (1 = poor quality; 5 = excellent fluency and quality), measuring the synergy between information delivery and platform usability.11,12 The modified DISCERN tool, designed to evaluate the credibility of online health information, employed five equally weighted questions (one point each) to establish a structured reliability framework.13,14 These tools—JAMA, GQS, and modified DISCERN—have been widely validated in health information research.15–17

To further analyze the clarity and actionable value of video content, the patient education materials assessment tool (PEMAT), developed by the U.S. Agency for Healthcare Research and Quality, was applied across two dimensions (understandability and actionability) to assess information clarity and practical guidance for patients.18,19 Detailed scoring protocols for these assessment systems are provided in Supplemental Appendix 1 (Tables S1–S4).
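As a rough illustration of how such instrument scores can be tallied, the sketch below computes a PEMAT-style percentage and a modified DISCERN total. It assumes the commonly described PEMAT rule (items rated agree = 1, disagree = 0, or not applicable, with the score expressed as the percentage of applicable items rated "agree") and the one-point-per-question rule stated above. The function names are hypothetical, and the study's own scoring protocol is the one given in its supplemental tables.

```python
from typing import Optional, Sequence


def pemat_score(items: Sequence[Optional[int]]) -> float:
    """Percentage of applicable items rated 'agree'.

    Each item is 1 (agree), 0 (disagree), or None (not applicable).
    Assumption: not-applicable items are excluded from the denominator.
    """
    applicable = [x for x in items if x is not None]
    if not applicable:
        raise ValueError("at least one applicable item is required")
    return 100.0 * sum(applicable) / len(applicable)


def modified_discern_score(answers: Sequence[bool]) -> int:
    """Sum of five equally weighted yes/no questions (one point each)."""
    if len(answers) != 5:
        raise ValueError("modified DISCERN uses exactly five questions")
    return sum(1 for a in answers if a)


if __name__ == "__main__":
    # Hypothetical ratings for a single video.
    print(pemat_score([1, 1, 0, None, 1]))
    print(modified_discern_score([True, False, True, True, False]))
```

Excluding not-applicable items from the denominator keeps short videos from being penalized for elements (e.g. charts) they do not contain.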

Inter-rater reliability

A dual-independent scoring mechanism was used to support objectivity: two researchers (Z-SH and Z-GF) independently rated all videos, yielding three rating rounds in total (two by Z-SH and one by Z-GF). Discrepancies were resolved by a third researcher (TY). Intraclass correlation coefficients (ICCs) were calculated to evaluate inter- and intra-rater consistency, with reliability categorized as poor (ICC < 0.50), moderate (0.50 ≤ ICC < 0.75), good (0.75 ≤ ICC < 0.90), or excellent (ICC ≥ 0.90). 20

Inter-rater agreement was strong: JAMA (ICC = 0.940, 95% CI [0.923, 0.953]), GQS (ICC = 0.859, 95% CI [0.819, 0.890]), modified DISCERN (ICC = 0.799, 95% CI [0.743, 0.843]), understandability (ICC = 0.846, 95% CI [0.802, 0.880]), and actionability (ICC = 0.797, 95% CI [0.740, 0.842]). Intra-rater reliability was also robust: JAMA (0.951), GQS (0.886), modified DISCERN (0.843), understandability (0.875), and actionability (0.849).

Statistical analysis

Data analysis was performed using SPSS 22.0 (IBM Corporation, Armonk, NY, USA) and GraphPad Prism 9.0 (Dotmatics, Bristol, UK). Categorical variables were described using frequencies and percentages. Continuous variables were summarized according to normality: normally distributed data were expressed as “mean ± standard deviation (SD)” and non-normally distributed data as “median (interquartile range (IQR)).” Normality was assessed with the Shapiro–Wilk test (two-sided, α = 0.05); a significant result (p < 0.05) was taken to indicate a non-normal distribution.

Group comparisons

Two independent samples

For normally distributed data, the Student's t-test was used to compare means. For non-normally distributed data, the Mann–Whitney U test was used to compare the two groups, as this rank-based method does not assume normality.

Three or more samples

For normally distributed data, the overall comparison was carried out by one-way analysis of variance, and post-hoc pairwise comparisons were conducted using the Bonferroni test to control the family-wise Type I error rate at α = 0.05.

For non-normally distributed data, the Kruskal–Wallis H test was used, and post-hoc multiple comparison corrections were made using the Dunn–Bonferroni method to maintain statistical validity.
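The non-parametric comparison described above can be sketched as follows. This is a minimal standard-library illustration of the Kruskal–Wallis H statistic (with tie correction) followed by Dunn's pairwise z-tests with Bonferroni adjustment; it is not the SPSS procedure used in the study. The function names and the example data are hypothetical, and the closed-form p-value helper is valid only for three groups (two degrees of freedom).

```python
import math
from collections import Counter
from itertools import combinations
from typing import Dict, List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def kruskal_dunn(
    groups: Sequence[Sequence[float]],
) -> Tuple[float, Dict[Tuple[int, int], float]]:
    """Return (tie-corrected H, Bonferroni-adjusted Dunn p-values per pair)."""
    pooled = [v for g in groups for v in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    tie_term = sum(t ** 3 - t for t in Counter(pooled).values())
    if tie_term == n ** 3 - n:
        raise ValueError("all observations are identical")

    # Sum of ranks per group, in the order the groups were pooled.
    rank_sums, start = [], 0
    for g in groups:
        rank_sums.append(sum(ranks[start:start + len(g)]))
        start += len(g)

    h = 12.0 / (n * (n + 1)) * sum(
        r * r / len(g) for r, g in zip(rank_sums, groups)
    ) - 3 * (n + 1)
    h /= 1 - tie_term / (n ** 3 - n)  # tie correction

    pairs = list(combinations(range(len(groups)), 2))
    var_base = n * (n + 1) / 12 - tie_term / (12 * (n - 1))
    adjusted = {}
    for i, j in pairs:
        diff = rank_sums[i] / len(groups[i]) - rank_sums[j] / len(groups[j])
        se = math.sqrt(var_base * (1 / len(groups[i]) + 1 / len(groups[j])))
        p = math.erfc(abs(diff / se) / math.sqrt(2))  # two-sided normal p
        adjusted[(i, j)] = min(1.0, p * len(pairs))   # Bonferroni adjustment
    return h, adjusted


def p_value_three_groups(h: float) -> float:
    """Chi-square survival function for df = 2 (three groups only)."""
    return math.exp(-h / 2)


if __name__ == "__main__":
    # Hypothetical scores for three platforms.
    h, pairwise = kruskal_dunn([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
    print(h, p_value_three_groups(h), pairwise)
```

The chi-square approximation for H is asymptotic, so with groups as small as the toy example above the p-values are only indicative.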

Reliability assessment

Inter-rater and intra-rater reliabilities of video quality scores (JAMA, GQS, modified DISCERN, PEMAT-understandability, and PEMAT-actionability) were quantified using the intraclass correlation coefficient (ICC) combined with a two-way random-effects model (absolute agreement). ICC values and 95% confidence intervals (CIs) were calculated, and reliability was classified according to the criteria of Koo and Li 20 : poor (ICC < 0.50), moderate (0.50 ≤ ICC < 0.75), good (0.75 ≤ ICC < 0.90), and excellent (ICC ≥ 0.90).
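To make the reliability calculation concrete, the sketch below computes a two-way random-effects, absolute-agreement ICC from a videos-by-raters matrix and maps it to the Koo and Li categories. It assumes the single-measure form, often written ICC(2,1); the text does not state whether single or average measures were reported, so this choice is an assumption, as are the function names. Confidence intervals are omitted.

```python
from typing import Sequence


def icc_2_1(ratings: Sequence[Sequence[float]]) -> float:
    """Two-way random-effects, absolute-agreement, single-measure ICC.

    `ratings` has one row per video and one column per rater (or round).
    """
    n, k = len(ratings), len(ratings[0])
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete matrix with >= 2 rows and columns")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)                 # between-video mean square
    msc = ss_cols / (k - 1)                 # between-rater mean square
    mse = ss_error / ((n - 1) * (k - 1))    # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


def koo_li_category(icc: float) -> str:
    """Classify reliability using the thresholds quoted in the text."""
    if icc < 0.50:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc < 0.90:
        return "good"
    return "excellent"


if __name__ == "__main__":
    # Hypothetical GQS ratings: four videos scored by two raters.
    example = [[3, 3], [2, 3], [4, 4], [1, 2]]
    value = icc_2_1(example)
    print(round(value, 3), koo_li_category(value))
```

Because the absolute-agreement form includes the between-rater mean square in the denominator, a rater who is systematically stricter lowers the coefficient even when the ranking of videos is identical.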

Correlation analysis

The monotonic relationships between quantitative variables (such as user engagement metrics, video characteristics, and quality scores) were evaluated using Spearman's rank correlation coefficient (r). This non-parametric approach was suitable for handling potential non-normality and ordinal data structures. Correlation strengths were classified as weak (|r| < 0.3), moderate (0.3 ≤ |r| < 0.5), or strong (|r| ≥ 0.5). 21 Statistical significance was set at p < 0.05 (two-tailed).
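A minimal sketch of this step, assuming the usual definition of Spearman's coefficient as the Pearson correlation of average ranks, is shown below together with the strength thresholds quoted above. The function names and example data are hypothetical, and significance testing is omitted.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (>= 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    vx = sum((a - mx) ** 2 for a in rx)
    vy = sum((b - my) ** 2 for b in ry)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return cov / math.sqrt(vx * vy)


def correlation_strength(r: float) -> str:
    """Classify |r| with the thresholds used in the text."""
    magnitude = abs(r)
    if magnitude < 0.3:
        return "weak"
    if magnitude < 0.5:
        return "moderate"
    return "strong"


if __name__ == "__main__":
    # Hypothetical like and comment counts for five videos.
    likes = [120, 4500, 80, 990, 15000]
    comments = [10, 300, 12, 75, 2100]
    r = spearman_r(likes, comments)
    print(round(r, 2), correlation_strength(r))
```

Working on ranks rather than raw counts means that a handful of viral videos with extreme like or comment counts cannot dominate the coefficient.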

Data preprocessing

Video screening: 300 initial videos were obtained through keyword searches on BiliBili, Kwai, and TikTok. After manual screening, duplicate videos (four items), commercial advertisements (one item), and irrelevant content (46 items) were excluded, and finally 251 valid videos were included (Figure 1).

Figure 1. Flowchart of the study.

Outlier handling: Continuous variables (such as the number of likes and comments) were visually inspected for outliers using boxplots. Outliers were defined as data points below Q1 − 1.5 × IQR or above Q3 + 1.5 × IQR. They were retained in the dataset, and non-parametric tests were prioritized for analyses involving them.
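The boxplot rule above can be expressed in a few lines. The sketch below flags values outside the 1.5 × IQR fences using Python's `statistics.quantiles` with the "inclusive" method. Quartile definitions differ between software packages, so the flagged points may not match those of the boxplots used in the study. The function name and the example data are hypothetical.

```python
import statistics
from typing import List, Sequence


def iqr_outliers(values: Sequence[float]) -> List[float]:
    """Return values below Q1 - 1.5*IQR or above Q3 + 1.5*IQR."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [v for v in values if v < lower or v > upper]


if __name__ == "__main__":
    # Hypothetical comment counts with one viral video.
    comment_counts = [1, 2, 3, 4, 5, 6, 7, 8, 100]
    flagged = iqr_outliers(comment_counts)
    print(flagged)  # flagged for inspection only; values stay in the dataset
```

Consistent with the approach described above, the function only reports candidate outliers and leaves the data unchanged.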

Results

Basic characteristics of videos

As shown in Figure 1, after excluding advertisements, duplicates, and irrelevant videos, our cross-platform analysis included 251 SCI-related videos from BiliBili (n = 68), Kwai (n = 91), and TikTok (n = 92), revealing significant platform-specific variations in user engagement, content quality, and practical utility. As shown in Table 1, BiliBili was characterized by long video durations (median: 300 s; IQR: 155–498) and high collection counts (median: 204; IQR: 82–827), with videos having been uploaded for a median of 801 days (IQR: 341–1160). Kwai featured ultra-short videos (median: 15 s; IQR: 10–46) that drove high interaction, with significantly leading numbers of likes (median: 727; IQR: 140–5406), comments (median: 181; IQR: 31–670), and shares (median: 117; IQR: 33–342). Creators on this platform also had the largest follower bases (median: 85,000; IQR: 13,000–210,000), but content was less current (median upload duration: 126 days). TikTok showed the lowest active user engagement (median likes: 206; comments: 17), though uploaders had moderate follower counts (median: 28,527; IQR: 6178–136,769). BiliBili performed best in reliability (JAMA score: 2.10 ± 0.85) and overall quality (GQS score: 3.18 ± 0.91). TikTok demonstrated significantly higher information credibility (modified DISCERN score: 2.80 ± 0.78) compared to other platforms (BiliBili: 2.00 ± 0.74; Kwai: 1.97 ± 0.63). Kwai lagged behind in all quality measures (JAMA = 1.28 ± 0.42; GQS = 2.00 ± 0.83). BiliBili and TikTok had similar understandability scores (both around 70), significantly higher than Kwai (38 ± 24). Both platforms also showed better actionability (BiliBili: 44 ± 36; TikTok: 41 ± 29) compared to Kwai (22 ± 24).

Table 1. General characteristics and scores of videos related to spinal cord injury.

JAMA: Journal of the American Medical Association; GQS: global quality scale; PEMAT: patient education materials assessment tool; SD: standard deviation.

Kruskal–Wallis rank sum test.

Video sources and content

This study analyzed the sources and thematic characteristics of SCI-related videos across three platforms: BiliBili, Kwai, and TikTok. Differences in creator identity, thematic focus, presentation style, and verification status were observed among the platforms. As detailed in Table 2, on BiliBili the majority of videos were produced by independent users (84%, 57 out of 68), followed by physicians (10%, 7 out of 68). Similarly, independent users accounted for 67% (61 out of 91) of content creators on Kwai, though the proportion of physicians was relatively higher (23%, 21 out of 91). In contrast, TikTok was predominantly driven by medical professionals (79%, 73 out of 92), with independent users comprising only 17% (16 out of 92). BiliBili and Kwai emphasized patient experience sharing (51% and 68%, respectively). TikTok, however, prioritized educational content on SCI (53%, 49 out of 92) and recordings of outpatient scenarios (30%, 28 out of 92). Vlogs were the dominant format on both BiliBili (53%, 36 out of 68) and Kwai (65%, 59 out of 91). TikTok, on the other hand, favored monologues (49%, 45 out of 92) and dialogues (29%, 27 out of 92) as its primary modes of presentation. Regarding verification status, TikTok had the highest rate of “yellow V” professional verification (82%, 75 out of 92). In contrast, the majority of users on BiliBili (84%) and Kwai (71%) remained unverified. Finally, animation was significantly more prevalent on Kwai (22%, 20 out of 91) compared to BiliBili (0%) and TikTok (5.4%), indicating a platform-specific preference for animated formats.

Table 2. The characteristics and content of videos on spinal cord injury.

Fisher's exact test for count data with simulated p-value (based on 2000 replicates).

Analysis of video quality and popularity across different sources, content, and formats of health education videos

Quality differences among videos from different publishers

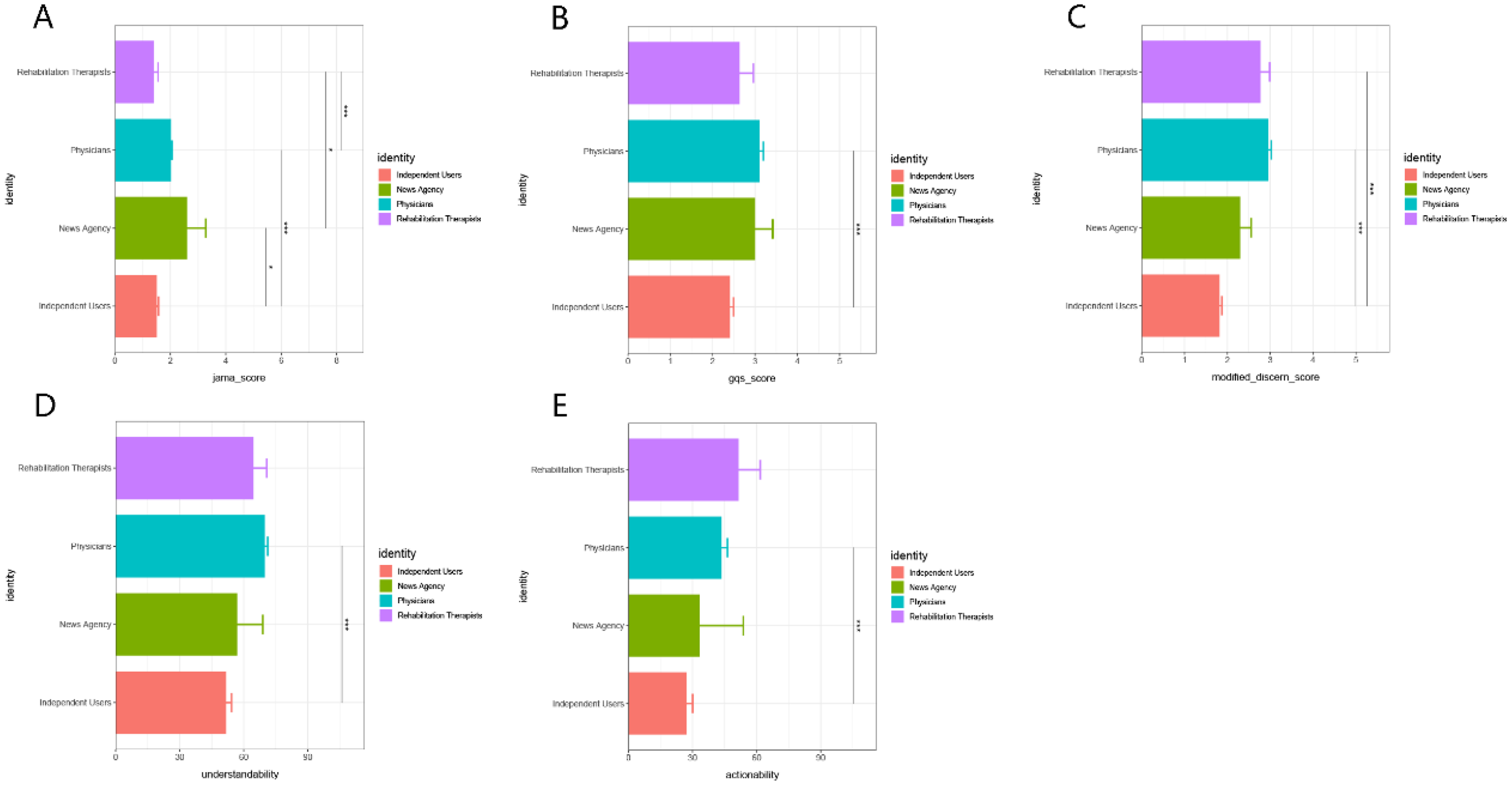

Videos produced by physicians achieved significantly higher scores than those from other creator groups in the GQS (Figure 2B), the modified DISCERN score (Figure 2C), and understandability (Figure 2D), with all five indicators demonstrating significant differences relative to independent users (p < 0.001). Videos created by rehabilitation therapists achieved the highest scores in actionability (Figure 2E), and also performed well in both the modified DISCERN and understandability metrics. Content from news organizations ranked highest in authority, as reflected by the JAMA score (Figure 2A), which was significantly higher than that of independent users and rehabilitation therapists (p < 0.05). However, their performance was inferior to professional groups in terms of the modified DISCERN score, understandability (Figure 2D), and actionability (Figure 2E). Videos from independent users were of the lowest quality overall, with significantly lower scores across all metrics compared to physicians (p < 0.001), news organizations (JAMA score, p < 0.05), and rehabilitation therapists (modified DISCERN score, p < 0.05).

Figure 2. Scores of spinal cord injury videos from diverse sources, including (A) the JAMA score, (B) the GQS score, (C) the modified DISCERN score, (D) PEMAT-understandability, and (E) PEMAT-actionability. *p < 0.05, **p < 0.01, ***p < 0.001.

Assessment of quality across different content types

Videos categorized under disease knowledge consistently outperformed patient experience videos across all five metrics (Figure 3A to E, all p < 0.001). News-related content demonstrated moderate quality in the GQS (Figure 3B), modified DISCERN score (Figure 3C), and understandability (Figure 3D), while achieving a higher JAMA score than patient experience videos (Figure 3A, p < 0.001). Outpatient scene-related videos scored significantly lower than disease knowledge videos across all five dimensions, yet still outperformed patient experience videos overall (p < 0.001). Patient experience videos consistently received the lowest scores among all content types. Their JAMA, GQS, and modified DISCERN scores were significantly lower than those of the other three content types (p < 0.001, p < 0.01), with similarly low scores in understandability and actionability (both p < 0.001).

Figure 3. Assessment scores for spinal cord injury videos focusing on distinct topics, including (A) the JAMA score, (B) the GQS score, (C) the modified DISCERN score, (D) PEMAT-understandability, and (E) PEMAT-actionability. *p < 0.05, **p < 0.01, ***p < 0.001.

Comparison of quality across different presentation styles

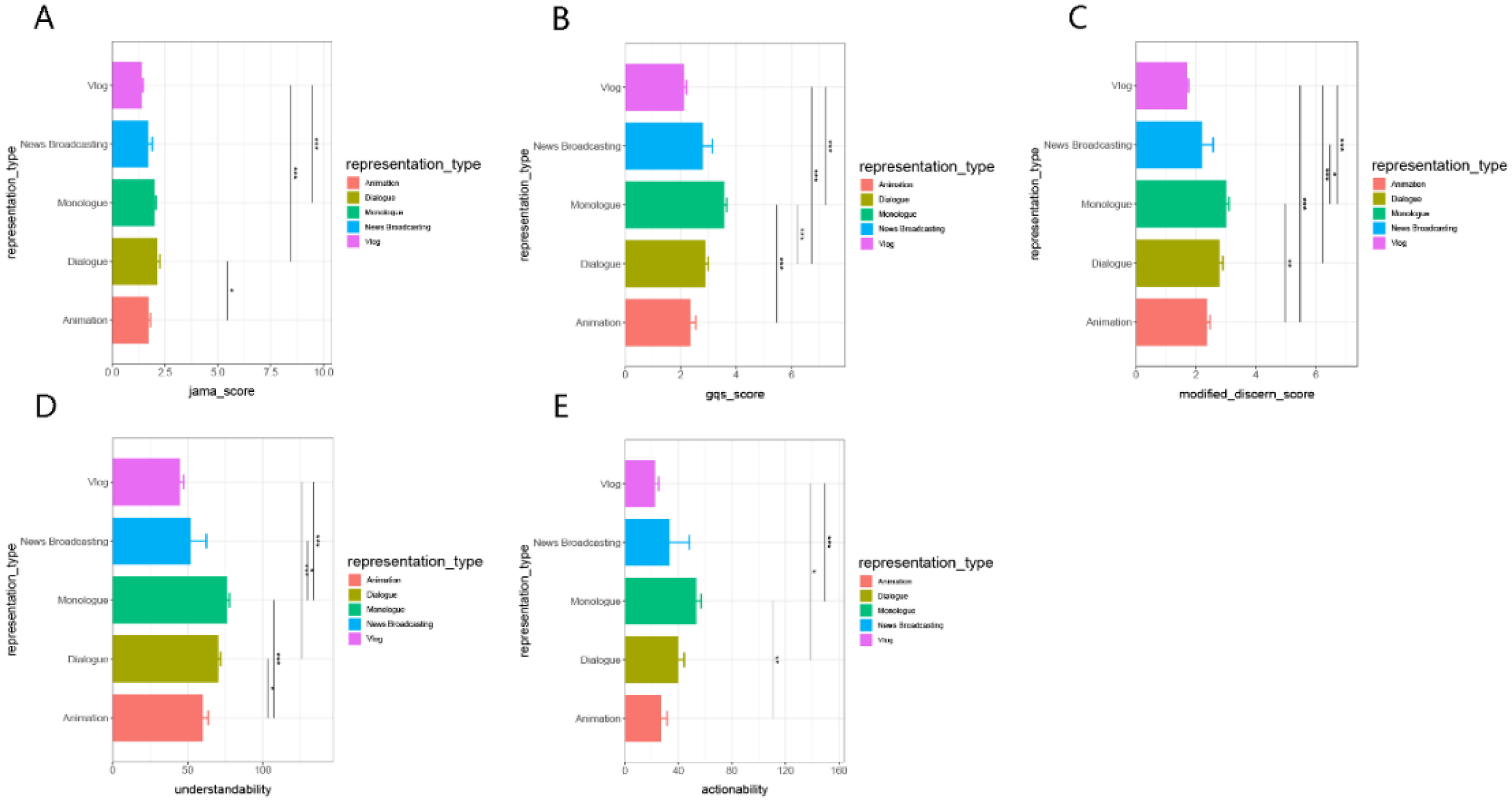

News broadcast videos demonstrated the highest overall quality, scoring significantly higher than formats such as animation and vlogs in JAMA (Figure 4A), GQS (Figure 4B), and the modified DISCERN score (Figure 4C) (all p < 0.001). Vlog videos also performed well in terms of JAMA, GQS, and DISCERN scores, and achieved the highest rating in understandability (Figure 4D, p < 0.05). However, they scored relatively lower in actionability (Figure 4E). Animated videos received the highest scores in actionability (Figure 4E, p < 0.001) and also performed well in understandability (Figure 4D). Nonetheless, they were rated lower in more technical measures such as JAMA and GQS. Monologue and dialogue formats exhibited moderate performance across all evaluation dimensions. While they did not show significant advantages or disadvantages overall, they outperformed animated videos in the modified DISCERN score (Figure 4C).

Figure 4. Scores of spinal cord injury videos with different presentation formats, including (A) the JAMA score, (B) the GQS score, (C) the modified DISCERN score, (D) PEMAT-understandability, and (E) PEMAT-actionability. *p < 0.05, **p < 0.01, ***p < 0.001.

Impact of account verification status on video quality

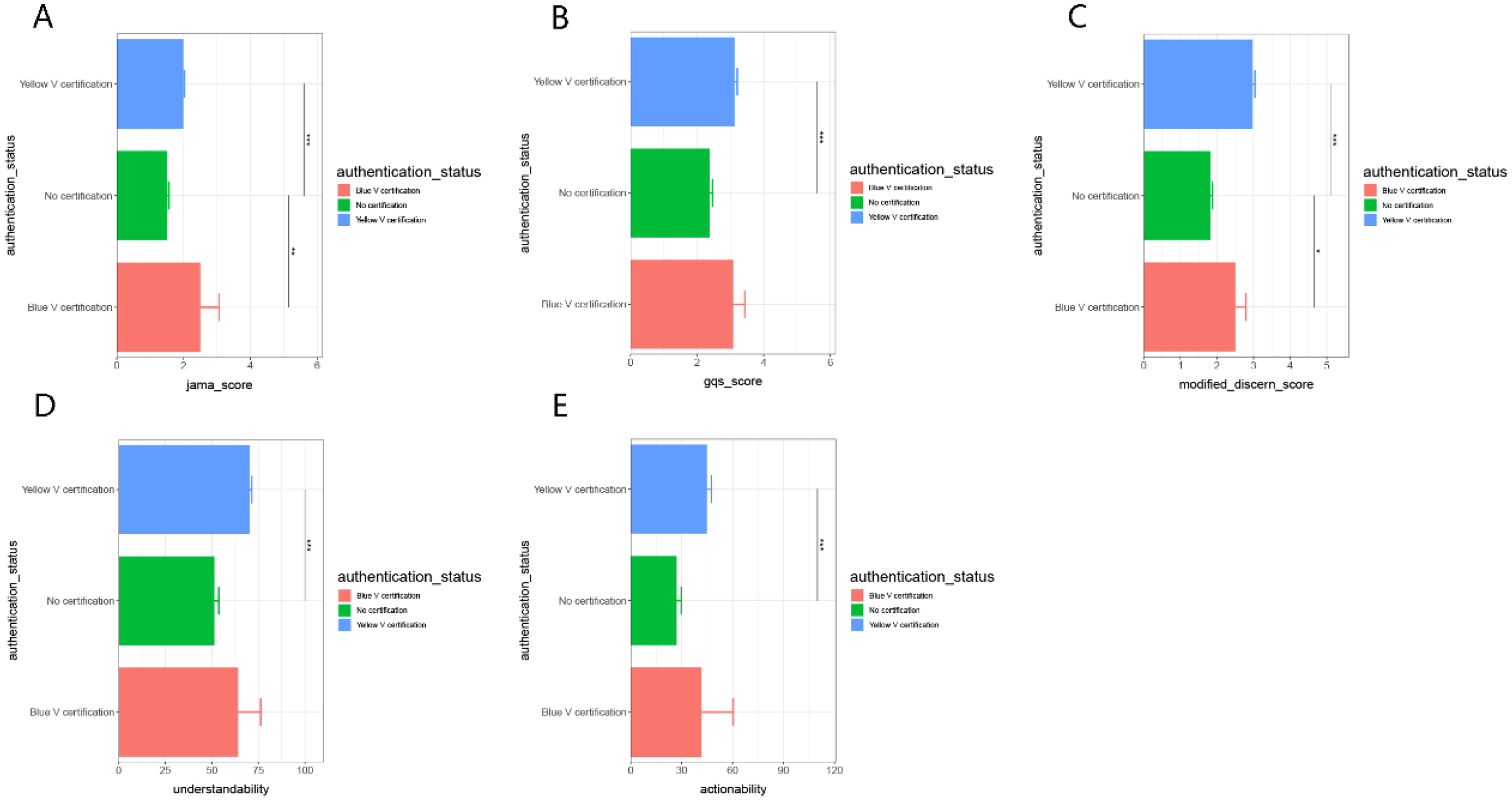

Yellow V-verified accounts outperformed the other two verification categories in GQS (Figure 5B), modified DISCERN score (Figure 5C), understandability (Figure 5D), and actionability (Figure 5E). Across all five metrics, yellow V accounts scored significantly higher than unverified accounts (p < 0.001). Blue V-verified accounts also achieved higher scores than unverified accounts across all metrics, with particularly notable differences observed in the JAMA score (Figure 5A) and the modified DISCERN score (Figure 5C) (p < 0.05 and p < 0.01, respectively).

Figure 5. Scores of spinal cord injury videos with different verification statuses, including (A) the JAMA score, (B) the GQS score, (C) the modified DISCERN score, (D) PEMAT-understandability, and (E) PEMAT-actionability. *p < 0.05, **p < 0.01, ***p < 0.001.

Correlation analysis

The correlation analysis revealed significant positive relationships among likes, comments, and favorites, with the strongest correlation observed between likes and comments (r = 0.92), followed by likes and favorites (r = 0.74), and comments and favorites (r = 0.61). In terms of health information quality, the JAMA score showed a weak negative correlation with both likes (r = −0.14) and comments (r = −0.21). Conversely, follower count was positively correlated with the JAMA score (r = 0.50) and the GQS (r = 0.36). A moderate correlation was observed between actionability and understandability (r = 0.49). Within the rating system, the JAMA score, GQS, modified DISCERN score, understandability, and actionability were all positively correlated with one another. Notably, the strongest correlation was between the GQS and understandability scores (r = 0.60) (Figure 6).

Figure 6. Correlation matrix heatmap of variables, encompassing engagement-related metrics (like, comment, collection, and shares), video-specific attributes (duration, days_since_upload, and fans_of_video_uploaders), and multiple evaluative scores (jama_score, gqs_score, modified_discern_score, understandability, and actionability). Each cell displays the correlation coefficient, where the color gradient reflects the direction and strength of the correlation through hue (warm hues for positive correlations, cool tones for negative correlations) and saturation (darker/lighter shades indicating larger/smaller absolute values of the correlation coefficient).

Discussion

With the rapid development of 5G technology and mobile internet, short video platforms such as TikTok, BiliBili, and Kwai have become important channels for health information dissemination. 22 Given the complexity, high disability rate, and prolonged rehabilitation associated with SCI, ensuring the accuracy and quality of SCI-related information is crucial. Although previous studies have reported variable quality in health-related videos for common diseases such as liver cancer and colorectal polyps,23,24 systematic evaluations of SCI content on short video platforms have been lacking. In this study, we conducted a comprehensive assessment of video characteristics and information quality across three major platforms, providing new insights into the current landscape of SCI health communication.

Representativeness of platform content and algorithmic influence

Our study focuses on the representativeness of content on the platforms, rather than trying to capture the overall average content. We chose the top 100 videos from each platform based on recommendation rankings, which reflect typical user behavior. Studies show that most users only browse the first few pages of search results, 8 so this selection is more likely to reflect the content users will actually see, rather than offering a balanced view of all available content. The recommendation algorithms of these platforms prioritize content based on user behavior and interactions, meaning that the content seen by users is highly visible and widely distributed. As a result, our analysis highlights the visibility and spread of content based on user engagement, rather than the entire range of content across the platform. While this approach provides useful insights into content distribution, future research could expand the sample to explore other aspects of content visibility for a more comprehensive understanding of how content is shared and seen across platforms.

Influence of user demographics and algorithms

BiliBili, characterized by longer video durations, exhibited higher collection rates, suggesting that its users are more inclined to regard high-quality content as a learning resource. This pattern is consistent with BiliBili's community culture of knowledge sharing and its predominantly well-educated user base. 25 In contrast, Kwai, which features ultra-short videos, demonstrated higher interaction rates but shorter content lifecycles, reflecting its orientation toward a broader, less urbanized audience and a focus on lightweight entertainment.26,27 Although TikTok predominantly featured physician-led content, it showed lower user engagement, indicating that the use of specialized medical terminology may present comprehension barriers for the general audience. These findings suggest that platform algorithms and user demographics substantially influence the dissemination and reception of health-related information. Given that these platforms have varying user bases worldwide, the findings may be more reflective of regional digital health trends, particularly in China, Brazil, and South Korea, where these platforms are most widely used.

Professional background and information quality

The professional background of content creators significantly influenced information quality. Videos produced by physicians achieved the highest accuracy scores, suggesting that professional background plays a critical role in ensuring the reliability of health information. 27 Videos created by rehabilitation therapists demonstrated stronger operational practicality, likely due to their emphasis on actionable recovery exercises and daily care guidance, which aligns with findings from previous studies on the effectiveness of therapeutic content in improving patient outcomes. 28 In contrast, videos produced by independent users exhibited the lowest overall quality across multiple indicators, highlighting the limitations of non-professional sources in delivering scientifically rigorous medical information. These results underscore the need for increased involvement of healthcare professionals in the creation of health-related content and the implementation of more robust content verification mechanisms on short video platforms.

Comparative analysis of video categories

Analysis of content themes revealed that disease knowledge videos consistently achieved the highest scores in authority and actionability. These videos—typically created by medical professionals or institutions—offered well-structured and standardized content, serving as valuable sources for health education. News videos received moderate ratings, indicating reliable information sources but a lack of depth in medical guidance. Outpatient scenario videos ranked just below disease knowledge videos, but outperformed patient experience videos overall, particularly in terms of understandability and practicality. Their visual context and realistic settings may enhance viewer comprehension and behavioral guidance. In contrast, patient experience videos scored the lowest across all metrics, likely due to their subjective nature and lack of scientific rigor.

Impact on communication effectiveness

The presentation format influenced both video quality and communicative effectiveness. Dialogue-based videos demonstrated the most balanced performance across quality metrics—combining professionalism and structure with improved audience engagement and execution intent—highlighting their potential in health communication. While Vlog-style videos were engaging and widely shared, they often lacked authority and structural completeness, reflecting the professional limitations of user-generated content. Animated videos scored high in understandability and actionability, suggesting that their visual appeal helps facilitate behavioral change; however, they lagged in authoritative scoring dimensions. News report and explanatory videos achieved balanced performance in professionalism and clarity, though they did not exhibit standout strengths.

Ensuring credibility of health information

Expert-verified (yellow V) accounts consistently outperformed other account types across most quality indicators. On TikTok, the high proportion of yellow V accounts (82%), predominantly comprising physicians, provided dual assurance of professional identity and platform verification. In contrast, BiliBili and Kwai exhibited higher proportions of unverified users (84% and 71%, respectively), indicating a potential risk of disseminating unregulated health information. These findings suggest that platforms should strengthen tiered verification systems and content review processes to enhance the credibility and controllability of health information dissemination.

Relationship between popularity and quality

The correlation between user interaction and content quality was found to be relatively weak, suggesting that content popularity does not necessarily correspond to high informational quality—a phenomenon consistent with the concept of “online infotainment.” 29 Strong correlations among user engagement metrics (likes, comments, shares, and favorites) reflected consistent audience preferences. Meanwhile, the mild positive correlation between GQS scores and engagement implied that optimizing content structure could enhance dissemination efficiency. Furthermore, the moderate correlation observed between understandability and actionability (r = 0.49) highlighted the importance of reducing cognitive barriers while incorporating actionable elements to improve the practical value of health information.

Limitations

Despite its novel contributions, this study has several limitations. First, data collection was limited to three major platforms (BiliBili, Kwai, and TikTok) that are widely used in specific regions, such as mainland China, and excluded global platforms such as YouTube and Instagram. Because usage of these platforms varies widely across countries, the findings may mainly reflect regional digital health trends, particularly in China, Brazil, and South Korea. Future research should include more platforms with wider global distribution to provide a more comprehensive view.

Additionally, videos were selected through keyword searches, taking the top 100 results per platform according to recommendation rankings. This approach simulates typical user behavior but may overlook high-quality content that is ranked lower. The opaque and dynamic nature of platform algorithms also limits the reproducibility and timeliness of the findings. Future studies should explore ways to reduce algorithmic bias, such as manual content selection or larger sample sizes.

Furthermore, the number of included videos differed across platforms because screening excluded different numbers of videos from each (32 from BiliBili, 9 from Kwai, and 8 from TikTok), leaving 68, 91, and 92 videos, respectively. This imbalance could affect the comparative analysis between platforms and should be considered when interpreting the findings. Future research could balance the number of videos across platforms or use alternative data selection strategies to reduce this impact.

Lastly, the interaction analysis focused solely on quantitative data and did not address the sentiment or informational value of user comments. Future research could incorporate qualitative analysis to deepen the understanding of user engagement.

Conclusion

This study reveals structural disparities in the quality of SCI-related educational content on short video platforms and identifies key factors influencing content quality. The public is advised to prioritize health information from verified professional accounts, while platforms should foster interdisciplinary content creation mechanisms (e.g. clinical interpretation + rehabilitation guidance + patient narratives) and optimize algorithms to balance quality and reach. Future research should expand into multilingual, cross-platform comparisons and integrate clinical outcome validation with user behavior tracking to build a robust, scientific evaluation system for digital health communication.

Supplemental Material

Supplemental material, sj-docx-1-dhj-10.1177_20552076251374226 for Quality assessment of spinal cord injury-related health information on short-form video platforms: Cross-sectional content analysis of TikTok, Kwai, and BiliBili by Yujie Zhang, Changming Huang, Ye Tong, Yilin Teng, Baicheng Wan, Jianhua Huang, Gaofeng Zeng and Shaohui Zong in DIGITAL HEALTH

Footnotes

Ethics approval and consent to participate

This study did not involve the use of clinical data, human specimens, or laboratory animals. All information was sourced from publicly available videos on TikTok, Kwai, and BiliBili, with no inclusion of personal privacy data. Furthermore, as there was no interaction with platform users, ethical review was not required.

Authors' contributions

All authors participated in the conception, design, guidance, and supervision of this study. Ye Tong and Yilin Teng were responsible for data collection and curation. Shaohui Zong and Gaofeng Zeng contributed to data analysis, project administration, supervision, and interpretation, and are considered co-corresponding authors. Baicheng Wan and Jianhua Huang provided critical support and oversight throughout the research process. Yujie Zhang and Changming Huang contributed equally to the conceptualization, data interpretation, and manuscript preparation. All authors reviewed and approved the final version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Specific Research Project of Guangxi for Research Bases and Talents (grant no. GuiKe AD24010037).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Availability of data and materials

The data for this study, derived from the TikTok, Kwai, and BiliBili platforms, have been anonymized to protect privacy, with Uniform Resource Locators and titles retained for research integrity. Due to privacy concerns, these data are not publicly accessible. However, we are committed to sharing the data upon request for legitimate research purposes, in accordance with privacy protection principles and data sharing policies. Interested researchers should contact the corresponding author for access.

Supplemental material

Supplemental material for this article is available online.

References
