Abstract

Background

As a representative product of generative artificial intelligence (GenAI), ChatGPT demonstrates significant potential to enhance healthcare outcomes and improve the quality of life for healthcare consumers. However, current research has not yet quantitatively analysed trust-related issues from both the healthcare consumer perspective and the uncertainty perspective of human–computer interaction.

Objective

This study aims to analyse the antecedents of healthcare consumers’ trust in ChatGPT and their adoption intentions towards ChatGPT-generated health information from the perspective of uncertainty reduction.

Methods

An anonymous online survey was conducted with healthcare customers in China between September and October 2024. This survey included questions on critical constructs such as social influence, situational normality, anthropomorphism, autonomy, personalisation, information quality, information disclosure, trust in ChatGPT, and intention to adopt health information. A 7-point Likert scale was used to score each item, ranging from 1 (strongly disagree) to 7 (strongly agree). SmartPLS 4.0 was used to analyse data and test the proposed theoretical model.

Results

The findings indicated that trust in ChatGPT had a significant relationship with the intention to adopt health information. The primary factors associated with trust in ChatGPT and the intention to adopt health information were social influence, situational normality, autonomy, personalisation, and information quality. The analysis revealed a negative relationship between social influence and trust in ChatGPT. Familiarity with ChatGPT was identified as a significant control variable.

Conclusion

Trust in ChatGPT is positively related to healthcare consumers’ adoption of health information, with information quality as a key predictor. The findings offer empirical support and practical guidance for enhancing trust and encouraging the use of GenAI-generated health information.

Introduction

Strengths and applications of chat generative pre-trained transformer in healthcare

Generative artificial intelligence (GenAI) represents a new stage in the evolution of AI technology, sparking a fresh wave of advancements in the field. Unlike traditional AI, which primarily processes input data, GenAI can independently learn and simulate underlying patterns to generate new content. GenAI generates text, images, audio, video, code, and more by leveraging pre-trained multi-modal foundational models and user-provided inputs. 1 This capability enables GenAI to generate content with logical structure and coherence tailored to users’ needs. 2 In healthcare, its versatile functions have significant potential applications, including clinical decision support, 3 medical education, 4 writing medical research, 5 and processing clinical text, 6 promising numerous benefits.

Chat generative pre-trained transformer (ChatGPT), a representative product of GenAI, is an AI chatbot developed by OpenAI that generates text-based responses to multi-modal inputs. 7 Since its launch in November 2022, ChatGPT has achieved remarkable growth, surpassing 100 million monthly active users by January 2023 and becoming the fastest-growing consumer application in history. 8 Among competing products, ChatGPT is the first to enter the market with such a substantial impact, solidifying its position in the AI industry as the most commercially successful and widely recognised and used tool.9,10 Its influence largely stems from its impressive linguistic capabilities and its demonstrated cognitive functions that closely mirror human capabilities.

The advantages of ChatGPT in healthcare are significant, including ease of use, high adaptability in Q&A functionalities, the effectiveness of its output, and relatively superior performance. 11 In specific healthcare-related tasks, including medical counselling, ChatGPT has demonstrated superior performance to other AI tools.12-14 As a publicly accessible service with minimal bandwidth requirements, it can reach a global population and drive profound transformations in public health and medicine. 15

For healthcare customers, ChatGPT offers significant potential to improve health outcomes and enhance quality of life. It can enhance doctor–patient communication, which positively influences patient outcomes; assist in health interventions that rely on communication among non-professional peers; and provide accessible medical advice. 16 According to surveys, an increasing number of users are utilising ChatGPT as a tool to access health-related information.17,18 However, its implementation must be cautiously approached, ensuring accurate and responsible usage within the healthcare context. 15

Few studies have examined healthcare consumers’ real-world usage of ChatGPT, despite extensive research on providers, which has covered ChatGPT applications, 1 professional perceptions, 19 and physician–ChatGPT comparisons. 20 Furthermore, little is known about how healthcare customers perceive the content generated by ChatGPT and how it influences their self-care and behaviour change.

Trust in ChatGPT and intention to adopt health information

Intention to adopt health information refers to the decision-making process through which individuals assess, accept, and integrate information based on perceived validity, usefulness, and their specific health needs. 21 Understanding the intentions of healthcare customers to adopt health information is essential for enhancing the efficiency and quality of health information dissemination, advancing patient health education and management, and encouraging digital innovation in healthcare. Mao et al. 22 identified three key dimensions influencing users’ willingness to adopt ChatGPT-generated content through interview analysis: information quality, user perceptions, and technical characteristics. However, the lack of systematic quantitative analysis and validation makes it challenging to measure the specific impact of each factor.

Individuals’ trust in technology has become a prominent topic of discussion. Within AI applications, trust reflects users’ confidence in the reliability and accuracy of AI-generated services and outcomes. 23 First, trust is a crucial factor influencing technology adoption 18 and information adoption. 24 Second, trust is predictive of the degree to which users rely on technology, significantly impacting the actual outcomes of technology utilisation. 25 Additionally, trust is closely linked to essential indicators, such as user loyalty. 26 A variety of factors influence trust in AI. At the individual level, these include demographic characteristics, 27 e-health literacy, 28 and prior experience with technology. 29 At the technical level, trust is shaped by factors such as tangibility, 25 transparency, 18 and anthropomorphism. 25 However, these studies overlook trust issues arising from the uncertainties inherent in human–computer interactions.

Research has shown that uncertainty affects users’ trust in AI/ChatGPT and their behavioural intentions.30-32 In the case of ChatGPT, users are confronted with technical and informational uncertainty, with their trust being affected by the absence of definitive methods to mitigate model uncertainty. 33 Uncertainty reduction theory (URT) offers a valuable framework for systematically identifying and mitigating these interaction-related uncertainties. However, few studies have addressed mitigating or minimising interaction uncertainty in AI applications from an uncertainty reduction perspective using quantitative empirical methods to enhance trust and health information adoption intention. This study aims to address two main objectives to bridge these gaps: (1) to explore how healthcare customers’ trust in ChatGPT influences their intention to adopt health information, and (2) to analyse the factors affecting trust in ChatGPT from an uncertainty reduction perspective.

Literature review and theoretical basis

Empirical studies on GenAI/ChatGPT in the healthcare field

Recent studies have explored the adoption of GenAI/ChatGPT across various domains, with a growing focus on healthcare applications. This paper synthesises key empirical findings, primarily in the healthcare field, to examine the factors and mechanisms influencing user adoption and use (Table 1). While not exhaustive, the table highlights key research streams and identifies significant gaps in the current literature.

Table 1. Antecedents and outcomes of trust and adoption in ChatGPT: a healthcare perspective.

PLS-SEM: partial least squares structural equation modelling, UTAUT: unified theory of acceptance and use of technology, TAM: technology acceptance model, TPB: theory of planned behaviour, fsQCA: fuzzy-set qualitative comparative analysis, HBM: health belief model, TRA: theory of reasoned action.

Several key observations emerge from this synthesis. First, most studies primarily focus on intention to use,34,35 behavioural intention,36,37 and user behaviour.38,39 However, other vital behaviours, such as information adoption, remain underexplored. Research in the healthcare domain lacks sufficient exploration of the mechanisms that shape user interactions with GenAI, particularly their antecedents and outcomes. In this research, we focus on trust and users’ adoption intention of health information.

Second, existing research has primarily focused on individual- and technology-related antecedents, neglecting environmental and multi-actor ecosystem influences. Moreover, healthcare research emphasises functional value, such as information quality 38 and trustworthiness, 39 with limited attention to emotional and experiential value, including perceived interactivity 40 and post-human capabilities. 41 In this research, we aim to integrate insights from multiple domains to develop a more comprehensive understanding.

Third, the technology acceptance model 34,38 and the unified theory of acceptance and use of technology, 35 together with their extensions, are the dominant theoretical frameworks. However, although uncertainty is a critical factor in shaping user perceptions and behaviours, it has received limited attention in GenAI adoption studies. This study addresses this gap by applying URT to examine how perceptual factors during human–ChatGPT interactions influence trust formation and intention to adopt health information.

Uncertainty reduction theory

URT, initially developed to explain interpersonal communication, suggests that individuals reduce uncertainty through active, interactive, and passive information-seeking strategies. 43 According to the theory, uncertainty consists of two forms: predictive uncertainty and interpretive uncertainty, and the communication environment largely influences the level of interactional uncertainty. Thus, URT provides a theoretical framework for analysing and managing interaction uncertainty.

URT's extension to technology adoption is supported by strong empirical evidence,44,45 including ChatGPT in an educational context. 31 Thus, while URT originated in interpersonal settings, its fundamental mechanism of uncertainty reduction through information-seeking strategies still applies when users perceive ChatGPT as a social actor. In this scenario, passive observation primarily concerns system outputs rather than human behaviours. However, ChatGPT presents new challenges due to its human-like abilities 16 and black-box algorithmic nature, 46 particularly in high-risk domains such as healthcare. Given the unique characteristics of both the technology and the healthcare context, this study represents an important attempt to systematically apply URT to human–ChatGPT interactions within healthcare settings.

This research incorporates two aspects of the URT, which are commonly used in previous studies31,45: active strategy and passive strategy. The active search strategy involves obtaining relevant information by consulting a third party or examining the target individual's environment. In line with existing research, 45 we conceptualise social influences and situational normality as manifestations of this active strategy. The passive search strategy entails acquiring information through passive observation of the target individual. Following existing research, 31 we examine information quality and information disclosure as components of the passive strategy.

Given that the environment is an important factor affecting uncertainty, this study incorporates perceived interactive environment into the research model, modelling three ChatGPT characteristics as foundational shapers of this environment: anthropomorphism, autonomy, and personalisation. Specifically, anthropomorphic features foster a user-friendly human–computer interactive environment, 47 personalisation can enhance users’ perception of interaction security and information credibility, 48 and autonomy enhances response speed and simplifies interaction processes.49,50

The research model is shown in Figure 1.

Figure 1. Research model incorporating URT to explain users’ trust and intention to adopt health information.

Hypotheses development

Trust in ChatGPT and intention to adopt health information

Consistent with existing research, this study conceptualises trust in ChatGPT as the willingness to take risks while believing in the potential for positive outcomes.25,51 Implicit in this definition are two characteristics of trust: positive expectations and a willingness to be vulnerable. Among them, positive expectations, as a belief, can change behavioural intention through attitudes towards behaviour. Furthermore, in the field of innovation, it can influence users’ willingness to adopt technological innovations by enhancing perceived usefulness and perceived ease of use, among other factors. This has been consistently verified by several studies based on the theory of reasoned action and technology acceptance models.52,53 Meanwhile, studies grounded in the theory of planned behaviour confirm that users who accept vulnerability make more accurate assessments of available resources and external constraints, thereby aligning perceived behavioural control with actual control capacity and consequently strengthening intention-behaviour consistency. 54 From this, it can be seen that trust is crucial in the adoption of information, particularly during interactions with technologies such as AI. Previous studies on social platforms, 55 online Q&A communities, 56 and online health advice platforms 57 have identified trust as a critical predictor of users’ acceptance and adoption of health-related knowledge. These studies consistently confirm that trust positively influences users’ willingness to adopt health information. Moreover, trust is crucial for effective interaction in uncertain environments. 18 Research indicates that users are more likely to follow the recommendations of application outputs when they regard the health information as credible. 57 Thus, this study hypothesises:

H1: Trust in ChatGPT is positively related to the intention to adopt health information.

Social influence

Social influence refers to the level of trust an individual's social network places in a specific behaviour. 58 Social influence theory posits that individuals are inclined to follow the beliefs and intentions of their social networks. 59 On the one hand, adherence to group norms strengthens individuals’ attachment to the group, which, in turn, fosters positive emotions. 58 On the other hand, when an individual's knowledge base is insufficient, group beliefs are regarded as a reliable source of information for evaluating the target object. 60 Prior studies have demonstrated that social influence predicts trust in online banking services 61 and that social influence and community interest significantly impact trust. 62 Furthermore, research indicates that social influence significantly predicts consumer trust in AI bots. 63 Thus, this study hypothesises:

H2: Social influence is positively related to trust in ChatGPT.

Situational normality

Situational normality refers to the degree of perceived order and stability in an environment that fosters successful interaction. 64 According to trust theory, situational normality and structural assurances collectively constitute the environmental characteristics of trust, which influence trust formation similarly across different trustee types (human, technological, or AI-based). 65 In environments deemed safe and familiar, users are more likely to view the interaction as trustworthy, thereby fostering trust development. 66 From the perspective of uncertainty theory, higher situational normality reduces users’ perceived uncertainty, promoting the establishment of institutional trust. 45 Several studies have examined the impact of situational normality on trust. For example, Srivastava and Chandra 45 found that situational normality enhances trust in virtual worlds. In a study on trust transfer between physical and virtual environments, situational normality was more effective than structural assurance in facilitating trust transfer, particularly regarding vendor- and technology-based trust. 67 In ChatGPT usage scenarios, situational normality is reflected in users feeling comfortable and perceiving that other users and ChatGPT’s maintenance personnel behave as expected. Thus, this study hypothesises:

H3: Situational normality is positively related to trust in ChatGPT.

Anthropomorphism

Anthropomorphism enhances user trust by assigning human-like characteristics to AI systems, making them more approachable. 38 Beyond visual design, AI anthropomorphism also encompasses emotional expression and social interaction capabilities, thereby enhancing the human-like and emotionally engaging qualities of AI systems. 68 Research has shown that anthropomorphism can increase trust in AI, particularly in healthcare contexts. 69 By enabling users to perceive the system as more relatable, anthropomorphic design fosters trust, especially in acquiring and interpreting health information.68,70 Bedué and Fritzsche 47 argued that anthropomorphic techniques make AI more relevant to users’ needs, enhancing overall interaction. Ferrario et al. 71 further suggested that anthropomorphism, by creating human-like behaviour, facilitates emotional feedback in AI interactions, thereby enhancing trust. Thus, this study hypothesises:

H4: Anthropomorphism is positively related to trust in ChatGPT.

Autonomy

Autonomy refers to AI's ability to make decisions and perform tasks independently, without human intervention, while adapting to environmental changes and generating new decisions autonomously. 72 Through data-driven learning and autonomous execution, such systems can achieve the expected self-learning and autonomous improvement envisioned by AI solutions, 73 while proactively delivering services that are both unexpected and potentially superior, without requiring additional input from users. 74 Moreover, automated AI systems fulfil user needs by minimising information access complexity, reducing operational burden, shortening wait times, and improving response efficiency. Studies suggest that people tend to prefer systems that can learn, adapt to, and respond to individual personality differences. 75 Furthermore, a meta-analysis has identified that automation-related factors are key determinants of user trust. 76 Thus, this study hypothesises:

H5: Autonomy is positively related to trust in ChatGPT.

Personalisation

Personalisation denotes the degree to which AI-generated information is tailored to meet the specific needs of individual users. 77 Healthcare customers often require highly specialised and context-sensitive advice. 78 Customising output information based on a user's particular needs, history, and health status can help enhance trust in the system. 38 Personalisation has been shown to increase users’ perceived security and information trust in the system. 48 On the one hand, personalised services provide users with health information that closely aligns with their individual needs, reducing information redundancy and misinterpretation, which enhances satisfaction and trust. 79 On the other hand, personalised health information can make patients feel understood and supported, making them more willing to share sensitive information and trust the system's advice. 80 Thus, this study hypothesises:

H6: Personalisation is positively related to trust in ChatGPT.

Information quality

In this study, information quality refers to the extent to which the health information provided by ChatGPT is accurate, reliable, and understandable to users. Given the critical nature of healthcare, information quality is essential to healthcare customers, as inaccurate or low-quality information can result in irreversible consequences. 33 Previous studies have validated the impact of information quality on trust. Specifically, information quality has been shown to significantly influence perceived risk and trust in inter-organisational and inter-firm data exchange, where it acts as a process incentive. 31 Research on online health information also highlights the role of users’ perceptions of information quality and risk in determining trust in online health information. 81 Based on these findings, the following hypothesis is proposed:

H7: Information quality is positively related to trust in ChatGPT.

Information disclosure

In this study, information disclosure refers to the extent to which users perceive that they receive relevant information from information providers. 82 The public is increasingly concerned about the security and ethical issues that may arise from the use of ChatGPT. It is critical for organisations to foster trust by ensuring the responsible and ethical deployment of AI and informing users of relevant measures. 83 In the health domain, users can observe the official ChatGPT website or user interface to learn about its functionality, data sources, and privacy protection policies, which helps reduce interaction uncertainty. Studies indicate that patients exhibit greater trust in health information exchange technologies when they are informed about the associated security measures and privacy policies. 84 Furthermore, studies on health information exchange have identified the transparency and familiarity of privacy policies as key factors influencing patients’ trust and willingness to share health information. 84 Based on these findings, the following hypothesis is proposed:

H8: Information disclosure is positively related to trust in ChatGPT.

Control variables

Some research has shown that sociodemographic and socioeconomic factors influence the adoption of health information and user engagement with chatbots, including gender, age, educational level, income, health status, and familiarity with technology.85-88 This is because these variables often influence individuals’ behaviours, perceptions, and attitudes, including their reactions to technologies and AI systems like ChatGPT. This study controls for variables such as gender, age, income, and perceived health status.

Methods

Study design and setting

This study was conducted between September and October 2024 via Credamo (https://www.credamo.com/home.html#/), a leading online survey and experimental data platform in China. Credamo offers a proprietary sample pool of over 3 million participants, with rigorous quality control measures, including attention checks, response validation, and automated rejection of invalid submissions. Credamo supports targeted sampling based on demographics (e.g. age, occupation, region) and has been widely used in academic and commercial research.

To ensure the validity and authenticity of the data collected through the online questionnaire, we employed a two-stage sampling method to recruit participants. First, an anonymous online screening questionnaire was administered to identify ChatGPT users who had previously used the platform for health information seeking. Over 800 participants completed the screening survey, and 371 met the inclusion criteria. Then, the 371 pre-qualified users were invited to complete the main survey via an anonymous online survey.

Participants were eligible if they were healthcare customers who had used, or were currently using, ChatGPT to search for health information. Respondents who had never used ChatGPT to search for health information and healthcare professionals were excluded, as were responses with extremely short or long completion times, patterned answer sequences, failed attention-check items, or inconsistent answers.

Measures

The survey included both basic demographic questions and measures for key variables. These key constructs were social influence, situational normality, autonomy, personalisation, anthropomorphism, information disclosure, information quality, trust in ChatGPT, and intention to adopt health information. Due to the limited existing literature examining patient interactions with ChatGPT, no validated measurement instrument was available for direct adoption. Table 2 presents the constructs and their corresponding measurement items, all adapted from existing literature related to AI. Because the survey targeted a Chinese population, the English version of the instrument was translated into Chinese to suit the local language context. A health information management postgraduate translated the instrument, and another reviewed it for content validity. For scale-based questions, participants indicated their level of agreement with each item on a 7-point Likert scale, ranging from 1 (strongly disagree) to 7 (strongly agree). To assess the clarity and reliability of the questionnaire, 20 postgraduate students in health information management and social medicine participated in a pilot study, with revisions implemented based on their feedback. The 29-item questionnaire required a minimum of 290 participants, based on statistical guidelines recommending a 10:1 sample-to-item ratio.89,90

Table 2. List of measures and the corresponding items used in this research.

Statistical analysis

Data were analysed using SPSS 26 and SmartPLS 4.0. Descriptive statistics, structural equation modelling (SEM), and confirmatory factor analysis were applied to examine factors influencing trust in ChatGPT and intention to adopt health information. SEM was used to test the proposed hypotheses and the conceptual framework. Partial least squares structural equation modelling (PLS-SEM), an analytical method suited to predictive modelling and to exploring complex relationships between variables, was used to analyse the collected data. PLS-SEM was chosen due to its ability to handle smaller sample sizes and its robustness against non-normal data distributions.

Ethics approval

This study received ethical review from the Medical Ethics Committee of Tongji Medical College, Huazhong University of Science and Technology (No. 2024S225). Prior to the study, participants viewed an informed consent form on the questionnaire's first page, detailing the purpose, procedures, and right to withdraw. Participants’ continuation indicated their informed consent. All data were anonymised, kept confidential, and used solely for research.

Results

Participant details

A total of 371 questionnaires were distributed, and 363 were returned, resulting in a response rate of 97.8%. Of the returned questionnaires, 308 were valid, giving a valid response rate of 83.0% of the questionnaires distributed. The valid sample therefore exceeded the minimum sample size of 290 derived from the guidelines of Bentler and Chou 89 and Jackson. 90 The sample consisted of 113 males and 195 females. The average age of participants was 31.93 years, and the average monthly income was 6597.2 Chinese yuan. Most participants reported being in good health and having received higher education. Details are provided in Table 3.
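The response rates and the sample-size requirement can be checked with a few lines of arithmetic. The sketch below uses only figures reported in the text; the variable names are illustrative. Note that the valid rate of about 83% is obtained when the 308 valid responses are divided by the 371 questionnaires distributed, not by the 363 returned.

```python
# Figures reported in the text
distributed = 371   # questionnaires sent to pre-qualified users
returned = 363      # questionnaires returned
valid = 308         # questionnaires retained after quality screening
n_items = 29        # measurement items in the questionnaire
RATIO = 10          # 10:1 sample-to-item guideline cited in the Measures section

response_rate = returned / distributed
valid_rate = valid / distributed
min_sample = n_items * RATIO

print(f"Response rate:        {response_rate:.1%}")
print(f"Valid response rate:  {valid_rate:.1%}")
print(f"Minimum sample size:  {min_sample}")
print(f"Requirement met:      {valid >= min_sample}")
```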

Table 3. Demographic profile of the research participants.

Measurement model

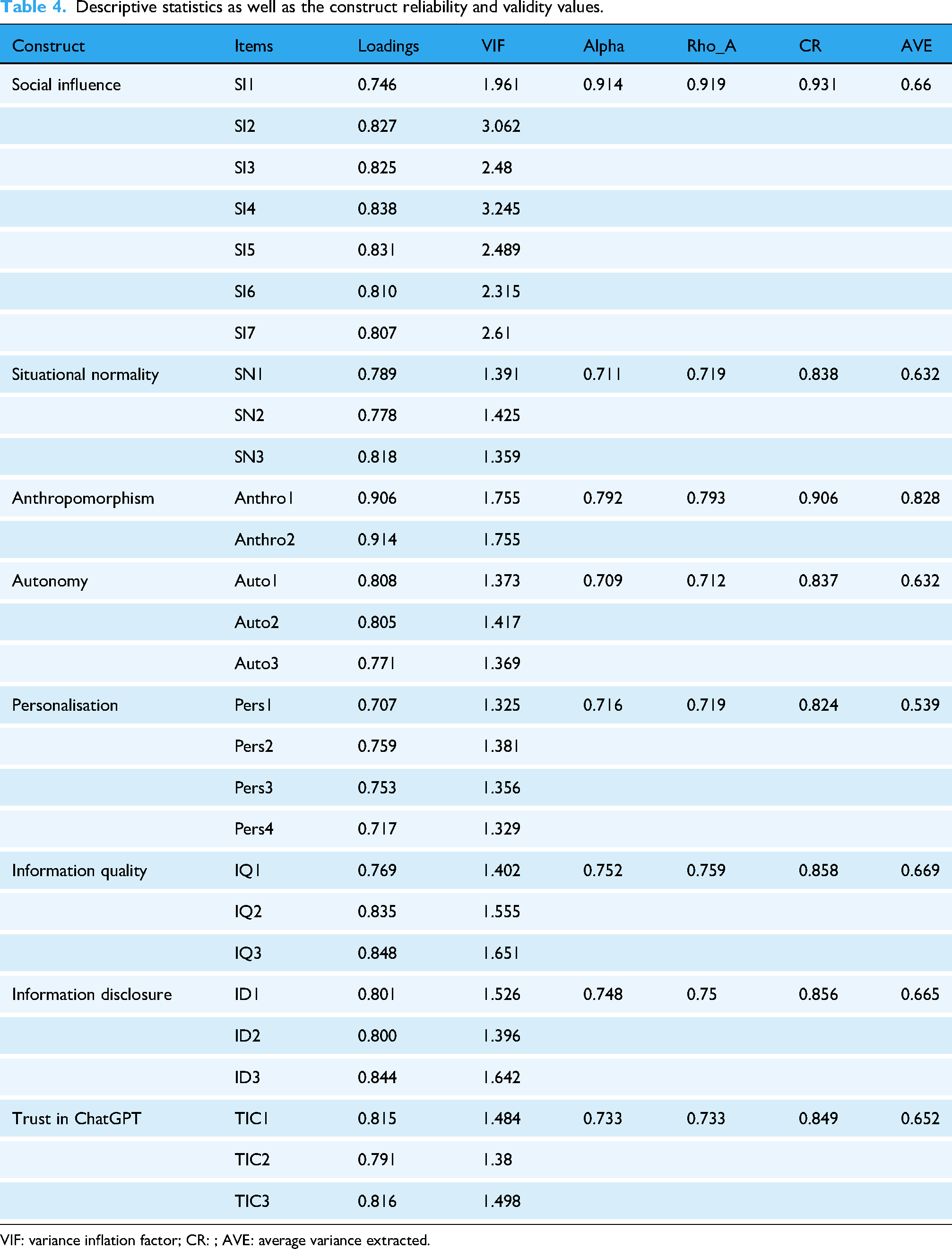

Data were analysed using SPSS 26.0 and SmartPLS 4.0 software, with the bootstrap algorithm selected during the analysis to mitigate potential biases. 94 Harman's single-factor test showed that a single factor accounted for 39.326% of the variance, below the 50% threshold, indicating that common method bias is not a substantial issue in this study. 95 A collinearity assessment revealed that all variance inflation factor values were below 3.3, suggesting no multicollinearity concerns. 96 The Cronbach's alpha coefficients and composite reliability (CR) values for all latent variables exceeded 0.7, indicating satisfactory reliability.96,97 Furthermore, the factor loadings for each measurement item were greater than the recommended threshold of 0.5, and the average variance extracted (AVE) for each latent variable exceeded 0.5. Together with the CR values, these results demonstrated that the constructs in this study exhibited good convergent validity.
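For readers unfamiliar with these indices, the following minimal sketch shows how CR and AVE are conventionally computed from standardised factor loadings. The loadings are hypothetical values chosen for illustration; they are not taken from this study, and SmartPLS reports these statistics directly.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error_variance = sum(1 - loading ** 2 for loading in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardised loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


# Hypothetical standardised loadings for one three-item construct (not study data)
hypothetical_loadings = [0.82, 0.79, 0.85]

cr = composite_reliability(hypothetical_loadings)
ave = average_variance_extracted(hypothetical_loadings)

print(f"CR  = {cr:.3f} (threshold 0.7)")
print(f"AVE = {ave:.3f} (threshold 0.5)")
```

With these illustrative loadings, both indices clear the thresholds used in the text.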

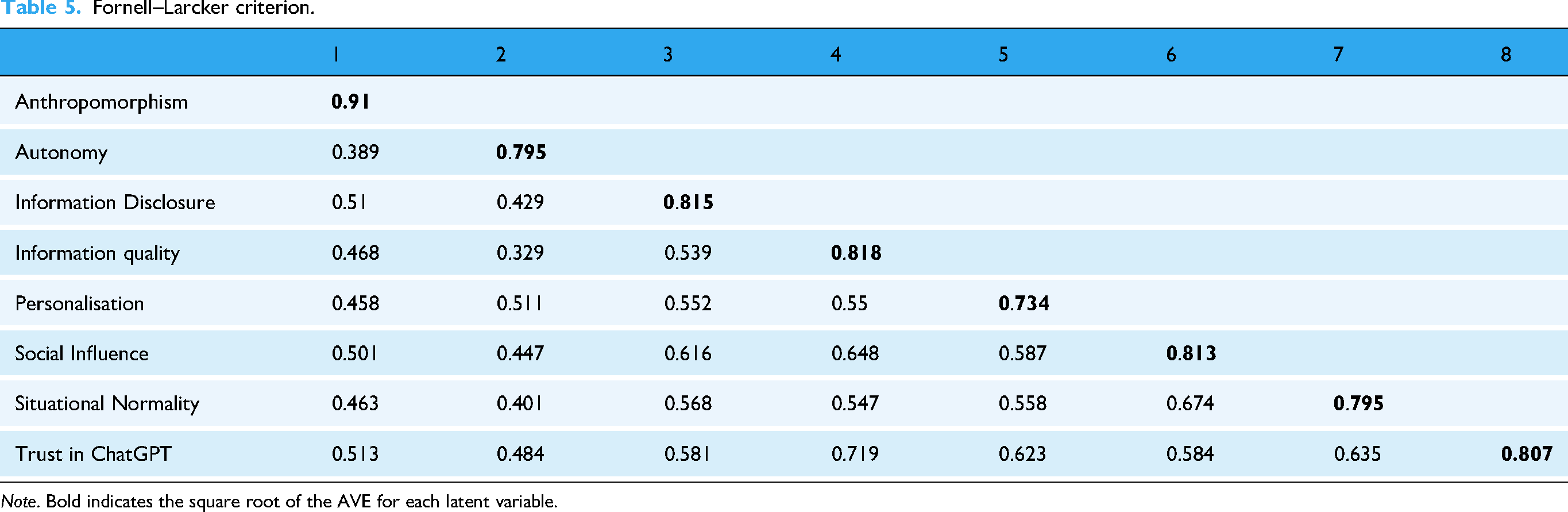

The Fornell–Larcker criterion was employed to evaluate discriminant validity. The square root of the AVE for each latent variable exceeded the corresponding Pearson correlation coefficients between the latent variables, confirming that the measurement model exhibited good discriminant validity. 96 The details of these analyses are presented in Tables 4 and 5.
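The Fornell–Larcker check described above can be sketched as follows. The AVE values, correlations, and construct names are hypothetical and serve only to illustrate the comparison; the study's actual values are those reported in Tables 4 and 5.

```python
import math


def fornell_larcker_violations(ave, correlations):
    """Return construct pairs whose correlation is not below sqrt(AVE) of both constructs.

    ave: dict mapping construct name -> AVE
    correlations: dict mapping (construct_a, construct_b) -> correlation
    """
    violations = []
    for (a, b), r in correlations.items():
        limit = min(math.sqrt(ave[a]), math.sqrt(ave[b]))
        if abs(r) >= limit:
            violations.append((a, b))
    return violations


# Hypothetical values for illustration only (not study data)
hypothetical_ave = {"trust": 0.67, "info_quality": 0.62, "autonomy": 0.58}
hypothetical_corr = {
    ("trust", "info_quality"): 0.61,
    ("trust", "autonomy"): 0.48,
    ("info_quality", "autonomy"): 0.52,
}

violations = fornell_larcker_violations(hypothetical_ave, hypothetical_corr)
print("Discriminant validity supported:", not violations)
```

An empty list of violations corresponds to the situation described in the text, where every square root of AVE exceeds the related inter-construct correlations.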

Table 4. Descriptive statistics as well as the construct reliability and validity values.

VIF: variance inflation factor; CR: composite reliability; AVE: average variance extracted.

Table 5. Fornell–Larcker criterion.

The PLS algorithm also provided details about the robustness of the structural model. It indicated the factors’ predictive power and shed light on

Structural model

The bootstrapping procedure was used to test the hypotheses of this study. The findings confirmed the robustness of the proposed structural model and showed highly significant associations between the exogenous and endogenous constructs, as indicated in Figure 2. The strongest association was observed for H7, between information quality and trust in ChatGPT, where

Figure 2. Structural analysis of the research model. Note. Solid lines indicate paths that reach significance at

Table 6 presents the indirect associations identified in the research model. The analysis revealed significant mediating relationships through trust in ChatGPT, linking social influence, situational normality, autonomy, personalisation, and information quality to health information adoption intention.
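As an illustration of how an indirect association through trust can be tested by bootstrapping, the sketch below estimates a standardised indirect effect as the product of two paths (antecedent to trust, trust to adoption intention) and builds a percentile confidence interval. It is a deliberately simplified stand-in for the SmartPLS procedure: it assumes single observed scores instead of latent constructs, ignores control variables, and runs on simulated data, so none of the numbers relate to this study.

```python
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation, equal to the standardised slope of a simple regression."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def indirect_effect(x, m, y):
    """Product of the standardised paths x -> m and m -> y (no direct x -> y path)."""
    return pearson(x, m) * pearson(m, y)


def bootstrap_ci(x, m, y, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        estimates.append(
            indirect_effect([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        )
    estimates.sort()
    lower = estimates[round(n_boot * alpha / 2)]
    upper = estimates[round(n_boot * (1 - alpha / 2)) - 1]
    return lower, upper


# Simulated scores (assumption): antecedent x, mediator m (trust), outcome y (intention)
data_rng = random.Random(42)
x = [data_rng.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + data_rng.gauss(0, 1) for xi in x]
y = [0.4 * mi + data_rng.gauss(0, 1) for mi in m]

point = indirect_effect(x, m, y)
lower, upper = bootstrap_ci(x, m, y)
print(f"Indirect effect = {point:.3f}, 95% CI [{lower:.3f}, {upper:.3f}]")
```

An interval that excludes zero is the usual evidence for a significant indirect association.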

Table 6. Indirect effects.

Discussion

Principal findings

By introducing URT, this study provides quantitative empirical evidence on how trust influences the adoption of health information and identifies key factors that shape trust in ChatGPT from the perspective of healthcare consumers. This study offers important insights into how trust and adoption behaviours can be fostered in complex human–GenAI interactions, contributing to the responsible integration and effective use of GenAI in digital health services. Consistent with existing literature,55-57 trust in ChatGPT was positively associated with health information adoption intention, supporting H1. Notably, the model explained 17.8% of the variance in health information adoption intention, with trust as the sole direct predictor alongside control variables, underscoring its critical role as a key influencing factor. However, the remaining unexplained variance reflects the multi-faceted nature of health information adoption. Future research should incorporate additional factors such as source credibility, emotional support, digital literacy, and perceived usefulness to provide a more comprehensive understanding.56,99

Consistent with existing research, situational normality, autonomy, personalisation, and information quality were positively associated with trust in ChatGPT, supporting H3, H5, H6, and H7. Information quality (

In contrast to existing studies,61-63 this study revealed a negative relationship between social influence and trust in ChatGPT. Three factors may explain this. First, the effect of social networks may be influenced by indirect cues such as media discourse and observed behaviours.105 During ChatGPT's initial launch period (2022–2023), keywords like ‘bias’ and ‘disinformation’ were widely circulated on platforms, fuelling public scepticism and weakening trust.106,107 Second, based on the theory of learning externalities, social influence may follow an inverted U-shaped curve:108 as negative social experiences accumulate, users may shift from mimicking others to becoming more risk-averse, which reduces trust. Third, the measurement of social influence in this study takes into account status and high prestige, which may not resonate with users seeking reliable health advice. In health contexts, users tend to value accuracy and usefulness over social image. Prestige-based cues may even trigger cognitive resistance, especially among those with prior negative experiences or low baseline trust in AI. This aligns with our finding that information quality is a strong predictor of trust.109 Moreover, highly educated users may be less influenced by social cues and more likely to rely on their own independent judgement.110

In contrast to existing studies,68-70 anthropomorphism showed no significant association with trust in ChatGPT. Several factors may explain this discrepancy. First, the elaboration likelihood model suggests that in high-risk and cognitively demanding information contexts, users rely more on central cues such as information quality than on peripheral cues such as anthropomorphism.111 Some studies on ChatGPT have examined human-like conversational features and found that personification does not significantly influence users’ information acquisition or decision-making in medical and health contexts.38,39 Second, anthropomorphism may be interpreted as a sign of ‘unprofessionalism’. Research has shown that some users find anthropomorphic elements distracting.112 Particularly for medical judgements, users tend to prefer a non-anthropomorphic, ‘instrumental rationality’ mode of interaction. Watson's research highlights that a high degree of anthropomorphism can lead users to misperceive AI as capable of communicating, thinking, and experiencing emotions like humans, thereby generating excessive trust in AI.113 When excessive trust impairs the accuracy of users’ decisions and causes harm, trust is subsequently weakened.

The lack of a significant association between information disclosure and trust contrasts with prior findings.84,114 One possible explanation lies in the ‘inverted U-shaped’ effect of transparency: excessive disclosure can overwhelm users’ cognitive capacity, leading to confusion or scepticism.115 Moreover, as a general-purpose language model not specialised in medicine, ChatGPT may disclose technical or version-related information that does not align with users’ expectations in health contexts, where information quality is prioritised.34,38 This result may also be influenced by conceptual or measurement biases, as well as cultural differences. ChatGPT, as a foreign platform, may adopt design and disclosure norms that differ from those expected by domestic users. Language barriers and limited familiarity with foreign data policies may further hinder users’ understanding of the disclosed information, reducing its perceived value and weakening trust.

Theoretical contribution

First, current studies on ChatGPT utilisation have mainly concentrated on healthcare professionals. This research adds the perspective of healthcare consumers, thereby enriching the literature on users’ experiences, perceptions, and usage behaviours in GenAI-assisted health information contexts.

Second, in contrast to prior research on ChatGPT acceptance that primarily focuses on general technology acceptance, this study explicitly examines the intention to adopt health information, with trust as a mediating variable to explore its underlying mechanism. This focus provides a more targeted theoretical foundation for understanding decision-making processes in human–AI interactions, particularly within the context of health information.

Third, we propose a new perspective on trust and information adoption by applying URT. This theory highlights the often-overlooked role of uncertainty in human–computer interaction. Within this theoretical framework, the study emphasises the significance of information quality (passive strategy), situational normality (active strategy), and autonomy and personalisation (interactive environment). These predictive factors also incorporate insights from marketing and healthcare regarding user cognition and priorities.

Finally, although existing studies have examined a wide range of factors influencing user information behaviour in the context of ChatGPT,22,38 the empirical validation of effect sizes and the relative importance of these variables remains limited, with a lack of robust data-driven evidence to support the findings. To address this, our study employs PLS-SEM to develop a quantitative model, providing empirical and methodological support for existing research.

Practical implications

First, this study highlights four key factors influencing trust in ChatGPT: situational normality, autonomy, personalisation, and information quality. Technical service providers can apply these findings by fostering a consistent and user-friendly environment, supporting user decision-making, delivering personalised health responses, and ensuring that information is accurate, evidence-based, and clearly communicated. These strategies can help promote user confidence and support the responsible adoption of AI in healthcare contexts.

Second, it is essential to address the adverse effects of social influence. By curating high-quality health content and actively responding to issues such as hallucinations, platforms can foster more reliable and reassuring user experiences that help build trust. Additionally, enhancing users’ ability to evaluate AI-generated information through digital campaigns and in-app guidance can foster more informed and balanced engagement. This approach supports appropriately calibrated trust while reducing the risks of both overreliance and excessive scepticism.

Third, this study offers valuable insights to inform regulatory frameworks for ChatGPT's healthcare applications. Policymakers should establish clear accountability mechanisms and content verification standards to ensure the reliability and safety of GenAI outputs in healthcare contexts. Moreover, by providing strategic guidance to healthcare organisations and technology developers, policymakers can help ensure that information quality, situational normality, personalisation, and autonomy are prioritised in the design, implementation, and dissemination of AI tools. Such efforts can foster a more trustworthy and sustainable digital health ecosystem.

Limitations

First, this study focuses on ChatGPT and uses cross-sectional data to explore factors influencing trust and the adoption of health information. Future research could examine healthcare customers’ interactions with other large language models or GenAI tools in medical contexts. Analysing interaction patterns and customers’ evolving information needs across different health situations could offer valuable insights. Additionally, longitudinal studies of how customers’ behaviours change as trust develops or diminishes would deepen our understanding of human–AI dynamics.

Second, the theoretical lens of URT may not fully capture other critical factors such as cultural environment, self-efficacy, and intrinsic motivation. Although trust was found to be a significant predictor, the model's explanatory power remains limited. Future research could expand the scope by integrating other frameworks, such as social cognition theory and information processing theory, to uncover additional factors that influence trust.

Third, this study relies on survey data, which may be susceptible to biases such as recall bias, variations in introspective ability, and subjective interpretation of questionnaire items. Furthermore, since existing measurement tools were not designed explicitly for AI or ChatGPT applications, they may lack precision in capturing relevant constructs. Additionally, SEM may lack sufficient explanatory power for unexpected findings. Future research could adopt more objective measurement standards or advanced tools and incorporate qualitative methods (e.g. interviews) to yield richer insights.

Fourth, while online recruitment enabled efficient data collection, it introduced demographic biases. The study's exclusive reliance on Chinese participants overlooks the potential impact of cultural differences on trust formation and information processing patterns. Future research should investigate the relationship between URT, trust, and intention to adopt health information across diverse cultural contexts. Additionally, the sample's disproportionate representation of highly educated individuals – a well-documented characteristic of technology early adopters – provides a plausible explanation for key findings, including the unexpected negative correlation between social influence and trust in ChatGPT, as well as the strong predictive power of information quality. Consequently, these results predominantly reflect the perspectives of highly educated users, thereby limiting their generalisability to broader healthcare consumers. Future research should employ stratified sampling to ensure adequate representation across cultural, educational, gender, and other demographic variables.

Finally, the study did not control for additional confounding variables, including digital health literacy 41 and prior experience. 29 These omitted factors may have systematically influenced participants’ interactions with and evaluations of ChatGPT, thereby potentially confounding the observed relationships. Future investigations should incorporate these variables as covariates or moderators to enhance the robustness of the findings.

Conclusion

This study proposes a comprehensive framework and offers a novel analytical perspective for understanding the factors that shape individuals’ trust in and adoption of GenAI-generated health information. The findings indicate that trust in ChatGPT is positively related to users’ intention to adopt health information. Trust is enhanced by situational normality, autonomy, personalisation, and, most notably, information quality. Among these, information quality emerged as the most influential factor in shaping users’ trust and adoption intentions. These empirical results provide data-driven support for existing research and offer practical insights for developing strategies to foster greater trust and promote the adoption of health information generated by GenAI tools.

Supplemental Material

sj-doc-1-dhj-10.1177_20552076251374121 - Supplemental material for Promoting trust and intention to adopt health information generated by ChatGPT among healthcare customers: An empirical study

Supplemental material, sj-doc-1-dhj-10.1177_20552076251374121 for Promoting trust and intention to adopt health information generated by ChatGPT among healthcare customers: An empirical study by Shuangyan Guo, Yang Song, Guanyun Chen, Hongxin Han, Hong Wu and Jingdong Ma in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors would like to thank the anonymous reviewers for their constructive comments, which helped to improve the manuscript.

Ethical approval

This study received ethical review from the Medical Ethics Committee of Tongji Medical College, Huazhong University of Science and Technology (No. 2024S225).

Consent for publication

Not applicable. The survey process did not ask for or disclose the identity of the respondents. The dataset provided by the website administrators contained only anonymous data.

Contributorship

All authors have contributed to the finalisation of the manuscript and are in agreement with the published version.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the National Natural Science Foundation of China (72474078). The contents are those of the authors and do not necessarily represent the official views of, nor an endorsement by, the National Natural Science Foundation of China.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.

Guarantor

Jingdong Ma.

Supplemental material

Supplemental material for this article is available online.

References
