Abstract

Background

Cardiopulmonary exercise testing (CPET) is conducted globally. On TikTok, CPET-related content serves as a key source of information for the public. However, the quality of these videos has not been systematically evaluated. This study aims to assess whether CPET videos on TikTok meet the informational needs of users.

Methods

A cross-sectional analysis was performed on TikTok videos about CPET in China. Video sources were identified and analyzed. Content evaluation focused on CPET principles, indications, procedures, and indicator interpretation. The reliability and quality of the videos were assessed using four standardized tools: modified DISCERN, Global Quality Scale (GQS), JAMA benchmarks, and Patient Education Materials Assessment Tool for Audiovisual Materials (PEMAT-A/V). Misinformation was summarized, and the relationship between video quality and characteristics was examined.

Results

Of the video sources, 43.8% were from physicians, 12.5% from nonphysicians, 12.5% from general users, 14.6% from news agencies, 12.5% from nonprofit organizations, and 4.2% from for-profit organizations. Median scores for modified DISCERN, GQS, JAMA, PEMAT-A/V understandability, and PEMAT-A/V actionability were 1.00, 1.00, 2.00, 33.00, and 29.00, respectively. Videos by physicians had significantly higher modified DISCERN and JAMA scores compared to those by nonphysicians (

Conclusions

The quality and reliability of CPET videos on TikTok are uncertain, with many containing significant misinformation. This problem largely stems from content creators’ insufficient understanding of CPET. To address this, implementing standardized training and certification is necessary. Videos produced by physicians generally exhibit higher quality, highlighting the importance of strengthening their leadership in CPET teams. Furthermore, social media platforms should work with CPET providers and video creators to develop a certification system for medical information. These steps could improve video quality, reduce misinformation, and promote accurate CPET knowledge, ultimately benefiting public health.

Introduction

Cardiopulmonary exercise testing (CPET) is a maximal exercise assessment that integrates gas exchange analysis to provide a comprehensive evaluation of physiological responses to exercise and cardiorespiratory fitness. 1 Cardiopulmonary exercise testing enables the simultaneous monitoring of cardiovascular and ventilatory system responses under exercise stress, serving as a robust tool for functional assessment. Unlike exercise electrocardiography (ECG) stress tests, CPET directly and noninvasively quantifies minute ventilation, heart rate, and expired gases, including oxygen uptake and carbon dioxide output, during both rest and exercise. These measurements produce reproducible data on the interaction between ventilation, gas exchange, and cardiovascular and musculoskeletal function, facilitating the identification of abnormalities, diagnosis of unexplained exertional symptoms such as dyspnea, and monitoring of health trajectories and therapeutic interventions. 2 Additionally, CPET provides prognostic value for cardiopulmonary diseases, assists in designing exercise prescriptions, and assesses perioperative cardiopulmonary risks. As extreme sports such as marathons, mountain climbing, alpine skiing, and high-altitude activities gain popularity, the evaluation of cardiorespiratory function has become increasingly important. The need to give athletes and enthusiasts accurate guidance on safe participation underscores the growing significance of this field. Guidelines and expert consensus have endorsed the clinical application of CPET worldwide.1,3 Despite the growing need for CPET, public understanding remains limited. Insufficient face-to-face communication with CPET providers contributes to this issue. Even when interactions occur, CPET providers often struggle to meet the public's informational needs because of workload constraints and their own insufficient CPET knowledge. Consequently, the public seeks information about CPET through alternative channels.
In today's digital age, video dissemination occurs primarily through mobile platforms, particularly short-video platforms like TikTok. Due to its accessibility, TikTok has emerged as a crucial medium for health-related videos, assisting patients in bridging the gap between their demand for health information and limited access to physical services. 4 According to the “TikTok Health Science Data Report,” medical health is one of the platform's key content categories. 5 By March 2023, TikTok hosted over 35,000 certified physician creators who generated more than 21,000 new pieces of health science content daily. The platform sees over 200 million users accessing health-related content each day, with certified physicians having produced a total of 4.43 million videos. The “2023 TikTok Health Lifestyle New Paradigm White Paper” reveals that from January to June 2023, more than 100 million health-related short videos were created, garnering nearly 500 billion views. 6 Over 100 million users show interest in medical health topics, engaging with such videos at least twice a month. Short video platforms now constitute the second-largest medical ecosystem after hospitals, highlighting the expanding role of internet-based health models in modern healthcare systems. 7 Given TikTok's pivotal role in health information dissemination, its CPET-related videos offer an excellent avenue for the public to learn about CPET. However, whether this information meets their knowledge needs or potentially leads to misunderstandings remains unstudied. This study, therefore, analyzes the content quality and prevalence of misinformation regarding Chinese CPET videos on TikTok.

Methods

Search strategy and data extraction

To mitigate bias from personal recommendation algorithms, we used three tactics: creating new TikTok accounts for evaluation purposes, deactivating personalized recommendations, and disabling access to mobile location services. We used two Chinese terms to retrieve relevant TikTok videos related to CPET: “心肺运动试验” (CPET) and “运动心肺功能” (exercise cardiopulmonary function). We selected “exercise cardiopulmonary function” as a keyword for three reasons. First, it highlights that the test is performed under exercise stress conditions, so the term is commonly used by Chinese healthcare staff. Second, searches using this term on Baidu, China's most widely used search engine, return results that pertain directly to CPET. Third, our video search returned many duplicates across the two terms, suggesting that TikTok's algorithm closely associates them. TikTok offers three sorting options for search results: “overall ranking,” “most recent,” and “most likes.” Because most users keep the default setting, we retrieved the top 50 videos for each keyword on September 2, 2023, using the “overall ranking” mode, yielding a total of 100 videos. We chose 50 videos for two reasons. First, TikTok's search algorithm prioritizes topic relevance, with the most pertinent CPET videos appearing at the top. Beyond 50 results, relevant videos became scarce. This selection criterion was also informed by methodologies from previous research, which used 50 as a cutoff point when relevant videos were limited. For instance, in a study on atrial fibrillation, only 49.3% of available videos met the inclusion criteria, leading to the adoption of 50 videos as the threshold. 8 Second, users of web-based health information generally follow the “principle of least effort,” favoring quick access to high-ranking results over thorough exploration. 9 As such, general health consumers typically view only the top search results. 10 To shortlist the most relevant videos, we eliminated duplicates (

Search strategy and video screening procedure.

Classification of videos

The videos were classified into six categories based on their sources: (1) Physicians, (2) Nonphysicians, (3) General users, (4) News agencies (i.e., network media, newspapers, TV stations, radio stations), (5) Nonprofit organizations, and (6) For-profit organizations. This classification facilitated grouping videos with similar content and distinguishing those with different content.

Evaluating methodologies

Evaluation procedure

Evaluation tasks were conducted by two qualified physicians (XG and BD) from the Division of Cardiology in a tertiary teaching hospital, both having significant experience in cardiology and CPET. Prior to scoring, the evaluators familiarized themselves with management guidelines from the American Heart Association, 1 the Perioperative Exercise Testing and Training Society, 3 and the Chinese Society of Cardiology, 11 and with the official scoring instructions for modified DISCERN, Global Quality Scale (GQS), JAMA, and Patient Education Materials Assessment Tool for Audiovisual Materials (PEMAT-A/V). Because CPET involves multidisciplinary knowledge (including cardiology, respiratory medicine, sports medicine, and rehabilitation medicine), the evaluators consulted experts from these disciplines to ensure accurate and objective video evaluations. Video metric data such as likes, comments, collections, and shares may affect evaluator objectivity and introduce bias. Therefore, we used the software “Kwai-TikTok Download Assistant” (version 2.5.4) to download videos without metric data. Evaluations were carried out independently, and interrater consistency was quantified with Cohen's κ coefficients; values ranged from 0.807 to 0.868, indicating good interrater reliability (Supplementary Table 1). Divergences in ratings were reconciled through discussion between the two evaluators.
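As an illustration of the agreement statistic described above, the following minimal Python sketch computes Cohen's κ for two raters who score the same set of videos. It assumes the unweighted form of κ, since the text does not state whether a weighted variant was used. The function name `cohens_kappa` and the example ratings are hypothetical and are not data from this study, whose statistical analyses were run in SPSS.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)

    # Observed agreement: share of items that received the same score.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each category, multiply the two raters'
    # marginal proportions, then sum over categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if p_expected == 1:
        # Both raters used one identical category for every item.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical GQS ratings (1-5) for ten videos (assumption, not study data).
rater_1 = [1, 1, 2, 3, 1, 2, 4, 1, 2, 5]
rater_2 = [1, 1, 2, 3, 1, 2, 4, 2, 2, 5]
kappa = cohens_kappa(rater_1, rater_2)
print(f"kappa = {kappa:.3f}")
```

In this hypothetical example the raters disagree on one of ten videos. Because κ subtracts the agreement expected by chance, it is lower than the raw 90% agreement rate.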

Assessment of video content, reliability, and quality

We assessed the content, reliability, and quality of videos using established scoring methods. The video content was evaluated for coverage of CPET principles and functions, indications, operational procedures, and interpretation or application of indicators. We employed a modified DISCERN questionnaire to evaluate content reliability based on five factors: clarity, relevance, traceability, robustness, and impartiality. 8 This tool is extensively validated and widely used for evaluating health-related content across various video-sharing platforms. 12 Each aspect was scored on a 2-point scale: unaddressed (0 points) or addressed (1 point). Median scores of 0–2 indicate low quality, while scores of 3–5 indicate high quality. For overall video quality, we used the GQS, which assesses the quality of information and the value of the source for lay users.13,14 The GQS uses a 5-point Likert scale ranging from 1 (poor quality) to 5 (excellent quality). Videos scoring 4 or 5 were deemed high quality; those with a score of 3 were considered of uncertain quality, and scores of 1 or 2 were classified as low quality. 9 Additionally, we quantified the reliability of video sources using the JAMA benchmark criteria on a scale of 0–4, with each component allocated 1 point.15,16 Median scores of 3–4 are categorized as high quality, 2 as uncertain quality, and 0–1 as low quality. 9 We also used the PEMAT-A/V to assess the understandability and actionability of audiovisual patient education materials.17,18 It comprises 13 items measuring understandability and 4 items assessing actionability. Except for nonapplicable items, each item is scored either 1 point (Agree) or 0 points (Disagree). The sum is divided by the total possible points, excluding nonapplicable items. Multiplying the result by 100 yields a percentage, representing the understandability or actionability score on PEMAT-A/V, where scores below 70% indicate poor understandability or actionability. 13 Detailed information regarding the modified DISCERN, GQS, JAMA benchmark criteria, and PEMAT-A/V is available online as Supplementary Material.
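The PEMAT-A/V calculation described above can be sketched in a few lines of Python. This is a minimal illustration rather than the scoring procedure actually used. The names `pemat_score`, `is_poor`, and `POOR_CUTOFF`, the encoding of nonapplicable items as `None`, and the example item ratings are all assumptions introduced here. Only the two median values at the end are taken from the Results reported in the Abstract.

```python
POOR_CUTOFF = 70  # percent; scores below this indicate poor performance


def pemat_score(item_ratings):
    """Return a PEMAT-A/V percentage score.

    Each rating is 1 (Agree), 0 (Disagree), or None (not applicable).
    Nonapplicable items are excluded from both the sum and the total.
    """
    applicable = [r for r in item_ratings if r is not None]
    if not applicable:
        raise ValueError("at least one applicable item is required")
    if any(r not in (0, 1) for r in applicable):
        raise ValueError("ratings must be 0, 1, or None")
    return 100 * sum(applicable) / len(applicable)


def is_poor(score):
    """True when a percentage score falls below the 70% cutoff."""
    return score < POOR_CUTOFF


# Hypothetical ratings for the 13 understandability items of one video
# (assumption, not study data); two items are marked not applicable.
example_items = [1, 0, 0, 1, None, 0, 1, 0, None, 0, 1, 0, 0]
example_score = pemat_score(example_items)
print(f"understandability = {example_score:.1f}%, poor = {is_poor(example_score)}")

# Median scores reported in this study, checked against the cutoff.
reported_medians = {"understandability": 33.00, "actionability": 29.00}
flags = {name: is_poor(value) for name, value in reported_medians.items()}
print(flags)
```

Excluding nonapplicable items from the denominator keeps a video from being penalized for features it cannot have, such as items about on-screen text in a video that shows none.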

Statistical analyses

Statistical analyses were performed using SPSS software (version 27.0; IBM), and data visualizations were conducted using R software (version 4.3.2; R Foundation for Statistical Computing). Categorical variables are presented as frequencies and relative frequencies, and continuous variables are reported as median (IQR) when not normally distributed. Group comparisons were analyzed using the Kruskal–Wallis H test, and relationships between quantitative variables were evaluated using Spearman correlation analysis. A significance level of

Results

Video characteristics

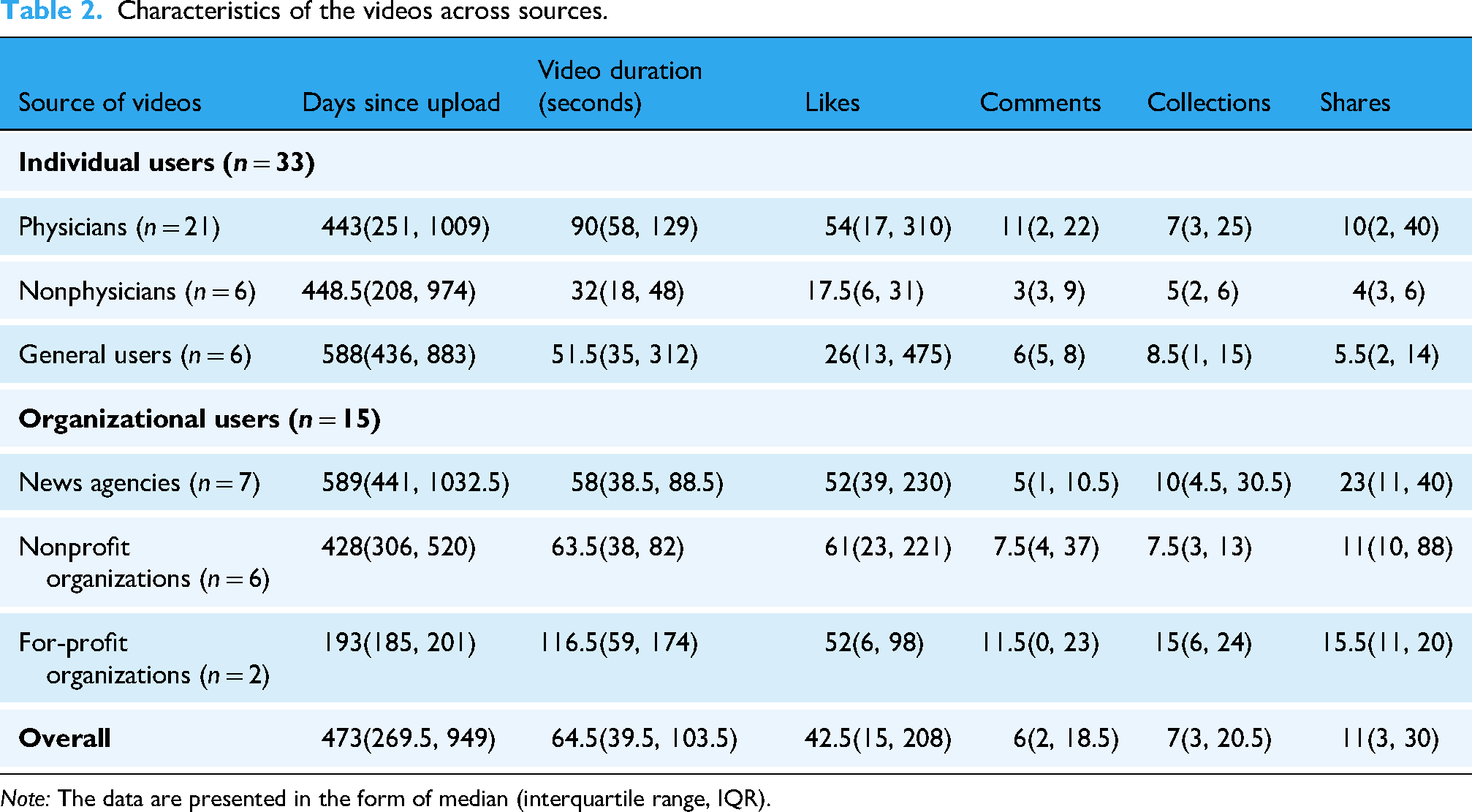

After applying inclusion and exclusion criteria, we analyzed 48 videos (Figure 1). These were categorized by uploader identity into six groups: Physicians, Nonphysicians, General users, News agencies, Nonprofit organizations, and For-profit organizations. Physicians uploaded 21 videos (43.8%), nonphysicians and general users each uploaded 6 videos (12.5%), news agencies uploaded 7 videos (14.6%), nonprofit organizations uploaded 6 videos (12.5%), and for-profit organizations uploaded 2 videos (4.2%) (Table 1). The median time since upload was 473 days (IQR 269.5–949), and the median video duration was 64.5 s (IQR 39.5–103.5). The median numbers of likes, comments, collections, and shares were 42.5 (IQR 15–208), 6 (IQR 2–18.5), 7 (IQR 3–20.5), and 11 (IQR 3–30), respectively. There were no significant differences in these metrics across groups (Table 2).

Proportion of videos by different types of uploaders.

Characteristics of the videos across sources.

Information content and search trends

Coverage of the four content areas varied across the 48 videos (Table 3). Specifically, 16 videos (33.3%) discussed CPET principles and functions, 25 videos (52.1%) mentioned indications, 29 videos (60.4%) showed the operational procedure, and 14 videos (29.2%) addressed interpretation and/or application of indicators. Notably, 14 videos highlighted CPET's evaluative role in extreme sports (29.2%), and 9 videos addressed its use in sudden death screening (18.8%), reflecting current trends in sports and health consciousness. The detailed content and specific search hot terms for each video can be found in Supplementary Table 2 of Supplementary Material.

Video contents and search hot terms.

Information reliability and quality

The median reliability score, assessed by the modified DISCERN instrument, was 1.00 (IQR 0.50–2.00) for all videos, with physicians scoring higher than nonphysicians (Figure 2A). The median GQS score was 1.00 (IQR 1.00–2.00) (Figure 2B), and the median JAMA score was 2.00 (IQR 1.00–2.00) (Figure 2C), with videos from physicians significantly outscoring those from nonphysicians and general users (

Comparisons of modified DISCERN scores, GQS scores, JAMA scores, and PEMAT-A/V scores among different sources, and correlation heatmap among video characteristics. (A) Violin plots showing the modified DISCERN scores among videos from different sources. (B) Violin plots showing the total GQS scores among videos from different sources. (C) Violin plots showing the total JAMA scores among videos from different sources. (D) Violin plots showing the PEMAT-A/V understandability scores among videos from different sources. (E) Violin plots showing the PEMAT-A/V actionability scores among videos from different sources. (F) Correlation heat map showing the correlations among video characteristics. Compared to nonphysicians, *

Modified DISCERN, GQS, JAMA, and PEMAT-A/V scores of videos by source.

Correlation analysis

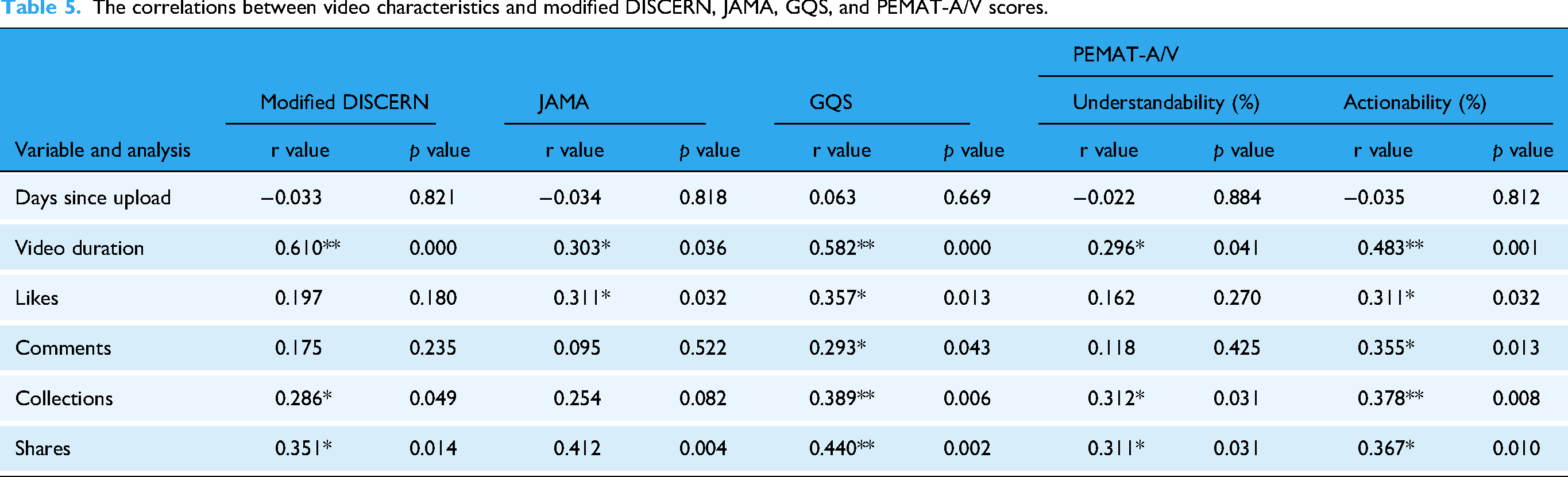

Spearman analysis revealed correlations among video characteristics. Video duration correlated positively with modified DISCERN scores (

The correlations between video characteristics and modified DISCERN, JAMA, GQS, and PEMAT-A/V scores.

Video misinformation

Analysis of misinformation yielded four main areas: 4 videos (8.3%) overemphasized CPET's role, 10 (20.8%) depicted nonstandard procedures, 10 (20.8%) misinterpreted CPET indicators, and 2 (4.2%) contained potential safety risks. Overstatements pertained to CPET's effectiveness in sudden cardiac death screening and marathon performance, while procedural errors mainly included excessive knee joint angles during pedaling exercise. The misinterpretations involved various electrocardiographic and ventilatory metrics. Notably, one video failed to remove a mask promptly from a distressed subject, and another downplayed CPET's safety risks (Table 6). The specific misinformation in each video is also listed in Supplementary Table 2.

Video misinformation.

Comparison with other health-related videos

The quality of CPET-related videos was compared to other health content on TikTok and similar platforms. For cardiovascular content, CPET videos had lower modified DISCERN scores than atrial fibrillation videos, 8 and scored lower than coronary heart disease videos on GQS and JAMA scores 9 (

Comparisons of modified DISCERN, GQS, and JAMA scores between CPET and other health-related videos.

Discussion

Primary findings

Our results showed a scarcity of CPET videos on TikTok, with 36.8% (32/87) being irrelevant. Most irrelevant videos were related to lung function, step tests, breath-holding tests, and exercise ECG stress tests. This rate was considerably higher than that for coronary heart disease-related videos, of which only 9 of 200 were irrelevant. 9 Although the overall video quality was low, physician-uploaded videos had higher quality than those by nonphysicians and general users: modified DISCERN scores were higher for physicians than for nonphysicians, and JAMA scores were higher for physicians than for both nonphysicians and general users. Correlation analysis showed that likes, comments, collections, and shares positively correlated with various quality scores. Misinformation included exaggerations about CPET, improper operations, misinterpretation of indicators, and safety risks.

Correlations between video quality and characteristics

Metrics such as the number of likes, comments, collections, and shares indicate a video's popularity. 19 Our analysis revealed positive correlations between these metrics and various quality scores, including modified DISCERN, JAMA, GQS, and PEMAT-A/V evaluations. This suggests that high-quality videos are more likely to achieve greater popularity. Furthermore, there was a direct correlation between video duration and quality scores, indicating that longer videos tend to provide more comprehensive information, thus enhancing their quality. 20
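To make the rank-based correlation discussed above concrete, the following Python sketch computes Spearman's ρ as the Pearson correlation of average ranks. It is an illustration under stated assumptions, not the analysis pipeline of this study, which was run in SPSS. The helper names `average_ranks` and `spearman_rho` and the duration and score values are hypothetical.

```python
def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        # Extend j to cover the whole run of values tied with position i.
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (var_x * var_y) ** 0.5


# Hypothetical video durations (s) and modified DISCERN scores
# (assumption, not study data).
durations = [30, 45, 60, 90, 120, 200]
discern = [0, 1, 1, 2, 3, 3]
rho = spearman_rho(durations, discern)
print(f"rho = {rho:.3f}")
```

Working on ranks rather than raw values suits skewed engagement counts such as likes and shares, where a few very popular videos would otherwise dominate a Pearson correlation.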

Critical issues in CPET videos and their implications for public perception

The poor quality of CPET videos reflects significant issues in the presentation and understanding of CPET on platforms like TikTok. Consistent with studies analyzing TikTok health videos,14,21 our findings reveal that the quality of CPET videos is notably low, suggesting a widespread problem across health-related short videos. Notably, CPET videos were of even lower quality than other health-related content (Table 7), indicating a potential lack of professional competence among CPET health providers. Globally, CPET application remains inadequate, and the knowledge of providers is often insufficient. 22 In China, CPET is not widely practiced and is unfamiliar to the public and providers. 11 Even among those aware of CPET, few can effectively interpret results or apply them clinically. Misinformation about CPET is prevalent, including exaggerated claims about its role, improper operations, misinterpretation of indicators, and safety risks. These issues reflect broader problems requiring comprehensive analysis for improvement.

(1) Exaggeration of CPET's Role in Extreme Sports and Sudden Death

A common issue in these videos is the exaggerated portrayal of CPET's role, particularly concerning marathons, extreme sports, and sudden death, as seen in videos No. 3, 14, and 37 (Supplementary Table 2). While CPET can assess an individual's ability to tolerate marathon-like exercises23,24 and predict engagement in activities like mountain climbing,25,26 passing a routine CPET does not ensure readiness for such events. Successful participation requires high cardiorespiratory function, sound psychological and nutritional states, proper running techniques, and injury prevention. Studies predicting marathon performance consider factors beyond peak oxygen uptake, including previous race results, age, weekly distance, and body fat percentage, which are often omitted in these videos. For sudden death screening, various CPET parameters correlate with mortality rates in large-scale population studies.27–32 Despite this, CPET is not the preferred diagnostic method; ECGs and echocardiograms better detect athletes susceptible to sudden death. 33 Exaggerating CPET's role could mislead viewers into underestimating risks, potentially increasing the likelihood of sudden death.

(2) Failure to Holistically Interpret CPET Results

Cardiopulmonary exercise testing provides crucial information for diagnosing and prescribing exercise for cardiovascular patients, including anaerobic and ischemic thresholds. 1 However, some TikTok videos focus mainly on ECG data, ignoring other important indicators such as ventilation and metabolism, especially for diagnosing coronary artery disease (CAD), as seen in videos No. 12, 14, 26, and 43 (Supplementary Table 2). This may lead viewers to mistakenly think CPET is just an ECG stress test, causing confusion about testing procedures and affecting patient compliance. Electrocardiography stress tests alone have lower accuracy for CAD diagnosis, with sensitivity between 50% and 72% and specificity between 69% and 90%. 34 False results can lead to unnecessary tests. 35 Cardiopulmonary exercise testing, which combines ECG with oxygen pulse and uptake, provides superior diagnostic accuracy, identifying CAD in more patients and detecting exercise-induced myocardial ischemia with higher sensitivity.36–38

While heart rate-based exercise prescriptions are common, CPET offers better safety and effectiveness. 39 Unfortunately, many TikTok videos omit or poorly explain key CPET data, as exemplified by video No. 14 (Supplementary Table 2). Relying only on ECG test results to judge exercise endurance ignores factors such as baseline heart rate, medication effects, and training. Test termination criteria are also often incomplete, neglecting symptoms, heart rate response, and oxygen consumption trends, 11 as seen in video No. 26. A more comprehensive approach to interpreting CPET results is needed.

(3) Lack of Interdisciplinary Skills Among CPET Practitioners

Cardiopulmonary exercise testing requires knowledge in sports medicine and human physiology. A common issue is improper knee joint angles during cycling tests, which can increase injury risk and reduce performance, 40 as seen in videos No. 9, 19, 26, 33, 37, 45, and 47 (Supplementary Table 2). For example, a 5% change in saddle height can significantly affect knee movement. Correcting the knee flexion angle (25–30 degrees) can reduce injury risks and improve oxygen consumption. 41 However, many videos show incorrect angles, reflecting a lack of interdisciplinary skills among operators. This can mislead viewers into thinking CPET is simple, potentially causing fatigue and injury during future tests. Cardiopulmonary exercise testing in China involves professionals from various fields, leading to fragmented understanding. There is an urgent need to improve multidisciplinary collaboration despite resource limitations.

(4) Underestimating CPET Safety Risks

Cardiopulmonary exercise testing is generally safe in noncardiovascular populations, with an event rate of 0.6–0.9 per 10,000 tests, but the rate increases to 16–20 per 10,000 tests for those with cardiovascular disease.42,43 Age should not be the only factor in assessing safety, as younger individuals can also face elevated risks if pretest evaluations are neglected. Some videos show younger subjects without proper safety measures, as seen in video No. 34 (Supplementary Table 2), which could lead to unsafe assumptions. To ensure safety, thorough pretest evaluation, adherence to protocols, prompt recognition of complications, and posttest observation are essential. Using age as the sole risk factor can cause harm, either by misleading younger individuals into excessive exercise or by discouraging older individuals from undergoing CPET. Another safety concern appeared in video No. 15, in which a subject wearing a mask had difficulty breathing and the operator delayed assistance. Such incidents may make viewers perceive CPET as uncomfortable or unsafe, potentially deterring them from future tests.

Addressing critical challenges in CPET videos through practitioner training and certification standards

The low quality of CPET videos on platforms like TikTok underscores significant gaps in professional expertise and procedural execution. This deficiency primarily stems from inadequate training and multidisciplinary knowledge among CPET health providers. Often, health providers responsible for CPET lack specialized education in CPET during their formative years. Instead, they rely on theoretical resources or short-term programs that do not emphasize hands-on skills. 44 Furthermore, conducting CPET requires a broad understanding of various medical domains, 45 yet many providers focus narrowly on their specialties, neglecting crucial components like the musculoskeletal system. 44 In addition, inadequate physician oversight in nonmedical settings raises concerns about safety protocols and adherence to medical standards, as evidenced by improper procedures such as incorrect mask usage. To address these challenges, establishing standardized training and certification processes is essential. Drawing inspiration from models like the American Association of Cardiovascular and Pulmonary Rehabilitation, comprehensive educational frameworks should be developed. 46 These would integrate CPET education into medical curricula at all levels and involve regular recertification to keep providers abreast of evolving technologies. Moreover, promoting physician leadership in multidisciplinary teams is crucial for ensuring accurate result interpretation and emergency preparedness, particularly for high-risk patients. 45 Nonphysicians must adhere to clear protocols, with strict guidelines indicating the qualifications of personnel presented in video content, particularly within community-based testing environments. Strengthening partnerships between medical and nonmedical institutions can further facilitate collaboration and expand access to professional support.

Enhancing the quality of CPET videos: strategies for effective audience education and platform collaboration

Improving the quality of CPET-related videos is essential for effectively educating audiences about its diagnostic potential. Content creators should emphasize CPET's role in delivering accurate exercise prescriptions39,47 and risk assessments3,48 by focusing on critical metrics such as the anaerobic threshold and VE/VCO2 slope. Employing engaging visuals alongside expert narration can significantly enhance viewer comprehension. Furthermore, digital platforms should promote standardization and best practices to ensure the reliability of CPET content. Collaboration between CPET health providers and platform providers is crucial for credibility. Due to the complexity of medical information, longer videos are recommended to provide sufficient depth without losing quality. 9 Creators should follow established evaluation criteria, such as those provided by modified DISCERN, JAMA, and PEMAT-A/V to enhance clarity and reliability. Additionally, video structure and organization should be optimized, medical jargon minimized to aid comprehension, and practical step-by-step instructions included to enhance actionability. Some videos are poorly structured and only briefly describe CPET procedures without clear sections or summaries, making them hard to follow. A better approach is to explain each stage of CPET in order—covering indications, contraindications, preparation, procedures, and results. Too much unexplained medical jargon, like “anaerobic threshold,” also confuses viewers. For example, the anaerobic threshold is the point during intense exercise when your body cannot get enough oxygen to your muscles, so it produces energy without oxygen, causing lactic acid to build up and making you tired. Many videos also fail to show all steps of CPET, so viewers cannot fully understand or repeat the process. Breaking down CPET into pretest, test, and posttest phases with clear explanations for each can help viewers learn and follow along.

TikTok should consider introducing accreditation systems for medical content creators to help audiences identify trustworthy sources.9,21 Videos created by physicians are particularly valuable due to their accuracy, credibility, and reduced likelihood of misinformation. To leverage physicians’ leadership in medical video production, it is crucial to encourage their increased participation in content creation by focusing on specific areas. First, medical associations should provide better training in professional skills and CPET knowledge to involve more practitioners, 44 enabling them to produce educational videos. Second, associations and social media platforms should support video production by providing tools such as artificial intelligence and deep learning technologies. 49 These resources can save time and reduce workload, allowing physicians to share health information without impacting their clinical duties. Such experience also helps physicians improve their communication skills, making videos clearer and more accessible. 50 Lastly, these platforms should offer algorithmic support to boost visibility, thus enhancing physicians’ social influence and personal brand development, motivating them to produce more TikTok videos. 49 These strategies would promote ongoing quality video production, inspire other physicians to participate, and create a positive cycle.

Strengths, limitations, and future directions

This study assessed the quality of CPET-related videos on TikTok using multiple evaluation methods, including content analysis, modified DISCERN, GQS, JAMA, and PEMAT-A/V. These tools enabled a multidimensional appraisal of video quality, addressing aspects such as informational breadth, treatment credibility, reliability, publication standards, understandability, and actionability. Using several assessment tools allows each to compensate for the limitations of the others. For instance, the modified DISCERN assesses information reliability based on clarity, relevance, traceability, robustness, and impartiality but does not address the video's understandability or actionability. 8 Conversely, PEMAT-A/V focuses on factors such as content organization, language, layout, design, and visual aids, offering detailed suggestions for improving understandability and actionability, 17 but does not assess scientific accuracy. The GQS provides an overall viewer-centered quality rating based on information flow and breadth 13,14 but does not evaluate scientific validity. The JAMA benchmark evaluates scientific rigor and legal compliance according to journalistic standards. 15 As a result, this combined approach offers a more accurate reflection of video quality and potential misinformation, providing a stronger foundation for identifying and exploring potential solutions. Additionally, the study examined correlations between video characteristics (e.g., likes, comments, collections, and shares) and video quality. The observed positive correlations between quality ratings and popularity metrics make it less likely that the engagement data were artificially manipulated, thereby enhancing the reliability of the findings.

Some limitations should be noted. First, only CPET-related TikTok videos were analyzed, with no inclusion of other platforms such as Bilibili or Kwai. Given TikTok's leading status among short-video platforms in China, however, its content is likely representative. Second, while CPET videos on global platforms such as Instagram or YouTube were not studied, research on other medical topics indicates that low quality and misinformation are common across social media. 51 Similar trends have been observed in videos on gastric cancer across TikTok, Bilibili, and YouTube. 52 These findings may therefore be generalizable to other platforms both domestically and internationally, although future studies should analyze CPET-related videos across various platforms and languages to validate this. Third, although inter-rater reliability was high, as shown by Cohen's κ coefficients, some subjectivity remains. Further research could incorporate artificial intelligence techniques to enhance the objectivity of quality assessments.
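For readers unfamiliar with the statistic, the following is a minimal sketch of how an unweighted Cohen's κ can be computed for two raters who score the same set of videos. It is illustrative only: the function name `cohens_kappa` and the example scores are hypothetical and are not the study data, and it is an assumption that an unweighted κ is the appropriate variant here (a weighted κ may be preferred for ordinal scales such as the GQS).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items that received identical scores.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, derived from each rater's marginal score frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    categories = freq_a.keys() | freq_b.keys()
    p_e = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (p_o - p_e) / (1 - p_e)


# Hypothetical GQS scores (1-5) from two raters for ten videos; not study data.
scores_rater_1 = [1, 1, 2, 3, 1, 2, 4, 1, 2, 3]
scores_rater_2 = [1, 1, 2, 3, 1, 3, 4, 1, 2, 2]
kappa = cohens_kappa(scores_rater_1, scores_rater_2)
print(f"kappa = {kappa:.3f}")
```

Because κ corrects observed agreement for the agreement expected by chance, it is a stricter summary than the raw percentage of matching scores, but it does not remove the subjectivity of the individual ratings themselves.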

Future efforts should focus on improving professional competence, public education, and interdisciplinary collaboration regarding CPET videos. Establishing comprehensive training and certification for practitioners is essential, integrating practical skills with theory to ensure accurate information delivery. Educational content should also include relevant medical fields to address the current shortage of multidisciplinary expertise. In addition, social media platforms should collaborate with medical experts to develop accreditation systems for content creators, enabling audiences to identify reliable sources and reducing misinformation. Involving physicians and qualified professionals in video production can further improve health content credibility. Lastly, optimizing video structure, minimizing medical jargon, and providing clear instructions are crucial for improving audience understanding and engagement. These strategies can significantly increase public comprehension of CPET and promote its wider clinical adoption.

Conclusion

In conclusion, CPET content on TikTok, a popular platform for health videos, is sparse and generally of low quality, falling short of other health-related videos in both quantity and quality. The information conveyed is often misleading, reflecting creators' limited understanding of CPET and posing significant safety risks. Despite these deficiencies, videos uploaded by physicians tend to be of relatively higher quality, and viewers are therefore advised to prioritize physician-produced CPET videos to avoid misinformation. Future interventions should focus on establishing standardized training and certification for CPET, enhancing physician leadership within multidisciplinary CPET teams, and leveraging the unique benefits of CPET. Additionally, social media platforms should collaborate with CPET healthcare providers and video creators to develop robust certification systems for medical content production, thus improving the quality of CPET videos for more effective audience education. Through these efforts, the theoretical knowledge and operational skills of CPET healthcare providers can be enhanced, leading to higher-quality videos with less misinformation. This would help patients and viewers receive accurate and reliable CPET information, foster greater societal understanding and acceptance of CPET, and contribute to the advancement of public health.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251341090 - Supplemental material for TikTok's cardiopulmonary exercise testing videos: A content analysis of quality and misinformation

Supplemental material, sj-docx-1-dhj-10.1177_20552076251341090 for TikTok's cardiopulmonary exercise testing videos: A content analysis of quality and misinformation by Xun Gong, Zhineng Zhang, Bo Dong and Hongwei Pan in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors express their gratitude to the participants who contributed to the study.

Ethical considerations

No clinical data, human specimens, or laboratory animals were used in this study. All information was obtained from publicly released TikTok videos, and none of the data involved personal privacy concerns. Additionally, this study did not involve any interaction with users; therefore, no ethics review was needed.

Author contributions

XG conceived and designed the study and drafted the original manuscript. XG and BD reviewed and scored the videos. XG and ZNZ collected, analyzed, and interpreted the data. HWP designed the research, supervised the report, and revised the manuscript. All authors contributed to the article and approved the final version.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported by the Hunan Provincial Natural Science Foundation project (grant number 2022JJ30337), the Hunan Provincial Health Commission Research Project (grant number B202303017770), and the Scientific Research Fund of Hunan Provincial Education Department (grant number 22B0051).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Raw data were generated at Hunan Provincial People's Hospital. Derived data supporting the findings of this study are available from the corresponding authors upon request.

Guarantors

ZZ and BD.

Supplemental material

Supplemental material for this article is available online.
