Abstract

Objective

Digital communication between patients and healthcare teams is increasing. Most patients find this effective, yet many patients remain digitally isolated, a social determinant of health. This study investigates patient attitudes toward healthcare's newest digital assistant, the chatbot, and perceptions regarding healthcare access.

Methods

We conducted a mixed methods study among patient users of a large healthcare system's chatbot integrated within an electronic health record. We surveyed patient users online (617/3089 responded; response rate 20%) using de novo and validated items, purposively oversampling by race and ethnicity. In addition, between November 2022 and May 2024 we conducted semi-structured interviews with users (n = 46) purposively sampled based on diversity, age, or select survey responses.

Results

In surveys, 213/609 (35.0%) felt they could not understand the chatbot completely, and 376/614 (61.2%) felt the chatbot did not completely understand them. Of 238 users who felt completely understood by the chatbot, 178 (74.8%) believed the chatbot was intended to help them access healthcare; in comparison, of 376 users who felt not completely understood, 155 (41.2%) believed the chatbot was intended to help them access healthcare (p < 0.001). In interviews, Black, Hispanic, less educated, younger, and lower-income participants expressed more positivity about the chatbot aiding healthcare access, citing convenience and a perceived absence of judgment or bias.

Conclusion

Patients’ experience with the chatbot appears to affect their perception of the intent behind the chatbot's implementation; those adept at chatbot communication or within historically less trusting groups may prefer a quick, non-judgmental answer to questions via the chatbot rather than human interaction. Although our findings are limited to one health system's existing chatbot users, as patient-facing chatbots expand, attention to these factors can support healthcare systems’ efforts to design chatbots that meet the unique communication needs of all patients, especially those at risk of digital isolation.

Background

Spurred on by COVID-19, patients have increasingly moved to digital communication, such as patient messaging, with their healthcare teams.1–3 Digital literacy, a skill necessary to access digital technologies for healthcare, refers here to the “ability of individuals to acquire, process, communicate, and understand health information and services, make effective health decisions, and promote and improve individual health through the use of digital technologies.”4,5 Such digital literacy, a social determinant of health, typically requires English proficiency and visual acuity and is associated with health outcomes.5–7

Digital isolation refers to “when people find themselves unable to access the internet or digital media and devices as much as other people.”8 Approximately 15% of patients remain digitally isolated, and only one-third of US adults have full digital capability.4 Digital isolation stems less from the availability of technology than from difficulty adapting to this mode of healthcare communication or from communication barriers.4 Risk factors for digital isolation include lacking a high school education and being over age 65, with patients over age 75 most likely to struggle.4,9 Digital isolation often overlaps with the patients in greatest need of frequent communication because of chronic health conditions.4,9,10

The transition to digital communication has been implemented largely without patient digital literacy assessment or education.3 Digital literacy assessment tools now exist and can be incorporated into electronic health records (EHRs).4,9,11,12 When such tools are paired with face-to-face patient education, digital literacy has been shown to improve.13

Chatbots, artificial intelligence (AI) agents meant to mimic human conversation, are a newer form of direct communication with patients. Patients frequently use public chatbots, such as ChatGPT, to seek medical information.14–16 Patient-facing chatbots are integrated into the EHR portal interface to assist with patient navigation.17 As their capabilities expand, chatbots have the potential to support 24/7, high-quality, patient-specific care that can improve engagement and assist in healthcare navigation.18–21 However, chatbots have also been introduced with little guidance on how best to use them for patient benefit.22

Here, we report on a mixed methods investigation of how patients perceive a chatbot integrated into their EHR and their attitudes toward this chatbot's role in healthcare access. For this study, we define “healthcare access” as “timely use of personal health services to achieve the best possible health outcomes.”23

Methods

Study setting

Our study involved a “general” patient-facing chatbot integrated within the EHR of a large US health system. The health system partnered with a third party for the chatbot platform but has significant latitude over chatbot features and performance.24 In our survey and interviews, we referred to the chatbot by the name the health system gave it; in this manuscript, we refer to it as “the chatbot.” Although the chatbot is also available online to a broader audience, this study recruited only patient-users within the EHR portal. Launched in October 2018, the chatbot assists with patient tasks (e.g., reporting results, navigating the portal, and scheduling appointments) and is linked to the medical record, allowing it to pull patient-specific information. At the time of our study, the chatbot used natural language processing to match patient users’ text-based queries to system intents, providing direct question-and-answer as well as decision-tree-based responses to mimic human conversation. Responses were not generated with a large language model. The chatbot “pops up” automatically as an animated human face when users log in to their patient portal. As of September 2024, the chatbot was receiving more than 200,000 queries/month from 35,396 unique users. The health system is a three-state hospital system with nineteen affiliated hospitals and hundreds of clinics in rural, suburban, and urban settings. The study was approved as exempt research by the Colorado Multiple Institutional Review Board (21-5127).
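To make the intent-matching description concrete, the sketch below shows one simple way a rule-based chatbot can map free-text queries to predefined intents and fall back when nothing matches. It is a hypothetical illustration only: the intent names, example phrases, canned responses, token-overlap scoring, and 0.5 threshold are our assumptions, and the source does not describe the third-party platform's actual NLP method.

```python
import re

# Hypothetical intents: each maps to example phrases and a canned response.
INTENTS = {
    "schedule_appointment": (
        ["schedule appointment", "book visit", "make appointment"],
        "I can help you schedule. Which clinic?",
    ),
    "view_results": (
        ["test results", "lab results", "see my results"],
        "Your results are under the Test Results tab.",
    ),
}
FALLBACK = "Sorry, I didn't understand. Could you rephrase?"


def tokens(text):
    """Lowercase the text and return its set of alphabetic word tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))


def match_intent(query, threshold=0.5):
    """Return (intent, response) for the best token-overlap match, or (None, FALLBACK)."""
    q = tokens(query)
    best, best_score = None, 0.0
    for intent, (examples, _) in INTENTS.items():
        for example in examples:
            ex_tokens = tokens(example)
            # Fraction of the example phrase's words that appear in the query.
            score = len(q & ex_tokens) / len(ex_tokens)
            if score > best_score:
                best, best_score = intent, score
    if best is None or best_score < threshold:
        return None, FALLBACK
    return best, INTENTS[best][1]
```

A follow-up prompt such as “Which clinic?” is where a decision-tree branch would take over; real platforms typically use trained intent classifiers rather than raw word overlap.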

Survey sample and procedures

We surveyed patient-users who exchanged at least three back-and-forth messages with the chatbot in one session between July 2022 and September 2023. Our sampling procedure was explicitly designed around diversity. We sent a total of 3089 survey invitations over three phases. In Phase 1, we received 142 responses via random sampling of eligible patient users. To increase the diversity of our sample, in Phase 2 (n = 298) and Phase 3 (n = 177), we sampled in a 2:1 ratio of White non-Hispanic users to users identifying as Black, Hispanic, or Asian to allow exploration of chatbot use by race and ethnicity. We prioritized diverse sampling for reasons of justice and inclusivity; had we continued as in Phase 1, participants would have been mainly White, which would not have supported an ethical commitment to inclusivity in research. There were no repeat participants.
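The Phase 2 and 3 allocation can be sketched as a stratified random draw. This is a hypothetical illustration, not the study's recruitment code: the two-stratum grouping, the tuple layout, the function name, and the fixed seed are assumptions, and the source does not specify how invitees were drawn within each stratum.

```python
import random


def draw_invitations(pool, n_invites, ratio=(2, 1), seed=0):
    """Hypothetical 2:1 stratified draw of survey invitees.

    pool: list of (user_id, group) tuples, where group is "white_nh"
    (White non-Hispanic) or "bha" (Black, Hispanic, or Asian).
    """
    rng = random.Random(seed)
    white = [u for u, g in pool if g == "white_nh"]
    bha = [u for u, g in pool if g == "bha"]
    # Split the invitation count according to the ratio.
    n_white = n_invites * ratio[0] // sum(ratio)
    n_bha = n_invites - n_white
    if n_white > len(white) or n_bha > len(bha):
        raise ValueError("stratum too small for requested allocation")
    # Simple random sampling without replacement within each stratum.
    return rng.sample(white, n_white) + rng.sample(bha, n_bha)
```

For example, drawing 30 invitations from a pool of 100 White non-Hispanic and 40 Black, Hispanic, or Asian users yields 20 and 10 invitees, respectively.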

De novo survey questions (Supplemental Materials Survey) asked about the patient-users’ experience with the chatbot, as well as trust, bias, privacy, and perceived intent of the chatbot. Survey questions were a combination of freely available questions and those created by our team related to the novel topic. Survey questions were reviewed for face and content validity by the Community Board of the University of Colorado Center for Bioethics and Humanities (CBH). While the full survey does include validated questions, those questions were not included in this analysis. Users were invited to participate by email up to three times and offered $20 compensation. Data were collected and informed consent appropriate to survey research was obtained using REDCap (version 14.5.19).

Interview sample and procedures

We interviewed chatbot patient-users whose chatbot messages or survey answers contained ethically significant responses (e.g., misidentifying the chatbot as something other than a computer, or expressing extreme trust or distrust). We used purposive sampling to recruit individuals diverse in age, gender, race/ethnicity, rural/urban status, and other sociodemographic traits. Data saturation was reached after 40 interviews, with one exception: as the topic of digital literacy emerged, we sampled five additional older adults to achieve saturation. We also interviewed two healthcare chatbot design engineers for their perspectives.

Semi-structured interviews lasted 30–60 min and asked open-ended questions about the chatbot user experience and ethical concepts. The interview guide was initially reviewed by the Community Board at CBH and a geriatric-focused Patient and Family Research Advisory Council in February 2022 and was then refined based on emerging findings from each cohort (Supplement Methods Interview Guide). Oral consent was obtained, and interviews were recorded using Zoom (version 5.0.2) and professionally transcribed.

Analysis

Quantitative analysis

We used descriptive statistics to analyze sociodemographic characteristics. Our primary outcomes were two survey questions pertaining to accessibility: “To what extent do you think [the chatbot] is intended to help patients like you navigate the UCHealth system?” and “To what extent do you think [the chatbot] is intended to limit your access to doctors and nurses?” Both questions had four response options: “great extent,” “some extent,” “very little,” and “not at all.” For both questions, we combined “very little” and “not at all,” yielding three response categories per outcome. We did so because few respondents chose these options for the system navigation question, and we kept the same categorization for the other access question for consistency.
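The recoding of the two access outcomes can be expressed as a simple mapping. The analysis itself was done in Stata; this Python sketch is only an illustration, and the function name, the label for the pooled level, and the example responses are hypothetical.

```python
from collections import Counter

# Map the four survey response options to the three analysis categories;
# "very little" and "not at all" are pooled into one level.
RECODE = {
    "great extent": "great extent",
    "some extent": "some extent",
    "very little": "very little/not at all",
    "not at all": "very little/not at all",
}


def recode_responses(responses):
    """Return counts of the collapsed categories, skipping missing (None) answers."""
    return Counter(RECODE[r] for r in responses if r is not None)
```

For instance, the answers “great extent,” “not at all,” “very little,” a missing answer, and “some extent” collapse to one, one, and two responses in the three categories, with the missing answer excluded.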

To assess comfort with the Internet, we asked “Overall, how often do you use the Internet?”, with answers ranging from “never” to “most of the day,” and “Overall, how confident do you feel using computers, smartphones, or other electronics to do the things you need to do online?”, with responses ranging from “not at all confident” to “very confident.”25

For this study, we examined whether patient-users’ perceptions of access were statistically associated with key variables, such as their understanding of the chatbot (and the chatbot's understanding of them), communication abilities, comfort with the Internet, or the belief that the chatbot was asking them to do something they did not want to do. We used Pearson's chi-square tests of independence and, for small cell counts, two-tailed Fisher's exact tests to assess associations between these categorical variables. A p-value of <0.05 was considered statistically significant. All data cleaning and analyses were conducted using Stata version 18.1 (StataCorp, College Station, TX).
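The table footnotes state that Fisher's exact test was used “for values less than 10.” A minimal sketch of that decision rule for a 2 × 2 table follows, assuming the rule applies to expected cell counts; the study's tables may be larger than 2 × 2, and the function names and threshold parameter are ours. The chi-square p-value uses the 1-degree-of-freedom identity P(χ² > x) = erfc(√(x/2)), and the two-sided Fisher p-value sums the hypergeometric probabilities of all tables no more likely than the observed one.

```python
from math import comb, erfc, sqrt


def expected_counts(table):
    """Expected cell counts under independence for a 2 x 2 table [[a, b], [c, d]]."""
    (a, b), (c, d) = table
    n = a + b + c + d
    rows, cols = (a + b, c + d), (a + c, b + d)
    return [[rows[i] * cols[j] / n for j in range(2)] for i in range(2)]


def pearson_chi2_2x2(table):
    """Pearson chi-square (no continuity correction) and its 1-df p-value."""
    exp = expected_counts(table)
    chi2 = sum((table[i][j] - exp[i][j]) ** 2 / exp[i][j]
               for i in range(2) for j in range(2))
    return chi2, erfc(sqrt(chi2 / 2))


def fisher_exact_2x2(table):
    """Two-sided Fisher's exact p-value with fixed row and column margins."""
    (a, b), (c, d) = table
    r1, r2, c1 = a + b, c + d, a + c
    n = r1 + r2

    def prob(x):  # hypergeometric probability that the top-left cell equals x
        return comb(r1, x) * comb(r2, c1 - x) / comb(n, c1)

    p_obs = prob(a)
    lo, hi = max(0, c1 - r2), min(r1, c1)
    # Small relative tolerance so ties with the observed table are counted.
    return min(1.0, sum(prob(x) for x in range(lo, hi + 1)
                        if prob(x) <= p_obs * (1 + 1e-7)))


def run_association_test(table, threshold=10):
    """Use Fisher's exact test if any expected count is below threshold, else Pearson."""
    if min(min(row) for row in expected_counts(table)) < threshold:
        return "fisher", fisher_exact_2x2(table)
    return "pearson", pearson_chi2_2x2(table)[1]
```

With a sparse table such as [[3, 1], [1, 3]], every expected count is 2, so the rule selects Fisher's exact test; larger tables fall through to the chi-square test.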

Qualitative analysis

Interviews were analyzed using a modified constructivist grounded theory approach.26 Following interview transcription, we developed the codebook using an open coding approach to capture and categorize important themes. Two independent coders analyzed all interviews in ATLAS.ti (version 24.0.0.29576), and a third adjudicator resolved coding differences. Constant comparative techniques were applied to clarify codes and the connections between them.27

Mixed methods integration

The survey initially preceded the interviews, but overlapping waves meant the methods were ultimately concurrent. For this study, our mixed methods integration began with qualitative themes about the chatbot's perceived impact on accessibility. We then explored survey data to compare the primary outcomes by user sociodemographic characteristics, which we hypothesized were associated with chatbot users’ perceptions of the chatbot's impact on healthcare access. Because the survey came first temporally, we present its results first. Qualitative and quantitative researchers met six times to integrate results.

Results

Quantitative results

In total, we received 617 completed surveys, a response rate of 20.0% (617/3089). Respondents’ mean (SD) age was 49.3 (16.8) years; 424/600 (70.7%) reported female sex; and 385/617 (62.4%) reported White non-Hispanic race and ethnicity, 51/617 (8.3%) Black non-Hispanic, 143/617 (23.2%) Hispanic (all races), 29/617 (4.7%) Asian non-Hispanic, and 9/617 (1.5%) other races and ethnicities (Table 1).

Table 1. Demographics of interview and survey participants.

(a) Column totals vary based on missingness in survey responses; not all questions were mandatory. (b) Interviews asked participant gender; the survey asked participant sex. (c) Interviewees in the 65+ category were aged 65–75; survey participants in the 65+ category were aged 65–85. (d) “Hispanic” includes the following ethnicity categories: Mexican, Mexican American, Chicano, Puerto Rican, Cuban, or Another Hispanic, Latino, or Spanish Origin. (e) “Asian” includes the following race categories: Chinese, Filipino, Asian Indian, Vietnamese, Korean, Japanese, or Other Asian. (f) Response to the survey question “In your usual language, do you have difficulty communicating, for example, understanding or being understood?”

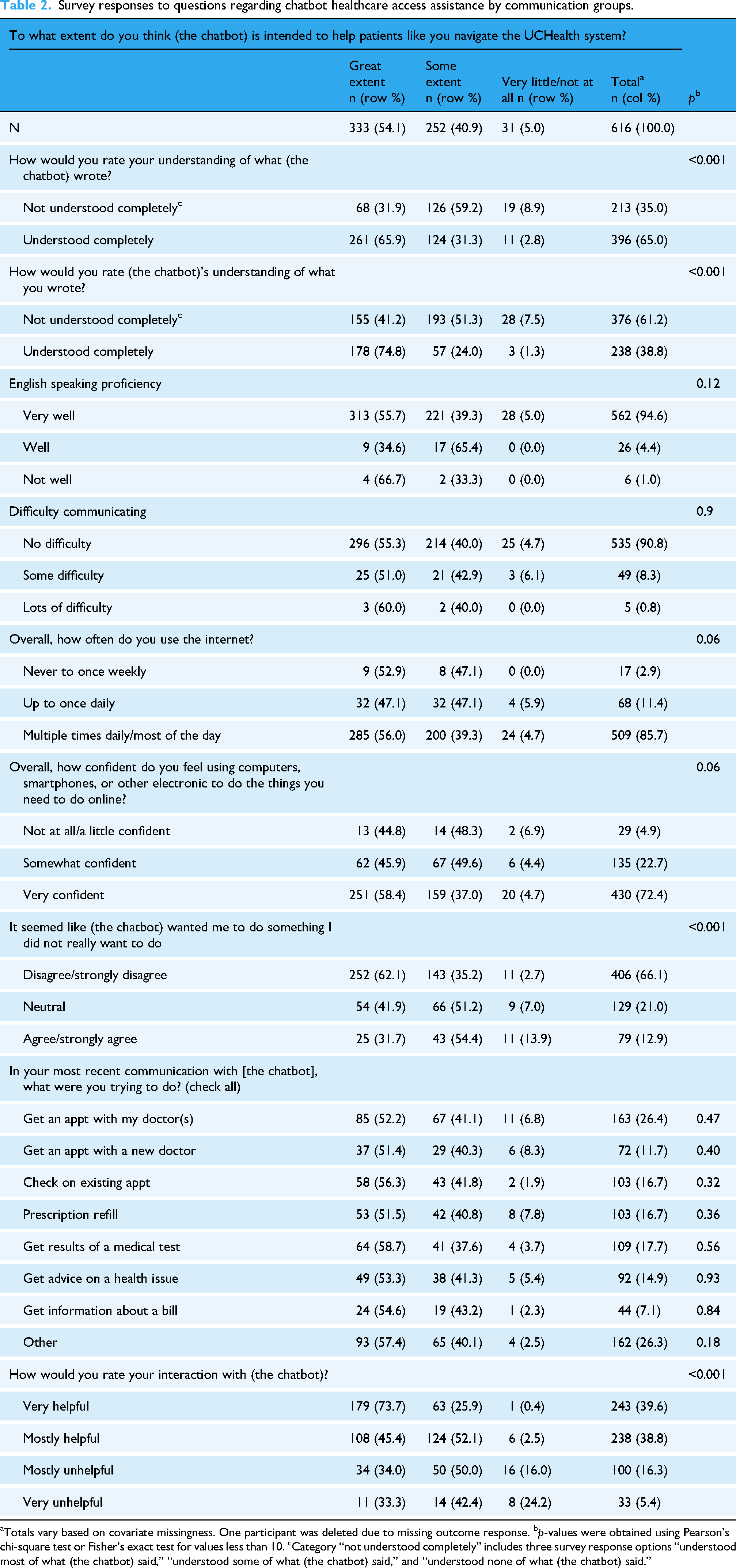

Overall, survey respondents were predominantly middle-aged, female, healthy, and technologically comfortable adults. On the HCAHPS self-reported health scale (1 = excellent, 5 = poor), respondents had an average overall health rating of 2.75, slightly better than the 2023 national average of 2.98.28 Fewer than 1% (5/590) reported “a lot of difficulty” communicating in their usual language. The group also had high English proficiency; only 6/595 respondents (1%) reported speaking English “not well” (Table 2). Nearly three quarters (430/594, 72.4%) of users felt very confident using electronic devices, and most (509/594, 85.7%) used the internet several times a day or most of the day (Table 2).

Table 2. Survey responses to questions regarding whether the chatbot helps with healthcare access, by communication group.

(a) Totals vary based on covariate missingness; one participant was excluded due to a missing outcome response. (b) p-values were obtained using Pearson's chi-square test, or Fisher's exact test for cell values less than 10. (c) The category “not understood completely” includes three survey response options: “understood most of what (the chatbot) said,” “understood some of what (the chatbot) said,” and “understood none of what (the chatbot) said.”

Most respondents (333/616, 54.1%) felt that the chatbot was intended to help patients navigate the health system to a “great extent,” and an additional 252/616 (40.9%) felt that the chatbot was intended to help to “some extent” (Table 2). When asked about comprehension between the user and the chatbot, 213/609 (35.0%) felt that they could not understand the chatbot completely, and 376/614 (61.2%) felt that the chatbot could not understand them completely.

In bivariate analysis, perceptions of access were significantly associated with the chatbot's understanding of what the user had entered. Of users who felt completely understood by the chatbot, 178/238 (74.8%) believed the chatbot was intended to help them access healthcare, compared with 155/376 (41.2%) of users who felt not completely understood (p < 0.001). A similar association was seen between users’ ability to understand what the chatbot wrote back and the perceived helpfulness of the chatbot. English proficiency and the chatbot use case were not statistically associated with perceptions of access.
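As an arithmetic check on this comparison, the reported counts can be arranged as a 2 × 2 table. This assumes the 178 and 155 respondents are those answering “great extent” (their sum, 333, matches the number giving that answer overall) and collapses the remaining answers into one column; the study's own test used three outcome categories, so the statistic below is illustrative rather than a reproduction of the reported analysis.

```python
from math import erfc, sqrt


def chi2_2x2(a, b, c, d):
    """Pearson chi-square for [[a, b], [c, d]] via N(ad - bc)^2 / product of margins."""
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    return chi2, erfc(sqrt(chi2 / 2))  # p-value for 1 degree of freedom


# Rows: felt completely understood (238) vs. not completely understood (376).
# Columns: "great extent" vs. any other answer (collapsed here for illustration).
chi2, p = chi2_2x2(178, 238 - 178, 155, 376 - 155)
print(f"chi2 = {chi2:.1f}, p = {p:.1e}")
```

With these counts the statistic is roughly 66 on 1 degree of freedom, far beyond the threshold for p < 0.001, consistent with the reported result.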

When asked whether the chatbot is intended to limit access to doctors and nurses, responses showed a similar association with users’ understanding of the chatbot. Overall, 84/615 (13.7%) of respondents said the chatbot was intended to limit their access to doctors and nurses “to a great extent” (Table 3). Among users who understood the chatbot completely, 248/396 (65.1%) felt that the chatbot was not intended to limit access to providers, compared with 101/212 (47.6%) of those who could not fully understand the chatbot (p < 0.001). Although not statistically significant, a relationship of similar direction and magnitude was observed between feeling better understood by the chatbot and reporting that the chatbot was not intended to limit access to providers (Table 3).

Table 3. Survey responses to questions regarding whether the chatbot limits access to doctors and nurses, by communication group.

(a) Totals vary based on covariate missingness; two participants were excluded due to missing outcome responses. (b) p-values were obtained using Pearson's chi-square test, or Fisher's exact test for cell values less than 10. (c) The category “not understood completely” includes three survey response options: “understood most of what (the chatbot) said,” “understood some of what (the chatbot) said,” and “understood none of what (the chatbot) said.”

Qualitative results

Of 108 interview invitations sent, we completed 46 interviews with patient-users of the chatbot (overall response rate of 42.6%). Interviewee demographics are presented in Table 1.

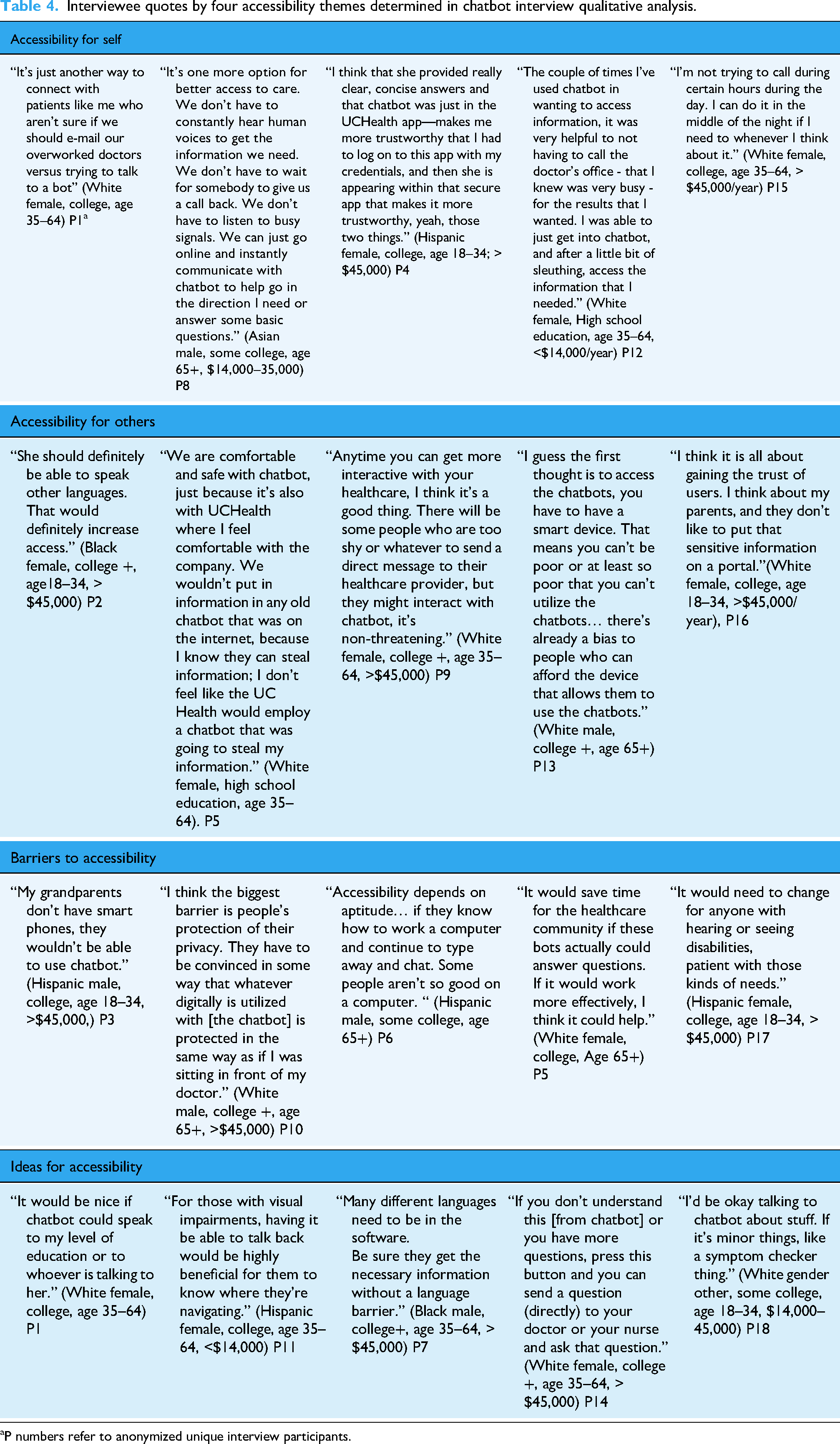

Our analysis found that perceptions of accessibility fit into four main themes: accessibility for self, accessibility for others, barriers to accessibility, and ideas to improve accessibility (Table 4). The authors developed these four themes as they emerged from the qualitative data.


Table 4. Interviewee quotes by the four accessibility themes identified in qualitative analysis of chatbot interviews.

P numbers refer to anonymized unique interview participants.

A speech-impaired person or blind person needs to have somebody work with them, unless they're using a keyboard that can interact with them and talk to them. (Hispanic male, some college, age 65+)


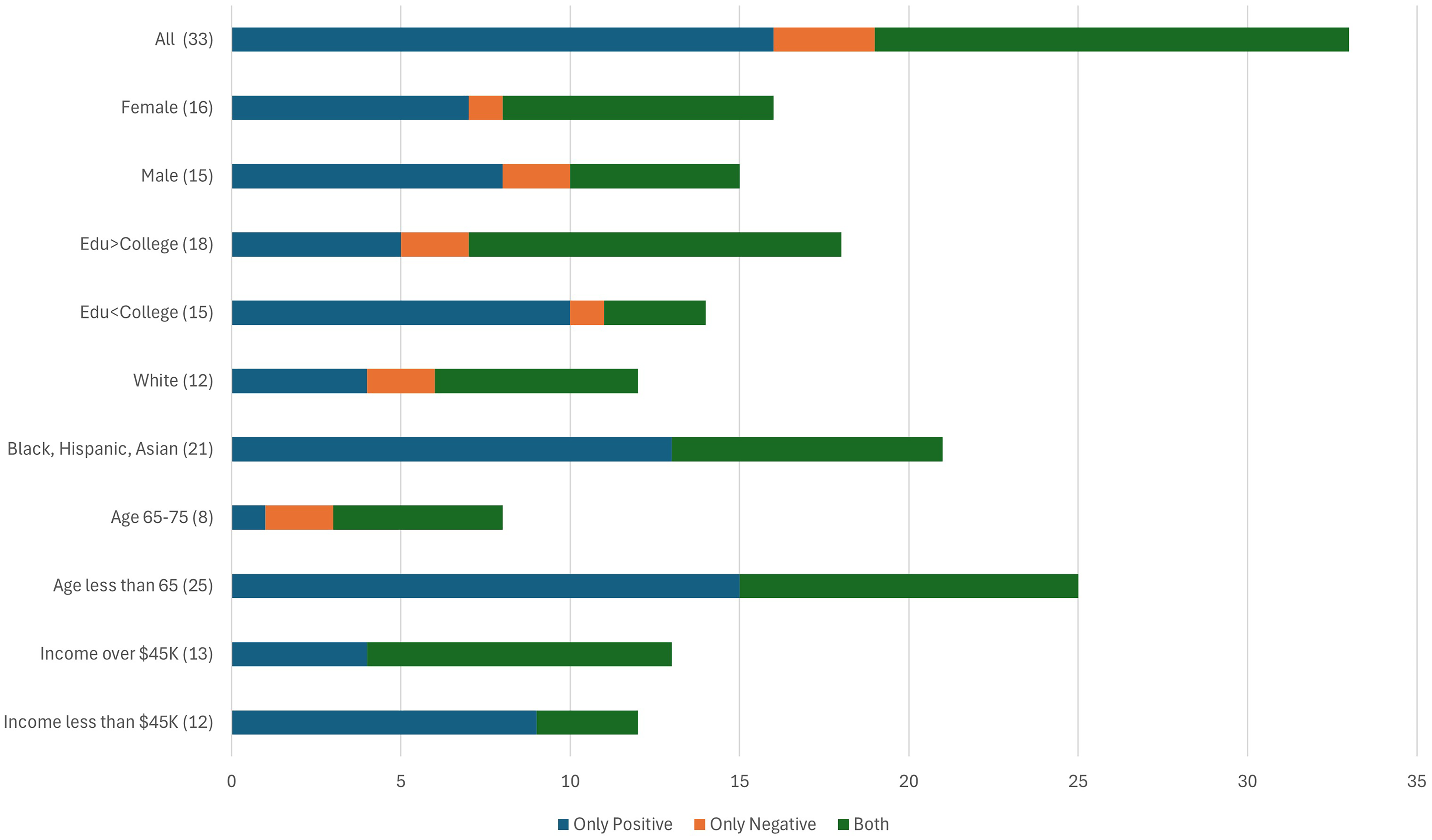

As part of our analysis, we classified each interviewee's view of the chatbot's impact on access as “only positive,” “only negative,” or “both.” After sorting these perceptions by demographic group, we observed that younger, less educated, and lower-income interviewees, and those who identify as Black, Asian, or Hispanic, appeared to express more positivity about access than other groups (Figure 1). This emergent finding led us to re-examine our survey data for group differences on relevant access questions. Although these differences were not statistically significant in the survey, Black, Hispanic, lower-income, less educated, and younger interviewees did express more positivity about how the chatbot helps with healthcare access. Along with convenience, these users appeared to cite the absence of human judgment or human bias as reasons (Table 5).

It makes me feel a little better, because as you say, it's a robot, so they can’t judge me. I don’t feel like I’m being judged or looked at in a certain way. (Black race, some college, other gender, age 18–34)
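The grouping behind Figure 1 amounts to a cross-tabulation of coded valence by demographic group. The sketch below is hypothetical: the records are invented, not study data, the function names are ours, and because each interviewee belongs to several groups (age, education, income, race/ethnicity), it assumes one record per interviewee per grouping variable.

```python
from collections import Counter, defaultdict

VALENCES = ("only positive", "only negative", "both")


def valence_by_group(records):
    """Tally coded impact-on-access valences per demographic group.

    records: iterable of (group, valence) pairs, one per interviewee per grouping variable.
    """
    table = defaultdict(Counter)
    for group, valence in records:
        if valence not in VALENCES:
            raise ValueError(f"unexpected valence: {valence!r}")
        table[group][valence] += 1
    return dict(table)


def share_only_positive(table):
    """Proportion of interviewees in each group coded 'only positive'."""
    return {g: c["only positive"] / sum(c.values()) for g, c in table.items()}
```

For example, two invented interviewees aged 18-34 coded “only positive” and “both,” and two aged 65+ coded “both” and “only negative,” give “only positive” shares of 0.5 and 0.0, respectively.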

Figure 1. Interview participants’ responses to whether the chatbot improves healthcare access.

Table 5. Insight quotes regarding why certain demographic groups expressed high chatbot positivity.

P numbers refer to anonymized unique interview participants.

This observation was also echoed by the chatbot design engineers we interviewed:

…if they know that their information is private…then they're more comfortable disclosing things (to chatbot) that the system could help them with health-wise…for substance use or gender-affirming care, seeking abortion… Actually, we’ve seen the opposite. We have a chatbot deployed in sexual and reproductive health topics, and we’ve seen a lot of appointments being booked from the chatbot…after they ask a couple of questions.

Optimal system, chatbot and patient factors to promote chatbot user benefits and those excluded.

Discussion

Several important findings emerge from this mixed methods study of patient-facing chatbots. First, English-speaking, visually able, digitally literate patients found value in the chatbot for navigating their healthcare needs efficiently and expressed a desire to expand the chatbot's capabilities. These findings support further investment in this technology to aid healthcare efficiency and access.31

Second, our findings confirm that “most patients” does not mean “all patients.” Although our sample by definition consisted of patients who use the chatbot, patients from most demographic groups shared concerns about how patients who are digitally isolated or have other communication barriers can continue to access healthcare in the chatbot age. Older adults expressed the most concern about digitally isolated patients, possibly because of awareness of peers who struggle or their own perceived digital challenges relative to others. By exploring factors associated with the nonuse of technology, we can intervene on modifiable factors to reduce digital health disparities.4 For example, because the patients most in need of frequent communication with their healthcare team, such as chronically ill, medically complex older adults, often overlap with those who are digitally isolated, health systems can incorporate routine digital literacy assessment through EHR-integrated online tools.8,10,11 Pertinent examples of EHR-integrated digital literacy assessments include the eHealth Literacy Scale (eHEALS),9 the Digital Health Engagement Tool (DHET),11 and the Mobile Device Proficiency Questionnaire (MDPQ-16),12 which is specifically suited to older adults. Based on the results of such an assessment, one can determine whether digital communication is feasible (e.g., with coaching or a help desk, educational classes, or a proxy such as a trusted family member or caregiver) or whether an alternative communication plan is required. As another example of removing accessibility barriers, the health system recently added interactive voice response (IVR), recording an additional 94,478 IVR chatbot queries from 15,302 unique users in September 2024, and plans to add Spanish in 2025.

Third, our survey results suggest that when chatbot users cannot understand the chatbot's messages or feel that the chatbot cannot understand theirs, it not only affects their experience with the chatbot but also worsens their perception of the intent behind the health system's use of the chatbot. Because patients believe in the positive intent of healthcare, they expect digital tools to be usable and effective when released. In other words, when the chatbot “underperforms” by not meeting patients’ usability expectations, the technology widens patients’ uncertainty gap, leading them to question the positive intent of healthcare as well as how to communicate effectively with their healthcare team in the digital era.32 Given that evaluation frameworks for patient-facing chatbots are a current research priority, these findings underscore the importance of assessing whether patients can understand chatbot messages. As a matter of justice, as outlined in the White House Blueprint for an AI Bill of Rights and other AI ethics guidelines, this includes ensuring that chatbot messages are equally understandable by all user groups.33 In addition, patients should be educated on what the chatbot is, how to use it, what it can do, and how and where information is stored and/or communicated to their healthcare team.

Fourth, demographic groups that have historically distrusted healthcare34–38 appeared positive about the chatbot in our interviews. Although this was surprising, our findings suggest possible explanations. For historically and socially marginalized people, access outside traditional working hours and efficiency may be particularly important. In addition, many of these users discussed the chatbot's favorable traits, including “being seen,” “avoidance of risk of shame,” and providing an alternative to prior experiences of bias or judgment from healthcare personnel, especially around stigmatized topics. Other studies have found that, in some circumstances, people may prefer chatbots over people for certain topics.39–41 Given the known potential for bias in AI, whether our participants are correct that patient-facing chatbots are free of bias is unknown and deserves further study.

Limitations

This study occurred at one health system with one chatbot. Our sampling strategy was not meant to represent a general patient population; instead, we oversampled based on diversity to allow subgroup comparisons. Our findings therefore may not generalize to all patient populations or chatbots. The relatively small number of respondents in key categories limited our ability to conduct meaningful multivariable analysis; thus, our findings should be seen as exploratory and as motivating additional research. The low survey response rate raises the possibility of non-response bias. Our reliance on self-report (e.g., for assessing chatbot understanding) could introduce social desirability bias, and our use of de novo items may limit validity. Last, by recruiting existing chatbot users, we undersampled the patients most likely to be digitally isolated, a limitation of the study design. Using digital literacy assessment tools integrated within the EHR, future research could target recruitment to include this essential patient group. Additional publications are in progress related to survey and interview questions not included in this analysis.

Conclusion

EHR-integrated chatbots can improve patients’ access to healthcare while also improving the patient experience and the efficiency of healthcare delivery. Further study is essential to understand whether chatbots are a preferred communication method for certain demographic groups and/or medical topics.

Healthcare systems have an ethical obligation to integrate digital literacy assessment tools concomitant with chatbot technology and provide education for patients and families in the use of such tools, while providing communication options for patients at every digital literacy level.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076251337321 - Supplemental material for Patient-facing chatbots: Enhancing healthcare accessibility while navigating digital literacy challenges and isolation risks—a mixed-methods study

Supplemental material, sj-docx-1-dhj-10.1177_20552076251337321 for Patient-facing chatbots: Enhancing healthcare accessibility while navigating digital literacy challenges and isolation risks—a mixed-methods study by Annie A Moore, Jessica R Ellis, Natalia Dellavalle, Marlee Akerson, Matt Andazola, Eric G Campbell and Matthew DeCamp in DIGITAL HEALTH

Footnotes

Ethical considerations

The study was approved as exempt research by the Colorado Multiple Institutional Review Board (21-5127).

Consent to participate

Written informed consent to participate was obtained from survey participants and documented in REDCap; verbal consent to participate was obtained from interview participants after obtaining consent to record the conversation.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Greenwald Foundation Making a Difference Program, University of Colorado School of Medicine Brown/Moore Endowed Chair for Excellence in the Patient Experience.

Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The datasets generated during and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Supplemental material

Supplemental material for this article is available online.

References
