Abstract

Background

Amyotrophic lateral sclerosis (ALS) is a rare neurodegenerative disease that leads to progressive motor weakness and eventual death. Recent years have seen an increase in online information on ALS, with the popular video platform YouTube becoming a prominent source. We aimed to evaluate the quality, reliability, actionability, and understandability of ALS videos on YouTube.

Methods

A search was performed using the keyword “Amyotrophic Lateral Sclerosis” on YouTube. A total of 240 videos were viewed and assessed by two independent raters. Video characteristics such as type of uploader, views, likes, comments, and Video Power Index were also collected.

Results

Videos had moderate to low quality and reliability (Global Quality Scale [GQS] and modified DISCERN [mDISCERN] median 2.5), and poor understandability and actionability (PEMAT total median 8.5). Among the general video characteristics, only length of video showed a significant positive correlation across the tools (with mDISCERN [p < 0.001]; with GQS [p < 0.001]; with PEMAT [p < 0.001]). Videos from physicians (p = 0.024), other healthcare professionals (p = 0.017), and educational channels (p = 0.001) had better quality when compared to others (significance level p < 0.05).

Conclusion

YouTube videos are a poor source of information for ALS as videos tend to have moderate to low quality and reliability and are poorly understandable and actionable. Longer videos, and videos uploaded by those in the healthcare and educational fields, were found to perform relatively better. Thus, when using YouTube, caution and careful attention to the video characteristics are recommended.

Introduction

Amyotrophic lateral sclerosis (ALS) is a neurodegenerative disease of the spinal cord, affecting the anterior horn cells, leading to gradual progressive motor weakness with intact sensory function. 1 The motor weakness is characterized by upper and lower motor neuron lesion manifestations, as well as bulbar motor weakness, progressing within a matter of months to years, eventually leading to death from complications arising from respiratory muscle weakness or paralysis. 1 With a worldwide prevalence of 4.42 per 100,000 persons and an incidence of about 1.59 per 100,000 person-years, the disease is rare. 2 ALS, however, is a fatal condition, as no curative treatment options exist at the time of writing; known pharmacologic and nonpharmacologic therapies are aimed only at slowing disease progression. 3 In recent years, there have been efforts to increase awareness of ALS as a disease. Utilization of social media with the Ice Bucket Challenge and establishment of May as ALS Awareness Month are examples of endeavors that have possibly contributed to an increase in information circulating in the public sphere as well as funding of research. 4

YouTube, as one of the most popular video platforms accessible to the general public, has become an important and widely used source of information for health-related topics, with an estimated 2.4 billion global viewers in 2024. 5 A 2024 study surveyed a total of 3000 YouTube users, 87.6% of whom watched healthcare-related content on the platform, with 84.7% indicating that they make decisions based on the videos they watch. 6 Because YouTube is a publicly accessible platform, content can be uploaded by different kinds of entities, institutions and individuals alike. Moreover, because patients and caregivers alike have access, misinformation regarding the disease and its possible management is now a concern for clinicians. Thus, we aimed to evaluate YouTube as a source of information through the following objectives: a) describe the population of YouTube videos and the sources that upload them; b) rate the content of relevant videos in terms of quality, reliability, understandability, and actionability; and c) investigate the factors that influence these parameters.

Methods

Search strategy

This research was a cross-sectional observational study carried out in February 2024. During data gathering, Google Chrome was used and placed in “incognito mode.” YouTube was subsequently opened without the use of an account, as was done in other studies. 7 When using online search engines such as YouTube, 90% of users view the items within the first 3–4 pages of the search results, and YouTube previously had 60 videos on each page.8,9 This served as the basis for establishing 240 videos (four pages of 60 videos) as an appropriate number of videos to be assessed. The keyword “Amyotrophic Lateral Sclerosis” was searched on February 23, 2024, with results sorted by “relevance,” as in previous studies.9,10 The first 240 videos were listed in a spreadsheet, in order of appearance, and screened for eligibility per the inclusion criteria set.

Inclusion criteria

We included only English-language videos with a runtime from 0.3 seconds to 20 minutes. Of the first 240 search results, 26 videos were excluded from the study for the following reasons: a) the video was not in English, b) it did not actually discuss any information on ALS, or c) it was over 20 minutes long. These parameters were also based on previous studies, with recent data also supporting the notion that longer videos tend to garner less interest.9–16

Data extracted

All pertinent data were recorded in a spreadsheet using Google Sheets. Upon watching the 240 videos, important parameters were recorded, such as date posted, length of video (in seconds), and number of views, likes, dislikes, and comments. “Video age” refers to the number of days from the date of posting until the date of access (February 23, 2024). The Video Power Index (VPI), a metric used in other studies to evaluate the quantifiable impact and popularity of a video,12–16 was calculated using the following formula: VPI = ([like × 100/{like + dislike}] × [views per day])/100.
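The VPI formula above can be illustrated with a short Python sketch. This is not the authors' spreadsheet implementation; the function name, the example counts, and the handling of videos with no likes or dislikes are assumptions made only for illustration.

```python
from datetime import date


def video_power_index(likes: int, dislikes: int, views: int, video_age_days: int) -> float:
    """VPI = (like ratio x views per day) / 100, following the formula in the text."""
    total_reactions = likes + dislikes
    # Assumption: a video with no likes or dislikes gets a like ratio of 0,
    # since the formula is undefined in that case.
    like_ratio = likes * 100 / total_reactions if total_reactions else 0.0
    # Assumption: treat a video posted on the access date as one day old.
    views_per_day = views / max(video_age_days, 1)
    return like_ratio * views_per_day / 100


# Hypothetical video, not taken from the study data.
posted = date(2023, 2, 23)
accessed = date(2024, 2, 23)  # date of access stated in the text
age_days = (accessed - posted).days
vpi = video_power_index(likes=90, dislikes=10, views=7300, video_age_days=age_days)
print(f"video age: {age_days} days, VPI: {vpi:.2f}")
```

Because the like ratio is capped at 100, the VPI can never exceed the views per day; it equals views per day only when a video has no dislikes.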

We also noted the topics discussed for each video, which were recorded in the form of a “dropdown select” format. Any combination of “Clinical Profile,” “Pathophysiology,” “Diagnosis,” “Therapeutic,” “Prognosis,” and “Personal Experiences” was selected as appropriate for each video, with allowance for multiple selections when applicable.

We also assessed the uploader of each video, noting the name of each channel and whether it was verified, before categorizing uploaders into six groups: physicians/physician groups, nonphysician healthcare channels, academic institutions, educational sites, news outlets, and “others.” Categories were determined from the description of the channel, as well as the scope of its uploaded videos. The category “Others” included personal vlogs of patients/caregivers, channels of nonhealthcare organizations, advocacy channels, channels belonging to companies, and uploaders whose identities were unclear at the time of data gathering.

Evaluation of videos

Two raters (LGVU and JPDM) viewed and rated each of the included videos independently. Videos were rated using three scales. The Global Quality Scale (GQS) is a 5-point Likert scale that assesses the overall quality of a video based on its flow and usefulness to patients.12,17 The modified version of the DISCERN (mDISCERN) tool was used to evaluate the reliability of each of the videos, as in several similar studies.2,8–10,15–24 This tool consists of five questions answerable by “yes” or “no,” for a total score of 0–5 points. A score of 0 indicates low reliability, while a score of 5 indicates high reliability of the content of a video.2,8–10,15–24 For both scores, similar interpretations are given (low: 1–2, moderate: 3, high: 4–5).9 The Patient Education Materials Assessment Tool (PEMAT) is a 26-item tool evaluating the understandability and actionability of the content of an audiovisual material. Each item is answered by “Agree” or “Disagree,” with a score of 1 or 0 given, respectively.25,26 A score above 70% is interpreted as understandable and/or actionable; a score below this threshold is interpreted as poor. 25 For each of the three abovementioned scales, a final combined score was calculated as the average of the two raw scores from the individual raters.
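The scoring arithmetic described above can be sketched in Python as follows. The item counts per PEMAT domain (19 understandability, 7 actionability) are an assumption inferred from the percentages reported in the Results, not something the Methods state explicitly, and the function names are hypothetical.

```python
# Assumption: domain sizes inferred from reported percentages
# (a score of 8 is reported as about 42%, a score of 1 as about 14%).
UNDERSTANDABILITY_ITEMS = 19
ACTIONABILITY_ITEMS = 7
PEMAT_THRESHOLD_PERCENT = 70.0  # threshold stated in the text


def combined_score(rater_a: float, rater_b: float) -> float:
    """Final combined score: the average of the two raters' raw scores."""
    return (rater_a + rater_b) / 2


def pemat_percent(points: float, n_items: int) -> float:
    """Convert a raw PEMAT score (1 point per 'Agree') to a percentage."""
    return points / n_items * 100


def meets_threshold(points: float, n_items: int) -> bool:
    """True when the percentage score is above the 70% threshold."""
    return pemat_percent(points, n_items) > PEMAT_THRESHOLD_PERCENT


# Hypothetical ratings for a single video.
understandability = combined_score(9, 7)
actionability = combined_score(1, 1)
u_pct = pemat_percent(understandability, UNDERSTANDABILITY_ITEMS)
a_pct = pemat_percent(actionability, ACTIONABILITY_ITEMS)
print(f"understandability: {u_pct:.1f}%, actionability: {a_pct:.1f}%")
```

Under these assumed item counts, a video would need a combined understandability score of at least 13.5 out of 19 to exceed the 70% threshold.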

Statistical analysis

Data were analyzed using JASP 0.19.0, a downloadable statistical analysis software [Wagenmaker]. The Shapiro–Wilk test was used to determine if each of the variables was normally distributed. Spearman's rank correlation was used to determine the inter-rater reliability for each of the three scales (GQS, mDISCERN, PEMAT), with p < 0.05 considered statistically significant. This statistic is appropriate for nonparametric data and provides a direct assessment for two raters. Correlations between the independent variables (the video parameters) and the corresponding scores for each tool used (GQS, mDISCERN, PEMAT) were also calculated with Spearman's rank correlation, with p < 0.05 considered statistically significant. The Kruskal–Wallis test was performed to determine if there were significant differences in quality, reliability, understandability, and actionability among the six uploader categories, with Dunn's post hoc test done to further characterize the differences. The Wilcoxon rank-sum test was also employed in comparing differences between uploader groups.
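To make the rank-based approach concrete, the following is a minimal pure-Python sketch of Spearman's rank correlation, computed as the Pearson correlation of tie-averaged ranks. The analysis in this study was run in JASP; the function names and rater scores below are hypothetical, and the sketch does not compute a p-value.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation applied to the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical GQS scores from two raters for six videos.
rater_1 = [2, 3, 1, 4, 2, 5]
rater_2 = [2, 3, 2, 4, 1, 4]
rho = spearman_rho(rater_1, rater_2)
print(f"Spearman's rho between raters: {rho:.3f}")
```

Averaging the ranks of tied values matters here because Likert-type scores such as the GQS produce many ties.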

Lastly, we used the STROBE (Strengthening the Reporting of Observational Studies in Epidemiology) cross-sectional checklist when writing our report. 27

Results

A total of 240 videos were watched and carefully screened. After initial screening, 26 videos were ultimately excluded from evaluation, leaving a final count of 214 videos included for evaluation (see Figure 1).

Flowchart illustrating the selection process for this study.

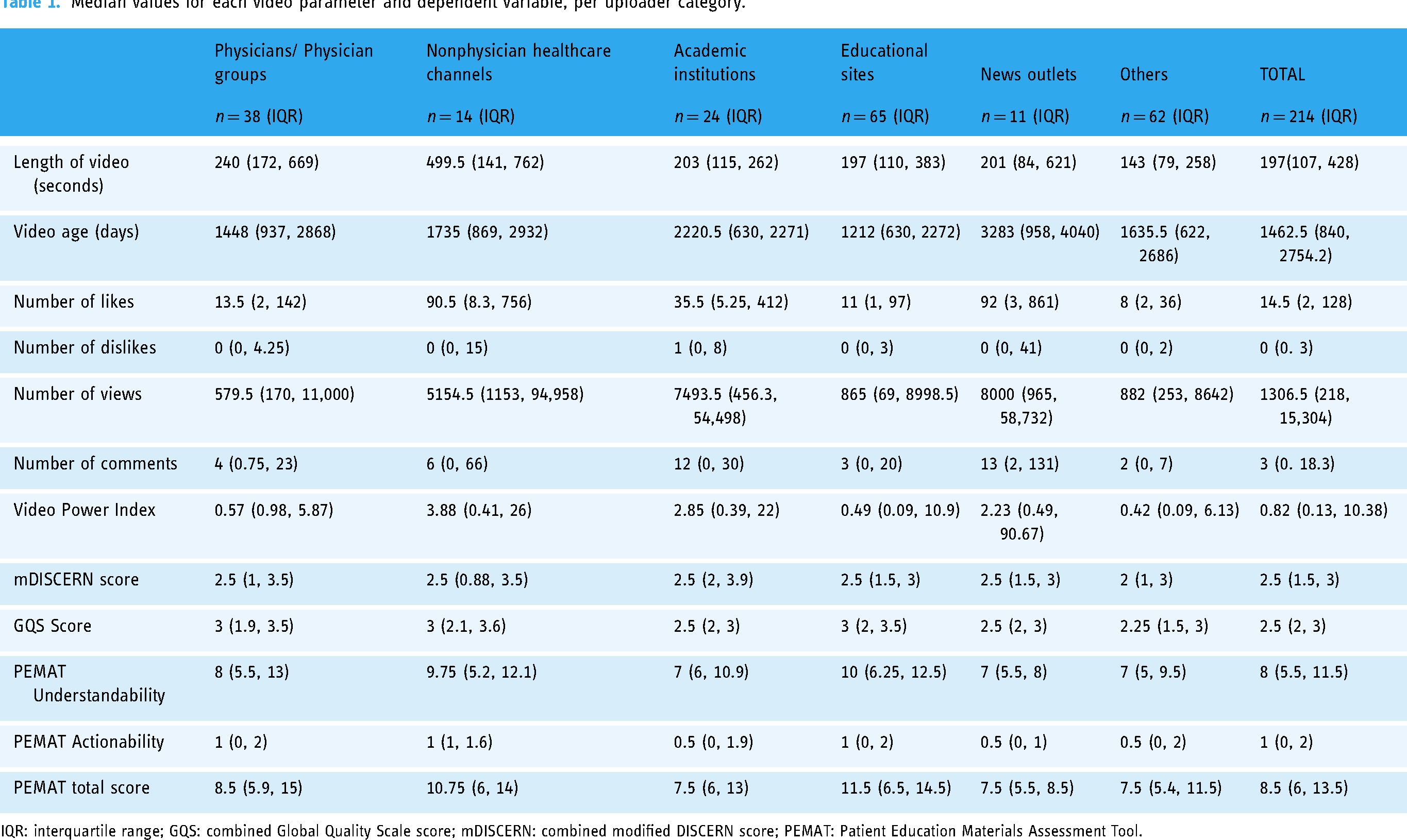

Uploads from educational channels made up 30.4% (n = 65) of the videos included. Channels categorized as “others” closely followed this count (n = 62, 28.9%). Out of the 214 videos, 38 were uploaded by physicians or physician groups (17.8%). Academic institutions, nonphysician healthcare personnel (such as nurses and rehabilitation specialists), and news outlet channels made up the remaining videos (see Table 1). There were only 28 accounts with a YouTube-verified status, the majority being from academic institutions (n = 10, 35%) and news outlets (n = 7, 25%). Among topics, clinical profile (n = 125) and pathophysiology (n = 132) were the most frequent, accounting for 52% and 55% of the videos watched, respectively. Thirty-eight videos discussed the personal experiences of patients or their caregivers and families. As a side note, only one video showed an Ice Bucket Challenge entry, which was preceded by a discussion on the disease.

Median values for each video parameter and dependent variable, per uploader category.

IQR: interquartile range; GQS: combined Global Quality Scale score; mDISCERN: combined modified DISCERN score; PEMAT: Patient Education Materials Assessment Tool.

The median length of the videos screened was 197 seconds (interquartile range = 107, 428), or just over three minutes. Videos uploaded by nonphysician healthcare professionals were the longest, with a median of 8 minutes. The most viewed videos were uploaded by news outlets (median 8000), academic institutions (median 7493.5), and nonphysician healthcare professionals (median 5154.5). Videos from the last group also gained the most likes (median 90.5) and, as a result, had the highest median VPI at 2.85. Dislikes, with a median of 0 overall, were very few across all categories.

Spearman's rank correlation was calculated to check for inter-rater reliability and yielded a significant positive correlation with moderate inter-rater reliability for all of the tools used (mDISCERN = 0.567 [p < 0.001]; GQS = 0.565 [p < 0.001]; PEMAT = 0.647 [p < 0.001]), demonstrating agreement between the two independent raters.

Median combined scores for the mDISCERN and GQS tools were mostly low. For the mDISCERN tool, the majority of the videos (58.8%) garnered a combined raw score of 2.5 or lower. Only 18 videos (8%) had scores indicating high reliability (score of 4 to 5), and no video had a 5.0 combined score. The median was 2.5 across all categories, with the exception of the “Others” category, which had a median of 2.0. The GQS tool performed similarly, with over half of the videos (53.7%) scoring below 2.5. Only 24 videos (12.1%) showed a score indicating high quality (4–5) and only 2 videos had a 5.0 combined score. Physicians, non-MD healthcare channels, and educational sites all had a 3.0 median combined score, the highest among all categories. The median combined scores for the PEMAT understandability (median = 8 or ∼42% score) and actionability (median = 1 or ∼14% score) domains were also low. The majority of the videos ranked low in understandability, with 134 videos (62.6%) below 50% of the total possible score, and only 25 videos (11.7%) scoring above 70%. Educational sites were found to be the most understandable of the categories, with a median score of 10 (52%). The actionability domain of the PEMAT showed the lowest ratings, with 213 videos (99.5%) below 50%. No video scored higher than 70% in the actionability domain, and the median score ranged only from 0.5 to 1 across all categories. No combined total PEMAT score passed the 70% mark.

Length of the video showed the strongest positive correlation with video quality, reliability, actionability, and understandability (with mDISCERN r = 0.542 [p < 0.001]; with GQS r = 0.639 [p < 0.001]; with PEMAT r = 0.479 [p < 0.001]). Video age correlated significantly only with the PEMAT scores, and the correlation was small and negative (Understandability r = −0.1866 [p = 0.00618]; Actionability r = −0.1854 [p = 0.00653]; PEMAT total r = −0.1931 [p = 0.00458]), meaning the more recently uploaded videos had slightly higher scores. The VPI correlated significantly only with the PEMAT Actionability domain (r = 0.135 [p = 0.048]) and did not have a statistically significant correlation with any of the other scoring tools (mDISCERN p = 0.192; GQS p = 0.502). Very high positive correlations were found among all three of the scoring tools used (mDISCERN × GQS r = 0.842 [p < 0.001]; mDISCERN × PEMAT r = 0.732 [p < 0.001]; GQS × PEMAT r = 0.792 [p < 0.001]). A high score on any of mDISCERN, GQS, or PEMAT (and its subtests) correlated with a high score on each of the others (see Table 2).

Spearman's correlations.

Significant correlations are highlighted in bold; Significance level of p < 0.05 used in analysis.

CI: confidence interval; VPI: Video Power Index; mDISCERN: Modified DISCERN Tool (Combined score); GQS: Global Quality Scale (Combined score); PEMAT_U: Understandability component of the PEMAT Score; PEMAT_A: Actionability component of the PEMAT Score; PEMAT Total: Total score taken for the PEMAT tool.

Differences between mDISCERN, GQS, and PEMAT scores per type of uploader.

Significant differences are highlighted in bold; Significance level of p < 0.05 used in analysis.

GQS: Global Quality Scale; mDISCERN: modified DISCERN; PEMAT_A: PEMAT Actionability domain; PEMAT_Total: Patient Education Materials Total score; PEMAT_U: PEMAT Understandability domain.

Out of all the videos screened, the oldest upload was dated 11 December 2008. Figure 2 shows a line chart illustrating the number of videos per year from the 240 videos screened. Starting in 2010, the majority of the top 240 videos seemed to cluster around three peak years: 2014, 2018, and 2022. It is, of course, worth noting that the videos were collected in February 2024. Only two of the videos screened were uploaded before 2010.

Trend of number of videos posted per year.

Among the dependent variables measured, only video quality, as assessed by the GQS, demonstrated a statistically significant difference when compared across different types of uploaders (p = 0.018) (see Table 3). On further probing, the most significant differences were seen when videos uploaded by channels belonging to the “Others” category were compared to videos uploaded by physicians, nonphysician healthcare entities, and educational sites. These three categories all had higher median quality scores than the “Others” category.
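The comparison across uploader categories rests on the Kruskal–Wallis H statistic, which compares rank sums between groups. The pure-Python sketch below shows how H (with the usual tie correction) is computed; the group scores and function names are hypothetical, and the p-value, which JASP derives from a chi-square distribution with k − 1 degrees of freedom, is not computed here.

```python
def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    ordered = sorted(values)
    mean_rank = {}
    for v in set(ordered):
        first = ordered.index(v)
        last = len(ordered) - 1 - ordered[::-1].index(v)
        mean_rank[v] = (first + last) / 2 + 1
    return [mean_rank[v] for v in values]


def kruskal_wallis_h(groups):
    """Tie-corrected Kruskal-Wallis H for a list of groups of scores."""
    pooled = [score for group in groups for score in group]
    n = len(pooled)
    ranks = average_ranks(pooled)

    # Sum of (rank sum squared / group size) over all groups.
    weighted_rank_sums = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted_rank_sums += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12 / (n * (n + 1)) * weighted_rank_sums - 3 * (n + 1)

    # Tie correction; this is zero (and H undefined) if every score is identical.
    tie_counts = {}
    for score in pooled:
        tie_counts[score] = tie_counts.get(score, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in tie_counts.values()) / (n ** 3 - n)
    return h / correction


# Hypothetical combined GQS scores for three uploader categories.
others = [1.5, 2.0, 2.0, 2.5]
physicians = [2.5, 3.0, 3.5, 3.0]
educational = [3.0, 3.5, 4.0, 2.5]
h_stat = kruskal_wallis_h([others, physicians, educational])
print(f"Kruskal-Wallis H: {h_stat:.3f}")
```

A significant H only indicates that at least one category differs; identifying which pairs differ requires a post hoc procedure such as Dunn's test, as described in the Methods.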

Discussion

To our knowledge, this is the only study to evaluate the quality of YouTube as a source of information on ALS in the English language to date. One published study assessing the quality of YouTube videos regarding ALS only included videos in the Korean language. 18 Their paper evaluated the GQS scores of the first 100 videos and showed higher quality scores related to length of the videos, a finding congruent with this study.

The available YouTube content on ALS showed moderate to low reliability and quality. The lowest scores came from videos uploaded by the “Others” category, or those not belonging to healthcare or academic entities. Relatively higher scores were seen among longer videos, likely because they contained more factual information about the disease. These videos were usually uploaded by channels from the healthcare field (physicians or otherwise). However, these videos, particularly those from physicians, did not always perform well in terms of community interaction, as shown by their lower counts of views, likes, and comments. This provides an explanation for why, despite the significant correlation, the impact of these parameters remained minimal. In addition, the VPI, a metric that condenses these factors, also ultimately proved nonsignificant. Content on YouTube is relatively free from fact-checking and information is not always verifiable, which is important to consider when evaluating medical content. 28 Additionally, another study evaluating YouTube quality analyzed 202 studies that rated videos on different topics using standardized rating scales such as the GQS and mDISCERN tools and found that videos were of average to below-average quality, with no significant correlation between the popularity of a video and the quality of its content. 17

As for understandability and actionability, no video reached the 70% threshold on the total PEMAT score, and none did so in the actionability domain. This suggests that videos on YouTube were mostly aimed at shedding light on the disease, rather than educating patients or their caregivers as to what can be done about it.

We found that videos uploaded by channels from healthcare and education fields showed better quality when compared to others. However, these were not always as reliable due to inconsistent citation of source materials, lack of additional resources, and discussion of controversial treatments. Additionally, when taking into consideration the different types of uploaders, it is important to keep in mind the different motivations for uploading a video on ALS—whether it is intended for education, information dissemination, or to share subjective experiences. The latter may inherently score lower marks for the tools used. Nevertheless, with no fact-checking measures to date, videos may still be subject to misinformation or misinterpretation. These serve to highlight the depth of the current knowledge and the need for more research efforts on ALS today. 1

Another aspect of note was the increasing trend in the number of videos posted per year, with a noticeable spike in 2014. This coincided with the start of the ALS Ice Bucket Challenge trend. 4 After a second spike, the number of new videos per year steadily increased, coinciding with the COVID-19 pandemic, during which most people became isolated and turned to social media for both entertainment and daily news. 29 A spike in viewers and uploaders could possibly explain why the more recent videos were significantly more understandable and actionable. Video age, however, did not significantly impact the quality and reliability of these videos. A separate study may be done to further explore the impact of the awareness campaigns on these variables, as it is outside the scope of our inquiry.

There are a few limitations in our study. The study only included videos in English and those uploaded up to a certain date. Being cross-sectional in design, the study looks only at the content of the videos and does not directly measure their impact on the learning of the intended audience. Moreover, YouTube does not provide metrics that stratify videos by target audience, as videos can be accessed by anyone from the general public. The subject of uploader intention and its impact on consumer education, although not within the scope of this study, may be a worthy topic for future research. Another limitation lies with YouTube's search algorithm, which cannot be adjusted and may influence video visibility and subsequent selection. In addition, certain aspects such as the video uploader were not blinded, which may also have had a degree of influence on scoring. Inter-rater reliability was assessed between the study's two evaluators; however, it is acknowledged that the two raters have similar affiliations, which may also affect scoring. Tools such as the GQS, though widely used, may still be susceptible to a degree of subjectivity, and may give an expectedly low score to videos that do not focus primarily on education, such as those sharing patient experiences.

Conclusion

Information on ALS from YouTube videos is of low to moderate quality and reliability and is poorly understandable and actionable. This was consistent when we looked at these variables across six categories of information sources, which showed no difference except in video quality, wherein those in the healthcare and education fields tended to upload videos of better quality. Community engagement, as condensed in the VPI, showed only a small positive correlation, limited to PEMAT actionability. Spikes in the number of uploaded videos seem to coincide with effective efforts in raising social awareness of the disease and may provide insight into potential opportunities for generating more research and funding. Overall, our findings support the notion that caution should be exercised when using YouTube as a source of information on ALS. Patients and their caregivers are advised to carefully consider which videos to click on, paying close attention to specific aspects of the uploaded content.

Footnotes

Contributorship

Liamuel Giancarlo V. Untalan, MD: data acquisition and analysis, drafting and revision of manuscript. John Paulo D. Malanog, MD: data acquisition and analysis, drafting and revision of manuscript. Roland Dominic G. Jamora, MD, PhD: data analysis, revision of manuscript, study supervision, and final approval. All authors accept responsibility for the conduct of the research.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

Not applicable; no human subjects involvement.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.