Abstract

Introduction

Alzheimer's disease (AD) is the leading cause of dementia worldwide, and no curative treatment currently exists. With its increasing prevalence and associated costs, AD represents a public health challenge. Usual diagnostic methods still rely on lengthy interviews and paper tests administered by an external examiner. We aim to create a novel, quick cognitive-screening tool on a digital tablet.

Methods

This pilot program, built with Unity®, runs on Android® on the Samsung Galaxy Tab S7 FE®. It comprises seven tasks inspired by the Mini-Mental State Examination and the Montréal Cognitive Assessment and covers several cognitive functions. The application works offline, keeps data collected at different sites unique, protects the confidentiality of patient information and lets each site manager consult that site's data. We performed a preliminary usability assessment among young, healthy subjects.

Results

Twenty-four participants were included, and the final F-SUS score was rated ‘excellent’. Participants perceived the tool as simple to use and completed the test in a mean time of 142 s. No technical errors occurred.

Conclusion

These preliminary results suggest that our new assessment on a digital tablet may be usable and acceptable for short cognitive screening, but further studies among older populations are required.

Introduction

Alzheimer's disease (AD) is the leading cause of neurodegenerative decline, affecting millions of people worldwide at a considerable cost for countries. 1 In AD, patients progressively lose their cognitive abilities, and behavioural disorders can occur. In the absence of an effective treatment, the loss of memory and autonomy becomes a major burden for caregivers and families. Managing AD is a global public health challenge for health systems that face a constantly increasing prevalence in ageing populations. Dubois et al. recently defined AD as a clinical amnestic syndrome (memory impairment) associated with the presence of amyloid markers in body fluids. 2 Assessing cognition is central to diagnosing AD, and numerous memory tests exist for targeted or global evaluation. The Mini-Mental State Examination (MMSE) 3 and the Montréal Cognitive Assessment (MoCA) 4 are widely used in primary screening because they are short (less than 10 min) and have good sensitivity and specificity. Both tests share several questions and explore approximately the same cognitive functions, even though the MoCA evaluates frontal deficits more precisely. Both cover several cognitive functions quickly and can be repeated over time during patient follow-up. The MoCA appears to have a higher sensitivity (Se) than the MMSE in differentiating healthy subjects from patients with dementia, whereas the MMSE retains a higher specificity (Sp). 5 Nevertheless, the two tests correlate well. 6,7 Other tests were developed for very short screening, such as the Mini-Cog, which combines the three-word test (immediate and delayed recall) with the clock drawing task. 8 The Codex test 9 provides a more precise evaluation by integrating questions about spatial orientation. Both tests share questions with the MMSE and the MoCA.

All these assessments are useful because they can briefly cover cognitive functions, especially memory, with good performance. For further exploration, accredited memory clinics offer a full neuropsychological evaluation. However, these specialised centres are mostly located in hospitals, and waiting times for appointments can be long, delaying diagnosis and social support measures. Moreover, the diagnostic process still requires paper tests administered by an external examiner.

Besides these classical evaluations, numerous authors have developed new screening tools on digital tablets that correlate well with usual tests. Although several recent systematic reviews have been published, 10–12 only one meta-analysis focused on digital drawing tasks. 13 In all these studies, the digital tests could run on tablets or on ordinary computers and touch screens. All assessments could be self-administered or administered by an examiner. Unfortunately, few of these programs were available in French, 14,15 limiting their use in French-speaking patients.

Finally, these tools have not moved beyond the experimental stage. Although many applications are already available on commercial platforms such as Apple iTunes or Google Play Store, 16 health practitioners do not use them daily. Yet the usability of digital tablets has been broadly demonstrated in large populations, 17 and access to new technologies has improved, with most patients owning a tablet or a smartphone.

Obtaining a specialised memory consultation can take a long time, and early screening in primary care by general practitioners (GPs) needs to be facilitated, even if some barriers remain. 18 Indeed, GPs have a heavy workload and do not always have time to perform efficient screening. There is also a persistent misconception that cognitive decline is a normal part of ageing, which delays medical diagnosis. Recent literature shows that digital assessment can be performed in primary care with promising results. 19–21 General practitioners would benefit greatly from these new technologies, especially autonomous programs that do not require an external examiner. By providing early cognitive screening in primary care, they could anticipate and optimise patients’ care pathways (early imaging, laboratory tests) before referring them to specialised consultations. It would also benefit caregivers by identifying daily burdens and supporting them in social procedures. To target primary care networks, a digital tablet assessment should be short, autonomous, reliable and understandable for patients, with cognitive tasks that reproduce classical questions from usual paper tests.

We have developed the AlzVR project, which proposes a multimodal digital program for cognitive screening. Previous papers presented our immersive assessment using Oculus Quest®. 22,23 We now aim to explore a new modality by building a short self-administered questionnaire (like Codex or Mini-Cog) as a touchscreen-based application.

Materials and methods

We built our program using Unity® (v.2021.3.11) for Android® tablets. AlzVR comprises three scenes: the welcome menu, the playing scene and the F-SUS questionnaire.

Most of the figures presented in this paper have been translated from their French version for a better understanding.

Welcome menu

When launching the application, there are three possibilities (Figure 1):

Supervised experience: medical questionnaire and cognitive assessment

Quick experience: cognitive assessment only

Results: results visualisation

The game consists of three main scenes:

The ‘Menu’ scene includes the main menu, medical questionnaires and results consultation.

The ‘InGame’ scene contains the tutorial and all the user's tasks.

The ‘Survey’ scene collects user feedback, which only the administrator can consult.

Medical questionnaire

The supervised experience includes a primary medical questionnaire (Figure 2) to collect socio-demographic items (name, age, type of residence) and medical background (diagnosis, previous cognitive tests, treatments and sensory loss).

Welcome menu view.

Medical questionnaire.

Anonymization

After the final validation of the medical questionnaire, the program automatically generates an anonymised number built from the date and time down to the second, without including the initials of the name. A typical anonymised number looks like YYYYMMDDHHMMSS. This process safeguards the confidentiality of patients' personal information and allows a later blinded analysis. In ‘quick experience’, an ‘A’ precedes every anonymised number, as in A-YYYYMMDDHHMMSS. In ‘supervised experience’, a letter identifying the medical centre, if any, can be automatically added before the number.
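The numbering scheme described above can be illustrated with a minimal Python sketch. It is not the application's Unity code: the function name `make_anonymous_id`, its parameters and the hyphen placed after a centre letter are assumptions made for illustration only.

```python
from datetime import datetime


def make_anonymous_id(moment, quick=False, centre_letter=""):
    """Build an identifier from a timestamp (hypothetical helper).

    The YYYYMMDDHHMMSS layout and the 'A-' prefix for the quick
    experience come from the text; the hyphen after a centre letter
    is an assumption.
    """
    stamp = moment.strftime("%Y%m%d%H%M%S")
    if quick:
        return f"A-{stamp}"
    if centre_letter:
        return f"{centre_letter}-{stamp}"
    return stamp


example = make_anonymous_id(datetime(2022, 9, 27, 14, 5, 9), quick=True)
```

Because the identifier has a one-second resolution, two questionnaires validated within the same second on the same device would share a number under this sketch.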

Playing scene

Experiences architecture

The main module of the ‘InGame’ scene, the GameManager, references the list of the nine tasks to perform. Although each task has a different objective, each one gives a textual and audio instruction and then offers zero, one or several answers in the form of images or text. The ‘JExperience’ parent class therefore groups all the attributes and methods common to all tasks. The specific features of some tasks nevertheless required new classes (‘JExpMonoChoice’, ‘JExpChoiceTown’, ‘JExpImages’) that inherit from ‘JExperience’ (Figure 3).

Main classes’ diagram.
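The inheritance structure described above can be sketched as follows. This is an illustrative Python transposition, not the Unity implementation: only the class names come from the text, while every attribute, method, file name and sample answer is hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class JExperience:
    """Attributes and behaviour assumed to be common to every task."""
    instruction_text: str
    instruction_audio: str                          # hypothetical audio file name
    answers: list = field(default_factory=list)     # may be empty
    expected: set = field(default_factory=set)

    def is_correct(self, selected):
        return set(selected) == self.expected


class JExpMonoChoice(JExperience):
    """Task where exactly one answer may be selected."""

    def is_correct(self, selected):
        return len(selected) == 1 and super().is_correct(selected)


class JExpChoiceTown(JExperience):
    """Town task whose answer proposals depend on the assessment site."""

    @classmethod
    def for_site(cls, site_town, other_towns):
        return cls("Select the town where we are", "town.wav",
                   answers=[site_town, *other_towns], expected={site_town})


class JExpImages(JExperience):
    """Task where several images must be selected (e.g. three among eight)."""


class GameManager:
    """Holds the ordered list of tasks and scores the user's selections."""

    def __init__(self, tasks):
        self.tasks = list(tasks)

    def score(self, selections):
        return [task.is_correct(sel) for task, sel in zip(self.tasks, selections)]
```

Keeping the answer check in the parent class means a new task type only overrides what differs, which is the motivation given in the text for the ‘JExperience’ class.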

General aspect

The visual aspect should be simple and avoid any cognitive overload. All scenes appear on a uniform blue background. All the pictures used in the scenes are royalty-free.

In all experiences, the user selects answers by touching one or several buttons. These buttons are large, making them easy to touch. A maximum of eight buttons appear on the screen, ensuring good visibility.

Answer modality

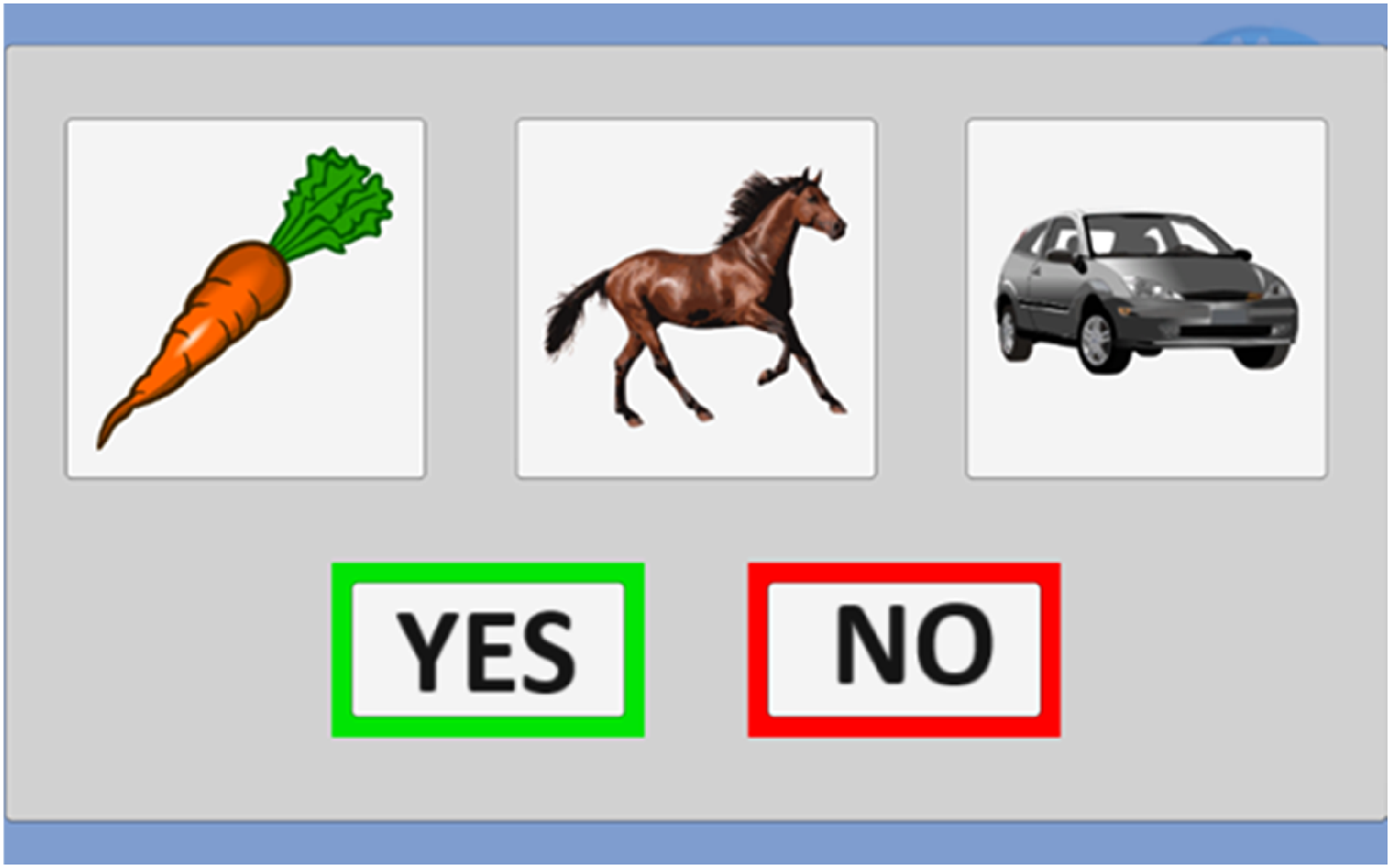

Once the user has selected all the answers, a confirmation screen appears with a ‘Yes’ button and a ‘No’ button. This step prevents inattentive answers and validates the choice (Figure 4). ‘Yes’ leads to the next question, and ‘No’ gives a new chance to answer.

Answer modalities.

Each exercise lasts a maximum of 30 s. If the user does not answer within this time, the program moves automatically to the next question (counted as TimeOut). Choosing ‘No’ at the confirmation step resets the timer, but only three attempts are allowed.
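The timing and confirmation rules above can be summarised in a short Python sketch. The names are hypothetical, and the outcome after three unconfirmed attempts is not stated in the text; the sketch assumes it is recorded as a failure.

```python
TIME_LIMIT_S = 30    # maximum duration of one exercise (from the text)
MAX_ATTEMPTS = 3     # number of attempts allowed (from the text)


def run_task(attempts, expected):
    """Return the outcome of one task (hypothetical helper).

    `attempts` is a list of (elapsed_seconds, selected, confirmed) tuples,
    one per attempt, where `confirmed` is True for 'Yes' and False for 'No'.
    """
    for elapsed, selected, confirmed in attempts[:MAX_ATTEMPTS]:
        if elapsed > TIME_LIMIT_S:
            return "TimeOut"
        if confirmed:
            return "Success" if set(selected) == set(expected) else "Failure"
        # 'No' at the confirmation step: the timer restarts for the next attempt
    # Assumption: running out of attempts is recorded as a failure
    return "Failure"
```

For example, a user who answers ‘No’ after 12 s and then confirms the right answer after 8 s succeeds, because each attempt is timed separately.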

In every case (success or failure), a ‘Congratulations’ message is displayed (Figure 5). This message creates a cheerful atmosphere and may limit false results caused by stress or fear.

Congratulations message.

Training task

Although we assume that many older adults have already used a smartphone or a tablet, we decided to evaluate digital skills with two training tasks before the cognitive questionnaire. The user needs to touch shapes on the screen. These two short exercises ensure that the user understands how the tablet works (Figure 6). A failure in the training tasks stops the assessment, and the test cannot continue.

Training task.

Cognitive questionnaire

If the training tasks are successful, the cognitive assessment begins; it comprises seven tasks. We wanted a varied assessment covering multiple cognitive domains, and our tasks are inspired by Codex, Mini-Cog (three words, clock drawing task, spatial orientation), MMSE and MoCA questions. Our tasks, presented in Table 1, do not require an external examiner, a stylus or voice recognition.

Digital cognitive tasks.

MMSE: Mini-Mental State Examination; MoCA: Montréal Cognitive Assessment.

The first task is the ‘three words’ test. In the MMSE or MoCA, the examiner delivers the three words orally, and the patient must repeat them (immediately and after a delay). To obtain a self-administered questionnaire, we kept the oral delivery by the program (sound only) but replaced the oral repetition with a choice of three images among eight (Figure 7). There is still an immediate and a delayed recall. The three words belong to different semantic fields (animal, vehicle and vegetable).

Three words test.

The clock recognition task (Figure 8) is inspired by the clock drawing test (CDT), 8 in which patients draw a circle, the numbers and the hands indicating a precise time (e.g., 11h10). We created a novel task offering three different clocks: the correct one (10h30), the symmetric clock (05h50) and a false clock. The oral instruction gives the time to choose (‘select the clock indicating …’), and the patient selects a clock on the screen. There are two series of clocks, followed by the delayed recall of the three words.

Clock recognition test.

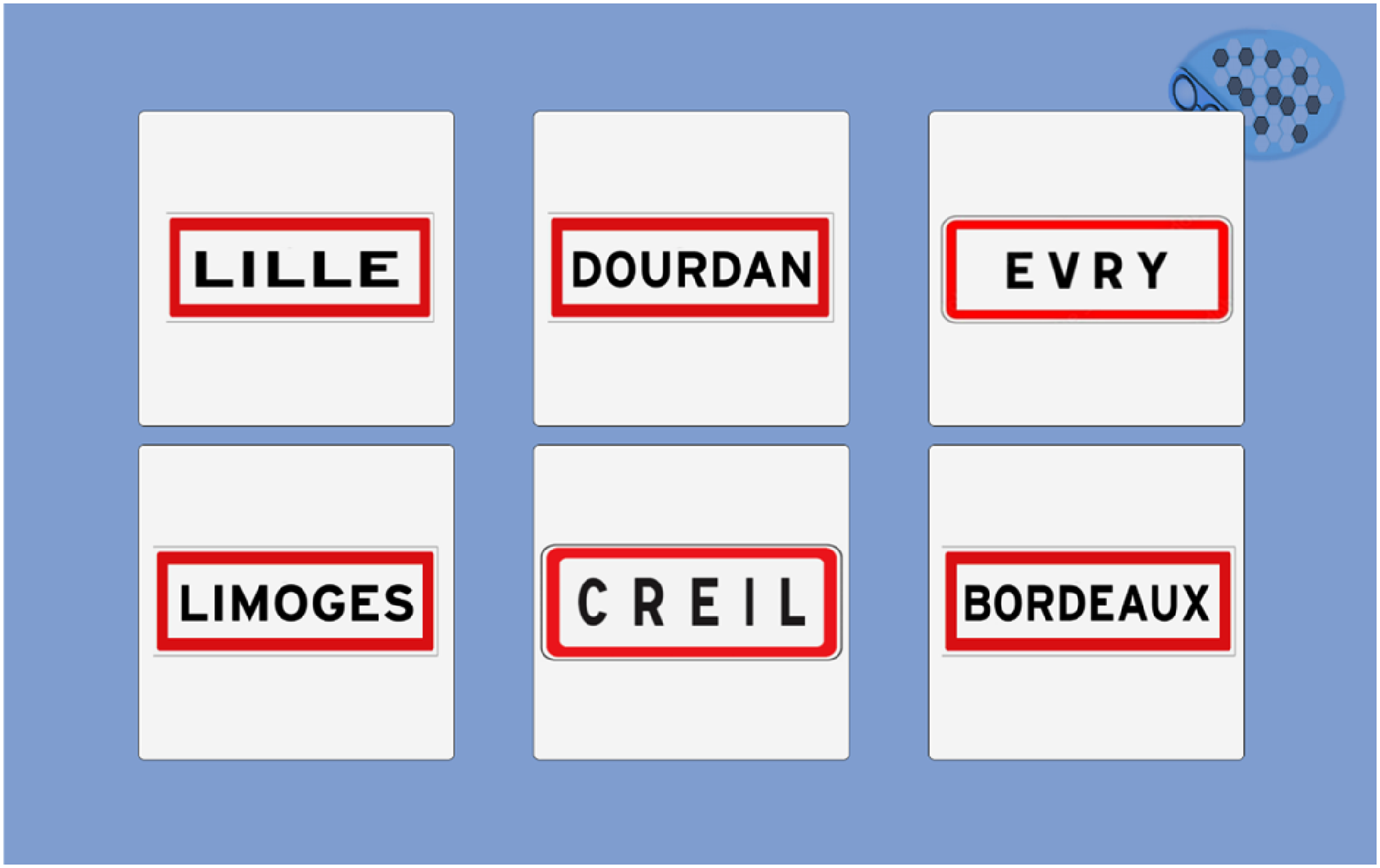

To explore spatial and temporal orientation, we selected a simple format with an oral question (‘What is the current season?’, ‘Select the flag of the country where we are’) and several pictures as answers. For spatial orientation, the country is represented by a flag, limiting written instructions (Figure 9). Town names are presented as classical French road signs (Figure 10). The proposed town answers change depending on the assessment site.

Country test.

Town test.

Temporal orientation tasks are relatively similar. In the season test, the user selects the current season (Figure 11); each answer shows the name of the season and a typical image. Because the dates of season changes vary, we left a 48-h margin for the answer.

Season test.
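One possible reading of the 48-h margin is that, within two days of a season change, both neighbouring seasons are accepted. The Python sketch below illustrates that reading only; the fixed boundary dates and the function names are assumptions, since the text does not give the dates used by the application.

```python
from datetime import date, timedelta

# Assumed nominal start dates (month, day); real astronomical dates vary by year
SEASON_STARTS = [((3, 20), "spring"), ((6, 21), "summer"),
                 ((9, 23), "autumn"), ((12, 21), "winter")]
MARGIN = timedelta(hours=48)


def season_on(day):
    """Season of a given date under the assumed boundaries."""
    name = "winter"   # 1 January to 19 March belongs to the previous winter
    for start, season in SEASON_STARTS:
        if (day.month, day.day) >= start:
            name = season
    return name


def accepted_seasons(day):
    """Seasons counted as correct on `day`, allowing 48 h around a change."""
    return {season_on(day - MARGIN), season_on(day), season_on(day + MARGIN)}
```

Under these assumed boundaries, both summer and autumn would be accepted on 24 September, while only autumn would be accepted on 27 September.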

In the year test, we introduced several distractor dates (minus one year, minus one century). All dates end with the same number as the current year (Figure 12).

Year test.

In the MoCA test, abstraction is tested through the similarity between two words (e.g., an orange and a banana are fruits). In our abstraction test, we chose a series of fruits to complete with a third picture. A distractor (one image from the three-word test) is among the four choices (Figure 13).

Abstraction test.

Results menu

After the cognitive questionnaire, the results menu allows a simple visualisation of the patient's score. This section is password-protected and identifies the patient only by the anonymised number (patient ID). Three outcomes exist: ‘X’ (failure), ‘V’ (success) and ‘?’ (TimeOut).

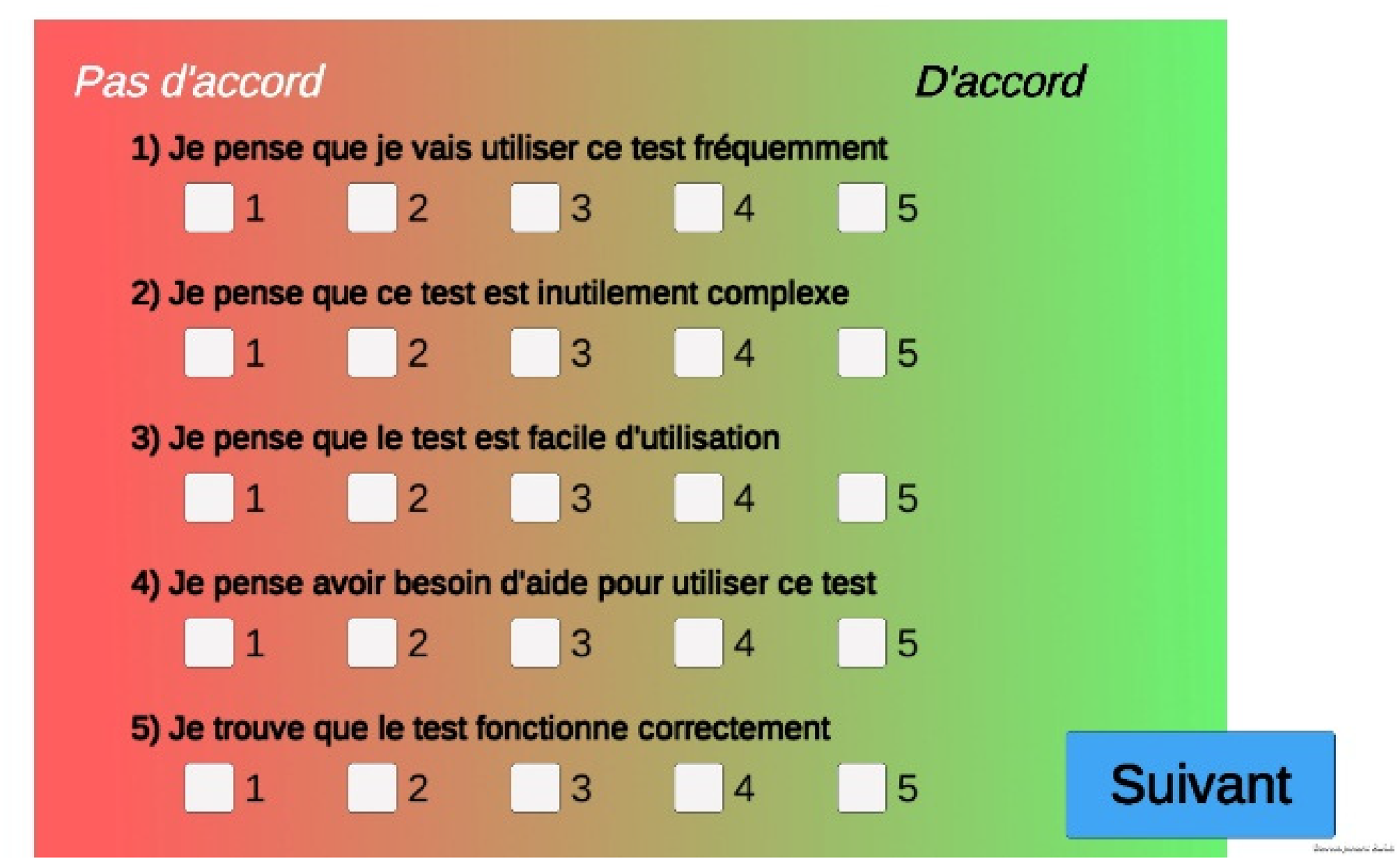

F-SUS questionnaire

After the cognitive tasks, we implemented the French translation of the System Usability Scale, 24 the F-SUS questionnaire 25 (Figure 14). It evaluates global satisfaction through ten questions, each with five response levels from 1 (strongly disagree) to 5 (strongly agree). F-SUS results do not appear in the results menu and are stored directly.

F-SUS questionnaire view.
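For readers unfamiliar with the scale, the sketch below shows the standard System Usability Scale scoring rule, assuming the F-SUS is scored in the same way as the original scale. The function name `sus_score` is hypothetical and the code is not taken from the application.

```python
def sus_score(responses):
    """Standard SUS scoring for ten responses rated from 1 to 5.

    Odd-numbered items contribute (response - 1), even-numbered items
    contribute (5 - response); the sum is multiplied by 2.5 to give a
    score between 0 and 100.
    """
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("expected ten responses between 1 and 5")
    total = 0
    for index, response in enumerate(responses, start=1):
        total += (response - 1) if index % 2 == 1 else (5 - response)
    return total * 2.5
```

A participant who answers 3 to every question obtains 50, and the maximum of 100 requires full agreement with the odd-numbered items and full disagreement with the even-numbered ones.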

Data storage

AlzVR exports and stores all data in CSV format, allowing easy analysis. Personal information is stored separately from the other results (experiences and F-SUS). Thus, a blinded analysis is possible using only the results files, which contain anonymised data (Figure 15).

Process of data storage and anonymisation.
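The separation between personal information and results can be illustrated with a small Python sketch based on the standard `csv` module. All column names, function names and sample values are hypothetical; the text only states that the two kinds of data are stored in separate CSV files linked by the anonymised number.

```python
import csv
import io


def export_rows(rows, columns):
    """Serialise dictionaries to CSV text, keeping only the listed columns."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns,
                            extrasaction="ignore", lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def split_storage(patients, task_results):
    """Return (personal_csv, results_csv); only the first holds identities."""
    personal = export_rows(patients, ["anonymous_id", "name", "age"])
    results = export_rows(task_results,
                          ["anonymous_id", "task", "outcome", "trials", "response_ms"])
    return personal, results
```

An analyst who receives only the second file sees the anonymised number, the task outcomes and the response times, which is what makes a blinded analysis possible.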

Preliminary usability assessment

Study population

We carried out an experimental, qualitative study at the IBISC Laboratory (University of Évry-Paris Saclay, Department of Sciences and Technologies) among volunteers (staff and students) to assess preliminary usability following the ISO 9241-11 standard 26 and the Nielsen method. 27 The tablet was a Samsung Galaxy Tab S7 FE® (315.0 mm screen, 2560 × 1600) running Android 11® (One UI 3.1 user interface).

The inclusion criteria were age >18 years and understanding of the French language. The exclusion criteria were age <18 years, no understanding of the French language, and uncorrected visual or hearing loss.

We recruited participants through university mailing lists and advertisements on the premises.

Stages

Participants successively and anonymously completed several stages:

Pre-questionnaire: an online questionnaire collecting socio-demographic data (age, profession, sex) and digital habits (smartphone and tablets)

Quick experience

F-SUS questionnaire

Post-questionnaire: an online questionnaire collecting free comments about the program

Data collection and exploitation

During the tests, we collected the following parameters: answer (success, failure), number of trials and response time (ms).

We chose the total F-SUS score, calculated according to the authors' recommendations, 24,25 as the primary endpoint to assess usability, with a target of 85.5%, considered ‘excellent’.

All data were blinded, collected and analysed using the anonymised numbers of participants.

Results

Population

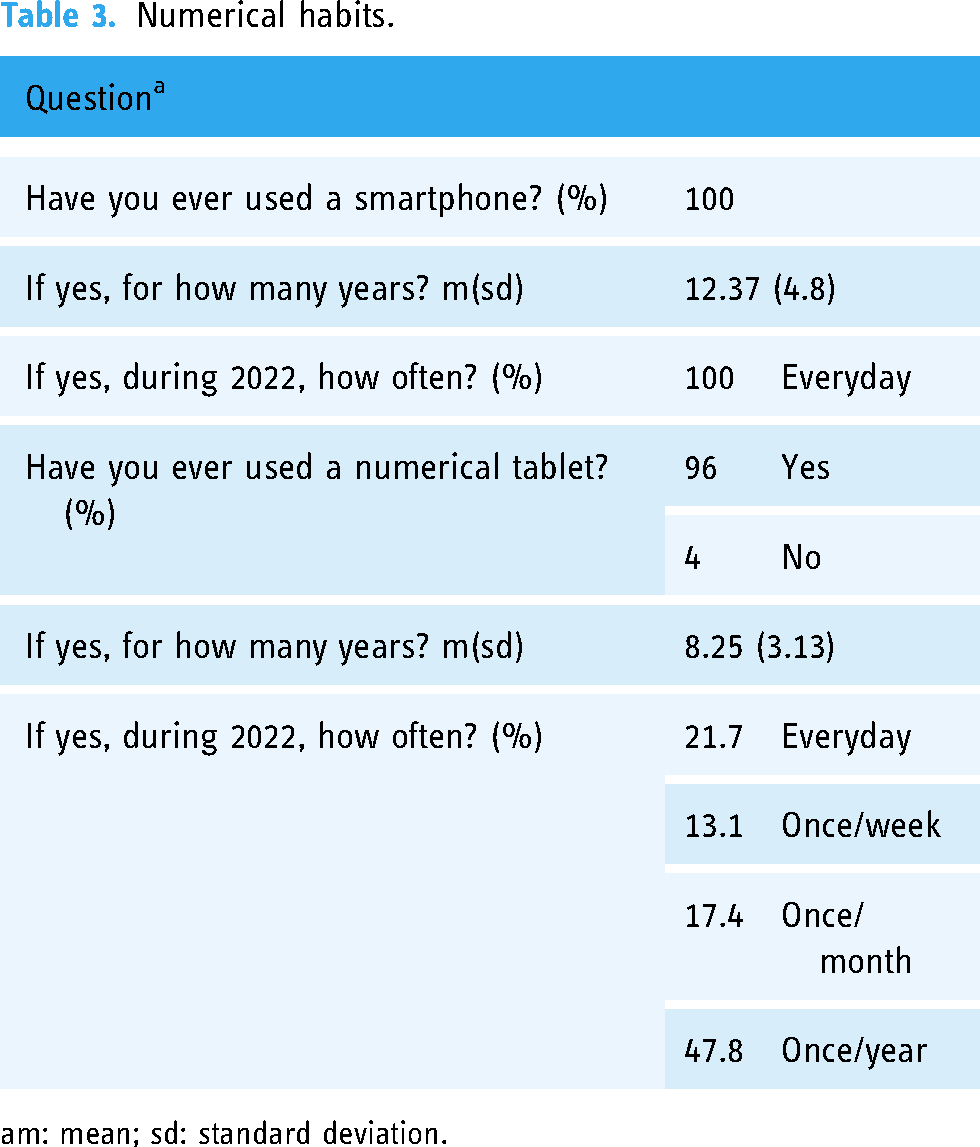

We included 24 participants between 27 September 2022 and 12 October 2022. Table 2 presents their socio-demographic characteristics, and Table 3 their digital habits.

Socio-demographic characteristics of the population.

m: mean; sd: standard deviation; min: minimum; max: maximum.

Professor or associate professor.

Digital habits.

m: mean; sd: standard deviation.

Success rate

All participants (100%) completed the cognitive tasks. The success rate for the questions was 97.4% (187 correct answers out of 192). The two tasks with failures were the clock task (2 failures) and the season task (3 failures).

Time of completion

The average test administration time (excluding training tasks) was 141.47 (± 18.77) seconds, and details of task completion times are presented in Table 4.

Tasks completion times.

m: mean; sd: standard deviation; min: minimum; max: maximum.

F-SUS questionnaire

Ninety-six percent of participants completed the F-SUS questionnaire (one person left the application before completing it), and the results for each question are presented in Table 5.

F-SUS questionnaire results.

m: mean; sd: standard deviation.

The overall score on the F-SUS questionnaire was 89.24%, considered ‘excellent’.

General remarks

In the post-questionnaire, we collected general opinions about the program. Users overwhelmingly found the program easy to use. The negative remarks concerned a lack of fluidity in the oral instructions and tests judged too simple.

Discussion

Numerous existing paper tests assess cognition, either for global screening or for a specific function. 28 At the same time, several authors have studied the use of digital tablets for evaluating cognitive decline and performing training tasks in healthy and cognitively impaired people. 29,30 Despite these numerous and efficient digital tests, 10 cognitive evaluations still rely on paper tests and need an examiner. With the prevalence of AD expected to increase in the coming decades, 1 simple, quick and effective tools are needed to help practitioners screen for cognitive decline. During the design phase, we chose to create a new tool in French inspired by two widely used and recommended tests, 31,32 the MMSE and the MoCA, together with the CDT (integrated into the MoCA). In usual tests, the patient answers most of the questions orally to the examiner. We chose not to use speech recognition because of its limitations. 33 Incorrect speech interpretation would have led to false results. However, excluding oral answers does not allow a global language evaluation as in the MMSE or MoCA.

Our first task might have some limitations. Indeed, in the traditional three-word test, the examiner delivers the words orally, and the patient repeats them. There is no picture or written instruction. A direct digital transposition would have required automatic speech analysis or human validation. We therefore switched to a picture test with an immediate and a delayed recall. Although we kept an identical first step (oral instruction), remembering pictures and remembering words may call on different memory abilities.

The clock drawing test is also very widely used in daily practice and is part of quick screening tools such as Codex 9 or Mini-Cog. 34 Müller et al. have proposed a digital clock drawing task using a stylus, 35 showing good correlations with paper tests. This transposition still requires external human validation or automatic image analysis, as proposed by Park et al. 36 We wanted a simple and short task with no external analysis, so we switched from a drawing task to a picture recognition task. Inoue et al. 37 proposed a similar task based on clock time recognition in a population with dementia, which showed promising results. Drawing a clock and placing the hands (as in the CDT) requires visuospatial abilities and executive functions. Nevertheless, producing a self-administered questionnaire with few written instructions, no external validation and simple instructions imposed technical limitations. These limitations may bias the cognitive evaluation by underestimating executive functions, as the MMSE and MoCA do.

Our assessment does not evaluate writing abilities because we did not want to use a stylus or further human validation. Yet dysgraphia is a known symptom of AD. 38 Although it explores several questions and different cognitive domains, our new assessment has limitations that need to be monitored and may require upgrades in future versions.

As a first evaluation, we performed a short usability assessment in a healthy population (without cognitive decline) of university users.

Completion time should be short, and the mean time observed in our study (142 s) is a good result. Moreover, usability reached a global score of 89.24%, surpassing the initial objective of 85.5% and approaching 90.9% (‘best imaginable’).

Overall, the participants perceived the test as easy to use, which is consistent with the F-SUS scores (questions 3, 5, 7 and 8). This evaluation is encouraging because participants were not familiar with cognitive tests and discovered them for the first time. These preliminary data are satisfactory, but the study population is a considerable limitation. Indeed, our participants were young (mean age 41.88 years), healthy and used to touch screens. This mean age is well below the age of AD patients (>60 years), 39 which can explain the good results observed. The results may not be transposable to an older population with cognitive decline and little experience of tablets. The questions were negatively perceived as ‘too simple’ or ‘too slow’, probably because of the young age of our participants.

Although the participants were healthy, we noted errors in the clock recognition task, probably due to the shape of the clock hands, which was reported in the additional comments. Moreover, recent findings showed that students increasingly have difficulty reading traditional clocks, 40 and the two users who failed this task were 24 years old. These difficulties appear in the task completion times (Table 4); indeed, it is the task with the largest difference between the minimum and maximum completion times. Season errors may be explained by the recent change of season (summer/autumn) shortly before the beginning of the study (27 September). We also found a wide range of task completion times.

When extracting the results from the tablet, we found no errors in the CSV files. Data were easy to analyse and well anonymised.

These first results are satisfactory, but AlzVR remains a pilot questionnaire. Although its design is inspired by classical cognitive tasks, we cannot extrapolate the results of a young population to an older one with cognitive impairment. AlzVR should be tested in an older population to assess its usability and its accuracy in discriminating between healthy subjects and patients with dementia. This pilot study is part of the wider COGNUM-AlzVR research project, which aims to evaluate the efficiency and relevance of two digital tablet programs for cognitive assessment in patients with AD and mild cognitive impairment (MCI). The Committee for the Protection of People of Ile de France approved the multicentric project in 2022, and the study began in April 2023 (NCT06032611). We intend to include 150 participants (150 patients with AD, 150 patients with MCI and 150 healthy subjects) in two French hospitals. Participants undergo both classical and digital assessments. We have extracted some preliminary results about AlzVR: delayed recall appears to be a discriminant test, 41 and AlzVR shows good feasibility and acceptability among the patients. 42

Conclusion

We have developed a new digital cognitive screening tool that received good preliminary feedback from a young and healthy population. The application could also be ported to smartphones to widen its distribution and use. Definitive accuracy, usability and efficacy will be assessed at the end of the ongoing COGNUM-AlzVR study.

Footnotes

Acknowledgements

The authors thank all participants and the Génopole (Evry-Courcouronnes, France) for their partnership with IBISC Laboratory.

Contributorship

Conceptualization, FM, GL, JD, FD and SO; methodology, FM, GL, JD and FD; software, GL, JD and FD; validation, FM and GL; formal analysis, FM; investigation, FM, GL and FD; resources, GL and FD; data curation, GL; writing – original draft preparation, FM and FD; writing – review and editing, FM, GL, FD and SO; visualisation, FM and GL; supervision, GL and SO; project administration, GL and SO; funding acquisition, none. All authors have read and agreed to the published version of the manuscript.

Data availability statement

The anonymised data supporting this study's findings are available on request from the corresponding author, SO. The original data are not publicly available because they contain information that could compromise the privacy of research participants.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Ethical approval

This work has been carried out in accordance with the Declaration of Helsinki of the World Medical Association, revised in 2013 for experiments involving humans. Data exploitation was anonymous using an automatic number of participation generated from the date and hour of completion. The local University Paris-Saclay ethics committee approved all documents and protocols on 2022/07/07 (Approval registration number CER-2022-433). All subjects involved in the study gave their written informed consent. Participation was free with no remuneration.

Guarantor

SO.