Abstract

Objective

In online health communities (OHCs), patients often label their physicians’ expertise with user-generated tags based on the diseases they consulted about. These expertise tags play an essential role in matching physicians to future patients. However, few studies have examined how the accessibility of e-consults affects patient assessments of physicians’ expertise expressed through such tags in OHCs. This study investigates how patients assess physicians’ expertise when the physicians make e-consults accessible.

Methods

Through a case–control study, this article examined the effect of e-consult accessibility on patient-generated tags of physician expertise in OHCs. With data collected from the Good Doctor website, the samples consisted of 9841 physicians from 1255 different hospitals widely distributed in China. The breadth of voted expertise (BE) is measured by the number of consulted disease-related labels marked by a physician's served patients (SP). The volume of votes (VV) is measured by the number of a physician's votes given by the SP. The degree of voted diversity (DD) is measured by the information entropy of each physician's service expertise (labeled and voted by patients). The data analysis of e-consult accessibility is conducted by estimating the average treatment effect on the DD of physicians’ expertise.

Results

For the BE, its mean was 7.305 for the case group of physicians with e-consults accessible (photo and text queries), while the mean was 9.465 for the control for physicians without e-consults. For the VV, its mean was 39.720 for the case group, while the mean was 84.565 for the control. For the DD, its mean on patient-generated tags was 2.103 for the case group, which is 0.413 lower than the control group.

Conclusion

The availability of e-consults increases the concentration of physician expertise in patient-generated tags. e-Consults reinforce the expertise that physicians have already been credited with (as reflected in tags), reducing the diversity of tag information.

Keywords

Introduction

Electronic consultations (e-consults) in online health communities (OHCs) are a promising approach to the challenge of improving access to health care.1,2 Medical e-consults offer a rapid, direct and documented communication pathway between patients and medical specialists, and may avert the need for face-to-face visits. The popularity of e-consults benefits from the growing accessibility of electronic health records and of the Internet. 3 Although specific incidents have exposed the weaknesses and risks of online healthcare, an e-consult service is still a useful supplement to, rather than a replacement for, hospital diagnosis and treatment. Therefore, this type of service may offer an appealing new modality for the rational appropriation of healthcare services in OHCs, providing convenience for existing and potential patients, physicians and hospitals.

From the patient’s perspective, the use of e-consult services in OHCs depends on the patient’s disease. For instance, patients can be more open to consulting a physician online about a condition that may be embarrassing to discuss in an in-person visit. Patients are also more likely to consult a physician online for chronic diseases that are heavily influenced by behavior or lifestyle factors and for non-urgent matters. Obstetrics is a good example: a pregnant woman may have many questions during pregnancy that do not require in-person visits, such as whether she can travel by airplane, whether she can eat a particular food if she has gestational diabetes, or how much exercise she should do. From the physician's perspective, whether they open e-consult services depends on factors including their motivation 4 (economic stimulus, increased prestige or social welfare improvement) and their schedules. 5 In addition to their offline consults in hospitals, physicians can offer either online queries (picture and text) or telephone queries. In an OHC, physicians often charge for these e-consults according to their existing fee structure. From the platform management perspective, OHCs are platforms for patient–physician interaction and a site where patients aggregate information on their medical experience. 5 As patients frequently turn to online sources for more health information, there is a great need to examine the information value of patient-generated tags and the potential benefits inherent in online votes.

Patient assessments of physicians’ expertise with user-generated tags help improve an OHC's service and management quality. 6 There are many intuitive quality indicators, such as the number of likes/badges/gifts and average ratings for physicians on an OHC platform. 4 In particular, patients tend to label physicians’ expertise with the disease they consulted about and post these tags online. These patient-generated tags play the role of online reviews and help future patients find matching physicians more flexibly. For example (see Figure 1), patients’ tags for one physician (from the heart surgery department) in the past 2 years were: valvular heart disease (118 votes), coronary heart disease (33 votes), cardiac myxoma (1 vote) and heart failure (1 vote). These tags would recommend this physician to future patients with valvular heart disease or coronary heart disease (CHD), but would not suggest the physician for patients with cardiac myxoma or heart failure. In summary, these patient-generated tags are of interest for investigating the effect of e-consults in OHCs (see Figure 2). They complement the OHC's own statement of the physicians’ specialty. The OHC platform can also encourage physicians to verify and correct this tag information when it is confusing or misleading.

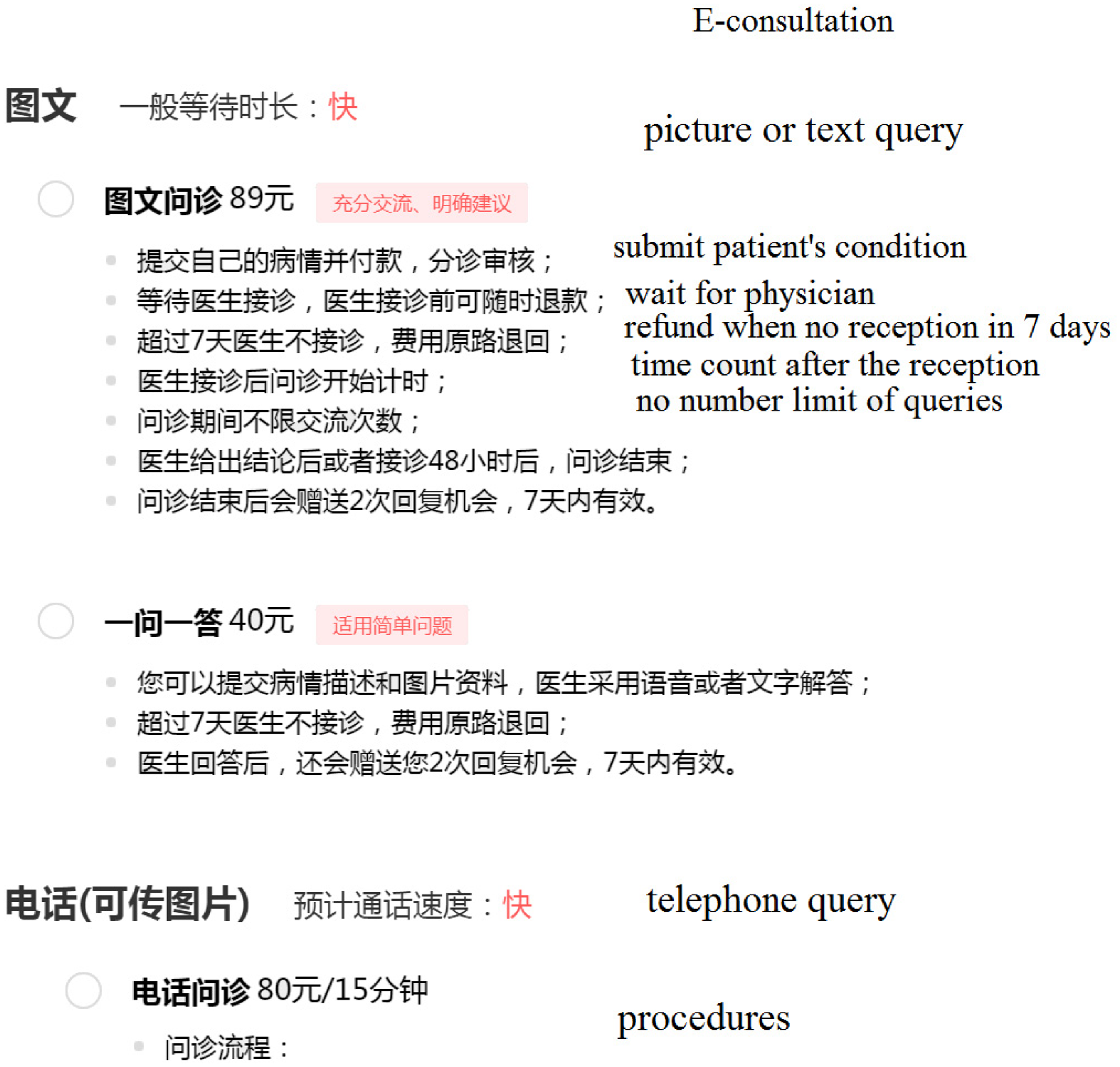

Figure 1. Web page excerpt of accessibility of e-consults and patient assessment tags on physicians’ expertise from Good Doctor (www.haodf.com, accessed 13 August 2020).

Figure 2. Web page excerpt of accessibility of e-consults from Good Doctor (accessed 13 August 2020).

As shown in Figure 2, patients pay 89 Yuan (RMB) for a picture or text query and 40 Yuan (RMB) for a question-answer query in an e-consult on Good Doctor. If the doctor does not respond within 48 h (or 7 days for questions), the payment is returned to the patient's account. The online communication can also be transferred to a telephone query at 80 Yuan (RMB) per 15 min. At present, the cost of these e-consults is mainly covered by the patients rather than by National Medical Insurance (NMI) in China. However, the NMI administration is working to improve the pricing and payment policy for online medical services, with a scope of application covering public medical institutions at all levels in several provinces (e.g. Zhejiang, China). Moreover, these payment reforms for e-consults have been attracting wide attention during the COVID-19 epidemic.

On the Good Doctor platform 7 (a widely used OHC in China), approximately 40,737,197 patients have been involved in e-consults.4,5,8 The number of e-consults for the top 15 prevalent diseases, based on data collected from the Good Doctor website on August 26 and 27, 2017, gives a health profile of the study site (see Figure 3). The collected data contained a total of 66,726 e-consults, of which the top 15 diseases accounted for 22.44%. CHD, hypertension and lung cancer had the three largest numbers of e-consults among the 918 diseases with e-consults in the OHC. Patients were counted once per physician but may have been counted more than once across different physicians. Some physicians are accessible for e-consults with online reservations, while others provide offline consultations with online booking. Because e-consult accessibility varies among physicians, how this factor affects patient assessments of physicians’ expertise with tags is still unknown. Understanding this effect is critical for studying OHCs. Using observational data from an OHC platform, we designed a case–control study with causal inference to investigate it.

Figure 3. Number of e-consults of the top 15 prevalent diseases (with proportion larger than 1%).

Literature review

e-Consults in OHCs have been receiving considerable attention from numerous researchers.4,9,10 Among the studies, various issues have been considered, such as whether rural–urban health disparities can be reduced by an OHC, 10 and how online physician materials can affect their online contributions in OHCs. 4 The e-consult service is an essential function in OHCs, but two contradictory arguments are found in the literature. One traditional argument suggested that e-consults enhanced patient response to their tags of physician expertise, increased the diversity (of user-generated tags) and helped future patients find matching physicians. 11 The other argument proposes that e-consults only reinforce the increment of the already-received physician expertise (labeled by patients), reducing the tag information diversity. 12 The diversity can be disclosed by the physician's expertise marked and voted by their patients. These patient responses can enhance a rich-get-richer effect for well-known experts, 13 and vice versa for unpopular ones.

The studies from the related literature can be classified into the following four categories: intervention based on e-consults, patient response-related studies in OHCs, treatment effect-related studies and covariates of intervention implementation (see Table 1).

Table 1. Representative studies on OHCs.

Among the studies of interventions based on e-consults, one previous study presented a systematic review and narrative synthesis supporting the proposition that e-consults improve access to specialty care. 1 Such an improvement would be expected to increase the diversity of a physician's expertise (as labeled by their patients). Some physicians are accessible for e-consults with online reservations, while others provide offline consultations with online booking. Because e-consult accessibility varies among physicians in OHCs, how this factor affects patient assessments of physicians’ expertise using tags is still unknown.

In the patient response-related literature, patient review valence has been investigated from the view of physicians’ provision of e-consult service. 16 To advance patient safety, a computerized fall risk assessment process was employed to tailor interventions in acute care. 19 In OHCs, the importance of online voting for sharing patient experience has been verified by extensive studies.20,21 Patients label and vote on physicians’ expertise, and this information records the diversity of physician experience. Patients’ vote volume reflects clinicians’ online contribution and engagement, 22 which were investigated in studies of word-of-mouth 14 and experience sharing.23,24 The diversity also reflects that physicians have a much broader scope of e-consult experience than their recorded expertise. These labels grow progressively more diverse as patients vote. There are two standard measures of these labels and votes (see further details in the Measures section): the breadth of voted expertise (BE) and the volume of votes (VV). BE reflects the number of labels annotated by patients; such labels are inherently subjective, and substantial variation among annotators cannot be avoided. Advocates argue that patient votes provide patients with much-needed information about physician quality from the customer experience perspective, and that voting systems aggregate enough votes to reflect patient responses. Existing evidence shows that correlations between the VV and physician experience are statistically significant, suggesting a positive relationship between online votes and physician quality. 15 However, the same results also indicate that the average number of votes per physician is still low, and that most voting variation reflects evaluations of punctuality and staff.

Although e-consults are associated with patient responses, the accessibility of e-consults may also affect patient assessments of physician expertise using tags. To derive a collective response, the degree of voted diversity (DD) is introduced as a proxy for the diversity of e-consults reflected in patient responses. The degree of diversity was defined as a statistic calculated from sample data. 25 Yule initially introduced the measure of diversity for cases in which individuals of a population are classified into diverse groups. 26 This diversity was later renamed as a measure of concentration. 27 Several studies have investigated online service diversity in other types of online communities.28,29

In treatment effect-related studies,30,31 the previous literature can be classified into three research streams. First, quasi-experiments 32 were implemented to investigate the average treatment effect of physicians’ provision of e-consult services on patient review valence. 16 Despite such recent progress, few studies have considered the impact of e-consult accessibility on physician expertise (with patient-generated tags) in OHCs. Second, content analysis of comments has extracted many terms from patient views through poster mining.23,33 Patient experience from free-text comments posted online was also captured to study questions such as whether patients were treated with dignity. 17 For example, the Information Strategy for the National Health Service (NHS) in England attempted to obtain a sentiment score from comments and compare its predictions against the patient ratings left on the NHS Choices website. The website asked patients to rate whether they were treated with dignity by the physicians and other staff in these hospitals, with five options. The first three options (“all of the time,” “most of the time,” and “some of the time”) were grouped into a “more dignity” class, and the options “rarely” and “not at all” into a “less dignity” class. The NHS Choices website also asks all patients whether they would recommend the hospital. Thus, sentiment analysis of the comments on dignity (on the NHS Choices website) captures patient experience and could affect patient assessments of these hospitals or even of the physicians in them. However, few studies have captured patient experience from their tags on physicians’ expertise and votes. Third, crowd-voting techniques are also emerging in online healthcare-related studies. 34 Researchers can move from a small homogeneous population of participants to a large heterogeneous population with diverse backgrounds (expected to be unbiased). 35 But crowd-voting systems seek a collective value emerging only from the quantitative properties of the collection of distributed work. This bias toward popularity can prevent what may otherwise be suitable consumer-service matches, especially in e-consult services.

From the literature on covariates of intervention implementation, many determinants may decide the physicians’ e-consult accessibility and further shape patient assessments of physicians’ expertise with tags. Studies on online reviews have examined the relationship between review characteristics (valence, volume and variance) and brand attitudes through a conceptual model. 36 In clinical practice, hospitals with brand names are often assumed to be big and/or reputed hospitals. Some doctors perceive that their attachment to those branded hospitals also has a positive impact on their patient recruitment. 37 Branded hospitals often employ a number of celebrity (renowned) doctors. With the ascension of celebrity doctors, their surgeons can attract wealthy patients, command large fees and often appear in marketing materials. Meanwhile, those hospitals often charge patients higher fees for online health services to cover the high cost of employing celebrity doctors. The effects of online reviews and server delivery volume on firm profits are mutually enhancing. 38 The causal effect of honorary titles on physician service volumes was also validated. 5 Information aggregating mechanisms of online voting suggest that online services cannot avoid inequality in the distribution of service amount. 39 The clinic title and city level also have an impact on the inequality of health service delivery.4,8 From a professional capital perspective, the physician gains social and economic returns during their tenure within OHCs. 18 Patients can find matching physicians using voting information (i.e. information on their expertise), which is more accessible than their formal introduction.

In summary, knowledge about the relationship between e-consults and patient assessments of physicians’ expertise using tags in OHCs is minimal. Although e-consult accessibility has several determinants in OHCs, this study focuses on how e-consult accessibility affects patient assessments. Hypothesis testing on the responses alone is not enough to answer whether physicians’ e-consult accessibility affects patient assessments of their expertise: a p-value indicates statistical significance only and cannot establish scientific significance. The investigator needs to go beyond hypothesis testing. Randomization inference is a basic approach to answering causal questions, and this study investigates the issue through a case–control study.

Method

Design

We examined the effect of e-consult accessibility on patient assessments of physicians’ expertise, expressed through patient-generated tags, with a case–control study (see Figure 4). Accessibility of e-consults (AeC) is a binary variable adopted as the treatment indicator, indicating whether the physician provides e-consults (online written). On Good Doctor, 22.8% and 39.1% of the physicians provided telephone consultations and online written consultations, respectively. 40 AeC takes the value 1 when the physician provides e-consults and 0 otherwise.

Figure 4. The study framework of influence of e-consult accessibility on patient assessments using tags and votes for physicians in OHCs.

The objective is to identify the differential effect of treatment (DET), that is, the difference between the conditional means of the outcome for the treatment and control groups given their balanced covariates. 35 The conditional means are generated from the dual responses of subjects or from the DD, which summarizes how varied the expertise labels (of services) attached to a physician are. Service diversity is a supply-side measure of breadth. The following sections describe the measurements of the responses and covariates.

Measure of dependent variables

Patient-generated tags are measured by the BE and the VV (see Table 2). From these two measurements, we developed one response variable (DD) for identifying the DET. The BE and VV in equation (1) are obtained from text analysis, as implemented in previous studies.15,17 Physicians’ expertise as labeled by their patients’ online votes was found to be more likely accurate than their formal designations. 15 The number of reviews is widely used as a predictor of e-consults. 17 The DD indicates the degree of diversity 25 and is defined as the information entropy of the votes. Information entropy is the average amount of information produced by a stochastic source of data. It is one of several efficient ways to investigate how to help patients make the best use of online votes.

Table 2. Variable definitions and measurements.

Let Si be the vector of physician i's expertise labels identified by their patients, and (Si, Votes(Sij)) the sequence of patient votes over the m labels, where m is the number of online consulted disease-related labels available for voting. These voted tags contain both the primary and the secondary expertise of physicians. Let Votes(Sij) be the number of votes for the online consulted disease-related tag Sij, that is, the volume of the physician's jth service expertise, and let P(Sij) be the probability of service expertise Sij. Given the label sequence of patient votes, the service diversity aggregated from patients’ votes is described by the BE, the VV and the DD.
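Written out, the three measures defined verbally above can be sketched as follows. This is a form consistent with the surrounding definitions rather than a reproduction of the numbered equations; in particular, estimating P(Sij) by the vote share of label j is an assumption, since the text does not state the estimator.

```latex
\begin{aligned}
\mathrm{BE}_i &= \bigl|\{\, j : \mathrm{Votes}(S_{ij}) > 0 \,\}\bigr|, \\
\mathrm{VV}_i &= \sum_{j=1}^{m} \mathrm{Votes}(S_{ij}), \\
P(S_{ij}) &= \frac{\mathrm{Votes}(S_{ij})}{\mathrm{VV}_i} \quad \text{(assumed vote-share estimate)}, \\
\mathrm{DD}_i &= -\sum_{j:\,P(S_{ij})>0} P(S_{ij}) \, \log_2 P(S_{ij}).
\end{aligned}
```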

Since less probable events are observed more rarely, the entropy obtained from nonuniformly distributed votes is always less than or equal to log2(m), the value reached when votes are spread evenly over the m labels. By the properties of entropy, the DD is zero when all of a physician's votes are assigned to a single expertise label. 41 The DD considers only the probability of observing each event of online votes on physician expertise. Its limitation is that it captures the underlying probability distribution but not the meaning of the events themselves. These measures are provided to evaluate the performance of patient-generated tags in OHCs, but how they influence service delivery in OHCs still deserves more investigation.
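As an illustration of how the three measures behave, the following minimal Python sketch computes them for the tag counts of the heart surgery physician described in the Introduction (118, 33, 1 and 1 votes). The function name `tag_measures` and the dictionary input are hypothetical, and the probability of each label is assumed to be its share of the physician's votes.

```python
import math


def tag_measures(votes_by_label):
    """Return (BE, VV, DD) for one physician from a {label: votes} mapping.

    Assumption: P(label) is estimated by the label's share of all votes.
    """
    counts = [v for v in votes_by_label.values() if v > 0]
    be = len(counts)   # breadth: number of labels that received votes
    vv = sum(counts)   # volume: total number of votes
    # DD: Shannon entropy in bits, written as sum of p * log2(1/p)
    dd = sum((c / vv) * math.log2(vv / c) for c in counts) if vv else 0.0
    return be, vv, dd


# Tag counts taken from the example physician in the Introduction
example = {
    "valvular heart disease": 118,
    "coronary heart disease": 33,
    "cardiac myxoma": 1,
    "heart failure": 1,
}
be, vv, dd = tag_measures(example)
print(be, vv, round(dd, 3))
```

For this physician the DD is about 0.86, well below the log2(4) = 2 that four evenly voted labels would produce, which reflects votes concentrated on one dominant label.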

Covariates

To focus on the impact of e-consults on the responses, we employed four control variables (see Table 2): tenure (TE) with OHCs, clinic title (CT), review rating (RR) and amount of SP. The tenure variable is the time in months that the physician has been active in the OHC, rather than the length of the physician's medical career. The clinic title refers to chief doctor, associate chief doctor, attending doctor, resident doctor and others. Some types of physicians are able to transition more easily to telehealth than others. A surgeon would need more in-person visits, whereas an internal medicine physician may regularly attend to less serious issues, so their patients transition more easily to telehealth. Specialists seem more likely to have less diversity in tags and to require in-person visits.

In Table 2, the first three control variables (RR, CT and TE) are standard in related studies on OHCs. 4 TE captures how long the physician has been active in the OHC. Since the independent variables in this study contain a stock variable, we introduce another variable (SP) to control for the reverse impact. All control variables are stock variables representing the historical data of the samples at the data acquisition time. The labels voted by patients in OHCs improve the sharing of information on physicians’ expertise and the recommendations for future patients.

Data collection

The study data are collected from the Good Doctor website through a web crawler. The sample data include the necessary information on physicians’ websites (including the accessibility of e-consults), the disease-related labels of physicians’ expertise and the online votes provided by patients.

In line with ethical considerations, data collection and analysis do not breach the relevant terms of use listed on the Good Doctor website, and none of the study data relate to private information about physicians. The data were collected over two days, August 26 and 27, 2017. The number of patient votes for physician expertise ranged from 1 to 1723. For a reliable and stable analysis, we applied the following inclusion and exclusion criteria. (a) The volume of a physician's expertise votes must not be less than a cutoff (i.e. five). (b) Expertise labels were voted during the last 2 years, from July 27, 2015 to July 26, 2017. (c) The numbers of past SP and review ratings are not less than 1. After filtering the original data set, 9841 physicians remained, with a total of 704,467 expertise votes. The physicians came from 1255 different hospitals widely distributed in China. In the data sample, 5029 physicians were not accessible for e-consults (see Table 3). Within the retrospective data, the 9841 physicians gained 704,467 votes of clinical expertise on the OHC under study during 2 years, from August 26, 2015 to August 25, 2017.

Table 3. Statistics of the empirical data.

The following aspects of sample characteristics are worth noting. First, the data source of patient votes differs from the votes of physicians’ review ratings or the records of physicians’ accumulated clinical experience (Figure 1). Patient votes represent patients’ contributions to sharing medical experience. Although these votes and physicians’ expertise labels carry inherently subjective information, the posted labels were familiar to the patient communities in OHCs. Second, the number of patients counts patients who were served during the investigation time, and multiple visits by the same patient were recorded only once. Third, the clinic titles were categorized into five classes (listed from senior to junior), namely, chief doctor, associate chief doctor, attending doctor, resident doctor and others. Moreover, the physicians’ review ratings (also known as online word-of-mouth) were measured by the mean star rating, which equals 2.746 on a scale from 1 to 5, with 5 being the highest.

Statistical analysis

In this case–control study, the data analysis of e-consult accessibility is conducted by estimating the average treatment effect 31 on the DD of physicians’ expertise. This study first fit the propensity score model with the data of treatment and covariates, as demonstrated in equations (3) and (4). Then we fit the data with the responses for the DET, as shown in equations (5) and (6). This study investigated how e-consult accessibility might impact the patient assessments of physicians’ expertise using patient-generated tags. All variables are defined in Table 2.
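One standard way to write the four expressions referred to as equations (3) to (6) is sketched below. The exact forms are assumptions: the covariate vector X_i = (TE_i, CT_i, RR_i, SP_i), the propensity score symbol e(X_i), the coefficients β0 and β, and the normalized (ratio) form of the weighting estimator are introduced here for illustration only.

```latex
\begin{aligned}
e(X_i) &= P(\mathrm{AeC}_i = 1 \mid X_i)
        = \frac{1}{1 + \exp\{-(\beta_0 + \beta^{\top} X_i)\}}, \\
\log \frac{e(X_i)}{1 - e(X_i)} &= \beta_0 + \beta^{\top} X_i, \\
\mathrm{DET} &= E[\mathrm{DD}_i \mid \mathrm{AeC}_i = 1, X_i]
              - E[\mathrm{DD}_i \mid \mathrm{AeC}_i = 0, X_i], \\
\widehat{\mathrm{DET}} &=
  \frac{\sum_i \mathrm{AeC}_i \, \mathrm{DD}_i / e(X_i)}
       {\sum_i \mathrm{AeC}_i / e(X_i)}
  - \frac{\sum_i (1 - \mathrm{AeC}_i) \, \mathrm{DD}_i / (1 - e(X_i))}
         {\sum_i (1 - \mathrm{AeC}_i) / (1 - e(X_i))}.
\end{aligned}
```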

According to the theory of causal inference, the model in equation (3) is a logistic regression that captures the covariates’ compressed information on the treatments. It has an equivalent form to equation (4). This model explicitly illustrated the proportion of the case group to the control group. Model (5) expresses the DET, which is the difference between the case and control groups. Each term in model (6) is a conditional mean response of the subjects. Model (6) is an inverse probability weighting (IPW) estimator of model DET, which is built with the inverse propensity score weighting method. 5
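The weighting step described above can be sketched in a few lines of Python. This is a minimal illustration, not the study's implementation: the function name `ipw_det` is hypothetical, the propensity scores are assumed to have been estimated beforehand (for example by a logistic regression on the covariates), and the normalized form of the IPW estimator is an assumption.

```python
def ipw_det(outcome, treated, score):
    """Normalized IPW estimate of the case-minus-control mean difference.

    outcome: responses per physician (e.g. DD)
    treated: 0/1 treatment indicators (e.g. AeC)
    score:   estimated propensity scores P(treated = 1 | covariates), in (0, 1)
    Returns (weighted case mean, weighted control mean, difference).
    """
    num_case = den_case = num_ctrl = den_ctrl = 0.0
    for y, t, e in zip(outcome, treated, score):
        if t == 1:
            w = 1.0 / e            # case units are weighted by 1 / e
            num_case += w * y
            den_case += w
        else:
            w = 1.0 / (1.0 - e)    # control units are weighted by 1 / (1 - e)
            num_ctrl += w * y
            den_ctrl += w
    mean_case = num_case / den_case
    mean_ctrl = num_ctrl / den_ctrl
    return mean_case, mean_ctrl, mean_case - mean_ctrl


# Toy data (not from the study): two case units and two control units
print(ipw_det([1.0, 2.0, 3.0, 4.0], [1, 1, 0, 0], [0.5, 0.25, 0.5, 0.2]))
```

When every unit has the same propensity score, the weights cancel and the estimate reduces to the unadjusted difference in group means, which is a convenient sanity check.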

Results

e-Consult's effect on patient responses

For the patient responses on the BE and the VV, t-tests show that the difference between the control and case groups is significant (p < .001). The responses of BE and VV (see Figure 5) were estimated with the unadjusted and weighted methods, respectively. All the influences of the e-consults are significant in the patient assessments. For the BE, the unadjusted mean was 7.305 for the case group and 9.465 for the control group. The conditional means (adjusted with weighting) were 5.707 and 9.508 for the case and control groups, respectively. The results imply a difference in the means of the two groups. The 95% confidence intervals of the difference were [−2.173, −2.146] and [−3.824, −3.778] with the unadjusted and weighted methods, respectively. The results provide empirical evidence that the BE differs significantly between the groups (p < .001).

Figure 5. Distributions of the BE and VV with unadjusted and weighted methods for the two groups. The control group is denoted as AeCi = 0 and the case group as AeCi = 1. (a) BE, (b) VV.

Similarly, the results provide empirical evidence that the VV differs significantly (p < .001) between these two groups. The unadjusted mean was 39.720 for the case group and 84.565 for the control group. The conditional means (adjusted with weighting) were 34.323 and 81.740 for the case and control groups, respectively. The 95% confidence intervals of the difference were [−45.083, −44.607] and [−47.683, −47.150] with the unadjusted and weighted methods, respectively.

The empirical evidence also demonstrated that the DD is significantly different (p < .001) between these two groups, implying a difference in the means of DD. However, hypothesis testing on the responses is not enough to answer the research question, that is, whether physicians’ e-consult accessibility affects patient assessments of their expertise. Thus, the DET is needed to address that question. Covariate balance needs to be diagnosed before further inference in a case–control study.

Covariate balance diagnosis

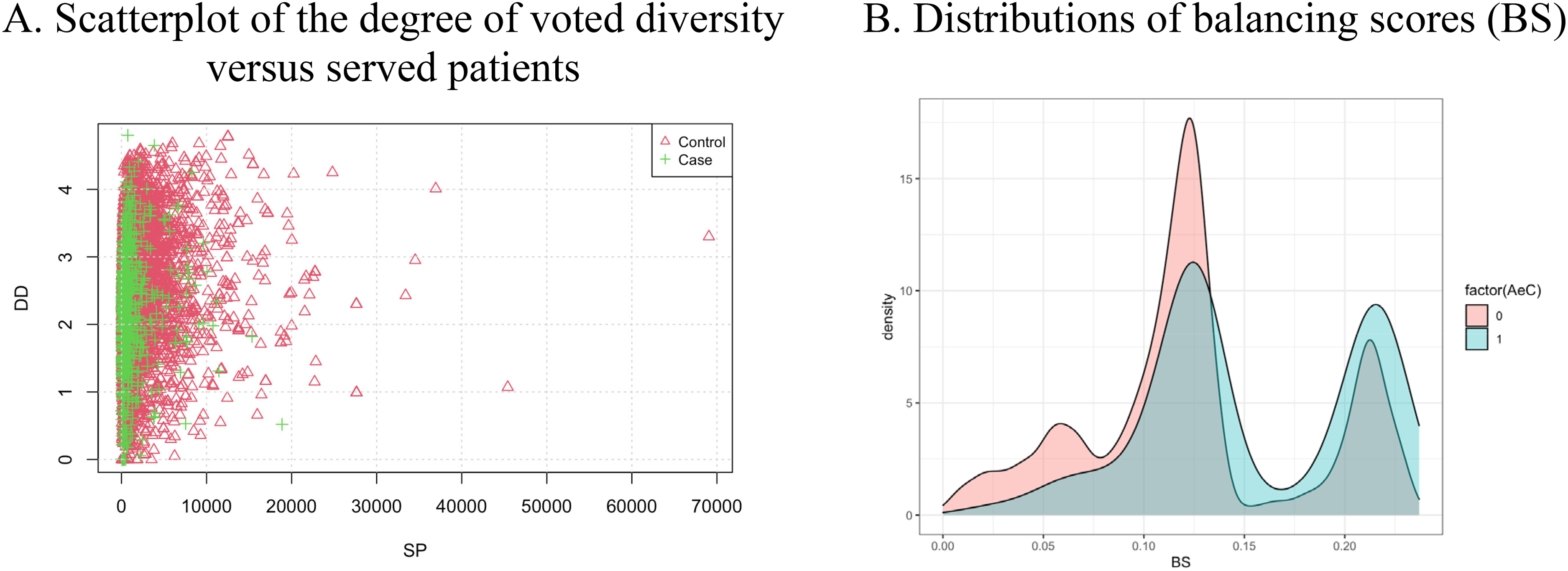

Two aspects of the covariate balance are examined: a scatterplot of the DD versus SP and the distributions of BS (see Figure 6). The feature of SP represents one of the covariates.

Figure 6. Scatterplot of the two responses (a) and BS (b) for the control and case groups. The control group is denoted as AeCi = 0 and the case group as AeCi = 1. (a) Scatterplot of the DD versus SP, (b) Distributions of BS.

The distributions show that the covariates between the two groups lack overlap (see Figure 6(a)). The control group's DD range is close to that of the case group, but the control group's SP range is much more extensive than that of the case group. The sample size of the control group is larger than that of the case group. The ranges of the two groups are close on the distributions of BS, but their distributions lack overlap when BS is under 0.13 (see Figure 6(b)).

Using both the scatterplot and the distributions of BS helps diagnose the covariate balance between the two groups. To reduce estimation bias, investigators often weight cases in case–control studies. This study implemented the IPW method (see Statistical analysis section).

Sensitivity analysis

The empirical evidence suggests that the concentration of the patient-generated tags is much stronger in the case group than in the control group. To reduce the sensitivity of the results to the weights, we conducted 100 runs of randomized inference with a case sampling probability of 0.8. For each run, the propensity score model (4) was employed to obtain the weights for units in the case and control groups.
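The repeated-sampling procedure can be sketched as follows. This is a simplified illustration under stated assumptions: each unit is kept independently with probability 0.8, the function names `weighted_diff` and `subsample_det` are hypothetical, and the propensity scores are held fixed across runs, whereas the study refits the propensity score model in every run.

```python
import random
import statistics


def weighted_diff(outcome, treated, score):
    """Normalized IPW difference (case minus control) in weighted means."""
    num = {0: 0.0, 1: 0.0}
    den = {0: 0.0, 1: 0.0}
    for y, t, e in zip(outcome, treated, score):
        w = 1.0 / e if t == 1 else 1.0 / (1.0 - e)
        num[t] += w * y
        den[t] += w
    return num[1] / den[1] - num[0] / den[0]


def subsample_det(outcome, treated, score, runs=100, keep_prob=0.8, seed=0):
    """Mean and standard deviation of the estimate over random subsamples.

    Simplification: scores are reused rather than re-estimated per run.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(runs):
        idx = [i for i in range(len(outcome)) if rng.random() < keep_prob]
        ts = [treated[i] for i in idx]
        if 0 not in ts or 1 not in ts:
            continue  # skip a draw that lost an entire group
        ys = [outcome[i] for i in idx]
        es = [score[i] for i in idx]
        estimates.append(weighted_diff(ys, ts, es))
    return statistics.mean(estimates), statistics.stdev(estimates)
```

The spread of the estimates across runs gives a rough indication of how much the differential effect depends on which units happen to be included.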

The results of the multiple responses show the e-consult's effect with the unadjusted (mean responses, MR) and weighted (conditional mean responses, CMR) methods (see Figure 7). The center positions in Figure 7(a), in the coordinates (BE, VV), are (7.305, 39.720) and (5.707, 34.323) for the case group (in pink and blue) and (9.465, 84.565) and (9.508, 81.740) for the control group (in black and green). Moreover, the center positions of the case group (Figure 7(b)) are (7.305, 39.720, 2.104) and (5.707, 34.323, 1.592) in the coordinates (BE, VV, DD) for the MR and CMR, respectively. These two positions are much lower than those of the control group (in black and green), which are (9.465, 84.565, 2.517) and (9.508, 81.740, 2.547) for the MR and CMR, respectively.

Figure 7. Comparison between the MR and CMR for the control and case groups. The three responses are VV, BE and DD. (a) Comparison of two responses and (b) comparison of DD.

In the sensitivity analysis, the results were also estimated with a cutoff of [0.1, 0.9] on the case weights; subjects outside this interval were trimmed from the samples. Trimming reduces sensitivity to extreme weights, because weights that are too small or too large can distort the mean estimation.
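A trimming step of this kind can be sketched as below. The sketch assumes that the [0.1, 0.9] cutoff is applied to each unit's estimated propensity score, which is one common reading of trimming on the weights; the function name `trim_units` and the parallel-list inputs are hypothetical.

```python
def trim_units(outcome, treated, score, low=0.1, high=0.9):
    """Drop units whose estimated score lies outside [low, high] (inclusive).

    Assumption: the cutoff applies to the propensity score of each unit.
    Returns the trimmed (outcome, treated, score) lists.
    """
    kept = [(y, t, e) for y, t, e in zip(outcome, treated, score)
            if low <= e <= high]
    if not kept:
        return [], [], []
    ys, ts, es = (list(column) for column in zip(*kept))
    return ys, ts, es


# Toy data (not from the study): the first and last units are trimmed
print(trim_units([1.0, 2.0, 3.0, 4.0], [1, 0, 1, 0], [0.05, 0.1, 0.5, 0.95]))
```

Because scores near 0 or 1 translate into very large inverse weights, removing them keeps a handful of units from dominating the weighted means.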

The results in Figure 8(a) suggest that the e-consult's effect is robust with the weighted method. With the naïve (unadjusted) approach, the average DD is 2.103 for the case group (AeC = 1), that is, physicians with e-consults accessible (photo and text queries), and 2.516 for the control group, that is, physicians without e-consults, a discrepancy of 0.413. With the weighting method (IPW estimator), the difference is much larger (0.953 > 0.413): the average DD is 1.593 for the case group and 2.546 for the control group. Under both methods, the average DD in the case group (AeC = 1) is lower than in the control group (AeC = 0) (2.103 < 2.516 and 1.593 < 2.546).

Figure 8. Comparison of the conditional mean responses and sensitivity analysis results with 100 runs for the two groups with the unadjusted and weighted methods. The control group is denoted as AeCi = 0 and the case group as AeCi = 1. (a) Comparison of the conditional mean responses of DD and (b) sensitivity analysis results of the DET.

Moreover, Figure 8(b) illustrates the e-consult's differential effect for the unadjusted and weighted methods. With the unadjusted (naïve) method, the effect is −0.413 (±0.007); that is, the entropy of patient-generated tags is 0.413 higher in the control group than in the case group. With the weighted method, the differential effect is −0.953 (±0.012); that is, the entropy of patient-generated tags is 0.953 higher in the control group than in the case group.
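The source does not describe how the 100 runs behind the ± values were generated. One common way to obtain such a spread, assumed here purely for illustration, is to resample each group with replacement and re-estimate the difference on every run. The sketch below does this for the unadjusted difference only; the DD values, the seed and the function name are hypothetical.

```python
import random
import statistics


def bootstrap_differences(case, control, runs=100, seed=0):
    """Re-estimate mean(case) - mean(control) on `runs` bootstrap resamples."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(runs):
        # Resample within each group so both groups keep their sizes.
        case_sample = rng.choices(case, k=len(case))
        control_sample = rng.choices(control, k=len(control))
        estimates.append(
            statistics.fmean(case_sample) - statistics.fmean(control_sample)
        )
    return estimates


# Hypothetical DD values for the two groups.
case_dd = [1.4, 1.6, 1.5, 1.9, 1.7, 1.3]
control_dd = [2.4, 2.6, 2.5, 2.7, 2.3, 2.8]

estimates = bootstrap_differences(case_dd, control_dd)
effect = statistics.fmean(estimates)
spread = statistics.stdev(estimates)
print(f"{effect:.3f} (±{spread:.3f})")
```

Whether the reported ± values are standard deviations, standard errors or interval half-widths is not stated in the source, so the spread here should be read only as one possible interpretation.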

In summary, the differential effect shows the influence of e-consults on the patient assessments of physician expertise using patient-generated tags. The results suggest that the e-consult service increases the concentration of patient-generated tags. As a small entropy indicates a high concentration, a low average DD indicates that the patient-generated tags are concentrated on a few expertise labels. Thus, the results provide two insights: first, the patient-generated tags are markedly more concentrated in the case group than in the control group; second, with the weighted method, the average DD of patient-generated tags is 0.953 (±0.012) higher in the control group than in the case group.
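The link between entropy and concentration can be made concrete with a small sketch of the DD measure, computed as the Shannon entropy of a physician's vote shares across expertise tags. The logarithm base is not stated in this section, so base 2 is an assumption, and the vote counts are hypothetical.

```python
import math


def tag_entropy(votes):
    """Shannon entropy (in bits) of the vote distribution over expertise tags."""
    total = sum(votes)
    if total == 0:
        return 0.0
    shares = [v / total for v in votes if v > 0]
    return -sum(p * math.log2(p) for p in shares)


# Hypothetical vote counts over four expertise tags.
concentrated = [97, 1, 1, 1]   # almost all votes on one tag
diverse = [25, 25, 25, 25]     # votes spread evenly

dd_concentrated = tag_entropy(concentrated)
dd_diverse = tag_entropy(diverse)
print(round(dd_concentrated, 3), round(dd_diverse, 3))
```

With the same number of tags and votes, the physician whose votes pile onto one tag has a DD close to 0, while the evenly voted physician reaches the maximum for four tags (2 bits). A lower DD therefore corresponds to more concentrated patient-generated tags.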

Discussion

Principal results

e-Consults are still a useful supplement rather than a replacement for diagnosis and treatment by hospital entities. This type of service offers an appealing new modality for the rational appropriation of healthcare services in OHCs, providing convenience for existing and potential patients, physicians and hospitals. The patients’ vote system in an OHC provided channels for the patients to mark their physicians’ expertise and vote on those labels. These expertise tags also reflected the primary and secondary illnesses of their encounters.

First, our results suggested that the patient-generated tags are markedly more concentrated in the case group than in the control group. With the weighted method, the average DD of the patient-generated tags is 0.953 (±0.012) higher in the control group than in the case group. Our findings could also help people understand the current status of patients’ online labels on physician expertise and service diversity. As the study 18 suggested, online consultation websites should provide essential design elements such as ratings of physicians (user feedback), articles contributed by physicians, and free consultation services to reduce service concentration. Service concentration in OHCs refers to the effect of celebrity doctors, who are commonly recognized by their high-status capital (i.e. clinic title and hospital level). The pursuit of celebrity doctors is widespread in offline and online health services, although these doctors, with limited time and energy, can rarely respond to every request. Reducing service concentration weakens the effect of these celebrity doctors and helps optimize the allocation of healthcare resources.

Second, the t-test results show that the differences in the patient responses on BE and VV between the control and case groups are significant (p < .001). Previous studies 19 provided evidence that analyzing the comments posted online about physicians can identify possible solutions for improving patient satisfaction. Our results provided empirical evidence for a deeper understanding of the effect of the aggregated diversity of patients’ votes on the amount of physicians’ service delivery.

Third, this study employed the IPW method to balance the covariates between the two groups. This approach reduced the bias of the differential effect estimation. The e-consult's differential effect with the unadjusted method is −0.413 (±0.007), while that with the weighted method is −0.953 (±0.012). The less biased estimate suggests that the entropy of patient-generated tags is 0.953 higher in the control group than in the case group. Previous findings 3 indicated that the covariates (including review rating, clinic title, tenure with OHD and SP) hold a positive correlation with physician service delivery. This study demonstrated that e-consults had a positive impact on the concentration of patient-generated tags.

Moreover, this study presents a methodology for estimating the e-consult's differential effect, which shows the potential strengths of statistical inference with multiple responses from patient-generated tags and votes in OHCs. Although previous studies 13,19,30 applied text mining to extract hidden topics from web-based physician reviews, our study developed methods to analyze patients’ votes and investigated the effect of their aggregated diversity on the amount of physicians’ service delivery. This study examined the effect of the treatment variable on the responses (see Figure 4). It also investigated the differential treatment effect on the response. Our empirical evidence confirmed that the patient-generated tags are markedly more concentrated in the case group than in the control group, with a differential effect of magnitude 0.953 (±0.012). The results support the argument that the e-consult reinforces the increment of the already-received physician expertise (labelled by patients), reducing the diversity of the tag information.

Limitations

The limitations of our study lie in the following aspects. First, all data on patient votes (including physicians’ expertise labels and the number of votes) and physicians’ service delivery were collected from one OHC (the Good Doctor website). The size of each physician's expertise was calculated from the patient crowd voting process as observed over two days. 4 Data were collected from August 26, 2017 to August 27, 2017. Therefore, the measures are biased by this short time window. Because the labeled service votes had been recorded over 2 years and changed over time, the service diversity of each physician also changed dynamically. The data cannot avoid redundancy in the voted labels and measures.

Second, this study did not consider the semantics of the tags, which could improve the measurement precision of the breadth of specialties. The semantics of user-generated tags may affect the number of votes on those tags. For example, “cardiac myxoma” and “surgery” may be semantically closer than “surgery” and “physical therapy.” Some of the physicians’ expertise is listed by the physicians themselves in their personal profiles. In future studies, the expertise reflected in the tags will be used as control variables.

Third, the covariates contain only the essential variables and omit others. Our study should be regarded as a starting point of causal inference, rather than an examination of the determinants of e-consult accessibility or a final causal statement. Although an associational link is much easier to establish with the data, establishing a causal link is our ultimate goal for further investigation. The thresholds used in the data filtering process can be adjusted, and other control variables, including the hospital level, city location and group diversity of physicians, can also be considered in the model. Other determinants may also affect the amount of physicians’ service delivery (e.g. the ranks of physicians on Good Doctor based on patient votes). Relevant data can be collected to reduce the bias of the results in future work.

Fourth, our findings suggest that, regardless of e-consult availability, these responses from patients can enhance a rich-get-richer effect for physicians whose expertise is well known, and the reverse for unpopular physicians. However, criticizing any physician for providing vast service diversity to gain SP in OHCs could be inappropriate. It is also beneficial for society when physicians in OHCs serve patients with their expertise in niche areas of medical consultation. Additional results can be achieved through further investigation of longitudinal data on online services. More research can be conducted on the reverse dependency between the number of past visits and the VV, or on the dynamics of patient responses in OHCs.

Conclusions

e-Consults in OHCs are essential for improving access to health care because they may avert the need for a face-to-face visit between patients and medical specialists. This study investigated the impact of e-consult accessibility on the patient assessments of physician expertise in OHCs using patient-generated tags. Within a case–control study framework, the data from the Good Doctor website were examined to estimate the DET. The main findings are two-fold: first, the patient-generated tags are markedly more concentrated in the case group than in the control group; second, with the weighted method, the average DD of the patient-generated tags is 0.953 (±0.012) higher in the control group than in the case group. The results support the argument that e-consults reinforce the increment of the already-received physician expertise (marked by patients), reducing the diversity of the tag information. This diversity is revealed by the physician's expertise as marked and voted on by the patients. These patient responses can enhance a rich-get-richer effect for well-known experts, and the reverse for unpopular ones.

For OHC managers, this study provides new insights, and they can employ these empirical results to encourage physicians to be involved in e-consults in the OHC. Service diversity plays different roles on the supply and demand sides. e-Consult service innovations can bridge the service divide between medical needs and supplies. Although the service may be traditional, the delivered service can reach new patient groups through new business models. The most pressing question for organizational managers and policymakers in OHCs is whether the reduced burden on patients and flexible access to physicians can translate to better outcomes. This study provided a rigorous evaluation of the effect of e-consults on healthcare utilization, especially on the patient assessments of physician expertise using patient-generated tags. The statistical metrics on the differential effect assess e-consult access by objective criteria rather than primarily by provider perceptions (physicians’ introductions). To improve platform performance, OHC managers should provide a pilot application with a dashboard that integrates the statistical metrics and the e-consult's effect to provide relevant recommendations for users.

For future patients, the system in the OHC can enable their engagement and let them share their experience by listing their consulted diseases and voting for their physicians. The primary need of patients is to achieve coordinated, high-quality and efficient care by finding physicians whose level of specialty matches their needs. This study provides decision-making support for them to seek matching physicians based on the assessed expertise. An application with the statistical metrics and the e-consult's effect will help them to quickly identify the expertise of physicians from the tags and votes of historical patients. With relevant recommendations, future patients can find matching physicians through the combination of their expertise labels. These combinations also help patients to better understand their own disease conditions.

For physicians in OHCs, this study also encourages them to offer e-consults to improve their service delivery contribution, which can be reflected by the concentration of the patient-generated tags on their medical expertise. Although these patients’ responses can enhance a rich-get-richer effect for well-known experts, physicians can also provide e-consult services with their expertise in niche areas. The expertise tags from historical patient responses will also save physicians time when communicating with future patients about the complications of related diseases. For patient safety, physicians can ask the platform to remove incorrect labels regarding their expertise that were assigned by historical patients. This behavior will also help the OHC platform to provide better e-consult services.

Footnotes

Acknowledgments

Yuan-Teng Hsu gratefully acknowledges financial support from Shanghai Business School.

Contributorship

All authors contributed equally to this manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

Not applicable, because this article does not contain any studies with human or animal subjects.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the National Natural Science Foundation of China (No. 72241422, 62272077), Chongqing Municipal Education Commission (No. KJQN202200608, 22SKGH149), and Chongqing Municipal Science and Technology Bureau (No. CSTB2022TIAD-KPX0155, 2023DBXM008).

Guarantor

Y-TH.

Informed Consent

Not applicable, because this article does not contain any studies with human or animal subjects.

Trial Registration

Not applicable, because this article does not contain any clinical trials.