Abstract

Objective

Clinical implementation of remote monitoring of human function requires an understanding of its feasibility. We evaluated adherence and the resources required to monitor physical, cognitive, and psychosocial function in individuals with either chronic obstructive pulmonary disease or stroke during a three-month period.

Methods

Seventy-three individuals agreed to wear a Fitbit to monitor physical function and to complete monthly online assessments of cognitive and psychosocial function. During a three-month period, we measured adherence to monitoring (1) physical function using average daily wear time, and (2) cognition and psychosocial function using the percentage of assessments completed. We measured the resources needed to promote adherence as (1) the number of participants requiring at least one reminder to synchronize their Fitbit, and (2) the number of reminders needed for each completed cognitive and psychosocial assessment.

Results

After accounting for withdrawals, the average daily wear time was 77.5 ± 19.9% of the day and did not differ significantly between months 1, 2, and 3 (p = 0.30). To achieve this level of adherence, 64.9% of participants required at least one reminder to synchronize their device. Participants completed 61.0% of the cognitive and psychosocial assessments; the proportion of assessments completed each month did not differ significantly (p = 0.44). Participants required 1.13 ± 0.57 reminders for each completed assessment. Results did not differ by disease diagnosis.

Conclusions

Remote monitoring of human function in individuals with either chronic obstructive pulmonary disease or stroke is feasible as demonstrated by high adherence. However, the number of reminders required indicates that careful consideration must be given to the resources available to obtain high adherence.

Introduction

Holistic human function is a critical outcome in all medical disciplines. Rehabilitation professionals specialize in various domains of function (e.g. physical, cognitive, and psychosocial) and aim to optimize function in all patients. These domains of function interact in complex ways and are challenging to measure in clinical practice for several reasons. First, currently available measurement tools have limited ecological validity with respect to real-world function.1,2 Second, current tools often provide data with relatively low information content; thus, these measures lack the sensitivity to track recovery and predict key health effects.3 Lastly, current measurements are obtained only at the time of clinical care, providing only a discrete snapshot of an individual's function. This is particularly problematic because daily function occurs in complex environments that rarely mimic that of the clinic. There is a clear need for measurement approaches that allow rehabilitation professionals to capture real-world function outside of clinical visits at both a high frequency and a high resolution. Capitalizing on digital health solutions that facilitate the remote monitoring of function (including physical, cognitive, and psychosocial function) is one approach that may overcome the limitations of current measurements.

Physical, cognitive, and psychosocial functions are key functional domains that are critical to measure together to understand the whole person. All three domains have been related to numerous outcomes, such as employment status,4 social roles,5 hospital admission,6 and mortality,7–9 in a variety of patient populations. Furthermore, there are complex relationships between these functional domains that necessitate concurrent measurement of all three. For example, in clinical populations, psychosocial function (as captured by anxiety, self-efficacy, and depression) is often associated with cognitive impairment10,11 and decreased physical function.12–15 Clearly, measurement across these functional domains is essential, but comprehensive assessment spanning these domains requires access to a wide range of expertise and a significant investment of time and effort.

Remote monitoring tools provide an avenue to facilitate assessment across functional domains, as teams with experts in each area can strategically design the battery of remotely collected measures and drastically reduce the time needed to collect data in the clinic. Though the benefits of remote assessment of physical, cognitive, and psychosocial function are clear and advances in technology have made remote assessments of function increasingly possible, remote monitoring is not yet a part of routine clinical care. For these approaches to be implemented into clinical care, a thorough understanding of the feasibility of remote monitoring of function is needed.

Two key components of feasibility are adherence and the resources needed to obtain good adherence (i.e. the practicality of the time needed to obtain adherence).16 Both components are essential to consider, as they have implications for scaling remote monitoring of function and its integration into clinical care. To date, patient adherence to approaches for remote monitoring of function has been examined only superficially. For example, research on wearable devices that monitor physical activity has measured adherence by determining whether the number of steps on a given day exceeded an arbitrary threshold.17–22 However, this approach does not indicate how long the device was worn during the day and may incorrectly label days as non-wear days, either because of long bouts of inactivity, which are common in patient populations, or simply because of natural variability in daily activity. More accurate measurement of adherence to this type of monitoring will provide insight into the potential scalability of remote monitoring of physical function. Additionally, the examination of long-term adherence (e.g. windows of time spanning months to years) to remote monitoring approaches is needed to guide the integration of these approaches into clinical care. For example, previous work has shown good adherence to remote monitoring of cognitive function but only over short periods of time (i.e. days or weeks).23–25 Moreover, many studies22,24,25 that examined adherence to remote cognitive assessment excluded individuals with cognitive deficits, limiting the ability to understand how well monitoring systems generalize to broader patient populations.

An understanding of the resources needed to acquire quality data from remote monitoring is essential for potential clinical integration. The answers to questions such as “how often do individuals need to be reminded to complete their remote monitoring tasks?” are critical to capitalizing on the benefits of remote monitoring technologies and to understanding the potential burden placed on a study team or clinical team. Yet, no studies discuss the resources needed to obtain the reported adherence. Consequently, research examining the feasibility of remote monitoring of function over time in a variety of patient populations with various levels of impairment is needed.

The primary purpose of this study was to examine the feasibility (i.e. completion rate, adherence, and resources utilized) of remote monitoring of physical, cognitive, and psychosocial function for three months in individuals with stroke and chronic obstructive pulmonary disease (COPD). We selected these two patient populations as they have distinct impairments that may impact the feasibility of remote monitoring of function and could benefit from such remote monitoring due to the prevalence of reduced physical activity,26–28 impaired cognition,29–32 and abnormal psychosocial function33–35 in both patient populations. We hypothesized that there would be high patient adherence accompanied by significant resources needed to achieve that adherence. As a secondary aim, we compared the feasibility of remote monitoring in these two different populations to determine if there were unique considerations for different patient groups. This comparison is valuable because it allows us to better understand how remote monitoring approaches may generalize to a diverse group of patient populations, a critical step for understanding clinical utility and scalability. We hypothesized that there would be no difference in feasibility between diagnoses. Lastly, we explored the characteristics of individuals who were adherent to remote monitoring of (1) physical, cognitive, and psychosocial function, (2) only physical function, (3) only cognitive and psychosocial function, or (4) neither. This is important as it impacts the ability to expand the use of remote monitoring in clinical practice.

Methods

Participants

We prospectively recruited individuals from two patient populations: those with stroke and those with COPD. From Johns Hopkins Hospital, we recruited individuals with stroke who were at least 18 years old and had a history of stroke confirmed by ICD-10 codes in the medical record. From the Johns Hopkins Pulmonary Clinic, we recruited individuals with COPD who were at least 30 years old and had a history of COPD verified by ICD-10 code or spirometry metrics in the medical record. The discrepancy in the age criteria reflects the differences in etiology between COPD and stroke. Specifically, COPD develops due to years of cumulative inhalational exposures and, therefore, is almost exclusively a disease of older adults. Stroke, on the other hand, while most frequent in older adults, can occur at any age. All participants also had to own a smartphone and have internet access via in-home Wi-Fi. Participants were excluded if they primarily used a wheelchair for mobility; however, other assistive devices, such as canes and rollators, were allowed. Although assistive devices have been shown to affect the accuracy of wrist-worn devices,36 we intentionally included these individuals to maximize the generalizability of our findings. Unlike previous work, we did not exclude individuals with cognitive impairments; however, we did not systematically assess cognition at the time of enrollment.

The Johns Hopkins University Institutional Review Board approved the study protocols for individuals with COPD (IRB00236214) and stroke (IRB00247292). All participants provided either oral or written consent. The Institutional Review Board authorized both oral consent, using a preapproved script, and written consent. This approach was adopted because of the low risk of the study and to facilitate recruitment during the COVID-19 pandemic. Additionally, participants agreed to remote monitoring of (1) physical function and/or (2) cognitive and psychosocial function for one year (i.e. participants could enroll in or withdraw from the physical activity portion and the cognitive/psychosocial portion separately). The primary study was an observational study of physical activity, as well as cognitive and psychosocial function, followed longitudinally in individuals with COPD and stroke. The study team did not act on the data collected and did not provide feedback to the participants. In this secondary analysis, we focus on a three-month portion of enrollment to assess two components of feasibility.

Study procedures

Participants who agreed to participate in the remote monitoring of physical function wore a Fitbit Inspire 2 (Fitbit Inc, San Francisco, CA, USA), a commercially available activity monitor that measures step count and heart rate at the minute level. The study team provided the Fitbit device to all participants and assisted with setup, including installing the Fitbit application on their smartphones. Participants with a history of stroke wore the device on their unimpaired wrist whenever possible to maximize the accuracy of the device;37,38 however, individuals with stroke who were unable to independently place the device on their unimpaired arm due to hemiparesis and who had limited social support to assist in donning the device wore it on their paretic wrist. All participants with COPD wore the device on their non-dominant wrist. Although the literature suggests that wearing the device on the upper extremity may be less accurate than wearing it in other locations,37–40 we chose the wrist so that heart rate could be captured, as this metric allows us to better define wear time and provides valuable information about an individual's health. Participants were instructed to remove the device when showering and charging it but to wear it at all other times, including while sleeping. They were also asked to synchronize their device with the Fitbit application once per day. A custom-built Spring Boot application with an Angular front end, running on an AKS cluster, automatically extracted minute-level data from the Fitbit application programming interface (API) three times a day. Once the data were extracted, the application performed a series of logic checks to determine how long it had been since the device was last synchronized.
If a participant had not synchronized their device in five days, no data would be extracted from the Fitbit API, and the application automatically sent a reminder instructing the participant to synchronize their device or to contact the study team with questions. This reminder was sent via text message or email based on the participant's preference. If the participant still had not synchronized their device after 12 days, a follow-up reminder was sent. If the participant still had not synchronized their device by day 19, the application sent a notification to the study team to follow up with the participant via phone call. The timing of these reminders was based on the length of time that the Fitbit Inspire 2 stores minute-level data. Specifically, if participants synchronized their devices after either the five- or 12-day reminder, minute-level data would be transferred to the Fitbit servers and still be accessible to the study team. Based on pilot work, the 19-day reminder was placed at the time when minute-level data are erased from the Fitbit Inspire 2, although summary data (e.g. total steps per day) are still saved after this time. This reminder system allowed us to track how often participants needed to be reminded to synchronize their devices and how they responded to reminders, thereby providing insight into the resources needed to implement remote monitoring of physical function.
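The escalation logic described above can be summarized as a small decision rule. The Python sketch below is illustrative only and is not the study's Spring Boot application. The action labels (`reminder_5`, `reminder_12`, `notify_team`) and the function names are hypothetical. It also assumes that the application records which reminders have already been sent in the current reminder session, so that the three daily checks do not send duplicates.

```python
# Illustrative sketch of the synchronization-reminder escalation (hypothetical names).
from typing import Optional

# (days since last sync, action) pairs taken from the protocol: 5-, 12-, and 19-day steps.
REMINDER_SCHEDULE = (
    (5, "reminder_5"),    # first text/email reminder
    (12, "reminder_12"),  # follow-up text/email reminder
    (19, "notify_team"),  # study team follows up by phone
)


def next_action(days_since_sync: float, already_sent: set) -> Optional[str]:
    """Return the earliest due action not yet sent in this reminder session, or None."""
    for threshold, action in REMINDER_SCHEDULE:
        if days_since_sync >= threshold and action not in already_sent:
            return action
    return None


def simulate(days_without_sync: int, checks_per_day: int = 3) -> list:
    """Simulate the thrice-daily checks for a participant who never synchronizes."""
    sent: set = set()
    log = []
    for step in range(days_without_sync * checks_per_day + 1):
        days = step / checks_per_day
        action = next_action(days, sent)
        if action is not None:
            sent.add(action)
            log.append((days, action))
    return log


if __name__ == "__main__":
    for days, action in simulate(20):
        print(f"day {days:g}: {action}")
```

For a participant who never synchronizes over 20 days, `simulate(20)` produces one action each at days 5, 12, and 19. In this sketch, a new reminder session after a synchronization would begin with an empty `already_sent` set. That is an assumption about how sessions are delimited.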

Individuals participating in the remote monitoring of cognitive and psychosocial function completed online assessments of these domains. Both types of assessments were completed on the testmybrain.org platform,41,42 a web-based platform accessed via a link specific to this study and to each testing session. To measure psychosocial function, participants completed PROMIS43 short forms for anxiety, depression, general self-efficacy, daily self-efficacy, and social participation. These questionnaires were completed monthly, resulting in a maximum of four assessments of psychosocial function during the three-month timeframe used in this analysis. On the same platform, participants completed three cognitive tests developed by The Many Brains Project.41,42 These tests were completed at the beginning and end of the three-month period in this analysis. The three cognitive tests were (1) Connect the Dots,44 which measures processing speed and executive function; (2) Verbal Paired Associates Memory,41 which measures episodic memory; and (3) Choice Reaction Times,42,44 which measures processing speed and cognitive inhibition. These measures were selected based on prior research showing deficits in executive function, processing speed, and memory in individuals with COPD29,30,45 and stroke.31,46,47 When participants were due for their assessment, the same custom-built application described above sent an automatic message to the participant with a link to the website to complete the assessments. In months when participants were required to complete both cognitive and psychosocial assessments, a single link was used to access all assessments; thus, the feasibility of cognitive and psychosocial monitoring is examined together. Participants were asked to complete these assessments as soon as possible but were given up to seven days to do so.
Each day, the custom-made application extracted test results from the testmybrain.org API. During the seven-day window, up to two additional reminders were sent, one on the second day of the seven-day window and then again on the fourth day. As was the case with the reminders for monitoring physical function, all reminders—including the message to initiate the testing session—were sent either via a text message or email based on the participant's preference. These reminders were used to determine the needed resources to achieve the reported levels of adherence.
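The seven-day assessment window can be expressed as a simple message schedule. The sketch below assumes that the initial link is sent on day 1 of the window. It also assumes that a reminder is skipped once completed results have been extracted on an earlier day. The function name and the day-numbering convention are hypothetical.

```python
# Illustrative sketch of the assessment-session message schedule (hypothetical names).
from typing import List, Optional

WINDOW_DAYS = 7         # participants had up to seven days to complete a session
REMINDER_DAYS = (2, 4)  # additional reminders on the second and fourth days


def messages_sent(completed_day: Optional[int] = None) -> List[int]:
    """Return the window days on which a message (initial link or reminder) was sent.

    completed_day is the day (1-7) on which results first appeared in the daily
    extraction, or None if the session was never completed. Assumes a reminder
    due on day d is sent unless the session was completed on an earlier day.
    """
    if completed_day is not None and not 1 <= completed_day <= WINDOW_DAYS:
        raise ValueError("completed_day must fall within the seven-day window")
    days = [1]  # the initial message with the session link
    for day in REMINDER_DAYS:
        if completed_day is None or completed_day >= day:
            days.append(day)
    return days
```

With this schedule, a participant who never completes the session receives three messages (days 1, 2, and 4). A participant who completes the session on day 1 receives only the initial link.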

Completion rate

The first metric of feasibility was the completion rate, defined as the percentage of consented participants who completed a full three months of remote monitoring. This metric is important because withdrawal and loss to follow-up reflect feasibility. We calculated this metric separately for monitoring physical activity and for monitoring psychosocial and cognitive function. We examined the completion rate, and all other metrics of feasibility, for psychosocial and cognitive function together because these domains were assessed using the same platform. We report the percentage of participants who completed the full three months of each monitoring approach in the entire sample (i.e. COPD and stroke) and in each diagnosis group. A Fisher's exact test was used to compare this percentage between diagnoses. All analyses were completed in R (v 4.0.5).48
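To illustrate the completion-rate comparison, the stdlib-only Python sketch below computes a completion percentage and a two-sided Fisher's exact test for a 2 × 2 table. It is not the R code used in the study. The two-sided p-value sums the probabilities of all tables that are no more likely than the observed one, with a small relative tolerance. We believe this mirrors the convention used by R's `fisher.test`, but readers should treat that as an assumption. The example counts are derived from the completion percentages reported in the Results.

```python
# Illustrative sketch: completion rate and a two-sided Fisher's exact test (2 x 2).
import math


def completion_rate(completed: int, consented: int) -> float:
    """Percentage of consented participants who completed three months of monitoring."""
    return 100.0 * completed / consented


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell given fixed margins.
        return math.comb(row1, x) * math.comb(row2, col1 - x) / math.comb(n, col1)

    p_observed = prob(a)
    low, high = max(0, col1 - row2), min(row1, col1)
    # Sum every table that is no more probable than the observed one.
    p = sum(prob(x) for x in range(low, high + 1) if prob(x) <= p_observed * (1 + 1e-7))
    return min(1.0, p)


if __name__ == "__main__":
    # Counts derived from the reported percentages: COPD 26/35, stroke 33/38 (physical activity).
    print(f"overall: {completion_rate(26 + 33, 35 + 38):.1f}%")
    print(f"p = {fisher_exact_two_sided(26, 35 - 26, 33, 38 - 33):.2f}")
```

Running the script prints an overall completion rate of 80.8% (59/73), followed by the p-value for the comparison between diagnoses.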

Adherence

Next, we quantified participant adherence to monitoring physical activity and psychosocial and cognitive function. The primary metric of adherence to monitoring physical activity was daily wear time, defined as the number of minutes during which the device recorded a heart rate. The accelerometry R package was used for this calculation.49 Because the heart rate monitor can be unintentionally turned off, we manually checked the data to confirm that the heart rate monitor was on, ensuring the accuracy of our wear time metric. Participants whose heart rate monitor was turned off for longer than one week were excluded from the analysis. After labeling each minute as worn or not worn, we calculated the average percentage of the day the device was worn per participant during the first three months of enrollment. This primary metric was calculated over the entire day, during daytime hours (defined as 8:00 am to 9:59 pm), and during nighttime hours (defined as 10:00 pm to 7:59 am). We report this metric for the three timeframes in the entire sample and compare it between diagnoses using a Wilcoxon rank-sum test because the data were not normally distributed. As a secondary analysis, we explored the impact of wear location (i.e. impaired vs. unimpaired wrist) on adherence in participants with stroke to determine whether the arm on which the device was worn affected adherence.
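The wear-time definitions above reduce to counting minutes with a recorded heart rate within fixed clock windows. The sketch below is a Python illustration, not the accelerometry R package used in the study. It assumes that each calendar day is supplied as 1,440 minute-level heart rate values, with `None` marking minutes that have no reading. It also assumes that nighttime is pooled within the same calendar date. The sketch does not reproduce the manual check for a disabled heart rate monitor.

```python
# Illustrative sketch: percentage of the day, daytime, and nighttime a device was worn.
from typing import Dict, List, Optional, Sequence

MINUTES_PER_DAY = 24 * 60
DAY_START = 8 * 60      # 08:00, first daytime minute
NIGHT_START = 22 * 60   # 22:00, first nighttime minute (daytime ends at 21:59)


def wear_percentages(hr: Sequence[Optional[float]]) -> Dict[str, float]:
    """Wear percentages for one calendar day of minute-level heart rate data."""
    if len(hr) != MINUTES_PER_DAY:
        raise ValueError("expected 1,440 minute-level values")
    worn = [value is not None for value in hr]  # a minute counts as worn if HR was recorded
    day_minutes = NIGHT_START - DAY_START          # 840 min (14 h)
    night_minutes = MINUTES_PER_DAY - day_minutes  # 600 min (10 h)
    worn_day = sum(worn[DAY_START:NIGHT_START])
    worn_total = sum(worn)
    # Assumption: nighttime = 00:00-07:59 plus 22:00-23:59 of the same calendar date.
    worn_night = worn_total - worn_day
    return {
        "entire_day": 100.0 * worn_total / MINUTES_PER_DAY,
        "daytime": 100.0 * worn_day / day_minutes,
        "nighttime": 100.0 * worn_night / night_minutes,
    }


def participant_average(days: List[Sequence[Optional[float]]]) -> Dict[str, float]:
    """Average the daily wear percentages across all of a participant's days."""
    daily = [wear_percentages(day) for day in days]
    return {key: sum(d[key] for d in daily) / len(daily) for key in daily[0]}
```

Consider a day on which the device recorded heart rate only from 8:00 am to 9:59 pm. That day yields 100% daytime wear, 0% nighttime wear, and about 58.3% wear for the entire day. Multiplying an entire-day percentage by 24 converts it to hours, which is how 77.5% corresponds to roughly 18.6 h.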

We then examined daily wear time across the three-month period by calculating the average daily wear time for the entire day in each month. We examined the effect of time on daily wear time in the entire sample using a repeated-measures one-way ANOVA. We then performed a 3 × 2 mixed-effects ANOVA with main effects of month (i.e. months 1, 2, and 3) and group (i.e. COPD vs. stroke) to examine whether adherence over time differed between diagnoses. We performed the same analyses with daytime and nighttime wear minutes, but the results were unchanged and are, therefore, not presented (data available upon request).
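The one-way repeated-measures ANOVA on monthly wear time can be computed from sums of squares. The fractional degrees of freedom reported in the Results, such as F(1.72, 91.35), are consistent with a sphericity correction such as Greenhouse–Geisser. The stdlib-only Python sketch below assumes that correction, which the paper does not name. It covers only the one-way model, not the 3 × 2 mixed-effects ANOVA. A p-value would then come from an F distribution with the corrected degrees of freedom, for example via SciPy's `scipy.stats.f.sf`.

```python
# Illustrative sketch: one-way repeated-measures ANOVA with a Greenhouse-Geisser epsilon.
from typing import List, Tuple


def rm_anova_gg(data: List[List[float]]) -> Tuple[float, float, float, float]:
    """data[i][j] is subject i's wear time in month j. Returns (F, df1_gg, df2_gg, epsilon)."""
    n, k = len(data), len(data[0])
    grand = sum(map(sum, data)) / (n * k)
    col_means = [sum(row[j] for row in data) / n for j in range(k)]
    row_means = [sum(row) / k for row in data]

    # Partition the total sum of squares into month, subject, and residual components.
    ss_month = n * sum((m - grand) ** 2 for m in col_means)
    ss_subject = k * sum((m - grand) ** 2 for m in row_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_month - ss_subject
    df1, df2 = k - 1, (k - 1) * (n - 1)
    f_stat = (ss_month / df1) / (ss_error / df2)

    # Greenhouse-Geisser epsilon from the double-centered covariance matrix of the months.
    cov = [[sum((data[i][a] - col_means[a]) * (data[i][b] - col_means[b]) for i in range(n)) / (n - 1)
            for b in range(k)] for a in range(k)]
    cov_row_means = [sum(r) / k for r in cov]  # equal to column means because cov is symmetric
    cov_grand = sum(cov_row_means) / k
    centered = [[cov[a][b] - cov_row_means[a] - cov_row_means[b] + cov_grand for b in range(k)]
                for a in range(k)]
    trace = sum(centered[a][a] for a in range(k))
    trace_sq = sum(v * v for row in centered for v in row)  # trace(C @ C) for symmetric C
    epsilon = trace ** 2 / ((k - 1) * trace_sq)
    return f_stat, epsilon * df1, epsilon * df2, epsilon
```

With n = 54 participants and k = 3 months, the uncorrected degrees of freedom are 2 and 106. An epsilon of about 0.86 yields the reported 1.72 and 91.35.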

For the remote monitoring of psychosocial and cognitive function, participant adherence was measured via assessment completion rates. Because the cognitive and psychosocial monitoring were performed on the same platform, we examined adherence for these two functional domains together. We first examined the percentage of sessions completed in the entire sample and in each diagnosis group. We then examined the percentage of sessions completed by each participant. Fisher's exact tests were used to compare these metrics between diagnoses. Next, we examined the percentage of assessments completed at each time point (i.e. Sessions 1, 2, 3, and 4). To assess the change in adherence over time in the entire sample, we performed a Cochran's Q test,50 which allowed us to determine whether the proportion of assessments completed changed over time. Lastly, we compared the proportion of assessments completed over time between groups using a Cochran–Mantel–Haenszel test.51
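Cochran's Q compares the proportion of completed sessions across the four time points within the same participants. The stdlib-only Python sketch below implements the standard Q statistic for a participants × sessions matrix of 0/1 completion indicators. It evaluates the chi-squared p-value with k − 1 degrees of freedom using the closed-form upper tail for integer degrees of freedom. It is an illustration, not the study's R code, and the function names are hypothetical.

```python
# Illustrative sketch: Cochran's Q test for k related binary outcomes.
import math
from typing import List, Tuple


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-squared variable with integer df."""
    if x <= 0:
        return 1.0
    y = x / 2.0
    # Start from Q(1, y) = exp(-y) (even df) or Q(1/2, y) = erfc(sqrt(y)) (odd df),
    # then apply Q(s + 1, y) = Q(s, y) + y**s * exp(-y) / Gamma(s + 1).
    if df % 2 == 0:
        s, q = 1.0, math.exp(-y)
    else:
        s, q = 0.5, math.erfc(math.sqrt(y))
    while s < df / 2.0 - 1e-9:
        q += math.exp(s * math.log(y) - y - math.lgamma(s + 1.0))
        s += 1.0
    return q


def cochrans_q(rows: List[List[int]]) -> Tuple[float, int, float]:
    """rows[i][j] = 1 if participant i completed session j. Returns (Q, df, p)."""
    k = len(rows[0])
    col_totals = [sum(r[j] for r in rows) for j in range(k)]
    row_totals = [sum(r) for r in rows]
    n = sum(row_totals)
    denominator = k * n - sum(t * t for t in row_totals)
    if denominator == 0:
        raise ValueError("Q is undefined when every participant completed all or none")
    q = (k - 1) * (k * sum(c * c for c in col_totals) - n * n) / denominator
    return q, k - 1, chi2_sf(q, k - 1)
```

Q depends only on the row and column totals. The Results report 38, 34, 35, and 32 completions at sessions 1 to 4, and 21, 11, 8, 6, and 11 participants completing four, three, two, one, and zero sessions. These margins give Q = 225/83 ≈ 2.71 with 3 degrees of freedom, consistent with the reported χ2(3) = 2.7, p = 0.44.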

Resource utilization

The final metric of feasibility was resource utilization, which we calculated to reflect the practicality of implementing remote monitoring. Our primary metric of resource utilization for monitoring physical activity was the number of reminder sessions provided to participants to achieve the observed level of adherence. For this analysis, we examined the need for reminders to synchronize devices in a subset of the participants included in the adherence analysis (Figure 1): those who wore the device for three months while the reminder system was fully functional. This subset was smaller than the adherence cohort because of a delay in developing the Fitbit reminder system within the application. In this subset, we first examined the number of participants who needed a reminder session, defined as any instance that required a five-day reminder to be sent to a participant. We compared the proportion of participants requiring no reminder sessions, one reminder session, or more than one reminder session between diagnoses using Fisher's exact test. We then examined the number of reminder sessions initiated per person per month and compared this metric between groups using a Wilcoxon rank-sum test. Although each reminder session included a five-day reminder, whether a 12-day or 19-day reminder was needed during that session depended on the participant's response. Thus, we also examined the response to each reminder sent during the reminder session: specifically, the proportion of reminder sessions in which the participant synchronized their device after the five-day reminder, synchronized their device after the 12-day reminder, or required a phone call to synchronize their device after 19 days.
We compared the proportion of reminder sessions with each response between diagnoses using Fisher's exact test.
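The reminder-session summaries described above can be tabulated from a log of the reminders sent in each session. The sketch below uses a hypothetical data layout: each participant maps to a list of reminder sessions, and each session lists the reminders it triggered (`reminder_5`, `reminder_12`, `notify_team`). It classifies a session by its most escalated reminder. The between-diagnosis Fisher's exact and Wilcoxon rank-sum tests are left to standard statistical software.

```python
# Illustrative sketch: summarizing synchronization reminder sessions (hypothetical layout).
from collections import Counter
from typing import Dict, List, Tuple


def session_outcome(reminders_sent: List[str]) -> str:
    """Classify a reminder session by the most escalated reminder it required."""
    if "notify_team" in reminders_sent:
        return "phone call after 19 days"
    if "reminder_12" in reminders_sent:
        return "synced after 12-day reminder"
    if "reminder_5" in reminders_sent:
        return "synced after 5-day reminder"
    raise ValueError("every reminder session begins with a five-day reminder")


def summarize(sessions: Dict[str, List[List[str]]],
              months: int = 3) -> Tuple[Counter, Dict[str, float], Dict[str, float]]:
    """Return (participants with 0/1/>1 sessions, sessions per month, outcome shares)."""
    counts = {pid: len(s) for pid, s in sessions.items()}
    session_bins = Counter("0" if c == 0 else "1" if c == 1 else ">1" for c in counts.values())
    per_month = {pid: c / months for pid, c in counts.items()}
    outcomes = Counter(session_outcome(s) for person in sessions.values() for s in person)
    total = sum(outcomes.values())
    shares = {label: count / total for label, count in outcomes.items()} if total else {}
    return session_bins, per_month, shares
```

Participants who never needed a reminder appear with an empty session list. They count toward the "0" bin and contribute 0 sessions per month.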

CONSORT diagram for individuals included in each primary analysis. Shaded boxes indicate samples used in the analyses presented.

To examine the resources needed to obtain the reported levels of adherence for monitoring psychosocial and cognitive function, we examined participants' responses to session initiation. Specifically, we examined the percentage of assessment sessions for which participants (1) took the assessment immediately after the session was initiated, (2) required an additional reminder, or (3) did not take the assessment. We compared these percentages between diagnoses using Fisher's exact test. Lastly, we examined the number of reminders each participant needed per completed session. Only individuals who completed at least one assessment session were included in this analysis. This metric was compared between diagnoses using a Wilcoxon rank-sum test.
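The session-level responses and the per-participant reminder ratio can be derived from a simple record of each assessment session. The sketch below stores each session as a hypothetical `(completed, messages_sent)` pair, where `messages_sent` counts the initial link plus any reminders. The text does not specify whether the initial link counts as a reminder, so the `count_initial` flag makes that choice explicit.

```python
# Illustrative sketch: responses to assessment sessions and reminders per completed session.
from collections import Counter
from typing import List, Optional, Tuple

Session = Tuple[bool, int]  # (completed, messages_sent including the initial link)


def classify_session(completed: bool, messages_sent: int) -> str:
    """Label a session as taken immediately, taken after a reminder, or not taken."""
    if not completed:
        return "not taken"
    return "immediately" if messages_sent == 1 else "after reminder"


def reminders_per_completed_session(sessions: List[Session],
                                    count_initial: bool = True) -> Optional[float]:
    """Messages sent across all of a participant's sessions divided by sessions completed.

    Returns None for participants with no completed sessions, who were excluded.
    """
    completed = sum(1 for done, _ in sessions if done)
    if completed == 0:
        return None
    offset = 0 if count_initial else 1  # drop the initial link if it is not a reminder
    return sum(sent - offset for _, sent in sessions) / completed


if __name__ == "__main__":
    participant = [(True, 1), (True, 2), (False, 3), (True, 1)]
    print(Counter(classify_session(*s) for s in participant))
    print(reminders_per_completed_session(participant))
```

Counting messages across all sessions, including uncompleted ones, is one possible reading of "reminders for each completed session". Dividing only the reminders sent in completed sessions would be an equally plausible alternative.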

Exploration of overall adherence metrics and statistical analysis

To examine adherence to remote monitoring of function broadly, we examined the portion of participants who were adherent to the remote monitoring of (1) physical, cognitive, and psychosocial function, (2) only physical function, (3) only cognitive and psychosocial function, or (4) neither. For this analysis, we used all individuals who were included in the adherence analysis for physical function and cognitive and psychosocial function, resulting in a subset of individuals being included. We defined being adherent to monitoring physical function as individuals who wore their Fitbit device for at least 75% of the day during the three-month period. Similarly, we defined being adherent to the monitoring of cognitive and psychosocial function as individuals who completed at least 75% (three out of the four) of the assessments. Although these cutoffs are arbitrary, the information gathered from remote monitoring is dependent on the quality of the data; thus, we opted to establish strict cutoffs rather than use the median or 50% adherence for this exploratory analysis. Using these definitions of adherence, we classified individuals as being in one of four adherence categories: (1) adherent to all remote monitoring (≥ 75% wear time and ≥ 75% of assessments completed), (2) adherent to the monitoring of physical function only (≥ 75% wear time and < 75% of assessments completed), (3) adherent to the monitoring of cognitive and psychosocial function only (< 75% wear time and ≥ 75% of assessments completed), or (4) not adherent to remote monitoring (< 75% wear time and < 75% of assessments completed). Here we report the percentage of individuals in each category and compare the portion in each category between diagnoses using a Fisher's exact test. 
We then compared (1) age, (2) sex, (3) race (black, white, etc.), (4) level of education (high school education and below, some college education to college graduate, or more than college graduate), (5) living status (alone or with someone), (6) employment status (working, not working, or retired), and (7) method of communication (email or text) between adherence categories using Kruskal-Wallis H-test for continuous data and Fisher’s exact test for categorical data.
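The four adherence categories follow directly from the two 75% cutoffs. The short Python sketch below encodes them. The function name and labels are hypothetical. It assumes that wear time is expressed as the participant's average percentage of the day over the three months and that four assessment sessions were offered.

```python
# Illustrative sketch: assigning the four exploratory adherence categories.
CUTOFF = 75.0  # percent; applied to both wear time and assessment completion


def adherence_category(wear_pct: float, completed: int, offered: int = 4) -> str:
    """Classify a participant using the >= 75% cutoffs for both monitoring approaches."""
    physical = wear_pct >= CUTOFF
    cog_psych = 100.0 * completed / offered >= CUTOFF  # 3 of 4 sessions = 75%
    if physical and cog_psych:
        return "all remote monitoring"
    if physical:
        return "physical function only"
    if cog_psych:
        return "cognitive and psychosocial function only"
    return "neither"
```

Because both comparisons use ≥, a participant with exactly 75% wear time who completed three of the four sessions falls into the "all remote monitoring" category.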

Results

Participants

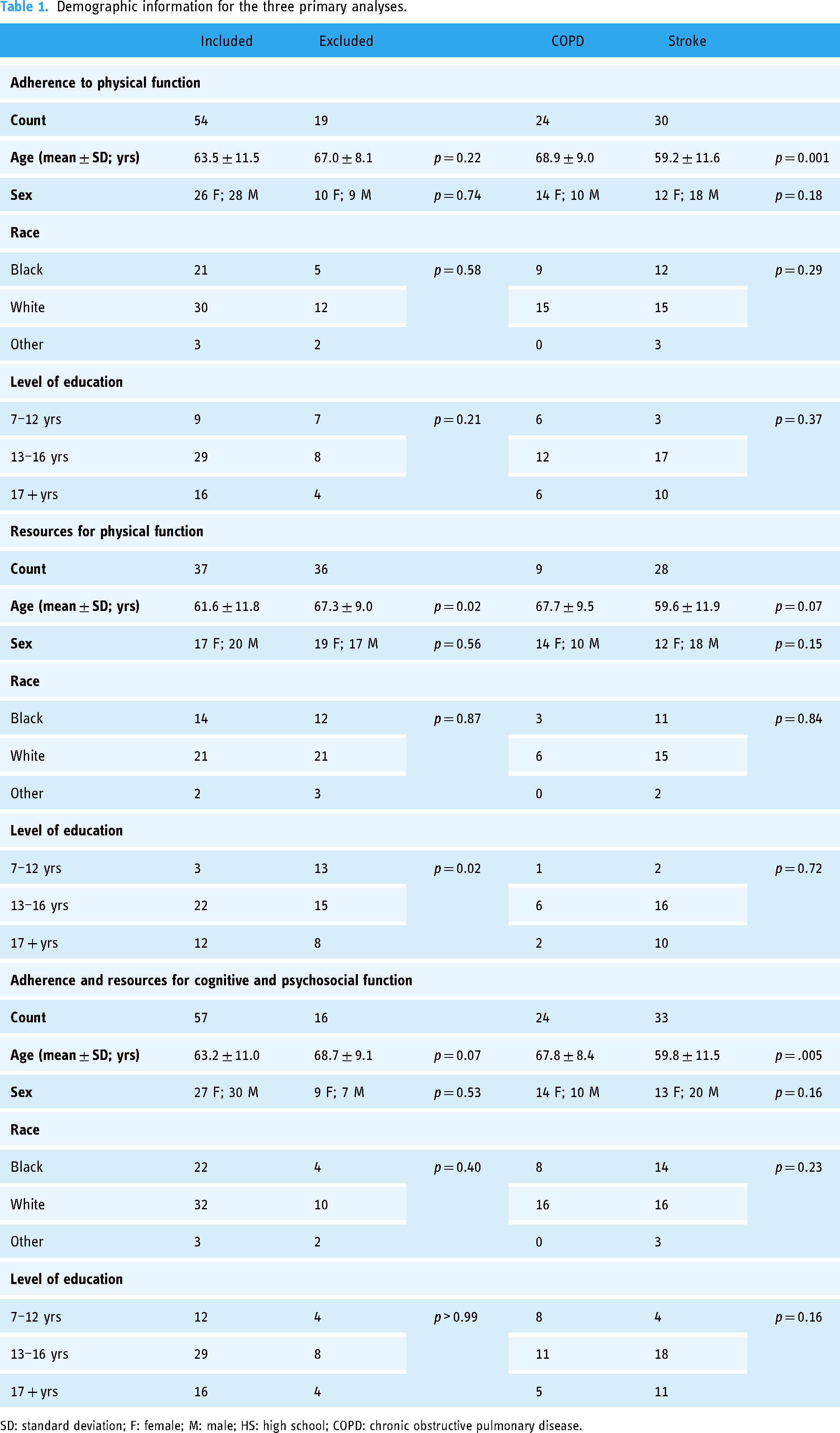

Seventy-three individuals (35 with a history of COPD and 38 with a history of stroke) consented. As shown in Figure 1, five individuals withdrew before starting the remote monitoring protocol. Three of these individuals requested to withdraw because they no longer had time to participate, and the other two were withdrawn because the research team was unable to contact them for two months. This resulted in 68 individuals initiating the remote monitoring protocol for physical activity and psychosocial/cognitive function. All participants who provided consent were included in the analysis of completion rate; however, some participants were excluded from the analyses of adherence and resource utilization (Figure 1) because of withdrawal, loss to follow-up, or technical issues. Reasons for withdrawal included lack of time, dissatisfaction with the Fitbit device, and not seeing any benefit from remote monitoring. We defined loss to follow-up as not receiving new data for two months and being unable to contact the participant. Demographic information for participants included in or excluded from each analysis is presented in Table 1. The two patient populations differed in age but were similar in all other characteristics; this age difference was expected given the differences in disease etiology. Clinical characteristics of individuals included in each analysis are shown in Table 2.

Demographic information for the three primary analyses.

SD: standard deviation; F: female; M: male; HS: high school; COPD: chronic obstructive pulmonary disease.

Clinical characteristics of individuals included in the three primary analyses.

SD: standard deviation; COPD: chronic obstructive pulmonary disease; FEV1/FVC: fixed ratio of forced expiratory volume in 1 s/forced vital capacity.

Completion rate

The first metric of feasibility was the percentage of participants who completed the full three months of remote monitoring. For this analysis, individuals who withdrew prior to beginning remote monitoring, who withdrew during the three-month period of interest, or who were lost to follow-up were defined as not completing the three-month monitoring protocol. In the entire sample, 80.8% (59/73) and 78.1% (57/73) completed three months of monitoring of physical activity and of psychosocial and cognitive function, respectively. There was no significant difference in completion rate between diagnoses for monitoring physical activity (COPD: 74.3%; stroke: 86.8%; p = 0.24) or psychosocial and cognitive function (COPD: 68.6%; stroke: 86.8%; p = 0.09).

Adherence

Fifty-four participants were included in the analysis of adherence to monitoring physical activity (Figure 1). Data from 4979 days (COPD = 2208 days; stroke = 2771 days) were included. The average daily wear time per person during the three-month protocol was high at 77.5 ± 19.9% of the day, which equates to participants wearing the device for an average of 18.6 h a day during the three-month period. The percentage of wear time was 80.1 ± 17.6% (an average of 11.2 h) during daytime hours and 73.9 ± 27.2% (7.4 h) during nighttime hours. As shown in Figure 2(a), the average wear time during the entire day, daytime, and nighttime did not differ significantly between groups (entire day: W = 291, p = 0.24; daytime: W = 327, p = 0.57; nighttime: W = 292, p = 0.24). As a sensitivity analysis, we repeated this analysis including the participants who withdrew or were lost to follow-up during the three-month protocol (n = 63). This analysis did not include the individuals who withdrew prior to setup or who had technical issues, as there were no data for these individuals. It showed a high, but slightly lower, average wear time during the entire day (74.0 ± 22.0%), daytime (76.5 ± 19.9%), and nighttime (70.5 ± 28.2%). There continued to be no difference in daily wear time between groups during the entire day (W = 387, p = 0.10), daytime (W = 430, p = 0.30), or nighttime (W = 388, p = 0.11). As a secondary analysis, we compared wear time in individuals with stroke based on wear location, because donning the Fitbit after a stroke may present unique challenges depending on upper-extremity function. There was no significant difference in daily wear time based on wear location during the entire day (W = 139, p = 0.15), daytime (W = 129, p = 0.30), or nighttime (W = 149, p = 0.06; Supplemental Figure S1).

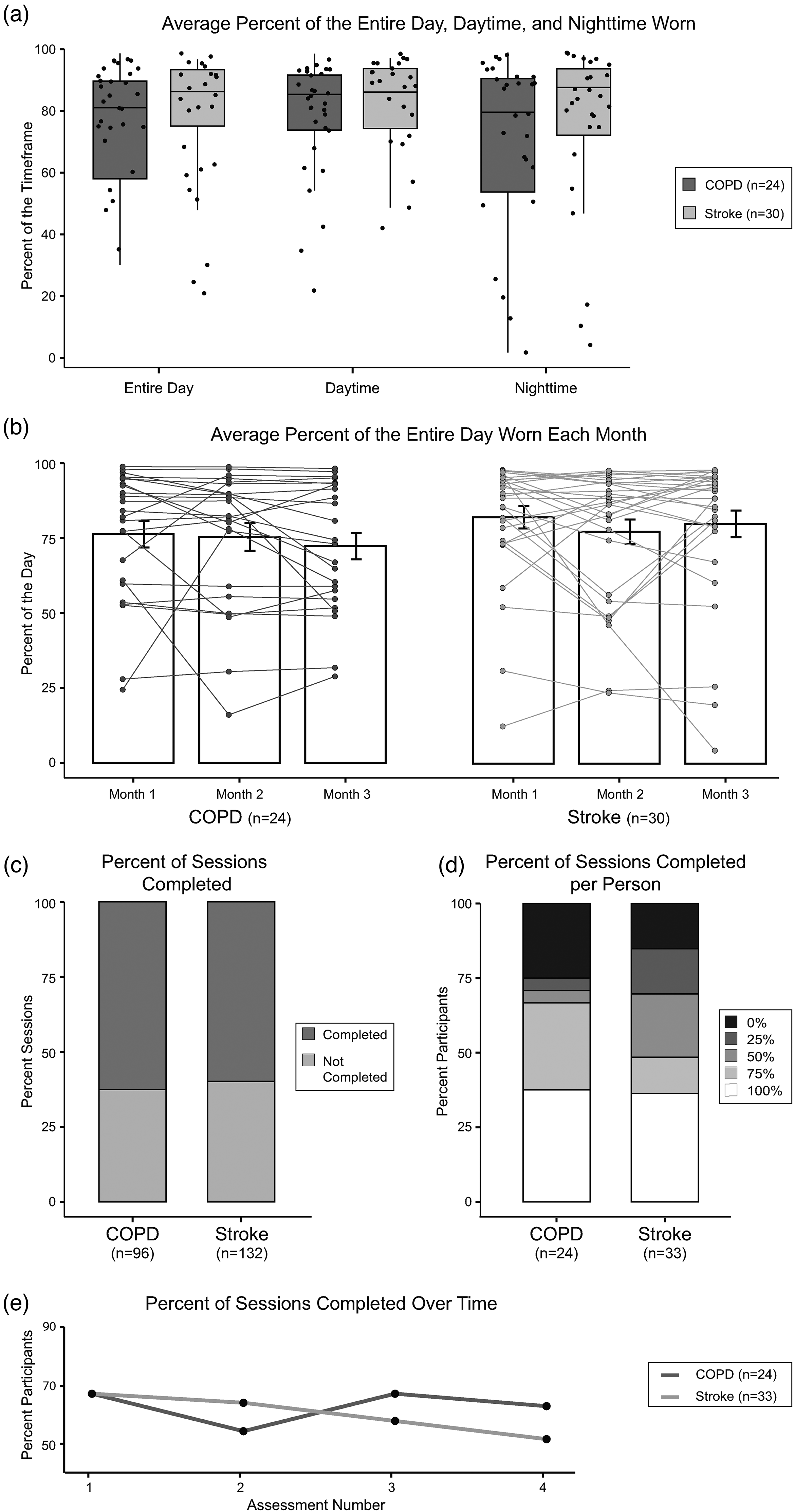

Adherence to remote monitoring of physical activity (a) and (b) and psychosocial and cognitive function (c) to (e). Adherence to monitoring physical activity was measured by the average percentage of the entire day, daytime (8:00 am–9:59 pm), and nighttime (10:00 pm–7:59 am) that the Fitbit device was worn during the three-month period (a) and the average percentage of the day the device was worn each month (b). No differences between diagnoses were observed in either adherence metric, and the percentage of the day that the device was worn did not change over time. Adherence to monitoring psychosocial and cognitive function was measured by the percentage of sessions completed (c), the percentage of sessions completed per participant (d), and the percentage of sessions completed at each time point over the three-month period (e). No differences between diagnoses were observed, and there was no effect of time on the completion of assessments. In (a) and (b), the dots represent individual data. In (b), the bars are the group mean and the error bars represent the standard error of the mean.

We further examined adherence to monitoring physical activity over the three-month period by comparing daily wear time during each month of the study. A repeated-measures one-way ANOVA showed no significant difference in daily wear time across the three months (F(1.72,91.35) = 1.19, p = 0.30). A 2 × 3 ANOVA showed no main effect of diagnosis (F(1,52) = 0.91, p = 0.34) or month (F(1.70,88.32) = 1.13, p = 0.32) and no significant interaction between diagnosis and month (F(1.70,88.32) = 0.79, p = 0.44; Figure 2(b)), suggesting high adherence to wearing the device over the three-month period across diagnoses. Notably, the level of adherence was consistent for most individuals over the three-month period, as shown by the individual data in Figure 2(b).
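The non-integer degrees of freedom in these tests are consistent with a sphericity correction such as Greenhouse–Geisser; the text does not name the correction, so this is an assumption. Under such a correction, both degrees of freedom of a within-subject effect are multiplied by the same estimate $\hat{\varepsilon}$, so their ratio depends only on the number of participants $N$ and the number of between-subject groups $g$:

$$
df_1 = \hat{\varepsilon}\,(k-1), \qquad df_2 = \hat{\varepsilon}\,(k-1)(N-g), \qquad \frac{df_2}{df_1} = N - g
$$

With $k = 3$ months and $N = 54$ participants, the one-way analysis ($g = 1$) gives $df_2/df_1 = 53$, and indeed $91.35/53 \approx 1.72$, implying $\hat{\varepsilon} \approx 0.86$. For the 2 × 3 analysis ($g = 2$ diagnoses), $df_2/df_1 = 52$, so a denominator of 88.32 implies a numerator of $88.32/52 \approx 1.70$ and $\hat{\varepsilon} \approx 0.85$.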

Fifty-seven individuals were included in the adherence analysis for remotely monitoring psychosocial and cognitive function (Figure 1). During the three-month period, 228 sessions were initiated (COPD = 96; stroke = 132). Of those sessions, 61.0% (139/228) were completed, with participants completing an average of 2.44 ± 1.5 sessions per person. As shown in Figure 2(c), the percentage of sessions completed was not different between groups (p = 0.78). When examining the number of assessment sessions completed by each participant, 36.8% of participants (21/57) completed all four assessment sessions, 19.3% (11/57) completed three of the four, 14.0% (8/57) completed two of the four, 10.5% (6/57) completed one of the four, and 19.3% (11/57) completed none. A Fisher's exact test showed no significant difference between groups in the distribution of sessions completed per person (p = 0.13; Figure 2(d)). The completion rate at each time point (i.e. sessions 1 through 4), regardless of diagnosis, was 66.7% (38/57), 59.6% (34/57), 61.4% (35/57), and 56.1% (32/57), respectively. Although there appears to be a slight decline, this change did not reach statistical significance on Cochran's Q test (χ2(3) = 2.7, p = 0.44), suggesting stable adherence over time. Additionally, there was no difference in completion rate over time between groups (M2 < 0.001, p > 0.99; Figure 2(e)), suggesting that adherence was stable over time regardless of diagnosis.
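Cochran's Q depends only on the row and column totals of the participant-by-session table of completed (1) and missed (0) sessions, so it can be recomputed from the counts reported above: completions at each of the four sessions and the distribution of sessions completed per person. The sketch below is illustrative, not the authors' analysis code; the function names are hypothetical, and the chi-square tail probability uses the standard closed forms for integer degrees of freedom to avoid a SciPy dependency.

```python
import math
from typing import Sequence, Tuple


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df = 2m: exp(-x/2) * sum_{i=0}^{m-1} (x/2)^i / i!
        return math.exp(-half) * sum(half**i / math.factorial(i) for i in range(df // 2))
    # Odd df = 2m + 1: erfc(sqrt(x/2)) + exp(-x/2) * sum_{i=1}^{m} (x/2)^(i - 1/2) / Gamma(i + 1/2)
    tail = sum(half ** (i - 0.5) / math.gamma(i + 0.5) for i in range(1, df // 2 + 1))
    return math.erfc(math.sqrt(half)) + math.exp(-half) * tail


def cochran_q(column_totals: Sequence[int], row_totals: Sequence[int]) -> Tuple[float, int, float]:
    """Cochran's Q from the margins of a participant-by-session binary table.

    column_totals: completions at each session (time point)
    row_totals: completions per participant
    Returns (Q, degrees of freedom, p-value).
    """
    k = len(column_totals)
    n = sum(column_totals)
    if n != sum(row_totals):
        raise ValueError("row and column totals must sum to the same value")
    numerator = (k - 1) * (k * sum(c * c for c in column_totals) - n * n)
    denominator = k * n - sum(r * r for r in row_totals)
    q = numerator / denominator
    df = k - 1
    return q, df, chi2_sf(q, df)


# Counts reported in the text.
completions_per_session = [38, 34, 35, 32]
completions_per_person = [4] * 21 + [3] * 11 + [2] * 8 + [1] * 6 + [0] * 11

q, df, p = cochran_q(completions_per_session, completions_per_person)
print(f"Q({df}) = {q:.2f}, p = {p:.2f}")  # Q(3) = 2.71, p = 0.44
```

The recomputed values agree with the reported χ2(3) = 2.7, p = 0.44, which is a quick consistency check on the counts.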

Resource utilization

The final metric of feasibility was a quantification of the resources required to achieve the reported adherence levels. Thirty-seven participants were included in the analysis of resource utilization for physical activity (Figure 1). Of note, the sample included in this analysis was significantly younger and had higher levels of education than those excluded (Table 1). First, we assessed the percentage of participants who required reminder sessions during the three-month period; a reminder session was initiated after five days without synchronizing the device. When looking at both diagnoses together, 35.1% (13/37 participants) required no reminder sessions, 21.6% (8/37) required one reminder session, and 43.2% (16/37) required more than one reminder session, indicating that most individuals (64.9%, 24/37) required at least one reminder session during the three-month period. A Fisher's exact test showed no significant difference between diagnoses in the proportion of participants requiring reminders (p > 0.99; Figure 3(a)). Next, we quantified how many reminder sessions were needed per person per month. Across the entire sample, 0.68 ± 0.81 reminder sessions were required per person per month. A Wilcoxon rank-sum test with continuity correction showed no significant difference between diagnoses in the number of reminders per person per month (W = 113.5, p = 0.66; Figure 3(b)), suggesting that, regardless of diagnosis, study teams needed to contact each individual wearing a device an average of 0.68 times a month to obtain high adherence. Lastly, we examined the response to each reminder session that was initiated. In the entire sample, 76.6% (59/77) of reminder sessions resulted in the participant synchronizing their device after the first reminder, while 7.8% (6/77) resulted in synchronization after a second reminder and 15.6% (12/77) required a phone call. This suggests that participants were responsive to email and text message reminders to synchronize their device. A Fisher's exact test showed no significant difference in the response to reminder sessions between diagnoses (p = 0.75; Figure 3(c)).

Resources utilized for remotely monitoring physical activity (a) to (c) and cognitive and psychosocial function (d) and (e). The resources required for monitoring physical activity were measured by the percentage of participants requiring zero, one, or more than one reminder session (a), the number of reminder sessions per participant per month (b), and the response to the reminder session (c). No differences were observed between diagnoses in these metrics of resource requirements. The resources required for monitoring psychosocial and cognitive function were measured by the response to the initiation of a session (d) and the number of reminders needed per participant for each completed assessment (e). No differences were observed between diagnoses in these metrics of resource requirements. In (b) and (e), the dots represent individual data.

Fifty-seven participants were included in the analysis of resource utilization for psychosocial and cognitive function (Figure 1). First, we examined the response to each initiated session. Of the 228 sessions initiated, 18.9% (43/228) were completed immediately upon initiation without a reminder, 42.1% (96/228) were completed after additional reminders, and 39.0% (89/228) were not completed. A Fisher's exact test showed that the response to each initiated session was not different between diagnoses (p = 0.91; Figure 3(d)). Lastly, we examined how many reminders were needed per participant for each completed session. Individuals who did not complete any assessment session (n = 11) were excluded from this analysis, leaving 46 individuals. For these 46 participants, 1.13 ± 0.57 reminders were needed per person for each completed session (total completed sessions = 139). As shown in Figure 3(e), a Wilcoxon rank-sum test showed no difference in this metric between diagnoses (W = 221, p = 0.49), suggesting that reminders to take assessments were needed regardless of diagnosis.
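The per-participant metric can be sketched as a ratio of reminders to completed sessions, with non-completers dropped before summarizing. This is a minimal sketch under stated assumptions: the record format and function name are hypothetical, the example data are invented for illustration (not study data), and it assumes the mean ± SD is taken over participants' individual ratios.

```python
from statistics import mean, stdev
from typing import Dict, Tuple


def reminders_per_completed_session(records: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Per-participant ratio of reminders received to assessment sessions completed.

    records maps a participant ID to (reminders_sent, sessions_completed).
    Participants with no completed session are excluded, as described in the text.
    """
    return {
        pid: reminders / completed
        for pid, (reminders, completed) in records.items()
        if completed > 0
    }


# Hypothetical example data (not from the study).
records = {"P01": (4, 4), "P02": (3, 2), "P03": (2, 0), "P04": (0, 1)}
ratios = reminders_per_completed_session(records)
print(ratios)  # {'P01': 1.0, 'P02': 1.5, 'P04': 0.0}

values = list(ratios.values())
print(f"{mean(values):.2f} ± {stdev(values):.2f}")
```

Dropping participants with zero completions avoids dividing by zero, which is why the analysis above is restricted to the 46 participants who completed at least one session.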

Overall adherence

In this exploratory analysis, we included individuals who completed three months of both physical and cognitive/psychosocial remote monitoring, resulting in 52 participants (COPD = 22, stroke = 33). In this sample, 44.2% of participants (23/52) were adherent to all remote monitoring, 23.1% (12/52) were adherent only to monitoring of physical function, 17.3% (9/52) were adherent only to monitoring of cognitive and psychosocial function, and 15.4% (8/52) were not adherent to either remote monitoring approach. The proportion of individuals in each adherence category was not significantly different between diagnoses (p = 0.23; Figure 4(a)). Additionally, there were no significant differences in age (p = 0.93), sex (p = 0.73), race (p = 0.21), level of education (p = 0.78), living status (p = 0.15), employment status (p = 0.63), or method of communication (p = 0.74) between adherence categories (Table 3).

Overall adherence to monitoring physical function and cognitive and psychosocial function. Using 75% wear time and 75% assessments completed as a threshold, individuals were categorized as being adherent to the monitoring of both physical function and cognitive/psychosocial function (dark grey), of only physical function (mid grey), of only cognitive/psychosocial function (light grey), or to no monitoring (white). There was no difference in the percent of participants in each category by diagnosis.
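The categorization described in the Figure 4 caption can be sketched as two threshold checks. With four monthly assessment sessions, a 75% cutoff corresponds exactly to completing three of four sessions, so whether the boundary is inclusive matters; this sketch assumes values equal to the threshold count as adherent (≥). The function name and example participants are hypothetical, and wear time is taken over the entire 24 h period, as stated in the table footnote.

```python
from collections import Counter

THRESHOLD = 75.0  # percent wear time and percent of assessments completed (Figure 4 caption)


def adherence_category(wear_pct: float, assessments_pct: float, threshold: float = THRESHOLD) -> str:
    """Assign one of the four overall-adherence categories.

    Values equal to the threshold are counted as adherent (an assumption).
    """
    physical = wear_pct >= threshold
    cognitive_psychosocial = assessments_pct >= threshold
    if physical and cognitive_psychosocial:
        return "both"
    if physical:
        return "physical only"
    if cognitive_psychosocial:
        return "cognitive/psychosocial only"
    return "neither"


# Hypothetical participants: (24-h wear time %, % of the four monthly assessments completed)
participants = [(92.0, 100.0), (81.5, 50.0), (60.2, 75.0), (40.0, 25.0), (77.0, 75.0)]
counts = Counter(adherence_category(w, a) for w, a in participants)
print(counts)
```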

Characteristics of each adherence category.

SD: standard deviation; F: female; M: male; HS: high school. aWear time during the entire 24 h period was used to categorize individuals; however, daytime and nighttime wear time is reported as well. bNo statistical comparisons were completed for the percentage of the day the Fitbit was worn or the percentage of assessments completed as these metrics were used to create the adherence categories.

Discussion

Our results illustrate that remote monitoring of physical, cognitive, and psychosocial function over a three-month period is feasible in individuals with COPD or stroke. This work contributes to our understanding of adherence to remote monitoring and of the resources needed to implement remote monitoring of function. Notably, patient diagnosis did not appear to affect adherence to monitoring or the resources needed to monitor these functional domains, suggesting that remote monitoring may be feasible in a variety of patient populations with different impairments.

Completion rate

Overall, 19.1% and 21.9% of participants either withdrew or were lost to follow-up during the monitoring of physical activity and of cognitive/psychosocial function, respectively. Although this withdrawal rate is high, it is similar to or lower than previously reported withdrawal rates in work that remotely monitored function for periods longer than 8 weeks.17,20,52,53 While this suggests that not all individuals will accept remote monitoring, we did not observe significant differences between individuals who were included in and those excluded from our adherence analyses, and we were therefore unable to characterize individuals who may not complete a long-term remote monitoring protocol. Future work with larger sample sizes may provide additional insight into characteristics related to withdrawal from remote monitoring.

Adherence to monitoring physical function

Past work has shown that individuals perceive wearable devices, such as Fitbit, as an easy and useful way to monitor physical function.54–58 However, the value of remote monitoring of physical function with these devices in clinical care depends on patient adherence to wearing them. We found high daily wear time (> 77% of the day, on average) in individuals with COPD and stroke, indicating high adherence to wearing the device. This is consistent with past work that has found high adherence to Fitbits and other devices monitoring physical activity in individuals with multiple sclerosis,17,21 cancer,18 joint replacement surgery,20 and stroke.22 However, those studies determined adherence by categorizing each day as a valid or invalid day based on a step count threshold. This does not accurately reflect how long a device was worn, since an individual could exceed the established threshold while wearing the device for drastically different amounts of time. Furthermore, establishing an appropriate threshold is challenging in patient populations where activity levels may be much lower than those observed in healthy individuals.
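The limitation of a step-count rule can be illustrated with two hypothetical days. Both pass a valid-day threshold, yet one reflects roughly 6 h of wear and the other roughly 20 h. The 1000-step threshold, the field names, and the step and wear-time values are all invented for illustration; the cited studies each used their own thresholds.

```python
# Illustrative valid-day rule; the threshold value is hypothetical (studies use different values).
STEP_THRESHOLD = 1000


def is_valid_day(steps: int, threshold: int = STEP_THRESHOLD) -> bool:
    """Classify a day as valid when its step count meets the threshold."""
    return steps >= threshold


def wear_hours(minutes_with_heart_rate: int) -> float:
    """Hours of wear time when each minute with a heart-rate value counts as worn."""
    return minutes_with_heart_rate / 60.0


# Two hypothetical days with similar step counts but very different wear time.
days = {
    "day A": {"steps": 3200, "minutes_with_heart_rate": 6 * 60},
    "day B": {"steps": 3100, "minutes_with_heart_rate": 20 * 60},
}
for name, d in days.items():
    print(name, is_valid_day(d["steps"]), wear_hours(d["minutes_with_heart_rate"]))
# day A True 6.0
# day B True 20.0
```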

The amount of wear time and the method for determining it are not trivial, as they directly affect our ability to draw conclusions from data obtained with wearable devices.59,60 In this work, we used heart rate, a physiologic signal, to define wear time more accurately. This approach has been used in older adults61–63 and appears to be a more accurate method for identifying wear time,64 improving the identification of wear time by 14%.62 Thus, this work advances our understanding of adherence to wearable devices by quantifying wear time more accurately. Importantly, we suggest that the average daily wear time in our sample (>18 h/day) is likely high enough to generate quality data for use in clinical care. This level of adherence across the two patient diagnoses that we studied indicates that remote monitoring of physical function may generalize to a wide range of patient groups.

We also found that wear time remained stable over the course of the three-month period. Unfortunately, few studies report adherence over time, and the existing evidence is mixed. For example, past work in individuals with knee arthroscopy20 and cancer18 showed stable adherence to wearing activity monitors over 8- and 12-week periods, respectively; however, other work in individuals with multiple sclerosis17 and joint replacement65 found that adherence declined over a three-month period. There are several potential explanations for these differing results. First, previous work examining adherence to wearing activity trackers over time has not used a physiological signal, such as heart rate, to quantify wear time. Furthermore, each of the previous studies used a different step count as the threshold to define a valid day. Thus, it is difficult to compare adherence rates given the limitations of the methods used to define adherence. Additionally, it is possible that the patient populations included in the current work were particularly aware of the negative consequences of low physical activity and exhibited high levels of adherence because they understood the value of the Fitbit device to their health. Interviews exploring patients' perceptions of the utility of these devices and the factors motivating them to wear the device would be useful in further exploring this possibility. Lastly, we initiated reminders to individuals if we were not receiving data from the wearable device. While Block et al.17 described a similar approach to maximizing adherence, other studies have not reported steps taken to maximize adherence. Thus, it is possible that our approach of reminding individuals to synchronize their devices contributed to stable adherence over time (see Resources Required section below).

Adherence to monitoring cognitive and psychosocial function

Our results show good adherence to remote monitoring of cognitive and psychosocial function through online assessments during a three-month time period. Although previously reported adherence rates to online assessments vary, the rate we observed is consistent with the previous literature examining remote monitoring for more than three months.23,25,53,66 Direct comparisons between adherence rates are difficult, however, due to heterogeneity in the duration of monitoring (8 days – 1 year),25,53 the frequency of assessment (3 times a day – once a month),25,67 and the patient population included (healthy controls or patient groups).23,53 From this heterogeneity, it appears that shorter study periods demonstrate higher rates of adherence, yet our work suggests that the adherence rate over a three-month period is high enough to provide valuable insights into an individual's functional status.

Additionally, past work has found that adherence to remotely administered cognitive and psychosocial measures typically declines over time.53,67,68 Contrary to this, we observed stable adherence during the three months evaluated. While we cannot determine the cause of this discrepancy, it may be due to increased motivation among the patient populations sampled. This notion is supported by the work of Beukenhorst et al. (2022), which suggested that patient populations may have higher motivation to engage in testing and monitoring of functioning. Lastly, while we did not exclude individuals with cognitive deficits, we also did not measure cognitive function at the time of enrollment. Yet, based on the prevalence of cognitive deficits in these patient populations, it is likely that a portion of our sample had cognitive deficits, suggesting that remote monitoring of cognitive function may be feasible in patient groups where cognitive deficits are common; however, further work is needed to specifically examine the role of cognitive ability on the feasibility of this type of remote monitoring.

Overall adherence

Because of the complex relationships between domains of function, it is important to measure across these functional domains simultaneously. While past studies have examined the feasibility of remote monitoring within a single domain, participants in the current work took part in monitoring of physical function and of cognitive and psychosocial function concurrently. Forty-four percent of participants were adherent to remote monitoring across functional domains, while an additional 40.4% were adherent to remote monitoring of either physical function or cognitive and psychosocial function. We were unable to identify specific characteristics of individuals who were adherent to all, some, or none of the remote monitoring approaches. The small sample sizes (< 10) of the groups that were adherent to no remote monitoring or only to the monitoring of cognitive and psychosocial function likely limit our ability to identify distinguishing characteristics. Additionally, the thresholds that we used to define these groups were arbitrary, since there is no clear definition of what constitutes "good" adherence, and they may not be the optimal thresholds for identifying characteristics related to adherence. Finally, we may not have been able to identify characteristics related to adherence due to the limited granularity of the metrics examined. For example, we used living status as a proxy for social support; however, this alone does not reflect an individual's support system. Further work that improves our ability to identify which individuals will be adherent to various remote monitoring approaches is needed.

Lastly, when examining adherence across these domains, the type of monitoring is important to consider. Remote monitoring can be broadly classified as either a passive or an active approach.69 Active approaches place some burden on the participant. In this work, the monitoring of cognitive and psychosocial function is an active approach, as it required participants to complete assessments. Passive monitoring, on the other hand, collects data with no burden placed on the individual. The monitoring of physical function via wearable devices is more akin to passive monitoring, since the device collects data as long as it is worn. Thus, some individuals may be less adherent to active approaches than to passive approaches because of factors that we did not measure in this work (e.g. apathy, motivation). Interestingly, we observed similar adherence rates to the passive monitoring of physical function and the active monitoring of cognition and psychosocial function. While these results are encouraging, especially regarding the potential of active monitoring, the frequency of these assessment approaches is drastically different (i.e. monthly for the active approach and minute-level for the passive approach). There may be a trade-off between the frequency of active assessment and adherence, which was not explored in this work, and further research exploring this trade-off would be beneficial.

Resources required

For remote monitoring of physical function and cognitive/psychosocial function, we used a custom-built application to maximize adherence and to provide insight into the resources required to obtain the levels of adherence reported in this work. This application automatically extracted data and completed a series of logic checks to assist in maintaining adherence. Specifically, if Fitbit data had not been collected within the previous five days, a reminder session was initiated. Similarly, if an individual had not completed their scheduled online cognitive and psychosocial assessments, a separate reminder was sent. Although we do not know what adherence would have been without these systems, this analysis provides insight into the resources needed to achieve the reported adherence levels. In this work, a majority of individuals (64.9%; 24/37) needed at least one reminder session to synchronize their Fitbit during the three-month period. Similarly, of the cognitive and psychosocial assessments that were completed, a majority (69.1%, 96/139) required at least one reminder. Here we developed an application to send these reminders automatically; however, if such an application were not available, this quantity of reminders would require a substantial amount of staff time. This demand on resources has been described as a potential barrier to implementing wearable devices in clinical care,2 and our results support that concern. This highlights the need for scalable applications, like the one used here, to reduce the human resources needed to ensure adherence to remote monitoring approaches.
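The synchronization check described above can be sketched as a simple date comparison plus an escalation sequence. This is a minimal sketch, not the application's actual logic: the five-day gap comes from the text, the escalation order (first reminder, second reminder, phone call) is inferred from the reported responses to reminder sessions, and the function names, the treatment of a gap of exactly five days, and the absence of timing between steps are assumptions.

```python
from datetime import datetime, timedelta
from typing import Optional

# Five days without synchronized data triggers a reminder session (from the text).
SYNC_GAP = timedelta(days=5)

# Escalation steps inferred from the reported responses; spacing between steps is not specified.
ESCALATION = (
    "first automated reminder (email/text)",
    "second automated reminder",
    "phone call",
)


def needs_sync_reminder(last_sync: Optional[datetime], now: datetime, gap: timedelta = SYNC_GAP) -> bool:
    """True when no Fitbit data have been received within the allowed gap.

    A gap of exactly five days is treated as overdue (an assumption).
    """
    return last_sync is None or now - last_sync >= gap


def next_escalation_step(steps_taken: int) -> Optional[str]:
    """Return the next contact in the escalation sequence, or None when exhausted."""
    return ESCALATION[steps_taken] if steps_taken < len(ESCALATION) else None


now = datetime(2023, 3, 10, 9, 0)
if needs_sync_reminder(now - timedelta(days=6), now):
    print(next_escalation_step(0))  # first automated reminder (email/text)
```

Keeping the check stateless (only the last synchronization time and the current time) makes it easy to run as a scheduled job over all enrolled participants.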

One potential concern with an automated system is that individuals may not be as responsive to automated messages as they would be to direct contact with a person (i.e. a phone call); however, our results suggest that participants responded well to the automated messages sent via email or text. This is evident from the large percentage of reminder sessions after which participants synchronized their device immediately (76.6%) and of assessments that were completed after additional reminders (42.1%). Although our results indicate that an automated system could reduce the resources needed to achieve high adherence, it is unclear how frequently these reminders should be sent. For example, despite the reminders, 39.0% of the online assessments were not completed. It is possible that additional reminders for each online assessment would have increased our adherence rate. Whether or not an application is used, however, the resources needed to obtain high levels of adherence are essential to consider when implementing remote monitoring approaches in clinical care.

Limitations

Although this work adds to our understanding of the feasibility of remotely monitoring function, it is important to note some limitations. First, we elected to use a Fitbit to monitor physical function (i.e. a passive approach) and online assessments to monitor cognitive and psychosocial function (i.e. an active approach). Thus, our adherence results may not generalize to other monitoring approaches. Passive approaches to monitoring cognition, such as patterns of computer usage,70,71 may generate higher adherence rates due to the reduced burden placed on the participant. Second, although the withdrawal rate in the current work is similar to that of previous work, the withdrawals do bear on the feasibility of remote monitoring approaches. Here we have described those who withdrew in detail, but additional work, including qualitative interviews, could further our understanding of the feasibility of these approaches, particularly with respect to withdrawals. Lastly, we did not assess the impact of the timing, frequency, or method (i.e. automated message vs. manual phone call) of reminders on adherence. Additional work to understand the role of these factors would be beneficial.

Conclusions

Our results suggest that simultaneous remote monitoring of physical, cognitive, and psychosocial function is feasible in two distinct patient populations. We demonstrate feasibility through high adherence to wearing a wearable device and completing online assessments, both of which were stable over a three-month period. However, reminders to synchronize the device and complete the assessments were required to obtain this level of adherence. This suggests that high levels of adherence are achievable when using automated reminders like the ones used here. Lastly, adherence does not seem to be related to various demographic characteristics or to diagnosis. Together, our results suggest that remote monitoring across domains of function may be feasible in a variety of patient populations with different impairments.

Supplemental Material

sj-docx-1-dhj-10.1177_20552076231176160 - Supplemental material for The feasibility of remotely monitoring physical, cognitive, and psychosocial function in individuals with stroke or chronic obstructive pulmonary disease

Supplemental material, sj-docx-1-dhj-10.1177_20552076231176160 for The feasibility of remotely monitoring physical, cognitive, and psychosocial function in individuals with stroke or chronic obstructive pulmonary disease by Margaret A French, Eva Keatley, Junyao Li, Aparna Balasubramanian, Nadia N Hansel, Robert Wise, Peter Searson, Anil Singh, Preeti Raghavan, Stephen Wegener, Ryan T Roemmich and Pablo Celnik in DIGITAL HEALTH

Footnotes

Acknowledgements

We would like to thank the Johns Hopkins Technology Innovation Center, specifically Sharon Penttinen, Kirby Smith, and Chris Doyle, for their role in developing the application to collect the data used in this work.

Contributorship

MF, RR, PS, NH, and PC conceived the study and participated in protocol development. MF, PR, BW, and NH were involved in gaining approval from the Institutional Review Board. MF, JL, AB, BW, and NH were involved in participant recruitment. MF, EK, and RR performed data analysis and data interpretation. All authors contributed to the interpretation of the data. MF wrote the first draft of the manuscript. All authors reviewed and edited the manuscript and approved the final version of the manuscript.

Ethical approval

The Institutional Review Board of Johns Hopkins University approved this study (IRB00247292 and IRB00236214).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Sheikh Khalifa Stroke Institute; the Johns Hopkins’ inHealth Precision Medicine Initiative; and the National Institutes of Health [Grants 1F32HD108835-01 and 1K23HL153778-01].

Guarantor

PC
