Abstract

Nonprescription drug labels are relatively ineffective in refuting drug misconceptions. We sought to improve the effectiveness of an aspirin label as a refutation text by manipulating selective attention and label-processing strategy. After reading a facsimile label, those of 196 undergraduates who attempted to explain why shaded drug facts are “easily confused” recalled more refuting drug facts than participants who attempted to explain why those facts are “easily ignored.” However, “easily confused” processing did not change truth ratings of misconceptions associated with those drug facts. We conclude that refuted misconceptions remain in memory but are inhibited by disconfirming drug facts.

Introduction

Over-the-counter (OTC) drugs can be harmful or even fatal if consumers do not pay close attention to the information provided on the OTC label about uses, contra-indications, interactions with other drugs, potential side effects, and appropriate dosage levels and intervals. “Therapeutic errors” in self-medicating with both nonprescription and prescription drugs have been shown to account for as many as 10 percent of the 2 million unintentional “drug exposure calls” to poison control centers in the United States (Bronstein et al., 2009). OTC drugs that pose health threats if not taken as directed by the drug label include analgesic, laxative, antihistamine, cough suppressant, and heartburn medications. Given the potential risks of self-medication with nonprescription drugs, OTC drug labels are obligated to provide a consumer with the information needed to use that drug safely and effectively (Soller, 1998; Sutherland, 2010). Toward that end, the Food and Drug Administration (FDA, 1999) has standardized the organization, format, and reading level of label information as the now-familiar Drug Facts Label (DFL). In addition, the FDA mandates comprehension studies for all nonprescription drug labels (FDA, 2010; Morris et al., 1998). Nonetheless, consumers often ignore or misunderstand label information that would enable them to use a drug safely and effectively (Bennin and Rother, 2014; Lokker et al., 2009; Wolf et al., 2007). These reading failures may arise from deficiencies in the quality of the label text (Bailey et al., 2015; Schwartz et al., 2007; Shrank et al., 2007; Smith et al., 2011) or from deficiencies in the quality of a consumer’s comprehension skills (Davis et al., 2006; Morris and Aikin, 2001; Pawaskar and Sansgiry, 2006).

Prior knowledge effects may also underlie some of the problems that consumers have in making sense of simple label directives. As an illustration, consider Davis et al.’s (2006) report of a study participant who responded to the warning label directive “DO NOT CRUSH OR CHEW—SWALLOW WHOLE” by explaining that one should “chew it up so it will dissolve, don’t swallow whole or you might choke.” Conceivably, this misunderstanding might reflect inattention, low health literacy, or a poorly worded directive. However, this misunderstanding might also reflect the heightened concern of a mother of three young children about the potential risk of a choking hazard. Mindful of that concern, she might well infer that she could reduce the risk of choking by thoroughly crushing and chewing the tablet. In re-interpreting the label directive, she has reconciled the discrepancy between her prior knowledge and the authoritative text of the label in favor of her prior knowledge. Under other circumstances, she might have reconciled the discrepancy in favor of the authoritative label directive. This example suggests that substantial improvements in the comprehension of OTC drug labels may require the modification of naïve preconceptions consumers have about the safe and effective use of nonprescription drugs.

There is a small body of research that documents knowledge-based reading failures (Bostrom et al., 1994; Catlin et al., 2015; Ellen et al., 1998; Jungermann et al., 1988; Lokker et al., 2009; Ryan, 2011; Shone et al., 2011). The clearest demonstration is that by Ryan (2011) and Ryan and Costello-White (2015). Their methodology involves a pretest–posttest assessment of truth ratings assigned to a set of 30 claims about the facts to be found on a facsimile aspirin label. Of these 30 claims, 15 could be readily confirmed by reading the aspirin label; the remaining 15 could be readily refuted by reading the label. A careful reading of the facsimile label produced a substantial increase in the judged truth of label-supported claims (LSCs) on the posttest. That reading also produced a significant decrease in the judged truth of label-refuted claims (LRCs) on the posttest. In both studies, however, the increase in truth values for LSCs was much greater than the decrease in truth values for LRCs. These results demonstrate that individuals are better at using label information to confirm valid claims about aspirin drug facts than at using label information to correct invalid claims about aspirin drug facts. The disturbing implication is that reading an OTC label very carefully may be effective in confirming valid naïve beliefs about a drug but relatively ineffective in correcting invalid naïve beliefs.

Correcting misconceptions that impair text comprehension can be challenging enough to require specialized texts that are capable of producing conceptual change (see Duit and Treagus, 2015; Vosniadou, 2013, for comprehensive reviews in different scientific domains). Science educators have long recognized that students often have naïve scientific misconceptions that are not easily corrected (see McCloskey, 1983; Winer et al., 2002). For example, even college graduates may hold strongly to the mistaken belief that it is warmer in the summer than in the winter because the sun is closer to the earth (Bailey and Slater, 2004). Science texts that are designed specifically to dispel common scientific misconceptions are generally referred to as refutational texts. In her review of the literature, Tippett (2010: 953) notes that refutation texts are most effective in correcting a misconception when they (a) clearly articulate the misconception, (b) label it unambiguously as incorrect, and (c) correct the misconception with an explanation of the relevant scientific principle. More recently, investigations of the co-activation hypothesis (Kendeou et al., 2014; Danielson et al., 2016; McCrudden and Kendeou, 2014; Van Den Broek and Kendeou, 2008) have sought to identify the specific cognitive processes necessary to produce texts that promote the conceptual change necessary to modify naïve misconceptions. The co-activation hypothesis assumes that a necessary condition for conceptual change is that a given misconception and the corrective information offered by a text be simultaneously active in working memory. In addition, however, the text must prompt a reader to revise or replace the invalid memory-based conception with the valid text-based conception. The question we investigate in this study is whether it is possible to design a refutational DFL that is effective in correcting drug misconceptions and in improving the recall of the corrective information on the label.

Design constraints in creating refutational OTC drug labels

Unfortunately, the content and format of a nonprescription drug facts label is so highly constrained (FDA, 1999) that it is difficult to devise a refutational DFL using either the science education formula (Tippett, 2010) or the co-activation formula (Kendeou et al., 2014). However, there are two DFL features that can be manipulated: the use of a conspicuous general injunction to the consumer and the shading of critical label phrases. For example, the general injunction “See new warnings information” now appears on the Principal Display Panel (PDP: the front label that includes brand name and product logos) for many nonprescription drug labels. This injunction amounts only to a general recommendation to read the label carefully to discover any new warning information. The PDP could include much more specific and useful label-processing directives. A second re-design opportunity lies in making better use of the selective shading of keywords or phrases on an OTC label. Leat et al. (2014) report that 24 percent of the prescription drug labels examined in their study made use of gray highlighting to emphasize information that was not “patient-critical.” A feasible and more patient-critical use of shading would be to highlight on a nonprescription drug label those keywords or phrases associated with each Primary Communication Objective established by the FDA for that drug. Because there are only about a dozen Primary Communication Objectives for any nonprescription drug (see Raymond et al., 2002, Table 3), such highlighting would not likely compromise the label-reading process.

Controlling attentional focus

We used label shading to focus participants’ attention on keywords associated with label drug facts that confirmed valid claims about the safe and effective use of aspirin. We refer to these as claim-supporting drug facts (CSDFs). We also used label shading to focus participants’ attention on keywords associated with drug facts that refuted invalid claims about the safe and effective use of aspirin. We refer to these as claim-refuting drug facts (CRDFs). Figure 1 shows the seven shadings of keywords for claim-supporting drug facts on a facsimile Drug Facts Panel. Figure 2 shows the seven shadings of keywords for claim-refuting drug facts on the same Drug Facts Panel. Participants were randomly assigned to read either a facsimile label (a PDP and two Drug Facts Panels) with the 15 CSDFs shaded or a facsimile label with the 15 CRDFs shaded. It should be noted that shading only CSDFs (as in Figure 1) will direct participants’ attention to those drug facts and reduce their attention to CRDFs. Similarly, shading only CRDFs (as in Figure 2) will direct participants’ attention to those drug facts and reduce their attention to CSDFs. As a consequence, less attention will be paid to unshaded drug facts than if no shading were used, and more attention will be paid to shaded drug facts than if no shading were used.

Drug Facts Panel with claim-supporting drug facts (CSDFs) shaded and the associated label claims shown in text boxes.

Drug Facts Panel with claim-refuting drug facts (CRDFs) shaded and the associated label claims shown in text boxes.

Promoting label/belief discrepancy detection and label reconciliation

We expected that keyword shading would focus our participants’ attention on the associated drug facts in the label text and serve to activate whatever preconceptions they had about those drug facts. Our analysis of pre-label ratings suggests that in the aggregate, the shading of claim-supporting drug facts (see Figure 1) is likely to activate label-supported preconceptions, and the shading of claim-refuting drug facts (see Figure 2) is likely to activate label-refuted preconceptions. According to the co-activation hypothesis (McCrudden and Kendeou, 2014; Van Den Broek and Kendeou, 2008), it would be necessary for a misconception and its corrective drug fact to be active in working memory simultaneously in order for differences between the two to be detected and reconciled. We sought to devise a processing strategy that would likely facilitate the detection and reconciliation of discrepancies between activated preconceptions and shaded drug facts. We also sought to devise a control processing strategy that would be less likely to facilitate the detection and reconciliation of discrepancies. We identified two semantic processing strategies that seemed likely to vary in their effectiveness in correcting misconceptions.

“Easily-confused” label processing

If we ask participants to explain why a consumer might misunderstand or be confused by a particular shaded drug fact, we encourage them to engage in both elaborative and distinctiveness processing. Elaborative processing occurs as participants seek to generate different plausible interpretations of a drug fact, including those based on their own preconceptions (Stein and Bransford, 1979). Distinctiveness processing occurs as participants seek to determine how any generated interpretation might differ from the interpretation most consonant with the wording of the shaded drug fact (Jacoby et al., 1979). This encoding strategy involves deep semantic processing that is likely to promote the detection of discrepancies between alternative interpretations and the most veridical label-based interpretation. The emphasis upon possible misinterpretations should also bias participants toward resolving any discrepancies in favor of the interpretation that adheres most closely to the text of the drug fact. As a consequence, easily-confused processing should promote the refutation of misconceptions and the recall of the CRDFs associated with those misconceptions.

“Easily-ignored” label processing

If we instead ask participants to explain why a consumer might disregard or ignore a particular shaded drug fact, we encourage them to assess the importance of that drug fact in relative isolation. Some elaborative processing will occur as participants seek to judge the relative significance of the shaded drug fact. Some distinctiveness processing will also occur as participants seek to determine what evidence they have concerning the authoritativeness of the shaded drug fact. Neither form of processing promotes a direct consideration of possible misconceptions or of how they might be reconciled with a label-based interpretation of the shaded drug fact. As a consequence, easily-ignored processing should be less likely than easily-confused processing to promote the refutation of misconceptions and the recall of the CRDFs associated with those misconceptions.

The encoding differences that the easily-confused and easily-ignored processing strategies are meant to produce will not arise as intended if participants do not take the instructions seriously. In order to increase the likelihood that they would, we asked our participants to spend 40 seconds considering each shaded drug fact and explaining in writing why it might be confused or why it might be ignored. Numbered lines were included at the bottom of each label facsimile for these explanations to be recorded as participants were paced through the reading of the aspirin label text (only four of the seven numbered lines for the first Drug Facts Panel are shown in Figures 1 and 2). The experimenter also attempted to induce a demand bias by asking participants to write very legibly so that their responses could be easily classified.

Cued-recall of CRDFs

Label information may fail to correct a mistaken belief about the safe and effective use of a nonprescription drug because that belief biases the label comprehension process or because the label text fails to modify the mistaken belief. If an individual simply misunderstands corrective label information as supporting an existing mistaken belief, then the accurate recall of that corrective information should be compromised. However, if an individual fails to use corrective label information to modify a mistaken belief (see Van Den Broek and Kendeou, 2008), then the corrective information may be accurately recalled even though the mistaken belief is retained. Therefore, the recall of corrective label information provides useful information about why nonprescription drug labels may fail to correct mistaken beliefs. We assessed the recall of the 15 CRDFs that corrected mistaken beliefs about aspirin by using a cued-recall test rather than a free-recall test. Our cued-recall test made use of the keyword associated with each corrective drug fact as a retrieval cue for that fact. For example, in both shading and both processing conditions we provided menstrual pain as a cue for the recall of the drug fact “Aspirin can be used for the temporary relief of menstrual pain.” We did not use a free-recall test (“recall all of the drug facts you can recall from the aspirin label”) because the cued-recall test would be a more sensitive measure of recall for the 15 CRDFs. A cued-recall test also allows us to control the amount of time spent in trying to recall a given drug fact and makes the recall task more manageable for our participants.

Experimental design and hypotheses

We used a mixed analysis of covariance to analyze changes in truth ratings for label-supported claims (LSCs) and label-refuted claims (LRCs) as a function of reading the aspirin label. We manipulated the between-groups factor Keyword Shading to highlight CSDFs or CRDFs. We manipulated the between-groups factor Processing Strategy to encourage Easily-Confused or Easily-Ignored label processing (see Figures 1 and 2 for detailed examples). Trials (pretest and posttest) served as the single within-groups factor. Rather than including Claim Type (LSC or LRC) as a second within-groups factor, we conducted separate analyses of the label-supported and the label-refuted aspirin claims in order to clarify and simplify our analyses. Our covariate measures were designed to assess two components of health literacy. One covariate is domain-specific knowledge of the organization and format of nonprescription drug labels—our assessment of DFL knowledge. The second covariate is the extended-range vocabulary test we used as a surrogate measure of general verbal ability. Because we used cued recall only as a posttest measure, those data were analyzed with a between-groups analysis of covariance with Attentional Focus and Processing Strategy as our between-groups factors and Label Format Knowledge and Verbal Ability as our covariate measures.

Activation hypothesis

We hypothesize that our attentional-focus manipulation will encourage participants to pay close attention to claim-associated label information. We hypothesize that pretest drug claims so targeted will be modified by label processing to a greater degree than those drug claims not so targeted. Ratings of LSCs should increase, and ratings of LRCs should decrease.

Discrepancy reconciliation hypothesis

We hypothesize that participants given easily-confused instructions will engage in label-processing efforts that will focus them on the ways in which LSCs are verified by supporting label drug facts, and the ways in which LRCs are discredited by refuting label drug facts. Such efforts are expected to promote the detection of discrepancies between LRCs and CRDFs on the facsimile label. Easily-confused instructions are also expected to focus participants on the reconciliation of claim/label discrepancies. As a consequence, truth ratings for shaded LSCs will increase and truth ratings for shaded LRCs will decrease with easily-confused label processing, while ratings for unshaded LSCs will increase significantly less and ratings for unshaded LRCs will decrease significantly less. In contrast, we hypothesize that participants given easily-ignored instructions will focus on the salience and interest value of drug facts in isolation rather than on the degree to which they verify LSCs and discredit LRCs. As a consequence, easily-ignored processing will increase truth ratings for shaded and unshaded LSCs to a lesser degree than will easily-confused processing. Easily-ignored label processing will also decrease truth ratings for shaded and unshaded LRCs to a lesser degree than will easily-confused processing.

Cued recall hypothesis

Finally, we expected that CRDFs would be most often recalled when their keywords have been shaded (see Figures 1 and 2) and their associated LRCs have been activated and reconciled with label information. The cognitive effort associated with easily-confused processing will promote elaborative and distinctiveness processing to a greater degree than will the cognitive effort associated with easily-ignored processing. As a result, recall for the CRDFs should be greater with easily-confused label processing. We did not assess recall of claim-supporting drug facts because the focus of the study is on refutational-processing effects.

Method

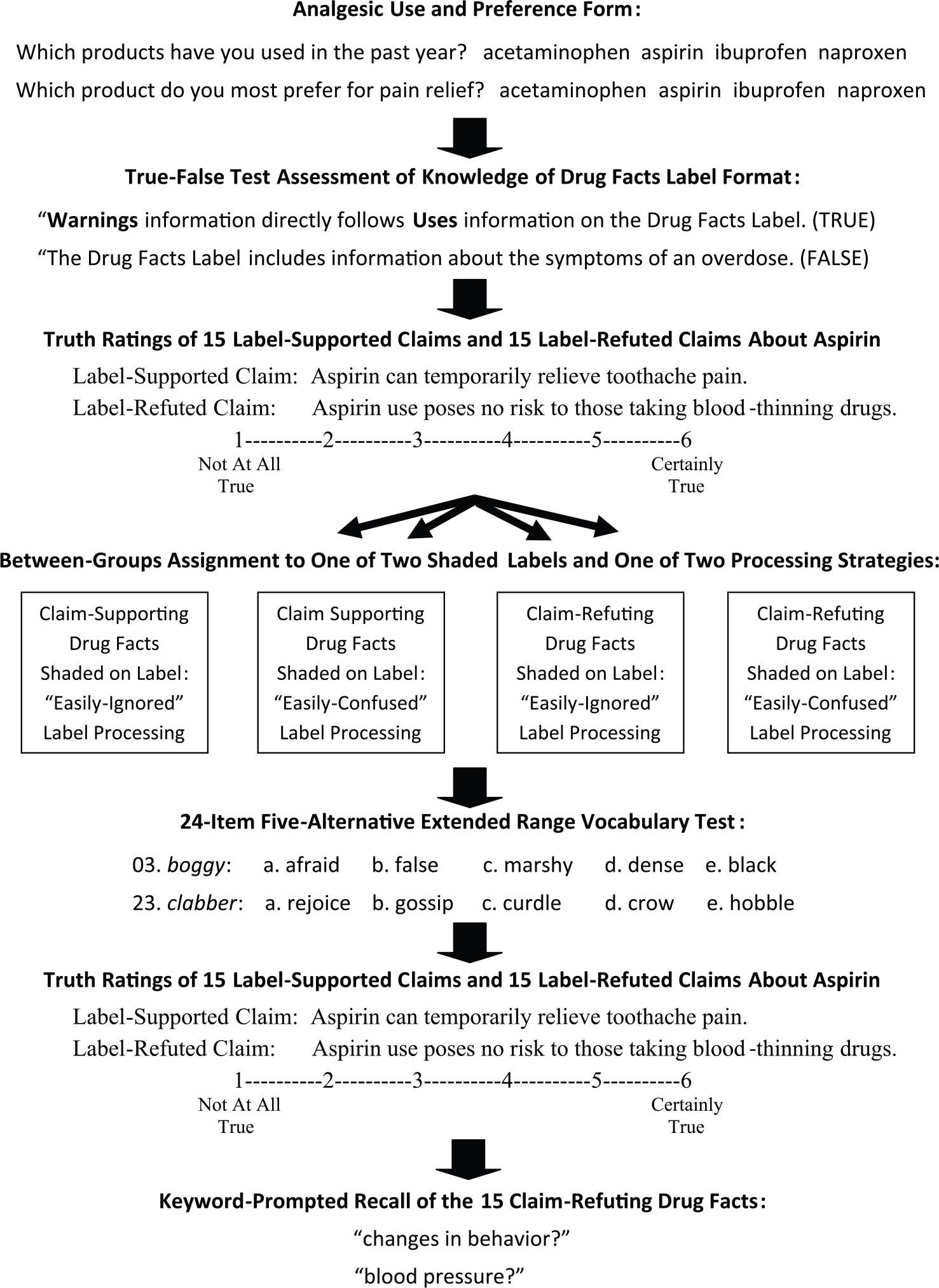

Figure 3 is a schematic representation of the sequence of tasks in this study. The discussion below provides detailed information about the nature and purpose of each task.

Sequence of tasks and measures in the study.

Participants

In order to satisfy a research requirement in their introductory psychology course, 170 female and 26 male students signed up for the study using an online research-participation system. Only self-reported native English speakers between the ages of 18 and 24 years were recruited for the 50-minute, small-group sessions. After signing the consent form for the study, participants completed a short form that provided us with information about their use of and preference for nonprescription single-ingredient analgesics. The great majority of our participants used nonprescription analgesics other than aspirin. In total, 145 of them reported having used ibuprofen in the previous 30 days, 98 reported using acetaminophen during that period, 48 reported using naproxen, and 18 reported using aspirin. Only 6 participants reported aspirin to be their preferred medication for pain relief, 114 reported a preference for ibuprofen, 35 reported a preference for acetaminophen, and 33 reported a preference for naproxen.

Materials and measures

DFL knowledge

We created a 30-item true–false questionnaire that sampled participants’ knowledge of both the nature and ordering of information found on nonprescription drug labels (FDA, 1999). Some items focused on their knowledge of the ordering of label headings (e.g. “Warnings information usually follows Uses information on the Drug Facts Label,” “Directions about when and how to take an over-the-counter drug are near the end of the Drug Facts Label”). Other items focused on specific information included in all nonprescription drug labels (e.g. “Over-the-counter drug labels explain how a drug should be stored to maintain its potency,” “All over-the-counter drugs include specific cautions for pregnant or breast-feeding women”). Scores on this questionnaire ranged from 12 to 25, with a mean score of 19.26 (standard error of measurement (SEM) = 0.18).

Truth ratings of claims about the drug facts for aspirin

Our survey included 15 LSCs and 15 LRCs about the drug facts to be found on a regular-strength aspirin label (see Tables 1 and 2 for the complete set of claims). LSCs were statements that paraphrased specific drug facts on the aspirin label and are clearly supported by the label text. For example, the claim Students of high-school age or younger should not be treated with aspirin for symptoms of chicken pox or the flu is clearly supported by the label drug fact “Children and teenagers who have or are recovering from chicken pox or flu-like symptoms should not use this product.” Figure 1 shows a portion of the facsimile aspirin label with seven LSCs shown on the right next to their CSDFs. LSCs targeted supporting drug facts from throughout the facsimile label. Each targeted drug fact provides information essential for the safe and effective use of aspirin as an analgesic.

Means and their standard errors for pretest truth ratings (1 = Not At All True; 6 = Certainly True) of label-supported claims.

Means and their standard errors for pretest truth ratings (1 = Not At All True; 6 = Certainly True) of label-refuted claims.

In contrast, LRCs were statements that misrepresented specific drug facts on the aspirin label and are clearly refuted by the label text. For example, the claim Aspirin should not be used to treat menstrual pain because of the risk for increased bleeding is clearly refuted by the label drug fact “Uses temporarily relieves … menstrual pain.” Figure 2 shows seven LRCs shown on the left next to their CRDFs. LRCs targeted refuting drug facts from throughout the facsimile label. Each CRDF also provided information essential for the safe and effective use of aspirin. In addition, the LRC targeting each refuting drug fact was written to sound as plausible as possible. Our set of 15 LRCs therefore represented misconceptions about aspirin that were discrepant from the label text.

Facsimile aspirin labels

We created a three-page, legal-sized facsimile of the Principal Display Panel and the two Drug Facts Panels for a brand name aspirin label. We used Arial font sizes of 14 and 12, respectively, for the standardized headings and text to provide a highly legible version of the label format mandated by the FDA. The wording and the use of bold face, italics, punctuation, and spacing corresponded to that used on the actual aspirin label. One version of this label used gray highlighting to focus participants’ attention on the 15 label drug facts that verified the 15 LSCs (shown in part in Figure 1). A second version of the label used gray highlighting to focus participants’ attention on the 15 label drug facts that discredited the 15 LRCs (shown in part in Figure 2).

Label-processing instructions

The cover sheet for each facsimile label instructed participants to attend carefully to the words on the label sheets that were shaded in gray. Participants were told that they would have to read the instructions very carefully on their own. Once all participants had read their instructions, they were asked to read them very carefully a second time to be certain they understood what information they needed to write down on the numbered lines in the label facsimile. The two facsimile labels were assigned randomly to participants in each session. Participants either read a label in which only CSDFs were shaded (as illustrated in Figure 1) or a label in which only CRDFs were shaded (as illustrated in Figure 2).

Participants randomly assigned to use the easily-confused processing strategy read the following instructions:

On the next three pages, you will find a simulated version of the actual Drug Facts shown on all aspirin labels. You will notice that certain words and phrases on the label are shaded in gray. These words and phrases highlight label information often misunderstood by individuals using aspirin because they seem wrong or confusing. Each shaded word or phrase highlights an idea that consumers sometimes misunderstand. We want you to see if you can figure out why this idea is often misunderstood. For each shaded word or phrase, ask yourself, “How could anyone be confused by this idea?” You will have a short amount of time to write down your answer in a brief sentence on one of the numbered lines at the bottom of each sheet.

Participants randomly assigned to use the easily-ignored processing strategy read the following instructions:

On the next three pages, you will find a simulated version of the actual Drug Facts shown on all aspirin labels. You will notice that certain words and phrases on the label are shaded in gray. These words and phrases highlight label information that is often ignored by individuals using aspirin because it seems unimportant or irrelevant. Each shaded word or phrase highlights an idea that consumers sometimes overlook. We want you to see if you can figure out why this idea is often misunderstood. For each shaded word or phrase, ask yourself, “How could anyone ignore this idea?” You will have a short amount of time to write down your answer in a brief sentence on one of the numbered lines at the bottom of each sheet.
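The crossing of the two shaded labels with the two processing instructions yields four experimental conditions. The following is a minimal sketch of how one session's participants might be assigned to those conditions. It assumes a balanced shuffle within each session, which is our illustrative choice and not a documented detail of the procedure; the function name, condition labels, and participant identifiers are hypothetical.

```python
import random
from itertools import product

# Hypothetical labels for the two between-groups factors.
SHADING = ("CSDF-shaded", "CRDF-shaded")
STRATEGY = ("easily-confused", "easily-ignored")
CONDITIONS = list(product(SHADING, STRATEGY))  # the four cells of the 2 x 2 design

def assign_session(participant_ids, rng):
    """Randomly assign one session's participants, keeping cells as balanced as possible.

    Balancing is an assumption of this sketch; the text states only that
    assignment was random.
    """
    # Repeat the four cells enough times to cover everyone, then shuffle.
    repeats = -(-len(participant_ids) // len(CONDITIONS))  # ceiling division
    pool = (CONDITIONS * repeats)[:len(participant_ids)]
    rng.shuffle(pool)
    return dict(zip(participant_ids, pool))

# An eight-person session with a fixed seed for reproducibility.
session = assign_session([f"p{i}" for i in range(1, 9)], random.Random(0))
for participant, (shading, strategy) in session.items():
    print(participant, shading, strategy)
```

With eight participants, each of the four cells receives exactly two people under this scheme; with six or seven, some cells receive one fewer.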

General verbal ability

After participants had read their aspirin label as directed, they were asked to complete the Extended Range Vocabulary Test (ERVT: Ekstrom et al., 1976)—a convenient index of general verbal ability. The 6-minute, 24-item test is suitable for Grades 7–16 and covers a wide range of ability levels. Each item includes five alternatives, and individuals are encouraged to guess if they are able to eliminate at least one of the alternatives. ERVT scores for our participants ranged from 0 to 16, with a mean score of 8.93 (SEM = 0.23). We asked participants to complete the ERVT after they had read the facsimile aspirin label in order to prevent them from rehearsing label information prior to the aspirin claims posttest.

Cued recall test of claim-refuting drug facts

In all experimental conditions, recall of label information discrediting each of the 15 LRCs was tested by presenting the shaded words or phrases associated with those CRDFs (for example, menstrual pain and changes in behavior in Figure 2). When the experimenter read aloud the keyword phrase for a claim-refuting drug fact (see examples shaded in Figure 2), participants were given 20 seconds to write down the complete drug fact before the experimenter read the next keyword phrase. Two different random orderings of these cues were alternated from session to session.

Procedure

Participants were tested in six- to eight-person group sessions, removing the necessary forms from their materials folders as directed by the experimenter. The experimenter explained that the purpose of the study was to find out what kinds of problems consumers might experience in reading an OTC drug label for aspirin carefully enough to be able to self-medicate safely and effectively.

Participants were then asked to complete the Drug Facts Label Knowledge questionnaire. Next, they were asked to use a 6-point Likert scale (1 = Not At All True; 6 = Certainly True) to report their impressions of the validity of each of 30 aspirin claims. We told them some claims accurately reflected information found on the product label for regular-strength aspirin, while other claims contradicted information on the label. We told participants to rely on first impressions in making their judgments. We emphasized that they should report their personal beliefs or feelings rather than what they might have read or heard. We also informed participants they would complete the questionnaire a second time after they had read our facsimile aspirin label. They paced themselves through the claims, taking about 3 minutes to make their ratings.

After completing the aspirin claims pretest, participants were told they would be reading a facsimile aspirin label and answering questions about how consumers might respond to the information on that label. They were told to read the cover sheet instructions for their label very carefully to find out what kind of questions they would have to answer about shaded items on the three-page facsimile. After reviewing their instructions a second time, participants then had 10 minutes to read the label in its entirety, taking 40 seconds to respond to each shaded item as they read. The PDP page in the facsimile had only one shaded text element and a single numbered line at the bottom of the page. The experimenter allowed participants 40 seconds to read that page carefully and then write their easily-confused or easily-ignored response on that line. The two Drug Facts Panel pages that followed the PDP page each had seven shaded text elements and seven numbered lines for participants’ responses. In order to ensure that all participants spent just 10 minutes reading and responding to the label, the experimenter asked them to maintain a pace of 40 seconds for responding to each shaded item; 5-, 2-, and 1-minute warnings were given to assist in maintaining that pace.
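The 10-minute reading period follows directly from the number of shaded items and the 40-second pace. The short sketch below checks that arithmetic. It assumes that the single shaded item on the PDP page receives the same 40-second allowance as the items on the two Drug Facts Panel pages; the variable and function names are hypothetical.

```python
# Hypothetical sketch of the pacing arithmetic; all names are illustrative.
SECONDS_PER_ITEM = 40

# Shaded items per facsimile page: the PDP page, then two Drug Facts Panel pages.
shaded_items_per_page = {
    "PDP": 1,
    "Drug Facts Panel 1": 7,
    "Drug Facts Panel 2": 7,
}

def reading_budget_seconds(items_per_page, seconds_per_item=SECONDS_PER_ITEM):
    """Total time needed if every shaded item gets the same allowance (an assumption)."""
    return sum(items_per_page.values()) * seconds_per_item

total_items = sum(shaded_items_per_page.values())
total_seconds = reading_budget_seconds(shaded_items_per_page)
print(f"{total_items} shaded items x {SECONDS_PER_ITEM} s = "
      f"{total_seconds} s ({total_seconds / 60:.0f} min)")
```

The 15 shaded items correspond to the 15 drug facts shaded on each version of the label, so the pace and the total reading time are mutually consistent.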

When participants finished the label-processing task, they had 6 minutes to complete the 24-item ERVT. The experimenter explained that this vocabulary test assessed differences in verbal ability that might influence performance on the label-processing task. The aspirin claims questionnaire was re-administered immediately thereafter. To minimize any confusion in rating the claims, the posttest ordering of claims was the same as the pretest ordering. We anticipated that participants might attempt to recall their initial ratings in order to appear consistent or to recall facts from the simulated label in order to appear informed. To ensure that they focused instead on reporting their label-updated personal beliefs, we strongly and specifically encouraged them to report their current intuitions:

Don’t try to recall your previous ratings or anything you may have read or heard about aspirin. Instead, just rate the statements according to what you now believe. Read each statement carefully, but then make a snap judgment about how true it now seems to you.

The experimenter again allowed them 3 minutes to complete the set of 30 claims.

Finally, the experimenter administered the cued recall test by reading aloud the shaded words or phrases that signaled those label drug facts that refuted each of the 15 label-refuted aspirin claims, allowing 20 seconds for each response to be written. Participants were told only that the recall test focused on information about aspirin that was particularly important for its safe and effective use. Once the cued recall task was completed, participants were given a brief explanation of the purpose of the study and allowed to ask any questions they wished.

Analysis

Unit of analysis

For greater clarity in presenting our findings, we analyzed the data for LSCs and LRCs separately. In order to ensure that any observed effects were neither participant-dependent nor claim-dependent, we conducted parallel analyses of participants and claims. In the individual-participant analyses, the participant was the unit of analysis, and we collapsed across claims to obtain mean LSC and LRC scores for each participant on the pretest and the posttest. In this analysis, Trials was a within-subjects factor; Attentional Focus and Processing Strategy were between-subjects factors. In the individual-claim analysis, the LSC or LRC was the unit of analysis, and we collapsed across participants to obtain mean Attentional Focus, Processing Strategy, and Trials scores for each of the 15 LSCs and each of the 15 LRCs. In this analysis, Attentional Focus, Processing Strategy, and Trials were within-claims factors.

Data transformations

We first demonstrated that participants assigned randomly to the four experimental conditions did not differ in their pre-label ratings of LSCs or LRCs. Because they did not differ significantly, we subtracted pre-label scores from post-label scores to obtain the updating index we use in our main analyses. For LSCs, the index should be positive because reading the label should increase the truth ratings for those claims. For LRCs, the index should be negative because reading the label should decrease the truth ratings for those claims. The means reported for the individual-participant analyses were all adjusted for the covariate effects of Verbal Ability and Label Format Knowledge scores. The means reported for the individual-claim analyses were unadjusted because the two between-subjects covariates could not be used in those analyses. Recall counts were analyzed using both the square-root-of-x transformation and the square-root-of-(x plus 1) transformation (Howell, 2007: 318–324; Tabachnick and Fidell, 2007: 86–89). Because these transformations did not change the pattern or significance of effects, untransformed counts were used in the analysis of cued recall performance reported below.
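The updating index described above can be illustrated with a minimal sketch. The function name `updating_index` and the ratings below are hypothetical and are not data from the study; the sketch only assumes that each participant's index is the mean post-label rating minus the mean pre-label rating across the claims in one category.

```python
from statistics import mean


def updating_index(pre_ratings, post_ratings):
    """Mean post-label rating minus mean pre-label rating for one participant."""
    if len(pre_ratings) != len(post_ratings):
        raise ValueError("pre and post ratings must cover the same claims")
    return mean(post_ratings) - mean(pre_ratings)


# Hypothetical ratings on the 6-point truth scale (not data from the study).
lsc_pre, lsc_post = [4, 5, 3, 5], [5, 6, 5, 5]
lrc_pre, lrc_post = [4, 3, 4, 2], [3, 3, 2, 2]

# Positive when the label raised truth ratings of label-supported claims.
lsc_index = updating_index(lsc_pre, lsc_post)
# Negative when the label lowered truth ratings of label-refuted claims.
lrc_index = updating_index(lrc_pre, lrc_post)
```

The sign convention matches the text: confirmational updating appears as a positive value and refutational updating as a negative value.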

Results

Overall, the 15 pre-label LSCs received substantially higher truth ratings, M = 4.58, SEM = 0.04, than did the 15 pre-label LRCs, M = 3.38, SEM = 0.04. We analyzed the pre-label truth ratings as a function of claim category and experimental condition to determine whether participants in our four experimental groups differed initially in their ratings of LSCs and LRCs. The effect of claim category was highly significant, F(1, 191) = 898.20, MSE = 0.16, p < 0.001, partial eta-squared = 0.83. However, that very strong effect did not interact with either of the treatment groups to which participants were subsequently assigned, Fs < 1.00. Although our participants already assigned higher truth ratings to LSCs than to LRCs, our Attentional Focus and Processing Strategy manipulations of the label-reading process were intended to increase the initial LSC truth ratings and to decrease the initial LRC truth ratings.

This analysis of the pretest ratings served two purposes. First, it demonstrates that there is some validity to our participants’ preconceptions about aspirin and that we were successful in creating plausible LRCs. Second, the absence of pretest differences among the four treatment groups allows us to simplify the analysis by subtracting pretest ratings from posttest ratings to create change scores as our index of label-induced changes in beliefs. Claim-supporting effects are visible as positive values on a bar graph, and claim-refuting effects are visible as negative values on the same bar graph (see Figure 4).

Figure 4. Attentional and processing effects on changes in truth values for label-supported and label-refuted claims.

Effect of label processing on changes in truth ratings for label-supported aspirin claims

Individual-participant analysis

Pre-label truth ratings of LSCs were subtracted from post-label ratings to obtain a measure of the degree to which reading the aspirin label increased truth ratings for claims explicitly confirmed on the aspirin label, that is, an index of confirmational updating. These change scores were entered into a two-factor between-subjects analysis of covariance that included Processing Strategy (Easily Ignored or Easily Confused) and Attentional Focus (Claim-Relevant or Claim-Irrelevant) as fixed factors, with Label Format Knowledge and Verbal Ability entered as covariates. Neither Label Format Knowledge, F(1, 190) < 1.00, nor Verbal Ability, F(1, 190) = 2.53, p < 0.15, was a statistically significant covariate. The main effect of Attentional Focus was significant, F(1, 190) = 45.00, MSE = 0.26, p < 0.001, partial eta-squared = 0.19, but Processing Strategy, F(1, 190) = 1.55, p < 0.20, and its interaction, F(1, 190) < 1.00, were not. Covariate-adjusted truth ratings for LSCs increased significantly more when claim-relevant label text was shaded (M = +0.76, SEM = 0.05) than when it was not (M = +0.27, SEM = 0.05). The covariate-adjusted change score means for all four participant-based LSC effects are shown in Figure 4.

Individual-claim analysis

Pre-label truth ratings for each of the 15 LSCs were subtracted from their post-label ratings to obtain confirmational updating scores. These change scores were entered into a within-claims two-factor analysis of variance that included Processing Strategy (Easily-Ignored or Easily-Confused) and Attentional Focus (CSDF Shading or CRDF Shading) as factors. Because individual claim scores were collapsed across individual participants, there is no meaningful way to include either Label Format Knowledge or Verbal Ability as covariates in this analysis. The main effect of Attentional Focus was significant, F(1, 14) = 21.06, MSE = 0.10, p < 0.001, partial eta-squared = 0.60, but neither Processing Strategy, F(1, 14) = 3.92, p < 0.10, nor the interaction, F(1, 14) < 1.00, had a significant effect. Truth ratings for LSCs increased significantly more when claim-relevant label text was shaded (M = +0.76, SEM = 0.12) than when it was not (M = +0.28, SEM = 0.07). The change score means for all four claim-based LSC effects are also shown in Figure 4.

Ceiling effects

It is possible that processing strategy effects were not detected for truth ratings of LSCs because increases in posttest truth ratings for those claims are limited by ceiling effects. The distributions of pretest ratings for all 15 LSCs are negatively skewed toward the scale maximum of 6.00, indicating that posttest increases for those claims may be underestimated by our rating scale. We evaluated this possibility by examining the correlation between the overall pretest rating for each of the LSCs and the overall size of the posttest increase for that claim. LSCs receiving lower pretest ratings should be less subject to ceiling effects and should show larger increases, while LSCs receiving higher pretest ratings should be more subject to ceiling effects and should show smaller increases. Therefore, a significant negative correlation between pretest ratings and posttest increases for LSCs would reflect the presence of ceiling effects. Spearman’s rho values for easily-confused processing were significantly negative whether the supporting label drug facts were shaded on the label or not, rs = −0.72, p < 0.05 and rs = −0.63, p < 0.05, respectively. Rho values for easily-ignored processing were also significantly negative whether the supporting drug facts were shaded or not, rs = −0.84, p < 0.01 and rs = −0.56, p < 0.05. These results suggest that the absence of processing strategy effects in the claim-by-claim analysis of LSC truth ratings may be due to artifactual ceiling effects.
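The ceiling-effect check above rests on a rank correlation between pretest ratings and posttest increases. The following is a minimal sketch of Spearman's rho, computed as the Pearson correlation of average ranks. The helper names and the five claim means are hypothetical and are not the study's data; no significance test is included.

```python
def average_ranks(values):
    """Return 1-based ranks, assigning tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole group of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        tied_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = tied_rank
        i = j + 1
    return ranks


def pearson_r(x, y):
    """Pearson correlation; assumes both inputs have nonzero variance."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of the ranked data."""
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical claim-level means (not data from the study).
pretest_means = [3.9, 4.2, 4.6, 5.1, 5.4]
posttest_increases = [1.1, 0.6, 0.9, 0.4, 0.1]
rho = spearman_rho(pretest_means, posttest_increases)
```

A strongly negative `rho` in such data would be read, as in the text, as a sign that claims starting near the scale maximum had little room to increase.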

Reversals of initial judgments of label-supported claims

It is to be expected that an effective refutational label will reverse initial “false” judgments of LSCs but not reverse initial “true” judgments of LSCs. Truth ratings of LSCs were dichotomized into “true” judgments of 4, 5, or 6 and “false” judgments of 1, 2, or 3 to determine if reading the label reverses initial judgments. If the label is effective in modifying LSCs, then the probability that “false” judgments of those claims change correctly to “true” judgments should be high, and the probability that “true” judgments of LSCs change incorrectly to “false” judgments should be low. Only the claim-by-claim data have enough false-to-true and true-to-false cases to permit a meaningful overall analysis of such changes. The median false-to-true reversal rate is 75.6 percent for the 15 LSCs, and the median true-to-false reversal rate for those claims is 6.2 percent. There are too many empty cells to permit a similar analysis within each treatment condition or for individual participants. As far as LSCs are concerned, these data demonstrate that a careful reading of the label is 12 times more likely to correct a misconception than it is to induce a misconception.
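The dichotomization and reversal rates described above can be sketched as follows. The function names and the pretest and posttest ratings are hypothetical and are not data from the study; only the cut point (4, 5, or 6 as "true") and the two reported medians come from the text.

```python
def is_true_judgment(rating):
    """Ratings of 4, 5, or 6 count as "true"; 1, 2, or 3 count as "false"."""
    if rating not in range(1, 7):
        raise ValueError("ratings must be integers from 1 to 6")
    return rating >= 4


def reversal_rates(pre, post):
    """Return (false_to_true_rate, true_to_false_rate) for one claim.

    A rate is None when no participant started in that category.
    """
    pairs = [(is_true_judgment(a), is_true_judgment(b)) for a, b in zip(pre, post)]
    after_false = [after for before, after in pairs if not before]
    after_true = [after for before, after in pairs if before]
    false_to_true = sum(after_false) / len(after_false) if after_false else None
    true_to_false = (
        sum(not after for after in after_true) / len(after_true) if after_true else None
    )
    return false_to_true, true_to_false


# Hypothetical ratings from eight participants for one claim.
pre = [2, 3, 1, 3, 5, 4, 6, 5]
post = [5, 4, 2, 6, 5, 3, 6, 4]
rates = reversal_rates(pre, post)

# Ratio of the two medians reported in the text for the 15 LSCs.
reported_ratio = 75.6 / 6.2
```

The ratio of the reported medians is a little over 12, which is the basis for the "12 times more likely" statement.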

Effect of label processing on changes in truth ratings for label-refuted aspirin claims

Individual-participant analysis

Pre-label truth ratings of LRCs were subtracted from post-label ratings to obtain a measure of the degree to which reading the aspirin label decreased truth ratings for claims refuted by the label, that is, an index of refutational updating. These change scores were entered into a two-factor analysis of covariance that included Processing Strategy (Easily Ignored or Easily Confused) and Attentional Focus (CSDF Shading or CRDF Shading) as between-subjects factors, with Label Format Knowledge and Verbal Ability entered as covariates. Neither Label Format Knowledge nor Verbal Ability was a significant covariate, Fs(1, 190) < 1.00. The main effect of Attentional Focus was significant, F(1, 190) = 19.03, MSE = 0.36, p < 0.001, partial eta-squared = 0.09, but neither Processing Strategy, F(1, 190) < 1.00, nor its interaction, F(1, 190) = 2.13, p < 0.15, had a significant effect. Covariate-adjusted truth ratings for LRCs decreased significantly more when the claim-relevant label text had been shaded (M = −0.15, SEM = 0.06) than when it had not (M = +0.23, SEM = 0.06). The adjusted change score means for all four LRC effects are displayed in Figure 4.

Individual-claim analysis

Pre-label truth ratings for each of the 15 LRCs were subtracted from their post-label ratings to obtain refutational updating scores. These change scores were entered into a two-factor analysis of variance that included Processing Strategy (Easily Ignored or Easily Confused) and Attentional Focus (CSDF Shading or CRDF Shading) as within-claims factors. The main effect of Attentional Focus was significant, F(1, 14) = 6.19, MSE = 0.35, p < 0.05, partial eta-squared = 0.31, but Processing Strategy was not, F(1, 14) = 1.72, p < 0.20. Truth ratings for LRCs decreased significantly more when claim-relevant label text was shaded (M = −0.15, SEM = 0.17) than when it was not (M = +0.23, SEM = 0.06). However, the Focus-by-Strategy interaction was significant, F(1, 14) = 8.02, MSE = 0.03, p < 0.05, partial eta-squared = 0.36. Curiously, the interaction represents a difference between the two processing strategies when participants had been focusing on irrelevant label text (that relating to claim-supporting drug facts rather than claim-refuting drug facts). There is a significantly greater decrease in truth ratings for Easily-Confused than for Easily-Ignored processing in the claim-irrelevant label condition, t(14) = 2.74, SEMDiff = 0.07, p = 0.016. The difference is not artifactual because it is comparable in size to the nonsignificant interaction found in the individual-participant analysis reported above. However, an effect that involves processing strategy differences for the focus-condition label on which CRDFs were not shaded is not theoretically meaningful. Only the difference between the two processing strategies in the claim-relevant shading condition is crucial in this analysis. The difference in change scores between the two processing strategies in that condition is not significant, t(14) < 1.00. Change-score means for all four LRC effects are displayed in Figure 4.

“Basement” effects

It is possible that processing-strategy effects were not detected for truth ratings of LRCs because decreases in posttest truth ratings for those claims are limited by basement effects. The distributions of pretest ratings for 8 of the 15 LRCs are positively skewed toward the scale minimum of 1.00, indicating that posttest decreases for those claims may be underestimated by our rating scale. We evaluated this possibility by examining the correlation between the overall pretest rating for each of the 15 LRCs and the overall size of the posttest decrease for that claim. LRCs receiving higher pretest ratings should be less subject to basement effects and should show larger decreases, while claims receiving lower pretest ratings should be more subject to basement effects and should show smaller decreases. Therefore, a significant negative correlation between pretest ratings and posttest decreases for LRCs would reflect the presence of basement effects. Spearman’s rho values for easily-confused processing were nonsignificant whether the refuting drug facts were shaded on the label or not, rs = −0.14, p > 0.50 and rs = −0.02, p > 0.50, respectively. Rho values for easily-ignored processing were nonsignificant whether the refuting drug facts were shaded or not, rs = −0.02, p > 0.50 and rs = +0.15, p > 0.50. We conclude that the absence of processing-strategy effects in the claim-by-claim analysis of LRCs cannot be attributed to artifactual basement effects.

Reversals of initial judgments of label-refuted claims

It is to be expected that an effective refutational label will reverse initial “true” judgments of LRCs but not reverse initial “false” judgments of LRCs. Truth ratings of LRCs were dichotomized into “false” judgments of 1, 2, or 3 and “true” judgments of 4, 5, or 6, as we did above for label-supported claim ratings. If the label is effective in modifying misconceptions about LRCs, then the probability that “true” judgments of LRCs change correctly to “false” judgments should be high and the probability that “false” judgments of LRCs change incorrectly to “true” judgments should be low. Again, only the claim-by-claim data have enough true-to-false and false-to-true cases to permit a meaningful overall analysis. The median true-to-false reversal rate is 28.6 percent, and the median false-to-true reversal rate for the 15 LRCs is 34.9 percent. As far as LRCs are concerned, a careful reading of the drug label is about as likely to induce a misconception as it is to correct a misconception.

The nonparametric Mann–Whitney U test (Mann and Whitney, 1947; Reinhard et al., 2000) indicates that the median beneficial (false-to-true) reversal rate for LSCs is significantly higher than the median beneficial (true-to-false) reversal rate for LRCs, U = 5, two-tailed, p < 0.05. That test also indicates that the median detrimental (true-to-false) reversal rate for LSCs is significantly lower than the median detrimental (false-to-true) reversal rate for LRCs, U = 7, two-tailed, p < 0.05. There are too many empty cells to allow us to use this test to evaluate our experimental hypotheses, but these results do document how difficult it is for individuals to make use of authoritative label information to correct misconceptions they have about a drug.
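For readers unfamiliar with the test, the U statistic compared above can be obtained by counting pairwise wins between two samples. The sketch below is a minimal illustration with hypothetical reversal rates (not the study's claim-level data); it returns only the smaller of the two U values and does not compute a p value, which would require a table or an exact or normal approximation.

```python
def mann_whitney_u(sample_a, sample_b):
    """Return the smaller Mann-Whitney U, counting each tie as half a win."""
    u_a = 0.0
    for a in sample_a:
        for b in sample_b:
            if a > b:
                u_a += 1.0
            elif a == b:
                u_a += 0.5
    # The two U values always sum to the number of pairwise comparisons.
    u_b = len(sample_a) * len(sample_b) - u_a
    return min(u_a, u_b)


# Hypothetical beneficial reversal rates for two sets of claims.
supported_claim_rates = [0.80, 0.70, 0.75, 0.60]
refuted_claim_rates = [0.30, 0.65, 0.25, 0.40]
u_statistic = mann_whitney_u(supported_claim_rates, refuted_claim_rates)
```

A small U relative to the number of pairwise comparisons indicates that one set of rates tends to exceed the other, which is the pattern the text reports for LSCs versus LRCs.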

Cued-recall of claim-refuting drug facts

We used gist scoring to code recall protocols for the label drug facts that refuted each of the 15 LRCs. Drug fact recall counted as correct only if a participant (a) unambiguously reported the substance of the refuting drug fact and (b) made no reference of any sort to the label-refuted claim. For example, the claim-refuting drug fact “It is especially important not to use aspirin during the last 3 months of pregnancy …” was cued with months of pregnancy in all experimental conditions. Label-based responses such as “last three months - do not take,” “should not take aspirin if you are 3 months before delivery,” or “do not take if you are in 3rd trimester” were counted as correct. Ambiguous responses such as “do not take if pregnant for more than 3 months,” “if you are pregnant, you shouldn’t take aspirin,” or “3 months of pregnancy, do not take” were counted as incorrect. We also excluded from the recall count any response that echoed one of the 15 LRCs. For the recall cue months of pregnancy, the associated LRC was “It is especially important not to use aspirin during the first three months of pregnancy.” Therefore, we counted as incorrect such responses as “first 3 months possible birth defects,” “under 3 months, don’t use,” or “less than 3 could hurt newborn.”

Many of the drug facts are bulleted under headings that describe the action to be taken under specified conditions. Because “fever lasts more than 3 days” is a drug fact that reflects a bulleted condition under the action heading Stop use and ask a doctor if, participants had to report both the bulleted condition and the action heading for their response to be counted as correct. For example, the three responses “Consult a doctor if a fever lasts 3 days or more,” “more than 3 days, contact doctor immediately stop,” and “fever lasts for 3 days, stop use & contact doctor” were all counted as correct in our scoring scheme. However, responses such as “fever lasts more than 10 days, consult doctor,” “if fever lasts for more than 3 days stop taking it,” and “if fever doesn’t go away stop see a doctor” were not. In each of these latter three responses, the condition or the action is either incorrectly specified or unspecified.

The second and third authors independently coded each of the 15 cued-recall responses for all 196 participants. The recall form was separated from the rest of the participants’ materials so that the two coders were unaware of the experimental condition associated with the responses they were coding. Beforehand, both coders classified all recall responses from 20 randomly chosen protocols. The first author then determined through discussion with them what additional coding guidelines would resolve all coder disagreements. For example, the response “consult a doctor if a fever lasts 3 days or more” was counted as correct because we reasoned that stopping the use of the drug was implicit in deciding to consult a doctor and would often go unsaid by participants. However, “if fever lasts for more than 3 days stop taking it” was not counted as correct because we reasoned that seeing a doctor was not implicit in deciding to stop using the drug. These supplemental guidelines were included as notes in the Excel files that both coders used to record their responses. Between-coder agreement was assessed as the Pearson correlation between the two recall counts obtained for each participant. Overall, that value was r(194) = 0.96, p < 0.001; Pearson values ranged from 0.95 to 0.97 for the four experimental conditions. Untransformed counts of label-based recall were used in the final individual-participant and individual-claim analyses because no square-root transformation affected the outcome of those analyses.

Individual-participant analysis

Verbal Ability was a significant covariate in the between-subjects analysis of covariance of cued-recall counts for label-refuting drug facts, F(1, 190) = 4.38, p < 0.05, but Label Format Knowledge was not, F(1, 190) = 1.94, p < 0.20. Both Attentional Focus, F(1, 190) = 79.24, MSE = 6.86, p < 0.0001, partial eta-squared = 0.29, and Processing Strategy, F(1, 190) = 5.24, MSE = 6.86, p < 0.05, partial eta-squared = 0.03, significantly affected cued recall counts. The interaction of Focus and Strategy was not significant, F(1, 190) < 1.00. Cued recall scores were significantly higher when the shading focus was on claim-refuting drug facts (M = 8.16, SEM = 0.27) than when the shading focus was on claim-supporting drug facts (M = 4.81, SEM = 0.26). Cued recall scores were also significantly higher with easily-confused processing of CRDFs (M = 6.91, SEM = 0.27) than with easily-ignored processing of CRDFs (M = 6.06, SEM = 0.26). The covariate-adjusted cued recall totals for refutational drug facts have a maximum count of 15 claims for each participant. In the individual-claim analysis described below, the maximum count is 50 participants for each claim. In order to make the results of the two analyses comparable, the total counts for both analyses are shown as percentages in Figure 5.

Figure 5. The effects of claim-relevant and claim-irrelevant shading and of “easily-ignored” and “easily-confused” label processing on the cued recall of claim-refuting drug facts.

Individual-claim analysis

Verbal Ability and Label Format Knowledge were not entered as covariates in this analysis because they are between-subjects variables associated with individual participants rather than individual drug facts. As in the individual-participant analysis, both Attentional Focus, F(1, 14) = 40.45, MSE = 51.64, p < 0.001, partial eta-squared = 0.74, and Processing Strategy, F(1, 14) = 4.74, MSE = 20.30, p = 0.047, partial eta-squared = 0.25, were significant. The interaction of Focus and Strategy was again nonsignificant, F(1, 14) = 1.26. CRDFs were more often recalled when they had been shaded in the facsimile label (M = 27.27, SEM = 2.25) than they were when CSDFs had been shaded (M = 15.47, SEM = 2.31). Refutational drug facts were also recalled significantly more often with the easily-confused processing of shaded CRDFs (M = 22.63, SEM = 2.27) than with the easily-ignored processing of shaded CRDFs (M = 20.10, SEM = 2.04). As noted for the individual-participant analysis above, the total counts for the individual-claim analysis are shown as percentages in Figure 5.
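The conversion of the two sets of recall counts to a common percentage scale can be sketched as follows. The function name `as_percentage` is hypothetical; the means and the maxima of 15 claims per participant and 50 participants per claim are those stated in the text. The exact values plotted in Figure 5 are not reproduced here, so the results should be read only as an illustration of the rescaling.

```python
def as_percentage(mean_count, maximum_count):
    """Express a mean recall count as a percentage of its maximum."""
    return 100.0 * mean_count / maximum_count


# Individual-participant analysis: maximum of 15 drug facts per participant.
participant_shaded = as_percentage(8.16, 15)
participant_unshaded = as_percentage(4.81, 15)

# Individual-claim analysis: maximum of 50 participants per drug fact.
claim_shaded = as_percentage(27.27, 50)
claim_unshaded = as_percentage(15.47, 50)
```

On this scale the two analyses give closely matching recall rates for shaded CRDFs, which is why percentages make the two sets of results comparable.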

Discussion

Summary of findings

We found clear support for our activation hypothesis in the claim-rating data. When we highlighted keywords or phrases in the label text to draw our participants’ attention to claim-supporting drug facts, truth ratings of label-supported claims increased significantly more than when we did not. When we highlighted keywords or phrases in the label text to draw our participants’ attention to claim-refuting drug facts, truth ratings of label-refuted claims decreased significantly more than when we did not. With claim-relevant highlighting, the increase in truth ratings for label-supported claims was about five times greater than the decrease in truth ratings for label-refuted claims.

We found no support for our reconciliation hypothesis in the claim-rating data. When participants used the easily-confused processing strategy to evaluate highlighted drug facts, truth ratings of LSCs did not increase significantly more than when participants used the easily-ignored processing strategy. Similarly, when participants used easily-confused processing to evaluate highlighted drug facts, truth ratings of LRCs did not decrease significantly more than when participants used the easily-ignored processing strategy.

We found support for both the activation hypothesis and the reconciliation hypothesis in our cued-recall data. The attentional-focus manipulation and the label-processing manipulation had significant and independent effects on the cued recall of claim-refuting drug facts. Cued-recall of these CRDFs was significantly greater when they had been shaded in the label text than when they had not. Cued-recall of claim-refuting label text was also significantly greater with easily-confused label processing than with easily-ignored processing. The difference in cued-recall rates attributable to attentional focus was more than three-and-a-half times greater than that attributable to processing strategy.
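The two ratio statements in this summary can be checked against the covariate-adjusted means reported in the individual-participant analyses. The short sketch below does only that arithmetic; the variable names are hypothetical, and the choice of the participant-level means (rather than the claim-level means) is an assumption about which values the summary refers to.

```python
# Change in truth ratings with claim-relevant shading (participant analysis).
lsc_increase = 0.76   # label-supported claims
lrc_decrease = -0.15  # label-refuted claims
truth_rating_ratio = abs(lsc_increase) / abs(lrc_decrease)

# Cued-recall differences attributable to each manipulation.
focus_difference = 8.16 - 4.81     # shaded CRDFs vs. shaded CSDFs
strategy_difference = 6.91 - 6.06  # easily-confused vs. easily-ignored
recall_ratio = focus_difference / strategy_difference
```

Under that assumption, the first ratio is slightly above five and the second is a little under four, consistent with "about five times" and "more than three-and-a-half times."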

Interpretation of findings

Our data provide partial support for the co-activation account of refutation text effects on misconceptions. Focusing our participants’ attention on drug facts that refuted a false claim about aspirin clearly decreased our participants’ faith in such claims. However, a label-processing strategy that involved a consideration of how consumers might be confused by those drug facts produced no additional decrease in their faith in those false claims. One interpretation of our claim-rating data is that our easily-confused processing strategy simply failed to prompt the reconciliation of false aspirin claims with refuting drug facts on the label. However, this interpretation fails to explain why refutational easily-confused processing produced an increase in the recall of refuting drug facts.

Prior to offering an interpretation of our findings, we should note that prior efforts to use this paradigm to produce refutational change have been only modestly effective, with confirmatory updating values on our 6-point scale ranging from 0.70 to 1.04 and corrective updating values ranging from −0.11 to −0.39 (Ryan, 2011; Ryan and Costello-White, 2015). The updating scores in this study are +0.76 and −0.15, respectively. Surprisingly, the shading manipulation and the processing intervention in this study did not produce substantially greater updating effects than those we had previously obtained by simply asking participants to read the label carefully while checking for typographical errors.

Our interpretation of the findings in this study is that refutational change does not involve replacing a naïve misconception with a correct conception. Instead, we believe that a naïve misconception remains in memory, but the refuting information is stored as well and can be used to override that intuitive belief with a more reasoned explanation. With respect to Kahneman’s (2003) distinction between intuitive and logical modes of thought, we assume that the truth-rating data in this study reflect intuitive reasoning, while the cued-recall data reflect logical reasoning. From this perspective, the reconciliation process involves a reasoned comparison of an LRC and the aspirin label’s CRDF. When the reconciliation process favors the label’s authoritative drug facts, what is added to memory is the reconciling account and the CRDF, and what remains in memory is the intuitive belief that underlies the LRC.

Tversky and Kahneman (1971) used just such an account as the one we propose to explain why the intuitive judgments of statistically sophisticated researchers were inconsistent with formal statistical principles. More recently, Masson et al. (2014) have shown that physics experts are more likely than physics novices to activate brain regions involved in inhibition when they evaluate electrical circuit displays that illustrate a common misconception about electrical circuits. Masson et al. argue that the misconception lives on in the memory of the experts but that experts suppress their intuitive belief in favor of a logic-based scientific account. In our study, participants were given instructions to rely on their “gut feelings” when they rated aspirin claims, but they were told to be as accurate and as clear as possible in responding to the recall prompts for CRDFs. We believe that the recall of a refuting drug fact reflects the successful reconciliation of an incorrect claim with label information. However, the feeling-based misconception lives on in memory to bias intuition-based responses on the claim-rating task.

Limitations of the study

One limitation of our study is that our processing manipulation may have been relatively weak. Participants using either the “easily-confused” or the “easily-ignored” processing strategy had only 40 seconds to develop a response to each of the 15 label drug facts they considered. Participants were not forced to justify their responses nor to rate their confidence in them. However, the significant effect of processing strategy on cued recall indicates that the manipulation was an effective one, but that it did not affect truth ratings. As we have explained above, it may be that misconceptions and their intuitive truth values endure even after they have been supplanted by scientific explanations.

A second limitation of this study is that our label-comprehension task lacks ecological validity. We designed this study to create optimal conditions for correcting misconceptions. We shaded keywords associated with CRDFs, and we asked participants to spend 40 seconds writing an explanation of how a reader might ignore or misunderstand the refuting drug fact. Our finding that truth ratings of invalid claims decreased very little under these conditions suggests that more routine label encounters (purchasing a drug or using the drug) would be even less productive in refuting misconceptions. The recall of specific drug facts should be compromised as well. If a consumer is only casually attending to label information when purchasing or using a drug, recall for drug facts is likely to be close to that we found for those drug facts that were not shaded on our facsimile labels. In that condition, our participants recalled only about one-third of the 15 unshaded drug facts. It would be of interest to examine truth-rating and cued recall performance for individuals who used a nonprescription drug on a regular basis to treat a chronic condition. Those adults taking daily 81-mg doses of aspirin as part of a “heart-healthy” regimen would be an ideal consumer population for such a study.

A final limitation is our choice of aspirin as a nonprescription drug. We chose aspirin because we find that young adults are much more likely to use ibuprofen or acetaminophen as their analgesic of choice. In principle, the amenability of aspirin misconceptions to label-based correction may be quite different from that of other nonprescription analgesics. However, we have obtained similar results in an unpublished study in which participants read ibuprofen or acetaminophen labels to confirm or correct preconceptions about those two sets of drug facts. Both drug labels give rise to the same strong confirmational effects and weak refutational effects we observe for aspirin. Future research should focus on other classes of nonprescription drugs such as antihistamines, antitussives, antidiarrheals, and acid reducers.

Implications

This study demonstrates the potential for the strategic use of text shading and PDP warnings to improve the comprehension of nonprescription drug labels. We shaded 15 claim-refuting text elements in one of our facsimile labels and 15 claim-confirming text elements in the other. Given that very few label elements are shaded in current drug labels (Leat et al., 2014), much greater use can be made of this tactic for calling consumers’ attention to drug facts that refute naïve misconceptions about a drug product. However, research will first be necessary to identify the drug-specific misconceptions that would constitute “Primary Refutation Objectives.” Currently, the FDA helps drug manufacturers define and refine the Primary Communication Objectives for a nonprescription drug label. However, it may be even more important to consider whether a drug label is effective in correcting stubborn misconceptions about the safe and effective use of nonprescription drugs.

Further research will also be necessary to determine which general warnings ought to be showcased on the bottle-cap or the PDP of a nonprescription drug container. The injunction to “Always Read the Label” or “See New Warnings Information” may be contributing little to fostering powerful label-processing strategies. We have shown that the general injunction to “consider why you might be confused by some of these drug facts” or to “consider why you might ignore some of these drug facts” can improve drug facts recall. Other general injunctions may be more effective than either one of these label-processing strategies. Improving the quality of general label-comprehension strategies need not require the omission of singularly important warnings. For example, the bottle-cap for Tylenol® includes the vital information that the product “Contains Acetaminophen” as well as the general injunction to “Always Read the Label.”

This study makes it clear that calling consumers’ attention to critical label information and urging them to be mindful of the potential for misunderstanding may prove ineffective in refuting intuitive misconceptions about aspirin. Future research should focus first on the generality of this effect—across a range of analgesics, a range of nonprescription drug classes, and a range of consumer populations. Just as importantly, future research must identify innovative ways to make it possible for nonprescription drug labels to serve as refutation texts. A nonprescription drug label fails to serve its intended purpose if it confirms consumers’ valid preconceptions about the drug but does little to refute their invalid preconceptions.

Footnotes

Declaration of conflicting interests

The author(s) declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical approval

The authors received formal approval from the University’s Institutional Review Board to conduct this study. All participants were treated in accordance with the ethical guidelines of the American Psychological Association.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.