Abstract

Objectives

To establish principles informing a new scoring system for the UK's Clinical Impact Awards and pilot a system based on those principles.

Design

A three-round online Delphi process was used to generate consensus from experts on principles a scoring system should follow. We conducted a shadow scoring exercise of 20 anonymised, historic applications using a new scoring system incorporating those principles.

Setting

Assessment of clinical excellence awards for senior doctors and dentists in England and Wales.

Participants

The Delphi panel comprised 45 members including clinical excellence award assessors and representatives of professional bodies. The shadow scoring exercise was completed by 24 current clinical excellence award assessors.

Main outcome measures

The Delphi panel rated the appropriateness of a series of items. In the shadow scoring exercise, a novel scoring system was used with each of five domains rated on a 0–10 scale.

Results

Consensus was achieved around principles that could underpin a future scoring system; in particular, a 0–10 scale with the lowest point on the scale reflecting someone operating below the expectations of their job plan was agreed as appropriate. The shadow scoring exercise showed similar levels of reliability between the novel scoring system and that used historically, but with potentially better distinguishing performance at higher levels of performance.

Conclusions

Clinical excellence awards represent substantial public spending and thus far the deployment of these funds has lacked a strong evidence base. We have developed a new scoring system in a robust manner which shows improvements over current arrangements.

Keywords

Introduction

A scheme to reward senior doctors who make an outstanding contribution to supporting the delivery of National Health Service goals in the UK has been in place since 1948. Iterations of the scheme led to the ‘Clinical Excellence’ award arrangements, in place between 2004 and 2022. The Advisory Committee on Clinical Excellence Awards of the UK government's Department of Health and Social Care advises health ministers on these awards. 1 The scheme is designed to reward senior doctors who deliver above the standards expected of a consultant, academic general practitioner, or dentist fulfilling the requirements of their post. Until 2022, around 300 new national awards were made by the Advisory Committee on Clinical Excellence Awards each year in England, at a recent annual cost of around £125 million ($154 million). 2 Four levels of national award existed: bronze, silver, gold and platinum; each level being associated with a financial reward ranging from between £36,192 ($44,537, bronze awards) to £77,320 ($95,253, platinum awards). Applicants for awards were assessed on evidence they provided regarding their contribution to delivering high-quality service, developing high-quality service, leading and managing high-quality service, research and innovation, and teaching and training. Evidence from applicants was independently scored by members of 15 regional subcommittees; approaches to scoring are informed by published research evidence. 3

Between March and June 2021, the Advisory Committee on Clinical Excellence Awards undertook a national consultation on the potential revision of the national awards scheme, with the implementation of proposals subsequently taking place in Spring 2022. A revision was intended to address concerns regarding equity of access to and opportunity in securing these awards. Changes introduced involve revision, rebranding and renaming of the scheme, a new focus on ‘clinical impact’, doubling the number of awards and an overall reduced value of individual awards. 4 The 2022 application round adopted the pre-existing four-point scoring scale (Box 1). However, a core part of a revised scheme is to have a scoring system that is robust, equitable, able to distinguish between levels of excellence and aligned with the new scheme's overall goals.

Overview of current application and assessment arrangements.

Sixteen regional subcommittees of the Advisory Committee on Clinical Excellence Awards in England and Wales, comprising professional, employer and lay members, assess applications for national-level awards.

Applications are assessed following the submission of evidence relating to five domains of performance:

Delivering a high-quality service Developing a high-quality service Leadership and managing a high-quality service Research and Innovation Teaching and training

Evidence is submitted by applicants, employers provide sign-off to show applications are supported, and citations and rankings may be provided by national nominating bodies for new applications. Previously employer rankings and citations, and citations from third parties, were included. The scoring and rankings by employers have been removed from 2021 but employers are still required to provide validation that the information presented by applicants is correct.

Each domain is independently scored by multiple trained assessors, using a four-point scale:

0 – does not meet contractual requirements or insufficient information produced to make a judgement

2 – meets contractual requirements

6 – over and above contractual requirements

10 – excellent

Here we present the findings of research designed to inform the development of a revised scoring system that complements our previously reported qualitative research. 5

Methods

We aimed to establish a set of principles that could be used to inform a new scoring system by employing an online Delphi process. 6 We used the resulting findings to develop a new scoring scheme, which we piloted in a shadow scoring exercise using anonymised applications from previous rounds of clinical excellence awards.

Delphi

For the purposes of this process, we utilise the definition of Fink et al. that an expert ‘… should qualify for selection because they are representative of their profession, have power to implement the findings, or because they are not likely to be challenged as experts in the field’. 7 We recruited 50 panellists from the existing Advisory Committee on Clinical Excellence Awards scoring committee members (all of whom were approached for an expression of interest by the Advisory Committee on Clinical Excellence Awards on our behalf), and representatives of national professional bodies (identified by us using contact details in the public domain). We targeted people from a range of ages, genders, and ethnicities and the Advisory Committee on Clinical Excellence Awards committee members makeup, including lay, professional and employer committee members and both chair and ordinary members.

In each round, panellists were presented with a number of items (Table 1) which they rated on a scale of appropriateness on a 9-point Likert scale (1=‘highly inappropriate’ to 9=‘highly appropriate’). Panellist's confidence in these ratings was solicited, but this was predominantly rated highly and so findings related to confidence are not presented. Panellists were invited to provide feedback on each item as well as (in Round One) to suggest alternative wording. Between rounds, panellists were provided with a summary of other panellists’ item ratings as well as a summary of comments, thus encouraging members to reflect on their judgements in subsequent rounds, shifting individual opinions into group consensus. Ratings and comments were made anonymously such that other panellists and researchers were unaware which panellists had contributed which ratings, thereby reducing the limitations imposed by group dynamics whereby a few dominate.8,9 After each round, items were rated as either appropriate (median rating of appropriateness 7 or higher), unsure, or not appropriate (3 or lower), and whether or not there was any disagreement using the RAND/University of California at Los Angeles appropriateness method. 10 Disagreement was based on the inter-percentile range (the difference between the 30th and 70th percentile appropriateness ratings), and the inter-percentile range adjusted for symmetry (see Appendix A). 10 We considered there to be evidence of disagreement when the inter-percentile range was more than the inter-percentile range adjusted for symmetry. A high-level summary of the material provided and of revisions made in each round is included in Appendix A with the full text of the online Delphi process available online. 11

Items rated in the online Delphi exercise along with the median ratings of appropriateness and whether there was disagreement among panellists in their rating.

Shadow scoring exercise

To support the shadow scoring exercise, we developed a portfolio of training cases based on actual historical applications. Cases included both those that historically proved to be successful or unsuccessful and both new and renewal applications at various levels of awards. Cases were anonymised by removing all names of individuals and employers. Where details of research outputs were included author lists were replaced with the number of authors and the position of the applicant within the author list and as a lead author (first, corresponding or last author). Historically, applicants have been able to include citation text provided by employers, individuals, recognised national nominating organisations and specialist societies. 12 Nominating bodies/specialist societies would also provide a ranking of the application relative to all supported applicants. Cases were based on 20 applications, where for 18 of which, two versions were created, one including all material, and a second with employer citation and ranking material removed (two applications did not contain citation material), that is, a total of 38 training cases. The historical scores given to each case, by each assessor, when the original application was made were made available to the research team.

Twenty-four experienced assessors of clinical excellence awards formed membership of a shadow scoring panel recruited for purposes of the research (recruited in the same way as Delphi panellists, including eight who were involved in both aspects of the study). Each member was sent 20 training cases and asked to score each of them using an online submission form representing a proposed novel scoring scheme developed following the Delphi exercise. Each of the 20 cases represented a different application, with each case being randomised to include a citation and ranking material or not on an assessor basis (i.e. some assessors saw an application with citations, while others saw it with citations removed and all assessors saw a mix of cases with and without citations). For the two cases where no citation material was available, all assessors were asked to score the same version. The order in which assessors were asked to score cases was randomised for each assessor to remove any order effects. We selected assessors to maximise diversity in terms of age, gender, ethnicity and committee role. In addition to scoring the applications, assessors provided feedback on the guidance, revised scoring and descriptors, using a structured set of questions. Further details of the scoring exercise are in Appendix B.

We employed multi-level regression models to examine the variance in scores given to applications that were either attributable to differences in the quality of the application itself, attributable to some assessors scoring systematically higher or lower on all applications, or unexplained/residual variance. We then considered only the variance attributable applications themselves and the residual variance and estimated what percentage of the sum of these two sources was attributable to the application. We did this separately for the scores from both this shadow scoring exercise and from original historic scores. The higher the percentage attributable to the application, the more reliable a scoring system is at distinguishing good and poor performance. Finally, we augmented models with an indicator of whether citation material was included or not, seeking to estimate the mean difference in scores reported when citation material and associated rankings were present. We provide further details of statistical analyses in Appendix C.

Oversight

We established a three-member advisory group for the project that met with members of the research team in August 2021 and February 2022. The research team also worked closely with the Advisory Committee on Clinical Excellence Awards (meeting monthly senior representatives of the Advisory Committee on Clinical Excellence Awards) to access data to inform the development of training cases and in the recruitment of some participants. We also worked with a six-member PPIE group, throughout the research. Members, three women and three men, were from varied employment and professional backgrounds and a mix of working and retirement age.

Results

Delphi

Forty-five (90%) of those invited participated in Round 1 of the process. Of those, 10 were women (22%). The average age of participants was 57.18 years (standard deviation = 6.74). Twenty-eight (62%) identified ‘Health service provider (e.g. Hospital, general practice)’ as their place of primary employment; seven (16%) selected University; four (9%) selected public sector or government body (e.g. Department of Health, National Health Service England Clinical Commissioning Group, etc.); and six (13%) selected ‘Other’. Thirty-five (78%) participants described themselves as White, with the remainder describing themselves as Asian. Thirty-six (80%) participants had experience of being an Advisory Committee on Clinical Excellence Awards committee member and 12 (27%) participants represented professional bodies or representative groups (with three panellists declaring both roles). Of these, 40 panellists completed Round 2, and 39 completed all three rounds.

Further details of the Delphi process are provided in Supplemental Appendix D. Median appropriateness ratings and disagreement status for the items rated in each round are shown in Table 1. Based on these findings, we established a set of principles on which a future scoring system may be based (Box 2). Based on these six principles, we developed a new scoring system and guidance for assessors which was employed in the shadow scoring exercise.

Six scoring principles emerging from the Delphi process.

(i) The definition of clinical excellence as laid out in the Advisory Committee on Clinical Excellence Awards Guidance for Assessors 2021 13 is appropriate, namely “Clinical excellence is about providing high-quality services to the patient. It is also about improving the clinical outcomes for as many patients as possible by using resources efficiently and making services more productive. Applicants need to show our assessors evidence of how they have made services more efficient and productive, and improved quality at the same time, as well as demonstrating their role as an enabler and leader of health provision, prevention and policy development and implementation.” Further specific detail in respect of this definition is provided in the relevant guidance material covering the five current domains of performance.

(ii) The definition of clinical excellence used in the assessment of applications for clinical excellence awards should be the same for all applicants, but the scoring of applications submitted by part-time workers should be amended to reflect the part-time nature of their role, with the financial reward made on a pro-rata basis.

(iii) For each domain, the description of scale points could be based on multiple aspects simultaneously and these aspects would include their performance relative to the expectations of their job description, the reach of an applicant's contribution to National Health Service care, the significance of an applicant's contribution to National Health Service care, and the impact of an applicant's contribution to National Health Service care. For example, ‘an applicant scoring at the highest point on the scale would be seen to be making an outstanding contribution, which is substantially exceeding the expectation of job description, highly impactful, highly significant, and of national or international reach.’

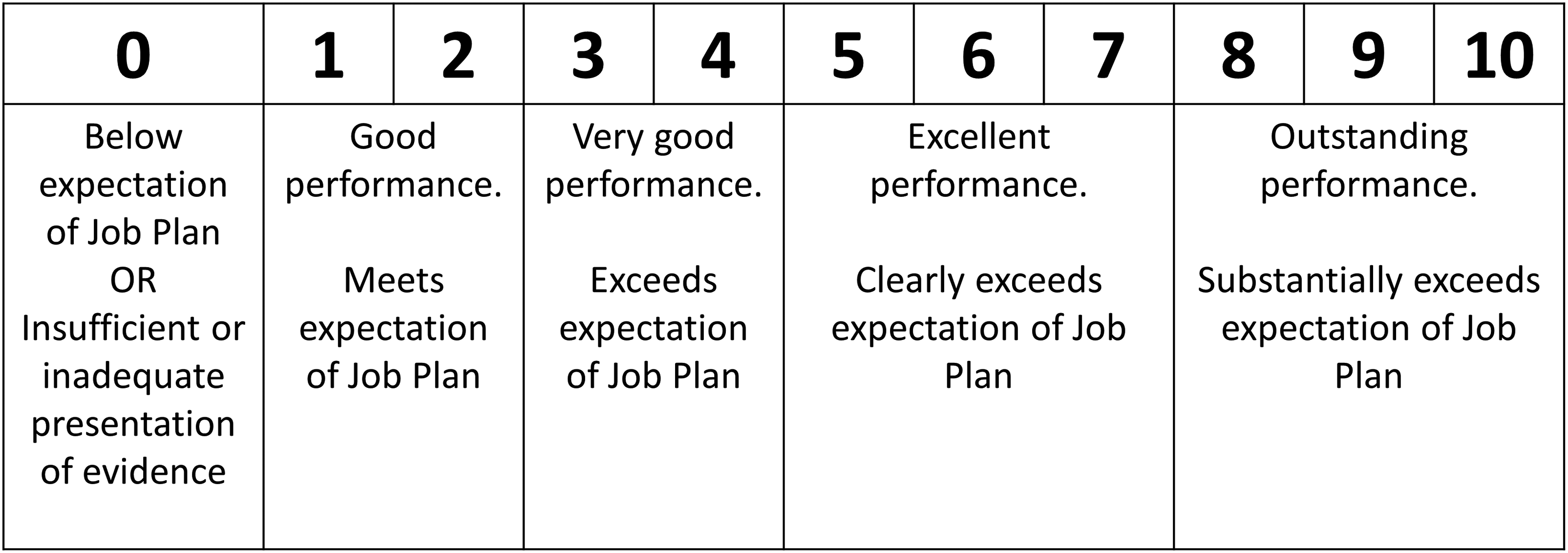

(iv) For each score given, an evenly spaced larger number of possible scores should be allowed to permit more granularity than exists in current scoring arrangements, for example, 0, 1, 2, 3, 4, 5, 6, 7, 8, 9 or 10 (compared with the four-point scale (0, 2, 6, and 10) in present arrangements). Clearly, defined scale descriptors covering a range of points should be provided, with the lowest point on the scale reflecting someone operating below the expectations of their Job Plan (see Figure 1 for example).

(v) Every applicant should provide evidence of excellence in all five domains, with scores for their four highest scoring domains contributing to their final score, but with the condition that the domains concerning service development and delivery were part of the scoring domains.

(vi) As part of the scoring process, applicants need to be benchmarked against their peers. However, there were three acceptable approaches to defining those peer groups: applicants could be benchmarked against all clinicians working in similar jobs/roles; all clinicians, eligible for an award, working in similar jobs/roles; all clinicians working in their speciality in similar jobs/roles.

Shadow scoring exercise

The shadow scoring exercise yielded a total of 472 scores given by 24 assessors for 20 cases (eight scores were unusable due to missing case identifiers; Figure 2 left panel). The distribution of historic summary scores using the original scoring system shows that many possible values are not used (Figure 2 right panel), a pattern not exhibited with the new scoring system where assessors have utilised the fuller range of scores available. There was less of a ‘ceiling effect’ with the new scoring system than is seen in the historic scores. The results of the mixed effects models are shown in Table 2. The total variance is larger for the new scoring system reflecting a larger range of scores used. However, 48% of the variance in individual scores was attributed to assessors when using the new scoring system suggesting that there may be more hawkish/dovish scoring than with the current scoring system, which can be seen in the distribution of scores for individual applications (Figure 3). When the variance attributable to assessors is ignored, a slightly smaller percentage of variance attributable to the application in the new scoring system (46%) than the current system (51%) is evident, but the confidence intervals on these estimates overlap (new scoring system 95% CI 39.3% to 53.4%, current scoring system 95% CI 40.5% to 62.0%) implying that there is no statistically significant difference in the reliability of the scales used in this way to distinguish between good and poor performance. With the new scoring system, we found that when applications scored higher there was less variability in scores between assessors (correlation coefficient between variance and mean = −0.72, p < 0.001). This implies that discrimination might be better for higher-scoring applications where there is more agreement between assessors on clinical excellence. No such correlation was found for the historic scores. Finally, we observed a high degree of correlation (correlation coefficient = 0.77, p < 0.001) between the old and new scoring system scores.

Example of application of scale descriptors for scale 0 to 10.

The distribution of scores assigned to the 20 applications by assessors in the shadow scoring exercise using the new scoring system (left, mean 30.6, range 1 to 50, interquartile range 24.5 to 38) and when originally considered for a clinical excellence award using the original scoring system (right, mean 33.9, range 14 to 50, interquartile range 26 to 42).

Box and Whisker plot showing the distribution of scores given to individual training cases by the 24 assessors.

Variance components for application scores when using the new and current scoring systems.

When considering the effect of citations and associated ranking material on scores, we found no evidence that scores were any different when citations were included (mean difference 0.65-point increase, 95% CI −0.42 to 1.73, p = 0.234) compared with applications without citation material. Furthermore, there was no evidence from a random slope model that the effect of citations varied by case.

Assessors were generally positive about the new scoring system and the guidance provided (further details in Supplemental Appendix E). Just over half of the time, citation material (when present) was considered to be useful. The most commonly reported time spent coming to an assessment of an application was 10–20 min (45% of assessments), with just 2% taking over an hour.

Discussion

Utilising a consensus-based approach to gain the views of experts, we identified six key principles which, we suggest, should be reflected in any revised approach to assessing and scoring applications for national clinical excellence awards. These comprise: (1) adopting the current definition of what constitutes clinical excellence as outlined in relevant documentation and guidance 13 ; (2) ensuring that scoring of applications takes account of less than full-time working arrangements and making awards available on a pro-rata basis; (3) providing clear scale descriptors to ensure applications are scored relative to the expectations of the applicants' job plan and reflect the reach, significance and impact of an individual's contribution; (4) adoption of an extended scale to facilitate a more granular approach to the scoring of applications; (5) provision by applicants of evidence across five domains, but with only their top four highest scoring domains contributing to their final score, and with evidence of clinical service contribution being mandatory; and (6) benchmarking of final scores against those of peers working in similar jobs/roles.

A shadow scoring exercise based on an assessment of experimental applications by a constituted panel of experienced assessors identified potential benefits of a proposed new 10-point scoring scale. Observed benefits (compared with existing scoring arrangements) included: the utilisation of a wider range of scores, less evidence of a ceiling effect in scoring and a similar reliability of scores overall, while potentially better differentiation at higher levels of performance, where needed most. However, given the large variation between assessor scores and the relatively low variability attributable to applications concerns remain over the number of assessors needed to reliably score applications. 3 The inclusion of citation material appeared to add little valuable information to inform scoring. Overall, assessors were positive in their views of the proposed new scoring arrangements.

Strengths and limitations

We report findings from the most rigorous research programme yet commissioned to inform the development of the national clinical excellence awards scheme in the UK. The scheme is unique in terms of scope and the extent of the publicly funded financial awards made under the scheme. A robust and reliable approach to the assessment of applications is warranted. We adopted a multi-method approach to the research, utilised experienced assessors to support the research, and reported additional findings in the Supplemental Material.

The research was commissioned and undertaken at pace and benefited from advice from senior staff who were part of the UK body overseeing the potential revision of the national clinical excellence awards scheme. While they did not influence the research agenda or reporting of findings, they had an early sight of emerging results offering potential for preliminary findings to inform a revision of the scheme to focus on national impact.

Alternative, but more costly, approaches might have involved double-blind scoring of actual (rather than experimental) applications within the ‘live’ application and assessment process, but this would present financial, organisational and scientific challenges, which were not surmountable within the timescale available. Reflecting this compromise, we note that historical scores were based on complete applications including information removed for anonymisation, which may account for some differences between the two scoring systems.

While it is possible that assessors may have been involved in scoring an included application when it was originally made, the number of applications made each year across 15 subcommittees suggests this would be rare. Furthermore, given that cases were derived from 2009–2019 applications, the impact of recall is likely minimal.

Policy implications

The UK National Clinical Excellence Award scheme is costly. It has been criticised as being elitist, and questions have been asked regarding the scheme's value for money.14,15 Specific concerns have been raised 16 (and acknowledged by the Advisory Committee on Clinical Excellence Awards 17 ) regarding the accessibility of the scheme to all potential beneficiaries, mostly notably to women, to those working less than full time, and to senior doctors from ethnic minority backgrounds. The focus of this research was to inform the development of new scoring arrangements that are robust and equitable.

To date, a revised scoring system incorporating all the principles derived through this work has not been implemented. The revised and rebranded scheme implemented in 2022 by the UK Department of Health and Social Care focuses on clinical ‘impact’ rather than on clinical ‘excellence’, and the impact was indeed one of the key domains our research identified as being of key concern to our research participants. These important scheme changes were informed, at least in part, by the findings of a national consultation exercise which ran in parallel to this research. 18 Other areas to consider as part of a revised scheme should include the reach and significance of an applicant's contribution – reflecting contributions beyond the immediate local geographical boundary of an individual's role, and the individual's contribution to influencing health and health care. Furthermore, a transition from the pre-existing, four-point, scoring scale to a more granular 0–10 scale has not yet been implemented. Given the similar levels of reliability found here between the two scoring systems that may be prudent. However, given that historic scores were provided by assessors who were well versed and trained in using that scoring system, and with some information having been removed for purposes of maintaining anonymity, there is potential for the reliability of scores using the proposed scoring system to be improved with training and use. A larger-scale prospective evaluation of the proposed scoring system, accompanied by full training in its use, is warranted.

Supplemental Material

sj-docx-1-shr-10.1177_20542704231217887 - Supplemental material for Informing the development of a scoring system for National Health Service Clinical Impact Awards; a Delphi process and simulated scoring exercise

Supplemental material, sj-docx-1-shr-10.1177_20542704231217887 for Informing the development of a scoring system for National Health Service Clinical Impact Awards; a Delphi process and simulated scoring exercise by Gary Abel, Rob Froud, Emma Pitchforth, Bethan Treadgold, Lucy Hocking, Jon Sussex, Marc Elliott and John Campbell in JRSM Open

Footnotes

Acknowledgements

We would like to thank the Advisory Committee on Clinical Excellence Awards, and in particular Professor Kevin Davies, for their ongoing support in this research and timely access to relevent data. We would also like to note Dr Stuart Dollow’s support for this work. We are grateful to our Advisory Group and Patient and Public Involvement and Engagement group for giving their time and expertise. Finally, we would like to thank all the study participants who contributed through their participation in the Delphi process and shadow scoring exercise. The project and team were supported throughout by Ellie Kingsland who provided administrative support and contributed to all reporting.

Competing interests

John Campbell holds a National Clinical Excellence Award. There are no other conflicts of interest to declare.

Funding

This research is funded by the National Institute for Health Research Policy Research Programme (project reference NIHR202992). The views expressed are those of the authors and not necessarily those of the National Institute for Health and Care Research or the Department of Health and Social Care.

Ethics approval

National Health Service ethical approval was deemed not to be required on the basis of screening using the National Health Service Health Research Authority assessment tool, and on advice from the Advisory Committee on Clinical Excellence Awards regarding the use of historical applications and anonymised historical data.

Guarantor

Gary Abel is the guarantor for this manuscript.

Contributorship

All authors were involved in the conception and design of the project. E Pitchforth and B Treadgold led on recruitment of panellists, G Abel, R Froud, E Pitchforth, B Treadgold and J Campbell conducted the Delphi study, G Abel, E Pitchforth, B Treadgold and J Campbell conducted the shadow scoring exercise. G Abel and R Froud conducted data analysis with input from M Elliott. G Abel drafted the manuscript and all authors made critical revisions.

Data sharing statement

Study data may be made available to appropriate individuals on a case-by-case basis following an application to the Chief Investigator.

Provenance

Not commissioned, peer reviewed by Julie Morris and Jacky Hayden.

Supplemental material

Supplemental material for this paper is available online.

Appendix A. Further details of the Delphi process

Appendix B. Further details of the shadow-scoring exercise

Appendix C. Assessors’ feedback on the use of the new scoring scheme and accompanying guidance

Table A1 shows the responses to the statements presented to the assessors when scoring applications (responses of not applicable have been excluded). In most occurrences, the assessors felt the scoring template and domain-specific guidance were easy to apply (83% and 84% agreed or strongly agreed, respectively). They also felt that most of the time (70% agreed or strongly agreed) the job plan provided a clear understanding of the applicant's roles. Similarly, most of the time it was felt that citations were useful (66% agreed or strongly agreed) with somewhat less agreeing that ranking of citation material was useful (52% agreed or strongly agreed). The most common time spent coming to an assessment of an application was 10–20 min (45% of assessments) with 11% taking less than 10 min, 13% taking 40–60 min and 2% taking over an hour.

A range of free text comments were submitted based on assessors’ experiences of using the revised scoring scheme. Looking across these for consistent or diverging views, three main points could be drawn:

Applicants vary in how detailed they write their job plans, assessors reflected that when written very well, the assessment process is smooth, whereas when they’re vague (e.g. not reporting how many supporting professional activity sessions are involved and remunerated, how much of the applicants’ time was attributed to direct clinical care, or simply listing all of their roles but not spelling out what the roles involve day-to-day), it is much more challenging. Clearer guidance and support for applicants on writing a detailed job plan/what to involve may help this. Views on the value of citations were mixed. Some are described as helpful, especially when the application isn’t written very well, as it provides more info/context, whereas others say they don't really take notice of them/they're always positive. Citations from reliable sources such as Chief Executive Officers, Colleges and funding bodies were described as most helpful by some assessors. Clear guidance for assessors on how much value to place on citations may be helpful, as well as instructions for applicants on what type of citations/from whom should be sought. Assessors reflected that using the new scoring template meant taking more time than usual because of the need to become familiar with the new scores and scale descriptors. Clear guidance and a recommendation to allow for more time when using a new scale may be helpful.

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.