Abstract

Objective

Time-lag from study completion to publication is a potential source of publication bias in randomised controlled trials. This study sought to update the evidence base by identifying the effect of the statistical significance of research findings on time to publication of trial results.

Design

Four general medical journals (BMJ, JAMA, the Lancet and the New England Journal of Medicine) were searched for randomised controlled trials published from June 2013 to June 2014 inclusive.

Setting

Methodological review of four general medical journals.

Participants

Original research articles presenting the primary analyses from phase 2, 3 and 4 parallel-group randomised controlled trials were included.

Main outcome measures

Time from trial completion to publication.

Results

The median time from trial completion to publication was 431 days (n = 208, interquartile range 278–618). A multivariable adjusted Cox model found no statistically significant difference in time to publication for trials reporting positive or negative results (hazard ratio: 0.86, 95% CI 0.64 to 1.16, p = 0.32).

Conclusion

In contrast to previous studies, this review did not demonstrate the presence of time-lag bias in time to publication. This may be because the articles were published in four high-impact general medical journals, which might be more inclined to publish rapidly, whatever the findings. Further research is needed to explore the presence of time-lag bias in lower quality studies and lower impact journals.

Introduction

Reporting bias (a widely researched issue concerning the selective reporting of studies dependent on the nature and significance of their results) has consequences for the accurate dissemination of research into practice. Publication bias is perhaps the most widely researched form of reporting bias and occurs when studies reporting ‘positive’ or statistically significant results are more likely to be published.1–3 Associated with this is time-lag bias, whereby the nature and direction of study results influence time to publication. Delayed publication or non-publication may result in ineffective or dangerous treatments being implemented, as the findings of positive studies dominate the evidence base and bias treatment decisions until some time has passed and the papers reporting negative or null findings appear.1,4 Moreover, Chalmers 5 suggests that failure to publish the findings of research constitutes scientific misconduct, since it wastes the resources of funding agencies and the time of individual participants. Such concerns have recently been raised following the delayed reporting of results from the large-scale De-worming and Enhanced Vitamin A trial. 6 Publication of the findings from this trial, in which one million children in India participated, was delayed by eight years because of the authors' concerns that the results did not support current evidence or policies on deworming practices. 7 In surgical trials, Chapman et al. 8 have shown that only 66% of completed trials were published, and those that were took a median of 4.9 years from study completion to publication.

In a review of two earlier studies exploring time-lag bias,9,10 an average two- to three-year delay in the publication of null or negative trial results was reported. 4 This is supported by Decullier et al., 11 who found a statistically significant difference in time to publication between positive and negative study results (5.2 years compared with 6.5 years, p < 0.001). Earlier studies are, however, limited by topic area and location: Ioannidis 10 explored only AIDS trials, while Stern and Simes 9 and Decullier et al. 11 concentrated on studies submitted to research ethics committees in Australia and France, respectively.

Various studies have surveyed researchers to explore reasons for non-publication or delayed publication, with findings suggesting that investigator-related reasons tend to be the source of poorer reporting of negative trial results, rather than rejection by journals.9,11–13 In survey studies exploring publication bias, the rejection of manuscripts has been cited as the reason for non-publication in only 5% of cases.11,13 Meanwhile, a recent review by van Lent et al. 14 supports the suggestion that time-lag bias may be associated with delayed submission to journals rather than delays associated with the review process. In their review, the direction of results had no effect on the acceptance of manuscripts for drug trials submitted to one general medical journal and seven specialty journals. 14

Our study updates and extends earlier research in this field by investigating the presence of time-lag bias in the period between trial end and publication, with no restrictions by topic area or trial location.

Methods

Search strategy and study selection

Four general medical journals (the BMJ, JAMA, the Lancet and the New England Journal of Medicine) were searched for randomised controlled trials published between June 2013 and June 2014. An initial database search of these journals yielded 685 potential articles reporting trial results (Figure 1), which were then screened for initial inclusion by one author. Original research articles presenting the primary analyses from phase 2, 3 and 4 parallel-group randomised controlled trials were included. Studies reporting longer term follow-up or sub-group analyses were excluded, as were cluster, factorial, crossover, non-inferiority and equivalence trials. Multiple co-authors checked the full texts of articles to ensure that all obvious exclusions had been removed. Disagreements were resolved through discussion; arbitration by a third reviewer was not necessary.

Figure 1. PRISMA flow diagram indicating the number of studies identified, included and excluded in the review, and the reasons for exclusion. In total, 208 studies were included in the quantitative analysis.

Data extraction

Data extraction of each article was undertaken by two independent authors using a standardised data extraction form. In order to calculate a time to publication, the date of publication (online and/or in print) was extracted. Where reported, the dates of completing recruitment, completing follow-up and a trial end date were extracted from the text. Additionally, a date of trial completion was sought from online registries (ISRCTN Registry, ClinicalTrials.gov, or similar) where possible.

In order to classify the trial findings as positive or negative, the results of the analysis of the primary efficacy endpoint from each paper were extracted. The following criteria were then applied: results were classified as positive if they reported a statistically significant result (p < 0.05, or a 95% confidence interval (CI) excluding 0 for a difference or excluding 1 for a ratio) and negative otherwise. If multiple p-values were reported for several specified co-primary outcomes and/or time points, the results were classified as positive if the majority of the p-values were significant, and negative if the majority were non-significant. If there was an equal number of significant and non-significant results, or a primary outcome was not specified, the p-value for the outcome used in the sample size calculation was used where possible.
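The classification rules above can be expressed as a small decision function. The sketch below is a hypothetical Python illustration, not the extraction form used in the review: the `PrimaryResult` structure and `classify_trial` name are assumptions, a reported p-value is assumed to take precedence over the confidence interval when both are available, and an interval whose bound falls exactly on the null value is treated as not excluding it.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

ALPHA = 0.05  # significance threshold stated in the classification criteria


@dataclass
class PrimaryResult:
    """One primary-endpoint result extracted from a paper (hypothetical structure)."""
    p_value: Optional[float] = None
    ci_low: Optional[float] = None
    ci_high: Optional[float] = None
    is_ratio: bool = False  # True: ratio measure (null value 1); False: difference (null value 0)

    def significant(self) -> bool:
        # Assumption: use the p-value when reported, otherwise check the 95% CI.
        if self.p_value is not None:
            return self.p_value < ALPHA
        if self.ci_low is None or self.ci_high is None:
            raise ValueError("result has neither a p-value nor a confidence interval")
        null_value = 1.0 if self.is_ratio else 0.0
        # Significant only if the interval excludes the null value.
        return not (self.ci_low <= null_value <= self.ci_high)


def classify_trial(primary: Sequence[PrimaryResult],
                   sample_size_outcome: Optional[PrimaryResult] = None) -> str:
    """Return 'positive' or 'negative' using the majority rule described in the text."""
    if primary:
        n_sig = sum(r.significant() for r in primary)
        n_nonsig = len(primary) - n_sig
        if n_sig > n_nonsig:
            return "positive"
        if n_nonsig > n_sig:
            return "negative"
    # Tie, or no primary outcome specified: use the sample-size outcome instead.
    if sample_size_outcome is None:
        raise ValueError("tie or no primary outcome, and no sample-size outcome available")
    return "positive" if sample_size_outcome.significant() else "negative"
```

With a single primary outcome the majority rule reduces to the simple significant/non-significant split, so one function covers both cases.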

Study characteristics including journal, sample size and trial location were also extracted from the texts.

Statistical analysis

A variable for time from trial completion to publication was derived. The hierarchical procedure for deriving a trial end date was as follows:

1. Explicit trial end date stated in the paper (e.g. ‘We did this international, randomised, double-blind, placebo-controlled, phase 3 trial between 6 October 2009 and 26 January 2012’). 15

2. Completion of primary data collection/follow-up (e.g. ‘The recruitment of participants started in December 2011, and the follow-up period ended in September 2012’). 16

3. Approximate date of completion of follow-up, calculated by adding the maximum length of follow-up to the recruitment end date.

4. Date of completion given in an online registry such as ISRCTN.

If a date was given as a month and year only, it was recorded as the 15th of that month for analysis purposes.
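A minimal Python sketch of the end-date hierarchy and the mid-month rule follows. The function and parameter names are hypothetical, and the maximum length of follow-up is assumed to have already been converted to days; the review does not describe how that conversion was made.

```python
from datetime import date, timedelta
from typing import Optional, Tuple


def month_year(year: int, month: int) -> date:
    """Dates reported as month and year only are set to the 15th of that month."""
    return date(year, month, 15)


def derive_end_date(explicit_end: Optional[date] = None,
                    follow_up_end: Optional[date] = None,
                    recruitment_end: Optional[date] = None,
                    max_follow_up_days: Optional[int] = None,
                    registry_completion: Optional[date] = None) -> Tuple[date, int]:
    """Return (trial end date, hierarchy level used), trying each level in order."""
    # Level 1: explicit trial end date stated in the paper.
    if explicit_end is not None:
        return explicit_end, 1
    # Level 2: completion of primary data collection/follow-up.
    if follow_up_end is not None:
        return follow_up_end, 2
    # Level 3: recruitment end date plus the maximum length of follow-up.
    if recruitment_end is not None and max_follow_up_days is not None:
        return recruitment_end + timedelta(days=max_follow_up_days), 3
    # Level 4: completion date recorded in an online registry.
    if registry_completion is not None:
        return registry_completion, 4
    raise ValueError("no trial end date could be derived")


# Level 2 example: follow-up 'ended in September 2012' becomes 15 September 2012.
end, level = derive_end_date(follow_up_end=month_year(2012, 9))
```

Returning the level alongside the date makes it easy to report how many trials relied on each rung of the hierarchy.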

Because of variation in the reporting of electronic publication dates across papers and journals, the print publication date was used throughout to calculate time from study completion to publication. Time-to-event analyses were performed. Time to publication was compared between positive and negative trials using a log-rank test, and Kaplan–Meier curves are presented. Since all trials included in this analysis were published, there was no censoring. Median time to publication is presented with its 95% CI. An adjusted analysis was conducted using a Cox proportional hazards model including actual sample size, journal and whether the trial was conducted in one or multiple countries. The proportional hazards assumption of the Cox model was checked using log–log plots and tests of the Schoenfeld residuals.
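Because every included trial was published, no observation is censored, which makes the log-rank test and the Kaplan–Meier estimate simple to compute by hand. The analysis itself was run in Stata; the Python sketch below only illustrates the calculation under that no-censoring assumption, and the function names are hypothetical.

```python
from math import erfc, sqrt
from typing import Sequence, Tuple


def logrank_uncensored(times_a: Sequence[float], times_b: Sequence[float]) -> Tuple[float, float]:
    """Two-group log-rank test when every subject has the event (no censoring).

    Returns (chi-squared statistic on 1 df, two-sided p-value).
    """
    event_times = sorted(set(times_a) | set(times_b))
    observed_a = expected_a = variance = 0.0
    for t in event_times:
        # Without censoring, a subject is still at risk at t if its event time is >= t.
        n_a = sum(1 for x in times_a if x >= t)
        n_b = sum(1 for x in times_b if x >= t)
        d_a = sum(1 for x in times_a if x == t)
        d_b = sum(1 for x in times_b if x == t)
        n, d = n_a + n_b, d_a + d_b
        observed_a += d_a
        expected_a += d * n_a / n
        if n > 1:
            # Hypergeometric variance of d_a given the margins at time t.
            variance += d * (n_a / n) * (n_b / n) * (n - d) / (n - 1)
    if variance == 0:
        raise ValueError("log-rank variance is zero; the test is undefined")
    chi2 = (observed_a - expected_a) ** 2 / variance
    # Upper tail of chi-squared with 1 df: P(Z^2 > x) = erfc(sqrt(x / 2)).
    return chi2, erfc(sqrt(chi2 / 2))


def proportion_published(times: Sequence[float], t: float) -> float:
    """Kaplan-Meier estimate of 1 - S(t); with no censoring, the share published by day t."""
    return sum(1 for x in times if x <= t) / len(times)
```

Without censoring, the Kaplan–Meier curve is simply one minus the empirical distribution of publication lags, which is why `proportion_published` needs no product-limit bookkeeping.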

Statistical analysis was undertaken in Stata v13. 17 All tests are two-sided at the 5% significance level.

Results

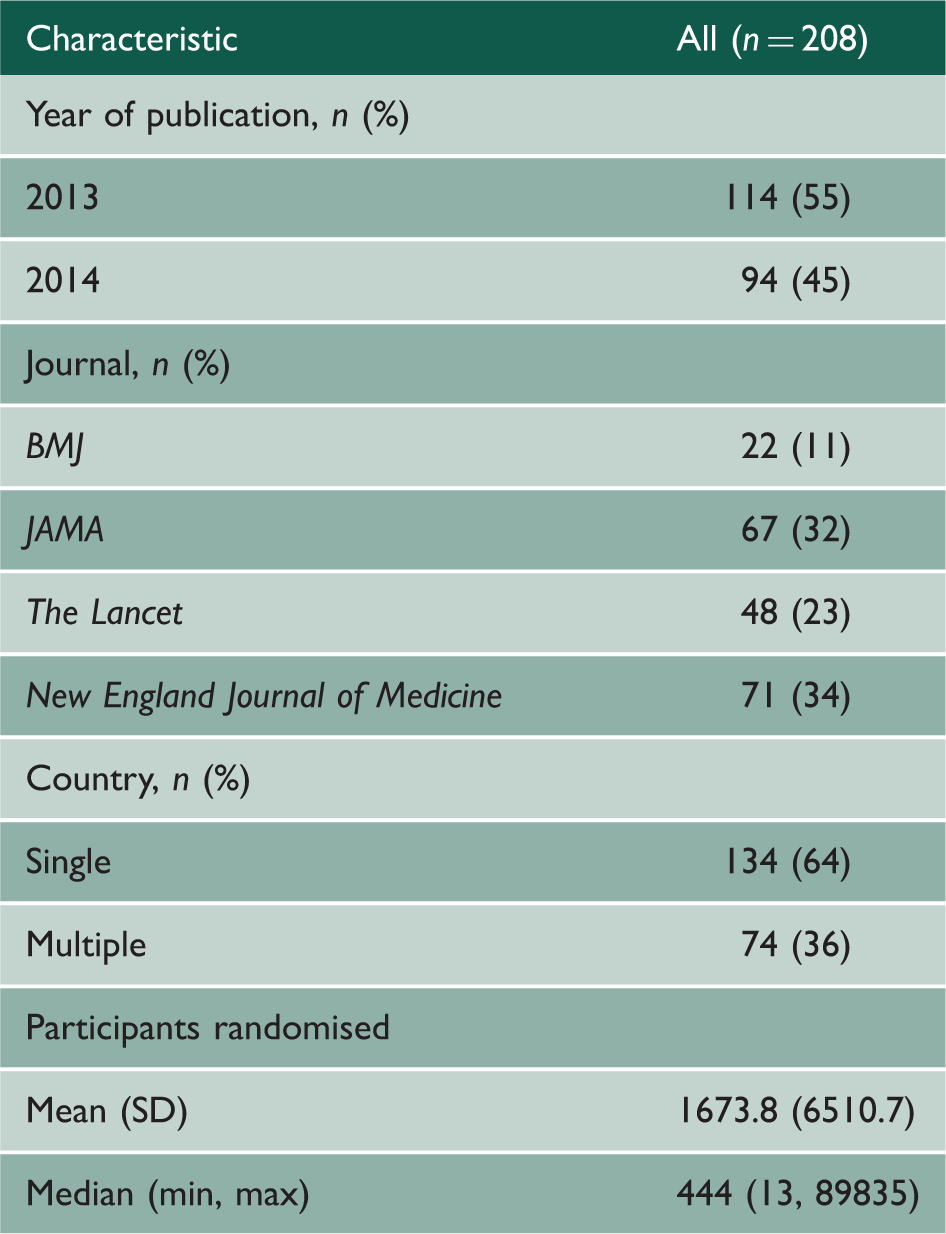

Characteristics of included studies.

Trial completion dates were recorded in online registries for 179 trials (86%). The differences between the trial end date derived from the paper and the date given in the registry ranged from −3197 to 1706 days (approximately −9 to 5 years). Negative differences indicate that the online registry completion date is after the end date derived from the paper, suggesting, for instance, that the trial finished or was stopped early, or that longer term follow-up is being conducted after the primary data collection. Positive differences indicate that the trial was completed later than originally planned. Only 100 (56%) trials finished within six months (before or after) of the completion date recorded in their trial registration entry.
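The sign convention for registry discrepancies can be made explicit with a short sketch. This is an illustration only: the function names are hypothetical, ‘within six months’ is assumed to mean within 183 days either side, and a year is taken as 365.25 days, since the text gives no exact day counts for either.

```python
from datetime import date


def registry_discrepancy(paper_end: date, registry_end: date) -> int:
    """Paper-derived end date minus registry completion date, in days.

    Negative: the registry date falls after the paper-derived end date.
    Positive: the registry date falls before it (trial completed later than planned).
    """
    return (paper_end - registry_end).days


def within_six_months(diff_days: int, window_days: int = 183) -> bool:
    # Assumption: six months approximated as 183 days before or after.
    return abs(diff_days) <= window_days


def to_years(days: int) -> float:
    # Assumption: 365.25 days per year.
    return days / 365.25
```

Applied to the reported range, `to_years(-3197)` and `to_years(1706)` round to −9 and 5 years, matching the approximation in the text.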

On average, trials took just over one year from completion to publication (median 431 days, interquartile range 278–618 days). Two trials had outlying times to publication of more than four years after their derived completion date: the first was a randomised controlled trial of the varicella vaccine, which took nearly five years to be published (29 June 2009 to 12 April 2014), 19 and the second was a breast cancer screening trial, which took over eight years to be published (31 December 2005 to 11 February 2014). 18 Of these two trials, one reported a non-significant primary result, and the findings of the other could not be classified because a comparison between the treatment groups for the primary endpoint was not reported.18,19
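Time to publication in days, and the four-year cut-off used to flag outliers, can be checked directly from the dates given above. The sketch assumes four years means 4 × 365.25 days. `summarise_lags` uses Python's `statistics.quantiles` with the inclusive method, which may not match the quartile definition used in Stata, so its output is illustrative only; the function names are hypothetical.

```python
from datetime import date
from statistics import median, quantiles
from typing import List, Tuple

# Assumption: "four years" is taken as 4 x 365.25 = 1461 days.
FOUR_YEARS_DAYS = 4 * 365.25


def lag_days(end: date, published: date) -> int:
    """Days from derived trial completion to publication."""
    return (published - end).days


def is_outlier(end: date, published: date) -> bool:
    """Flag trials published more than four years after completion."""
    return lag_days(end, published) > FOUR_YEARS_DAYS


def summarise_lags(lags: List[int]) -> Tuple[float, float, float]:
    """Median and lower/upper quartiles (inclusive method) of the publication lags."""
    q1, _, q3 = quantiles(lags, n=4, method="inclusive")
    return median(lags), q1, q3


# The two outlying trials described above.
varicella = lag_days(date(2009, 6, 29), date(2014, 4, 12))   # 1748 days
screening = lag_days(date(2005, 12, 31), date(2014, 2, 11))  # 2964 days
```

Python's `date` subtraction accounts for leap years, so 1748 days corresponds to about 4.8 years and 2964 days to about 8.1 years, consistent with "nearly five" and "over eight" years.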

Characteristics of analysed studies by classification as ‘positive’ or ‘negative’.

Association between time to publication and trial findings

Median time to publication was 412 days (approximately 13 months; 95% CI 328 to 459 days) among negative trials and 433 days (approximately 14 months; 95% CI 378 to 485 days) among positive trials. No statistically significant difference was observed with the log-rank test (p = 0.48). Kaplan–Meier curves of the proportion of positive and negative trials published over time are shown in Figure 2. In the Cox proportional hazards model, the hazard ratio was 0.86 (95% CI 0.64 to 1.16), which indicates that the rate of publishing following the end of a trial is greater among negative trials, although this result is not statistically significant (p = 0.32). We found no evidence that trials conducted in a single country were published more quickly than international trials (HR 0.98, 95% CI 0.71 to 1.37, p = 0.93), nor that sample size predicted time to publication (HR 1.00, 95% CI 0.99 to 1.00, p = 0.08). The median time to publication was shortest among trials published in the New England Journal of Medicine (345 days), followed by JAMA (421 days), the Lancet (498 days) and the BMJ (511 days). Overall, journal was a significant predictor of time to publication (chi-squared = 8.19, df = 3, p = 0.04). The main result was robust: Cox models including journal as a random (as opposed to fixed) effect, log-transforming the sample size covariate, or excluding the eight-year outlier produced hazard ratios between 0.83 and 0.90 (p-values between 0.11 and 0.49).
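The reported p-values can be cross-checked against the published estimates. The sketch below assumes the hazard ratio confidence interval is a Wald interval on the log scale, so that the standard error can be recovered from its width, and uses the closed-form upper tail of a chi-squared distribution with 3 degrees of freedom for the journal term. It is a consistency check under those assumptions, not a re-analysis, and the function names are hypothetical.

```python
from math import erfc, exp, log, pi, sqrt

Z_975 = 1.959964  # 97.5th percentile of the standard normal distribution


def p_from_ratio_ci(estimate: float, lower: float, upper: float) -> float:
    """Approximate two-sided p-value from a ratio and its 95% CI (Wald interval on the log scale)."""
    se = (log(upper) - log(lower)) / (2 * Z_975)
    z = log(estimate) / se
    return erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))


def chi2_sf_3df(x: float) -> float:
    """Upper-tail probability of a chi-squared variable with 3 degrees of freedom."""
    return erfc(sqrt(x / 2)) + sqrt(2 * x / pi) * exp(-x / 2)


print(round(p_from_ratio_ci(0.86, 0.64, 1.16), 2))  # prints 0.32 (positive vs negative trials)
print(round(chi2_sf_3df(8.19), 2))                   # prints 0.04 (global test for journal)
```

Both back-calculated values agree with the p-values reported above, which suggests the intervals and tests are internally consistent.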

Figure 2. Kaplan–Meier curves indicating time to publication for positive and negative trials. The rate of publishing following the end of a trial is greater among negative trials (HR 0.86), although this result is not statistically significant (p = 0.32).

In assessment of the validity of the proportional hazards assumption, although log–log plots for the qualitative covariates in the Cox model showed evidence of non-parallel lines, covariate-specific and global tests of the Schoenfeld residuals did not indicate that the assumption was violated.

Discussion

This paper provides an updated review of the effect of trial findings on time to publication in randomised controlled trials. Our findings show no evidence of time-lag bias: no statistically significant difference was found in the time from study completion to publication between trials reporting significant and non-significant primary results. The large scale and systematic nature of this review, with no restrictions by topic or location, extends the evidence base, which has previously focussed on time-lag bias in specific settings or disease areas.9–11 Our findings contrast with those of these earlier studies, which could indicate that initiatives such as the AllTrials campaign, started in January 2013 to advocate that all clinical trials be registered and their results reported, are achieving some success. 20 However, it is also possible that the selection of high impact factor journals for this review influenced the likelihood of detecting time-lag bias, as these journals publish higher quality studies that may be published more quickly, regardless of the results. Further research is needed to explore the presence of time-lag bias in a wider array of journals. The reasons for time-lag in lower impact journals may be more varied and could include rejections from other journals, lower quality submissions requiring more extensive revisions and less efficient peer-review processes (fewer staff or difficulty recruiting reviewers).

Although not shown to be related to the significance of trial findings, considerable delays were present in the reporting of trial results in the included studies. On average, trials took just over one year from completion to publication, and these data were positively skewed, with some trials taking several years to publish. For thoroughness, we contacted the authors of the two outliers to ascertain the reasons for these long delays (eight years, Miller et al. 18; five years, Prymula et al. 19). Prymula et al. cited a number of reasons for the delay, most notably an investigation into the Good Clinical Practice (GCP) issues in Poland outlined in the manuscript, while Miller et al. cited extensive delays in obtaining cancer registry data and an initial rejection of their research article as the cause of the publication delay (personal communication). Of course, a truer reflection of the intention to publish swiftly would involve investigating the time between trial completion and the date of initial submission, rather than publication. Previous research on the influence of the peer-review process on time to publication suggests that it can add to the delay if not completed in a timely fashion, 14 and high-impact journals are likely to request extensive revisions, which can be time-consuming to address. Unfortunately, the date of submission is not always readily accessible.

While the delays in publication found in this review are concerning, as they may hinder changes in clinical practice, these findings represent just a snapshot of the potential problem. Non-publication of trials is a separate and potentially more detrimental issue that could not be explored through the methods of this review; however, recent research suggests that in some fields of medicine up to 34% of completed trials remain unpublished. 8 Funding bodies have a vested interest in encouraging researchers to publish in order to see a maximum return on their investment. Many, if not all, funding bodies require researchers to consider and declare from an early stage how the findings from the research will be disseminated, and publication is strongly recommended. The National Institute for Health Research (NIHR) Health Technology Assessment (HTA) Programme, for instance, aims to publish a report, or monograph, for each project it commissions. Research teams are contractually obligated to write and submit this report upon completion of their study; therefore, the results from these projects are published irrespective of the findings. In addition, authors are encouraged to disseminate their findings through a publication in a peer-reviewed journal, and we suspect that, having written a lengthy and comprehensive report, authors would be more likely to submit a journal article, which is much shorter and could be compiled relatively easily with detail adapted from the monograph.

Our analysis of time to publication by journal suggests that journal impact factors may have influenced time to publication, with articles in higher impact journals tending to be published earlier. According to the Journal Citation Reports current at the time of writing, the New England Journal of Medicine has an impact factor of 54.4, the Lancet 39.2, JAMA 30.4 and the BMJ 16.4, an ordering that broadly resembles that of median time to publication (New England Journal of Medicine 345 days, JAMA 421 days, the Lancet 498 days and the BMJ 511 days); only JAMA and the Lancet swap places. This lag may arise if articles are rejected by higher impact journals and then require resubmission elsewhere; time-lag bias may therefore be more problematic in journals with a lower impact factor.
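As a purely descriptive illustration of how closely the two orderings agree, the Spearman rank correlation between impact factor and median time to publication can be computed from the figures above. With only four journals this carries no inferential weight, and the ranking direction (rank 1 = highest impact factor, rank 1 = shortest median time) is a choice made for this sketch.

```python
from typing import Dict

impact_factor = {"NEJM": 54.4, "Lancet": 39.2, "JAMA": 30.4, "BMJ": 16.4}
median_days = {"NEJM": 345, "JAMA": 421, "Lancet": 498, "BMJ": 511}


def ranks(values: Dict[str, float], reverse: bool = False) -> Dict[str, int]:
    """Rank keys by value (1 = smallest, or largest when reverse=True); assumes no ties."""
    order = sorted(values, key=values.get, reverse=reverse)
    return {key: i + 1 for i, key in enumerate(order)}


def spearman_no_ties(rank_x: Dict[str, int], rank_y: Dict[str, int]) -> float:
    """Spearman's rho via 1 - 6 * sum(d^2) / (n * (n^2 - 1)), valid when there are no ties."""
    n = len(rank_x)
    d2 = sum((rank_x[k] - rank_y[k]) ** 2 for k in rank_x)
    return 1 - 6 * d2 / (n * (n ** 2 - 1))


# Rank 1 = highest impact factor; rank 1 = shortest median time to publication.
rho = spearman_no_ties(ranks(impact_factor, reverse=True), ranks(median_days))
print(rho)  # prints 0.8
```

The single swap between JAMA and the Lancet gives a sum of squared rank differences of 2, hence rho = 0.8.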

We encountered difficulty in determining trial end dates for this study because of wide discrepancies between the end dates reported in online registries and those derived from publications. This finding was surprising, given that the Consolidated Standards of Reporting Trials (CONSORT) statement recommends defining the periods of recruitment and follow-up, and all four medical journals searched are CONSORT-endorsing journals that require completion of a CONSORT checklist at submission. In some instances, online registries did not report completion dates; in others, the dates reported had not been updated as the trial progressed and were often markedly inconsistent with those reported in the journal publications. This supports findings from an earlier paper by Zarin et al., 21 which reviewed the completeness of data entered into the ClinicalTrials.gov registry. To overcome this problem of poor reporting in online registries, we attempted to extract a trial completion date from trial publications, but again large inconsistencies in reporting made this difficult. Some texts clearly report the date of commencing recruitment, the date of completing recruitment and the date of final primary follow-up/data collection, whilst others give very little indication of such timings. Occasionally, texts report a study completion date or give a range of dates in which the study was conducted; however, it is often unclear what definition of completion the authors have used. Options may include the date of last data collection, the date the data were handed over for analysis (meaning all data had been collected and data queries resolved), or perhaps the date by which the trial funders required their report to be submitted. This study therefore highlights the importance of transparent reporting of trial start and end dates, and the need for trialists to update online registries throughout the trial to improve the accuracy of the information held. We used a standardised hierarchy to determine a trial end date in this study, which we recommend be adopted by researchers extracting this information from trial publications in the future.

Conclusions

In contrast to previous studies, we did not find a significant difference in publication lag time between ‘positive’ and ‘negative’ trials. While this may reflect improved research practices resulting from recent initiatives such as the AllTrials 20 campaign, it may be that these high impact journals publish only the highest quality studies, which are published relatively rapidly whatever their findings. Time-lag bias might be worse among studies published in journals with a lower impact factor. Further research could explore this through sensitivity analyses by study quality in meta-analyses.

Footnotes

Declarations

Acknowledgements

None

Provenance

Not commissioned; peer-reviewed by Charles Palenik.