Abstract

This study examines how data scientists, locally known as suanfa gongchengshi, employed by major Chinese infotech companies navigate their professional identities and aspirations amid the expansive process of datafication within and beyond the tech industry. Drawing on interviews with 23 data scientists from leading firms such as Tencent, Alibaba, and ByteDance, alongside a critical discourse analysis of dominant narratives surrounding the profession, we identify three key dynamics. First, data scientists cultivate a datafied professional habitus through continuous algorithmic experimentation, model optimization, and real-time feedback interpretation, resulting in an “alchemy-like” engagement with data. Second, they derive immediate professional and emotional rewards from high salaries, technical achievements, and adaptability, which sustain resilience and meaning in daily work. Third, their long-term aspirations for innovation and transformative roles are systematically constrained, as monopolized access to computational resources, research opportunities, and creative autonomy by tech giants reduces them to implementers of preexisting models. These findings highlight how data scientists both shape and are constrained by the data-driven infrastructures they sustain, revealing the complex interplay between professional achievement, structural limits, and the reproduction of technological hierarchies.

Introduction

Across the globe, the rapid development of large AI models and advanced algorithmic technologies is driving a profound process of datafication, in which social, economic, and cultural phenomena are transformed into quantifiable data that both shapes reality, changes organizational decision-making processes, and generates knowledge (Flensburg and Lomborg, 2023; Glaser et al., 2021; Van Dijck, 2014). Datafication is not a neutral act of measurement but a constructive mechanism through which aspects of the world become legible, operational, and actionable within complex sociotechnical systems (Halpern, 2015; Halpern et al., 2022). Through the accumulation, reuse, and extension of data, everyday behaviors, interactions, and patterns are transformed into datasets that govern technical processes and social relations, enabling large-scale monitoring, comparison, and intervention (Halpern, 2015; Halpern et al., 2022).

Alongside the process of datafication, dataism emerges as an ideology in which data and quantification are treated as inherently authoritative, positing that all phenomena—from human behavior to societal outcomes—can be understood, optimized, or predicted through numbers, often imbued with a quasi-religious sense of inevitability (Halpern et al., 2022; Lee and Björklund Larsen, 2019; Van Dijck, 2014). Van Dijck (2014: 198) summarizes this as: “the ideology of dataism shows characteristics of a widespread belief in the objective quantification and potential tracking of all kinds of human behavior and sociality through online media technologies.” Dataism also entails trust in the institutional agents who collect, interpret, and share (surplus) data derived from social media, internet platforms, and other communication technologies (Halpern et al., 2022; Van Dijck, 2014). Dataism can also be understood as a constellation of optimistic discourses around attention, power, and necessity that collectively shape and sustain public perceptions of Big Data, portraying it as a set of innovative technologies that leverage previously unused data for social and economic decision-making, and the rhetorical framing of these discourses helps explain Big Data's enduring popularity despite recurring controversies (Adamczyk, 2023).

Against this backdrop, this study examines how data scientists employed by major infotech companies navigate their professional identities and aspirations amid the rise of large AI models—an emergent profession that has only recently taken shape but is poised to exert profound and lasting impacts on the future, attracting growing educational and human resources (Dorschel, 2021). While data scientists play a central role in embedding AI and data-driven technologies into contemporary social life (Lee and Björklund Larsen, 2019), their own social positions and lived experiences, particularly the professional habitus, have received limited attention in existing research. Habitus, according to Bourdieu (1986, 1990), is an internalized system of dispositions through which individuals perceive, act, and respond to the social world, reflecting the interplay between structure and agency. Habitus embodies both personal and collective cultures acquired through socialization within specific fields, while retaining the capacity to adapt and transform in response to changing contexts (Bourdieu, 1986, 1990). Here, drawing on Bourdieu's insights, we understand the professional habitus of data scientists, within the context of pervasive datafication that saturates the field, as a datafied habitus—a set of dispositions and practices shaped by immersion in a data-rich professional environment, underpinned by an enduring and inescapable belief in dataism.

The Chinese infotech sector provides a fertile context for examining the professional habitus of data scientists for two main reasons. First, major tech firms such as Tencent, Alibaba, and ByteDance dominate vast digital ecosystems that embed AI deeply into everyday life, and their technological expansion has been closely integrated into state-led national digitalization programs (Zeng, 2020). In recent years, these firms have functioned as strategic partners in advancing AI to enhance China's global competitiveness and domestic digital governance capacities (Zeng, 2020), within an institutional environment marked by relatively weak data privacy protections and limited public resistance to technological intrusion (Lei, 2023; Zeng, 2020). Data scientists (suanfa gongchengshi) play a pivotal role within this landscape by operationalizing algorithms across sectors to enable such technological expansion (Zhang et al., 2020). The profession has rapidly expanded, with job postings doubling in 2024 and growing demand reflecting its strategic importance (National School of Development at Peking University and Zhilian Zhaopin, 2024). Second, and partly as a consequence of these dynamics, China's AI labor market has matured at extraordinary speed (Zhang, 2023), amplifying the opportunities and challenges of datafication in the lived experiences of technical professionals. Our empirical data draw on interviews with 23 data scientists and a critical discourse analysis (CDA) of narratives surrounding the role. Unlike general programmers, who typically enter the workforce after 2 years of technical training (Sun and Magasic, 2016), nearly 90% of Chinese data scientists hold a master's or doctoral degree, earning average starting salaries of around 30,000 RMB (∼4200 USD) and placing them in the upper-middle class (Maimai Talent Intelligence Center, 2022). Their strategic position within the expanding algorithmic infrastructure marks them as a unique and emerging profession within the ongoing global process of datafication.

This paper proceeds as follows. First, we introduce data scientists in the Chinese context, known as suanfa gongchengshi, examining their everyday work, career trajectories, and the development of this emerging profession. Second, to draw analytical strength, this study employs the framework of cruel optimism to examine data scientists’ paradoxical relationship to dataism and the broader datafied profession, highlighting how their attachment to data-driven professional aspirations is constrained by the very conditions that produce them (Berlant, 2011). Within the profession, the ideology of dataism is reinforced through interrelated discourses of attention, power, and need, which emphasize data's innovative potential while downplaying limitations (Adamczyk, 2023), portraying it as essential for solving complex problems, creating dependency on data-driven technologies, and consolidating dataism's influence on social and economic decision-making (Van Dijck, 2014). Recognizing that not all aspirational attachments to dataism are necessarily unattainable or illusory, we further adopt Norrito's (2024) framework of horizontal and vertical hopes to distinguish between different forms of aspirations in data science, allowing us to analytically separate realistic, achievable goals from those constrained by structural conditions. Then, after outlining our research design, we present our findings, highlighting three key dynamics: the cultivation of a datafied habitus through continuous algorithmic experimentation; the pursuit of attainable aspirations through salaries, technical pride, and adaptability in everyday work; and the structural foreclosure of long-term aspirations, which reveals how large AI models disempower data scientists, reducing them from innovators to implementers. The final section discusses the broader implications of these findings.

Contextualizing data scientists/suanfa gongchengshi

As datafication spreads across industries and everyday life and as dataism fosters the belief that quantified information can generate control, reveal truths, and direct progress, existing research on datafication generally falls into two interrelated strands (Flensburg and Lomborg, 2023). One strand focuses on software and engineering, examining technological infrastructures, software, and data-processing systems; and a second, social and user-centered strand, explores how individuals interpret, interact with, and are affected by datafication, often through qualitative methods capturing behaviors, attitudes, and socioemotional experiences (Flensburg and Lomborg, 2023). The latter approach typically focuses on end-users and seldom includes the professionals who design, develop, and optimize large-scale AI models and algorithms—the very actors whose practices, dispositions, and engagement with data form the primary focus of this study. Data scientists, or suanfa gongchengshi in the Chinese language, play a critical role in optimizing technological infrastructures and software, particularly data-processing and algorithmic systems, and thus represent the protagonists of the first, technically oriented strand of datafication research (Flensburg and Lomborg, 2023). As professionals directly implementing datafication processes across industries, they are not merely technical specialists but also key agents in reproducing the ideology of dataism.

For readers unfamiliar with the Chinese context, it is important to briefly introduce suanfa gongchengshi, commonly translated as “data scientist,” though its literal English equivalent is “algorithm engineer.” Both data scientists and suanfa gongchengshi are engaged in leveraging modern machine learning techniques to extract insights from data (Zhang et al., 2020: 1), highlighting their dual role in driving technological innovation and actively shaping how data are interpreted, understood, and applied across organizational and societal contexts.

The role of the data scientist in the United States was formally introduced by LinkedIn and Facebook executives in 2008 (Patil, 2011). Interestingly, almost around the same time—in 2007—the founder of Douban, one of China's earliest social networking platforms, coined the term suanfa gongchengshi during recruitment efforts aimed at developing an algorithmic recommendation system (Leiphone, 2021). Over the following decade, the primary responsibilities of suanfa gongchengshi have included developing and optimizing machine learning and statistical models, analyzing large-scale datasets, and solving complex business problems. In major Chinese internet companies, suanfa gongchengshi independently manage the entire analytical workflow—from data acquisition and preprocessing to modeling and delivering actionable insights—using tools such as Python, R, Scala, and SQL, as well as frameworks such as TensorFlow, PyTorch, Spark, and Flink, to optimize systems, detect anomalies, support data-driven decision-making, and communicate findings effectively to stakeholders with varying technical expertise. These responsibilities closely mirror those of data scientists in the United States and more broadly speaking, the West; for instance, Dorschel (2021: 7) cites a 2019 DoorDash job posting outlining duties such as “improve our search, recommendation, personalization and ranking algorithms,” which closely aligns with the work performed by suanfa gongchengshi in China.

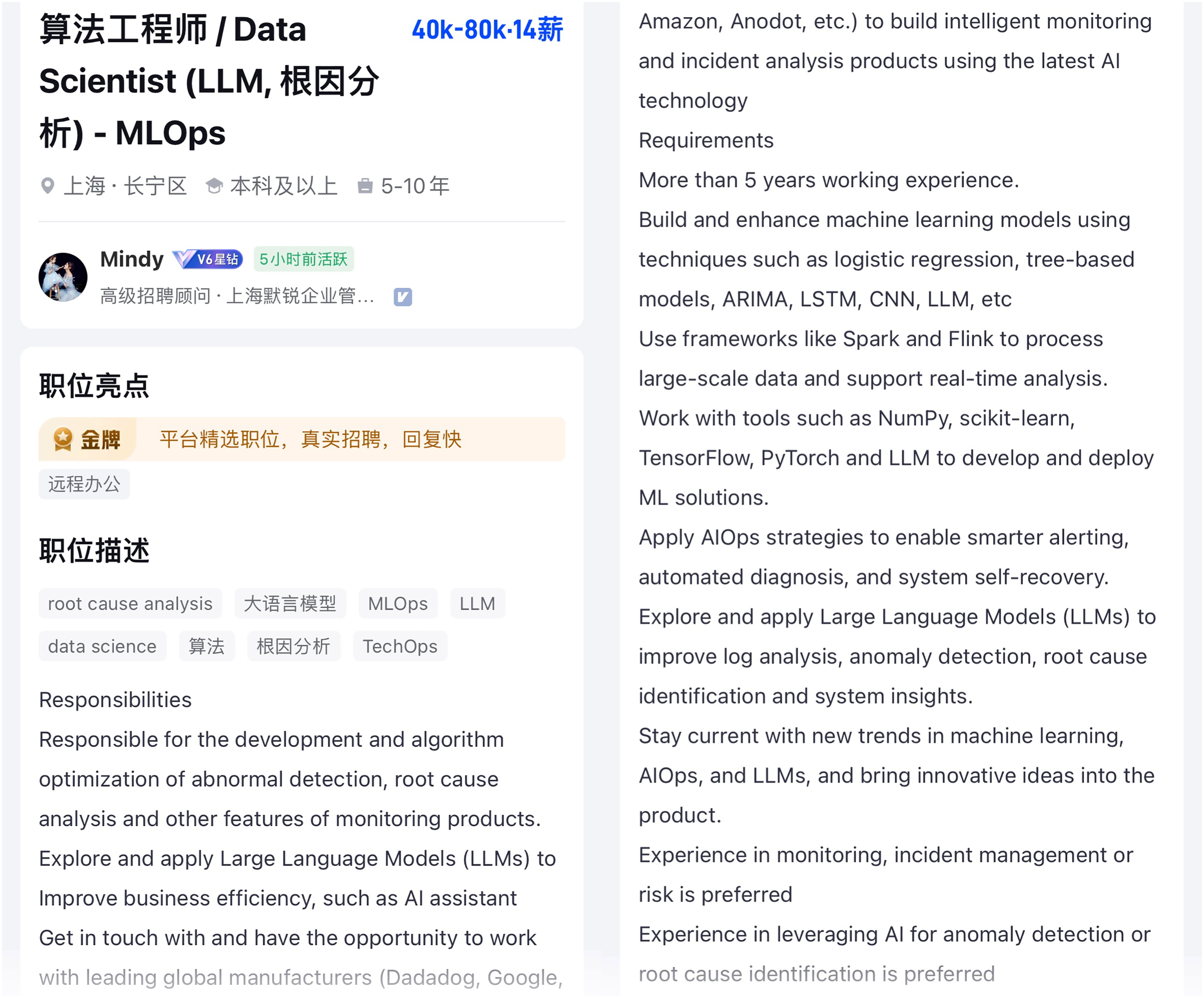

It is also important to note that this terminological mismatch is not merely a matter of translation. More fundamentally, the emergence of this professional role occurred in both the United States and China around the same period, and its rapid development left little opportunity for alignment or clarification regarding terminology. Interestingly, as the term “data scientist” became established in Western discourse, many Chinese recruitment postings began to present suanfa gongchengshi alongside the English label “data scientist.” This trend is clearly illustrated by job postings on MaiMai (see Figures 1–3). Furthermore, a number of Chinese suanfa gongchengshi professionals self-identify as data scientists on their Maimai profiles (see Figure 4), reflecting a convergence between the Chinese and Western conceptualizations of the role. This is why, in this article intended for an English-speaking audience, we adopt the term “data scientist” to refer to the subjects of our study.

Job posting on MaiMai that list the Chinese title suanfa gongchengshi (算法工程师) alongside the English label “data scientist.”

A recruitment posting on MaiMai in which the job title explicitly combines the Chinese term suanfa gongchengshi with its English counterpart “Data Scientist.”

A recruitment posting on MaiMai in which the job title explicitly combines the Chinese term suanfa gongchengshi with its English counterpart “Data Scientist.”

A professional profile on MaiMai in which the user lists their occupation as suanfa gongchengshi while simultaneously presenting themselves under the English title “Data Scientist.”

With the rapid development of large AI models, the title, scope, and connotations of “data scientist” continue to evolve; nevertheless, these professionals have generally assumed a crucial role in extracting, analyzing, and generating value from disorganized data, a form of expertise increasingly sought after by both governments and corporations (Gehl, 2015). Their responsibilities now extend beyond algorithm development to the integration and deployment of large AI models across digital platforms, influencing how AI technologies interact with and transform various societal domains (Burkhardt and Rieder, 2024). In popular discourse, they are often portrayed as “boundary crossers” and “world improvers” (Dorschel and Brandt, 2021: 193), as their work reaches beyond technical implementation to actively shape key functions of digital platforms, including visibility, censorship, content curation, and commodification (Dorschel, 2021). Consequently, data scientists have become among the most sought-after professionals globally, a trend that is equally evident within China's highly competitive AI-related industry (Liepin Big Data Research Institute, 2023). Despite their central role in contemporary technological infrastructures, the lived experiences of data scientists have received comparatively little attention in studies of datafication, and we aim to fill this gap by examining how they both contribute to and are shaped by the evolving landscape of AI and data-driven technologies.

This study seeks to contribute to and complicate existing research on digital labor by more precisely situating data scientists within, and against, established strands of scholarship (Dorschel, 2022b). Sociological research on digital labor has generally developed along two trajectories. The first focuses on occupations that utilize digital infrastructures, including gig workers (Lata et al., 2023), creative industry professionals (Alacovska et al., 2024), and specialized technology workers (Dorschel, 2022b), often critiquing capital accumulation and novel forms of labor exploitation enabled by digital technologies. The second trajectory, drawing on audience commodity theory (Smythe, 1977), focuses on productive consumers—such as fans—whose participation and affective engagement generate economic value for digital platforms (Fan et al., 2025; Sugihartati, 2020). This study situates data scientists within the first trajectory, conceptualizing them as technical professionals responsible for building, maintaining, and advancing the datafied systems embedded in contemporary social life.

Within the first trajectory outlined above, the expansion of algorithms and AI has prompted a growing body of scholarship to attend to the labor that underpins datafied systems, particularly crowd work, microwork, and so-called “ghost work,” with a strong focus on data annotation and platform content moderation (Fu et al., 2025; Le Ludec et al., 2023; Shestakofsky, 2024). These studies powerfully show how outsourced, underpaid, and often invisible workers are indispensable to data-driven systems, reflecting digital labor scholarship's long-standing concern with forms of work rendered marginalized and precarious by platform capitalism. By contrast, data scientists represent a different but equally critical category of technical labor: a relatively new occupation that has emerged alongside algorithmic and AI technologies and that appears, on the surface, far less precarious in terms of income and class position. This distinguishes them not only from crowd workers and gig laborers but also from earlier generations of Chinese programmers, whose professional identities were more fragile and less institutionally secure (Sun and Magasic, 2016). However, this relative material security does not place data scientists outside the dynamics of digital labor precarity. Their vulnerability stems not only from the high-pressure Chinese corporate environments (Li, 2023b) but also from their structurally ambivalent position at the forefront of datafication itself. Their labor directly sustains the infrastructures of algorithmic capitalism and enables the algorithmic management—and at times exploitation—of other digital laborers, including moderators, annotators, and gig workers. At the same time, their own professional futures are rendered increasingly uncertain as the AI systems they help to build threaten to displace or downgrade their own creative, decision-making, and research-oriented roles. As such, attending to their experiences allows us to bridge research on precarious platform labor and research on elite technical workers, revealing how data scientists occupy a structurally contradictory position within contemporary digital capitalism.

Theoretical framework: cruel optimism

Berlant (2011) conceptualizes cruel optimism as a condition in which the very goals or aspirations that individuals strive to achieve—such as career success, meaningful relationships, or personal growth—paradoxically impede their overall well-being. Optimism, while not inherently harmful, turns cruel when individuals’ attachment to it actively undermines the very goals that initially made them desirable. Empirically, cruel optimism captures the persistence of work norms (Harvey, 2005), wherein optimism itself can become a source of harm. On one hand, the pursuit of an ideal life fosters resilience and courage; on the other, it can desensitize individuals or obscure the structural inequalities that perpetuate precarity (Orgad, 2017). This persistent optimism functions as a contemporary iteration of Foucault's (1984, 2003) “technology of the self,” enabling individuals to construct meaning in their lives through attachments to ideals such as “professionalism” (Moore and Clarke, 2016) or practices like “self-improvement” (Hizi, 2019).

Cruel optimism has been widely used to critique the normalization of precarity in the digital economy (Berlant, 2011: 260), with scholarship examining both its discursive manifestations and lived experiences in digital labor. Recent studies highlight, for example, the cruel optimism embedded in China's online “play companion” industry, where seemingly effortless earnings ultimately prove unsustainable (Li, 2023a); the distinction between “good” and “bad” crunch in the gaming industry as a mechanism perpetuating unsustainable labor practices (Cote and Harris, 2023); and the reinforcement of offline hegemonic and heteronormative rural social structures in the seemingly optimistic space of live-streaming (Whyke et al., 2023). More recently, cruel optimism has been applied to algorithmic systems, such as Chinese platforms Douyin and Zhihu, which algorithmically promote gay visibility, fostering the fantasy of acceptance and happiness while masking enduring inequalities (Wang and Zhou, 2024). On Douyin, videos of romantic, able-bodied, middle-class gay couples are algorithmically amplified for commercial appeal, while on Zhihu, uplifting narratives by HIV-positive users are privileged over accounts of discrimination and systemic struggle; in both cases, algorithms curate visibility to promote commercially desirable emotions—hope, positivity, and love—sustaining attachments that ultimately hinder genuine flourishing, demonstrating that algorithms are neither neutral nor objective but actively shape affective environments to serve commercial interests.

This study seeks to extend this line of inquiry by building on Norrito's (2024) development of Berlant's framework, which distinguishes between vertical and horizontal hope, to understand the complex work experiences of data scientists within the infotech industry. Horizontal hope encompasses everyday forms of resilience and fulfillment, such as deriving satisfaction from stable employment, technical achievements, and community belonging, while vertical hope refers to aspirational desires for transformative, long-term success, such as becoming an innovator or leader within the AI industry (Norrito, 2024). Norrito's (2024) distinction between horizontal and vertical hope offers not merely a binary but a dynamic framework that illuminates how, for data scientists, the immediate satisfactions of salary, technical accomplishment, and professional pride are inextricably entangled with deferred aspirations for innovation and recognition. Horizontal hope highlights the immediate benefits—stable salaries, moments of technical accomplishment, and professional pride—that generate resilience and sustain commitment in the present. Yet these horizontal gains are inseparable from vertical hopes for innovation and recognition, which remain structurally foreclosed; it is precisely the tension between everyday rewards and unattainable futures that explains why these data scientists remain deeply attached to their work, even as their long-term aspirations are persistently deferred. As such, Norrito's (2024) framework captures both the temporal orientation (future vs. present) and the affective register (ambition vs. resilience) of data scientists’ experiences. This conceptual lens allows us to show how moments of professional pride, salary security, and technical micro-successes coexist with/and are undermined by, deferred aspirations for innovation and transformative agency.

Method

Research method and data

This study draws on a two-part qualitative dataset collected during the first author's master's candidature, for which ethical clearance was obtained from the then host university. The first component is based on in-depth interviews conducted between August 2023 and December 2024 with data scientists. We conducted interviews with 23 data scientists working in Shenzhen, Guangzhou, and Beijing (see Table 1), cities recognized as China's principal hubs for the internet industry. Participants were recruited by inviting acquaintances working as data scientists from a top-ranked computer science university in Guangzhou, and the sample was expanded through snowball sampling. Most of the participants are currently employed by major Chinese Big Tech companies, including Alibaba, ByteDance, Tencent, and Baidu. To protect participant privacy, all names have been anonymized.

Information of interviewees.

The interviews focused on three key areas. First, we examined participants’ current roles and job responsibilities, with attention to the daily work practices of suanfa gongchengshi (data scientists). Participants’ responsibilities spanned multiple domains, including recommendation systems, search algorithms, natural language processing, computer vision (CV), financial risk control, and advertising algorithms. Four participants who had shifted roles due to the introduction of large AI models were reinterviewed, and later interviews included individuals who had transitioned from traditional algorithmic roles to positions involving large AI models. Second, we investigated work experiences, including workload intensity, concerns about automation, and the personal and professional challenges associated with rapidly evolving technological environments. Third, we explored professional identity and engagement with the broader tech ecosystem, examining participants’ values, goals, and aspirations, how they describe their work to nonspecialists, their participation in technical communities and cultural practices surrounding technology, and their perspectives on the development of the Chinese infotech industry.

To address the limitations of convenience sampling in our interviews, we supplemented our data with qualitative observations from two major online platforms frequently used by data scientists: Zhihu and Maimai. Zhihu is a Chinese question-and-answer platform similar to Quora, where users participate in discussions on various professional topics. Maimai is a Chinese professional social networking site, similar to LinkedIn, with a stronger focus on anonymous or semianonymous discussions about workplace conditions, career advice, and industry trends. Data scientists on Maimai tend to form professional communities centered on career-related issues, including work transformations, challenges, and promotion opportunities. In contrast, Zhihu hosts a vibrant “geek culture” community—a subculture that values deep technical knowledge, problem-solving, and enthusiasm for technology. On both sites, data scientists are actively engaging in discussions. Guided by recurring expressions and themes that emerged from our interviews, we conducted systematic keyword searches on both platforms using terms “running experiments” (跑实验), “alchemy” (炼丹), and “prompt engineer” (Prompt工程师). This process yielded 122 relevant posts published between August 2023 and May 2025. The overlap between the vocabulary used by our interview participants and that found in these online discussions allowed us to triangulate individual narratives with broader community-level discourses.

We then conducted a CDA of these 122 posts following Fairclough's (1995) framework, which conceptualizes discourse as a form of social practice through which identities, power relations, and ideological positions are produced and reproduced. Rather than treating these posts as isolated opinions, CDA enabled us to examine how data scientists’ linguistic styles, metaphors, naming conventions, and narrative tropes reflect their professional identities, technological imaginaries, and positions within broader structures of platform capitalism and AI development. For ethical reasons, and because both platforms operate under semi-real-name registration systems, we omit the URLs of cited posts to prevent the possibility of reverse identification. Through this iterative analytic process, our CDA gradually revealed a structured distinction between two dominant orientations in the discussions. The first set of discussions focuses on present-oriented work conditions, including how to improve algorithmic performance, solve technical problems, and manage everyday tasks. Much of this conversation revolves around “running data” (跑数据) itself, with participants detailing how to clean, organize, and process datasets. These discussions foreground the intensely data-driven nature of data scientists’ everyday labor. The second set of discussions is future-oriented and centers on long-term career prospects and technological change. These posts reflect collective anxieties about the impact of large AI models on professional survival, age-related job insecurity, and prospects for career transitions. Two dominant affective registers emerge here. One is a pessimistic and self-deprecating tone, exemplified by jokes about becoming ride-hailing drivers after being laid off. The other reflects a neoliberal logic of continuous self-improvement, emphasizing lifelong learning and adaptability as moral and practical imperatives for avoiding obsolescence. Together, these two discursive orientations—one anchored in present labor practices and the other oriented toward uncertain futures—formed the basis for our subsequent analytical distinction between horizontal and vertical concerns.

Research findings

Cultivating a datafied habitus through algorithmic experimentation

Habitus can be understood as a system of durable dispositions shaped through prolonged socialization within a particular field, which guides perception, thought, and practice (Bourdieu, 1986, 1990). In the case of data scientists, we found that the daily work routines of running algorithmic experiments, optimizing models, and engaging intensively with vast volumes of data—produce a datafied habitus that positions data as the primary lens through which they interpret both professional tasks and, increasingly, their personal lives. Because algorithmic processes operate continuously without the need for rest, data scientists responsible for maintaining algorithmic systems remain in a heightened state of alertness even outside formal work hours.

Understanding the contemporary datafied habitus of data scientists requires situating their work within a historical trajectory that distinguishes them from earlier programmers and software/web developers, as well as from earlier iterations of the data scientists. In the early era of portal websites and search engines, roughly the late 1990s to the mid-2000s, programmers and software/web developers primarily focused on building and maintaining functional websites and applications, managing server-side logic, and implementing rule-based systems to process and present information. By the late 2000s, data scientist roles emerged, beginning to engage with tasks such as web crawling, information retrieval, and click-through rate prediction, which complemented rule-based designs but were not yet fully data-driven. With the rise of mobile Internet and the platformization of major Internet companies in the 2010s, the explosive growth of user behavior data on social media, e-commerce, and short-video platforms enabled platforms not only to guide user engagement through recommendation algorithms but also to continuously optimize these algorithms using the resulting data, thereby consolidating control over traffic and markets (Couldry and Mejias, 2019). Concurrently, algorithmic optimization responded to the Chinese government's increasing demands for governance of public opinion (de Kloet et al., 2019). This shift transformed the role of the data scientist toward modeling algorithms, conducting experiments, and optimizing data-driven systems (Mackenzie, 2019), embedding them deeply in the platforms’ growth logic and big data governance mechanisms (Plantin et al., 2018). As the volume and complexity of data have expanded exponentially, iterative testing has become essential to optimize models and sustain platform competitiveness. Today, data scientists generate value primarily through machine learning and deep learning, reflecting a structural shift in which data volumes exceed human cognitive capacities and algorithmic processing is required.

The black-box nature of deep learning further compels data scientists to engage in continuous experimentation, or “running experiments,” in which hypotheses aimed at advancing corporate interests are tested using deep learning models—computer files trained on datasets such as images, text, or audio to perform specific tasks through predefined algorithms. Platforms like Zhihu host numerous posts detailing how to manage these experiments effectively, illustrating how a datafied habitus is cultivated and normalized. For example, a widely circulated post by a CV data scientist, titled “A CV Data Scientist's Reflection after One Year,” emphasizes that success relies less on academic innovation and more on meticulous workflow management, experimental reproducibility, and solving business-driven problems. 1 The post details a culture of extreme technical precision and procedural discipline: meticulously labeling datasets, systematically organizing image and video files, carefully versioning annotation files, scripting preprocessing pipelines, and maintaining rigorous experiment logs. Mistakes in any of these stages are viewed as costly setbacks requiring significant time to correct. The author's advice to “write logs carefully so you can locate any experiment at a glance” and to set “read-only permissions” on critical datasets to prevent accidental deletion reflects a broader ethos of accountability, traceability, and control over data.

The immediacy of algorithmic feedback reinforces this datafied habitus, as data scientists receive real-time alerts quantifying the commercial impact of their experiments rather than waiting for outcomes to unfold over extended periods. Each algorithmic service is assigned a designated data-scientist owner, and when an experiment triggers an unexpected anomaly, that individual receives an automated alert. If no response is logged, the alert escalates to the next managerial level, and then to higher executives, creating a pyramid of accountability and pressure. As Wang Jun, recounted, an experiment he conducted triggered an alert predicting a potential annual revenue loss of over one hundred million yuan, compelling him to respond immediately—even while socializing outside work hours. “I freaked out,” he recalled. “I completely lost the mood to hang out and ended up spending the whole night fixing it.” Such escalation protocols keep data scientists in a state of continuous vigilance, disciplining them through the very algorithmic systems they maintain.

Paradoxically, moments of success in data experimentation generate exhilaration that sustains their attachment to data as a source of discovery, achievement, and professional recognition. As Xu Zhi explained, “Sometimes the model just suddenly works out of nowhere, way better than I ever expected. But most of the time, like ninety-nine percent of experiments, they’re just disappointing. But right when you’re about to give up, a good result pops up out of nowhere!” Together, these practices illustrate how daily routines, technical precision, and real-time engagement cultivate a professional habitus in which data become the dominant lens through which data scientists interpret their work.

In grappling with the opaque, black-box nature of deep learning models, data scientists often invoke the metaphor of alchemy—drawing on traditions from ancient Chinese mystical culture—to describe their practices. As Chen Hao explained: “Why call it ‘alchemy’? Like alchemists turning base metals into gold, we throw data into models, tweak a few parameters, and hope the results improve—what happens inside the ‘furnace’ doesn’t matter, only the input and output.”

Comparing artificial intelligence, particularly deep learning, to alchemy is not new (Campolo and Crawford, 2020), yet our participants invoke this metaphor to capture the constraints of an experimental process whose outcomes cannot be fully predicted and whose success must produce measurable, positive results. Their references to alchemy emphasize the opacity of deep learning and the central role of data, positioning themselves as alchemists whose raw material is data, even though their control over it is limited. The “success” of this alchemy is exhilarating because it reflects not only the payoff of tedious, repeated experimentation but also a key metric of professional performance, standing in stark contrast to the idealized notion of individual creativity often celebrated in the tech industry (Banks, 2007). In practice, it is self-discipline and systematic effort, rather than autonomy or creativity, that drive algorithmic and AI innovation. Interviewees’ everyday work goals were tightly aligned with quantifiable commercial imperatives, enforced through layered hierarchies and real-time monitoring. Each algorithmic service is assigned a responsible data scientist who must register and track key performance indicators. Weekly meetings with direct supervisors require detailed progress reports toward quarterly targets—for example, raising model accuracy to a specified benchmark—and explanations for any unexpected fluctuations must be justified with data. Visual dashboards make weekly changes in accuracy immediately visible, and the principle is simple: meeting the target yields good performance reviews and bonuses; repeated underperformance risks stalled promotion or eventual dismissal. As Liu Hui explained, they were expected to “show progress to present during weekly meetings,” with explanations for unexpected outcomes required to be “supported by data.” The principle of dataism governs the workplace, ensuring that all claims and evaluations are substantiated through measurable outcomes. This pressure to produce measurable progress is illustrated in a Zhihu post by a robotics data scientist, who continued a 13-day baseline experiment despite “contracting COVID-19 during the New Year period and not feeling like himself.” 2 Although the results were ultimately disappointing, the author reflected that painstaking debugging and repeated analysis were crucial for professional development, emphasizing that experimental success requires persistent iteration, rigorous error analysis, and enduring prolonged uncertainty. Other online discussions reveal a more demoralized perspective. On Maimai, a data scientist working on recommendation algorithms vented: “One model flops, then another, and half a year goes by with nothing to show for it.” 3 Replies offered practical survival strategies that have become part of the everyday playbook: “If the click-through rate tanks, check dwell time. If that's bad too, switch to conversion rate. And if nothing works, just lower the control group baseline—that's life as a data scientist!” Another commenter put it bluntly: “Bro, even the best algorithms hit bottlenecks,” hinting that success often depends less on genuine breakthroughs than on skillfully navigating and gaming the metrics.

In our interviews, we also found that this datafied habitus extends beyond the workplace into data scientists’ personal lives, shaping how they interpret everyday interactions and leading them to view the world as fundamentally quantifiable, where only measurable phenomena are regarded as real. Yet this reliance on data can create tensions with those closest to them. As Wang Jun recounted, a disagreement with his girlfriend highlighted the limits of data-driven reasoning: “My hobby outside of work is playing table tennis. I usually play on weekends or after I finish work early. But my girlfriend thought I was spending less time with her and got pretty upset. So, I made this table, splitting my week into time slots and color-coding them—one color for work, one for time with her, and one for table tennis. I just wanted to show her that table tennis barely took up any of my time. But when she saw it, she got even more upset. I don’t get why.”

This “not understanding” illustrates how the datafied habitus can privilege measurable logic over affective and emotional dimensions, leading data scientists to rely on metrics even in domains where human intuition and empathy are central. Through constant experimentation, strategic metric manipulation, real-time feedback, and personal relationships increasingly filtered through quantification, data scientists cultivate a deeply ingrained datafied habitus.

Horizontal hope amid datafied structures

Within the highly datafied routines described above, intensified by the extreme competitiveness of China's leading tech companies, we found that data scientists sustain horizontal hope through the everyday rewards of their work—competitive salaries, technical micro-successes, collegial solidarity, and faith in technological adaptability—which provide immediate, tangible, and emotionally sustaining stability. These present-oriented gratifications help explain why many remain deeply attached to their work despite long hours, constant experimentation, and the emotional volatility of model training. This sense of optimism is particularly strong during the early stages of their careers, when the broader societal hype surrounding AI—reinforced by state and media discourses emphasizing its role in economic recovery and social well-being (Zeng et al., 2022), as well as Big Tech's supposedly utopian visions of an AI-driven future (Crandall et al., 2021)—aligns with their initial excitement and professional engagement.

A key source of immediate gratification for data scientists lies in their relatively high salaries and elevated socioeconomic status. This is particularly significant against the backdrop of skyrocketing unemployment rates in recent years (Bram, 2025). Although data scientists are aware that the development of AI technologies in their industry contributes to widespread job losses in other information technology occupations—such as web design, graphic design, and customer service—they tend to believe that their own positions are insulated from such risks. As recent reports highlight, the starting salary for data scientists in major Chinese tech firms averages approximately 30,000 RMB per month (about 4200 USD), placing them firmly within the upper middle class (Dorschel, 2022a). Many interviewees no longer self-identify with the term diaosi (losers), a slang label originating in early 2010s Chinese internet culture that denoted young men perceiving themselves as socially and economically marginalized (Szablewicz, 2014). Data scientists also tended to differentiate themselves from general programmers, colloquially known as manong (code farmers), a term historically used to describe lower-status technical workers within China's tech industry (Sun and Magasic, 2016). On Maimai, a widely read post has circulated describing data scientists as “people above people” (ren shang ren), suggesting an elevated status even within the coding profession.

4

As one participant, Liu Liang, reflected: “In the past, people looked down on coders, but now, being a data scientist means you’re part of the most cutting-edge field. You’re solving real problems, creating real value, and society respects that.”

By emphasizing their ability to “solve real problems” and “create real value,” data scientists participate in a broader cultural repositioning of their profession, where technical proficiency is linked not only to financial success but also to the status of being at the forefront of technological innovation. Beyond material rewards, they derive considerable satisfaction from microlevel technical achievements. Moments of unexpected success—when a model suddenly performs beyond expectations—produce intense emotional highs that help sustain their engagement. Closely tied to this affective dynamic is another dimension of horizontal hope: the belief in continuous learning. For those who see themselves as capable of ongoing self-improvement, technological change presents no fundamental threat (the next section discusses those who do not share this view). Many interviewees highlighted constant skill upgrading as the key to surviving and thriving in an AI-driven economy. When reflecting on the widespread displacement of other internet workers by AI technologies, many attributed these job losses not to structural transformations but to what they perceived as a failure of those workers to update their skills. As Wu Jie explained, “If you want to get ahead, you’ve got to keep learning. The people who get left behind are usually the ones who just aren’t willing to keep up with new tech.”

Similar sentiments frequently emerged during our interviews. For example, one participant reflected, “It's harsh, but if someone like a visual designer loses their job, it's because they didn’t adapt. The tools are out there—you must keep learning. AI isn’t going to slow down for anyone” (Wu Jie). Another observed, “A lot of people complain about AI taking over, but honestly, it's because they got too comfortable. If you’re not upgrading your skills constantly, it's only a matter of time before you’re replaced” (Xu Zhi). Zhou Cheng similarly remarked, “It's like the industrial revolution. Short-term pain, but long-term gain. If you can’t adapt, you’ll end up driving a delivery scooter.” This collective outlook also sustains a widespread, though empirically unverified, form of optimistic dataism within the data-science community: the belief that the explosive popularity of ChatGPT will attract greater participation and investment in the field, ultimately benefiting all stakeholders.

The foreclosure of vertical hope

In the course of this study, it becomes clear that while horizontal hope helps data scientists sustain themselves through immediate, present-oriented rewards, their longer-term aspirations, or vertical hope—to become true innovators and transformative agents who can leverage the knowledge they continuously acquire—are increasingly constrained. The first and most significant factor is the monopolization of key resources: access to high-quality datasets, computational infrastructure, and opportunities for genuine innovation is largely concentrated in the hands of a few major international and Chinese technology corporations. This is not a challenge unique to China; globally, the rise of large-scale AI models has reinforced the dominance of Big Tech, a phenomenon described by van der Vlist et al. (2024) as “Big AI,” which emphasizes the deep interdependence between artificial intelligence and the infrastructure, resources, and investments of conglomerates such as Amazon, Microsoft, and Alphabet. A similar dynamic operates in China, where firms such as ByteDance, Alibaba, and Tencent dominate the development of large AI models. Only a small proportion of data scientists in the whole infotech sector are employed within these corporations, and even those working in these firms are rarely engaged in model creation; instead, many focus on implementation, running experiments, and optimizing existing systems. Within this context, their creativity is frequently channeled into repetitive, routinized work, reflecting broader patterns of digital labor under platform capitalism (Irani, 2015), a reality captured in online commentary describing them as “cogs in the machine.” As such, many of our participants experienced their own labor as monotonous, alienating, and devoid of creative agency. For instance, Zhu Feng said: “Long working hours are not my main concern. The real issue is that I feel like a puppet. My work consists of completing tasks handed down from above—when a product manager asks for a feature, I execute it. There's little room for my own ideas.”

Similarly, Ma Bin lamented the decline of meaningful research in the wake of large AI models: “Before, working on prompts felt like real exploration. Now, it's just optimizing outputs for business needs.”

For most of our participants, the sense of disappointment is not merely a short-term reaction to working conditions; rather, it reflects the erosion of long-held ideals that many have nurtured since boyhood. Several participants recounted that their early fascination with artificial intelligence was shaped by portrayals in science fiction, which framed AI as a domain for intellectual exploration, personal development, and potential societal impact. As Chen Hao explained: “Sci-fi movies about artificial intelligence are so cool. Achieving true AI isn’t just about technology—it requires understanding human consciousness and behavior. That's why AI attracted me in the first place: it connected computers with sociology and anthropology.”

However, this romanticized vision was rapidly disrupted by the rise of large AI models such as ChatGPT. As Liu Liang noted—a view shared by many respondents: “Before the advent of ChatGPT, the emphasis was on designing better artificial intelligence models. Now, it's clear that success depends on pouring massive resources into computing and data.”

Some data scientists had initially held genuine academic ideals, perceiving their work as opportunities for unrestricted exploration and creativity. Interviewees such as Xu Zhi, who transitioned from industry back into academia, observed that universities can no longer compete effectively in AI research. As he noted: “Now, with the rise of large models, universities focus more on applying existing algorithms to produce papers because building new models requires millions in equipment and resources, which many labs simply can’t afford.”

Another factor, which is particularly salient in the Chinese context, relates to age-related workplace dynamics and the abundant supply of young labor. Human cognitive capacities and the ability to learn are inherently limited over a lifetime, and in China's highly competitive tech industry, there is a prevailing belief that data scientists reach their professional peak around the age of 35. Beyond this perceived threshold, career prospects are thought to decline sharply, even as the pace of algorithm and model development continues to accelerate. Consequently, many online discussions mockingly advise data scientists to prepare for alternative, less demanding career paths after this age. For instance, on Maimai, posts often convey a growing disillusionment among data scientists, with discussions focusing on potential career transitions. One user remarked, “From what I see, algorithm jobs are indeed high-paying but involve little meaningful work. Don’t listen to the talk about gaining a comprehensive view of business processes—for a cog in the machine, it doesn’t matter.” 5 Comment threads under this post reveal ambivalence about future prospects; some humorously suggested that the only viable “way out” was to open a barbecue stall or to sell insurance. These discussions simultaneously reflect data scientists’ disillusionment with their long-term career prospects and their dismissive attitude toward alternative forms of employment. While users jokingly suggest options such as opening a barbecue stall or transitioning into insurance sales, these remarks are not intended as endorsements of such paths as viable career development; rather, they underscore a sense of frustration and the perceived lack of meaningful professional trajectories within the algorithm-driven sector.

Other users expressed even more pessimistic assessments, stating, for example, “Algorithms have no future” or “There's no way out in the internet industry anymore.” In some cases, contributors reported actually leaving the field; however, these transitions were described in a resigned and negative tone, without any indication of satisfaction or excitement about the new career. One contributor cynically remarked, “The end of data working life is selling insurance—I, a 35-year-old data scientist, am already moving into insurance sales,” conveying a sense of necessity and disillusion rather than enthusiasm for the new role. These discussions highlight a widespread recognition that, despite high salaries and technical prestige, work in algorithm-related roles increasingly offers limited opportunities for professional development and long-term career security.

The rapid development of large AI models has intensified pressures on data scientists to continually learn and update their skills, a burden that often falls on the individual, particularly within corporate environments where opportunities for structured professional development are limited. The deployment of these models by major technology firms for internal applications, such as recommendation systems and content moderation, further amplifies this pressure. As Chen Xin observed: “Before our company developed its own large-scale model, we built separate, task-specific models—for example, one algorithm for content moderation and another for the recommendation system. Now, our work has shifted toward deploying a unified large-scale model across multiple domains. This signals a new work paradigm in which we must acquire new skills, manage far larger volumes of data, and adapt quickly, as much of the experience we accumulated in the past has become obsolete with the rise of large models.”

In other words, with the intensification of datafication, data scientists whose expertise lies in older technologies often struggle to meet the evolving demands of this new paradigm, thereby rendering their positions increasingly precarious. Notably, the expectation of continuous learning—while providing a source of immediate satisfaction and professional resilience or horizontal hope—also functions as a disciplinary mechanism, reflecting a neoliberal doctrine that frames self-improvement and skill acquisition as an individual responsibility in response to structural pressures.

Moreover, given the broader societal neglect of mental health in China, infotech corporations provide minimal to no psychological support, leaving data scientists to navigate the emotional burdens of professional disillusionment on their own. In the absence of institutional support, they are exposed to intense pressures, expected to manage their own emotional well-being. Under these circumstances, humor, self-deprecation, and a sense of collective solidarity have emerged as some of the few forms of resistance available. Across interviews and online forums, participants frequently shared humorous anecdotes that reflect their precarious professional realities—for instance, jokingly referring to themselves as “prompt workers” to highlight the deskilling of their roles, or likening their work to that of entertainment celebrities as a “youth rice bowl” (qingchun fan), a Chinese expression denoting occupations that are highly dependent on youth and thus inherently short-lived. These analogies exemplify what Berlant (2011) describes as the transformation of attachments into forms of cruel optimism, wherein the very object that sustains optimism—meaningful technological innovation—simultaneously obstructs the fulfillment of the aspirations that originally motivated it. Our participants, particularly in the early stages of their careers, often perceive large AI models as enhancing productivity and expanding human capabilities (Crandall et al., 2021), aligning with China's state and Big Tech's techno-optimistic discourse on AI (Zeng et al., 2022). However, over time, they increasingly experience a loss of autonomy, creativity, and meaningful labor, reflecting the paradoxical “enchanted determinism” described by Campolo and Crawford (2020), in which professionals remain excluded from the development of AI technologies while continuing to place strong faith in AI's transformative potential.

Conclusion

This article addresses a critical gap in the literature on datafication, AI, and big data by foregrounding the diverse lived experiences of data scientists, whose work practices and affective structures—including aspirations, hopes, and disappointments—are deeply embedded in processes of datafication, thereby revealing how pervasive dataism and the dynamics of the platform economy intersect to shape professional identity, aspirations, and the structural constraints on career development in AI-driven work. This study yields several key findings. First, it highlights how data scientists internalize dataism as a dominant epistemology and embody a “datafied habitus” through continuous experimentation and algorithmic reasoning, demonstrating that datafication is not solely a top-down process but also one that depends on intensive human labor, prolonged work hours, and is subjectively experienced and emotionally sustained. Second, by applying the lens of horizontal and vertical hope, the study illuminates the affective structures that enable workers to endure their demanding work while simultaneously constraining their long-term aspirations. At the horizontal level, data scientists maintain a sense of well-being grounded in the present, supported by high salaries, technical micro-successes, professional pride, and a commitment to continuous learning. At the vertical level, aspirations for innovation, recognition, and transformative professional agency are systematically obstructed by structural limitations, including monopolized access to computational resources, technological deskilling, and rigid career trajectories. The disenchantment expressed by our participants arose not from a sudden rupture but from the gradual erosion of the excitement that accompanied their entry into China's leading technology firms. Before joining, and during the early stages of employment, many embraced an optimism carefully nurtured by both corporate branding and state discourse that celebrated AI as a driver of national rejuvenation. This alignment of personal ambition with national technological progress generated a sense of enchantment—what Crandall et al. (2021) describe as a fusion of utopian visions of technological advancement with reenchanted imaginings of progress visions of technological advancement with reployment, many embraced an optimism carefully nurtured by both corporate branding and st Yet as daily work routines unfolded, the reality of relentless performance metrics, escalating workloads, and diminishing creative autonomy produced a slow but steady disenchantment. Participants described the fading of “boyhood dreams” and the hollowing of the initial thrill of working for a prestigious Big Tech company. Their narratives reveal a cruel optimism (Berlant, 2011) in which the very promises of innovation and professional growth that drew them in also tethered them to self-disciplining practices and precarious career trajectories. In this sense, the Chinese case illustrates how enchantment and disenchantment are not merely individual emotional states but key mechanisms through which the politics of Big Tech labor are reproduced.

This study has several limitations. While the empirical data drawn from interviews with 23 data scientists and online discourse analysis provide rich qualitative insights, they may not fully capture the diversity of experiences across different regions, company tiers, or subfields within data science, particularly outside major Chinese tech hubs. Although the dynamics explored—such as the monopolization of innovation, platform consolidation, and psychological burnout—are globally resonant, the study does not engage in cross-national comparison, which limits broader contextualization. Furthermore, while the extension of cruel optimism offers a useful analytic lens, the focus remains primarily on emotional and structural dimensions, leaving intersections with gender, class, and regional inequality underexplored. For instance, the recurring references to “boyhood dreams” and “brotherhood” among participants underscore how the data-science profession remains overwhelmingly masculinized. Such narratives cast technical curiosity and relentless experimentation as inherently male pursuits, sustaining a workplace culture in which fraternal solidarity, long hours, and competitive tinkering are valorized and subtly excluding women from key networks of recognition and advancement. Accordingly, future research could prioritize the following questions as suggested avenues: How do data scientists’ experiences and the dynamics of datafication, dataism, and platform labor vary across different regions, company tiers, and subfields, particularly beyond major Chinese tech hubs? How do the structural and affective conditions identified in this study—such as monopolization of computational resources, platform consolidation, and emotional pressures—manifest in different national and cultural contexts? And how do the professional experiences, aspirations, and affective labor of female data scientists unfold within male-dominated workplaces, including the ways gendered expectations shape opportunities and strategies for career advancement?

Footnotes

Acknowledgements

The authors would like to thank all interview participants for generously sharing their time and experiences. The authors are also grateful to colleagues who provided valuable feedback on earlier versions of this manuscript.

Ethics approval

This study was conducted in accordance with ethical standards and received approval from Jinan University Human Research Ethics Committee (Approval No. [JNUSJC-2022077]).

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.