Abstract

Existing research has characterised data annotation as invisible and precarious digital labour, typically outsourced through global crowd-work platforms. In China, rather than being outsourced through transnational crowd-work platforms, it has been re-territorialised into state-regulated infrastructures and increasingly integrated into conventional employment models. Drawing on multi-sited ethnography, this paper examines how the formalisation of data annotation in China unfolds through intersecting state, market, and worker logics: a process of reterritorialisation that selectively formalises the organisation of the data annotation industry. At the state level, credentialisation schemes, regulatory measures, and the establishment of national data hubs embed annotation into infrastructures of data security and sovereignty. At the market level, competitive pressures for accuracy and efficiency drive procedural standardisation, task specialisation, and spatial reorganisation. At the worker level, formalisation provides contractual recognition and a sense of legitimacy, yet also entrenches surveillance, constrained autonomy, and limited career mobility. These dynamics suggest that the formalisation of data annotation in China does not represent a linear transition from informality to stability, but rather a selective and layered incorporation of labour into institutional frameworks. Conceptually, we argue that this trajectory signals a shift from a deterritorialised planetary stacking order toward a reterritorialised, state-embedded AI stack.

This article is part of the special theme on Infrastructure, Labour, & Social Change. A full list of the articles in this special theme is available at: https://journals.sagepub.com/page/bds/collections/infrastructure_labour_social_change?pbEditor=true

Introduction

When Xiaoling graduated in 2020, the tight job market pushed her to an online advertisement for data annotation on a crowdsourcing platform. The tasks were simple, mostly tagging images and translating short texts, but they were scattered and the pay was unstable, making the work only a temporary solution during the COVID-19 pandemic. As she gained experience, Xiaoling joined a guild (公会), an online community that coordinated tasks and payments for annotators. There, she encountered many online colleagues treating data annotation as full-time work, and she began to take on more complex assignments such as sentiment analysis, content moderation, and detailed image descriptions. Eventually, with the assistance of a local guild member, Xiaoling secured a contract with a small annotation company, working fixed hours in a physical office space with basic training. Despite the unstable contracts and inconsistent project assignments, this experience prepared Xiaoling for her current placement as a team leader in a government-backed data annotation hub. The work is harder and the rules stricter, but the new job gives Xiaoling the confidence to tell her family that she now has a “proper” job: a formal job with the necessary social insurance in a government-recognised company.

The shifting work and experiences of Xiaoling and her peers demonstrate a broader reality and transformation that this article attempts to map. From generating and annotating to auditing data, so-called intelligent systems are inseparable from human labour. This reliance takes three principal forms across the AI production pipeline: AI preparation, encompassing data generation and annotation that feed machine learning models (Roberts, 2019); AI verification, involving the evaluation and correction of model outputs (Ekbia & Nardi, 2017; Fu et al., 2025); and AI impersonation, where humans temporarily substitute for algorithms in tasks they cannot yet perform reliably (Irani, 2015a; Mateescu & Elish, 2019; Tubaro et al., 2020). Far from peripheral, these functions are structurally embedded in AI development, even as the rhetorical promise of full automation often masks what some call deceptive AI (Rani & Dhir, 2024).

How such tasks are organised, whether through dispersed platforms or more structured company arrangements, as Xiaoling's recent job changes illustrate, has therefore become a central concern in the literature (Graham & Anwar, 2019; Le Ludec et al., 2023). Scholars have documented a shift from open crowdsourcing marketplaces toward more managed vendor-based or in-house operations, driven both by market pressures for accuracy, quality, and efficiency, and by regulatory demands for safety, accountability, and compliance in sensitive domains such as autonomous driving and healthcare (Muldoon et al., 2025; Posada, 2024; Schmidt, 2022; Tubaro et al., 2025). This move toward formalisation has not produced a uniform organisational model but rather a mixed configuration in which platform-based microwork coexists with company-based vendor and in-house arrangements, which Schmidt (2022) describes as a “planetary stacking order” of multilayered crowd AI systems.

In China, data annotation has likewise moved beyond its early reliance on online crowdsourcing toward more formalised and standardised forms of employment (National Data Administration, 2025; Wu et al., 2025). Unlike food delivery and other gig work, which remain flexible but precarious, a growing share of annotators is now employed in commercial companies or state-backed data hubs, subject to strict quality standards and performance evaluations. While this shift parallels the global trend toward specialisation, the Chinese case is distinctive: instead of being divided between Global North platforms and Global South suppliers, the different layers of the planetary stacking order identified in Schmidt's (2022) research on the global annotation industry are compressed within a single domestic ecosystem. Platform-style microwork, large-scale company-based operations, and national data hubs coexist, embedded in state-led strategies of data governance.

Drawing on ethnographic fieldwork and semi-structured interviews, this article examines the formalisation of China's data annotation industry and the ways in which annotation labour is reorganised through the intersecting logics of the state, the market, and workers themselves. Empirically, the analysis identifies a process of re-territorialisation in which state and platform actors selectively formalise certain practices into recognised labour while leaving others in ambiguous or informal conditions. In other words, formalisation in China operates as a key mechanism of re-territorialisation by transforming crowd-based, placeless microwork into governed occupations anchored within national data hubs. In this way, it re-embeds data labour within China's territorial and infrastructural space. This process is simultaneously driven by market imperatives oriented toward competition, efficiency, and accumulation, and by state governance that emphasises regulation, compliance, and institutional control. The outcome is a set of hybrid organisational forms in which professionalisation coexists with informality, revealing how data labour is both integrated into global AI production chains and reconfigured by nationally specific governance regimes. More broadly, this re-territorialisation of the annotation industry signals a reorganisation of the relations among labour, capital, and state power in China's data economy, indicating a trajectory toward a state-embedded AI stacking order that departs from the globally dominant configuration.

Outsourcing, offshoring and the planetary crowd-AI stack

Contrary to popular imaginaries of AI replacing human labour, human work remains a structural component of AI production and application, spanning preparation (training algorithms), verification (checking outputs), and impersonation (performing tasks AI cannot yet handle) (Tubaro et al., 2020). Data annotation is one example of what critical scholars term “micro-work” (Irani, 2015b; Tubaro et al., 2020) in today's AI industry. Unlike public-facing gig work (e.g., ride-hailing, food delivery), annotation is performed behind the scenes, enabling firms to downplay workers’ contributions (Le Ludec et al., 2023; Roberts, 2014). This structural invisibility renders annotation paradigmatic “ghost work”, essential yet hidden, associated with undervaluation, low wages, and limited mobility (Gray & Suri, 2019). Even as it undergirds AI systems, annotation labour often remains deprived of “quality, meaning, and social status” (Tubaro et al., 2020).

Moreover, the huge demand for a pool of low-cost, deskilled labour has driven the offshoring of data annotation to lower-income regions across the Global South. Echoing classic transnational corporation (TNC) patterns, the AI industry's global supply chains increasingly locate human labour in the Global South via outsourcing and offshoring (Diepeveen et al., 2025; Erber & Sayed-Ahmed, 2005; Le Ludec et al., 2023; Wang et al., 2022; Wu et al., 2025). Meanwhile, technology conglomerates in the Global North retain control over upstream segments of the AI industry, encompassing research and development, design, and branding (Crawford, 2021; Schmidt, 2022; Tubaro et al., 2025). In terms of organisation, data annotation in many cases falls into the category of “cloud work” (Berg et al., 2018; Schmidt, 2017), characterised by short-term, piecework contracts and organised through cloud-based outsourcing platforms such as Amazon's Mechanical Turk.

As accuracy-critical domains (e.g., autonomous driving, health AI) expanded, demand for precision prompted a shift from flat marketplaces to orchestrated, multi-layered systems. As Schmidt (2022) points out, the increasing demand for accuracy has led to the emergence of “a specialised, full-service crowd-AI stack”, in which annotation work is professionalised and specialised with a more complex interaction between annotators and machines: “the degree of accuracy necessary for this type of work has forced the crowdsourcing industry to fundamentally restructure its processes—from a very direct, or flat, model that provided clients with little more than direct access to a crowd available for completing general tasks to a model in which the platform orchestrates every detail of a highly complex and multilayered process while the client buys the end results without ever coming into contact with the crowd.” (Schmidt, 2022, p. 138)

Within this model, annotators are increasingly reorganised through small specialist vendors or in-house divisions of major AI firms, embedded in conventional hierarchies rather than loosely connected as independent contractors. The spread of digital tooling and algorithmic management has intensified alienation, work intensity, and surveillance, dynamics that are widely analysed as forms of digital Taylorism in the platform economy (Altenried, 2020; Liu, 2022). This regime differs from on-demand gig work (e.g., food delivery, ride-hailing) insofar as annotators often trade flexibility for greater employment security and more predictable income, alongside firm-provided training and standard operating procedures. In return, they accept tightened organisational oversight and tiered supervision, with roles and pay re-stratified (Miceli & Posada, 2022). Moreover, growing specialisation entails deeper task fragmentation and role narrowing, while proprietary lock-in, where workers’ skills become tied to company-specific tools and taxonomies, further constrains their mobility across firms and platforms (Schmidt, 2022, p. 143).

These dynamics provide critical context for our analysis of annotation labour in China. We show that data annotation and microwork in China have likewise moved toward greater institutional integration and professionalised work regimes, as major internet and AI firms, for example, Baidu, ByteDance, Tencent, and Alibaba, operate in-house annotation centres alongside a growing layer of specialised vendors. Yet important distinctions from global patterns remain. Leveraging an abundant, relatively low-cost, and increasingly skilled workforce, together with dense domestic data resources and sustained public–private investment, Chinese firms have developed a more domestically embedded ecosystem for sensitive annotation. Relative to extensive offshoring elsewhere, firms appear to favour on-shore pipelines, though heterogeneity persists across regions and organisational forms. State recognition of annotation as AI infrastructure has raised the work's infrastructural visibility, even as its implications for workers’ well-being and autonomy remain contested. Moreover, the state's concern with data security and sovereignty and the standards and compliance regimes these policy discourses entail have tightened organisational structures and shaped the pathways of professionalisation in the Chinese context.

The formalisation and governance of China's data annotation industry

The formal and informal distinction has been a recurring theme in industrial and economic studies, emphasising the coexistence and interdependence of regulated and unregulated activities across both urban and non-urban, developing and developed contexts, as well as various sectors (Ayyagari et al., 2010; Gerxhani, 2004; Hart, 1973; Rauch, 1991). With the rise of the platform economy, the formal–informal dynamic has become increasingly entangled, as online production and distribution blur boundaries between legitimate and illegitimate markets and piracy or peer-to-peer sharing creates hybrid economies integrated into global media circulation (Cunningham & Silver, 2013; Zhao & Keane, 2013; Lobato & Thomas, 2018).

In labour studies, the formal–informal debate has centred on precarity, unregulated conditions, and the absence of social protection, with these dynamics increasingly understood as overlapping and mutually reinforcing rather than sequential stages (Bolton & Wibberley, 2014; Portes, 1978; Standing, 1999). Recent research on platform labour further highlights this co-constitutive relationship, showing how ghost work in AI production relies on hidden informal practices (Gray & Suri, 2019), while gig workers move between contractual regulation and precarious conditions (Wood et al., 2019).

In the context of China, strict state regulation combined with market forces has produced particularly complex intersections between formal institutions and informal practices (de Kloet et al., 2019; Keane & Zhao, 2012), illustrating how platform capitalism often depends on informal infrastructures later selectively formalised into industrial frameworks.

This tension between formality and informality foregrounds the role of governance. In China, formalisation is less an organic shift than a state-orchestrated process, where regulation and policy selectively incorporate informal practices into formal institutions. As Zhao (2019) argues, this selective formalisation is negotiated among the state, platforms, and precarious workers, making it especially relevant for emerging sectors such as data annotation, where governance aligns labour regimes and standards with broader political and economic priorities.

Recent policy discourse has positioned data as a strategic production factor, namely one of the three pillars of AI alongside algorithms and computing power, while identifying data annotation as foundational to sustaining AI innovation (People's Daily, 2024). Rather than a neutral recognition of annotation's technical role, these initiatives reflect a state-led project of formalisation that incorporates annotation into institutional frameworks. A key moment was the creation of the National Data Administration in 2023, which consolidated fragmented responsibilities over data resources, exemplifying how formalisation in China proceeds through the expansion of infrastructural state power and the framing of data as a strategic national asset (Xinhua, 2023). Building on this institutional consolidation, a suite of legal and regulatory measures has been deployed to operationalise these priorities.

This governance framework is reinforced by laws such as the Cybersecurity Law and the Data Security Law (State Council of the People's Republic of China, 2021), which authorise extensive state oversight of cross-border data flows, with the category of “important data” defined broadly to enable discretionary intervention (Creemers, 2022). Since 2022, regulation has extended to algorithmic services, requiring providers of recommendation or generative AI systems to undergo filing, security assessments, and, in some cases, ethical review, particularly when systems are deemed capable of shaping public opinion or mobilising users (Xu, 2024). Rather than simply imposing compliance, these measures embed formalisation into the AI supply chain, localising annotation pipelines within domestic infrastructures and aligning labour processes with the state's security and governance priorities, while constraining reliance on transnational crowdsourcing models (Yun, 2025).

At the labour level, the recognition of AI Trainers as a new occupation in 2020 marked the codification of previously informal annotation tasks into credentialised regimes (MOHRSS, 2021). National standards now define a five-tier career progression from basic annotation to algorithm evaluation, embedding annotation within a stratified skill hierarchy and linking workforce development to industrial upgrading agendas. The establishment of National Data Annotation Hubs in regional centres such as Chengdu and Hefei (Xinhua, 2024) further illustrates how labour formalisation is spatially anchored within state-regulated infrastructures, while MIIT's guidelines codify quality and consistency benchmarks (Fu et al., 2025).

In short, these dynamics suggest that the formalisation of China's data annotation industry cannot be understood as a linear transition from informality to formality, but rather as a negotiated process shaped simultaneously by state regulation, market imperatives, and labour reconfiguration. This tripartite lens provides a useful framework for analysing how governance, capital, and workers interact in the institutionalisation of digital labour.

Methodology

This study adopts a multi-sited and digitally embedded ethnographic approach (Marcus, 1995; Pink et al., 2015), integrating longitudinal fieldwork, participant observation, and semi-structured interviews. Between November 2023 and May 2025, the fieldwork was conducted across six Chinese cities and a range of annotation environments, including crowdsourcing platforms, start-ups, and platform-affiliated in-house teams. Prior to the commencement of fieldwork, the study received ethical approval from the institutional review board of the first author's home institution.

The first phase (Nov 2023–Jan 2024) involved joining a crowdsourcing platform as an annotator. The first author completed the platform's onboarding and training procedures and participated in image-to-text annotation tasks within a small workgroup. Snowball sampling within the group led to online interviews with annotators across different regions. In the second phase (Jan–Mar 2024), the researchers gained offline access to three annotation companies located in Changzhou, Chengdu, and Zhengzhou. On-site visits included interviews with managers and annotators, focusing on workflows, labour division, and quality assurance mechanisms. The third phase (Jun 2024) involved embedded participant observation at a start-up, X, in Yantai, where the first author worked for two weeks as an annotator performing video classification and 3D annotation for autonomous driving systems. The fourth phase (Jul–Aug 2024) included site visits to data annotation branches of platform-owned firms in Shanghai and Beijing, where in-house annotation processes were examined.

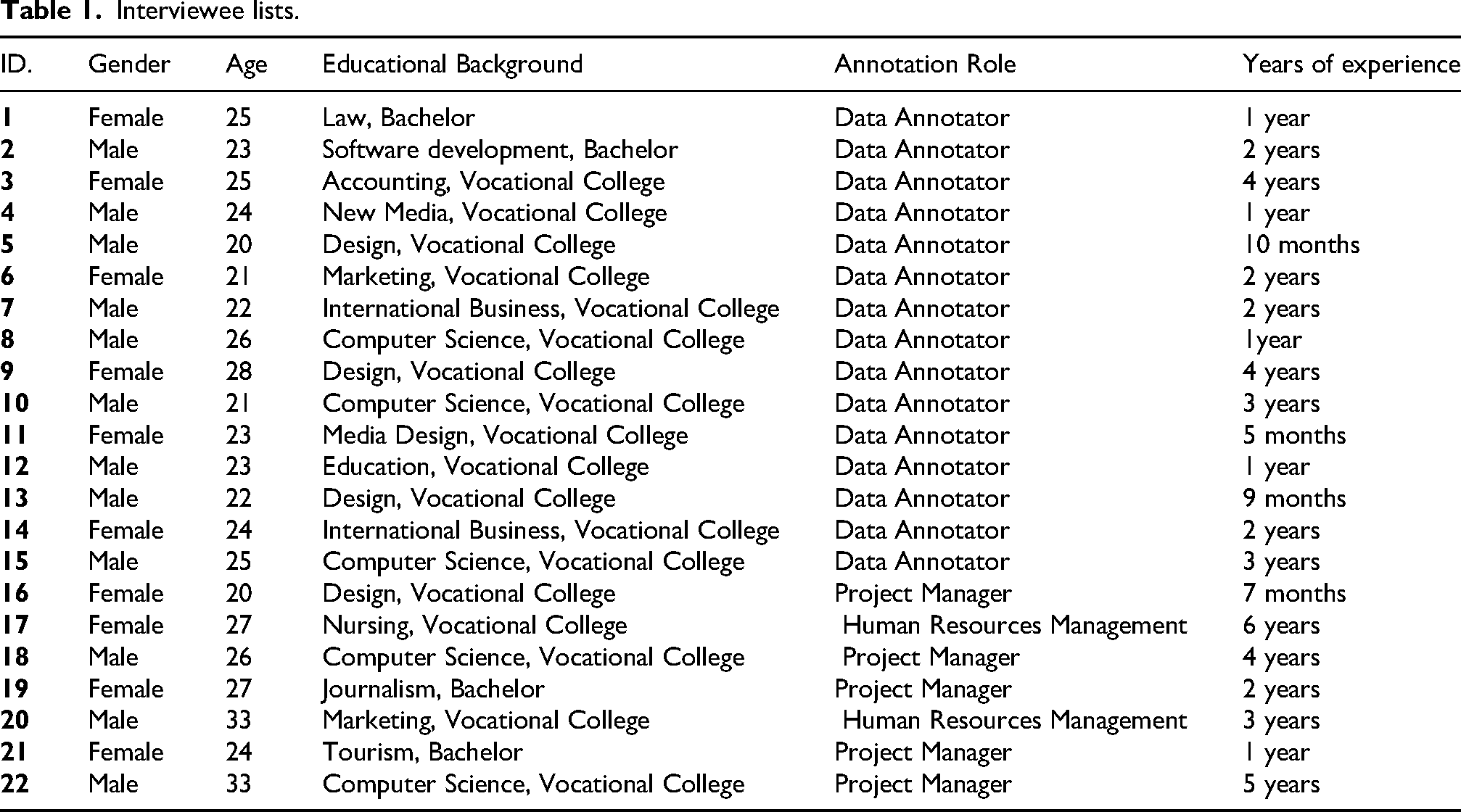

A total of 22 in-depth interviews were selected for this paper (see Table 1). The sample included 12 male and 10 female respondents, ranging in age from 20 to 33 years. Participants were engaged in various roles across the data annotation production chain, including frontline data annotators (n = 15), project managers (n = 5), and human resources personnel (n = 2), offering a multi-layered perspective on workplace governance, labour organisation, and professionalisation processes.

Table 1. List of interviewees.

The semi-structured interviews were designed to investigate how data annotators perceive their work, navigate institutional changes, and articulate their roles within China's evolving AI labour regime. The interview guide covered five key areas: entry pathways and work histories; comparisons with prior or alternative forms of labour; perceptions of industry transformation, including the rise of domain-specific tasks such as autonomous driving; experiences of standardisation, evaluation, and workplace interaction; and expectations regarding professional identity, social value, and the future of annotation in the context of automation. The interviews also encouraged participants to reflect on questions such as: “How did you enter this line of work?” “Do you feel your tasks have become more standardised?” and “Do you see yourself as a professional?” These discussions provided insight into how annotators make sense of their work amid growing institutionalisation, shifting governance logics, and uncertain occupational futures.

All interviews and fieldnotes were analysed using thematic analysis (Braun & Clarke, 2006), supported by NVivo software. The analytical approach was grounded in interpretive ethnography (Geertz, 1973; Guba & Lincoln, 1994), with a focus on how annotators make sense of their changing roles, identities, and working conditions under the formalisation of data labour in China. The analysis proceeded in three stages. We began with close reading and open coding of interview transcripts and fieldnotes, identifying recurring experiences, expressions, and metaphors. These codes were informed both by emergent patterns in the data and by the structure of the interview guide, which covered topics such as annotators’ entry into the industry, their daily work practices, comparisons with other jobs, experiences of standardisation, perceptions of agency and meaning in relation to AI systems, and expectations for the future of annotation work. In the next stage, the codes were organised into broader categories that reflected how processes of formalisation, specialisation, and professionalisation were experienced by annotators. We paid particular attention to how participants described shifts in training, quality control, and workflow organisation, as well as how they understood their roles in relation to managers, platforms, and AI technologies. In the final stage, we identified how the institutional restructuring of China's data annotation industry produces not only new forms of labour governance but also new forms of subjectivity.

State-led formalisation: embedding data labour into governance infrastructure

Globally, the formalisation of data annotation has unfolded through outsourcing and offshoring, especially toward the Global South. What has emerged is not a linear replacement of crowdsourcing by company-like structures, but a stack-like order in which informal microwork continues to coexist with increasingly formalised vendor operations, while firms in the Global North retain control over research, design, and high-value segments (Crawford, 2021; Le Ludec et al., 2023; Schmidt, 2022; Tubaro et al., 2025). In China, however, formalisation of the data annotation industry has been actively state-led, embedded within national strategies of data security, sovereignty, and AI competitiveness. Through policy initiatives, occupational credentialisation, certification schemes, and the establishment of state-backed data hubs, the government has repositioned annotation from invisible microwork to infrastructural labour at the core of its AI governance agenda.

At the ground level, state-led reforms have re-channelled recruitment, contracting, and workplace organisation in annotation. Wang, who entered the industry in 2020, described how the system gradually shifted:

At first, I picked up tasks on a crowdsourcing platform, taking whatever orders I could find. But gradually, the orders became fewer, and most work started coming through guilds (公会) or team leaders (组长), who secured the tasks and distributed them to us. So I joined a guild. Over time, even the guilds began shifting offline, and through the guild, I got to know some local friends who eventually introduced me to this company.

His trajectory shows how fragmented platform work was channelled into collective intermediaries and, eventually, into formal employment, a process reinforced by state credentialisation and compliance requirements. Zhang, who had also worked through online platforms before moving to a company job, emphasised the change in contractual security:

Back then, I just signed up online and started working, and there were no contracts or social insurance. Honestly, I always felt uneasy. Now I have a formal contract with ‘five insurances and one housing fund’ (五险一金), and at least I know it won’t affect my retirement later.

Zhang's reflection illustrates how state-recognised occupational categories and welfare obligations transformed annotation from precarious gig work into formal employment legible to China's labour regime. In short, what appears as individual job mobility is better understood as institutional channelling, an outcome of regulation, credentialisation, and infrastructural anchoring at the core of state-led formalisation.

A further dimension of state-led formalisation is spatial and infrastructural anchoring. Under China's AI and data-sovereignty agenda, annotation has been centralised into designated industrial parks and national data hubs in cities such as Chengdu, Changsha, Hefei, and Shenyang (Xinhua News Agency, 2024). This relocation from dispersed remote work to on-site facilities allows authorities to enforce uniform compliance architectures, including secured networks, access controls, and auditable workflows, within territorially bounded sites. As Zhang explained:

We used to work from home or on online platforms, but now the company has placed us in the industrial park. They said it's to meet security checks and government standards, so everything must be centralised.

These hubs are not merely workplaces but nodes of governance. Firms benefit from subsidised leases, relocation grants, and standardised training schemes, but they are also subject to regular inspection and reporting obligations. Liu, a team manager, described:

Government inspectors visit regularly. They check our data pipelines, training records, and even how we store sensitive datasets. Everything is standardised, and we must file reports after each inspection.

Such arrangements institutionalise annotation within the state's compliance apparatus, embedding labour processes in infrastructures that are at once productive and regulatory. By linking space, subsidy, and supervision, the government has reclassified annotation as strategic infrastructure, positioning it as integral to national AI development rather than peripheral support work. This institutional reframing highlights how formalisation in China is advanced through regulation, infrastructural support, and direct oversight, laying the groundwork for the market-driven dynamics explored later in the article.

A further mechanism of state-led formalisation lies in the institutionalisation of supervision and compliance training within annotation hubs. Once dispersed and loosely monitored, annotation has been reorganised into multi-level management structures that codify responsibility and oversight. Rather than reflecting only corporate discipline, these hierarchies are institutional requirements designed to ensure that annotation processes conform to state regulatory frameworks on data security and governance.

Parallel to organisational restructuring, the content of training has been formalised. Instead of ad hoc online tutorials focused narrowly on labelling accuracy, annotators in state-endorsed hubs now undergo structured programmes that combine technical instruction with compliance protocols, security briefings, and confidentiality agreements.

Training now includes what data we cannot touch, how to handle sensitive content, and what happens if we make mistakes. If we mess up, it is not just about fixing errors; it could mean violating regulations. (Zhang)

Zhang's account illustrates how training has been extended beyond performance to embed regulatory adherence into everyday annotation practice. This institutional embedding demonstrates that formalisation in China is not confined to contractual arrangements or workplace relocation. Through mandated managerial structures and compliance-oriented training, annotation has been recast as a form of governed labour: workers are positioned simultaneously as contributors to AI development and as frontline enforcers of state rules on data sovereignty. Infrastructural anchoring, therefore, operates not only spatially but also organisationally, consolidating annotation as part of the state-led governance apparatus.

Seen in this light, the distinctiveness of China's trajectory lies in how the state has accelerated the process of formalisation, framing annotation as part of a broader governance project tied to data security and sovereignty. However, this is only one dimension of transformation. Formalisation has also been shaped by how enterprises reorganise production, restructure employment, and adapt to competitive pressures. The next section examines this market dimension, exploring how logics of efficiency and profitability intersect with, and at times complicate, the state's regulatory project.

Market-driven formalisation

Alongside state intervention, the formalisation of annotation in China has also been propelled by market logics shaped by the intensifying competition in the AI industry. Globally, rising demands for speed and accuracy have driven firms to move beyond loosely coordinated microwork toward layered workflows and role specialisation (Casilli, 2025; Muldoon et al., 2025; Tubaro et al., 2025). Chinese companies follow a similar trajectory but with distinctive features. The pursuit of high-quality data at scale has pushed firms to accelerate annotation cycles, segment tasks into specialised pipelines, and frame “data compliance” (数据合规) as both a technical guarantee and a commercial asset to reassure clients. These strategies have, in turn, favoured large-scale, standardised operations and a spatial reorganisation of the industry, as annotation shifts from fragmented gig work toward corporate centres and regional bases. In this way, competitiveness and compliance operate as mutually reinforcing imperatives, with firms formalising not only to meet precision standards but also to secure reputation and market position in a crowded domestic market.

Competitive pressures are reshaping the organisation of annotation. Clients demand rapid turnaround and high precision, which pushes firms to continually refine standards, compress cycle times, and reduce variance across teams. An annotator who has worked across several firms described the pace and direction of change:

This industry is so new that, at the beginning, there weren’t any clear standards. Every project had its own way of doing things. But as more companies entered the market, the clients became stricter. Each time a new client came in, they added another set of requirements, sometimes small adjustments and sometimes a whole new checklist. We had to learn everything again. Compared to before, the work is much more complicated now.

This remark does not merely register “complexity”; it identifies the mechanism of market-driven formalisation. As competition intensifies, client-side requirements proliferate and change faster (requirements churn). To remain eligible for contracts, vendors translate these shifting demands into codified procedures, versioned guidelines, documented standard operating procedures (SOPs), audit trails, and change-control processes so that revisions can be absorbed quickly without derailing delivery schedules. In effect, speed and accuracy are jointly produced through process formalisation: firms standardise handoffs, instrument error tracking, and allocate specialised roles (project managers, quality assurance staff, reviewers) to stabilise output quality while meeting deadlines.

These shifting expectations drive deeper standardisation and proceduralisation, as firms compete to demonstrate their ability to meet sector-specific benchmarks and adapt to context-sensitive guidelines. In sectors like autonomous driving, annotation has become highly technical, requiring precise spatial and object-recognition standards. Liu, who works for a transportation-focused firm, explained:

I used to annotate anything, like people, animals, and objects, but now I only analyse pedestrian movement for self-driving cars. The work is much more specialised, with less room for mistakes.

This illustrates how market-driven formalisation transforms annotation from interchangeable microwork into contextual, precision-oriented labour embedded in industry-specific infrastructures. To maintain these standards, firms have institutionalised multi-tier quality control systems: internal scoring mechanisms to monitor accuracy, randomised checks by quality specialists, and re-training or re-testing requirements for annotators who fall short. While such mechanisms ensure accuracy, they also limit autonomy. As Zhao put it bluntly:

After doing it for a long time, most of the work just feels mechanical, almost like cyber screw-turning. You repeat the same steps over and over. Even if something looks wrong, you still follow the guideline, because if you don’t, it gets rejected.

Beyond technical standards, competition has also reshaped the spatiality of the annotation industry. To reduce costs and secure labour, firms increasingly move from fragmented online gig work toward corporate offices and state-supported bases, relocating operations from high-cost coastal cities to cheaper inland provinces. This mirrors global outsourcing logics, but within China's borders: a domestic gradient that extends from first-tier urban centres to smaller cities and even rural areas. Here, multiple models coexist (online platforms, local firms, and large-scale annotation hubs), reflecting the hybrid structure of China's data economy. Annotators themselves recognise this gradient. A project manager in Chengdu explained:

Life here is relatively comfortable, and wages don’t need to be as high, so of course our costs are lower than in first-tier cities like Beijing or Shanghai. But now even Chengdu is getting expensive, so we may have to look for cheaper places further inland.

An annotator who moved from a tier-one city to a tier-three city described the change in spatial terms:

I used to work at a platform-owned base in Beijing, earning only four or five thousand yuan a month. Later, one of my team leaders moved back to our home province's capital to join a local data company, and they were also offering around four thousand. I immediately followed, because the cost of living is much lower there. With the same pay, life is definitely better.

These stories illustrate how the market logic of cost control drives relocation and scaling. Companies translate regional labour price differences into a competitive advantage, while workers experience a shift from dispersed gig work to concentrated bases. Spatial reorganisation thus becomes not just a background condition but a strategic lever in the formalisation process, allowing firms to claim both efficiency and reliability in a crowded market.

Hence, market-driven formalisation in China is not simply about efficiency but about securing competitive advantage in a crowded industry. By embedding speed, professionalisation, compliance, and spatial reorganisation into their operations, firms transform annotation into an industrialised and reputationally anchored practice. However, this market logic does not operate in isolation. It intersects with state-led governance, producing overlapping imperatives that shape both how annotation is organised and how workers experience it. The following section turns to this worker perspective, examining how these dual logics are felt, negotiated, and contested in everyday practice.

Worker-experienced formalisation: layered subjectivities and selective formalisation

The extension of state-led regulation and market competition into the organisation of annotation raises a critical question: does formalisation mitigate the precarity and invisibility long associated with this form of digital labour, or does it simply reconfigure them in new ways? Empirical evidence suggests the latter. Rather than a straightforward transition from informal crowdwork to stable employment, annotation in China today is marked by a heterogeneous field. In-house teams at major platforms, certified vendor firms, semi-formal subcontracting workshops, and online crowd platforms all coexist, reflecting multiple and uneven pathways of incorporation. For some annotators, contracts, benefits, and professional titles have conferred a stronger sense of legitimacy and visibility. For others, annotation continues to resemble “ghost work” within multi-tiered supply chains, where project-based churn, metricised management, and proprietary lock-in reproduce dependency and replaceability. In this sense, formalisation does not unfold as a uniform or linear process but as a selective incorporation of labour into institutional frameworks, extending protections to certain segments while leaving large portions precarious. This uneven configuration illustrates how formal and informal economies are not sequential stages but interlocking layers, serving both state imperatives of governance and corporate demands for efficiency.

For some, their work remains akin to a screw (螺丝钉), a replaceable instrument that performs repetitive and mechanical tasks. For others, it is more like a sail (船帆), driving the progress of AI systems and ensuring that AI stays on the right track. This divergence in perception is closely tied to the ways formalisation has reshaped workers’ everyday experiences. Many annotators described a shift from viewing annotation as fragmented, temporary crowd work to seeing it as a legitimate job. Wang compares his earlier unstable work with his current experience:

Back then, it felt like a very uncertain job. I didn’t even know how to explain it to my family, so I would just tell them I was doing some online gigs. But now, it really feels like going to work. I leave home in the morning, work during the day, sometimes stay late for overtime, and go home to sleep at night. We used to be outsiders, figuring things out in our own ways (野路子), but now we are part of the regular workforce (正规军).

Wang's reflections echo the sentiments shared by many annotators we interviewed. During these conversations, they frequently described how their formalised jobs give them a stronger sense of doing “proper work” (正经工作). This desire for legitimacy is particularly significant in the broader context of data annotation in China, where many workers transition from informal, precarious roles to more structured employment. The shift toward formalisation not only offers material benefits, such as social and medical insurance, but also shapes their self-perception and professional identity. Those working on video annotation, for instance, enthusiastically described how their months of hard work contribute to the quality of the videos they scroll through daily on social media platforms. Similarly, those involved in self-driving annotation spoke with pride about their role in shaping the autonomous driving systems of China's well-known EV companies. One annotator shared, “Now I can tell my family I’m contributing to the X-brand autonomous driving project, and it feels more legitimate and gives me a sense of accomplishment.”

This growing awareness of the end-use of their work, whether in consumer platforms or advanced AI systems, further reinforces their perception that data annotation has transitioned from invisible, piecemeal labour to a recognised, valuable contribution. This identification is also intensified by the government-organised certification and the establishment of professional ranks, as mentioned previously, which frame data annotation as skilled labour rather than precarious, casual work. For instance, the “AI Trainer” certification is structured into five professional ranks and requires workers to meet specific competency standards. Yuan, a 23-year-old annotator who had recently completed the certification process, told us:

I used to feel like a part-time worker, like a temp. But after obtaining the AI trainer level 5 and level 4 certifications, it feels like I’m actually building a career. It's not just about marking images or tagging text anymore. Now I’m part of something bigger, more professional.

This shift in how workers perceive their role is indicative of a broader trend toward professionalising what was once considered a low-skill job. However, not all workers view this shift positively. Liu, who had been in the industry for four years, provides a more sceptical perspective:

It's good that there are certifications now, but I’m not sure how much they can really bring about change. We’re still stuck in the same low-paying jobs. The certification makes us feel official, but the reality is that the work hasn’t changed much. We still don’t have a real career progression.

Liu's comment epitomises a shared tension between the professionalisation of data annotation through certification and the industry's persistent lack of upward mobility. While certifications contribute to a more established professional identity, they do not necessarily translate into meaningful career development or higher income. Tao, a supervisor in an official data annotation centre, explains:

We’ve started offering more training opportunities, and workers now have official titles like “AI Trainer.” But beyond that, there aren’t many opportunities for promotion. Most people stay in the same position for years. There's not much upward mobility. That's why we keep recruiting new staff, because the older ones eventually will leave the industry.

This stagnation in career advancement has led many annotators to confront a gap between their initial aspirations and the long-term realities of annotation work. While formalisation may offer the appearance of professional growth, many workers find themselves trapped in low-wage, specialised roles with limited prospects. This tension between aspiration and constraint is often reflected in the metaphors workers use to describe their work, such as “sails” and “screws.” Jiang, a 24-year-old data annotator, describes his experience as a screw:

I had big dreams when I started, like sails pushing me forward. But after a while, you realise you’re nothing but a screw in the machine, small, replaceable, and with no real control over where you go.

While many annotators identify with the “screw” metaphor, viewing their work as mechanical and easily replaceable, others hold a more optimistic outlook. Jing offers a contrasting perspective:

People often say we’re like screws, something insignificant, easily replaced if broken. But I don’t see it that way. If I must describe it, I think we’re more like the sail of a ship. The sail provides power to move forward, but it also helps steer the ship in the right direction. Take what I do with social media annotation. Our work shapes what the public sees, and that, in turn, influences social values. So, I actually feel what we do is quite important.

Jing's reflections point to a broader undercurrent among some annotators who understand their labour not merely as repetitive tasks but as interventions that shape digital visibility and public discourse, situating them within the very infrastructures that mediate social information flows. Such divergent interpretations underscore the ambivalent positioning of annotators in China's data economy: simultaneously the “screws” that sustain the apparatus and the “sails” that symbolically propel its expansion. As annotation becomes further institutionalised as infrastructural labour, the challenge ahead lies in whether such formalisation can move beyond selective incorporation to deliver substantive gains in security, recognition, and mobility—conditions necessary for building a more sustainable and equitable data infrastructure.

Conclusion: towards state-embedded AI stacks

This article has examined how data annotation is being formalised in China and with what consequences, drawing on multi-sited ethnography and 22 in-depth interviews across six cities. By following annotators across platforms, vendor firms, in-house teams, and state-backed hubs, we have shown that formalisation does not unfold as a linear passage from informality to stable employment. Instead, it takes the form of a layered, selective incorporation shaped by intersecting state, market, and worker logics. The result is a re-territorialisation of data labour into nationally governed infrastructures, a domestically consolidated yet uneven stack that recasts annotation as infrastructural work while preserving elements of precarity and control.

At the state level, credentialisation, regulatory consolidation, and the spatial anchoring of work within designated data hubs embed annotation into a compliance apparatus centred on data security and sovereignty. The recognition of AI Trainers, tiered skill standards, mandated supervision structures, and compliance-oriented training reposition annotation as strategic infrastructure. State inspection, reporting requirements, and bounded workplaces materially and symbolically reclassify annotation from hidden microwork to governed labour, formalising pipelines and constraining reliance on transnational crowd-sourcing models.

At the market level, competitive pressures for accuracy, speed, and reliability drive procedural standardisation, task specialisation, multi-tier quality control, and algorithmic oversight. Firms translate fluctuating client demands into versioned SOPs, audit trails, and stratified roles (PM, QA, reviewers), while spatial reorganisation moves operations to lower-cost regions and state-supported hubs. Data compliance becomes both a technical requirement and a commercial asset, reinforcing on-shore pipelines and favouring large, process-capable operators that can signal dependable throughput and governance readiness.

At the worker level, formalisation delivers contractual recognition, social insurance, and a stronger claim to legitimacy, as workers describe moving from outsiders to members of the regular workforce. Yet these gains are tempered by intensified surveillance, constrained autonomy, and limited mobility, frequently mediated by proprietary tools and taxonomies that lock skills to specific firms. Annotators oscillate between feeling like screws sustaining the machine and sails propelling and steering AI systems, an ambivalence that reflects how they become visible as infrastructure while still remaining governable, replaceable, and tightly measured.

Conceptually, we argue that China's trajectory marks a shift from a deterritorialised planetary stacking order to a state-embedded AI stacking order. In this shift, formalisation becomes the principal mechanism through which re-territorialisation takes place. As an infrastructural occupation located in designated hubs and governed by national data laws, the institutionalised annotation industry reconfigures how borders, sovereignty, and labour organisation intersect in the AI economy. Global production logics persist, but they are rescaled and reconfigured through nationally specific governance that compresses platform, vendor, and in-house layers into a domestic ecology. This configuration selectively formalises labour, deepens compliance architectures, and redefines infrastructural visibility. It is not a full passage from informality to stability; it is a reorganisation of control, recognition, and value within a hybrid, nationally anchored stack. Annotation has thus become a crucial infrastructural project of national governance, reinforcing a vision of a state-directed data economy that extends beyond a digital China to shape the global order of AI and data governance.

Future studies should explore how these formalised data annotation workers cope with and navigate continuously updating annotation technologies. Will Chinese annotators move up the AI value chain, or will they become redundant and replaceable again? How does China's re-territorialisation of the data economy affect the global landscape of AI development and data governance? How does this re-territorialisation of data and data annotation contribute to China's edge in the global AI contest (e.g., the recent success of DeepSeek and Apollo Go)? The answers to these questions may be essential not only for the future of work but also for the future of our shared planet.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Social Science Fund of China (grant number 25CXW056).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.