Abstract

As digital platforms increasingly shape access to information, their control over platform data has profound implications for research, innovation, and accountability. This article presents the first comprehensive empirical study of how digital platforms have used contractual terms to enclose publicly available data over the past decade. Analyzing the Terms of Use of 279 platforms as of 2012 and 2022, we identify a systematic increase in both restrictive and permissive clauses governing access to data for research purposes. We find that platforms adopted a dual contractual strategy: restrictive terms limit third-party access to data for research, while permissive terms preserve the platform's own ability to use and share data with selected partners. This strategy emerged alongside the rise of consent-based privacy regulation, especially the EU's General Data Protection Regulation (GDPR), which, despite its objective of protecting users, inadvertently expands platforms’ discretion over data access. Our findings suggest that privacy regulation has facilitated, rather than curtailed, platform data enclosures, enabling platforms to assert de facto control over data in the public domain. We contribute to the literature on data governance, platform regulation, and contract theory by documenting how platforms adapt boilerplate contracts in response to regulatory change and leverage privacy obligations to restrict independent research. These findings carry significant implications for legal and policy debates surrounding data access, transparency, and the future of platform accountability. They underscore the need to regulate the privatization of publicly available data to ensure equitable access for research serving the public interest.

Keywords

Introduction

On 25 March 2024, the US District Court for the Northern District of California rejected X Corp.'s claim against the non-profit Center for Countering Digital Hate. X alleged that the Center had breached X Corp.'s Terms of Service (ToS) by collecting public tweets in order to document an increase in hate speech on X since its purchase by Elon Musk. Similar attempts to stifle platform accountability research have been more successful. In 2019, for example, Facebook (now Meta) halted a disinformation study conducted by New York University's (NYU) Cybersecurity for Democracy (Vincent, 2021) by sending a cease-and-desist letter claiming that the study team's use of a third-party application to collect data hosted on Meta's servers violated its ToS (Chiauzzi and Wicks, 2019).

As platform data takes on a more central role in research and development, legislators, courts, and scholars have become increasingly concerned about the constraints on data access for scientific purposes, particularly when access to publicly available data is restricted by contractual barriers imposed by digital platforms (Fiesler et al., 2020; Huq, 2021; Kadri, 2021; Mancosu and Vegetti, 2020; Rubinfeld and Gal, 2017). In the EU, the Digital Services Act (DSA) introduced a new procedure for obtaining access to data for specific research purposes. Similarly, the proposed Platform Accountability and Transparency Act (PATA) in the United States would require large digital platforms to allow data access for research purposes. Meanwhile, over nearly three decades of litigation, US courts have issued multiple, sometimes conflicting, opinions on the enforceability of contractual terms restricting data access (Solove and Hartzog, 2025; Perrino, 2023).

To date, only one study, published in 2020, has investigated platforms’ use of their Terms of Use (ToU) (including their ToS, privacy policies, community guidelines, and similar end-user agreements) to restrict access to data (Fiesler et al., 2020). This article contributes to this literature by providing new empirical evidence on contractual restrictions affecting access to data for research. It examines how data protection regulations intersect with the drafting of platforms’ ToU and how this dynamic shapes the scope of permissible access to platform data for research purposes. Analyzing the ToU of 279 digital platforms, we identify 12 types of contractual clauses related to data access. We assess their prevalence and practical implications, and demonstrate how platforms invoke privacy obligations to block third-party data collection and create “data enclosures,” which, in effect, restrict access to data.

This article is organized as follows. The “Background” section clarifies that, from a legal perspective, platform data largely remains in the public domain and should, in principle, be freely accessible for researchers to collect and use. Nevertheless, platforms exploit the interaction between their ToU and data protection regulations to effectively enclose publicly available data. The “Data and methodology” section outlines our research methodology. Our findings, presented in the “Results” section, demonstrate the increasing prevalence of ToU restrictions on third-party access to data, even as platforms increasingly rely on their ToU to secure broad rights to use the data as they see fit. The “Discussion” section considers these findings and their implications. Specifically, it describes an unintended consequence of data protection regulation: platforms’ misuse of users’ rights to promote their own business interests. By employing a restrictive strategy in their ToS and community guidelines to limit researchers’ access to data, platforms can avoid unwanted scrutiny and maintain their competitive edge. At the same time, platforms apply a facilitating strategy in their privacy policies, enabling themselves, selected affiliates, and paying customers to use the data for platform-approved research that aligns with the platforms’ business interests.

Background

Platform data scraping and its academic significance

Lawyers and social scientists view data differently. From a legal perspective, data is commonly understood as recorded “facts about a situation, person, [or] event” (Cambridge Dictionary): discrete “units of information” governed by privacy and property laws (Woods, 2016). Privacy laws focus on whether data reveals “information relating to an identified or identifiable natural person” (GDPR, Art. 4), that is, whether it is “personal” (Viljoen, 2021). Intellectual property laws treat data as an intangible economic asset, raising questions of ownership and permissible use (Determann, 2018). From an economic perspective, data is inherently non-rivalrous: its use by one person does not deprive others of the opportunity to use it. Therefore, the usual justifications for assigning property rights do not apply to data.

In contrast, social scientists increasingly view data not as mere facts, but as constructed artifacts shaped by context and human choice, such as platform design. Data is produced—a context-dependent representation of reality that is open to interpretation (Kitchin, 2014; Marres, 2020; Rogers, 2021). Recently, legal scholarship has started to embrace these insights, viewing data as a form of power—an instrument of control underpinning decision-making and market dominance (Fahey, 2021). This view emphasizes data's constructed nature and the impact of “data bias,” highlighting the importance of paying careful attention to how data is collected and used (Zarsky, 2014).

This article focuses on legal barriers to accessing publicly available platform data (what Richard Rogers calls “native” digital data), such as posts, links, hashtags, and images shared by users on digital platforms (Rogers, 2021). Such platforms include social media websites (e.g. Facebook and Twitter/X), as well as digital marketplaces (e.g. Amazon and eBay), online video platforms (e.g. Rumble), image-sharing platforms (e.g. Pinterest), and social bookmarking services (e.g. Reddit and Plurk).

Although data is non-rivalrous and, in principle, easy to duplicate, digital platforms control access to unique and unmatched data sets (Rubinfeld and Gal, 2017). Publicly available platform data is especially valuable for research. As Noortje Marres notes: [Platforms] have rendered social life reportable, interpretable, shareable, and influenceable in potentially new ways. […] The very same digital transformations that have made available new types of social data and enabled the application of new computational methods in social research are equally affecting the role of locatedness, embodiment, latency, atmosphere, and so on in social life, in short, its contextual—or […] “situational”—character. (Marres, 2020: 2)

Platform data has thus become a “living lab” of invaluable real-time data, providing researchers with opportunities to reach new frontiers of knowledge and understanding, and challenging existing research methodologies (Rogers, 2021). It serves a wide array of research needs across various disciplines: Computer scientists use it to train natural language processing (NLP) and artificial intelligence (AI) models (Perriam et al., 2019), while health researchers deploy it to analyze mental health and substance abuse patterns (Nebeker et al., 2020). Platform accountability research leverages platform data to investigate such critical issues as political ad targeting (Horwitz, 2020), social polarization (Israeli and Tsur, 2022), discrimination (Edelman et al., 2017; Laouénan and Rathelot, 2022), and platform bias (Patro et al., 2022).

In the social sciences and the humanities, Rogers (2021) and Marres (2020) use platform data to develop innovative methodologies that blend quantitative and qualitative approaches to create nuanced “metapictures” and “situational analytics” that reveal the complex dynamics of digital social environments. These and other critical perspectives that emphasize data's contextual and situational character (Gitelman, 2013) highlight researchers’ need for independent access to platform data, rather than platform-mediated access such as that offered through Facebook Open Research and Transparency (FORT).

Scraping publicly available platform data: The legal landscape

Notwithstanding the importance of platform data for research and development in the era of AI and large language models (LLMs), digital platforms employ various self-imposed contractual and technological measures to keep publicly available data under their exclusive control (Schwalbach and Mauer, 2025). They do so precisely because they lack a clear legal basis for claiming any proprietary rights in such data.

To begin with, trade secret laws are largely irrelevant to publicly available platform data, because such data is, by definition, not kept secret (18 U.S.C. § 1839).

Additionally, copyright laws exclude data from their protectable subject matter (Determann, 2018). Because data is a building block for future innovative and creative works, granting rights in it would contradict the primary objective of intellectual property (IP) legislation: promoting scientific progress and innovation (Determann, 2018). Only compilations of data exhibiting sufficient originality in their selection and arrangement may qualify for copyright protection (Determann, 2018; Huq, 2021). Such protection, however, does not extend to the underlying facts (17 U.S.C. § 102(b)). Moreover, even when platform data is sufficiently original to qualify for copyright protection (notably certain user-generated content), copyright laws include exceptions and limitations that permit the use of such content for socially beneficial purposes, including research (17 U.S.C. § 107).

Finally, as the US District Court for the Northern District of California recently explained in X Corp. v. Bright Data, platforms typically obtain only a non-exclusive license to their users’ content, primarily to limit their legal liability for harm caused by user-generated content (UGC). Consequently, since platforms cannot assert rights in excess of those they actually acquire, they may not lawfully exclude third parties from accessing such content.

Absent IP protection, access to publicly available platform data remains subject largely to two legal frameworks: privacy law and contract law. Publicly available data that includes personal information may be subject to privacy and data protection laws (Solove and Hartzog, 2025). Even though data subjects voluntarily published such data on a publicly accessible website, they did not necessarily relinquish their privacy rights in it. In Carpenter v. United States, for instance, the Supreme Court held that individuals have a reasonable expectation of privacy under the Fourth Amendment in historical cell-site location records, even though those records trace movements through publicly observable places. Accordingly, although privacy laws have no direct bearing on questions of ownership of (or rights to) data (Determann, 2018; but see Lessig, 1999; Westin, 1967), they do offer a parallel, competing framework governing access to and use of personal data (Solove and Hartzog, 2025).

Over the past decade, the collection and use of platform data have been subjected to a heavy regulatory burden. In March 2012, the US Federal Trade Commission (FTC) published its recommendations for protecting users’ privacy in an era of rapid change (FTC, 2012). The FTC recommended that “companies […] limit data collection to that which is consistent with the context of a particular transaction or the consumer's relationship with the business, or as required or specifically authorized by law” (FTC, 2012: 27, 42). Especially noteworthy in this regard is the aftermath of the Cambridge Analytica scandal, first reported on 17 March 2018. The scandal involved personal data gleaned from around 50 million Facebook profiles, originally collected for research purposes, which was exploited by a political consulting firm hired to bolster Donald Trump's 2016 presidential campaign (Cadwalladr and Graham-Harrison, 2018; Rosenberg et al., 2018). The resulting public outcry placed personal data protection and user consent at the heart of regulators’ agenda. Most notably, Europe's General Data Protection Regulation (GDPR), which came into effect on 25 May 2018, imposes specific requirements on data controllers, such as digital platforms, to protect the interests of data subjects in their personal data.

The GDPR allows personal data to be processed only under one of six lawful bases enumerated in Article 6 (including consent and processing necessary for the performance of a contract, for compliance with a legal obligation, or for legitimate interests). The provision most relevant to the use of platform data for research is Article 13, which requires data controllers to inform data subjects of how their data will be used, how long it will be stored, and how they can exercise their data-related rights. By extension, these requirements create a consent-based regime for access to and use of personal information. Article 13 also requires controllers to disclose the recipients (or categories of recipients) of the data and any intended transfer of the data to non-EU countries, together with the safeguards governing such transfers; these safeguards may take the form of an agreement between transferor and transferee that incorporates the privacy protections of EU legislation and allows data subjects to enforce those rights as third-party beneficiaries. Article 28 further subjects any transfer of data from controllers to processors to a written contract that not only ensures controllers’ power and discretion over processors’ activities and any onward transfer of data to sub-processors, but also requires controllers to verify processors’ compliance with the GDPR.

As for the transparency and accountability obligations that may support platform data research, Article 5 of the GDPR requires data controllers to process personal data transparently, to adopt appropriate technical and organizational measures, and to be able to demonstrate compliance with the law.

Finally, the GDPR grants data subjects a right to compensation for damage caused by platforms’ non-compliance with the GDPR, as well as the right to withdraw their consent to data processing at any time. GDPR violations may also result in administrative fines of up to four percent of the violating platform's total worldwide annual turnover.

In addition to privacy laws, publicly available data is governed by self-imposed measures, namely technological barriers and contractual restrictions that define the scope of permissible data access (Elkin-Koren et al., 2025). Specifically, platforms regularly deploy various contractual limitations in their ToS, privacy policies, or community guidelines to restrict researchers’ access to platform data (Fiesler et al., 2020).

Next, we explain how these two legal frameworks, privacy law and contract law, could be exploited to create data enclosures.

Prior research, research hypotheses, and significance

Although platforms have minimal legal claims to the publicly available data hosted on their servers, they possess strong commercial and reputational incentives to restrict data access. Exercising exclusive control provides platforms with competitive advantages over data-deprived newcomers (Rubinfeld and Gal, 2017), enables selective sharing with partners and paying customers (Huq, 2021), and shields platforms from the regulatory scrutiny that may result from platform accountability research (Kadri, 2022). According to the literature, these commercial and reputational interests likely influence how platforms craft their ToU.

This study explores the contractual restrictions on access to data imposed by digital platforms. It builds on two complementary bodies of research that support the hypotheses detailed below.

The first examines platforms’ use of contractual terms to restrict data access. While multiple studies have analyzed platforms’ contracts (Karanicolas, 2021; Pałka, 2025; Suzor, 2018; Wiśniewska and Pałka, 2023), only one prior study—Fiesler et al. (2020)—has specifically investigated how platforms employ their ToU to restrict data access and create what we term “data enclosures.” Analyzing the ToS of 117 social media platforms as they appeared in November 2017, Fiesler et al. identified four types of restrictive terms: three that directly prohibit data collection (manual, automatic, or any type of collection) and one requiring users’ permission prior to data collection. They found that only 25 platforms (21%) included none of these four types of restrictive terms.

Our study extends this foundational work in three critical ways. First, we examine a broader range of platforms beyond social media sites, including digital marketplaces, online video platforms, image-sharing platforms, and social-bookmarking services. Second, we significantly expand the analytical framework, identifying 12 distinct types of access-to-data terms rather than Fiesler et al.'s four. Third, our temporal scope covers the period between 2012 and 2022, which was marked by pivotal developments in data governance, including the Cambridge Analytica scandal and the implementation of the GDPR. This scope enables us to trace the evolution of platform ToU and assess how significant regulatory and ethical developments influenced platform drafting practices.

The second body of research on which this study builds examines how regulatory changes shape contract drafting practices, providing further theoretical support for our hypotheses that platforms adapt their ToU in response to evolving legal and ethical environments. Contracts serve as flexible mechanisms for governing market relationships. This is particularly true of boilerplate contracts that are drafted unilaterally, offered on a “take it or leave it” basis, and typically grant drafters discretion to unilaterally modify terms (Furth-Matzkin and Sommers, 2020; Sales, 1953; Wilkinson-Ryan, 2014). Research demonstrates that digital platforms frequently amend their ToU (Elkin-Koren et al., 2022), and that they do so in response to shifts in the applicable legal and ethical landscape (Choi and Gulati, 2004, 2006), changes in party characteristics and relationships (Marotta-Wurgler and Taylor, 2013), and evolving market dynamics (Bar-Gill and Davis, 2010; Becher and Benoliel, 2021; Ben-Shahar and Pottow, 2006; Davis and Marotta-Wurgler, 2020; Taylor, 2011).

Drawing on these two bodies of literature, we expect platforms to strategically adapt their ToU in response to relevant changes in their legal and regulatory environment. This expectation is particularly well-founded given the evolution of privacy law during our study period. The GDPR, discussed above, and sector-specific US privacy legislation, including the Fair Credit Reporting Act (FCRA) and the Health Insurance Portability and Accountability Act (HIPAA), establish strict consent-based frameworks that restrict the use of personal data. These regulatory developments create opportunities for platforms to leverage the interplay between contractual terms and privacy obligations to justify restrictive data access policies.

Building on these theoretical foundations, we advance the following hypotheses: (1) digital platforms make extensive use of contractual mechanisms to enclose the data they host; (2) between 2012 and 2022 platforms significantly revised the data access terms in their ToU to align with evolving legal and ethical standards; and (3) these changes were strategically designed to serve platforms’ commercial and reputational interests by leveraging privacy clauses to restrict third-party access to publicly available data while preserving their own discretionary right to use and share such data.

Our study makes four distinct contributions to the literature and practice of data access. First, we provide the first comprehensive empirical analysis of how platforms systematically adapted their ToU in response to major regulatory changes between 2012 and 2022. This temporal analysis reveals how platforms strategically exploit regulatory developments to advance their interests while restricting third-party research access.

Second, we offer policymakers empirical evidence of regulatory gaps and their unintended consequences (Dari-Mattiacci and Marotta-Wurgler, 2022). Our findings demonstrate how platforms have leveraged privacy regulations designed to protect users to create data enclosures that serve corporate rather than public interests (Kadri, 2022). This evidence is particularly timely given ongoing regulatory efforts, such as Article 40 of the EU's Digital Services Act and the proposed US Platform Accountability and Transparency Act, which seek to mandate research access to platform data.

Third, we contribute to contract theory by documenting how platforms engage in “drafting in the shadow of regulation” (Mnookin and Kornhauser, 1979), that is, actively exploiting regulatory frameworks rather than merely complying with them (Hwang and Jennejohn, 2022). Our findings suggest that courts should interpret platforms’ ToU within their regulatory context rather than as neutral contractual arrangements between equal parties, an approach reflected in recent decisions such as X Corp. v. Bright Data.

Fourth and finally, the risk of legal liability is driving researchers to seek out new scraping techniques (Dryer and Stockton, 2013; Schwalbach and Mauer, 2025), such as the counter-archiving technique used to study Facebook after it had shut down researchers’ access to public data through its application programming interface (API) (Ben-David, 2020) and the API-bypassing methodology based on a screen-scraping routine for public Facebook posts (Mancosu and Vegetti, 2020). Our study can help researchers further develop methodologies that account for these legal constraints while pursuing accountability research and other socially beneficial investigations. In turn, it provides practical guidance for navigating the evolving contractual landscape of platform data access (Perriam et al., 2020).

Data and methodology

Our study investigates the contractual frameworks constructed by platforms to control access to and the use of platform data for non-commercial research purposes, as reflected in digital platforms’ ToU.

To compose a thorough and inclusive list of digital platforms, we followed Fiesler et al. (2020) by first including all platforms in Wikipedia's 2022 lists related to digital platforms; namely, the lists of social-networking services, online marketplaces, online video platforms, image-sharing websites, and social-bookmarking sites (a total of 333 digital platforms). After excluding platforms that were no longer operational, platforms whose ToU were inaccessible via the Wayback Machine (see below) or their websites, and platforms irrelevant to our research context (e.g. airline ticket companies), our list comprised 279 platforms.

For each platform, we analyzed the ToU in effect at the data collection point (1 July 2022) and, using the Wayback Machine, the ToU in effect as of 1 July 2012. For platforms that have both a mobile (app) and a web-based interface, we analyzed only the web-based ToU. We selected 1 July 2012 because 2012 is the year in which the European Commission proposed the GDPR, which significantly altered the ethical, legal, and regulatory landscape applicable to personal data protection. Specifically, and as discussed in the “Background” section, the GDPR's Articles 13 and 28 create a regime for the collection, use, and transfer of publicly available platform data that is based on user consent.

The ToU were collected manually. For platforms that had not yet been established in 2012, or that did not post the relevant contracts on their website, we used the earliest available version of the contract. If a platform had blocked the Wayback Machine from archiving its website, we used past versions of its contracts posted on its website, where available. Finally, for non-English ToU, we used Google Translate to read and compare the legal documents.

The comparison between the 2012 and 2022 versions of platforms’ ToU focused on identifying any additions to, changes in, or deletions from the language of terms implicating access to data. To facilitate coding, we conducted a preliminary reading of the ToU of four platforms (Facebook, LinkedIn, Google, and Yelp) to identify 12 types of terms related to data access. We then distinguished between terms that restrict access to data for research and those that facilitate such access, even if only at the platforms’ sole discretion, since such facilitating terms could be interpreted in a manner favorable to researchers.¹ We also distinguished between terms that have a direct effect on access to data and those that have an indirect effect.

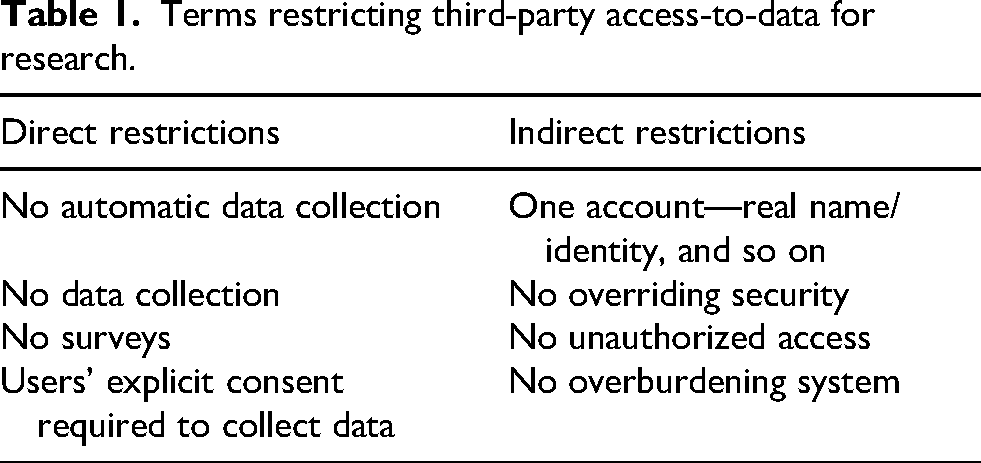

In total, we identified eight types of terms that directly or indirectly restrict access to data and four that facilitate such access. Four types of terms directly restrict access to data:

Four other types of terms place indirect restrictions on researchers’ ability to collect platform data:

Terms restricting third-party access-to-data for research.

We also identified four types of terms that may facilitate researchers’ data access. Two terms directly support the collection of data for research:

Terms facilitating access-to-data for platforms research.

After collecting all platforms’ ToU in both their 2022 and 2012 versions, we provided our team with detailed guidelines for identifying and categorizing each type of term to ensure consistency across all reviewers. Our team then manually read each contract to create a spreadsheet detailing whether and which of the 12 access-related terms appeared in each platform contract's 2012 version, its 2022 version, both, or neither.

We recorded each time any one of the 12 types of terms appeared in any particular contract, including in different contracts from the same platform, as well as the number of platforms that use any one of the terms in one (or more) of their ToU, in both 2012 and 2022. We then assessed whether the change in the language between the two versions was substantial or minor. Changes in the scope of the restriction were deemed substantial, while wording-only changes with no relevant legal effect were considered minor. To increase accuracy, we employed a text comparison tool for each section of the contract, allowing us to visualize the changes between the two versions. Our findings were documented in an Excel spreadsheet, indicating for each platform in the sample whether any one of its contracts contained each of the 12 terms (assigned the number 1) or not (assigned the number 0), as depicted in the spreadsheet extract shown in Figure 1.

Excel spreadsheet extract.
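The coding scheme described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors’ actual workflow (which used an Excel spreadsheet): the class and function names, the platform names, and the positional indices standing in for the 12 term types are all hypothetical. Each contract is coded as a row of 12 binary indicators, and a platform is coded 1 for a term type if at least one of its contracts contains that term, which is why contract-level and platform-level counts can differ.

```python
from dataclasses import dataclass

N_TERMS = 12  # number of access-related term types in the coding scheme


@dataclass
class ContractCoding:
    platform: str      # platform name (hypothetical in the example below)
    contract: str      # e.g. "ToS", "privacy policy", "community guidelines"
    year: int          # 2012 or 2022
    terms: list[int]   # 12 indicators: 1 = term type present, 0 = absent

    def __post_init__(self) -> None:
        # Reject rows that do not follow the 0/1 coding convention.
        if len(self.terms) != N_TERMS or any(v not in (0, 1) for v in self.terms):
            raise ValueError(f"invalid coding for {self.platform} / {self.contract}")


def platform_level(codings: list[ContractCoding], year: int) -> dict[str, list[int]]:
    """Collapse contract rows into one row per platform for a given year.

    A platform is coded 1 for a term type if at least one of its contracts contains it.
    """
    result: dict[str, list[int]] = {}
    for c in codings:
        if c.year != year:
            continue
        previous = result.get(c.platform, [0] * N_TERMS)
        result[c.platform] = [max(a, b) for a, b in zip(previous, c.terms)]
    return result


def term_counts(rows: list[list[int]]) -> list[int]:
    """Number of rows (contracts or platforms) containing each term type."""
    return [sum(row[i] for row in rows) for i in range(N_TERMS)]


# Hypothetical toy data, not drawn from the study.
codings = [
    ContractCoding("PlatformA", "ToS", 2022, [1, 1] + [0] * 10),
    ContractCoding("PlatformA", "privacy policy", 2022, [1] + [0] * 7 + [1, 1, 0, 0]),
    ContractCoding("PlatformB", "ToS", 2022, [1] + [0] * 11),
]

contract_counts = term_counts([c.terms for c in codings if c.year == 2022])
platform_counts = term_counts(list(platform_level(codings, 2022).values()))
print(contract_counts[0], platform_counts[0])  # 3 2
```

In the toy data, the first term type appears in three contracts but only in two platforms, because PlatformA includes it in both its ToS and its privacy policy.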

Results

Access to data for scientific purposes: Main findings

We begin by providing an overview of the current state of access to platform data for non-commercial research purposes. We found no legally relevant terms that fell outside the 12 types identified in our initial reading of the four platforms’ ToU. Our findings support our main hypothesis that digital platforms extensively use contractual measures to enclose the data hosted on their servers. Of the 279 platforms reviewed, 208 (74.6%) have contractual terms that directly restrict access to data by explicitly prohibiting the collection of data, the automatic collection of data, or both. Most platforms also incorporate one or more terms that indirectly restrict access to data, placing contractual limitations on unauthorized access to data (59%), the overriding of the platform's technological security measures (57%), and the overburdening of the platform's computers (53%).

Of the 71 platforms (25.4%) that do not explicitly restrict access to data, 47 (16.8%) employ one or more contractual terms that indirectly achieve a similar end: requiring researchers to obtain each user's explicit consent before accessing and collecting data; restricting unauthorized access, the overriding of security measures, or the overburdening of the platform's computers; or limiting users to a single account and obliging them to state their real name when using the platform. Thus, only a small minority of platforms (the remaining 24, or 8.6%) place no direct or indirect contractual restrictions on the collection of data for research purposes.
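The shares reported in the two preceding paragraphs follow directly from the platform counts. The short Python sketch below, whose variable and function names are illustrative, recomputes them and makes explicit that the 8.6% figure corresponds to the platforms left once the 47 with only indirect restrictions are subtracted from the 71 without direct restrictions.

```python
TOTAL = 279          # platforms in the sample
DIRECT = 208         # platforms with terms directly restricting data collection
INDIRECT_ONLY = 47   # platforms with only indirect restrictions

no_direct = TOTAL - DIRECT                 # platforms without direct restrictions
unrestricted = no_direct - INDIRECT_ONLY   # platforms with no restrictions at all


def share(n: int, total: int = TOTAL) -> float:
    """Percentage of the sample, rounded to one decimal place."""
    return round(100 * n / total, 1)


print(no_direct, unrestricted)  # 71 24
print(share(DIRECT), share(no_direct), share(INDIRECT_ONLY), share(unrestricted))
# 74.6 25.4 16.8 8.6
```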

The platforms that do not contractually restrict access to data have little in common. They include sites such as Readgeek, a book recommendation platform that may have little commercial or reputational interest in restricting access to data, as well as platforms that might be expected to restrict access to data for research, such as Gentlemint, sometimes described as “Pinterest for Men” (Knapp, 2012), and Gaysir, which, as its website explains, is a Norwegian social network aimed at the LGBTQIA

Alongside the widespread use of contractual terms to restrict access to data for research, platforms also commonly include provisions that indirectly facilitate their own research efforts and enable data sharing with selected partners. Notably, 226 platforms (81.9%) include

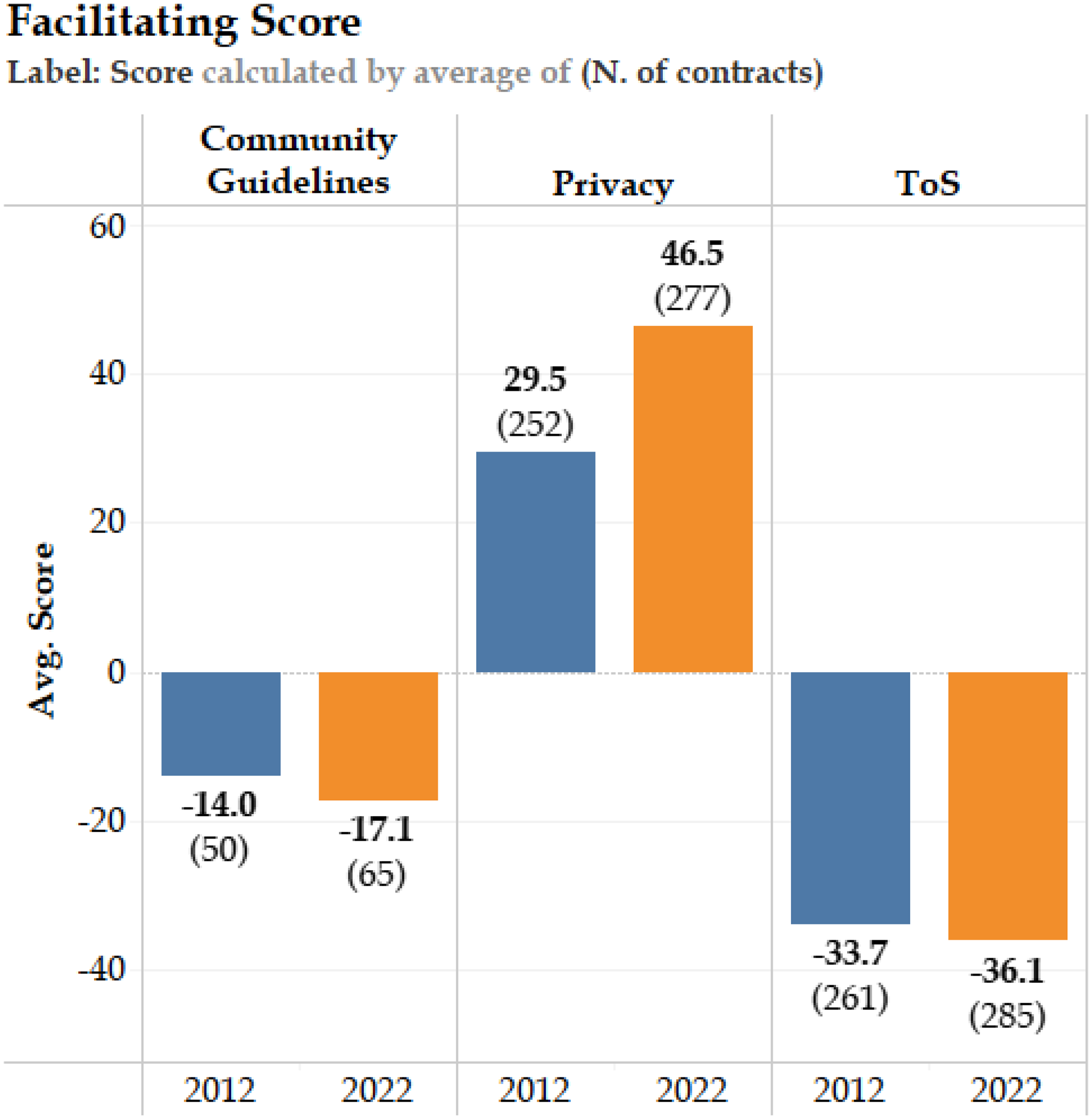

Finally, our findings also reveal a division of labor among platforms’ different user agreements (Suzor, 2018). To assess this result, we assigned each contract −12.5 points for each restricting term type it contained (of the eight types that directly or indirectly restrict access to data) and +25 points for each facilitating term type it contained (of the four types that directly or indirectly support such access). Thus, a contract that included all 12 types of terms received a score of 0; a contract that included all of the terms supporting access to data and none restricting it received a score of 100; and a contract that included all of the terms restricting access to data and none supporting it received a score of −100. As depicted in Figure 2, ToS had an average score of −36.1, whereas privacy policies had an average score of +46.5. This, we suggest, demonstrates that platforms use different contracts to speak in different voices. Specifically, while platforms use their ToS agreements to enclose data, employing contractual measures to deny others access to and use of the data hosted on their servers, they use their privacy policies to carve out exceptions to the regulatory and contractual restrictions placed on the use of data. In turn, this ensures that only they, their affiliates, and their chosen (paying) customers can access and use the enclosed data as they see fit.

Facilitating scores.

Changes over time: The evolution of terms controlling access to platform data

We used two types of comparisons to analyze the evolution of contractual terms controlling access to platform data for research purposes. First, at the contractual level, we compared how frequently platforms’ ToU included terms that restrict (or permit) access to data for academic purposes in July 2012 and in July 2022. Overall, we examined 567 contracts. Second, at the platform level, we compared the number of platforms that included access-restricting (or -permitting) terms in at least one of their contracts in 2012 and 2022. Overall, this analysis included 264 platforms.

The findings of our first analysis show a slight but statistically significant increase in the use of all types of access-restricting terms in platforms’ ToU between 2012 and 2022. In particular, we found a 22% increase in the number of

Much more substantial was the increase in terms that may facilitate platform data research. The largest increase occurred in

Cross-contract comparison.

The findings of our second analysis show a similar increase in the number of platforms (as opposed to contracts) that include, in at least one of their contracts, terms that directly or indirectly facilitate access to platform data for research purposes. The largest increase was in the use of

We additionally found statistically significant, but more modest, increases in the number of platforms that restrict access to data for research. In particular, between 2012 and 2022, the number of platforms that included a

Cross-platform comparison.

The above findings suggest that, alongside a significant increase in terms that directly or indirectly support platform data research, there was a parallel but smaller increase in terms that restrict platform data research. These findings, however, should be viewed against the baseline prevalence of terms facilitating or restricting access to data for academic purposes. For example, while the percentage-point increase in the number of platforms that included

Furthermore, recall that there were twice as many types of terms that restrict access to platform data (eight) as types that support it (four). Accordingly, and as already mentioned, to explore the impact of changes in platforms’ drafting practices over the 10-year period, we examined the degree to which each type of contract enabled or restricted platform data collection by assigning +25 points to each term that facilitated access to platform data and −12.5 points to each term that restricted such access. This weighting gives the restricting and the facilitating terms the same maximum weight (100 points each).

Our findings, depicted in Figure 1, show that the tendency of both community guidelines and ToS to restrict platform data research increased between 2012 and 2022: Community guidelines received scores of −14 in 2012 and −17.1 in 2022, while ToS received scores of −33.7 in 2012 and −36.1 in 2022. This suggests that platforms’ ToS, which predominantly define the bilateral relationship between platforms and their users (including researchers), have taken a more restrictive stance in relation to platform data collection.

In contrast, privacy policies became more supportive of platform data collection, with a score of 29.5 in 2012 and 46.5 in 2022. This means that platforms’ privacy policies, which unilaterally define what data controllers may or may not do with their users’ personal data, have become substantially more supportive of platform data collection over time. Taken together, these findings support our second and third hypotheses: that platforms deploy their ToS to enclose data while using their privacy policies to define the scope of permissible data collection in their own interest, and that these practices have proliferated over time. As we explain in the following subsection, our study design cannot establish a causal link. Nevertheless, the correlation between our findings and the changes we describe in platforms’ regulatory environment suggests that these results may represent an unintended, albeit anticipated, consequence of privacy regulations such as the GDPR. The GDPR's consent-oriented approach expands and strengthens platforms’ ability to control access to data via private ordering.

Discussion

Contractual response to regulatory changes

When viewed as a whole, our findings demonstrate how and why digital platforms, as profit-driven private entities, structure their contractual relationships with their users to simultaneously minimize legal risks and maximize opportunities to monetize and leverage data for business purposes. As we discuss below, platforms’ ToU have been designed to maintain control over third-party access to data while safeguarding the platforms' right to use that data, regardless of the regulatory developments.

In particular, our findings illuminate the possible influence that major regulatory and ethical changes can exert on platforms’ contracts. We identified significant changes to platforms’ ToU governing data access between July 2012 and July 2022. While we cannot establish a definitive causal relationship, these changes correspond with the growing emphasis on personal data protection. As we hypothesized, and as we elaborate further below, the regulatory shift appears to have prompted platforms to redefine the scope of contractually permissible access to platform data for research purposes, facilitating exclusive control over publicly available data.

First, the emergence of consent-based regulatory requirements governing the use of personal data between 2012 and 2022 helps explain the proliferation of

At first glance, these terms appear to support the use of platform data for research and, in turn, potentially enhance platforms’ reputation and foster public trust. However, because the GDPR makes data sharing conditional upon users’ consent (in the absence of another basis for processing personal data), it grants platforms broad discretion to determine who may access platform data and for what purpose. Platforms have invoked user privacy claims in litigation against scrapers—such as X v. Bright Data—to justify restricting access to their data. Yet, in this case, the court was not persuaded. In rejecting X's claim that its ToU were designed to protect user privacy, Judge Alsup held that “X Corp., however, is not looking to protect X users’ privacy. It contends that ‘improper scraping … interferes with X Corp.'s own sale of its data through a tiered subscription service’ […], [yet] X Corp. is happy to allow the extraction and copying of X users’ content so long as it gets paid.”

Second, and relatedly, the introduction of new restrictions on the transfer of data outside the European Union may explain the significant increase we have identified in the use of

Third, the consent-based framework of the GDPR, alongside the public outcry following the Cambridge Analytica scandal, may further account for the increase in terms restricting access to platform data for research. This possible relationship further underscores the interaction between newly enacted consent-based privacy regulations and the simultaneous increase in terms that both limit third-party data access and permit platform-approved research. For example, the significant rise in the use of

Fourth and finally, the increased frequency of

Our findings therefore suggest that changes in privacy regulation interact profoundly with platforms’ drafting practices, resulting in a double-drafting strategy in their ToU. This strategy has two components: a restrictive one, which limits third-party access to platform data, and a facilitative one, which grants platforms a contractual right to use users’ data in their own (commercial) research and subjects any noncommercial research (for example, research conducted using Meta's FORT API) to the platform's sole discretion.

Platform data enclosure via contracts

In his seminal work, The Second Enclosure Movement and the Construction of the Public Domain, James Boyle described the expansion of intellectual property in the digital era by analogy to the historical enclosure of common lands in England, in which landowners fenced off publicly accessible land and privatized it for their exclusive use (Boyle, 2003). Our findings on platforms’ ToU drafting practices and their attempt to create their own data regime take Boyle's claim a step further. They show how contractual rights, rather than property rights, can rival, conflict with, and even override the public ordering of access to data, transforming data from a public resource into a private one.

The enclosure of data by platforms results from a combination of two types of contractual strategies made evident by our findings. The facilitating strategy ensures that platforms are authorized to employ users’ data for research and development purposes in any way they see fit. This affirmative contractual right is then supplemented by platforms’ restrictive strategy, which employs contractual terms to limit the collection and use of data by others, thus creating new barriers to accessing data that would otherwise be publicly accessible.

Contracts bind only the parties to them. Yet platforms often draft broad provisions that seek to subject anyone who accesses the platform's website to their ToU, regardless of whether that person has an account. Such provisions are intended to bind even casual visitors without a user account to the platform's ToU. In turn, this subjects any activity by registered and non-registered users alike, including researchers, to non-negotiable contractual terms unilaterally drafted by the platform.

Importantly, platforms often justify imposing their contractual terms on non-registered scrapers by reference to their legal obligations and their reputational interest in protecting users’ privacy. In a series of recent decisions, however, the District Court of the Northern District of California has begun to unravel the interplay between privacy regulation and contractual access-to-data restrictions. In Meta v. Bright Data, Meta argued that its contractual restrictions on scraping apply to both logged-in and logged-out users and behaviors, implying that any “unauthorized” scraper necessarily violates Facebook's and Instagram's Terms. The court was not convinced. Applying established canons of contract construction, it held that the ToU apply only to logged-in scrapers, not logged-out ones, stating that “Meta surely understands the difference between defeating anti-automated scraping and piercing privacy walls.”

In another decision by the same court, X v. Bright Data, the court addressed the interplay between the two types of contract-drafting strategies. Under X Corp.'s ToU, users own their content, while the platform is granted only a non-exclusive license to use it. Nevertheless, despite having no exclusive right in the data, X Corp. seeks to prevent any third party from scraping it, even a party that is no longer bound by the contract. However, “[A] non-exclusive licensee,” the court held, “has no more than a privilege that protects him from a claim of infringement,” and “because such a licensee has been granted rights only vis-à-vis the licensor, not vis-à-vis the world, he or she has no legal right to exclude others. […] Yet, that is exactly what X Corp. seeks to do with its claims based on the scraping and selling of data—to exclude others from using, copying, reproducing, processing, adapting, modifying, publishing, transmitting, displaying, and distributing X users’ content” (X v. Bright Data: 849). Consequently, although X Corp. acquires from its users only a non-exclusive right to use the content hosted on its platform, by using contractual terms to prohibit any type of scraping it “ostensibly acquires” an exclusive right in the data via its ToU, “upend[ing] the careful balance Congress struck between what copyright owners own and do not own, and what they leave for others to draw on” (X v. Bright Data: 852), and thus shrinking the public domain.

The court in X v. Bright Data observed that such contractual practices create a “massive regime of adhesive terms” that effectively grants platforms de facto property rights in data and “fundamentally alter[s] the rights and privileges of the world at large” (X v. Bright Data: 850). Our findings demonstrate that these contractual practices are not limited to X Corp. but are widespread across platforms.

The combination of data-related laws and regulations with the two contractual strategies demonstrated in our findings allows platforms to use their ToU to exert significant power and control over how data hosted or generated on their sites is shared and used outside the platform. If enforceable, these ToU would give platforms legal cover to override the legislative data regime, replace it with their own, and grant themselves a property-like right in platform data. As we have seen, some courts have been unwilling to let platforms enlist contract law to achieve this end. Overall, however, the enforceability of contractual provisions that may conflict with copyright law remains highly contested, and courts in the United States have frequently enforced contractual terms that override copyright norms, despite mounting academic criticism (Rub, 2017).

Conclusion

Platform data is a critical resource for research and innovation in the public interest. From an intellectual property perspective, much publicly available platform data is in the public domain and thus free for all to use. Platforms nonetheless restrict access to it, distorting the delicate balance between exclusivity in proprietary goods and access to public goods.

This article provided empirical evidence of the prevalence of data enclosures. It showed that platforms draft their ToU to preserve their de facto control over the data hosted on their servers, while restricting access to and use of the same data by third parties. Our findings further identified a correlation between platforms’ legal obligations and their contract drafting strategies. Specifically, platforms use their data protection duties under the GDPR to pursue a double-sided drafting strategy, restricting the use of publicly available platform data by third parties (including researchers), while maintaining their own privilege to use that very same data.

Such data enclosures generate data monopolies and thwart public benefit research and innovation. Legal policy should therefore strive to prevent them. One possible strategy is to adopt top-down regulatory measures that revoke platforms’ discretion to limit researchers’ access to and use of publicly available data. Article 40(12) of the EU Digital Services Act (DSA, 2022), for instance, requires very large online platforms and very large online search engines to give researchers studying systemic risks in the European Union access to data, including, where technically possible, real-time data, that is publicly accessible in their online interface. Likewise, the proposed US Platform Accountability and Transparency Act (PATA) (Perrino, 2023), if enacted, would shield from legal liability researchers and journalists who collect publicly available data from digital platforms to conduct research on matters of public concern.

In addition, courts can develop bottom-up, case-by-case exceptions and limitations to contractual terms that seek to restrict access to publicly available data for public benefit purposes (e.g. academic research). Recently, for example, a court in Cologne, Germany, found that Meta's use of such data to train its AI model did not violate the GDPR, even though Meta never obtained users’ consent (Geseley, 2025). Likewise, in the United States, the District Court of the Northern District of California rejected X Corp.'s claim that Bright Data's scraping of the publicly available data posted by X Corp.'s users constituted a breach of X Corp.'s ToS, rebuffing what it described as the platform's attempt to use its ToS to assert property-like rights in publicly available platform data. Ultimately, a combination of top-down and bottom-up legal strategies may prevent data enclosures and ensure that publicly available data remains accessible for academic and similar research conducted for public benefit.

Footnotes

Ethical considerations

There are no human participants in this article and informed consent is not required.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Author contribution

Equal contribution.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Israel Science Foundation (grant number 1870/21).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

Subject to appropriate ethical and legal considerations, the authors are willing to share the research data in a relevant data repository.