Abstract

Rapid innovation in digital services relying on artificial intelligence (AI) challenges existing regulations across a wide array of policy fields. The European Union (EU) has pursued a position as global leader on ethical AI regulation in explicit contrast to US laissez-faire and Chinese state surveillance approaches. This article asks how the seemingly heterogeneous approaches of market making and ethical AI are woven together at a deeper level in EU regulation. Combining quantitative analysis of all official EU documents on AI with in-depth reading of key reports, communications, and legislative corpora, we demonstrate that single market integration constitutes a fundamental but overlooked engine and structuring principle of new AI regulation. Under the influence of this principle, removing barriers to competition and the free flow of data, on the one hand, and securing ethical and responsible AI, on the other hand, are seen as compatible and even mutually reinforcing.

Keywords

A European response to artificial intelligence

Recent advances in ‘machine learning’ and ‘neural networks’, whereby algorithms ‘learn’ based on a continuous inflow of new data – combined with the big data revolution (Kitchin, 2014) – mean that AI now penetrates virtually all aspects of society. AI generally refers to computer technology capable of performing tasks that require ‘intelligence’ and could therefore otherwise be executed only by humans (Bolander, 2019). Applications include natural language processing, which underpins search engines, chatbots and spam filters, and image recognition technologies used to identify cancer on radiographs or for national surveillance purposes – for example, automated facial recognition across hundreds of millions of interconnected CCTV cameras in the Chinese case (Aho and Duffield, 2020). Further examples include self-driving cars, gaming, algorithmic trading in financial markets and computational social science.

Within the past decade, these rapid developments have impelled regulators in the world's leading economies to rethink and redefine rules and strategies across a wide array of policy fields. Whereas the US and China were quicker to pursue distinct regulatory programs, the European Union (EU) long remained a slow and reactive regulator – as even the European Commission (2018a) admits. Since 2017, however, there has been a surge in ambitious and coordinated EU regulation and initiatives on AI across a very broad range of policy fields. This reflects the view that AI presents not only massive potential to solve economic, social and political problems, but also considerable challenges, especially to human rights, ethics and consumer protection. For example, even machine learning algorithms ‘unsupervised’ by humans often reproduce gender, racial and other cultural biases (Bechmann and Bowker, 2019). The spread of AI also raises concerns about digital surveillance and human rights (Aho and Duffield, 2020; Zuboff, 2019). At the same time, AI profoundly alters our economic system through the emergence of powerful ‘big tech’ companies – notably Microsoft, Apple, Amazon, Google (Alphabet) and Facebook – and the constitution of data as a new form of capital (Sadowski, 2019). The power relations of policy-making are also changing. One report finds that more than 600 tech companies together spend €97 million annually lobbying the EU institutions (Bank et al., 2021), while another finds that the UK public debate on AI is clearly industry-led (Brennen et al., 2018). Against this background, we need to consider the mechanisms by which these concerns are constituted as problems of governance and translated into actual regulation, that is, into rules, jurisprudential practices and broader policy initiatives.

In this article, we specifically address the question of how purportedly ‘ethical’ regulation of AI in the EU relates to more traditional motives of regulation, especially those constructed around the Single Market and the removal of barriers to competition (Foster, 2022; Jabko, 2006; Krarup, 2022). This is particularly relevant because the EU pursues a position as global leader on what is often termed ‘ethical AI’, which implies a particular approach to AI governance. The research literature generally portrays regulation of AI as stretched between opposing and even conflicting ‘socio-technical imaginaries’ forming part of a wider web of governance and power struggles over the management of the digital economy (Bareis and Katzenbach, 2022; Guay and Birch, 2022; Mager and Katzenbach, 2021; Micheli et al., 2020; Prainsack, 2019). Specifically, in comparison with the market-led ‘platform capitalism’ model of the US and the state-led ‘panoptic’ digital economy in China, the EU has emphasized citizen and consumer rights as well as social and cultural values more broadly (Aho and Duffield, 2020; Boyer, 2022; Guay and Birch, 2022; see also Liu, 2022). Emphatically, this ethical approach has not crowded out concerns for economic integration and harmonization in the EU. Quite the contrary: the EU has pursued a ‘state-market regime’ in which competition and responsibility are apparently seen as harmonious (Guay and Birch, 2022; see also Bareis and Katzenbach, 2022). However, the mere observation that ethics and market motives coexist in EU regulation leaves open the deeper logic and dynamics that bind these apparent opposites together.

Against this background, we raise two interrelated research questions. First, what is the relationship between recent EU regulation on AI and notions of market integration and ethics, respectively? In line with both EU discourse and the analysis of socio-technical imaginaries, we conceive of ‘ethics’ here in very broad terms, following empirical connections rather than delivering an a priori definition. This question includes a broader understanding of how ‘the market’ is configured vis-à-vis other concerns in the regulation on AI – notably ethics but also economic growth, democracy, social justice and even healthcare and the environment. Second, to what extent – and how, more specifically – does the motive of Single Market integration shape EU regulation on AI and the ambition to become a global leader on ethical AI? Here, as we discuss in Section 2, we are particularly interested in the creative vagueness and even paradoxes of the notion of ‘the market’ in the EU, which scholars of political economy have identified in different contexts as allowing EU bodies to assume regulatory initiative while also structuring those initiatives accordingly (Foster, 2022; Jabko, 2006; Krarup, 2022).

As we discuss in detail in Section 3, we first conduct a quantitative analysis of all – some 1500 – official EU documents mentioning AI between 1977 and 2021. This allows us to appreciate both the surge in regulatory activity since 2016–2017 and the breadth of policy fields touched by the new EU regulation on AI, widening the narrower focus of most existing literature on ethics and the economy. Next, in order to substantiate the apparent omnipresence of Single Market logics, we conduct a close reading of selected documents, including reports, communications and legislative works, deploying a ‘problem analysis’ methodology to identify moments of discursive tension, uncertainty and contradiction (Krarup, 2021a). We show how key initiatives find their legal basis in treaty provisions about the Single Market, competition and the free movement of goods, capital, services and people. Moreover, data is conceptualized as the new ‘raw material’ for the digital age, with the specific risk of big tech ‘gatekeepers’ (a new legal concept) monopolizing access to this essential resource and infrastructure. Finally, EU regulation on AI is motivated by the impending ‘fragmentation’ of the Single Market resulting from the asymmetric and uncoordinated formulation of national policies.

Our double analytical movement, expanding the thematic scope of inquiry (quantitative analysis) and dissecting the internal logics of coexistence (qualitative analysis), demonstrates that markets and ethics are deeply entangled and conceptually inseparable problems rather than conflicting imaginaries. Specifically, Single Market integration – that is, the constitutional aim to remove ‘barriers to competition’ in the EU (Krarup, 2022) – constitutes a vital reference for EU initiatives, both in narrow legal and in broader political terms. The European Commission in particular portrays the rapid innovation in AI as producing new threats to free and fair competition in Europe. However, it also sees the creation of a ‘Digital Single Market’ as the key to unlocking not only economic but also social, political and other potentials embedded in new AI technologies. In other words, advancing market integration is not an isolated objective in EU regulation on AI. Rather, it is seen as inseparable from such disparate aims as securing human rights and ethics, strengthening public health, consolidating the EU's geopolitical and economic position in the world, and pushing for social and political progress within the Union.

The article proceeds as follows. In Section 2, we discuss the literature on socio-technical imaginaries of AI regulation in relation to the broader political economy literature on European market integration and present our problem analysis framework. In Section 3, we describe our methodology and the materials guiding the analysis. Section 4 positions AI in relation to Single Market integration, including historical and thematic mappings of official documents. Section 5 begins the in-depth reading of selected documents, inquiring how and why AI regulation comes to touch upon a wide array of policy areas. In Section 6, we analyse the vision of a ‘common data space’ in the EU, while Section 7 studies the ensuing paradox of competition leading to fragmentation. On this basis, Section 8 shows how the new legal concept of ‘gatekeepers’ tackles emergent problems of big tech through a revision of the existing competition framework. Finally, Section 9 concludes and discusses avenues for future research.

Between or across ethics and the market

In the existing literature on the politics of AI in Europe, two themes have dominated: ethics (Bechmann and Bowker, 2019; Hagendorff, 2020; Jobin et al., 2019; Larsson, 2020) and imaginaries (Bareis and Katzenbach, 2022; Cave and Dihal, 2019; Mager and Katzenbach, 2021; Natale and Ballatore, 2020; see also Beckert, 2016; Jasanoff and Kim, 2009). Regulatory responses to the surge of AI are widely analysed as instances of a broader antinomy between the benefits and risks of new technology (Aho and Duffield, 2020; Calo, 2017). Compared with the regulatory strategies for incorporating AI into existing political economies in China and the US, that of the EU is generally portrayed as reactive. Although EU regulation purportedly prioritizes individual rights over economic benefit, Aho and Duffield (2020: 208) portray it as bending to short-term catch-up projects – emblematic of ‘Western decline’ and of ‘the crisis of capitalism that can no longer deliver sustainable futures for its citizens in a globalized world’. In this literature, positive imaginaries building on ethical concerns and visions for ethical AI are contrasted with more dystopian ones of societies succumbing to surveillance capitalism, the monopoly power and political clout of big tech, and the marketization of personal data.

While the analysis of socio-technical imaginaries is useful for capturing the dramaturgical portrayal of AI in various political contexts, it is less suited to assessing the political economy of legal and institutional concepts. Specifically, in the case of the EU, a distinct legal concept of ‘the market’ is known to frame regulatory action quite broadly. This is because Single Market integration affords EU institutions a unique mandate for supranational initiative to remove ‘barriers to competition’, a mandate that can be interpreted very broadly (Krarup, 2022). At the same time, the removal of barriers to competition creates a deep-seated problem in the EU: how to distinguish, concretely, between the fully harmonized, equal-for-all ‘infrastructures’ affording a ‘level playing field’ for competition and ‘the market’ as such, with its fragmentation into competing providers and consumers (Krarup, 2021b).

In the context of EU integration, ‘the market’ connotes such issues as competition policy and consumer protection, but not necessarily the fiscal and monetary objectives of economic growth, on the one hand, or broader political and institutional integration, on the other. Historically, however, a deliberately vague notion of ‘the market’ has served the Commission as a diplomatic instrument in gathering support among Member States – with their often incompatible interests – for a much broader program of European unity (Jabko, 2006). Moreover, compared to the laissez-faire jurisprudential regime in the US, securing ‘fair’ competition serves EU institutions as a cause for action, for example, against monopoly formation in big tech (Foster, 2022). Against this background, it is remarkable that the role of market integration has received very little attention in the literature on AI regulation (one exception is Guay and Birch, 2022).

The historical importance of ‘the market’ and ‘competition’ in European integration and regulation broadly makes us question the adequacy of the market-ethics binary often found in the literature on socio-technical imaginaries in regulation on AI. The European ambition of creating a Single Market, understood as a frictionless common space of fair and competitive commercial transactions, implies certain paradoxes that are well known, for example, in the context of financial markets and their infrastructures (Krarup, 2022; Millo et al., 2005; Riles, 2011). On this basis, our analysis focuses on how deep-seated problems of market integration in the EU frame the ways in which new challenges, such as those related to AI, are addressed. Thus, rather than seeking a simple imaginary or ideological coherence (e.g. neoliberal) in EU regulation, we suggest that such problems are exactly that: problems, which produce tensions, uncertainties and contradictions, leave room for sometimes paradoxical policy development and a multiplicity of voices, and also serve as an engine for the production of new responses (Krarup, 2021a, 2021b). Indeed, paradox yields not only constraint but also dynamism (Best, 2005). To pursue this line of inquiry, we implement an analytical framework focused on the problematization of new objects of regulatory action (Ossandón and Ureta, 2019). Specifically, we draw on the Foucauldian ‘problem analysis’ methodology, which looks for discursive patterns of tension, uncertainty, contradiction and conflict in order to qualify our understanding of the epistemic structures within which a problem – here: of regulating AI in the EU – is posed (Delaporte, 1998; Krarup, 2021a; Ossandón and Ureta, 2019).

Rather than constructing and contrasting ideal typical responses to the problems of AI, our focus is thus on how the co-existence of different responses is organized and conceptualized as a problem in each context. In this way, our aim is to inquire how a broad array of policy issues appear to be entangled with a constitutional market integration problematic in European regulation on AI.

This approach differs somewhat from the study of socio-technical imaginaries and governance. First, while the latter acknowledges the role of controversy and contradiction (Mager and Katzenbach, 2021), problem analysis foregrounds them. Second, the notion of problem differs from that of the performativity of discourse and the analysis of power struggles insofar as it is oriented towards the discursive problem conditions that allow and enable a multitude of different responses to coexist (Krarup, 2021a). While a comprehensive analysis such as ours cannot account for all the relevant actors engaged in power struggles over each document or set of documents, our contribution may serve as a reference point for future studies of AI governance in Europe.

Methodology and materials

Regulation designates, narrowly, the creation and enforcement of rules through institutionalized procedures (cf. Baldwin et al., 1998). Regulation is thus generally embedded in broader formations of governance, that is, the processes and activities whereby societies are steered. Our approach departs from this conventional conception, however, in that we focus on recurrent patterns of conceptualization and problematization of a given socio-economic domain by a certain political body – in casu, the European Union, particularly the European Commission – irrespective of (differences in) the formal character of the documents under study (Krarup, 2021b; Ossandón and Ureta, 2019). Hence, we do not reserve the term ‘regulation’ narrowly for the European legal form (as in the General Data Protection Regulation, GDPR).

Specifically, our study is based on official EU documents concerned with AI, accessed via EUR-Lex, the official online repository for EU documents related to legislation (including legislation, proposals, communications, reports and other document types). Inspired by recent contributions to computational social science (Carlsen and Ralund, 2022), we pursue two complementary analytical strategies, one statistical and the other based on close reading of selected sources. First, in the statistical analysis, our main data source is searches in EUR-Lex, which goes back to 1977. Here, we use both simple document counts for different search terms and a more advanced network and cluster analysis of the thematic keywords used by the authoring EU bodies to tag a large portion of the documents. Specifically, we use the Leiden algorithm, an improved version of the widely used Louvain technique for detecting communities (Traag et al., 2019). Each keyword occurs as a ‘node’ in the network, and each co-occurrence of two different keywords in the same document is represented by an ‘edge’ (connection) between two nodes. Communities are detected by optimizing modularity, that is, by identifying clusters of keywords that are more densely connected internally than would be expected under a random baseline.
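To make the network step more concrete, the Python sketch below builds a weighted keyword co-occurrence network from document tags and computes modularity, the partition quality score that Louvain-style methods such as Leiden commonly maximize. It is not the authors' pipeline and does not implement the Leiden search itself (which refines and moves nodes between communities iteratively); it only scores a given partition. The keyword tags, the partition and the function names (`cooccurrence_weights`, `modularity`) are hypothetical and chosen for illustration.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence_weights(docs):
    """Build a weighted, undirected keyword co-occurrence network.

    Each document is a list of keyword tags. Every pair of distinct keywords
    tagged on the same document adds 1 to the weight of the edge between them.
    """
    weights = defaultdict(int)
    for keywords in docs:
        # sorted(set(...)) gives each unordered pair one canonical key
        for a, b in combinations(sorted(set(keywords)), 2):
            weights[(a, b)] += 1
    return dict(weights)


def modularity(weights, communities):
    """Modularity Q of a partition of a weighted, undirected graph.

    Q = sum over communities c of L_c / m - (d_c / (2m)) ** 2, where m is the
    total edge weight, L_c the weight of edges inside c and d_c the summed
    weighted degree of the nodes in c.
    """
    label = {node: i for i, group in enumerate(communities) for node in group}
    m = sum(weights.values())
    inside = defaultdict(float)
    degree = defaultdict(float)
    for (a, b), w in weights.items():
        degree[label[a]] += w
        degree[label[b]] += w
        if label[a] == label[b]:
            inside[label[a]] += w
    return sum(inside[c] / m - (degree[c] / (2 * m)) ** 2
               for c in range(len(communities)))


# Hypothetical keyword tags for four documents (not EUR-Lex data)
docs = [
    ["AI", "single market", "competition"],
    ["AI", "single market"],
    ["ethics", "data protection"],
    ["ethics", "data protection", "AI"],
]
weights = cooccurrence_weights(docs)
partition = [{"AI", "single market", "competition"}, {"ethics", "data protection"}]
print(round(modularity(weights, partition), 5))
```

A community-detection algorithm searches over many such partitions and keeps one with high Q; putting every keyword in a single community always yields Q = 0, which is why the score rewards clusters that are denser than the random baseline.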

Second, narrowing our focus to central policy documents resulting from the surge in AI regulation since 2016, we conduct a close reading of the role played by Single Market integration and related topics, such as competition and consumer protection. In our selection of documents for this part of the analysis, we relied on statistical mapping combined with recent overviews (Daly et al., 2019; Niklas and Dencik, 2020), as well as on continuous assessment throughout the analysis (cross-referencing between documents being quite frequent). Our selection focuses mainly – but not exclusively – on the European Commission, the executive branch of the EU, which has played an important role in advancing the AI regulatory agenda. Table 1 provides an overview of the selected sources – a total of 27 EU documents on artificial intelligence from the period 2015–2021.

Table 1. Documents consulted.

Here, our analysis moves from topical mapping to an analysis of the problems driving and structuring the regulation (Krarup, 2021a). In our case, this involves coding materials with thematic and chronological tags, as well as a more elaborate analytical coding of documents, chunks or snippets of text, iteratively reorganizing the material in search of conceptual and discursive relationships, patterns and structures. Specifically, we search for relationships of tension, uncertainty, contradiction and conflict manifest in speech and text, because the comparative mapping of such instances supports the inferential analysis of the underlying problem structures in a given context (Krarup, 2021a). In particular, we focus on instances related to ‘the market’ and the general problem in the EU of distinguishing the ‘level playing field’ from competition itself (Krarup, 2021b, 2022).

Artificial intelligence and European market integration

Our first research question concerns how the recent surge in EU regulation on AI relates to notions of market integration and ethics, and how these concepts are positioned in a broader policy landscape. In the EU, AI has to a large extent been sorted under the already existing strategy for a ‘Digital Single Market’. The idea of a Digital Single Market dates back at least to Jean-Claude Juncker's priorities as the newly appointed President of the European Commission. At the time, artificial intelligence as such was not on the radar; the focus was on telecom, big data and cloud computing (European Council, 2015). However, the ambitious programme for creating ‘a fair level playing field’ became the framework within which AI would later be inserted (cf. European Commission, 2018c). The Digital Single Market Strategy has involved considerable legislative activity in recent years, including the following:

- General Data Protection Regulation (GDPR) (2016)
- Regulation on the Free Flow of Non-personal Data (FFD) (2018)
- Cybersecurity Act (CSA) (2019)
- Open Data Directive (2019)
- Digital Content Directive (2019)
- Data Governance Act (Proposal) (2020)
- Digital Markets Act (Proposal) (2020)
- Digital Services Act (Proposal) (2021)
- Artificial Intelligence Act (Proposal) (2021)
- Machinery Regulation (Proposal) (2021)

Already in the Digital Single Market Strategy, consumer benefits, economic growth and better governance were all pursued first and foremost through market integration (European Council, 2015). The Commission made it clear that as ‘the global economy is rapidly becoming digital’, information and communications technology (ICT) ‘is no longer a specific sector but the foundation of all modern innovative economic systems’ (European Council, 2015). Certainly, market integration is not the sole concern addressed – economic growth, social and political progress, human rights and ‘technological sovereignty’ (in geopolitical and economic competition with other regions) are other important goals found in many documents, as we shall see (see also Niklas and Dencik, 2020). But market integration became a key framework and driver for a broad array of regulatory and other initiatives in the EU.

This inscription of EU regulation on AI into a (Digital) Single Market framework is also visible in the overview provided in Figure 1, which plots the yearly relative frequency of documents returned by a search for ‘artificial intelligence’ on EUR-Lex. The repository goes back to 1977, but frequencies remain very low for a long time: an average of 3.3 matching documents per year up to and including 2014, compared with 529 in 2021. A surge from almost no mentions begins around 2016/2017, and by 2021 more than 3% of all documents include ‘artificial intelligence’ somewhere in the text.

Figure 1. EUR-Lex normalized word frequency. Note: Search term frequencies (number of documents returned by the search) divided by the total number of documents (empty search), gathered from www.eur-lex.Europa.eu on 16 Nov 2021.
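The normalization described in the note to Figure 1 is a per-year ratio of two document counts. A minimal Python sketch, assuming the yearly counts for the search term and for the empty search have already been collected from EUR-Lex; the counts below are hypothetical and are not the figures reported in this article, and the function name is ours:

```python
def normalized_frequency(term_counts, total_counts):
    """Yearly share of all documents that match a search term.

    term_counts:  {year: documents returned by the search term}
    total_counts: {year: documents returned by an empty search}
    Years without any documents are skipped to avoid dividing by zero.
    """
    return {
        year: term_counts.get(year, 0) / total
        for year, total in sorted(total_counts.items())
        if total > 0
    }


# Hypothetical counts, for illustration only
term_counts = {2019: 50, 2020: 200, 2021: 400}
total_counts = {1977: 0, 2019: 10_000, 2020: 10_000, 2021: 12_500}
for year, share in normalized_frequency(term_counts, total_counts).items():
    print(year, f"{share:.1%}")
```

Dividing by the empty-search total rather than plotting raw counts keeps the curve comparable across years in which the overall number of EU documents varies.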

Figure 1 also plots the yearly frequency of ‘artificial intelligence’ co-occurring with, respectively, ‘ethics’, ‘vision’, ‘market’, ‘single market’ and ‘infrastructure’. It reveals that ethics and visions do indeed play a role, but that the associations of AI with ‘market’, ‘infrastructure’ and even the much narrower ‘Single Market’ are markedly more frequent. This calls for a closer look at the intersection of AI policy and market integration in the EU, and at the role of ‘the market’ in EU regulation on AI in particular.

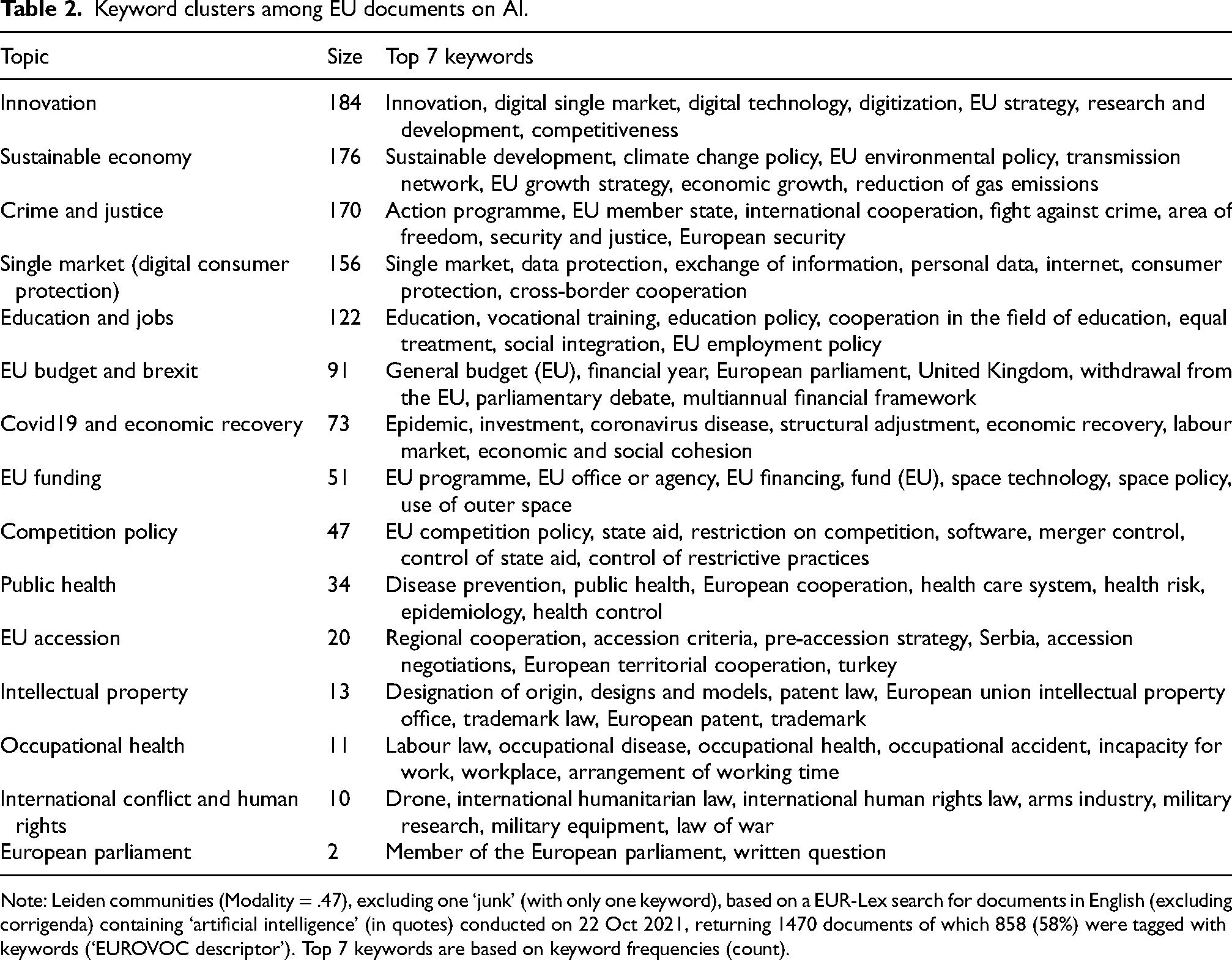

To obtain an overview of the policy areas affected by the new EU regulation on AI, we search EUR-Lex for ‘artificial intelligence’ among official EU documents. We submit the thematic keywords used by EU institutions to tag documents to network analysis (Traag et al., 2019), detecting 15 distinct and fairly well-separated topics, that is, clusters of keywords. The thematic clusters of documents are presented in Table 2, which also provides technical details on the analysis. While some clusters are quite small and we suspect some to be only marginally relevant to AI (e.g. EU Accession), Table 2 still demonstrates the breadth of AI regulation in the EU – covering policy areas as broad and diverse as innovation, sustainable economy, the (Digital) Single Market and consumer protection, crime and justice, education and jobs, EU budget and Brexit, public health, Covid19 and economic recovery, competition policy, and intellectual property.

Table 2. Keyword clusters among EU documents on AI.

Note: Leiden communities (modularity = .47), excluding one ‘junk’ cluster (with only one keyword), based on a EUR-Lex search for documents in English (excluding corrigenda) containing ‘artificial intelligence’ (in quotes) conducted on 22 Oct 2021, returning 1470 documents, of which 858 (58%) were tagged with keywords (‘EUROVOC descriptor’). Top 7 keywords are based on keyword frequencies (count).
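The note to Table 2 states only that the top keywords are ranked by frequency. One way such a ranking could be produced is sketched below in Python; counting each keyword once per document and breaking ties alphabetically are our assumptions, and the documents, clusters and the helper name `top_keywords` are hypothetical.

```python
from collections import Counter


def top_keywords(docs, communities, k=7):
    """Return the k most frequent keywords of each cluster.

    Frequency is the number of documents tagged with a keyword (assumption);
    ties are broken alphabetically so the ranking is deterministic.
    """
    counts = Counter(kw for keywords in docs for kw in set(keywords))
    ranked = []
    for group in communities:
        ordered = sorted(group, key=lambda kw: (-counts[kw], kw))
        ranked.append(ordered[:k])
    return ranked


# Hypothetical keyword tags and clusters (not the Table 2 results)
docs = [
    ["AI", "single market", "competition"],
    ["AI", "single market"],
    ["ethics", "data protection"],
    ["ethics", "data protection", "AI"],
]
clusters = [{"AI", "single market", "competition"}, {"ethics", "data protection"}]
print(top_keywords(docs, clusters, k=2))
```

Because EUROVOC descriptors are attached to documents rather than counted in running text, a per-document count seems the natural reading of ‘keyword frequencies’, but the original analysis may differ.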

As a way of inspecting relationships between the different clusters, Figure 2 plots the network of relations among them. The thickness of the lines (‘edges’) between each pair of clusters reflects the relative strength of ties between the two. We see that many of the smaller clusters (e.g. ‘Intellectual Property’, ‘Occupational Health’) are well separated, whereas some of the bigger clusters are more closely connected. The central position of the cluster ‘Covid19 and Economic Recovery’ reflects the massive and encompassing response to the pandemic that includes up to 20% of the European Recovery and Resilience Facility (up to EUR 134 bn. in 2020–2026) allocated to ‘digital transformation’ (European Commission, 2021b). Nonetheless, the pandemic cluster is of secondary interest to our analysis here. Instead, we draw attention to the importance of market themes across some of the biggest clusters. In particular, the keywords ‘Digital Single Market’ in the Innovation cluster and ‘Single Market’ and ‘Consumer Protection’ in the Single Market cluster, together with the Competition Policy cluster, clearly reflect market integration as an important theme for EU regulation on AI.

Figure 2. Network of keyword clusters. Note: Network of keyword co-occurrence at Leiden-cluster level. Node sizes reflect the number of keywords in each cluster, $n_i$. Edge thickness reflects the actual number of edges between keywords in the two clusters, $e_{\mathrm{btw}}$, divided by the maximum possible number of edges between them, that is, $e_{\mathrm{btw}} / (n_i n_j)$.
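The edge-thickness measure described in the note to Figure 2 is a between-cluster density: the observed edges running between two clusters divided by the number of keyword pairs that could be linked across them. The Python sketch below computes it for every pair of clusters. It treats each co-occurring keyword pair as a single unweighted edge, which is our reading of the note rather than a confirmed detail of the original analysis, and the edges, clusters and function name are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def between_cluster_density(edges, communities):
    """Share of possible keyword links that actually exist between two clusters.

    edges: iterable of (keyword_a, keyword_b) pairs, each unordered pair once.
    For clusters i and j the result is e_btw / (n_i * n_j), where e_btw counts
    edges with one end in each cluster and n_i, n_j are the cluster sizes.
    """
    label = {node: i for i, group in enumerate(communities) for node in group}
    e_btw = defaultdict(int)
    for a, b in edges:
        i, j = sorted((label[a], label[b]))
        if i != j:  # edges inside a single cluster do not count
            e_btw[(i, j)] += 1
    return {
        (i, j): e_btw[(i, j)] / (len(communities[i]) * len(communities[j]))
        for i, j in combinations(range(len(communities)), 2)
    }


# Hypothetical co-occurrence edges and clusters
edges = [
    ("AI", "competition"), ("AI", "single market"), ("competition", "single market"),
    ("data protection", "ethics"), ("AI", "data protection"), ("AI", "ethics"),
]
clusters = [{"AI", "single market", "competition"}, {"ethics", "data protection"}]
print(between_cluster_density(edges, clusters))
```

Normalizing by $n_i n_j$ keeps large clusters from dominating the plot simply because they contain more keywords that could be linked.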

The quantitative analysis thus far raises the questions of why and how, more precisely, the themes of AI and market integration are related in EU regulation – and how both relate to ethics, given the overall EU focus on ethical AI. The above overview represents the broader contexts in which AI is discussed in the EU. The qualitative reading of a small selection of central EU documents on recent AI regulation narrows the focus, seeking to clarify the more specific role played by Single Market integration.

An all-encompassing technology

In order to address our second research question on how the motive of Single Market integration shapes EU regulation on AI and the ambition to become a global leader on ethical AI, we now turn to qualitative analysis. The selected documents (Table 1) present a broad array of themes, concerns and solutions. The Commission singles out Europe as a potential vanguard of ‘human-centric AI’, foregrounding ethics to make innovation and development benefit consumers (e.g. through GDPR), workers (e.g. through training) and public services (European Commission, 2018d). There is also a strong focus on enhancing European AI research and on creating closer ties between research and business – ‘innovation from the lab to the market’ (European Commission, 2018a). However, as also suggested by the quantitative analysis, rather than being treated as separate concerns, these and yet other questions are treated as inextricably intertwined. For example, aligning concerns often viewed as differing if not conflicting, the Commission writes that ‘Spearheading the ethics agenda, while fostering innovation, has the potential to become a competitive advantage for European businesses on the global marketplace’ (European Commission, 2018d).

Specifically, alongside the list of new regulations to safeguard privacy and enhance consumer protection, such as GDPR, the Commission proposes to spend EUR 1.5 bn. on the development of ‘large-scale reference test sites open to all actors’ (European Commission, 2018d) and to create an ‘AI-on-demand platform’ for all AI users in the EU along with more and stronger regional ‘Digital Innovation Hubs’ (e.g. in existing science parks) to help small and medium-sized enterprises in particular exploit AI (European Commission, 2018a: 8). In this way, the European strategy on AI is to make the Union competitive globally by maximizing investments, synergies and ethics (European Commission, 2018d). Our overall argument in this and the following three sections highlights how market integration shapes this grand synthesis of issues in EU regulation on AI – not just due to its role in buttressing economic growth, but more fundamentally through the special status of market integration for EU policy.

At a 2017 meeting, the European Council called for ‘a sense of urgency to address emerging trends’, inviting the European Commission ‘to put forward a European approach to artificial intelligence’ along with ‘necessary initiatives for strengthening the framework conditions with a view to enable the EU to explore new markets through risk-based radical innovations and to reaffirm the leading role of its industry’ (European Council, 2017: 7). Following a declaration on AI signed by 24 member states and Norway (The Member States, 2018), the European Commission's (2018a) Communication on Artificial Intelligence in Europe framed the stakes in historic terms:

Like the steam engine or electricity in the past, AI is transforming our world, our society and our industry. Growth in computing power, availability of data and progress in algorithms have turned AI into one of the most strategic technologies of the twenty-first century. The stakes could not be higher. The way we approach AI will define the world we live in. Amid fierce global competition, a solid European framework is needed. (European Commission, 2018a: 2)

Referencing the EU's strong industry and research position in robotics and the potentials for making public sector data available as ‘the raw material for AI’, the Communication more specifically points to the ‘Digital Single Market’ with ‘common rules, for example, on data protection and the free flow of data in the EU, cybersecurity and connectivity’ in order to ‘help companies to do business, scale up across borders and encourage investments’ (European Commission, 2018a: 3). While urging that no-one be left behind and that new technologies be based on values, the Commission also argues that these points contribute to ensuring Europe's competitive position, specifically through education and the General Data Protection Regulation (GDPR), as ‘this is where the EU's sustainable approach to technologies creates a competitive edge’ (European Commission, 2018a: 3).

The Commission sets a goal of surpassing EUR 20 bn. of yearly combined EU investments in information and communication technology by 2020 and pushes strongly for an approach that brings ‘innovation from the lab to the market’ (European Commission, 2018a: 8). In so doing, it envisions the creation of an ‘AI-on-demand platform’ for all AI users in the EU, ‘including knowledge, data repositories, computing power,… tools and algorithms’, along with more and stronger regional ‘Digital Innovation Hubs’ to help small and medium-sized enterprises in particular exploit AI by offering ‘expertise on technologies, testing, skills, business models, finance, market intelligence and networking’ (European Commission, 2018a: 8).

The Coordinated Plan on AI, published later the same year, confirms many of the above points (European Commission, 2018d). While public investments play a major role in the Plan, the relationships between innovation, market integration and international competition are made explicit:

[I]t is of utmost importance to avoid market fragmentation in strategic sectors such as artificial intelligence, including by strengthening key enablers (e.g. common standards and fast communication networks). A real Single Market with an integral digital dimension will make it easier for businesses to scale up and trade across borders and thereby further boost investments. (European Commission, 2018d)

In fact, market integration – specifically the creation of a ‘Digital Single Market’ with standardized regulations and infrastructures and without internal obstacles to the free flow of AI services – is presented as a key condition for successful AI innovation and development, which, in turn, is depicted as a strategy for making the European economy globally competitive:

The effective implementation of AI will require the completion of the Digital Single Market and its regulatory framework … Furthermore, infrastructures should be both accessible and affordable to ensure an inclusive AI adoption across Europe, particularly by small and medium-sized enterprises (SMEs). Industry, and in particular small and young companies, will need to be in a position to be aware and able to integrate these technologies in new products, services and related production processes and technologies, including by upskilling and reskilling their workforce. Standardisation will also be essential for the development of AI in the Digital Single Market. (European Commission, 2018d)

There is a striking parallel here between the conception of the market and that of AI development, as both are said to rely on the standardization of regulation and infrastructures. Just as the Single Market is conceived as a common space for market transactions, so AI innovation must be facilitated ‘by creating a common European Data Space: a seamless digital area with the scale that will enable the development of new products and services based on data’, underpinned by ‘interoperable’ data available in ‘open, FAIR [findable, accessible, interoperable, reusable], machine readable, standardized and documented’ formats (European Commission, 2018d). In this way, AI and the market – more specifically, ‘fair’ competition – intersect in the vision of a European ‘common data space’.

A ‘Common Data Space’ in the EU

In its ambition to strengthen the European digital economy, the European Commission is strongly concerned with ensuring that not only big companies but also small and medium-sized enterprises reap the benefits of AI. It is in this context that the talk of data as the ‘raw material of the Digital Single Market’ must be seen (European Commission, 2018b). The Commission envisages the creation of a ‘common data space’ in the EU, which it defines as ‘a seamless digital area with the scale that will enable the development of new products and services based on data’ (European Commission, 2018b). Although the Commission does not use the precise expression, it is as if the free movement of data has been added to the original four freedoms: the free movement of goods, labour, services and capital (Council of the European Union, 2008). This includes making both public and private data available for ‘re-use’ through ready-to-use interfaces, thus reducing ‘market entry barriers’, as well as standardizing formats, buttressing ethics and accountability and promoting transparency to form an ‘open data ecosystem’ (European Commission, 2018b).

To be sure, the immediate motive here is economic growth and social progress, but the pursuit of these goals ends up being framed in terms of the market and frictions to competition. As the ‘raw material’ for AI, data has moved from being a commodity to becoming a vital infrastructure for the ‘Digital Single Market’. More precisely, a conflict emerges between its two simultaneous roles: as a commodity owned by the holders of data, and as a public information infrastructure that is needed in the production of goods and services but accessible on an unequal basis (provoking ‘barriers’ and ‘distortions’ to competition).

Ethics, global geopolitical competition and market integration are inseparable issues in the EU documents on AI. In its communication contributing to an informal EU leaders’ meeting on data protection and the Digital Single Market in 2018, the Commission made this very clear. Sandwiched between accounts of the GDPR and the ePrivacy regulation, the communication states that:

The EU data protection rules enable the free flow of personal data within the Union, from which the critical mass of data essential for a strong data economy can be generated. … Building a genuine European Data Space requires a level playing field also for non-personal data, and a proposal to this end is already on the table. (European Commission, 2018c)

The close association between privacy and fundamental rights (widely cited in the document) and the creation of a Digital Single Market is far from trivial. For example, citing the EU Charter of Fundamental Rights, the communication states that: ‘Strong data protection, confidentiality of communications and data security are crucial to dispel individuals’ doubts about misuse of their data and to create trust. Without this trust, the potential of a thriving data economy will not be met’ (European Commission, 2018c: 2). This interweaving of issues undoubtedly reflects the fact that human rights and market integration are two important domains of EU authority on which the EU's engagement with AI can build. It reveals that the recent surge in European AI regulation is not simply the reflection of a universal problem in modern Western societies about the pros and cons of new technologies – spurred by the growth in computational power, machine learning algorithms and data availability – but reflects the specific legal and institutional structure of the EU through which AI is problematized.

The conceptual structure thus consists not simply in a duality of ethics on the one hand and market integration on the other. Rather, the two are inseparable: market integration, too, is cast in ethical terms (growth, prosperity, social and political progress, human rights), whereas ethics are often conceived in market terms (consumer rights, consent, transparency). For example, the communication on ‘a trusted Digital Single Market for all’ states that ‘robust data protection and privacy rules not only ensure fundamental rights, but generate trust in the digital economy’ and that ‘Bringing down the Digital Single Market barriers within Europe could contribute an additional EUR 415 billion to European Gross Domestic Product’ (European Commission, 2018c). In this way, according to the documents, consumer benefits via access to digital services and goods across the EU, the geopolitical promotion of privacy regulations, and social and political visions of progress and prosperity are all inextricably intertwined with the creation of a Digital Single Market.

The close-knit relationship between the different aspects of AI in the EU approach to regulation is likewise reflected in the combined proposal for a comprehensive regulatory framework on AI and revision of the 2018 Coordinated Plan on AI (European Commission, 2021b; see also 2021c). The framework to launch Europe's ‘Digital Decade’ touches on a long and broad list of regulations and directives, including the Data Governance Act (‘smooth access to data’), the Machinery Directive (‘safety risks of new technologies’), the EU Security Union Strategy (cybersecurity), the Digital Education Action Plan, the Digital Services Act, the Digital Markets Act, the European Democracy Action Plan, the Product Liability Directive, and the General Product Safety Directive (European Commission, 2021b).

The recent regulatory program on AI in the EU is thus to a very large extent modelled on existing notions of market integration, removal of barriers to competition and consumer protection. The question, then, is how EU institutions handle, in this particular case, the new infrastructures necessary to create a level playing field for AI.

Competition vs. fragmentation – infrastructures vs. monopolization

Free and fair competition in the absence of barriers and fragmentation (a ‘level playing field’) requires fully harmonized and integrated market infrastructures – in the widest possible sense, encompassing not only rules and regulations, but also digital and physical mechanisms and means of exchange. The problem is that while market integration calls for universal infrastructure provision (equal for all market players), such provision – especially of the mechanisms and means of exchange – is also a kind of service that, according to the same underlying principle of competition, ought to be provided by an array of competing market players, which leads to infrastructure fragmentation (Krarup, 2019). This market integration paradox also materializes around the new wave of European AI regulation.

In the ‘European Strategy for Data’ (European Commission, 2020a), the Commission addresses the problems hindering the realization of the data economy's potential, topping the list with ‘fragmentation between Member States’. National approaches to AI differ in both kind and scope, and ‘the emerging differences underline the importance of common action in order to leverage the scale of the internal market’ (European Commission, 2020a). One topic permeates the issues the Commission discusses in relation to the fragmentation of AI markets: the role of data. Administrative harmonization is called for so that companies can plug into not just their national market but the Single Market from anywhere in the EU (the ‘once only principle’) – specifically concerning data sharing between government and business. Similarly, the Commission calls for the ‘application of standard and shared compatible formats and protocols for gathering and processing data from different sources in a coherent and interoperable manner across sectors’ (European Commission, 2020a).

But the data-driven nature of AI also raises concerns about concentration of market power: ‘A case in point comes from large online platforms, where a small number of players may accumulate large amounts of data, gathering important insights and competitive advantages from the richness and variety of the data they hold’ (European Commission, 2020a). Such ‘data advantage’ can enable certain companies to ‘set the rules on the platform and unilaterally impose conditions for access and use of data or, indeed, allow leveraging of such ‘power advantage’ when developing new services and expanding towards new markets’ (European Commission, 2020a).

The new status of data as the ‘raw material’ of AI thus imposes a paradox on market regulation. As the Commission states, the data that a business providing an online platform (especially big tech) can generate may yield a competitive advantage (see also Boyer, 2022). Indeed, with the growing importance of AI, the provision of certain services – and consequently the markets in those services – will hinge on access to those data. In that case, data produced on a competitive basis by private businesses, carrying commodity value, has turned into a market infrastructure. With this turn, restrictions on access that a business could legitimately impose on data-as-commodity now compromise the notions of a ‘level playing field’ and ‘open and fair’ competition.

Interestingly, the issue of data infrastructures also stretches beyond the borders of the Single Market. Specifically, the Commission is concerned that the EU is not self-sufficient in many AI infrastructure domains, such as cloud provision. Dependence on external providers leaves the EU vulnerable to ‘external data threats’ and entails a ‘loss of investment potential’ (European Commission, 2020a). Conversely, EU service providers may be subject to third-country regulation, presenting risks of legal uncertainty and – of particular concern with regard to China – the risk that ‘data of EU citizens and businesses are accessed by third country jurisdictions that are in contradiction with the EU's data protection framework’ (European Commission, 2020a). In this way, as we saw with ethics and markets in the previous section, the Single Market and what the Commission calls Europe's ‘technological sovereignty’ become entangled in ways that make it hard to decide where the one ends and the other begins.

Big tech and barriers to trade: the concept of ‘Gatekeepers’

Across the European Commission's recent legislative proposals – the Data Governance Act (2020b), the Digital Markets Act (2020c), the Digital Services Act (2020d), the Artificial Intelligence Act (2021a) and the Machinery Regulation (2021d) – the legal basis for new EU regulation is consistently identified in Article 114 of the Treaty on the Functioning of the European Union (EU, 2020) on the Internal Market. Specifically, the Commission raises concerns about ‘regulatory fragmentation’, with Member States introducing different measures at varying pace and failing to match the ‘intrinsic cross-border nature’ of the services in question (European Commission, 2020c). The Treaty obliges the Commission and the Council to take action to ensure the establishment and functioning of the Internal Market, understood as ‘an area without internal frontiers in which the free movement of goods, persons, services and capital is ensured’ (EU, 2020: Art. 26, 2). While spanning a wide range of fields and issues, the new wave of European AI regulation is thus rooted in the Treaty provisions on the internal market, matching the broader 2020 ‘European Strategy for Data’ – of which these legislative packages are part – which ‘aims to strengthen the single market for data’ (European Commission, 2020b). This is not to say that other legal frameworks are unimportant. Notably, the EU Charter of Fundamental Rights plays an explicit role, for example, in the Commission's proposal for an AI Act to prohibit unacceptable-risk applications of AI and to regulate high-risk ones – not least in terms of privacy and safety (European Commission, 2021a). Nevertheless, ‘the market’ remains a central concept in the edifice of AI regulation in Europe.

One domain where the problems of ‘the market’ become apparent is big tech, such as online marketplaces, app stores, search engines, social networks, video-sharing services, operating systems and others. In its proposal for a Digital Markets Act, the Commission (2020c) introduces a new concept to complement that of ‘dominant position’ enshrined in the Treaty (Council of the European Union, 2008: Art. 102). The existing notion of ‘dominant position’ covers, for example, large companies imposing ‘unfair’ prices, limits on production and innovation, or asymmetric conditions (disadvantages). In addition, the proposed concept of ‘gatekeeper’ concerns companies that suspend or impede competition based on their position as mediators between trading partners (business to consumer or business to business), offering what the Commission calls ‘core platform services’ on unequal terms due to significant network effects and economies of scale. The Commission thus defines gatekeepers as having ‘a significant impact on the internal market’, operating gateways between businesses and customers and enjoying an ‘entrenched and durable position’ (European Commission, 2020c). The Commission writes that digital services:

increase consumer choice, improve efficiency and competitiveness of industry and can enhance civil participation in society. However, whereas over 10 000 online platforms operate in Europe's digital economy, most of which are SMEs [small and medium-sized enterprises], a small number of large online platforms capture the biggest share of the overall value generated.

In other words, the platform economy made possible by AI, big data and digitalization more broadly carries immense potential for growth and competition alike, but inevitably also involves serious distortions of competition, with a few big tech companies creating ‘their own platform ecosystems’ connecting buyers and sellers (European Commission, 2020c). A parallel phenomenon is well known in finance, where large trading platforms and infrastructures come to occupy a double role: offering financial services in the market, on the one hand, and becoming the market itself, that is, the condition of possibility for transactions on a competitive basis in a given field, on the other (Krarup, 2021b). The paradox of gatekeepers is therefore a paradox of ‘the market’ as an objective of European integration. Large digital platforms offer new opportunities for companies and consumers across Europe to connect and transact, increasing competition. However, platforms with more users and transactions get primary access to more valuable data (the ‘raw material’ of AI) and can therefore offer a deeper and wider market. They thereby achieve a competitive advantage over alternative platforms (network effects) and can build vast infrastructures that most other tech companies cannot match (economies of scale). By thus assuming the role of ‘gatekeeper’, big tech companies are in a position to distort competition and the Internal Market through ‘dependencies’, ‘unfair behavior’ and lack of ‘contestability’, resulting in ‘inefficient outcomes in the digital sector in terms of higher prices, lower quality, as well as less choice and innovation to the detriment of European consumers’ (European Commission, 2020c).

The situation is a paradox in the sense that the platforms are thus simultaneously a condition for and a barrier to increased competition and innovation. While only a recent and rapidly evolving object of regulation, AI thus becomes inscribed in a broader and older problem of market integration understood as the removal of barriers to competition in Europe. The objective of the Commission's proposal for a Digital Markets Act is therefore ‘to allow platforms to unlock their full potential’, acknowledging that ‘market processes are often incapable of ensuring fair economic outcomes with regard to core platform services’. While the platforms are, in many cases, the very frontier of the new digital market, the Commission thus sees an urgent need to ensure a ‘contestable and fair digital sector… with a view to promoting innovation, high quality of digital products and services, fair and competitive prices, as well as a high quality and choice for end users in the digital sector’ through comprehensive regulatory measures.

Conclusion

The rapid evolution of artificial intelligence has prompted the world's largest economies to formulate ambitious strategies for reaping the economic, geopolitical and other potential of this emerging field of technology. The European Union has been widely considered a slow and reactive player in this context, but with extensive and comprehensive regulatory activity since around 2017, the image today is rather one of a targeted and ambitious effort to contend for global leadership. EU regulation on AI covers a wide range of policy fields, with geopolitical competition (‘technological sovereignty’), human rights and consumer protection, public health, and social and political progress among the most salient issues.

Our main finding in this article is that Single Market integration plays a connecting and synthesizing role across many of these otherwise very different fields of regulatory activity. Existing literature has approached the topic mainly from the perspective of imagined futures and has emphasized the uneasy coexistence of ethically sound economic and political potential, on the one hand, and new sources of human and consumer rights infringements by big tech, foreign states or other actors, on the other. Our conclusion by no means dismisses these findings, but rather recasts them within the legal framework of Single Market integration in the EU.

Where some see European regulatory responses to AI as images of ‘Western (Aho and Duffield, 2020: 208), our analysis reveals a far more ambitious and comprehensive line. Moreover, our analysis suggests that the EU's recent burst of regulatory activity reflects more than a universal antinomy between the benefits and risks of new technology, resurrected by the recent surge in computational power, machine learning techniques and data availability (Calo, 2017). Specifically, our analysis brings forth deep-seated problems of market integration embedded in the constitutional legal framework of the EU, through which new challenges, such as those related to AI, are addressed. Rather than expressing simple ideological coherence (e.g. neoliberalism), these discursive problems enshrined in the EU project are exactly that – problems, producing tensions, uncertainties and contradictions, leaving room for sometimes paradoxical policy development and a multiplicity of voices, while also serving as an engine for the production of new responses. Indeed, paradox yields not only constraint, but also dynamism. We have seen how the EU manages to closely intertwine concerns for human rights, economic growth and Single Market integration, as market integration is ascribed ethical motives (growth, wealth, geopolitical security, social and political progress) and privacy is often framed in a market-like contractual logic (consent, transparency, consumer protection). Future research could usefully explore the paradoxes of the market in the EU and their effect on regulation through a comparative focus on recurring patterns of technopolitical controversy, across longer spans of time and wider arrays of socio-economic domains.

Disclosure statement

The authors have no conflicting interests to declare.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.