Abstract

This systematic literature review addresses the intersection of two rapidly evolving areas of knowledge and practice: Indigenous Knowledge Systems (IKS) and artificial intelligence (AI). There is growing scholarly recognition of the rich and diverse nature of IKS, which are unique intergenerational understandings of worldly relations from an Indigenous standpoint. There is now also a vast literature on the promise and pitfalls of AI. However, there is a lack of systematic reviews showing how these two dynamic literatures intersect and what the major themes are. AI has the potential to support the promotion of IKS; however, AI also poses potential risks for Indigenous peoples, such as the erosion of cultural knowledge and data-grabbing that fails to respect the principles of Indigenous Data Sovereignty. These risks can exacerbate existing knowledge hierarchies and socio-economic inequalities. In this paper, we conducted a systematic review of articles on Indigenous peoples and AI published between 2012 and January 2023. We identify four overlapping categories into which the existing literature can be classified and discuss the literature under each category in detail. The first two categories concern AI’s role in supporting the promotion of IKS, and the third focuses on the pitfalls of using AI in relation to Indigenous peoples. The final category discusses how IKS itself can enrich the development of AI. We further identify several gaps in the literature and highlight avenues requiring attention regarding AI’s role with Indigenous peoples and their knowledge systems.


Preface

This paper arises from a multi-year participatory research partnership between First Nations and non-First Nations research practitioners, all of whom hold positions at the University of Melbourne. The research agenda and projects have been co-designed by the First Nations researchers with extensive engagement with the relevant Traditional Owner group. The views expressed are those of the authors alone and do not seek to represent the views of First Nations broadly. The non-First Nations authors acknowledge their positionality as academics of migrant origin located in an academic institution whose establishment and operation have been entwined with a larger settler–colonial project of dispossession and Western scientific knowledge production.

Introduction

Artificial intelligence (AI) has transformed sectors such as healthcare, education, and law enforcement and is generally perceived to be beneficial to modern life. However, its negative impacts, including the creation or exacerbation of vulnerability for certain social groups, are increasingly recognised (Mehrabi et al., 2021; Vinuesa et al., 2020). Recent literature asks whether AI is becoming ‘A New (R)Evolution or the New Colonizer for Indigenous Peoples?’ (Lewis et al., 2020; Whaanga, 2020), and there are renewed concerns about the technological determinism that AI may represent (Winkel, 2024). This review addresses AI’s role concerning Indigenous peoples, who number more than 476 million people across more than 5000 distinct groups worldwide (United Nations, 2023a), and concerning Indigenous Knowledge Systems (IKS). IKS are intricately woven through oral traditions, Dreaming stories, customary lore, Indigenous science, art, songs, dance, and ceremonies, forming a cultural and intergenerational knowledge system foundational to most Indigenous cultures (Hampton and Toombs, 2013).

This comprehensive systematic review explores the academic literature of the past decade on AI’s application in Indigenous contexts, understood here as instances where AI was used with, for, or in the background of data about Indigenous communities, people, lands, and tangible and intangible resources. Unlike previous surveys focused on specific sectors or issues (Krakouer et al., 2021b; Ratana et al., 2019), this work is, to the best of our knowledge, the first systematic review to examine how AI has been applied in the context of Indigenous research agendas, including its positive or negative impacts on Indigenous self-determination and well-being, and on IKS.

Informed by Vinuesa et al. (2020) and Lewis et al. (2020), we define AI as a sociotechnical system encompassing computational technologies capable of automatic knowledge extraction and pattern recognition from numerical, text, audio, and video data (e.g., image recognition, speech recognition, and handwriting recognition), and of automated decision-making (e.g., medical diagnosis systems). It encompasses a variety of AI subfields, including machine learning, neural networks, and deep learning-based methods. This definition recognises AI as deeply embedded within broader cultural, scientific, political, and historical contexts that are produced through the multiple, heterogeneous discursive spaces of scientific practice (Knorr-Cetina, 1981; Latour and Woolgar, 1979; Pickering et al., 1992). The social contingency of science as practice is critically important to understanding how AI as technoscience shapes relations of and within colonial nation-states, and to the formation and affirmation of Indigenous-led theories and methodologies that turn the gaze back onto technoscientific knowledge production itself (Kolopenuk, 2020; Lewis et al., 2020; TallBear, 2013).

Our review identifies four overlapping categories into which the existing literature can be classified. The first two categories include literature on how AI can support Indigenous peoples and their knowledge systems; throughout this review, ‘AI for’ refers to AI systems developed for the benefit of Indigenous peoples. Specifically, these are (i) AI tools for the preservation and awareness of Indigenous knowledge, land, and languages and (ii) AI tools for addressing challenges encountered by Indigenous peoples or improving their quality of life (as identified by Indigenous peoples). The third category focuses on AI’s risks, in particular (iii) concerns and problems associated with using AI in relation to Indigenous communities, people, lands, and tangible and intangible resources. The final category discusses the potential of IKS to support AI, specifically (iv) utilising IKS itself to enrich and inform the development of AI systems.

Outline of the review

The review is organised as follows: the Background section provides the background and motivation for this review; the Review Design section describes the review design and methodology; the Outcomes section presents the outcomes of the review, thematically classifying the literature and discussing the related articles; and the final section discusses gaps and highlights future research avenues.

Background

IKS (also referred to as traditional knowledge, or traditional environmental or ecological knowledge) describes intergenerational understandings of the world from an Indigenous standpoint that are unique and distinct from Western Scientific Knowledge Systems (WSK) or the respective settler knowledge systems. IKS is situated within an Indigenous paradigm that is always relational, purposeful, and grounded in tribal or ancestral histories (Goulding et al., 2016).

The notion of ‘Indigenous articulations’ rejects rigid and essentialist approaches to understanding difference and instead attends to the diversity and specificity of a variety of cultures that identify as Indigenous (Clifford, 2001; TallBear, 2013). Indigeneity tends to refer to the temporal priority of First Nations in relation to the disruptive processes of colonisation and the survival of peoples and knowledge systems (TallBear, 2013). This stands in contrast to Western science depictions of Indigenous knowledge as dying and in need of salvage. Place is also critical to Indigenous articulation, with knowledge systems coevolving with living landscapes that shape relations between the human and non-human (Rose, 2017).

It is important to avoid sedimenting binary distinctions between Western science on the one hand and Indigenous articulations and IKS on the other. As critical Science, Technology, and Society (STS) studies show, WSK’s claims to objectivity, neutrality, and universality are just that, ‘claims’, which obscure the social embeddedness and contingency of scientific knowledge-making processes (Jasanoff, 2004). Processes of objectification are, in fact, based on validation by consensus amongst representatives of the dominant group, which then becomes the official interpretation of reality (Stanfield, 1985; Stanfield II and Dennis, 1993). WSK, whilst laying claim to certain principles and practices of abstraction and empiricism, can be understood as a practice and culture with its own institutional moorings. This moves us away from a ‘sterile dichotomy’ (Agrawal, 2014) between Indigenous and Western knowledge systems and towards the approach of critical Indigenous STS, which disrupts and challenges the certainties of Western science, makes visible the colonial logics and practices that legitimate particular discourses, and asserts the self-determining authority to theorise and understand the world (Kolopenuk, 2020; Moreton-Robinson, 2013). This translates into processes of dialogue and spaces of negotiation, where knowledge is transformed and adapted through exposure and interaction (Hudson et al., 2010). This is a space of radical possibility, underpinned by respect for traditional knowledge systems and recognition of their relational and embodied nature.

This ‘negotiated space’ stands in stark contrast to dominant modes of WSK knowledge production about Indigenous peoples, which have privileged quantitative methodologies and drawn on large administrative data collection processes to produce deficit narratives about Indigenous peoples (Walter et al., 2021). Unchecked by ethical processes of Indigenous engagement and Indigenous Data Sovereignty, AI has the potential to amplify this deficit-focused statistical narrative. AI is used in critical decision-making areas such as healthcare and law enforcement (Yu et al., 2018), where its impacts on vulnerable and minority communities can be multiplied (Mehrabi et al., 2021). For instance, studies have shown that AI systems used in child protection services refer Indigenous children to these services disproportionately, relative to what would be expected from actual case numbers (Krakouer et al., 2021a; Wilson et al., 2015).

AI’s role in both advancing and complicating human lives, particularly regarding discrimination and inequality, necessitates an urgent reevaluation of AI governance. This need is especially critical for Indigenous peoples, who have historically been subjected to control, policing, and discipline through WSK tools. A key challenge for AI is integrating Indigenous Data Sovereignty principles into its design and ensuring Indigenous leadership in decision-making processes (Lewis et al., 2020; Neurips Workshop: Indigenous in AI, 2020). At the same time, AI has the potential to support Indigenous peoples and their initiatives in healthcare (Scheetz et al., 2021), language revitalisation (Kann et al., 2022), and environmental conservation (Ochungo et al., 2022). We review the current state of scholarship on AI and IKS to provide a systematic overview of the topics discussed in this space.

Review design

We designed a review framework following the guidelines of the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) (Page et al., 2021) and referring to recent reviews conducted at the intersection of AI and other sectors (Haltaufderheide et al., 2023; Krakouer et al., 2021b; Ouyang et al., 2022).

Article search

We conducted a literature search on AI and Indigenous peoples from 2012 to 2023 using the Web of Science Core Collection. The first query combined three groups of keywords, (‘artificial intelligence’ or ‘machine learning’ or ‘deep learning’) and (‘indigenous’ or ‘aboriginal’ or ‘first nation(s)’) and (‘ethic(s)’ or ‘fairness’ or ‘bias’ or ‘equity’ or ‘data sovereignty’), and yielded 64 articles. The second query, which excluded the terms ‘ethic(s)’, ‘fairness’, ‘bias’, ‘equity’, and ‘data sovereignty’, returned 412 articles, 378 of which remained after duplicate removal. Additionally, five notable works were included: the Indigenous in AI workshops (Neurips Workshop: Indigenous in AI, 2020, 2021, 2022) and two key position papers, the CARE (collective benefit, authority to control, responsibility, ethics) Principles for Indigenous Data Governance (Carroll et al., 2020) and Indigenous Protocol and Artificial Intelligence (Lewis et al., 2020). Articles were screened by title, abstract, and full text. Figure 1 shows a flowchart of the overall review process.
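To make the grouping of the first query explicit, its keyword logic can be sketched as a simple filter over article titles. This is an illustration under stated assumptions, not the authors’ search string: it assumes that terms within a group are combined with OR and groups with AND, it uses case-insensitive substring matching in place of Web of Science’s own syntax and field tags, and all function names and example titles are hypothetical.

```python
# Hypothetical sketch of the Boolean logic behind the two keyword queries.
# Assumptions (not the authors' exact search string): terms within a group
# are OR-ed, groups are AND-ed, and matching is a case-insensitive substring
# test on a title or abstract rather than Web of Science search syntax.

AI_TERMS = ["artificial intelligence", "machine learning", "deep learning"]
# "first nation" also matches "first nations"
INDIGENOUS_TERMS = ["indigenous", "aboriginal", "first nation"]
# "ethic" also matches "ethics"
ETHICS_TERMS = ["ethic", "fairness", "bias", "equity", "data sovereignty"]

FIRST_QUERY = [AI_TERMS, INDIGENOUS_TERMS, ETHICS_TERMS]
SECOND_QUERY = [AI_TERMS, INDIGENOUS_TERMS]  # ethics group dropped


def matches(text: str, query: list[list[str]]) -> bool:
    """True if every term group has at least one term occurring in text."""
    lowered = text.lower()
    return all(any(term in lowered for term in group) for group in query)


def run_query(records: list[str], query: list[list[str]]) -> list[str]:
    """Keep only the records (e.g. title plus abstract) that satisfy the query."""
    return [record for record in records if matches(record, query)]


records = [  # hypothetical titles, not articles from the review
    "Machine learning and Aboriginal health: examining bias",
    "Deep learning with First Nations communities",
    "Deep learning for satellite image segmentation",
]
print(run_query(records, FIRST_QUERY))   # only the first title
print(run_query(records, SECOND_QUERY))  # the first two titles
```

Because the second query simply drops the ethics group, every record returned by the first query is also returned by the second under this reading. Substring matching is deliberately crude here (for example, ‘bias’ would also match ‘biased’).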

Flowchart of the review process presented according to the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines (Page et al., 2021).

Limitations

Our review has limitations. Relying on a single database may have missed relevant literature, especially by First Nations authors, who are often underrepresented in academic publications. Nonetheless, the purpose of this paper is to conduct a systematic literature review of academic publications. As such, this work is inherently oriented towards areas already explored within the existing body of work on the intersection of Indigenous peoples and AI. Because the field is still developing, the available literature is limited, which constrains the scope of our review and the comprehensiveness of the issues discussed. In particular, we found no work on translating between the epistemics of Indigenous knowledge and those of data-centric algorithmic approaches to AI. Additionally, this review uses ‘Indigenous’ as a collective term to explore overarching themes, trends, and discussions in the existing literature on AI’s impact on Indigenous peoples. However, this approach does not reflect the diversity of Indigenous communities and knowledge. Understanding AI’s impact on different Indigenous communities requires direct engagement with specific groups and their knowledge authorities, which is beyond the scope of this review.

Eligibility criteria

We included articles on the use of AI with Indigenous peoples or their data. In line with Article 31 of the United Nations Declaration on the Rights of Indigenous Peoples (United Nations, 2023b), we considered all aspects related to Indigenous peoples, such as resources, knowledge, and arts, as Indigenous data. We excluded articles that do not discuss topics related to both Indigenous peoples and AI, as well as those that use ‘indigenous’ to describe native datasets unrelated to Indigenous data. Ethical AI discussions not focused on Indigenous communities were also omitted, as was grey literature. Furthermore, this review considered only articles written in English. Following these criteria, 53 articles were selected for in-depth review.

Outcomes

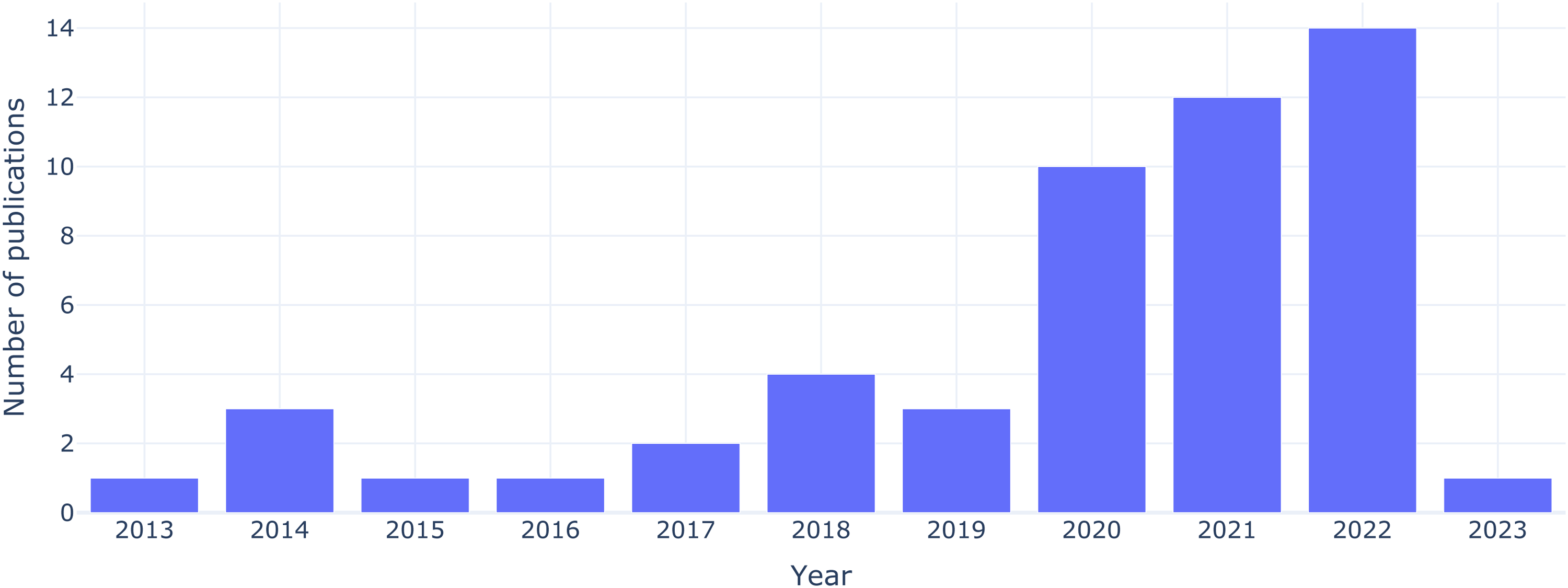

Figure 2 illustrates the increase in articles on Indigenous peoples and AI over the past decade. Publications were sparse prior to 2020, but there has been a marked rise in recent years, indicating heightened research interest and underscoring the relevance of this systematic review.

Number of publications related to Indigenous peoples and artificial intelligence (AI) by year of publication.

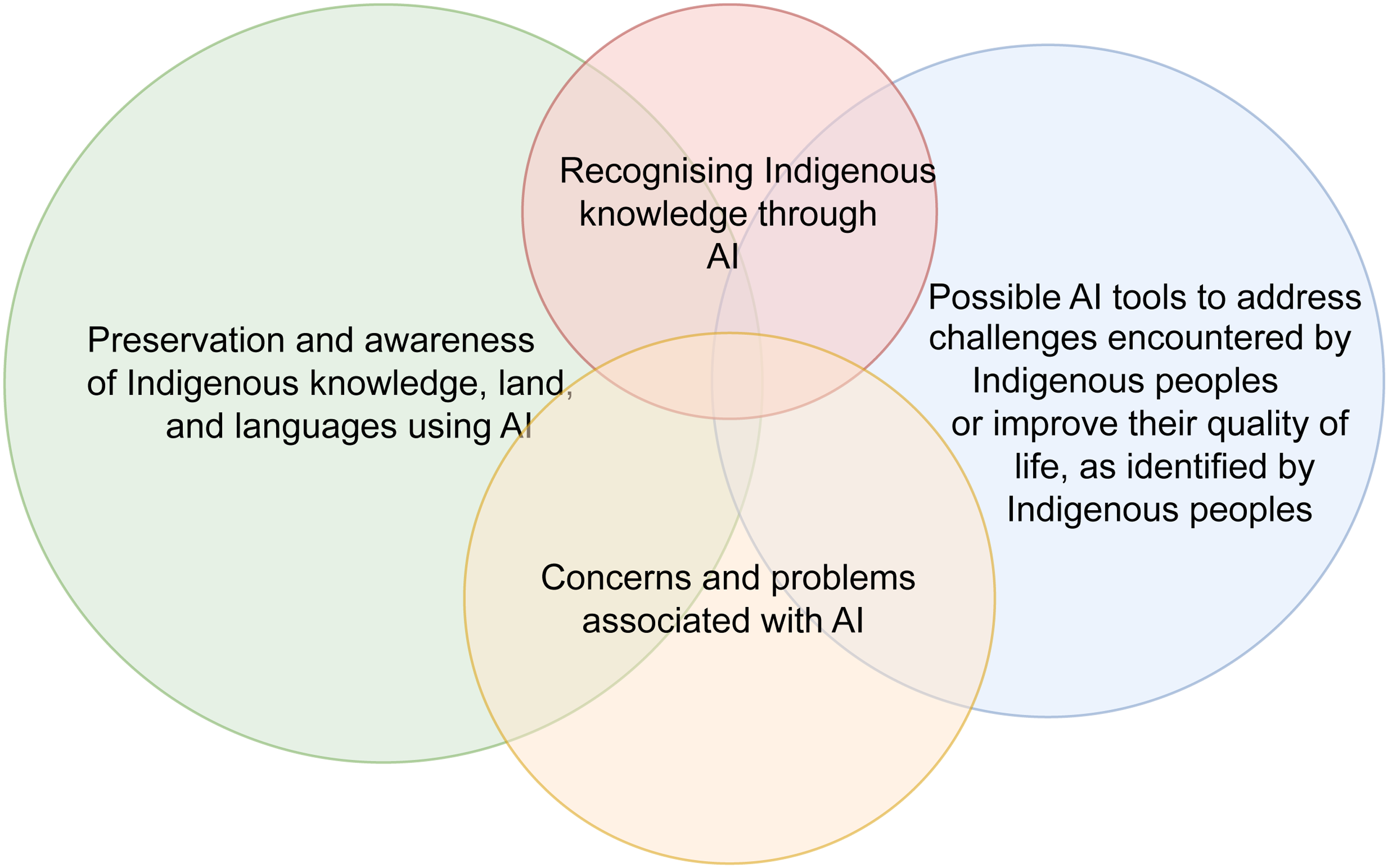

We developed a typology, illustrated in Figure 3, to categorise and deepen our understanding of the reviewed articles. This framework organises their diverse perspectives and topics into four main groups: (i) AI for IKS preservation and awareness; (ii) AI addressing challenges encountered by Indigenous peoples; (iii) Indigenous peoples’ concerns in relation to AI; and (iv) integrating Indigenous knowledge into AI development.

Proposed typology for Indigenous peoples and artificial intelligence (AI) articles reviewed in this work (some articles are included in more than one category).

The following subsections detail the categorised articles, which are summarised in Tables 1 to 3. Figure 4 shows the geographical locations of the Indigenous peoples represented in the reviewed articles. Most of the research is concentrated in the Americas, Australia, New Zealand, Africa, and Southeast Asia. Given the diversity of Indigenous communities, we avoid generalised statements and instead specify each community or region involved (see Tables 1 to 3) to support a nuanced understanding of the research findings.

Countries of Indigenous peoples and the number of articles related to each country. These include: Aotearoa (New Zealand), Australia, Brazil, Canada, Colombia, Ecuador, Ethiopia, Guatemala, Kenya, Malaysia, Mexico, Peru, Taiwan, and the United States.

Summary of papers included in the review (Part 1). Subsection titles are indicative, as some articles discuss multiple topics.

AI: artificial intelligence.

Summary of papers included in the review (Part 2). Subsection titles are indicative, as some articles discuss multiple topics.

IKS: Indigenous knowledge systems; AI: artificial intelligence; NLP: natural language processing.

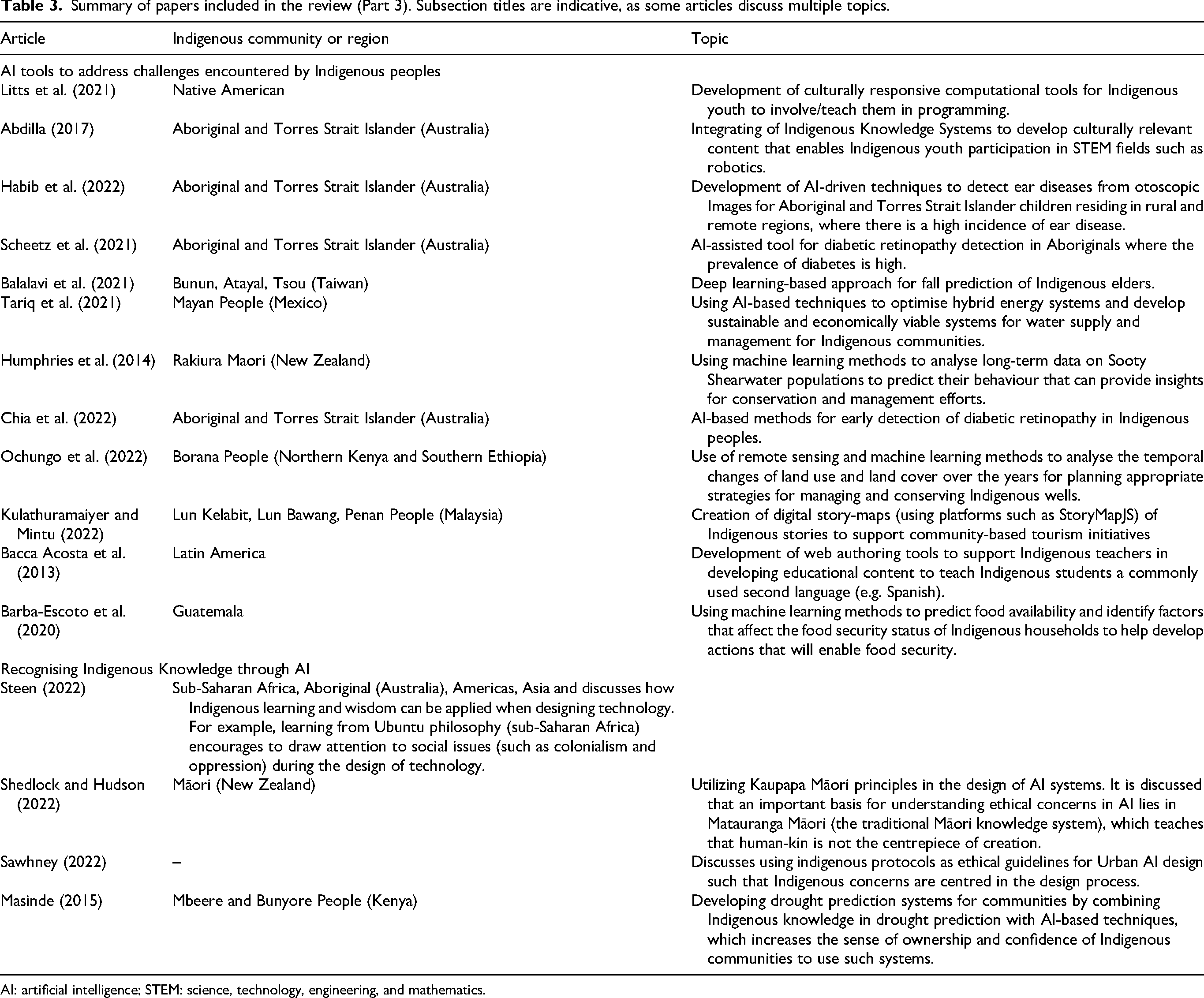

Summary of papers included in the review (Part 3). Subsection titles are indicative, as some articles discuss multiple topics.

AI: artificial intelligence; STEM: science, technology, engineering, and mathematics.

Preservation and awareness of IKS

Articles in this category mainly discuss how AI can be used as a tool to support the preservation and awareness of Indigenous traditions, cultural heritage, lands, and languages; the majority discuss the potential use of AI for Indigenous language revitalisation.

Indigenous language revitalisation

The use of Indigenous languages has decreased significantly due to ongoing colonial practices that prevent and even punish their use (De Varennes and Kuzborska, 2016; Lothian et al., 2020). There is, however, a significant counter-movement of language revitalisation, which supports the maintenance and revitalisation of these languages as an essential step towards reconciliation (Lothian et al., 2020; Mahelona and Jones, 2020). Studies that discuss Indigenous language revitalisation using AI focus on three topics, shown in Figure 5: (i) the scarcity of Indigenous language data for training existing deep learning-based natural language processing (NLP) models (Bustamante et al., 2020; Kann et al., 2022; Maguiño-Valencia et al., 2018; Mercado-Gonzales et al., 2018; Sierra Martínez et al., 2018; Thai et al., 2019), (ii) language transcription (e.g., transcribing handwritten text or speech) (Belay et al., 2020; Coto-Solano et al., 2022; James et al., 2020; Munyaradzi and Suleman, 2014), and (iii) the use of AI for Indigenous language education (Oliva-Juarez et al., 2014; Rahaman et al., 2021).

High-level view of topics discussed in articles addressing Indigenous language revitalisation using artificial intelligence (AI).

Addressing data scarcity for AI models: AI models excel in NLP tasks for widely used languages such as English but face challenges with low- or under-resourced Indigenous languages. Indigenous languages have limited digitally available text corpora for training language models, leading to inadequate performance in NLP tasks involving them (Kann et al., 2022; Liu et al., 2022). Several studies attempt to address this and support language revitalisation. For example, to improve automatic speech recognition (ASR) for the Seneca language (an Indigenous language of North America), Thai et al. (2019) utilised transfer learning and data augmentation. Starting with a model trained on English, the authors adapted it to Seneca using only 10 hours of recordings and reported significant improvements in ASR. Another study, ‘AmericasNLI’ (Kann et al., 2022), covering 10 Indigenous languages of the Americas, evaluated higher-level semantic tasks, such as natural language inference (NLI) and machine translation, that are essential for understanding sentence dependencies. It compared three transfer-learning-based techniques: zero-shot learning (using a language model pre-trained on a different language, with no labelled data in the Indigenous language), model adaptation via continued pretraining (using unlabelled data from Indigenous languages for fine-tuning), and translation-based approaches (converting the Indigenous languages to a high-resource language to create labelled data for the task), finding the latter most promising for Indigenous languages. Further efforts to mitigate data scarcity include creating text corpora for Indigenous languages (Bustamante et al., 2020; Maguiño-Valencia et al., 2018; Mercado-Gonzales et al., 2018; Sierra Martínez et al., 2018). For instance, a machine learning-based text annotation tool was developed for Peruvian Indigenous languages (Mercado-Gonzales et al., 2018) to aid linguistic corpus construction for NLP tasks. Another study (Bustamante et al., 2020) developed text corpora for four Indigenous languages of Peru, using rule-based and unsupervised machine learning approaches to extract content from educational materials.

AI tools for language transcription: Indigenous language revitalisation increasingly leverages AI models for tasks such as text-to-speech and speech-to-text (Belay et al., 2020; Coto-Solano et al., 2022; James et al., 2020; Munyaradzi and Suleman, 2014). These technologies aim to expedite the documentation of Indigenous languages by significantly reducing the effort of manual transcription, which is considerable: transcribing an hour of Indigenous language audio can take up to 50 expert hours (Coto-Solano et al., 2022). Recent studies have focused on ASR systems built with deep learning-based methods such as DeepSpeech (Hannun et al., 2014) and XLSR (Conneau et al., 2020) for languages such as Cook Islands Māori. These systems are developed using recordings of traditional stories, aiding the ‘re-oralising’ of vital cultural narratives through technology. Similarly, text-to-speech systems for the Māori language (te reo Māori) have been developed (James et al., 2020) using hidden Markov model-based approaches with tools such as MaryTTS, an open-source text-to-speech synthesis system. Other research has used convolutional neural networks for character recognition in Amharic documents (Belay et al., 2020), facilitating the digitisation of historical records.

AI-based tools for language education: Some studies suggest that Indigenous language education can bridge Indigenous and non-Indigenous cultures. For instance, Rahaman et al. (2021) developed a mobile app to assist in learning the Noongar language, spoken by Aboriginal people in Western Australia. The app uses deep learning to identify wildflowers and displays their names and sounds in Noongar, aiding beginners with pronunciation. Another study (Oliva-Juarez et al., 2014) employed Dynamic Time Warping to improve vowel pronunciation in the Choapan Zapotec language, spoken in Mexico, further illustrating the role of AI in Indigenous language education.
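Dynamic Time Warping compares two sequences that may be spoken at different speeds by finding the lowest-cost alignment between their frames, which is why it suits comparing a learner’s vowel with a reference recording. The following is a minimal sketch of the textbook DTW recurrence on one-dimensional feature sequences; the feature values and function name are hypothetical, and it does not reproduce the features or scoring used by Oliva-Juarez et al. (2014).

```python
def dtw_distance(reference: list[float], attempt: list[float]) -> float:
    """Dynamic time warping distance between two 1-D feature sequences.

    cost[i][j] is the minimal cumulative cost of aligning the first i frames
    of `reference` with the first j frames of `attempt`.
    """
    n, m = len(reference), len(attempt)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(reference[i - 1] - attempt[j - 1])  # frame-to-frame distance
            # Extend the cheapest allowed predecessor: diagonal (both advance),
            # vertical (reference advances), or horizontal (attempt advances).
            cost[i][j] = local + min(cost[i - 1][j - 1], cost[i - 1][j], cost[i][j - 1])
    return cost[n][m]


# Hypothetical per-frame feature values (e.g. a normalised formant track).
reference = [1.0, 2.0, 3.0, 2.0]
slower_attempt = [1.0, 1.0, 2.0, 3.0, 3.0, 2.0]  # same contour, spoken more slowly
different_attempt = [3.0, 3.0, 3.0, 3.0]         # flat contour
print(dtw_distance(reference, slower_attempt))     # 0.0
print(dtw_distance(reference, different_attempt))  # 4.0
```

A slower but otherwise identical contour scores zero, while a contour with a different shape scores higher; in a pronunciation tool, a lower distance would indicate an attempt closer to the reference regardless of speaking tempo.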

Advances in language technologies offer potential for Indigenous language revitalisation, but it is essential to align technology development with the needs and priorities of Indigenous communities (Liu et al., 2022). While some communities may prioritise language documentation, instruction, or reclamation, others might focus on preserving cultural heritage. Therefore, the suitability of technologies such as ASR systems may vary across communities, and understanding each community’s specific requirements and priorities is fundamental to developing such technologies for Indigenous peoples.

Preservation of Indigenous land

Indigenous communities are increasingly focused on ecological restoration as a key part of revitalising their culture and land stewardship. For example, Amah Mutsun Tribal members partner with researchers and organisations to restore culturally and ecologically significant plant species (Taylor et al., 2023). This process of mutual caretaking between people and place positively affects the well-being of both the plants and the Amah Mutsun people (Taylor et al., 2023). Research has therefore explored AI’s potential role in aiding ecological restoration processes, contributing to restoration, healing, and well-being. For example, a MaxEnt (maximum entropy) model identified culturally relevant plants for the Heiltsuk First Nation by merging archaeological and field survey data, focusing on redcedar trees in the Great Bear Rainforest (Benner et al., 2019). Similarly, Taylor et al. (2023) employed an ensemble machine learning method to map 10 important plant species for the Amah Mutsun Tribal members. Additionally, AI and remote sensing have been used to detect illegal logging and gold mining affecting Indigenous areas (Boaro et al., 2021; Walker and Hamilton, 2019). For example, a deep neural network architecture, U-Net, has been used to segment gold exploration areas in the Tapajós region of Amazonia from satellite imagery.

Awareness and preservation of traditions and cultures

Studies (Robles-Bykbaev et al., 2018; Trescak et al., 2016, 2017) emphasise AI’s role in preserving Indigenous cultures, highlighting the utility of computing tools for (i) enabling Indigenous peoples to share their stories and cultural practices and (ii) educating non-Indigenous communities about Indigenous traditions. Virtual reality (VR) enhanced with AI and multimedia games has been proposed to simulate Aboriginal Australian traditions (Trescak et al., 2016, 2017). Similarly, in Ecuador, a gaming platform based on Cañari Indigenous culture serves as an educational tool (Robles-Bykbaev et al., 2018). Trescak et al. (2016, 2017) argue that static forms such as text fall short in capturing Aboriginal worldviews, such as the Dreaming, whereas VR and AI can offer dynamic methods for cultural preservation. Additionally, AI has been leveraged for Aboriginal rock art preservation (Kowlessar et al., 2021), employing transfer learning to analyse and date Arnhem Land rock art and contributing to heritage conservation efforts.

Espín-León et al. (2020a, 2020b) highlight that Amazonian Indigenous communities are losing cultural identity due to ongoing colonisation and globalisation. In response, an AI method using self-organising maps was developed to assess cultural identity (i.e., the degree of ethnic difference) between Waorani communities in the Amazon and inhabitants of nearby cities.
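A self-organising map (SOM) is an unsupervised neural network that arranges nodes on a low-dimensional grid so that similar input vectors map to nearby nodes; groups whose vectors map to distant nodes can then be read as dissimilar. The sketch below trains a small one-dimensional SOM in plain Python. The map size, learning schedule, feature vectors, and function names are all hypothetical assumptions chosen for illustration; the sketch does not reproduce Espín-León et al.’s model or data.

```python
import math
import random


def best_matching_unit(nodes: list[list[float]], x: list[float]) -> int:
    """Index of the node whose weight vector is closest (squared Euclidean) to x."""
    return min(range(len(nodes)), key=lambda k: sum((w - v) ** 2 for w, v in zip(nodes[k], x)))


def train_som(data: list[list[float]], n_nodes: int = 4, epochs: int = 200,
              lr0: float = 0.5, radius0: float = 2.0, seed: int = 0) -> list[list[float]]:
    """Train a one-dimensional self-organising map; return the node weight vectors."""
    rng = random.Random(seed)
    dim = len(data[0])
    nodes = [[rng.random() for _ in range(dim)] for _ in range(n_nodes)]
    for epoch in range(epochs):
        frac = epoch / epochs
        lr = lr0 * (1 - frac)                    # learning rate decays linearly
        radius = max(radius0 * (1 - frac), 0.5)  # neighbourhood shrinks, floored at 0.5
        for x in rng.sample(data, len(data)):    # visit samples in a new random order
            bmu = best_matching_unit(nodes, x)
            for k, w in enumerate(nodes):
                # Gaussian neighbourhood on the 1-D grid, centred on the BMU.
                h = math.exp(-((k - bmu) ** 2) / (2 * radius ** 2))
                for d in range(dim):
                    w[d] += lr * h * (x[d] - w[d])  # pull the node towards the sample
    return nodes


# Hypothetical normalised survey features for two groups (not real data).
community = [[0.90, 0.80], [0.85, 0.90], [0.95, 0.85]]
city = [[0.10, 0.20], [0.15, 0.10], [0.05, 0.15]]
som = train_som(community + city)
print([best_matching_unit(som, x) for x in community])
print([best_matching_unit(som, x) for x in city])
```

Comparing the node indices to which each group’s vectors map gives a simple, map-based view of how far apart the groups lie; this only illustrates the SOM technique and is not itself a measure of cultural identity.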

AI to address challenges encountered by Indigenous peoples

Articles in this category explore the use of AI to overcome challenges encountered by Indigenous peoples and to improve their quality of life. Figure 6 shows the problem domains or sectors these articles cover.

Different sectors where artificial intelligence (AI) has been used to address challenges encountered by Indigenous peoples.

Healthcare: Several studies (Balalavi et al., 2021; Chia et al., 2022; Habib et al., 2022; Scheetz et al., 2021) have explored the application of AI to health-related challenges for Indigenous populations. Screening for diabetic retinopathy (DR) among Indigenous Australians is infrequent because DR services are often inaccessible (Chia et al., 2022). AI-assisted systems, such as those proposed by Chia et al. (2022) and Scheetz et al. (2021), are an emerging solution and are used for DR screening in Aboriginal medical clinics in Western Australia. These systems use deep neural networks such as Inception-v3 to detect various eye disorders. AI is also being leveraged to develop solutions for specific age groups within Indigenous communities, such as a fall prediction algorithm for Tsou and Atayal Indigenous elders in Taiwan (Balalavi et al., 2021) and ear disease detection systems for Indigenous Australian children (Habib et al., 2022). These automated systems are particularly valuable in rural areas where access to proper healthcare is limited (Habib et al., 2022).

Education: The integration of AI into educational initiatives (Abdilla, 2017; Bacca Acosta et al., 2013; Litts et al., 2021) is proving beneficial for Indigenous communities, particularly in addressing their lack of representation in computing and, consequently, their limited ability to drive the development of AI systems. This approach aims to bridge educational disparities and preserve Indigenous cultural heritage. For example, a robotics workshop designed for Indigenous Australians (Abdilla, 2017) seeks to enhance their engagement in science, technology, engineering, and mathematics (STEM) fields through ‘culturally relevant’ computing. Similarly, Litts et al. (2021) evaluated an Augmented Reality and Interactive Storytelling platform for Native American youth, examining cultural responsiveness in education. These works emphasise that AI in education is not only about technology but also about forging a vital cultural connection that empowers Indigenous learners to engage with technology. They also align with the broader goal of recognising Indigenous knowledge through AI.

Environment and Tourism: AI applications are increasingly being used to address environmental and tourism challenges faced by Indigenous communities, including water management (Ochungo et al., 2022; Tariq et al., 2021), ecological and agricultural efforts (Barba-Escoto et al., 2020; Humphries et al., 2014), and Indigenous-led tourism activities (Kulathuramaiyer and Mintu, 2022). For example, to tackle clean water scarcity among Maya peoples in Mexico, Tariq et al. (2021) designed a hybrid energy system optimised via machine learning for effective water management. Similar efforts in Ethiopia and Kenya (Ochungo et al., 2022) use AI to analyse satellite images, identifying the landscape characteristics of Indigenous wells and tracking their change over time to support the preservation of these traditional wells. AI’s potential to address agricultural challenges is also seen in Guatemala, where it has been used to predict food security in Indigenous communities (Barba-Escoto et al., 2020). Another study (Humphries et al., 2014) used machine learning to analyse long-term data collected by Rakiura Māori on Sooty Shearwater populations, aiding conservation and management efforts led by Rakiura Māori people. Despite AI’s recognised role in addressing environmental challenges, its application in Indigenous tourism is less studied. One article (Kulathuramaiyer and Mintu, 2022) focuses on developing virtual tourism programs for Indigenous communities in Sarawak, Malaysia, using digital story maps, an approach that proved particularly relevant during the COVID-19 pandemic for raising awareness of, and educating people about, Sarawak’s culture. These wide-ranging applications demonstrate AI’s potential to aid Indigenous communities in environmental and tourism initiatives while respecting and preserving their unique cultural heritage.

Concerns and problems related to AI

This section discusses articles that shed light on the concerns and problems of AI systems from an Indigenous perspective, covering ethical, cultural, and equity dimensions, as shown in Figure 7.

Key artificial intelligence (AI)-related concerns discussed in the literature from an Indigenous perspective.

Concerns over open data sharing

There is a critical tension between the endorsement of principles of ‘open data sharing’ in AI practice and Indigenous rights to own, control, derive collective benefit from, maintain, and protect Indigenous data. Scientific communities, and the AI community in particular, strongly endorse widespread access to data and the reproducibility of research findings (Wilkinson et al., 2016). Indeed, open data underpins the viability of AI, which relies on large datasets to improve the accuracy of AI systems and to benchmark them against existing solutions. However, this approach has been critiqued in light of the historical and ongoing misuse and exploitation of Indigenous data, including the secondary use of data without consent (Carroll et al., 2020; Tsosie, 2021).

Indigenous communities should be the decision-makers when evaluating the benefits, potential harms, and future applications of data, aligning these assessments with their own cultural values and ethical principles. Lewis et al. (2020), in their ‘Indigenous protocol and artificial intelligence’ position paper, highlight the importance of respecting and supporting data sovereignty when developing AI systems (see Figure 8). They emphasise that Indigenous communities must control how their data is collected, used, and analysed, and that these communities must decide when to protect or share their data. Article 31 of the United Nations Declaration on the Rights of Indigenous Peoples (United Nations, 2023b) affirms that Indigenous peoples have the right to maintain, control, and protect their cultural heritage and traditional knowledge, including human and genetic resources, literature, oral traditions, knowledge about the environment and land, and visual and performing arts.

Guidelines for Indigenous-centred artificial intelligence (AI) design v.1 by Lewis et al. (2020).

The Indigenous-led CARE (collective benefit, authority to control, responsibility, and ethics) (Carroll et al., 2020) and OCAP (ownership, control, access, and possession) (Schnarch, 2004) principles and frameworks were developed to provide guidance and support on the path towards Indigenous Data Sovereignty, and can be applied to guide appropriate AI development (Boscarino et al., 2022). However, there is a lack of peer-reviewed literature exploring how these principles might apply to AI, or whether AI, given its modus operandi, can practically be made compatible with them. For example, upholding the 6 Rs of Indigenous research methods (respect, relationship, representation, relevance, responsibility, and reciprocity) (Tsosie et al., 2022) in AI research presents challenges such as the risk of misrepresentation, algorithmic biases that can disrespect Indigenous knowledge, and the difficulty of maintaining reciprocal, culturally relevant, and responsible relationships with Indigenous communities throughout the AI development process.

One strategy for addressing concerns about ethical and respectful Indigenous control of and access to data is the development of privacy-preserving AI systems (Boscarino et al., 2022). The authors note that Indigenous peoples are underrepresented in genomic datasets, which limits the accuracy and biases the decision-making of AI systems used in precision health, thereby exacerbating healthcare disparities. They propose adopting federated learning techniques, that is, training machine learning models on data distributed across diverse locations without transferring or exposing Indigenous data beyond their respective communities. This approach offers a specific technical solution to the challenge of preserving privacy and mitigating unintended secondary data use. However, it does not fully address the complex epistemological and ontological challenges surrounding genomic research and Indigenous knowledge (Hudson et al., 2010; TallBear, 2013).
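To make the federated learning idea above more concrete, the following is a minimal sketch of federated averaging (FedAvg), one common federated learning algorithm. Boscarino et al. (2022) do not prescribe this particular algorithm, and the toy one-variable linear model, the simulated "community sites", and all function names (`local_update`, `federated_averaging`, `make_site`) are hypothetical illustrations. The point it shows is that each site trains on its own records and shares only model parameters with a coordinator, which averages them.

```python
import random


def make_site(seed, n_points, true_w=2.0, true_b=1.0, noise=0.1):
    """Hypothetical private dataset held by one community site: y = w*x + b + noise."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_points):
        x = rng.uniform(0.0, 1.0)
        data.append((x, true_w * x + true_b + rng.gauss(0.0, noise)))
    return data


def local_update(weights, data, lr=0.1, epochs=20):
    """Gradient descent on one site's private data; only (w, b) is returned."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum((w * x + b - y) * x for x, y in data)
        grad_b = 2.0 / n * sum((w * x + b - y) for x, y in data)
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_averaging(sites, rounds=50):
    """Coordinator loop: broadcast global weights, collect local weights, average them.

    Raw (x, y) records never leave a site in this simulation; the coordinator
    only sees each site's updated parameters and its record count.
    """
    global_weights = (0.0, 0.0)
    total = sum(len(d) for d in sites)
    for _ in range(rounds):
        updates = [(local_update(global_weights, d), len(d)) for d in sites]
        w = sum(u[0] * n for u, n in updates) / total
        b = sum(u[1] * n for u, n in updates) / total
        global_weights = (w, b)
    return global_weights
```

For example, `federated_averaging([make_site(s, 30) for s in (1, 2, 3)])` should return parameters close to the simulated values w = 2 and b = 1. Averaging weighted by record count is the usual FedAvg choice; as the text notes, this addresses data movement but not the broader questions of consent and governance.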

Unfair decision-making of AI systems

Fairness in AI has gained increasing attention in recent years, driven by numerous studies showing real-world instances in which AI systems have yielded unfair outcomes, particularly for underrepresented and marginalised communities. For example, within criminal justice systems, risk assessment software has been shown to generate unfair decisions towards marginalised communities (Mehrabi et al., 2021), and gender bias has been observed in the output of machine translation systems (Cho et al., 2021). Mehrabi et al. (2021) argue that biases in AI systems contribute to unfair decision-making, and that such biases can manifest at any stage of the AI development life cycle, including in the data, model training, and evaluation. Many sources can contribute to bias in AI, including historical biases in data, data imbalances, biases in AI algorithms, and human bias in interpreting decisions.

Biases in AI systems pose a significant threat to Indigenous communities, who have suffered marginalisation through ongoing colonising practices. Several studies discuss how unfair decision-making by AI systems deployed for healthcare applications perpetuates existing healthcare disparities for Indigenous communities (Boscarino et al., 2022; Chia et al., 2022; Scheetz et al., 2021; Yogarajan et al., 2022). Yogarajan et al. (2022) used electronic health records collected by clinicians in New Zealand to study biases in AI models used in healthcare settings that can affect the Indigenous population of New Zealand. They developed machine learning models for three prediction tasks using the health records and found that the models made biased predictions for Māori patients compared with New Zealand European patients. The authors further identified potential sources of bias in the training data, including demographic and socio-economic factors, as well as potential biases in the model itself. Similar findings were reported by Scheetz et al. (2021), where AI systems for diabetic retinopathy (DR) screening in Australia performed worse for Aboriginal peoples. In particular, compared with its performance for other ethnic groups, such as Chinese, Malay, and Caucasian, the system had low specificity for Aboriginal peoples, implying a high rate of false positive diagnoses. The authors highlighted several potential reasons for this, including the presence of co-existing eye diseases in Aboriginal peoples and differences in retinal pigmentation relative to the images used to train the model, which came predominantly from adults of Chinese ethnicity. They recommended further training the AI system with representative images and implementing stricter re-imaging protocols to overcome such issues.
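To illustrate why low specificity matters in a screening setting, the short calculation below shows how specificity drives the number of false positives and the positive predictive value (the share of positive screens that are true cases). All numbers are hypothetical and are not taken from Scheetz et al. (2021); the function name `screening_summary` is likewise an illustrative assumption.

```python
def screening_summary(prevalence, sensitivity, specificity, n_screened):
    """Expected screening outcomes for a hypothetical population.

    prevalence:  fraction of screened people who truly have the condition
    sensitivity: true positive rate, TP / (TP + FN)
    specificity: true negative rate, TN / (TN + FP)
    """
    positives = n_screened * prevalence
    negatives = n_screened - positives
    true_pos = sensitivity * positives
    # Every negative that is not correctly cleared becomes a false positive.
    false_pos = (1 - specificity) * negatives
    # Positive predictive value: probability that a positive screen is a true case.
    ppv = true_pos / (true_pos + false_pos)
    return {"false_positives": false_pos, "ppv": ppv}


high = screening_summary(prevalence=0.1, sensitivity=0.9, specificity=0.95, n_screened=1000)
low = screening_summary(prevalence=0.1, sensitivity=0.9, specificity=0.80, n_screened=1000)
```

With these hypothetical inputs, lowering specificity from 0.95 to 0.80 raises the expected false positives per 1,000 people screened from roughly 45 to roughly 180, and the positive predictive value falls from about 0.67 to about 0.33. In practice this means more unnecessary referrals for the group on which the system underperforms.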

Krakouer et al. (2021a) discuss concerns about deploying AI systems to predict the risk of child abuse or neglect within child protection services, particularly the implications for Indigenous communities of the biases that can manifest in such systems. They emphasise that these systems rely heavily on administrative data that may already be tainted by biases stemming from historical and systemic discrimination against Indigenous families. Krakouer et al.’s (2021a) review of various studies in this field found some form of bias in the predictions generated by AI systems used in child protection. For instance, Wilson et al. (2015) observed that their AI model tended to refer a disproportionately high percentage of Māori children to child protection services compared with what would be expected based on actual case numbers. Specifically, the model referred 69% of Māori children, while the observed share of substantiated cases for Māori children was 61%. Krakouer et al. (2021a) underscore the importance not only of harnessing the potential benefits that AI systems offer for improving child protection services but also of rigorously examining the quality of the administrative data on which these systems are built. They emphasise that adjusting AI algorithms alone will not mitigate bias in decision processes, owing to the complex interactions between historical, environmental, and systemic contexts in child protection services. Instead, they advocate co-designing AI systems for child protection services in collaboration with the individuals and communities affected by such automated decisions, and coupling this process with the development of transparent and explainable AI systems.

The importance of understanding the social structure in which AI systems operate, as a strategy for mitigating bias towards Indigenous peoples, is discussed by Lewis et al. (2020) and Zajko (2021). Figure 8 shows an overview of the guidelines proposed by Lewis et al. (2020) for Indigenous-centred AI design, which stress recognising the cultural and social nature of AI systems in order to mitigate potential biases. These guidelines stemmed from a workshop led by Indigenous researchers from around the world that focused on AI’s implications for their communities. The authors argue that all technical systems are cultural and social systems. Therefore, those designing AI systems must be aware of their own cultural and societal biases, which can affect the systems they build, and must actively implement strategies that foster inclusivity and respect for diverse cultural and social perspectives.

Culturally biased computational tools

A limited number of works have highlighted the lack of cultural sensitivity in AI systems for Indigenous communities (Chugh, 2021; Henriksen et al., 2022; Litts et al., 2021). While a lack of cultural sensitivity can be regarded as a form of bias in AI, we discuss this concern as a distinct category to emphasise its significance. For example, Chugh (2021) discusses how AI systems used in Canada’s legal system fail to account for cultural nuance, history, and the impact of colonialism on Canada’s Aboriginal people. In particular, discussing the findings of ‘Jeffrey Ewert v. Canada’, Chugh highlights that the Supreme Court found the use of risk assessment tools to evaluate offenders such as Ewert to be a significant problem, because the data used by these tools did not take into account the cultural heritage or background of Ewert and other Aboriginal offenders. Chugh (2021) argues that this lack of cultural sensitivity and understanding in automated decision-making tools leads to unfair treatment of Indigenous communities and poses a significant threat to them. These concerns also relate to how AI systems are evaluated for fairness, as discussed by Steen (2022) and Yogarajan et al. (2022). Currently, AI systems are tested for fairness using measurable metrics such as equal opportunity (i.e. protected and unprotected groups should have equal true positive rates) or equalised odds (i.e. protected and unprotected groups should have both equal true positive rates and equal false positive rates) (Mehrabi et al., 2021). However, these measurable metrics, grounded in WSK, raise several concerns: whether existing fairness metrics are sufficient to develop fair and ethical AI systems for Indigenous peoples, and whether it is appropriate to group all minority and marginalised groups together when discussing fairness or bias in AI, as most of the current literature does.
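The two metrics named above can be computed directly from a model's predictions. The sketch below is illustrative only: the group labels "A" and "B", the toy predictions, and the function names are hypothetical, and reporting the equalised odds gap as the larger of the true positive rate and false positive rate gaps is one common convention rather than the only one. It also makes visible the limitation the text raises: both metrics require collapsing people into a pre-defined protected group and a reference group.

```python
def rates(y_true, y_pred):
    """Return (true positive rate, false positive rate) for binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    tpr = tp / (tp + fn) if tp + fn else float("nan")
    fpr = fp / (fp + tn) if fp + tn else float("nan")
    return tpr, fpr


def fairness_gaps(y_true, y_pred, groups, protected, reference):
    """Equal opportunity gap and equalised odds gap between two groups.

    Equal opportunity compares true positive rates only; equalised odds
    (summarised here as the larger gap) also compares false positive rates.
    """
    def subset(g):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        return [y_true[i] for i in idx], [y_pred[i] for i in idx]

    tpr_p, fpr_p = rates(*subset(protected))
    tpr_r, fpr_r = rates(*subset(reference))
    eo_gap = abs(tpr_p - tpr_r)
    eodds_gap = max(abs(tpr_p - tpr_r), abs(fpr_p - fpr_r))
    return eo_gap, eodds_gap


# Hypothetical toy data: four records for group "A", four for group "B".
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 1]
groups = ["A"] * 4 + ["B"] * 4
eo_gap, eodds_gap = fairness_gaps(y_true, y_pred, groups, protected="B", reference="A")
```

Here group "A" has a true positive rate of 1.0 and a false positive rate of 0.0, while group "B" has 0.5 and 1.0, so the equal opportunity gap is 0.5 and the equalised odds gap is 1.0. Neither number says anything about cultural context, which is the gap Chugh (2021) and others point to.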

Litts et al. (2021) explored another dimension of the lack of cultural appreciation in AI by studying the cultural biases embedded in computing tools that hinder the participation and creativity of Indigenous youth in computer science and, more broadly, in AI. Using the programming platform ARIS, they conducted a workshop with Native American students to explore how programming platforms could support storytelling. The authors highlighted the cultural bias of such computing tools by showing how stories with linear narratives were more easily created using if/else statements, whereas students telling nonlinear stories had difficulty translating their work into the programming tool. The lack of cultural appreciation was further raised as a concern by Lewis et al. (2020), who asked: ‘How do we (Indigenous peoples) undertake the challenging work of more consciously translating our cultural values into computational concepts that can then be implemented in code?’ These limitations of programming platforms, which constrain the creativity of Indigenous youth at an early stepping stone towards AI development, may relate to a broader issue discussed by Henriksen et al. (2022), who argue that Western epistemologies inform AI development. Henriksen et al. (2022) argue that AI excludes non-Western and Indigenous knowledge and creativity and is driven by Eurocentric ideologies that support individualism, Western sensibilities, and anthropocentric understandings of the world. The authors note that future advances in AI could blur what is traditionally considered ‘human’ and ‘non-human’ and raise questions about AI consciousness. Given these uncertainties and the potential impact of AI on society, Henriksen et al. (2022) stress the importance of the ethical design of technology that considers different understandings of the world, including non-Western and Indigenous knowledge.

Recognising Indigenous knowledge through AI

Some articles advocate for recognising broader worldviews, including IKS, in AI design, underscoring the need for a more inclusive approach (Henriksen et al., 2022; Lewis et al., 2020; Steen, 2022).

In her early work, Masinde (2015) emphasises combining IKS and WSK in AI development, proposing a drought prediction system for sub-Saharan Africa that merges traditional insights with modern forecasting methods to increase community trust and relevance. Abdilla (2017) also integrated IKS with technology, developing culturally relevant robotics content for Indigenous youth and exploring how IKS can shape future AI and robotics. Abdilla (2017) raises an important question in her work that inspired several other studies (which we discuss next): ‘How might we create a space for Indigenous Knowledge Systems and Pattern Thinking to impact and influence future developments in, for example, autonomous systems in robotics and artificial intelligence (AI)?’

Lewis et al. (2020) developed Indigenous-centred AI guidelines with Indigenous communities (see Figure 8). Through the principle ‘Relationality and Reciprocity’, they emphasise that Indigenous knowledge often centres on relationships; therefore, AI systems should be constructed with an understanding of the interconnectedness between humans and non-humans. Furthermore, they point out that AI systems are themselves part of these interconnected relationships. The role and position of AI systems within these relationships will vary according to specific communities and their established practices for recognising, respecting, and integrating new elements into these relationships. Lewis et al. (2020) emphasise that these community-specific values and understandings of the world must be considered in AI design. Building on Lewis et al. (2020), several works (Sawhney, 2022; Shedlock and Hudson, 2022; Steen, 2022) explore the role of IKS in crafting ‘ethical AI’ systems. Shedlock and Hudson (2022) highlight the contribution of Mātauranga Māori (i.e. Māori knowledge) to reducing AI biases and offer a model based on Mātauranga Māori for the stages of AI development, emphasising harmony, accountability, and user engagement. They discuss Mātauranga Māori’s view of an interconnected world, which contrasts with Western AI ideologies.

Steen (2022), along with Shedlock and Hudson (2022) and Henriksen et al. (2022), challenges the Eurocentric ideologies that drive AI development and underscores the significance of Indigenous philosophies from regions such as sub-Saharan Africa, Australia, the Americas, and Asia in shaping ethical AI. Steen highlights the relational approach of the Ubuntu philosophy and its potential to inform a more socially oriented approach to AI development. Steen (2022) also asks how Westerners can best collaborate with and learn from Indigenous peoples, emphasising the need for equal respect for Indigenous knowledge in AI development. Additionally, Sawhney (2022) extends this discussion to Urban AI systems, suggesting that Indigenous learnings can inform the ethical development of Urban AI by taking into account socio-cultural contexts and long-term impacts on various stakeholders, including the environment.

Discussion

Our review categorises the literature into four main themes, as illustrated in Figure 9. These themes, represented by coloured circles sized in proportion to the volume of literature, range from AI’s role in preserving IKS and revitalising languages to addressing Indigenous peoples’ needs and challenges in sectors such as healthcare, education, environment, and tourism. Recent literature, emerging since 2020, has begun to focus on concerns about AI, particularly three key issues: concerns over Indigenous data, unfair decision-making by AI systems, and cultural bias in AI systems and computing tools that hinders Indigenous participation in AI. However, the literature on recognising IKS through AI is limited, with notable foundational works by Abdilla (2017) and Lewis et al. (2020). A key topic in this category is using IKS to inform and enrich the ethical design of AI systems.

Four overlapping categories we identified during the systematic review discussing the interaction between Indigenous Knowledge Systems and artificial intelligence (AI). The circle size is an approximation of the volume of literature we found under each category.

Figure 9 uses a Venn diagram to show the interconnectedness of the four themes. For example, developing AI-based systems for Indigenous language preservation involves multiple themes: it relates not only to the preservation and awareness of IKS and to addressing challenges faced by Indigenous peoples but also to concerns and problems associated with AI, such as Indigenous Data Sovereignty. Moreover, these systems should be developed with recognition of IKS rather than being driven solely by WSK, which ties into the theme of recognising IKS through AI. However, most existing literature focuses on a single aspect, with few works exploring multiple intersections. The position paper on ‘Indigenous protocol and artificial intelligence’ by Lewis et al. (2020) stands out as the only paper that comprehensively addresses the interconnectedness of these themes. We advocate for more cross-thematic research that integrates problem-solving approaches with Indigenous concerns and IKS in AI development. Future research should span all four themes. More literature is also needed on concerns about AI from an Indigenous perspective and on recognising IKS through AI. There is also a lack of literature on the commensurability of AI and IKS, and on situating AI in the context of IKS. Much of this writing is found in the grey literature rather than in peer-reviewed publications. This may be due to issues of accessibility, cultural safety, and institutional appropriateness for First Nations in higher education.

Future directions

We highlight recent advances in AI, particularly in ‘Generative AI’, a field that produces new content (e.g. text, images, audio, and videos) from patterns in learned data. Generative AI, which has gained prominence since 2022, is reshaping industries and everyday social relations and brings the promise of adaptive modes of communication, innovation, and interaction. However, it requires greater transparency and regulation, and decolonial, Indigenous-centred critique must be embedded in it to avoid the risks of ongoing colonisation and oppression. Our search, covering 2012 to 2023, found no literature on the intersection of generative AI and IKS, likely because of its recent emergence. It is evident, though, that generative AI’s capability to create content raises a number of challenges related to IKS. The automated generation of Indigenous artwork is one of many examples. How are the principles of Indigenous Data Sovereignty, including consent processes, to be applied? Are these AI generations culturally appropriate and safe? How do we ensure that AI systems respect the nuances of the different traditions and cultures of Indigenous communities? Can such generations, in fact, help preserve the richness of Indigenous cultures worldwide, and do the benefits outweigh the risks? Ghosh et al. (2024) expose potential biases of generative AI towards non-Indigenous, non-Western societies through a decolonial lens; however, no comparable work on Indigenous communities existed at the time of writing. Several previous works (Abdilla, 2017; Lewis et al., 2020; Shedlock and Hudson, 2022; Steen, 2022) have discussed the importance of Indigenous peoples steering AI development to inform its ethics, and the importance of involving diverse experts in AI development. Mohamed et al. (2020) argue that a decolonial lens, which reveals and responds to the historical and ongoing processes of colonising power, should be applied to AI. This involves political community-building and bringing decolonial critique into technical practice through documentation, design, and dialogue. We advocate integrating these insights into emerging generative AI research. If AI is a vast commensuration exercise (Jaton and Sormani, 2023), we must attend to the social processes and power relations that allow Indigenous Knowledge concepts to be compared and made legible using a common metric (Espeland and Stevens, 1998). In the period of our study, there was no literature attending to the processes of commensurating IKS through AI. More research is needed to explore potential incommensurability and the epistemic violence that can occur when it is presumed that AI can establish equivalence across knowledge systems. It is important to highlight what is lost through AI processes and the material and symbolic consequences of this loss, particularly when it involves sacred knowledge to which certain Indigenous nations rightly control access (Masoni, 2017). Another aspect missing from the current academic discourse is the connection between the computational power requirements of AI and land grabbing and dispossession from Indigenous peoples. Given the sizable energy requirements of AI models (Chowdhury et al., 2025) and existing practices of Indigenous land dispossession through mineral and energy extraction, we believe this area needs extensive research (Scott, 2025; Spiegel, 2021).

Conclusion

This review discusses the dynamic role of AI in relation to Indigenous peoples and issues, underscoring the need for the academic literature to evolve rapidly to capture both challenges and opportunities while applying a critical lens. The literature reveals benefits and opportunities for Indigenous peoples through AI, yet highlights concerns about AI processes, methodologies, and governance. Our systematic review, spanning 2012 to 2023, examined 53 articles to understand AI’s global impact on Indigenous peoples. From these, we proposed a typology to classify existing literature, offering a structured framework for organising and analysing its diverse perspectives and themes. This typology identifies four categories: (i) AI as a tool for the preservation and awareness of IKS, (ii) AI’s application in addressing Indigenous peoples’ challenges, (iii) potential problems and concerns of Indigenous peoples regarding AI, and (iv) the recognition and integration of IKS in AI. In light of AI’s rapid progress, we discussed future directions concerning AI’s impact on Indigenous peoples that need urgent attention. AI and IKS meet both in the conceptual realm, involving foundational questions around epistemology and the unique and relational dimensions of different worldviews (ontologies), and in the practical realm, involving questions about applying tools and frameworks that can stem the unchecked development of AI and embed the principles of Indigenous Data Sovereignty. The scholarly literature must bring these strands together to do justice to the challenges ahead.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Australian Research Council’s Discovery Project Grant (DP220101035), University of Melbourne’s FEIT Indigenous Research Grant and the Melbourne Social Equity Institute (MSEI) Seed Grant, with further support from the Centre of Excellence for Children and Families over the Life Course (CE200100025).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.