Abstract

Few studies characterize the diffusion of racist content on fringe social media platforms. We demonstrate how racism spread on Parler, a far-right, un(der)-moderated social media platform, and that a single comment to a racist post increases the likelihood a person will generate and propagate new racist content. We found that racism on Parler was a social “contagion.” Using 50,375 posts from 2018 to 2021 that contained racist remarks, we quantified the spread of racism at the post-to-comment (micro) and user-to-user (macro) levels. Comments on racist posts were 21 times more likely to be racist than comments on non-racist posts. On average, Parler users posted 166% more racist content after an engagement with a racist post. At the post-to-comment level, the spread of racist sentiment is alarming within ethnic subgroups (e.g. anti-Jewish-specific comments were 191 times more likely to appear on “anti-Jewish” posts; anti-Black-specific comments were 227 times more likely to appear on “anti-Black” posts). The spread of racist rhetoric between subgroups is also significant, albeit lower than within subgroups, suggesting a spillover effect. Aggregate user posting patterns also suggest that the spread of racist rhetoric between subgroups is lower, but significant. Our findings therefore suggest interventions should target users actively engaging with racist content. Furthermore, our study provides evidence that Parler served as a gateway platform that radicalized users toward racist content production, underscoring the urgency of intervention. Given the drawbacks of traditional moderation strategies, we propose additional interventions, policies, and structural changes for social media platforms to mitigate racism online.

Introduction

Online racist content is rooted in centuries-old colonial and post-colonial structural inequalities (Daniels, 2013). Longstanding racist narratives and messaging have been reshaped and refashioned into seemingly new racist content on the Internet (Mathew and Prashad, 2000). Racism has been shape shifting over the centuries, is highly malleable, and can have varied targets depending on context. Early scholarship saw the Internet as a liberatory place to playfully experiment with one's identity and take on varied avatars and forms (Turkle, 1999) (e.g. crossing gender and racial lines). However, later research has argued that the Internet codified race and racism into its infrastructure (Daniels, 2013). Critical race scholars have made clear that the role whiteness plays in structuring code and applications remains understudied (Brock, 2020). Because racism can be the norm in many spaces of the Internet, digital segregation and social inequalities are manifested in virtual platforms, sometimes creating altogether new inequalities (Sharma, 2013). For this reason, some scholars see virtual spaces as reconfiguring and updating hate from analog to digital (Munn, 2023a). Moreover, social media and the Internet more broadly are making racist content highly accessible and these digital incarnations, through memes and videos for example, can be alluring to new audiences (Munn, 2023a; Murthy and Sharma, 2019).

In the period after Barack Obama became US president, a so-called colorblind, “post-racial” era emerged in many western countries, wherein “vile, overtly racist discourses and harassment fled to online spaces” (Ortiz, 2021) and sometimes became magnified. For example, online trolls employed flagrant and overt racist language, including frequent use of the N-word on platforms like 4chan and the troll wiki Encyclopedia Dramatica (Phillips, 2015). Trolls purposefully used inflammatory, racist content as “bait” to incense average users on social media (known as “trollbait”) (Phillips, 2015). Racist troll behavior operates on disliking individuals, groups, ideas, and movements. Disliking things, people, and groups online is performative and can feel like a claim to superiority by the disliker (Gray, 2021). Though some racist trolling is done for baiting, getting attention, and as a “joke” (i.e. for the “lulz,” what trolls call content that is perceived as funny or triggers repeated laughter), it has real and sometimes major impacts on social media users who are targeted or inadvertently exposed (Phillips, 2015). Moreover, racist meme art from fringe platforms like 4chan and even mainstream image sharing platforms can cross into popular media like Fox News and Sky News, thereby spreading “toxic” discourse (Phillips, 2015). Political elites also amplify racist content, which can polarize and foster increased discrimination online and offline (Irfan et al., 2020). Though there is a rich scholarship characterizing racism, much less has been done to document the effects of racism online, which, over time, cumulatively affects racial minorities (such as stress and changes to their own digital practices to actively avoid racist content) (Brock, 2020).

As Brock (2020) argues, our interfaces to the Internet, from browsers to platforms, “perform racial ideology,” which reinforces whiteness as the norm. Therefore, there is a critical need to study specific platforms and their roles in maintaining, producing, and promoting racist ideologies, content, and behaviors. We chose the niche, right-wing social media platform Parler as a case study due to its lack of content moderation and its hosting of extreme, racist content (Mendoza and Linderman, 2021; Munn, 2021; Paczkowski and Mac, 2022). Parler attracted users who had previously been de-platformed from mainstream spaces such as Facebook and Twitter due to heightened content moderation policies, including individuals with ties to white supremacist groups (Hitkul et al., 2021; Nicas and Alba, 2021b). Many participating in the 2021 United States Capitol attack were Parler users and members of white supremacist groups (Thompson and Fischer, 2021). White supremacist ideologies are rooted in “white loss” (i.e. the feeling that white males have been “robbed” of/“lost” their previous privilege (Fine et al., 1997)), which engendered disenchantment, rage, and mobilization, emotions that Parler capitalized on (Munn, 2023a). Moreover, racist misinformation was abundantly employed on Parler to recruit and promote the Capitol attack (Thompson and Fischer, 2021).

Given the dangers of un(der)moderated racist speech, we sought to characterize how racist content spreads on under-moderated platforms through a case study of Parler. We first explore the micro-level spread of racism occurring in singular interactions when users comment on racist posts. While each interaction may seem minor, previous work has found aggregating interactions on social media platforms can help discern larger scale social formations (Murthy et al., 2021). Therefore, these encounters we studied on Parler collectively form the basis for cascading effects in racist content proliferation. We therefore seek to answer the following research question (RQ):

Building on this understanding of micro-level contagion, we examine how these interactions influence aggregate user posting behavior. Rather than tracking how far individual instances of racism spread (as previous research has done), we investigate how users progress from echoing racist content in comments to actively creating it. Thus, we ask:

Finally, given racism's malleable nature, we explore how racist discourse transforms through both micro-level and macro-level processes. Given limited research quantifying how racist content toward one group affects others, we choose specifically to explore:

To answer these questions, we analyzed 44,350,815 comments and 50,375 posts created by 3,538,899 Parler users between August 2018 and January 2021. We applied correlation analysis between users’ engagement(s) with racist posts and their own posts with racist language to better understand what factors facilitated the broad distribution of racist speech across the network (macro-level). We then utilized contagion-based methods (Kwon and Gruzd, 2017) to better contextualize how racism spreads at the micro-level (i.e. comments and reposts fostered by parent posts).

Background

Race, racism, and digital technology

Racism on social media is not “a glitch but [is] part of the signal” of the Internet (Nakamura, 2013). There is ultimately a default to whiteness in online spaces, which originates from white geek masculinities endemic to early Internet forums (Kendall, 2000) and tech entrepreneurship (Mellström et al., 2023). Nakamura and Chow-White (2012) succinctly state: “no matter how ‘digital’ we become, the continuing problem of social inequality along racial lines persists.” Misogyny and racism online (e.g. attacks on social media against anti-racist activists) have become ubiquitous on many platforms and spaces (Ng, 2022). This is a reflection of pre-Internet articulations and negotiations of race and racism. Omi and Winant (2008a, 270) define race as “a concept which signifies and symbolizes social conflicts and interests by referring to different types of human bodies.” A “racial formation” and the racism that raced bodies experience are part of “sociohistorical process[es]” that are not fluid, but involve representation, creation, and destruction that is undergirded by hegemony and power and intersects with other forms of marginalization based on difference and inequality (e.g. sexism) (Omi and Winant, 2008a). Simply put, micro- and macro-discussions of racism are affected by persistent structural inequalities (Omi and Winant, 2008b), and this is the same on social media or non-technologically mediated spaces. Noble (2018) gives the example of how she searched for “black girls” on Google and received results which indicated that black girls “were still the fodder of porn sites, dehumanizing them as commodities, as products and as objects of sexual gratification.” Noble argues that these types of search results connect with sociohistorical racial tropes “as old and endemic to the United States as the history of the country itself” (Noble, 2018).
Previous work found that facial recognition classifiers performed better for lighter skinned males, confirming gender and phenotypic biases in commercial computer vision systems (Buolamwini and Gebru, 2018; Chun, 2021). This has led to cases of mistaken identity and the wrong people being sent to jail (Gebru, 2020). In the case of large language models (LLMs), ChatGPT was found to have racial bias in treatment advice, recommending white patients “with superior and immediate treatments” (Yang et al., 2024). Beyond racial bias, ChatGPT was also found to perpetuate caste and religious bias (Khandelwal et al., 2023).

The social construction of racial categories (e.g. the monolithic category “Asian American”) perpetuated a model minority myth, which left already vulnerable Asian Americans more marginalized, and this has knock-on effects in social media (Senft and Noble, 2013). For example, centuries-old racial stereotypes make race a powerful social differentiator online, affecting dating preferences, racist articulations, and anti-racist responses (Senft and Noble, 2013). Anti-Asian and anti-Chinese racial stereotypes manifested in online multiplayer games echo earlier racial tropes (e.g. “they ‘all look the same’ because they all are the same” (Nakamura, 2009)), dehumanizing Asians as “interchangeable and replaceable” (Nakamura, 2009). Racism on social media platforms is therefore very much “sociohistorical,” as in pre-Internet racism. Even simple, everyday online experiences can expose individuals to racist content. For example, Chun (2021) found that Google's algorithms permitted and, in some cases, suggested racist content for potential advertisers to use in campaigns. Online racism shapes the identities of racialized Internet users (Brock, 2020), and identifying, tracing, and rendering visible this content remains important. Indeed, racism, as a social formation, is, following Althusser and Balibar (cited by Hall, 1980), “composed of a number of instances—each with a degree of ‘relative autonomy’ from one another.” Some of these instances are happening on social media platforms and thereby re-articulating racialized social formations. Moreover, the “policies and design choices” (Massanari, 2019) of certain social media platforms have been found to enable hostile social formations.
For example, Massanari (2017) found that Reddit's cultural practices and design “implicitly allows anti-feminist and racist activist communities to take hold.” In particular, Reddit's karma points, which are gained by receiving upvotes on posts and comments, can incentivize “toxic technocultures” (Massanari, 2017) such as dog whistle sexism and racism. In the case of Rumble, the right-wing video sharing platform, its YouTube-like format places a video at the forefront and encourages users to comment. Munn (2023b) found that Rumble's structure facilitates “surface” (i.e. moderate, visible, and accessible) racist and transphobic video content which attracts “sublevel” (more explicit but not immediately accessible) comments. Both Reddit and Rumble are highly accessible and incentivize and elicit “sublevel” racist content. While moderation exists on Reddit and problematic content can be reported, Rumble is considered under-moderated (Le Monde, 2022; Zimdars, 2024).

Early research exploring “cyber-racism” (including racial hate, aggression, and prejudice) relied on qualitative analyses of textual data. This work encountered difficulties in quantifying how the Internet helped mobilize racist individuals as well as propagate racist content online (Bliuc et al., 2018). Newer, AI-based computational approaches seek to detect, monitor, and analyze racist content on social media. One popular computational approach focuses on the use of machine learning (ML), typically supervised, multi-class classification algorithms (Istaiteh et al., 2020). Malmasi and Zampieri (2017) employed a linear support vector machine (SVM) classifier and character 4-gram model to distinguish between hate speech, profanity, and other texts, reporting a model accuracy of 78%. Vidgen and Yasseri (2020) built upon that classification approach by constructing a multi-class SVM classifier to detect Islamophobic content on social media platforms. ML classification algorithms provide effective tooling to detect, monitor, and analyze the spread of racist speech across social media platforms. While ML-based approaches are robust in natural language processing capabilities, they leave gaps regarding the influence of content structure (post content, comments, and hashtags) on the spread of discrimination-based hateful speech.

Contagion of racism

The spread of ideology-based misinformation, conspiracy theories, and racialized speech on social media platforms is partially underpinned by the tendency for humans to “catch” others’ emotions. Hatfield et al. (1993) first posited that emotions are transferred between people in social contexts ranging from the one-to-one interpersonal level to large groups. This helps contextualize how the diffusion of emotions can foster other contagious ideas. Otterbacher et al. (2017) demonstrated that people engage in linguistic matching on social media. Chartrand and Bargh (1999) found that similarity between two people can initiate measurable changes in behavior where individuals passively alter their behaviors to match the current social environment (termed the “chameleon effect”). Like many evolutionary-based behaviors, these mechanisms serve a crucial social function that is generally pro-social and adaptive (Hatfield et al., 1993). However, modeling aggression, rewarding aggression, and failing to intervene increases subsequent aggression (Bandura et al., 2022).

These theories also apply to human communication online. On social media, these types of pro-social behaviors play a substantial role in the contagion of ideas. Instead of relying on non-verbal cues, users inadvertently learn from cues embedded within a text-based medium—such as syntax and tone. Gonzales et al. (2010) employed a linguistic style matching algorithm to predict group cohesiveness between discussions occurring face-to-face and via text-based computer-mediated conversations. Their findings demonstrate the effectiveness of pure language to predict change in social psychological factors, ultimately supporting the presence of mimicry in computer-mediated interactions. The existence of imitation, conformity, pressures to fit in, and the chameleon effect on social media platforms can inadvertently create racist echo chambers as unsuspecting users are unconsciously roped into echoing hateful speech to “fit in” with the network. These subtle interactions can rapidly transform into a movement of people echoing hateful speech and spreading this content across social platforms. Of note, this outcome goes against what would be expected by the popular “Spiral of Silence” theory, which posits that an individual whose beliefs would go against the grain, consciously suppresses non-conforming beliefs to avoid social isolation (Noelle-Neumann, 2006). Rather, online, the opposite can hold true with the majority, who have more tolerant or centrist beliefs, jettisoning them to conform with more extreme positions. For example, empirical work with Facebook posts found that a vocal minority of racist posters did not fear the social isolation predicted by Spiral of Silence, but, rather they “felt comfortable expressing unpopular views” (Chaudhry and Gruzd, 2020).

Diffusion-based methods have been used to study how content spreads across social media networks and provide insights into hate speech online. On Gab, another minimally-moderated platform similar to Parler, hateful posts were found to spread faster and further, and networks of users creating hateful posts were more densely connected with one another (Mathew et al., 2019). A longitudinal analysis of tweets determined that users exposed to hateful content were more likely to create more hateful content in their own posts (He et al., 2021). On the Chinese social media platform Weibo, networks of posts that contained profanity were found to propagate significantly faster than those that did not, especially in the first iteration (i.e. moving directly from the originating post to neighbors) (Song et al., 2021). Vosoughi et al. (2018) examined the differential diffusion of all the verified news stories distributed on Twitter from 2006 to 2017 and found that misinformative political content often traveled faster, deeper, and more broadly throughout the network than truthful content.

On a micro-level, contagion-based methods provide insights into the relationship between posted content and comments on the user level. Kwon and Gruzd (2017) employed a comprehensive dictionary of English swear words and their abbreviations alongside contagion-based methods to examine the swearing behavior of commenters on YouTube. They concluded that the presence and intensity of swearing in the parent comment increased the probability and frequency of swearing in the child comment (Kwon and Gruzd, 2017). By considering relations between post and subsequent commenting behavior, Song et al. (2022) found that peer-mimicry is the largest driver of contagion, with generalized reciprocity and direct mimicry being less significant drivers.

Parler: an un(der)-moderated niche platform

Social media platforms have mitigated the spread of hateful content by censoring instances of hateful speech that violate terms-of-service, informed by public appeals, policy changes, and political pressure (Hitkul et al., 2021; Leskin, 2020; Meta, 2021; Nicas and Alba, 2021a). In contrast, some niche platforms have opted to avoid content moderation altogether in the name of free speech as an appeal to libertarians and conservatives who were censored on mainstream platforms (Leskin, 2020; Nicas and Alba, 2021a). For example, Parler embraced an ultra-libertarian ideology and advertised itself as “the world's premier free speech social media platform.” Parler's application of minimal moderation provided a space for racist language, dangerous conspiracy theories, and political misinformation to circulate. Specifically, Parler was found to have a higher volume of Q-Anon-related content than Gab and Twitter (Sipka et al., 2021), and Parler harbored an abundance of misinformation related to the Black Lives Matter movement (Norton et al., 2023). Parler has high rates of suspected bot activity which exert disproportionate influence and further radicalize users (Venkatesh et al., 2024). Though Twitter users generally denounced participants of the 2021 U.S. Capitol Attack, Parler had a strong conservative narrative supporting the attackers, which was centered around hate and misinformation (Hitkul et al., 2021). Despite these dangers of alternative, under-moderated platforms, there remains a dearth of works that specifically study and quantify racism on these platforms.

Methods

We employed computational methods to study comments, post-threads, and user information on Parler separately. Figure 1 highlights the difference between these units of study. The examination of comments and post-threads provided an opportunity to identify how the contagion of racist content occurred on Parler. Section “Comment-level unit contagion” examines both RQ1 and RQ3, section “Post-thread-level unit contagion” examines further evidence for RQ1, and section “User-level contagion” examines RQ2.

Figure 1. Units used for various levels of Parler data analysis.

We utilized a publicly available dataset of 98.5 million posts and 84.5 million comments made by 4.4 million Parler users between August 2018 and January 2021 (Aliapoulios et al., 2021). All comments contained an ID linking them to a parent post. Since the dataset did not contain all content from Parler, comments linked to a parent post not in the dataset were removed. Users with no posts/comments after this trimming were also removed. This yielded a dataset of 44.3 million comments and 3.5 million users. Data processing and metric collection was performed using Python on an Oracle Cloud virtual machine.
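The trimming step described above can be sketched in Python, the language the authors report using. This is a minimal illustration rather than the authors' pipeline: the record layout (dicts with `id`, `user`, and `parent` keys) and the function name `trim_dataset` are hypothetical, and the sketch assumes every post in the dataset is retained.

```python
def trim_dataset(posts, comments):
    """Drop orphaned comments, then keep only users with remaining content.

    posts:    list of dicts with hypothetical keys "id" and "user"
    comments: list of dicts with hypothetical keys "id", "user", "parent"
    """
    post_ids = {p["id"] for p in posts}
    # Keep only comments whose parent post is present in the dataset.
    kept_comments = [c for c in comments if c["parent"] in post_ids]
    # A user is retained if they authored at least one remaining post or comment.
    active_users = {p["user"] for p in posts} | {c["user"] for c in kept_comments}
    return kept_comments, active_users


# Toy records for illustration only (not Parler data).
posts = [{"id": "p1", "user": "alice"}]
comments = [
    {"id": "c1", "user": "bob", "parent": "p1"},    # parent present: kept
    {"id": "c2", "user": "carol", "parent": "p9"},  # parent missing: removed
]
kept, users = trim_dataset(posts, comments)
```

In this toy run, the comment pointing at the missing post `p9` is dropped, and its author no longer counts as an active user.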

We utilized a dictionary-based approach to detect racist sentiment, meaning we classified racist posts based on the presence of specific predetermined terms. We observed word-level contagion to quantify racism saturation by dictionary match frequency. Like Tulkens et al. (2016), our dictionary of racist terms came from both inductive and deductive methods which started from a small corpus of racist terms and was expanded by manually reviewing neighboring posts, comments, and terms. The selected corpus was narrowed to frequently posted terms, and then collapsed into categories encompassing anti-Black, anti-Asian, anti-Muslim, and anti-Jewish content. Our dictionary therefore captures racist sentiments that persistently circulated on Parler. The full dictionary contains 33 terms and is available in Supplementary Materials. The anti-Asian category contains a significant number of terms related to COVID-19 since the dataset includes posts that are concentrated around 2020. Table 1 details category definitions, and Table 2 highlights example posts labeled with racist content.

Table 1. Racism categorization framework.

Table 2. Example posts labeled with racist content.
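The dictionary-based labeling described above can be illustrated with a short Python sketch. It is an assumption-laden example, not the authors' implementation: the category names and terms below are neutral placeholders (the actual 33-term dictionary is in the Supplementary Materials), and the helper names `compile_patterns` and `count_matches` are hypothetical. Matching is case-insensitive and bounded at word edges, so multi-word terms are supported while longer words that merely contain a term are not counted.

```python
import re

# Placeholder dictionary: stand-in terms only, NOT the study's dictionary.
DICTIONARY = {
    "category_a": ["placeholder one", "alphaterm"],
    "category_b": ["betaterm"],
}


def compile_patterns(dictionary):
    """Build one case-insensitive, word-bounded regex per category."""
    patterns = {}
    for category, terms in dictionary.items():
        alternation = "|".join(re.escape(term) for term in terms)
        patterns[category] = re.compile(r"\b(?:" + alternation + r")\b", re.IGNORECASE)
    return patterns


def count_matches(text, patterns):
    """Return the number of dictionary matches per category for one message."""
    return {category: len(pattern.findall(text)) for category, pattern in patterns.items()}


PATTERNS = compile_patterns(DICTIONARY)
counts = count_matches("An alphaterm and a Placeholder One, then alphaterm.", PATTERNS)
# A message is flagged if any category has at least one match.
is_flagged = any(n > 0 for n in counts.values())
```

Counting matches rather than returning a single boolean keeps both quantities the text relies on: presence (for binary coding) and match frequency (for saturation).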

Comment-level unit contagion

For all comments represented in our dataset (N = 44,350,815), racism was measured in both the comment itself and the linked parent post. Two additional control attributes were measured for each comment: message length and message influence. For the former, the length of the comment (character count) and its hashtag list (hashtag count) were quantified, since longer comments with more hashtags are more likely to contain racist terms. For the latter, we measured influence as the number of reposts and upvotes on the parent post; this accounted for the possibility that more viral posts were more likely to contain racism.

Extending Kwon and Gruzd's (2017) contagion-based method, we utilized a mixed effect logistic regression to quantify the likelihood of racism occurring in a comment given the racism present in its parent post. Four independent variables were coded to indicate types of racism in the parent post (0 = absent, 1 = present): anti-Black, anti-Asian, anti-Muslim, and anti-Jewish. Comment length, comment hashtag list length, post-reposts, and post-upvotes were measured as controls. The dependent variable was a binary value indicating whether the comment contained racism toward a specific subgroup. We utilized four logistic regression models to measure racism directed toward each subgroup. We created a fifth logistic regression model to consider only general racist content in posts and comments.
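As a notational sketch of the model family described above, each subgroup-specific model can be written as a logistic regression with a random intercept. The symbols are introduced here for illustration only. In particular, the grouping factor $g$ for the random intercept is an assumption, since the text does not state which grouping the mixed-effect term uses.

```latex
\operatorname{logit}\,\Pr(y_i = 1) = \beta_0
  + \beta_1\,\mathrm{Black}_i + \beta_2\,\mathrm{Asian}_i
  + \beta_3\,\mathrm{Muslim}_i + \beta_4\,\mathrm{Jewish}_i
  + \beta_5\,\mathrm{Length}_i + \beta_6\,\mathrm{Hashtags}_i
  + \beta_7\,\mathrm{Reposts}_i + \beta_8\,\mathrm{Upvotes}_i
  + u_{g(i)}, \qquad u_g \sim \mathcal{N}(0, \sigma_u^2)
```

Here $y_i$ indicates whether comment $i$ contains racism toward the model's target subgroup, the four indicator variables describe the parent post, and $\exp(\beta_k)$ is the odds ratio associated with predictor $k$.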

Post-thread-level unit contagion

To analyze contagion within post threads, we first measured the amount of each type of racism present in every post containing at least one racist term (N = 50,375). Next, we measured the frequency of each type of racist comment on the posts, along with the total number of comments on the posts. The post length (a character count) and hashtag list length were utilized as controls. Since post virality could influence the amount of racism in the comment section, the number of post reposts was also measured. We then performed Pearson's R correlation analyses between the collected attributes of the racist posts.
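For readers unfamiliar with the statistic, Pearson's correlation coefficient between two post-level attributes can be computed as in the following sketch. The function name `pearson_r` and the toy vectors are hypothetical, and the example does not reproduce any value from the study.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of cross-products and squared deviations about the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Toy data: racist terms per post vs. racist comments per post (illustrative only).
terms_in_post = [1, 2, 3, 4]
racist_comments = [2, 4, 6, 8]
r = pearson_r(terms_in_post, racist_comments)
```

Because the toy vectors are perfectly linearly related, `r` comes out at 1 (up to floating-point error); real post-level data would yield far weaker values.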

User-level contagion

Users were studied in two groups. To study the trends associated with all Parler users, Group 1 contained all users in our dataset (N = 3,538,899). To study how engagement with racist posts alters subsequent posting patterns, Group 2 contained only users who had ever commented on racist content (N = 57,696).

For every user in Group 1, we first collected the number of comments/posts ever created by the user containing racism. We counted the number of times in which each user created any comment (racist or not racist) on a racist post of another user. Lastly, we conducted a correlation analysis between the number of comments that an individual user published on others’ posts containing racist content against the number of original racist posts/comments created by the same user. This revealed how user engagement with racist content influenced racist content creation.

We also examined the temporal posting patterns of users in Group 2 (N = 57,696) before and after an “initial engagement” with a post containing racist content (i.e. the first time a user comments on a racist post). For each user, we measured the number of racist comments/posts they created before and after “initial engagement,” to quantify how engagement with racist content affects how much racist content users produce. We measured the average relative frequency of users’ racist posting before and after making a comment on a racist post.
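The before/after split around a user's “initial engagement” can be sketched as follows. This is an illustrative assumption about the bookkeeping, not the authors' code: the function name, the tuple layout, and the choice to exclude content stamped exactly at the engagement time are all hypothetical.

```python
def racist_counts_around_first_engagement(user_items, engagement_times):
    """Count a user's racist posts/comments before and after initial engagement.

    user_items:       list of (timestamp, is_racist) for content the user authored
    engagement_times: timestamps at which the user commented on another
                      user's racist post
    Returns (before, after), or None if the user never engaged.
    """
    if not engagement_times:
        return None
    first = min(engagement_times)  # the "initial engagement"
    # Assumption: content stamped exactly at `first` is counted on neither side.
    before = sum(1 for t, racist in user_items if racist and t < first)
    after = sum(1 for t, racist in user_items if racist and t > first)
    return before, after


# Toy timeline with integer timestamps (illustrative only).
items = [(1, True), (2, False), (5, True), (6, True), (3, False)]
result = racist_counts_around_first_engagement(items, [3, 7])
```

In the toy timeline the first engagement is at time 3, so one racist item falls before it and two fall after it.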

Results

Comment level attributes

We created five logistic regression models (see Table 3) and analyzed the influence of racism presence in parent-post, comment length, hashtag list length, upvotes, and reposts on the chance the comment contained racism.

Table 3. Mixed-effect model: predictors that a comment is racist (N = 44,350,815).

Note. OR is the odds ratio. LL OR and UL OR are the lower and upper limits of the 95% confidence interval for the odds ratio.

Comment length noticeably influenced the presence of racism in the comment across all regression models. This is unsurprising given that longer comments increase the opportunity for the presence of racist words. The effect of the number of hashtags was less clear. In some models, more hashtags increased the chance of racism, and in others they decreased it. This is likely partially due to our dictionary's multi-word terms (e.g. “china virus”); multi-word terms cannot appear in hashtag lists (which contain only single words).

Interestingly, across all models, greater upvotes of a parent post reduced the probability of racism in comments. For the aggregate racism model, every 10,000 additional upvotes on a parent post decreased the chance a comment contained racism by 16.3% (p < .001). In contrast, in certain models (generally racist, anti-Black, anti-Muslim), the number of reposts on a post increased the likelihood that the child comment contained racism. In the general racism model, every additional 10,000 reposts increased the chance a comment contained racism by 2.7% (p < .05). For the anti-Jewish and anti-Asian models, reposts were not found to have a significant effect. This demonstrates that frequently reposted posts had a higher chance of containing racist comments, but posts with more upvotes had a lower likelihood of containing racist comments.

We found that comments are far more likely to be racist when they occur on racist posts. While this may be self-evident, our logistic regression model not only quantifies the degree to which this effect occurs, but, importantly, found that it is especially high within ethnic subgroups. Since binary coding was used to label racism present in posts/comments, a model's racism odds ratio equaled the odds of a racist comment occurring on a racist post relative to a non-racist post. Across all five models, this increase was statistically significant. The general racism model (see Model 1 in Table 3) showed that comments were 21.51 times more likely to be racist if they occurred on racist posts compared to non-racist posts (p < .001). For the specific subgroups, this effect was large and significant for racism toward that specific group. The anti-Black model (Table 3, Model 2) demonstrated that comments were 226.90 times more likely to be anti-Black on anti-Black posts (p < .001). The anti-Asian model (Table 3, Model 3) revealed that comments were 35.56 times more likely to be anti-Asian on anti-Asian posts (p < .001). The anti-Muslim model (Table 3, Model 4) determined that comments were 55.59 times more likely to be anti-Muslim on anti-Muslim posts (p < .001). The anti-Jewish model (Table 3, Model 5) showed comments were 190.51 times more likely to be anti-Jewish on anti-Jewish posts (p < .001).

While the spread of racism was strongest within subgroups, we find some spillover effect between different ethnic subgroups, albeit minimal and significantly smaller. Across all subgroup-specific models, the strongest predictor of a comment containing subgroup-specific racism was the parent post containing racism toward the same subgroup. For example, in the anti-Black model, an anti-Black parent post had an odds ratio of 226.90 (p < .001). However, anti-Muslim, anti-Asian, and anti-Jewish terms in the posts all had odds ratios less than 2. This trend holds across all subgroups (see Table 3).

In addition to model creation, we measured the probability of racist posts occurring based solely on data distribution. Figure 2 shows the conditional probability of a racist comment given its occurrence on a racist or non-racist post toward a homogenous group. The distributions in Figure 2 are consistent with the results of our logistic regression.

Figure 2. Probability of a comment being racist.
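The distribution-based check described above amounts to comparing two conditional proportions. The following sketch, using invented counts that are not drawn from the Parler dataset, shows how the conditional probabilities, their ratio, and the corresponding unadjusted odds ratio relate. The function names are hypothetical.

```python
def conditional_probability(racist_comments, total_comments):
    """Estimate P(comment is racist | post type) as a simple proportion."""
    if total_comments <= 0:
        raise ValueError("total_comments must be positive")
    return racist_comments / total_comments


def odds(p):
    """Convert a probability (strictly below 1) to odds."""
    return p / (1.0 - p)


# Hypothetical counts, NOT taken from the study.
p_on_racist = conditional_probability(30, 1_000)   # comments on racist posts
p_on_other = conditional_probability(10, 10_000)   # comments on non-racist posts

risk_ratio = p_on_racist / p_on_other              # ratio of probabilities
odds_ratio = odds(p_on_racist) / odds(p_on_other)  # unadjusted odds ratio
```

With these invented counts the probability ratio is 30, while the odds ratio is slightly larger (about 30.9). The two measures are close when the outcome is rare, but they are not identical, and an unadjusted ratio of this kind ignores the controls used in the regression models.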

Post-thread level attributes

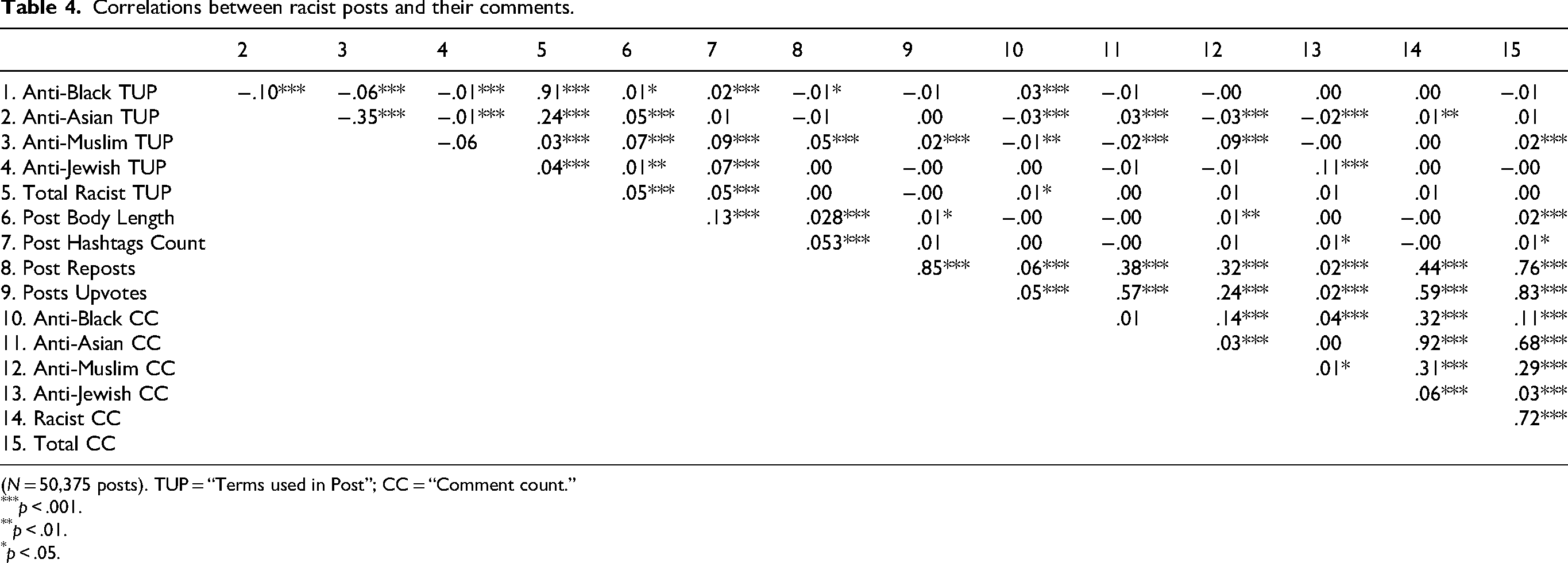

We found that 9163 posts (18.19%) contained anti-Black terms, 30,402 posts (60.35%) contained anti-Asian terms, 10,209 posts (20.27%) contained anti-Muslim terms, and 1045 posts (2.07%) contained anti-Jewish terms. We used Pearson's R correlation analysis between the collected variables across all racist posts in our dataset (N = 50,375 posts). The results are summarized in Table 4.

Table 4. Correlations between racist posts and their comments.

Note. N = 50,375 posts. TUP = “Terms used in Post”; CC = “Comment count.” Significance levels reported: p < .001; p < .01; p < .05.

The number of racist terms targeting a subgroup in a post is significantly and positively correlated with the number of racist comments on the post targeting that same subgroup. However, the correlation is weak, indicating that although there is a significant relationship across groups, the absolute number of racist terms is not always predictive of the number of racist comments. Figure 3 illustrates our correlation results. For anti-Black terms in a post and anti-Black comments, the correlation coefficient was 0.0252 (p < .001). For anti-Asian, anti-Muslim, and anti-Jewish terms, the correlation coefficients were 0.0298 (p < .001), 0.0858 (p < .001), and 0.1122 (p < .001), respectively. Of note, the number of racist terms used in a post had either a negative or insignificant (p > .05) correlation with the number of racist comments on the post for general racist comments or comments with a different target group from the parent. For example, the number of anti-Black terms was not significantly correlated with the number of anti-Asian comments.

Correlation between racist terms in a post and the number of racist comments.
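To make concrete why a correlation this weak can still be highly significant, the sketch below computes Pearson's r from scratch and converts a reported coefficient into a t statistic using the standard relation t = r·sqrt((n − 2)/(1 − r²)). The function names are illustrative assumptions, not the authors' code; the only inputs taken from the text are r = 0.0252 and N = 50,375.

```python
import math

def pearson_r(xs, ys):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def t_from_r(r, n):
    """t statistic (n - 2 degrees of freedom) for testing r against zero."""
    return r * math.sqrt((n - 2) / (1 - r * r))

# Reported anti-Black coefficient and sample size from the text.
r_reported, n_posts = 0.0252, 50_375
t_value = t_from_r(r_reported, n_posts)      # roughly 5.66
variance_explained = r_reported ** 2         # well under 0.1% of variance
print(round(t_value, 2), variance_explained)
```

With a sample this large, a t statistic near 5.7 clears the conventional p < .001 threshold even though the squared coefficient shows that term counts explain a negligible share of the variance in racist comment counts.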

User level attributes

To study user-level attributes, we conducted a Pearson's r correlation analysis between variables across all 3,538,899 Parler users in our dataset (see Figure 4 and Table 5). First, we found that the amount of racist content a user creates is significantly and positively correlated with the amount of racist content that the user replies to (r = .3076, p < .001). Second, across each subgroup, we found that the number of times a user makes racist content against a subgroup is most strongly correlated with the number of times that user engages with racist content targeting the same subgroup. We mapped the best-fit lines for each subgroup (see Figure 5); the number of comments a user makes on racist content is associated with a significantly increased probability of that user posting racist content. Third, we found that the number of racist comments by users targeting a specific subgroup is still positively and significantly correlated with the number of times a user replies to racist posts targeting different subgroups. This suggests that users exposed to racism against one specific group transition into posting racist content against several groups.

Correlation between user-made racist posts and comments on racist posts.

Predicting user-made racist posts or comments based on the number of times a user comments on racist content; shaded region is the 95% confidence interval.

Correlations between user-collected variables (N = 3,538,899 users).

p < .001.

We found that users post more racist content after an engagement with another user's racist content. We used paired sample t-tests to compare the mean amount of racist content posted by Group 2 users (N = 57,696) before and after their first racism engagement point. We found before engagement, a user in this set posted M = 0.3050 racist posts (SD = 3.9026), and after engaging with racism posted M = 0.8127 racist posts (SD = 7.454), a significant (p < .001) increase of 166%. The difference remains significant (p < .001) considering subgroup-specific racism before and after engagement (see Table 6 and Figure 6).
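The 166% figure follows directly from the two reported means, and the before/after comparison can be sketched as a paired t statistic on per-user differences. This is a minimal illustration under stated assumptions: the helper names and the four-user toy sample are hypothetical, and only the two means (0.3050 and 0.8127) come from the text.

```python
import math
from statistics import mean, stdev

def percent_increase(before, after):
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100

def paired_t(before, after):
    """Paired-sample t statistic on per-user differences (after - before)."""
    diffs = [a - b for b, a in zip(before, after)]
    n = len(diffs)
    return mean(diffs) / (stdev(diffs) / math.sqrt(n))

# Reported means for Group 2 users before and after first engagement.
increase = percent_increase(0.3050, 0.8127)
print(round(increase))  # 166

# Hypothetical toy sample of four users (NOT the study's data).
toy_before = [0, 1, 0, 2]
toy_after = [1, 3, 1, 2]
toy_t = paired_t(toy_before, toy_after)
print(toy_t)
```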

Average count of racist posts or comments made by Group 2 users before and after first commenting on a racist post.

Average number of racist posts made by Group 2 users before and after commenting on a racist post (N = 57,696).

p < .001.

Figure 7 illustrates the number of “racism engagement points” (i.e. the number of times a user commented on racist content) before a user posted their own racist content. We start with 6219 racist users before the first engagement point, and the biggest increase in users posting racist content (4763) came after just a single engagement point. After two engagement points, there are only 1576 new racist users, and after three, only 692 new racist users. This suggests that the first engagement point is the most powerful, causing users to actively engage with racist content. However, engagement alone is not sufficient to cause every user to post racist content; after 20 engagement points, only 14,364 of the 57,696 users (25%) had ever posted racist content.

Users who create racist content after increasing engagement points.
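The diminishing returns per engagement point can be checked with a running total of the counts quoted above. The variable names below are illustrative assumptions; all numbers are taken from the text.

```python
from itertools import accumulate

baseline_racist_users = 6219           # already posting before any engagement
new_users_per_point = [4763, 1576, 692]  # new racist users after points 1, 2, 3

# Running total of users who have posted racist content after 0, 1, 2, 3 points.
cumulative = list(accumulate(new_users_per_point, initial=baseline_racist_users))
print(cumulative)  # [6219, 10982, 12558, 13250]

# Share of Group 2 users who ever posted racist content after 20 points.
share_after_20 = 14_364 / 57_696
print(round(share_after_20 * 100, 1))  # 24.9
```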

Discussion

We analyzed the contagion of racism at a post-comment interaction level and at an aggregate user level. There remains a dearth of work studying racist content on niche, fringe platforms such as 4chan, 8chan, Gab, and Gettr. Our approach enables a quantitative analysis of the spread of racist speech on Parler at scale, and our findings provide evidence about the spread of racism online, underscoring the need for policies that curb hateful, racist speech on social platforms.

Principal findings

Our study demonstrated that comments were far more likely to be racist if they were responding to a racist text, even after controlling for virality, popularity, and length. Overall, comments were 21 times more likely to be racist in response to racist content than non-racist content. This illustrates that one mechanism for the spread of contagious racist speech on social media platforms occurs in post-to-comment interactions. Among the subgroups we studied, anti-Black and anti-Jewish posts and comments were the most susceptible to racist contagion, as comments were substantially more likely to include racist content targeting the same subgroup when responding to racist posts (i.e. 227 and 191 times more likely, respectively). Our findings quantify and underscore the stark rate at which racism spreads from posts to comments.

Our findings support previous work which found that racist contagion occurs within text-based interactions (Gonzales et al., 2010; Hancock et al., 2008) and that the fear of social isolation theorized by the Spiral of Silence does not deter a small number of individuals from posting racist content on social media (Chaudhry and Gruzd, 2020). Moreover, our results underscore that contagion is not always adaptive, even when it may technically be prosocial. This spread of racist content from parent posts to child comments can be further explained by the engagement associated with the negative emotional valence evoked by divisive speech inherent to racism on a platform like Parler. We also found that the contagion of racism demonstrates greater subgroup specificity at the micro-level than the macro-level. In our sample, racist speech directed toward one group did not strongly generalize to other groups at the post-to-comment level (i.e. our logistic regressions suggest racist comments are far more likely to appear on racist posts toward that same subgroup than other subgroups). However, at the aggregate user-level, we found that the amount of racist content a user engages with does predict the amount of racist content they produce, even across different groups.

We found that the mere presence of racist content in posts predicted racist comments more than the sheer volume of racist terms. When we focused on racist-only posts, the number of racist terms present in a post was not a significant predictor of the number of comments containing racist terms. Essentially, one racist mention was sufficient to cultivate racist echoes in the comments. In terms of a dose–response to a pathogen, it appears that encountering a single instance of racism is sufficient for susceptible users to actively engage with racist content.

Our results demonstrate that in the case of posts on Parler, racist content is a social contagion. This is also supported by the fact that interaction with racism predicted racist posting patterns. The number of racist posts by an individual was significantly and positively correlated with the number of racist posts with which that individual ultimately engaged, which highlights the highly interconnected nature of racist posters on social media platforms. This is consistent with previous findings by Mathew et al. (2019), who reported that racist users on Gab form networks 20 times as dense as non-racist users. We found that an individual's engagement with others’ racist content heavily predicted that individual's inclusion of racist content in subsequent posts.

Moreover, a single engagement with racist content significantly influenced subsequent posting behaviors of susceptible users (i.e. the most significant increase in new, user-generated racist content occurred after a user first interacted with racist content). Therefore, the contagious nature of racist content combined with the sheer volume of racist posts on Parler made the platform an ideal “radicalization point” for transforming susceptible users into active participants in online racism.

Implications for social media platforms and policy

Our results confirm the findings of previous work, which concluded that Parler served as a migratory platform for de-platformed social media users (Hitkul et al., 2021) as well as a gateway that radicalized users into racist ideologies and worldviews (Munn, 2023a). Our work provides evidence of how under-moderated social media platforms can significantly impact the spread of racism beyond isolated communities (e.g. white nationalists) and into broader populations. Our findings confirm that the Spiral of Silence theory is being negated in the case of racist content on Parler (i.e. a handful of highly racist users do not fear social isolation on the platform and, therefore, do not silence their beliefs and thoughts online). This has implications for platforms and policy: if a Spiral of Silence were present on niche social media, it would provide a protective mechanism that self-silences certain racist voices. Given the lack of such user-level self-censorship, social media platforms and policy makers will need to take active steps to intervene against racist content, rather than expecting users to self-moderate.

Following exposure to and engagement with racist content, Parler users began posting more racist content. Therefore, Parler incubated racist content and facilitated its diffusion. Racist content on Parler ultimately served as a contagion that radicalized users into posting increasingly racist content. Though mainstream platforms like Reddit and Facebook engage in active content moderation practices, alternative platforms like Parler enable the growth of racist content into a platform-level contagion due to under- or non-moderation. There is an urgent need to push social media platforms to adopt design choices and content moderation policies that inhibit the spread of racist content by design.

Our findings support targeted content moderation strategies that identify and filter any amount of racism, rather than tagging posts that exceed a sometimes arbitrary volume of racist terms. This form of content-level moderation could be implemented through user-controlled message filtering (i.e. users choose to filter out any message with racist terms) or platform-initiated removal of racist posts. These types of interventions are seen as protective of democratic institutions (Lukito et al., 2022). However, content moderation has drawbacks (e.g. algorithmic moderation tends to overstate its accuracy) (Gillespie, 2020). We also acknowledge concerns related to the potential of “overblocking” content that is not actually racist, leading to the disproportionate policing of language used by certain groups (Gorwa et al., 2020; Sap et al., 2019). Moreover, racism, misogyny, transphobia and other forms of hate are highly context sensitive (e.g. historical, sociocultural, platform culture, etc.) and can be coded (Gerrard and Thornham, 2020; Ging et al., 2020). Part of racism's complexity involves its manifestation in “mundane” life; there is “rampant racism at the micro level [and the] mundane nature of this talk that makes it so compelling” (Myers and Williamson, 2001). These aspects complicate automated intervention efforts, especially if racist content prima facie seems “palatable” or “mundane.” Therefore, algorithms cannot completely replace common sense, discernment, and ultimately human judgment, necessitating some level of human-in-the-loop moderation. Given that a major allure of Parler is its “free speech” focus, content moderation may not be feasible. If heavily moderated, users would likely turn to other, less moderated platforms.
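The contrast between flagging any dictionary match and flagging only posts above a volume threshold can be sketched as follows. This is a minimal illustration under stated assumptions: the lexicon holds placeholder tokens rather than real terms, the function names are hypothetical, and a word-level match of this kind inherits the overblocking and context-insensitivity concerns discussed above.

```python
import re

# Placeholder tokens standing in for a real lexicon (hypothetical).
LEXICON = {"slur_a", "slur_b"}

def count_lexicon_terms(text, lexicon=LEXICON):
    """Count tokens in `text` that appear in the lexicon (case-insensitive)."""
    tokens = re.findall(r"[a-z0-9_']+", text.lower())
    return sum(1 for token in tokens if token in lexicon)

def flag_any(text, lexicon=LEXICON):
    """Flag a post if it contains at least one lexicon term."""
    return count_lexicon_terms(text, lexicon) >= 1

def flag_by_volume(text, threshold, lexicon=LEXICON):
    """Flag a post only if it contains `threshold` or more lexicon terms."""
    return count_lexicon_terms(text, lexicon) >= threshold

def filter_feed(posts, lexicon=LEXICON):
    """User-controlled filtering: hide every post with any lexicon term."""
    return [post for post in posts if not flag_any(post, lexicon)]

feed = ["hello world", "Slur_B again", "one slur_a here"]
visible = filter_feed(feed)
print(visible)
```

A post with a single match is caught by `flag_any` but passes `flag_by_volume` with a threshold of 3, which is the gap the paragraph above argues moderation should close.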

Hangartner et al. (2021) suggest that social media platforms could increase the visibility of targeted counterspeech to reduce racism. Employing concomitant counterspeech strategies could be particularly effective for scenarios deemed most likely to cause violence or perpetuate psychological harms. As He et al. (2021) note, in the context of COVID-19-related racism, counterspeech was particularly effective in decreasing the risk that users begin producing racist content. Counterspeech could be especially effective since it does not require platform support and can be created by external actors.

Mainstream and alternative platforms could also encourage the dissemination of “inoculating” content (Lewandowsky and Yesilada, 2021), information that specifically exposes the misleading techniques behind racist misinformation. Lewandowsky and Yesilada (2021) found that exposure to “inoculating” content made users less likely to agree with and share content. In the context of our results, exposure to inoculating content could build users' resilience against misleading techniques and reduce the likelihood that they first engage with racist content, protecting them from being radicalized into racist behavior. Even if platforms do not actively encourage the dissemination of inoculating content, users and other stakeholders (e.g. governmental and non-governmental organizations) should be encouraged to create and disseminate it. As further alternatives to content removal, platforms can also minimize content by showing it on fewer user feeds or demonetize it to reduce incentives for hateful content production (Jardine, 2019).

Our findings also shed light on how racism interacts with social media platform design. Unlike mainstream social media platforms, Parler lacks a ranking algorithm and displays user feeds purely chronologically. This structure should ideally remove some toxicity incentives by not prioritizing content by engagement metrics. However, our results underscore that users still produce and engage with racist content. This suggests the utility of actively incorporating anti-hate mechanisms into the structure of social platforms. Moreover, there are structural changes platforms could adopt to curb hate without chilling free speech. Jahanbakhsh et al. (2021), for example, found that behavioral nudging of users (e.g. forcing a user to check a box confirming their post is accurate) makes them more conscious of what they share, effectively reducing misinformation. This strategy could be applied both on mainstream and alternative, free-speech-focused platforms. Anonymity online can foster an environment for hateful behavior and reducing the anonymity of platforms (e.g. Facebook's policy requiring real names of users) could effectively combat hate (Jardine, 2019). Although alternative platforms are likely unwilling to adopt these policies alone, governments can proactively require platforms to control anonymity (Jardine, 2019).

Even though the above strategies do not curb free speech, a remaining concern is that Parler and similar niche social media lack incentive to change platform policies to encourage counterspeech and build anti-hate mechanisms into infrastructure. Given that counterspeech and inoculating content do not require platform buy-in, one solution could be encouraging more grassroots action, such as active bystander intervention in racist conversation on platforms (Rashawn et al., 2024) or creation of accounts to independently disseminate inoculating content on platforms.

Policies must play an active role in “maximiz[ing] the good and minimiz[ing] the bad” on platforms (National Academies of Sciences, Engineering and Medicine, 2024). This could be realized in a variety of ways: requiring that platforms employ automated moderation, holding platforms accountable for hateful content on their services, or mandating age/identity verification on platforms. In June 2024, the US Surgeon General called for a health warning on social media platforms to increase awareness of risks and dangers, especially among vulnerable populations such as youth. Our results provide evidence that consumption of racist content on platforms may be actively harmful to users and could support even post-level warnings about potential health impacts of engagement with racist content.

Results from our study and related work can be used to embolden and push forward policy action. For example, due to rampant criminal activity on Telegram, prosecutors in France arrested Telegram CEO Pavel Durov for complicity in criminal activity on the platform (Breeden and Satariano, 2024). Parler, in refusing to regulate content, played a similarly complicit role in the spread of hate on social media, affirming the potential need for regulatory action. These proposed policies and strategies have drawbacks and benefits. We do not argue that our results support any of these as better than another. Rather, our results can inform the design of interventions and provide compelling, quantified evidence of the dangers of racism online that policy makers, advocacy groups, and platforms can use to support and push forward changes to curb online racism.

Limitations

The retrospective nature of our study restricted our access to a subsection of Parler made accessible after the site was initially deplatformed (Aliapoulios et al., 2021). Therefore, the representativeness and generalizability of our findings are inconclusive and would benefit from replication. Likewise, our analyses excluded some posts corresponding to comments, which could have directly affected the post- and comment-level analysis. However, we believe that the user-level analysis was relatively unaffected because the post-collection strategy scraped all posts of users in our subset. Additionally, how well Parler represents other alternative, right-wing social media platforms is unclear. Nevertheless, our results still offer insight into fringe platforms with little-to-no content restrictions.

Another limitation is that any dictionary used for racist content detection is not exhaustive and would likely fall short in capturing the highly contextualized, coded, semantic, and euphemistic features that are often present in racist posts online. However, we opted for specificity over sensitivity. We chose terms that almost always signify explicit racism, and though most computational approaches will miss some racist speech in this use case, we are confident that the speech labeled as racist in our dataset was indeed racist. For this study, a limited dictionary does not impact our methods, which primarily analyze word-level contagion. As a result, however, our findings are specific to the spread of overtly racist content. Because much of our analysis was of terms around 2020, there is a large representation of racism specifically related to the COVID-19 pandemic. Therefore, our results, while robust due to minimal noise, are based on algorithms that underestimate the volume of racist language and misrepresent the distribution of racism across subgroups.

Finally, in analyzing user-level phenomena, we characterize a user's first comment on a racist post as a proxy for their first active “engagement” with racism on a platform. Due to the limited availability of comprehensive data on users’ behavior on Parler and other platforms, we cannot ensure that this really is the first engagement. Despite these limitations, our work contributes to characterizing the macro- and micro-level behaviors of users on Parler.

Future directions

Future work could employ LLMs like ChatGPT or nuanced text classifiers to try to capture more racist posts without sacrificing specificity. Context-aware classifiers could contribute more meaningful and complex analyses of how racism spreads. Future work could also potentially address limitations by collecting prospective data from emerging far-right, alternative platforms and building dictionaries attuned to capture the euphemistic, symbolic features inherent in racist posts on social media (often hidden through racist hash tagging, digital blackfacing, and racist emoji use). The potential for niche, right-wing platforms like Parler to radicalize users means future research may benefit from repeating our analysis across other platforms (both alternative-tech like Rumble, Gab, 4chan and mainstream such as Facebook and Twitter) to comparatively examine whether differing platform restrictions, structure, and moderation levels influence the contagion of racism. A systematic review of online racism identified that there remains a dearth of work on how “racists come to cluster ideologically into groups and how those members collectively radicalise” (Bliuc et al., 2018). Recent advances in ML open possibilities for future work to better study such mobilizations across platforms.

Our user-level spillover findings also suggest the benefit of studying the cross-platform spread of racism. This research can specifically explore whether racism diffused to users who migrated to alternative platforms has spill-over effects (if the same users return to more mainstream platforms). As we were unable to explore the impact of viewing racist content (without engagement) on the spread, future research should seek to quantify this. Future work can extend and develop our approach to other, similar platforms to help mitigate the spread of racist content. Finally, there is a dearth of studies exploring social media interventions specifically preventing hate speech. Our study characterizes the dynamics of racism's proliferation on social media that can form the foundation for future intervention-based research (including interventions to prevent the cross-ethnic spillover of racism).

Conclusion

We explored racist content as a contagion on the US-based social media platform Parler and found that racism spread from parent posts to child comments. Users post more racist comments after first engaging with a racist post, particularly if they reciprocated with a racist sentiment in a subsequent comment. Our work helps explain and quantify how racism operates as a contagion on some social media platforms. Moreover, our findings highlight the dangers of un(der)regulated platforms like Parler in propagating racist ideologies and radicalizing users into active propagators of racist content. Our work found a stark shift in users’ behavior after initial engagement with other users’ racist content. Engagement with racist content (i.e. making a comment) predicted that subsequent parent posts created by susceptible users who first commented on other racist posts were more likely to feature racist content compared to those who did not engage with racist posts. We also found a unique spill-over effect occurring between ethnic subgroups. Our results highlight that there is an urgent need for interventions specifically designed to contain the varied ways racist speech spreads on social media platforms and provide evidence that makes interventions more actionable for policy makers and platforms.

Supplemental Material

sj-docx-1-bds-10.1177_20539517251321752 - Supplemental material for Quantifying the spread of racist content on fringe social media: A case study of Parler

Supplemental material, sj-docx-1-bds-10.1177_20539517251321752 for Quantifying the spread of racist content on fringe social media: A case study of Parler by Akaash Kolluri, Dhiraj Murthy and Kami Vinton in Big Data & Society

Footnotes

Acknowledgements

This work was supported by Good Systems, a research Grand Challenge at the University of Texas at Austin. This work was also supported in part by Oracle Cloud credits and related resources provided by the Oracle for Research program. The authors thank Pranav Venkatesh, Nikhil Kolluri, and Kellen Sharp for providing suggestions and feedback on previous versions of this manuscript.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Good Systems, a research Grand Challenge at the University of Texas at Austin.

Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.
