Abstract

New media studies invested in online political conflict, radical and antagonistic subcultures have taken an interest in the affordances that shape memes, vernaculars and online political communication. One often overlooked affordance is the ensemble of social, communication, platform and legal frameworks stipulating what users can and cannot say, which I call “speech affordances.” To explore this concept, I look at the strategic communication of 4chan, Twitter and YouTube subcultures tied to a historical meme, “Kekistan,” often perceived as a key example of the ideological cacophony of the 2015–2017 online “culture wars.” I focus on how 4chan's policy of user anonymity, YouTube's unmoderated comment sections and Twitter's more proactive moderation practices led some influencers to alter the original connotations of the meme into “overt” messages tolerable to Twitter and YouTube out-groups and platform moderation policies. Speech affordances bear methodological implications for historical studies of speech moderation and for the overall mechanisms by which problematic language adapts to spaces with distinct speech norms.

Keywords

This article is a part of special theme on Mapping the Micropolitics of Online Oppositional Subcultures. To see a full list of all articles in this special theme, please click here: https://journals.sagepub.com/page/bds/collections/micropoliticsonlinesubcultures

Introduction

In the field of censorship studies, it is understood that the moderation of public speech “materializes everywhere” as a practice that constitutes and defines the boundaries of public spheres (Post, 1998: 2). Spheres that opt not to rely solely on regulatory means, such as state censorship, seek to maintain or restore public order by modulating the circulation of “extreme” language, that is, speech that transgresses “the boundaries of acceptable norms of public culture” (Pohjonen and Udupa, 2017). Examples include incitement to violence, hate speech and languages ruled improper by historical processes of political and cultural re-orientation, such as denazification (Vincent, 2008: 37), decommunization, or more recently, decolonization. Foundational processes like these seek to drive historical change by countering, marginalizing, obfuscating or destroying language that conjures and normalizes undesired behavior, ideas or beliefs, past or present.

Since the Santa Clara Principles were formalized in 2018, mainstream social media platforms have taken a more resolute approach to implementing these measures into their content moderation practices. But one recurrent observation is that, instead of disappearing from the face of the Web, moderated language tends to be redistributed beyond or

In dialogue with recent contributions from the philosophy of language (Ayala, 2016; Saul, 2019), affordance theory (Bucher and Helmond, 2018) and content moderation studies (Gibson, 2019; Gillespie, 2018; Munn, 2020), this commentary proposes a definition of “speech affordances” as platform content moderation policies, techniques or speech norms practiced within social media. It describes how extreme speech alters and adapts to platforms with distinct speech affordances. As a case study, it describes the formation of an admittedly “dank” (passé) meme of the 2016–2018 online culture wars: Kekistan. Since 2017, Kekistan has generated popular interest as an example of a meme whose original (though somewhat ironic) message of white nationalism was lost in translation as it circulated from 4chan/pol to Twitter and YouTube, and was consumed on the latter platforms as a symbol of anti-identity politics (Tuters, 2019). Though this meme is not an exhaustive example of the phenomena described above, it is sufficient to illustrate how speech affordances affect the usage of more or less problematic language across (online) public spheres. It may also inform, as it should, case studies outside the United States, insofar as speech moderation in any given public sphere struggles with the re-emergence of its historical extremes.

Speech affordances

While much has been said of affordances in general (Bucher and Helmond, 2018), there has been less focus on content moderation policies, techniques and user speech norms as affordances in their own right. One of the closest references to speech affordances originates from Ayala (2016), who discusses the role of social structures in determining one's capacity for self-expression (see “speech capacity” in Ayala, 2016: 1). Their argument is that what one says may bear more or less effect depending on the position one speaks from, as much as the position of the interlocutor one speaks to. Speech relies on “the detection and exploitation” of affordances available within social interaction, which are embedded within a larger social structure and the norms that govern it (Ayala, 2016: 1–4).

Arguably, the materiality of online platforms bears a number of additional features that complicate social interaction. Different platforms shape messages according to different affordances and vernaculars (Gibbs et al., 2015). Through their policies, techniques and user cultures, every platform may be understood as effectively licensing certain forms of speech, which in turn provide more or less favorable conditions for the expression of certain ideas. For the past 10 years, for example, a great deal of research has taken issue with the role of content optimization, one recurrent argument being that more personalized content recommendations contribute to polarized and hateful language (Munn, 2020: 8). Others have taken interest in the role of content moderation, specifically from users, to describe how subreddit community guidelines enable different speech cultures—“free speech” subreddits being more prone to antagonistic language and “safe spaces” containing “higher rates of self-censorship” (Gibson, 2019: 1). Yet others have looked at identity affordances, such as user (pseudo)anonymity, in facilitating the types of extreme language found in 4chan and similar forums (Hagen and Venturini, 2023).

Three types of speech affordances

Given these examples, one could place speech affordances into three broad categories. The first are “high-level” affordances that “enable or constrain […] communicative habits” (Bucher and Helmond, 2018), such as content moderation policies and the local or international legislation they are modeled after. These policies effectively delimit the boundaries of permissible speech in a given platform, though they are dynamic documents whose definitions of objectionable language vary over time, depending, for example, on local legislation and a change of consensus around what language is acceptable. When crossing a platform's policy line (Constine, 2018), suspended users may transit back and forth between more or less moderated spaces, with the latter often being designed to circumvent the speech jurisdictions of the former. This transit forms a dynamic, fringe-to-mainstream social media ecology, where users invested in objectionable content may create their own clandestine affordances (coded language, private accounts, auto-suspensions, redirects) to keep content circulating across these spheres.

The second are “low-level” affordances, which enforce and operationalize content moderation policies. These may be content moderation techniques (demotion, deplatforming, flagging, user reporting), as well as platform features (e.g. ephemeral or self-destructive posts). One could classify them into five broad mechanisms, each aimed at keeping objectionable content from gathering prominence and bearing significant negative effects on a platform's user base and reputation. These are counter-speech (flagging, context labels, re-directs); marginalization (demotion, deactivating post engagement and sharing features, de-listing URLs); obfuscation (partially hiding posts or users from newsfeeds or their profile); embargoes (temporary user and post suspension) and censorship (expulsion or destruction of content through deletion or permanent suspension, colloquially called “deplatforming”). These techniques enforce different punishments based on the different degrees to which content is tolerated. Once content surpasses a platform's policy line, or indeed its margin of tolerance, it may be censored (deplatformed).
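For readers who wish to code moderation events against this typology, the five mechanisms can be written down as a simple lookup table. The following is a minimal sketch rather than an instrument used in this study: the technique labels paraphrase the examples given above, and the names `Mechanism`, `TECHNIQUE_TO_MECHANISM`, `classify` and `techniques_for` are hypothetical.

```python
from enum import Enum


class Mechanism(Enum):
    """The five broad low-level mechanisms named in the text."""
    COUNTER_SPEECH = "counter-speech"
    MARGINALIZATION = "marginalization"
    OBFUSCATION = "obfuscation"
    EMBARGO = "embargo"
    CENSORSHIP = "censorship"


# Illustrative labels only: each key paraphrases a technique listed in the
# paragraph above; a real codebook would need platform-specific definitions.
TECHNIQUE_TO_MECHANISM = {
    "flagging": Mechanism.COUNTER_SPEECH,
    "context label": Mechanism.COUNTER_SPEECH,
    "redirect": Mechanism.COUNTER_SPEECH,
    "demotion": Mechanism.MARGINALIZATION,
    "disabled engagement and sharing": Mechanism.MARGINALIZATION,
    "de-listed URL": Mechanism.MARGINALIZATION,
    "partial hiding from newsfeed or profile": Mechanism.OBFUSCATION,
    "temporary suspension": Mechanism.EMBARGO,
    "deletion": Mechanism.CENSORSHIP,
    "permanent suspension": Mechanism.CENSORSHIP,
}


def classify(technique: str) -> Mechanism:
    """Return the mechanism a technique label belongs to (KeyError if unknown)."""
    return TECHNIQUE_TO_MECHANISM[technique]


def techniques_for(mechanism: Mechanism) -> list[str]:
    """List, alphabetically, the technique labels filed under a mechanism."""
    return sorted(t for t, m in TECHNIQUE_TO_MECHANISM.items() if m is mechanism)
```

A table of this kind keeps the coding decisions explicit, so that a technique filed under one mechanism (say, demotion as marginalization) can be contested or revised without touching the rest of the scheme.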

Finally, users may enforce their own speech norms through community guidelines, subreddit mods and other platform vernaculars. Arguably, 4chan has become the home of extreme speech due to its anonymity affordances, which allow users never to disclose their identities through usernames, post histories or profiles. It could be said that anonymity affords users the freedom to dissociate speech from the moral oversight of public identity (Phillips, 2015: 25). Similarly, the ephemerality of 4chan board feeds (that is, the speed at which user posts appear and disappear in a feed) is described as a factor in the board's constant concoction of conspiratorial narratives, as users weave arbitrary connections in a constant stream of unrelated posts (Tuters et al., 2018).

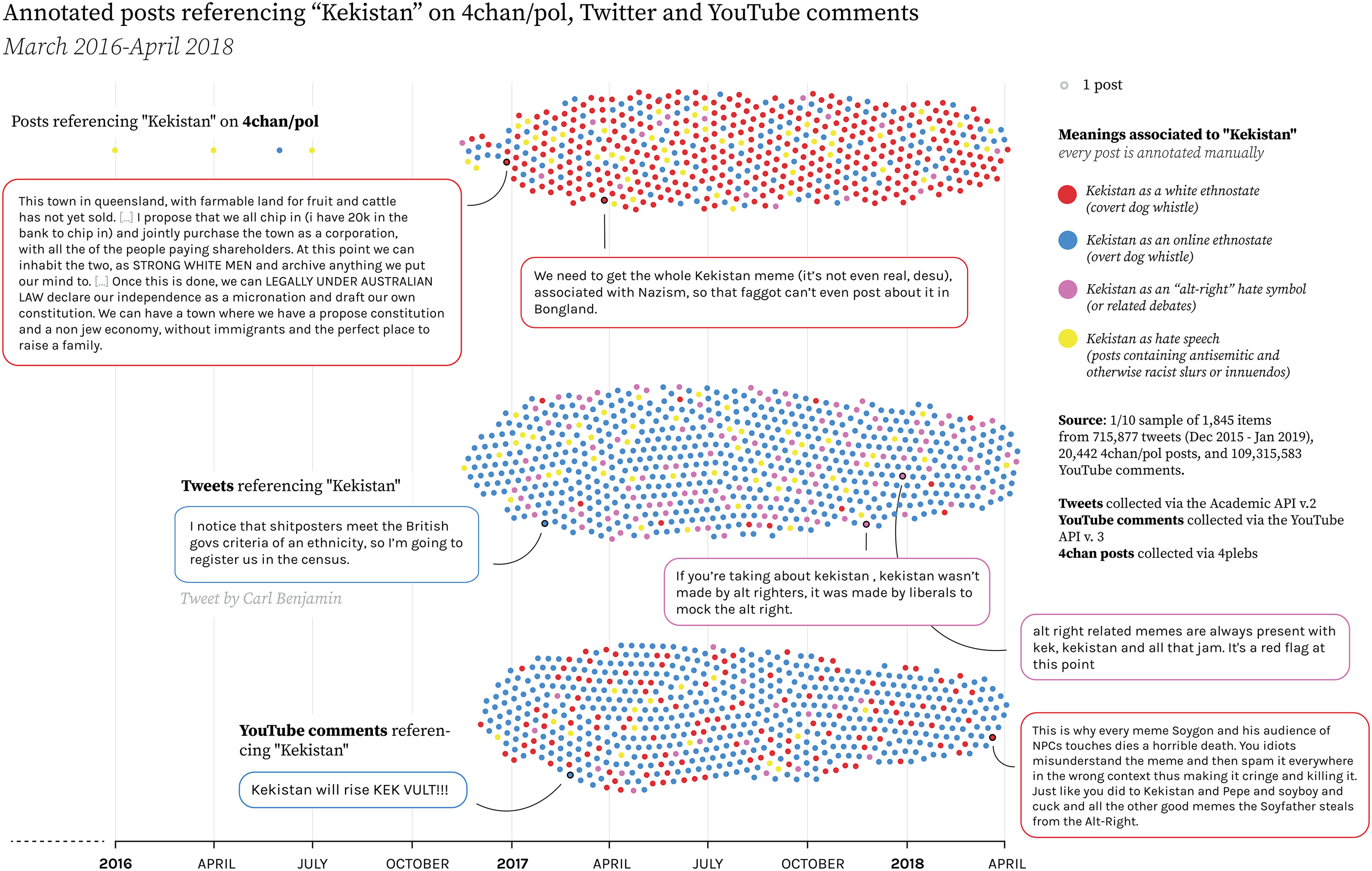

While enforcing content moderation policies, content moderation techniques and user cultures effectively enforce the “explicit and implicit” norms (Ayala, 2016: 3) of what can be said in a given platform. That is, they constitute a “possibility space” for speech agency (Haslanger, 2016: 18). One implication, which I will highlight in the case study that follows, is that instead of being relegated solely to less moderated platforms, objectionable speech is fragmented into different messages that can still be “sayable” in proportion to a platform's normative standards. We will see how the initial “base meaning” of the Kekistan meme, originally formulated on 4chan/pol as an online ethnostate for white nationalism, was progressively fragmented into overt and covert messages tailored to Twitter and YouTube out-groups (Figure 1).

How did speech affordances form the Kekistan meme?

At the time of Kekistan's emergence in 2017–2018, Twitter and YouTube poorly enforced their policies against hateful language, relying mostly on a relatively diverse user base to report it. I first highlight the role of user-based speech moderation and then comment on the aftermath of Twitter and YouTube's updated hate speech policies post-2019.

Kekistan as a white ethnostate

The “Kekistan” meme emerged around 2017 as a fictional nation-state, whose culture, religion and policies were intended to reflect the idiosyncrasies of 4chan/pol's anonymous subcultures. At the heart of their political identity was an interest in far-right political philosophy, particularly American white nationalism, crystallized in an initiative to have “Kekistan” be the name of a private island ruled by eugenic policies and inhabited by shitposters (anon, 2017).

At the time, some proponents of the meme were cognizant of active moderation efforts on other platforms, be it by other platform users (e.g. the community standards of certain subreddits) or by platforms themselves, particularly Twitter (anon, 2018a, 2018b). Combined with 4chan's strong in-group subculture, these factors explain why the meme was not meant to be communicated to or understood by external audiences, and why its original white nationalist connotations were mentioned more often on 4chan than on Twitter and YouTube (Figure 2).

Figure 1. Simplified summary of speech affordances across Twitter, the YouTube comment section and 4chan.

Figure 2. A 1/10 sample of 4chan/pol posts, tweets and YouTube comments mentioning “Kekistan,” coded by theme.

Kekistan as an overt dog whistle

On Twitter in early 2017, British influencer Carl Benjamin noticed the meme and turned it into a practical joke against the British census’ definition of “ethnicity.” Externally, the meme came across as much milder than its original version: it dropped its white nationalist subtext, mutating into an initiative to bait left-wing “social justice warriors” into believing that Kekistan was a hate symbol (with the understanding that falling for this joke is as much of a joke as the concept of ethnic identity).

One reason for this mutation is the platform Benjamin was posting from (Twitter), which was mostly governed by the moral oversight of a wider and more ideologically diverse user base than 4chan/pol. There, the meme adapted into what Saul would call an “overt” dog whistle. That is, it used “ambiguity, implicature, and other non-semantic means” (Saul, 2019: 4) to impart a coded message (that Kekistan is a joke) to a target audience (Benjamin's followers), while communicating another meaning (that it is a hate symbol) to Twitter out-groups. This ambiguity created a vacuum that made both user- and platform-based moderation reluctant to sanction it, which may explain why most tweets stayed online despite making inconspicuous racist provocations (see Figure 2).

Kekistan as a covert dog whistle

In contrast, the relatively less moderated comment sections of YouTube videos contained both 4chan's and Benjamin's versions of the meme. While some comments alluded to racist and racialist concepts, others spoke of the meme in the same terms as Benjamin (Figure 2). Such developments began to cause alarm, as external watchdogs like the Southern Poverty Law Center warned about the racist and anti-semitic connotations of the meme (Neiwert, 2017). Their reports prompted a number of rebuttals by, amongst others, Benjamin himself, who mocked the watchdog for falling for his joke (Kekistan is a Terrorist Group, 2017).

But as Benjamin decided to save face and unmask the joke, others on Twitter and YouTube began to question the genuineness of his disclaimer. Unbeknownst to them, Kekistan did bear white nationalist connotations on 4chan/pol. This made Benjamin's followers, and to an extent Benjamin himself, the targets of their own joke. Back on 4chan/pol, anons relished the possibility that Kekistan could act as a gateway drug, or redpill, into serious dedication to white nationalist precepts. Perhaps the most significant sign of approval from 4chan/pol's far-right segments was from Richard Spencer, who in mid-2017 took to Twitter to plead in defence of the “Kekistani people” (Richard Spencer, 2017a, 2017b).

While 4chan could afford the more extreme versions of the meme as an imaginary (white) ethno-state, this particular connotation was dropped when it arrived in the more policed environments of then-Twitter. It was transformed from a message that only a few could exchange within a close in-group (a

This is not to say that Kekistan's original meaning was necessarily rejected by users on Twitter or in YouTube comment sections, but that it was simply relegated to places that could afford its expression. The meme's ambiguity was not just the product of well-thought rhetorical ruses, but the consequence of a coagulation of meanings produced in platforms with distinct speech affordances. This ambiguity facilitated its circulation across users with no direct relation to its primary, objectionable meaning. It became a message without origins, conveniently lost in translation.

After deplatforming

It could be said that the process of dissemination of Kekistan reflects the ideological cacophony of the online 2016–2018 culture wars, waged under the premises of highly controversial topics like race, identity and nationality. The wave of content moderation that followed, whether by Twitter or YouTube, took stronger measures to identify, delete, demonetize or demote this type of objectionable content (including some mentions of Kekistan; Figure 3). This may have reassured some that the worst elements of these debates had been effectively marginalized in platforms with low traffic and few users. But any such taboos may re-emerge today in the same way as they did in 2016, as they continue to be active wherever their expression is best afforded.

Figure 3. Online and moderated tweets that mentioned “kekistan” in 2017, with their status as of January 2023.

As an alternative, one could monitor the extent to which public debate takes place across a fringe-to-mainstream ecology, in order to address the more or less inexpressible ideas and sentiments of public debate as a whole. Though these expressions are marginalized, they also bear a form of imminence: they may return with the added value they gained from marginalization as the sentiment of a “silent majority.”

In doing so, one may also map the very constitution of public speech norms—that is, what different publics consider to be acceptable to say and why—in order to formulate content moderation measures that can bear more consensus across platforms. This exercise may be applied to speech regulation not solely tied to US issues, but phenomena as geographically widespread as incitement speech, intolerance, revisionism and other expressions of historical violence that have called for active regulation, or conciliation at last.

Supplemental Material

Supplemental material, sj-docx-1-bds-10.1177_20539517231206810 for The affordances of extreme speech by Emillie de Keulenaar in Big Data & Society

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental material

Supplemental material for this article is available online.

Notes

References
