Abstract

The proliferation of big data analytics in criminal justice suggests that there are positive frames and imaginaries legitimising them and depicting them as the panacea for efficient crime control. Criminological and criminal justice scholarship has paid insufficient attention to these frames and their accompanying narratives. To address the gap created by the lack of theoretical and empirical insight in this area, this article draws on a study that systematically reviewed and compared multidisciplinary academic abstracts on the data-driven tools now shaping decision-making across several justice systems. Using insights distilled from the study, the article proposes three frames (optimistic, neutral, oppositional) for understanding how the technologies are portrayed. Inherent in the frames are a set of narratives emphasising their ostensible status as vital crime control mechanisms. These narratives obfuscate the harms of data-driven technologies and evince idealistic imaginaries of their capabilities. The narratives are bolstered by unequal structural arrangements, specifically the unevenly distributed digital capital with which some are empowered to participate in technology development for criminal justice application and other forms of penal governance. In unravelling these issues, the article advances current understanding of the dynamics that sustain the depiction of data-driven technologies as prime crime prevention and law enforcement tools.

Introduction

Datafication is a rapidly proliferating phenomenon in contemporary Western societies, and it refers to ‘the ubiquitous quantification of social life’ (Baack, 2015: 2). It manifests itself mainly in big data analytics, and it stems from the so-called data revolution (United Nations, 2014) of the past few decades. Datafication is also a by-product of the accumulation of large-scale administrative data and of behavioural and social data from heightened human interaction with smart devices and online platforms including social networking sites (Boyd and Crawford, 2012), and it is increasingly used to inform decision-making within and beyond the public, political and economic sectors.

There is a fast-growing body of critical literature on the proliferation of datafication in the form of the data-driven models now increasingly applied in justice systems across the world for predicting risk (Angwin and Larson, 2016), forecasting crime hotspots (Ensign et al., 2018; Lum and Isaac, 2016) and implementing the biometric identification of targeted individuals (Bennett Moses and Chan, 2018; Fussey, 2019). The rapid rise of data-driven technologies in justice systems highlights their growing popularity as prime crime control tools despite the growing corpus of studies pointing to their potential harms (Barocas and Selbst, 2016; Ferguson, 2017; Fussey, 2019; Hao and Stray, 2019; Oswald et al., 2018). This calls for close scholarly scrutiny of how the technologies are framed and presented to the state and the general public, both of whom may be considered the core stakeholders. Therefore, this article provides an empirical account of the frames that appear to legitimise the technologies. This is explored through two fundamental concepts: utopic ‘sociotechnical imaginaries’ (Jasanoff et al., 2007), which emphasise the benefits of datafication whilst obscuring its harms, and ‘digital capital’ (Van Dijk, 2005), which refers to the resources required for technology development. Motivated actors with access to such capital can dominate technology development and the truth claims made about their capabilities.

To fulfil its objectives, the article draws on the findings of a narrative review of abstracts from academic articles on the technologies and their capabilities. Using insights gained from the narrative review, we propose the following frames for understanding how the capabilities of data-driven technologies are depicted in the extant literature: (a) optimistic frames which endorse the tools and their ostensible status as the panacea for cost-effective and efficient crime control; (b) neutral frames that are ambivalent towards the potential harms and benefits of datafication although some are solutionist in that they acknowledge the potential harms of datafication and proffer remedial artificial intelligence (AI) models and (c) oppositional frames which emphasise several harms of datafication and reject the view that data-driven tools constitute the panacea for crime control.

Datafication and the proliferation of data-driven technologies

Data-driven technologies that have emerged as part of the datafication phenomenon are being deployed for a range of tasks. Some examples are social media mining, sentiment analysis and natural language processing. These technologies now inform decision-making at several levels of the criminal justice process. For example, experimental algorithmic tools can influence police operational decisions to improve a police force's decision-making, such as how to allocate policing resources (Mastrobuoni, 2020; Oswald et al., 2018). In many countries, police services are also using new data-driven technologies for intelligence and surveillance-led policing (Bennett Moses and Chan, 2018). The technologies, which are sometimes produced through collaborations between academics, the state and non-state actors, are also influencing other criminal justice services. In court, they can inform decisions about sentence severity, and in the penal system, they can determine the intensity of interventions, parole decisions and prison security classifications (e.g. MOJ, 2019). To perform their tasks, the technologies automate a combination of administrative data from criminal justice services and/or big data from other sources.

The private sector and academic researchers (particularly data and computer scientists, software developers as well as mathematicians and statisticians) are at the forefront of the datafication phenomenon (e.g. Brantingham et al., 2018; Mohler et al., 2015). Their frames about the technologies can evoke and legitimise sociotechnical imaginaries (Jasanoff et al., 2007; Jasanoff, 2015) of criminal justice technologies as the panacea for crime control. The concept of sociotechnical imaginaries is defined as ‘collectively held and performed visions of desirable futures […] animated by shared understandings of forms of social life and social order attainable through, and supportive of, advances in science and technology’ (Jasanoff, 2015: 25). As such, these imaginaries depart from expectations of innovation of science and technology (Jasanoff et al., 2007); however, they do not only embed visions of technological possibilities, but they also express a shared understanding of the common good (Jasanoff, 2015).

Therefore, imaginaries of ‘smart’ criminal justice can be held not only by the proponents and developers of the technologies but also by state consumers of technological products and even ordinary members of the public (Jasanoff et al., 2007). But, collectively held imaginaries are informed by specific framings and visions of the benefits of technological development that can obfuscate technological harms, as frames organise knowledge and influence thinking (Borah, 2011; Entman, 1993). An example is the capacity for data-driven technologies to reproduce biases embedded in foundational data. In replicating biases, the technologies connect the past and the future and, in the process, sustain a social order marked by historical inequalities. As Jewitt et al. (2015: 90) put it, such imaginaries can influence how people ‘see and organise the world, in its histories as well as its futures’. The frames should as such be investigated since they are not simple visualisations of future possibilities: they can inform the design of technologies that either rebalance or maintain an unequal social order and can fuel expansionary applications of the technologies by justice systems.

This trend overlooks the caveats proffered by critical data scientists who emphasise the potential for big data analytics to rely on biased administrative data that prompt algorithmic replications of existing inequalities (e.g. Starr, 2014). Notwithstanding this, the criminal justice system is becoming increasingly datafied. For example, more than 150 data-driven predictive algorithms are now applied in penal systems across the world, including low- and middle-income countries (Fazel et al., 2012). Yet, even if academic attention on this issue is certainly rising (for instance in the legal discipline, see among others Custers, 2013; Hamilton, 2015; Law Society, 2019; Starr, 2014), criminological and criminal justice scholarship has remained relatively silent on this subject matter. The concept of sociotechnical imaginaries is therefore useful since it alerts us to the need for critical analysis of the frames that instigate and sustain imaginaries of ‘smart’ criminal justice or datafication as the panacea for crime control.

Reflecting this perception, in several countries, there have been official declarations about the merits of data-driven predictive algorithms and their importance in policing and criminal justice decision-making (to cite only a few examples: in Australia see AFP, 2021; in the United Kingdom see MOJ, 2019; in France, see Politico, 2020). Authorities using predictive policing software similarly evoke the sociotechnical imaginaries depicting the tools as capable of achieving crime prevention aims, stressing their technocratic benefits such as their ability to provide efficient and cost-effective solutions to the problems of crime and deviance (e.g. Dearden, 2017; Kelion, 2019; MOJ, 2015; National Offences Management Service, 2016). The technologies are also generally depicted in policy documents as scientifically objective and capable of a higher degree of accuracy and impartiality than human actors or when combined with professional judgement (Home Office, 2002). These presumed benefits denote the influence of positive frames and sociotechnical imaginaries regarding the capacity of technologies to improve human decision-making. It appears that equal consideration is not given to technological limitations. However, speculation aside, empirical insight is needed to unravel the frames and their implications.

The critical scholarship on data-driven technologies

Rejecting the positive depictions of datafication inspired by sociotechnical imaginaries, critical research has underlined how big data are not easy to manage, especially when potentially important data need to be identified and prioritised amongst a white noise of basic rumours and potentially misleading information (Middleton et al., 2020). In addition, there are no certainties provided by big data analyses, but only projections of patterns based on computational models. Indeed, a large corpus of research highlighting the social harms of the technologies has emerged. Central to this body of work is the view that as data-driven technologies continue to proliferate, their depiction by their academic and commercial developers as the panacea for crime control seems to obscure their challenges. This fast-growing scholarship is sometimes described collectively as critical data studies. Moving away from the earlier myopic idealism of certain sociotechnical imaginaries that have long surrounded the emergence of AI (Natale and Ballatore, 2020), this scholarship is increasingly recognising the harms of big data when automated by algorithms across the public and private sectors. Insights into these harms have emerged from various disciplines including criminology (Hannah-Moffat, 2018; Ugwudike, 2020), legal studies (Custers, 2012, 2013; Hamilton, 2015; Starr, 2014), media and cultural studies (Dencik et al., 2018; Iliadis and Russo, 2016), and computer science (Hao and Stray, 2019).

What emerges from these fields of study is that technologies designed by academics and others, sometimes in collaboration with state authorities (such as the police) and sometimes with commercial motives, can reproduce existing structural inequalities for several reasons. One is that data (in)justice (i.e. the social harms and benefits of datafication) can depend on socioeconomic status (Dencik et al., 2018; Taylor, 2017; Završnik, 2019). Importantly, the Bourdieu-inspired concept of digital capital (Bourdieu, 1986) can help us understand the uneven distribution of technological harms and benefits. From a sociological perspective, digital capital can be defined as the ability to not only access digital technologies but to also design and capitalise a range of resources linked to digital technology (Van Dijk, 2005). Depending on the technology, digital capital, particularly via design and commercialisation capabilities, tends to be concentrated in wealthy corporations and other elites such as academics and researchers. Perhaps relatedly, these groups also seem largely immune from technological harms.

In contrast, the socioeconomically marginal seem more vulnerable to harms such as ‘dataveillance’, that is, ‘a form of continuous surveillance’ (van Dijck, 2014: 198) using digital methods, as has been noted by studies of the surveillance of defendants and convicted people (Willoughby and Nellis, 2016). Groups such as ethnic minorities and the disabled are also more vulnerable than others to misidentification given their noted underrepresentation in the data on which AI surveillance and identification systems rely (Law Society, 2019). Furthermore, the socioeconomically marginal and particularly those amongst them who are minorities can experience higher rates of policing activity in their residential area. This can be triggered by predictive policing algorithms that are prompted by racially biased arrest data to designate their geographical region as ‘high crime’ and in need of enhanced policing activity (Ferguson, 2017; Harcourt, 2015; Lum and Isaac, 2016). Minorities are also more vulnerable than others to overprediction of recidivism risks by algorithms relying on similar data (Angwin and Larson, 2016; Eaglin, 2017; Hannah-Moffat, 2018; Hao and Stray, 2019; Ugwudike, 2020).

Given these trends, it has been argued that data harms are proliferating at the same rate as the technologies exploiting the increased availability of big data, but knowledge of the harms and how to attenuate them is not (Taylor, 2017). An upshot of this is that the general public and the services relying on the data-driven algorithms can overestimate the veracity of the technologies (Hollywood et al., 2018). Positive frames depicting the tools as the panacea for crime control can exacerbate this problem. But there is a dearth of research on how the technologies are framed. To address the gap in knowledge, this article unravels the dominant frames and their implications, shedding light on how they are conjoined to issues of digital capital and sociotechnical imaginaries.

Methodology

Our narrative review of abstracts sought to advance understandings of the frames legitimising the datafication of criminal justice by answering the following questions: (a) how are the capabilities of datafication and data-driven technologies for criminal justice framed and presented to potential stakeholders and the general public alike? (b) what explains the different frames identified and (c) what are the implications?

Data search criteria

We focused on academic literature retrieved through the citation indexing service Web of Science (WoS), which indexes the text and metadata of most peer-reviewed academic journals, books, abstracts, conference papers, reports and other relevant literature. We looked for ‘topics’, a category which encompasses titles, abstracts, authors and keywords. Search terms used were as follows: TOPIC: (‘big data’ AND ‘criminal justice’) OR (‘big data’ AND policing) OR (mining AND ‘criminal justice’) OR (mining AND policing) OR (artificial AND ‘criminal justice’) OR (artificial AND policing) OR (machine AND ‘criminal justice’) OR (machine AND policing) OR (algorithm* AND ‘criminal justice’) OR (algorithm* AND policing). The keyword search returned 1773 results, which became 1330 once we refined the search using the exclusion criteria set out further below. We exported all titles, abstracts and associated metadata in our search results. The abstracts were then manually screened again by two researchers to enhance interrater reliability. The aim was to assess relevance since keyword searches notoriously produce false positives. Following this manual screening, a total of 493 abstracts were selected for in-depth analysis.
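The structure of the search string and the screening funnel described above can be made explicit with a short sketch. It is illustrative only: the function and variable names are hypothetical, straight quotation marks stand in for the typographic ones used in the text, and no claim is made about the exact syntax that the WoS interface requires.

```python
# Illustrative reconstruction of the Boolean topic search described above.
# Names are hypothetical; straight quotes replace the typographic ones.
TERMS = ['"big data"', "mining", "artificial", "machine", "algorithm*"]
CONTEXTS = ['"criminal justice"', "policing"]


def build_topic_query(terms, contexts):
    """Pair every technology term with every criminal justice context."""
    clauses = [f"({term} AND {context})" for term in terms for context in contexts]
    return "TOPIC: " + " OR ".join(clauses)


query = build_topic_query(TERMS, CONTEXTS)

# Screening funnel, using the counts reported in the text.
retrieved = 1773          # results returned by the keyword search
after_exclusion = 1330    # results left after applying the exclusion criteria
selected = 493            # abstracts retained after manual screening

removed_by_exclusion = retrieved - after_exclusion
removed_by_screening = after_exclusion - selected

print(query)
print(removed_by_exclusion, removed_by_screening)
```

Generating the clauses from two short lists makes it easier to see that each technology term is paired with both criminal justice contexts, and to extend the search consistently if further terms were added.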

Data exclusion criteria and research limitations

We focused on material published in the last 11 years (January 2009 to December 2019) to reflect the period that has witnessed the rapid expansion of the big data phenomenon. Also, to make qualitative analysis manageable, our searches focused on terms commonly used in the academic literature on datafication and data-driven technologies as they pertain to criminal justice.

We recognise that the dataset used has a number of limitations. First, it is worth noting that a limitation of our data gathering approach is that WoS indexes the text and metadata of most relevant material, but some journals, books and conferences, for instance, might be overlooked. In this regard, we consider our study to be exploratory in nature and useful to start a discussion on sociotechnical imaginaries and digital capital in the context of data-driven technologies for crime prevention and control, not to be an end point. Whilst it is important to note that bibliometric evidence suggests that the coverage of WoS databases is competitive in the social sciences (Martín-Martín et al., 2018), additional research should consider other databases, to ensure a more comprehensive coverage. Also, we recognise that looking at abstracts does not tell us the whole story (especially considering that abstracts in different disciplines are written in quite different ways), and a proper meta-analysis on the topic here addressed would be beneficial. Yet, as recognised in the literature, abstracts are of fundamental importance for screening academic publications, to the point that the necessity and urgency to improve abstract reporting have been broadly discussed (see e.g. Guo and Iribarren, 2014; Saint et al., 2000) – as such, we believe they offer crucial information for our analysis.

Another limitation of this study that needs to be acknowledged is that we considered only studies and reports published in English. Even if English has become the

Data analysis

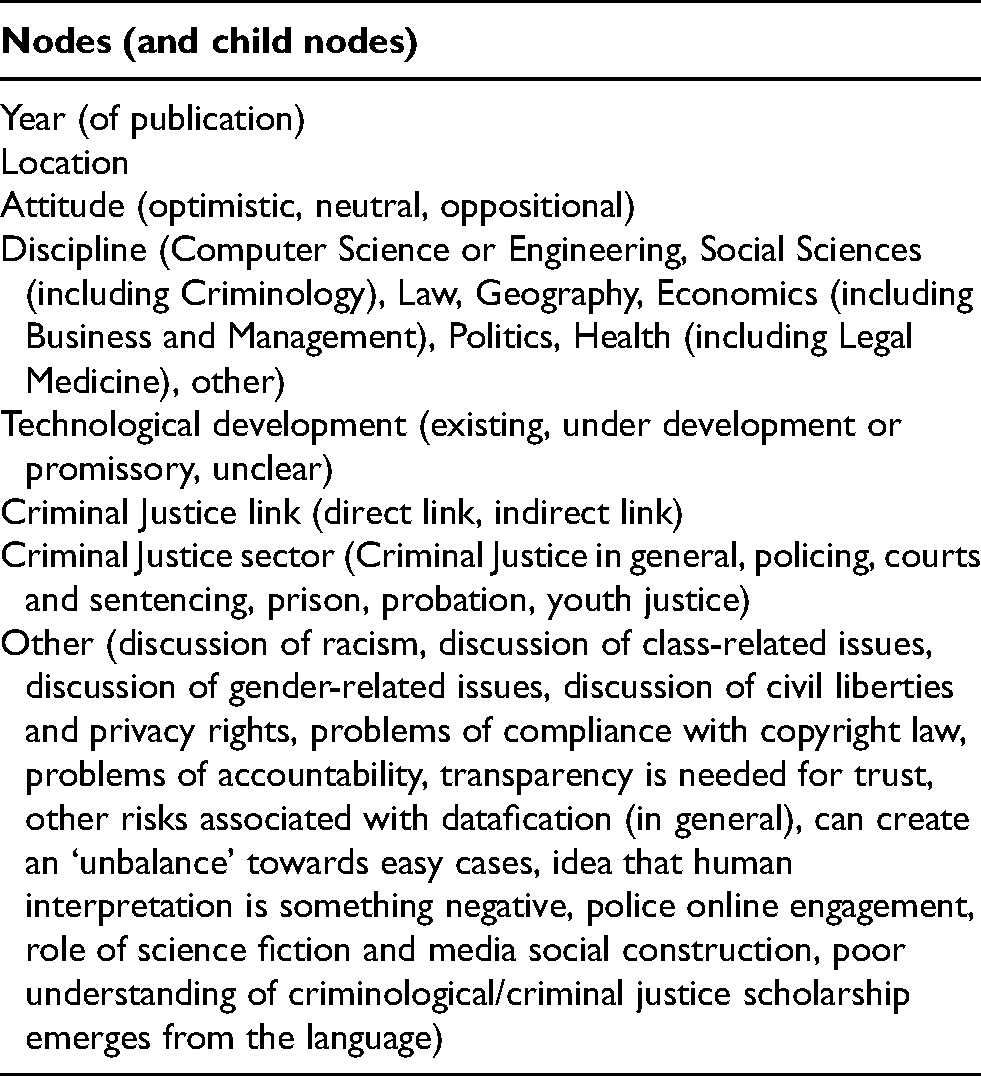

We used a thematic synthesis approach to analyse the data and present the findings. First, the data were uploaded to NVivo 11 (a data analysis software package to manage and arrange unstructured information) for coding. We used NVivo to systematically organise and analyse the data manually sampled from WoS, and we applied codebook thematic analysis which is a structured approach to coding that incorporates reflexive elements (Braun et al., 2019). In line with this approach, the main themes (conceptualised as domain summaries, or main ‘nodes’ in NVivo) and most sub-level nodes (child nodes) were determined in advance of full analysis, but they were then compared and revisited within the context of the abstracts as analysis progressed (see Table 1 for a summary of the nodes). Of course, a certain degree of overlap exists between some of the sub-codes identified (consider, for instance, the division by discipline); in creating categories, we aimed for a good balance between being sufficiently fine-grained for analytical purposes whilst maintaining sufficient accuracy considering the information available in the WoS database. Besides assisting us with qualitative analysis, NVivo allowed us to count the number of times each code was used across the sampled abstracts. An audit trail was maintained throughout, and the two authors worked collaboratively on data coding and interpretation.

Table 1. Coding framework.
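As a rough illustration of the counting step described above (how many times each code was applied across the sampled abstracts), the following sketch tallies codes with Python's standard library. It does not reproduce NVivo; the abstract identifiers and code labels are hypothetical placeholders rather than items from the dataset.

```python
from collections import Counter

# Hypothetical coded abstracts: each identifier maps to the set of codes
# applied to that abstract. These are placeholders, not study data.
coded_abstracts = {
    "abstract_001": {"policing", "optimistic"},
    "abstract_002": {"policing", "courts", "neutral"},
    "abstract_003": {"prisons", "oppositional"},
}


def code_frequencies(coded):
    """Count how many abstracts each code was applied to."""
    counts = Counter()
    for codes in coded.values():
        counts.update(codes)
    return counts


freq = code_frequencies(coded_abstracts)

# Because one abstract can carry several sector codes, the sector counts
# can sum to more than the number of abstracts.
sector_total = sum(freq[s] for s in ("policing", "courts", "prisons"))
print(freq.most_common())
print(sector_total, len(coded_abstracts))
```

Allowing more than one code per abstract is what lets category totals exceed the number of abstracts reviewed.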

Findings

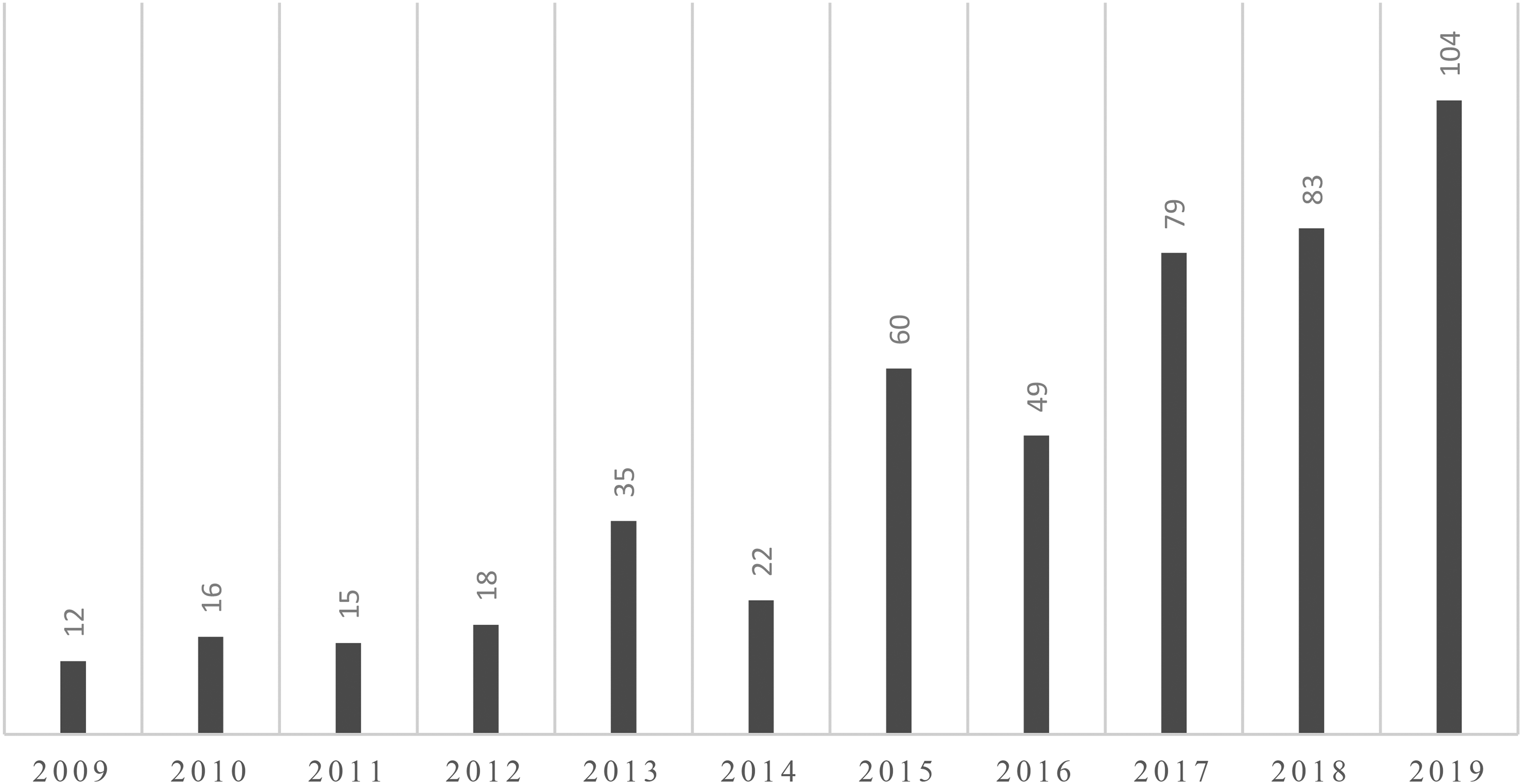

First, we looked at the year of publication (as reported in WoS) to understand the evolution of relevant scholarship during the timeframe of interest. As shown in Figure 1, research on datafication and the accompanying rise in data-driven technologies is a fast-growing field, and this article is timely given its evaluation of key frames emerging from the scholarship.

Figure 1. Number of publications per year.

Second, we looked for any geographical reference present in the abstract detailing, for example, where the data-driven tool was developed or tested, or the origin of the databases used. A specific location was referred to only in 147 publications. This is problematic since the elision of locational information implies broad generalisability of results and a tendency to overestimate the scope of data-driven technologies. Worryingly, studies now show that such technologies are context specific, and both accuracy and fairness can be undermined if applied in other jurisdictions and contexts (Olver et al., 2014). For tools to have broad applicability, they have to be tested and validated for use beyond the location in which they were created.

Most of the abstracts focused on technologies that have a direct link to criminal justice (355) meaning that the data-driven technology described has been developed or discussed with specific reference to the criminal justice system. The remaining 138 abstracts focused on other uses and sectors but mentioned criminal justice as a potential application. Considering both the direct and the indirect links, most abstracts were directly relevant to policing (including forensics and urban safety), as shown in Figure 2 below (please note that the sum is slightly more than 493 as some publications mentioned/referred to more than one criminal justice sector).

Figure 2. Criminal justice sectors.

Only a minority of abstracts, however, emphasised the key datafication-related problems presented in the review of the literature above. In more detail, only a limited number of abstracts referred to potential issues of racism (12), class-related issues (2) or gender-related issues (2). Similarly, only a few abstracts explicitly mentioned issues of civil liberties and privacy rights (14), problems of compliance with copyright law (2), problems of accountability (5) or generally mentioned the existence of risks associated with datafication (7). Only one abstract stressed that, when applied to criminal justice, datafication could create an ‘unbalance’ towards easier cases, and only one abstract focused on the role of science fiction and social media construction in framing public understanding of the topic.

Increased transparency in big data and their use was suggested as a potential solution to increase public trust in datafication in a few studies (4). Quite worryingly, it was suggested that human interpretation is somehow something negative, a problem to be ‘fixed’ with data-driven technologies (6) – ignoring that data ‘do not speak for themselves’ (see González-Bailón, 2013) – and the language used in a few abstracts (11) suggested a poor understanding of relevant criminal justice/critical scholarship. This latter fact is probably not surprising, considering that most of the abstracts considered emanated from computer science and engineering, with very little multi- or interdisciplinary effort (see Figure 3, which represents the macro-categories of the prevalent disciplines, depending on the specific journal, proceedings or other venue in which the study was published). The number of publications in computer sciences and engineering could also be partially explained by a different publication culture in those disciplines, where it is more common to have papers published by groups of authors.

Figure 3. Disciplines.

We also considered the level of technological development, categorising abstracts depending on whether the data-driven technology described was in use (i.e. the publication was discussing/presenting a tool already in use, or the tool has been developed to an extent that it has been tested/trialled in real life) or not in the criminal justice system at the time of writing. As emerges from Figure 4, most of the technologies discussed were under development, and in a number of publications, the phase of development was not clear (e.g. when data-driven technology was discussed in general, without focussing on a specific tool).

Figure 4. Level of technological development.

Categorising and analysing the frames

How are the capabilities of data-driven technologies framed?

A final code considered was the ‘attitude’ one – that is, how data-driven technologies were prevalently portrayed in the abstract. Our findings show that the way the technologies and their capabilities are framed can be dimensionalised along three categories: optimistic, neutral and oppositional frames.

Please note that the following sections provide, in quotation marks, references to the abstracts analysed. As it would have been impossible to discuss them all within the word limit allowed, we decided not to single out any of them: in our work, we want to highlight some trends and patterns that we think are of great interest in the current debates on data-driven technologies for crime prevention and control, not to debate the merits of specific articles. We also focus on frames relating to the use of data-driven technologies by criminal justice services. The frames may also be relevant in other contexts and to other actors deploying such technologies in other sectors, and a useful contribution of this article is that it provides frames that can be explored and expanded on in other contexts by future studies.

Optimistic frames

These were, by far, the most prominent and frequent frames. They depicted the rise of datafication in the criminal justice systems positively and encouraged the automation of ‘rich’ sources of data ‘now readily available’, indicating that this would enhance the efficiency and effectiveness of criminal justice. In general, optimistic frames evinced utopic sociotechnical imaginaries, with technoscientific innovations ‘encoding visions of the good society’ (Jasanoff et al., 2007: 6). They presented AI models as the future or the ‘next generation criminal justice’ that will provide ‘rich’ services to the courts and law enforcement and ‘power’ these services in the future. The key optimistic frames identified were, as such, primarily utopic:

Neutral frames

Neutral frames were non-committal in their analysis of the harms or benefits of datafication. These frames evoked a technorealism that vacillated between utopic and dystopic imaginaries and fell into three categories:

Oppositional frames

Abstracts containing oppositional frames were fewer than those comprising the other two frames, but because the oppositional abstracts presented theoretical arguments, unlike the largely empirical content of the other two, they produced richer insights. This explains why they had more dimensions (listed further below) than the other frames. Together, the oppositional frames generally rejected the sociotechnical imaginaries that emphasised technological potentialities, even when such rejection meant overlooking implications such as the operational need to consider new uses of data, or the possibility that uninformed or discretionary human decision-making can also have serious adverse consequences. As will be discussed in more detail in the following section, behind oppositional frames, we generally find more subject knowledge of criminal justice systems and sociolegal theorisations (intellectual and critical capital) but less evidence of digital capital.

Meanwhile, oppositional frames depicted the sociotechnical imaginaries as rooted in myths propagated by invested proponents and ‘technological evangelists’ as well as media exaggerations. In rejecting the imaginaries, the oppositional frames drew attention to the social impacts of datafication including problems stemming from data injustices and the need for robust governance. Thus, they revealed that critical analysis of sociotechnical imaginaries is useful for unravelling ‘the implications of technology's social embeddedness for responsible global governance of both knowledge and technology’ (Jasanoff et al., 2007). The key oppositional frames were as follows: privacy violations, racial bias, algorithmic opacity, fairness concerns, inadequate legislation, the commercialisation of datafied criminal justice and systemic bias.

Oppositional frames also underlined the need for laws such as freedom of information legislation requiring developers providing commercial algorithms for public sector application to release their algorithms for scrutiny. Nevertheless, proprietary laws impede access to algorithmic black boxes, and in doing so, they undermine transparency and accountability (Pasquale, 2016). In the United States for example, the Supreme Court has held in response to requests by a defendant to scrutinise one such algorithm that data-driven predictive technologies applied in penal systems are protected by proprietary rights (Harvard Law Review, 2017; HOC, 2018).

Aligned with this, some oppositional frames implied that fairness imperatives cannot be achieved using technical means since the effects of biased administrative data on outputs (risk scores) and wider social outcomes (systemic discrimination) were not measurable. Besides, efforts to achieve fairness can jeopardise predictive accuracy. Ultimately, decision-makers have to subjectively determine the appropriate balance between fairness and accuracy, or even how fairness should be constructed and measured, a reality made clear by researchers such as Hao and Stray (2019).

Aligned with this was the view that the developers of commercial algorithms were being empowered to exercise the ‘sovereign power’ previously reserved for the state. As others have noted, this provides further impetus for non-state actors to participate in criminal justice policy and governance (Hannah-Moffat, 2018; Ugwudike, 2020). It calls attention to the politics of science and technology innovation since, as noted earlier, the developers exert power through their ability to inform technology design and criminal justice expenditures (Jasanoff, 2015).

Another issue dealt with by oppositional frames is the role of data-driven algorithms and their developers in knowledge production about social problems such as crime and risk. In drawing attention to this, oppositional frames echoed the concerns expressed by others who note that data-driven technologies, including the commercial versions, are arbitrarily reframing constructions of crime and deviance (Eaglin, 2017; Ugwudike, 2020). Here, again we witness how the concept of sociotechnical imaginaries can highlight the politics of science and technology innovation. In this case, whilst optimistic frames emphasised positive imaginaries, oppositional frames unravelled the meanings and social implications of technology innovation.

What explains the different frames identified?

Sociotechnical imaginaries and access to digital capital

Optimistic frames manifested imaginaries of data-driven technologies as the panacea for crime control. They did so through their allusions to the propitious availability, utility and capabilities of datafication, endorsing the merits of the technologies. Problems such as biased predictions were either ignored or subjected to tests designed to disprove them. Crime control imperatives were prioritised over the due process and human rights concerns mooted by sections of the critical scholarship (e.g. Eaglin, 2017; Hamilton, 2015; Starr, 2014; Steinbock, 2005). Neutral frames were vacillatory and just as likely to acknowledge sociotechnical imaginaries as they were to refute them. Unlike these two frames, oppositional frames refuted them entirely. Thus, the different frames evoke potentially competing imaginaries. Whilst the optimistic frames and aspects of the neutral frames emphasise the promise of technical accuracy for efficient criminal justice, oppositional frames focus more on racial and social justice imaginaries. These competing visions have, for example, been reflected in recent fairness debates concerning deployments of criminal justice algorithms. To cite one example, the risk-of-recidivism algorithms applied in several western jurisdictions including the UK and the US are considered technically fair if they predict outcomes equally well for all social groups (predictive parity) even if the error rates are higher for some groups than others (Dieterich et al., 2016; Skeem and Lowenkamp, 2020). Hence, predictive parity for efficient criminal justice is prioritised, reflecting the emphasis on technical efficiency associated with optimistic and some neutral frames. But critics of the algorithms adopt oppositional frames that focus on visions or imaginaries of systemic and structural equality, arguing that predictive parity is insufficient and that fairness demands an equal balance of error rates for all racial groups (equalised odds) (see Angwin et al., 2016).
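The tension between the two fairness criteria can be illustrated with a small numerical sketch. It rests on assumptions that are not drawn from the studies cited: predictive parity is operationalised here as equal positive predictive value across groups, equalised odds as equal false positive and false negative rates, and the confusion-matrix counts for the two groups are entirely hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    """Confusion-matrix counts for one group (hypothetical figures)."""
    tp: int  # predicted to reoffend and did
    fp: int  # predicted to reoffend but did not
    fn: int  # predicted not to reoffend but did
    tn: int  # predicted not to reoffend and did not

    @property
    def ppv(self) -> float:
        """Positive predictive value: share of 'high risk' labels that were correct."""
        return self.tp / (self.tp + self.fp)

    @property
    def fpr(self) -> float:
        """False positive rate among people who did not reoffend."""
        return self.fp / (self.fp + self.tn)

    @property
    def fnr(self) -> float:
        """False negative rate among people who did reoffend."""
        return self.fn / (self.fn + self.tp)


def predictive_parity(a: Confusion, b: Confusion, tol: float = 1e-9) -> bool:
    """Assumed formalisation: equal positive predictive value."""
    return abs(a.ppv - b.ppv) <= tol


def equalised_odds(a: Confusion, b: Confusion, tol: float = 1e-9) -> bool:
    """Assumed formalisation: equal false positive and false negative rates."""
    return abs(a.fpr - b.fpr) <= tol and abs(a.fnr - b.fnr) <= tol


# Two hypothetical groups of 100 people with different base rates of reoffending.
group_a = Confusion(tp=40, fp=20, fn=10, tn=30)
group_b = Confusion(tp=20, fp=10, fn=20, tn=50)

print(predictive_parity(group_a, group_b))
print(equalised_odds(group_a, group_b))
print(group_a.fpr, group_b.fpr)
```

In this constructed case, a 'high risk' label is equally reliable for both groups, yet people in group A who do not reoffend are wrongly labelled high risk more often than their counterparts in group B. A tool can therefore meet the first criterion while failing the second, which is the crux of the disagreement described above.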

Apart from their differing sociotechnical imaginaries, differences in intellectual (subject knowledge) versus digital capital investments also seem to explain the differences between the authors of the three frames. As noted earlier, digital capital includes the ability to access and capitalise on resources linked to digital technology (Van Dijk, 2005). Positive endorsements of such technologies stemmed largely from data scientists as well as software developers, mathematicians and statisticians. Their dominance over the design of such technologies is indicative of their access to digital capital (in this case, relevant design skills) and means that even if no apparent connections exist between them, together they constitute a heterogeneous ‘technoepistemic network’ of experts (Ballo, 2015: 10) able to inject their ideals and priorities into technology development, framings and imaginaries. This conjures up an image of a world ‘envisioned by actors who have the capacity to materialise their visions’ (Ballo, 2015: 11). It is argued that this network is male dominated and lacks representation from socially marginal groups such as racial minorities and deprived communities. This could in part explain the uneven distribution of the risks and harms of criminal justice technologies, which, as the authors of oppositional frames observe, seem to particularly affect socially marginal groups.

Beyond their access to digital capital, it may be that the proponents of optimistic and neutral frames are also motivated by the promise of professional advancement or commercial and other gains (Caulfield and Condit, 2012). Or it may simply be that their position is inspired by visions of the utopian possibilities offered by new technologies, which emphasise transcendence and a sort of disembodied vision of the relationship between humans and machines (Brydolf-Horwitz, 2018), and such visions may be more prevalent among individuals with certain disciplinary backgrounds. This could in turn explain the heightened tendency to create imaginaries of ‘smart’ criminal justice as superior to human competencies and capable of providing efficient and cost-effective crime control. Whatever the reason, the foregoing reveals the usefulness of sociotechnical imaginaries as a concept that can be used to reflect on the meanings and motivations underpinning technology development. In any case, we should not forget that policy-makers, law enforcement officers and other practitioners who should use or rely on data-driven technologies do not always have strong digital capital (Sanchez et al., 2019).

What are the implications?

As already noted, positive frames and imaginaries of datafication as vital for the efficient administration of criminal justice were dominant. Several implications arise from this and can be conceptualised as the perpetuation of data harms, the prioritisation of systemic or technocratic crime control and the endorsement of expansionary datafication. The first pertains to social implications whilst the latter two relate to political implications.

Models for networked surveillance and control were also developed. For example, there was evidence of data linkage practices whereby data from different agencies were pooled for automated processing by predictive algorithms, to demonstrate how such data can be used for early intervention activities by authorities. We have already seen that data linkage to inform official decision-making has been described as capable of leading to data breaches and decontextualisation of data that can prompt unwarranted state intervention (Dencik et al., 2018). Alongside this ethical problem, models enabling state authorities such as the police to bypass privacy restrictions were proposed. These models were said to be capable of storing metadata from the datasets that police services were not permitted to keep in storage. An example is evidence relating to child sexual abuse. With the proposed models, the police could store the metadata for future investigations. In proposing these models, the optimistic and neutral frames prioritised crime control imperatives and managerial concerns over concerns about data harms (even though the models could be justified under the relevant legal framework, if we consider this issue from a legalistic perspective).

Conclusion

This article presented and discussed the findings of a study that systematically reviewed and compared multidisciplinary academic abstracts on the data-driven tools shaping (or with the clear potential to shape) decision-making across several justice systems. In this context, we proposed three main frames – optimistic, neutral and oppositional – for understanding how relevant technologies are portrayed, discussed how notions of sociotechnical imaginaries and access to digital capital are of the utmost importance in explaining differences between the frames, and conceptualised the main social and political implications of the current datafication revolution in criminal justice.

The prevalence of optimistic frames we have observed is consistent with current criminal justice deployments of data-driven technologies, which continue to proliferate despite well-documented concerns about data harms. What this indicates is that the frames and their accompanying narratives are central to depictions of the technologies as the panacea for crime control regardless of their accompanying negative impacts. Through their digital capital (but in a context where many practitioners who should use or rely on algorithmic tools often do not have comparable digital capital), the authors of optimistic and neutral frames have been able to develop and test advanced AI models to illustrate their frames and support law enforcement efforts. In the process, however, they have been more likely to depict their products and similar tools by highlighting only the positives and not the many negatives that are still present given the current state of technological advancement and available databases. Indeed, by presiding over the discourse about datafication, the dominant frames overshadow the caveats provided by the oppositional frames (notably about privacy violations, racial bias, algorithmic opacity, fairness concerns, inadequate legislation, the commercialisation of datafied criminal justice and systemic bias). By paying insufficient attention to these problems and developing data-driven AI models for criminal justice application, the dominant frames are encouraging the confident expansionary rather than the cautionary datafication of criminal justice.

We hope that our contribution will help further critical debates on the complex yet fundamental balance needed in improving the efficiency, effectiveness and legitimacy of criminal justice systems on the one hand, whilst avoiding dangerous and unjust data harms on the other. The debate is still too dominated by a small number of scientific disciplines that have the digital capital to frame the discussion in a certain direction, but that are likely to lack some of the critical expertise traditionally residing in the social sciences and other disciplines. Increased cross-disciplinary efforts are a possible way to diversify ownership of and access to digital capital, to facilitate the inclusion of a broader range of perspectives and ways of knowing, and to stem the dominance of the imaginaries currently overlooking the potentially harmful sociopolitical implications of untamed datafication.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.