Abstract

By the early 2020s, emotional artificial intelligence (emotional AI) will become increasingly present in everyday objects and practices such as assistants, cars, games, mobile phones, wearables, toys, marketing, insurance, policing, education and border controls. There is also keen interest in using these technologies to regulate and optimize the emotional experiences of spaces, such as workplaces, hospitals, prisons, classrooms, travel infrastructures, restaurants, retail and chain stores. Developers frequently claim that their applications do not identify people. Taking the claim at face value, this paper asks, what are the privacy implications of emotional AI practices that do not identify people?

This article is a part of special theme on Big Data and Surveillance. To see a full list of all articles in this special theme, please click here: https://journals.sagepub.com/page/bds/collections/hypecommerciallogics

Emotional AI refers to technologies that use affective computing and artificial intelligence techniques to sense, learn about and interact with human emotional life. This paper assesses the privacy implications of these technologies and organizational applications employed to make inferences about emotions, feelings, moods, perspective, attention and intention. While at an embryonic stage, they are becoming increasingly present in everyday objects and practices such as assistants, cars, games, mobile phones, wearables, toys, marketing, insurance, policing, education and border control. They are also being used to regulate and optimize the emotionality of spaces, such as workplaces, hospitals, prisons, classrooms, travel infrastructures, restaurants, retail and chain stores. To explore these developments, this paper asks, what are the privacy implications of emotional AI practices that do not identify people?

On emotion sensing

The practice of using computer sensing to interact with emotional life has origins in the 1990s, with the field of affective computing (Picard, 1997, 2007). What McStay (2016, 2018) terms ‘emotional AI’ and ‘empathic media’ are made possible through weak, narrow and task-based AI efforts to see, read, listen, feel, classify and learn about emotional life. This involves data about words, images, facial expressions, gaze direction, gestures, voices and the body, that in turn encompasses heart rate, body temperature, respiration and electrical properties of skin. Given that this paper is interested in physiological inferences, input features might be facial expressions, voice samples or biofeedback data. The output is named emotional states that are then used for a given purpose. Applicable machine learning techniques vary, but frequently involve convolutional neural nets (useful for images and system efficiency gains), region proposal networks (useful for multiple object recognition) and recurrent neural networks (that draw on recent past data to determine how they respond to new input data).
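The input-to-output flow described above can be sketched in a few lines. This is a toy illustration only, not any vendor's method: the feature names, label set and weights are all invented for the example, and a simple linear scorer stands in for the neural networks named in the text. It shows one point relevant to the argument that follows, namely that a single named emotional state is a summary of a spread of scores across several candidate labels.

```python
import math

# Hypothetical label set and feature order, invented purely for illustration.
LABELS = ["calm", "happy", "angry"]
FEATURES = ["heart_rate_z", "skin_conductance_z", "smile_intensity"]

# Made-up weights: one row per label, one column per feature.
WEIGHTS = {
    "calm": [-1.0, -1.0, 0.0],
    "happy": [0.2, 0.2, 2.0],
    "angry": [1.0, 1.2, -1.0],
}


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(features):
    """Map a feature vector to (most likely label, probability per label)."""
    if len(features) != len(FEATURES):
        raise ValueError("expected one value per feature")
    scores = [
        sum(w * x for w, x in zip(WEIGHTS[label], features))
        for label in LABELS
    ]
    probs = softmax(scores)
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best], dict(zip(LABELS, probs))


# Usage with made-up readings: raised heart rate and skin conductance, no smile.
label, probabilities = classify([1.5, 1.5, 0.0])
print(label, probabilities)
```

Nothing in this sketch needs a name, an account or a face template: the inference runs on bodily signals alone, which is the non-identifying scenario this paper examines.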

Output emotional states are used to enhance interaction with devices and media content; create new forms of toys and entertainment; make experiences more immersive; enhance artistic expression; surveil and enable learning; facilitate self-understanding of moods and well-being; optimise and regulate behaviour in closed spaces (e.g. prisons and travel infrastructures); judge risk (e.g. by providing car insurance companies data about reactivity); surveil and measure emotionality of bounded spaces (such as retail outlets or cities); surveil customers and worker performance; provide emotional reactivity feedback to marketers and facilitate creation/targeting of advertising.

The ‘basic emotions’ methodology (Ekman and Friesen, 1971; McDuff and el Kaliouby, 2017) that sits behind much emotional AI has been widely critiqued (Andrejevic, 2013; Leys, 2011; Russell, 1994). Indeed, the 2018 AI Now Report debunks it as pseudoscience, linking facial coding with phrenology (Whittaker et al., 2018). This critique is extreme, although practitioners and vendors of emotional AI recognise that single labels rarely capture complex emotional and affective behaviour (Gunes and Pantic, 2010). The key problem with face-based approaches is that they are based on reverse inference, where an expression is taken to signify the experience of an emotion (Barrett et al., 2019). This is problematic because ‘similar configurations of facial movements variably express instances of more than one emotion category’ (Barrett et al., 2019), which indicates that more detail on the context of the situation is required to understand the emotion. This requires more data and potentially more invasive practices (McStay and Urquhart, 2019).

Application of emotion tracking has progressed from in-house research facilities (such as those used in neuromarketing to detect responses to adverts) to online and physical contexts. These include emojis (Davies, 2016; Stark and Crawford, 2015), wearables (Lupton, 2016; Neff and Nafus, 2016; Picard, 1997), human–robot interaction (Bryson, 2018), education (Williamson, 2017), retail (Turow, 2017), employee behaviour (Davies, 2015; Grandey et al., 2013) and border control (Sánchez-Monedero and Dencik, 2019). The broader business strategy for emotional AI companies is ubiquitous use of automated emotion detection in all personal, commercial and public contexts. To suggest a broad rule, if there is any form of value in understanding emotion in a given context, emotional AI has scope to be employed. Companies interested in emotional AI include established companies such as NEC, IBM, Apple, Google, Microsoft and Facebook, but also a long list of smaller companies (such as Eyeris, Sensing-Feeling and Affectiva) seeking to define new markets (for an extended list, see Emotional AI, 2018).

On privacy: Towards a common good

Despite liberal roots in respect for individuality, selfhood, autonomy and control, it is clear that privacy includes these principles but is not synonymous with them. Critically for this paper, privacy is not only an individual right, but a group right because it is a collective good. Further, given what is at stake – bodies, emotions and experience – dignity (for individuals and groups) remains especially important in ubiquitous computing contexts (Edwards, 2016).

A dignity-based understanding diagnoses the problems with passive profiling and using Big Data techniques about emotions for unconscious influence: it is about recognising that phenomenological experience is important, innately worthy and should not be appropriated. Applied to the body and facial expressions, this is not moral idling. As the blog of the European Data Protection Supervisor (Europe’s data protection authority) states, ‘Turning the human face into another object for measurement and categorisation by automated processes controlled by powerful companies and governments touches the right to human dignity’ (Wiewiórowski, 2019). Dignity also serves to block conceptions of privacy as an indirect expression of other rights, such as property. As Floridi (2016) puts it, the ‘my’ in ‘my data’ is not the same as the ‘my’ in ‘my car’, because personal, sensitive and intimate information plays a constitutive role in who we are.

On privacy as a common good, Floridi (2014) points out that the right to privacy is not just an individual right, it is also a group right: ‘There are very few Moby-Dicks. Most of us are sardines. The individual sardine may believe that the encircling net is trying to catch it. It is not. It is trying to catch the whole shoal. It is therefore the shoal that needs to be protected, if the sardine is to be saved’ (2014: 3).

Regulatory context: Emotional AI and soft biometrics

The value of group-oriented body-focused privacy critique becomes clear when cast against European Union (EU) regulation on data protection. The EU is a useful benchmark as it has the most stringent data protection and privacy standards in the world: thus, if the EU is not adequately prepared to legally address emotion sensing, arguably, nowhere else will be. In general, as stated in Article 4(1) of the General Data Protection Regulation (GDPR), EU directives and regulations exist to protect personal data. Personal data is information that can be used to identify or single out a person from others, so they can be treated differently. If the information in question is not personal data, the regulations do not apply. Yet, the EDPS, through Opinion 4/2015 on data, technology and dignity, moves towards group privacy sentiment stating that Big Data ‘should be considered personal even where anonymisation techniques have been applied’, adding that ‘it is becoming ever easier to infer a person’s identity by combining allegedly ‘anonymous’ data with other datasets including publicly available information’ (2015: 6). While the overall point focuses on personal identity, it is multi-staged, i.e. in the first instance such data should be considered personal upfront (even if not identifying). Following this thinking, if the data is personal in the first instance, then it will also be sensitive (as per Article 9 of GDPR, requiring explicit opt-in) because it has scope to involve identifying biometrics. However, this is a notable opinion rather than law. Oddly, the GDPR makes no reference whatsoever to emotions. Similarly, the proposal for an ePrivacy Regulation, intended to replace the ePrivacy Directive, rarely mentions emotions (European Commission, 2017). Only recitals 2 and 20 mention emotions although, importantly, recital 2 defines them as highly sensitive. Yet, while introduced in the recitals, emotions do not appear in the articles of the proposed regulation.
To an extent this is understandable because ‘emotion’ is an imprecise word (including social media sentiment analysis and Big Data inferencing, such as mood tracking of Spotify usage, as well as ‘soft’ and ‘hard’ biometrics). However, given the increasing role of emotion in data analytics and facilitating human–machine interaction, the absence is still surprising.

Although GDPR makes no reference to emotions, it does address identifying (or hard) biometric data. Article 4(14) defines it thus: ‘“biometric data” means personal data resulting from specific technical processing relating to the physical, physiological or behavioural characteristics of a natural person, which allow or confirm the unique identification of that natural person, such as facial images or dactyloscopic data’ (European Commission, 2016: 34).

Importantly, the Article 29 Opinion states that:

Mention should also be made to the use of the so-called soft biometrics defined by the use of very common traits not suitable to clearly distinguish or identify an individual but that allow enhancing the performance of other identification systems. (Article 29 Data Protection Working Party, 2012: 16)

Moreover some systems can secretly collect information related to emotional states or body characteristics and reveal health information resulting in a nonproportional data processing as well as in the processing of sensitive data in the meaning of article 8 of the Directive 95/46/EC. (Article 29 Data Protection Working Party, 2012: 17)

More recently it is not only identity that can be determined from a face but physiological and psychological characteristics such as ethnic origin, emotion and wellbeing. The ability to extract this volume of data from an image and the fact that a photograph can be taken from some distance without the knowledge of the data subject demonstrates the level of data protection issues which can arise from such technologies. (Article 29 Data Protection Working Party, 2012: 21)

In addition to the need for group privacy protections, there is another cause for concern about emotion tracking. That is, despite being about the body, modern regulation of soft biometrics speaks of data rather than privacy. Whereas the original Data Protection Directive (that GDPR replaces) frequently mentions privacy, in GDPR this is radically downplayed in favour of ‘data protection’. Lynskey (2015) suggests comparing Article 8 of the European Convention on Human Rights (ECHR) to the protection offered by data protection law. She observes that data protection law has little to say about an intrusive strip-search, but this would be accounted for by Article 8 of ECHR that demands respect for private and family life. This presents a different picture from that found in data protection legislation that focuses on identification as the primary route to harm. What is clear is that an account of emotional AI based solely on data tracking and identification is a limited one. This paper reasons that as emotion tracking emerges, a broader dignity-based approach to questions of commercial power and data privacy is required. Given that a quintessential attribute of dignity and humanity is physical, mental and experiential self-determination, how do influential stakeholders conceive of privacy in intimate contexts?

Methods

So far, this paper has identified a lacuna in critical literature on emotional AI and privacy, and in European law regarding soft biometrics and non-identifying usage of data about emotions. To explore what stakeholders think about ethics and emotional AI, this paper draws on insights derived from interviews with relevant stakeholders, a workshop with stakeholders to design ethical codes for using data about emotions and a UK survey to gauge citizen feelings about emotion capture technologies.

Interviews

The first source of insight comprises interviews with stakeholders directly interested in emotional AI. The objective here was to obtain understanding about the composition and scope of the emotional AI industry, what it does, why it does it, where it is heading and what stakeholders see as the main ethical issues. Across 2015–2017, 108 open-ended 1-hour interviews were conducted to elicit views from industry, policymakers concerned with data and national security, municipal authorities and privacy-oriented NGOs.

Of these 108 interviews, 33 entailed discussion of non-identifying emotional AI, privacy and ethics. Many interviewees prefer not to be named in person or by company, but the categories are as follows:

This paper focuses on this sample, although it is indirectly informed by contextual norms, values and attitudes encountered in the wider interviewing process. The sampling strategy was based on two factors: knowledge saturation and diversity (Bertaux, 1981). Variety was important because the study sought a broad understanding of interest in emotional AI at this early stage of its application. These included geographically varied organisations from the US, UK, France, Belgium, Estonia, Israel, Russia, United Arab Emirates and South Korea. The size of companies ranged from global (e.g. Alphabet (Verily), Philips, IBM and Facebook) to start-ups (mostly from London and San Francisco).

Interviewees comprised chief executive officers and individuals in strategic positions from the following sectors: advertising and marketing; policing and national security; education; insurance; angel investment; in-car experience and navigation; human resources and workplace management; sports; sex toys and psychosexual therapy; mental health; ethical hacking; art; and media, interactive film and games companies. Each sector was selected on the basis of its current work in emotion detection, or likelihood of interest in these applications. In addition to industrialists and public sector actors, people working in privacy-friendly NGOs (Electronic Frontier Foundation, Open Rights Group and Privacy International) were interviewed to obtain a critical, policy-oriented perspective. Legal dimensions of emotion capture were explored in interviews with media and technology law firms, and European policymakers in the field of data protection. A multi-tiered consent form allowed interviewees to select a level of disclosure they were comfortable with.

Questions revolved around

Workshop

The second source of empirical understandings is a creative workshop organised by this paper’s author at Digital Catapult, London (16/09/2016).

It was conducted on the basis that privacy insights about emotional AI could be disclosed through peer-based discussion and activities. Following Veales’s (2005) approach to creative workshops, 21 participants interested in data about emotions were asked to design ethical guidelines. This took the form of a list of do’s and don’ts. Titled

Survey

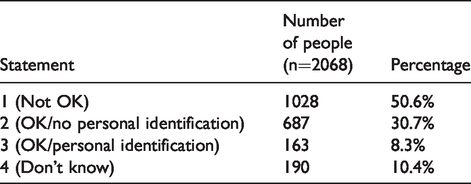

This third tranche of data comprises a demographically representative UK nationwide survey (n = 2068) conducted in November 2015. The UK was chosen because emotional AI is developing there apace. Closed-ended questions were used to gauge lay attitudes to potential uses of emotion detection employed in technologies and contexts that citizens are familiar with. These embraced its employment in social media for market research, reactive billboards in advertising, online games, interactive movies and voice-based search (see Table 1 for the specific questions asked). Utilising a multiple-choice format, response options were scaled to reflect industry practices and the research interest in anonymisation. Options were as follows: overall rejection of emotion capture practices (‘not OK’); acceptance of anonymised emotion capture practices (‘OK anonymised’); acceptance of identifying emotion capture (‘OK identifiable’) and a final category for those who do not have a view or do not understand the question (see Table 2 for response options in full).

Table 1. Closed-ended questions in UK public survey of emotion detection.

Table 2. Response options in UK survey on public views of emotion detection.

The survey was executed online via ICM Unlimited, a commercial survey organisation. Online surveys have methodological caveats including difficulties of presenting complex topics and minimal control over respondents’ condition (attentive or distracted). However, this approach generated a respectable weighted sample of geographical regions, age groups, social classes and gender, while avoiding social desirability bias. This is especially pertinent in privacy-related research that is recognised as having scope for bias (Zureik and Stalker, 2010). On complexity, participants were not provided further information than that contained in the question. This was deemed acceptable because the research was interested in lay responses to plainly stated propositions about emergent technologies.
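As a minimal sketch of how weighted shares for the four response options might be tallied, consider the following. It is not the survey organisation's actual procedure: the `weighted_shares` function is hypothetical, ‘don’t know’ is shorthand introduced here for the final category, and the respondent weights in the usage example are invented and do not reproduce any survey result.

```python
from collections import defaultdict

# Response options as named in the text; "don't know" is shorthand introduced
# here for the final category (no view, or question not understood).
OPTIONS = ["not OK", "OK anonymised", "OK identifiable", "don't know"]


def weighted_shares(responses):
    """Return the weighted percentage of respondents choosing each option.

    `responses` is an iterable of (option, weight) pairs, where the weight is
    a respondent's demographic weighting factor.
    """
    totals = defaultdict(float)
    for option, weight in responses:
        if option not in OPTIONS:
            raise ValueError(f"unknown response option: {option!r}")
        totals[option] += weight
    grand_total = sum(totals.values())
    if grand_total == 0:
        raise ValueError("no weighted responses to tally")
    return {opt: 100.0 * totals[opt] / grand_total for opt in OPTIONS}


# Invented toy respondents for one question (not real survey data).
toy_responses = [
    ("not OK", 1.5),
    ("not OK", 0.5),
    ("OK anonymised", 1.0),
    ("OK identifiable", 0.5),
    ("don't know", 0.5),
]
print(weighted_shares(toy_responses))
```

Running the same tally separately for each question and each demographic segment gives the kind of breakdown by media form and age group reported in the findings.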

Key findings from interviews and workshop: A weak consensus on privacy

There were notable differences of opinion on specific aspects and motives among stakeholders with a professional interest in emotional AI. Some of the themes established were to be expected, such as speed of technological

All interviewees with commercial interests in profiling said that it is inevitable that machines will be employed to try to gauge feelings, emotions and intentions. They were confident that emotional AI would increase in scope and prevalence in 5–10 years. Gabi Zijderveld from Affectiva, a sector-leading company that uses facial coding to understand emotion, represents the overall view of interviewees from emotion-based companies. She says: We believe that this tech will be ubiquitous in the future, maybe five years; we’ll see a lot of the tech that we interact with on a daily basis using emotion. We see a lot of tech with human-tech interaction playing out in a digital context. With smart AI systems we need to understand how human emotions factor into this. We believe this is largely missing today and this is a negative thing. (Interview 2016)

Each interviewee recognized privacy as a concern yet had different perspectives on why it is an issue. Coming from a health background (although Emotiv’s headwear is widely used in market and user experience research), Kim Du states: It’s your brain data. Right now there’s no regulations, starting with security about how wearable companies are collecting the data they’re collecting about their users. It’s an ethical issue given no policy guidance. (Interview 2016)

A handful of commercially oriented interviewees see opportunity in privacy-by-design techniques. One smartphone app developer interested in emotions and video-calls stated a preference for ‘edge computing’ because ‘Anything that is processed by the cloud entails resale of emotions and an after-market of emotions: this presents dangers’ (Anonymised Interview 2016). However, importantly, the interest in privacy ethics is not just ethical, but also entails commercial

An interviewee from a global technology firm exemplifies the overall view of emotion capture businesses stating, ‘the reason why emotion detection has not scaled as quickly as expected has less to do with the technology itself, but reticence of clients and their desire to avoid a PR backlash’ (Anonymised Interview 2016). Gawain Morrison of Sensum echoes this, saying emotions are ‘that final personal frontier’ and ‘Super-tech companies are paranoid about being seen to do the wrong thing, such as facial coding’. In reference to adding emotional AI to existing media, Morrison adds: ‘No-one will hurt shareholders or their bottom line with an unnecessary bolt-on. It’s a powerful tool and it will require a new company to take it into the setting before it’s accepted. The old guys are untrusting’ (Interview 2016).

The workshop held at Digital Catapult was primarily organised to create self-generated guidelines for technologists and businesses working with emotional AI. Attendees concluded with a range of overlapping suggestions. While focused issues were discussed regarding psychological suppositions (e.g. reliability of ‘basic emotions’), appropriate time length of data storage, racial and cultural difference regarding emoting, potential for citizen manipulation, the right to be forgotten, scope for pre-crime analytics, repurposing of collected data and user experience, the workshop delegates were asked to agree some basic rules. They said that ‘Do’s’ should include ‘put the person first’; ‘put them in control’; that ‘external and internal guidelines are necessary’ (i.e. regulations) and ‘autonomy and choice’. Unanimously, they also agreed ‘use of data about emotions should be proportionate to the goal’, and that ‘users’ benefit trumps commercial gain’. On ‘Don’ts’, they agreed that those in the business of emotion detection should ‘not be covert’ (regardless of whether identifying or not) and they should not use emotion as the ‘be and end all of profiling’. These are familiar liberal approaches to privacy, involving autonomy, control, capacity for management and transparency. Yet, given that participants were told that an overview of the findings would be published and disseminated by Digital Catapult, an important organization in the UK and European digital industry ecology, the uniformity and strength of recommendations is surprising.

Despite this overall emergent finding of a weak consensus on the need for privacy in emotional AI, there were

Perhaps surprisingly, the weak consensus on privacy (excluding advertising and retail) also includes NGOs working in data protection. While they raised concerns, they did not dismiss using emotions to interact with technologies if liberal privacy principles are respected. On fears, Gus Hosein of Privacy International (views not representative of organisation) observed that emotional AI makes us prone to subtle manipulation. Hosein cites state surveillance of populations and commercial interest in understanding purchase intention. This is realised in China, which uses emotional AI in public spaces such as airports, railway stations and other parts of smart city infrastructure (Wong and Liu, 2019). Similarly, Dubai (or ‘Smart Dubai’) analyses sentiment and retail spending trends and employs in-house psycho-physiological measures (using Emotiv headwear) to understand happiness in the region (Anonymised Interview 2016). On emotional AI and soft biometrics, Hosein says this ‘freaks me out’ and ‘we don’t have the legal frameworks’ (Interview 2015). On being asked about emotional AI that does not make use of personal data, he reasons ‘it is still taking something from me… it is still interacting with me… it is interfacing without my say so’.

Yet, Hosein also highlighted enabling aspects of new technologies. Key factors for him are ‘control’, and a ‘say on outcomes’. These principles align with industry views described earlier, indicating that the views of industry and pro-privacy groups are

A more antagonistic response was expected. Instead, Killock insisted that liberal principles of control, awareness, meaningful consent and better regulation by centralized institutions are required. An interview with Jeremy Gillula of the US Electronic Frontier Foundation (views not representative of organisation) found Gillula arguing that a person should be aware of what is being collected, adding: ‘If you’re aware that machines are tracking your emotions to make interactions better, that’s OK’. As an example, he says, ‘If my smartphone understands that I’m angry and can provide a response that calms me down, that’s OK’ (Interview 2016). Echoing the smartphone app developer, he said that what matters is that information is secure and that the device owner has control over whether data is shared to the cloud. His criticisms were less about commercial uses of emotional AI than about governmental and policing awareness of moods (such as anger), accountability to devices that purport to read emotions, algorithmic biases in interpreting emotions, and how information about emotions might be used in courts, such as in a divorce subpoena.

On regulation, Gillula diverged from UK NGOs. Reflecting EFF’s libertarian roots, he is wary of increased government regulation because technology evolves quicker than law. Yet, he adds that companies should be open to independent auditing of security and that they should have a legal duty to tell users how their data is processed. Omer Tene (Vice President of Research and Education of International Association of Privacy Professionals (IAPP)) made a similar argument. Stating that technological progress is inevitable, he suggests that at best privacy advocates can try to adjust social norms and policies to limit or moderate it (Interview 2016). Speaking of his own views rather than IAPP’s, he says that it is difficult to see government curtailing development of emotional AI. Recognising potential privacy issues, he believes emotional AI will grow and be widely used. Tene says that new types of data-intensive technology always trigger privacy hotspots, adding, ‘One juncture you’re bound to bump into is children’. This point was made in reference to emergent tracking of emotion in schools. Tene says that, ‘I think there’ll be angst from parents on the impacts on opportunities of their kids down the road’.

To summarise, the interviews and workshop found that the emotional AI sector is likely to grow rapidly within the next five or so years. A hard-core element (advertising) sees little wrong with collecting emotional data in bulk because it reasons that greater consumer understanding will lead to better service from marketers. However, the majority consensus evident across commercial, regulatory and NGO sectors is that more ethical industrial practices (such as opt-in consent) will win public trust; and that there is a creepy factor to be overcome. What, however, do citizens actually think of these emerging practices? Are they party to the emerging weak consensus?

UK survey findings

Reported feelings from UK citizens showed little variance across different media and technological forms (sentiment analysis, out-of-home advertising, gaming, interactive movies, voice and mobile phones). Similarly, gender, social class and region did not produce noticeable differences. As detailed in Table 3, the overall mean averages for

Table 3. Overall UK citizen feelings in 2015 about emotion detection.

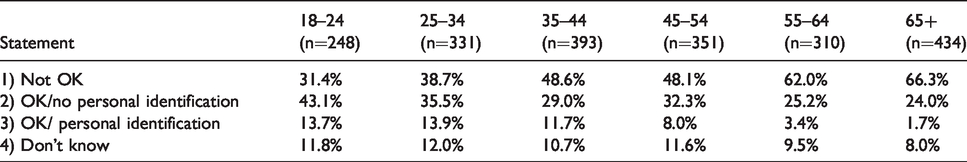

However, as reported in Table 4, age was

Table 4. Using age to segment UK citizen feelings about emotion detection.

This paper speculates that younger people are more open to novel forms of engagement with technology but remain wary of identifying processes. On this basis, there is a case to be made that younger people might be party to a weak consensus on the basis of control-based accounts of privacy. Nevertheless, it should be kept in mind that the 18–24s are a small percentage of an overall suspicious citizenry. On attitudinal differences between age groups, the survey methodology shows its weaknesses. However, speculatively, the generation most open to emotion detection was born between 1991 and 1997, when the web emerged as a mass medium. Further, according to UK findings by media regulator Ofcom (2019), this generation displays the highest levels of internet usage per week; is highly likely to use lots of websites/apps; is most likely to access the internet via smartphones and shows high overall levels of interactive media use. It is also most likely to be very confident about staying safe online, least likely to have read terms and conditions thoroughly, yet most likely to have changed social media settings of specific sites to be more private. Thus, although younger people are open to new experiences, this should not be mistaken for not caring about privacy.

Discussion: A critical window for the weak consensus

This paper has accounted for the growth of emotional AI that assesses bodies for indication of emotional states. Its first contribution is that it has pushed forward the privacy debate by diagnosing an over-emphasis on identification in data privacy regulation and omission of non-identifying soft biometric data about emotional life.

Its second is the finding that over half of UK citizens are ‘not OK’ with the principle of emotion detection (identifiable or otherwise) and that this has no current remedy in UK or European law. Especially when privacy is seen in terms of bodily integrity (Nussbaum, 1999), this takes us beyond questions of personal data and identification, to dignity and the right of people to self-determination over their own bodies. Privacy as dignity does not hinge on identification and, as a result, may be extended to groups, especially given interest in objectifying emotions without opt-in consent for commercial gain. On what should be done to promote dignity in relation to emotional AI and soft biometrics, if regulatory action is deemed necessary, there is scope to do this within the framework of GDPR, as Article 9(4) grants capacity for Member States to maintain or introduce further conditions or limitations with regard to the processing of genetic data, biometric data or health data. As a result, argued through the prism of emotional AI and [soft] biometric data, this paper echoes Wachter’s call for ‘new protections based on holistic notions of “data about people” and group conceptions of privacy’ (2020: 55).

Third and finally, there is the unusual consensus on privacy among industry and data protection NGOs, providing opportunity for regulatory change. Although older people (over-55s) were ‘not OK’ with emotional AI, and therefore not party to the weak consensus, younger people appear party to the weak consensus on the basis of a control-based account of privacy (that is, they are happy to interact with technologies but want a meaningful say over the process), also suggesting that antagonism will reduce in future decades. Interviewees working with voice, facial coding and body measurement data about emotions all said they ‘feel strongly’ about

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the UK’s Arts and Humanities Research Council [grant number AH/M006654/1].