Abstract

Corporate carbon footprint data has become ubiquitous. This data is also highly promissory. But as this paper argues, such data fails both consumers and citizens. The governance of climate change seemingly requires a strong foundation of data on emission sources. Economists approach climate change as a market failure, where the optimisation of the atmosphere is to be evidence based and data driven. Citizens or consumers, state or private agents of control, all require deep access to information to judge emission realities. Whether we are interested in state-led or in neoliberal ‘solutions’ for either democratic participatory decision-making or for preventing market failure, companies’ emissions need to be known. This paper draws on 20 months of ethnographic fieldwork in a Fortune 50 company’s environmental accounting unit to show how carbon reporting interferes with information symmetry requirements, which further troubles possibilities for contesting data. A material-semiotic analysis of the data practices and infrastructures employed in the context of corporate emissions disclosure details the situated political economies of data labour along the data processing chain. The explicit consideration of how information asymmetries are socially and computationally shaped, how contexts are shifted and how data is systematically straightened out informs a reflexive engagement with Big Data. The paper argues that attempts to automatise environmental accounting’s veracity management by means of computing metadata or to ensure that data quality meets requirements through third-party control are not satisfactory. The crossover of Big Data with corporate environmental governance does not promise to trouble the political economy that hitherto sustained unsustainability.

Promissory discourses of Big Data might well benefit from sociotechnical studies of data practices (Ruppert et al., 2015). I set out from an investment of hope into Big Data analytics: an analytics that would (re)use corporate environmental data to improve environmental governance. Ethnographically grounded, I analyse a Fortune 50 company’s environmental accounting practices that generate the data that is envisioned to be (re)used in this promissory analytics. This scope contributes to the sociotechnical analysis of the zone in which measuring corporate conduct is supposedly controlled, standardised, metrologised, audited (Barry, 2006; Bowker and Star, 2000; Lagoze, 2014; Mol, 2006; Power, 1999). My interest in environmental data practices builds on the analysis of enactment of environments and the performativity of accounting (Lippert, 2015; Lohmann, 2009; MacKenzie, 2009). I approach corporate environmental conduct as enacted through environmental information practices. Assessing the promise of governing environmental conduct through the devices of markets and democracy requires studying how the informational demands of these devices map onto the affordances of corporate environmental data. I empirically detail corporate data practices, whilst treating the informational demands theoretically. This analysis provides ground for arguing that integrating Big Data into environmental governance cannot be expected to unsettle the unsustainable powers of political and economic arrangements.

At the outset of this argument, consider a promissory space, extending the corporate and the policy field. Whitington (2016: 57) tells us: A ‘Silicon Valley venture capitalist[,…] former senior vice president of governance and risk at SAP […plans a] “big data” software platform for tracking, analyzing, and acting on carbon metrics for very large firms’. Writing for the United Nations, Peres (2014: 5) suggests environmental ‘big data analytics […are] now indispensable’, improving the environmental management of organisations and production chains (2014: 11), whilst speculating that Big Data analytics of the environment may provide ‘a basis for designing, implementing and evaluating public policies’ (2014: 5). I interpret Whitington’s venture capitalist and Peres’ United Nations text as illustrative of a broader ambition that Big Data might fill the gaps in environmental decision-making. Big Data analytics promises to produce the evidence needed for evidence-based decision-making, in firms and the market as well as in democratic deliberations. Following basic theory of economics and democracy (Akerlof, 1970; Habermas, 2006), environmental governance through markets or through democracy succeeds if the different actors have equal and symmetrically distributed access to the relevant information about the world they decide upon (cf. Bulkeley and Mol, 2003) 1 ; and – illustrated by the United Nations’ Agenda 21 – markets and democracy are the two dominant normative orientations in environmental governance. The equal distribution of good and relevant information is seemingly central to legitimising market and democracy.

The political importance of information, and of its actual use and dynamics, is paralleled by emerging research agendas addressing the intersection of (environmental) governance and Big Data. Hazen et al. (2016) formulate a research agenda towards the use of Big Data for sustainability in supply chain management. As suitable analytical frameworks, they point to actor-network theory (ANT) and ecological modernisation theory (EMT). Working towards an international political sociology of data practices in Big Data, Madsen et al. (2016) suggest exploring how data sources are produced and shaped, and what is in/excluded in making data ready for processing. Along these lines, I explore the shaping of environmental data in specific settings of enacting environmental data involving devices like spreadsheets and PowerPoint slides, which are meant to render carbon conduct transparent (cf. Grossman et al., 2006). These practices and devices constitute – in ANT jargon – material-semiotic settings of datafying carbon (MacKenzie, 2009), or – in the terminology of EMT – informational governance of environmental reform (Mol, 2006). Following Ruppert et al. (2015), I treat Big Data not in essentialist terms, but as an effect of data practices. I decline to differentiate data from information in essentialist terms; instead, I consider the categorisation of entities like qualifiers and quantifiers as information or data a situational achievement.

A caveat: Ignoring ANT and EMT, the response to my concern could be deductively derived. With Blühdorn (2013) and Pellizzoni (2011), I could construe a critical stance to environmental politics and ‘find’ that concern for the environment under neoliberalism is a mere simulation, in which the uncertainties and fluidities of/in nature and technoscience are instrumental in sustaining unsustainability. Addressing carbon governance, we could problematise tremendous failures in the design and the implementation of emission reduction production and trading (Lohmann, 2009). Following Chilvers and Kearnes (2016), when taking realist stances on participative politics, no political device can be considered as truly achieving democracy. Thus, hopes for evidence-based environmental governance through markets or deliberative democracy appear misguided. However, these authors themselves do call for empirically opening up the practices through which democracy is enlivened, markets enacted, accounts of the environment made. This would resonate with Callon’s (2009: 541) and Callon et al.’s (2009) proposal to study how affected actors, and their voices, are (not) welcomed into the hybrid forums that shape carbon markets.

However misguided some optimistic investments in Big Data and evidence-based decision-making in markets and deliberative democracy, the social and technical practices in which environmental data is sourced and processed so that these promissory narratives appear ‘grounded’ are not mythical; they are effective, and take part in shaping our world. I turn to the sourcing, shaping and processing of a body of environmental data in a multinational corporation. This body of data was drawn from subsidiaries across five continents, summed up as the company’s global carbon footprint. The making and shaping of this carbon footprint served to represent the environmental impact of administering over one trillion USD, as the company was operating in the financial services sector. I call this transnational corporation GFQ (Lippert, 2013b). Whilst the environmental data practices had not – during my ethnographic fieldwork (2008–10; Lippert, 2013b; 2014) – been designated as ‘Big Data’, the data that was produced is subject to reuse considerations now, to be correlated with the mass of corporate sustainability reports, feeding into ‘new’ hopes for Big Data as voiced by Whitington’s venture capitalist and Peres.

Environmental data was meant to inform GFQ’s corporate sustainability strategy and to shape accountability practices. I analyse internal accountability practices – a subsidiary’s sourcing of data, processing of data between subsidiary and headquarters, the headquarters' reviewing of data – and their relation to external accountability agencies – the control of the company’s data practices through audit guidelines and the release of data to a ranking. In economic and democratic theory sharing environmental accounts symmetrically with NGOs and ranking/index organisations is significant for civic self-rule and for ensuring transparency in markets. Hopes of ecologically modernising organisations, economy and society – an epistemologically realist discourse – presume reliable and transparent information about environmental reality: missing, misleading or wrong information effects the externalisation of environmental impact. When the company does not know where, when and how it emits, it can neither perform ‘adequate’ evidence-based decision-making in-house nor allow their political and economic transaction partners to take corporate emissions fully into account.

My concern is not whether or not GFQ manages to fully account for their carbon emissions. Rather, I want to analytically attend to how they stabilise their data as infrastructure, and how their data practices are situationally configured. This approach allows me to identify particular (un)sustainabilities that are prefigured in this corporate carbon reporting. Central to this is asking how accounting for environmental realities interferes with the demand for symmetrical information.

I engage a range of logics and practices that stabilise imaginaries of control over data. Data sourcing and processing are supposed to be standardised, leading to certainty about the relationship between data and reality. With Bowker and Star (2000), I address how standards’ categories relate to practical realities. As Big Data conversations recognise the limits of standardised data divorced from context (cf. boyd and Crawford, 2012), metadata is evoked as a means to record context (cf. Vis, 2013). Boellstorff (2013) reminds us that metadata, as a category, will always exclude some realities from being taken into account. The first section, ‘Sourcing data: Asymmetries and uncertainties as situational context’, tests these two logics/practices empirically, shows that data is always tied to its creation and specifies resulting uncertainties. A key intervention of Big Data in conventional epistemologies of good data practice is that these uncertainties are not considered problematic: errors will be averaged out – given a statistics in which naturally distributed messiness does not affect the patterns ‘detected’ in Big Data correlations. The second section, ‘Straightening out data’, troubles the promise of averaging errors out. I retrace how GFQ straightened data out to produce comfort – comfortable environments that do not disturb. As part of neoliberal regimes of self-regulation, data practices are to be rendered transparent for external stakeholders through audit. According to Strathern (2000), audit cannot achieve complete transparency and always relies on trust. I detail relations of trust and scrutiny between third parties and GFQ’s compliance with standards. Using Power (1999), the third section, ‘External control’, explores how GFQ and external control agents manage to decouple data/standards from environmental ‘reality’.

Ethnographically tracing the doing of carbon data in relation to these logics of control brings this article into a conversation with Lagoze’s (2014) take on ‘control zones’. He draws on Atkinson’s (1996) programmatic discussion of how research libraries could establish their value by carefully controlling the research library’s information dynamics. With Lagoze, the notion ‘control zone’ shifts, comes close to an analytics that foregrounds the spaces in which data is materially and semiotically practiced and he proposes to evaluate data practices in relation to the purported use of data (as fitness for use). With ‘control zones’, I focus on the data practices involved in GFQ’s projects of measurement and transparency for ecologically modernising their operations (cf. Mol, 2006). Lagoze’s analytics resonates with Barry’s (2006) discussion of metrological zones, which I read as infrastructures that make information comparable across time and space. With Barry and Lagoze, we can bridge ANT and EMT, inquiring into the situated political economy of controlling data practices. I investigate the fitness of corporate environmental accounting data for Big Data analytics that aspire to ground environmental governance – an environmental governance that is fit for deliberative democracy and fit for optimal allocations of environments through markets.

I show: Within GFQ’s private metrological zone uncertainties systematically increase and multiply. Pressing messy realities into methods that rely on order does not increase certainty, control and order, but proliferates mess. However, the messy quality of GFQ’s carbon reality is not communicated to stakeholders, but staged through performances of audit as non-messy. The company stages their data as under control – as much as Atkinson desires for the libraries. In effect, the strategy of staging order where mess is significantly characterising environmental reality proliferates information asymmetries, rendering consensual understanding of the environmental impacts across corporate and stakeholder actors impossible. Failing to achieve increasing information symmetry points to the inadequacy of trust in theoretical models of markets and corporate citizenship as part of deliberative democracy and indicates particular unsustainabilities: such as not giving voice to situated environmental knowledge, and systematic non-engagement with emission sources, leading, e.g. to the 300-fold under-reporting of emissions.

The thesis here is that the enactment of data is not only effecting troubling environmental realities, but is also failing the promises of democratic and of market-driven environmental governance. I show how GFQ’s environmental data is largely a project of attempting to ‘settle’ rather than ‘unsettle’ accounts. GFQ’s enactment of environmental data prefigures ways of governing environments that do not disturb the company’s business whilst rendering impossible a careful relation to the environmental realities supposedly represented by the data.

In the following, I ethnographically describe environmental data practices. In each of the sections that follow, I initially guide the reader through the field and subsequently consider analytical implications. The first section analyses how the relationships between carbon data and emissions as molecules are obscured in ‘standardised’ accounting. Second, I establish that the company’s environmental data is enacted in a directed manner, not deterministically but pushed for strategic interests. Third, I show that inter-organisational data configurations can easily lead to stabilising, rather than to reflexively opening up, accounts of emission realities.

Sourcing data: Asymmetries and uncertainties as situational context

In this section, I take on the understanding that data is systematically related to data sources, representing a reality ‘out there’, and that standardised data collection achieves certainty.

So, let us visit a ‘source’ of data to analyse how the relationships between carbon data and emissions as molecules are obscured in accounting. GFQ headquarters’ agents called the process of making data available for their central database ‘data collection’. I use the concept ‘source’ to study the relation between data and what the data represents, and I focus on data practices of ‘sourcing’, which allow GFQ to maintain the imaginary of data as collectable from sources. Interested in the work of entering data into the database, I followed a headquarters request for data to the request’s addressee, a subsidiary in Western Asia. There I was introduced to an engineer, Nick. He had been tasked with collecting the requested data on water consumption. I use the case of Nick’s work on water consumption data to illustrate the practical and systemic uncertainties and asymmetries involved in and proliferating at the core of corporate environmental accounting.

We reviewed the data he had collected and looked into sourcing other requested data. Nick approached filing water consumption by diligently gathering data from different sources of water consumption, including a well, tap water and drinking water. This data ‘gathering’ involved calling and emailing people to get the subsidiary’s financial accountants to provide him with consumption data. 2 Tap water consumption data was available in invoices, shelved in Nick’s office. He could simply get up, fetch and look up the invoice data. Yet another water data type required Nick to leave the building: We went to the car park, and in a corner of the car park he showed me the well they used for watering the garden. Accounting for the water withdrawn from the ground with the well was easily doable. A problem emerged around other, seemingly innocent, objects stored on the car park: bottled water in plastic containers, each providing 20 litres of drinking water.

A day later, drinking water cropped up as an issue. Nick prepared entering water consumption data. We noted that GFQ’s environmental database differentiated water with three accounts: (a) ‘natural water’, which we considered to use for the water harvested at the well; (b) ‘drinking water’, which we considered to use for the water bottled in the containers and (c) ‘rainwater’. This resulted in some wonder: how should we account for tap water consumption? To clarify this, I emailed Elise, the headquarters-based assistant of GFQ’s environmental management system, informing her about our accounting intentions. I asked her: ‘What is tap water—which account are we supposed to use?’. Elise replied swiftly ‘Drinking water in cans is not included into the calculation, merely the water got from taps (drinking water)’. Her response deviated from Nick’s and my accounting intention. I let Nick know that the headquarters was not interested in the drinking water he had collected but instead wanted tap water to be accounted for as drinking water. 3 He was interested in my take on this and I suggested that he might opt for using the bottled water data only for the internal accounting of his subsidiary. I left it to him to define subsequent steps. Next, Nick called the canteen and the cafeteria, asking them about how much drinking water they used. When he entered the data for the account ‘drinking water’ in the central database, he added the canteen and cafeteria’s 171 m3 of drinking water use and commented in his data entry that canteen and cafeteria used bottled water. Consequently, the sum of tap and bottled water consumption constituted his subsidiary’s drinking water account.

Analysing this episode, I identify, first, an informational asymmetry that intersects with the theme of standardisation and, second, uncertainties about the emissions that resulted from the environmental data.

Asymmetric information about drinking water

Standardisation of accounting is meant to ensure users can judge the data, minimising uncertainty. In the top-down logic of standardisation, the standard of environmental accounting provides users with equivalent understandings of the data, resulting in information symmetry. So, was any such standard involved?

The international environmental accounting standard ‘VfU’ (originally developed in Germany; VfU, 2005) actually related to Nick’s work situation. It was translated into the situation by four mediators:

(a) a hard copy of the headquarters’ guideline on environmental accounting, which Nick had positioned on his desk; (b) the database interface, specifying which data it desired; (c) Elise’s email; and (d) my verbal comments.

In the top-down logic of standardisation, the hard copy and the database should have sufficed to determine the accounting for water consumption. Standardisation did not fully succeed. Instead of judging Nick, I attend to the informational configuration of this situation.

Nick understood that in his region, Western Asia, drinking water was usually acquired in bottles. This deviated from VfU’s understanding. VfU’s core included a group of Germans who considered tap water the default drinkable water. I identify an information(al) asymmetry, that is, competing understandings of drinkable water: tap water versus bottled water.

In the face of this asymmetry, Nick constructively assembled a drinking water fact by joining the two sides of the asymmetry: he summed up tap water and bottled water consumption; and he classified the sum as drinking water. The fact that Nick enacted was a practical compromise, simultaneously following VfU by including tap water whilst dissenting from the prescriptions by not excluding the bottled water.

I consider Nick’s work a careful engagement with the various informational materials within the asymmetrically structured situation. Carefully, Nick relationally played out his agency to involve all these informational materials in his account. Analytically, then, I differentiate (a) carefully relating to the various materials and agencies involved in situationally acting in informational asymmetry from (b) the imaginary of universally implementing a standard. Yet, a dilemma remains: given the meaningful existence of alternatives, neither option can ensure certainty. Would uncertainties disappear when we do not focus on the ‘social’, but on the ‘technical’?

Uncertain emissions

I present two sets of uncertainties. One set relates to the database’s factor for converting the amount of drinking water into carbon emissions. The other set revolves around the plastic of bottled water.

The conversion factor involves three uncertainties (online Appendix 1):

This list sketches several uncertain stories folded into the conversion factor’s construction; such stories appear hidden from data users.

Nick’s inclusion of bottled water tied in another set of uncertainties. In terms of GFQ’s guidelines, the plastics of water containers polluted the category of drinking water. If Nick had followed Elise’s prescription by excluding the bottled water from the calculation, neither the water nor the containers would have been accounted for. By including the 171 m3 of bottled water, GFQ emitted 64 kg CO2 more than if Nick had excluded the 171 m3. Yet, from an accounting perspective, the containers were badly accounted for in VfU’s drinking water category, since bottled water comes with higher carbon footprints than tap water provision – Botto (2009) suggests 300 times. With Nick’s account, GFQ ‘saved’ ≈20 tonnes of emissions compared to a scenario in which Nick had used (and been provided with) a bottled water account (online Appendix 2).
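The arithmetic behind these figures can be roughly reconstructed. The following minimal sketch uses only the numbers given above (171 m3, 64 kg CO2 and Botto’s factor of 300). The per-cubic-metre factor is merely implied by these numbers, the variable names are illustrative, and the calculation in online Appendix 2 may well differ.

```python
# Figures reported in the text
bottled_water_m3 = 171        # bottled water entered by Nick
reported_emissions_kg = 64    # emissions the database attributed to that water
bottled_vs_tap_ratio = 300    # Botto (2009), as cited in the text

# Implied by the two reported figures; NOT stated in the source
implied_factor_kg_per_m3 = reported_emissions_kg / bottled_water_m3

# Assumption: a bottled water account would apply 300 times the tap water footprint
bottled_estimate_kg = reported_emissions_kg * bottled_vs_tap_ratio
unaccounted_kg = bottled_estimate_kg - reported_emissions_kg

print(f"implied factor: {implied_factor_kg_per_m3:.3f} kg CO2 per m3")
print(f"bottled water estimate: {bottled_estimate_kg / 1000:.1f} t CO2")
print(f"unaccounted: {unaccounted_kg / 1000:.1f} t CO2")
```

On these assumptions the gap comes to roughly 19 tonnes, which is of the same order as the ≈20 tonnes cited.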

Recognising these sets of uncertainties frames the environmental data enacted as precarious. Both the classification system and the construction of classes are interwoven with uncertainties and contingencies. For environmental data to neatly fit the accounting project, rich stories of contingencies, uncertainties and problems are ignored.

Discussion

I investigated the ground of the imaginary of data as collectable from sources. My approach locates the source in practice: Nick was sourcing data. Sourcing included enacting a range of material and semiotic references and coordinating these to evoke amounts of consumed water. This resonates with Latour’s (1999: 66–76) notion of ‘source’, pointing to hybrid traces. I analysed Nick’s enactment as a careful engagement with the situational context of competing understandings of drinking water. The standard could not foresee all the contingencies of the situatedness of data, yet the facts produced were stripped of these contingencies, effectively naturalising the fact (Bowker and Star, 2000: 299). Here is an information asymmetry between data worker and standard.

The data work of converting environmental data into emissions involved a range of contingencies and uncertainties. Using the Swiss rate of water treatment universalises the emission structure of the Swiss conversion factor; a factor’s author, Gabor, siding with ‘the environment’, relates to an abstract universal environment. How these universals relate to non-Swiss realities remains unclear. Gabor, as an individually working, self-employed calculative master, clearly knows that he cannot attain some total objectivity. Carbon emission accounts – that come as ‘simple facts’ or ‘just numbers’ – are not ‘fit’ to represent the rich stories of the fact that relate the fact to differentiated scapes of time and geography. The project of internalising the environment into decision-making through datafying environments cannot accommodate thick stories/contexts about enacting these environments (cf. Lippert, 2013b, 2016; Lohmann, 2009; MacKenzie, 2009).

Informational asymmetry, the loss of context, would not appear problematic if Latour’s analysis holds. Whilst he recognised that in chains of translations, each translation produces a reality, different from the pre-translation reality (1987), he also theorises the reversibility of chains of translations (1999). On the latter, my analysis of data work deviates: practices of enacting data are mediated in a situational context and shaped by the uncertainties and contingencies of the data/base. Situational context, uncertainties and contingencies are not fully explicable but bound to the situation. Later data users, outside of the situation, cannot easily trace the relations that produced the data. This undermines the possibility for consensual understanding.

Lagoze (2014) uses ‘integrity’ to distance his analytics from the positivism of ‘correctness’. He qualifies integrity in terms of fitness for use, consensual understanding and trust. This links my analysis to Lagoze’s concept of ‘Big Data’, which he defines through the disruption of integrity. However, other Big Data voices stick to positivist framings – proposing a range of ‘solutions’ for retaining context, including metadata (cf. Vis, 2013) or automated sense-making (Lukoianova and Rubin, 2014). Such solutions offer a form of ‘veracity management’. Yet, recognising data as enacted in situational context troubles such positivist approaches. Neither algorithms nor large datasets can account for the environmental and organisational issues situated in enactment work. Furthermore, the uncertainties and contingencies involved in the situations in which data is ‘figured out’ are deleted precisely in the process of construing data points that fit in the data entry forms. Consequently, rich information about the quality of data is lost and possible accounting errors cannot be accounted for, rendering any kind of ‘veracity management’ less effective. Investing hope in data-driven solutions to managing veracity ignores that any metadata is also situated contextually, resulting in a ‘theoretically infinite regress’ (Boellstorff, 2013).

Thus, the informational structure between data worker, standard and algorithm needs to be considered asymmetrical. Environmental accounting provides decision-makers with data. However, whether that data actually represents what it claims to represent is obscured. Just because data is provided, we cannot assume that it approximates well what it supposedly represents. Yet, information asymmetry does not stop practice; it shapes it.

Considering that GFQ consumed several million cubic meters of drinking water yearly without accounting for the plastics of containers, we might have to calculate with several giga tonnes of unaccounted emissions (e.g., 4.5 gt CO2e for each million m3; online Appendix 2). Most users of the facts Nick produced, and into which Nick’s facts were aggregated, were not positioned to open up this calculative space. This suggests that not only is information asymmetrically distributed but so are the powers to calculate, to orchestrate this space (cf. Callon and Muniesa, 2005; Lippert, 2013a). These asymmetries indicate two forms of unsustainabilities: (a) emissions are externalised, (b) users are not configured to take part in contesting facts: not even Nick’s boss, let alone political and market publics. I consider these an environmental as well as an information infrastructural unsustainability.

Where such asymmetry leads to market failure in economics, informational-calculative asymmetries in environmental governance may effect policy failure, too. Action based on the data/base may make impossible ‘good governance’ of the entities the data supposedly represent.

Straightening out data

Recognising contingencies and uncertainties in environmental data practice and noting that such data practice may result in poor accounts of environmental-organisational complexity does not automatically challenge quantification proponents. Easily available, the frequentist position suggests statistics is well able to accommodate data error. 4 Their solution is straightforward: given a random distribution and directions of error, the more data you collect, the better you can differentiate the noise from the signal. The respective question is, then, whether carbon data do indeed average out the errors. Engagement with veracity management recognises a related complication (Lukoianova and Rubin, 2014; Vis, 2013): Deception may cause non-random errors.
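The frequentist expectation, and the complication introduced by non-random error, can be illustrated with a small simulation. This is a sketch under invented assumptions: the ‘true’ value, the prior-year value, the noise level and the `pull` parameter are all hypothetical and not drawn from GFQ’s data.

```python
import random
import statistics


def mean_report(n, true_value=100.0, prior_value=80.0, noise_sd=20.0,
                pull=0.0, seed=1):
    """Average n noisy reports of true_value.

    pull=0 leaves the random error untouched; pull>0 moves every report
    that fraction of the way towards prior_value (a directed, non-random
    error). All default values are hypothetical.
    """
    rng = random.Random(seed)
    reports = []
    for _ in range(n):
        noisy = true_value + rng.gauss(0.0, noise_sd)
        reports.append(noisy + pull * (prior_value - noisy))
    return statistics.mean(reports)


random_only = mean_report(10_000)         # errors scatter around the true value
directed = mean_report(10_000, pull=0.5)  # errors lean towards the prior-year value

print(f"random error only: {random_only:.1f}")
print(f"directed error:    {directed:.1f}")
```

With random error alone, the average settles close to the true value as more data is collected; with directed error, it settles elsewhere, however much data is added.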

When following the data from Nick into the headquarters, I find data was processed in a detailed manner, enacting GFQ’s global environmental impact as a reportable reality (see Nadim, 2016), informing GFQ’s decision-making, released in print and in various digital versions to shareholders and other publics. In the following, I analyse three moments of this data flow. The pattern I identify troubles both the frequentist understanding and the discourse of deception. I argue that data practices were aligned to straighten data out, minimising risks of data causing trouble for the company or its workers.

Guiding data entry

Consider the interface Nick was using to enter data. This interface was configured to also show the user the respective data for the same account of the prior reporting year. If new data entered was quantitatively deviating from prior reported data by more than 10% (a centrally set threshold), the user would be asked to provide a comment – stored as part of the dataset. The deviation between old and new data would be shown in percentage terms, and the user had the possibility to adjust data (until a yearly deadline).

This configuration of the user through the database shaped the practice of data entry: if an entry was not sufficiently similar to the data reported previously, users would be penalised by having to author a comment (for which they had to take responsibility), and having to go through further steps in order to store the dataset. This positioned users to learn that they could more easily get the job done if the data they reported did not prompt a request for comments and the further steps this involved.

Reviewing data entered

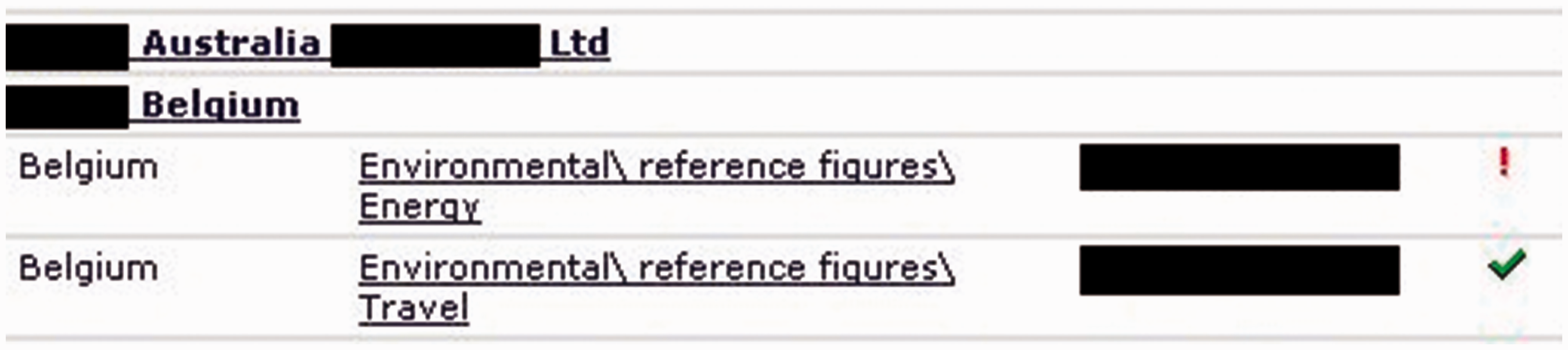

All the data submitted by the subsidiaries were reviewed by headquarters staff. This review was supported by an interface that signalled whether the reported data was within the threshold. If the deviation exceeded ±10% (or if for other reasons a comment was provided by the data entry agent), the reviewers (Elise was the first to check the data) would be automatically shown a red exclamation mark, otherwise (for sufficiently similar data) a green tick mark (Figure 1). After the data entry deadline, Elise checked for red exclamation marks and decided whether the respective subsidiary would need to explain their data more thoroughly. This resulted in months of loops of questions, corrections, adjustments and explanations between headquarters and subsidiary. Throughout these loops, Elise was looking for inconsistencies in the data and was seeking to identify data in need of cleaning.

Screenshot: HQ view on data submitted by subsidiaries (rendered anonymous, extract, source: reproduced with permission from publisher, Lippert, 2013b: 373).

I identify a sociotechnical configuration of data processing that engenders a close engagement with data that was not alike prior data or with data that was marked with comments. If good reasons for deviations existed, data could be accepted; if the reasons were not considered good enough, subsidiaries were asked to correct data or comments. New data could be more similar to data reported in the prior year. And similar data would not automatically attract attention.
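The review logic described above can be sketched in a few lines. The `Submission` record, the field names and the example values are hypothetical; the sketch only encodes the two conditions named in the text (a deviation beyond ±10%, or the presence of a comment).

```python
from dataclasses import dataclass


@dataclass
class Submission:
    subsidiary: str       # hypothetical identifier
    previous: float       # value reported for the prior year
    current: float        # value reported for the current year
    comment: str = ""     # comment authored at data entry, if any


def review_flag(s: Submission, threshold: float = 0.10) -> str:
    """Return 'red' for entries that deviate or carry a comment, else 'green'."""
    deviates = abs(s.current - s.previous) > threshold * abs(s.previous)
    return "red" if deviates or s.comment.strip() else "green"


submissions = [
    Submission("A", 1000.0, 1040.0),                         # similar, no comment
    Submission("B", 1000.0, 1300.0, "new production site"),  # deviating
    Submission("C", 1000.0, 990.0, "meter replaced"),        # similar, but commented
]

# Only flagged entries enter the loops of questions and corrections.
needs_attention = [s.subsidiary for s in submissions if review_flag(s) == "red"]
print(needs_attention)
```

The design point is that the reviewer's attention is allocated by dissimilarity: an entry like subsidiary A's is never queued for scrutiny, whatever its relation to the situation on the ground.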

Reporting data

I now shift to a moment of preparing carbon data for public disclosure of emissions. GFQ shared their carbon footprinting data with many organisations, investors, indices and rankings. Here I focus on one of the globally largest disclosure exercises that I call Corporate World Carbon Ranking (CWC Ranking). Frederik was responsible for GFQ’s carbon content, which our team entered into CWC Ranking’s questionnaire. In a 45-minute phone conversation, he and I discussed various edits of GFQ’s response (to the questionnaire) that I had proposed. He authorised some of my proposals, but remarked in general that copying answers from the previous year’s response was an apt approach for completing the current questionnaire because the previous answer had already been authorised by superiors and, thus, legitimised by GFQ.

Frederik, in this moment, offered a rationalisation of the practice of keeping data similar or alike. Earlier reported data was already proven to have worked within GFQ or, in this case, even outside of GFQ. Generating new data that was similar to old data was rational, at least in terms of risk, responsibility and accountability. By keeping data similar, he – as the data owner – was not risking an exposure of himself (or GFQ) to provoking new reactions. The implicit assumption was that how data was read would not constantly change. If data was maintained, then the readings of data would remain similar. New and different data might invite unwelcome questions on what or why something had changed. Indeed, CWC Ranking asked GFQ to state whether emissions ‘vary significantly compared to previous years’. Keeping data similar meant keeping out of trouble.

Discussion

This section troubles the presumption that data errors are averaged out. I presented how data enactment systematically incentivised keeping data alike across temporal frames, where data entered for prior temporal frames set standards for later data entry, involving adjustments of data in target configurations. ‘Cleaning’ data involved deleting wrong data and asking ‘data gatherers’ for ‘better’ data. The situated quality of data was assessed in relation to the potential to cause friction. ‘Different’ data disrupted the routine assessment of data; alike data fit well in. GFQ’s environmental cyberinfrastructure straightened data out, not causing friction within the corporate (data) dynamics. With Bowker (2014) this infrastructure appears as achieving an ‘eternal present’, in which data presents the decision-maker with an always fitting, comfortable environment.

In these configurations of data work, the notion of deception becomes analytically useless because it implies unbiased data. However, humans, databases, algorithms and their interaction cannot be configured to be ‘neutral’. The database needs to be programmed as much as the humans involved are to perform well for the company. Workers perform environmental accounting in a materially and semiotically situated and contextual way, involving justifying and explaining data, negotiating data with coworkers and superiors whilst ensuring that they can fit the data into the forms provided by computational interfaces.

Whereas in Barry’s (2006) metrological zones an ‘out there’ is to be measured, and metrology is about making ‘out there’ comparable across time/space (Latour, 1987, 1999), I identified a metrology that was infra-structured to comfort the data user by avoiding disruption of their expectations about the ‘out there’. This analysis resonates with problematisations of the production of comfort in accounting research (cf. Power, 1999). Aligning data practices to the production of comfort implies that how data fit to realities supposedly represented was progressively and systematically obscured. Informational symmetry between external users and the company is rendered even less attainable because of the systematic provision of data that are to comfort their users. Thus, GFQ’s environmental data was fit for comforting but not fit for meeting the informational demands of environmental governance. Enacting a neat eternal present – avoiding engagement with disruption – appears environmentally unsustainable.

External control

Whilst the first section has foregrounded limits to control within the control zone (recognising uncertainties and contingencies), in the second section I clarified that effective control does not necessarily imply a more veritable relationship to the reality envisioned by the positivist. Corporate governance discourse accommodates the possibility of governance ‘below standard’; to rescue promises of informational governance of environmental reform, ‘independent’ control, quality assurance, audit, indices and rankings to generate transparency are evoked (e.g., Mol, 2006). Corporate accounting is aligned with such ‘instruments’, shaping what Power (1999) calls ‘audit society’. The combination of corporate accounting with external control establishes a dispositif that distributes control and performs autonomy in the (corporate) subject; such dispositifs are key to enacting the ‘self-correcting’ actor envisaged in advanced liberal democracy (Grossman et al., 2006: 3). GFQ’s control zone would thus be enveloped within a second-order control zone. I turn to discuss external control of data processes and of the data themselves.

At the centre of governing environments through IT is the assumption that data engenders ‘consensual understandings’ of the environments represented. In contrast to this, I argue that the inter-organisational ‘control’ of data practices closes down options to open up and scrutinise accounts of emission realities. To ground my claim I turn, first, to the audit of environmental accounting, and second, to data perspectives maintained at CWC Ranking.

Guiding and assuring data practices

Audit relations are very much shaped by documentary processes and demands on documentation (Power, 1999). A guiding document that a Big Four auditor offered GFQ to prepare for audit exemplifies this: a PowerPoint slide that was used to put various hard criteria on environmental information management into perspective. The slide specifies: Assumptions, estimations, non-reporting of specific facts, non-consideration of themes, modification of earlier communicated data, deviation from data communicated elsewhere … [a] all this is acceptable, as long as you make it sufficiently transparent for the audience! [b] and a fundamental extent of data series’ continuity and the statements is maintained.

GFQ’s footprints were later underwritten as assured by a Big Four auditor. GFQ, thus, had hired an auditor to ‘independently’ confirm that the environmental information is trustworthy. The quality ‘trustworthy’, however, was not merely assigned to numbers but also to small print (specifying data processing contingencies rudimentarily). I wonder, however, whether third parties, relying on the assurance, scrutinise such metadata.

Conjuring up information symmetry

Like Grossman et al.’s (2006) positioning of the Climate Leadership Index, CWC Ranking figures centrally in imagining the global carbon economy as transparent and allowing for information symmetry. I show that we cannot assume that they actually scrutinise the corporate data they reuse. For that I turn to CWC Ranking; at their Main Office I met Rick, GFQ’s contact at CWC Ranking.

I wondered how Rick imagined the data they received from companies. He told me that they do not carry out any verification of whether the data submitted was correct. In short, he made clear: CWC Ranking accepts corporations’ data submissions. Rick suggested that the primary audience of data are the shareholders. And, he argued, because the companies are controlled by the shareholders, CWC Ranking does not expect companies to lie. Thus, the ranking organisation assumed reusing corporate data produces a correct meta-account.

Trust in the data producer was able to substitute more stringent verification procedures. CWC Ranking performed external trust, rather than control. The imaginary of information symmetry is strengthened by CWC Ranking providing the environmental governance discourse with data on climate changers. Governance informed by the ranking is as out of touch with data complexities as the ranking is with the rich contingencies, uncertainties and dynamics of environmental data. Governance is limited to hoping that environmental accounts are trustworthy.

Discussion

GFQ’s auditor attributes transparency to the impression that presentations of data make on users; they ‘only’ wanted GFQ to present environmental data that were assurable, rather than to publicly delve into the complexity of their environmental accounts. This finding parallels Power’s (1999) problematisation of organisational processes that are structured to produce an ‘auditable performance’. The standard itself is ‘watered down’ in the mutual construction of the assured reality. With Power I identify the decoupling between an (idealised) strict standard, auditors and auditee.

The case of CWC Ranking’s trust in data reduces even more radically the coupling between the possibility of control and realities under control. Their foundational trust implies that corporate data is unlikely to be called into account. Trust figures as an alternative to projects of achieving ‘full’ transparency (Strathern, 2000).

Both auditor and ranking agency are dependent on the company to provide data. Are these agents of external control positioned to scrutinise data? Had they demanded ‘integrity’ of GFQ’s environmental information, it would have been rational for GFQ to select these organisations out and work with competitors. I identify an economy of trust and of ignorance in which supposed agents of control are locked into an asymmetrical relationship in which they are precariously positioned, unlikely to cause friction. Trust between the organisations disables critical questions and consensual understandings of environmental data, thus generating mutual comfort. Sustaining the organisations’ profitable relations implies ignoring environmental friction.

In sum, I identify inter-organisational relations that effect comfort, not governance of environmental data, least of all environments ‘out there’. This discussion undermines promises of external control. ‘External’ organisations co-configured GFQ’s data infrastructure to stabilise carbon footprints. Data was set up to settle accounts, rather than to unsettle them. Decoupling allows enacting environments that are fit to simulate trustworthiness; no troubling questions, no surprises: environmental conduct appears ‘as expected’.

Big Data: Missing the situatedness of corporate environmental data

Corporate environmental data abounds. This paper addressed whether Big Data analytics of corporate environmental information is fit to meet the promises of evidence-based environmental governance through markets or deliberative democracy. A core premise of such governance is that the subjects and parties of governance – citizens and consumers – have symmetrical access to information and are able to make sense of it. I empirically ‘tested’ this premise drawing on an ethnography of environmental accounting in a Fortune 50 company. The present analysis approached corporate data practices by attending to the ‘control zones’ (Lagoze, 2014) of carbon accounting, specifically focusing on the material-semiotic space in which data is handled.

The argument of this paper builds on the analysis of enactment of environments (Lippert, 2013, 2015). Following this approach, corporate environmental conduct is enacted through environmental information practices. These practices shape the environmental realities that matter for the company as well as for external control agents. A range of entities take part in configuring control zones, shaping data practices: accountants and managers, algorithms and databases as well as auditing organisations. The control zones I cover above extend from the sourcing of data in a subsidiary via the processing of data between subsidiary and headquarters, the reviewing of data at the headquarters, the control of the company’s data practices through audit guidelines and the release of data to a ranking.

Several instruments were supposed to standardise how environmental data sourced at the subsidiary level results in carbon emissions. Yet, these instruments were not able to determine accounting practice. Uncertainties involved data entry agents and included the design of data entry interfaces as well as both the (non-)fit and dynamics of carbon conversion factors.

Within the headquarters’ control zone, sociotechnical configurations of data flow from subsidiary into the central database incentivised enacting data that did not raise questions. Data handling in data entry, review and processing was controlled by several routines that shaped data to be alike earlier reported data. Thus, corporate environmental data production was not producing normally distributed errors, but it was shaped to straighten data out.
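The claim that straightened data does not produce errors that average out can be illustrated with a deliberately crude sketch. It is a caricature, not a model of GFQ's practice: the text describes incentives and review loops, whereas the hypothetical function below simply clamps each reported value to within ±10% of the previously reported one, and the 'true' series is invented.

```python
def straightened_series(true_values, threshold=0.10):
    """Report a series in which each value stays within ±threshold of the prior report.

    Assumption for illustration only: a hard clamp stands in for the softer
    pressures (comments, review loops, copied answers) described in the text.
    """
    reported = [true_values[0]]
    for true in true_values[1:]:
        prev = reported[-1]
        low, high = prev * (1 - threshold), prev * (1 + threshold)
        reported.append(min(max(true, low), high))
    return reported


# Hypothetical case: the underlying quantity jumps by 30% and then stays there.
true_values = [100.0, 130.0, 130.0, 130.0]
reported = straightened_series(true_values)

# Errors all point in the same direction until the reports catch up,
# so they are not symmetric noise around the true values.
errors = [r - t for r, t in zip(reported, true_values)]
print([round(r, 1) for r in reported], [round(e, 1) for e in errors])
```

Under these assumptions the reported series trails the underlying change for several periods, and every error has the same sign, which is the opposite of the randomly distributed error that the frequentist position presupposes.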

A zone of external control is supposed to ensure good data practices within companies: audit, rankings and indices promise transparency. I showed that the auditors were not demanding perfect data but were satisfied with assurable presentations of data. I register a shift from control over data practices to control over the staging of data for particular audiences. A ranking organisation foundationally trusted the data they received from companies, not calling the companies to account for their data. Control was substituted by trust. Both forms of supposedly external control are decoupled from uncertainties, contingencies and dynamics of data practices on the ground.

For my argument, these empirical findings matter in two ways. First, they detail the enactment of corporate greenness. Second, they provide the ground for explicating the particular politics of these enactments. The configuration of enacting environments was choreographed by the company; it was set up to settle environmental accounts, rather than to unsettle them. Whilst environmental realities were processed in the companies’ informational governance, these environments were shaped not to disturb, or trouble the corporate flow.

In short, my findings disillusion Mol’s (2006) ‘informational governance’ of corporate ‘environmental reform’. Facing this result, enthusiasts of automatisation might simply call for eliminating the humans – their agency in decision-making – thereby promising the prospect of proper data practices. Against such promise, I consider two points. First, imagining data analytics ‘without humans’ simply misses the normativities and biases inscribed in software and hardware. Second, humans matter also in configuring datasets. When Elise and I discussed conclusions of my study, we considered prospects of automatising environmental data. She offered the following contrast: From the headquarters’ perspective manual data practices are sources of error, whereas from the subsidiary perspective manual data practices add quality. Thus, automatising accounting shifts control zones, with more algorithmic agency to control some data while less in control over how data actually relate to situated environmental concerns.

These findings have implications for two key discussions of Big Data. First, as boyd and Crawford (2012) suggest, Big Data needs to consider how context can be retained. In contrast, my analysis suggests that the doings of data–environment relations are not fully specifiable but inextricably bound to the situation. Metadata and other attempts to translate context into a machine-calculable state lose the situatedness and uncertainties of translating environmental relations into data. Second, the promise of re-useability of corporate environmental data faces the problem that data is dynamic. My study shows that the relation between data and what they supposedly represent is subject to change as data is made and remade in loops of review, processing and adjustment. Following Lagoze (2014), if datasets are uncertain and not fully specified/specifiable, their reuse and combination increases fuzziness, decreasing controllability; uncertainties are not likely to decrease with Big Data analyses but to multiply; consensual understandings of corporate environmental impacts become impossible.

Scaling up the (re)use of corporate environmental data (e.g., with Big Data analytics) to inform governance is, thus, troubled in several ways: not only is the reversibility and referentiality of data questioned the more data use is removed from the situations in which environments are related to data, but also are the scales of what and how environments are measured rendered less specific (cf. Simons et al., 2014). In sum, the kind of corporate environmental data practices I observed is not compatible with the core criterion of providing full information symmetrically to citizens and consumers. Significant information about how environmental information is constituted and how that information is related to local environments evades datafication, so that corporate sustainability reporting feeding discourses of climate change policy and markets asymmetrically informs decision-makers and market actors. Proliferating Big Data analytics of carbon reporting is likely to fail markets as much as deliberative democracy.

A significant environmental risk is that staging governance as evidence based and, thus, in control, may become eased through discourses of Big Data. If Big Data analytics (re)use (even if unwisely) corporate environmental data to offer results to decision-makers, the latter will be propped with even more ‘data’ they can enrol in the play they want to perform. Just as data is enacted to enable performances of audit/ability, (big) data can be employed in performances of evidence to sustain plays of evidence-based governance. To generalise (Lippert, 2014), such Big Data analytics may prefigure an environmental politics in which environments matter neither ecologically nor economically but environments would exist as an unaccountable, decoupled, post-integrity but ‘comforting’ dreamscape – an eternal present (Bowker, 2014) of environments that do not challenge the political and economic order, prefiguring intensifying market and political system failure (cf. Lippert, 2016). In other words, the crossover of Big Data with environmental governance cannot be expected to unsettle political and economic arrangements that hitherto have excelled in producing and sustaining unsustainability.

This environmental risk is relevant for reflexive considerations of Big Data, economy and society. If Big Data refers to data (practices) ‘beyond integrity’, then the normative question crops up: should Big Data analytics be unequivocally supported? Lagoze (2014: 9) thinks they should: ‘it is futile and even undesirable to seek a return to traditional, rigid control zones’. I question this stance with boyd and Crawford (2012: 675): ‘how [do] the tools participate in shaping the world with us as we use them’? We have to consider what worlds are prefigured and enacted through (Big) Data informed decision-making. How to live with governance that merely claims to be evidence based? Forward-looking, at the intersection of STS and Big Data, the environment and political theory we might have to think differently about politics in techno-environmental zones (Barry, 2006): how could (post)governance actually be grounded in engagements by all affected parties with troubling and rich stories (of non-standardised, situated, dynamic and deeply contextual realities), rather than mere spreadsheets and PowerPoint slides? Instead of governing as if we knew the facts, we might have to approach environments by radically questioning how ‘modern’ institutions can develop relations of care for environments and their uncertainties. A precautionary approach could call to constrain economic, industrial and societal activities to levels at which detailed, contextual, situated and informationally symmetrical accountability – among the humans affected by the activities – is possible.

Acknowledgements

First, I am deeply grateful to Lydia Stiebitz for substituting my parenting work, which enabled me to work on this analysis. I am grateful to Regina Norrstig and Gernot Rieder for decisive discussions in early stages of this paper. I thank Jennifer Gabrys and Nerea Calvillo for the generative comments and editorial support. Three reviews have been generative in revising the paper. The analysis would not have been possible without GFQ and its sustainability staff granting access for fieldwork. I am indebted to the scholars at Lancaster University’s Centre for the Study of Environmental Change and its Centre for Science Studies, especially Lucy Suchman, Claire Waterton and Brian Wynne, between whom the sensibilities employed in this paper have crystallised.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The work underlying this analysis was conducted while I was supported by scholarships by Hans-Böckler-Foundation and the German National Academic Foundation.