Abstract

Why do people spread false news online? Previous studies have linked affective polarization with misinformation sharing and belief. Contrary to these largely observational findings, however, we show that experimentally improving people’s feelings about opposing partisans (versus members of their own party) has no measurable effect on people’s intentions to share true news, false news, or the difference between them, known as discernment. By contrast, we find evidence that a reminder of accuracy can modestly improve truth discernment among people who report sharing political news. These results suggest the need for a reexamination of the role of affective polarization in the dissemination of misinformation online.

Introduction

Though its prevalence is often overstated (Budak et al., 2024), false and untrustworthy news is widely shared online, spreading inaccurate claims about political parties, candidates, and events (e.g., Grinberg et al., 2019; Guess et al., 2019). Understanding why people engage in this behavior is an important research topic that can inform efforts to counter the spread of misinformation, which can have harmful consequences for society and democracy on topics ranging from COVID-19 to the 2020 U.S. presidential election.

We specifically consider the role of affective polarization—a notable and widely studied concern (e.g., Druckman and Levy, 2022)—in misinformation sharing. Polls show that affective polarization, which is defined as the gap between people’s feelings toward their own party and their feelings toward the opposing party, has been growing in the United States in recent decades (Hetherington and Rudolph, 2025). At the individual level, affective polarization has been linked to belief polarization (Druckman et al., 2021), including belief in misinformation (Garrett et al., 2019; Jenke, 2024) and biased evaluations of politicized facts (Voelkel et al., 2024). In particular, Osmundsen et al. (2021: 108) find that negative evaluations of the opposing party (the component of greatest interest in the affective polarization measure we use) are strongly associated with sharing false news, concluding that “partisans share politically congenial news, primarily because of hostile feelings toward the out-party” and they typically “pay more attention to the political usefulness of news rather than the information quality.”

However, the evidence available to date is largely correlational; little is known about the causal effects of affective polarization on misinformation sharing or belief. Most notably, Broockman et al. (2023) find that several manipulations that substantially reduce affective polarization have no measurable effect on a range of outcome measures to which affective polarization has been linked in observational data. The effects of affective polarization on misinformation sharing have not been tested, however.

The most relevant evidence to date comes from an experiment conducted after our own in which Jenke (2024) also uses the Broockman et al. (2023) experimental design to test its effects on misinformation belief (not sharing). Using a structural model, Jenke (2024) estimates the effect of treatment-induced changes in reported affective polarization on misinformation belief and concludes that reducing affective polarization reduces misinformation belief. However, this finding is not robust to more than “moderate” violations of the identifying assumptions of the model (Jenke, 2024: 882). Moreover, the direct effect of treatment on misinformation belief in the Jenke data is null (see Table B6 in Online Appendix B).

We therefore designed an experiment testing if hostile feelings toward the opposition party cause people to share false news. Our study contrasts this account with a competing theory of cognitive inattention, which instead suggests that people share false news because they do not sufficiently attend to accuracy in deciding what news and information to share (Pennycook et al., 2021). One way to combat such inattention is an accuracy prompt, which reminds users to consider whether the posts they see are true. Previous research indicates that these nudges increase people’s ability to distinguish between accurate and inaccurate information in both sharing intentions and behavior (e.g., Pennycook and Rand, 2022). By measuring the relative effects of interventions targeting these two factors (affective polarization and inattention to accuracy), we can learn both about the most important causes of sharing false news as well as the most effective approaches to preventing fake news sharing and improving truth discernment. We also evaluate how these factors relate by testing whether high levels of affective polarization reduce the effects of accuracy prompts.

In the study reported below, we employ a 2 × 2 between-subjects design to estimate the separate and joint effects of accuracy salience and affective polarization in a news-sharing task using relevant true and false headlines from the 2020 U.S. election campaign. Consistent with previous research, we find suggestive evidence that making accuracy considerations more salient increases discernment between true and false headlines. By contrast, we find that reducing affective polarization does not measurably change the sharing of false news headlines or improve truth discernment in sharing. These findings suggest that efforts to prevent the spread of false news should not focus on reducing affective polarization.

Hypotheses

We specifically tested the following preregistered hypotheses and research questions.

First, given the association between negative feelings toward partisan opponents and news-sharing behaviors observed by Osmundsen et al. (2021) and similar correlational findings reported in the literature (Druckman et al., 2021; Garrett et al., 2019; Jenke, 2024; Voelkel et al., 2024), we expected participants in the positive experience condition of the affective polarization manipulation to express reduced intentions to (a) share false news headlines relative to true news and to (b) share news headlines that are congenial to their partisanship regardless of veracity when compared to participants in the negative experience condition.

Second, based on the findings reported in Pennycook and Rand (2022), we expected exposure to an accuracy prompt to reduce the intention to share false news relative to true news.

Third, we expected the positive experience condition in the affective polarization manipulation to increase the effect of exposure to an accuracy prompt on intentions to share false news relative to true news, especially for congenial false news relative to uncongenial false news, when compared to the estimated accuracy prompt effect in the negative experience condition.

Finally, we posed two research questions for which we had weaker theoretical expectations. We consider whether the effects of the accuracy prompt intervention are lower among people who are high in a trait called Need for Chaos that measures a general apolitical dissatisfaction with the political system and a desire to destroy it (Arceneaux et al., 2021). People high in Need for Chaos have been found to be more likely to share false information—seemingly out of a desire to promote disorder (Petersen et al., 2023). We also consider whether effects are diminished among people who strongly identify with their party (Osmundsen et al., 2021).

Methods

Sample characteristics

Our study was conducted among participants recruited from the Prolific survey platform. After a soft launch with 50 participants who identified as Democrats or Republicans, we recruited a quota sample of 1000 U.S. adult residents cross-stratified by sex, age, and ethnicity. All respondents provided informed consent to participate in this research, which was approved by the Dartmouth Committee for the Protection of Human Subjects (STUDY00032293). To counterbalance the Democratic tilt of this sample (64% identified as or leaned Democrat), we then sought to recruit approximately 1000 additional participants using qualifications for self-identifying as a Democrat or a Republican but adjusted the sample sizes for each (319 Democrats, 736 Republicans) to target a final sample of approximately 1000 Democrats and 1000 Republicans before exclusions. Data were collected from May 7 to May 20, 2021.

To enter the experiment, participants had to pass at least one of two pre-treatment attention checks (Berinsky et al., 2021) and successfully answer two pre-treatment questions demonstrating their understanding of the behavioral game used in the affective polarization manipulation on their first or second try (Broockman et al., 2023). Those who did not were terminated from the survey.

Our study sample also reflects the following exclusions. First, following our preregistration, we exclude participants who indicated they were pure independents (that is, those who do not lean toward either party) from the final sample because they would not be affected by the affective polarization manipulation. Second, following Study 1 in Pennycook et al. (2021), we include only participants who use Facebook or Twitter and who report sharing political content. We examine the effects of the treatments on this set of participants to maximize comparability with prior research and because we are most interested in their effects on people who actually share political news on social media.

Finally, we make several additional exclusions that were not specifically noted in our preregistration: participants who dropped out of the survey prior to the experimental randomization; those who were ineligible due to taking part in a pretest; and a single participant who dropped out after rating a single news headline (making it impossible to calculate the mean headline ratings we use in our analysis below).

After these exclusions, we are left with a final study sample of 785 participants (slightly smaller than Study 2 from Pennycook et al. (2020), which tests similar manipulations). In total, 45% of our participants are male, 28% identify as nonwhite, and 62% have obtained a bachelor’s degree or higher. Their median age is 35–44. Finally, 57% identify with or lean toward the Democratic Party and 43% identify with or lean toward the Republican Party.

Experimental design

We conducted a 2 × 2 between-subjects experiment in which participants were first independently randomized with equal probability to either have a positive (p = 0.5) or negative (p = 0.5) experience in a trust game manipulating affective polarization (Broockman et al., 2023; Westwood and Peterson, 2022). After participants played the trust game for two rounds, they were randomized to receive an accuracy prompt with probability 0.5 asking them to consider the accuracy of a single news headline (Pennycook et al., 2020, 2021). Respondents then completed a news-sharing task.
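The two independent coin-flip assignments described above can be sketched in a few lines. This is only an illustration of a 2 × 2 independent randomization, not the survey software's actual logic; the function name `assign_conditions`, the seed, and the participant count of 1000 are hypothetical.

```python
import random
from collections import Counter


def assign_conditions(n_participants, seed=0):
    """Independently randomize each participant on two factors.

    Each factor is assigned with probability 0.5, mirroring the design
    described in the text. The seed is a hypothetical choice that only
    makes this sketch reproducible.
    """
    rng = random.Random(seed)
    assignments = []
    for _ in range(n_participants):
        # Factor 1: positive vs. negative trust-game experience.
        experience = "positive" if rng.random() < 0.5 else "negative"
        # Factor 2: accuracy prompt shown or not, drawn independently.
        accuracy_prompt = rng.random() < 0.5
        assignments.append((experience, accuracy_prompt))
    return assignments


# Hypothetical number of participants, chosen for illustration only.
assignments = assign_conditions(1000)
cell_counts = Counter(assignments)
for cell, count in sorted(cell_counts.items()):
    print(cell, count)
```

Because the two draws are independent, each of the four cells is expected to hold roughly a quarter of participants, though realized cell sizes vary by chance.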

Procedures and materials

Participants completed the study on the Qualtrics online survey platform. All question wording and stimuli are provided in Online Appendix A.

After providing informed consent and completing a pre-treatment questionnaire, participants took part in a modified trust game used in Broockman et al. (2023) and Westwood and Peterson (2022) to provide exogenous variation in affective polarization by creating positive or negative interactions with members of the opposite party. We follow their design verbatim except that participants played two rounds of the game rather than three due to survey time constraints. In the game, participants played as Player 2. They were told that they were playing with a person from the opposite party (Player 1) and that the other player would decide how much money to allocate to them. The amount would then be tripled, and Player 2 would need to decide how much to give back. The remaining amount would be used to calculate the bonus payments they would receive (with a 0.03 multiplier) in addition to their baseline payment of $1.50.

In reality, Player 1 was fictitious. The survey was pre-programmed to make allocation decisions based on whether participants were randomized to have a positive or negative experience with an ostensible outpartisan. In the positive experience condition, participants were told that they were allocated $8 by Player 1 in both rounds. In the negative experience condition, participants were told that they were allocated $0 by Player 1 in both rounds. In both versions, participants were told that Player 1’s reason for their allocation was Player 2’s political party in round 1 and political party and income in round 2. The positive experience condition was expected to mitigate participants’ negative feelings toward the opposite party, reducing affective polarization; the negative experience condition was expected to do the opposite (though see Broockman et al. (2023), who find the difference in affective polarization between them is driven by the positive experience condition).
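To make the payoff arithmetic of the trust game concrete, the following sketch computes a participant's total payment under one reading of the description above. It assumes that the amount kept in each round is summed across the two rounds and then multiplied by 0.03 to obtain the bonus; how the multiplier was actually applied is not spelled out here, so this is an assumption, and the function names and the returned amounts are hypothetical.

```python
BASE_PAYMENT = 1.50       # baseline payment in dollars, as stated in the text
BONUS_MULTIPLIER = 0.03   # bonus multiplier, as stated in the text
TRIPLE_FACTOR = 3         # Player 1's allocation is tripled


def amount_kept(allocated, returned):
    """Amount Player 2 keeps in one round after returning part of the tripled sum."""
    tripled = allocated * TRIPLE_FACTOR
    if not 0 <= returned <= tripled:
        raise ValueError("returned amount must be between 0 and the tripled allocation")
    return tripled - returned


def total_payment(rounds):
    """Total payment for a list of (allocated, returned) pairs, one per round.

    Assumption: the bonus is 0.03 times the summed amount kept across rounds.
    """
    kept = sum(amount_kept(allocated, returned) for allocated, returned in rounds)
    return round(BASE_PAYMENT + BONUS_MULTIPLIER * kept, 2)


# Positive experience: $8 allocated in both rounds; the participant
# hypothetically returns $12 of the tripled $24 each time.
positive_total = total_payment([(8, 12), (8, 12)])

# Negative experience: $0 allocated in both rounds, so nothing can be returned.
negative_total = total_payment([(0, 0), (0, 0)])

print(positive_total, negative_total)
```

Under these assumptions, a participant in the negative experience condition can earn only the baseline payment, whereas a participant in the positive experience condition earns a bonus that depends on how much they choose to return.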

Participants were then randomized with probability 0.5 to receive a prompt asking to consider the accuracy of a news headline. Participants in the accuracy prompt condition were shown one of four randomized headlines and asked “To the best of your knowledge, is the above headline accurate?” (the exact manipulation used in Pennycook et al. (2020)). Participants then indicated whether they believed the headline was accurate. The participants received the prompt following the trust game but before the news-sharing task. Per prior research, it is intended to make accuracy considerations salient when participants subsequently consider whether to share news headlines or not. Participants who did not receive the accuracy prompt continued to the news-sharing task immediately after the trust game.

In the news-sharing task, which mirrors Pennycook et al. (2020), participants were shown six false and six real news headlines in randomized order in a format that mirrored Facebook article previews. Three of each type (i.e., false and real) were selected to be congenial to Democrats and the other three were selected to be congenial to Republicans.

The false headlines were published from June 2020 to May 2021 and were largely adapted from Coppock et al. (2023), who drew them from the fact-checking website PolitiFact. The true news headlines were selected to mirror the topics and partisan congeniality of the false headlines as closely as possible but to be factually accurate. However, we made two changes to the headlines after filing our preregistration. First, we replaced the false, Democrat-congenial headline, “Report: Trump Responsible for All Covid Deaths” with “USPS Reportedly Failed to Deliver 27% of Mail-In Ballots in South Florida” because we could not locate the source of the former headline. Second, we excluded ratings for the headline “Biden: ‘A Black Man Invented the Lightbulb, Not a White Guy Named Edison’” from our analysis. We previously coded this headline as false and congenial to Democrats, but Joe Biden did utter the (false) sentence and was accurately quoted in the article shown. In addition, the headline could potentially be interpreted as congenial to either party depending on how a respondent feels about Biden and whether they believe his claim. We therefore concluded that the truth status of the headline and the party for which it is congenial are unclear.

The primary dependent variable is sharing intention. We measure participants’ intention to share each news headline on a six-point Likert scale ranging from “Extremely unlikely” (1) to “Extremely likely” (6). These ratings were then averaged at the participant level to produce mean sharing intentions for true headlines, false headlines, and the difference between them, which we refer to as “truth discernment” (the same outcome variables used in Pennycook et al. (2020)). Although we concede that false news is of greater normative concern and that the intention to share false news can also be a viable dependent variable, we use truth discernment as our primary dependent variable as it accounts for the willingness to share false news relative to true news.
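As an illustration of how the participant-level outcomes are constructed, the sketch below averages one participant's ratings by headline type and takes the difference. The ratings are invented for illustration, and the function name `truth_discernment` is hypothetical; the example uses six true and five false ratings, reflecting the exclusion of one headline from the analysis.

```python
from statistics import mean


def truth_discernment(ratings):
    """Compute mean sharing intentions and truth discernment for one participant.

    `ratings` is a list of (is_true, rating) pairs, where rating is on the
    six-point scale from 1 ("Extremely unlikely") to 6 ("Extremely likely").
    Returns (mean_true, mean_false, discernment), where discernment is the
    mean rating for true headlines minus the mean rating for false headlines.
    """
    true_ratings = [rating for is_true, rating in ratings if is_true]
    false_ratings = [rating for is_true, rating in ratings if not is_true]
    mean_true = mean(true_ratings)
    mean_false = mean(false_ratings)
    return mean_true, mean_false, mean_true - mean_false


# Invented ratings for a single hypothetical participant.
example_ratings = (
    [(True, r) for r in [4, 3, 5, 2, 4, 3]]   # six true headlines
    + [(False, r) for r in [2, 1, 3, 2, 1]]   # five false headlines
)

mean_true, mean_false, discernment = truth_discernment(example_ratings)
print(mean_true, mean_false, round(discernment, 2))
```

A positive value indicates that the participant is more willing to share true headlines than false ones; a value near zero indicates no discrimination between them in sharing.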

After the news-sharing task, we administered manipulation checks measuring the perceived importance of accuracy in sharing news articles on social media and feelings toward Democrats and Republicans (both people who are members of the parties as well as politicians and elected officials). The latter measures were used to calculate post-treatment affective polarization.

All respondents were debriefed about the trust game and the veracity of the news headlines after completing the study.

Results

We evaluate the results of our experiment using ordinary least squares (OLS) regression with robust standard errors. Unless otherwise noted, the analysis below follows our preregistration (see https://osf.io/snxe2/?view_only=8af338addef24785b802c63ca455b1e0). The reported p-values below do not account for multiple comparisons. If we instead perform an exploratory false discovery rate correction using the two-step procedure created by Benjamini et al. (2006), we find that none of the reported estimates for treatment effects or marginal effects of interventions on preregistered subgroups are significant. We qualify our interpretation of our findings accordingly below.
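For readers unfamiliar with the correction mentioned above, the following is a minimal sketch of a two-stage linear step-up false discovery rate procedure as it is commonly described for Benjamini et al. (2006). It is not the authors' analysis code, the p-values are invented for illustration, and the function names are hypothetical. The first stage runs a Benjamini-Hochberg step-up at level q / (1 + q) to estimate the number of true nulls; the second stage reruns the step-up at a level rescaled by that estimate.

```python
def bh_reject_count(pvalues, level):
    """Number of hypotheses rejected by the Benjamini-Hochberg step-up at `level`."""
    m = len(pvalues)
    largest_rank = 0
    for rank, p in enumerate(sorted(pvalues), start=1):
        # Step-up rule: keep the largest rank whose p-value clears its threshold.
        if p <= rank / m * level:
            largest_rank = rank
    return largest_rank


def two_stage_fdr(pvalues, q=0.05):
    """Return a list of booleans marking which hypotheses are rejected."""
    m = len(pvalues)
    stage_one_level = q / (1 + q)
    r1 = bh_reject_count(pvalues, stage_one_level)
    if r1 == 0:
        return [False] * m          # nothing rejected; stop
    if r1 == m:
        return [True] * m           # everything rejected; stop
    estimated_true_nulls = m - r1
    stage_two_level = stage_one_level * m / estimated_true_nulls
    r2 = bh_reject_count(pvalues, stage_two_level)
    # r2 >= r1 >= 1 here because the second-stage level is larger.
    cutoff = sorted(pvalues)[r2 - 1]
    return [p <= cutoff for p in pvalues]


# Invented p-values, for illustration only.
example_pvalues = [0.001, 0.008, 0.039, 0.041, 0.27, 0.60]
decisions = two_stage_fdr(example_pvalues, q=0.05)
print(decisions)
```

In this invented example the first stage rejects two hypotheses, and the rescaled second stage rejects four, which shows how the adaptive step can be less conservative than a single step-up pass.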

We first consider whether the affective polarization and accuracy prompt manipulations worked as expected using our preregistered manipulation checks.

We find that the affective polarization manipulation worked as intended. Relative to the negative experience condition, respondents in the positive experience condition reported significantly lower levels of affective polarization when asked about Democrats and Republicans in general and about politicians from the parties (partisans: −4.672, p < 0.05 [−0.15 sd]; politicians: −5.103, p < 0.05 [−0.17 sd], respectively; see Table B2). Using the formulation from Broockman et al. (2023), the reductions in affective polarization induced by the manipulation are equivalent to “rewinding” 6.9 years of the over-time trend in affective polarization toward the public in the United States and 5.8 years for political elites. These figures derive from the measurements of affective polarization against party and political elites respectively from 1980 to 2020 (Tyler and Iyengar, 2024).

By contrast, the accuracy prompt manipulation had no measurable effect on the self-reported importance of accuracy considerations in sharing (−0.057 on a five-point scale, p > 0.05; see Table B2). This null result mirrors Study 4 in Pennycook et al. (2021), which finds that an accuracy prompt changes sharing intentions (as we find below) despite having no measurable effect on the perceived importance of sharing accurate news online.

Main effects on news-sharing intentions.

OLS with robust standard errors; *p < 0.05, **p < 0.01, ***p < .005 (two-sided). Sharing intentions for true and false news headlines were measured on a six-point scale. The positive outpartisan experience variable estimates the effect of assignment to that condition relative to the negative outpartisan experience condition (the reference category). Preregistered control variables are Biden approval (four-point scale); party identification (Republican indicator); college degree (bachelor’s degree indicator); gender (male indicator); race (nonwhite indicator); age group (indicators for 35–44, 45–54, 55–64, and 65+); social media use (indicators for self-reported usage of Facebook, Twitter, Snapchat, Instagram, and WhatsApp); and sharing behavior (indicators for self-reported sharing of news about sports, celebrities, science, and business). See Online Appendix A for news headlines and question wording.

News-sharing intention by accuracy prompt condition and headline type.

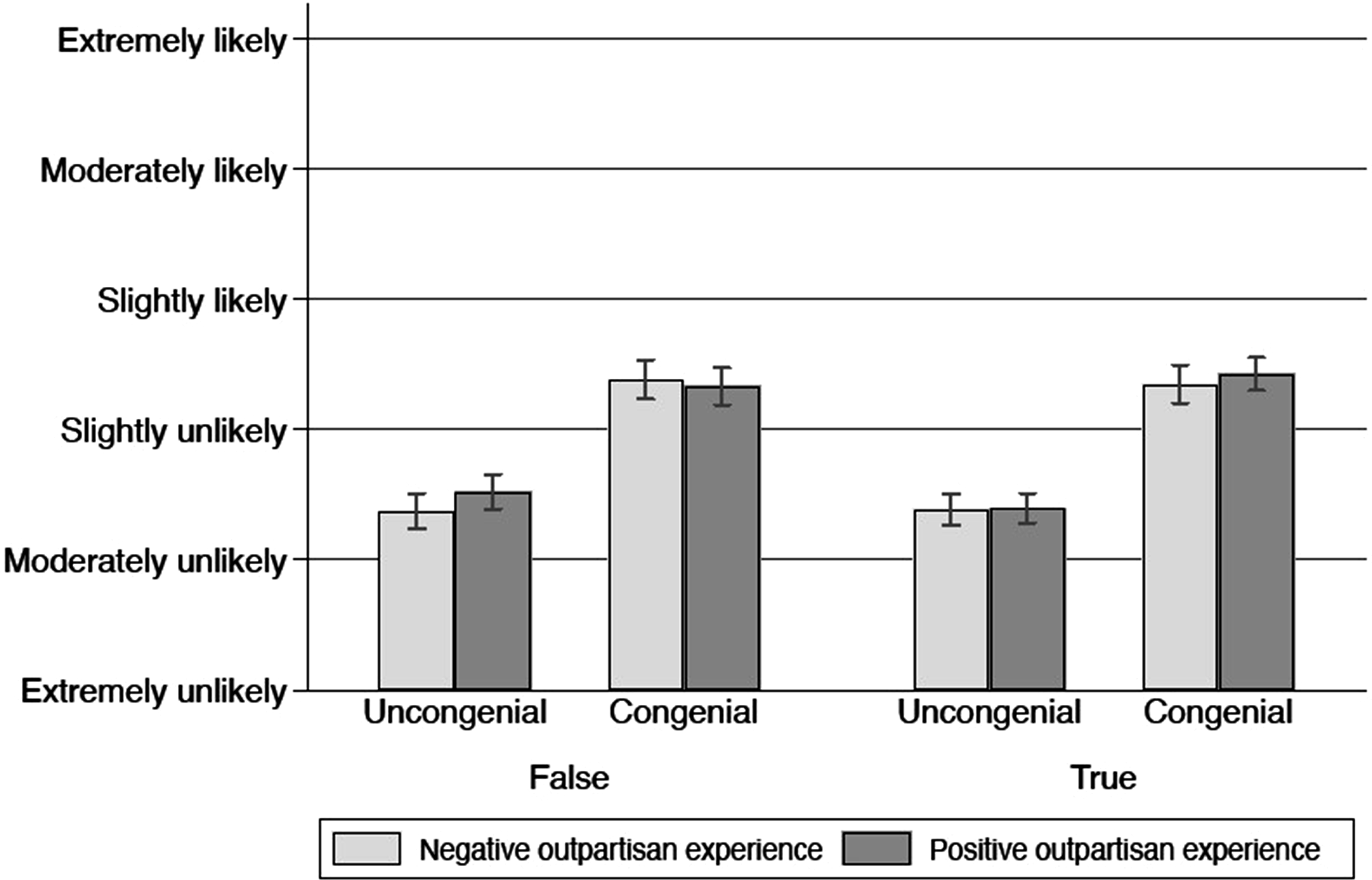

By contrast, we found no support for our expectation that exposure to the positive experience condition in the affective polarization manipulation would reduce intentions to share false news. Instead, exposure to the positive experience condition did not measurably change intention to share true or false news relative to the negative experience condition (0.109, p > .05, 95% CI: −0.04, 0.26; and 0.117, p > .05, 95% CI: −0.05, 0.28; respectively; see Table 1). As a result, we could not reject the null of no effect on the difference in sharing intentions between true and false information (Table 2 and Figure 2).

News-sharing intention by affective polarization condition and headline type.

Affective polarization manipulation effects on news sharing by partisan congeniality.

OLS with standard errors clustered by respondent; *p < 0.05, **p < 0.01, ***p < .005 (two-sided). Observations represent average sharing intention for congenial or uncongenial news, which were measured on a six-point scale. The positive outpartisan experience variable estimates the effect of assignment to that condition relative to the negative outpartisan experience condition (the reference category). Preregistered control variables are Biden approval (four-point scale); party identification (Republican indicator); college degree (bachelor’s degree indicator); gender (male indicator); race (nonwhite indicator); age group (indicators for 35–44, 45–54, 55–64, and 65+); social media use (indicators for self-reported usage of Facebook, Twitter, Snapchat, Instagram, and WhatsApp); and sharing behavior (indicators for self-reported sharing of news about sports, celebrities, science, and business). See Online Appendix A for news headlines and question wording.

Joint effects of accuracy prompt and affective polarization manipulation on news sharing.

OLS with robust standard errors; *p < 0.05, **p < 0.01, ***p < .005 (two-sided). Sharing intentions for true and false news headlines were measured on a six-point scale. The positive outpartisan experience variable estimates the effect of assignment to that condition relative to the negative outpartisan experience condition (the reference category). Preregistered control variables are Biden approval (four-point scale); party identification (Republican indicator); college degree (bachelor’s degree indicator); gender (male indicator); race (nonwhite indicator); age group (indicators for 35–44, 45–54, 55–64, and 65+); social media use (indicators for self-reported usage of Facebook, Twitter, Snapchat, Instagram, and WhatsApp); and sharing behavior (indicators for self-reported sharing of news about sports, celebrities, science, and business). See Online Appendix A for news headlines and question wording.

Moderators of accuracy prompt effects.

OLS with robust standard errors; *p < 0.05, **p < 0.01, ***p < .005 (two-sided). Sharing intentions for true and false news headlines were measured on a six-point scale. Partisan leaners are the excluded category for the not strong partisan and strong partisan indicators. Indicators for Need for Chaos refer to the second and third terciles of mean responses on an eight-item scale. The positive outpartisan experience variable estimates the effect of assignment to that condition relative to the negative outpartisan experience condition (the reference category). Preregistered control variables are Biden approval (four-point scale); party identification (Republican indicator); college degree (bachelor’s degree indicator); gender (male indicator); race (nonwhite indicator); age group (indicators for 35–44, 45–54, 55–64, and 65+); social media use (indicators for self-reported usage of Facebook, Twitter, Snapchat, Instagram, and WhatsApp); and sharing behavior (indicators for self-reported sharing of news about sports, celebrities, science, and business). See Online Appendix A for news headlines and question wording.

Before administering the treatments, we also measured respondents’ Need for Chaos using an eight-item scale from Petersen et al. (2023) and divided them into terciles of low, medium, and high NFC. We then estimated how the effects of the accuracy prompt vary by level of Need for Chaos. The results, which are provided in Table 4b, indicate that exposure to an accuracy prompt increased truth discernment among low-NFC respondents (0.319, p < .005) but had no measurable effect on discernment among high-NFC respondents (−0.089, p > 0.05, 95% CI: −0.30, 0.12). We can reject the null of no difference in accuracy prompt effects between high- and low-NFC respondents (−0.409, p < 0.01). These results are illustrated in Figure B2 in Online Appendix B.

Conclusion

Results from an experiment conducted among a large sample of social media users who share political news online indicate that reducing affective polarization does not affect sharing intentions for false or congenial news headlines. By contrast, we found evidence that making accuracy considerations salient increases discernment in sharing between true and false news headlines. The effects of an accuracy prompt did not vary measurably when affective polarization was exogenously reduced nor among strong partisans. However, the prompt’s effects seemed to be strongest among people who are low in Need for Chaos, a dispositional factor associated with sharing hostile political rumors.

These findings contribute first to the literature on the consequences of affective polarization and the causes of misinformation sharing and belief. Affective polarization is often discussed as a potential influence on misinformation sharing and belief (Garrett et al., 2019; Jenke, 2024; Osmundsen et al., 2021). We instead find that improving people’s feelings toward the opposition party relative to their own has no measurable effect on intentions to share false news. This finding underscores the importance of experimentally testing observed correlations between affective polarization and antinormative behaviors like sharing false news, which may be spurious (Broockman et al., 2023; Voelkel et al., 2023). Moreover, our findings build on and extend existing research from Broockman et al. (2023) showing that manipulating affective polarization affects interpersonal attitudes but not other types of political attitudes and behavior. We similarly show that affective polarization is less influential on false news sharing than expected, lending credence to the claim that its harms to democracy are smaller than the field has typically assumed.

In addition, our findings provide new insights into the role of (in)attention to accuracy in false news sharing. The accuracy prompt had no measurable effect on the self-reported importance of accuracy in news sharing online, but it still improved discernment between true and false headlines in sharing. In addition, these effects were not measurably affected by either the affective polarization manipulation or whether the respondent identified as a strong partisan. These results suggest that accuracy prompt effects are largely cognitive and possibly subconscious—the most important factor is making accuracy salient, not whether people say accuracy is important in sharing or not.

However, several limitations should be noted. First, we measured sharing intention in a hypothetical context. Mosleh et al. (2020) and Arechar et al. (N.d.) find that such measures correspond well to real-world behaviors, but future research should ideally measure effects on actual sharing behavior. Second, our results were collected using a non-representative U.S. sample with stimuli that were salient at the time the study was fielded; future replications with a representative sample and/or non-American respondents using different sets of stimuli would be desirable. Finally, the effects of the trust game on affective polarization in our sample were smaller than those found in Broockman et al. (2023) (−4.7 and −5.1 points for the public and politicians versus −14.3 and −9.8 points, respectively). These effects are still substantively meaningful (−0.17 and −0.13 standard deviations, respectively) and do not diminish the validity of our findings, but it would nonetheless be valuable to replicate the study using manipulations that generate larger effects.

Nonetheless, our results provide important new evidence that the effects of affective polarization on the spread of misinformation may be overstated. Further research is needed to determine which harms it causes (if any) beyond interpersonal hostility and to develop more effective approaches to countering false news sharing that take these findings into account.

Supplemental material

Supplemental Material for Reducing affective polarization does not affect false news sharing or truth discernment by Carter Anderson, Oliver Byles, Joshua Calianos, Sade Francis, Chun Hey Brian Kot, Bennett Mosk, H. Nephi Seo, Julija Vizbaras, and Brendan Nyhan in Research & Politics.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Dartmouth Center for the Advancement of Learning and the Carnegie Corporation of New York.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the authors.