Abstract

Political elites express their ideological positions on contentious issues across various arenas in the public sphere. Social science research often relies on data extracted from various media or political and administrative sources, as well as surveys administered directly to political actors. Although some studies compare ideology across different sources, few systematically analyze how political actors adjust their ideological messaging to the audiences in the respective communication arenas and how such changes are associated with systematic bias in data sources. This paper uses a unique dataset combining climate policy belief observations from three arenas (social media, Congressional testimony, and surveys) on identical ideological variables and during the same time period. We apply item response theory to understand how responses differ by arena and find that ideological communication on Twitter is most left-leaning, Congressional testimony is most right-leaning, and surveys, the data source with the smallest potential arena effect, fall in the middle. We also find that actors with strong ideological leaning moderate their positions on social media and in Congress. These findings enhance our understanding of strategic communication depending on audience context and inform social research on biases when analyzing specific data sources.

Introduction

Social scientists have drawn on elite opinion to answer some of their most central questions (Kertzer and Renshon 2022), including: Do voters align with candidate ideology (Hare et al., 2015)? How do parties compete and discipline their legislators (McCarty et al. 2001)? How do interest groups position themselves in policy subsystems and coalitions (Klüver 2009)?

Research has relied on a variety of data sources to extract elite opinion, including surveys (Kertzer 2022), political speeches (Diermeier et al., 2012), voting behavior (Ansolabehere and Kuriwaki 2022), or social media (Mosleh and Rand 2022). At the same time, scholars recognize that elite opinion is context-dependent: the opinion expressed by an actor may change depending on the audience, or the “arena” in which it is being broadcast (Van Aelst and Walgrave 2016). Revealed ideological preferences through roll call voting may differ from ideal points estimated from self-reported survey responses, and they may yet differ from the policy preferences signaled to political allies, opponents, and voters through social media, even if the same measurement model and items are employed. We refer to these systematic differences as arena effects.

The arena effect provides insights into why media coverage presents a skewed image of the social world (McLuhan 1994). In elite opinion research, arena effects may bias the measurement of ideological positions. For instance, Jost and Sterling (2020) provide evidence that the communication patterns of conservative and liberal members of Congress are ideologically more similar on the Congressional floor than on social media because legislators use the former arena to convince other political elites and the latter arena to convince their core supporters and/or ordinary citizens. These findings imply that research on ideological positions might reveal more polarized and extreme stances on social media, yet more moderate and similar ideological positions of the same political elites in Congress. Focusing on one of these sources may consequently bias measurement in research on elite opinion. Similarly, selective media consumption by the public may bias their perception of ideological ideal points due to arena effects.

There have been efforts to compare coverage across different media platforms to “isolate what among our findings is universal, and what depends on the context of specific platforms” (Bode and Vraga 2018, 4). Such comparisons look specifically at social media versus offline media sources (Castanho Silva and Proksch 2022; Ivanusch 2024), as well as survey data (Mellon and Prosser 2017). Differences have also been found in research comparing ideology in political text data to survey results. Comparing advocacy groups’ publicly stated positions to their responses in surveys, Ingold and colleagues find a “substantial divergence between official and private expressions of policy positions” (Ingold et al., 2020: 193). Recent literature has also focused on differences between social media platforms (e.g., Bossetta and Schmøkel 2023; Larsson et al., 2024).

The findings of these studies suggest that political elites strategically modify how they publicly display their political preferences. While elites likely hold relatively stable and consistent views on political issues and have vested interests in either advancing or muting these issues, they seek to promote and maximize their impact through engagement across various media platforms. However, the audiences of these platforms represent different sub-groups with diverse demographics, political opinions, and expectations regarding elite behavior. To persuade these various social groups, elites may therefore need to adjust their public expression of preferences, for instance by altering the tone of their language, the framing of their arguments, or the intensity of their political demands (Kelm 2020; Stier et al., 2018).

We contribute to this literature by systematically linking the aggregate ideological position an elite actor conveys on a left-right scale to the arena in which the position is presented, and by providing empirical evidence on several theoretical mechanisms related to audience costs and attention incentives. Our analysis investigates differences in how political elites communicate ideological beliefs on climate politics in the U.S., comparing offline versus online and public versus private arenas. Using ordinal item response theory (IRT), we introduce a novel measurement approach that compares the ideological positions of the same political elites over the same time frame and on the same climate-related issues, yet across different arenas, highlighting how elites adjust their positions based on audience expectations across different media sources. Our study offers an overview of multiple types of arena effects that interact in influencing and potentially biasing elite communication of preferences and opinions. Our findings reveal that elites lean left on Twitter and right in Congressional hearings. However, actors with a stronger right- or left-leaning stance on climate politics tend to reveal these preferences in private arenas such as surveys, where the political repercussions are perceived to be lower than in public arenas. Although surveys are not free from bias, such as interviewer bias and social desirability, they hence come closest to the “ground truth” of an actor’s intrinsic opinion. Furthermore, we find additional actor type- and arena-specific incentives with a given audience, as detailed in the next section.

Expected differences across arenas

We compare expressed ideology in a salient policy domain—climate politics in the U.S.—across three of the most important arenas: testimony in the U.S. Congress, survey responses, and social media discussions on Twitter. Climate politics represents a deeply contentious issue with a wide range of stakeholders involved in policymaking and various audiences interested in the topic. The contentious nature and broad participation of actors make climate politics an ideal case for studying arena effects.

To formulate expectations about specific arena effects, we will employ the following notation:

Our expectations towards political elite behavior on Twitter, in Congress, and in the survey are as follows: First, if there is a public audience, such as on social media, audience members may punish the display of ideologies that deviate far from the median ideology of the audience. Social media users tend to be more progressive on average than the general population. In the US, for example, Twitter users have been found to skew more towards Democrats (Wojcik and Hughes 2019). If elite actors play to this larger Twitter audience, we expect that elite ideology on Twitter will skew more to the ideological left than ideology presented in testimony or survey responses, hence

Second, an alternative hypothesis consistent with the literature is that users on Twitter will be more extreme in either direction than in other arenas as they are communicating through polarized echo chambers (Falkenberg et al., 2022), although other research on U.S. political elites argues the polarization of Twitter is overstated (Mukerjee et al., 2022). If elite actors play to their respective bases, we expect to see their ideology as more extreme (in either direction) on Twitter (Sunstein 2007), that is,

Third, while survey responses are often subject to social desirability bias, testimony is potentially subject to punishment by the Congressional audience. If the audience costs of facing partisan legislators are greater than the audience costs of facing an interviewer and potentially an academic audience, we can expect that actors display more moderate views in testimony than in surveys, that is,

Fourth, scientific actors are driven by a motivation for societal impact, that is, they want their voice to be heard. They should play to the predominant ideology in the respective arena and display a higher cross-arena volatility than other actors (Perna et al., 2019; Walter et al., 2019). We should therefore expect scientists to take a more left-leaning position on Twitter and a more neutral/right-leaning position in Congress as per the previous expectations. Businesses, environmental groups, and NGOs, in contrast, should display more consistent ideologies. Businesses, for example, are likely right-leaning as they will want to reduce regulatory impact on their operations. We should therefore expect

Finally, we expect individuals to take a more extreme stance than collective organizations on Twitter, as suggested by research that documents how views become more extreme on Twitter (Sunstein 2007).

Data and methods

To isolate arena effects, everything but the expressed opinion must be held constant across actors, including who speaks, when they speak, the items over which aggregate opinion estimates are inferred, and the methodology employed to estimate opinion from the different sources. Accordingly, this paper analyzes data collected from three distinct sources (for full details on constructing the dataset, data sources, and sampling, see our Supplemental Information): discussions about specific climate policies in Congressional hearings during the 113th and 114th sessions of the US Congress (January 2013 - January 2017), Twitter data from the top policy actors about the same climate policy issues during the same period, and survey responses collected directly from the same policy actors working on climate during the 114th session of the US Congress in summer 2016 (response rate: 57%). Actors across all three data sources were sampled according to their participation in Congressional hearings and/or the survey, representing the core of the policy network on climate policy in the U.S. Our analysis focuses specifically on 28 policy elites who participated in at least two of the three arenas.

Drawing on prior research (Fisher and Leifeld 2019), the nine most salient and controversial policy beliefs about climate change were content-coded in the Congressional and Twitter data. Two of the nine items represent categories related to the science of climate change, which has been a central theme in the climate change debate in the United States for many years: “Climate change is real and anthropogenic” and “Climate change is caused by greenhouse gases.” The seven other categories were about different climate policy issues/instruments: “Legislation that regulates carbon dioxide emissions will not hurt the economy,” “Legislation should establish a market for carbon emissions (cap and trade),” “Legislation should establish a carbon tax,” “The Federal government (not states) should take the lead on climate policy,” “Climate change (or failing to address climate change) poses a security threat,” “States should accept the Clean Power Plan,” and “The US should meet or exceed the 26%-28% emissions reduction target by 2025 against a 2005 baseline (per the Paris agreement).”

Supporting and rejecting statements on the respective items were coded; the absence of positive or negative statements or a combination of supporting and rejecting statements was assumed to reflect neutral/ambivalent positions on a three-point scale. The surveys asked attitudinal questions on the same nine items, using a five-point Likert scale which was later collapsed into a three-point scale (agreement, neutrality, disagreement) to match the scales of the other two sources. This data collection strategy makes the three data sources as comparable as possible despite the different ways opinions are uttered in these arenas.
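The harmonization of the three sources onto one three-point scale can be illustrated with a minimal sketch. The numeric coding (-1, 0, 1), the function names, and the assumption that Likert points 1-2 map to disagreement and 4-5 to agreement are illustrative choices and are not taken from the original coding scheme.

```python
from typing import Iterable

# Hypothetical numeric coding: -1 = disagreement, 0 = neutral/ambivalent, 1 = agreement.


def collapse_likert(response: int) -> int:
    """Collapse a five-point Likert response (1..5) into the three-point scale.

    Assumes 1 = strongly disagree and 5 = strongly agree (an assumption,
    not stated in the source).
    """
    if response not in (1, 2, 3, 4, 5):
        raise ValueError(f"expected a Likert value in 1..5, got {response!r}")
    if response <= 2:
        return -1
    if response == 3:
        return 0
    return 1


def code_statements(statements: Iterable[int]) -> int:
    """Aggregate content-coded statements on one item (+1 supporting, -1 rejecting).

    No statements, or a mix of supporting and rejecting statements,
    is treated as neutral/ambivalent.
    """
    stances = set(statements)
    if stances == {1}:
        return 1
    if stances == {-1}:
        return -1
    return 0


# Example: one survey answer and one actor's coded statements on the same item.
survey_position = collapse_likert(4)            # agreement
testimony_position = code_statements([1, -1])   # mixed statements -> neutral
```

Both functions return values on the same scale, so positions from surveys, testimony, and tweets can enter one measurement model as the same item.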

A joint ordinal item response model was estimated across the 28 actors and their two to three arenas each (yielding 78 ideological/ability parameters for comparison). Item response models translate higher-dimensional preferences by actors into positions on a latent ideology dimension. The ordinal IRT model was defined in the Bayesian programming environment JAGS and estimated using Bayesian Gibbs sampling with uninformative normal priors for the parameters. For full methodological details, see our Supplementary Information.
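To make the link between a latent position and a three-point response concrete, the following sketch computes category probabilities under a cumulative-logit (graded response) parameterization. This is one common form of an ordinal IRT model and is assumed here for illustration; the exact specification, priors, and JAGS code used in the analysis are described in the Supplementary Information and are not reproduced. All parameter names and values are hypothetical.

```python
import math
from typing import List, Sequence


def logistic(x: float) -> float:
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))


def category_probabilities(
    ability: float, discrimination: float, cutpoints: Sequence[float]
) -> List[float]:
    """Return P(y = k) for each ordered category of one item.

    Uses P(y <= k) = logistic(cutpoint_k - discrimination * ability),
    with cutpoints sorted in ascending order. Two cutpoints give the
    three categories disagreement, neutrality, and agreement.
    """
    if list(cutpoints) != sorted(cutpoints):
        raise ValueError("cutpoints must be in ascending order")
    cumulative = [logistic(c - discrimination * ability) for c in cutpoints]
    bounds = [0.0] + cumulative + [1.0]
    return [bounds[k + 1] - bounds[k] for k in range(len(bounds) - 1)]


# Hypothetical item: discrimination 1.0 and cutpoints at -1 and 1.
probs_center = category_probabilities(0.0, 1.0, [-1.0, 1.0])
probs_high = category_probabilities(2.0, 1.0, [-1.0, 1.0])
```

With a positive discrimination parameter, a higher ability value shifts probability mass toward the highest category. Whether agreement with an item corresponds to a left- or right-leaning position depends on the sign of the item's estimated discrimination.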

Each actor–arena combination was weighted equally in the estimation and based on the same nine items. This setup enables us to compare source-specific ideal points within each actor as well as actor-type-specific ideal points within sources as if each actor–arena combination was a separate actor. In an additional robustness check, we estimated the six missing actor–arena combinations as parameters from the data to achieve a balanced panel, yielding posterior distributions corresponding to the uninformative priors for these parameters and no changes in the estimated results for the remaining parameters.

Samples from the full posterior distribution of the ability parameters for actors in arenas were then used as input data to regress ability on actor type and other covariates, using a standard Bayesian linear model with uninformative priors in JAGS. Summary statistics were generated to describe differences in estimated opinion between arenas and between actor types. To aid interpretation, predicted ideologies were generated based on the regression model for several hypothetical scenarios.
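The summary-statistics step can be sketched as follows: given posterior draws of an ability parameter, compute the posterior mean and an equal-tailed 95% credible interval, and compare two arenas draw by draw so that uncertainty carries over into the difference. This covers only the descriptive summaries, not the Bayesian regression itself. The helper names and the toy draws are hypothetical and are not the study's data.

```python
import statistics
from typing import Dict, List, Sequence


def percentile(sorted_values: Sequence[float], q: float) -> float:
    """Linear-interpolation percentile for q in [0, 1] on a pre-sorted sequence."""
    position = q * (len(sorted_values) - 1)
    lower = int(position)
    upper = min(lower + 1, len(sorted_values) - 1)
    weight = position - lower
    return sorted_values[lower] * (1 - weight) + sorted_values[upper] * weight


def summarize(draws: Sequence[float]) -> Dict[str, float]:
    """Posterior mean and equal-tailed 95% credible interval from MCMC draws."""
    ordered = sorted(draws)
    return {
        "mean": statistics.fmean(ordered),
        "lower": percentile(ordered, 0.025),
        "upper": percentile(ordered, 0.975),
    }


def arena_difference(draws_a: Sequence[float], draws_b: Sequence[float]) -> List[float]:
    """Per-draw difference between two arenas for the same actor."""
    if len(draws_a) != len(draws_b):
        raise ValueError("both arenas need the same number of posterior draws")
    return [a - b for a, b in zip(draws_a, draws_b)]


# Toy draws (made up) for one actor in two arenas.
twitter_draws = [-0.6, -0.4, -0.5, -0.3, -0.7]
survey_draws = [-0.1, 0.0, -0.2, 0.1, -0.3]
difference_summary = summarize(arena_difference(twitter_draws, survey_draws))
```

If the resulting interval for the difference excludes zero, the two arenas differ for that actor at the chosen credibility level.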

Results

Figure 1 reports the mean values of the estimated ability parameters and their 95% credible intervals. Table 1 summarizes these ideological positions by actor type. On average, actors have negative (left-leaning) ideological scores on Twitter (mean posterior ability

Figure 1. Ideological positions and credible intervals.

Table 1. Summary statistics for ideological scaling (means and 95% credible intervals).

Positions on the left are (slightly) more extreme on Twitter (

Hence, while the first part of the second expectation is consistent with the evidence, it is not true more generally that ideology displayed on Twitter is more extreme than in other arenas on either side of the political spectrum.

Both on the left and right, positions are more extreme in the survey

The overall evidence suggests that

The mean ideal points of actor types vary across sources (Table 1). Scientists

Bayesian linear regression models for the three arenas.

a: Zero is outside of the 95% Bayesian credible interval. Reference group for actor types: Government.

Consistent with the previous findings, the intercept in the Twitter model (

Individuals are more right-leaning than organizations on Twitter but are more left-leaning in the survey and Congress. The latter findings might indicate that organizational behavior is more influenced by arena effects than individual behavior. Organizations will state a progressive opinion on Twitter to attract a progressive audience. When they engage with politicians in Congress, however, organizations are more moderate.

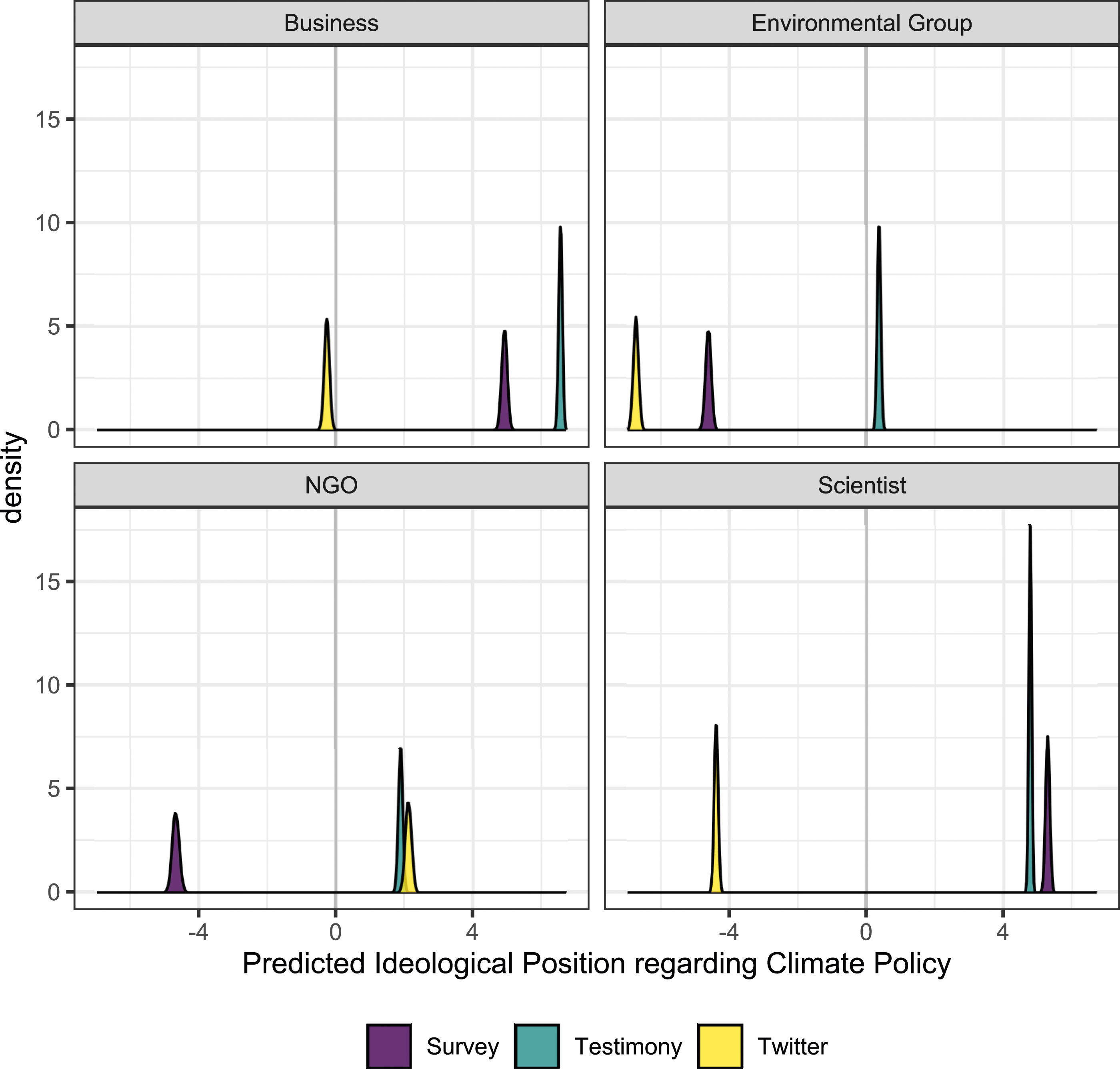

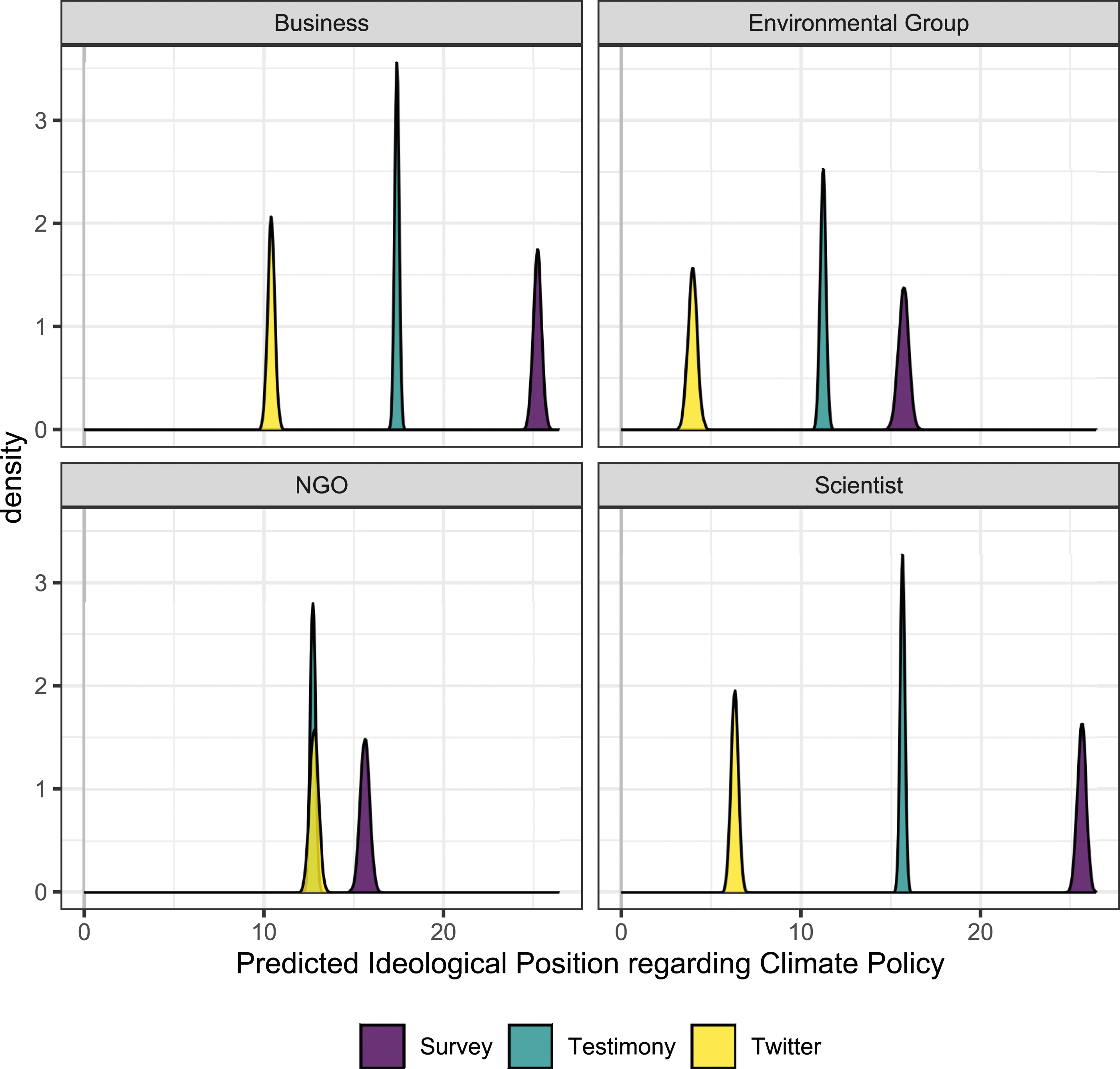

To aid the interpretation further, we computed predicted ideologies for three different scenarios based on the posterior distributions of the regression models. In the first scenario, the ideology of the respective arena was predicted by holding the ideology in the other two arenas constant at their means (Figure 2). Separate predictions were generated per actor type. For instance, survey ideology was predicted for each actor type when the actor type was assumed to have an average Twitter ideology (relative to the distribution of Twitter ideologies) and an average testimony ideology. In the second scenario, the two other ideologies were held constant at the value corresponding to the actor with the highest ideology value in the respective arena, that is, assumed to be maximal per source (Figure 3). In the third scenario, the minimum observed values were used (Figure 4). In all scenarios, the “Individual” variable was set to the modal value (0 or 1) of the respective actor type, leading to 1 for SCI and 0 for all other actor types. The three scenarios reveal what ideologies each actor type would display in each arena if they were ideologically moderate overall, if they were very right-leaning, or if they were very left-leaning.

Figure 2. Predicted ideologies for mean ideological values per source.

Figure 3. Predicted ideologies for maximum ideological values per source.

Figure 4. Predicted ideologies for minimum ideological values per source.
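The scenario logic can be illustrated with a small sketch of a linear prediction for one posterior draw of regression coefficients. It assumes that each arena's model contains an intercept, the ideology in the other two arenas, actor-type indicators with Government as the reference group, and the “Individual” indicator, as the text suggests. The dictionary layout, the function name, and all coefficient values are hypothetical.

```python
from typing import Dict, Mapping


def predict_ideology(
    draw: Mapping[str, object],
    other_arena_values: Mapping[str, float],
    actor_type: str,
    individual: int,
) -> float:
    """Linear prediction of ideology in one arena for a single posterior draw."""
    prediction = float(draw["intercept"])
    # Ideology in the other two arenas, held at scenario values (mean, max, or min).
    for arena, value in other_arena_values.items():
        prediction += draw["arena"][arena] * value
    # The reference group (Government) has no coefficient and contributes 0.
    prediction += draw["actor_type"].get(actor_type, 0.0)
    prediction += draw["individual"] * individual
    return prediction


# One made-up posterior draw for a hypothetical Twitter model.
draw: Dict[str, object] = {
    "intercept": -0.5,
    "arena": {"survey": 0.4, "testimony": 0.2},
    "actor_type": {"SCI": 0.1},
    "individual": 0.3,
}

# Made-up scenario values for the other two arenas.
scenario = {"survey": 1.0, "testimony": -1.0}
sci_prediction = predict_ideology(draw, scenario, "SCI", individual=1)
gov_prediction = predict_ideology(draw, scenario, "Government", individual=0)
```

Repeating the prediction over all posterior draws and summarizing the results yields a predicted ideology with a credible interval for each actor type and scenario.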

The average/moderate predictions (Scenario 1; Figure 2) yield a picture that is consistent with the descriptive results, with Twitter and surveys being to the left of testimony across actor types, except for NGOs. For very right-leaning actors of each type (Scenario 2; Figure 3), all three arenas are predicted to be in the positive range, with Twitter generally being more moderate than testimony and surveys taking the most extreme ideological position, which is again consistent with the descriptive evidence reported above. That is, even right-leaning actors convey moderate stances on social media to appeal to the left-skewed audience. For very left-leaning actors of each type (Scenario 3; Figure 4), Twitter occupies a more moderate position than surveys. We interpret this finding to mean that very left-leaning actors moderate their expressed speech on social media while they can speak relatively freely in surveys due to the lack of a public audience. Surveys thus occupy the outer position at both the left and right extremes of the spectrum.

Conclusion

Through our structured analysis of data collected from a diversity of sources around the same topic over the same time period, this paper sheds light on arena effects for three selected sources. The self-presentation of different actor types is connected to their strategic aims on different platforms with respect to the expectations of the platform’s audience. These arena effects then play a role in how left- or right-leaning an actor appears on contested issues like climate change and to what extent they self-censor.

By applying a novel methodology for measurement that compares the ideological positions of the same political elites on the same issues but across different arenas, we show that on average, actors are more left-leaning on Twitter and in surveys than in Congressional testimony. However, the more they lean either to the left or right, the more they tend to avoid expressing radical stances in public arenas, such as on social media or in Congress, presumably for fear of negative public reactions that potentially threaten their overall aims. In other words, arena effects are tightly bound to the specific audience that is seen as consuming the different sources of information. Public arenas such as Twitter and Congress are connected to audiences with different demographics and ideologies who might sanction individuals for unfavorable preferences. In contrast, the survey audience is mostly represented by the interviewers and the scientific community. This audience might also provoke certain arena effects (e.g., social desirability, for an overview, see Horiuchi et al. 2022). However, these effects are likely to impact the overall credibility and strategic behavior of actors to a lesser extent than public arenas (Ecker et al., 2022). As a result, surveys would be the best choice to elicit the ground truth as the audience costs are smaller.

Scholars should more explicitly account for arena effects when selecting and analyzing source materials in elite opinion research. Furthermore, journalists and the public need to understand that none of the sources necessarily reflect revealed preferences (Jerolmack and Khan 2014). Future studies should examine if these arena effects can be identified for other contested issue areas and how other sources compare. We chose a single contested issue area in one country, and additional research needs to establish to what extent the arena effect depends on context. Qualitative interviews might offer insights into the underlying mechanisms, about which we can only speculate. The data presented here were collected before the global climate strikes in 2019, which boosted climate skeptic activity on social media and fueled ideological polarization towards climate change politics (Falkenberg et al., 2022). Future research should also examine if the same arena effects are still at work around the climate issue as it becomes even more polarized (Egan and Mullin 2024).

Regarding the social media arena effect, our data were collected during the period when Twitter was seen as a relatively open arena for public discourse, and well before Elon Musk purchased Twitter, which led to substantial changes in the way the platform was run, who engaged on it, and the data availability from it. Research also must investigate how these changes have affected who participates and how they present themselves on this particular social media platform. More generally, the social media landscape has become broader and more fractured in recent years. Social media platforms may differ in their specific biases and arena effects. Research using social media data to infer ideological positions of elites needs to consider the arena effect associated with the respective medium. Our analysis has linked mechanisms related to audience costs and actor types to the ideological positions taken by actors privately and in the public sphere. These findings can guide the development of expectations around arena effects in other media as well.

Supplemental material

Supplemental Material - Ground-truthing political elites in the public sphere: Measuring the arena effects of elite opinion

Supplemental Material for Ground-truthing political elites in the public sphere: Measuring the arena effects of elite opinion by Tim Henrichsen, Philip Leifeld, Lorien Jasny, Iain Weaver and Dana R. Fisher in Research & Politics.

Footnotes

Acknowledgments

The authors would like to thank Ryan Bakker for helpful advice in the initial stages of the project, and Amanda Dewey, Ann H. Dubin, Anya Galli Robertson, Joseph McCartney Waggle, and William Yagatich for their research assistance during the data collection stage of the project. Any errors remain our own.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the MacArthur Foundation (#G-1604-150842 and #G-16-1609-151514-CLS). The funding source had no involvement in research design, data collection, analysis, or interpretation of these findings.

References
