Abstract

What can researchers do to address anomalous survey and experimental responses on Amazon Mechanical Turk (MTurk)? Much of the anomalous response problem has been traced to India, and several survey and technological techniques have been developed to detect foreign workers accessing US-specific surveys. We survey Indian MTurkers and find that 26% pass survey questions used to detect foreign workers, and 3% claim to be located in the United States. We show that restricting respondents to Master Workers and removing the US location requirement encourages Indian MTurkers to correctly self-report their location, helping to reduce anomalous responses among US respondents and to improve data quality. Based on these results, we outline key considerations for researchers seeking to maximize data quality while keeping costs low.

Introduction

Amazon Mechanical Turk (MTurk) is a popular and inexpensive way for scholars, particularly those interested in American politics, to gather survey responses. Much of MTurk’s value, however, rests on drawing respondents from the target population of individuals located in the US and obtaining high-quality responses. In 2018, researchers noticed an increase in submissions to MTurk tasks, known as human intelligence tasks (HITs), that were anomalous (they did not fit requester expectations), that came from foreign, primarily Indian, workers using virtual private servers (VPSs) to circumvent survey location restrictions, or both (Chmielewski and Kucker, 2020). Indians comprise a major part of the MTurk workforce, so addressing this problem is of paramount importance.

Two sets of solutions have been developed to detect and resolve these issues: question batteries designed to uncover anomalous responses (Barends and de Vries, 2019; Buchanan and Scofield, 2018) and location-based methods to block IP addresses of foreign workers attempting to access US-specific HITs (Ahler et al., 2019; Kennedy et al., 2020). We know that foreign workers often answer these question batteries in the same ways as do US respondents (Kennedy et al., 2020). Further, we also know that foreign workers successfully circumvent location-based detection methods, including VPS detection (Dennis et al., 2020; MacInnis et al., 2020). Therefore, neither of these solutions can successfully identify foreign workers who provide non-anomalous responses and appear to be located in the US (Dennis et al., 2020).

Foreign respondents who access US-specific surveys undetected may respond randomly, adding random noise to survey results (Kennedy et al., 2020). More likely, the cultural experiences of foreign workers result in responses that are systematically different from those of US workers. For example, we asked survey respondents to list the three most important government positions. Although most US respondents listed the President, Vice President, and Speaker of the House, 10% of Indian respondents listed the head of the post office. Had these Indian respondents accessed a US-based survey, we would have incorrectly interpreted US public opinion. As such, ensuring that only US workers complete US-specific HITs is a high priority for maintaining MTurk data quality.

We surveyed Indian MTurkers (N=302) and show that 26% of respondents do not provide anomalous responses and, therefore, could access US-specific surveys without being identified, thereby contaminating the MTurk US survey pool. Indeed, 3% of our respondents on Indian MTurk claimed to be taking the survey from a US state, suggesting that they regularly access US-specific surveys. We identify characteristics of workers who provide anomalous responses.

As Indian MTurkers can pass question batteries and circumvent IP address detection, we develop a simple solution to improve US-specific HIT quality: using Master Workers, removing HIT location restrictions, and asking respondents to self-identify their country of residence. We implement this solution, alongside a battery of pilot tested questions designed to induce different answers among US and foreign respondents. The results (N=302) indicate that over 87% of Indian MTurkers honestly self-report their location. Only four respondents used a VPS, and at most 3% of respondents with US-specific IP addresses were potentially foreign respondents, compared with the 20–30% of suspected foreign respondents detected in surveys with US-specific location restrictions (Ahler et al., 2019; Dennis et al., 2020). Based on our results, we provide guidance for researchers designing MTurk HITs who face trade-offs between data quality and cost.

Anomalous responses

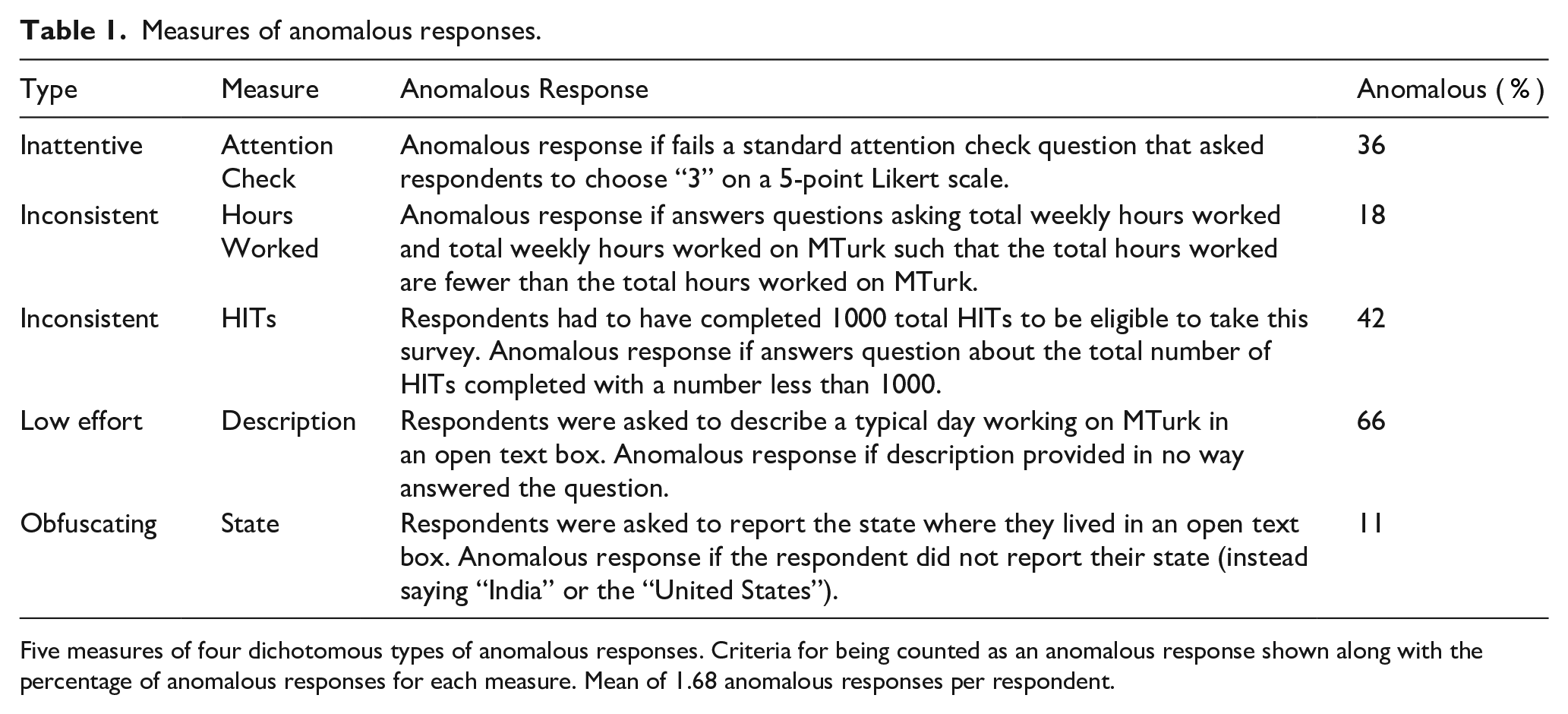

We conducted a survey asking Indian MTurkers about their descriptive characteristics, work on MTurk, and communication patterns. Embedded in our survey were five measures of anomalous responses (Table 1), which we argue represent the main anomalous responses requesters observe when conducting surveys and experiments on MTurk. A total of 36% of respondents failed our attention check question. We developed two measures to assess whether respondents provided consistent answers: 18% reported working more hours on MTurk per week than the total number of hours worked and 42% reported having completed fewer HITs than were required to be eligible for the survey. Fully 66% of respondents did not write a coherent description of their typical day working on MTurk, our measure of effort. There is also evidence that Indian MTurkers use location-based technology to access US surveys: 11% obfuscated their location and 3% claimed to live in a US state, even though the survey was only available to MTurkers located in India. Overall, although 74% of Indian MTurkers failed at least one of these five measures, 26% did not, meaning that their non-anomalous responses would go undetected if they accessed US-specific surveys.

Table 1. Measures of anomalous responses.

Five measures of four dichotomous types of anomalous responses. Criteria for being counted as an anomalous response shown along with the percentage of anomalous responses for each measure. Mean of 1.68 anomalous responses per respondent.
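To illustrate how the five measures could combine into the share of respondents failing at least one of them, the following is a minimal sketch rather than the coding scheme used in the study. The field names, the `MIN_HITS_REQUIRED` threshold, and the exact failure criteria are assumptions made for illustration; the criteria actually used are those listed in Table 1.

```python
from dataclasses import dataclass

# Hypothetical eligibility threshold; the survey's actual HIT requirement is not stated here.
MIN_HITS_REQUIRED = 1000


@dataclass
class Response:
    # All field names are illustrative assumptions, not the authors' variable names.
    passed_attention_check: bool
    mturk_hours_per_week: float
    total_hours_per_week: float
    hits_completed: int
    coherent_description: bool
    location_in_india: bool  # False if the location was obfuscated or given as a US state


def anomaly_flags(r: Response) -> dict:
    """Return one boolean per measure; True means the response counts as anomalous."""
    return {
        "attention": not r.passed_attention_check,
        "hours_consistency": r.mturk_hours_per_week > r.total_hours_per_week,
        "hits_consistency": r.hits_completed < MIN_HITS_REQUIRED,
        "effort": not r.coherent_description,
        "location": not r.location_in_india,
    }


def share_with_any_anomaly(responses: list) -> float:
    """Share of respondents who fail at least one of the five measures."""
    flagged = sum(any(anomaly_flags(r).values()) for r in responses)
    return flagged / len(responses)
```

Summing the flags per respondent and averaging over the sample would give the mean number of anomalous responses per respondent in the same way.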

What is more, respondents who provided one or more anomalous responses were more likely to engage in other forms of inattentive behavior like satisficing and hurrying (see Supplemental information SI.2). Taken together, these results emphasize the need to identify and to reduce the number of foreign respondents in US-based surveys: they are not the target population, and a high number of anomalous responses can negatively affect data quality.

Explaining anomalous responses

Who provides anomalous responses? We use Bayesian model averaging (BMA) (Montgomery and Nyhan, 2010) to explore relationships between respondent demographic and workplace characteristics and anomalous responses. BMA generates all possible model specifications and determines the proportion of models where each independent variable is included (the posterior inclusion probability (PIP)).
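The idea behind a posterior inclusion probability can be sketched in a few lines. The example below is not the authors' implementation: it assumes a linear model, a uniform prior over models, and the common BIC approximation to posterior model probabilities, and the function names are hypothetical. It enumerates every subset of predictors, weights each model by exp(-BIC/2), and sums the weights of the models that contain each variable.

```python
import itertools
import math


def ols_rss(X, y):
    """Residual sum of squares from least squares of y on the columns of X plus an intercept."""
    n = len(y)
    rows = [[1.0] + list(r) for r in X]
    k = len(rows[0])
    # Build the augmented normal equations [X'X | X'y].
    M = [
        [sum(rows[i][a] * rows[i][b] for i in range(n)) for b in range(k)]
        + [sum(rows[i][a] * y[i] for i in range(n))]
        for a in range(k)
    ]
    # Gaussian elimination with partial pivoting.
    for col in range(k):
        piv = max(range(col, k), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, k):
            f = M[r][col] / M[col][col]
            for j in range(col, k + 1):
                M[r][j] -= f * M[col][j]
    # Back substitution for the coefficients.
    beta = [0.0] * k
    for a in reversed(range(k)):
        tail = sum(M[a][j] * beta[j] for j in range(a + 1, k))
        beta[a] = (M[a][k] - tail) / M[a][a]
    return sum(
        (y[i] - sum(rows[i][a] * beta[a] for a in range(k))) ** 2 for i in range(n)
    )


def posterior_inclusion_probabilities(X, y, names):
    """Approximate PIPs by enumerating all predictor subsets and weighting by BIC."""
    n, p = len(y), len(names)
    bics = {}
    for size in range(p + 1):
        for subset in itertools.combinations(range(p), size):
            Xs = [[row[j] for j in subset] for row in X]
            rss = ols_rss(Xs, y)
            # BIC for a Gaussian linear model, up to an additive constant.
            bics[subset] = n * math.log(rss / n) + (len(subset) + 1) * math.log(n)
    best = min(bics.values())
    weights = {m: math.exp(-0.5 * (b - best)) for m, b in bics.items()}
    total = sum(weights.values())
    return {
        names[j]: sum(w for m, w in weights.items() if j in m) / total
        for j in range(p)
    }
```

Full enumeration is only practical for a small number of candidate variables, since the number of models doubles with each added predictor; dedicated BMA software typically handles larger model spaces and other priors.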

There are three key variables that differentiate Indian MTurkers providing anomalous and non-anomalous responses (see Supplemental information SI.3). Respondents who received help starting on MTurk and those who have household members working on MTurk were more likely to provide an anomalous response. Respondents who had worked on MTurk longer were less likely to provide an anomalous response. Experienced workers know how to navigate the cutthroat MTurk system by forming relationships, sharing information only with key friends, and treating work on MTurk as a way to earn some extra money while doing HITs of their choosing. Much of the work available to Indian MTurkers is repetitive, particularly low paying, and does not value worker experience. Hence, these experienced MTurkers are more likely both to be interested in completing US-specific surveys undetected and to have the skills to do so.

Addressing anomalous responses

How can requesters incentivize high-quality Indian workers who pass all five measures of anomalous responses not to take US-specific surveys when they could do so successfully? Based on our experience working with Indian MTurkers, we propose limiting the respondent pool to Master Workers and removing all location-specific requirements (see Supplemental information SI.4). Although foreign respondents use increasingly advanced methods to circumvent technology designed to prevent them from accessing US-based surveys, workers in our approach have no incentive to obfuscate their location. Requesters can then filter out non-US-specific responses after the HIT is complete.

We test this design by fielding a survey among Master Workers with no location requirement (N=302). The survey was similar to our first survey with the addition of questions asking about the respondents’ country of residence and six pilot tested questions designed to elicit different responses among US and Indian workers. See Supplemental information SI.5 for details. Only four respondents (1.32%, three in the US and one in India) used a VPS. Among Indian respondents, 87.95% self-reported that they were in India. None of the five Indian respondents who claimed to live in the US masked their IP address, so their location was easily detected using IP address detection techniques.
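The post hoc filtering step in this design can be sketched as follows. This is an illustrative sketch only: the record fields `self_reported_country` and `ip_country` are hypothetical names, and the IP-based country is assumed to have been looked up separately with whatever geolocation tool the requester uses.

```python
def partition_by_location(responses):
    """Split responses into US, self-identified foreign, and mismatched groups.

    Each response is a dict with the hypothetical keys 'self_reported_country'
    and 'ip_country', both holding country codes such as 'US' or 'IN'.
    """
    groups = {"us": [], "foreign": [], "mismatch": []}
    for r in responses:
        reported, ip = r["self_reported_country"], r["ip_country"]
        if reported != ip:
            # Self-report and IP disagree, e.g. claims the US from an Indian IP.
            groups["mismatch"].append(r)
        elif reported == "US":
            groups["us"].append(r)
        else:
            groups["foreign"].append(r)
    return groups
```

Keeping the self-identified foreign responses in their own group, rather than discarding them, leaves them available for later comparison with anomalous US responses.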

We want to know whether any respondents whose IP address locates them in the US are actually Indian respondents who circumvented VPS and anomalous response checks. Note that existing IP address detection methods cannot detect these respondents because their IP address indicates that they are in the US. A total of 81.43% of respondents with IP addresses in the US passed all four measures of anomalous responses and all six items used to differentiate US and Indian respondents. Of the 15.71% who passed nine of ten measures, there was no pattern in these errors, suggesting that they were in fact respondents in the US who made a mistake.

Six respondents (3.51%) passed eight or fewer questions. This is a substantially smaller percentage of potentially foreign respondents than identified in previous surveys (Ahler et al., 2019; Dennis et al., 2020). These respondents largely passed our items designed to determine whether they were US residents, but received a lower score because they provided anomalous responses to other questions. There is some chance that these respondents are foreign workers taking US-specific surveys and circumventing VPS detection, but it is more likely that they are either inattentive US citizens or people not fluent in English.
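The scoring logic described above, which groups respondents with US IP addresses by how many of the ten checks they pass, can be expressed as a short sketch. The labels and function names are hypothetical, and the cut-offs simply mirror the three groups discussed in the text (all ten, nine of ten, eight or fewer).

```python
from collections import Counter


def classify_by_checks(passed_checks, total_checks=10):
    """Label a respondent by the number of checks passed (a list of booleans)."""
    if len(passed_checks) != total_checks:
        raise ValueError("expected one pass/fail value per check")
    n_passed = sum(passed_checks)
    if n_passed == total_checks:
        return "passed_all"
    if n_passed == total_checks - 1:
        return "one_error"
    return "review"  # eight or fewer: inspect as a potentially foreign respondent


def summarize(all_respondents):
    """Return the share of respondents in each group."""
    counts = Counter(classify_by_checks(p) for p in all_respondents)
    n = len(all_respondents)
    return {label: counts[label] / n for label in ("passed_all", "one_error", "review")}
```

For respondents in the middle group, inspecting which single check was failed is what allows a requester to look for the kind of pattern in errors that the text describes.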

Discussion and conclusion

Limiting survey respondents to Master Workers and removing location requirements disincentivizes Indian respondents from claiming to be located in the US and from gaining access to US-specific work. Although few Indian MTurkers are Master Workers, opening surveys designed for US participants to foreign workers still raises costs in exchange for greater confidence that US respondents can be identified successfully. Table 2 describes common ways researchers restrict HITs to certain types of workers and use analytic techniques to filter out non-US-based respondents (see Supplemental information SI.6 for details). The table shows that more stringent analytic techniques cost more while increasing confidence that US-based respondents can be identified successfully.

Table 2. Strategies to reduce anomalous responses.

HIT Restrictions are Amazon features to restrict survey eligibility. We assume all HITs are restricted to an approval percentage above 98%. Analytic techniques are requester strategies to filter out anomalous responses. We assume that IP address detection occurs after the survey is complete. Cost pp. is the estimated cost per non-anomalous, US response. The cost estimate is based on a $1.00 payment, 30% of non-Master and 20% of Master Workers providing anomalous responses, 3% of workers using a VPS, and 80% of Master Workers being from the US. Fees: 20% Amazon fee plus 5% Master Worker fee.
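One way to see how these inputs could translate into a cost per usable response is the sketch below. The rates are taken from the table note, but the formula itself is an assumption: it treats anomalous responding, VPS use, and non-US location as independent screening stages, and it may not reproduce the figures in Table 2, whose derivation is given in Supplemental information SI.6.

```python
def cost_per_usable_response(payment, fee_rate, anomalous_rate, vps_rate, us_share):
    """Estimated cost per non-anomalous US response under an independence assumption.

    payment:        amount paid to each worker, in dollars
    fee_rate:       platform fees as a fraction of the payment
    anomalous_rate: share of submissions with anomalous responses
    vps_rate:       share of submissions flagged for VPS use
    us_share:       share of submissions from workers located in the US
    """
    if not (0.0 <= anomalous_rate < 1.0 and 0.0 <= vps_rate < 1.0):
        raise ValueError("rates must lie in [0, 1)")
    if not 0.0 < us_share <= 1.0:
        raise ValueError("us_share must lie in (0, 1]")
    cost_per_submission = payment * (1.0 + fee_rate)
    usable_share = (1.0 - anomalous_rate) * (1.0 - vps_rate) * us_share
    return cost_per_submission / usable_share


# Master Workers with no location restriction: 20% Amazon fee plus 5% Master Worker fee.
master_open = cost_per_usable_response(
    payment=1.00, fee_rate=0.25, anomalous_rate=0.20, vps_rate=0.03, us_share=0.80
)
```

Under this simplification, every response that is later filtered out raises the effective price of the responses that remain, which is the sense in which more stringent screening costs more.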

We focus on the last three rows of the table, where confidence is highest. Surveys open to all respondents will have more anomalous responses than surveys restricted to Master Workers, both because non-Master Workers are less attentive and because foreign workers can access US-based surveys. These factors make it worth restricting survey respondents to Master Workers, who Loepp and Kelly (2020) show are not demographically different from non-Master Workers. Dropping the location restriction means collecting responses from foreign workers, which increases costs, but provides the surest way to discourage foreign workers from obfuscating their location or circumventing location requirements. This technique also allows requesters to compare self-identified foreign respondents to anomalous US responses to see whether anomalous US responses are likely to be from foreign workers, which validates the quality of the sample.

Requesters should think carefully about the trade-off between confidence and cost when selecting a survey approach. We test a method that removes existing incentives for foreign workers to defeat location blocking technologies. As such, requesters need not continue to invest in trying to block foreign workers from US-based surveys and instead can focus on building positive, long-lasting relationships with reliable MTurk workers. Obtaining foreign survey responses for requesters only interested in US respondents is an added cost that may not be feasible for some requesters, but the ability to compare responses from self-identified foreign workers to anomalous US-based respondents adds a new layer of previously unidentified data quality checks. Our work suggests that carefully considering the incentives and motivations of foreign respondents to access US-based surveys can lead to new data quality solutions that are beneficial for both requesters and workers.

Supplemental Material

Supplemental material, sj-pdf-1-rap-10.1177_20531680211016971 for Anomalous responses on Amazon Mechanical Turk: An Indian perspective by William O’Brochta and Sunita Parikh in Research & Politics

Footnotes

Acknowledgements

We thank the Editor and Reviewers, Mathangi Krishnamurthy, Chris Lucas, and Bryant Moy for their comments and suggestions.

Correction (June 2025):

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Weidenbaum Center on the Economy, Government, and Public Policy.

Notes

Carnegie Corporation of New York Grant

This publication was made possible (in part) by a grant from the Carnegie Corporation of New York. The statements made and views expressed are solely the responsibility of the author.
