Abstract

The prevalence of political innumeracy – or ignorance of politically relevant numbers – is well-documented. However, little is known about its consequences. We report on three original survey experiments in which respondents were randomly assigned to see correct information about the racial composition of the US population, median income and educational attainment, and the unemployment and poverty rates. Although estimates of these quantities were frequently far from the truth, providing correct information had little effect on attitudes toward relevant public policies.

In Innumeracy (1988: 3), John Allen Paulos asserts that “innumeracy, an inability to deal comfortably with the fundamental notions of number and chance, plagues far too many otherwise knowledgeable citizens.” For many citizens, innumeracy means unfamiliarity with politically relevant numbers. Is this lack of familiarity consequential? Would creating “numeracy” change political attitudes? Investigations of innumeracy and political ignorance have done more to map their contours than to demonstrate their consequences. But as Lupia (2006) argues, it is important to justify why any particular fact is important to know. One way is to show that learning facts changes opinions.

We investigate the consequences of remediating innumeracy. The paper makes two central contributions. First, we extend previous studies by examining unexplored or under-explored topics: knowledge of the racial composition of the US population, median income, educational attainment, the unemployment rate, and the poverty rate.

Second, we conduct three original survey experiments to investigate whether providing individuals with correct information actually affects opinions. Some previous work on innumeracy has examined correlations among numerical estimates and political attitudes. However, there have been few tests of what happens when erroneous estimates are corrected.

We find that correct information has little effect on related political attitudes, even when that information corrects serious misperceptions. However lamentable ignorance of political facts may be, our results suggest that remediating this ignorance may not affect actual opinions.

Innumeracy and its (potential) consequences

Although scholars debate the extent of the public’s factual knowledge of politics (e.g. Delli Carpini and Keeter, 1996; Gibson and Caldeira, 2009; Lupia, 2006; Luskin and Bullock, 2011; Prior and Lupia, 2008), certainly a large proportion of the public does not know many different political facts, which in turn suggests that “enlightening” them could change their attitudes. This conclusion applies to political innumeracy in particular. Many citizens do not accurately estimate quantities related to population demographics (Alba et al., 2005; Citrin and Sides, 2008; Herda, 2013; Kuklinski et al., 2000; Morales, 2011; Nadeau et al., 1993; Theiss-Morse, 2003), macroeconomic statistics (Conover et al., 1986; Holbrook and Garand, 1996; Sigelman and Yanarella, 1986), and the federal budget and other quantities related to public policies (Berinsky, 2007; Gilens, 1999; Kuklinski et al., 2000; Kull, 1995–96).

Why should these kinds of factual misperceptions affect attitudes? One reason is that attitudes may be distillations of relevant beliefs, where beliefs are “all thoughts that people have about attitude objects” (Eagly and Chaiken, 1993: 11). As Eagly and Chaiken put it: “The assumption is common among attitude theorists that people have beliefs about attitude objects and that these beliefs are in some sense the basic building blocks of attitudes” (103). For example, attitudes toward public policies depend on beliefs about the beneficiaries of those policies, such as their deservingness (e.g. Iyengar, 1991). Similarly, beliefs about the importance of social problems, as manifested in estimates of quantities like the unemployment rate, could affect attitudes about the government’s response.

If beliefs are the building blocks of attitudes, then changing beliefs could change attitudes. A variety of psychological theories speak to this possibility, but among the most successful and durable is Festinger’s (1957) dissonance theory. Festinger argues that when people hold beliefs that imply divergent or opposite conclusions, they tend to change one or more of those beliefs to bring them into greater agreement. Correcting a factual misperception may induce dissonance and thereby lead people to change other attitudes. For example, after finding out that a social problem is more or less serious than you initially believed, you may think differently about how the government should respond to the problem.

However, other theories suggest that attempts to correct misperceptions would not necessarily change attitudes. For one, misperceptions can be stubborn. The estimates of politically relevant numbers noted earlier are far from random guesses. They vary systematically with cognitive ability as well as contextual information (see Citrin and Sides, 2008; Herda, 2010; Hutchings, 2003; Jerit et al., 2006; Luskin, 1990; Nadeau and Niemi, 1995; Nadeau et al., 1993; Sigelman and Niemi, 2001; Wong, 2007) and are often held with considerable certainty (Kuklinski et al., 2000).

Second, people tend to resist changing their attitudes. Festinger noted that people often avoid information that conflicts with existing beliefs. And when that information is impossible to avoid, people may ignore it, discount it, or rationalize it away (Lodge and Taber, 2013). For example, Gaines et al. (2007) describe how Democrats and Republicans correctly perceived that casualties in the Iraq War were increasing over time, but interpreted that fact differently – with Republicans more likely to perceive the number of casualties as moderate or small. Correcting misperceptions can even “backfire” by worsening misperceptions among those least predisposed to believe the correct information (Nyhan and Reifler, 2010). In these studies, beliefs are not so much building blocks of attitudes as consequences of them. People shape their perceptions of fact to fit the opinions they already hold.

These competing theories suggest that correcting information could be effective or ineffective. It is perhaps no surprise, then, that the evidence is mixed. Misperceptions can be correlated with attitudes. For example, Nadeau et al. (1993: 343) find that people who overestimate the size of minority groups also perceive them as a greater threat (see also Citrin and Sides, 2007), but understandably qualify their conclusion: “the connection may be one of cause or effect” (see also Herda, 2010; Hochschild, 2001; Kuklinski et al., 2000: 801). Studies using experimental designs to correct innumeracy have found both that information changes political opinions (Gilens, 2001; Howell et al., 2011) and that it does not (Berinsky, 2007; Kuklinski et al., 2000). In the conclusion, we discuss our results in light of these studies to identify potential reasons for apparently divergent findings.

Experiment #1: correcting estimates of average income and educational attainment

The first experiment was conducted in the 2007 Cooperative Congressional Election Study (CCES). Respondents (N=1,000) were asked to estimate the average household income in the United States and the percentage of Americans with four-year college degrees. Respondents entered a number in a textbox or checked a box that said “I don’t know.” 1 In this and subsequent experiments, these items were designed to help respondents make quantitative estimates. The items avoided jargon (e.g. “median income”), asked about relatively uncomplicated but still relevant categories (those with “a four-year college degree”), and simplified the task of supplying a percentage (see Ansolabehere et al., 2013). Eighty-three percent of the sample provided an estimate of average income and 82% provided an estimate of the percentage with a college degree.

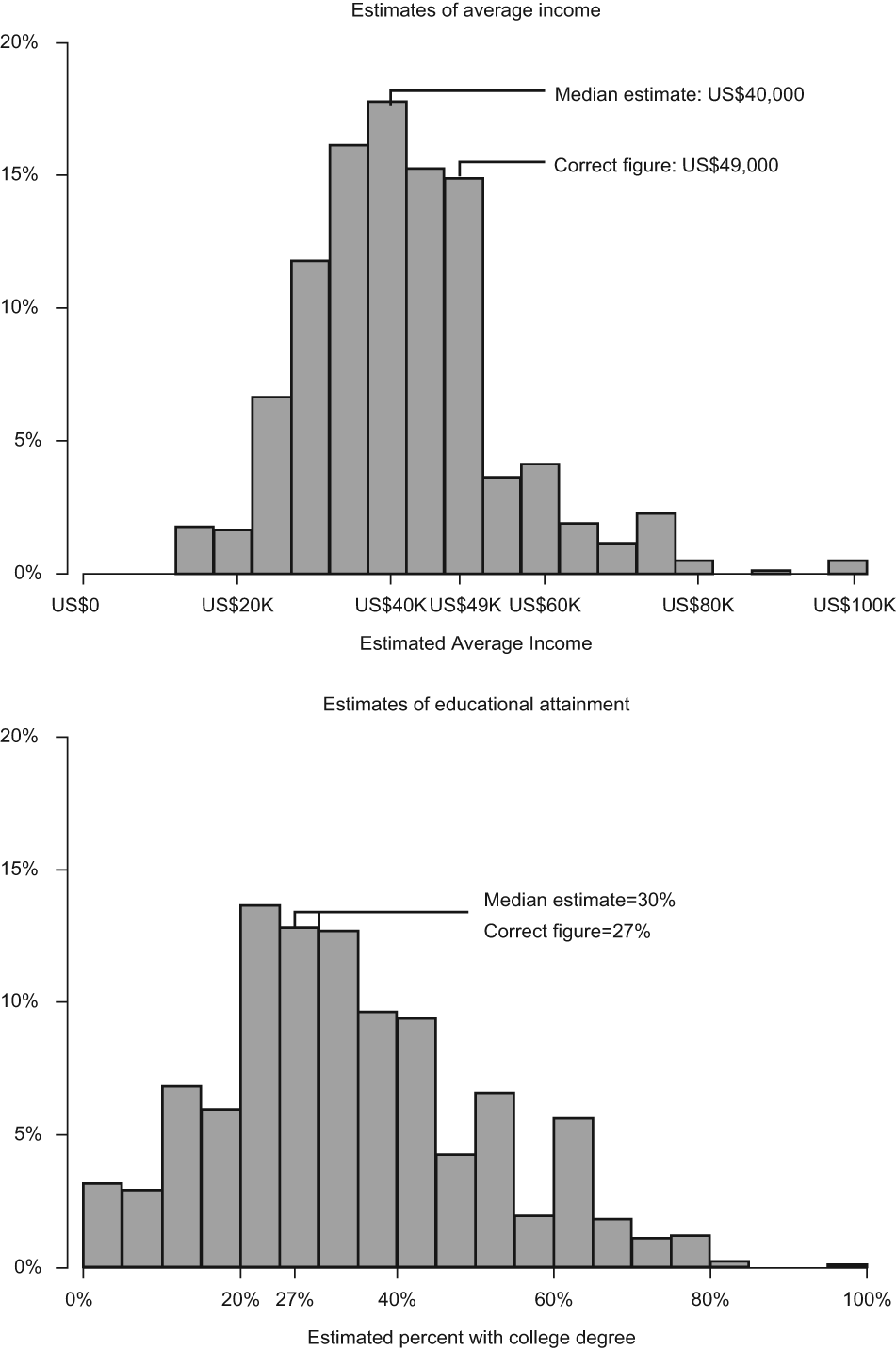

Although the estimates varied, on average they were close to reality (Figure 1). The median estimate for income was US$40,000; the actual median household income at that time was US$49,000. 2 The median estimate of the percent with a college degree was 30%; the actual Census figure was 27%. The correlation between the two estimates was modest (r=.15; p<.001).

Figure 1. The distribution of estimates of average income and the percentage with a college degree.

After respondents estimated average household income, they were randomized into one of two experimental conditions. One condition was given the correct information under the guise of asking them whether they had heard of a new Census Bureau report – a tactic used in similar experiments (Gilens, 2001). The treatment said that “the Census Bureau has estimated that the average household earned about $49,000 in 2006.” The other condition asked whether they had heard of this report but provided no correct information. A similar experiment was carried out after respondents gave their estimates of educational attainment. 3 Immediately after these two experiments, respondents were asked whether government spending on various programs should be increased, decreased, or kept the same. The programs were: loans for college tuition, the war on terrorism, aid to the poor, national defense, and job training. We focus on student loans, aid to the poor, and job training, which are most relevant to national income and educational attainment. People who overestimated the median income or the proportion with a college degree should support more spending on these areas when they learn that conditions in the country are “worse” than they had presumed. Similarly, people who underestimated should support less spending, since the information would suggest that conditions in the country are “better” than they had presumed.
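The directional expectation in the preceding paragraph can be written out as a small sketch. The function name `expected_shift` and its return labels are hypothetical and used only for illustration; the sketch encodes the stated direction of the hypothesis, not an effect size or any result from the study.

```python
def expected_shift(estimate: float, truth: float) -> str:
    """Predicted change in support for spending after a correction.

    Hypothetical helper: it encodes only the directional expectation
    stated in the text, not a measured effect.
    """
    if estimate > truth:
        # Overestimate: conditions are "worse" than the respondent presumed.
        return "more spending"
    if estimate < truth:
        # Underestimate: conditions are "better" than the respondent presumed.
        return "less spending"
    return "no change"


# Median income estimate in Experiment #1 vs. the figure given in the treatment.
print(expected_shift(40_000, 49_000))  # less spending
# Median estimate of the percent with a college degree vs. the Census figure.
print(expected_shift(30, 27))          # more spending
```

Applied to the sample medians, the two expectations point in opposite directions, which is one reason to examine overestimators and underestimators separately rather than only the average treatment effect.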

Across the four experimental conditions, there were no significant differences in preferences for increased spending in these areas (Figure 2). For example, the percent favoring increased spending on aid to the poor ranged from 48% to 49% across the four conditions. Regressing respondents’ estimates on the treatment groups suggested no statistically significant differences across treatment groups (see Panel A of Table A-1 in the supplemental appendix). The experimental treatments had little direct effect.

Figure 2. The effects of correct information about income and educational attainment.
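To give a sense of scale for a one-point gap such as 48% versus 49%, the sketch below runs a simple two-proportion z-test. This is a swapped-in illustration, not the regression analysis reported in the supplemental appendix, and the cell size of 250 respondents per condition is an assumption made only for the example (the actual cell sizes are not given in this section).

```python
from math import erfc, sqrt


def two_proportion_z(p1: float, n1: int, p2: float, n2: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for a difference in two proportions."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# ASSUMPTION: 250 respondents per condition (hypothetical, not from the paper).
z, p = two_proportion_z(0.49, 250, 0.48, 250)
print(f"z = {z:.2f}, p = {p:.2f}")  # z is about 0.22; p is well above 0.05
```

Under these assumed cell sizes, a one-point difference is far too small to distinguish from sampling noise.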

Experiment #2: correcting estimates of the unemployment and poverty rates

The second experiment was conducted in the pre-election wave of the 2010 CCES (N=1,000). Its structure varied slightly from the first experiment. Respondents were randomized into one of three conditions: 40% of respondents estimated the unemployment or poverty rate, 40% estimated these rates and were given the correct information, and 20% did not provide estimates or receive this information. This randomization occurred separately for questions concerning unemployment and poverty, making the experimental design a 3×3 factorial. Eighty-eight percent of respondents provided an estimate of the unemployment rate; 82% estimated the poverty rate.
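The assignment scheme described above can be sketched as two independent weighted draws per respondent, one for the unemployment question and one for the poverty question. The condition labels, the `assign` function, and the seed are hypothetical; only the 40/40/20 shares and the 3×3 structure come from the text, and the survey's actual randomization procedure is not described here.

```python
import random
from collections import Counter
from itertools import product

# Labels are illustrative; the shares are those reported for Experiment #2.
CONDITIONS = ["estimate only", "estimate + correct information", "control"]
WEIGHTS = [0.4, 0.4, 0.2]


def assign(n: int, seed: int = 0) -> list[tuple[str, str]]:
    """Draw (unemployment condition, poverty condition) for n respondents."""
    rng = random.Random(seed)
    unemployment = rng.choices(CONDITIONS, weights=WEIGHTS, k=n)
    poverty = rng.choices(CONDITIONS, weights=WEIGHTS, k=n)  # independent draw
    return list(zip(unemployment, poverty))


cells = Counter(assign(1000))
for (u, p), (wu, wp) in zip(product(CONDITIONS, repeat=2), product(WEIGHTS, repeat=2)):
    # Expected share of each cell is the product of the two marginal shares.
    print(f"{u:32s} | {p:32s} | n={cells[(u, p)]:4d} | expected share={wu * wp:.2f}")
```

Because the two draws are independent, the expected cell shares range from 4% (control on both questions) to 16% (any pairing of the two 40% conditions), so some of the nine cells are much smaller than others.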

Estimates of the unemployment rate were clustered around the actual rate at that time (9.6% – see Figure 3). 4 The median estimate was 12%. Approximately 25% of the sample gave an estimate that was 15% or higher, accounting for the long right tail of the distribution. Estimates of the poverty rate were more variable, but on average higher than the actual rate (20% vs. 13%). This dovetails with Kuklinski et al.’s (2000) finding that the public overestimates the fraction of Americans on welfare. The correlation between these sets of estimates was r=−0.06 (p=0.23).

Figure 3. The distribution of estimates of the unemployment and poverty rates.

The experimental manipulations were framed as questions about news or government reports, with one version of the manipulation including the actual unemployment or poverty rate. All respondents were then asked about the level of government spending on loans for college tuition, aid to the poor, job training, unemployment benefits, and food stamps – which in theory should be more connected to these economic statistics than they were to median income or educational attainment.

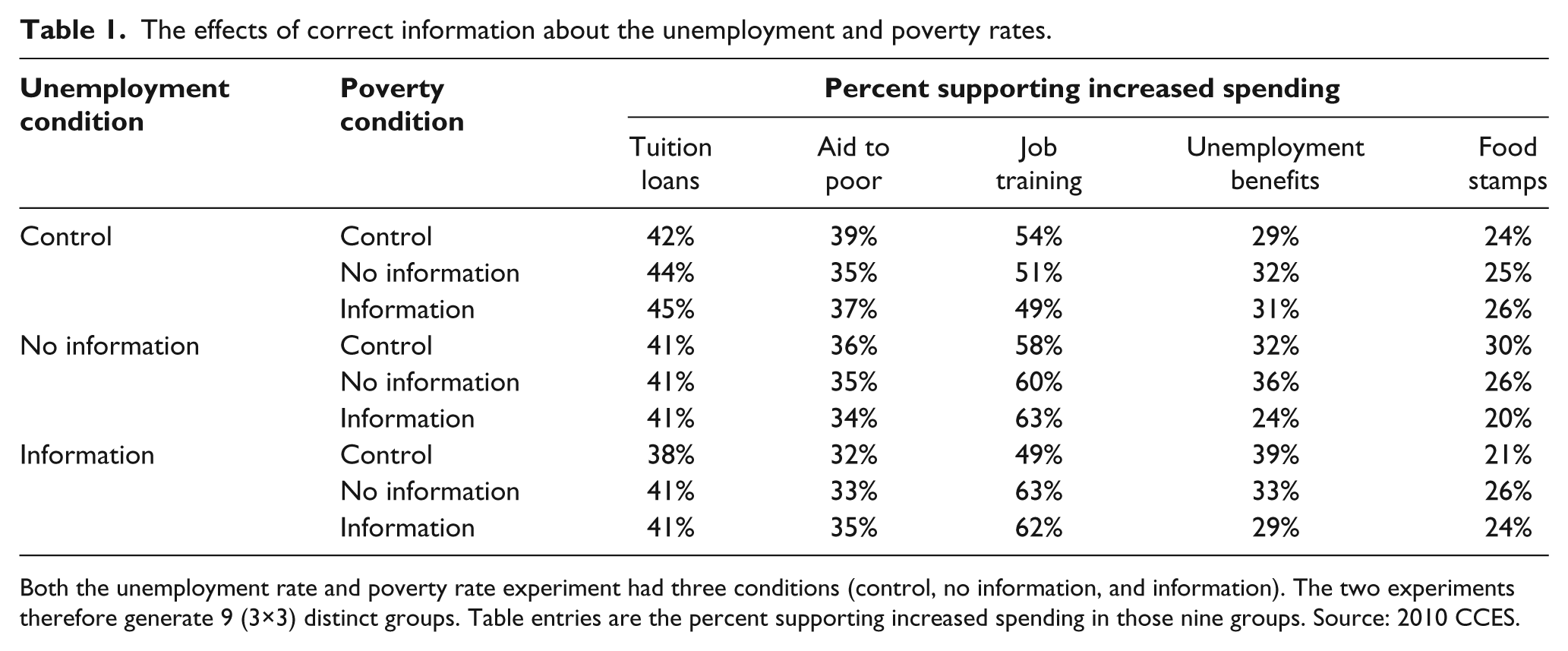

Again, there is little effect of correct information on preferences for increased spending (Table 1). There are very small differences between those who guessed but did not receive information, and those who guessed and then received one or both pieces of correct information. Models of spending preferences also suggest no systematic differences across experimental conditions (see Panel B of Table A-1).

Table 1. The effects of correct information about the unemployment and poverty rates.

Both the unemployment rate and poverty rate experiments had three conditions (control, no information, and information). The two experiments therefore generate 9 (3×3) distinct groups. Table entries are the percent supporting increased spending in those nine groups. Source: 2010 CCES.

Experiment #3: correcting estimates of the racial composition of the population

The third experiment was conducted in the post-election wave of the 2010 CCES (N=844). Respondents were randomly assigned to three conditions: 40% guessed the fraction of the population in each of three racial groups (“white or Caucasian,” “black or African-American,” and “Latino or Hispanic”), 40% guessed those fractions and subsequently received correct information, and 20% did not guess at all (the control group).

As in previous studies (e.g. Nadeau et al., 1993; Theiss-Morse, 2003) respondents underestimated the percentage of the population that is white and overestimated the percentages that are black or Latino (Figure 4). The median estimate of the percent white was 55% (vs. 65% in reality), while the median estimates of the percent black and percent Latino, 20% in both cases, were larger than reality (12% and 15%, respectively). Larger estimates of the percent white were associated with smaller estimates of the percent black (r=−0.55; p<0.001) and the percent Latino (r=−0.61; p<0.001). Estimates of the size of the two minority ethnic groups were positively correlated (r=0.24; p<0.001).

Figure 4. The distributions of estimates of the racial composition of the population.

The experimental manipulation involved a news story about Census Bureau estimates of the racial composition of the American population. Following this prompt, respondents were asked five questions about three policy areas relevant to blacks and Latinos: government spending to help blacks, affirmative action, and immigration. Correcting overestimates of minority group population size should create more willingness to support policies that would benefit these groups, while correcting underestimates of minority group population size should create less willingness to support policies that would benefit these groups.

Once again, respondents who received the correct information gave similar responses on average to those who provided guesses but did not receive the information and to those in the control group (Figure 5). The largest pair-wise difference was .04 on scales that range from 0 to 1. Additional analysis also found no statistically significant treatment effects (Panel C of Table A-1).

Figure 5. The effects of correct information about racial composition.
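The "largest pair-wise difference" summary used for Experiment #3 is the maximum absolute gap between any two group means on the 0 to 1 scale. A minimal sketch follows; the function name and the group means are hypothetical placeholders chosen for illustration and are not the study's data.

```python
from itertools import combinations


def largest_pairwise_difference(means: dict[str, float]) -> float:
    """Maximum absolute difference between any two group means."""
    return max(abs(a - b) for a, b in combinations(means.values(), 2))


# HYPOTHETICAL group means on a 0-1 scale; not taken from the paper.
example = {
    "control": 0.41,
    "estimate only": 0.43,
    "estimate + correct information": 0.44,
}
print(round(largest_pairwise_difference(example), 2))  # 0.03
```

With three groups there are only three pairs to compare, so the summary is easy to read directly off a table of means; the function simply generalizes this to any number of groups.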

Conclusion

We find that correcting examples of a particular type of misinformation – political innumeracy – has little effect on political attitudes. These null effects emerged regardless of policy domain or the particular kinds of numbers provided. 5

This raises at least two questions. Why did correct information matter so little? And, across the scholarly literature, why are the effects of factual information so inconsistent? Based on our findings and other studies (Berinsky, 2007; Gilens, 2001; Howell et al., 2011; Kuklinski et al., 2000) that also involve experimental treatments with correct numerical information, there is no clear answer. The inconsistencies do not appear to depend on several features of research design. Across these studies, the treatments all involved similar statements of correct numerical quantities, such as the number of American military casualties in Iraq (Berinsky, 2007), the average teacher’s salary (Howell et al., 2011), or the percent of the budget spent on foreign aid (Gilens, 2001) or welfare (Kuklinski et al., 2000). Moreover, both Gilens’ and our experiments frame the correct facts as originating in a news story or government report, although Gilens finds significant effects of correct facts and we do not.

In terms of the sequence of questions and information in the survey experiment, our design most closely resembles the second experiment presented in Kuklinski et al. (2000). Their study and ours first asked respondents to provide estimates and then directly corrected the estimates of a random subset. (The other studies did not ask for estimates first.) Kuklinski et al. liken this to hitting respondents “right between the eyes” with the correct information. In their study this was the only experiment that had a significant impact: directly correcting people’s estimates of how much of the budget goes to welfare made them less opposed to welfare spending. But this same design did not elicit similar findings in our experiments.

The types of issues examined in these studies also offer little insight into their divergent findings. One might expect that correcting information should matter less for attitudes about issues tied to durable and deeply rooted predispositions, such as partisan or racial identities. This might explain why our experiment involving the racial composition of the population and attitudes about affirmative action and immigration did not turn up a significant treatment effect. The same finding emerged from the first experiment in Kuklinski et al. (2000), which examined attitudes toward another racialized issue, welfare (see Gilens, 1999). The same finding also emerged from Berinsky’s study of attitudes toward the Iraq War, which was a highly polarizing issue for Democrats and Republicans (Jacobson, 2007).

However, these studies mostly examine issues related to the budget, government spending, and taxes. Although attitudes about these issues are connected to durable predispositions such as partisanship and ideology, these attitudes also vary a great deal over time (Wlezien, 1995). But despite a similar focus on spending – whether on foreign aid and prisons, on teacher salaries, on welfare (Gilens, 2001; Howell et al., 2011; and Kuklinski et al., 2000, experiment 2, respectively), or on spending on various programs, as in our experiments – these studies still have divergent findings. This is true even though the relevance of the facts to the specific policy items seems comparable in these studies – e.g., the crime rate and spending on prisons in Gilens (2001) and the unemployment and poverty rates and spending on unemployment benefits and aid to the poor in our studies.

There is clearly much more we need to know about the conditions under which correct information affects attitudes. A promising direction for future research is to better understand people’s willingness to incorporate factual information into their attitudes. In other words, although innumeracy may reflect “an inability to deal comfortably with the fundamental notions of number and chance,” as Paulos puts it, the willingness to draw upon correct numerical information may be more a matter of motivation than ability. For example, people appear more motivated to answer factual questions correctly when provided a financial incentive (Prior and Lupia, 2008) and to incorporate substantive information when polarizing party cues are not present (Druckman et al., 2013). Further research should elaborate on the factors that influence this motivation.

Better understanding the connection between facts and attitudes has important stakes for both empirical and normative political inquiry. For empirical inquiry, the important question is the extent to which citizens function like “motivated reasoners” or are actually willing to update their beliefs and attitudes in the face of new and even dissonant information. The competing theoretical perspectives and empirical findings suggest that this question is far from resolved. For normative inquiry, the crucial debate is over how much citizens need factual information in the first place (see Lupia, 2006). And of course the two questions are related: the normative value of facts may depend on whether the public incorporates facts into their thinking.