Abstract

In this paper, we examine the developments at the crossroads of mobile phones and AI (mobile AI) by highlighting and considering their implications for the significant, cross-cutting, and highly intersectional area of disability. As we shall discuss, disability is remarkably prominent in how mobile AI is imagined and deployed. Several kinds of mobile AI explicitly claim to provide innovations that will improve the lives of disabled people. Such claims regarding disability have been associated with the arc of mobile media and communication since the first-generation cellular mobile phones (and telecommunications before that), as well as with the most recent phase of AI fervor fueled by generative AI. While there is some emerging work in relation to disability and AI, as yet, there is little critical discussion of disability in relation to mobile AI. Against this backdrop, we are keen to spotlight disability on the mobile AI research agenda. To do so, we discuss the implementation of an AI voice assistant by Grab. Grab is a superapp operating across Southeast Asian nation-states that has already made its mark on mobile communication and everyday life through a range of offerings. We analyze how Grab’s AI Voice Assistant, which is targeted at disabled people, functions and discuss its implications for inclusion and the future of mobile media and communications.

In this paper, we examine the developments at the crossroads of mobile phones and AI (mobile AI) by highlighting and considering their implications for the significant, cross-cutting, and highly intersectional area of disability.

We define mobile AI as “AI designed for, integrated in and associated with mobile technologies and mobilities” (Goggin, 2025, p. 3). From 2023 onwards, mobiles and their associated ecosystems and technologies have gained visibility as part of the wider embrace of AI by contemporary societies (Crawford, 2021), but also as part of the redesign and reconfiguring of the next phase of mobile media and communication in the long passage after the smartphone. Major mobile device makers, operating system developers, and carriers, including Apple, Samsung, and Oppo, are incorporating AI into their devices. There is a new wave of innovation in wearables, such as Meta's collaboration with sunglasses manufacturers Ray-Ban and Oakley, and in dedicated AI device technologies such as the now-defunct Humane AI Pin and the Rabbit R1. Other specific examples of mobile AI that have enabled disabled people include wayfinding apps that integrate mobile location data and haptics to support disabled people’s movements (Ren et al., 2023), as well as health monitoring apps that integrate wearable sensors and mobile phones to detect health risks in real time (Virginia Anikwe et al., 2022). These developments mark what Goggin (2025) describes as a “mobile AI moment,” where smartphones are becoming key sites for AI experimentation and integration.

As we shall discuss, disability is remarkably prominent in how mobile AI is imagined and deployed. Several kinds of mobile AI explicitly claim to provide innovations that will improve the lives of disabled people. This has been a long-standing warrant associated with new technologies. Indeed, such claims regarding disability have been associated with the arc of mobile media and communication since the first-generation cellular mobile phones (and telecommunications before that), as well as with the most recent phase of AI fervor fueled by generative AI (genAI). While there is some emerging work in relation to disability and AI, there is as yet little critical discussion of disability in relation to mobile AI, and still less when it comes to questions of disability justice, that is, disabled people’s involvement in and direction of the design and development of technologies themselves (Zhuang & Goggin, 2026).

Against this backdrop, we are keen to spotlight disability on the mobile AI research agenda. To do so, we focus on the implementation of Grab’s AI Voice Assistant, targeted at disabled people: how it functions and its implications for inclusion and the future of mobile media and communications. Grab is a superapp (an app or platform that can provide multiple services) operating across Southeast Asian nation-states that has already made its mark on mobile communication and everyday life through a range of offerings. Branded as an “everyday everything app,” Grab offers food, grocery, and package delivery, transportation, and financial services to its consumers, with some 40 million monthly users across eight Southeast Asian nation-states (Goggin & Athique, 2025; Grab, 2025c). With its wide regional reach driven by expanding mobile connectivity across the region, Grab and its corresponding technologies, while marketed as beneficial and empowering to various populations (including disabled people) by offering employment and other opportunities, can also be exclusionary and problematic (Yang et al., 2023; Zhuang & Goggin, 2024a). Our commentary is guided by a disability perspective, one that (a) aims to examine the broader dominant structures that disabled people are embedded within, and (b) is informed by the disability rights movement (Zhuang et al., 2023). Specifically, we note how the implementation of the AI Voice Assistant, while aimed at enabling disabled people, is ultimately problematic and disempowering as it reframes disability and inclusion in narrow terms and disregards the broader structural power relations that disabled people are embedded within.

The prominence of disability in the mobile AI moment

As Rich Ling observes, mobile technologies have become integral to everyday life, embedded into the very fabric of contemporary societies and across diverse demographics (2012). For disabled people, mobile phones have transformed how they navigate their everyday lives (Dalvit, 2023). Especially during the smartphone phase, mobile media has provided new forms of communicative mobility that evolved with the introduction of sensor technologies, locative media, and other innovations (Goggin, 2016; Locke et al., 2022). Mobiles in general have enhanced disabled people’s lives by providing new affordances for community building, communication, and access to resources (Goggin, 2017). Yet mobiles also have had a storied and problematic history in the social relations of ability and disability. Early smartphone interfaces, specifically the iPhone, incorporated haptics in narrow ways that marginalized disabled users; however, subsequent adaptation and integration of broader accessibility features illustrate the evolving responsiveness of Apple to advocacy by disabled people’s organizations (Goggin, 2017). So, what does the mobile AI moment do to disability, and, by the same token, how does disability play into the assembling of mobile AI?

To start with, it is important to understand, especially because it is not widely recognized, that AI and disability have had and continue to have a complex relationship. AI has the potential to offer transformative benefits and affordances that enhance disabled people’s livelihoods (Zhuang & Goggin, 2024b). Mobile AI has been deployed to augment education (Girhe et al., 2024), support mobilities (Nasser et al., 2024), and enhance health outcomes (El Morr et al., 2024). However, the hype surrounding disability and AI, particularly in mobile contexts, is tempered by critiques of how ableism and exclusion are embedded within these technologies (Goggin & Zhuang, 2025), what Ashley Shew (2020) describes as technoableism, where technology is seen as the solution to disability. Scholars and disabled activists have long pointed out the problems associated with the introduction of new technology, including continued marginalization, replication of social bias, and limited inclusion (Tan & Ho, 2025; Whittaker et al., 2019).

When it comes to the intersections of mobile AI and disability, there is a wide range of contexts, instances, and so-called use cases. A leading example is Microsoft's Seeing AI, which has evolved at the intersections of machine learning, natural language processing, and computer vision to help blind and visually impaired people recognize and interact with the objects and environments around them (Microsoft, 2024). From the outset, Seeing AI was conceived of as a mobile technology; it was distributed as an iOS app in 2017, achieving 3 million downloads by the end of that year (Microsoft, 2024).

In recent years, the advent of genAI in these mobile apps has enabled new forms of interactions for blind users (Zhuang & Goggin, 2025). In Asia, region-specific apps such as 小艾帮帮 (Eyecoming) and 豆包 (Doubao) have achieved widespread adoption in Chinese markets, much like Seeing AI has globally. In the case of Seeing AI, its 2024 update included important breakthroughs associated with genAI, such as a video description feature (where users can have video content described) and a feature that allows the app to recognize and read inaccessible PDFs (Guide Dogs NSW/ACT, 2024).

Mobilizing disability in voice AI

We now turn to a specific area of AI that has received comparatively little discussion—voice. Voice AI, as it has been termed, is an area that has attracted a great deal of attention in the genAI period. Notably, American congresswoman Jennifer Wexton, who lost her embodied voice after being diagnosed with progressive supranuclear palsy, a degenerative brain disease, had her voice restored through AI-powered voice-cloning technology from ElevenLabs (Schwartz, 2024; Wexton, 2024a). Launched in 2022, U.S.-based ElevenLabs is an AI products and services company that has attracted attention and scrutiny for its synthetic AI speech services (Clark, 2026; Michel et al., 2025). Subsequently, Wexton was able to give speeches to the U.S. House of Representatives (Wexton, 2024b) in her cloned voice via an augmentative and alternative mobile device (Alper, 2015). Other deployments of AI voice and mobile technology include mobile applications that use AI and speech recognition to translate nonstandard speech into clearer output, helping people with speech impairments to communicate (voiceitt, 2025; Whispp, 2025). Similarly, voice assistants such as Siri support disabled people in performing tasks on their mobile devices (Foley & Fialka-Feldman, 2025), and are claimed to revolutionize how disabled people interact with the world around them (Pradhan et al., 2018).

In their working paper Voice AI and Authenticity, Burgess et al. (2025) provide a very helpful account by which to situate these use cases of voice AI technologies. Burgess and others note that a major implication for disability is that these AI voice interfaces come to be associated with ableist and normative ideologies and are ultimately problematic. Indeed, as Jonathan Sterne (2021) reminded us, voice is a site of ongoing contestation and mediation, revealing how technology, culture, and notions of disability and normativity shape what it means to speak and to be heard. We also need to put this emergence of AI voice interfaces in the trajectories and dynamics of the longer histories and wider dynamics of mobile technology, disability, and voice, laid out by Meryl Alper (2017). These perspectives underscore how AI raises profound questions about voice, cloning, and authenticity. Grab's use of a voice AI assistant is not novel but, rather, fits into broader histories of sociotechnical inventions of disability and mobile communications.

In May 2025, Grab launched its AI Voice Assistant within its superapp to “allow visually-impaired users in Singapore to book rides using voice commands” (Grab, 2025a). Reports indicate that the assistant engages users in conversation to clarify instructions (Koh, 2025; Loi, 2025). In publicity materials on its website (Figure 1), Grab emphasized that the assistant would benefit the elderly and less digitally savvy, but specifically pinpointed visually impaired users who “rely on their phone's built-in accessibility tools, which typically read aloud app elements as users swipe through them” (Grab, 2025a). Grab's introduction of the AI function and integration into its mobile apps fits within the broader trajectory of mobile AI and disability innovation, yet this also raises critical questions about access, equity, and the future of inclusive design.

Figure 1. Screenshot of publicity on Grab website of the AI Voice Assistant (Grab, 2025a).

Walk-throughs (Duguay & Gold-Apel, 2023) of the AI Voice Assistant, conducted by the research team in November 2025, showed that using the voice assistant requires the phone's text-to-voice accessibility function (the screen reader) to be turned on. Such a function has become increasingly common across Apple and Android devices and is frequently used by blind and/or visually impaired users. Crucially, the AI voice-assistant function is not available when the phone's native accessibility functions are not enabled. That is to say, only blind users who have turned on the phone's screen-reader function are able to access the AI Voice Assistant. In other words, despite the broader population that the voice AI function is purported to serve (such as the elderly and the less digitally savvy), disability (or more specifically, blind people) is its main target.

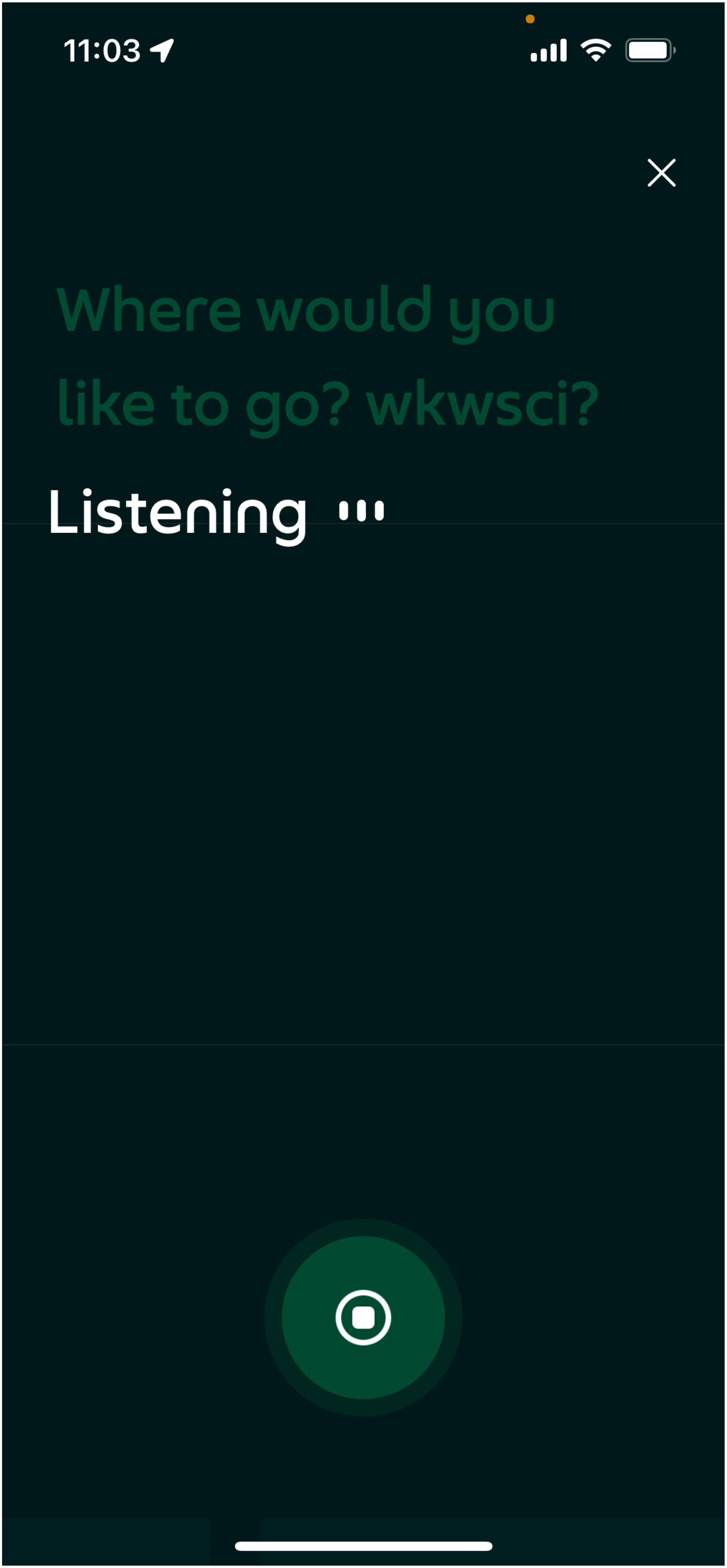

With the text-to-voice function turned on, users can activate the AI Voice Assistant by navigating to the AI voice button or clicking on the transport function. Once it is activated, users interact by speaking to the assistant, which asks further questions to clarify the task it needs to carry out. In our walk-through, the assistant started by asking whether the user would like to go to a particular destination. Upon the user's reply, the assistant then confirmed the user's pickup location. The conversational aspect of the interface allows the AI Voice Assistant to offer recommendations (for pickup and destination) and to execute the booking of transportation options (see Figure 2).

Figure 2. Screenshot of AI Voice Assistant interface.

While it is too early to see how the Grab AI Voice Assistant, and similar innovations, will play out in everyday life, it offers several key insights in relation to the intersection of mobile AI, disability, and voice. First, the introduction of a mobile AI voice assistant enables multiple modalities of app use—not only through voice but also through touch, since blind users must still navigate to the voice-assistant function and rely on the phone's native haptic alerts and text-to-speech functions. In this way, our communication with AI systems—and technological systems more broadly—is shifting from the touchscreen toward diverse, multimodal interfaces that integrate tactile, auditory, and vocal forms of interaction (Goggin, 2017).

Second, despite the embrace of multimodality, and the ways in which it might support greater accessibility, the AI Voice Assistant operates in a limited way: it is restricted primarily to the booking of transport options and does not support access to and use of other functions within the superapp. As blind users shared with the research team, they preferred using the superapp without the AI Voice Assistant, opting instead to rely on existing screen-reader tools such as VoiceOver (iOS) or TalkBack (Android) to input text and navigate the interface. Crucially, in a walk-through by the research team, the screen reader spoke at the same time as the AI Voice Assistant, duplicating the messages conveyed and making it difficult to understand the information. Blind users also conveyed that the AI Voice Assistant might misunderstand their voice inputs. We note that Grab is cognizant of the issue: it has mobilized its employees to donate some 80,000 voice samples to improve the speech-to-text model and is asking Singaporeans to volunteer their voices (Grab, 2025b); this, however, also raises ethical issues related to compensation and labor (Wu, 2023).

Third, specific attention is paid to disability in Grab's publicity materials, which emphasize the benefits of the AI function for visually impaired users (Grab, 2025a). In national media reports such as The Straits Times, disability is prominently featured in headlines, as are images of blind users engaging with the AI Voice Assistant (Koh, 2025; Loi, 2025). This framing positions Grab as an agent of empowerment and inclusion. Yet, such deployments and representations also reflect what Mara Mills (2010) has termed the “assistive pretext”—a discursive strategy where technology is promoted or legitimized under the guise of assisting disabled people, but ultimately serves other interests, such as brand image enhancement or market expansion (Hong, 2022).

Mobile AI for inclusion?

Whither mobile AI and how can we better build for disability inclusion? What is needed, as our commentary highlights, is broader structural change. The use and deployment of AI technologies in mobile systems—and their intersections with modalities such as voice—cannot be divorced from the broader technological, legal, and cultural ecosystems in which mobiles are situated. Apps do not operate in isolation; they exist in complex relationships with other mobile functions such as accessibility settings, voice inputs, and emerging AI infrastructures. While legal instruments like the United Nations Convention on the Rights of Persons with Disabilities have served as cornerstones, guiding disabled people’s inclusion toward equal participation in societies, more must be done to examine and transform the systems and power structures within which technologies operate (Zhuang et al., 2025). These use cases, while addressing specific accessibility needs, remain narrow in scope and do not yet constitute a broader reimagining of inclusion (Goggin & Zhuang, 2025). Such a reimagining, we suggest, lies in the transformation of disabling relations in existing mobile media and communication, and what the changes represented by mobile AI portend for the next phase of mobile technology and the prospect for inclusive futures. To advance disability justice (Zhuang & Goggin, 2026), interventions in mobile AI must move beyond assistive design toward structural change—centering disabled people's participation in governance, development, and evaluation. Only through such participatory and justice-oriented approaches (Costanza-Chock, 2020) can we move from accessibility as compliance to accessibility as collective transformation––in which mobile communication and media stand to play a fundamental role.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Nanyang Technological University (Start-up grant no. 03INS002089C440).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

The authors confirm that the public data supporting the findings of this study are available within the article.