Abstract

Mobile instant messaging (MIM) applications have received increasing attention from social science researchers lately. Despite the high frequency of their use for multiple purposes, research in such environments entails specific ethical challenges, more familiar to researchers from the Global South, where MIMs have been an important part of the media landscape for a decade or so. This article discusses the ethical dilemmas that emerged in two distinct cases in which the authors were part of the research teams, concluding that current frameworks fall short in the sociotechnical dimension. To address this, we complement the casuistic-heuristic model with an affordances-driven approach, which contemplates the agency of the group members within the interactive processes that take place in digital environments and the possibilities and constraints that platforms' features offer during the research process.

Since their early stages in 2010, mobile instant messaging (MIM) applications such as WhatsApp, Facebook Messenger, Signal, and Telegram have become a popular phenomenon with different uses worldwide (Newman et al., 2022). Recently, extreme and violent discourses as well as news and misinformation have circulated through these applications, especially in the Global South (Chagas, 2022; Garimella & Eckles, 2020; Kalogeropoulos, 2021), posing a threat to democracy. Despite this, research on the content that circulates in MIM applications remains scarce, and meeting ethical standards for research involving human subjects on these platforms is challenging.

In fact, this paper emerged as a byproduct of the discussion that arose while the authors struggled with ethical dilemmas over how to deal with research that, while seeming timely and relevant, did not fit the usual boundaries, as will be seen in the case studies. Stemming from this empirical experience, we discuss the main frameworks about ethics in digital research and propose a new perspective for research on MIM that complements them.

When communicative technologies such as public and private surveillance cameras became more pervasive, especially in the northern hemisphere, new approaches such as contextual integrity (Nissenbaum, 2004, 2010) emerged to take into account the flows of information while considering the underlying norms that regulate the communities under such surveillance. This framework has been successfully applied to more recent technologically mediated contexts such as big data (Zimmer, 2018) and social media research (Nissenbaum, 2011; Zimmer, 2010). However, we claim that it falls short in borderline cases, such as the two cases presented here. Although both are framed in a country context with some shared norms, from this perspective the micro-context of each individual who is part of the group is unknown. The appropriateness, the norms of information flow, the distribution process, and the justice rules that work for one person may not work for another. For those reasons, the contextual integrity approach, which for some “remains one of the most powerful ones in guiding researchers to apply guidelines and norms” (Locatelli et al., 2023, p. 137) on digital research, fell short in our endeavor to study MIM platforms, where the researcher lacked any mechanism to know in depth the context of each individual who is part of the chat group.

Another framework that has been specifically developed for the digital writing context is the casuistic-heuristic approach (McKee & Porter, 2008). This approach treats relevant variables as continuums rather than binaries and uses heuristic maps to support decision-making processes (McKee & Porter, 2008). Though such frameworks present innovative approaches and have been widely adopted in the digital realm, we argue that they lack a socio-technical perspective, which we claim to be essential in research on MIM. Thus, we pose the following research question: How can ethical decisions be tackled when investigating socially relevant issues on MIM platforms, taking such socio-technical dimensions into account?

The structure of this article includes three main parts. The first part discusses relevant literature on MIM research and ethics in digital environments. Then we describe the ethical dilemmas that arose during two research studies performed by the authors on MIM. Finally, we synthesize these dilemmas through an affordances-driven approach as our contribution toward a socio-technically aware ethical decision-making framework.

Researching instant messaging content in the (and from the) Global South

From an academic perspective, there has been a growing interest over the last decade in conducting research on the social phenomena taking place in, with, or through instant messaging applications such as WhatsApp, Telegram, or Facebook Messenger. While in Global North countries SMS (short message service) was the main technology for interpersonal communication for decades, high telephone rates in Global South countries provided a push toward the adoption of MIMs, where messages travel through the Internet and therefore do not consume the minutes or messages included in mobile phone plans. Moreover, WhatsApp has commercial agreements in several countries that allow users to have the service free of data traffic charges, a practice known as “zero-rating.”

Due to their popularity, MIMs have been used in different countries in very particular ways in social life environments, such as for remote teaching during the COVID-19 pandemic in Oman (Naghdipour & Manca, 2022), for health support in Burkina Faso (Arnaert et al., 2019), for the parent–child relationship in the Chinese translocal context (Yu, Huang & Liu, 2017), or to coordinate the movement for women's right to drive in Saudi Arabia (Khalil & Storie, 2021). From a strictly political perspective, studies point to the use of MIMs to coordinate social movements in Mexico and Spain (Treré, 2020) and to avoid censorship and surveillance in authoritarian contexts such as Cuba (Herrada, 2020) or Malaysia (Johns et al., 2024). At the same time, MIMs have also been used for not so noble and sometimes antidemocratic purposes. Telegram has been used by ISIS to coordinate its activities and disseminate its ideas (Karasz, 2018); WhatsApp has been used to promote political campaigns, hate discourses, and misinformation in countries such as India (Garimella & Eckles, 2020) and Brazil (Barbosa & Back, 2020).

Some studies reveal that WhatsApp's affordances allow the development of political strategies such as memetic persuasion during the 2019 presidential election in Indonesia (Baulch et al., 2022), while others warn about some of the dangers of WhatsApp's affordances. Gil de Zúñiga and Goyanes (2023) state that those who consume more news on WhatsApp tend to have less knowledge about politics and are more radical, which is positively related to their participation in illegal political protest activities.

The penetration, adoption, and use of WhatsApp in southern countries are high. In 60 out of 90 countries measured by We Are Social, WhatsApp was the most popular messaging application. It is the most widely used application in Latin America, Africa, the Middle East, Russia, and some European countries. In the countries where our case studies were located, WhatsApp was used in mid-2021 by 41% of Brazilians to consume news, while in Chile the number reached 31% of the population (Newman et al., 2022). Despite this, academic research on the social dynamics of MIMs has been characterized by the difficulty of accessing content. Privacy expectations and security characteristics (encrypted conversations) make it difficult to access the group chats without raising ethical concerns.

Easy accessibility to content is insufficient to consider it as public, as much as deleting it does not imply it disappears. For this reason, research on platforms that allow easy access to content demands a strict anonymization process to protect the identity of people and their traces of interactions (Bischof et al., 2022). People may not be aware of the scope of their digital data, and their initial expectations when sharing content may not consider the dimensions of the platform being used nor the significance of the information shared to third parties or in aggregated contexts (Santos, 2022). People may not have the skills and knowledge to set up their digital privacy, so not everything they share digitally should be conceived as a public text. In both cases, researchers are responsible for establishing the boundaries between the public and the private and thus for minimizing, as far as possible, physical, psychological, or moral harm to the subjects. In addition, researchers must keep in mind legal considerations and personal responsibilities when facing distressing information during the research (Stern, 2003).

In this complex task, the affordances of platforms can shed light on people's privacy expectations while using the platform (López, 2020); consequently, this would offer clearer guidelines to researchers when faced with ethical dilemmas. In this regard, there are different types of technology-mediated environments in which citizens express their thoughts and feelings and exchange information. All these environments exist within a continuum that varies from the most strictly public to the most strictly private spaces, depending on the limitations on access either defined by the users or allowed/constrained by the platforms, while at the same time subject to the personal or group configurations that users employ to their digital spaces.

Due to the difficulties presented, instead of using empirical WhatsApp data, several research papers have approached the issue using self-reported data (Kaufmann & Peil, 2020) or surveys to study exposure to (Valenzuela et al., 2021) or belief in misinformation. Not many address sensitive social subjects or issues: studying the images of a food porn group (Kozinets et al., 2017) is remarkably different from studying a community of nationalists unknown to researchers (as in one of the cases under discussion). Another challenge lies in the differences between platforms, or even within the same platform when different features are activated, such as connecting with others, making content visible, and editing it (Treem & Leonardi, 2012).

Affordances are relational properties that enable or constrain possibilities of action (Hutchby, 2001) that technological systems offer. This means different users may not only act differently as they adopt a platform, but they may have different imagined expectations or outcomes, rather than concrete ones (Nagy & Neff, 2015). Following the discussion above, we suggest that analyzing such ranges of possibilities within the affordances’ perspective might offer important clues on how to solve some methodological and ethical dilemmas faced within research in MIM content.

Digital ethics in social sciences: from North to South

The digital context crosses almost all spheres of daily life nowadays. However, conducting research in this dynamic, decentralized, and multifaceted environment (Weller, 2015) may present great challenges due to the ethical issues involved (Barbosa & Milan, 2019), as we enter what Moor (1985) calls “policy and conceptual vacuums” (p. 272) due to the wide range of possibilities within the realm of research in digital spaces.

One of the reasons for the aforementioned challenges is that the international standard of research involving human subjects considers the principles of the Belmont Report (1978) and the Singapore Statement (2010) as ethical guidelines, though both documents are temporally and contextually distant from the current reality. Regardless, both documents stand as a standard for scientific research and as international references that are considered by Institutional Review Boards when evaluating the possible ethical impacts of the study. Updates to the protocols of research institutions have been insufficient when facing the dramatic changes in the digital world (see Bishop & Gray, 2017) and some are considered as “super conservative” in this regard (Franzke et al., 2020, p. 13). Therefore, it has been said that applied ethics in digital environment studies is a sector full of gray areas (Kozinets, 2015; Willis, 2017) and hazy boundaries (Kantanen & Manninen, 2016), where researchers are often limited by rigid academic structures and criteria due to procedures that do not consider the complexity and constantly evolving nature of the digital context.

Additionally, there is no agency, institution, or handbook to solve practical concerns arising from various research papers (López, 2020). Despite the progress made on data publication and exchange in social media, so far they deal usually with the controversial concept of public data, such as social media posts (Bishop & Gray, 2017; Klassen & Fiesler, 2022; Willis, 2017). Those efforts rarely are directed specifically to content obtained in MIMs.

As some research topics have become highly mediated by platforms, algorithms, and other variations of information brokers, other factors should be considered. In this regard, the Ethics Working Committee of the Association of Internet Researchers offers one of the most up-to-date guidelines on the subject in its most recent publication Internet Research: Ethical Guidelines 3.0 (IRE 3.0). In addition, it stands as an international support agency that can provide help and advice in negotiation processes to receive approval from local ethics committees (Franzke et al., 2020, p. 13). Other frameworks were developed either for technologically mediated informational environments such as surveillance cameras (Nissenbaum, 2004) or for digital writing in general (McKee & Porter, 2008). We will make the case that they share one fundamental shortcoming: they do not sufficiently take into account the relational nature of the interaction between human subjects and chat app technologies that lies behind the creation of digital content.

Inspired by Aristotle, Jonsen and Toulmin (1988) argue that ethics is a field of experience rather than a science. In that line, the casuistic-heuristic approach by McKee and Porter (2008) takes into account the complexity of human experience in a double dimension: it is casuistic-heuristic, because the particular circumstances of each case are considered and prevail over generalized protocols; and it is based on rhetorical principles, where audiences are at the center of the moral judgments. Their conception of audiences (in plural) points to dialogical, active, interlocutory persons and communities, as opposed to the passivity behind the idea of a research “subject.” Still, their conception of the audiences, participants, or communities (p. 726), even if a dialogical one, connects with an analog paradigm of communication ecosystems, detached from digital media such as social networking sites (SNS) and MIMs. The authors argue that audience-centered judgments focus on “human experiences,” but they do not pay attention to human–interface interactions, where user interactions with an interface translate into inputs recognizable by the information device within a context (Hassenzahl & Tractinsky, 2006). This process of translation is even less generalizable than the myriad information ecosystems in the digital realm, approached by McKee and Porter (2008) as continuums of data inputs for the research. In other words, by focusing only on the platform (such as forums, conversation services, and SNS), their approach fails to capture the relational dimension of the interaction between user and interface.

In that sense, MIM platforms are a context prone to borderline cases through which to discuss such dilemmas. Research into actual content within such platforms offers many levels of complexity, as it presents challenges related to privacy, data capture, user expectations of privacy, technical difficulties in gathering data, and others that we will explore in this article. Besides, MIM also stands out as a less explored case in the literature, because it is much more relevant in the Global South than in the countries that usually lead academic publications and where case analyses are Western-centered (Locatelli et al., 2023).

In South America, ethics boards are relatively new, and social sciences research projects are often evaluated from the perspective of bioethics or paralleled with medical research (Nicacio, 2023)—standards that are hardly adequate to social sciences. Additionally, the southern ethics committees usually follow the northern approaches (Locatelli et al., 2023), even when the research problems may differ. MIM content, though, is a pivotal topic that has become much more of a concern in Global South regions such as South Asia, South America, and Southern Africa rather than in Europe or North America. Its privacy expectations, interactive nature, and specific spectrum of functionalities make it worthy to think of as a borderline case to reflect upon ethical challenges in human research in digital environments.

Institutional ethics committees have become the norm in Chilean universities, and ethical standards have been established from the health field since 1986 (Cadenas et al., 2021). Currently, Law 20.120 on scientific research in human beings (2006) is the main national reference. Also, the Technical Norm 0151 regulates accreditation standards for the Ethics Scientific Committees (2013). In the absence of specific national ethical regulations for research in social sciences and humanities, the National Agency for Research and Development recently published an ethical guide for scientific studies in this area (2022). However, it does not make any reference to research on MIM.

On the other hand, Brazil has a hierarchical centralized authority anchored in biomedicine and health, widely criticized by researchers in social sciences and humanities (Nicacio, 2023; Zaluar, 2015). The Brazilian system is still in its early stage regarding ethics committees at public universities, and the regulatory guidelines and standards for research involving human beings, used as foundational documents to justify ethical concerns in humanities research on digital technologies, are, again, mainly derived from the health sciences.

In sum, while in Brazil the absence of ethics evaluations is still frequent in major institutions, in Chile there is more progress, and generally the postgraduates’ research projects must be approved by ethics committees. Yet, in many cases, they are too conservative regarding digital environments. In both cases, though, as researchers we suffered from the lack of ethical frameworks that embraced all the dilemmas we faced while studying human-created content in MIM environments.

Beyond informed consent

Informed consent is one of the most widely used tools to ensure subjects may make an informed decision to join the research, lowering possibilities of harm and empowering the subjects to evaluate what is at stake, with “full knowledge of relevant risks and benefits” (Zimmer, 2018, p. 3). This process includes providing timely information about the research to the subjects involved, such as the objectives, participation details, possible physical or psychological risks, benefits of participating, and confidentiality of the provided information. With this data, the subject decides whether or not to participate voluntarily in the research, and this response, with some exceptions, is documented with their signature or verbally registered in a recording, which then becomes a guarantee that serves as an essential part of the current scientific procedures for academics and institutions. As such, it has become one of the main requirements to protect participants of a study on human subjects.

The informed consent process, like several mandates of scientific ethics, was originally designed for face-to-face or offline contexts (López, 2020); this is one of the major complications for scholars of digital communications. Obtaining informed consent can be difficult in online environments for the aforementioned reasons and other factors, such as the inability to contact the subjects, disruption of the methodological integrity (Bishop & Gray, 2017), potential alteration of the dynamics of the community during the analysis (Kozinets, 2015), and the ideological or cultural position of the researcher. This sort of tension that emerges on ethical decision making in digital environments is strongly highlighted by McKee and Porter (2008), who call for a casuistic-heuristic approach instead of somewhat binary protocols offered by some ethical guidance systems.

Moreover, in the digital realm there are cases where it becomes impossible or detrimental to disclose the researchers’ intentions and even their presence (Kozinets, 2015). In some cases, approaching the observed group by sharing common ground with them may be ideal, before explaining that it is social research. In other cases, if the gender, ethnicity, ideology, or other relevant identity aspects are not shared, a fly-on-the-wall approach may be considered, either because it offers a balance between the sensitive nature of the subjects and the social value of the research (Brotsky & Giles, 2007) or because of the public nature of the information, for instance content published on social media (Willis, 2017). Bishop and Gray (2017) lost important data due to disclosure and finally obtained some consents via Twitter. Other researchers never disclose their intent and even assume a covert identity to enable research on sensitive topics (see Brotsky & Giles, 2007). McKee and Porter (2008) operationalize this sort of tension as “closeness/distance between researcher and participants” (p. 737).

Kantanen and Manninen (2016) did not consider informed consent in the pilot version of their study on a virtual teaching–learning community because they did not conduct interviews. Other researchers, as Warfield et al. (2019) specify, practice strategies such as ongoing consent and “ethics-on-the-go.” Willis (2017) adds that if the data are public and only textual, such as public Facebook groups analyzed by the author, obtaining informed consent may be ignored, arguing that observation on public digital platforms is similar to observation in physical public spaces; therefore, informed consent could be waived. Few others, like McKee and Porter (2009), pose the possibility of consulting the participants’ wishes regarding informed consent, as an approach founded on rhetorical tradition that considers the input of the audiences.

Far from being a consensual issue, those are different ways of approaching and observing a digital reality, depending, amongst other factors, on how sensitive its members are to a third-party participant or an observer, the impact the presence of the researcher may have when joining due to the app's response (such as a notification to the group), and the positionality of the researcher (Hampton, 2021). While Marzano (2018) claims that not identifying oneself is not the same as deceiving the subjects, Boellstorff et al. (2012) criticize the passive observer approach. In light of such debate, we recognize that this approach undoubtedly impacts ethical principles such as transparency, privacy, and consent. However, as we will see in some cases, like the second one analyzed in this study, it is necessary to weigh many factors and make sure that no harm or, in the worst-case scenario, as little harm as possible is done, and that the anonymity of the observed individuals is strictly respected.

Between social interest and private rules

In the absence of other rules, institutional review boards may suggest that researchers must comply with the terms and conditions of use of each platform, confounding private corporate interest with the public interest behind a social scientist's research. Such terms may sometimes be more restrictive than academic ethical protocols (Beurskens, 2010) while more permissive in other dimensions (Bishop & Gray, 2017). One way or the other, they were not designed in the interest of the subjects, their protection, or their privacy, but following other sets of principles and values related to the commercial venture. In this scenario, researchers face another dilemma: complying with private interests or pursuing their social role, even if this role contradicts the former. As mentioned by Zetter (2016), complying with developers’ regulations may impede research and cause researchers to engage in bad practices, creating some degree of impunity. For this reason, academics have criticized platforms for hindering academic research (Bruns, 2019) and questioned the need to follow the terms of the platform, especially when their research benefits society and is conducted in favor of knowledge, open science, and public benefits (Bishop & Gray, 2017; Franzke et al., 2020; TBPS, 2021).

In social media research, it has been documented that ethics committees with less experience on the topic tend to adopt more conservative and less nuanced views regarding data use and consent (Hibbin, Samuel & Derrick, 2018), such as the binary workflow of the “Human Subject Regulations Decision Charts” designed by the Office of Human Research Protections in the U.S. Code of Federal Regulations (McKee & Porter, 2008, pp. 714–715), to the point of considering social media content as text rather than human subject research (Willis, 2017).

If we think about the context of dissimilar levels of assimilation of ethics review procedures and committees in the South, this finding becomes even more relevant. Moreover, MIM applications differ from social media and are located diversely across the public–private spectrum, where many involuntary traces of interactions (Bischof et al., 2022) coexist with a residual perception of privacy due to their origins as a uniquely interpersonal means of communication. One of the main features of MIMs is group chats, which can be private or public. One way to distinguish them is to consider a group private when the entry of a new user depends on the invitation of a moderator, and public when anyone can join using a publicly available link. Thus, public groups can be accessed not only through an invitation but also from a link shared in another chat or on websites that act as “directories” of WhatsApp groups, which are, strictly speaking, open spaces and therefore public. Once the link is clicked, the user accesses the group without further approval or moderation by the administrators according to the platform's terms of use, although they can be excluded or their messages deleted at any time; this varies according to the diversity of administrator roles in each platform.

If the “publicness” of the information obtained in social media is far from consensual (see Hibbin et al., 2018 and Zimmer, 2010), MIM groups bring about even more doubts. They provide fertile ground to consider the privacy paradox (Dienlin et al., 2021), since expectations of privacy may contradict each other between different users even when they belong to the same group, and at the same time they represent uncharted territory for many ethics committees across the globe. In line with Hibbin et al. (2018), we believe that, more than discussing the publicness of such environments, the more constructive path is about “how you actually handle that kind of information … in a way that is responsible” (p. 156).

Method

The goal of this paper is to identify a valid framework to tackle ethical decisions when investigating socially relevant issues on MIM. To meet this goal, we took two methodological steps. First, we described the ethical and methodological dilemmas that emerged while carrying out two studies of WhatsApp groups. While the cases are perhaps not perfect for comparison, both were led by authors of this article, therefore allowing for insider insights that would be impossible to obtain from secondary sources. This also allows us to problematize ethical and methodological decisions in the research process from within the researchers’ position. Both cases are highly relevant in their regional contexts, for they are immersed in sensitive political circumstances. Second, we identified key affordances that allow for a more nuanced casuistic approach, and designed and discussed possible heuristics to map the ethical dilemmas and inform the decisions.

Case studies

The first case is a private WhatsApp group launched in 2016 by a political activist in Brazil. The group members demanded Dilma Rousseff's return to the presidency after the beginning of the impeachment process. United Against the Coup (in Portuguese: Unidos Contra o Golpe, henceforth UCG) is a pro-democratic group that includes professionals, journalists, students, and activists. The researcher knew some of the activists before the researcher’s formal entrance in the group. UCG is still active at the time of writing with some seasonality between political event cycles on WhatsApp, with a moderate decline in membership.

The second case concerns a group of Chilean activists themed around the issue of nationalism, created in mid-July 2019. The researcher joined in August 2019 and left in January 2020. There are indications that the group is still active, which is why its real name is not disclosed. In October 2019, the country experienced its deepest social crisis since the end of the dictatorship, known as the Social Outbreak. In this context, this case is relevant because it reveals how people get informed in times of crisis. Contrary to the previous case, the researcher had a political and ideological stance opposed to the group, besides being an immigrant in a nationalist group; it was therefore considered that disclosure could put them at risk. We will name this group “Nationalists.”

Results and discussion

The following results are grouped in two sections. The first follows the somewhat intuitive and to some extent informed decisions that the researchers took along the way, which left them with the open question of how such a process could be systematized to support decision making by other researchers who face similar dilemmas. Then we proceed with our contribution to answering that question by applying the casuistic-heuristic approach (McKee & Porter, 2008, 2009) with an affordances-driven perspective.

Descriptive comparative procedures

This section involves a comparative analysis of the two cases presented, indicating the differences between them, following the usual procedures of academic research using a process approach (Franzke et al., 2020): (1) inclusion in the digital environment; (2) implementation of the research; and (3) presentation of results. This approach allows us to explore ethical and methodological dilemmas in the research process (Schlütz & Möhring, 2018).

Inclusion

Implementation

Presentation of results

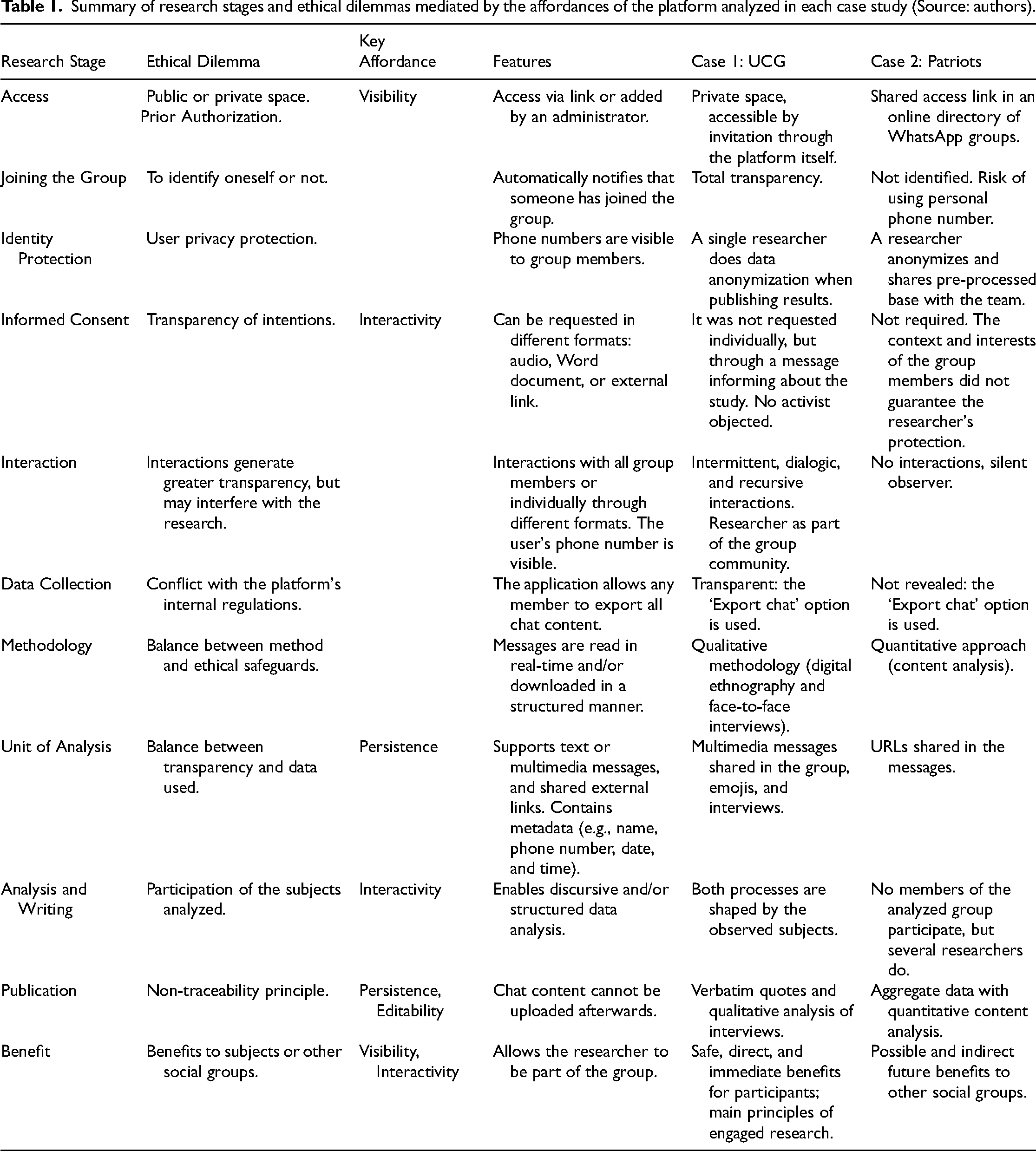

Table 1 synthesizes the discussed points above, summarizing how they relate to distinctive affordances, as we move to the next section where we propose a systematization of the ethical dilemmas faced by building upon an affordances-driven casuistic-heuristic approach.

Summary of research stages and ethical dilemmas mediated by the affordances of the platform analyzed in each case study (Source: authors).

Affordances-driven perspective to ethics

Both cases analyzed share several similarities: platform, topic of interest (politics), the need to share with people of similar interests in a crucial political context in each country, only one researcher immersed in the group, to name a few.

However, it was the differences that led us to question how to act when faced with ethical procedures such as informed consent, the identification of the researcher, the presentation of the study and its objective, the units of observation, the method, the privacy expectations of the participants, among others. This section explores the affordances-driven perspective as a framework that complements the casuistic-heuristic approach (McKee & Porter, 2008) to systematize the decision processes the researchers went through in the analyzed cases.

Although the previous analysis was focused on WhatsApp groups, in this section we systematize the affordances above, building on previous typologies of digital and social media affordances (Treem & Leonardi, 2012), and extend the gaze to different MIMs that give us a variety of methodological possibilities and ethical decisions about issues such as privacy, transparency, and informed consent.

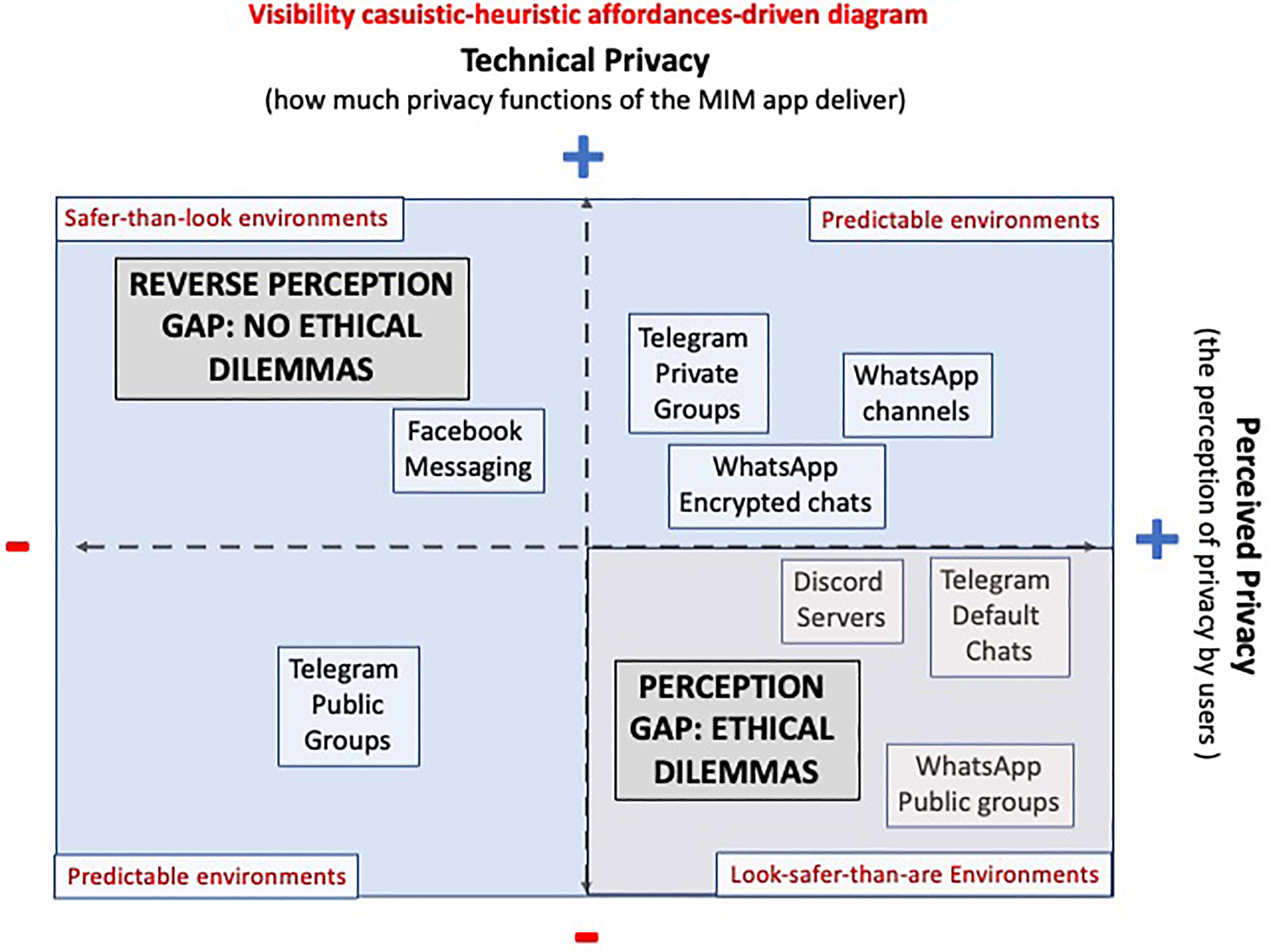

Affordance 1: Visibility

Visibility casuistic-heuristic affordances-driven diagram.

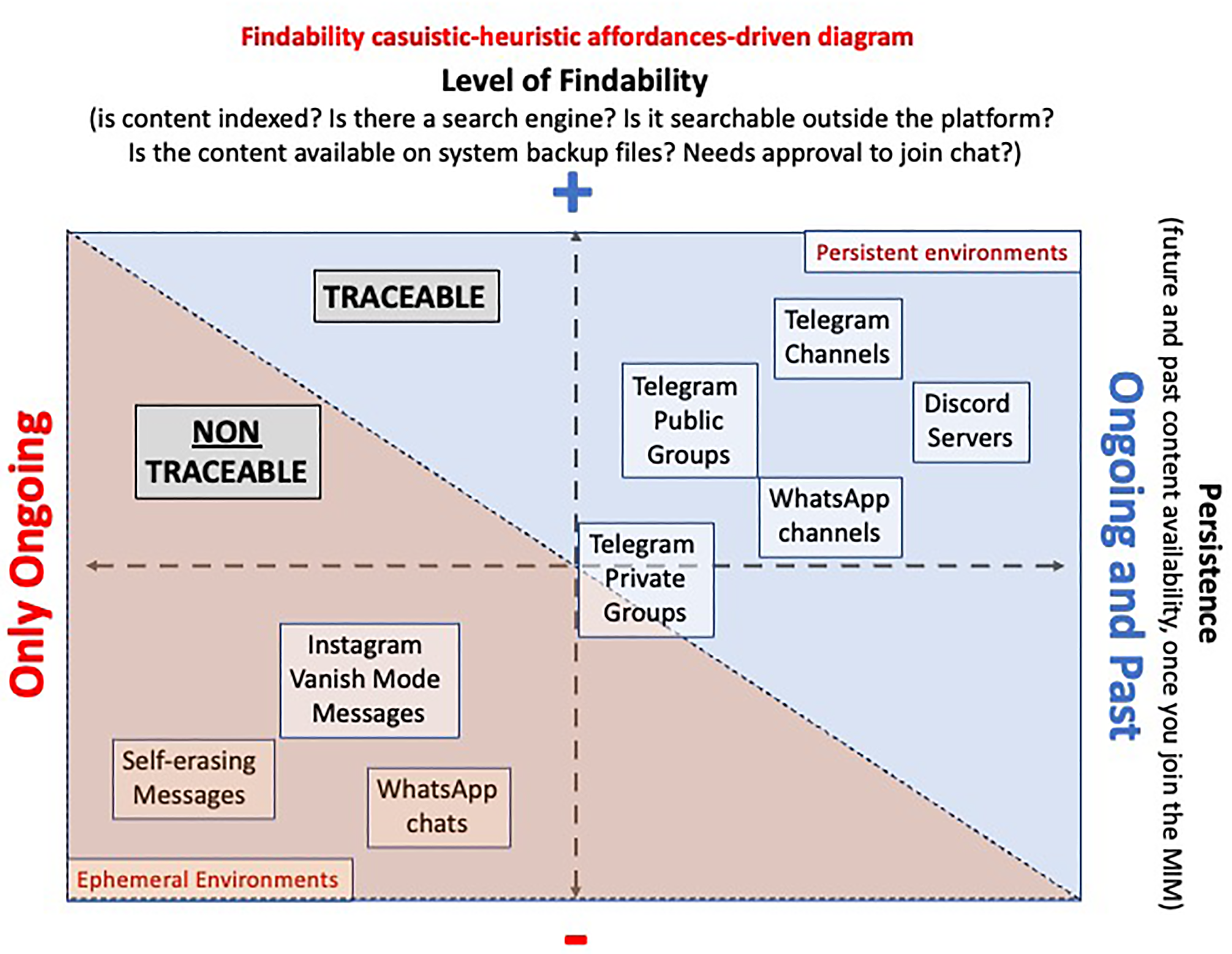

Affordance 2: Persistence/findability

Traceability-related features in Telegram and Discord allow new community members to view the conversation history (the concept of persistence in the affordances literature), whereas in WhatsApp this is not possible. The dilemmas here emerge because information is findable or persists on the platform, and the continuum is organized around the traceability of information. We suggest analyzing this dimension via the affordances of findability and persistence (see Figure 2). As a general recommendation, to ensure non-traceability in MIM research, we suggest paraphrasing ideas rather than using direct quotes, following TBPS (2021).

Findability casuistic-heuristic affordances-driven diagram.

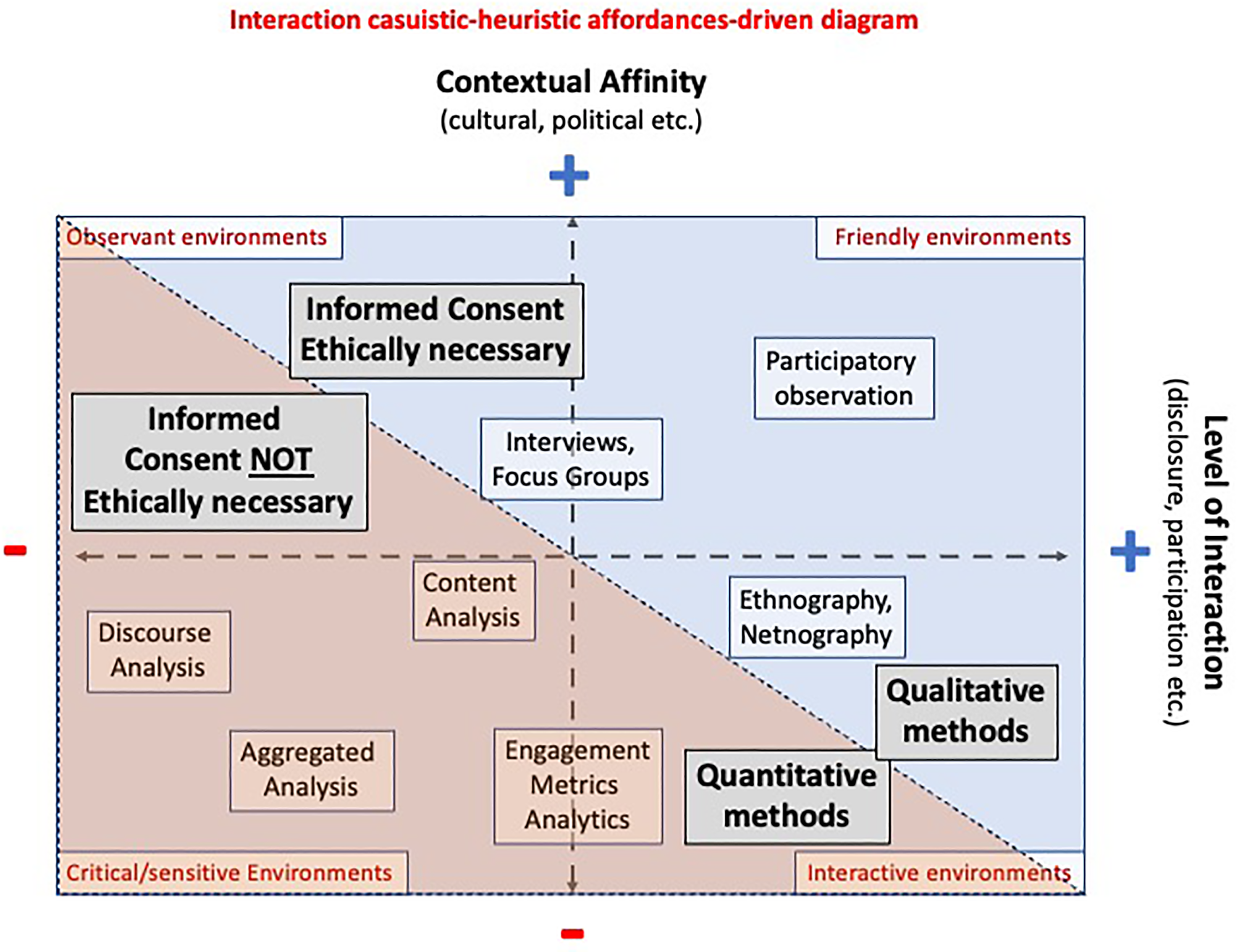

Affordance 3: Interactivity

MIM apps allow interaction among users and between researchers and users, which requires transparency and consent. Sometimes such disclosure is not feasible or safe, which raises ethical and methodological challenges. The methodological paradigm used will depend on the research question, but also on the topic, the context, the affinity between researchers and the researched community, and the features that mediate the content analyzed. Researchers should pursue a balance between these elements, the viability of the research, and the safety and well-being of the people involved, including themselves. As displayed in Figure 3, the more the researcher needs to understand the beliefs and behaviors of users by approaching the contents they share in the chat, i.e., from a qualitative perspective, the deeper the researcher's interaction with the group would be in terms of disclosure, i.e., transparency regarding their intentions and presence. Conversely, the less affinity the researcher has with the group, due for example to cultural, gender, or ideological reasons, the more difficult it is to interact successfully and disclose their intentions. In this case, remaining undercover allows the researcher to avoid violating community norms and/or being considered an outsider by the researched community (Klassen & Fiesler, 2022). Quantitative methods are thus more appropriate here, as the subjects under study are less exposed than in qualitative analysis: “data are aggregated and thus any disclosure risk is negligible” (Bishop & Gray, 2017, p. 167). Furthermore, researchers who are outsiders lower the risk of flaws due to their “situated knowledge” (Hampton, 2021).

Interaction casuistic-heuristic affordances-driven diagram.
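To make the aggregation argument quoted from Bishop and Gray (2017) concrete, the following is a minimal sketch, not the procedure used in either case study. It assumes a hypothetical `Message` record (timestamp, sender, text) rather than any platform's actual export format, and the `min_count` threshold for suppressing sparse days is our own illustrative assumption. The point is only that sender identifiers and message bodies never appear in the output.

```python
from collections import Counter
from datetime import datetime
from typing import Dict, Iterable, NamedTuple


class Message(NamedTuple):
    """Hypothetical record for one chat message (not a real export format)."""
    sent_at: datetime
    sender: str  # e.g., a phone number or handle; never returned
    text: str    # message body; never returned


def daily_message_counts(messages: Iterable[Message],
                         min_count: int = 5) -> Dict[str, int]:
    """Aggregate messages into per-day counts, discarding senders and text.

    Days with fewer than `min_count` messages are dropped (an assumed
    safeguard), since very sparse days could point to individual users.
    """
    counts = Counter(m.sent_at.date().isoformat() for m in messages)
    return {day: n for day, n in sorted(counts.items()) if n >= min_count}
```

Only the per-day totals would then enter the analysis or the publication. Whether such a threshold is adequate depends on group size and context, so it should be decided case by case.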

Affordance 4: Editability

To answer the research questions, different units of analysis should be considered, which depend on the features of the platforms. Discord, for example, contains a multiplicity of channels created ad hoc in a highly customized manner, inviting more qualitative analyses. In contrast, other MIMs with more structured interfaces, such as WhatsApp and Telegram, allow quantitative analysis on several fronts. WhatsApp does not have an interface for data collection (such as the API of X, formerly Twitter), making it difficult to collect information and perform large-scale analysis. Some MIMs allow messages to be edited, others do not; some allow messages to be deleted automatically after a period of time, while others allow users to delete their own messages, putting time pressure on data collection procedures. These actions directly affect the findability and persistence of the content (Figure 2).

Affordances-driven casuistic-heuristic maps for ethical decision making

As we moved forward to systematize the reflections on the procedures adopted in both cases described above, we detected some affordances that enabled us to organize the dilemmas that arose during the research process using McKee and Porter's (2008) and Sveningsson's (2004) grids for mapping research data. While we mapped four main affordances, some maps complement each other, as questions of privacy relate to those of visibility or persistence, for instance. The gap between perceived privacy and technical privacy is a problem that the researcher should address when deciding how to approach the subjects and their content, along the lines of the audiences-centered rhetorical approach proposed by McKee and Porter (2008). But privacy also mingles with questions of visibility and findability: is the personal mobile phone number available (e.g., WhatsApp chat) or is it hidden (e.g., Telegram)? Is the group indexed by a search engine or by the Internet Archive?

In the case of the levels of interactivity with the research subjects, platform, and so on, McKee and Porter (2008) make the case that binary heuristics to visualize nuanced continuous categories are a good way to approach casuistically the challenges of research design and ethical evaluation (e.g., private/public x sensitive/non-sensitive information). In Figure 3, we propose heuristics that relate two dimensions to inform decisions regarding the epistemological approach to methods, in order to avoid exposing subjects or researchers to harm. Following the authors, we propose the “contextual affinity” concept as a proxy for what they call “degree of closeness/distance” between researcher and participants, one of their suggested casuistic-heuristic maps to analyze ethical dilemmas.

Analytically speaking, the affordances-driven approach allows us to better grasp the agency of individual users. Due to the socio-technical component of digital environments, users may be using a widely public environment while perceiving it as safe and private (Figure 1). So even the private–public continuum is complex to scrutinize. Telegram channels and groups offer another example. Though there might be a perception of Telegram as a more private chat environment than WhatsApp due to Meta's succession of dodgy data handling procedures (Satariano, 2023), its chats are not encrypted by default and the groups and channels are listed on an indexed search engine, making them easier to find.

Path forward

This article contributes to an outstanding discussion on ethical standards in MIM applications’ content research, motivated by its urgency from a Global South perspective. To answer our research question, we explore an approach that complements the casuistic-heuristic framework with an affordances-driven perspective, which encompasses users' agency as we map the different continuums that arise during decision-making processes regarding ethical conundrums.

Many of the dilemmas encountered have an important individual component, since the relationship of the user with the technology varies. To fill this void, casuistic-heuristic mapping of continuums of affordances allows us to spot variations not only across platforms but also in users’ appropriation of such platforms, as they have agency to configure them to some extent.

Many aspects of the framework presented can be applied to other digital spaces beyond MIM, especially because mobile devices are currently a fundamental tool for media and communication research (Kümpel, 2022; Schnauber-Stockmann & Karnowski, 2020). This approach also contributes to the debate about ethical decisions in digital research and complements proposals such as the case-based process (McKee & Porter, 2009) and the contextual integrity approach (Nissenbaum, 2004, 2010; Zimmer, 2018). The reflection raised in this paper should be deepened, further systematized, and extended to other cases of analysis, but it is a first step toward thinking about ethical and methodological decisions from an affordances perspective in MIM environments.

Despite these limitations, our ultimate goal is to reflect upon the potentials, constraints, and risks of researching MIM applications, and to invite the academic community to contemplate new ways of thinking about the ethical dimensions that arise on these platforms, rather than to avoid them. We think it is possible to research MIM's increasingly relevant sociopolitical issues for and from the Global South, negotiating ethical and methodological dilemmas as Schlütz and Möhring (2018) and Locatelli et al. (2023) suggest.

From an academic perspective, seeking alternatives to generate new knowledge from these dramatic changes and making ethical decisions on a case-by-case basis is essential (Kantanen & Manninen, 2016; López, 2020; McKee & Porter, 2009). After all, “an ongoing ethical reflection might be more helpful and beneficial in the long term for society than now restricting research” (Franzke et al., 2020, p. 2). Nevertheless, ensuring progress in science while causing minimal harm (Zimmer, 2018) and safeguarding the protection and integrity of subjects and researchers should be our main concern.

Footnotes

Acknowledgments

We would like to deeply thank the reviewers for their acute, detailed, and constructive review of the manuscript. It was a tough process, but without their feedback this paper would not be what it is now. We would also like to thank Professor Liliana de Simone and our colleagues from the course on Netnography at PUC-Chile, where these thoughts first germinated, for their feedback. Moreover, we would like to acknowledge all the UCG activists; without them, this research would not have been possible.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The first author was supported by Universidad Andres Bello and Center for Intercultural and Indigenous Research (ANID/FONDAP/1523A0003). The second author was funded by Agencia Nacional de Investigación y Desarrollo (ANID) through the Fondo Nacional de Desarrollo Científico y Tecnológico, grant N° 11230980 and through the Fondo de Financiamiento de Centros de Investigación en Áreas Prioritarias, grant N° NCS2021_063. The third author's work was supported by the FCT (Portuguese Foundation for Science and Technology) PhD grant SFRH/BD/143495/2019 and a visiting fellowship under the Center for Advanced Internet Studies (CAIS) GmbH, Spring/Summer 2022. In addition, CAIS provided funding towards the open access publication.

Notes

Correction (September 2024):

Article updated to correct the funding.

Author biographies

Nadia Herrada Hidalgo is associate faculty at the School of Journalism in the Andrés Bello University (Santiago, Chile) and researcher at Center of Intercultural and Indigenous Research (CIIR, Santiago, Chile). Her research interests include communication and heritages; ethics in social sciences research; Indigenous people, digital media and tourism; screens, children and teenagers. She is a journalist from the University of Havana (Cuba) and holds a master's degree and a PhD in Communication Sciences from the Pontifical Catholic University of Chile.

Marcelo Santos holds a PhD and an MSc in Communications Science and an MSc in Semiotics. He is an associate professor at the Communications Department of Universidad Diego Portales and a researcher at the CICLOS-UDP. His research interests lie at the crossroads between digital communication technologies and democratic processes, mostly involving social media and instant messaging apps, including but not limited to social movements and activism, political participation, misinformation, dirty political tactics, and inauthentic coordinated behavior.

Sérgio Barbosa is a PhD candidate at the Centre for Social Studies (CES), University of Coimbra. He is a research fellow at the Institute for Advanced Studies on Science, Technology and Society, TU Graz. His research interests include digital sociology, chat apps, digital ethics, and Global South.