Abstract

Civic argumentation refers to societal problems that may affect various scientific disciplines. Societal problems are complex and their possible solutions controversial. Making informed and reasoned decisions on these problems requires domain-specific content knowledge and domain-specific argumentation skills. This study addresses argumentation on societal problems in the economic domain. It examines 159 high school students’ written arguments on two socio-economic problems in a performance test by applying a domain-specific analytical framework with quality criteria for argument structure and content and by using qualitative content analysis, cluster analysis and variance analysis. Our findings show that students’ argument structure did not substantially vary between the two test tasks, but their argument content did. Students tended to generate arguments with justifications that supported their own position, but seldom with justifications that qualified it. Of all arguments, a quarter were fully accurate, about half referred to scientific concepts and half included multiple perspectives. We identified three distinctive argument profiles regarding structure and content of argument quality. Moreover, the argument profile is a distinctive factor for students’ content knowledge. Our study gives insights into students’ written argumentation skills and content knowledge on socio-economic problems and offers a promising analytical framework for future research in this domain.

Introduction

Argumentation in education may appear at two levels. At the macro level, argumentation is learning content in curricula, that is, a part of the knowledge to be taught. Knowledge encompasses knowledge acquisition regarding concepts and principles (“what we know”), knowledge use regarding scientific methods (“how we know what we know”) and scientific evaluation criteria (“why we believe what we know”, “why we prefer this over that”) (Duschl, 2007; Duschl and Hamilton, 1997). At the micro level, argumentation is a learning process in the classroom, that is, a complex, discursive process on the knowledge to be taught and learned (Driver, Newton, and Osborne, 2000; Duschl and Hamilton, 1997; Duschl and Osborne, 2002; Jimenez-Aleixandre, Rodriguez, and Duschl, 2000; Kolstø and Ratcliffe, 2007; Tiberghien, 2007). Thus, arguments are the artefacts generated by students in this process.

Argumentation in the classroom can be separated into two main forms and respective goals. Scientific argumentation is about scientific topics; it is thus bound to a specific scientific discipline and its epistemology (Jimenez-Aleixandre et al., 2000; Kelly, Druker, and Chen, 1998; Kelly and Takao, 2002). Using scientific argumentation in education aims to develop students’ knowledge and skills on the nature of science. It refers to knowledge justification: in an argument, claims are justified by a line of logical clauses and/or by empirical evidence (Driver et al., 2000; Kuhn, 1993; Osborne, Erduran, and Simon, 2004). An argument is constructed by building theoretical models and explaining relations between their components (Siegel, 1995).

Conversely, civic argumentation is about societal problems, also denoted “questions socialement vives” (Legardez and Alpe, 2001). A societal problem is a social dilemma that affects one or more scientific disciplines (Acar, Turkmen, and Roychoudhury, 2010; Kolstø, 2006; Sadler, 2004; Sadler and Donnelly, 2006; Sadler and Zeidler, 2004; Simonneaux, 2007; Tiberghien, 2007). Using civic argumentation in education aims to develop students’ participation in society and to establish a citizen culture. Argumentation on societal problems involves persuasion and negotiation (Myers, 1990; Sadler and Donnelly, 2006): an argument connects an assertion or conclusion with justifications, whereby the justifications follow facts and narratives. Such an argument is evidence-based and value-based (Kolstø, 2004; Sadler and Donnelly, 2006; Zeidler and Sadler, 2007). Moreover, civic argumentation involves “reasoning about causes and consequences and about advantages and disadvantages, or pros and cons, of particular propositions or decision alternatives” (Zohar and Nemet, 2002, p. 38). Societal problems with a focus on science are known as socio-scientific issues. Recent examples are alternative fuels, genetic engineering, local environmental pollution, and global climate change (Sadler, 2004; Sadler and Donnelly, 2006; Sadler and Zeidler, 2004). Problems with a focus on social science include, for instance, agricultural trade, consumer protection, retirement provision, energy supply, immigration, and unemployment (Ackermann, 2019, in print; Simonneaux, 2007). Accordingly, civic argumentation on societal problems is part of both science education (e.g. biology, chemistry, ecology, technology, medicine) and social science education (e.g. geography, history, sociology, economics, politics, law).

Research on argumentation in education to date has largely examined students’ scientific argumentation in the classroom, covering all school grades (Erduran, Simon, and Osborne, 2004; Jimenez-Aleixandre et al., 2000; Kelly et al., 1998; Kelly and Takao, 2002; Lawson, 2003; Osborne et al., 2004; Sandoval, 2003; Sandoval and Millwood, 2005). Likewise, studies on students’ civic argumentation in particular mostly address socio-scientific issues and the related conceptual knowledge (Christenson, Rundgren, and Zeidler, 2014; Patronis, Potari, and Spiliotopoulou, 1999; Ratcliffe, 1996; Rundgren, Eriksson, and Rundgren, 2016; Sadler, 2004; Sadler and Donnelly, 2006; Sadler and Zeidler, 2004; Simonneaux, 2007). Few studies can be found on students’ civic argumentation on other types of societal problems, particularly on socio-economic problems (Gronostay, 2016, 2017; Siegfried, in print; Wilcke and Budke, 2019). Furthermore, studies on argumentation in the classroom develop or adopt analytical frameworks for arguments (Duschl, 2007). Still, most studies use analytical frameworks with quality criteria for either argument structure or argument content (Sampson and Clark, 2008).

Our study addresses the above-mentioned research gap in argumentation on societal problems in the economic domain and two-dimensional analytical frameworks. The aim of our study is to examine upper high school students’ written arguments on socio-economic problems in a performance test by applying a domain-specific analytical framework. In our study, we answer three research questions: (RQ1) What patterns in argument structure and argument content can be found in student answers and how do these patterns differ between problems? (RQ2) How many argument profiles may be identified and how can they be characterised? (RQ3) What is the relation between domain-specific argumentation skills and domain-specific content knowledge? The empirical findings of our study may help to effectively promote the quality of civic argumentation in the classroom in the economic domain.

We start by outlining the theoretical background and state of research regarding civic argumentation in the economic domain, analytical frameworks of arguments as well as the relationship between argumentation skills and content knowledge. Next, we describe the methodological approach, including the data basis and data analysis. We then present the findings and discuss potentials and limitations of the study. Finally, we give an outlook for further research in this field.

Theoretical background and state of research

In this section, we sketch characteristics of civic argumentation on socio-economic problems in the economic domain. We then outline existing analytical frameworks of written arguments and their quality criteria for an argument. Finally, we describe findings from empirical research on students’ argumentation skills and content knowledge.

Civic argumentation in the economic domain

Economic education refers to economic-characterised situations arising in different life spheres (Ackermann, 2019, pp. 62–66; in print; Albers, 1988; Kaminski, 2017, pp. 36–38; Seeber, Retzmann, Remmele, and Jongebloed, 2012, pp. 87–88). In the personal-financial life sphere, individuals are faced with situations such as money management, sustainable consumption, long-term savings and private pensions. In the vocational-entrepreneurial life sphere, individuals have a role as employee or employer/entrepreneur. General vocational situations involve decisions for a profession, for a job change or further education. General entrepreneurial situations arise from administrative, operational and strategic corporate governance. In the societal life sphere, individuals are confronted with complex socio-economic problems and with possible problem solutions that are ambiguous and controversial (Ackermann, 2019, pp. 67–70; Dubs, 2013; Eberle, 2015). Current examples for such socio-economic problems include agricultural trade, consumer protection, energy supply, healthcare provision, immigration, retirement provision, public finance, transport infrastructure, and unemployment (Ackermann, 2019, in print; Simonneaux, 2007).

In many framework curricula of lower and upper high schools, economic and civic education are anchored as learning goals, and problem-solving and reasoning are emphasised as learning skills necessary for the 21st century (NEA, 2012; P21, 2019). In Switzerland, for instance, preparing students for demanding tasks in society is a primary educational goal of upper high schools (EDK, 1995, article 5 paragraph 1 MAR). This is particularly important given that Swiss citizens are periodically invited to vote on solutions for societal problems via public referenda.

Like societal problems in other domains, socio-economic problems are accompanied by social dilemmas: the problems are complex and their possible solutions controversial (King and Kitchener, 2004; Sadler, 2004). Particularly, there are interdependencies in the economic, political/legal and ecological system, which lead to multiple perspectives on a problem (e.g. local/global, north/south, present/future, quantity/quality) and multiple conflicting solutions driven by different stakeholders, whereby no solution is obvious or unambiguous. For instance, the proposed problem solutions of “retirement provision” can be analysed from a short-term and long-term perspective, from a private and state viewpoint, using evaluation criteria for public finance and public welfare, taking into account interests of younger and older generations.

Making informed and reasoned decisions on socio-economic problems requires domain-specific knowledge and domain-specific skills (Ackermann, in print). Domain-specific knowledge refers to scientific facts and concepts related to the problem (Zohar and Nemet, 2002), such as exchange rates, income distribution, price mechanisms, public goods and the solidarity principle. Domain-specific skills require cognitive and metacognitive processes on a problem, namely analysing, evaluating, explaining, reasoning, deciding, and reflecting. Domain-specific skills may be supported by domain-specific social skills, such as communication, cooperation and collaboration (Euler, 1997; Grob and Maag Merki, 2001).

Analytical frameworks and quality criteria of a written argument

In educational research, argumentation and argument are conventionally distinguished (Garcia-Mila and Andersen, 2007; Kuhn and Udell, 2003; Osborne et al., 2004). Argumentation refers to a complex, discursive process among students. Through this discourse, student groups construct arguments by proposing (single argumentation), opposing (critical argumentation) and evaluating (responsive argumentation) ideas on a scientific topic or societal problem (Gronostay, 2016). This discourse has two intertwined meanings (Billig, 1987; Kuhn, 1993): The internal discourse is related to cognitive and metacognitive strategies, particularly the ability to think abstractly, analytically and critically (Tiberghien, 2007). The external discourse is a social dialogue or debate that takes either a direct/oral or indirect/written form; it enables one to externalize the internal discourse and to explicitly develop and refine it (van Eemeren and Grootendorst, 2004). This “transposition didactique” (Jiménez-Aleixandre and Erduran, 2007, p. 5) involves hypotheses on learning processes and learning outcomes.

An argument is an artefact generated in this discourse by a student in order to articulate and justify a claim on the scientific topic or an opinion on the societal problem. In order to examine students’ arguments, education researchers usually develop or adapt analytical frameworks (Duschl, 2007). Any analytical framework has to provide criteria to evaluate the quality of students’ arguments. With regard to written arguments, two main types of analytical frameworks can be found in the literature: domain-general and domain-specific (Sampson and Clark, 2008).

Domain-general analytical frameworks may be used in various scientific disciplines (Erduran et al., 2004; Kelly, Regev, and Prothero, 2007; Means and Voss, 1996; Schwarz, Neuman, Gil, and Ilya, 2003; Toulmin, 1958). They focus on the structure of an argument, such as structural components in an argument (i.e. claim, data, justification), structural complexity of an argument (i.e. number of justifications either supporting or qualifying the claim) and type of justification (e.g. abstract/scientific, specific/consequential, truism, authority, personal). The quality of an argument is then defined according to the presence or absence of these structural aspects. In the general framework proposed by Toulmin (1958), an argument comprises six structural components: claim, data, warrant, backing, qualifier, rebuttal. A claim is an assertion articulated for general acceptance and founded on data (i.e. specific facts and figures). A warrant provides a connection between the data and the claim, more precisely, it emphasises the relevance of the data for the claim (e.g. “since”, “because of”); a qualifier strengthens the warrant (e.g. “even if”, “even though”). A backing establishes general conditions to support the warrant (e.g. “due to”), and a rebuttal relativises or invalidates the warrant (e.g. “unless”, “only if”). In contrast, the general framework elaborated by Schwarz et al. (2003) describes the structural complexity of an argument. The type of argument is rated on four levels: simple claim (i.e. the claim is not supported by any reason); one-sided argument (i.e. at least one reason that supports the claim); two-sided argument (i.e. at least two reasons that either support or challenge the claim); compound argument (i.e. at least two reasons that explicitly support, qualify or rebut the claim). 
The type of justification is classified into six categories: abstract reasons apply scientific concepts, consequential reasons refer to consequences of an action, common-sense reasons include generally accepted beliefs and truisms, authority reasons generally refer to scientists or politicians, personal reasons include one's own experience, vague reasons consist of imprecise statements.

Domain-specific analytical frameworks are used in a specific scientific discipline or subdiscipline (Kelly and Takao, 2002; Lawson, 2003; Sandoval, 2003; Sandoval and Millwood, 2005; Sandoval and Millwood, 2007; Zohar and Nemet, 2002). They thus focus on the content of an argument, that is, the adequacy and accuracy of an argument from the scientific perspective. Depending on the scientific discipline, these frameworks evaluate the accuracy of scientific knowledge in arguments, epistemological operations in arguments and the validity of alternative explanations for puzzling observations.

Argumentation skills and content knowledge

Previous studies on argumentation on socio-scientific issues suggest that students have difficulty understanding the domain-specific concepts addressed in the problem given, avoiding heuristics in arguments, and discussing options for action in an elaborated and reflected way (Acar et al., 2010). Apart from that, studies on written argumentation on socio-scientific issues indicate that there is a substantial relation between domain-specific argumentation skills and domain-specific content knowledge (Fleming, 1986a, 1986b; Hogan, 2002; Sadler and Zeidler, 2004; Tytler, Duggan, and Gott, 2001; Zeidler and Schafer, 1984). Particularly, content knowledge is crucial for the quality of argument structure (i.e. complexity of argument, number of reasons), but not necessarily for argument content (i.e. appropriateness and accuracy of reasons) (Sadler and Zeidler, 2004). In other words, generally, the more students know about a topic, the more they are able to articulate supporting and/or qualifying justifications in their argument. But this does not necessarily mean that they are able to articulate an appropriate and accurate justification because they are still not experts on the topic.

The relation between argumentation skills and content knowledge was again identified in intervention studies on argumentative writing on socio-scientific issues (Kortland, 1996; Schwarz et al., 2003; Zohar and Nemet, 2002). In the intervention, students are usually instructed on a specific scientific topic and on argument quality. Before the intervention, most students generated a one-sided argument with just one reason; reasons were merely common sense or vague, and few students used correct specific scientific knowledge in their reasons. After the intervention, however, there was an increase in compound arguments, the number of reasons, abstract and consequential reasons, and the scientific knowledge used in reasons. To conclude, if students do not have a sound understanding of the scientific topic and do not have learning opportunities to rehearse arguments, they may be prevented from generating complex arguments and from formulating relevant and accurate justifications by referring to specific scientific knowledge.

The relation between argumentation skills and content knowledge in the context of socio-scientific issues is even more remarkable (Zohar and Nemet, 2002). In the corresponding intervention study, the experimental group received instruction on the topic and on argumentation, while the control group only received topic-related instruction. Students in the experimental group improved the quality of their arguments after the intervention, both for similar and transfer problems, but students in the control group did not. Both groups scored higher in a knowledge test after the intervention, but the experimental group outperformed the control group. It is concluded that instruction and practice on argumentation improve conceptual understanding of the scientific topic, while greater conceptual understanding does not necessarily improve argumentation skills.

Methodological approach

In this section, we first describe the data basis of the study, that is, the sample description, test instrument and task specification. Then we describe the data analysis, that is, the analytical framework applied to the data and the qualitative and quantitative procedures.

Data basis

Sample description

The data were collected in the research project WBKgym, which examined upper high school students in German-speaking Switzerland by using the revised test on economic-civic competence (WBK-T2) (Ackermann, 2018b, 2019) and a questionnaire on socio-demographic characteristics (Ackermann, 2018a). For our study, we took a subsample of 159 students from the 12th grade who took “economics and law” as their major course. The students were aged 18 and 19. 47% of the students were female, 92% spoke German as their first language, 90% held Swiss citizenship and 51% had at least one parent with an educational qualification at university level. The students in the subsample had identical curricular learning opportunities in the course “economics and law” regarding school timetable, educational goals and learning content. Thus, they are assumed to be homogeneous regarding curricular domain-specific content knowledge (economic and civic education).

According to the Swiss framework curriculum, the course “economics and law” comprises three learning fields: economics, business administration (including finance) and law (including civics) (EDK, 1994, p. 76). The course's learning content covers economic concepts and models (e.g. market, economic cycle, foreign economics, public finance), legal concepts and acts (e.g. human rights, political institutions and processes), as well as socio-economic problems (Ackermann, 2021; Ruoss and Ackermann, in preparation). Some socio-economic problems are explicitly mentioned as learning content in the curriculum (e.g. economic integration, public debt, retirement provision, unemployment) while others are implicit (e.g. agricultural trade, environmental protection, health care, immigration). Transdisciplinary skills such as problem-solving, argumentation and communication are also assigned to be taught in this course (EDK, 1994, pp. 11–24). Consequently, the course “economics and law” is meant to contribute to civic education and education for sustainable development (EDK, 1994, 2020).

Test instrument and task specification

The revised test on economic-civic competence (WBK-T2) is a written psychological performance test that covers four current socio-economic problems (retirement provision, energy supply, public debt, manager salaries) and encompasses 32 items in selected-response and constructed-response format (Ackermann, 2018b, 2019). The validity of the WBK-T2 test scores was extensively evaluated in the above-mentioned research project (Ackermann, 2019). Regarding content validity, experts evaluated the problems and items of the WBK-T2 as relevant and adequate. Regarding internal structure validity, the partial credit Rasch model proved to be a satisfactory test model with a one-dimensional structure and item homogeneity for two manifest groups (major course, gender). Classical and probabilistic item analysis showed good values for weighted item infits (all items with 0.92 < wMNSQ¹ < 1.17), uncorrected item discriminations (28 items with item-total correlation > .20), and test reliability (EAP/PV² = .76, WLE³ = .74, Cronbach's α = .74). Consequently, the WBK-T2 test scores may be interpreted as students’ domain-specific content knowledge on the included socio-economic problems.

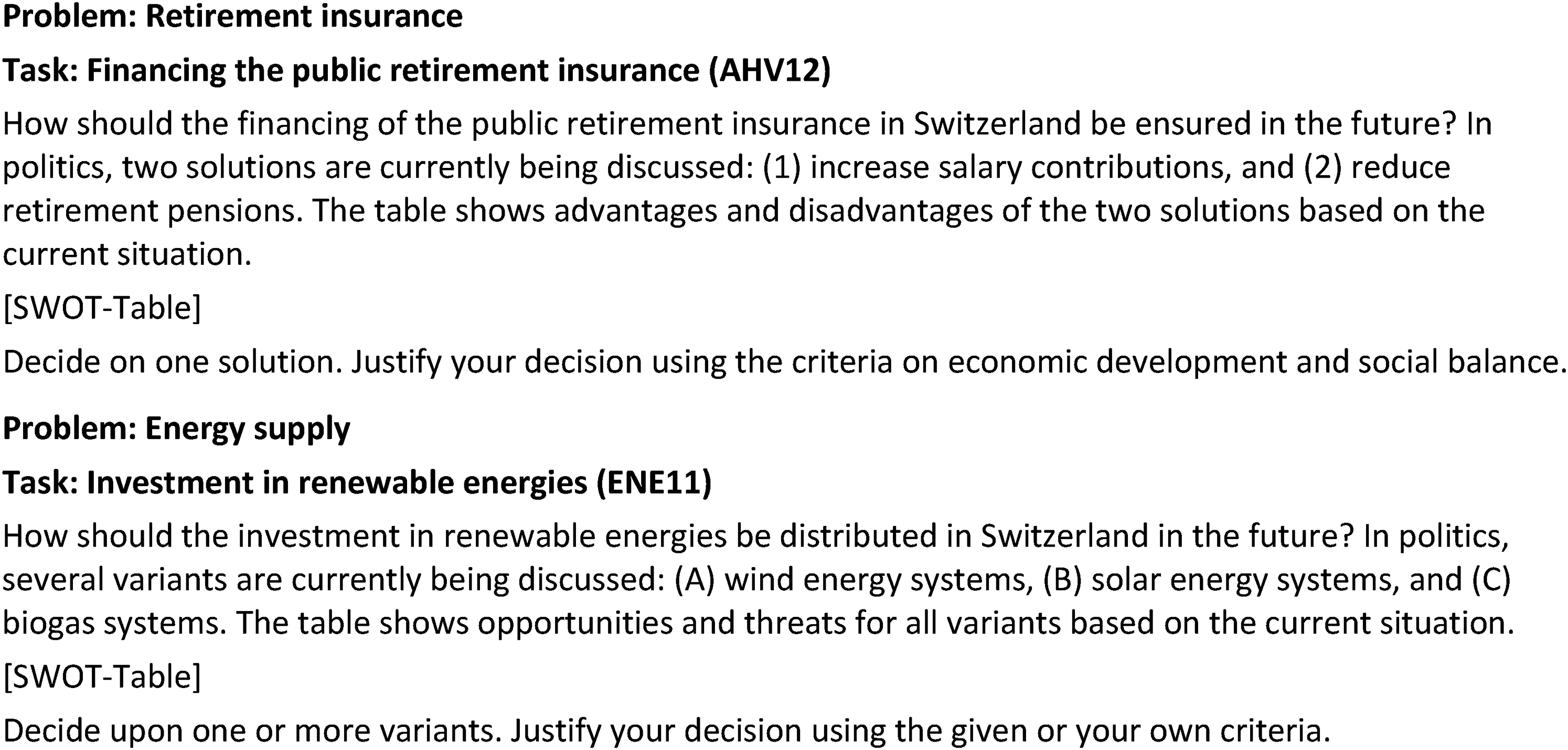

From the WBK-T2, two constructed-response items were selected (see Figure 1). For a comparative analysis, the test items were taken from two distinct socio-economic problems: “retirement provision” (AHV) and “energy supply” (ENE). These items are specified as problem-oriented tasks. Each task introduces an authentic problem and sketches well-known problem solutions. In the task, students are provided with two or three problem solutions and asked to take a position by selecting one or several of the solutions (AHV12: solution 1 and 2; ENE11: variant A, B and C). Students are then asked to justify their position by writing some phrases based on the given criteria or their own criteria.

Figure 1. Task specification. Source: Own translation according to Ackermann (2019).

Data analysis

Domain-specific analytical framework

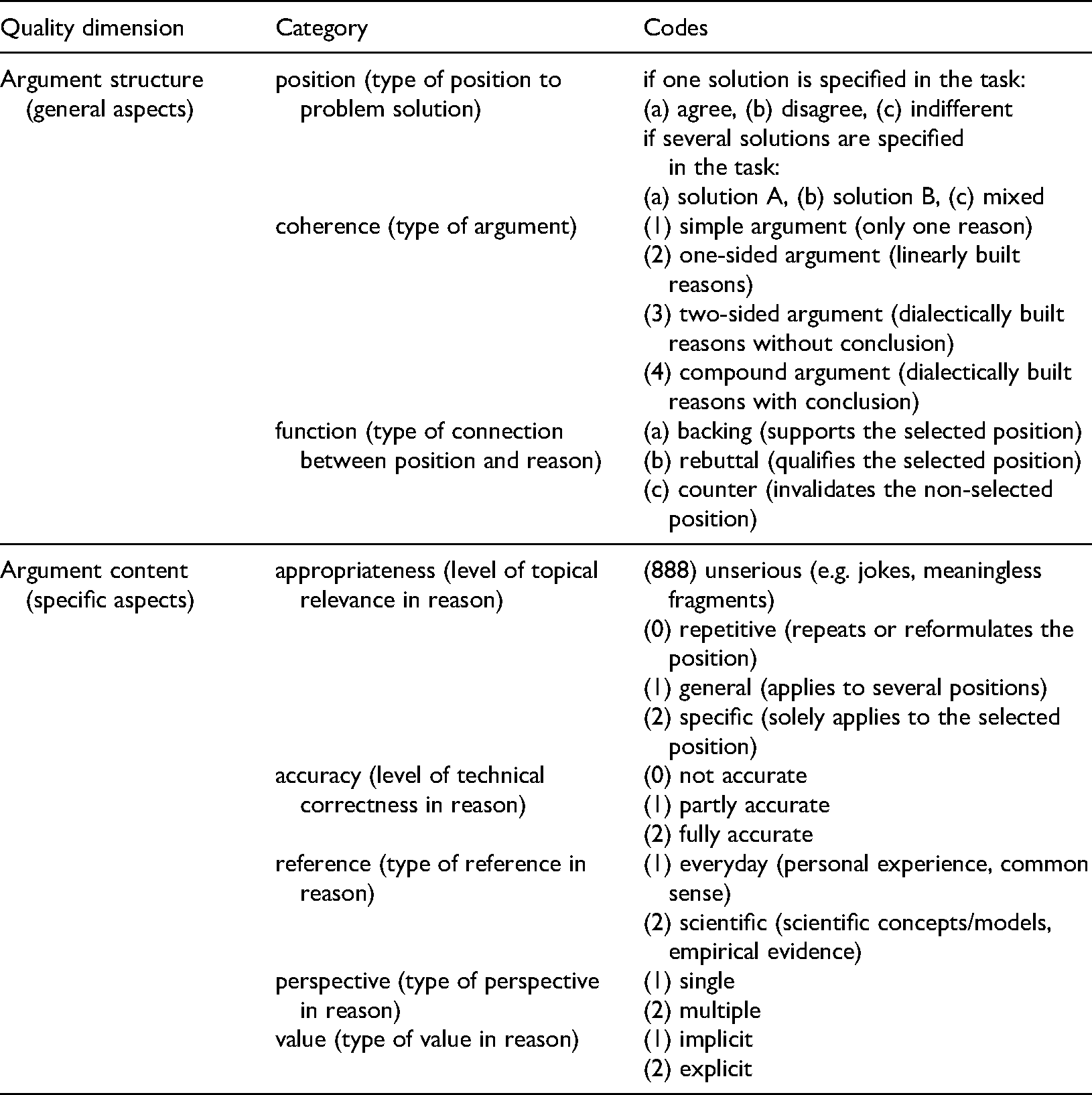

In order to elaborate a domain-specific analytical framework for argumentative writing in the economic domain, we considered characteristics of civic argumentation on socio-economic problems, and reflected on existing analytical frameworks and their quality criteria for an argument. Accordingly, our analytical framework for argumentative writing on socio-economic problems captures argument structure based on general aspects and argument content based on specific aspects (see Table 1).

Table 1. Analytical framework for argumentative writing on socio-economic problems.

Regarding argument structure, the framework has three categories: position, coherence and function. Position captures the problem solution specified in the task and selected by the student. Coherence applies to the type of argument (Schwarz et al., 2003). In a simple argument, the position is followed by a single reason. In a one-sided argument, reasons are built linearly and progressively to support and strengthen the position taken. In a two-sided argument, reasons are built dialectically to alternately support and qualify the position. A compound argument is two-sided and has an explicit conclusion. Function covers the type of connection between the position selected and the reasons formulated (Toulmin, 1958): a “backing” supports and strengthens the selected position (i.e. for one's own position), a “rebuttal” qualifies the selected position (i.e. against one's own position) and a “counter” invalidates the non-selected position (i.e. against the opposing position).

There are five argument content categories in the framework: appropriateness, accuracy, reference, perspective and value. Appropriateness refers to the topical relevance of the reason and is scaled on four levels: “unserious” (e.g. jokes, meaningless fragments), “repetitive” (i.e. repeats or reformulates the position), “general” (i.e. applies to several positions) and “specific” (i.e. solely applies to the selected position). Accuracy refers to the technical correctness of the reason and is scaled on three levels (Zohar and Nemet, 2002): not accurate, partly accurate, fully accurate. Reference focuses on the type of reference given in the reason (Schwarz et al., 2003): “everyday” is an explanation based on personal experience (e.g. family practices) and common sense, whereas “scientific” is an explanation based on scientific concepts/models and empirical evidence (e.g. debt brake, exchange rate, income distribution, price mechanism, public goods, solidarity principle). Perspective accounts for the “single” and “multiple” perspectives included in the reason (e.g. local/global, North/South, present/future, various interest groups). Value captures “implicit” and “explicit” ethical considerations in the reason (e.g. efficiency, prosperity, solidarity, sustainability, liberty, security, equality) (Ackermann, in print; Kolstø, 2004; Sadler and Donnelly, 2006; Zeidler and Sadler, 2007).
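For readers who want to apply the framework to their own data, the categories and levels named above can be written down as a small coding scheme. The following is only a minimal sketch: the dictionary layout and the helper `validate_coding` are hypothetical and not part of the study, position is left out because its levels depend on the task, and each category is assumed to take a single level per answer, which simplifies the case of answers with two functions.

```python
# Categories and levels as named in the framework description.
# Position is task-specific (e.g. solution 1 or 2 in AHV12) and is
# therefore not enumerated in this illustrative scheme.
FRAMEWORK = {
    "coherence": ("simple", "one-sided", "two-sided", "compound"),
    "function": ("backing", "rebuttal", "counter"),
    "appropriateness": ("unserious", "repetitive", "general", "specific"),
    "accuracy": ("not accurate", "partly accurate", "fully accurate"),
    "reference": ("everyday", "scientific"),
    "perspective": ("single", "multiple"),
    "value": ("implicit", "explicit"),
}


def validate_coding(coding):
    """Return a list of problems found in one coded answer.

    `coding` maps a category name to the level assigned by the coder.
    An empty list means that every category and level is known.
    """
    problems = []
    for category, level in coding.items():
        if category not in FRAMEWORK:
            problems.append(f"unknown category: {category}")
        elif level not in FRAMEWORK[category]:
            problems.append(f"invalid level for {category}: {level}")
    return problems


# Hypothetical coded answer, for illustration only.
example = {
    "function": "backing",
    "accuracy": "partly accurate",
    "reference": "scientific",
    "perspective": "single",
}
```

Calling `validate_coding(example)` returns an empty list, whereas a misspelled level or category is reported by name.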

To evaluate the quality of a written argument, it is not necessary to use all categories of the framework. Moreover, the relevant categories depend on the specification of the writing task (e.g. format, time, goal, recipient). For example, if the task asks for a one-sided argument, students are not expected to write a two-sided or compound argument.

Qualitative data analysis and coding procedure

The qualitative data corpus encompasses students’ written answers to the two constructed-response items AHV12 and ENE11 in the performance test WBK-T2. As students’ answers were short in general (i.e. one to three phrases), we defined the complete answer as a coding segment. Students’ answers were analysed using qualitative content analysis (Mayring, 2015), whereby the domain-specific analytical framework served as the coding scheme and was deductively applied to the data. The category appropriateness was used to exclude unserious and missing answers from further coding. The categories coherence and value were not coded because the task specification prompted a short, one-sided argument. Qualitative data analysis was performed with MAXQDA 2020 (VERBI, 2019).

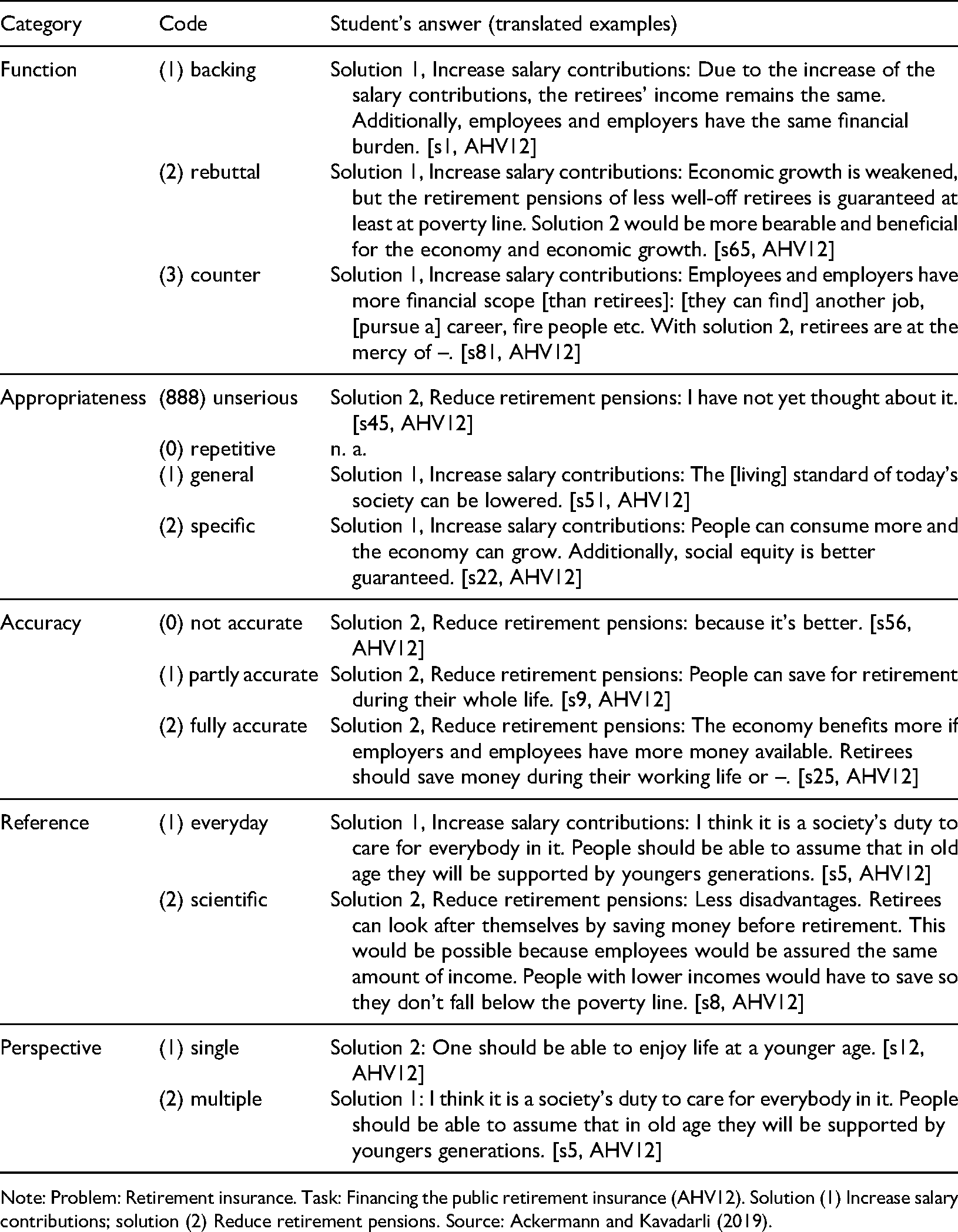

During the coding procedure, a coding manual was drafted and continuously augmented (Ackermann and Kavadarli, 2019) (see Table 2). In the first phase, two researchers coded about 20% of the material separately and discussed their disagreements in order to refine the coding scheme and enrich the coding manual. The inter-coder reliability yielded good values (relative agreement = 81%, Cohen's κ = .75) (Döring and Bortz, 2016, p. 566). Thus, in the second phase, one person coded the remaining material.
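To make the two reliability measures concrete, the following minimal sketch computes relative agreement and Cohen's κ for two coders. It is not the procedure used in the study (which relied on dedicated software); the function names and the ten example codes are hypothetical. κ is computed in the standard way as (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance from each coder's category frequencies.

```python
from collections import Counter


def relative_agreement(codes_a, codes_b):
    """Share of segments to which both coders assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must code the same non-empty set of segments")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


def cohens_kappa(codes_a, codes_b):
    """Chance-corrected agreement between two coders."""
    n = len(codes_a)
    p_observed = relative_agreement(codes_a, codes_b)
    freq_a = Counter(codes_a)
    freq_b = Counter(codes_b)
    # Expected agreement if both coders assigned codes independently.
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        # Both coders used one and the same code for every segment.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical codes for the category "function" on ten answers.
coder_a = ["backing", "backing", "counter", "rebuttal", "backing",
           "counter", "backing", "backing", "counter", "backing"]
coder_b = ["backing", "backing", "counter", "rebuttal", "backing",
           "backing", "backing", "counter", "counter", "backing"]
```

In this invented example the coders agree on eight of ten answers, so relative agreement is 0.80, while κ is lower because part of that agreement would be expected by chance.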

Table 2. Extract of the coding manual of task AHV12.

Note: Problem: Retirement insurance. Task: Financing the public retirement insurance (AHV12). Solution (1) Increase salary contributions; solution (2) Reduce retirement pensions. Source: Ackermann and Kavadarli (2019).

Quantitative data analysis and statistical procedure

The quantitative data set includes variables for the single argument categories, argumentation skills and content knowledge. The categories position and function were recoded from nominal-scaled variables into a metric-scaled variable.⁴ The categories accuracy, reference and perspective were taken as ordinal-scaled variables.⁵ The variable argumentation skills was calculated as the sum score of the five argument variables (position, function, accuracy, reference, perspective) and the two items (AHV12, ENE11). The variable content knowledge was computed as the sum score of the problems “retirement provision” (AHV, 9 items) and “energy supply” (ENE, 7 items) in the performance test WBK-T2.
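The two sum scores can be illustrated with a short sketch. The numeric codes below are invented for illustration; the actual recoding of the categories into numbers is defined in the study's notes and is not reproduced here, and the function names are hypothetical.

```python
ARGUMENT_VARIABLES = ("position", "function", "accuracy", "reference", "perspective")
ITEMS = ("AHV12", "ENE11")


def argumentation_skills(codes):
    """Sum score over the five argument variables and the two items.

    `codes` maps each item to a dict of numeric codes per argument variable.
    """
    return sum(codes[item][variable]
               for item in ITEMS
               for variable in ARGUMENT_VARIABLES)


def content_knowledge(ahv_scores, ene_scores):
    """Sum score over the 9 AHV items and the 7 ENE items of the test."""
    if len(ahv_scores) != 9 or len(ene_scores) != 7:
        raise ValueError("expected 9 AHV item scores and 7 ENE item scores")
    return sum(ahv_scores) + sum(ene_scores)


# Hypothetical numeric codes for one student (assumed values, not study data).
student_codes = {
    "AHV12": {"position": 1, "function": 1, "accuracy": 2, "reference": 1, "perspective": 0},
    "ENE11": {"position": 1, "function": 2, "accuracy": 1, "reference": 0, "perspective": 1},
}
student_ahv = [1, 0, 1, 1, 2, 0, 1, 1, 1]
student_ene = [1, 1, 0, 2, 1, 0, 1]
```

With these invented values, `argumentation_skills(student_codes)` adds up ten codes (five variables for each of the two items), and `content_knowledge(student_ahv, student_ene)` adds up the sixteen item scores.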

We ran descriptive analysis for patterns in argument structure and argument content, cluster analysis for argument profiles as well as correlation and variance analysis for the relationship between argumentation skills and content knowledge. Quantitative data analysis was performed with SPSS Statistics Version 26 (IBM, 2019).

Findings

In this section, we present the results with respect to the research questions: patterns in argument structure and content (RQ1), number and characteristics of argument profiles (RQ2), and relation between argumentation skills and content knowledge (RQ3).

Patterns in argument structure and content (RQ1)

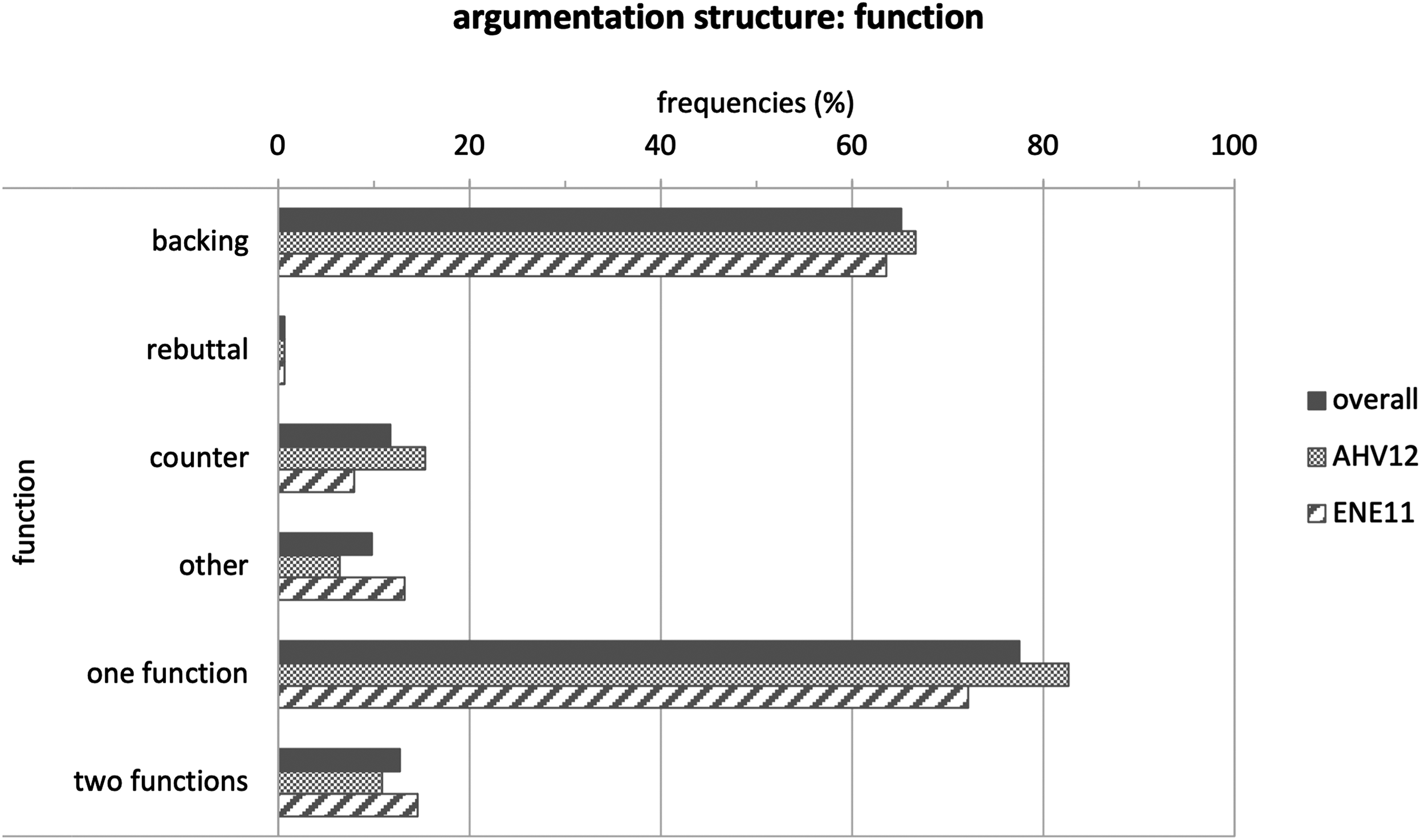

In order to identify patterns in argument structure and content, we used frequency analysis for each task and category. Position in task AHV12 is balanced between the two proposed solutions: “(1) increase salary contributions” (48%) and “(2) reduce retirement pensions” (50%). Although the task prompts students to decide on one solution, 1% of students favoured a combination of both solutions. In task ENE11, almost two-thirds of students favoured a single variant (62%), whereas about one-fifth each favoured a 2-variants-mix (21%) or a 3-variants-mix (17%). Both tasks show a similar pattern in function (type of connection) (see Figure 2): 65% are backings (AHV12: 67%, ENE11: 64%), 12% counters (AHV12: 15%, ENE11: 8%) and only 1% rebuttals. In 78% of the students’ answers, one function was identified (AHV12: 83%, ENE11: 72%) and in 13% two functions (AHV12: 11%, ENE11: 15%). 10% of responses could not be assigned a function (AHV12: 6%, ENE11: 13%).
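A frequency analysis of this kind amounts to counting the coded levels per task and converting the counts into percentages. The sketch below illustrates this for one category; the helper `frequency_table` and the example codes are hypothetical and do not reproduce the study's data.

```python
from collections import Counter, defaultdict


def frequency_table(coded_answers):
    """Percentage of each level per task.

    `coded_answers` is a list of (task, level) pairs for one category.
    Percentages are rounded to whole numbers, so they may not sum to 100.
    """
    by_task = defaultdict(list)
    for task, level in coded_answers:
        by_task[task].append(level)
    table = {}
    for task, levels in by_task.items():
        counts = Counter(levels)
        table[task] = {level: round(100 * n / len(levels))
                       for level, n in counts.items()}
    return table


# Hypothetical codes for the category "function" (ten answers per task).
answers = (
    [("AHV12", "backing")] * 7 + [("AHV12", "counter")] * 2 + [("AHV12", "none")] * 1
    + [("ENE11", "backing")] * 6 + [("ENE11", "counter")] * 1 + [("ENE11", "none")] * 3
)
table = frequency_table(answers)
```

Because each percentage is rounded separately, totals of 99% or 101% can occur, as in some of the figures reported in the text.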

Figure 2. Patterns in argument structure: function (type of connection).

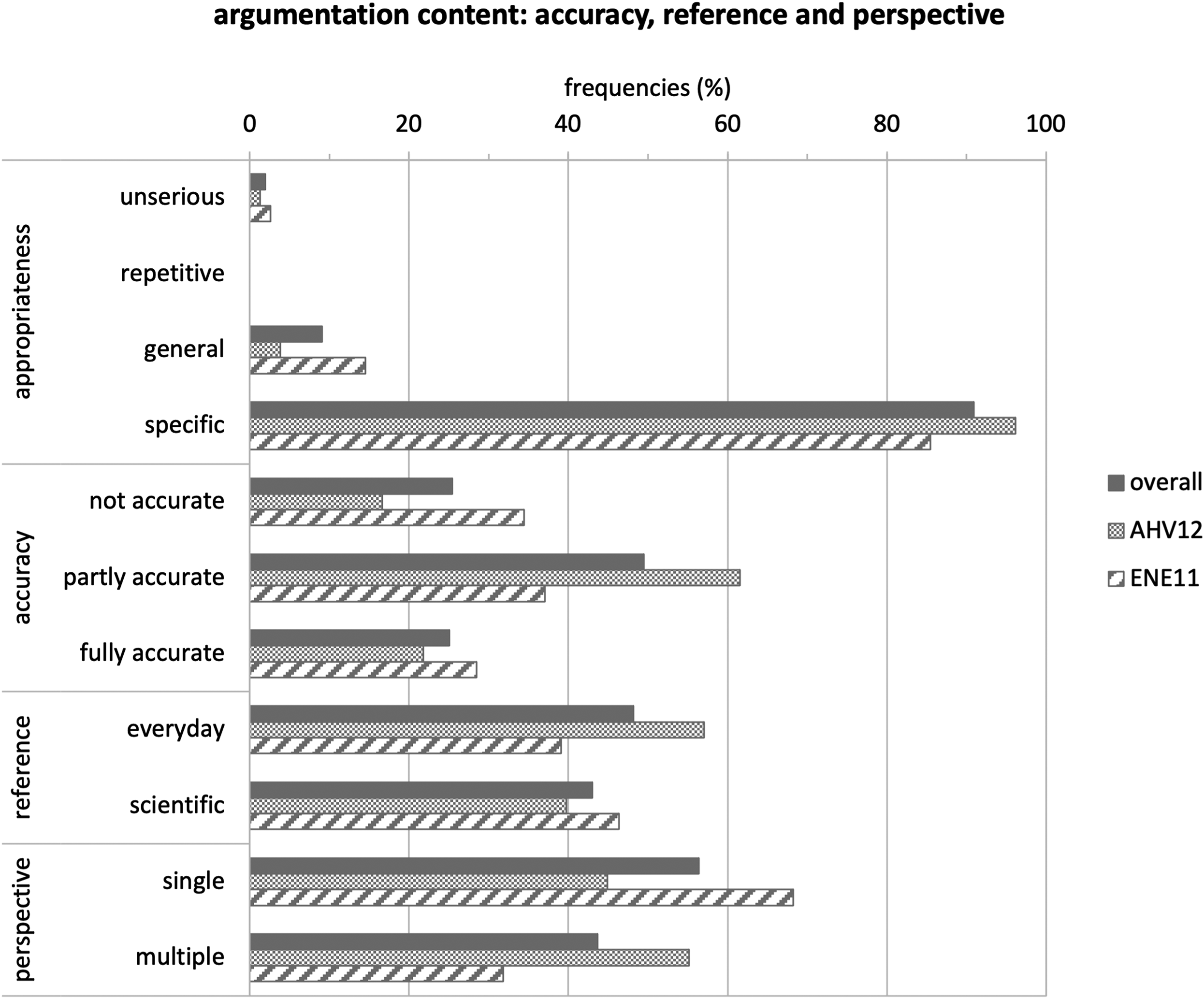

In appropriateness, about 90% of students’ answers are specific and 2% unserious (see Figure 3), although general answers were more than three times as frequent in ENE11 as in AHV12 (AHV12: 4%; ENE11: 15%). In accuracy, 25% of answers were rated as not accurate, 25% as fully accurate and 50% as partly accurate. Despite this quasi-normal distribution, not accurate answers were twice as frequent in ENE11 as in AHV12 (AHV12: 17%; ENE11: 34%). Similarly, in reference, answers with everyday explanations (48%) and scientific explanations (43%) occurred with similar frequency, but the former were found more frequently in AHV12 than in ENE11 (AHV12: 57%; ENE11: 39%). In perspective, more answers employed a single perspective (56%) than multiple perspectives (44%), but the difference is smaller in AHV12

Figure 3. Patterns in argument content: appropriateness, accuracy, reference and perspective.

Characteristics of argument profiles (RQ2)

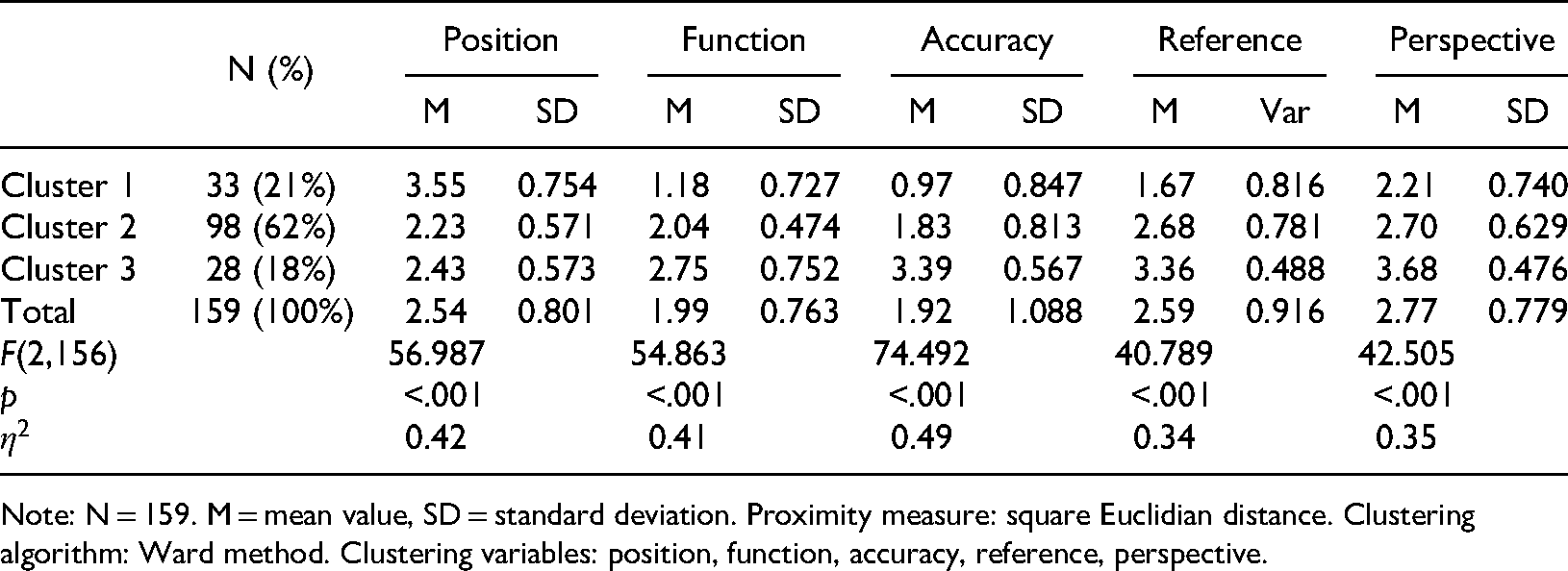

In order to identify and characterize argument profiles that can serve as levels of argumentation skills, we ran a hierarchical cluster analysis with squared Euclidean distance as the proximity measure and the Ward method as the clustering algorithm (Backhaus, Erichson, Plinke, and Weiber, 2016, pp. 435–469; Fromm, 2012, pp. 191–222). From the quantitative data set, we included five metric-scaled argument variables in the cluster analysis⁶: position, function, accuracy, reference and perspective.
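The logic of the Ward method can be sketched in a few lines of plain Python. This is an illustration only, not the SPSS procedure used in the study: it starts with every case as its own cluster and repeatedly merges the two clusters whose fusion increases the within-cluster sum of squares the least. That increase equals n_a · n_b / (n_a + n_b) times the squared Euclidean distance between the two cluster centroids. The function names and the seven five-dimensional cases are hypothetical.

```python
def squared_euclidean(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def centroid(points):
    """Mean vector of a list of equally long vectors."""
    n = len(points)
    return [sum(column) / n for column in zip(*points)]


def ward_clusters(data, n_clusters):
    """Agglomerative clustering with Ward's criterion.

    Returns a list of clusters, each a sorted list of row indices.
    The naive search over all pairs is slow but adequate for small samples.
    """
    clusters = [[i] for i in range(len(data))]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                center_a = centroid([data[i] for i in clusters[a]])
                center_b = centroid([data[i] for i in clusters[b]])
                n_a, n_b = len(clusters[a]), len(clusters[b])
                # Increase in within-cluster sum of squares caused by merging.
                cost = n_a * n_b / (n_a + n_b) * squared_euclidean(center_a, center_b)
                if best is None or cost < best[0]:
                    best = (cost, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return [sorted(cluster) for cluster in clusters]


# Hypothetical cases with five clustering variables each (not study data).
cases = [
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 1],
    [5, 5, 5, 5, 5],
    [5, 4, 5, 5, 5],
    [10, 10, 10, 10, 10],
    [10, 10, 9, 10, 10],
    [10, 9, 10, 10, 10],
]
profiles = ward_clusters(cases, 3)
```

For the invented cases, the three clearly separated groups are recovered. With real data the number of clusters is not known in advance and is usually chosen by inspecting how the merge cost develops across the fusion steps.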

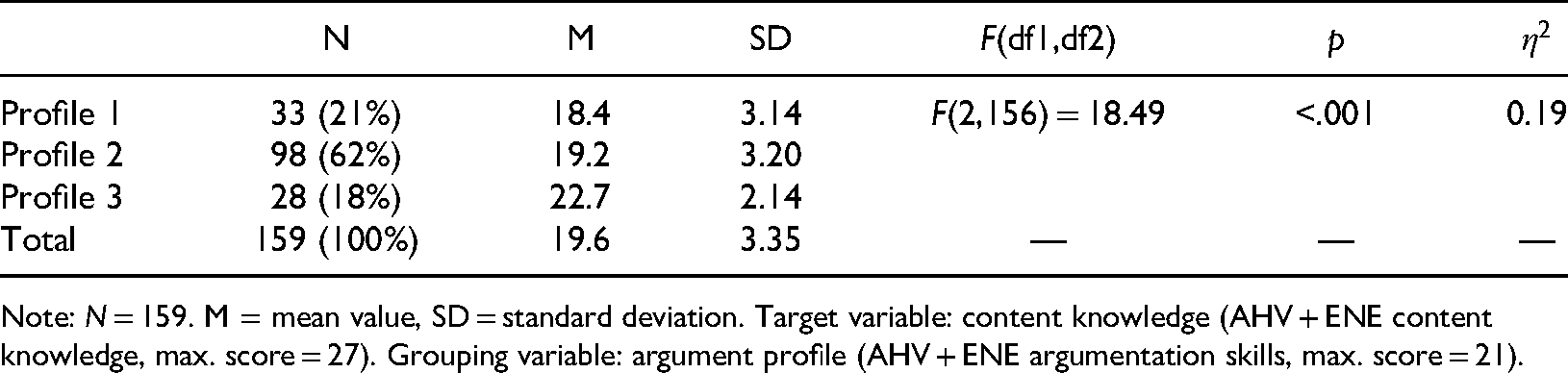

The cluster analysis indicates a 3-cluster-solution with distinct groups (Nc1 = 21%, Nc2 = 62%, Nc3 = 18%) (see Table 3). To evaluate the statistical quality of the cluster solution, we calculated descriptive and inferential statistics for each of the five clustering variables (Fromm, 2012, p. 214). For each variable, the standard deviation in each cluster is lower than the standard deviation in the whole sample. Moreover, the variance in each cluster is lower than the total variance of the whole sample; the effects are large and statistically significant (p < .001; 0.34 ≤ η² ≤ 0.49).
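The two quality checks named above can be sketched for a single clustering variable as follows. The scores are invented, the function name `eta_squared` is hypothetical, and the use of the population standard deviation (`pstdev`) is an assumption, since the text does not state which variant was computed. Eta squared is the share of total variance explained by cluster membership, that is, the between-cluster sum of squares divided by the total sum of squares.

```python
from statistics import mean, pstdev


def eta_squared(groups):
    """Effect size: SS_between / SS_total for a list of groups of scores."""
    values = [v for g in groups for v in g]
    grand = mean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    return ss_between / ss_total


# Hypothetical accuracy scores for three clusters (invented for illustration)
accuracy_by_cluster = [[0, 0, 1, 0], [1, 1, 2, 1, 1], [2, 2, 1, 2]]
all_scores = [v for g in accuracy_by_cluster for v in g]

eta2 = eta_squared(accuracy_by_cluster)

# Homogeneity check: every cluster should vary less than the whole sample.
# Assumption: population SD; the sample SD (statistics.stdev) works analogously.
homogeneous = all(pstdev(g) < pstdev(all_scores) for g in accuracy_by_cluster)
```

Repeating this calculation for each of the five clustering variables gives one effect size and one homogeneity check per variable.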

Descriptive and variance analysis of the 3-cluster-solution.

Note: N = 159. M = mean value, SD = standard deviation. Proximity measure: squared Euclidean distance. Clustering algorithm: Ward method. Clustering variables: position, function, accuracy, reference, perspective.

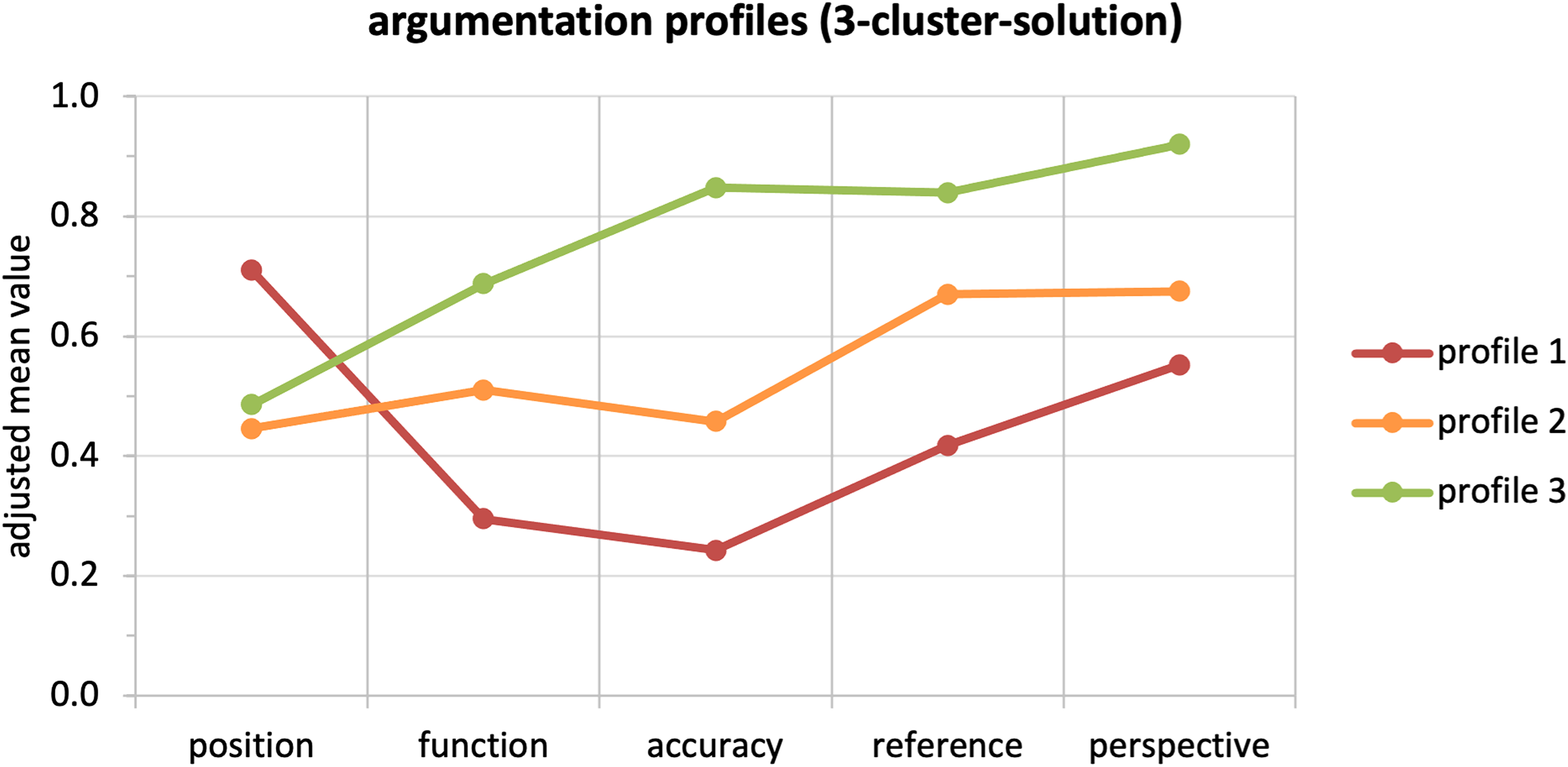

We used this 3-cluster-solution to define and characterize argument profiles. The profiles are represented by adjusted mean values of each clustering variable (see Figure 4) and characterised as follows:

Profile 1 (red) has an ambiguous argument structure and low to moderate argument content. It favours more than one problem solution (position) and uses no or one function. It does not give an accurate reason (accuracy), refers mostly to everyday explanations (reference) and includes mostly single perspectives (perspective).

Profile 2 (orange) shows both a moderate argument structure and moderate argument content. It favours one problem solution (position) and uses one function. It gives mostly partly accurate reasons (accuracy), refers equally to everyday and scientific explanations (reference) and includes single and multiple perspectives (perspective).

Profile 3 (green) has a moderate argument structure and high argument content. It favours one problem solution (position) and uses one or two functions. It gives mostly fully accurate reasons (accuracy), refers mostly to scientific explanations (reference) and mostly includes multiple perspectives (perspective).

Argument profiles (3-cluster-solution). Note: N = 159 cases. Adjusted mean values of clusters (in %). Argument variables: position, function, accuracy, reference, perspective.

Relation between argumentation skills and content knowledge (RQ3)

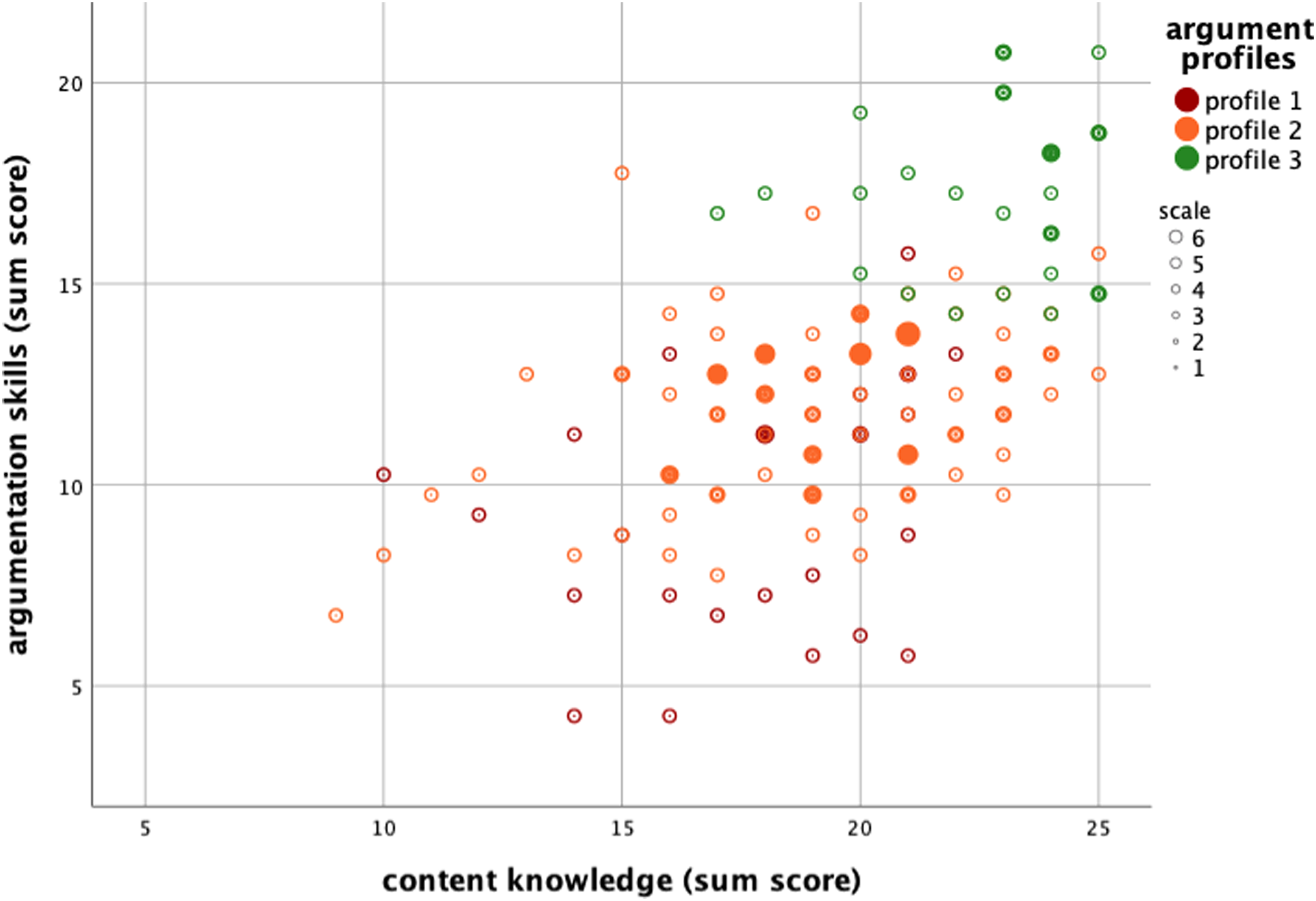

Firstly, the overall relation between argumentation skills and content knowledge was calculated by bivariate correlation analysis (Backhaus et al., 2016, pp. 57–124). Secondly, the relation between argument profiles and content knowledge was calculated by univariate, single-factor variance analysis (Backhaus et al., 2016, pp. 163–201), where content knowledge was the dependent target variable and argument profile the independent grouping variable.
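Both analyses can be sketched in a few lines, assuming invented scores and hypothetical function names (`pearson_r`, `one_way_f`); this is an illustration of the two statistics, not the authors' computation. The correlation coefficient relates the two score variables directly, whereas the F statistic compares the variance of content knowledge between argument profiles with the variance within them.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson product-moment correlation of two equal-length score lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def one_way_f(groups):
    """F statistic and its (between, within) degrees of freedom."""
    values = [v for g in groups for v in g]
    grand = mean(values)
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k)), (k - 1, n - k)


# Hypothetical scores (invented for illustration only)
skills = [4, 6, 9, 11, 13, 15, 18]
knowledge = [8, 12, 11, 15, 14, 19, 22]
r = pearson_r(skills, knowledge)

# Hypothetical content knowledge scores grouped by argument profile
knowledge_by_profile = [[8, 10, 9], [13, 15, 14, 16], [20, 22, 19]]
f_value, dof = one_way_f(knowledge_by_profile)
```

With three profiles and N = 159 students, the degrees of freedom are (3 − 1, 159 − 3) = (2, 156).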

The scatterplot indicates that argumentation skills and content knowledge are positively correlated (see Figure 5), with the correlation being statistically significant and moderately positive (r = 0.54, p < .001). The mean difference in content knowledge regarding the three argument profiles is statistically significant (F(2,156) = 18.49, p < .001) and has a large effect

Scatterplot of argumentation skills (3-cluster-solution) and content knowledge.

Differences in content knowledge regarding argument profiles (3-cluster-solution).

Note: N = 159. M = mean value, SD = standard deviation. Target variable: content knowledge (AHV + ENE content knowledge, max. score = 27). Grouping variable: argument profile (AHV + ENE argumentation skills, max. score = 21).

Discussion

This study addressed argumentation on societal problems in the economic domain. The aim of our study was to examine upper high school students’ written arguments on socio-economic problems in a performance test by applying a domain-specific analytical framework. In this section we discuss the findings, potentials and limitations of our study.

On the domain-specific analytical framework

The domain-specific analytical framework presented in this study applies to civic argumentation on societal problems in the economic domain. In particular, the framework can be used to examine and support students’ argumentative writing on socio-economic problems. The framework captures quality criteria of argument structure and argument content, operationalised by eight categories: position, coherence, function, appropriateness, accuracy, reference, perspective and value. In contrast to most existing analytical frameworks used in research on argumentation in education, our framework combines domain-general aspects, that is, quality criteria of argument structure (Erduran et al., 2004; Kelly et al., 2007; Means and Voss, 1996; Schwarz et al., 2003; Toulmin, 1958), and domain-specific aspects, that is, quality criteria of argument content (Kelly and Takao, 2002; Lawson, 2003; Sandoval, 2003; Sandoval and Millwood, 2005; Sandoval and Millwood, 2007; Zohar and Nemet, 2002).

In the present study, the analytical framework was transformed into a coding scheme, and the coding scheme was applied deductively to students’ answers to constructed-response items in the performance test WBK-T2 (Ackermann, 2018b, 2019). Students’ arguments were usually short (one to three phrases) and simple (one-sided argument with one to two justifications), as this is what the specific task required of them. The extent to which this framework can also be applied to longer and more complex student arguments, as expected in essays on socio-economic problems, needs to be evaluated in a subsequent study. For instance, longer and more complex arguments may allow for more differentiated coding of the categories reference, perspective and value. Consequently, the analytical framework may be refined when faced with a richer data corpus.

Nevertheless, our analytical framework seems to be a fruitful tool for educational research and practice. On the one hand, it serves as an instrument to assess students’ specific learning outcomes in the classroom; and on the other, as a guideline to teach and practice the scaffolding of learning processes in teacher training.

On argumentation skills and content knowledge

Argument structure did not substantially vary between the two tasks, but argument content generally did (see RQ1). Students selected the specified positions on the problem fairly equally in both tasks. This suggests that the specified solutions were sufficiently controversial for the students. Backing was often found as a function in students’ arguments, but rebuttal and counter rarely appeared. This corresponds with findings in previous studies that students more frequently generate arguments that support their own position (backing) than arguments that qualify it (rebuttal) or invalidate the opposite position (counter) (Chinn and Brewer, 1993; Kortland, 1996; Kuhn, 1993; Schwarz et al., 2003). From a cognitive psychology viewpoint, a qualifying justification is less straightforward than a supporting one, because it demands higher cognitive processes. Only a quarter of the arguments are fully accurate, but accuracy varies between the tasks, especially at the levels not accurate and partly accurate. It seems that the task on “energy supply” was more difficult for students than the task on “retirement provision”. About half of the arguments refer to scientific explanations. This corresponds to findings in previous studies in which students more frequently drew on everyday experience in their arguments than on scientific concepts and empirical data (Acar et al., 2010; Kortland, 1996; Sadler and Zeidler, 2004; Zohar and Nemet, 2002). Several explanations may hold for these findings in our study. Firstly, students relied on the general information offered in the problem description and task specification of the performance test. Secondly, students perceived abstract scientific concepts and authentic societal problems as unrelated fields of learning and action; thus, they seldom transferred and integrated scientific explanations into their arguments (Haskell, 2001). Thirdly, the testing in the study was detached from the instruction in the classroom; thus, students hardly recalled any scientific concepts from the classroom.

Three distinctive argument profiles were identified and characterised (see RQ2). Profile 1 has an ambiguous argument structure and low to moderate argument content, profile 2 shows both a moderate argument structure and moderate argument content, and profile 3 has a moderate argument structure and high argument content. In the classroom, these profiles might be helpful for individually scaffolding cognitive and metacognitive processes. However, whether these profiles are specific to the present sample (12th-grade students with the major course “economics and law”) or whether they are generalizable to other student groups remains unclear. Thus, further research in this field is necessary to confirm this finding.

A moderately positive relation between domain-specific argumentation skills and domain-specific content knowledge was found (see RQ3). Moreover, argument profiles could be used to distinguish students in their level of argumentation skills and level of content knowledge. This corresponds to findings in previous empirical studies: students’ conceptual understanding of the societal problem enables them to articulate arguments that are complex, accurate and multi-perspective (Sadler and Donnelly, 2006; Sadler and Zeidler, 2004; Zohar and Nemet, 2002).

There are some limitations in the study's design that have to be considered when interpreting the findings. First, the performance test was time-constrained (32 items to be completed in 60 minutes). Consequently, effective time on the two constructed-response items used for analysing arguments was very short. This might have forced students to give shorter answers than they would have been capable of with more time. Second, the task specification in the performance test does not explicitly demand extensive answers. Thus, students’ argumentation skills may not have been fully displayed, and our findings on argument patterns and profiles might be biased downwards. Third, all students in the sample attended the major course “economics and law” with an identical curriculum and are assumed to be homogeneous regarding curricular content knowledge on the socio-economic problems in the performance test. Thus, our findings on the relation between argumentation skills and content knowledge might be specific to this student group and might not hold for samples with other major courses and less curricular content knowledge (Christenson et al., 2014).

Outlook

Our study contributes to empirical research on teaching and learning at upper secondary school level as well as on civic argumentation in the classroom. It presents a promising analytical framework for future research in the economic domain, and it gives insights into students’ argumentative writing on socio-economic problems. In particular, our findings on the relation between argument profile and content knowledge seem to be crucial for promoting effective argumentation in the classroom (i.e. from profile 1 to profile 3). Moreover, the findings may be fruitful for teacher training and practice in order to promote the quality of civic argumentation in social science education.

In order to evaluate the applicability and transferability of the analytical framework as well as to generalize the findings presented in our study, more research is needed on civic argumentation in the economic domain. Future studies on argumentative writing on socio-economic problems should vary in sample characteristics (e.g. major course, school type, country), socio-economic problems (e.g. agricultural trade, healthcare provision), task specification (e.g. one-sided and two-sided argument) and writing format (e.g. short response or essay).

Acknowledgments

The authors thank Sanja Stankovic for assisting in the literature review and coding procedure, Christin Siegfried and Claudio Caduff for critical remarks on the coding manual and the manuscript as well as Rosa Brown for proofreading.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.