Abstract

Studies indicate that male students outperform female students in economic literacy and that a specific item format (selected-response, constructed-response) favours either males or females. This study analyses the relationship between item format and gender in economic-civic competence using the WBK-T2 test (“revidierter Test zur wirtschaftsbürgerlichen Kompetenz”). The WBK-T2 encompasses 32 items, of which 53% have a selected-response format and 47% a constructed-response format. To answer the research questions, we used a sample of 375 Swiss high school students and ran T-tests and multiple regression analyses. Male students significantly outperformed female students in the overall test score, in the selected-response test score and in the constructed-response test score, but effect sizes are rather small. Interest in socio-economic issues predicted but did not moderate the test scores; however, prior knowledge in economics did. Our results indicate that the balanced test form of the WBK-T2 regarding selected-response and constructed-response items does overcome the gender gap in overall test scores and format-related test scores for students with prior economic knowledge. However, this does not apply to students without prior knowledge in economics. Thus, there must be other test-external variables, such as prior knowledge in economics, that cause the gender gap in economic-civic competence.

Introduction

The measurement of cognitive performance is accompanied by the expectation that people with the same ability have the same chances to reach the same test result, regardless of their gender or social background. However, different studies have found evidence that other construct-irrelevant factors such as test motivation or test format (i.e. item format, answer format) may influence test results (e.g. Haladyna and Rodriguez, 2013; Lindner et al., 2015; Lesage et al., 2013). In performance tests, the answer format may be either constructed-response (CR), that is, written answers of a few words or sentences, or selected-response (SR), that is, an answer selected from two options (true–false items) or from several (multiple-choice items) options (American Educational Research Association (AERA), American Psychological Association (APA) and National Council on Measurement in Education (NCME), 2014: 77). Thus, since CR items require test takers to retrieve language skills, some researchers assume that CR items require higher motivation and interest in the topic (e.g. Guthrie and Wigfield, 2005). As a consequence, test scores of poorly motivated and disinterested students would not (just) reflect their levels of competence but rather their lack of motivation and interest, caused by the item format. Conversely, to process SR items, it is sufficient to tick an answer option, either by knowing or guessing (Lindner et al., 2015). Whereas some test takers use a guessing strategy if they do not know the right answer, others prefer to leave out questions they are not able to answer with certainty (Lesage et al., 2013). This variation in guessing tendencies can lead to systematic distortions of the test results based on factors such as personality traits (e.g. fearfulness, conscientiousness) or gender. 
Hence, it seems that test scores not only provide information about individuals’ knowledge regarding the test content but also about their motivation, interest or their reading, writing and guessing skills. Test scores may therefore be biased, because different test formats may measure construct-irrelevant factors. Since results of educational studies are used for public discussions and for monitoring education systems, it is important to avoid measuring construct-irrelevant information as far as possible.

Studies examining economic literacy with standardized cognitive tests found that participants, regardless of their age, have difficulties in answering the test items. Moreover, they mainly report gender-specific test performance, that is, male students show higher test scores than female students (e.g. Brückner et al., 2015a, 2015b; Förster and Zlatkin-Troitschanskaia, 2010; Schumann and Eberle, 2014; Soper and Walstad, 1987). To overcome these deficits in economic literacy, different research groups analysed the effect of learning opportunities in economics in school or university on the learning outcomes of males and females. In most cases, the results show that, regardless of the number of economic courses attended, the gender gap remains the same or even increases (e.g. Schmidt et al., 2016; Schumann and Eberle, 2014). Several explanations have been offered in the literature for these remaining gender differences in the domain of economics. Higher test scores by male students have been attributed to their higher interest in economics and their higher cognitive ability in mathematics (e.g. Becker et al., 1990; Beck and Wuttke, 2004).

Other explanations take the item format into account as a cause of the gender gap. In this context, some studies have found that male students outperform females in solving SR items, such as multiple-choice items (Walstad and Robson, 1997). In contrast, studies in science, technology, engineering and mathematics (STEM; e.g. mathematics) and in language (e.g. English as first language) have found that female students perform better in CR items than in SR items (Bolger and Kellaghan, 1990; Beller and Gafni, 2000; Reardon et al., 2018). In order to compensate for the disadvantages that seem to exist for both genders in particular item formats, some have proposed the systematic variation of SR and CR items (e.g. Reardon et al., 2018). However, a systematic variation in item format is missing in most economic literacy tests; moreover, they mostly consist of SR items (cf. Beck, 1993; Schumann and Eberle, 2014). Therefore, the goal of the present study is to analyse the gender gap in the test of economic-civic competence in relation to item formats, since different item formats are used in this test.

Theoretical background

The construct of economic-civic competence

Economic-civic competence refers to economic-characterized situations arising in different life spheres (structural level). Handling these economic-characterized situations in turn demands domain-specific cognitive processes (process level). This competence definition involves individual dispositions and results in individual behaviour that is observable and measurable (Ackermann, 2019: 59; Eberle, 2015; Eberle et al., 2016).

At the structural level, the model of economic-civic competence encompasses three life spheres (Ackermann, 2019: 60, 63–67). In the personal-financial life sphere, individuals are faced with economic-characterized situations such as money management, sustainable consumption, long-term saving and private pension (cf. Seeber et al., 2012: 87). In the vocational-entrepreneurial life sphere, individuals have either a role as employee or employer/entrepreneur (cf. Seeber et al., 2012: 87). General vocational situations involve decisions for a profession, for a job change or further education. General entrepreneurial situations arise from administrative, operational and strategic corporate governance. In the societal/economic life sphere, individuals are confronted with current and complex socio-economic issues that are linked with ambiguous and controversial solutions (Seeber et al., 2012: 87–88). These socio-economic issues stem from different policy fields, for example, retirement provisions, health care, energy supply and agricultural trade (Ackermann, 2019: 73–76; Eberle et al., 2016). Depending on the type and degree of democracy, individuals are invited to express their opinion via public debates and/or referenda.

At the process level, the model encompasses information processing and problem solving (Ackermann, 2019: 60–61, 76–83). Information processing is modelled by three levels (cf. Marzano and Kendall, 2007): (k1) recognize, (k2) understand/apply and (k3) compare/evaluate/decide. Problem solving is modelled by four phases (cf. Edelmann and Wittmann, 2012: 190–191; Kuhn, 1999; Massing, 1995): (p1) identify and formulate a problem; (p2) analyse the problem from multiple perspectives; (p3) search, compare and evaluate alternative problem solutions; and (p4) implement, evaluate and modify a solution. However, information processing and problem solving are not isolated processes, but are interwoven to some extent: every phase of problem solving requires levels of information processing. Depending on the life sphere and the specific situation, the extent and intensity of these cognitive processes may vary. To exemplify these processes, we look at the issue of ‘energy supply’ from the societal/economic life sphere. In phase 1, political actors and/or the media usually bring the issue to the public’s attention, for example, the nuclear phase-out. In phase 2, individuals gather, select and evaluate information on the issue; identify involved actors and their interests; and distinguish between facts and opinions. Such information may concern the risks involved with a nuclear phase-out, the alternative energies that are available to compensate for nuclear energy, the realistic period of time for a nuclear phase-out and so on. In phase 3, political actors and/or the media propose solution approaches; individuals compare the proposed solution approaches by weighing advantages and disadvantages, opportunities and threats; then, individuals decide upon one solution by referenda, for example, a nuclear phase-out within the next 30 years. In phase 4, finally, public authorities implement the solution and evaluate it after some time.

Thus, to be economic-civic competent means to soundly understand economic concepts, to systematically analyse complex problems, to compare and evaluate controversial solutions based on criteria, as well as to formulate one’s own opinion and make reasoned decisions about solutions.

To measure the socio-economic facet of economic-civic competence, the test on economic-civic competence (WBK-T2) was developed and revised (Ackermann, 2018, 2019). The specifications of the WBK-T2 are described below.

The role of item formats in test development and validation

For test development, test specifications regarding purpose, content, format, test length and scoring are equally necessary. The content specifications refer to the construct’s content domain and describe its scope and aspects, such as content areas and cognitive processes (AERA, APA and NCME, 2014: 14, 76). The format specifications describe the item format (i.e. SR or CR) and scoring procedures (AERA, APA and NCME, 2014: 14, 76–78). Selected-response formats (SR) may be formulated as true–false items or multiple-choice items. Constructed-response formats (CR) are either short-response items that require a response of one or a few words or extended-response items that require a response of one or more sentences (AERA, APA and NCME, 2014: 77). SR items may cover a greater breadth of the domain, whereas CR items may reach a greater depth of processes (Rodriguez, 2003: 164). SR items are more efficient in test administration and test evaluation, their analyses are highly objective, and they are thus predominant in large-scale assessment. In contrast, CR items are often assumed to enable the assessment of higher cognitive processes (reproduction performance instead of recognition performance) and have a very low guessing probability (Jonkisz et al., 2012: 39). However, a rival claim states that the measurement of these cognitive processes is a consequence of careful item writing rather than a characteristic of the item format (Rodriguez, 2003: 164). Less discussed is the fact that CR items have the potential to demand creative performance, which is often required in the social sciences and humanities (Lindner et al., 2015).

The specified response formats and mix of response formats depend on the purposes of the test, its content and the testing platform. Considerations of expediency (e.g. ease of responding, cost of scoring) and validity must be taken into account when selecting item formats (AERA, APA and NCME, 2014: 77). When the test contains a mix of item formats, the test developer has to demonstrate the usefulness of the items in terms of information obtained and of inference supported (Rodriguez, 2003: 163).

For the evaluation of construct equivalence of response formats in a psychological test, two approaches are offered in the literature (Rodriguez, 2003: 164): First, stem-equivalent SR and CR items are used. This approach allows for the control of content differences and the isolation of format effects but requires a test design with two equivalent groups of test takers or multiple testing of each test taker. Second, stem-independent items in the two formats are used, addressing similar or different aspects of the construct’s content areas or cognitive processes. This approach can be carried out in a simple test design but the test developer has to be aware that content and format effects are confounded in it.

Gender gap in test achievement and item format

Different studies have examined whether and to what extent the item format (CR or SR) has an unintended gender bias. Research in different domains (e.g. mathematics, first language, biology) has provided support for differences in gender achievement that favoured females for CR items and males for SR items (mathematics: Garner and Engelhard, 1999; Taylor and Lee, 2012; science: DeMars, 1998; biology: Federer et al., 2016; meta-analyses: Bennett, 1993). The gender differences found are larger for CR items than for SR items (Arthur and Everaert, 2012; Lafontaine and Monseur, 2009; Reardon et al., 2018). Lafontaine and Monseur (2009) found that reading skills have a larger impact on achievement differences between males and females (24% of variance explained) than the item format (16% of variance explained).

A variety of reasons have been proposed to help explain gender differences depending on the item format (Clinton et al., 2014; Federer et al., 2016; Simkin and Kuechler, 2005). One suggestion is the way in which CR items require answers in contrast to SR items. The productive language skills required especially for CR items may differentially influence the performance of females and males on tests, since females tend to have better reading and writing skills and therefore might have an advantage on CR items (Clinton et al., 2014; Federer et al., 2016). This suggested factor of female advantage in productive language skills will have a higher influence on the solution rate of CR items than on SR items (Simkin and Kuechler, 2005). However, female students’ advantage on CR items is mainly found for older test takers (i.e. after grade 4) (e.g. Taylor and Lee, 2012).

Other explanations regarding gender differences due to the item format refer to guessing tendencies for SR items that might differ between genders (Riener and Wagner, 2018). Males seem to be higher risk-takers and more likely to compete recklessly due to their overconfidence than females (John, 2017; Niederle and Vesterlund, 2007; Riener and Wagner, 2018). Especially when the test taker is unsure of the correct answer to an SR item, it could be an advantage to take the risk of guessing instead of leaving out the question. Even if wrong answers are penalized (i.e. counted as minus points), the risk-seeking test taker may exclude some implausible distractors, which increases the likelihood of selecting the right answer.
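The argument about penalized guessing can be made concrete with a small expected-value calculation. The sketch below assumes a hypothetical multiple-choice item that awards one point for the correct option, deducts `penalty` points for a wrong option and gives zero points for an omitted item. The function name and the scoring scheme are illustrative assumptions and do not come from the studies cited above.

```python
from fractions import Fraction


def expected_guess_score(n_options, n_eliminated, penalty):
    """Expected points from guessing at random among the remaining options.

    Assumed scoring (hypothetical): +1 for the correct option,
    -penalty for any wrong option, 0 for leaving the item blank.
    """
    remaining = n_options - n_eliminated
    if remaining < 1:
        raise ValueError("at least the correct option must remain")
    p_correct = Fraction(1, remaining)
    return p_correct * 1 - (1 - p_correct) * penalty


# Four options with the classical correction-for-guessing penalty 1/(k-1).
penalty = Fraction(1, 3)
blind = expected_guess_score(4, 0, penalty)      # no distractor excluded
informed = expected_guess_score(4, 2, penalty)   # two distractors excluded
print(blind, informed)
```

Under these assumptions, a blind guess has an expected value of zero, the same as omitting the item, whereas excluding two distractors raises the expected value to one third of a point. A test taker who is willing to guess after excluding distractors therefore gains on average, even with a penalty.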

Another characteristic of the item format is that CR items require greater effort than SR items because they require productive language skills. If problem-based item stems are used, CR items require more profound reading skills, and it takes more time to formulate an answer (Rodriguez, 2003). Thus, test takers who show high levels of intrinsic motivation in reading or writing, as well as higher interest in the content itself, may perceive CR items as less demanding than test takers with lower intrinsic motivation and interest in the content. Moreover, since SR items mainly require ticking an answer option, there might be an interaction effect between intrinsic reading motivation or interest in content and item format (Guthrie and Wigfield, 2005). In this context, Schwabe et al. (2015) outlined that gender as well as intrinsic reading motivation significantly influence the reading achievement of test takers. Moreover, they found a positive and significant interaction effect between motivation and item format as well as between item format and gender. In addition, the latter interaction effect did not decrease after controlling for intrinsic reading motivation (Schwabe et al., 2015).

To examine these differences, Reardon et al. (2018) conducted a study calculating differences depending on the proportion of CR and SR items in mathematics and English as first language. They found that the gender gap differs on average by .11 SD of test scores without any CR items in comparison to test scores with 50% CR items. In summary, these results suggest that the inclusion of a variety of item formats, including CR items, may make assessments more gender equitable.

Gender gap in test achievement and learning opportunities

In order to clarify the connection between learning opportunities and gender differences in performance tests, Helmke’s (2014) offer-usage model is helpful. This model describes teaching as an offer to learn, which does not per se lead to knowledge expansion or competence development. Instead, the learner has to use the learning offer by initiating a learning activity. On the one hand, the individual usage of the learning opportunities is influenced by various personal learning characteristics, such as motivation to learn or interest in the learning object. On the other hand, the usage also depends on the quantity and quality of the learning opportunities.

Due to the findings regarding positive effects of formal learning opportunities in economics on economic literacy (e.g. Beck and Wuttke, 2004; Brückner et al., 2015a; Förster et al., 2015; Gill and Gratton-Lavoie, 2011; Holtsch and Eberle, 2018; Kuhn et al., 2014; Schmidt et al., 2016; Schumann and Eberle, 2014; Siegfried and Wuttke, 2016), researchers have sought to establish a link between gender differences and learning opportunities (Brückner et al., 2015b; Förster and Zlatkin-Troitschanskaia, 2010; Schumann and Eberle, 2014; Soper and Walstad, 1987). However, participation in learning opportunities in economics does not always seem to compensate for gender differences, and gender differences even appear to increase after attendance at economic courses (Siegfried, 1979; Schmidt et al., 2016). Yet, other studies show that attending economic courses moderates the gender effect on economic literacy, that is, participation in economic courses reduces the gender gap in economic literacy (Siegfried, 2019; Siegfried and Ackermann, under review).

In summary, it can be assumed that test takers who have received more learning opportunities may also have smaller differences between genders with regard to the item format used.

Research questions

The construct of economic competence in general, and economic-civic competence in particular, does not presume a gender difference in overall test scores per se. Instead, there are differences presumed regarding school form and educational track (e.g. general education at high schools and at vocational schools), prior knowledge (e.g. major course in economics) and socio-cultural background (e.g. German as family language) (Ackermann, 2019: 148–151). However, various studies investigating economic competence report gender effects in their samples (e.g. Beck, 1993; Schumann and Eberle, 2014; Walstad et al., 2013), although some of these studies only found these trends in subsamples (Schumann et al., 2017).

In order to analyse the relationship of test scores, item format and gender regarding economic-civic competence, this article addresses the following research questions:

Research Question 1 (RQ1): To what extent do male students outperform female students in economic-civic competence?

Research Question 2 (RQ2): Is the gender gap in economic-civic competence systematically related to the item format of the test items?

Research Question 3 (RQ3): Is the relationship between item format and gender in economic-civic competence moderated by interest in socio-economic issues and major course in economics?

Method

Sample

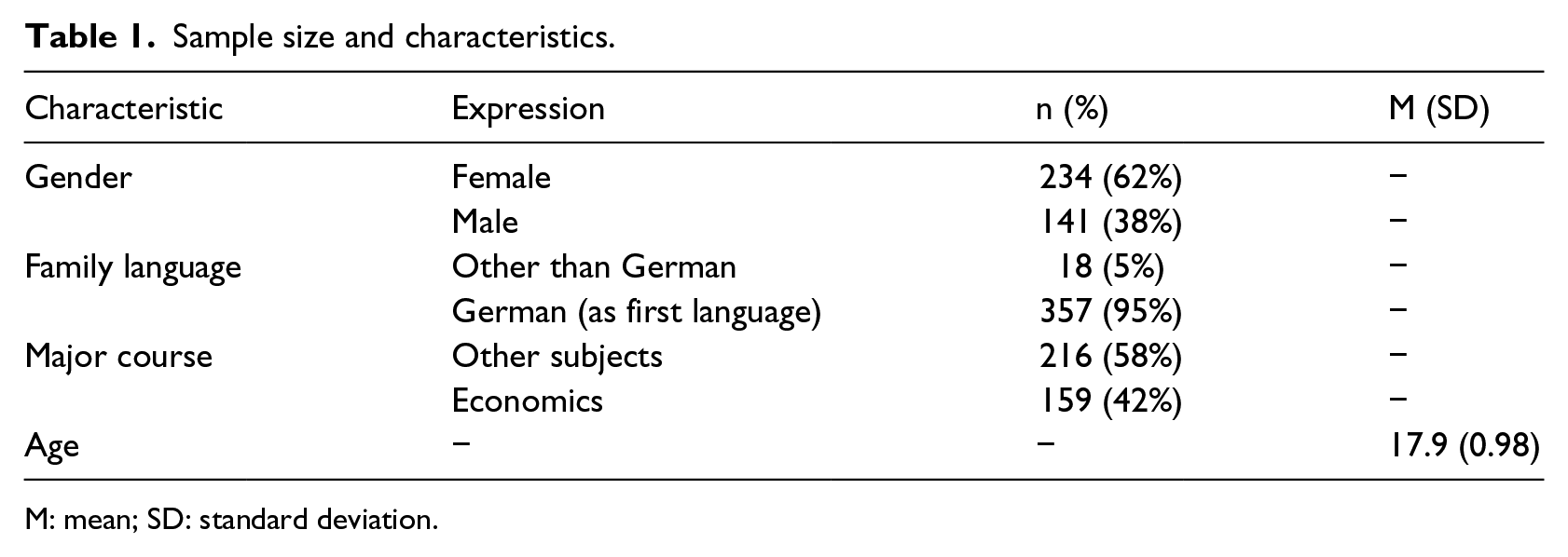

The data were taken from the research project WBKgym (Ackermann, 2019: 192–196). The sample consists of 375 students in the 11th and 12th grade at high schools (‘Gymnasium’) in a German-speaking canton of Switzerland; 62% of the students were female and 38% male (see Table 1). The students were aged between 17 and 19 (M = 17.9, SD = 0.98), 95% spoke German as first language with their family, and only 5% another language; 42% of the students were enrolled in the major course in economics, and 58% in a major course in other subjects, for example, biology and chemistry, physics and mathematics, foreign languages, visual arts or music.

Sample size and characteristics.

M: mean; SD: standard deviation.

Instrument

Even though a couple of test instruments for economic competence have been developed and applied in recent years (e.g. the WBT by Beck, 1989; the OEKOMA test by Schumann and Eberle, 2014), none seem to be appropriate for measuring the construct of economic-civic competence and the format–gender relation. The WBT follows a categorical approach in the content domain (e.g. basic concepts, microeconomics, macroeconomics, foreign economics) instead of a situational/problem-oriented approach (i.e. real situations within the above mentioned content domains) and it only specifies SR items. Although the OEKOMA test integrates a problem-oriented approach in the content domain, it offers only a small variability in item format, that is, about 90% of the items are SR and 10% CR.

Thus, we used the rather new test on economic-civic competence (WBK-Test) to analyse the format–gender relation. The first version of this test (WBK-T1) was developed by Eberle et al. (2016) to measure economic-civic competence. Later, Ackermann (2018, 2019) revised it and developed a second version (WBK-T2). The WBK-T2 is a written performance test that aims to measure the societal/economic dimension of economic-civic competence. The content and format specifications of the WBK-T2 are described below.

Specifications of WBK-T2

The WBK-T2 addresses students at the end of secondary school, that is, in the 12th or 13th school year of their general education (‘Gymnasium’) or vocational education, and aged 17 to 19. The explicit target group is enrolled in a major course in economics, which implies prior knowledge of economic and political concepts and related problem-solving methods. The contrast group is enrolled in another major course, such as science or humanities.

The WBK-T2 includes four socio-economic issues: retirement provision, energy supply, public debt and management salaries. The issues were identified and selected based on criteria of political relevance (i.e. topicality and authenticity regarding real-world problems), scientific character (i.e. technical concepts of economics and politics), complexity and controversy (i.e. open-ended problem, multiple perspectives, multiple solutions), and adaptability (i.e. applicable to other German-speaking countries for an international comparison) (for details, see Ackermann, 2019: 154–163).

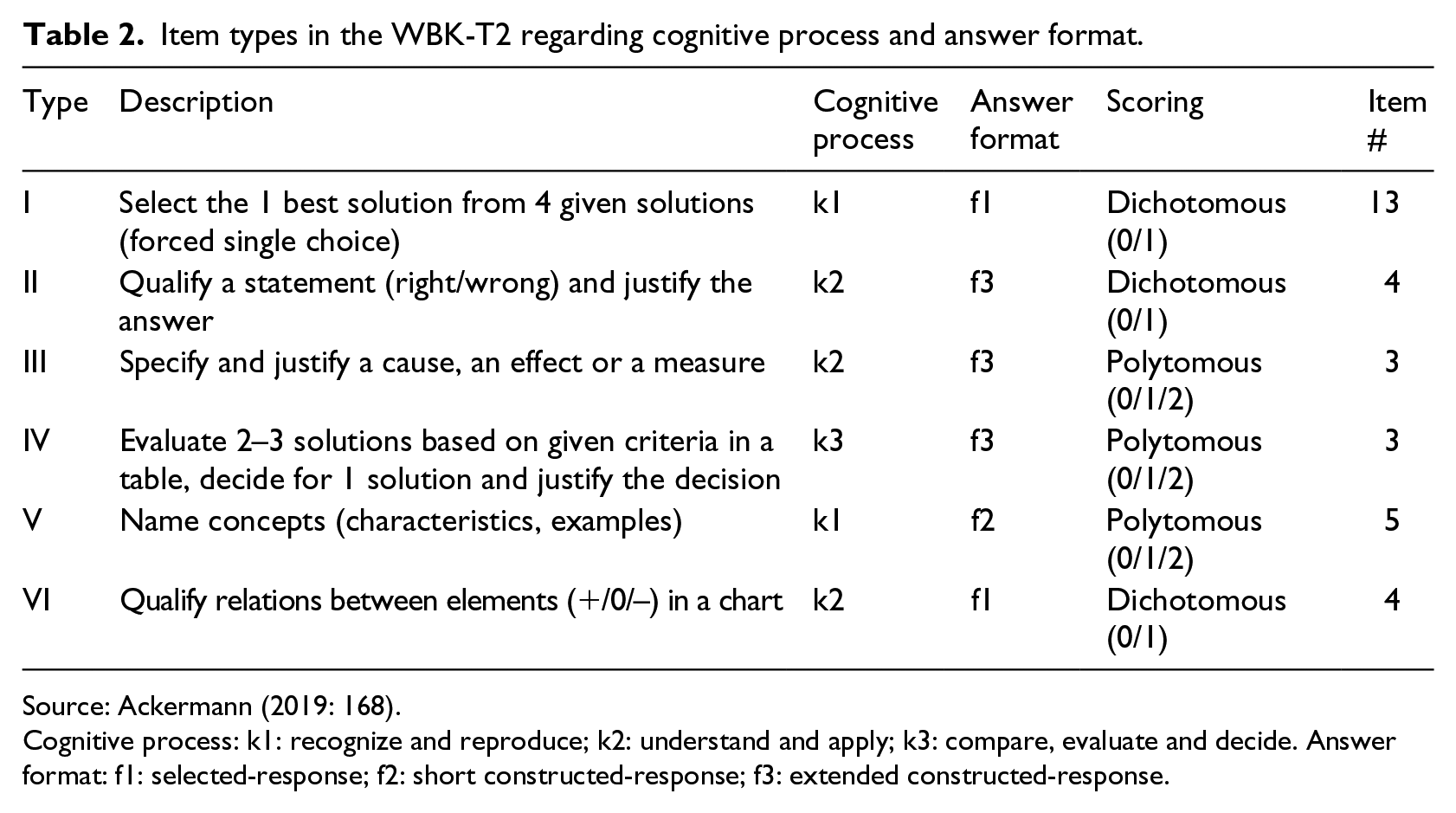

Each issue begins with a short introduction followed by about 8 items, in total 32 items. The items systematically vary in cognitive process and answer format (see Table 2) (for details, see Ackermann, 2019: 166–174). The cognitive process is operationalized on three levels: (k1) recognize and reproduce, (k2) understand and apply and (k3) compare, evaluate and decide. The answer format is defined as either (f1) SR (i.e. single answer choice) or (f2/f3) CR; 53% (n = 17) of the test items have an SR format, and 47% (n = 15) a CR format. As each item covers another content element of the issue, the item formats in the WBK-T2 are stem-independent.

Item types in the WBK-T2 regarding cognitive process and answer format.

Source: Ackermann (2019: 168).

Cognitive process: k1: recognize and reproduce; k2: understand and apply; k3: compare, evaluate and decide. Answer format: f1: selected-response; f2: short constructed-response; f3: extended constructed-response.

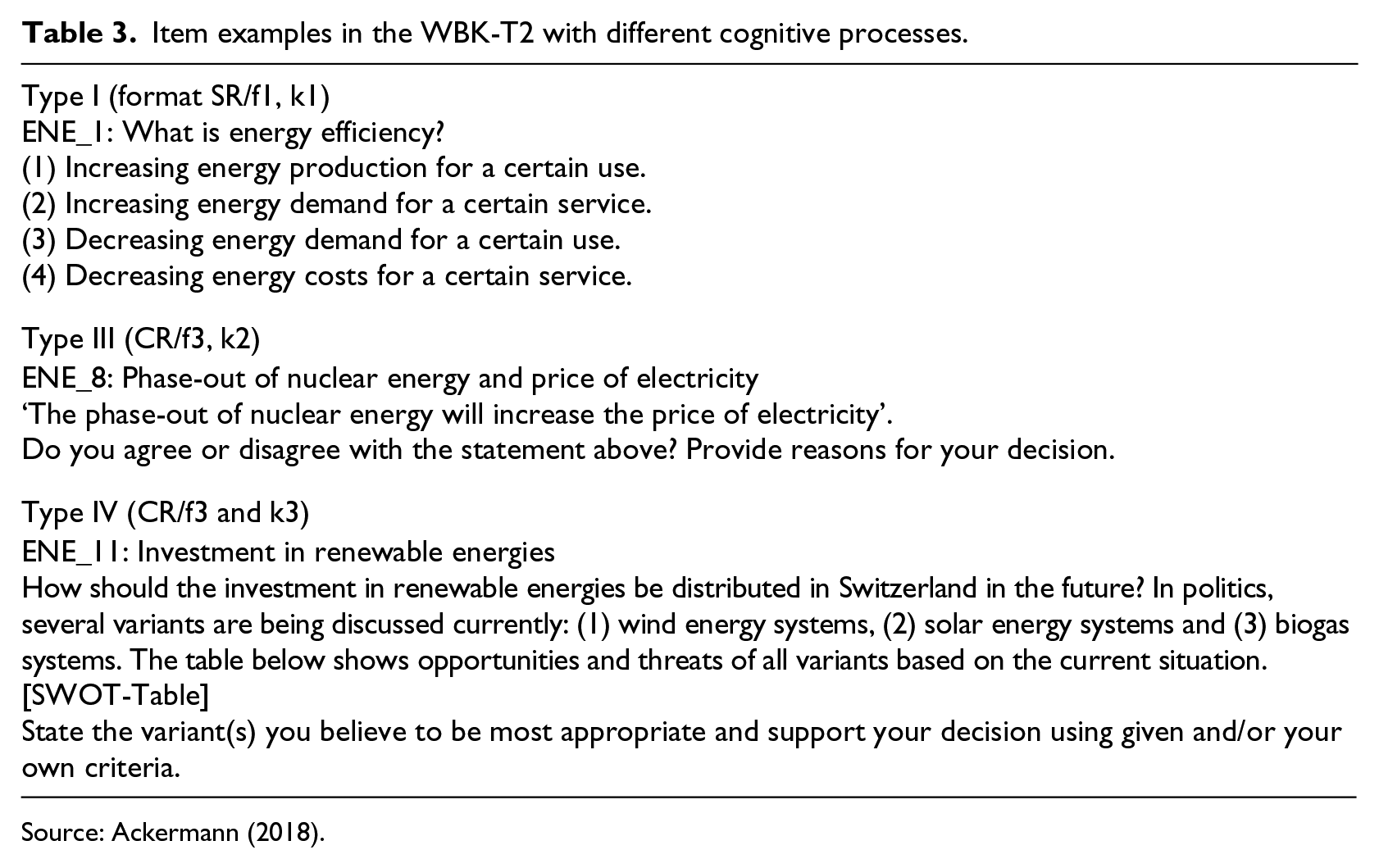

Examples for items in the WBK-T2 with different intended cognitive levels are shown in Table 3.

Item examples in the WBK-T2 with different cognitive processes.

Source: Ackermann (2018).

Validation of WBK-T2

The WBK-T2 and the interpretation of the individual test scores regarding the construct of economic-civic competence were evaluated using an evidence-based validation procedure, including validation aspects of test content, internal structure and relations to external criteria (cf. AERA, APA and NCME, 2014; for details see Ackermann, 2019).

For content validation, content-pedagogical knowledge experts evaluated the WBK-T2 items in semi-structured interviews regarding construct representativeness, and linguistic and technical appropriateness. The experts gave valuable contributions to omit or modify existing items from the test and to construct new items. For internal structure validation, a partial credit Rasch model was estimated with ACER ConQuest Version 4 (Adams et al., 2015; Rost, 2004). The analyses suggest the WBK-T2 has a one-dimensional structure regarding both cognitive process and item format. The reliability of the whole WBK-T2 (EAP/PV = .76, WLE = .74, α = .74) and the quality of the single items (item-total correlation ⩾.20 in 86% of all items) are good. The person-item map shows a satisfying distribution of the item difficulties along the person abilities (person parameters, Mθ = 0.525, VARθ = 0.394; restricted item parameters, Mσ = 0.000, VARσ = 1.460). Furthermore, the analyses confirm the homogeneity of the items in the WBK-T2 (0.92 ⩽ wMNSQ ⩽ 1.17, tolerable DIF effects regarding gender and major course). For external criteria validation, mean and correlation analyses were computed. They showed that students in economics courses (p < .001, Cohen’s d = 0.80) and with German as first language (p = .003, d = 0.74) had higher scores.

To conclude, the WBK-T2 seems to be a reliable operationalization of the construct, that is, the societal/economic domain of economic-civic competence, and test scores may be interpreted for the proposed uses of the WBK-T2.

Results

Gender gap in overall test scores (RQ1)

RQ1: To what extent do male students outperform female students in economic-civic competence?

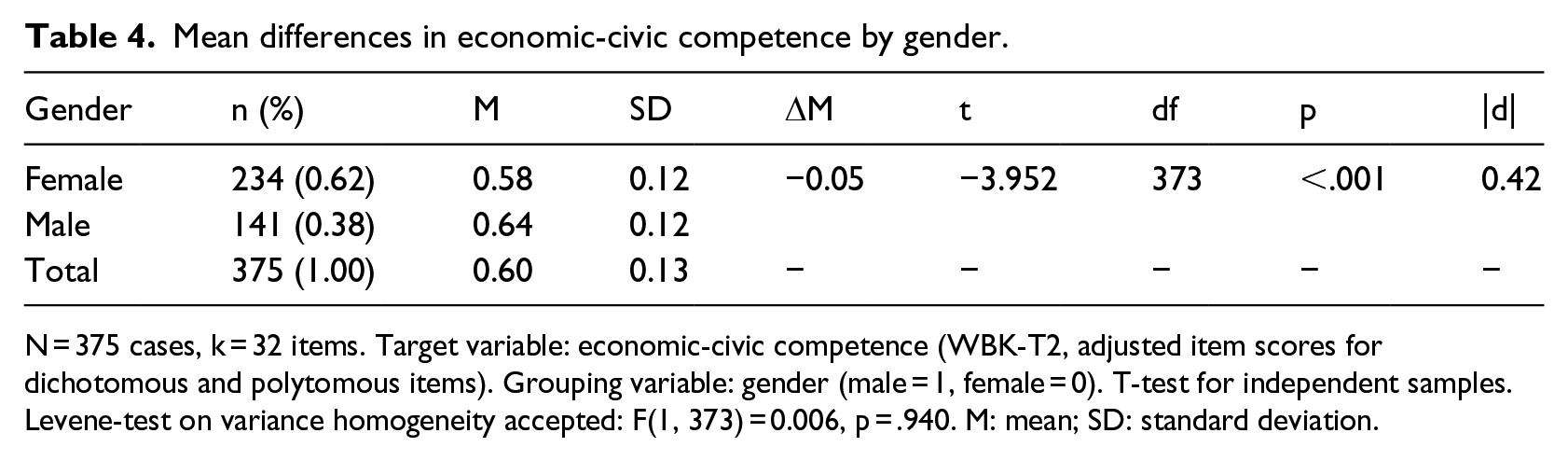

To analyse the gender gap in economic-civic competence measured by the overall test scores in WBK-T2, we computed T-tests for independent samples with SPSS (e.g. Fromm et al., 2010; IBM, 2017). As WBK-T2 items have different scoring (see Table 2), we used classical item difficulties (adjusted item scores instead of sum scores).
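One plausible reading of ‘adjusted item scores’ is that each item score is divided by the item's maximum score, so that dichotomous and polytomous items contribute on the same 0–1 scale. The sketch below illustrates that reading only; the function name, the example scores and the maximum scores are hypothetical, and the original analysis was run in SPSS.

```python
def adjusted_test_score(raw_scores, max_scores):
    """Mean of item scores rescaled to the range 0-1.

    Assumption: 'adjusted' means raw score divided by the item's maximum.
    """
    if len(raw_scores) != len(max_scores):
        raise ValueError("one maximum score per item is required")
    adjusted = [raw / top for raw, top in zip(raw_scores, max_scores)]
    return sum(adjusted) / len(adjusted)


# Hypothetical student: two dichotomous items and two polytomous items.
raw = [1, 0, 2, 1]
maximum = [1, 1, 2, 3]
score = adjusted_test_score(raw, maximum)
print(round(score, 3))
```

With a plain sum score, the two polytomous items in this example would carry more weight than the dichotomous ones; after rescaling, every item contributes at most one point to the mean.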

Regarding the overall test scores in the WBK-T2, male students significantly outperformed female students (t(373) = –3.952, p < .001), but the size of the gender effect is still small (Cohen’s |d| = 0.42) (Cohen, 1988: 24–27) (see Table 4) (see also Ackermann, 2019: 271).

Mean differences in economic-civic competence by gender.

N = 375 cases, k = 32 items. Target variable: economic-civic competence (WBK-T2, adjusted item scores for dichotomous and polytomous items). Grouping variable: gender (male = 1, female = 0). T-test for independent samples. Levene-test on variance homogeneity accepted: F(1, 373) = 0.006, p = .940. M: mean; SD: standard deviation.
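The test statistic and effect size reported here can be illustrated with a short calculation. The following is a minimal sketch of a pooled-variance t-test for independent samples together with Cohen's d, written with the Python standard library rather than SPSS; the function name and the example scores are made up and are not data from the study.

```python
import math
from statistics import mean, variance


def pooled_t_and_d(group_a, group_b):
    """Student's t-test for independent samples plus Cohen's d.

    Uses the pooled standard deviation, which presumes homogeneous
    variances (the condition Levene's test checks).
    Returns (t, degrees of freedom, d), each computed as a minus b.
    """
    n_a, n_b = len(group_a), len(group_b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / df
    diff = mean(group_a) - mean(group_b)
    t = diff / math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    d = diff / math.sqrt(pooled_var)
    return t, df, d


# Made-up adjusted scores, not data from the study.
female = [0.40, 0.50, 0.60, 0.50]
male = [0.60, 0.70, 0.80, 0.70]
t, df, d = pooled_t_and_d(female, male)
print(round(t, 3), df, round(d, 3))
```

Because the first group (coded 0, female) is subtracted from by the second (coded 1, male), t and d are negative whenever male students score higher, which is why the text reports the absolute value |d|.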

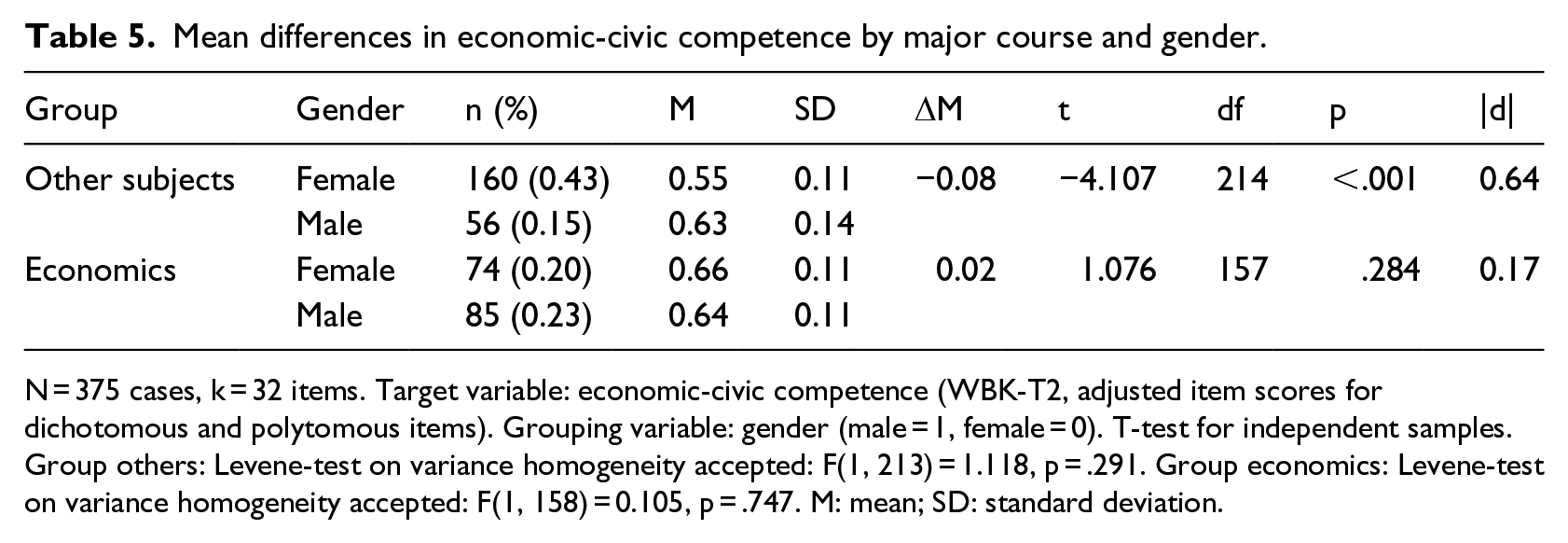

This result regarding the gender gap in economic-civic competence corresponds with findings from previous studies regarding the gender gap in economic competence (cf. Brückner et al., 2015a, 2015b; Förster and Zlatkin-Troitschanskaia, 2010; Schumann and Eberle, 2014; Soper and Walstad, 1987). Yet, taking the heterogeneous sample regarding prior curricular knowledge in economics into account (see Table 1), a more differentiated picture emerges. The gender gap is smaller for students in the economics course, but is greater for students in other courses (see Table 5) (see also Ackermann, 2019: 271–273). Within the group ‘other subjects’, male students significantly outperform female students (t(214) = –4.107, p < .001), and the gender effect size is moderate (Cohen’s |d| = 0.64). Conversely, within the group ‘economics’, female students outperform male students, although the mean differences are not significant (t(157) = 1.076, p = .284) and the effect size is very small (Cohen’s |d| = 0.17).

Mean differences in economic-civic competence by major course and gender.

N = 375 cases, k = 32 items. Target variable: economic-civic competence (WBK-T2, adjusted item scores for dichotomous and polytomous items). Grouping variable: gender (male = 1, female = 0). T-test for independent samples. Group others: Levene-test on variance homogeneity accepted: F(1, 213) = 1.118, p = .291. Group economics: Levene-test on variance homogeneity accepted: F(1, 158) = 0.105, p = .747. M: mean; SD: standard deviation.

To conclude, these results on economic-civic competence measured by the WBK-T2 may help to refine previous studies’ findings regarding the gender gap in economic competence. They suggest that prior knowledge in economics acquired in formal learning settings such as a major course in economics influences the extent of the gender difference.

Gender gap in format-related test scores (RQ2)

RQ2: Is the gender gap in economic-civic competence systematically related to the item format of the test items?

To further analyse the relevance of the item format regarding the gender gap in economic-civic competence measured by the test scores in WBK-T2, we computed T-tests for independent samples for each item format with SPSS (e.g. Fromm et al., 2010; IBM, 2017). To conduct the analysis, we divided the 32 items into two categories of item format, SR (f1) and CR (f2/f3) (see Table 2), and calculated classical item difficulties (adjusted item scores) for each format. According to the specifications of the WBK-T2, we had to follow a stem-independent approach to examine the effect of the item format.

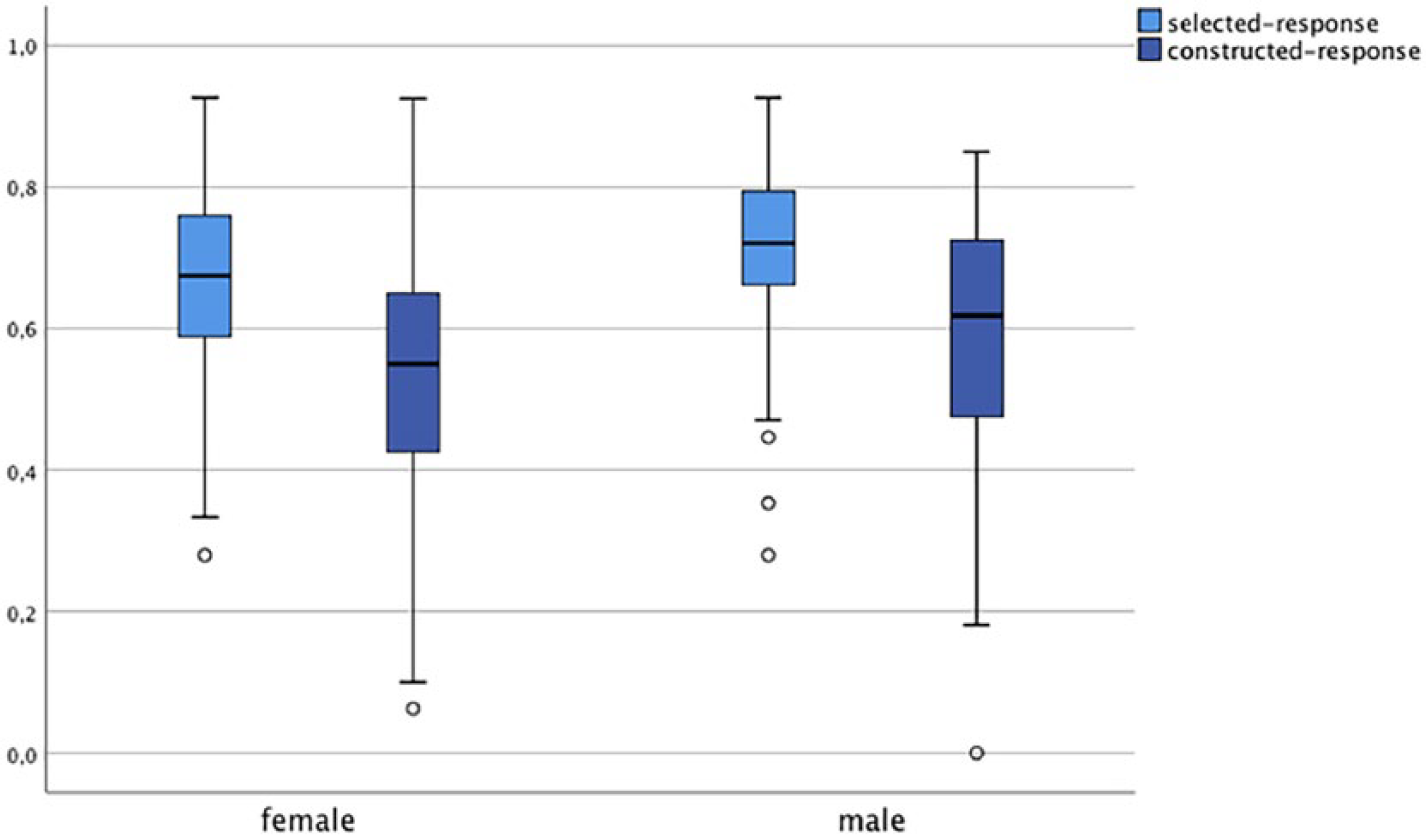

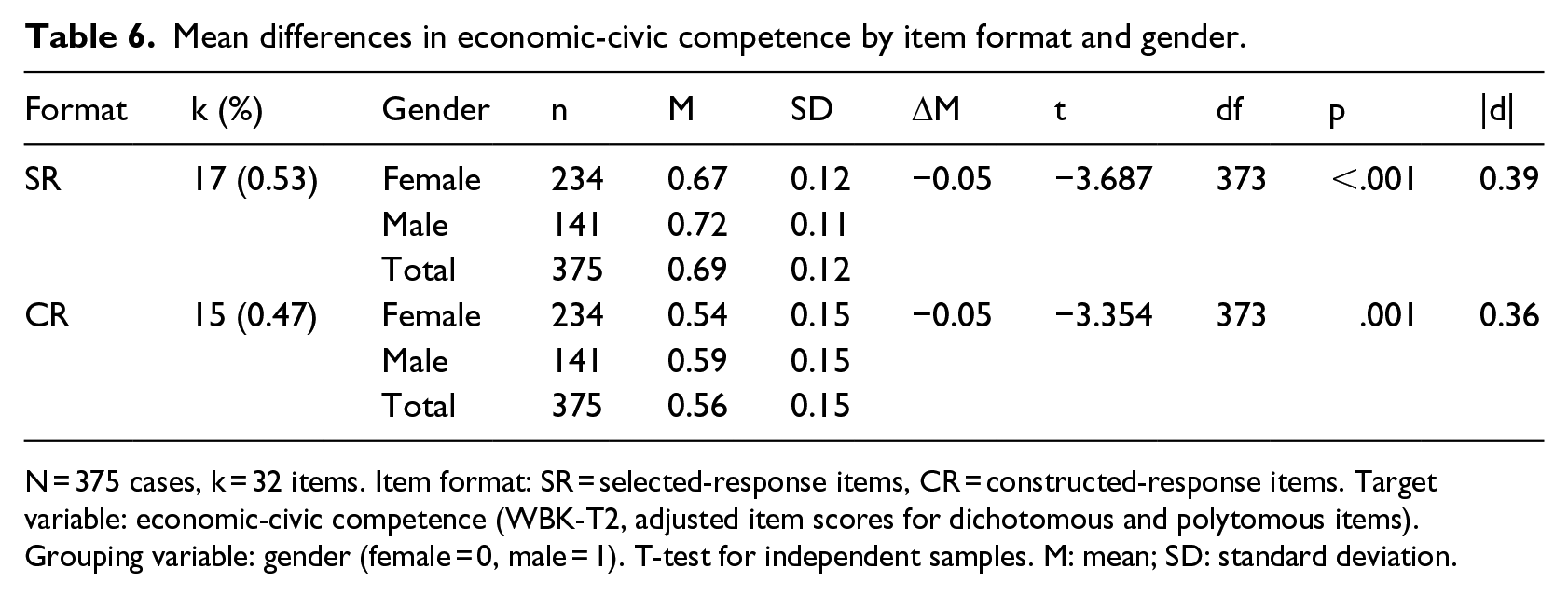

For both female and male students, the average test score for SR items was higher than for CR items (see Figure 1). Compared to females, males scored significantly higher in SR items (t(373) = –3.687, p < .001) and in CR items (t(373) = –3.354, p = .001). However, gender effect sizes are small (Cohen’s |d| = 0.39 and 0.36) (see Table 6). Thus, the item format in WBK-T2 does not favour a gender per se, since male students outperformed females in both SR and CR items.

WBK-T2 scores by item format and gender.

Mean differences in economic-civic competence by item format and gender.

N = 375 cases, k = 32 items. Item format: SR = selected-response items, CR = constructed-response items. Target variable: economic-civic competence (WBK-T2, adjusted item scores for dichotomous and polytomous items). Grouping variable: gender (female = 0, male = 1). T-test for independent samples. M: mean; SD: standard deviation.

These results from our study only partly confirm findings from previous studies, including studies in other domains such as mathematics and first language (English). Those studies found that SR items favoured male students (Walstad and Robson, 1997) and CR items female students (Beller and Gafni, 2000; Bolger and Kellaghan, 1990; Reardon et al., 2018).

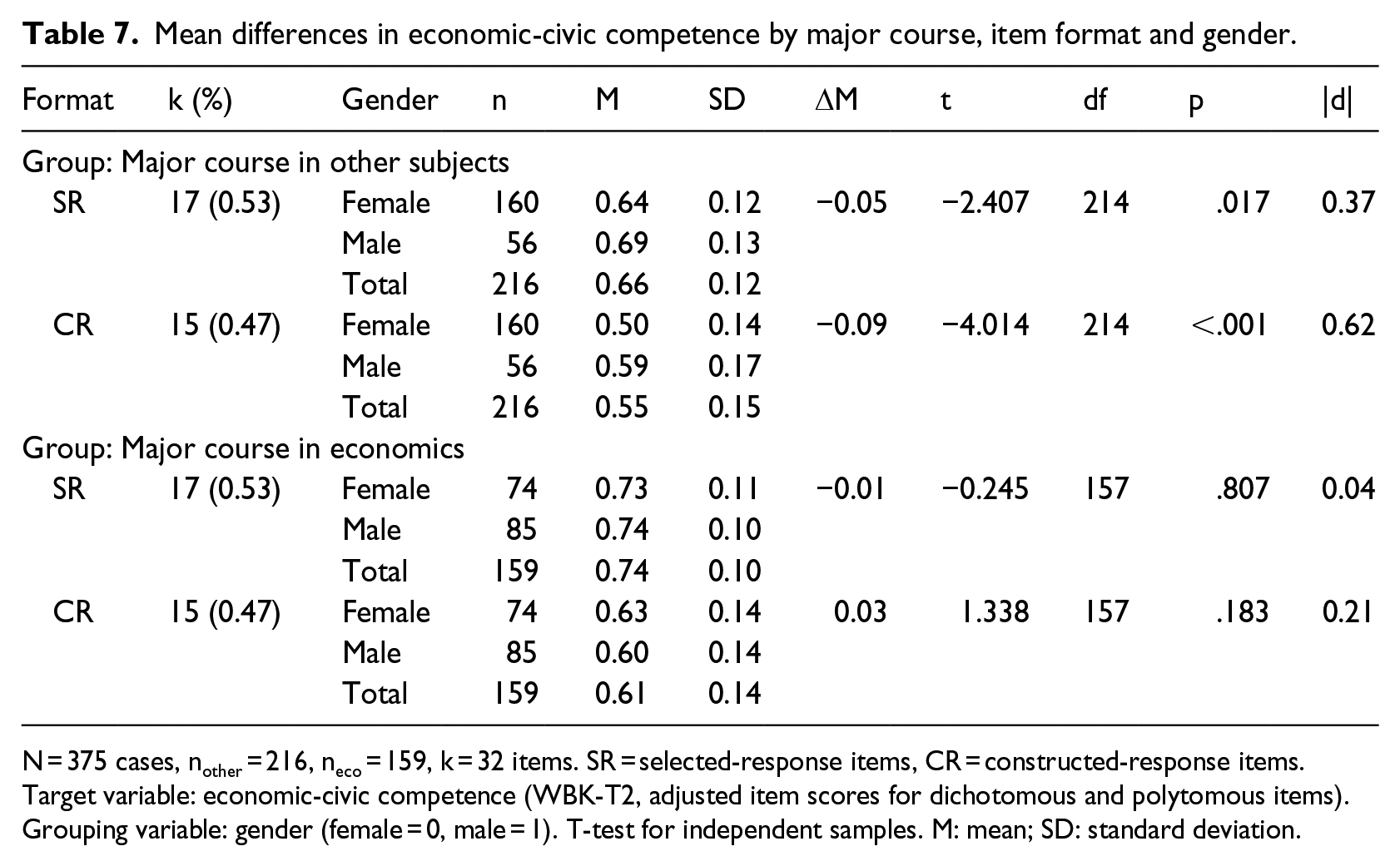

Due to the differentiated results according to prior knowledge in economics (see previous section), the format-related gender gap was analysed separately for students in the group ‘economics’ and in the group ‘other subjects’ (see Table 7). Within the group ‘other subjects’, male students had significantly higher format-related test scores than female students for SR items (t(214) = –2.407, p = .017, Cohen’s |d| = 0.37) and for CR items (t(214) = –4.014, p < .001, Cohen’s |d| = 0.62). Within the group ‘economics’, males had a higher SR test score (t(157) = –0.245, p = .807, Cohen’s |d| = 0.04) and females a higher CR test score (t(157) = 1.338, p = .183, Cohen’s |d| = 0.21), but these differences are not significant and the effects are small.

Table 7. Mean differences in economic-civic competence by major course, item format and gender.

N = 375 cases, n_other = 216, n_eco = 159, k = 32 items. SR = selected-response items, CR = constructed-response items. Target variable: economic-civic competence (WBK-T2, adjusted item scores for dichotomous and polytomous items). Grouping variable: gender (female = 0, male = 1). T-test for independent samples. M: mean; SD: standard deviation.

Gender gap in test scores, item format, interest and prior knowledge (RQ3)

RQ3: Is the relationship between item format and gender in economic-civic competence moderated by interest in socio-economic issues and prior knowledge in economics?

To examine the relationship between test scores (i.e. mean score of all adjusted item scores), item format (i.e. mean score of adjusted item scores for each format), gender, domain-specific interest, prior knowledge (major course in economics) and grades in the subject ‘economics and law’, we conducted correlation and regression analyses with SPSS (e.g. Backhaus et al., 2016; IBM, 2017). Prior knowledge was measured by a dummy variable indicating whether or not the students attended a major course in economics (no = 0, yes = 1). Domain-specific interest, that is, interest in socio-economic issues, was measured by a continuous variable, computed as the mean score of three rating scale items (range = 1–5, M = 3.88, SD = 0.77, Cronbach’s α = .71; for example: ‘I like to discuss with others current economic and societal topics’.).
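The construction of the interest scale can be sketched as follows: a scale score as the mean of several rating items, and Cronbach's α as an estimate of internal consistency. The function names and the ratings are hypothetical and are not taken from the study.

```python
from statistics import mean, variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items; each inner list holds one item's ratings
    for the same respondents in the same order."""
    k = len(items)
    totals = [sum(ratings) for ratings in zip(*items)]
    item_variance_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def scale_scores(items):
    """Per-respondent mean across the items (here: the interest score)."""
    return [mean(ratings) for ratings in zip(*items)]


# Hypothetical 1-5 ratings of five respondents on three items, NOT study data.
item_1 = [4, 5, 3, 4, 2]
item_2 = [4, 4, 3, 5, 2]
item_3 = [5, 4, 2, 4, 3]

alpha = cronbach_alpha([item_1, item_2, item_3])
interest = scale_scores([item_1, item_2, item_3])
print(f"alpha = {alpha:.2f}, first scale score = {interest[0]:.2f}")
```

Alpha approaches 1 when the items rank respondents in the same way and drops when the items vary independently of each other.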

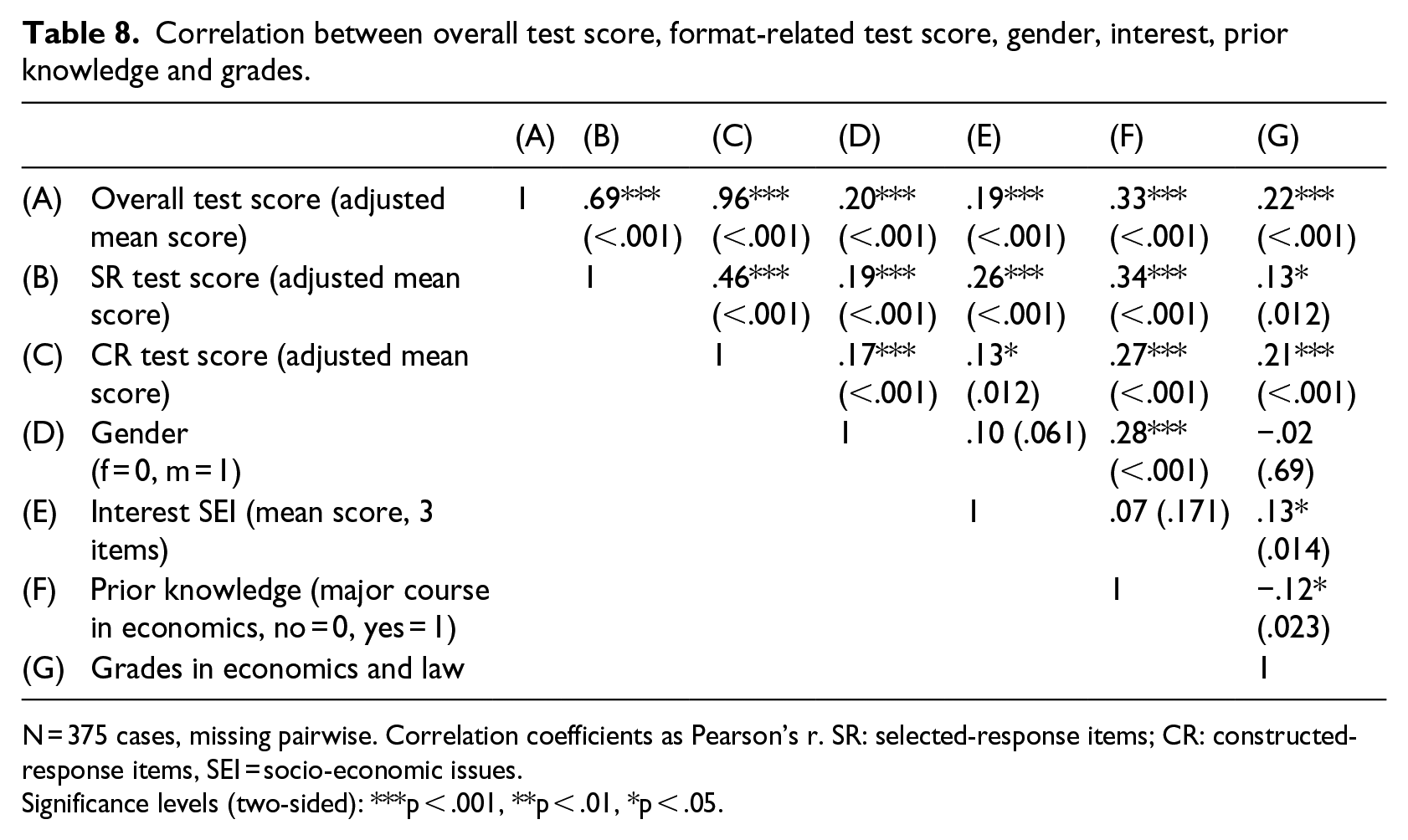

The results of the correlation analysis are summarized as follows (see Table 8):

Table 8. Correlation between overall test score, format-related test score, gender, interest, prior knowledge and grades.

N = 375 cases, missing pairwise. Correlation coefficients as Pearson’s r. SR: selected-response items; CR: constructed-response items, SEI = socio-economic issues.

Significance levels (two-sided): ***p < .001, **p < .01, *p < .05.

Gender positively correlates with the overall test score (r = .20, p < .001) and the item-related test scores, that is, male students outperform female students in all cases, although the correlation with SR items (r = .19, p < .001) is higher than the correlation with CR items (r = .17, p < .001). This corresponds with the results presented in the previous section.

Gender is not correlated with interest in socio-economic issues (r = .10, p = .61). It is also not correlated with students’ grades in ‘economics and law’ (r = –.02, p = .69). This indicates that neither interest nor grade may be a predictor or moderator of the gender gap in economic-civic competence. However, the result regarding the missing correlation between gender and interest is not in line with previous studies’ findings, where gender differences in interest in economics were found (e.g. Schumann and Eberle, 2014).

Gender is positively correlated with enrolment in a major in economics (r = .28, p < .001). This indicates that prior knowledge may be a strong indicator or moderator of the gender gap in economic-civic competence. This result corresponds with findings in previous studies, where gender differences in economic competence have been explained by participation in economics courses (e.g. Schumann and Eberle, 2014).

The correlation between interest in socio-economic issues and the overall test score is weakly positive (r = .19, p < .001). The correlation is higher with test scores for SR items (r = .26, p < .001) than with test scores for CR items (r = .13, p = .012).

The correlation between prior knowledge in economics and the overall test score is moderately positive (r = .33, p < .001), although prior knowledge in economics correlates higher with test scores for SR items (r = .34, p < .001) than with test scores for CR items (r = .27, p < .001).

The correlation between the grade in ‘economics and law’ and the overall test score, as well as the item-related test scores, is positive. The correlation with the overall test score (r = .22, p < .001) and the CR test score (r = .21, p < .001) is higher than the correlation with the SR test score (r = .13, p = .012). Moreover, the grade in ‘economics and law’ correlates positively with interest in socio-economic issues (r = .13, p = .014), but negatively with prior knowledge in economics (r = –.12, p = .023).
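The link between a Pearson correlation and its significance test can be sketched as below. The helper names are hypothetical, the p value uses a normal approximation that is reasonable only for large samples, and the sample size of 375 is an assumption for the worked example, since pairwise deletion of missing values may reduce the n for individual coefficients.

```python
import math
from statistics import NormalDist, mean


def pearson_r(x, y):
    """Pearson product-moment correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def t_from_r(r, n):
    """t statistic (n - 2 degrees of freedom) for testing r against zero."""
    return r * math.sqrt((n - 2) / (1 - r ** 2))


def approx_two_sided_p(t):
    """Two-sided p value via the normal approximation to the t distribution."""
    return 2 * (1 - NormalDist().cdf(abs(t)))


# Worked example with r = .13 and an assumed n of 375.
t_stat = t_from_r(0.13, 375)
p_approx = approx_two_sided_p(t_stat)
print(f"t = {t_stat:.2f}, approximate p = {p_approx:.3f}")
```

Because the test statistic grows with the square root of the sample size, even weak correlations can reach significance in a sample of several hundred students.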

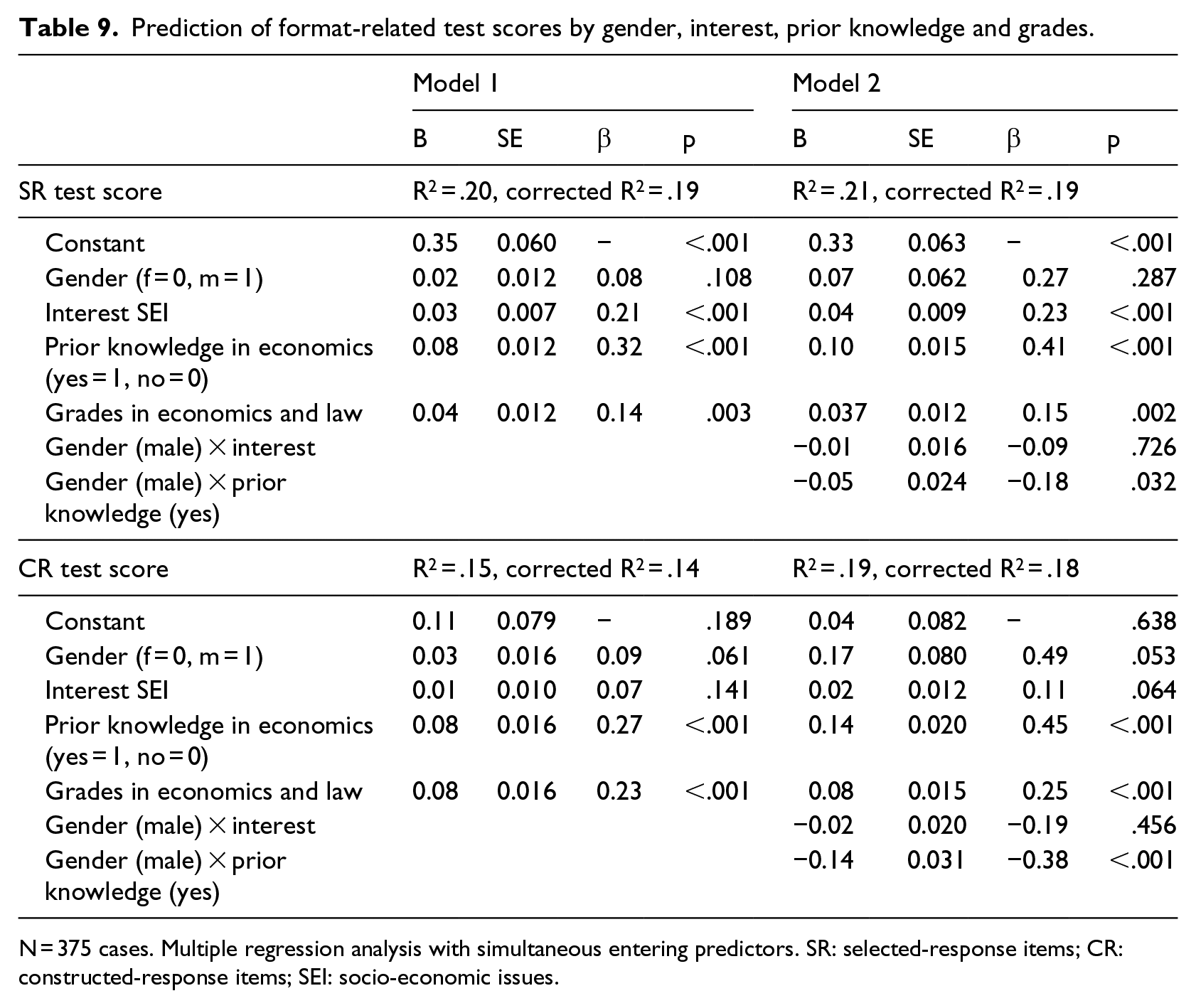

For multiple regression analyses, we used gender, interest in socio-economic issues, prior knowledge in economics (major course) and grades in the subject ‘economics and law’ as independent variables, and test scores of the item formats (SR test score and CR test score) as dependent variables. In order to examine the extent to which independent variables explain variance in format-related test scores, two regression models, one without interaction effects (model 1) and one with interaction effects (model 2), were estimated (see Table 9).
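How an interaction term enters such a model can be sketched with a small least-squares example. This is an illustration under simplifying assumptions, not the authors' SPSS analysis: it uses only gender, major course and their product as predictors, yields unstandardized coefficients rather than standardized β values, and relies on made-up scores and hypothetical names.

```python
def ols(rows, y):
    """Least-squares coefficients from the normal equations (X'X)b = X'y,
    solved by Gauss-Jordan elimination with partial pivoting."""
    p = len(rows[0])
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(p)]
        + [sum(r[i] * yi for r, yi in zip(rows, y))]
        for i in range(p)
    ]
    for c in range(p):
        pivot = max(range(c, p), key=lambda k: abs(aug[k][c]))
        aug[c], aug[pivot] = aug[pivot], aug[c]
        for k in range(p):
            if k != c:
                factor = aug[k][c] / aug[c][c]
                aug[k] = [a - factor * b for a, b in zip(aug[k], aug[c])]
    return [aug[i][p] / aug[i][i] for i in range(p)]


def design_row(gender, major):
    """Intercept, gender (female = 0, male = 1), major (no = 0, yes = 1), interaction."""
    return [1.0, gender, major, gender * major]


# Hypothetical cell means, NOT study data: two students per gender-by-major cell.
cells = {(0, 0): 0.5, (1, 0): 0.7, (0, 1): 0.8, (1, 1): 0.8}
rows, scores = [], []
for (gender, major), score in cells.items():
    for _ in range(2):
        rows.append(design_row(gender, major))
        scores.append(score)

b0, b_gender, b_major, b_interaction = ols(rows, scores)
gap_other_subjects = b_gender               # male minus female when major = 0
gap_economics = b_gender + b_interaction    # male minus female when major = 1
print(round(gap_other_subjects, 3), round(gap_economics, 3))
```

In this parameterization, the gender coefficient describes the gender difference among students without the major course, and a negative interaction coefficient means that the difference shifts towards female students among those who attended it.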

Table 9. Prediction of format-related test scores by gender, interest, prior knowledge and grades.

N = 375 cases. Multiple regression analysis with simultaneous entering predictors. SR: selected-response items; CR: constructed-response items; SEI: socio-economic issues.

In model 1, the predictors explain about 19% of the variance in SR test scores (R² = .20, corrected R² = .19). The effect of interest on SR test scores is rather small, but significant (β = .21, p < .001); the effect of prior knowledge is moderate and significant (β = .32, p < .001). The effect of the grade in the subject ‘economics and law’ is small but significant (β = .14, p = .003). By contrast, the predictors explain only 14% of the variance in CR test scores (R² = .15, corrected R² = .14). The effects of prior knowledge (β = .27, p < .001) and the grade in ‘economics and law’ (β = .23, p < .001) on CR test scores are rather small, but significant, whereas the effect of interest is not significant at the 5% level (β = .07, p = .141). Interestingly, when the other predictors are controlled for, gender is no longer a significant predictor of either SR test scores or CR test scores.

In model 2, the predictors still explain 19% of the variance in SR test scores (R² = .21, corrected R² = .19) and 18% of the variance in CR test scores (R² = .19, corrected R² = .18). For neither the SR test score nor the CR test score is there an interaction effect between gender and interest. However, for both the SR test score and the CR test score, a significant interaction effect is found between gender and enrolment in an economics major course (SR: β = –.18, p = .032; CR: β = –.38, p < .001). In other words, relative to students in other major courses, the gender difference among students enrolled in an economics major course shifts in favour of female students for both CR and SR test scores. Model 2 again shows no significant influence of gender on the format-related test scores.

Discussion

This study aimed to analyse the format–gender relation in the test of economic-civic competence (WBK-T2). The WBK-T2 uses two different item formats (SR and CR) in roughly equal proportions. The items vary regarding content elements and required cognitive processes. In order to analyse the extent to which this specific test form might overcome gender differences in test scores, the article asked two main questions: (1) whether there is still a gender gap in overall test scores and (2) whether the gender gap is systematically related to the item format. Regarding the overall test scores in the WBK-T2, male students significantly outperformed female students, although the effect size is small. However, the gender gap is smaller for the group of students in the major course in economics and larger for the group in other major courses. These results imply that high achievement in the WBK-T2 depends more on prior knowledge in economics gained through formal learning opportunities than on gender. Regarding the format-related test scores, the average test score for SR items is higher than for CR items for both genders. Male students scored significantly higher in both SR and CR items, but the effect sizes are small. When prior knowledge in economics is taken into account, significant gender gaps in both item formats were only found for the group ‘other subjects’. These results imply that the gender gap in WBK-T2 is not influenced by a specific item format or by the distribution of item formats, but rather by the formal learning opportunity chosen by the test taker.

The third question was whether the relationship between item format and gender is moderated by interest in socio-economic issues and prior economic knowledge. First, gender is not correlated with interest in socio-economic issues. Interest is positively related to both the adjusted SR test score and the adjusted CR test score, but the relationship is stronger for SR items than for CR items. There is no interaction effect between gender and interest for either item format. However, this result is not in line with other studies that indicate that answering a CR item requires more interest in the content than answering an SR item, which only requires students to tick an answer option. Second, a major course in economics is a significant predictor for both item formats, but here also, the effect is higher for SR items than for CR items. Moreover, an interaction effect between gender and enrolment in an economics major course was found for both the SR test score and the CR test score. These results are in line with other recent studies (Ackermann, 2019; Siegfried and Ackermann, under review), which have found evidence that the attendance of economics courses can decrease the gender gap. It seems that female students benefit from in-depth courses more than male students do.

Hence, for students with prior knowledge in economics, the balanced test form regarding SR and CR items in the WBK-T2 overcomes the gender gap in overall test scores as well as in format-related test scores. However, this does not hold for students without prior knowledge in economics. For these students, both item formats in the WBK-T2 favour male students. We conclude that the gender gap in WBK-T2 test scores is not influenced by test-internal construct-irrelevant factors, such as item format, but can likely be explained by test-external variables, such as prior knowledge in economics.

However, we cannot generalize the results discussed above yet. First, the WBK-T2 is the only test so far that has been used to examine the format–gender relation, as other tests in the domain of economics (e.g. WBT, OEKOMA) do not have (enough) variation in item format. The question remains whether the results of our study are characteristic of the test instrument or of the content domain. Second, the sample used in the study is highly selective and might not be representative of all students in the WBK-T2 target group. Thus, high school (‘Gymnasium’) students might show a different format–gender relation from vocational school students, as they are more used to open-ended questions and CR tasks. Third, the data might be tied to a country-specific teaching and learning culture. Students from other cultures might show a different format–gender relation from Swiss students. As shown by Förster et al. (2015), the gender gap in the Test of Economic Literacy (TEL) differs between countries.

This study has succeeded in exploring some explanatory approaches for gender differences in the domain of economics. It supports findings from previous studies on the relevance of learning opportunities in economics. Learning opportunities may not only increase students’ economic literacy but may also reduce gender differences. In this context, it would be interesting to investigate how such courses are designed so that gender differences can be balanced, and what implications can be derived for the design of learning opportunities in economics in general. Even if there are no significant differences in the item format-specific test scores between males and females for SR items and CR items, the results of the regression analyses indicate that the influencing factors differ in importance depending on the item format. However, further research is needed to verify these findings. For this purpose, further research on the gender gap in the domain of economics should take into account other samples and include further gender gap–related influencing factors such as linguistic ability. Furthermore, it would be interesting to investigate the extent to which the test results differ between genders when stem-equivalent SR and CR items are used (see Siegfried and Wuttke in the same issue).

Footnotes

Acknowledgements

The author(s) thank Nihat Yasartürk for double coding the students’ answers to constructed-response items, Ahmed Khatib for formatting and Rosa Brown for proofreading.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.