Abstract

The authors describe a project that compared the effects of three interventions on the retirement investment knowledge of public school teachers in the Midwest. They interpret outcomes from three different interventions (online training, site-based workshop, and hybrid of online and site-based). While the study results indicate that program participants increased their investment and retirement knowledge of content presented in the measured approaches, the differences in gains between the interventions were not significant. The authors call for additional research into the investment and retirement education of teachers that employs larger samples and uses valid and reliable instrumentation.

Public school teachers represent a unique group for whom education about investment and retirement represents a professional need. Research studies document the weak financial literacy and economic knowledge of teachers that has existed for some time (e.g. Garman, 1979; Grimes et al., 2010; McKenzie, 1971; McKinney et al., 1990). Teachers generally possess weak understandings of personal finance. Educating teachers about the importance of financial planning may provide them with social studies content knowledge as well as information about strategies for wealth development that could enhance their retirement experiences.

A largely female population, elementary and secondary education teachers represent a group largely under-researched by the personal finance and economics communities. The need to research this group may be supported through findings that women tend to anticipate greater income disparities at retirement than men (Hershey and Jacobs-Lawson, 2012). Just as different social conditions shape how women time their receipt of Social Security benefits (Gillen and Heath, 2014), teachers change their behaviors and timing of their retirements in response to retirement program incentives (Brown, 2013; McGee and Costrell, 2011). At the same time, teachers demonstrate some elements of financial awareness. Teacher knowledge about their retirement programs relates to their time in the profession, with mature teachers more aware of their retirement programs than those just entering the profession (DeArmond and Goldhaber, 2010).

Recent studies provide mixed results as to whether different approaches to teacher learning (such as graduate courses or workshops) designed to enhance teachers’ financial or economic knowledge actually increase participants’ understanding of personal finance (Harter and Harter, 2012; Swinton et al., 2010). Nevertheless, studies suggest that employer-sponsored financial education may stimulate employee action with regard to financial and retirement planning. Research that documents the relationship between financial wellness and workplace satisfaction may indicate a presence of a similar association of financial health and employee fulfillment (e.g. Kim, 2008; Kim et al., 2006; Prawitz and Cohart, 2014). Providing teachers with information about investments and pension programs may enhance their professional performance and their sense of personal gratification.

This article describes a research study that compared three interventions that inform educators about investing tenets and about teacher retirement programs. The research question guiding the study was as follows:

Are there differences in the confidence and knowledge changes among teachers who experience a computer-based modular intervention, a lecture-debriefing intervention, and a hybrid intervention that combines the computer-based and lecture interventions?

The article informs the community about a needed area of financial education research that may enhance the financial wellness of teachers. Subsequent to this introductory section, an overview of research literature that relates to educating teachers about their personal finances appears. A description of the methodology, which includes the sample, procedure, and treatment, follows the literature, and a presentation of findings ensues. Finally, a discussion section relates findings to the literature before the appearance of concluding thoughts.

Literature

This review of literature documents the need for the financial education of teachers. It begins with a description of literature that concerns planning for retirement, teacher retirement programs and associated patterns of teacher knowledge and practice, particularly with regard to their understanding of retirement matters. Information that concerns employer education efforts with regard to employees’ personal finances ensues.

Financial education and retirement planning

Financial education represents a critical need given the decreasing percentage of Americans that researchers consider as being prepared to retire. Workers lack sufficient information about retirement plan benefits and overestimate their financial capability to retire (Clark et al., 2010; Hanna and Cheng-Chung Chen, 2008; Kim, 2013). Educating teachers about the importance of financial planning may provide them with information about strategies for wealth development that could enhance their retirement experiences. As financial literacy relates to the development of strategies for investing and wealth development, older Americans, particularly women and teachers, of increasing longevity who worry about retirement funding, present an especially worthy group to learn this information (DeVaney, 2008; Van Rooij et al., 2012). People who are knowledgeable of features associated within financial tools may plan their finances better than those who do not (Lusardi and Mitchell, 2011).

Teacher retirement programs

The weak financial condition of many state pension funds represents a well-publicized matter, as many states consider amendments to their traditional defined benefit pension programs as measures to address underfunding (PEW Center, 2012). Teachers employed by school districts that provide retirement funding through defined benefit retirement plans experience limited opportunities for mobility, investment alternatives, and work choice (DeArmond and Goldhaber, 2010). A significant financial cost relates to early separation from a teacher retirement system, even if that separation is associated with enrollment in a retirement system in another state (Costrell and Podgursky, 2010). While defined benefit plans offer long-term benefits for employee loyalty, funding concerns necessitate that teachers understand the costs and benefits of other programs.

Public school teachers currently receive opportunities to make choices with regard to their retirement programs (Chingos and West, 2013; Goldhaber and Grout, 2013). Yet the preparedness of participants to make suitable choices relates to their financial literacy. Agnew and Szykman (2005) report that those who possess low degrees of financial literacy and who participate in retirement plans tend to acquiesce more readily to investment default options than do participants with greater awareness. Thus, possession of financial knowledge may translate into participants’ confidence in making investment decisions.

Teachers generally do not plan for retirement, citing insufficient resources or debt burdens as preventing their contributions (ING, 2010). Nevertheless, educators’ ignorance of investment and retirement tenets may motivate participation in retirement planning. Teacher candidates (teacher education students preparing for licensure) lack knowledge of retirement planning topics, although they express interest about the topics when surveyed about them (Lucey and Norton, 2011). The ignorance and interest of teacher candidates inform about the potential need for financial education as part of teacher induction processes. Educating teachers about the importance of retirement planning represents an opportunity to empower them to take ownership of their personal finances.

Employer-sponsored financial education

For businesses that operate with a focus on earnings, the cost of employer-sponsored financial education for employees requires justification in terms of its effect on profitability. Mandell (2008) observes that efforts to provide financial education within the workplace represent responses to regulatory directives. These efforts relate to traditional benefits, such as health insurance and retirement; however, employers express little interest in expanding to financial education content (Mandell, 2008). Employers devote attention to other areas of business operation, while outsourcing financial education programs to established vendors who conduct seminars. Less costly paper-based vehicles for employee reading are also utilized (Kim, 2008; Mandell, 2008).

While employers recognize potential for personal wellness of employees provided through financial education, Mandell (2008) claims that profitability concerns in an uncertain economic environment discourage thinking about such endeavors. The reasons for these concerns relate to worries about general employer costs, increasing expenses associated with other benefits, lack of employee appreciation for financial education, and insufficient evidence that the rewards for such programs exceed the expenses (Mandell, 2008).

Low percentages of employees benefit from workplace financial education efforts, despite research that documents favorable outcomes associated with these programs (Kim, 2008). Despite the advantages associated with participation in employer-sponsored financial education programs, most employees resist opportunities to participate. Seligman and Bose (2012) find that both employer-sponsored retirement education and participant investment management contribute to favorable outcomes for employees. Thus, the benefits of programs relate to both the knowledge gained and the opportunity for participants to apply the learning.

Literature argues that the simple presentation of information represents an insufficient consideration with regard to financial education (e.g. Lucey, Agnello & Laney, 2015; Tisdell, 2014; Vitt, 2014). Rather, learner emotions and cultural contexts represent factors for consideration in development of learning settings that meet the needs of all stakeholders (i.e. employers and employees).

Teacher and teacher candidate financial education efforts

Efforts to prepare and educate teachers and teacher candidates about tenets of personal finance have proven successful. Schug, Wynn, and Posnanski (2002) document successes informing teachers about personal finance tenets and facilitating their development of related lessons. Lucey (2008) notes positive outcomes from teacher candidates’ research of financial literacy standards and their preparation of lesson plans. Harter and Harter (2012) contrast the gains in knowledge of teachers who participated in a workshop about financial literacy with gains in knowledge of teachers who completed a semester long graduate course. Hensley (2013) reports that increased motivation and content appreciation occurred among teachers who participated in a series of pilot experiences that trained teachers to teach about personal finance. Statistics in Hensley’s report indicate that a significant number of participants may possess pro-financial literacy beliefs and behaviors prior to the experience. Participant interest and motivation to learn may contribute to program outcomes.

Technology-based learning

Technology-based learning represents a potentially low-cost approach for employers to educate their employees about financial tenets. Yet, when considering the concept of low cost, one needs to contemplate the extent to which cost represents a financial or social concept. The manner by which one employs technology in learning relates to that conundrum. If cost represents a financial concept, then we consider the amount of money to be spent. If cost represents a social concept, then we need to consider the human socialization consequences of our instructional choices.

Still influential in education research, Taylor’s (1980) typology for computer use identifies three approaches. The first is as a tutor, which is much like a personal tutor. The computer has a body of information that is communicated to the user. The second, as a tool, uses the computer as a resource for facilitating student inquiry for learning about concepts and problem-solving. Morrison and Lowther’s (2009) NTEQ instructional model applies this approach by positioning the teacher as a technology expert who creates learning environments for students to solve problems through computer use. The third, as tutee, uses the learner to program the computer. This approach requires a lot of time because the learner becomes an expert in content and programming.

Using the computer as a tutor lends itself to distance-learning because it involves less bilateral interaction with students. Unilateral communications control the flow of information and reward learners for proper responses to material. As to whether online or face-to-face learning is more effective, a review of literature conducted by Means et al. (2010) determined that learning through online means was just as effective as learning through face-to-face environments; however, blending online and face-to-face instruction improved on the learning outcomes of face-to-face environments. Their analysis does not indicate whether the blending enhanced online instruction.

In summary, financial education represents an important matter to be addressed by employers. In an environment that places an increasing amount of responsibility on employees to make sound financial decisions that concern their retirement, employers face the difficult task of deciding what education program best provides their employees with the tools to make financial decisions at the lowest cost to the employer. Teachers represent a group of employees that, on the whole, possess marginal knowledge about personal finances. Informing them about personal investments and state retirement programs is essential to their prudent financial decision making with regard to retirement. Considering that nearly two thirds (64%) of states had funded less than 80% of their anticipated pension obligations (PEW Center, 2012), both new and veteran members of the teaching profession need accurate information about the workings of investments and retirement programs.

Methodology

The researchers interpret the outcomes of a computer-based modular intervention, lecture-debriefing intervention, and a hybrid intervention (which combined the computer-based and lecture interventions) on participants’ understandings of investments and retirement programs. We also consider whether significant differences occurred in the changes in knowledge provided by each of the three interventions. Literature with regard to employer-sponsored financial education and with regard to technology use suggests that blending mastery and cooperative learning methods would yield higher achievement than cooperative and mastery approaches separately (e.g., Laney et al., 1996; Means et al., 2010).

Sample

Participants consisted of public school teachers in a Midwestern state who were associated with any of three project stages. Stage 1 occurred during the spring of Year 2. The summer of Year 1 witnessed the recruiting and enrollment of teachers.

Stage 2 of the project occurred during the summer of Year 2. This stage recruited participants through email invitations of graduate students in the study of curriculum and instruction. All students in these programs are required to have at least 2 years of teaching experience for program enrollment.

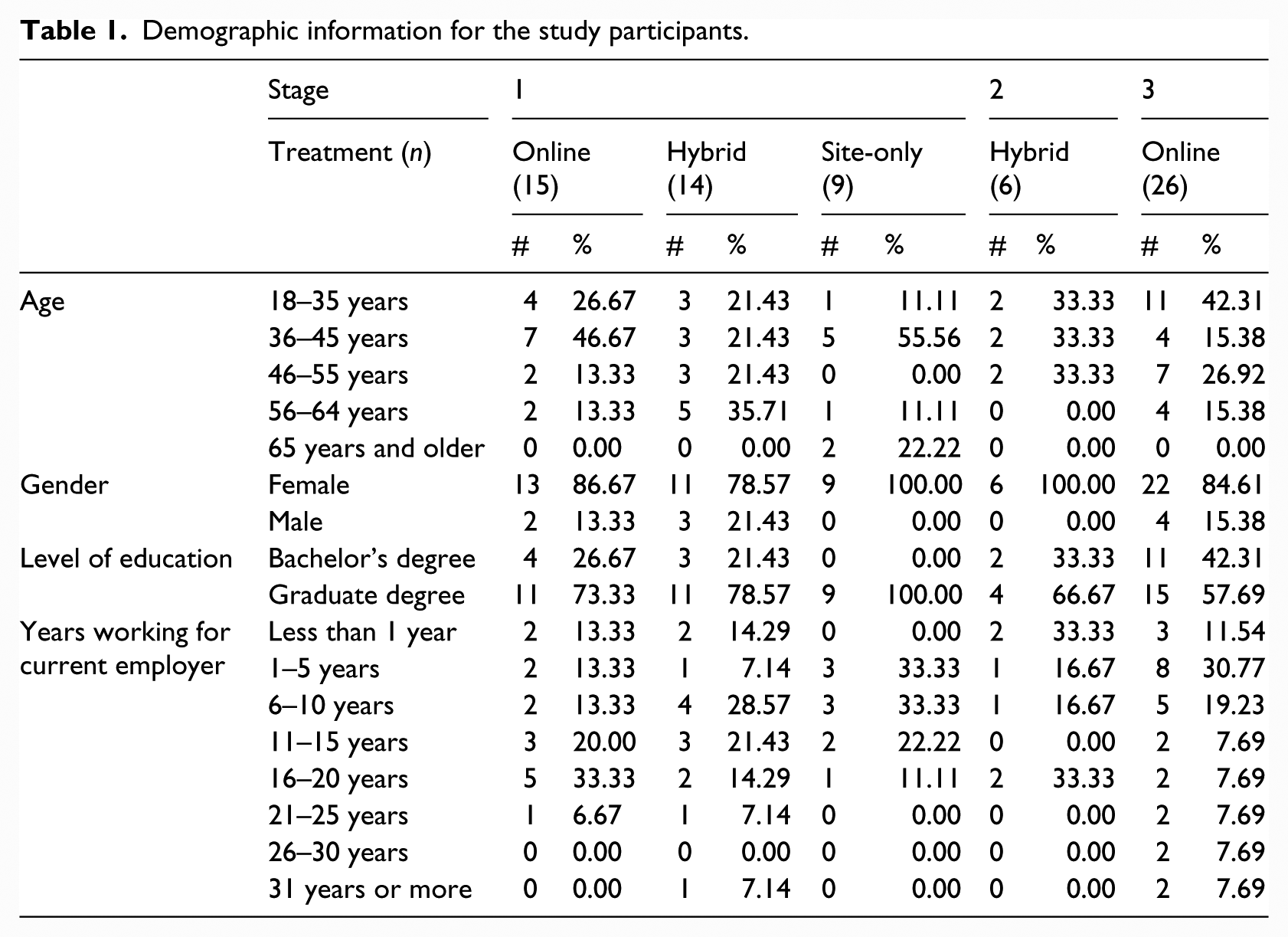

Stage 3 occurred during the spring and summer of Year 3. During the spring, teachers in three school districts (one rural, one urban, and one mid-sized city) received email invitations to participate in the study. For all participants, the median age group was 36–45 years old, 87.1% were women, and all participants identified themselves as White/Caucasian. The majority (71.4%) had completed or were in the process of completing a graduate degree, and the median grouping of number of years working for their current employer was 6–10 years. Disaggregated information by stage and treatment for the study participants is provided in Table 1.

Demographic information for the study participants.

Across the three stages (and the three treatments in Stage 1), there were no significant differences in these demographics between those who completed the initial survey only and those who completed both surveys.

This study is a randomized treatment and control condition design study. It involved three stages that occurred over three semesters.

Procedure

The research project was producer-driven. No effort was made to interpret participants’ financial education needs or education preferences in development of the interventions. The bases for interventions related to the conveyance of information developed by the producer of the instructional software and the manners of its delivery.

Participants in Stage 1 were randomly assigned to one of three interventions. Intervention 1 (online) experienced an adaptation of the Investor Education in Your Workplace training program, a 10-hour online investor training program that included assessments at the commencement and completion of the process. This program was coordinated with Precision Information (PI) a company that produces and implements financial education software.

The majority of the online content derived from a preexisting PI program with a module containing additional information specific to the retirement system that served the participants in the study. The 10 modules contained the following titles:

Getting Started with Savings and Investing;

Basics of Personal Finance;

Investing Basics;

Investing Strategies;

Investment Risks;

Basics of Retirement Planning;

Investing in Mutual Funds;

Working with Financial Advisors;

Saving for College;

Putting It All Together.

In addition, all participants in Stage 3 participated in the online group. Each participant in this treatment completed approximately 10 hours of training.

Intervention 2 (site-based) consisted of a university-based experience that employed lecture, small group discussions, and activities. The topics covered in this intervention were identical to those covered in online modules; however, university faculty delivered the material through a combination of lecture and small group breakout sessions. This intervention consisted of four lecture periods with cooperative activity sessions interspersed. An assistant professor in the college of business conducted the lecture. Four assistant professors from the school of teaching and learning in the college of education facilitated the breakout sessions. Each participant in this treatment completed approximately 6 hours of training.

Participants in Intervention 3 (hybrid) experienced both the online modularized learning and the site-based learning experiences. Participants in this intervention completed the same online training content as those participants assigned to Intervention 1. They also attended the site-based learning experience as did those assigned to Intervention 2. Each participant in the hybrid treatment completed approximately 16 hours of training.

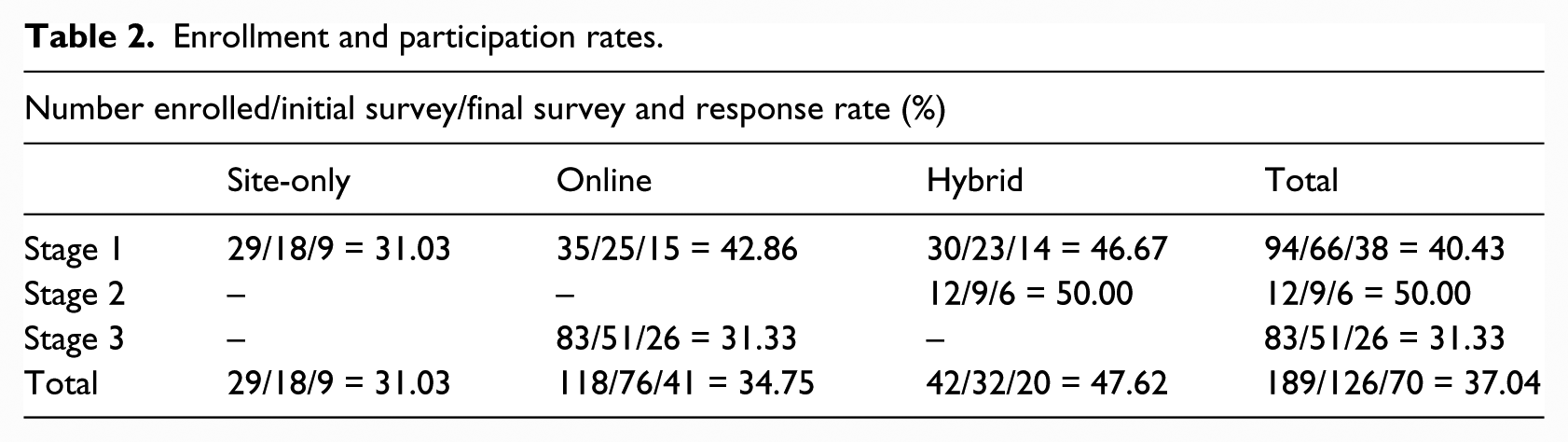

All participants in Stage 2 were assigned to the hybrid group. Table 2 provides a summary of the stages, interventions, and participation rates.

Enrollment and participation rates.

Data collection

Participants completed three instruments: two surveys and a quiz. A large public institution of higher learning in the Midwest hosted the first survey online. Those participants who expressed interest in the research completed this first survey, which contained items that sought information about participant demographics, the reasons for their research participation, and their self-assessment of retirement knowledge. All participants received a $25 stipend upon completing this survey and returning a signed contract to enroll in the study. Participants completed the second survey and the quiz twice, once before and once after the learning experiences.

The investigators randomly assigned those who completed the survey to one of the three interventions and enrolled them in the program at the Educated Investors website (managed by the principal investigator). Those assigned to the hybrid and online groups began working on the learning modules. Those assigned to the site group pursued their normal activities until the site-based experience. Participants who completed the final quiz and survey received a $25 stipend. Participants in the site-based program (both hybrid and site-only groups) received a $50 travel stipend for their workshop participation.

At the website, enrollees were to complete both an initial quiz about their knowledge concerning financial literacy and a survey about their attitudes towards personal finances. These two tools were to have been administered to participants before and after their completion of the online modules. For reasons that are unclear, participants who experienced the site-based intervention completed the surveys, but not the quizzes. Those who experienced the online or hybrid interventions completed both the surveys and the quizzes.

The survey contained items that interpreted participants’ comfort discussing financial issues, participation in various financial programs, and perceptions of knowledge about different financial concepts. The survey items derived from a survey that PI utilized in its financial education efforts with other clients. PI adapted the survey for the current project by removing items that concerned topics unrelated to the current project.

The quiz consisted of 26 items, most of which derived from a bank of preexisting quiz items provided by PI. From this bank, the principal investigator selected a list of 50 items for use in the quiz. As a result of further communications with PI, it was decided that 30 items would be used. The principal investigator reduced the number of items accordingly, and PI selected 26 for use in the quiz. The 26 items were distributed among the module topics as follows: Getting Started with Saving and Investing (2 items), Basics of Personal Finance (5 items), Investing Basics (3 items), Investment Strategies (5 items), Investment Risks (0 items), Basics of Retirement Planning for the State (5 items), Investing in Mutual Funds (5 items), Working with Financial Advisors (0 items), Saving for College (0 items), and Putting It All Together (1 item).
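As a quick consistency check on the item distribution described above, the per-module counts can be tallied to confirm that they sum to 26 and to list the modules left without quiz items. The following is a minimal Python sketch; the variable names are introduced here for illustration and are not part of the study materials.

```python
# Item counts per module topic, copied from the distribution reported in the text.
# The dictionary name is hypothetical; it is not taken from the study.
items_per_module = {
    "Getting Started with Saving and Investing": 2,
    "Basics of Personal Finance": 5,
    "Investing Basics": 3,
    "Investment Strategies": 5,
    "Investment Risks": 0,
    "Basics of Retirement Planning for the State": 5,
    "Investing in Mutual Funds": 5,
    "Working with Financial Advisors": 0,
    "Saving for College": 0,
    "Putting It All Together": 1,
}

# Total number of quiz items across all modules.
total_items = sum(items_per_module.values())

# Modules that contributed no items to the quiz.
uncovered_modules = [name for name, count in items_per_module.items() if count == 0]

print(total_items)
print(uncovered_modules)
```

The tally makes visible that three of the ten modules contributed no items to the quiz.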

The omission of questions associated with financial advisors and savings for college relates to the general purpose of the study, which concerned the education of teachers about investments and retirement programs. While it is acknowledged that no questions were asked with regard to investment risks, it is recognized that risk represents an element of any financial decision, and thus was implicitly addressed through other quiz items.

Findings

The presentation contains two sections. The first section conveys the attitudes of participants regarding their confidence with financial topics before and after the learning experiences, comparing the results by intervention as appropriate. The second section communicates the overall knowledge of participants before and after the learning experiences, comparing results by intervention as appropriate.

Descriptive analyses of participants’ attitudes

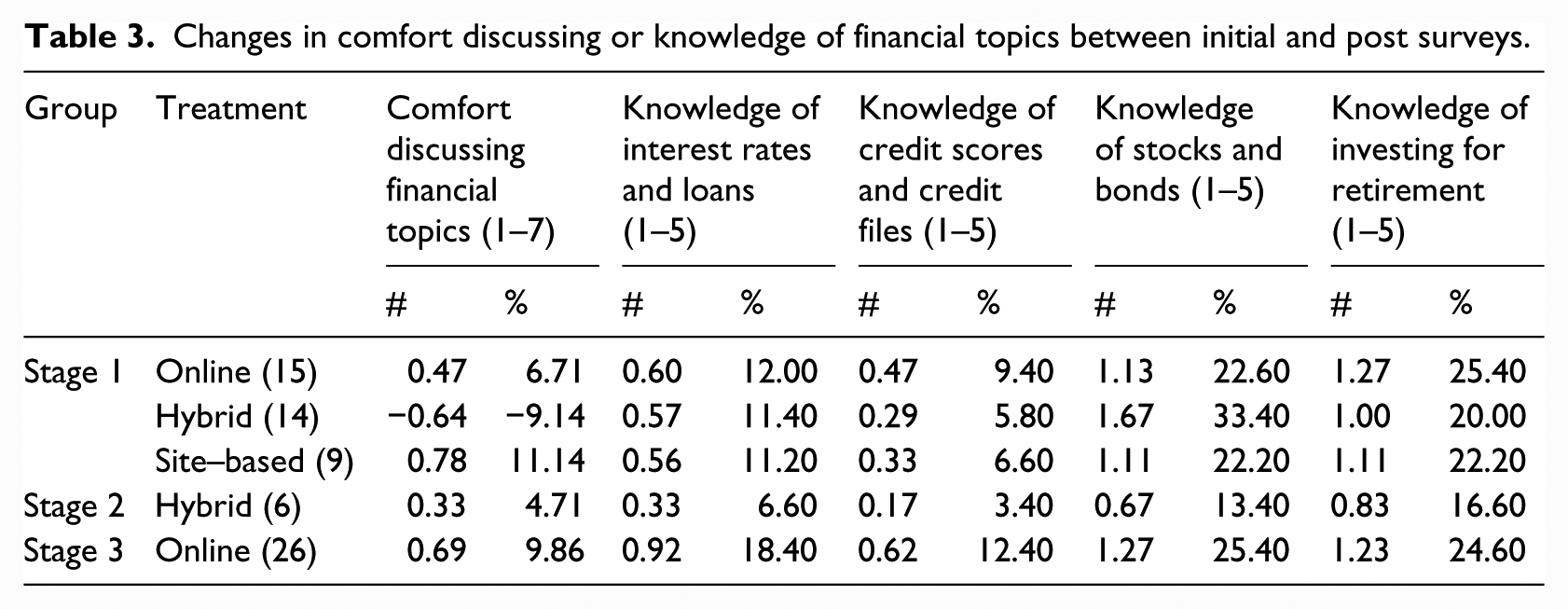

The researchers determined changes in participants’ attitudes by interpreting patterns of responses to five survey items. The first item asked participants to rate their comfort discussing financial topics with others. The item contained seven possible responses ranging from “Does not Describe Me” (1) to “Describes Me Very Well” (7). The other four items sought respondents’ comfort in their knowledge of four financial topics: (1) interest rates and loans, (2) credit scores and credit files, (3) stocks and bonds, and (4) investing for retirement. These four items contained five possible responses that ranged from “Nothing” (1) to “A lot” (5). The program addressed two ((1) Stocks and Bonds and (2) Investing for Retirement) of the four topics. The program did not address the other two topics ((1) Interest Rates and Loans, and (2) Credit Scores and Credit Files). The inclusion of the two uncovered topics provides a basis for comparing any changes that might result from the program coverage of topics. Table 3 includes the mean difference scores and percent change for all five survey items.

Changes in comfort discussing or knowledge of financial topics between initial and post surveys.

The statistics in Table 3 indicate that Stage 1 participants who received the site-based (0.78) or online (0.47) training expressed more confidence discussing topics related to personal finance after the learning experiences than they did before the training. During Stage 1, these two (site-based and online) groups also expressed greater increases in confidence of knowledge about the topics emphasized in the training (stocks and bonds and investing for retirement; increases ranging from 1.11 to 1.27) than about the topics not presented (increases ranging from .33 to .60).

In three (interest rates and loans, credit scores and credit files, investing for retirement) of the four items for which increases in confidence in knowledge were measured in Stage 1, greater increases were associated with online learning. Although participants associated with the hybrid group in Stage 1 expressed a decrease in their comfort discussing financial topics, they conveyed a greater increase in knowledge of stocks and bonds than did members of other groups.

Participants in Stage 3 also conveyed this pattern of greater confidence of knowledge with presented topics (1.27 and 1.23) versus those not presented (.92 and .62). Participants in Stage 2 likewise conveyed greater confidence in knowledge of presented topics (.67 and .83) than of topics not presented (.33 and .17). They also indicated a gain in comfort discussing financial topics.

Group analyses of changes in attitudes

Given the ordinal-level nature of survey data, the researchers performed a series of Wilcoxon signed-rank tests to examine the differences between responses to these five items on the initial and final surveys across the three stages and interventions. The following conditions indicated this type of analysis as appropriate: (1) the differences between responses on the initial and final surveys could be ranked; (2) study participants were randomly assigned to groups in Stage 1, though not in Stages 2 and 3; and (3) the distributions of the difference scores (defined as the difference between initial and final survey responses) were normal for all three treatment groups in Stage 1 and for the Stage 3 (online only) participants. Except for one item, the distributions for the Stage 2 participants (hybrid only) approximated a normal distribution.
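To illustrate how a Wilcoxon signed-rank test treats paired ordinal responses, the following Python sketch computes the positive and negative rank sums for one survey item. The ratings are hypothetical and are not the study's data; the function name is likewise introduced only for illustration. The sketch stops at the rank sums and does not compute a p value, which the researchers would have obtained from statistical software.

```python
def signed_rank_sums(initial, final):
    """Return (W_plus, W_minus) for paired responses.

    Zero differences are dropped, and tied absolute differences
    receive the average of the ranks they span.
    """
    # Difference scores (final minus initial), excluding zero differences.
    diffs = [f - i for i, f in zip(initial, final) if f != i]

    # Order the differences by absolute size so ranks can be assigned.
    ordered = sorted(diffs, key=abs)
    ranks = [0.0] * len(ordered)

    start = 0
    while start < len(ordered):
        end = start
        # Find the run of tied absolute differences.
        while end < len(ordered) and abs(ordered[end]) == abs(ordered[start]):
            end += 1
        # Ranks start+1 .. end are shared; assign their average.
        average_rank = (start + 1 + end) / 2
        for k in range(start, end):
            ranks[k] = average_rank
        start = end

    w_plus = sum(r for d, r in zip(ordered, ranks) if d > 0)
    w_minus = sum(r for d, r in zip(ordered, ranks) if d < 0)
    return w_plus, w_minus


# Hypothetical 1-5 ratings for five respondents (illustrative only).
initial_ratings = [2, 3, 3, 4, 2]
final_ratings = [3, 3, 5, 4, 1]

w_plus, w_minus = signed_rank_sums(initial_ratings, final_ratings)
print(w_plus, w_minus)
```

A large imbalance between the two rank sums suggests a systematic shift between the initial and final responses; with samples as small as those in this study, the test has limited power to detect such a shift.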

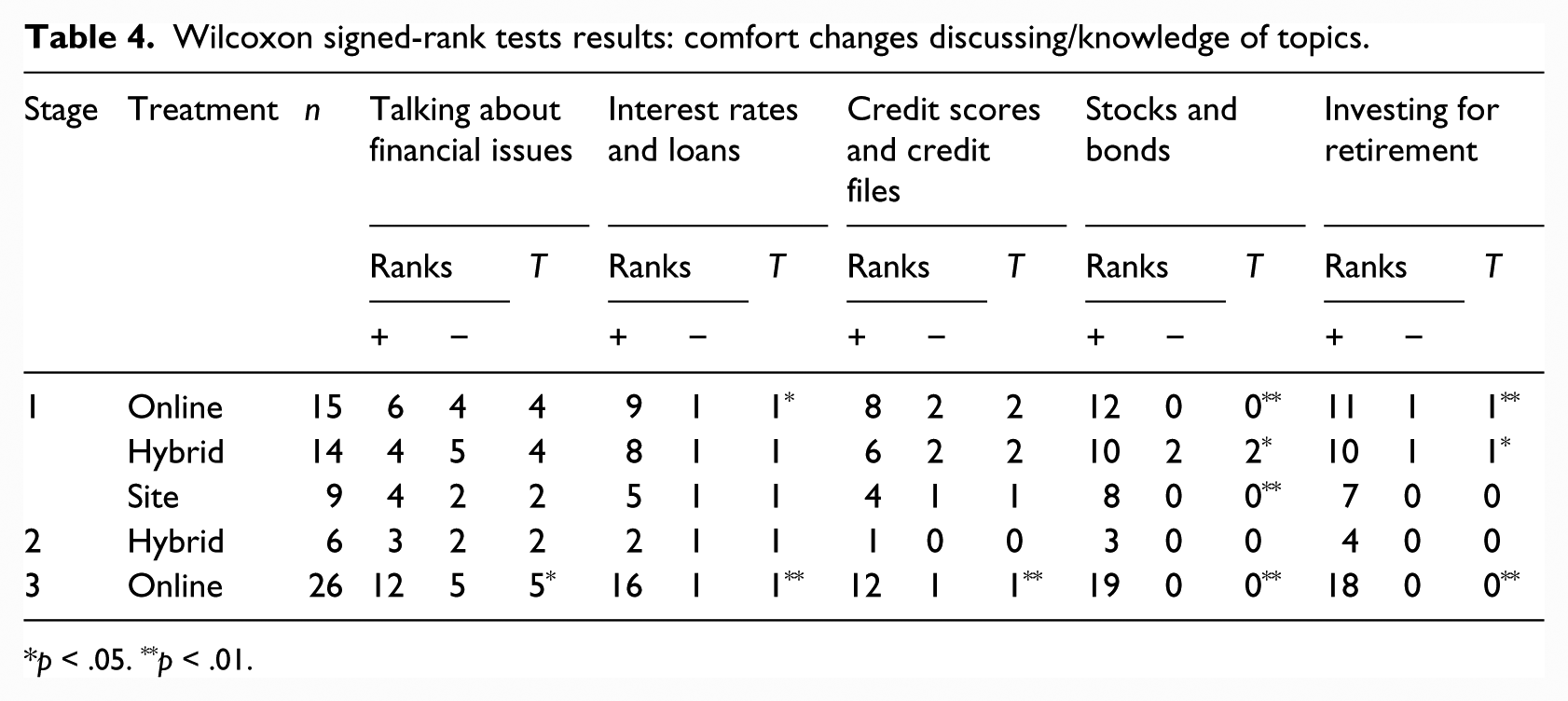

The results of the Wilcoxon signed-rank tests are provided in Table 4. Participants in the online intervention in both Stages 1 and 3 significantly increased their confidence regarding interest rates and loans, and across all interventions in these two stages, participants significantly increased their confidence regarding stocks and bonds. Despite the small sample, participants in Stages 1 and 3 showed a significant increase in their confidence regarding investing for retirement.

Wilcoxon signed-rank tests results: comfort changes discussing/knowledge of topics.

*p < .05. **p < .01.

The statistics in Table 4 indicate significant gains in Stage 1 and Stage 3 participants’ confidence of knowledge associated with topics presented. The increases were not significant for participants in Stage 2.

Stage 1 participants assigned to the online treatment expressed significant gains with regard to their confidence in knowledge of stocks and bonds and investing for retirement. Participants assigned to the hybrid treatment also experienced significant gains in confidence, though not at the same level of significance as the online group. Participants assigned to the site-based group experienced significant gains associated with only one of the items.

Stage 3 participants (online) also realized significant gains in their confidence regarding stocks and bonds and investing for retirement. Stage 2 participants did not convey any significant increases.

Group analyses of changes in knowledge

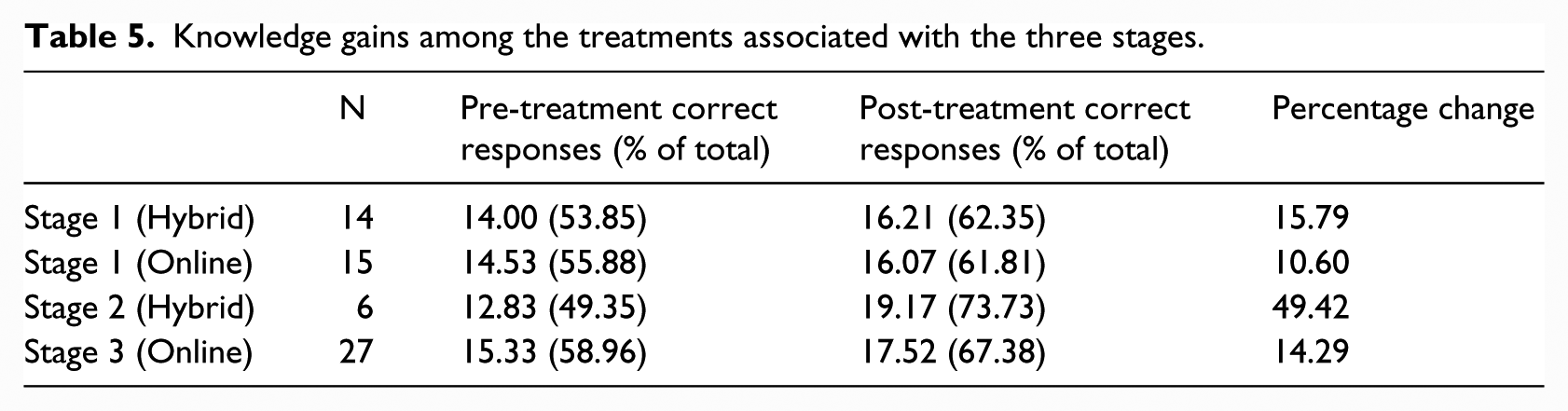

Table 5 presents statistics that describe changes in overall knowledge (from the quizzes administered) for the groups that participated in the Stages 1, 2, and 3. The statistics in this table indicate that increases in participant scores occurred through the online and hybrid interventions. Because of the unavailability of information, the analysis did not interpret changes in participant knowledge for the site-only group.

Knowledge gains among the treatments associated with the three stages.

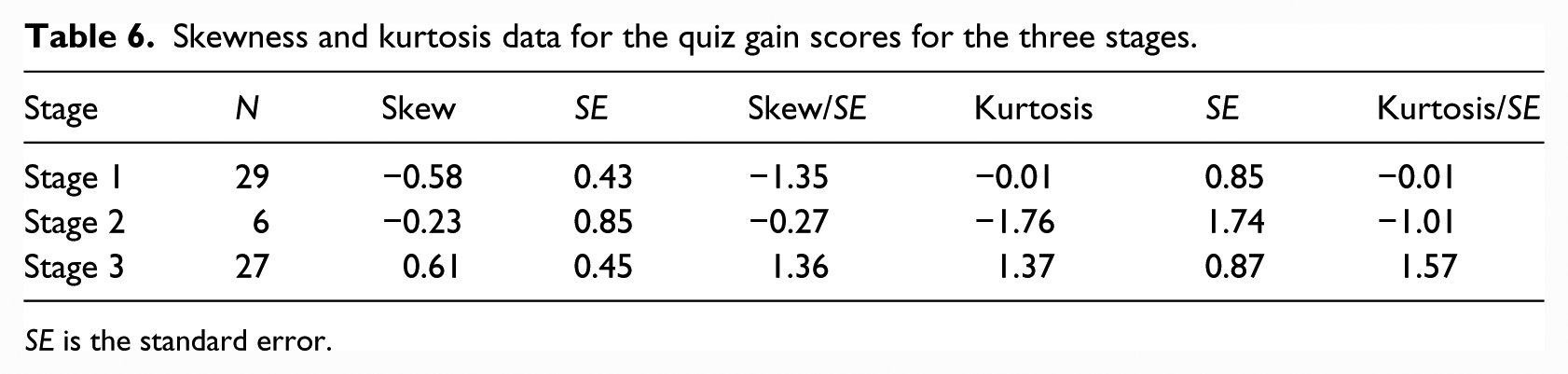

The researchers conducted a one-way between-groups analysis of variance (ANOVA) to interpret patterns of significance between initial and final quiz scores among the three stages to determine if aggregating these data would be appropriate. Concerning assumptions for inferential analysis: (1) participants were randomly assigned to groups in Stage 1, (2) skew and kurtosis statistics indicated that the distribution of the difference scores seemed to be approximately normal across the three stages, and (3) Levene’s test indicated that the error variances of the difference scores among the three stages were not significantly different (F(2, 59) = 0.54, p = .59). Please refer to Table 6 for relevant statistics.

Skewness and kurtosis data for the quiz gain scores for the three stages.

SE is the standard error.

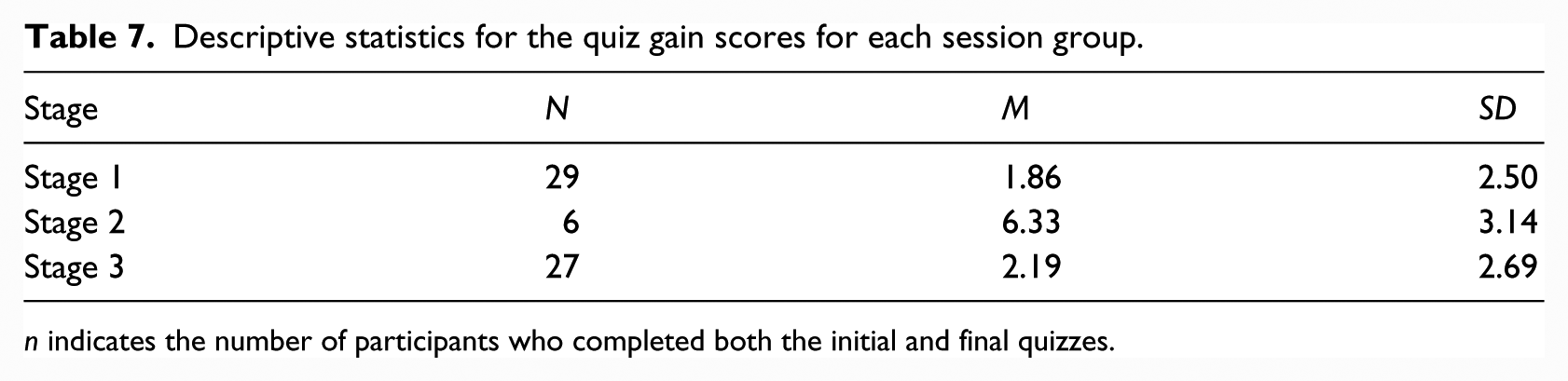

The analysis indicated the presence of a significant difference in gain scores among the three stages (F(2, 59) = 7.32, p = .001, R² = .20; see Table 7). The researchers employed Bonferroni post hoc tests to determine which groups’ gains were significantly different from the others. The results indicated that the gain scores of Stage 2 participants were significantly higher than the gain scores of Stage 1 participants (p = .001) and Stage 3 participants (p = .003). Thus, only the data from Stage 1 and Stage 3 were aggregated.

Descriptive statistics for the quiz gain scores for each session group.

n indicates the number of participants who completed both the initial and final quizzes.

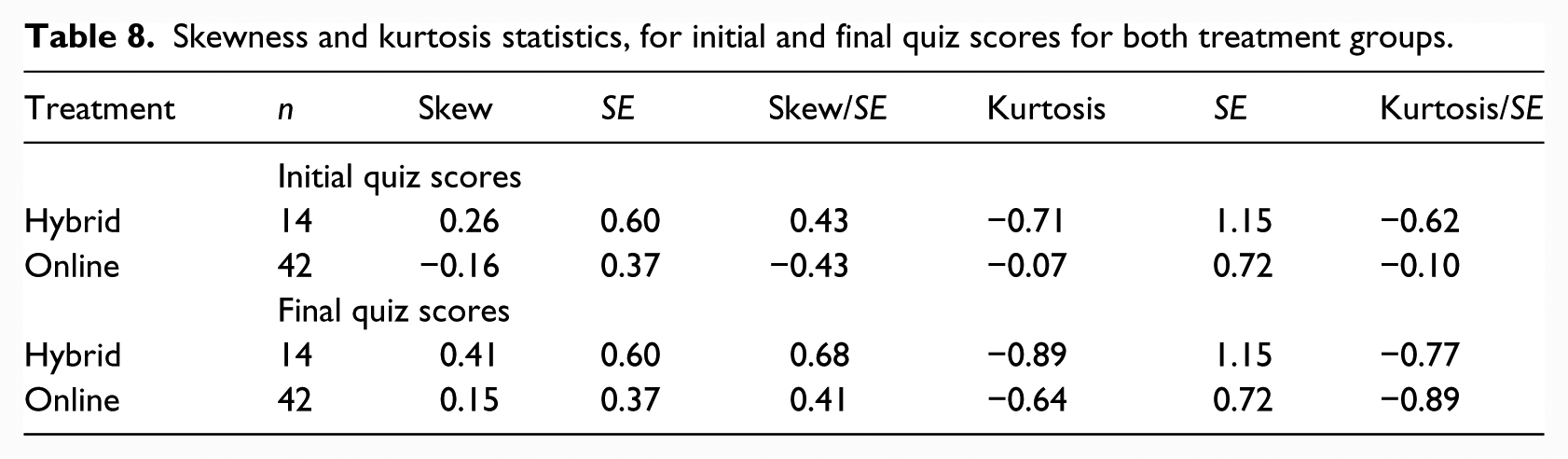

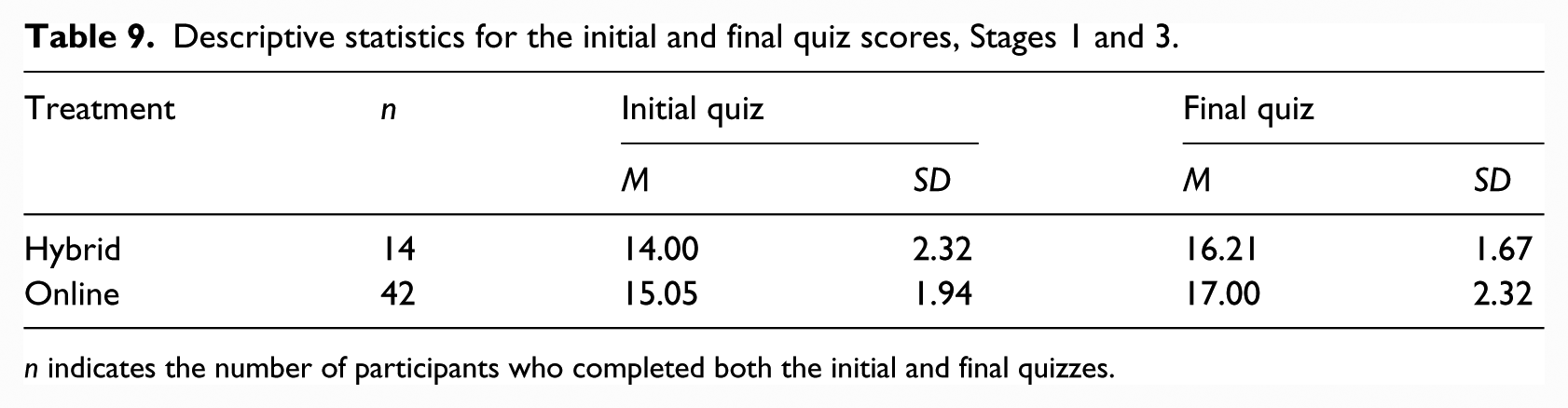

Because the aforementioned analysis indicated no significant differences in the gain scores between Stage 1 and Stage 3, these data were aggregated. A two-way mixed ANOVA was conducted to match the 2 (treatment: hybrid or online) × 2 (quiz scores: initial and final) design. When interpreting the three assumptions for inferential analysis, the researchers observed that participants in the Stage 1 sample were randomly assigned, and the distributions of the initial and final quiz scores for both treatment groups seemed to be approximately normal (see Table 8 for skew and kurtosis data). In addition, Box’s test indicated that the observed covariance matrices of the quiz scores between the two treatment groups were not significantly different (F(3, 9308.43) = 0.84, p = .47). The results of the ANOVA indicated that there was neither a main effect of treatment, F(1, 54) = 3.20, p = .08, partial η² = .06, nor an interaction effect of treatment and quiz scores, F(1, 54) = 0.11, p = .75, partial η² = .002 (see Table 9). There was, however, a main effect of quiz scores. Final quiz scores (M = 16.80, SD = 2.19) were significantly higher than initial quiz scores (M = 14.79, SD = 2.07), F(1, 54) = 27.02, p < .001, partial η² = .33. Thus, there was a significant increase in quiz scores for participants in the hybrid and online treatment groups across both Stages 1 and 3.
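The partial eta-squared values reported above can be recovered from the F statistics and their degrees of freedom through the standard identity: partial eta squared equals (F × df for the effect) divided by (F × df for the effect + df for error). The following Python sketch applies that identity to the three reported F values; the function name is introduced here for illustration and does not come from the authors' analysis.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Convert an F statistic into partial eta squared.

    Uses the identity: eta_p^2 = (F * df_effect) / (F * df_effect + df_error).
    """
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)


# F statistics reported for the 2 x 2 mixed ANOVA, each with df = (1, 54).
treatment_effect = partial_eta_squared(3.20, 1, 54)
interaction_effect = partial_eta_squared(0.11, 1, 54)
quiz_score_effect = partial_eta_squared(27.02, 1, 54)

print(round(treatment_effect, 2))
print(round(interaction_effect, 3))
print(round(quiz_score_effect, 2))
```

Under this identity, the three values round to .06, .002, and .33, which agrees with the effect sizes stated in the text.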

Skewness and kurtosis statistics for initial and final quiz scores for both treatment groups.

Descriptive statistics for the initial and final quiz scores, Stages 1 and 3.

n indicates the number of participants who completed both the initial and final quizzes.

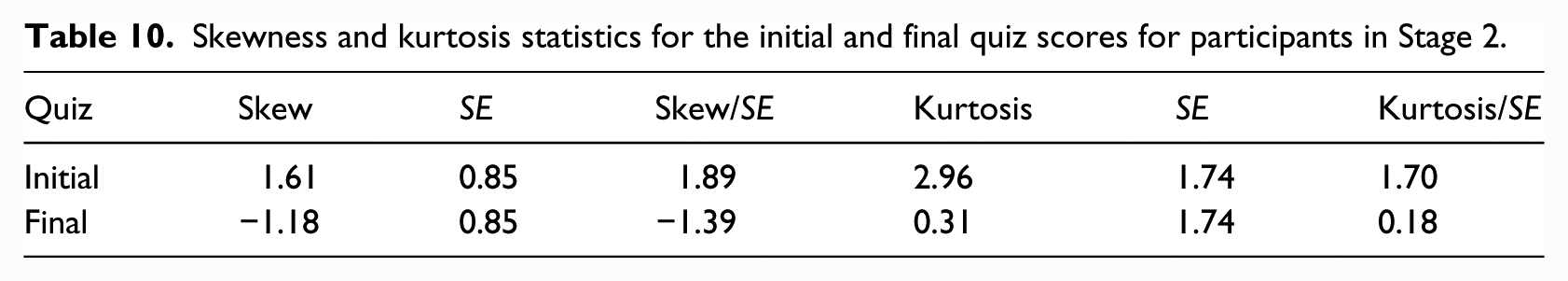

The analysis associated with Stage 2 involved a paired-samples t test to compare the initial quiz scores and final quiz scores. The quiz scores were measured on a ratio-level scale, but the sample was not randomly selected. In addition, distributions of the initial and final quiz scores seemed to be approximately normal (Table 10). Because only two of the three assumptions were met, these results should be interpreted with caution as they may not be generalizable. The results of the t test indicated that there was a significant increase between the initial quiz scores (M = 12.83, SD = 2.23) and the final quiz scores (M = 19.17, SD = 3.06), t(5) = 4.94, p = .004, d = 2.02.
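The reported effect size is consistent with the paired-samples form of Cohen's d, computed as t / √n, where n is the number of paired observations (here df + 1 = 6). The article does not state which formula was used, so the Python sketch below rests on that assumption; the function name is illustrative.

```python
import math


def cohens_d_paired(t_value: float, n_pairs: int) -> float:
    """Cohen's d for paired samples (d_z), recovered from t and the number of pairs.

    Assumption: the authors' d is the mean difference divided by the standard
    deviation of the differences, which equals t / sqrt(n).
    """
    return t_value / math.sqrt(n_pairs)


# Values as reported for Stage 2: t(5) = 4.94, so n = 5 + 1 = 6 pairs.
effect_size = cohens_d_paired(4.94, 6)
print(f"d = {effect_size:.2f}")
```

With six pairs, an effect size of this magnitude carries a wide margin of uncertainty, which is in keeping with the caution urged for the Stage 2 results.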

Skewness and kurtosis statistics for the initial and final quiz scores for participants in Stage 2.

Discussion

This research study found that (1) participants of all interventions expressed increased confidence in knowledge of the topics covered, (2) significant increases in confidence of knowledge were observed in the online and hybrid groups, (3) participants of all interventions demonstrated increased knowledge from the interventions, and (4) no significant differences in gains in knowledge occurred between participants associated with the online and hybrid interventions. What follows is an interpretation of findings in view of the literature.

Educating teachers about tenets of investments and retirement planning represents a desirable objective as indicated by patterns of teacher ignorance with regard to personal finances (Grimes et al., 2010). This need relates, in part, to (1) employer needs to educate employees, (2) recent changes in retirement programs, and (3) patterns of interest upon topic exposure (e.g. Lucey and Norton, 2011; Grimes et al., 2010; Mandell, 2008).

Additional studies need to interpret how learning conditions relate to these outcomes. Studies that employ larger, more diverse samples and interpret various forms of technology use could provide more insight into relationships between educators and technology-based learning about personal finance. While Taylor’s (1980) typology (tutor, tool, tutee) for computer use provides a broad framework for interpreting technology’s learning applications and their derivatives, cooperative site-based learning offers potential for enhancing online learning processes (Means et al., 2010).

Findings of no significant differences in knowledge gains between the online and hybrid groups suggest that additional work needs to consider the conditions for learning and how they relate to patterns of achievement. The hybrid group received six more hours of training than the online group; however, this additional training produced no significantly greater gains than those achieved by participants who completed only the online modules. Thus, while the hybrid experience reinforced the content provided in the online module, it did not offer opportunity for clarification of additional concerns. Future studies should consider how the nature of reinforcement learning experiences relates to the needs of participants. Participant buy-in with regard to the content and structure of learning opportunities may enhance learning outcomes (e.g. Vitt, 2014).

Findings of significant gains should be considered in view of the volunteer nature of research participants. Hensley (2013) documented successes of involving teachers in the processes of program development; however, study participants already possessed strong knowledge of topics associated with the program. Participant successes may relate to patterns of interest in the nature of the training. The subject study involved volunteer participants who were motivated to improve their knowledge of personal finance and investments. Studies that interpret the outcomes of these treatments on participants enrolled in the program for compulsory reasons may yield results that differ from those presented herein.

The site-based experience offers the potential to enhance learning through the online process. The matter of realizing this potential relates to how employers define and determine acceptable costs for such processes. While state and local governments are not profit motivated, they have a responsibility for financial accountability to their constituents while maintaining public education integrity. At the same time, maintaining a public service workforce of integrity necessitates employment settings that actively promote employee wellness, which includes financial security.

The small number of cases associated with this study prevents development of a fully informed model to explain the relationships between cost and efficiencies of employer-sponsored financial education programs. Further research that uses large study groups and draws upon a variety of curricular and instructional approaches would contribute to development of a comprehensive model.

Limitations

Our findings relate to the program participants only and are not generalizable to the teacher population in general. The reader should exercise caution when interpreting the findings of this report as many challenges were encountered in the processing of data that support the analysis. These difficulties related to data format, survey item sequence, response inaccuracies, and computer programming matters. It is possible that translation of data to a workable form may have produced some minor errors.

No efforts were made to ascertain the validity or reliability of instrumentation. Results of this project interpret participants’ knowledge of content provided in the programs; however, such knowledge may not extend to all areas of personal finance and investments. A challenge associated with programmatic training concerns the matter of whether participants are learning program content or learning the intended concepts. In addition, the phrasings or structures of survey and quiz items may affect patterns of participant responses. Lucey’s (2005) study, which interpreted the Jump$tart Survey of Financial Literacy, recommended stronger efforts to provide for valid measures of financial literacy. Such efforts are necessary for interpreting the knowledge gained in employer-sponsored financial education processes as well.

No efforts were made to explore patterns of statistical consistency between survey respondents and non-respondents. It is acknowledged that the study results may relate to a sample of volunteer school teachers concerned about learning information about investments and retirement. Findings may not be generalized to the school districts from which this sample derived or to the general population.

Another consistency concern relates to the comparison among participants in the three stages, as it could be argued that they derived from three different populations. It is acknowledged that participants derive from three different sources, though the participants in Stage 2 represent veteran teachers from the immediate area. At the same time, we note that literature consistently points to weak knowledge of personal finance among teachers (Garman, 1979; Grimes et al., 2010; McKenzie, 1971; McKinney et al., 1990). Given that the present study did not provide sufficient robustness for comparison of participants from different sites, we encourage future studies that use larger, more diverse samples.

It is recognized that the number of participants during the Stage 2 portion of the study was small in comparison to the numbers of participants in Stage 1 and Stage 3. This situation may relate to the nature of the recruiting process and the conditions for participation. Participants in Stages 1 and 3 were recruited through emails at their places of employment through cooperative processes with school and district administrators. Under these conditions, participants may have perceived completion of the online modules as processes to be completed within the contexts of their regular professional obligations. Recruitment of Stage 2 participants occurred through university communications during a summer session that involved coursework of a compressed nature (4 or 6 weeks) compared to spring and fall semesters (16 weeks). Without the professional environment to motivate and regulate their participation in the project, Stage 2 participants persisted in the program through individual means.

We acknowledge the existence of many different technology forms and processes that serve as vehicles for financial education. The current study described a comparison of interventions that used technology as a tutor, dispensing information to participants and testing their short-term retention of presented information. Studies that employ other forms of technology (e.g. simulation) as a tutor may yield different results. Different patterns of outcomes may also be realized by research studies that compare these interventions when using technology as a tool or as a tutee.

We wish to address the position that this study lacks a control group for analysis. We believe that the online-only group serves as a sufficient control to interpret the effects of reinforcing lectures about the content. We acknowledge that this research study involves a small sample and encourage further efforts to verify or refute our findings using larger and more diverse samples. In addition, nonparametric statistics (Wilcoxon signed-rank tests) were used when appropriate to account for the small sample sizes, nonrandom sampling methods, and skewness of the data distributions.

Finally, we acknowledge the perspective that state pension funds represent matters of public policy and thus do not fit within the scope of the information studied. We respectfully disagree with this position. Particularly in situations where employers provide choices between defined benefit and defined contribution plans, enrollees in pension programs have the right to understand the mechanics, risks, and rewards of these retirement funds. Without this information, participants lack the knowledge to make informed decisions about their retirement funding alternatives.

Conclusion

This project found that a computer-based modular intervention and a supplemental lecture-debriefing intervention had a significant positive effect on the retirement investment knowledge and confidence of public school educators who participated in the project.

Additional studies are needed to confirm these results using instruments of documented validity and reliability. In addition, future studies should interpret the longitudinal effects of the various treatments and the extent to which participants apply the learning in their financial practice.

While such efforts to extend the research may provide additional information about this employer-sponsored education endeavor, the input of prospective learners should not be ignored. According to Vitt (2014), “Workplace education requires educators to understand, develop, and incorporate sociocultural contexts into pedagogy in order for it to be effective” (p. 67). Valuing of employee contributions to the workplace represents an element of developing employee wellness. Financial educators should consider the value of soliciting teacher input to generate a sense of buy-in and ownership in the development of programs that fit their learning wants. Developing training opportunities that involve small groups that experience increased senses of personal worth may generate the significant gains that employees need.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research for this article was funded by a grant from the Investors’ Protection Trust.