Abstract

The ability to solve problems is a key skill and is essential to our day-to-day lives, at home, at school and at work. The present study explores the quality of managerial problem-solving of participants who are in secondary education. We studied 10th, 11th, and 12th graders following a business track in the Netherlands. Participants were asked to represent, diagnose and solve business cases. The transcripts (handwritten ‘case solutions’) of all 213 participants were analysed for managerial knowledge and problem-solving accuracy. Furthermore, we controlled the data for individual interest. The results demonstrate students’ progress in terms of the quality of problem solving from 10th grade until 12th grade. Several implications for education are discussed.

Introduction

In formal education, the importance of students’ proficiency in problem-solving is increasingly being recognized. ‘In modern societies, all of life is problem solving’ (OECD, 2014: 26). Brant and Wales (2009) argued that in order to succeed in life, students need a number of key skills of which problem solving ability is one. This recognition of the importance of developing problem-solving skills in formal education is apparent from the recent changes made to international tests as well as in national (Dutch) curricula. For example, the latest Programme for International Student Assessment (PISA) tests explicitly assess the problem-solving skills of 15-year-old students. On a national level, the introduction of a new national curriculum in the Netherlands for upper general secondary education (15–18 years) has switched focus from merely the acquisition of conceptual knowledge to the development of skills and, in particular, domain-specific problem-solving skills. This shift from knowledge-oriented to skills-oriented education is not limited to the Netherlands and has also been instituted in other Western countries such as England and the United States (e.g. Mercier and Higgins, 2013).

For a long time, problem-solving and its development have been the focus of attention in both academic domain learning and expertise development research. Therefore, these strands of research are highly relevant when studying the development of problem-solving skills in formal education. In the field of (professional) expertise research (Boshuizen et al., 2004) as well as in academic domain learning (Alexander and Murphy, 1998), it is generally acknowledged that experts outperform novices when it comes to the quality of solving problems. This is due to experts’ (1) well-organized knowledge, (2) problem analysis and problem representation, and (3) strong self-monitoring abilities (Chi et al., 1988).

In the conventional approach of expertise development research, the early phases of gaining expertise are studied in the setting of higher education by examining the aptitudes of a restricted pool of participants (i.e. students). Researchers have argued that only those who have the necessary aptitudes will be able to enter the specific domain of expertise (Hatano and Oura, 2003). However, various authors argue that the role of secondary education in expertise development should be examined. These authors (Alexander, 2005; Bereiter and Scardamalia, 1986) state that an aim of secondary education is to plant the seeds for expertise in any domain. This is roughly in line with Mehta et al. (2011) who found that secondary school students expect that education prepares them for their future professional lives. This entails helping students develop the types of knowledge representations, ways of thinking, and social practices that define successful learning in specific domains (Goldman et al., 1999). It implies supporting and stimulating students in the process of continually transforming the repertoire of knowledge, attitudes, and skills to become better problem solvers in a particular domain, according to expertise standards (Boshuizen et al., 2004).

Till now, these expectations regarding the contribution of secondary education have primarily been put forward on conceptual grounds. This article is concerned with providing empirical arguments to highlight the seeds of expertise that are planted during secondary education. Our purpose is to show to what extent secondary school students in three consecutive upper grades differ in their quality in problem solving. Students’ problem solving is researched in two steps. The first step is to test the assumption that students’ quality of problem solving develops along dimensions of expert problem solving in three consecutive upper grades of secondary education (10th, 11th and 12th grade). The second step is based on the argument that focusing solely on cognitive aspects in expert problem solving is incomplete, because it ignores the problem solver’s individual interest in the problem (Alexander, 2003; Mayer, 1998). Therefore, we address the question what the differences are in the quality of problem solving in terms of expertise characteristics between 10th, 11th and 12

In this study, we use a methodological approach that is common in expertise development research. We offered secondary school students expert-like domain-specific problems to trigger a level of expert-like behaviour. These problems can be characterized as ill-structured, complex and multi-disciplinary (Arts, 2007). Given that this type of problem is common in business, we selected the business track in secondary education as the setting for our study. In addition, we used a cross-sectional design to investigate the differences in problem solving among students from three consecutive grade levels.

Expertise development and the study’s hypotheses

Various authors (e.g. Bereiter and Scardamalia, 1993; Herling, 2000) claim that the key to expertise lies in an individual’s ability to solve problems. Expert professionals are constantly solving problems. The quality of problem solving itself manifests the degree of expertise. Moreover, problem solving is related to the domain-specificity of expertise, such as analysing a law case (Nievelstein et al., 2010), approving financial statements (Bouwman, 1984), or choosing appropriate statistical techniques (Alacaci, 2004).

Experts, however, do not become experts overnight. The development of professional expertise is a lengthy process starting in secondary education proceeding through higher education and continuing in the workplace (Boshuizen et al., 2004; Bryce and Blown, 2012; VanFossen and Miller, 1994). In secondary education, students are encouraged to acquire knowledge, practices, and ways of thinking that constitute a particular domain (Goldman et al., 1999). During this educational phase, a student acquires knowledge and skills and uses them to become a better domain-specific problem solver. The transformations in knowledge and skills are reflected within stages of proficiency at various points along the path to expertise (VanFossen and Miller, 1994).

In this respect, stage theories imply a developmental continuum from novice to expert and identify characteristics and development activities at each stage (Grenier and Kehrhahn, 2008). From an educational view on expertise, stage theory is often used (e.g. Arts et al., 2006a; Schmidt et al., 1990). This theory focuses on the development of and changes in the organization of domain-specific knowledge structures, distinguishing different developmental stages of expertise (i.e. novices, intermediates and experts). This perspective on expertise development gave researchers insight into transformations in domain-specific knowledge structures from beginner to expert level in a specific domain. Domain-specific knowledge covers knowledge types, such as conceptual knowledge and procedural knowledge related to a specific expertise area (Sternberg, 1999). In this article, we will mainly discuss the transformations of domain-specific knowledge structures during the educational phase of expertise development, the focus of our study.

Expertise research has shown the difficulty of making a domain-specific knowledge structure explicit. Therefore, research into these structures uses derived variables. The three most common are: (1) participants’ application of domain-specific concepts, (2) participants’ problem representation, and (3) inferences (Arts et al., 2006a; Rikers et al., 2000). The quality of domain-specific knowledge structures, operationalized in abovementioned variables, is a precondition for reaching a particular level of cognitive problem solving performance (Arts, 2007; Boshuizen, 2004). In expertise development research, this performance is often reflected in (4) the accuracy of diagnoses and (5) the accuracy of solution.

Many problems that people (expert or not) face require some sort of diagnosis of the situation based on conceptual knowledge to characterize the situation and to act adequately (Schmidt and Boshuizen, 1993). In expertise development research, the application of concepts is an indicator for the possession of conceptual knowledge. Schmidt and Boshuizen (1993) investigated the difference in application of biomedical concepts by asking second-year, fourth-year, and sixth-year medical students and experienced internists to explain the process that had caused the medical problem described in a clinical case. They found that the number of (biomedical) concepts applied by the students increased with increasing expertise levels. Custers and colleagues (Custers, 1995; Custers et al., 1999) found similar results in the field of medicine. In the field of business, Vaatstra (1996) and Arts et al. (2006a) found a significant increase in the number of domain-specific concepts from freshman year through graduation. Furthermore, Bryce and Blown (2012) found that expertise, such as extensive knowledge-skill in applying scientific language and recognizing relationships between concepts, demonstrated a rising vocabulary means (use of astronomical concepts) during the transition from primary to secondary school. These findings are consistent with Boshuizen’s (2004) perspective on novices’ learning, which she refers to as knowledge accretion that typically takes place during formal education.

Second, domain-specific knowledge structure denotes both the elements of what one knows in a domain (existence or absence of concepts), and how the elements are linked (Alacaci, 2004). The quality of problem representation, in terms of connectedness, plays an important role in explaining the differences between novices and experts as well. Arts (2007) defines problem representation as the way in which an individual has interpreted and processed problem information into a ‘mental model’ (p. 15). Various authors (e.g. Chi et al., 1988) stated that domain experts represent problems on a deeper level than novices. Experts represent problem information by focusing on the meaning of information, that is, information not mentioned specifically in the problem, rather than superficial and literal aspects as novices do. This is consistent with findings of Boshuizen (1989), Vaatstra (1996) and Arts et al. (2006a) of a significantly positive linear effect between problem representation and students’ first to fifth years of university. This indicates that the quality of problem representation improves in terms of deeper understanding of the problem, depending on the number of years of formal education or familiarity with the domain.

Third, by making inferences, concepts and case facts can be related (e.g. Alexander, 2003; Schmidt and Boshuizen, 1993), oftentimes operationalized through (causal) reasoning, which goes beyond the cognitive activities associated with the mere use of concepts. Arts et al. (2006b) distinguish between descriptive inferences and explanatory inferences. Descriptive statements are descriptions of facts (either literal or paraphrased) and thus do not provide explanations of situations. Such statements provide indications of the subjects’ degree and comprehension of domain-specific, declarative knowledge (Arts et al., 2006b). Explanatory inferences are defined as statements with a causal relation of the type: ‘If Y then X’, or ‘X is caused by Y’. These kinds of inferences can be typified as indicators of procedural knowledge. Arts et al. (2006a) found that causal statements, for example, ‘if…then’ inferences, are the core units that explain expertise in terms of (managerial) problem-solving performance. Arts et al. (2006a) showed that the ability to make explanatory inferences from relevant business data separates (management) novices from (management) experts. This finding was also reported by other investigators (e.g. Rikers et al., 2000; Van de Wiel et al., 1998). In sum, expertise development research (e.g. Arts, 2007; Schmidt et al., 1990) suggests that domain-specific knowledge structures play a role in the diagnostic and solution accuracy.

Diagnostic accuracy is operationalized by two indicators: diagnostic correctness and diagnostic completeness. Diagnostic correctness refers to whether an individual formulates correct diagnoses (e.g. Rikers et al., 2000). Diagnostic completeness refers to the level of detail in the elaboration of the correct diagnosis (Arts et al., 2006a). Results from several studies in various fields (e.g. Arts et al., 2006a; Boshuizen, 1989; Vaatstra, 1996) regarding the progress of diagnostic correctness are unambiguous. They found a significant linear component indicating that the production of correct diagnoses (in absolute numbers) has a monotonically increasing relation with years of formal schooling. To a lesser extent, expertise research (Arts et al., 2006a; Rikers et al., 2000; Van de Wiel et al., 1998) focused on the completeness of the diagnoses. Rikers et al. (2000) revealed that sixth-year students provided significantly more complete diagnoses than second-year students. Van de Wiel et al. (1998) found similar results between second-, fourth-, and sixth-year students. Interestingly, in Arts et al.’s (2006a) article, only the student groups produced incomplete diagnoses, while the experts produced solely complete diagnoses.

Solution accuracy is operationalized by three indicators: solution correctness, solution completeness, and evaluation of alternative solutions. Solution correctness indicates whether an individual formulates a correct solution (Anderson and Leinhardt, 2002). Solution completeness refers to the kind of detail in the elaboration of the correct solution (Arts et al., 2006a). Various authors (e.g. Anderson and Leinhardt, 2002) found steady growth in the number of correct solutions during formal education. Furthermore, the literature reveals that completeness of the solution has a monotonically increasing relationship with years of formal business education. Notably, after an initial increase in the number of incomplete solutions, a sharp decline occurs after the first year in business school (Arts et al., 2006a). Evaluation of alternative solutions is a complex judgmental process in which both the positive and negative aspects of alternative solutions are weighed and combined into an overall assessment of its goodness (Smith, 2003). Easton and Ormerod’s (2001) study revealed that experts (university teachers) evaluated roughly twice as many alternative solutions as novices (third year university marketing students) when analysing a case. Not only did the experts evaluate more, they did so with more and better evaluative criteria due to their conceptual depth. Bouwman (1984) compared decision making between accountancy novices and experts by having them evaluate a firm’s financial position. One finding was that the process of analysing the presented information and selecting only the data that promised to be particularly relevant for further evaluation was qualitatively assessed better by the experts in terms of ‘directed search’ (the case where an expert wants a specific item of information) and ‘the development of a feeling for the company’.

To summarize, the application of concepts, problem representation, inferences, diagnostic accuracy, and solution accuracy reflect the predominant cognitive character of expertise and its development.

This cognitive science perspective on expertise and its development, however, largely overlooks powerful motivational forces (Pintrich et al., 1993). ‘ […] Without understanding those motivational/affective dimensions, educators cannot explain why some individuals persist in their journey toward expertise, while others yield to unavoidable pressures’ (Bereiter and Scardamalia, 1993 cited by Alexander, 2003: 10). Interest as a motivational variable has been described by Mayer (1998) as a critical ‘prerequisite for successful problem-solving’ (p. 50). According to Alexander (2003) and Ericsson (1996), individual interest plays a role in advances towards becoming an expert. Renninger et al. (2002) and Alexander et al. (2004) found that the way problem solvers interpret the problem solving situation partly depends on their individual interest. Individual interest can be defined as a relatively stable affective and evaluative orientation toward certain domains (e.g. Hidi and Renninger, 2006). As individuals become more individually interested in a domain, they learn more and as they continue to learn, their interest increases. From this perspective, then, individual interest appears to play a very important role in learning and academic achievement.

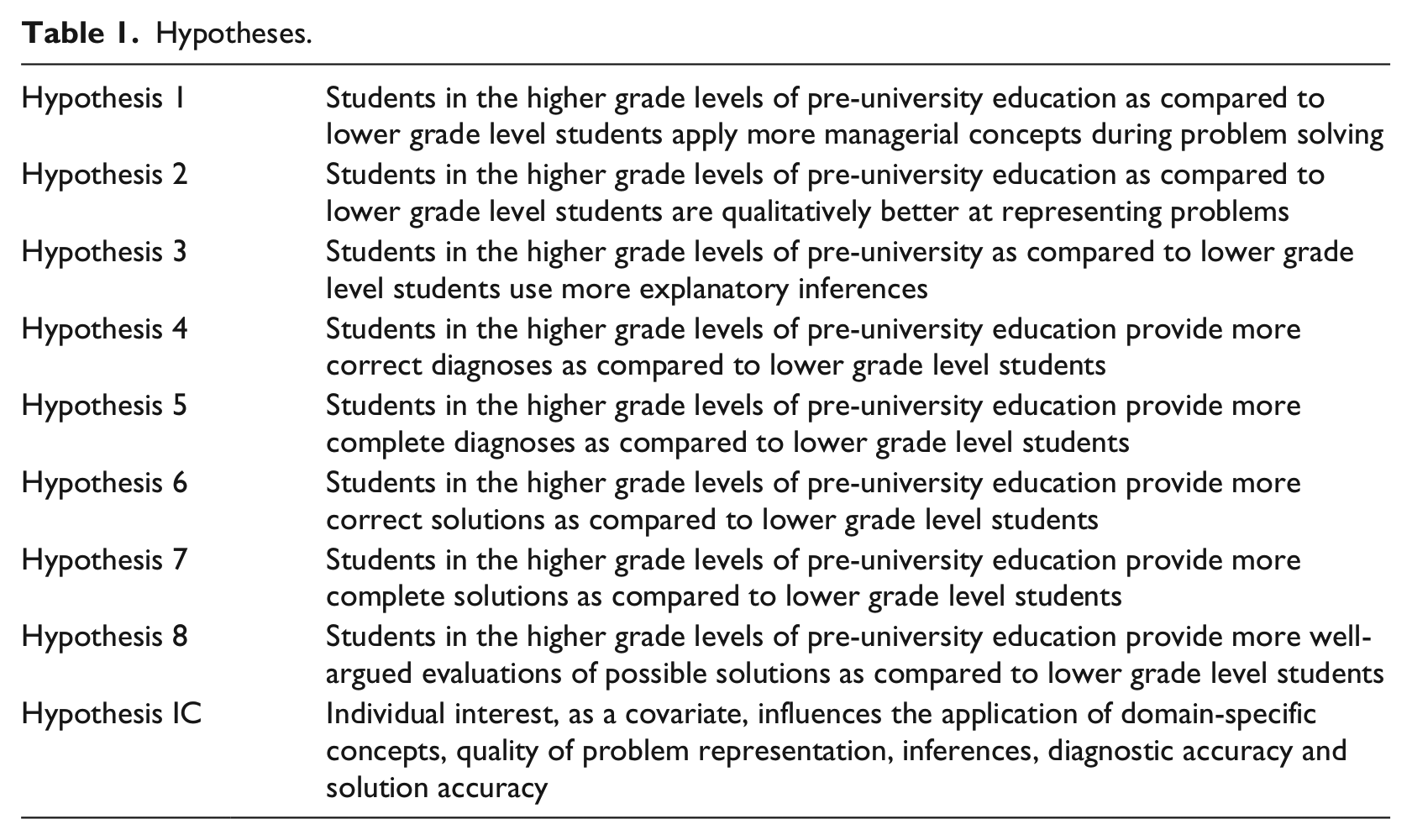

Based on this literature, the study identified five research questions with eight hypotheses listed in Table 1. It also explored a ninth hypothesis about individual interest as a covariate, listed as Hypothesis IC at the bottom of the table.

Table 1. Hypotheses.

Method

Participants and setting

Participants were 213 pre-university students following a business track from 7 secondary schools, 10 different classes, and 8 different teachers in the Netherlands. The sample included 111 (52%) girls and 102 (48%) boys. Furthermore, the sample comprised 64 10th graders (M = 15.48 years), 91 11th graders (M = 16.82 years) and 61 12th graders (M = 17.44 years). During these 3 years, students study business behaviour from various disciplines within business (e.g. organization, finance, marketing, financial policy, management information systems, and accounting) and from different perspectives of a range of stakeholders. The Dutch pedagogical system in these grades, introduced in 1998, is called ‘Study House’. The Study House implies that pupils learn in an active and autonomous way and encourages an independent attitude. All the schools in this research used a lesson method that supports the principles of the Study House.

Instruments

Business case

As a first step, we developed a business case (see Appendix 1) aligned to the typical characteristics of business problems (ill-structured, multidisciplinary and complex) with appropriate questions (Arts, 2007) that followed the objective according to the national curriculum for business education for pre-university students in the Netherlands: ‘by the end of the 12th grade, pre-university students following a business track are expected to analyse common problems within commercial and non-commercial organizations in the fields of organization, finance, marketing, financial policy, management information systems, and accounting’ (College voor Examens, 2013).

Three experienced teachers in business education (two with > 30 years and one with > 10 years of experience) verified whether students would be able to analyse and solve the problems presented in the case and whether the domain-specific jargon was understandable for these 10th, 11th, and 12th graders.

Individual interest

The measure of individual interest (nine items, α = 0.89) was derived from the ‘Hold Interest’ Scale (Harackiewicz et al., 2008). Sample items are as follows: ‘I find the content of this subject personally meaningful’ and ‘I see how I can apply what we are learning in Management & Organisation to real life’.

Procedure

Administration case

The students encountered a business case description. Each participant was asked to address four questions after reading the case description (see Appendix 1). There was no plenary discussion of the case, nor were students allowed to consult each other. Students were given 25 minutes to solve the case. They were informed that their responses were not limited in length and could be written in their own words. One week later, students filled out a questionnaire to measure individual interest.

Coding procedures

Various empirical expertise development studies in different fields (e.g. medicine: Schmidt et al., 1990; law: Nievelstein et al., 2010; geography: Anderson and Leinhardt, 2002; management studies: Arts et al., 2006a) have resulted in relevant insights into the operationalization of differences between the novice, intermediate, and expert level in terms of quality in problem solving. We analysed the 213 handwritten case protocols, as produced by the participants, by considering the (1) application of domain-specific concepts, (2) quality of the problem representation, (3) inferences, (4) diagnostic accuracy, and (5) solution accuracy.

1. Application of domain-specific concepts.

We counted the domain-specific concepts used by a student when used correctly and not literally mentioned in the case description. Therefore, we refer to the use of novel managerial concepts. Following Arts et al. (2006b: 395), we considered the use of novel concepts as ‘an indicator for the possession and use of theoretical discipline knowledge, in the sense of characterizing case information’. An example of a managerial concept as used by a participant: ‘the turnover outweighs the costs, so there is profit’. Profit is a novel managerial concept; turnover and costs were literally mentioned in the case description. Novel concepts were only counted upon first use.
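The counting rule described above can be illustrated with a small sketch. It is only an approximation under stated assumptions: the function name `count_novel_concepts`, the two concept lists and the whole-word matching are hypothetical and are not taken from the study. In the study itself, human raters judged whether a concept was used correctly, which simple string matching cannot do.

```python
import re


def count_novel_concepts(response, domain_concepts, case_concepts):
    """Count domain concepts in a response that the case text did not mention.

    Each concept is counted once, however often it recurs (first use only).
    Correctness of use is NOT checked here; that remains a rater judgement.
    """
    text = response.lower()
    mentioned_in_case = {c.lower() for c in case_concepts}
    novel = set()
    for concept in domain_concepts:
        term = concept.lower()
        if term in mentioned_in_case:
            continue  # literally given in the case description, so not novel
        if re.search(r"\b" + re.escape(term) + r"\b", text):
            novel.add(term)  # a set keeps repeated uses from being recounted
    return len(novel)


# Hypothetical concept lists; the response is the participant example quoted above.
domain = ["profit", "turnover", "costs", "liquidity"]
case = ["turnover", "costs"]
answer = "the turnover outweighs the costs, so there is profit"
print(count_novel_concepts(answer, domain, case))
```

Keeping the matched terms in a set mirrors the rule that novel concepts were only counted upon first use.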

2. Quality of problem representation.

Based on Vaatstra (1996), Smith (2003), and Arts et al. (2006a), we used four criteria which reflect the quality of problem representation: (1) student identifies that there is a problem, (2) student uses all relevant perspectives conferred by the balance sheet or the profit and loss statement, or both, (3) student (inter)relates the relevant perspectives using domain knowledge in a proper way, and (4) student is able to transform the numbers into ratios. These criteria were the basis for the development of a scoring model (see Appendix 2). The higher the level, the deeper the participant processed the problem.

3. Inferences.

The meaningful unit of analysis was identification of a correct cause and correct solution for each argument. We have scored the use of descriptive, explanatory or no inference. Descriptive inferences were literally derived or paraphrased from the case. An example of a descriptive inference as used by a participant is ‘the organization has high personnel costs, about 20% of the total cost’. Explanatory inferences were defined as statements with a causal or propositional relation; statements in which students connected facts with their prior knowledge and transformed them into a novel idea, that is, ‘If Y then X’, or ‘X is caused by Y’. An example, as used by a participant, is ‘The football club’s canteen personnel costs could be reduced further by asking parents or members of the football club to do voluntary work’. Lastly, a correct cause or solution (or both) offered without elaboration, like ‘raise prices in the canteen’, was not counted as an inference. Only the number of explanatory inferences was counted.

4. Diagnostic accuracy.

First, we counted the number of correct and incorrect causes produced by the participant (diagnostic correctness). Second, each correct cause was evaluated on its completeness. Based on Vaatstra (1996), Smith (2003), and Arts et al. (2006a), we used three criteria to judge the diagnostic completeness: (1) consistency, that is, to what extent the student mentions causes connected to problem identification and definition (problem representation), (2) to what extent the student names different types of causes (e.g. precipitating and underlying) and (3) to what extent the student specifically takes into account the context of the football club (Smith, 2003). The three mentioned criteria were the basis for the development of a scoring model (see Appendix 3). The total score for diagnostic completeness was the sum of the scores of completeness on each correct diagnosis.

5. Solution accuracy.

First, we counted the number of correct and incorrect solutions produced by the participant (solution correctness). Second, each correct solution was evaluated on its completeness. We used four criteria to judge complete solutions: (1) solution consistency, that is, to what extent the student mentions a solution which is in line with the problem representation, (2) to what extent the student indicates what further action should be undertaken for the mentioned solution, (3) to what extent the student states the solution’s effectiveness or its feasibility, or both, and (4) to what extent the student weighs the solution in the context of the football club (Smith, 2003). The four mentioned criteria were the basis for the development of a scoring model (see Appendix 4). The total score for solution completeness was the sum of the scores of completeness on each correct solution.

Based on Smith (2003) and Bouwman (1984), we used four criteria to judge the quality of the evaluation of alternative solutions: (1) weighing of alternative solutions, that is, to what extent the student is able to make a choice between the alternative solutions, (2) consistency, that is, to what extent the student describes the relationship between the chosen alternative(s) and the problem identification and definition, (3) justification of the chosen alternative(s), that is, to what extent the student can explain why she or he made the choice for a particular alternative, and (4) to what extent the student includes specific contextual considerations into his or her justification. The scoring model for the quality of evaluation of alternative solutions is presented in Appendix 5.

Data analysis

We compared the three grade levels by using analysis of variance (ANOVA). The Bonferroni or Games-Howell test at a 5% level was used to control for Type I errors across the three pairwise comparisons. We used analysis of covariance (ANCOVA) for testing the effect of individual interest as a covariate on the differences in quality of problem solving between 10th, 11th and 12th graders. After testing for homogeneity of regression (interaction between covariates and factors), we found that none of the interactions in the analyses was statistically significant. This means that we can assume homogeneity of regression slopes. Effect sizes of AN(C)OVAs were measured by partial eta squared with .01, .06 and .14 representing small, medium and large, respectively (based on Cohen, 1988). All analyses were done using SPSS (Version 22.0).
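As an illustration of the effect-size measure, the sketch below computes a one-way ANOVA by hand and shows how partial eta squared follows from the sums of squares, or equivalently from a reported F value and its degrees of freedom. It is a minimal sketch, not the SPSS procedure used in the study: the function names and the toy scores are assumptions made for illustration. Partial eta squared is SS_effect / (SS_effect + SS_error), which can be rewritten as (F × df_effect) / (F × df_effect + df_error).

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within, partial eta squared) for a one-way ANOVA."""
    all_scores = [x for g in groups for x in g]
    grand_mean = sum(all_scores) / len(all_scores)
    # Between-groups sum of squares: group means around the grand mean
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    # Within-groups (error) sum of squares: scores around their own group mean
    ss_within = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_value = (ss_between / df_between) / (ss_within / df_within)
    eta_p2 = ss_between / (ss_between + ss_within)
    return f_value, df_between, df_within, eta_p2


def partial_eta_squared_from_f(f_value, df_effect, df_error):
    """Recover partial eta squared from a reported F statistic."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Toy scores for three hypothetical grade-level groups (not study data)
toy = [[1, 2, 3], [2, 3, 4], [4, 5, 6]]
f_value, df1, df2, eta_p2 = one_way_anova(toy)
print(f_value, df1, df2, eta_p2)

# Applied to the one ANCOVA grade effect reported together with its effect size
print(round(partial_eta_squared_from_f(5.17, 2, 209), 3))
```

The second call uses the grade-level effect reported for the evaluation of alternative solutions, F(2, 209) = 5.17, and reproduces the reported value of .047, which falls between the small and medium benchmarks.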

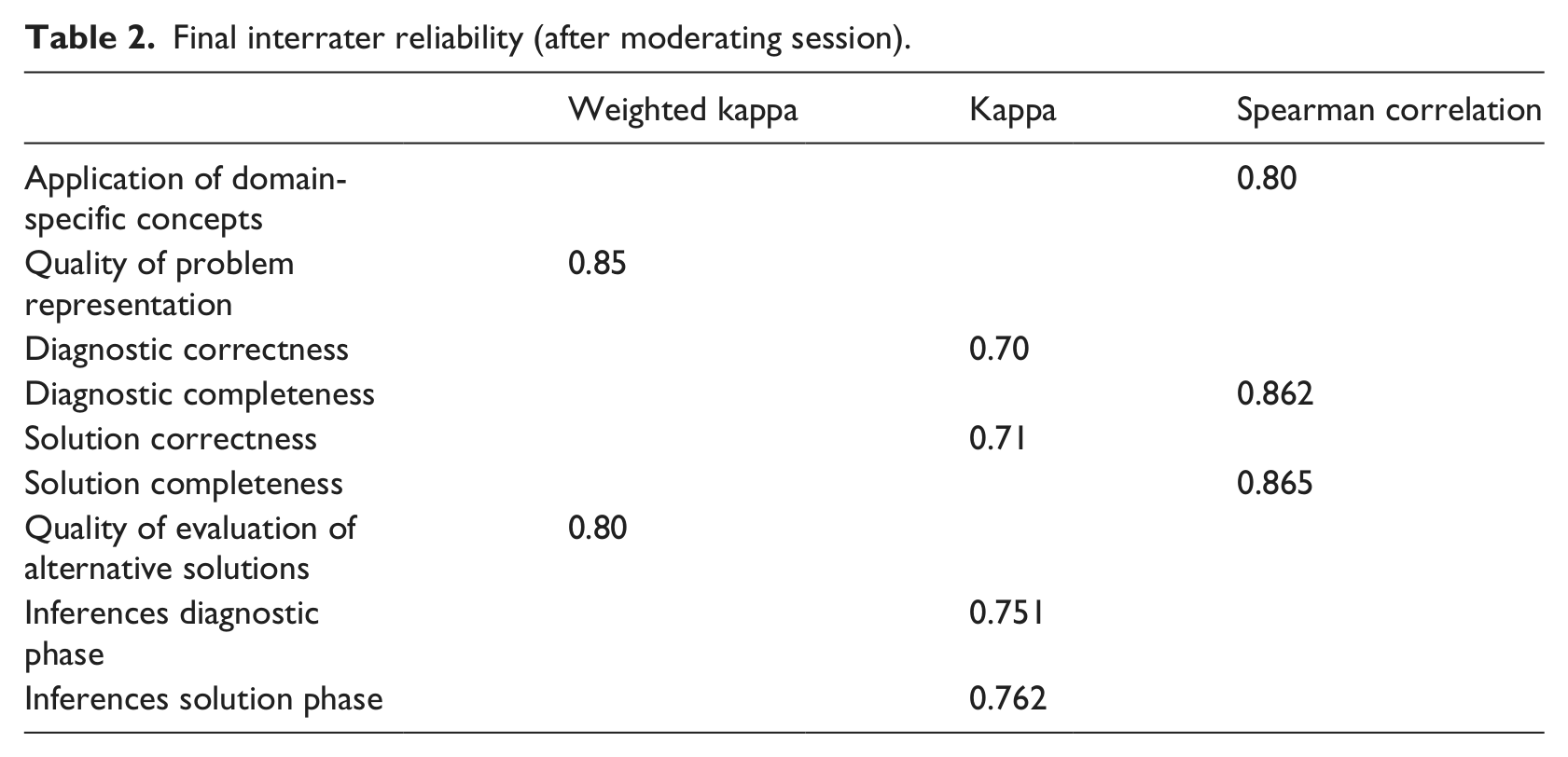

Interrater reliability

To determine the interrater reliability, two raters independently rated 26 protocols in two rounds. The first rater, experienced (> 10 years) in the field of business education and research, is a member of the research team. The second rater, not involved in the research project, is a very experienced (> 30 years) secondary school teacher in business as well as a university teacher in business. The second rater received training before rating the protocols.

After a first coding round (10 protocols), low interrater reliability scores were found for quality of problem representation (weighted kappa = 0.58), diagnostic correctness (kappa = 0.64) and solution correctness (kappa = 0.60). After this first round of rating, a ‘moderating’ session was held. The first and second rater discussed disagreements and made adjustments to the scoring model so as to prevent those disagreements in the future. Adjustments involved altering the wording that defined the dimensions and that distinguished between scoring levels within a dimension. Furthermore, based on protocols, we added one level to the quality of problem representation. The second round of coding (16 protocols) established the desired degree of interrater reliability (see Table 2). We used a weighted kappa for quasi-interval scales, kappa for ratio and dichotomous scales and Spearman correlation for ratio scales.
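To make the agreement statistics concrete, the following sketch computes Cohen's kappa and a weighted kappa for two raters. It is an illustration under assumptions: the article does not state which weighting scheme was used, so the linear weights here are an assumption, and the function name and ratings are hypothetical rather than taken from the protocols.

```python
def cohen_kappa(rater_a, rater_b, categories, weighted=False):
    """Cohen's kappa for two raters.

    With weighted=True, linear disagreement weights |i - j| / (k - 1) are
    applied (an assumed scheme), so near misses on an ordered scale are
    penalized less than distant ones.
    """
    n = len(rater_a)
    k = len(categories)
    index = {c: i for i, c in enumerate(categories)}

    # Observed joint proportions of (rater A category, rater B category)
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[index[a]][index[b]] += 1.0 / n

    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    def weight(i, j):
        if weighted:
            return abs(i - j) / (k - 1)
        return 0.0 if i == j else 1.0

    obs_dis = sum(weight(i, j) * observed[i][j] for i in range(k) for j in range(k))
    exp_dis = sum(weight(i, j) * row[i] * col[j] for i in range(k) for j in range(k))
    if exp_dis == 0:
        raise ValueError("kappa is undefined when both raters use a single category")
    return 1.0 - obs_dis / exp_dis


# Hypothetical level scores from two raters on a three-level ordered scale
levels = [1, 2, 3]
first_rater = [1, 2, 3, 3]
second_rater = [1, 2, 3, 2]
print(cohen_kappa(first_rater, second_rater, levels))
print(cohen_kappa(first_rater, second_rater, levels, weighted=True))
```

In this toy example the only disagreement is between adjacent levels, so the weighted coefficient is higher than the unweighted one. This is the reason a weighted kappa suits ordered, quasi-interval scoring levels.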

Table 2. Final interrater reliability (after moderating session).

Results

Application of domain-specific concepts

In line with our first hypothesis, the results showed that the differences in the application of domain-specific concepts among 10th (M = 2.18, SD = 1.70), 11th (M = 3.27, SD = 2.39) and 12th grade students (M = 3.78, SD = 2.50) were statistically significant, F(2, 210) = 8.52, MSE = 5.071, p < .001,

Quality of problem representation

Likewise, as expected in our second hypothesis, the difference in quality of problem representation among 10th graders (M = 3.98, SD = 1.48), 11th graders (M = 5.04, SD = 1.94) and 12th graders (M = 4.44, SD = 2.50) was statistically significant, F(2, 210) = 6.46, MSE = 3.117, p < .01,

The following two statements illustrate students’ responses concerning the quality of problem representation:

The club is in negative equity. (Girl, grade 10; level 2)

The association has more expenses than income. It has a € 13796 deficit. You can find this deficit in the balance sheet, namely in the negative equity. The membership fees are not covering the personnel and housing costs. Also, the association has a lot of loan capital. The canteen’s earnings are relatively low considering the costs and turnover. So are the entrance fees if you compare them to the accommodation and match costs. (Girl, grade 11; level 5)

However, despite the fact that 11th graders scored higher than 12th graders, no significant differences were found between these two groups, nor between 10th and 12th graders. An ANCOVA indicated main effects of grade level, F(2, 209) = 4.613, p = .011, MSE = 3.112,

Inferences

We hypothesized that with increasing grade levels, the use of explanatory inferences increases. As expected in our third hypothesis, the difference in the use of explanatory inferences among 10th (M = 1.11, SD = 1.33), 11th (M = 2.00, SD = 1.73) and 12th graders (M = 1.50, SD = 1.64) was statistically significant, F(2, 210) = 5.59, MSE = 2.558, p < .01,

The post hoc test revealed that the use of explanatory inferences by 11th graders was significantly higher than by 10th graders. However, no significant differences were found between 11th and 12th and 10th and 12th graders. An ANCOVA revealed main effects of grade level, F(2, 209) = 3.661, p = .027, MSE = 2.544,

Diagnostic accuracy

The results showed that the differences in diagnostic correctness between 10th (M = 1.56, SD = 1.35), 11th (M = 2.30, SD = 1.54) and 12th grade students (M = 2.23, SD = 1.70) were statistically significant, F(2, 210) = 4.64, MSE = 2.385, p = .011,

In line with Hypothesis 4, the results showed that the difference in diagnostic completeness among 10th (M = 2.23, SD = 2.17), 11th (M = 3.48, SD = 2.48) and 12th grade students (M = 3.26, SD = 2.62) was statistically significant, F(2, 210) = 5.10, MSE = 5.956, p = .007,

In general, 10th graders only mention or identify a cause (which results in an answer at level 1, see Appendix 3), whereas on the whole 11th and 12th graders add a correct elaboration/explanation to the cause (which results in an answer at level 2 or higher, see Appendix 3). The following two examples are illustrative:

The membership fees are too low. (Boy, grade 10; level 1)

The cost price of the canteen’s products is too high. As a result, the net profit markups are small. (Girl, grade 11; level 2)

However, post hoc analysis did not confirm these differences between 11th and 12th graders. The ANCOVA results confirmed the main effect of grade level, F(2, 209) = 3.254, p = .041, MSE = 5.759,

Solution accuracy

We hypothesized that with increasing grade level, the number of correct solutions produced increases (Hypothesis 6). The results showed that the difference in solution correctness among 10th (M = 1.55, SD = 1.50), 11th (M = 2.83, SD = 1.77) and 12th grade students (M = 2.46, SD = 1.68) was statistically significant, F(2, 210) = 10.72, MSE = 2.790, p < .001.

In line with Hypothesis 6, the results showed that the difference in solution completeness among 10th (M = 2.63, SD = 2.81), 11th (M = 4.71, SD = 3.09) and 12th grade students (M = 4.05, SD = 3.13) was statistically significant, F(2, 210) = 10.72, MSE = 2.790, p < .001.

Raise the membership fees by 20–30%. This will raise the proceeds. Members will stay with the club because it is probably in their area and they have friends at the club. (Girl, age 18, grade 12; level 3)

Increase the amount of the membership fee. (Girl, grade 10; level 1)

However, post hoc analysis did not confirm these differences between 11th and 12th graders. The ANCOVA results confirmed the main effect of grade level, F(2, 209) = 5.36, p = .005, MSE = 8.822.

Likewise, as expected in Hypothesis 8, the results showed that the difference in the quality of the evaluation of alternative solutions among 10th (M = 1.77, SD = 1.29), 11th (M = 2.37, SD = 1.50) and 12th grade students (M = 2.63, SD = 1.73) was statistically significant, F(2, 210) = 5.43, MSE = 2.305, p = .005.

Attract more members, as this will result in more income from membership fees as well as more sales in the canteen. (Boy, age 16, grade 10; level 1)

I would focus on the canteen because I think a lot more profit could be made there. Reduce staff, lower costs, and the profits will start to go up. Additionally, I would also organise more events and on the days that the fields are not being used, use them for other events. And in that way generate more profit. (Girl, age 18, grade 11; level 4)

However, post hoc analysis did not confirm these differences between 11th and 12th graders. An ANCOVA revealed a main effect of grade level, F(2, 209) = 5.17, p = .006, MSE = 2.312, ηp² = .047, but a non-significant effect of individual interest as a covariate, F(1, 209) = .331, p = .566. Individual interest was not significantly related to the quality of the evaluation of alternative solutions, which does not support Hypothesis IC.
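As an illustrative check, and not part of the original analysis, the partial eta squared of .047 reported for the evaluation of alternative solutions can be recovered from the F statistic and its degrees of freedom, using the standard identity that partial eta squared equals (F × df_effect) / (F × df_effect + df_error). The function name below is hypothetical, and the sketch assumes the reported F(2, 209) = 5.17 is the grade-level effect from the ANCOVA.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom.

    Uses the identity eta_p^2 = (F * df_effect) / (F * df_effect + df_error),
    which follows from F = (SS_effect / df_effect) / (SS_error / df_error).
    """
    scaled_effect = f_value * df_effect  # equals SS_effect / MS_error
    return scaled_effect / (scaled_effect + df_error)


# Grade-level effect on the evaluation of alternative solutions: F(2, 209) = 5.17
eta = partial_eta_squared(5.17, 2, 209)
print(round(eta, 3))  # 0.047
```

By common rules of thumb, a value of this size is usually read as a small-to-medium effect, although such benchmarks should be interpreted with caution.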

Discussion and conclusion

The purpose of this study was to investigate whether the quality of students’ problem solving differs in terms of application of domain-specific concepts, problem representation, inferences, diagnostic accuracy and solution accuracy in three consecutive upper grades of secondary education (15–18 years). Based on the argument that expert problem solving is influenced by motivational aspects (Alexander, 2003; Hatano and Oura, 2003; Mayer, 1998), we incorporated the problem solver’s individual interest in the domain of business.

Our findings support the role of grade level in expertise, measured as the quality of problem solving, and thereby support our hypotheses. These findings are consistent with the existing literature: grade level is positively related to the application of domain-specific concepts (e.g. Bryce and Blown, 2012), to the quality of problem representation (e.g. Arts et al., 2006a), to the use of explanatory inferences (e.g. Rikers et al., 2000), to diagnostic accuracy (e.g. Arts et al., 2006a; Vaatstra, 1996) and to solution accuracy (e.g. Anderson and Leinhardt, 2002; Arts et al., 2006a; Easton and Ormerod, 2001). Put generally, during the course of secondary education students develop in terms of professional expertise. However, the post hoc tests revealed few significant differences in the quality of problem solving between Year 11 and Year 12. Similarly, Arts et al. (2006a) found that during the transition to the workplace stage (after graduation), graduates end up in a ‘confusion’ phase followed by a ‘consolidation’ phase. During this ‘consolidation’ phase, little significant progress in expertise occurs. A possible explanation is the ‘experiential shock’ graduates experience, as the workplace requires different thinking and different knowledge than the problems encountered during the educational period. For secondary education, this explanation does not apply, because students do not yet enter the workplace. We will discuss three possible explanations for the ‘consolidation’ phase between Year 11 and Year 12.

First, the Dutch business curriculum has been frequently criticised for being overloaded (Voorend and Gijssen, 2001; Welp, 2007), covering a broad span of factual material at the expense of deep understanding (Alexander, 2005). Overloaded curricula might result in students focusing on rote memorization of facts and concepts, rather than on developing a well-structured knowledge base and problem-solving skills.

Second, at the end of Year 12, nationwide leaving exams are held in the Netherlands. These nationwide exams can be characterized as large-scale standardized exams (Scheerens et al., 2012). Standardized tests may result in teachers concentrating on the skills tested, which tend to be fairly basic, whereas more complex skills such as problem-solving are rarely assessed (Mons, 2009). Furthermore, these exams can be considered high-stakes, because their results are used to make major decisions about a student, such as high school graduation and entrance to higher education. In this respect, many scholars in the domain of assessment refer to the negative backwash effects (e.g. Biggs, 1995) or pre-assessment effects (e.g. Segers et al., 2006, 2008) of large-scale tests. Earlier studies have provided evidence of negative effects of tests on student learning. Students’ learning is influenced by their perceptions of the demands of an assessment task in terms of the content (knowledge and skills) that will be assessed. Students focus on the specific content they expect to be tested (Segers et al., 2006). They get to know the focus of the tests from information provided by teachers and peers, as well as from the test preparation books and programmes that are widely available to students (Segers et al., 2006). Given that problem-solving skills are not the focus of the Dutch national tests (Nusche et al., 2014), it can be expected that, especially in the final year of secondary education, when the nationwide tests are administered in May, students focus on what they interpret as being relevant for passing the tests. In this respect, pivotal scholars in the field of educational psychology (Linn et al., 1991; Messick, 1989) have discussed the low consequential validity of nationwide large-scale tests, referring to the negative backwash effect of these tests on learning and other educational matters.

Third, the national exams also have backwash effects for teachers, as the exams are high-stakes for them too. After all, the results of these exams are ‘publicly available accountability information’ (Scheerens et al., 2012). In this respect, Cunningham and Sanzo (2002) have shown that high-stakes testing impacts negatively on creative and effective teachers, leading to cramming for tests rather than instruction. Moreover, schools are nationally ranked based on, among other things, the results of the nationwide leaving exams. Backwash effects of the national exams for teachers, in combination with the overloaded curriculum, might promote ‘teaching to the test’ behaviour, that is, teaching focused on preparing students for a standardized test that hardly measures problem-solving.

Considering the target group as well as recommendations from earlier expertise development literature, we examined whether individual interest, as a covariate expressed in Hypothesis IC, affects the quality of problem solving. We found a positive association between individual interest and the application of domain-specific concepts, diagnostic accuracy and solution accuracy (with the exception of the quality of the evaluation of alternative solutions). These ANCOVA results largely confirm that, besides experience, motivational variables, such as interest, help determine problem-solving performance. This offers a different perspective on expertise indicators to that of traditional research. Traditional expertise research takes the role of individual differences, such as motivation and interest, into account only to a minor extent. Instead, it focuses on experience as an indicator. We agree with Bradley et al. (2006) that experience alone is not an acceptable indicator of expertise. In line with their work, our results indicate that other factors, more precisely interest as a motivational variable, must also be present to develop expertise. The ANCOVA results support the assumption that expertise can be accounted for by experience only in part, not fully. When adding individual interest as a covariate, the influence of grade level on diagnostic correctness became non-significant.

A final interesting finding in this study was the rising standard deviations concerning the majority of the expertise variables. This might indicate that education does not homogenize, but rather leaves room for differences between students. Some students progress faster in their expertise development than others. This might be due to various factors such as differences in intellectual capabilities, readiness to learn, or in perceptions of the learning and assessment environment.

The goal of secondary school learning environments is not to make experts of secondary school students but to help students develop the types of knowledge representations, ways of thinking, and social practices that define successful learning in specific domains (Goldman et al., 1999; Hatano and Oura, 2003) and thus lay the foundations for the development of expertise, or, as Tynjälä et al. (1997) argued, ‘Education as an institution and educational practices have an important role in creating (or inhibiting) the preconditions for expertise’ (p. 479).

Our results show that secondary education contributes to students’ expertise development. The power of secondary education in laying the foundations for students’ expertise development can be increased by supporting (prospective) teachers in their understanding of what expertise development is about and of the role of secondary education. Moreover, our results indicate that students’ individual interest positively impacts their problem solving. By enhancing students’ interest in the subject, teachers can increase the quality of students’ problem solving and thereby create the preconditions for expertise. In this respect, research on students’ interest (e.g. Bergin, 1999; Renninger, 2000) is a relevant source of information for (prospective) teachers.

Various authors suggest that deep processing of the problem representation can improve the quality of all aspects of the problem-solving process (e.g. Arts et al., 2006a). In the business case, we presented two domain-specific tools (a balance sheet and a profit and loss account) that play an important role in representing the problem underlying this case. It turns out that students have difficulty integrating these two domain-specific tools with one another. Although the national exams require students to master these tools, in practice this is far from cut and dried. This seems to be in line with the findings of Postigo and Pozo (2004), who have shown that secondary school students have difficulty processing conceptual and implicit information contained in domain-specific tools (maps and graphs) in Geography coursework. It might be that teachers pay too little attention in class to processing implicit and conceptual information embedded in tools, or that teachers underestimate the difficulty their students encounter in processing such information. This implies that instruction facilitating students’ interpretation of external sources, such as profit and loss accounts and balance sheets, should focus on processing implicit and conceptual information. In this way, students are supported in unpacking the meaning of the language of these sources and in readily employing it as they solve problems (Lebeau, 1998).

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.