Abstract

The digital environment alters the way organizations use propaganda and facilitates its spread. This development calls for an outline of the features of propaganda by organizations on the Internet and for a reconsideration of where public relations (PR) stops and propaganda begins. By means of a systematic review of primary research on organizational propaganda online, we propose a definition and describe the ‘five Ws’ of digital organizational propaganda: who employs propaganda, to whom it is directed, on which channels it takes place and which media are used (where), what the objectives of the propaganda strategy are (why), and in which contexts it occurs (when). In contrast to the offline setting, organizations engaging in propaganda online do not hide their identity and primarily address (potential) followers with the goal of changing attitudes. Based on our findings, we propose a classification of digital organizational propaganda along three dimensions: ethical versus unethical, mutual understanding versus persuasion, and direct versus indirect communication. Digital organizational propaganda is defined as the direct persuasive communicative acts by organizations with an unethical (i.e. untruthful, inauthentic, disrespectful, or unequal) intent through digital channels. Thus, this study addresses the imbalance between the growing primary research on digital propaganda on the one hand and the missing definition and lacking systematic empirical overview of propaganda’s digital characteristics on the other.

Introduction

Given its twofold advantage of wide reach at low cost, the Internet is used intensely by an array of organizations to spread their ideas publicly (Goel, 2011). Political parties and movements in Western democracies (Caiani and Parenti, 2009; Klinger, 2013), corporations in China (He and Tian, 2008), and extremist organizations (Cooley et al., 2016) use these properties of the Internet to propagate their ideas to the public (Caiani and Parenti, 2009). On the one hand, digital channels allow for spreading propaganda regarding elections; on the other hand, they facilitate voter mobilization and dialogue (Klinger, 2013). Likewise, social media aid extremist groups (United Nations, 2015) to reach potential followers, but at the same time the virtual space also opens up the opportunity to discuss and scrutinize these efforts (Cooley et al., 2016). This puts propaganda by organizations high on the public agenda (Chang and Lin, 2014).

As Bernays (1928) famously wrote: ‘those who manipulate this unseen mechanism of society constitute an invisible government which is the true ruling power’ (p. 9). The discipline of public relations (PR) has developed a fairly good understanding of how repressive regimes employed propaganda to influence public opinion (L’Etang, 2006). Historically, propaganda and PR started off as one and the same (Olasky, 1984); it was with the study of totalitarian propaganda by fascist regimes before and during World War II that propaganda became the negatively connoted term (Moloney, 2006), while PR was perceived as good (Weaver et al., 2006). From then on, propaganda has primarily been associated with unethical communication practices by totalitarian regimes (Jowett and O’Donnell, 2014), while PR has been described as ethical communication by organizations (e.g. Grunig and Hunt, 1984). However, organizations also engage in propaganda to pursue their goals: pharmaceutical companies, for instance, publish ghost-written articles in medical journals that support the findings of clinical trials for their drugs (Jowett and O’Donnell, 2014). Insights into how organizational propaganda evolves in the ‘digital age’ (Schmidt and Cohen, 2013) are even scarcer. Thus, this study focuses on propaganda by organizations on the Internet.

With technological advancement, the nature of propaganda has changed significantly from one-way communication through mass media channels directed at a passive audience to propaganda in a digital environment that allows for two-way communication without gatekeepers (Chang and Lin, 2014). ‘[W]hile the Internet is used for the empowerment of grassroots and protest movements, it is also increasingly being shaped and controlled by corporations, and used by political parties, reactionary forces, and even criminal groups for social control’ (Pedro, 2011: 1911). The Internet’s ubiquity, ease of access and spread, low costs, and organizing function (Goel, 2011) make it a primary propaganda channel. These properties make it harder for organizations and their audiences to recognize where PR stops and propaganda begins. While the body of primary research on digital propagandistic communication is growing and conceptual papers on (nondigital) propaganda mark a good point of departure (e.g. Arnold, 2003), a clear definition of the concept and a systematic empirical overview of the phenomenon’s characteristics in the digital environment are missing.

This study sets out to investigate the impact of the digital environment on the use of propaganda by organizations, to delineate its characteristics, and to demarcate this new form of propaganda from ethical communication in PR by asking the following question: How is propaganda by organizations characterized on the Internet?

The study of propaganda does not know disciplinary boundaries: it has been researched in media studies (e.g. Herman and Chomsky, 1988), communication (e.g. Smith et al., 2015 [1946]), and political science (e.g. Eldersveld, 1956), but also in sociology (e.g. Ellul, 1965) and psychology (Silverstein, 1987). We conduct a systematic literature review of empirical social science research on propaganda to answer the five Ws of digital organizational propaganda, which correspond to journalistic standards of inquiry (Lasswell, 1948): (1) who employs techniques of propaganda, (2) to whom they are directed, (3) on which channels propaganda takes place and which types of media are used (where), (4) what the objectives of the propaganda strategy are (why), and (5) in which contexts it occurs (when). This study contributes to the field of PR by describing and defining organizational propaganda and by shedding light on how companies use it in the digital environment through a multidisciplinary and multilevel overview rather than focusing on single instances of digital propaganda. Thus, past propaganda theory is discussed against the affordances of the digital age in an effort to ‘tackle the relationship [of propaganda and PR] at all levels (state, government, organisation, group, individual, text, discourse)’ (L’Etang, 2006: 39) in order to clarify to what extent such tactics contribute to, or jeopardize, a ‘fully functioning society’ (Heath, 2006). Accordingly, this systematic review provides organizations, audiences, and researchers with standards to detect digital organizational propaganda.

PR and propaganda in the communication of organizations

Given the extensive track record of propaganda research in the social sciences since the 1920s and its peak after World War II, propaganda definitions proliferated (Jowett and O’Donnell, 2014). Like PR (Edwards, 2012), propaganda remains frequently, but poorly, defined (Baines and O’Shaughnessy, 2014). Most of the definitions assert that propagandists aim to control the flow of information, deceive recipients, spread untruthful information (Messina, 2007), and intentionally misinform the public to benefit the sender over all others (Fallis, 2015). Propaganda has been described as a tool to spread ideology (Collison, 2003), but first and foremost propagandists aim at changing attitudes and behaviors, while PR is likewise often persuasive and, in its mainstream understanding, focused on maintaining relationships, no matter the theoretical viewpoint (Cutlip et al., 1994; Grunig and Hunt, 1984; Heath et al., 2009). Jowett and O’Donnell (2014), on the contrary, define propaganda as ‘the deliberate and systematic attempt to shape perceptions, manipulate cognitions, and direct behaviour to achieve a response that furthers the desired intent of the propagandist’ (p. 6). This definition does not detail the propaganda source, which can be individuals, governments, or other organizations, such as corporations. The focus of this study is on organizations as sources of propaganda.

Corporate propaganda has not yet attracted much research attention; an exception is Beder’s (2005) study of propaganda efforts by US–American businesses to spread capitalist ideology (more examples, e.g., in Sussman, 2011). A reason for this paucity of research is that propaganda has often bluntly been equated with PR, or PR has been described as ‘weak propaganda’ (Moloney, 2004: 90). This originated with Bernays’s (1928) famous propaganda book, in which he calls PR counsels propagandists. However, PR has since then developed into a discipline that is different from Bernays’s propaganda idea of manipulating public opinion. Moreover, the distinction of PR as ‘good’ versus propaganda as ‘bad’ does not hold (Weaver et al., 2006), as some consider propaganda part of PR’s toolbox (Messina, 2007).

PR includes dialogue and interaction with the goal of mutual understanding and adjustment between organizations and audiences (Hiebert, 2003); theoretically, this can be rooted in deliberation and communicative action theories (Habermas, 1984), where all parties in a discourse strive for understanding based on the normative demands of accountability, transparency, and participation (Lock et al., 2016). Others root PR in dialogue theory, arguing that viewing PR as oriented toward dialogue, that is, ‘dedicated on truth and mutual understanding’, is closer to the reality of PR (Taylor and Kent, 2014: 389). At the same time, PR applies persuasive communication (e.g. Cutlip et al., 1994), a form of communication that aims to influence recipients on a voluntary basis; persuasive communication has its roots in rhetoric and ancient Greek philosophy starting from Aristotle (Jowett and O’Donnell, 2014). Yet, whether persuasion or understanding is or should be the dominant objective of PR strategies is not the question here; rather, we point out that practitioners employ PR tools that range from persuasion to mutual understanding (Messina, 2007).

Inherent to propaganda is the persuasive attempt to alter attitudes and behaviors by limiting freedom and generating obedience (Taylor and Kent, 2014). Thus, we view propaganda as a subcategory of persuasive communication (Jowett and O’Donnell, 2014). The distinction of PR and propaganda becomes evident when looking at the moral dimension of this intent. Persuasion can be performed ethically such as in brand communication, as well as unethically as in propaganda. In fact, propaganda can best be regarded as one of the many tools that a PR practitioner has at hand (Cornelissen, 2014). Thus, demarcating the two is not ‘a play with semantics’ (Puchan, 2006: 113), but a necessary distinction within the field. Propaganda is a specialist form of unethical persuasive communication (Jowett and O’Donnell, 2014) and, when applied by organizations, a tool of PR.

Propaganda by organizations therefore has to be distinguished from ethical persuasion that covers a substantial part of the PR toolbox (Messina, 2007). Some authors (Weaver et al., 2006) contend that propaganda is not necessarily unethical, but depends on the context, ends, and transparency. Others claim that if communication is biased, intends to influence, applies high-pressure advocacy, simplifies and exaggerates, is ideological, avoids argumentative exchange, and does not take into account others’ views, the characteristics for propaganda are fulfilled (O’Shaughnessy, 1996). According to a utilitarian notion, persuasion is ethical if it ‘allows the audience to make voluntary, informed, rational and reflective judgements’ (Messina, 2007: 33) and is thus based on truthfulness, authenticity, respect, and equity. These principles are violated in propaganda, because propaganda builds on untruthful information, may conceal source and intent, is deceptive, and not dialogic. Thus, we can place propaganda at the unethical end of a spectrum from ethical to unethical (Taylor and Kent, 2014) and on the persuasive end of an axis from persuasion to understanding. Distinguishing propaganda as an unethical form of persuasion within the PR toolbox is crucial because trust in organizations is at an all-time low (Auger, 2014). With the rise of propaganda due to digital channels and media (Chang and Lin, 2014), distinguishing PR and propaganda becomes even more important to overcome skepticism regarding the communication of organizations.

Shifts in propaganda through the digital age

In the pre-Internet era, propaganda was described as a tool that is used by powerful interests because it depends on large resources (Collison, 2003). With the turn of the millennium, the Internet has seen its surge as the primary communication medium for propaganda. Bots have influenced important political discourse in recent years, for instance, in the run-up to the Brexit referendum in Great Britain (Howard and Kollanyi, 2018). The second Iraq War was described as the first Internet war (Hiebert, 2003), and extremists have started using the Internet for recruitment, training, and financing, particularly after 9/11 (Jacobson, 2010).

Digital communication technologies have increased the spread of propaganda (Jowett and O’Donnell, 2014). Beyond that, we argue that these technological developments have also led to a new form of propaganda that has different characteristics than ‘offline’ or ‘analog’ propaganda. In classical propaganda, the propagandist’s identity is concealed and the propagandist is separated from the audience. The propaganda source does not communicate with or access its followers directly, but reaches them via the media, as the primary goal is to change the general opinion of the public to eventually evoke a behavior change. Propaganda is persuasive in both environments, and the distinction between the sender representing itself as ‘good’ as opposed to ‘bad’ other voices (and critics) remains (Jowett and O’Donnell, 2014). Nevertheless, digital channels significantly alter the way propaganda is used by organizations.

The organizing function of the Internet, the effortlessness of spreading ideas, the possibility to form global networks, the ease of access (Goel, 2011), and the low costs facilitate the proliferation of propaganda activities by everyone and create a fertile ground for propaganda by organizations. Social bots can be created for a few dollars to simulate massive support for ideas (McCauley, 2015). ‘Flogs’, that is, fake blogs, are used to post fake opinions online to influence discourse (McNutt and Boland, 2007). Falsified identities participate in public opinion forming and counterfeit grassroots support for societal issues (Lock et al., 2016). Thus, it can be argued that besides black propaganda, the ‘big lie’ where the propagandist conceals his identity and spreads incorrect information, more and more gray and white forms of disinformation emerge in a digital environment. White propaganda comes from an identifiable sender and contains (more or less) truthful information, while gray propaganda originates from hardly identifiable sources and is therefore hard to grasp (Collison, 2003; Jowett and O’Donnell, 2014). However, common to all propaganda forms on the Internet is that they tend to violate the normative standards of truthfulness, authenticity, respect, and equity of ethical persuasion (Messina, 2007) and are likely to infringe upon the demands for mutual understanding known as open discourse, participation, transparency, and accountability (Lock et al., 2016). Especially with the rise of white and gray propaganda on the Internet, audiences increasingly struggle to distinguish unethical propaganda attempts from ethical persuasion in PR. This deepens the credibility gap in communication (Cornfield, 1987) and harms legitimate organizational interests and the information needs of audiences. Thus, with the continuously growing amount of propaganda on the Internet (Hatton and Nielsen, 2016), a close examination of this phenomenon is vital. To develop a systematic understanding and definition of this digital PR phenomenon, we analyze existing social science research in terms of the sources, contexts, audiences, media, channels, purposes, and forms of propaganda in the digital age.

Method: Systematic review

This study employs the method of a systematic literature review to gain conceptual insights into the characteristics of organizational propaganda in the digital context and to derive from them a definition of this phenomenon. Such a systematic approach is increasingly used in the context of communication research (e.g. Ludolph and Schulz, 2015; Noar et al., 2007; O’Keefe and Jensen, 2009; Peloza and Shang, 2011). It originated in evidence-based medicine and public health, where it is used to synthesize knowledge on treatments or health interventions to ultimately advise policymakers (Haddaway and Pullin, 2014). The method’s application in the social sciences was followed by more elaborate guides and discussions on appropriateness and use in this field (e.g. Major and Savin-Baden, 2012; Petticrew and Roberts, 2008). Its major advantage lies in the systematic synthesis and categorization of primary research. Thus, it assists research fields with a growing number of empirical studies but lacking conceptual and theoretical clarity in the development of ‘midrange’ theories (Bunn, 1993: 38). As opposed to traditional literature reviews, this methodology ensures a comprehensive and balanced overview of the state of the art in a certain research area using a systematic search strategy, predefined inclusion and exclusion criteria, and a coding of content conducted by two reviewers. Given that at least two reviewers independently screen the studies for eligibility, extract relevant data, and assess their methodological quality according to an a priori defined protocol, the results of a systematic review are less prone to subjective researcher biases and can therefore be considered more objective than those of traditional literature reviews. This reduction of subjective biases leads to a greater reliability of results (Haddaway and Pullin, 2014).

Sample

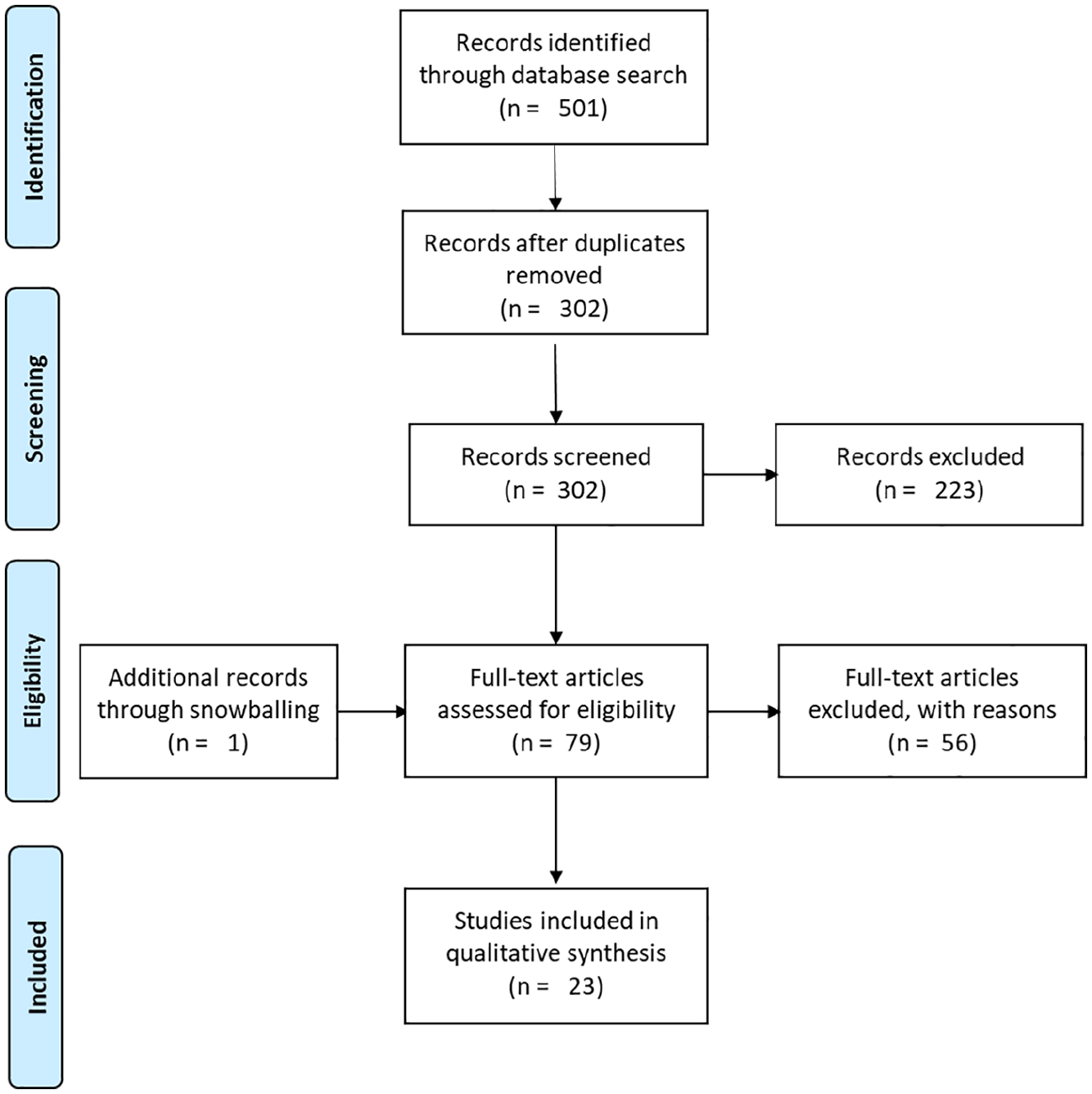

Given that there are different kinds of organizations engaging in digital propaganda, this analysis reaches beyond the field of PR to fully grasp the primary research available on this topic. Accordingly, we systematically searched eight electronic databases covering the fields of political sciences, communication sciences, economics/business studies, and other social sciences using a pretested search string. More specifically, these were Academic Search Premier, Business Source Premier, Communication and Mass Media Complete, EconLit, Political Science Complete, SocINDEX Full Text (all searched via Ebsco) as well as ABI Inform and ProQuest Sociology. The search string was developed based on the different concepts of interest of this study, namely, propaganda delivered by organizations in a digital environment. These concepts were linked through the Boolean operator AND, and synonyms of the single elements were connected using the Boolean operator OR. This led to the following search string that was pretested in a rapid desk search and adapted to the technical requirements of the specific databases: propaganda AND [(digital*) OR (“big data”) OR (internet) OR (online) OR (technolog*) OR (web*) OR (“social media”) OR (cyber)] AND [(company*) OR (corporat*) OR (organi*) OR (business) OR (firm) OR (agenc*) OR (part*) OR (institute*) OR (association*)]. Results were limited to title, abstract, and keywords, assuming that these fields contain the main concepts of the research, following established practice in library studies and guidelines on systematic reviews (Booth et al., 2016). The systematic search was conducted in March 2016. The search of electronic databases was complemented by a hand search of potentially relevant books and the bibliographies of included studies and resulted in 501 articles. After the removal of duplicates, the titles and abstracts of the remaining 302 studies were screened independently by the two authors (see Figure 1 for a flow diagram). If a decision could not be reached based on the title and abstract of the article, the full text was obtained and assessed for eligibility. The reviewers’ results of the screening process were compared and all inconsistencies were resolved through discussion. This process led to an inclusion of 23 studies in the systematic review (see also: List of included articles).
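The way the search string is assembled can be illustrated with a minimal sketch. It assumes nothing beyond the description above: concepts are linked with AND and the synonyms of each concept with OR. The function and variable names are hypothetical, phrase terms are written with straight double quotes, and the database-specific adaptations mentioned in the text are not modeled.

```python
def or_group(terms):
    """Join the synonyms of one concept with OR, each wrapped in parentheses."""
    return "[" + " OR ".join(f"({term})" for term in terms) + "]"


def build_search_string(core_term, *synonym_groups):
    """Link the core term and each synonym group with AND."""
    return " AND ".join([core_term] + [or_group(group) for group in synonym_groups])


# Synonyms as listed in the reported search string (hypothetical variable names).
digital_terms = ["digital*", '"big data"', "internet", "online",
                 "technolog*", "web*", '"social media"', "cyber"]
organization_terms = ["company*", "corporat*", "organi*", "business", "firm",
                      "agenc*", "part*", "institute*", "association*"]

query = build_search_string("propaganda", digital_terms, organization_terms)
print(query)
```

Keeping each concept as a separate list makes it straightforward to add or drop a synonym during pretesting without retyping the whole string.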

Figure 1. Flow diagram of the systematic review.

Inclusion and exclusion criteria

Studies were considered eligible for inclusion in the review if (1) the term ‘propaganda’ was stated in the title, abstract, or keywords of the article; (2) the paper was concerned with the propagandistic activities of an organization such as a (non)governmental organization, company, institution, or party; (3) the propaganda was disseminated via a digital or online channel; and (4) empirical data collection methods such as content analyses or surveys were used. Studies were excluded if they did not meet all of the aforementioned criteria, examined interpersonal communication, or were books, literature reviews, commentaries, or conceptual in nature. However, literature reviews were screened for further potentially relevant articles.
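As an illustration only, the eligibility rules can be written as a simple predicate. The record fields and labels below are hypothetical names introduced for this sketch; in the review itself, two reviewers made these judgments by reading titles, abstracts, and, where needed, full texts.

```python
from dataclasses import dataclass

# Publication types that lead to exclusion (labels are hypothetical).
EXCLUDED_TYPES = {"book", "literature review", "commentary", "conceptual"}


@dataclass
class Candidate:
    mentions_propaganda: bool    # criterion 1: term in title, abstract, or keywords
    organizational_source: bool  # criterion 2: propaganda by an organization
    digital_channel: bool        # criterion 3: digital or online dissemination
    empirical_methods: bool      # criterion 4: empirical data collection
    interpersonal_only: bool = False
    publication_type: str = "journal article"


def is_eligible(study: Candidate) -> bool:
    """Include a study only if all four criteria hold and no exclusion applies."""
    meets_all = (study.mentions_propaganda and study.organizational_source
                 and study.digital_channel and study.empirical_methods)
    excluded = study.interpersonal_only or study.publication_type in EXCLUDED_TYPES
    return meets_all and not excluded


included = is_eligible(Candidate(True, True, True, True))
review = is_eligible(Candidate(True, True, True, True,
                               publication_type="literature review"))
print(included, review)
```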

Content analysis

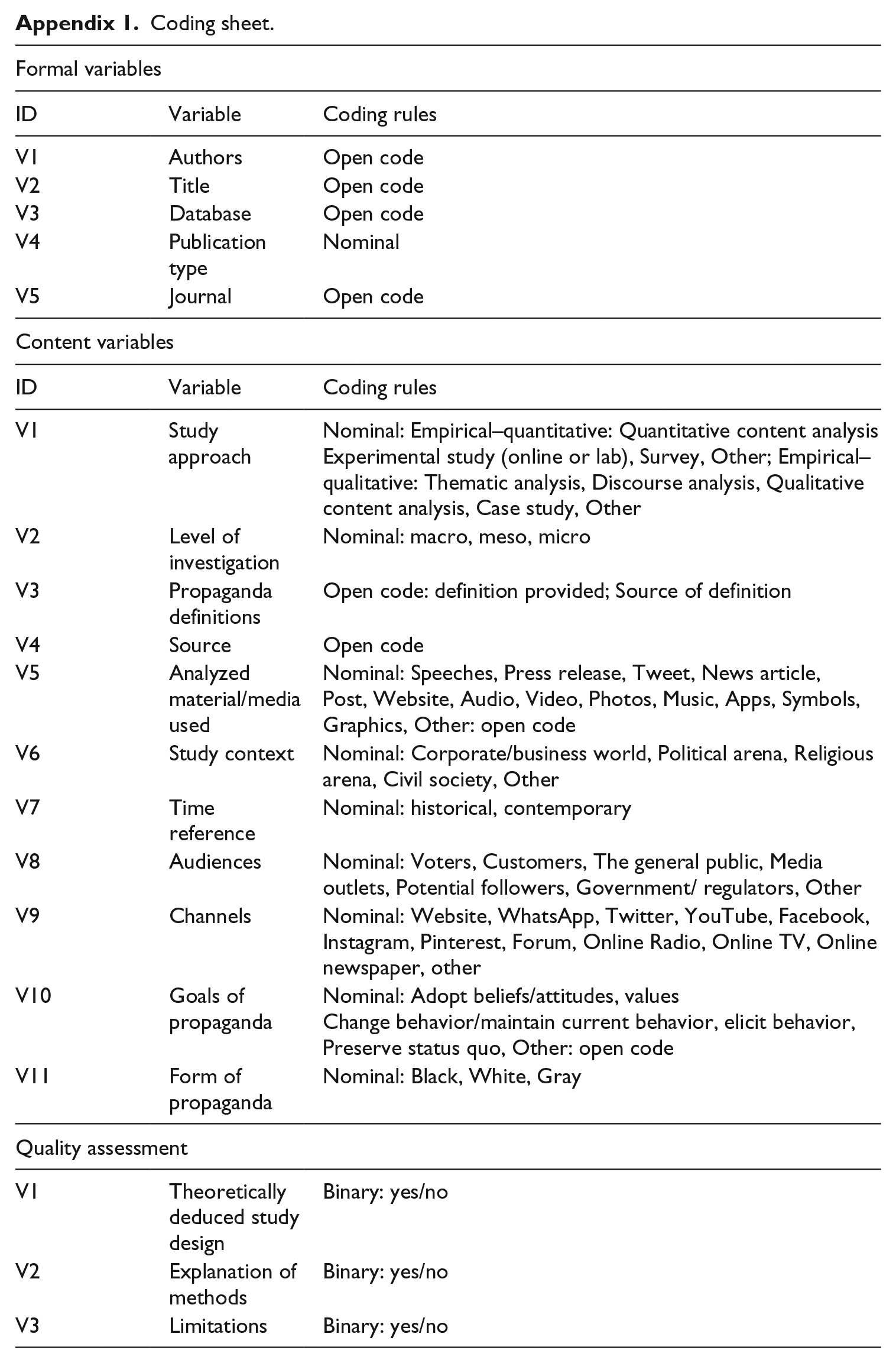

We extracted relevant data from the included articles using a pretested coding scheme (see Appendix 1). Besides a couple of formal coding categories, a range of content variables were coded to obtain a comprehensive picture of the determining characteristics of organizational propaganda in the digital age (Major and Savin-Baden, 2012). Therefore, content categories were oriented toward the previously outlined five Ws of digital propaganda by organizations (Lasswell, 1948) and toward Jowett and O’Donnell’s (2014) propaganda analysis. Formal categories included the authors of a study, its title, the database it was retrieved from or whether it was identified via snowballing, the publication type (journal article, book, or other), and the name of the journal if applicable. In terms of content, we assessed the type of study approach such as quantitative content analysis, thematic or discourse analysis, and whether the investigation took place on a macro-, meso-, or micro-level. Moreover, the coding was designed to capture whether a clear definition of the term propaganda was provided. We further coded the source of the propaganda, its audience, the channel used to spread the propagandistic messages, and the studies’ societal context. In terms of propagandists, we coded different kinds of organizations as they occur in the articles (open code), such as companies, nongovernmental actors, parties, military, or extremist groups. We always follow the rhetoric of the analyzed studies in what to call ‘terrorist’, ‘extremist’, or ‘supremacist’ organizations. Extremist groups are understood in line with the United Nations (2015) concept of violent extremist groups, while acknowledging that exerting violence is not a distinguishing characteristic for whether an organization is extremist or not (Jesse, 2013). A further definition of extremist groups and a conceptual classification of the different sorts of extremist organizations are beyond the scope of this article. However, a valuable account of what counts as a terrorist organization is provided by Rothenberger (2012). The content analysis further included the type of analyzed material in the primary study, whether it had a historical or contemporary time reference, and the purpose and goals of the propaganda. Finally, the form of propaganda was assessed, that is, white, gray, or black propaganda depending on the accuracy of the message and whether its source is identifiable. The coding categories were derived from the previous literature (Jowett and O’Donnell, 2014) and, if necessary, inductively extended based on a first round of coding of the material.

The coding included a quality assessment of included studies. Given that this review includes empirical studies only, the level of reporting transparency was taken as a quality indicator (Gibbert and Ruigrok, 2010). Thus, we coded the existence of a methods section, reporting of limitations, and whether the study design was grounded on the literature or derived from a model, as these indicators are common to both quantitative and qualitative empirical work. The purpose of this step is to evaluate the studies’ rigor and comprehensiveness in reporting and to judge their reliability and validity. The information derived from these processes was narratively synthesized and is reported in the following. The data sheet with the coded categories of all variables is available at the first author’s institutional data repository (10.21942/uva.c.4616030).

Results

Quality of published articles

Systematic reviews call for a quality assessment by the coders. Given that this sample included all sorts of empirical studies, from ethnography to experiments and mixed-methods designs, a single quality standard cannot be applied to all studies. However, every empirical academic study should at least embed or deduce its design from theory, report its methods, and comment on its limitations (Gibbert and Ruigrok, 2010). With the exception of one article (Lemieux et al., 2014), all studies reported on their methods, while only 39.1 percent commented on limitations. The studies’ rationales were in most of the cases (82.6%) embedded in theory, but some authors took an event as a starting point for empirical exploration: Torres-Soriano (2015) looked at the communication strategy of the North African jihadist group Al-Qaeda in the Lands of the Islamic Maghreb when it started to ‘go social’ with its first Twitter account. Regardless of academic discipline and origin (as apparent from the journal outlets chosen by the authors), quality of presentation and rigor in methods varied widely across the sample.
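For readers who want to translate the reported shares into study counts, the following sketch recovers the count implied by a percentage, assuming that the base is the 23 included studies and that percentages were rounded to one decimal. The counts it yields are derived here for illustration and are not figures stated by the authors; the function name is hypothetical.

```python
N_STUDIES = 23  # number of included studies reported in the sample description


def implied_count(percent, n=N_STUDIES):
    """Return the count k for which k/n rounds to the reported percent, if unique."""
    matches = [k for k in range(n + 1) if round(100 * k / n, 1) == percent]
    return matches[0] if len(matches) == 1 else None


limitations = implied_count(39.1)   # studies commenting on limitations
theory_based = implied_count(82.6)  # studies with a rationale embedded in theory
methods_share = round(100 * (N_STUDIES - 1) / N_STUDIES, 1)  # all but one study

print(limitations, theory_based, methods_share)
```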

Sources of organizational propaganda on the Internet

A large proportion of the articles (43.5%) coded in this systematic review were concerned with the communication of extremist organizations such as the so-called Islamic State or Al-Qaeda. The articles usually investigated one particular sender; only in one case (Rothenberger, 2012) were several different religious groups and freedom-fighting organizations such as the Basque ETA subjected to analysis. Four studies concentrated on the use of digital media by military and paramilitary organizations like the Israeli army or the Colombian FARC; extreme right-wing organizations in the United States, Italy, and Germany, such as the Ku Klux Klan or the Stormfront community, were analyzed in three articles. Two studies each focused on political institutions, that is, parties in Switzerland and governments in Spain, and on companies. In one case, the author investigated the social media strategy of nonprofit organizations (Auger, 2013). Thus, most of the studies in this systematic review focused on extremist organizations.

Contexts of organizational propaganda on the Internet

The sources of propaganda determine the political and cultural context in which it takes place. Nine articles were situated in a religious, in this study Islamist, context. One of the studies also relates to a political context: Ciovacco’s (2009) piece on Al-Qaeda’s media strategy took a perspective centered on the connection to US politics, although religious motives such as the call to jihad cannot be separated from a group like Al-Qaeda. Eight studies are placed within the political arena, such as those concerning the propaganda of political institutions in Spain (Sande, 2016) or Heemsbergen and Lindgren’s (2014) paper on the Israeli Army’s social media feeds during wartime. Five studies fit into the category of civil society, such as extreme right-wing organizations, for instance, of the white supremacist movement (Brown, 2009), or nonprofit organizations’ social media strategies (Auger, 2013). One study on Chinese corporations (He and Tian, 2008) is situated in a business context.

Audiences of organizational propaganda on the Internet

The audiences of propaganda, along with the sources, reflect the historic and political context of propaganda. Due to the frequency of extremist groups as senders, the main targets of the analyzed propaganda were potential followers or members (n = 20), but the general public was also targeted quite often (n = 15). The government was the target of propaganda in eight studies; one study (He and Tian, 2008) investigated the phenomenon of corporate propaganda with the government as the sole audience, finding that state-owned firms in China are more likely to pursue propaganda activities toward the national government. The media, in contrast, were explicitly targeted by propaganda sources in only six cases, and media outlets were never the sole target audience for propagandists. This points to the conclusion that digital propaganda in this sample most often circumvents media outlets to target audiences directly. Voters (n = 3) and customers (n = 1) were rarely targeted by propaganda activities, which is also related to the majority of extremist organizations in the sample as opposed to companies or political institutions, which account for only two studies each. Two studies explicitly named enemies as the targets of propaganda: Seo’s (2014) analysis of the Israeli army’s and Hamas’s Twitter accounts and Badar’s (2016) account of the so-called Islamic State’s calls to genocide on the radio. Muslims, whites, women, and citizens were also explicitly mentioned as target audiences (n = 1 each).

Digital media of organizational propaganda

Authors of the included primary studies subjected diverse media to analysis. Most commonly, tweets (n = 9) and news articles (n = 7) were analyzed in the studies, followed by posts on Facebook and videos (n = 6). Audio material (n = 5) and organizations’ websites and pictures (n = 4 each) were also analyzed. Some articles had multiple units of analysis: Klausen (2015) studied tweets, pictures, and videos of Western-origin foreign fighters in Syria. Torres-Soriano (2011) explains how the propaganda of Al-Qaeda in the Lands of the Islamic Maghreb evolved over the years through an analysis of the organization’s media releases and audio and video statements. Rothenberger (2012) studied different terrorist groups’ use of the Internet and thus coded tweets, audio and video material, pictures, and sounds. Heemsbergen and Lindgren (2014) also looked at infographics published on Facebook. None of the articles investigated speeches, symbols, music, or apps.

Digital channels of organizational propaganda

Organizations’ websites (n = 10) and Twitter (n = 9) were the most often investigated channels of digital organizational propaganda. Online newspapers (n = 5) and Facebook (n = 4) were also used as channels, followed by three studies on YouTube, two on online discussion forums, and one on Internet radio. Hence, organizational websites are the main platforms of social movements to inform and gather members, for instance, in the case of Holocaust revisionist groups (Burris et al., 2000). They are more easily accessible to potential followers, as they do not require registration, unlike social media sites such as Twitter. Accordingly, organizational websites are channels that allow for direct communication with (potential) members. Yet, Twitter appears to be a channel that is suitable for propagandistic communication efforts, possibly because it takes a while until accounts are shut down (Torres-Soriano, 2015), new accounts can be opened easily, and it is fairly simple for researchers to obtain Twitter data (Etter et al., 2018).

Purpose of organizational propaganda on the Internet

In most cases, the goal of digital organizational propaganda was an attitude change in the minds of the audiences (n = 18), for instance, persuading the general public and potential followers of gun control or pro-gun arguments (Auger, 2013). In 47.8 percent of the studies, this objective was accompanied by the aim of behavioral change, for example, adopting an Islamist ideology and becoming a member of a jihadist movement (Torres-Soriano, 2011), or building an extreme right identity combined with fundraising (Caiani and Parenti, 2009). In eight cases, these two goals were accompanied by the aim to build legitimacy and preserve the status quo. Heemsbergen and Lindgren (2014), for instance, showed how the spokesperson of the Israeli army aimed to build legitimacy for the military strikes during the 2012 Gaza conflict, evoke an impression of the Israeli people being under threat by Hamas, and actively share the information on the war. Two studies revealed that digital organizational propaganda was employed to generate support among followers for the terrorist actions of the so-called Islamic State (Cooley et al., 2016; Hatton and Nielsen, 2016). Besides attitude and behavioral change, the propagandists also aimed to contextualize a terrorist event, for example, by placing the hostage crisis in the context of the Nigerian situation (Sullivan, 2014). Klausen (2015) described how the so-called Islamic State influences the minds of jihadists from the West and recruits them, but also how Twitter is used to organize support networks and the emigration of fighters from the West to warzones. Finally, two studies (Bowman-Grieve, 2009; Caiani and Parenti, 2009) show that propaganda can also aim at networking, besides the objectives of changing attitudes, eliciting behavior, and building legitimacy.

Forms of organizational propaganda on the Internet

Overall, only one article (Silverman, 2011) mentioned the differentiation between gray, white, and black propaganda; thus, the following results are based on our own classification. Black propaganda would correspond to communication that is untruthful (false information, lies), inauthentic (concealed source), unfair (selectivity), and disrespectful (half-truths, misleading), combining all criteria for unethical communication. None of the included studies investigated black propaganda. Two studies focused on gray propaganda, characterized by untruthful information: Brown (2009) studied the construction of white supremacist ideology, which included blatant lies about African American people (untruthful), although the source of propaganda was visible. Badar (2016) described how the so-called Islamic State spreads lies about Shias, stating that they fake their Islamic beliefs to cause harm to the Islamic community. However, all other articles were concerned with white propaganda, which stems from an identified source with information that tends to be true, but usually selective (unfair). This is where the characteristic of the digital environment is most visible: although it is fairly easy and cheap to set up fake identities on the Internet (Daniels, 2009), propagandists do not try to conceal their identities. White propaganda, thus, is spread from an identified source and uses mostly accurate information. However, this information is likely to be selective (unfair) or composed in a way that paints a one-sided or misleading picture of an issue, group, or event (disrespectful).

Discussion: Digital organizational propaganda

The digital environment alters the way propaganda is performed by organizations. With its ubiquity, ease of access and spread, low costs, and organizing function (Goel, 2011), it invites all sorts of fake or real actors to reach their intended audience directly, while at the same time it also invites public dialogue around and scrutiny of propaganda practices (Cooley et al., 2016). Thus, as this study outlines, digital propaganda differs from analog propaganda with respect to the media, channels, forms, audiences, sources, and purposes.

First, digital channels and media allow for free participation and open discourse, although often at the cost of transparency (Lock et al., 2016), and are free of charge (in terms of access). Social media platforms, and Twitter in particular, provide effective channels for digital organizational propaganda. The gatekeeping and fact-checking functions of the media are thereby easily circumvented, multiple audiences are reached directly, and unfiltered content is distributed at no cost in an instant. Thus, intermediaries are no longer necessary to reach an organization’s stakeholders (Frandsen and Johansen, 2015). At the same time, these platforms do not automatically confer source credibility, so it takes time and an engaged follower base to achieve a status as a credible online source. However, audiences can be easily deceived and captivated through these channels that invite participation from literally everyone, fake or real (McNutt and Boland, 2007), for instance, in the form of bots (Howard and Kollanyi, 2018). These platforms also allow the quick spread of video and audio material, unlike in the past when propaganda videos had to be smuggled across borders on VHS (Video Home System) cassettes (Fattal, 2014). Twitter, for instance, allows for real-time communication from war zones, where Islamist fighters in Syria tweet photos directly from the battlefield (Klausen, 2015). This example shows that such communication may give ‘the illusion of authenticity’ (p. 2) and appear to be truthful (given the real-time quality), but is still managed, tightly controlled, and distributed by intermediaries (inauthentic). The content is often selective (unfair) in that it does not provide the whole picture and disrespectful in the sense that the audience cannot assess the truthfulness of the information or is presented with selective footage.

Thus, following the data of this study, which is potentially limited because researchers study only specific cases where data are available, the digital environment seems to allow for more white than black propaganda. Gray propaganda seems to be less frequent. Whether these conclusions based on the study’s sample hold for the entire population is to be analyzed further, but given the information clutter of the digital arena, distinguishing PR from organizational propaganda becomes more difficult.

However, with these prerequisites, the digital audiences of organizational propaganda are on the one hand limited to followers and members: social media platforms and online forums often require that recipients create an account to follow others, and organizational websites demand a specific search to be found and accessed. Thus, most target audiences of propaganda in the digital environment are already connected in some way to the source by being followers and members or prospective followers. These audiences are often reached directly, without intermediaries such as the media (Herman and Chomsky, 1988). This is further catalyzed by the difficulty social media companies have in blocking users or deleting content (Crawford, 2017; Yadron, 2016). On the other hand, this digital content is, in theory, accessible to everyone. The general public as a generic target audience was the second most frequent target audience in the analyzed studies. In terms of counterpropaganda, only two articles mentioned ‘the enemy’ explicitly as a target audience, as described by Seo (2014) regarding the Israeli Army and Hamas, a classical propaganda–counterpropaganda situation that is today carried out online via Twitter.

The choice of audiences is also directly related to the sources of propaganda. Most of the studies in this systematic review focused on extremist organizations, be it terrorist groups such as Al-Qaeda, white supremacist movements such as the Ku Klux Klan, or paramilitary organizations such as Hezbollah. Only in four cases were corporate or political senders of propaganda detected: the Israeli army, political institutions in Spain, Swiss parties, and corporations in China. Thus, extremist organizations are the sources of digital organizational propaganda studied most often. This does not necessarily mean that legitimate organizations such as companies do not engage in propaganda; it may instead be due to researchers’ interest in (or bias toward) extreme cases. However, to differentiate themselves from extremist groups, legitimate organizations should communicate ethically, that is, truthfully, authentically, respectfully, and equally, regardless of the channel.

The fact that predominantly extremist groups were investigated for their propaganda reflects the context or environment. As Jowett and O’Donnell (2014) rightly observe: ‘propaganda is like a packet of seeds dropped on fertile soil; to understand how the seeds can grow and spread, analysis of the soil – that is, the times and events – is necessary’ (p. 315). Thus, by and large, the sender reflects the times and political circumstances within which the propaganda takes place: Islamist terror has been an imminent threat to the Western world and the Middle East since 9/11 and has continued to gain momentum. Freedom-fighting terroristic organizations such as FARC in Colombia kept the country in suspense for decades until a peace treaty was made possible. Politically extreme organizations that have been powerful in propaganda since the times of the Cold War span the political spectrum from left wing to right wing and reflect the political divide of their time, with both extremes existing at all times.

Digital propaganda’s objectives differ from classical propaganda (Jowett and O’Donnell, 2014) because the source of communication is not necessarily concealed: as evident from the analyzed studies, the propaganda source’s identity was known and the propagandist was usually embedded within the communicative spaces. Through this direct access, the propagandist could influence its primary target audience, the followers, and in a second step reach out to the secondary target group, the general public. Classical propaganda, on the contrary, would see altering the general public opinion as the foremost objective. However, both digital organizational propaganda and classical propaganda conceal the real purpose and mostly aim at inducing or changing behavior.

Thus, although the purposes of digital organizational propaganda are diverse, they can be summarized in two categories: calls to the mind and calls to action. Calls to the mind are propaganda that aims at influencing the attitudes and beliefs of audiences. Violent extremist organizations, for instance, aim at spreading fear in the general public to maintain legitimacy in the minds of their followers and at times to divert attention from unsuccessful battles in war (Torres-Soriano, 2011). This is accompanied by generating support for these actions, which can be translated into behaviors. Thus, recruiting new members, gathering financial aid, and inducing followers to become active, for instance, in a protest, are calls to action. The difference from persuasive communicative efforts by, for instance, social movements is that these organizations acquire legitimacy through their legitimate cause and, besides persuading, focus on dialogic interaction (Stein, 2009).

Thus, the digital communication environment alters organizational propaganda activities. The Internet provides direct access for propagandists to their target audiences at no cost and allows them to organize globally. All sorts of media can be exchanged. Thus, propagandists profit from the different effects of communication modes on recipients, as, for instance, visuals evoke more emotions than text (Smith-Rodden and Ash, 2012). In consequence, passing the gatekeepers of traditional media houses to reach constituents loses priority. Therefore, the need to conceal the identity of the propagandist, as in black propaganda, is no longer central. Indeed, for extremist organizations, which were most frequently studied in the included articles, openness regarding their identity when propagating ideas even strengthened their claims in terms of calling followers to action. The alleged transparency of the Internet results in more forms of white propaganda, but the question of whether the distributed information is truthful is moving center stage, connecting to the larger discussion on online disinformation (Fallis, 2015). The online communication environment, with its global yet targetable reach, its organizing function, access for everyone, low costs, and alleged transparency (Goel, 2011), provides a space for propaganda activities shared between democratic organizations and extremist groups who communicate with their target audiences.

A classification of digital organizational propaganda

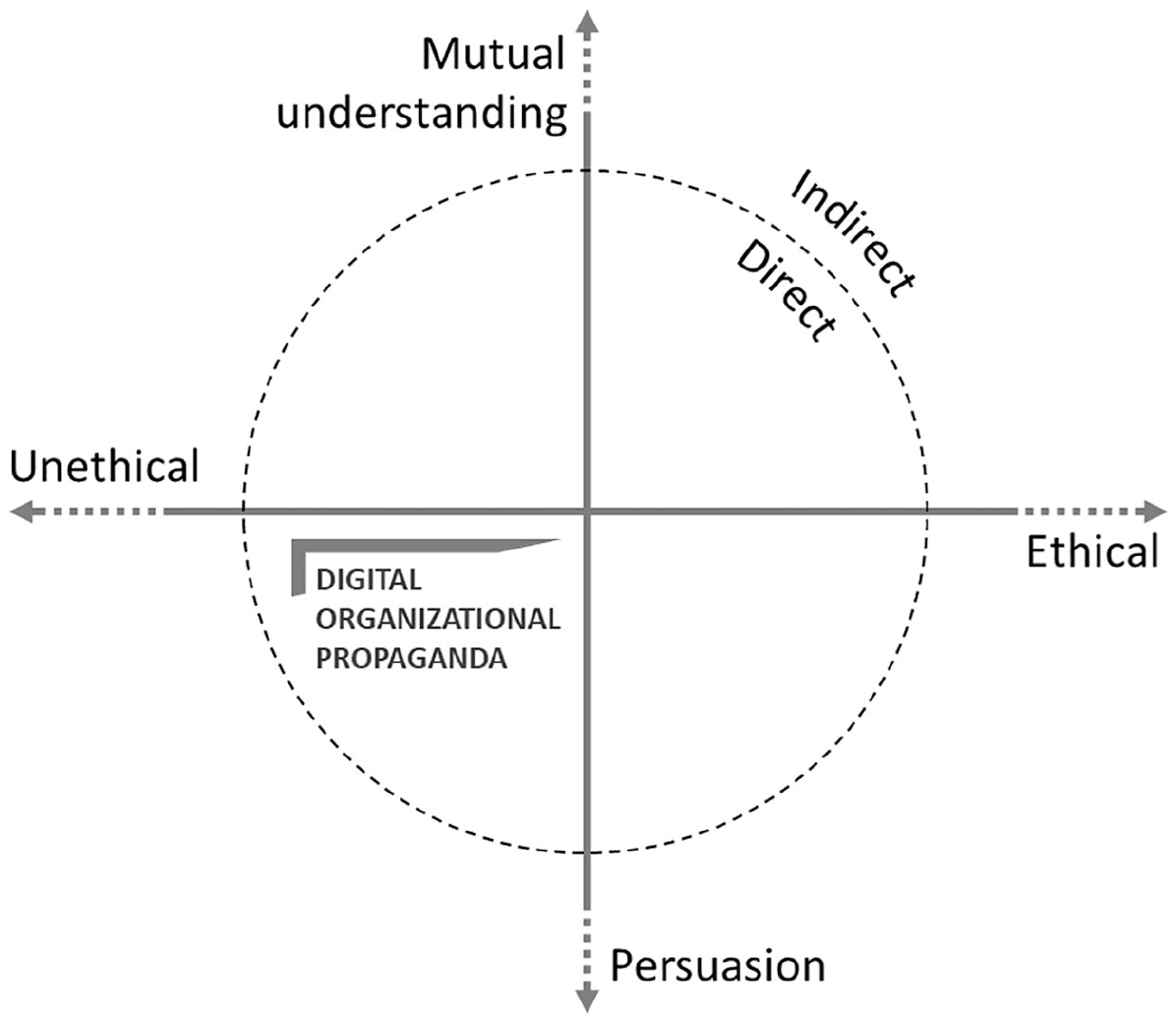

In an effort to differentiate PR and propaganda, Taylor and Kent (2014) place propaganda at the unethical end of a communication continuum and equate dialogue with ethical communication to form the other end of the spectrum. However, as our results show, such a distinction may be too simple when considering that PR in practice is often persuasive and not oriented toward dialogue, but still ethical. Alternatively, we suggest looking at organizations’ communication with their stakeholders on two dimensions (Figure 2), ethical orientation (ethical vs unethical) and communicative intent (mutual understanding vs persuasion), where the moral evaluation is captured in the first, not the latter, dimension. When considering the impact of the digital age, a third dimension emerges that represents the specificities of digital organizational propaganda. While analog and early digital propaganda usually had to pass through the media and thus reached the audience indirectly (Herman and Chomsky, 1988), today’s propaganda in the digital arena is characterized by direct access to the target stakeholders. Hence, the third dimension applied to classify digital organizational propaganda is direct (within the circle) versus indirect (outside the circle) reach.

Figure 2. A classification of digital organizational propaganda.

The left side of the figure displays unethical forms of PR (Messina, 2007), that is, communicative acts that are untruthful, inauthentic, disrespectful, or unequal. Digital organizational propaganda belongs in the lower left quadrant, where unethical intent and persuasion come together. Thus, based on the analysis of existing research, we may define digital organizational propaganda as direct persuasive communicative acts by organizations with an unethical (i.e., untruthful, inauthentic, disrespectful, or unequal) intent through digital channels.

Unlike a communication source that aims to persuade ethically (lower right quadrant) such as nonprofit organizations as analyzed by Auger (2013), in organizational propaganda, the source may hide the purpose, spread untruthful information, and/or conceal its identity, which is deemed unethical (Jowett and O’Donnell, 2014). In his study on white racial ideology in online discourse, Brown (2009) shows how ‘white supremacist organizations’ misinterpret scientific evidence and misconstrue a link (disrespectful and untruthful) between godly descent and race to support their argument for the superiority of the ‘white race’. The digital nature of organizational propaganda is further characterized by direct reach of the target audience (within the circle). Most organizational propaganda in the digital environment reaches the audiences directly without passing a gatekeeper like the media, for instance, via owned media such as online magazines issued by extremist groups. In the case of the ‘Inspire’ magazine published by an Islamic terrorist organization, Lemieux et al. (2014) found that the magazine provides false justifications for the use of violence to persuade potential followers to join their actions (untruthful). Similarly, the editors cut quotes of Western politicians and experts and presented them out of context (unfair) in the magazine ‘to provide information that makes the magazine appear to be reasonable and accurate, with positions that are rooted in fact’ (p. 363).

The upper left part of the graph is characterized by communication aimed at mutual understanding but with an unethical intent. For instance, situations in which an active participant who influences the discussion in an online discussion forum turns out not to be a real person but a fake persona mandated by an organization through a PR agency could fall into this category (Lock et al., 2016). The same breadth between direct and indirect reach is displayed here, depending on whether the communication takes place directly as in the example or through a mediator.

On the right-hand side of the figure, we find ethical PR (Messina, 2007). Thus, the very counterpart of digital organizational propaganda in the upper right quadrant is every form of deliberation, described by mutual understanding built on open discourse, transparency, and free participation (Lock et al., 2016) and communication in terms of ethical PR standards. Likewise, direct digital deliberation takes place when participants can participate in an online forum directly, while discourse that takes place via the media, for example, is indirect.

In the lower right quadrant, we find PR strategies such as online advertising: communication forms that meet the standards of ethical PR and aim at persuasion. Direct online advertising would, for instance, be customized ads on the Facebook profile of a person, while indirect online advertising consists of non-personalized ads placed, for instance, in an online newspaper. Ethical persuasion fulfills the needs of the persuader and the persuadee, while propaganda benefits only the sender (Jowett and O’Donnell, 2014).
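The three dimensions described above can be read as a simple decision rule. The following minimal Python sketch is offered only as an illustration of that reading, not as an instrument used in this review: the names `CommunicativeAct`, `classify`, and `is_digital_organizational_propaganda` are hypothetical, the three dimensions are reduced to yes/no values for simplicity, and the act is assumed to stem from an organization and to be distributed through a digital channel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommunicativeAct:
    """Hypothetical record of one communicative act (illustration only)."""
    ethical: bool     # False if untruthful, inauthentic, disrespectful, or unequal
    persuasive: bool  # True = persuasion, False = mutual understanding
    direct: bool      # True = reaches the audience without an intermediary


def classify(act: CommunicativeAct) -> str:
    """Return the quadrant of the classification plus the reach dimension."""
    if act.ethical and act.persuasive:
        quadrant = "ethical persuasion"  # lower right quadrant
    elif act.ethical:
        quadrant = "deliberation"  # upper right quadrant
    elif act.persuasive:
        quadrant = "propaganda"  # lower left quadrant
    else:
        quadrant = "unethical mutual understanding"  # upper left quadrant
    reach = "direct" if act.direct else "indirect"
    return f"{reach} {quadrant}"


def is_digital_organizational_propaganda(act: CommunicativeAct) -> bool:
    """Apply the proposed definition: direct, persuasive, and unethical.

    Assumption: the act is by an organization and uses a digital channel.
    """
    return act.direct and act.persuasive and not act.ethical


# Example: an owned online magazine that gives false justifications for violence.
magazine = CommunicativeAct(ethical=False, persuasive=True, direct=True)
print(classify(magazine))
print(is_digital_organizational_propaganda(magazine))
```

In practice, each dimension is a matter of degree and of coder judgment, so such a binary rule can only approximate the classification.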

Practical implications

Following this classification of digital organizational propaganda, it is crucial for organizations and audiences alike to determine when such communication takes place. Messina (2007) put forward three tests of ethical persuasion based on a utilitarian notion: reversibility, publicity, and principles and criteria. Reversing and adapting them, we suggest testing for digital organizational propaganda by the following three standards:

Nonreversible. If the organization were the subject of this communicative act, how would it react? It would never have embarked on propaganda because of the detrimental consequences following from untruthful and inauthentic communication.

Controversial. The communicative act provokes harsh reactions and allegations of unfairness and disrespectfulness from other organizations, for example, competitors.

Unjustifiable. The consequences of this communicative act could not be justified with reason toward the organization’s stakeholders.

These tests can be applied by organizations to check if their planned digital communication strategy is propaganda rather than ethical persuasion. Audiences, be it consumers, watchdogs such as the media or nongovernmental organizations (NGOs), or policymakers, can assess if organizations communicate ethically by applying these three criteria to the communication of the organization.

Limitations

This study is subject to limitations. We covered a breadth of academic subjects and journals to deliver a comprehensive overview of digital propaganda by organizations. Thus, we chose to systematically synthesize existing evidence to study this phenomenon rather than to analyze instances of propaganda with primary data. Our goal is to provide an overview of digital organizational propaganda across disciplines and organizational contexts for research synthesis. However, fields might be biased regarding specific disciplinary orientations and researchers might favor extreme cases to gain significant results. Furthermore, each field has different conceptual approaches and reporting standards. This might be the reason why the quality of sampled articles varied widely. Another limitation relates to the rather simple structure of the search string. At the time of conducting the review, we chose to use a search string with a high sensitivity to ensure that most studies referring to our keywords were captured. The overall number of hits might seem small when compared to systematic reviews in public health that yield results in the thousands, but in these fields the overall research output is much higher. The sample size is explained by the specific character of the search (organizations only, not individuals) and by the restriction to the social science databases to which we had access (due to the focus on empirical studies). However, there may be articles and in particular gray literature that we have missed. A further discussion on the inclusion of gray literature – and a guide to systematic reviews in general – is provided by Booth et al. (2016).

The audiences of propaganda were almost never explicated (except for Ciovacco, 2009). Similarly, authors never explicitly stated the goals of the propaganda efforts, which we likewise deduced from the information given in the articles. Thus, two researchers coded all articles simultaneously and discussed single codes if judgments did not align to reach a consensus. Last, all of the analyzed articles focused on the content of propaganda messages from the perspective of the sender. Only one study engaged in a perception analysis (Hatton and Nielsen, 2016). Moreover, there are different ways to look at digital organizational propaganda: Caiani and Wagemann (2009) and Burris et al. (2000) conducted social network analyses of German and Italian extreme right movements and white supremacist groups in the United States, which we did not include in this analysis after thorough consideration during the consensus session. This is an equally fascinating lens through which to look at digital organizational propaganda, but it is beyond the scope of this analysis.

Conclusion

PR is often alleged to be in a trust crisis and, given its historic roots, has frequently been equated with propaganda. The paradigmatic shifts brought about by the Internet create new opportunities for PR through direct communication with audiences and fast access to data, but they also make it easier for PR to be undermined and used unethically for propaganda purposes. Based on a systematic review of primary research on propaganda by organizations in the digital age, we defined digital organizational propaganda as the direct persuasive communicative acts by organizations with an unethical (i.e. untruthful, inauthentic, disrespectful, or unequal) intent through digital channels and juxtaposed it to ethical PR and mutual understanding. The analysis of primary research highlights that the digital environment most frequently promotes white propaganda. The media is circumvented by such organizations and followers are reached directly. Propaganda’s audiences are targeted with calls to the mind and calls to action, without concealing the original purpose of the propaganda organization. However, only a minority of audiences actually consider taking action in response to propaganda, which could fuel future research in terms of effect models of communication. Propaganda effects on attitude and behavior change could be the topic of future studies, following the example of Hatton and Nielsen (2016). Future research could also analyze the rhetorical strategies applied by propaganda organizations and gauge their effectiveness. Similarly, it would be interesting to understand how to best measure propaganda. Regarding the classification of digital organizational propaganda, other forms of organizational communication along the axes of the graph could further be researched to map the array of different communication strategies on the Internet.

Appendix

Coding sheet.

| Formal variables | | |
|---|---|---|
| ID | Variable | Coding rules |
| V1 | Authors | Open code |
| V2 | Title | Open code |
| V3 | Database | Open code |
| V4 | Publication type | Nominal |
| V5 | Journal | Open code |
| Content variables | | |
| ID | Variable | Coding rules |
| V1 | Study approach | Nominal: Empirical–quantitative: Quantitative content analysis, Experimental study (online or lab), Survey, Other; Empirical–qualitative: Thematic analysis, Discourse analysis, Qualitative content analysis, Case study, Other |
| V2 | Level of investigation | Nominal: macro, meso, micro |
| V3 | Propaganda definitions | Open code: definition provided; Source of definition |
| V4 | Source | Open code |
| V5 | Analyzed material/media used | Nominal: Speeches, Press release, Tweet, News article, Post, Website, Audio, Video, Photos, Music, Apps, Symbols, Graphics, Other: open code |
| V6 | Study context | Nominal: Corporate/business world, Political arena, Religious arena, Civil society, Other |
| V7 | Time reference | Nominal: historical, contemporary |
| V8 | Audiences | Nominal: Voters, Customers, The general public, Media outlets, Potential followers, Government/regulators, Other |
| V9 | Channels | Nominal: Website, WhatsApp, Twitter, YouTube, Facebook, Instagram, Pinterest, Forum, Online Radio, Online TV, Online newspaper, Other |
| V10 | Goals of propaganda | Nominal: Adopt beliefs/attitudes, values, Change behavior/maintain current behavior, Elicit behavior, Preserve status quo, Other: open code |
| V11 | Form of propaganda | Nominal: Black, White, Gray |
| Quality assessment | | |
| V1 | Theoretically deduced study design | Binary: yes/no |
| V2 | Explanation of methods | Binary: yes/no |
| V3 | Limitations | Binary: yes/no |

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.