Abstract

In August 2015, Julia Anderson, the Global CIO at Smithfield Foods, the biggest pork manufacturer in the US, faced a problem: how to structure the company’s ERP system. The company had operated its own data center in the early 2000s. Eventually, management of hardware and software was outsourced to an Infrastructure Management Service (IMS) provider. The system was running on servers hosted by the IMS provider, but a recent series of major problems had Anderson contemplating whether to continue the company’s relationship with the provider. Furthermore, SAP AG, the company’s ERP vendor, had released a new version of the system, SAP HANA. As Anderson pulled into the company’s parking lot on Monday morning, she had three difficult decisions to make. Should Smithfield stay with its IMS provider or switch to a cloud service, which was becoming an increasingly popular choice? Should the company convert its current SAP system to the SAP HANA platform, or implement and deploy a new system from scratch? Finally, should the company take a big risk and make both changes simultaneously?

Company history and background

Headquartered in Smithfield, Va., since 1936, Smithfield Foods, Inc. is an American food company with agricultural roots and a global reach. Smithfield Foods is the largest pork processor in the world and the largest U.S. hog producer, which provides more than 60,000 jobs globally. The company currently owns farms and facilities in 29 U.S. states and seven countries and boasts a portfolio of high-quality brands, such as Smithfield®, Eckrich®, Nathan’s Famous® and many others, that make Smithfield a top supplier to the retail, export, food service and deli channels.

In 2013, Smithfield was acquired by the WH Group, a Hong Kong–based publicly traded company, after the company’s shareholders voted overwhelmingly to approve the strategic combination. Since the acquisition, Smithfield has upgraded and consolidated its ERP system and migrated many of its IT services to the cloud. The technical and managerial aspects of this migration are the primary focus of this case.

Smithfield’s first information system

Rapid expansion starting in the 1980s, including significant merger and acquisition activity, created over time a Smithfield Foods network of more than 40 processing facilities across the U.S. that communicate data in real time on operations and distribution activities. The company’s logistics network is a combination of Smithfield-owned distribution centers and third-party storage providers. Transportation providers move goods between hog farms, processing plants, and storage facilities. The company’s expansion resulted in a host of disparate and decentralized information systems, which required a transition to a unified ERP system. In the early 2000s, the company consisted of several independently operated companies that were all under the Smithfield Foods corporate umbrella. The largest subdivisions at the time were Smithfield Packing Company, Farmland Foods, and John Morrell.

Smithfield Packing Company’s first information system was developed by Monette Information Systems. The collaboration with Monette began with outsourcing payroll and gradually expanded to include management of customer sales orders, invoices, and general ledger. Monette Information Systems had only two customers: Smithfield and a local long-term care facility. In 2001, Smithfield purchased its side of Monette business (system source code and the database) and began to run the information system in-house. At that time, the system was hosted in the Smithfield-owned data center and the company purchased and managed all hardware and software resources needed to maintain and support the system.

Three degrees of IT outsourcing

A company-owned data center provides full control of data, hardware, and software. Such an operational model is commonly referred to as “on-premises IT,” or “on-prem” for short. While maintaining full control over the data center affords the company a great deal of security, flexibility, and customization, it also comes with higher costs. There is significant upfront expense associated with the purchase of facilities, hardware, and software. The data center must be staffed to provide ongoing systems support, which brings recurring expenses for personnel salaries and training, software and hardware maintenance and upgrades, data traffic, and utilities. Under this model, the physical data center, hardware, and software are considered assets, which are carried on the company’s books and depreciated according to an established accounting schedule.

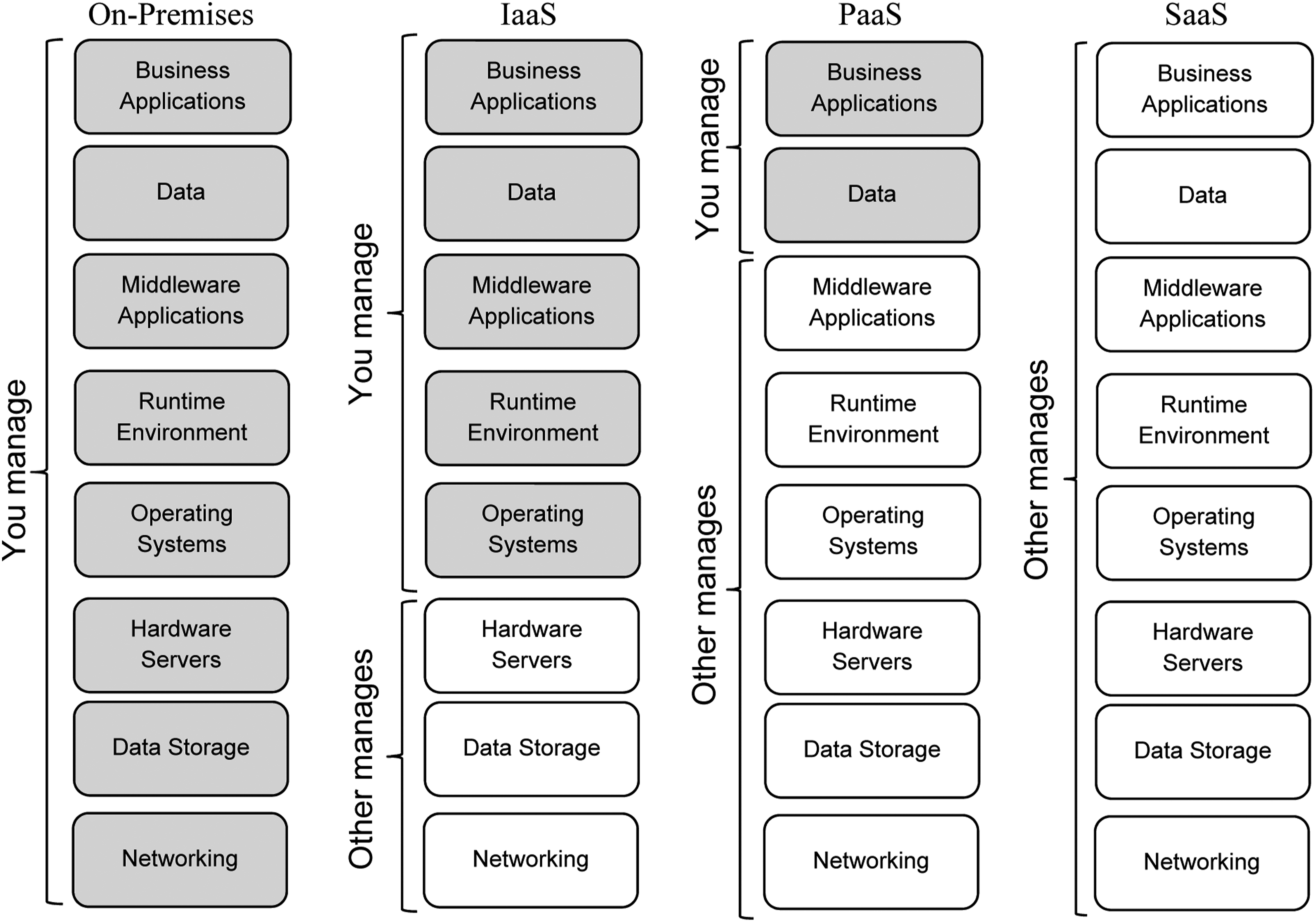

Some companies are willing to give up full control over some of the information system’s components and outsource them to a third-party service provider. When outsourcing IT operations, either fully or partially, there are three models which are generally considered:

- IaaS (Infrastructure as a Service)
- PaaS (Platform as a Service)
- SaaS (Software as a Service)

Exhibit 1 illustrates the difference between on-premises IT and the three outsourcing models. As a company moves from full ownership, or the on-prem IT model, to IaaS, to PaaS, to SaaS, it outsources increasingly more of its IT operations to a service provider. The cost structure of the company changes as well. With the on-prem IT model, the data center is considered to be a depreciating asset. Under the SaaS model, the IT operations are fully outsourced with no assets on the books. All personnel, hardware, software, and utilities costs convert to a service cost, paid to a service provider for managing the entire IT stack.

Exhibit 1. Three degrees of IT operations outsourcing (Source: Smithfield Foods).

Smithfield IT operations prior to 2015

The information system acquired by Smithfield from Monette Information Systems was a custom legacy system, written in the DIBOL language. The business data was stored in a dBase database. Over time the system became increasingly difficult to maintain because few employees knew the DIBOL language and the system itself. In 2003, Smithfield acquired Farmland Foods, which ran all of its business operations using the SAP R/3 system. Following the Farmland Foods model, Smithfield Packing Company, the largest operating company in Smithfield’s portfolio, implemented the SAP SD (Sales and Distribution) module in 2003. This allowed Smithfield Packing Company to streamline its order-to-cash process and to more easily integrate new customers into the information system. Five years later, in 2008, Smithfield Packing Company completely switched from the Monette legacy system and implemented a full, “wall-to-wall” SAP R/3 system using Farmland’s SAP instance as the template.

With the growing number of acquisitions, Smithfield looked to outsource its data center operations to a third-party company to better manage its IT needs and streamline expenses. In 2007, an Infrastructure Management Service (IMS) provider was contracted to consolidate and manage all SAP R/3 systems related to Smithfield’s independently operating companies. The IMS provider owned a data center which housed the hardware, systems, and data of multiple customers (also referred to as “tenants”), like Smithfield, whose fees provided capital for the operation, maintenance, and upgrade of the facilities. If the need for additional computational resources materialized, Smithfield had to purchase the needed hardware and wait until the new servers were delivered to the IMS data center. Additional time was then required for the IMS provider to install, configure, and bring the servers to full production mode. The IMS provider was also responsible for maintenance and supporting ongoing operations. The service provided could be best described as IaaS (see Exhibit 1).

The IMS provider used a dual data center model, with one facility located in California and another in Texas. Both data centers were “hot” (up and running), with identical (mirror) systems and data. Once new data were posted by a tenant, both data centers were updated simultaneously so that both sites maintained identical copies of the data at any point in time. If one data center went down and became unavailable for some reason, the other would become the primary data center, carrying the full workload, until the failed data center was restored to operational mode. This service model worked well for a traditional data center: spreading the customers across the two locations ensured that investments would be balanced to support failover services. However, Smithfield lacked the ability to easily scale its computing resources (number of servers, amount of storage, etc.) up and down.
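The mirrored, two-site arrangement described above can be illustrated with a minimal Python sketch. The class and method names (`DataCenter`, `MirroredPair`, `post`, `read`, `restore`) are hypothetical and chosen only for illustration; the case does not describe how the IMS provider actually implemented replication or failover.

```python
class DataCenter:
    """One "hot" site holding a full copy of the tenant's data (illustrative only)."""

    def __init__(self, name):
        self.name = name
        self.online = True
        self.records = {}


class MirroredPair:
    """Two sites that receive every write, so either one can carry the full workload."""

    def __init__(self, site_a, site_b):
        self.sites = [site_a, site_b]

    def _online_sites(self):
        return [site for site in self.sites if site.online]

    def post(self, key, value):
        # Every online site gets the write, keeping the copies identical.
        online = self._online_sites()
        if not online:
            raise RuntimeError("no data center available")
        for site in online:
            site.records[key] = value

    def read(self, key):
        # The first online site acts as the primary for reads.
        online = self._online_sites()
        if not online:
            raise RuntimeError("no data center available")
        return online[0].records[key]

    def restore(self, site):
        # Resynchronize the failed site from the surviving one before it rejoins.
        source = next((s for s in self.sites if s is not site and s.online), None)
        if source is None:
            raise RuntimeError("no surviving data center to resynchronize from")
        site.records = dict(source.records)
        site.online = True
```

In this sketch, marking one site offline leaves reads and writes working against the other site, and `restore` copies the surviving site's data back before the failed site resumes service.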

Why would a company need flexibility in the amount of computing resources it has? Imagine an online retailer (such as Amazon), where most customers are shopping online during the day, but the demand drops drastically during the overnight hours. Similarly, the weekend shopping activity is likely higher than that on the weekdays. In order to efficiently process customers’ connection requests, more servers are needed during demand peak times. Once the demand subsides, the additional servers brought online largely become idle and unused. While Smithfield is not an online retailer, the company still has varying needs for computing resources over time. Fewer servers are needed to process business events data (posted production, customers’ orders, etc.) after normal business hours and the demand for products typically increases at the end of the year (Thanksgiving and Christmas hams). Servers used for software development and testing during daytime become idle at night.
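The value of scaling up and down can be made concrete with a small calculation. The Python sketch below compares a fixed fleet sized for peak demand with an elastic fleet resized every hour. The demand profile and the per-server capacity are invented numbers used purely for illustration; they are not Smithfield or Amazon figures.

```python
import math


def servers_needed(requests_per_hour, capacity_per_server):
    """Smallest number of servers that can absorb the hourly load (at least one)."""
    return max(1, math.ceil(requests_per_hour / capacity_per_server))


def server_hours(hourly_demand, capacity_per_server):
    """Return (fixed, elastic) server-hours for one demand profile.

    fixed:   a fleet sized for the peak hour runs for every hour of the profile.
    elastic: the fleet is resized each hour to match that hour's demand.
    """
    needed = [servers_needed(d, capacity_per_server) for d in hourly_demand]
    fixed = max(needed) * len(needed)
    elastic = sum(needed)
    return fixed, elastic


# Hypothetical 24-hour profile: quiet night, busy business day, moderate evening.
demand = [200] * 8 + [1000] * 10 + [400] * 6
fixed, elastic = server_hours(demand, capacity_per_server=100)
idle_share = 1 - elastic / fixed
```

Under these assumed numbers, the fixed fleet consumes 240 server-hours while the elastic fleet consumes 140, so a little over 40% of the fixed capacity sits idle.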

In addition to the IMS provider’s inability to quickly scale up or down the computational resources, other problems began to gradually appear. The IMS provider’s business model could not compete with cloud services and tenants began abandoning the data center and switching to new cloud server providers that were coming online at a quick pace. Decreased revenues from the exodus of tenants resulted in cost cuts and reduced service by the IMS provider. Needed upgrades for the hardware and equipment were often either canceled or significantly delayed. IT staff were cut and some of the work was moved offshore to less-trained workers, including some of the critical systems monitoring processes. To mitigate this, Smithfield was forced to increase its own IT personnel to validate data backups, perform software patching and updates and other IT work, effectively eliminating any benefits of the vendor-managed data center model.

Because of increased outages, rising support costs to manage the system, and deteriorating service quality, the decision was made to move Smithfield’s SAP system to a public cloud provider. Moving away from the hosted data center model gave the company a more scalable, robust, and reliable platform to support its critical SAP production environment.

What is cloud computing?

The term “cloud computing” was first introduced in 2006, when Amazon announced its first publicly available cloud service, AWS (Amazon Web Services). In the early 2000s, Amazon was quickly expanding its retail operations and Amazon’s online business was growing at a faster pace than the capacity of any pre-packaged software solution. This prompted Amazon to design an IT infrastructure that would have the following characteristics:

- Scalability (ability to add or release computing resources in response to peaks and valleys in demand)
- Easy data backup and disaster recovery
- High service availability in different geographical regions
- Ease of use

When Amazon completed its new infrastructure, it realized that excess computing capacity, built into the system for scalability purposes, was being used only occasionally and sporadically. As a result, in 2006 Amazon offered the S3 (Simple Storage Service), SQS (Simple Queue Service), and EC2 (Elastic Compute Cloud) services publicly as a beta service. Client companies could provision, or “spin up,” additional servers (EC2 service) or reserve additional storage space (S3 service), if needed, and release these resources back into a common “pool” when they were no longer required. The client company would pay only for the computing resources utilized and for the time during which these resources were utilized. Since its inception, AWS has aggressively added new services to its portfolio. As of 2020, AWS offered more than 175 different services to its customers.

Smithfield’s corporate transformation

After a nearly three-decade period of expansion beginning in 1981, Smithfield Foods had acquired many companies. In 2015, Smithfield initiated a project to consolidate and streamline the management and information systems of the company. Instead of having multiple independently operating companies, Smithfield converted to a centralized, vertically integrated organization. The new structure required a single, centralized ERP system to support unified operations. The management team decided to continue using SAP to integrate all of the data and processes from the entire network of company facilities. The IT consolidation project was dubbed “One SAP.” But the corporate transformation and the migration to “One SAP” were only one element of the initiative. Another factor prompting the change was a major update to the SAP system itself. In 2011, SAP AG, the system vendor, released a new version of its system, SAP S/4 HANA. SAP announced that support for SAP R/3 would eventually be discontinued. Customers running the SAP R/3 system therefore had to decide whether to upgrade to SAP HANA or switch to another ERP vendor. Smithfield decided to remain with SAP AG and began planning the implementation of HANA.

SAP S/4 HANA features

A principal change made in SAP S/4 HANA compared to its previous version, SAP R/3, was the design and implementation of its database. The SAP R/3 system, released in 1992, relied on a traditional relational database. For large companies dealing with a large number of transactions, data processing (such as report generation) involved reading multiple tables in a database all at once through table joins, pulling massive amounts of data. This process required a significant amount of system resources and essentially prevented the system from doing anything else while a report was being generated. The speed of a query was also limited by data access times (how long it takes to read the first character after the request is initiated). For example, a traditional hard drive using magnetic disks for data storage has an access time of 5–10 milliseconds. In a solid-state drive (SSD), where data are stored in flash memory, the access time drops to 25–100 microseconds, or up to 100 times faster than the traditional drive. The fastest memory in a computer is Random Access Memory (RAM). RAM chips have an access time of 50–150 nanoseconds or less. In order to take advantage of shorter access times, the SAP HANA system relies on an in-memory database where a significant part of the corporate database is stored in a very large RAM. According to SAP, using an in-memory database allows for a significant acceleration of database processing, where business reports are generated with little to no delay. As of this case writing, Smithfield’s SAP HANA system runs on a physical server with 3 TB of RAM, with plans to expand it to 6 TB in the near future. This RAM caches the most recent transactions (those which occurred during the last 60 days). As transactions become older than 60 days, they are archived into a data warehouse.
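To put the access times quoted above on a common scale, the following Python sketch converts the upper end of each quoted range to nanoseconds and compares them. It is a deliberately simple model: it assumes every access pays the full access time and ignores caching, sequential reads, and parallelism, so it indicates orders of magnitude rather than real query times. The function names and the figure of one million accesses are illustrative assumptions.

```python
# Upper end of each access-time range quoted in the text, in nanoseconds.
ACCESS_TIME_NS = {
    "hdd": 10_000_000,  # 10 milliseconds
    "ssd": 100_000,     # 100 microseconds
    "ram": 150,         # 150 nanoseconds
}


def total_seconds(medium, accesses):
    """Time spent if every access pays the full access time (simplified model)."""
    return ACCESS_TIME_NS[medium] * accesses / 1e9


def speedup(slow, fast):
    """How many times shorter the access time of `fast` is compared with `slow`."""
    return ACCESS_TIME_NS[slow] / ACCESS_TIME_NS[fast]


# Hypothetical workload of one million independent accesses.
accesses = 1_000_000
for medium in ("hdd", "ssd", "ram"):
    print(f"{medium}: {total_seconds(medium, accesses):,.2f} s")
print(f"ssd vs hdd: {speedup('hdd', 'ssd'):.0f}x")
print(f"ram vs ssd: {speedup('ssd', 'ram'):.0f}x")
```

With these figures, a workload that would spend hours waiting on a magnetic disk spends a fraction of a second waiting on RAM, which is the gap an in-memory database is designed to exploit.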

In order to further enhance system performance, the structure of the database itself was modified in SAP HANA. Previous SAP versions relied on a relational database with a large number of tables. The SAP HANA database is designed with fewer tables. For example, all data for financial (FI) and cost accounting (CO) modules are stored in only one table. SAP refers to this database design as “Simple Finance” (S-FIN). Such simplification of the database structure results in fewer table joins being performed, and as a result, a significant acceleration of the data read process. This allowed Smithfield to run some of its end-of-month closing processes without taking precious hours out of the system operations.

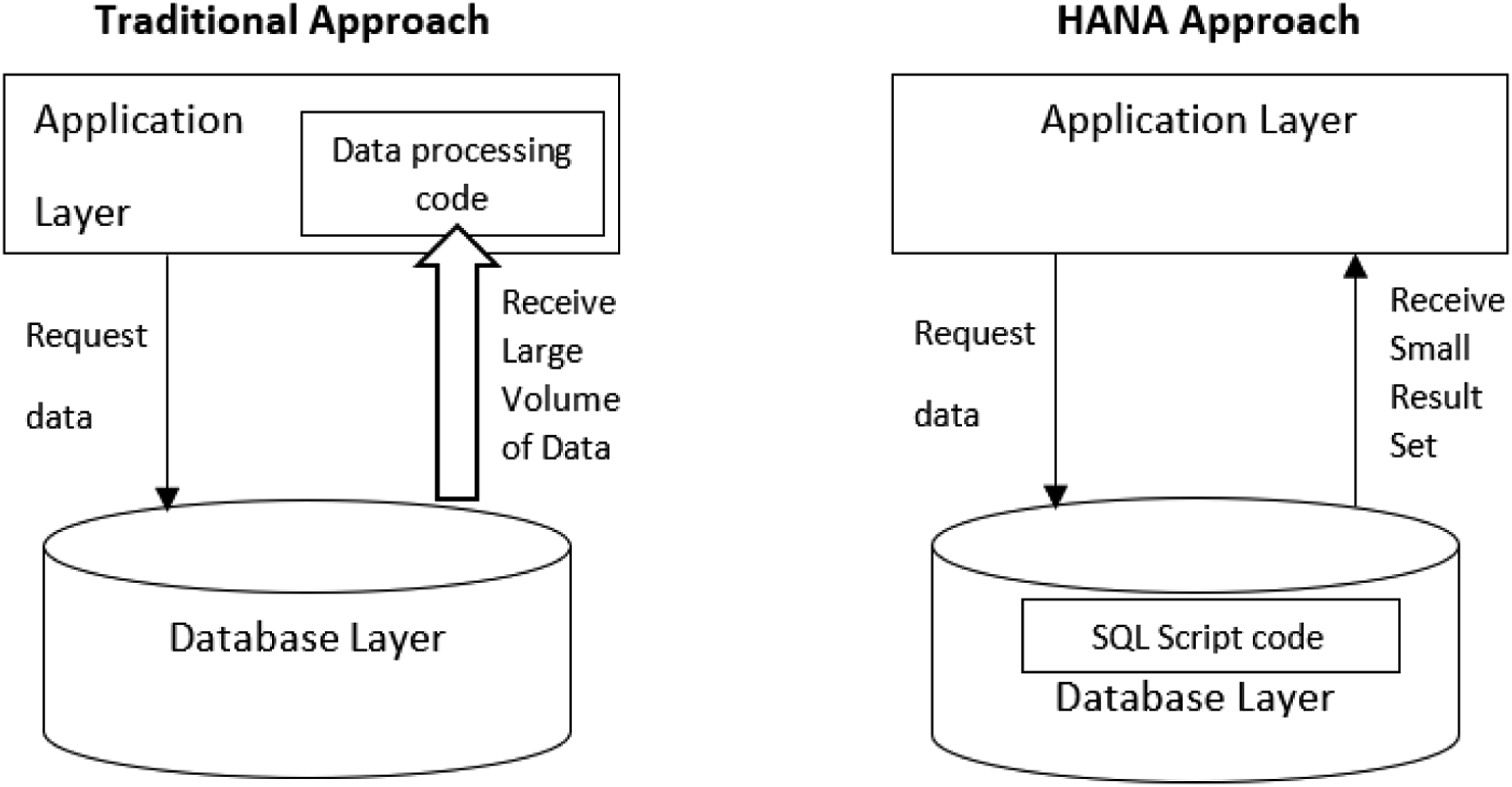

Another modification accelerating HANA database performance was the SQL Script, a dialect of SQL developed by SAP for the S/4 HANA system. Under the traditional approach, when a system’s application layer requests data from the database layer, SQL performs data reads and the database layer returns large volumes of data to the business application. Data processing code in the application layer then performs further manipulations of the data. SAP significantly expanded the SQL statements, creating SQL Script. This scripting language allows developers to move a significant amount of complex business logic from the application layer into the database. When the application layer sends the data request, SQL performs the data reads and the SQL Script performs much of the subsequent data processing within the database layer. The results of the data manipulation, which represent a much smaller data set, are communicated to the application layer. This design minimizes data exchanges between the database and business applications, reducing latency and further improving system performance. Exhibit 2 illustrates the difference between the traditional approach and the HANA approach to data processing.

Exhibit 2. Data Processing Under Traditional Approach versus HANA Approach (source: authors).
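The idea of moving processing into the database layer can be sketched with ordinary SQL. The Python example below uses the standard-library `sqlite3` module as a stand-in; it is not SAP HANA and does not use SQL Script. The `sales` table, its columns, and the sample rows are invented for illustration. Both functions compute the same totals per plant, but the first pulls every row into the application while the second lets the database aggregate and return only the summary.

```python
import sqlite3


def build_db():
    """Create a small in-memory table of hypothetical sales postings."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (plant TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("A", 10.0), ("A", 20.0), ("B", 5.0), ("B", 7.5), ("A", 2.5)],
    )
    return conn


def totals_in_application(conn):
    """Traditional approach: fetch all rows, then aggregate in application code."""
    rows = conn.execute("SELECT plant, amount FROM sales").fetchall()
    totals = {}
    for plant, amount in rows:
        totals[plant] = totals.get(plant, 0.0) + amount
    return totals, len(rows)  # len(rows) = rows moved to the application


def totals_in_database(conn):
    """Pushdown approach: the database aggregates and returns only the result."""
    rows = conn.execute(
        "SELECT plant, SUM(amount) FROM sales GROUP BY plant"
    ).fetchall()
    return dict(rows), len(rows)


conn = build_db()
app_totals, app_rows_moved = totals_in_application(conn)
db_totals, db_rows_moved = totals_in_database(conn)
```

The totals are identical in both cases; what differs is the number of rows crossing the boundary between the database and the application, which grows with the transaction volume in the first approach and with the number of plants in the second.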

Finally, an additional advantage of SAP HANA is a new graphical user interface (GUI). The R/3 system’s user interface had not changed in decades. With the proliferation of new devices and the massive adoption of mobile technology, the SAP R/3 system needed a face-lift to support the many ways a client application can now interface with an ERP system. Each computer configured to work with the SAP R/3 system required an installation of a GUI client application. Any change to the GUI application required an update of all client machines. In order to give mobile users access to the system’s full capabilities, SAP developed a new front end called SAP Fiori. This is a JavaScript-based user interface, which can be executed in a browser on a laptop or desktop computer, a tablet, or a smartphone. Since SAP Fiori runs within the web browser, any updates to UI screens are done on the application server and do not require any updates to client devices.

Data conversion

Generally, there are two types of data contained in an ERP system: master data and transactional data. Master data is relatively stable and does not change frequently. It includes data on the company’s employees, suppliers, customers, etc. One of the most often used master data types is the material master data. It consists of data on raw materials, work-in-progress, and finished inventory. The other data type, transactional data, unlike the master data, is very fluid and changes frequently. Transactions represent the business processes, which include sales orders, purchase orders, inventory receipts, shipments, payments, and many other business events. Every day a company accumulates and records new transactions. At the same time, existing transactions are updated. For example, if the company ships a customer order, the status of the order would be changed to “shipped.” Master data are very closely linked with transactional data, as each transaction must specify the details of:

- who participated in the transaction (which customer placed an order, which employee shipped the order, etc.)
- what assets or resources participated in the transaction (which items were ordered by a customer, which items and what quantities were picked in the warehouse, etc.)
- where the transaction occurred (where the order should be shipped to, from which warehouse the order should be shipped)
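The link between master data and transactional data described above can be sketched as a small data model. In the Python example below, the master records are immutable, while the sales order is a transaction that references them by key and changes status over time. All class names, field names, and sample values are hypothetical and far simpler than the corresponding structures in an actual ERP system.

```python
from dataclasses import dataclass


# Master data: relatively stable records, modeled here as immutable.
@dataclass(frozen=True)
class Customer:
    customer_id: str
    name: str


@dataclass(frozen=True)
class Material:
    material_id: str
    description: str


@dataclass(frozen=True)
class Warehouse:
    warehouse_id: str
    city: str


# Transactional data: created daily and updated as the business event progresses.
@dataclass
class SalesOrder:
    order_id: str
    customer_id: str   # who placed the order
    material_id: str   # what was ordered
    quantity: int
    warehouse_id: str  # where the order ships from
    status: str = "open"


def missing_references(order, customers, materials, warehouses):
    """List the master data records an order points to that do not exist."""
    missing = []
    if order.customer_id not in customers:
        missing.append("customer")
    if order.material_id not in materials:
        missing.append("material")
    if order.warehouse_id not in warehouses:
        missing.append("warehouse")
    return missing


def ship(order):
    """Update the existing transaction once the order leaves the warehouse."""
    order.status = "shipped"


# Hypothetical sample records keyed by their identifiers.
customers = {"C1": Customer("C1", "Example Grocer")}
materials = {"M1": Material("M1", "Smoked ham, 5 lb")}
warehouses = {"W1": Warehouse("W1", "Example City")}
order = SalesOrder("SO-1", "C1", "M1", 40, "W1")
```

A transaction that references a customer, material, or warehouse missing from the master data cannot be fully interpreted, which is one reason master data has to be agreed upon before transactions are converted.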

Before Smithfield could configure and deploy the new SAP HANA, data from all its independently operating companies had to be converted into a single HANA database. To accomplish the conversion, a common format for the master data in the One SAP system needed to be defined. Whenever possible, Smithfield used the industry standard data definitions (such as Universal Product Code or UPC). After the master data format was established, data from each of the independent operating companies had to be mapped and converted into the new format. Each company used its own unique master data definition, requiring each conversion to the One SAP system to be done separately. To perform the conversion, Smithfield used its own teams of data analysts who worked with an external consultant to assist with the data conversion process. A migration software tool was utilized to move the data from multiple disparate databases onto the HANA database. For data conversion, the mapping rules of old data onto new data format had to be set up in the data migration tool. The use of this tool automated and significantly accelerated the existing manual data cleansing process, enabled re-use of data conversion logic, and allowed a larger team of data analysts to work in parallel to clean source data and define mapping rules to match the target system.
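The role of mapping rules in such a conversion can be illustrated with a minimal Python sketch. It is not the migration tool Smithfield used, which the case does not name. The source identifiers (`company_a`, `company_b`), the field names, and the sample record are hypothetical. Each source gets its own rule set that renames its fields into one common target format, and records that cannot be fully converted are reported as errors rather than silently loaded.

```python
# Hypothetical mapping rules: source field name -> common target field name.
MAPPING_RULES = {
    "company_a": {"item_no": "material_id", "descr": "description", "upc_code": "upc"},
    "company_b": {"MaterialID": "material_id", "Text": "description", "UPC": "upc"},
}


def convert(record, source):
    """Convert one source record to the common format.

    Returns (converted_record, errors). A missing or empty source field is
    recorded as an error instead of being filled with a guessed value.
    """
    converted = {}
    errors = []
    for old_field, new_field in MAPPING_RULES[source].items():
        value = record.get(old_field)
        if value is None or str(value).strip() == "":
            errors.append(f"{source}: missing value for '{old_field}'")
        else:
            converted[new_field] = str(value).strip()
    return converted, errors


def convert_all(records, source):
    """Convert a batch and separate clean records from those with errors."""
    clean, failed = [], []
    for record in records:
        converted, errors = convert(record, source)
        if errors:
            failed.append((record, errors))
        else:
            clean.append(converted)
    return clean, failed
```

Because the rules are plain data, the same conversion logic can be rerun whenever the development or test systems are refreshed, and several analysts can maintain the rule sets for different source companies in parallel.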

The use of the data migration tool also allowed the data conversion code to be developed faster and permitted the project team to refresh the data in development and test systems much more frequently than in past projects. This gave the data conversion team more opportunities to practice and perfect the conversion process. It also allowed the project team to operate with “fresher” data than in past projects. During the data conversion process, it is not unusual for some data records to convert incorrectly and be reported as errors. The data migration tool yielded a conversion error rate 90% lower than that of the conversion methods used in the past.

In addition to the data migration tools, Smithfield also leveraged other data stewardship tools to provide an ongoing governance of master data, such as customers, products, materials, and supplier data. Data governance is important to ensure that if any changes to the database structure are done in the future, they will not compromise the integrity of the existing data. The data stewardship software allowed Smithfield to replace an iterative process of emailing spreadsheet templates with a web-based application featuring a built-in workflow system. The new process would automatically send all changes and updates to the correct people for input, review, and approval. It eliminated the “lost” requests that often occurred due to the numerous email threads created through the back-and-forth approval process. The new data stewardship software allowed the Smithfield data governance team to see exactly where any given request sits in process at any time. The new data governance process significantly improved “time-to-market” for data changes.

SAP HANA implementation: brownfield versus greenfield approach

Once all the data from each of Smithfield’s independently operating companies was merged into a single database, the SAP system needed to be configured to fit Smithfield’s business requirements. Generally, companies prefer installing ERP systems with a minimal amount of changes and custom programming. There are two reasons for this approach. First, when changing parts of a tightly integrated ERP system, there is always a risk that some of the modules’ interdependencies will be broken. Second, system customization comes with a significant cost and time requirement. To configure and deploy the “One SAP” system, Smithfield contracted SAP for technical expertise, and an SI (System Integration) partner. Anderson and her team needed to decide how the SAP HANA system would be configured and deployed. One SAP had to literally become the one way of doing business for all company facilities. But each independently operating company had a long-established way of conducting business processes.

The transition team could either customize the SAP HANA system or use the base, or default, processes that SAP provided. These options are called the Brownfield and Greenfield approaches, respectively. Under the Brownfield approach, the existing SAP system is gradually converted to S/4 HANA. During such a conversion, the company can keep the business processes it was using before, along with any custom programming changes. Not all of the existing custom code has to be migrated into the new SAP HANA system. Instead, the Brownfield approach allows the flexibility to evaluate and modify the existing business processes to fit the default SAP HANA business processes, or to keep the ones that are working for the company.

With the Greenfield approach, the SAP system is starting fresh with a clean slate. This approach focuses on minimizing the custom ABAP programming (ABAP is the programming language the SAP system is built with). Unlike the Brownfield approach that allows system customization to incorporate existing processes into the new system, the Greenfield approach implies the re-engineering of company’s business processes to adapt to the default business processes in SAP HANA. The process of changing the way business processes are organized and executed in the company is known as Business Process Re-Engineering (BPR). Under the Greenfield approach, minimal programming changes are done to the SAP system, and most, if not all, of the customization done on the previous SAP version implementation will be wiped away. Since the Greenfield SAP implementation requires a new system configuration (as opposed to the conversion of the old and proven system), it is a riskier approach than Brownfield.

The conversion team met with the liaisons from each of the largest independently operating companies who each advocated for their approach to be used as the new standard for performing and recording business processes. Based on these conversations, the team realized the best approach was to use the default SAP configuration as much as possible, while changing the business processes in packing plants to fit the SAP HANA system. For Smithfield, using the Greenfield approach for the new SAP HANA system presented fewer challenges than opting for the Brownfield method. The primary goal of the “One SAP” project was to eliminate multiple business processes used by different Smithfield companies and implement an enterprise-wide standard to be used across all Smithfield facilities. Business processes implemented in SAP HANA are based on best practices and industry knowledge, accumulated by SAP through numerous system deployments. The SAP HANA offered the most modern data structures to store business data. “It was the Simple Finance, which SAP HANA offers, that was by itself a good enough technical reason to go with the Greenfield approach” according to Anderson.

The benefits brought by SAP HANA included companywide real-time data availability. With the old SAP R/3 system, the independently operating companies were each running their own instance of the SAP system. Generating reports using the most current data required the data to be first loaded into the data warehouse, which took time. Since the HANA database keeps recent transactions in memory rather than on slower disk storage, the most recently posted data is immediately available for report generation. Customer service and sales departments have real-time information on where the product is available or scheduled for production and can offer customers the best shipping rates and fastest delivery options. All purchasing, planning, and pricing information became centralized and transparent. This allowed Smithfield to lower its procurement costs.

Moving SAP to a public cloud provider

With the decision to move to the new SAP HANA system in 2015, the management team also decided to abandon the hosted data center model and move the system to a public cloud provider. Smithfield was about to undertake a major overhaul of its information system, which represented a big risk. The complexity and risk associated with the decision to transition to a new SAP platform were compounded by the simultaneous transfer of the company’s system to the public cloud. Some CIOs state that the complexity and dangers of moving an ERP system to the cloud are similar to those of a heart transplant. In order to make the transition as smooth as possible, Smithfield sought out expertise through channels such as the Americas’ SAP Users’ Group (ASUG), an independent network of SAP customers, partners, and industry professionals. Anderson’s team could not find anyone in these communities who had attempted such a system overhaul before.

An unsuccessful ERP implementation can be very costly for a company and, in extreme cases, can cost the company its business. What gave Smithfield the confidence that converting to the new SAP HANA system, while simultaneously moving it to a public cloud, would work? “It was a buy-in from the top management, combined with the belief that the technology was mature and capable enough to make the conversion possible,” said Anderson. Smithfield’s top executives had a clear understanding of what it would take to convert a company of Smithfield’s size to a completely new information system, running on the cloud, and they were fully supportive and determined to make this change.

An ERP system can be deployed by a company in several different ways. First, it could be deployed “on-premises” in a data center, owned and operated by the company. Second, it could be deployed fully in the cloud, when the system is configured to run in a data center of a cloud service provider, and the client only pays for computing services consumed. Finally, the system could be deployed in a hybrid mode, with some business applications and databases running on client premises, and the remainder in the data centers of a cloud provider. The hybrid model can be an attractive option for companies which are not willing to give up control over their business data. Although cloud service providers promise high availability of their services, frequent data backups and protection against unauthorized access, the idea of keeping all business data, the lifeblood of a company, in the data center operated by a third party might be unsettling. For the conversion of the system to the public cloud, Smithfield selected a public cloud provider recommended by SAP.

Personnel training and converting to SAP HANA

A critical element of conversion to a new system is user acceptance. Resistance to change is human nature and deployment of a new enterprise-wide system is no exception. Employees oftentimes feel that the new system is being pushed onto them, while the old system worked just fine. Another issue is end-user training. In order to transition to a new system successfully and with minimal disruptions, all employees who will be using the new system must be educated and trained on the system features, screen layouts, reporting system, etc.

Smithfield took an enterprise-wide approach to training all end-users on the new “One SAP” platform. An Organizational Change Management (OCM) team was formed, which worked with key stakeholders at each facility to identify all personnel to be trained and ensure they were assigned the correct SAP business roles. The goal of the OCM team was to ensure the transition was as efficient as possible. As former CIO Anderson explains, “when installing and deploying an ERP system, you cannot say to the users ‘just do it,’ you have to win people’s hearts and minds.”

The OCM team organized once-a-month town hall meetings at each facility with Smithfield’s top executives as guest speakers. These meetings addressed basic questions about the conversion to the new system, such as “why is Smithfield doing this, what is in it for you, and why is this important for the company?” Anderson and her team visited all of the company’s processing facilities. They met with employees and explained all the changes coming to the information system with the rollout of the new SAP system. Companywide instructor-led training was conducted to ensure each employee was trained using a consistent approach that was specific to the SAP process they would be interfacing with in the HANA system. At the beginning of each training session the conceptual context was presented, followed by hands-on transactional exercises in which the instructor demonstrated the process and the users then practiced the transactions. In addition, virtual content (job aids) was developed using a third-party recording tool which captured the end-to-end process of an SAP transaction. All training documentation was posted to an online knowledge base for reference by end-users. Monthly newsletters and periodic email updates to the plants and farms were instituted to keep all employees informed of the changes as the SAP HANA conversion project moved forward.

Once the SAP HANA system was configured and deployed on a public cloud platform, Smithfield implemented a three-phase conversion of meat packing plants onto the new system. A selected group of plants in the Midwest was converted first to the SAP S/4 HANA platform in October 2017, while other plants were still running on their old systems. The conversion was followed by a hypercare period that ran for a few months. During the hypercare period, an elevated level of support was offered to the converted business units to ensure seamless adoption of the new system and prompt attention to any issues that arose.

In the second phase, three meat packing plants of a newly acquired company were added to the “One SAP” system. In addition to rolling out “One SAP” to the plants’ operations, the personnel at the three plants had to be fully integrated into and aligned with Smithfield’s systems, including the human resources and time and attendance management systems. Smithfield communicated all company policies, as well as retirement and benefits options, to the new employees. Applying the lessons learned from the first phase of the SAP conversion mitigated resistance and improved user adoption of the system.

By the time of the third conversion phase, which covered the remaining packing plants and was scheduled for July 2018, the project team had a well-oiled process and end-users were fully aware of the previous two transitions. This allowed for a smooth conversion, wrapping up a multi-year project.

Reporting improvement

According to Anderson, the generation of standard reports built into the SAP system became significantly faster with the conversion to S/4 HANA. This was a result of the improved and simplified structure of the in-memory database. For analytics purposes, the transactional data are copied into a data lake, a storage repository that contains a vast amount of historical business data. The data lake allowed Smithfield to design and generate interactive charts and dashboards, as well as “traditional” paginated printable reports. To enable data users to create any required reports, Smithfield adopted a low-code/no-code approach. Under this approach, the software tools provide a visual, “drag-and-drop” user interface, making it easy for a user to build the required reports quickly and with minimal (if any) coding. Low-code/no-code software tools tend to be easy to learn, and employees can be trained to use them in a short amount of time. An additional benefit of the low-code/no-code approach is that business end-users are empowered to create their own reports without requesting assistance from the IT group, as they had to in the past. This allows programmers and other IT professionals to concentrate on tasks more relevant to the maintenance and enhancement of the corporate information system.

Integrated procurement, production planning and distribution

How does one judge a success or a failure of a new system implementation? One way is to compare costs and benefits. Benefits brought by a new system should outweigh the cost of the system acquisition. While the cost of the new system is easy to estimate from the invoices paid by the company, quantifying the benefits is not as straightforward. Usually the benefits come in the form of efficiencies, reduced costs, and higher sales due to improved customer service and product quality. However, the biggest cost savings observed by Smithfield came from the supply chain, logistics, and transportation optimization.

Smithfield Foods is a vertically integrated company, meaning its value chain includes every element of the pork production and distribution process—from its hog farming operations and processing plants to its transportation and distribution networks. In addition to its company-owned farms, Smithfield purchases hogs from independently contracted hog farmers. From a logistics standpoint, Smithfield operates a three-tiered supply network: hogs are shipped from farms to processing plants; finished products are shipped to distribution centers and warehouses; and products are then delivered to customers.

After the SAP HANA system was implemented and deployed, Smithfield obtained a single, companywide view of suppliers’ prices, production plans, inventory availability, storage capacity and customers’ demand. This allowed the company to optimize the entire supply chain and make informed decisions about sourcing hogs, where to transport and process them, and where to store the finished product.

Path to a multi-cloud configuration

In 2020, five years into its cloud journey, Smithfield started to consider moving away from a single cloud provider to a multi-cloud model in which applications and data are deployed on-premises and across several cloud providers. There were a number of reasons behind this decision. First, by 2020, all major cloud providers had developed the expertise and capability to host and support clients’ ERP systems. Second, the cloud industry had seen tremendous growth, with more service providers entering the field and new and more robust services and capabilities being offered. The largest cloud providers offer better technology because they are able to invest more in their cloud infrastructure and services. In this environment, Smithfield’s first cloud provider had not matured to full cloud capabilities to the same extent as, for example, AWS, Azure, and Google had. Third, relying on a single provider has the downside of depending too heavily on one cloud company, which gives that company leverage to price its services more aggressively. Finally, a multi-cloud configuration would allow the company to split the workload between multiple cloud providers and shift it dynamically from one cloud to another, should the need arise.

By 2020, Smithfield already had a significant relationship with a major public cloud provider, using cloud servers for software development and quality assurance. In order to connect users to data and applications running on-cloud and on-premises, Smithfield contracted with a global digital infrastructure company that has established high-speed private network connections to all major cloud providers. Setting up such network connections would be difficult for any company with a portfolio of operations as diverse as Smithfield’s. Smithfield intended to leverage the private connection network created by a digital infrastructure company to enable its multi-cloud strategy.

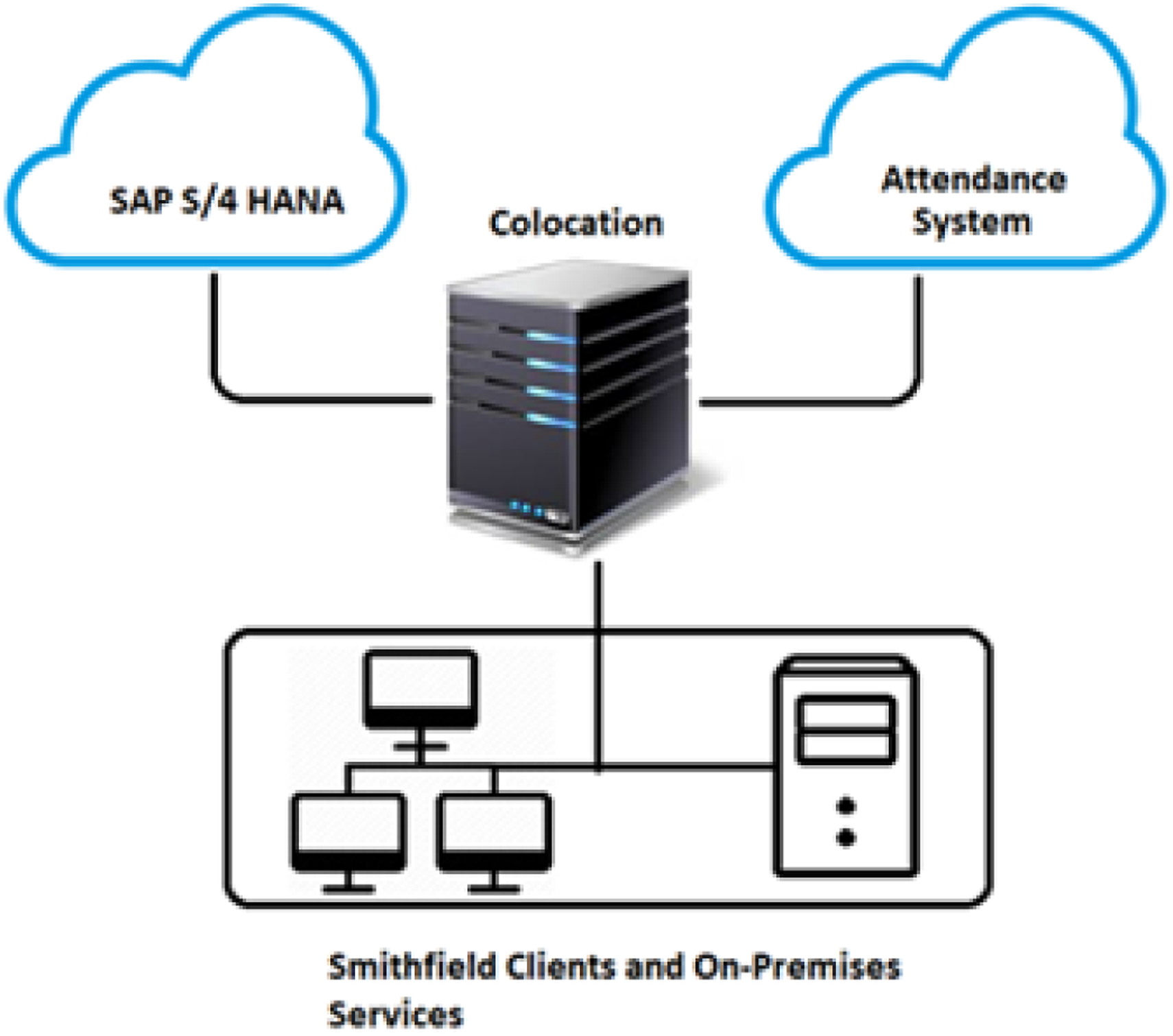

Exhibit 3. Data routing using colo (source: authors).

For example, Smithfield may have the SAP HANA system running on a cloud server that needs to share employee data with a third-party application, such as a personnel attendance and time management system running on another cloud provider’s server, as shown in Exhibit 3.

Data requests and communications are routed by a network hub, called colocation (or simply colo). A data transfer from the attendance and time management system running on a cloud server to a Smithfield data server running on-premises goes from the cloud to the colo, and then from the colo to the Smithfield data server. The multi-cloud strategy adopted by Smithfield is well supported by its partnership with the digital infrastructure company. As long as the colocation provider has established a connection with the selected cloud provider, that provider’s servers can be easily integrated with the applications and databases running on any other cloud partner. Smithfield is using two colos, one on the East Coast and one on the West Coast, in order to optimize the routing of requests.
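The hub-style routing described above can be illustrated with a minimal sketch. The endpoint names, the assignment of endpoints to colos, and the direct colo-to-colo hop are all assumptions made for illustration; the case does not describe Smithfield’s actual topology at this level of detail.

```python
# Hypothetical mapping of endpoints to the colo they connect through.
# These names are illustrative only, not Smithfield's real systems.
COLO_FOR_ENDPOINT = {
    "cloud-a/sap-hana": "colo-east",
    "cloud-b/time-attendance": "colo-east",
    "on-prem/data-server": "colo-east",
    "cloud-c/dev-qa": "colo-west",
}


def route(source, destination):
    """Return the list of hops from source to destination via the colo hub(s).

    Traffic never goes directly between endpoints: it always enters the
    colo attached to the source and leaves from the colo attached to the
    destination. A colo-to-colo hop is added only when the two differ
    (an assumption of this sketch).
    """
    src_colo = COLO_FOR_ENDPOINT[source]
    dst_colo = COLO_FOR_ENDPOINT[destination]
    hops = [source, src_colo]
    if dst_colo != src_colo:
        hops.append(dst_colo)
    hops.append(destination)
    return hops


if __name__ == "__main__":
    # Cloud-hosted attendance system sending data to the on-premises server.
    print(" -> ".join(route("cloud-b/time-attendance", "on-prem/data-server")))
```

Under this design, adding a new cloud provider amounts to adding one entry to the mapping, which mirrors the point that any provider connected to the colo can be integrated with the rest.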

Closing remarks

Moving an information system to the cloud has the major advantage that the system can be scaled up easily as needed. When a company acquires a new business, it needs more data storage, more servers, more CPUs and more RAM to support the additional users who require access to the system. A cloud environment makes this process easier, even though cloud providers sometimes market more capabilities than they can deliver. Cloud computing is “computing capacity on demand,” whatever this capacity may be. Ramping up the number of virtual servers to add more users and data comes without having to invest in infrastructure.

One of the key factors in Smithfield’s decision to move the system to the cloud was the quality of service. Increasingly frequent service outages were a pivotal point in the company’s IT strategy change. Does that mean that after moving to the cloud, there are no service interruptions? They still happen in the form of network outages, fiber outages, and database and application problems. But since the conversion to the cloud environment, there has never been a system-wide outage like the ones experienced with a hosted data center. The transition to a public cloud provider allowed downtime to be more controlled. Whenever maintenance, software updates, or hardware upgrades were anticipated, the cloud provider notified Smithfield before the planned outages were executed.

Even when a company moves to a cloud service provider, occasional problems can still occur. For example, the servers at a cloud provider may become overloaded, and users may observe an increase in network latency. If connectivity to the cloud servers is lost as a result of a network or application failure, contingency plans are in place. To ensure business continuity in the event of a network outage, there is a redundant backup network. If the failure lies with the cloud provider rather than with a business application, the provider will move the workload to a different data center within 5 minutes, using its own infrastructure.

Potential problems like these have to be addressed in the system design phase. A fail-safe plan should be built into the system to allow the business to keep functioning when a failure happens. This applies not only to the infrastructure components of the system (network, power supply, hardware), but to the applications as well. For example, if a database is not accessible for data posting, there should be a temporary storage capability where the data are held. When the connection to the database is restored, the held data are then posted to the database.
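The temporary-storage idea in the preceding paragraph is often called store-and-forward. The following is a minimal sketch of that pattern, not a description of Smithfield’s implementation; the class names `BufferedWriter` and `FlakyDatabase` are hypothetical, and the buffer here is held in memory for brevity, whereas a production system would presumably use durable storage.

```python
from collections import deque


class FlakyDatabase:
    """Hypothetical stand-in for a database that can become unreachable."""

    def __init__(self):
        self.online = True
        self.rows = []

    def post(self, record):
        if not self.online:
            raise ConnectionError("database unreachable")
        self.rows.append(record)


class BufferedWriter:
    """Store-and-forward writer: holds records while the database is down."""

    def __init__(self, post):
        self._post = post        # callable that posts one record or raises ConnectionError
        self._pending = deque()  # temporary storage, oldest record first

    def write(self, record):
        """Queue the record, then try to deliver everything that is queued."""
        self._pending.append(record)
        self.flush()

    def flush(self):
        """Deliver queued records in order; return True if the queue is empty."""
        while self._pending:
            try:
                self._post(self._pending[0])
            except ConnectionError:
                return False     # keep the record and retry on a later flush
            self._pending.popleft()
        return True

    @property
    def pending(self):
        return len(self._pending)


if __name__ == "__main__":
    db = FlakyDatabase()
    writer = BufferedWriter(db.post)
    writer.write("posting-1")
    db.online = False            # simulate an outage
    writer.write("posting-2")
    print("pending during outage:", writer.pending)
    db.online = True             # connection restored
    writer.flush()
    print("rows after recovery:", db.rows)
```

A record is removed from the buffer only after the post succeeds, so an outage in the middle of a flush does not lose data, and records reach the database in the order they were written.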

As Anderson stated, “Smithfield went from an on-premises data center to IaaS and finally to a multi-cloud PaaS IT model. It does not mean that Smithfield’s way is right or wrong. This was just our approach.”

Questions for discussion

1) How did the cloud industry evolve, and what are the current major cloud companies?

2) What are the most popular services provided by cloud providers?

3) What was the advantage of a Greenfield implementation over a Brownfield implementation for Smithfield? What would the migration path from the SAP R/3 system to S/4 HANA have been if Smithfield had chosen the Brownfield approach?

4) Discuss the pros and cons of moving the system into the cloud versus leaving it on-premises in a company-owned data center.

5) What is the difference between Master data and Transaction data?

6) Smithfield went through the transformation of its information system from on-premises to IaaS to PaaS. Why did the company not take the last step to SaaS?

Footnotes

Acknowledgements

This case was made possible through the generous cooperation of Julia Anderson, Global Chief Information Officer, Russell Hackworth, Senior Enterprise Architect, Matthew Groves, Director of IT Technical Services, Len Nicholson, Vice President IT Business Services and Transformation, and Michael Weyhmueller, IT Director Infrastructure Operations. All positions are listed as of the time of case writing. We are also deeply grateful to Laura Walsh, Smithfield Chief Information Officer as of 2021, for her assistance with case review and editing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.