Abstract

Research on the automated assessment of mental disorders has primarily focused on adult participants and on behaviors on the individual level. We propose an approach to automatically assess the severity of children’s behavioral, emotional, and social problems from videos of face-to-face parent-child interaction. Children’s behavioral, emotional, and social problems were quantified using the Child Behavior Checklist (CBCL), focusing on the two broad categories “internalizing” and “externalizing” and the more specific categories “anxious”, “withdrawn”, and “aggressive”. Our experimental data comes from a cohort of 81 8- to 10-year-old children and their parents. We constructed features to represent the nonverbal face and head behaviors of the parents and children, combined them with the children’s symptom scores, and then fed these data to binary classifiers to make broad estimations of symptom severity. Prediction performance was good only for anxiety scores, although the prediction of withdrawal and internalizing scores did show some promise as well. We moreover identified the behaviors that were most informative in the context of predicting anxiety and withdrawal and investigated how they were influenced by symptom severity and topic of conversation. Our results exemplify how machine learning and computer vision can be used to gain further insights into child psychopathology.

Introduction

Current methods to assess mental disorders depend primarily on clinical interviews and self-report scales in which psychological dysfunction is reported by either the affected individual or by someone else (e.g., their caregiver). Clinical interviews are time intensive, difficult to standardize across settings, and inherently subjective, while self-report scales are limited by various factors, such as the patient’s reading ability and differences between how clinicians and patients conceptualize their symptoms (Hinduja et al., 2024). They can be influenced by patients’ recall biases (e.g., downplaying or overestimating one’s symptoms), cognitive limitations (e.g., problems with memory), and social stigma (Low et al., 2020). Increased objectivity in the assessment of mental disorders may thus be achieved by examining behavior in a manner that is less susceptible to such biases. Nonverbal behaviors, for example, are often under less voluntary control than verbal behaviors and can sometimes be early and reliable indicators of disorders (Philippot et al., 2003). As such, the observation and measurement of nonverbal behaviors may be used to support existing methods of clinical screening and diagnosis.

The traditional approach to detecting nonverbal behaviors has been through manual annotation by trained human annotators, which is extremely time consuming and often requires compromises, such as annotating fewer frames or investigating fewer contexts. The automatic detection of nonverbal behaviors can be an efficient alternative, with many pre-built software toolkits readily available (e.g., Baltrušaitis et al., 2018; Bredin, 2023; Cao et al., 2017; Hinduja et al., 2023; Lugaresi et al., 2019; Onal Ertugrul et al., 2024; Plaquet & Bredin, 2023). These methods also have the potential for increased objectivity and representativeness, as it is much more feasible to annotate behavior from multiple angles and multiple contexts with automated methods than it is manually. The automated assessment of mental and neurodevelopmental disorders from multimodal cues has thus become an important topic in the field of affective computing.

Considerable focus has been placed on the automated detection of depression (see Girard & Cohn, 2015; Pampouchidou et al., 2019, for reviews), anxiety (see e.g., Tayarani-N & Shahid, 2025), and autism spectrum disorder (see de Belen et al., 2020, for a systematic review). This body of research, however, has two important shortcomings. First, there exists little research investigating nonverbal cues and their relation to psychopathology in children. Such research is crucial, because the patterns of nonverbal behavior that characterize specific disorders in children are likely to differ from those of adults (Kazdin et al., 1985). Second, the dyadic context of behavior has often been overlooked. Studies have focused on the nonverbal behaviors of individuals in isolation. This can be problematic, as most nonverbal behaviors occur and have meaning in the context of interaction. Even in clinical interviews, prospective patients observe and react to the behavior of the interviewer. To further our knowledge about the subtle nonverbal cues that mark mental disorders, it is important to consider the dyadic nature of those cues (e.g., Bey et al., 2024; Bilalpur et al., 2023; Isaev et al., 2024).

To address the above-mentioned shortcomings, we propose an approach to automatically assess the severity of children’s behavioral, emotional, and social problems from videos of face-to-face parent-child interaction. These problems are commonly grouped under two broad categories: internalizing problems and externalizing problems.

Automated methods for assessing parent-child interactions exist and have been used to investigate constructs such as engagement level, interaction quality, and attachment style of children from infancy to adolescence (see Karaca et al., 2024, for a review). Important early work was done by Rehg et al. (2013), who introduced a multimodal dataset of adults and young children engaged in an interactive play protocol designed to assess socio-communicative milestones in the first two years of life. Studies utilizing automated methods with child participants have primarily focused on neurodevelopmental disorders such as attention-deficit/hyperactivity disorder and autism spectrum disorder (e.g., Bey et al., 2024; Isaev et al., 2024), whereas affective and conduct disorders have seen little attention. A notable exception is the work of Halfon et al. (2021), who developed a multimodal system to automatically assess children’s anger, anxiety, pleasure, and sadness levels from videotaped psychodynamic play therapy sessions. They used facial features extracted from videos together with transcripts of the sessions, and analyzed these for affect levels. Overall, pleasure was the best-predicted affect, and its prediction was best when video and text data were used together. Anger, anxiety, and sadness, on the other hand, were predicted well from text data only, and facial analysis alone was not sufficient. The natural play setting also made it difficult to capture enough faces from a single camera viewpoint.

In the present study, we aimed to automatically assess the internalizing and externalizing problems of children, as measured by the CBCL, from videos of parent-child interaction. Building on previous research and gaps in the literature, we incorporated a wide range of nonverbal behaviors related to facial expressions, head orientation, and gaze direction, and examined behavior on both an individual and dyadic level. Although hand and body gestures are widely used and often have communicative power (see e.g., Hessels et al., 2025; Holler et al., 2009; Hostetter, 2011), and body posture data has previously been utilized in automatic depression detection (e.g., Joshi et al., 2013), the videos in our dataset only contained information from the shoulders up and did not allow for the inclusion of hand gestures or body posture (except for head movements). The specific research questions we investigated were: (1) Can we automatically predict children’s “internalizing”, “externalizing”, “anxious”, “withdrawn”, and “aggressive” syndrome scale scores from the face and head dynamics, eye gaze, and facial expressions of parents and children while they interact with each other? (2) If so, which features contribute most to prediction performance and (3) how are they represented when examined back in the context of nonverbal behavior during parent-child interaction? The study was exploratory, and no specific hypotheses were made.

Method

Dataset

The dataset used in the present study was collected as a separate add-on experiment in the Child & Adolescent cohort of the YOUth study conducted at Utrecht University (Onland-Moret et al., 2020). A complete description of participant recruitment and demographics, the technical details of the setup (e.g., data synchronization), and experimental procedure is presented in Holleman et al. (2021), with additional technical details found in Hessels et al. (2017).

Participants

The dataset consisted of 81 parent-child interaction videos. Participating children were between 8 and 10 years of age (M = 9.34 years), with 55 of them female (68%). Parents had middle to high education levels, representative of the overall relatively high socioeconomic status in the YOUth study compared to the general Dutch population (see Fakkel et al., 2020), and were aged between 33 and 56 years (M = 42.11 years), with 64 of them female (79%). The average family/household size of the sample was 4.27 residents (SD = 0.71 residents). Out of the 81 children, 70 had two parents or caregivers (92%), 7 had no siblings (9%), 42 had one sibling (55%), 23 had two siblings (30%), and 4 had three siblings (5%). All parents provided written informed consent for themselves and on behalf of their children. The study was approved by the Medical Research Ethics Committee of the University Medical Center Utrecht and is registered under protocol number 19–051/M.

Setup

The parent-child interaction videos were recorded using a dual eye-tracking setup consisting of two web cameras, two eye trackers, and two computer monitors (i.e., a dual eye-tracking setup with screens, using the terminology introduced in Valtakari et al., 2021). The parents and children sat on opposite sides of the setup in front of their respective computer monitors. The cameras were placed behind half-silvered mirrors and recorded at a resolution of 800 x 600 px at 30 Hz, allowing the parent and child to view each other through live video feeds of the cameras, which were presented at a resolution of 1024 x 768 px on their respective computer monitors. The camera feeds were saved with timestamps. Eye movements were recorded at 120 Hz using SMI RED eye trackers, and two AKG C417-PP Lavalier microphones were used to record the speech of both the parent and the child. Although physically present in the same room, participants viewed each other through computer monitors. One might argue that conversing in the setup was not completely representative of a typical face-to-face conversation. On the other hand, the nature of the setup allowed for fully frontal face data acquisition, which was beneficial for our automated analyses. Participants seemed to quickly get used to conversing in the setup. We have no reason to believe that some participants perceived being in the setup differently than others.

Procedure

Each dyad underwent two conversation scenarios in a fixed order. The “conflict” scenario had the parent and child discuss a recent disagreement and try to agree on possible solutions for solving it, while the “cooperation” scenario had them discuss an occasion that they would like to organize a party for and the activities that it would entail. Dyads were instructed to discuss each scenario for approximately five minutes.

Questionnaire

The CBCL is a widely used diagnostic screening questionnaire which contains 120 items representing typical problem behaviors of children. Parents rate each item on a 3-point scale (0: not true; 1: somewhat or sometimes true; 2: very true or often true for their child, see Achenbach & Ruffle, 2000). Items are categorized under different syndrome scales, allowing various dimensions of problematic behavior to be measured. The “internalizing” syndrome scale is a combination of the “anxious”, “withdrawn”, and “somatic complaints” syndrome scales. The “anxious” syndrome scale contains items that describe behaviors such as feeling fearful, worthless, and nervous. The “withdrawn” syndrome scale contains items related to behaviors such as feeling sad, not talking, and not enjoying things. The “somatic complaints” syndrome scale consists of specific somatic problems, such as feeling dizzy or tired. The “externalizing” syndrome scale is a combination of the “aggressive” and “rule-breaking” syndrome scales. The “aggressive” syndrome scale consists of items related to behaviors such as arguing, being mean, and destroying things, while the “rule-breaking” syndrome scale consists of items related to behaviors such as stealing and lying. We included both the “internalizing” and “externalizing” syndrome scales for an overall view, as well as the specific syndrome scales “anxious”, “withdrawn”, and “aggressive” to further differentiate between specific problems. For additional examples of items on CBCL syndrome scales we refer the reader to Achenbach and Ruffle (2000) and the CBCL manual (Achenbach & Rescorla, 2001).

Final Dataset

CBCL data was available for 68 of the 81 parent-child dyads, for which 46 of the children were female (67%) and mean child age was 9.37 years. Of those 68 dyads, 63 dyads completed the conflict scenario, while all 68 dyads completed the cooperation scenario. Since each conversation scenario resulted in one video recording per participant, the final dataset consisted of 131 video recordings. Video recordings for the conflict scenario had an average duration of 4.67 min (SD = 0.46 min, range = 3.43–5.93 min) and video recordings for the cooperation scenario had an average duration of 4.95 min (SD = 0.47 min, range = 3.07–6.06 min).

Video and Eye Tracker Data Analysis

To extract frame-level behavioral data, each frame of each video recording was first processed using two automated facial behavior analysis toolkits: OpenFace (2.0; Baltrušaitis et al., 2018) and PyAFAR (Hinduja et al., 2023; Onal Ertugrul et al., 2024). OpenFace was used to estimate head pose and gaze direction. When tested against ground-truth head pose and gaze data collected at distances comparable to normal screen viewing, OpenFace estimated head pose with a mean absolute error of 2.60° to 3.20° and eye gaze with a mean absolute error of 9.10° (Baltrušaitis et al., 2018). In practice, this means that its head pose estimations are rather reliable, whereas its eye gaze estimations leave a lot of room for improvement, but can potentially be used with sparse stimuli (for a validation, see Valtakari et al., 2023). PyAFAR was used to detect the occurrence of facial action units (henceforth AUs), representing specific facial muscle movements that are based on the facial action coding system (Ekman & Friesen, 1978). Importantly, AUs can be related to the expression of emotions. The combined activation of the cheek raiser (AU 6) and lip corner puller (AU 12), for example, represents a genuinely felt smile (i.e., a Duchenne smile, see Ekman et al., 1990). The adult version of PyAFAR detects the activation of 12 AUs (Hinduja et al., 2023). Given the nature of our dataset and previous PyAFAR evaluations, we expect average detection performance for AUs 6, 12, 14, and 15 to be around 0.89 or slightly lower due to cross-domain differences (Onal Ertugrul et al., 2019). Higher performance is anticipated for AUs 6 and 12, and lower for AUs 14 and 15 (Hinduja et al., 2023; see also Girard et al., 2017; Zhang et al., 2016, for the details of the GFT and BP4D+ databases PyAFAR was tested on, respectively). Lastly, the gaze data recorded by the eye trackers were downsampled and matched to the video frames using their respective timestamps.
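The timestamp-based matching in the final step can be illustrated with a minimal sketch. The text does not specify the downsampling rule, so nearest-timestamp selection is an assumption here, as are the function name `match_gaze_to_frames` and the toy data (120 Hz gaze samples, 30 Hz video frames).

```python
from bisect import bisect_left


def match_gaze_to_frames(frame_times, gaze_times, gaze_values):
    """For each video frame timestamp, pick the gaze sample closest in time.

    Assumes gaze_times is non-empty, sorted ascending, and on the same
    clock as frame_times (an assumption for this sketch).
    """
    matched = []
    for t in frame_times:
        i = bisect_left(gaze_times, t)
        # Candidates: the sample just before and just after the frame time.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(gaze_times)]
        best = min(candidates, key=lambda j: abs(gaze_times[j] - t))
        matched.append(gaze_values[best])
    return matched


# Hypothetical data: timestamps in seconds.
gaze_times = [k / 120 for k in range(12)]   # 120 Hz eye tracker samples
gaze_values = list(range(12))               # stand-in for gaze coordinates
frame_times = [k / 30 for k in range(3)]    # 30 Hz video frames

matched = match_gaze_to_frames(frame_times, gaze_times, gaze_values)
print(matched)
```

With these toy rates, every fourth gaze sample is paired with a video frame, which reduces the gaze stream to the video frame rate.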

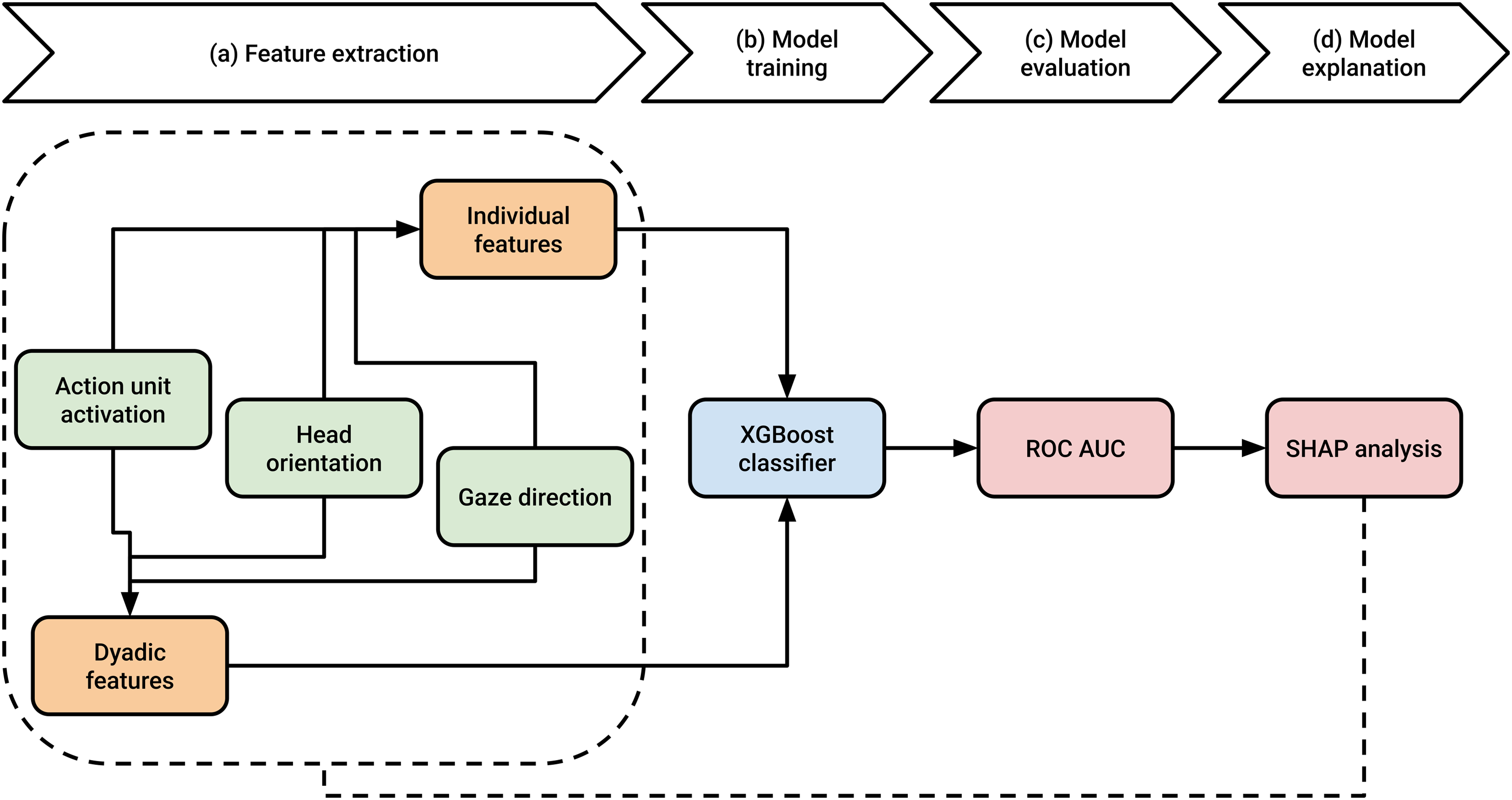

Feature Construction

We used the frame-level OpenFace, PyAFAR, and eye tracker data to construct two sets of features to describe observable nonverbal behaviors of the parents and children on the level of the videos. “Individual” features were constructed separately for the parent and the child, representing behaviors observable in their respective video recordings and eye tracker data. “Dyadic” features, on the other hand, were constructed using the combined data of both the parent and the child, representing synchronicity in their behavior. The complete feature construction process is explained in detail in the following two subsections.

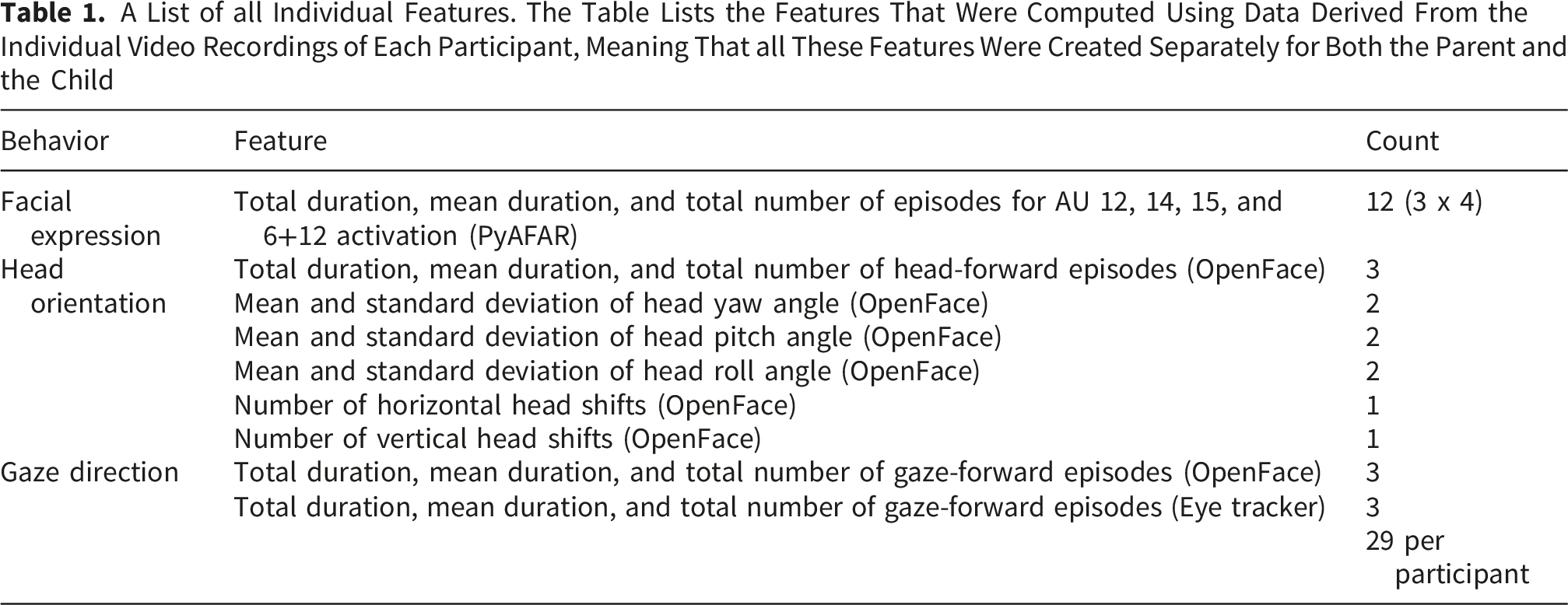

Individual Feature Construction

For AU selection, we were inspired by the results of Girard et al. (2014), who found depression severity to be related to the decreased activation of AU 12 (the lip corner puller) and AU 15 (the lip corner depressor), and increased activation of AU 14 (the dimpler). As such, we only included AU features related to the activation of those AUs, with the addition of the Duchenne smile, consisting of the combined activation of AU 6 (the cheek raiser) and AU 12. For head orientation and gaze direction, we focused on features related to face looking.

First, for each frame, an AU was labeled

Second, we grouped consecutive frames that shared the same label (AU active or inactive; head orientation or gaze direction forward or averted) into episodes spanning multiple frames. Episodes of only one frame were filtered out, and the episodes surrounding them were merged. These episodes were computed for all included AUs (active/inactive), head orientation (forward/averted), and gaze direction (forward/averted). Episode durations were determined using the frame timestamps described earlier in the method section.
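The episode construction described above can be sketched as follows. This is an illustrative reading of the procedure, not the authors' code: the function names, the toy AU labels, the millisecond timestamps, and the choice to measure duration from the first to the last frame of an episode are all assumptions.

```python
from itertools import groupby


def build_episodes(labels):
    """Run-length encode per-frame labels into [label, start, end] (end inclusive)."""
    episodes, start = [], 0
    for label, run in groupby(labels):
        length = len(list(run))
        episodes.append([label, start, start + length - 1])
        start += length
    return episodes


def drop_single_frame_episodes(episodes):
    """Remove one-frame episodes and merge neighbours that now share a label."""
    kept = [ep for ep in episodes if ep[2] > ep[1]]
    merged = []
    for label, start, end in kept:
        if merged and merged[-1][0] == label:
            merged[-1][2] = end  # extend the previous episode over the gap
        else:
            merged.append([label, start, end])
    return merged


def episode_durations(episodes, timestamps):
    """Duration per episode, from its first to its last frame timestamp (assumed)."""
    return [(label, timestamps[end] - timestamps[start])
            for label, start, end in episodes]


# Hypothetical frame-level AU activations (1 = active, 0 = inactive).
au6 = [1, 1, 1, 0, 1, 1, 0, 0, 0, 1]
au12 = [1, 1, 1, 1, 1, 1, 1, 0, 0, 1]
# A Duchenne smile is the combined activation of AU 6 and AU 12.
duchenne = [int(a and b) for a, b in zip(au6, au12)]

timestamps = [k * 33 for k in range(10)]  # hypothetical timestamps in ms
episodes = build_episodes(duchenne)
cleaned = drop_single_frame_episodes(episodes)
durations = episode_durations(cleaned, timestamps)
print(cleaned, durations)
```

In this toy sequence, the one-frame inactive episode at frame 3 is dropped and the two active episodes around it become a single episode, while the trailing one-frame active episode is simply discarded.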

Table 1. A list of all individual features. The table lists the features that were computed using data derived from the individual video recordings of each participant, meaning that all these features were created separately for both the parent and the child.

Dyadic Feature Construction

Table 2. A list of all dyadic features. The table lists the features that were computed using the combined data of the parent and the child.

Binary Classification, Model Evaluation, and Model Explanation

To perform binary classification, the CBCL syndrome scale scores needed to first be reduced to binary classes. Given that our sample consisted of nonreferred children participating in a large cohort study, the raw CBCL syndrome scale scores were far from the diagnostic ranges. Therefore, we based our binary classes on the raw mean scores of the nonreferred sample as presented in the CBCL manual (Achenbach & Rescorla, 2001). Taking gender into account has been shown to improve classification performance at least for depression detection (Alghowinem et al., 2018; Maddage et al., 2009; Pampouchidou et al., 2016; Stratou et al., 2015; Yang et al., 2016). Due to the relatively limited number of boys in the final sample, we did not train classifiers separately for boys versus girls. However, gender was considered in binary class assignment: If a child’s score on a syndrome scale was above the mean of their respective age group and gender, the child was labeled as belonging to the positive class (i.e., the class “1”) on that syndrome scale, and as negative class (“0”) otherwise. The raw CBCL scores and the class cutoff thresholds are presented in Figure 1.

Figure 1. Raw CBCL scores.
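The class assignment rule can be expressed in a few lines. The sketch below is only illustrative: the reference means are placeholders and are not values from the CBCL manual, and the age-group key, field names, and function name are hypothetical.

```python
def assign_binary_class(score, age_group, gender, reference_means):
    """Return 1 if the raw score exceeds the reference mean for the child's
    age group and gender, and 0 otherwise."""
    return int(score > reference_means[(age_group, gender)])


# Placeholder reference means for one syndrome scale (NOT CBCL manual values).
reference_means = {("6-11", "girl"): 3.0, ("6-11", "boy"): 3.5}

# Hypothetical children with raw scores on that syndrome scale.
children = [
    {"id": "c1", "age_group": "6-11", "gender": "girl", "score": 5},
    {"id": "c2", "age_group": "6-11", "gender": "girl", "score": 3},
    {"id": "c3", "age_group": "6-11", "gender": "boy", "score": 4},
    {"id": "c4", "age_group": "6-11", "gender": "boy", "score": 1},
]

labels = {
    c["id"]: assign_binary_class(c["score"], c["age_group"], c["gender"], reference_means)
    for c in children
}
print(labels)
```

Note that a score exactly equal to the reference mean falls into the negative class, following the "above the mean" wording of the rule.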

Next, using features from each dyadic interaction we trained and tested XGBoost classifiers (Chen & Guestrin, 2016) for binary CBCL syndrome classes using stratified four-fold cross validation. XGBoost was chosen due to its good performance even with slightly imbalanced datasets (i.e., with at least 25% positive classes, see Velarde et al., 2023) and ease of use. The classifier was trained and tested separately for three feature combinations and three scenario combinations. The three feature combinations were (1) individual parent and individual child features, (2) dyadic features, and (3) individual+dyadic features. The three scenario combinations were (1) conflict, (2) cooperation, and (3) conflict+cooperation. As the combination with both scenarios together included data extracted from two separate video recordings for most participants, we ensured that all the data for any unique dyad was only in either the training or the testing dataset for any given fold. Using speaker diarization achieved with pyannote.audio (Bredin, 2023; Plaquet & Bredin, 2023), we further trained and tested the classifier separately with features built on data extracted during moments when the children were speaking and during moments when the children were not speaking. The resulting classifier models were evaluated using area under the receiver operating characteristic curve (ROC AUC), a performance metric that is not affected by imbalanced datasets (Jeni et al., 2013), after which the top contributing features for the best performing models were assessed using SHapley Additive exPlanations (SHAP) analysis (Lundberg & Lee, 2017).
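Two parts of this procedure lend themselves to a small sketch: keeping every recording of a dyad within a single fold, and computing ROC AUC. The code below is a simplified, dependency-free illustration under stated assumptions. The deterministic round-robin fold assignment, the function names, and the toy data are hypothetical, and the classifier itself is omitted; the text does not state which splitting implementation was used (a library routine such as scikit-learn's `StratifiedGroupKFold` could serve the same purpose).

```python
def grouped_stratified_folds(dyad_labels, n_folds=4):
    """Assign each dyad to exactly one fold, spreading classes evenly.

    dyad_labels maps dyad id -> binary class. All recordings of a dyad
    inherit the dyad's fold, so no dyad is split across train and test.
    """
    fold_of = {}
    for cls in (0, 1):
        members = sorted(d for d, y in dyad_labels.items() if y == cls)
        for k, dyad in enumerate(members):
            fold_of[dyad] = k % n_folds  # round-robin within each class
    return fold_of


def roc_auc(y_true, scores):
    """ROC AUC as the probability that a positive case outscores a
    negative case, with ties counted as one half."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical dyads, each with a conflict and a cooperation recording.
dyad_labels = {"d1": 1, "d2": 0, "d3": 1, "d4": 0,
               "d5": 0, "d6": 1, "d7": 0, "d8": 1}
recordings = [(d, s) for d in dyad_labels for s in ("conflict", "cooperation")]
fold_of = grouped_stratified_folds(dyad_labels)

for fold in range(4):
    test_dyads = {d for d, _ in recordings if fold_of[d] == fold}
    train_dyads = {d for d, _ in recordings if fold_of[d] != fold}
    assert not (test_dyads & train_dyads)  # no dyad on both sides

auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(fold_of, auc)
```

Because ROC AUC depends only on how positive cases rank relative to negative cases, it does not change with the proportion of positive cases, which is why it suits moderately imbalanced classes.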

Finally, to gain further insights into which features contributed most to classifier performance, we circled back to the distributions of those features to examine differences and interactions between symptom severity and the two conversation scenarios. An illustration of the analysis pipeline is presented in Figure 2.

Figure 2. The analysis pipeline.

Results

How did the Classifier Perform?

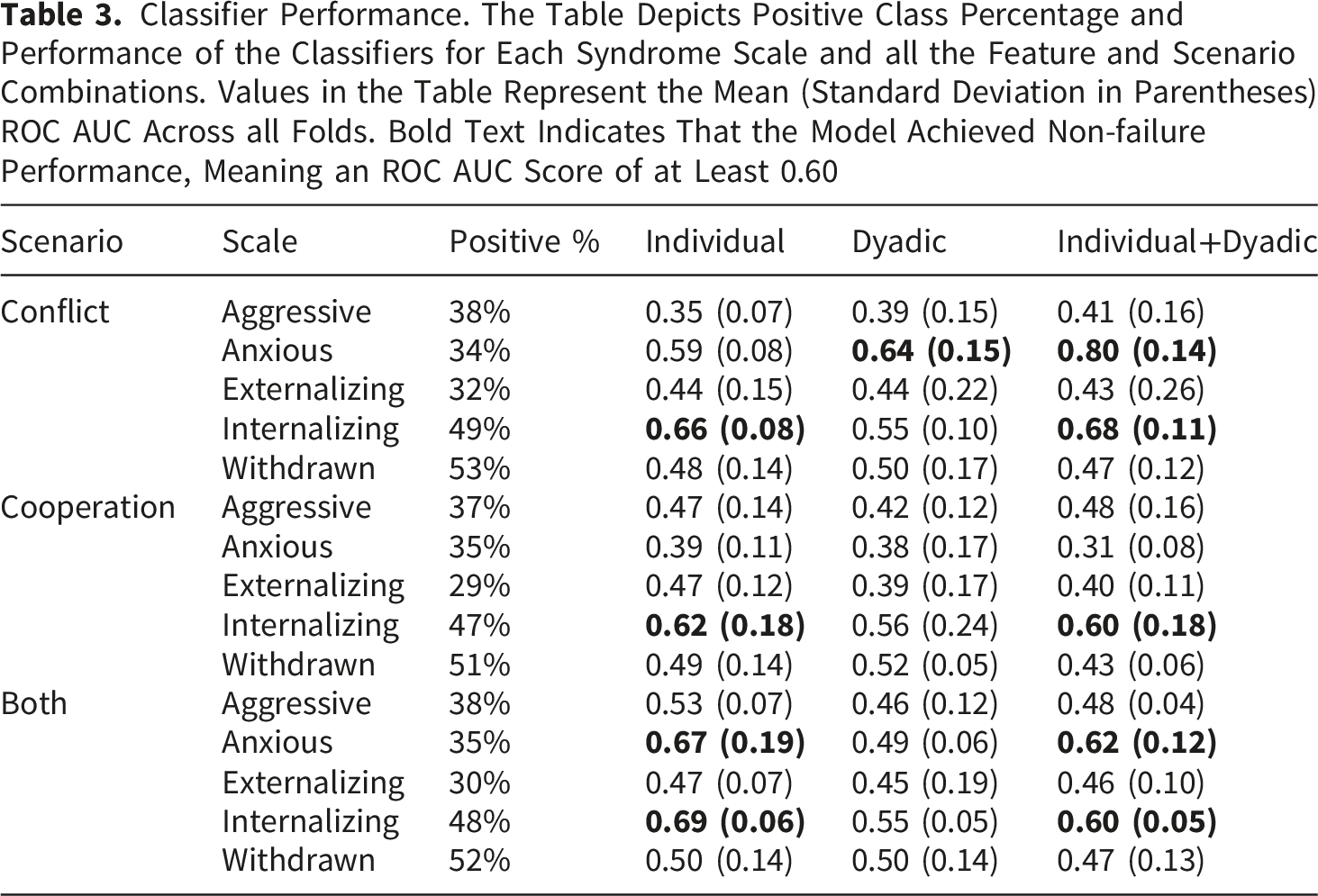

Table 3. Classifier performance. The table depicts the positive class percentage and the performance of the classifiers for each syndrome scale and all the feature and scenario combinations. Values in the table represent the mean (standard deviation in parentheses) ROC AUC across all folds. Bold text indicates that the model achieved non-failure performance, meaning an ROC AUC score of at least 0.60.

Figure 3. Classifier performance.

Classifier Performance During and Outside Episodes of Child Speech

Next, we examined classifier performance separately with features extracted during and outside episodes of child speech. As Figure 4 shows, classifier performance for features extracted during episodes of child speech was overall quite similar to the general results presented in Table 3 and Figure 3, with two notable exceptions: First, the best-performing “anxious” syndrome scale classifier (conflict scenario, all features, see Figure 3C) now performed at chance level (M = 0.49, SD = 0.12; see Figure 4C). Second, there was a marked boost in performance on the “withdrawn” syndrome scale for the cooperation scenario using individual features only (M = 0.67, SD = 0.12; see Figure 4A), a classifier that previously performed at chance level (see Figure 3A).

Figure 4. Classifier performance during episodes of child speech.

When examining classifier performance outside episodes of child speech (see Figure 5), overall performance was highly similar to the general results presented in Table 3 and Figure 3. Thus, it seems that the nonverbal behaviors that contributed to anxiety mainly presented themselves when the children were not speaking, while behaviors more important to withdrawal became somewhat more evident under different circumstances, such as during episodes of child speech during discussions that were about a potentially cooperative topic. Not much can be concluded about the predictions for the “aggressive”, “externalizing”, and “internalizing” syndrome scales, as performance on them was similar both during and outside episodes of child speech.

Figure 5. Classifier performance outside episodes of child speech.

Which Features Contributed Most to Classifier Performance?

To shed light on which features contributed most to classifier performance, we computed the top 10 features of the best classifier for the “anxious” syndrome scale (conflict scenario, individual and dyadic features, see Figure 3C) and the best classifier for the “withdrawn” syndrome scale (cooperation, individual features, during child speech only, see Figure 4A) by averaging all features’ absolute SHAP values across all four folds. Children’s anxiety scores were best predicted from features related to nonreciprocal parent smiling, child head orientation, and parent head orientation/gaze direction (Figure 6A). This is not surprising, as anxiety has been linked to specific patterns of gaze behavior during dyadic interaction (Hessels et al., 2018; Kleberg et al., 2017; Wieser et al., 2009) and panic disorder severity has been linked to more frequent dyadic patterns of facial affective behavior between patients and therapists (Benecke & Krause, 2007). The individual features that contributed most to predicting withdrawal were related to parent head orientation/gaze direction, child head orientation/gaze direction, child dimpling, child smiling, and parent lip corner depression (Figure 6B). Such behaviors were also expected, as differing patterns of head movements, smiling, and dimpling have all been implicated in relation to depression (see e.g., Bilalpur et al., 2023; Peham et al., 2015).

Figure 6. Feature contributions assessed with SHAP analysis.
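The ranking step, averaging absolute SHAP values across folds, can be sketched as follows. This is one plausible reading and not the authors' code: it assumes that absolute SHAP values are first averaged over the test samples within each fold and then averaged across folds. The feature names, the toy SHAP matrices, and the function names are hypothetical, and the SHAP values themselves would come from an explainer that is not shown here.

```python
def mean_abs_shap_per_fold(shap_matrix):
    """Mean absolute SHAP value per feature for one fold.

    shap_matrix: list of rows (samples), each a list of per-feature values.
    """
    n_samples = len(shap_matrix)
    n_features = len(shap_matrix[0])
    return [sum(abs(row[j]) for row in shap_matrix) / n_samples
            for j in range(n_features)]


def rank_features(fold_shap_matrices, feature_names, top_k=10):
    """Average the per-fold mean |SHAP| across folds and return the top_k
    (name, value) pairs in descending order of importance."""
    per_fold = [mean_abs_shap_per_fold(m) for m in fold_shap_matrices]
    averaged = [sum(col) / len(per_fold) for col in zip(*per_fold)]
    ranked = sorted(zip(feature_names, averaged), key=lambda p: p[1], reverse=True)
    return ranked[:top_k]


# Hypothetical feature names and SHAP values for two folds of two samples each.
feature_names = ["parent_only_duchenne_duration",
                 "child_head_forward_duration",
                 "child_head_shakes"]
fold_shap_matrices = [
    [[0.5, -0.25, 0.0], [-0.5, 0.25, 0.0]],
    [[1.0, 0.25, -1.0], [-1.0, -0.25, 1.0]],
]

top = rank_features(fold_shap_matrices, feature_names, top_k=2)
print(top)
```

Taking absolute values before averaging matters because positive and negative contributions of the same feature would otherwise cancel, as they would for every feature in this toy example.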

From Binary Classification Back to Nonverbal Behavior

As we had now identified one classifier which reliably predicted whether children fell above or below average (henceforth “high” and “low”, respectively) on the “anxious” syndrome scale, we circled back to the level of the features to examine whether we could observe differences in the children’s nonverbal behaviors depending on their anxiety levels and the topic of conversation. We limited our investigation to features with an average SHAP value that was greater than 0.2 (i.e., the top four features). This investigation yielded three interesting patterns (see Supplementary S2 for complete descriptive statistics).

First, as illustrated in Figure 7A, parents whose children scored low in anxiety demonstrated shorter episodes of parent-only Duchenne smiling in the conflict scenario than parents whose children scored high in anxiety. There are multiple possible explanations for this finding. For example, it could be that parents whose children were more anxious engaged in longer periods of Duchenne smiling in the conflict scenario in general, or it could be that the children themselves engaged in shorter periods of Duchenne smiling in the conflict scenario, resulting in shorter joint episodes. To investigate this further, we examined the average duration of the episodes in which parent-child dyads engaged in joint Duchenne smiling as well as the average duration of the episodes in which parents engaged in Duchenne smiling in general (see Supplementary S2). We did not find the average duration of joint Duchenne smiling between parents and children in the conflict scenario to depend on the child’s anxiety level, but we did find parents whose children were more anxious to engage in more overall Duchenne smiling during the conflict scenario, thus favoring the former of the proposed explanations. This suggests that parents with children who scored high in anxiety engaged in more parent-only Duchenne smiling for reasons unrelated to the amount of Duchenne smiling demonstrated by their child.

Figure 7. Children’s behaviors as a function of anxiety level and conversation scenario.

Second, children who scored high in anxiety performed many more horizontal head shakes than children who scored below average, in both the conflict and the cooperation scenario, suggesting that the amount that children shook their heads was influenced by their anxiety level and not by the topic of conversation (see Figure 7B).

Third, as Figure 7C shows, children with a low anxiety score oriented their head forward for much longer average periods in the conflict scenario than children with a high anxiety score. Although a similar pattern was observed for the cooperation scenario, the greater overlap between the error bars indicates that the difference was not likely significant. It therefore seems that children with a high anxiety level averted their gaze more, but only when the topic being discussed was potentially conflicting.

Next, given the marked increase in performance on the “withdrawn” syndrome scale that was observed for the cooperation scenario during episodes of child speech, we conducted a similar investigation on its important features (see Supplementary S3 for complete descriptive statistics). An additional three interesting patterns were observed: (1) Parents whose children scored low in withdrawal gazed forward more than parents whose children scored high in withdrawal (Figure 8A). This was true for both the conflict scenario and the cooperation scenario. (2) Children low in withdrawal gazed forward more than children high in withdrawal (Figure 8B) in both the conflict and the cooperation scenario. (3) Children low in withdrawal demonstrated more AU 12 (the lip corner puller) activation than children high in withdrawal (Figure 8C), but only in the cooperation scenario, while the opposite was true for the conflict scenario. As the complete descriptive statistics suggest (see Supplementary S3), withdrawal symptoms were best characterized by a reduction in behavior, which was generally either roughly equal between scenarios or greater for the cooperation scenario.

Figure 8. Children’s behaviors as a function of withdrawal level and conversation scenario.

Discussion

Our aim was to automatically assess the severity of children’s behavioral, emotional, and social problems from videos of parent-child interaction recorded under two scenarios: a conversation about a potentially conflicting topic and a conversation with a cooperative nature. To do this, we first quantified a wide range of nonverbal behaviors from the face and head dynamics of parents and children individually as well as on the level of the dyad (e.g., joint smiling). Next, we fed these nonverbal behaviors to a binary classifier to make broad estimations of symptom severity (i.e., whether a child’s symptoms were above or below average) on the more general Child Behavior Checklist (CBCL) syndrome scales “internalizing” and “externalizing” as well as the narrower syndrome scales “anxious”, “withdrawn”, and “aggressive”. Reliable predictions were achieved only on the “anxious” syndrome scale and specifically for the conflict scenario.

What might explain such results? The “anxious” syndrome scale is composed of items mainly related to the increase of negative affect, such as being fearful, as well as items that identify crying and nervous behavior. Perhaps the conflict scenario was more likely to elicit such behaviors than the cooperation scenario. In line with this idea, Thomas et al. (2017) assigned adolescents to either a conflict or a control task and found that adolescents assigned to the conflict task experienced greater levels of arousal than adolescents assigned to the control task, and that adolescents with higher baseline levels of conflict, as estimated through an interview, were also more likely to exhibit more hostile behavior, but only in the conflict task. Conversely, the “withdrawn” syndrome scale contains items mainly related to the reduction of positive affect, such as withdrawal from interactions, shyness, and feeling a lack of energy. One might thus expect withdrawn behavior to be more observable during moments that elicit an increase in positive affect. Our results were partly in favor of this, as we did observe almost fair performance for the “withdrawn” classifier under the cooperation scenario but not under the conflict scenario. Notably, however, this was true only when the classifier was trained using features extracted during episodes of child speech, suggesting that withdrawn behavior may not have been fully reflected during all moments of the interaction. The dichotomy between the presentation of anxious and withdrawn behavior (i.e., an increase in negative affect versus a reduction in positive affect) was also reflected in our results: smile-related behaviors were not included in the top 10 features of the best “anxious” syndrome scale classifier but were included in the top 10 features of the best “withdrawn” syndrome scale classifier. Ultimately, however, prediction performance for the “withdrawn” syndrome scale was, even at its best, still poor. A potential explanation for this could be that the cooperation scenario did not elicit enough positive affect for reductions of positive affect in certain children to become noticeable. Future research should find reliable ways to define and operationalize perceived levels of conflict and cooperation.

Prediction performance for the “externalizing” and “aggressive” syndrome scales was below poor for all tested combinations. It could be that the behaviors we quantified were not sufficiently representative of the “externalizing” and “aggressive” syndrome scales, which consist of behaviors that are not often characterized by specific facial expressions (e.g., stealing, swearing, breaking rules, being mean, arguing, fighting, screaming, and so on). Another possible explanation is that the context of the present study was not extreme enough for externalizing behaviors to occur. Such extreme behaviors may require more extreme contexts. Sampling bias may also have factored in, as children exhibiting more externalizing symptoms may have been less likely to participate (the experiment was done at the end of a long day of varied parent-child observations; Holleman et al., 2021). To add to this, there was limited representation of the clinical ranges for all syndrome scales. Small differences between syndrome scale scores may not be reflected in observable differences in the behaviors they are characterized by. Such effects may also vary across syndrome scales. For example, differences in symptom severity on the “anxious” syndrome scale may have been more observable in the behavior of the children than differences in symptom severity on the other syndrome scales.

What Can Automatic Assessment Methods Teach Us About Child Psychopathology?

A reliable automatic assessment model could potentially be used as a tool to aid in the screening and diagnosis of mental disorders. This can be advantageous, especially if what the model predicts allows clinicians or researchers to save time and money (e.g., by eliminating the need to conduct extensive clinical interviews or manually annotate large streams of data). The present study is the first we are aware of to attempt to automatically assess the internalizing and externalizing behavior of children. While our models may not yet be good enough to use as diagnostic or screening tools, our results provide initial evidence to suggest that making broad predictions of children’s anxiety levels from parent-child video recordings is possible. In addition to this, they have allowed us to identify some key behaviors.

First, parents whose children scored above average on anxiety demonstrated longer average episodes of parent-only Duchenne smiling (i.e., episodes during which the parent engaged in Duchenne smiling but the child did not) compared to parents whose children scored below average on anxiety, but only in the conflict scenario. In general, smiles have many social outcomes (see Gunnery & Hall, 2015, for a review). For example, people smiling in pictures are perceived as more attractive and kinder than people who are not (Otta et al., 1996), and people are more likely to trust a photograph of a person when playing a trust game if the person is smiling (Scharlemann et al., 2001). Duchenne smiles, in particular, seem to generate a sense of trustworthiness in the observer, especially when issues regarding trust or cooperation are made salient (Johnston et al., 2010). Perhaps parents were sensitive to the anxiousness of their children and engaged in longer periods of Duchenne smiling in an attempt to appear more trustworthy or cooperative during the conflict scenario. Relatedly, a recent study found increased maternal mental health challenges to be associated with an increased onset amplitude, onset duration, offset duration, and total duration of mothers’ smiles (Dust et al., 2024). Perhaps the anxiety levels of children were also reflected in the anxiousness or discomfort experienced by their parent, thus resulting in longer bouts of Duchenne smiling. Note, however, that the difference in the average duration of parent-only Duchenne smiles between parents with children scoring high on anxious behavior and parents with children scoring low on anxious behavior was small (0.12 s) and should be interpreted with caution. An interesting line of future inquiry would nonetheless be to examine whether this difference increases when examining the nonverbal behaviors of more distinct groups with greater variability between children’s symptom scores. 
Moreover, while parent-only Duchenne smiling is a behavior demonstrated by the parent, it occurs in the context of parent-child interaction. Future research might investigate its underlying motivations further. For example, do parents tend to smile more in general when the topic of discussion involves potential conflict, or do they do so to adapt to the behavior of their child?
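To make the dyadic feature discussed here concrete, the following sketch computes the mean duration of parent-only smiling episodes from two per-frame smile indicators. It assumes that Duchenne smiling has already been detected per frame for each person (commonly operationalized as co-occurring cheek raiser and lip corner puller activity). The function names, the frame rate, and the example sequences are hypothetical.

```python
def episodes(active):
    """Return (start, end) frame indices of each run of True values; end is exclusive."""
    runs, start = [], None
    for i, flag in enumerate(active):
        if flag and start is None:
            start = i                      # a new episode begins
        elif not flag and start is not None:
            runs.append((start, i))        # the episode ended on the previous frame
            start = None
    if start is not None:                  # episode still running at the end of the recording
        runs.append((start, len(active)))
    return runs

def mean_parent_only_duration(parent_smile, child_smile, fps):
    """Mean duration in seconds of episodes where the parent smiles and the child does not."""
    parent_only = [bool(p) and not bool(c) for p, c in zip(parent_smile, child_smile)]
    runs = episodes(parent_only)
    if not runs:
        return 0.0
    return sum(end - start for start, end in runs) / len(runs) / fps

# Hypothetical per-frame Duchenne smile indicators at an assumed 10 frames per second
parent = [0, 1, 1, 1, 1, 0, 1, 1, 0, 0]
child = [0, 0, 0, 1, 1, 0, 0, 0, 0, 0]
duration = mean_parent_only_duration(parent, child, fps=10)
print(duration)
```

Note that a joint smile in the middle of a parent's smile splits it into separate parent-only episodes under this definition, so the feature depends on the child's behavior as well as the parent's.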

Second, children with more anxiety symptoms shook their head more than children with fewer anxiety symptoms, and this was true for both the conflict and the cooperation scenario. Shaking one’s head from side to side, a behavior learned in early childhood, is often used to signal disapproval in many human cultures and even among some non-human primates (Bross, 2020) but has many other uses as well (see Kendon, 2002). Perhaps children who scored high on the “anxious” syndrome scale were more likely to signal disapproval, regardless of whether they were planning a party or resolving a conflict together with their parent.

Third, children who scored high in anxiety oriented their head toward their parent’s face when resolving a conflict for shorter average durations than children who scored low in anxiety. In other words, it appears that children’s higher anxiety levels were associated with more head avoidance when resolving a conflict. Increased head avoidance on its own is not surprising, as traits related to social anxiety disorder have been found to be related to less looking at others’ eyes (Hessels et al., 2018; Weeks et al., 2019). Crucially, however, the fact that increased head avoidance mainly presented during the conflict scenario suggests that it is not globally related to anxious behavior but is dependent on the context of the interaction.

Children’s withdrawal symptoms, on the other hand, were best reflected by gaze behavior. Specifically, parents whose children scored low in withdrawal looked more at their child’s face during both the conflict and the cooperation scenario than parents whose children scored high in withdrawal, and children who scored high in withdrawal looked at their parent’s face fewer times than children who scored low in withdrawal. Overall, this was a similar pattern to that observed in the nonverbal behaviors related to children’s anxiety scores, suggesting that gaze avoidance plays a role in both anxiousness and withdrawal. The CBCL manual considers both syndrome scales to also represent depressed behavior, so finding similarities between the behaviors they are characterized by is not surprising. We also found children who scored high in withdrawal to smile less than children who scored low in withdrawal in the cooperation scenario, while the opposite was true for the conflict scenario. Interestingly, withdrawal was predominantly characterized by reduced presentation, and this reduction was either equal between scenarios or greater for the cooperation scenario. Behaviors representing anxiousness, on the other hand, were characterized by both reduced and increased presentation, and this was more pronounced for the conflict scenario than the cooperation scenario, further reinforcing the idea that context dictates the presentation of many symptomatic behaviors.

Lastly, we identified some more general aspects of behavior that contributed to classifier performance. The classifier for anxious behavior performed best when it was trained using both individual and dyadic features. The set of AUs included in the present study was motivated by the results of Girard et al. (2014), who found the symptom severity of depressed individuals to be associated with decreased activation of the lip corner puller and lip corner depressor as well as increased activation of the dimpler, and argued that these results suggest that individuals engage in specific patterns of facial behavior to maintain or increase interpersonal distance. Given our results, it may be that these facial behaviors serve as indicators of anxiety symptoms in children as well, and it moreover seems that they are important not only on the individual levels of the child and the parent but also on the dyadic level of the interaction.

It is moreover worth noting that the nonverbal behaviors that related to children’s anxiety scores were mainly observable outside of child speech (and therefore likely also involving moments of parent speech). Thus, the nonverbal behaviors of parents and children that relate to children’s anxiety levels may depend on conversational roles: parent behaviors may become more apparent during moments of speech while child behaviors may become more apparent during moments when they are listening and reacting to their parent’s speech. The conversational roles in the present study differed between the two conversation scenarios; as Holleman et al. (2021) summarize, parents spoke more in the conflict scenario than they did in the cooperation scenario while the opposite was true for children. Moreover, parents spoke more than children overall, and there was more silence during the conflict scenario than there was in the cooperation scenario. As such, the roles of the parent and child may have been more equal when, e.g., planning a party in the cooperation scenario, while the parent may have taken the lead more when resolving a conflict (Holleman et al., 2021). Differences in conversational roles between the scenarios may explain why behaviors related to anxiety symptoms became more apparent during the conflict scenario. This raises the question of whether the inclusion of verbal behaviors, particularly on the parent’s side, may have improved our predictions. This idea is partly supported by the results of Halfon et al. (2021), who found that affect information from the text modality, representing the speech content of play therapy sessions, proved to be much more useful for the prediction of children’s anger, anxiety, and sadness levels than facial affect information. To further explore the interaction between nonverbal and verbal behaviors in relation to child psychopathology, future research should aim to include representative behaviors from multiple modalities.

Challenges, Limitations, and Suggestions for Future Research

An important challenge in the automatic assessment of mental disorders from video recordings lies in the quantification of behaviors. Behaviors often need to be quantified on the level of the videos, meaning that the temporal dynamics and interactive nature of behaviors are easily lost. There exists a wide range of computations that one can perform on a single behavior, and through feature engineering one may reduce one’s dataset to the features that contribute most to one’s model to improve its performance. However, once complex behaviors are represented by several different variables that all describe the same behavior but in different ways, the actual contributions of those behaviors can become difficult to identify. We attempted to keep our behavioral descriptions simple and easily explainable. This, however, may have contributed to us not finding links between nonverbal behaviors and symptom severity on most of the syndrome scales, highlighting the delicate balance between performance and explainability, which often differs between research fields. In social and affective computing, for example, one may wish to optimize for prediction performance, while in psychology, one may wish to optimize for explainability.

One major limitation of the study is related to the nature of the recording setup, which was designed to explore fine-grained details in gaze behavior. This was optimal for the analysis of face and head dynamics but rendered the analysis of full body posture and hand gestures impossible. The inclusion of additional features to describe the various body and limb movements people make when interacting with each other is likely to yield valuable information and will be incorporated in our future work.

Still other limitations lie in the constraints of the automatic analysis tools we used. PyAFAR, the tool we used to detect the occurrence of facial muscle movements, detects some movements better than others (Hinduja et al., 2023). It may be that the facial expressions that better characterize the other syndrome scales were more reliant on the facial muscle movements that are not detected well in the first place. It is also important to note that PyAFAR, like many other computer vision and machine learning models, was trained on adult participants and may generalize less well to children. The PyAFAR adult model has been shown to generalize less well (although still at acceptable levels) to infant data than Infant AFAR, a PyAFAR model fine-tuned on infant faces (Onal Ertugrul et al., 2023). However, since the children in our dataset were much older than infants, better generalization can be expected. Also, like Bilalpur et al. (2023), we set the threshold for AUs to be labeled as active if their occurrence value was at least 0.5. Given that AUs have different occurrence rates in general, each AU is likely to have a different optimal occurrence threshold. Future studies may benefit from empirically determined AU thresholds.
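As an illustration of what an empirically determined threshold could look like, the sketch below binarizes occurrence values at the fixed 0.5 cut-off and, alternatively, selects the cut-off that maximizes the F1 score against manually annotated frames. The availability of such annotations, the choice of F1 as the selection criterion, the function names, and the example values are all assumptions. The code does not call PyAFAR itself.

```python
def binarize(occurrence_values, threshold=0.5):
    """Mark an AU as active in each frame whose occurrence value reaches the threshold."""
    return [v >= threshold for v in occurrence_values]

def f1_score(truth, predicted):
    """F1 score of predicted activity against manually annotated activity."""
    tp = sum(t and p for t, p in zip(truth, predicted))
    fp = sum((not t) and p for t, p in zip(truth, predicted))
    fn = sum(t and (not p) for t, p in zip(truth, predicted))
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def best_threshold(occurrence_values, truth, candidates):
    """Pick the candidate threshold with the highest F1 (first one wins on ties)."""
    return max(candidates, key=lambda th: f1_score(truth, binarize(occurrence_values, th)))

# Hypothetical occurrence values for one AU and hypothetical manual annotations
values = [0.1, 0.35, 0.4, 0.6, 0.8, 0.3]
annotated = [False, True, True, True, True, False]
default_active = binarize(values)                       # fixed 0.5 threshold
tuned = best_threshold(values, annotated, [0.2, 0.35, 0.5, 0.65])
print(default_active, tuned)
```

In practice, the threshold would need to be selected on data held out from the evaluation set, separately for each AU.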

Finally, defining a baseline for head and gaze avoidance using data provided by OpenFace was not straightforward. Our baseline for facing or looking at the other person was defined using the head and gaze angles of periods during which we knew that both parents and children were likely to look at each other’s face (i.e., the median head/gaze angles of the first 100 frames of the video recordings). However, when observing the recordings, we noticed that in some cases parents and especially children moved their heads around quite a bit during the recordings. Although OpenFace reports head angle and gaze angle with respect to the camera, its estimates seem to be rather sensitive to changes in head position (and its gaze estimates are rather inaccurate; see Valtakari et al., 2023). This may have resulted in errors in identifying episodes of head or gaze avoidance.
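The baseline procedure can be sketched as follows for a single angle (e.g., horizontal head rotation). The median over the first 100 frames follows the description above, whereas the tolerance used to flag avoidance, the restriction to one angle, the function names, and the example values are assumptions made for illustration.

```python
from statistics import median

def baseline_angle(angles, n_frames=100):
    """Median angle over the first n_frames, taken as 'oriented toward the other person'."""
    return median(angles[:n_frames])

def avoidance_flags(angles, tolerance, n_frames=100):
    """Flag frames whose angle deviates from the baseline by more than the tolerance."""
    base = baseline_angle(angles, n_frames)
    return [abs(a - base) > tolerance for a in angles]

# Hypothetical angles in radians: small jitter at first, then a clear turn away
angles = [0.02] * 60 + [0.05] * 40 + [0.03] * 50 + [0.60] * 20
base = baseline_angle(angles)
flags = avoidance_flags(angles, tolerance=0.3)  # tolerance is an assumed value
print(base, sum(flags))
```

Because the baseline is fixed at the start of the recording, any later shift in seating position changes every subsequent deviation, which is one way the sensitivity to head position noted above could produce spurious avoidance episodes.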

Conclusion

Applying machine learning and computer vision to automatically assess mental disorders from videos of parent-child interaction shows promise. It seems that at least anxiety symptoms are represented by the nonverbal behaviors of these interactions, particularly those pertaining to genuinely felt smiles on the side of the parent. The behavioral representations of such complex disorders are likely to vary greatly between individuals and between specific groups, such as adults and children. As our results demonstrate, they are also likely to vary depending on the context of the interaction, and many behaviors need to be examined in their interactive contexts. It is important to generate further research to identify such behaviors and the specific contexts they occur in.

Supplemental Material

Supplemental Material for Automatically Assessing Children’s Internalizing and Externalizing Behavior From Face and Head Dynamics During Parent-Child Interaction by Niilo V. Valtakari, Roy S. Hessels, Albert Ali Salah, Itir Onal Ertugrul in Journal of Experimental Psychopathology.

Footnotes

Ethical Considerations

The study was approved by the Medical Research Ethics Committee of the University Medical Center Utrecht and is registered under protocol number 19–051/M.

Consent to Participate

All parents provided written informed consent for themselves and on behalf of their children.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by a Utrecht University Dynamics of Youth (DoY) invigoration grant.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.
