Abstract

This article examines the local evaluation and assessment practices of Finnish early childhood education and care (ECEC) based on Actor–Network Theory (ANT). We employ the concept of translation to discuss how evaluation and assessment practices unfold in networks and how actors come together in negotiations and contestations that seek to orient these networks. ANT approaches society as being formed of networks and directs attention to non-human actors, such as technical and material resources. In this article, we discuss how local evaluation and assessment networks are formed by translations connecting different actors. This article examines three cases in which various assessment tools were used locally by Finnish ECEC. The results highlight the arbitrariness and elasticity of the networks in the translation process. Thus, we introduce the concept of democracy of translation to examine the flexibility of assessment networks and the extent to which actors in these networks can re-negotiate and re-orient the practices taking place.

Introduction

Evaluation and assessment have been integral parts of the education system in previous decades; however, in an international and historical context, both education and its evaluation have had different meanings (Verger et al., 2019). The global trend has recently shifted toward a more learning-centric assessment perspective. The volume and variety of data have also increased, and the data are used to make decisions that influence the daily practices of early childhood education and care (ECEC) (Bradbury, 2019; Roberts-Holmes, 2015). In Nordic countries, the learning-centric emphasis has raised concerns about schoolification in ECEC, which is associated with educational institutions’ aims to normalize children so that they acquire the desired qualities and which turns children into objects of evaluation (Alasuutari et al., 2014; Karila, 2012).

Data collection in all sectors of life has increased, changing not only practices but also expectations, including those of educational institutions (Bradbury and Roberts-Holmes, 2018). Such datafication practices relate to the neoliberal tradition and a rationality in which data is an instrument for guaranteeing educational quality and ensuring teachers’ accountability (Dahlberg et al., 2007). As data accumulates and circulates through different levels, from ECEC centers to administration, it creates spaces for comparison and calculation where data becomes a way of showing compliance. Often, this also leads to an obsession with gathering more and more data, as compiling data is believed to diminish the possibility of error in educating future citizens as efficient and productive members of society (Dahlberg and Moss, 2005). Associated with the rise of digital technologies, digitization, and big data, datafication is intensifying as more dimensions of social life play out in digital spaces. Datafication allows for a diverse range of information to become machine-readable, quantifiable data for aggregation and analysis (Southerton, 2020).

Nordic (i.e., Finland, Denmark, Norway, Sweden, Iceland, the Faroe Islands, Greenland, and the Åland Islands) ECEC pedagogy and interpretations concerning curricula have been classified as belonging to the social pedagogical tradition, which encourages play, relationships, curiosity, and the desire for meaning-making based on activities (Karila, 2012). Urban et al. (2022) state that the values and principles of guidance documents are similar in Nordic countries. The Nordic model is often separated from a neoliberal model (e.g., Ball, 1998), which is associated with the systematic testing of children and the external assessment of teachers’ activities and accountability (Mahon et al., 2012; Verger et al., 2019). However, when comparing evaluation and assessment practices, additional levels of variation can be discovered.

Recent indications show that Nordic evaluation and assessment practices are shifting toward a global test-based assessment model. For example, Denmark uses standardized tests in ECEC for young children (Gitz-Johansen, 2011; Ministry of Children and Education, 2022). In Sweden, a formalized concept of teaching is expressed in the curriculum. Sweden is also seeing a mushrooming of various digital tools for evaluating and enhancing the operations of private actors (Ledin and Machin, 2021). In Finland, the pedagogical documentation of an individual child and a child group is a key part of an evaluation; hence, an individual ECEC plan should be made for each child (Finnish National Agency for Education, 2022). Finland has so far managed to resist the pressure of the global testing culture in ECEC and basic education, and unlike other Nordic countries, evaluation data are only published on a general level (Wallenius, 2020). It can therefore be assumed that the Nordic evaluation model is not as united as the debate sometimes suggests (see e.g., Urban et al., 2022).

Nevertheless, evaluation and assessment practices have also become an integral part of ECEC in Finland and are related to increasing datafication (Mertala, 2021); however, it is important to note that in Finnish evaluation culture, the focus is on pedagogical activities and operating environments, not on the child and their skills. In other words, datafication means different things in the Finnish context than, for example, in education in the United Kingdom, where the consequences of the neoliberal evaluation model can be seen more openly. Dahler-Larsen (2011) noted that there may be conceptual challenges in researching evaluation. It is noteworthy that the terms assessment and evaluation have different cultural and historical meanings, particularly in an international context (Dahler-Larsen, 2011). In Finnish, the word arviointi is used to refer to both assessment and evaluation. In this paper, we utilize Gullo’s (2005) definition, in which assessment refers to the practices that staff undertake to gather information on children in various ways. This information is then used for teaching and curriculum development. Evaluation, on the other hand, refers to data collected using informal, formal, and standardized measures for judgments about program effectiveness and accountability (Gullo, 2005).

Notwithstanding the similarities of Nordic countries, Urban et al. (2022) argue that considerable responsibility is delegated to municipality-level actors, which can lead to local variations in ECEC settings at the country level. In this article, we continue the discussion about the different meanings and materializations of the Nordic evaluation and assessment model. We shift the focus from global and national debates to local-level and local practices by using Actor–Network Theory (ANT) to examine the construction of evaluation and assessment practices in Finnish municipalities.

This article is based on a multiple-case study approach. The cases concern three different assessment tools used locally in Finnish ECEC. First, we show that there is considerable variance in evaluation practices, even when they are nominally situated within a Nordic or Finnish model. Second, we employ the concept of translation to examine how assessment practices are enacted. Examining assessment practices through the lens of translation enables us to identify overarching narratives and explore variations at the local level. Based on the analysis, we introduce the concept of democracy of translation to examine the flexibility of evaluation networks and the extent to which actors in these networks can re-negotiate and re-orient the practices taking place.

Tracking local evaluation networks

To extend the common notions of evaluation and assessment in Finnish ECEC, it is essential to examine network formation at the micro level in municipalities and ECEC centers. We see ECEC centers as places of minor politics (see e.g., Rose, 1999) where the limits of evaluation and assessment can be pushed further for the ECEC practitioners to adapt or contest prevailing assumptions concerning how evaluation should be carried out (Dahlberg and Moss, 2005). Our aim is to outline the practices and factors shaping the world more broadly, rather than through human action or technologies, such as digital assessment tools (Latour, 1999). ANT (Latour, 1999, 2005) is suitable for tracking local evaluation networks, not only because it emphasizes the singular character of networks—meaning evaluation unfolds differently in each case—but also because it acknowledges the performative power given to non-human actors, such as numbers, databases, texts, and pedagogical devices (Fenwick and Edwards, 2010; Piattoeva, 2018; Piattoeva and Saari, 2022). These patterned heterogeneous arrangements play out and make new connections in evaluation networks. They are not perfect designs but messy and non-coherent constellations (Law, 2007; Law and Ruppert, 2013), which can be utilized to explain why ECEC evaluation networks are formed differently in Finnish municipalities.

An often-used approach to actor networks is an analytical division into devices, documents, and “drilled” people (Helén and Lehtimäki, 2020; Law, 1986). While recognizing that stable ontological divisions between these three categories are particularly difficult to sustain with an ANT approach, where actors exist in relation to each other, the idea can be helpful in orienting the initial examination of a network. In considering an actor network of assessment, we can therefore focus on the devices participating in the practices (activity trackers, evaluation platforms, and portfolio applications); the documents guiding and stabilizing these practices (curricula, Ministry of Education reports, guidelines, and municipal directives); and the people who have been trained to operate in the networks (ECEC staff, municipal administration, and evaluators). In this article, we consider the role of humans and non-humans in the same analytical terms as hybrids that are closely connected (Law, 1986; Piattoeva, 2018; Valasmo et al., 2023).

We examine the formation of evaluation and assessment networks in Finnish ECEC through the concept of translation. Based on ANT, the concept is used to analyze events in which actors’ possibilities to act are negotiated and redefined. Translation can be considered an attempt to orient the network toward a particular goal and to gain the power to speak in its name (Callon, 1986). Translations are typically approached as processes that contain several stages. In this article, our focus is on the early stages of translation, namely (1) problematization, where an actor or group of actors defines other actors’ identities in a network to solve a certain problem, and (2) interessement, where actors are locked into proposed roles to solve the problem identified at the previous stage (Callon, 1986). We show how events that take place in these stages play a pivotal role in how the network operates. Interrupted or failed problematization and interessement can cause the network to become sidetracked and can have far-reaching consequences for its sustainability (see also Helén and Lehtimäki, 2020).

We consider problematization as setting out a problem or a goal that the network aims to solve. This may, for example, be a statutory obligation requiring an assessment to be performed. At the interessement stage, the roles of human and non-human network members are assigned. While it is obvious that the stages of translation seldom emerge in clear-cut divisions, the concept is helpful in understanding why local ECEC evaluation and assessment take many forms and why certain evaluation policies fail to mobilize or engage actors in different stages of translation. Therefore, our research question is as follows: How are local evaluation and assessment networks formed in translations connecting different actors?

Research material

In Finland, children start school at the age of seven after they attend a year of preprimary education. Every child under school age is entitled to ECEC, which is organized as both a municipal and a private service. Early childhood education is regulated by law, and its content is guided by the binding documents of the National Core Curriculum (Finnish National Agency for Education, 2022) and the National Core Curriculum of Preprimary Education (Finnish National Agency for Education, 2014). The Finnish ECEC system is decentralized, which means that, for example, the ways of organizing ECEC services are decided locally. Thus, local variation is high. At the same time, municipalities are legally obligated to evaluate their own activities and have significant autonomy in how they carry out quality management and evaluation. Municipalities and service providers also have a legal obligation to participate in the evaluations carried out by the Finnish Education Evaluation Centre (FINEEC) (Act, 582/2015). National-level evaluations are based on the principle of enhancement-led, sampling-based evaluations instead of an inspection culture (e.g., Kauko et al., 2022). The main purpose of the evaluation of educational institutions is to produce information for teachers (Act on Early Childhood Education and Care, 540/2018).

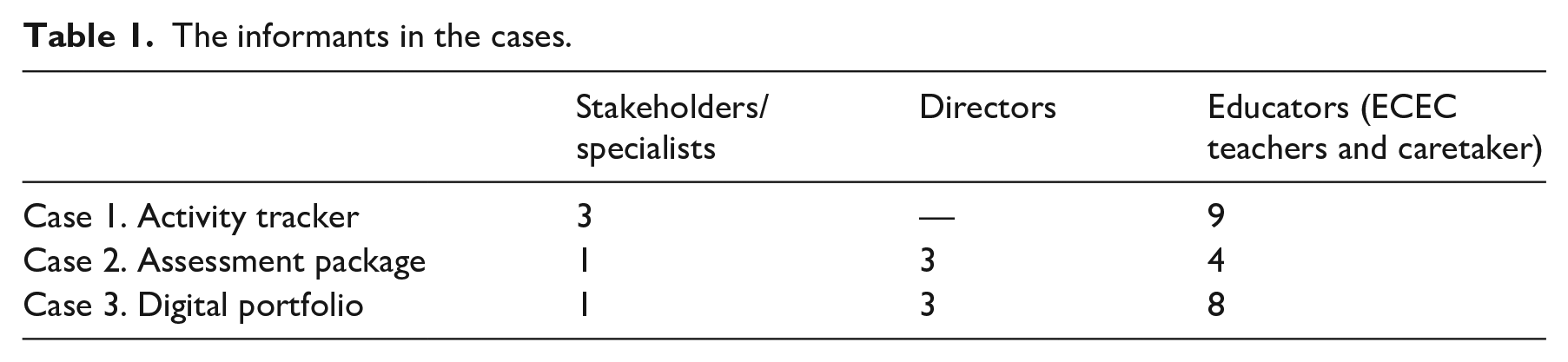

The cases concern three different assessment tools used locally in Finnish ECEC. The research material comprises semi-structured qualitative interviews (n = 32) conducted in Finnish from 2021 to 2022. The interviewees included ECEC planners, directors, teachers, and caretakers working among groups of children (see Table 1). In addition to the respondents’ background information, the interviews covered the evaluation practices of municipalities and units, including how different evaluation tools had been introduced. The interviews were audio-recorded, and their duration ranged between 30 and 70 minutes.

Table 1. The informants in the cases.

The first case concerns an activity-tracking bracelet that was deployed in selected ECEC groups in a municipality for 3- to 6-month periods from 2017 to 2021. The trackers, resembling watches or bracelets attached to each child’s wrist, contained an accelerometer for measuring steps. These devices were marketed to ECEC providers as enabling easily accessible data on children’s movements and exercise and as a motivational tool for physical education (Paakkari et al., 2023). The non-human actors in the network were the bracelet, as well as the data generated by the wristband. The human actors were ECEC stakeholders, municipal administration staff and educators working in child groups.

The second case focuses on an assessment package used in several Finnish municipalities. The package was sold by a private company that was linked to a university research project. The decision to utilize the package was made by district managers in the municipality. The other human actors in the network consisted of assessment package users. The package included child observations, a pedagogical environment assessment, and a director’s self-assessment. Non-human actors in the network included an assessment platform on tablet computers and the scales utilized to assess children, as well as forms and self-evaluations completed by directors and other staff. In practice, a trained observer visited a randomly selected group of approximately five children and made structured observations on a tablet computer. The collected data were then transmitted to the company and combined with an evaluation of the ECEC learning environment and the director’s self-evaluation. On the basis of the data, a summary was then produced for the municipality, indicating the kinds of targets the municipality should set to improve the quality of ECEC services.

The third case concerns an electronic portfolio used in one municipality’s ECEC centers. The portfolio had been in use for about a year, and in the previous year, it had been piloted in some groups. The portfolio serves as a ready-made basis on which information about the activities of a child group is collected in images and texts. The human actors in this network consisted of ECEC stakeholders, directors, and professionals working in child groups. The children were the target of the pedagogical documentation, but in two groups, they also participated in producing or evaluating the content. Parents had no active role in the network, even though the portfolio targeted them. Non-human actors included the portfolio itself, technical equipment (computers, tablets, phones, and photos), and instructions and guidelines for the staff.

Children and their parents can also be regarded as part of all three networks, even though their role was small, as we will see in the following sections. All three tools evaluate the pedagogical actions of everyday life in ECEC, albeit in different ways. There are important differences between the cases with regard to whether the assessment is mandatory or voluntary and whether it is operated by private enterprises or by the municipality. We do not claim that these differences encompass all the existing variations in the field of assessment in ECEC. Rather, these cases offer an opportunity to examine how variations emerge in the translations of the assessment network. This helps us understand how a multiplicity of assessment practices is enacted behind the similarities of the Nordic model.

Method and ethics

The analysis proceeds as a dialog between the research material and theoretical thinking—in this case, the concept of translation—with one complementing the other (see e.g., Jackson and Mazzei, 2012). Initially, concise descriptions of each case were provided. The descriptions outlined human and non-human “key actors” relevant to the evaluation work, the objectives and meanings set for the assessment tool, and the respondents’ descriptions of the consequences of the evaluation. We then examined each case, following Callon’s (1986) work on the stages of translation. We sought to address the question of how local evaluation and assessment networks are formed in translations connecting different actors and why, and at what point, translating is not realized. Thus, we carried out a more detailed analysis of each case according to the two translation steps.

Our analytical questions (Jackson and Mazzei, 2012) focused on how different actors act at different stages of translation. We also focused on situations with a noticeable activity that maintained or impeded the functioning of the network, including three events where the translation faced challenges or “got stuck” as actors negotiated their interpretations and aims. The research project adhered to the principles of good scientific practice (Finnish National Board on Research Integrity TENK, 2012). All interviewees were given information about the research project, and their informed consent was requested (Harcourt and Conroy, 2011).

Finland has a small population (approximately 5.56 million people), and the descriptions of an individual tool or municipality may become recognizable. Therefore, particular attention was paid to maintaining individual respondents’ confidentiality, and all identifying information was removed from the research material. Anonymity constraints may have affected the accuracy and thus the readability of the text; however, efforts were made to keep the text as accurate and readable as possible despite these constraints.

Results—strategies for translation

In this section, we shed light on how local differences in assessments emerge in networks that bring together multiple actors, all negotiating the ends and means of assessments. We examined the phases of translation in each of the three cases: the activity trackers, the assessment package, and the digital portfolio. The cases differ in terms of the assessment networks—the kinds of human and non-human elements becoming involved—whose connections are local, national, and even international. In two of the cases, the ambitions of the commercial operators were connected to the network. For each of the three assessment tools, the network was formed differently, and the factors that promoted and inhibited translations occurred in different parts of the network.

Problematization

We begin by investigating the problematization in the networks. This is the stage for introducing the problem that the network aims to solve. Analyzing the three cases shows how problematizations unfold with varying success. The cases show that there can also be considerable variation within a single case. While the reasoning for an assessment tool may be clear to the municipal ECEC director and administrative staff, the teachers in the child groups may simultaneously confess to being completely unaware of why the assessment is carried out. It is worth noting that a failed problematization does not stop the use of the assessment tool, which may still remain in active use.

The problematization generally seemed to be clearer to those actors higher up in the chain of command. In Case 2, a district manager responsible for assessments described why they purchased the assessment tool and its relationship with the statutory evaluation requirement:

Ok, we won’t be getting any help from FINEEC, but we must get things started. And then the offer from the [company] fell wonderfully right into our lap. So, now this is taken care of, and when you think about all these things [FINEEC’s criteria for evaluating ECEC], we would never have been able to do them on our own. (Case 2, District Manager)

In a sense, the problematization was already carried out by the Finnish government when it introduced a law (540/2018) requiring Finnish municipalities to conduct evaluation and assessment in ECEC. At the same time, FINEEC was set up as an external organization for the evaluation of ECEC. However, FINEEC did not produce ready-made evaluation materials for municipalities and service providers. This introduced the possibility of cooperation with a commercial actor. In the next excerpt, an ECEC center director described the problematization in similar terms, unfolding linearly after the legislative change:

They [the executive group] made this decision that is linked to ECEC evaluation becoming statutory. So, it arose from the fact that we didn’t have a clear assessment model of our own, and we are always taking these things pretty seriously. So when they gave instructions that ECEC must be assessed, we went and bought a solution straight away, and the company offered a ready-made package. (Case 2, ECEC Center Director)

In the excerpt above, the director describes the assessment package as the district manager’s response to a legal obligation for assessing the quality of the service, although two of the three interviewed directors did not recall why the assessment measures took place. Different meanings given to the utilization of the assessment package by the director and the district manager reflect the looseness of the network formed in the municipality during the first phases of implementation. The network meant to uphold the purpose of the assessment package was fragmented at the beginning of the implementation.

While there is fragmentation in problematization when moving from the district manager to the ECEC center director level, the assessment package was considered an ideal solution for the problem at hand, namely fulfilling the legal obligation. However, in the child groups, the tool appears to lose its context:

Well, it just came. We weren’t asked that beforehand, in any way, whether we wanted one of these or not. It just came and was presented to us. And then they said that the use of the package will begin on this and this day. (Case 2, Teacher)

This excerpt shows how the assessment tool moved through the network. From a clearly articulated problematization at the executive level, we move to the teacher, considering the assessment as something that “just came.” With the same tool, different actors had different concepts of problematization, as shown in the previous excerpts. The disappearance of problematization may lead to a situation where evaluation begins to act as a means of control or where it is carried out for an external entity.

The disconnection in problematization can also be observed in the digital portfolio (Case 3). None of the respondents could clearly name a single person or a specific entity from which the development of the digital portfolio originated. Considering how widely the portfolio was used, we found this to be a significant shortcoming. Indeed, problematizations can become lost or broken in the networks, but this does not necessarily mean that the assessment tools are discarded. They continue to be used, even without the users knowing why. The stated goals of the portfolio—unifying communication, pruning overhead systems, and making pedagogy and pedagogical activities visible to parents—were shared among the interviewees. This aim was also materialized in the digital tool itself, where it was possible to use electronic stickers to connect documented activities to the objectives of the national core curriculum.

While the lack of clarity in the problematization did not always seem to hinder the use of the assessment tool, some interviewees openly contested the problematization:

The old way gave us more freedom [. . .]

What is the main reason the municipality is willing to do this?

That’s a good question. It’s a bit like sucking up to the parents, showing that this ECEC center does these things like they should be done according to the curriculum. For me, this raises the question: Why don’t the people above us trust us workers? Why do we have to do this kind of decoration? (Case 3, Teacher)

The teacher questioned the reasoning behind the digital portfolios, refusing to accept the problematization offered to them. The interviewee viewed the introduction of electronic portfolios as a sign of a lack of trust from the administration and framed the portfolio as “decoration.”

In contrast to Cases 2 and 3, the problematization in Case 1, the activity tracker, appears more flexible and seems to unfold with little friction at different levels of administration. The project was initiated in the municipality by an administrative specialist tasked with finding initiatives that combine ECEC and digitalization:

We probably stumbled onto it by chance because I can no longer remember where I found it from. But since it was connected with PE, and it sounded good to me, I thought it would be something I would definitely like to try in our ECEC centers. [. . .] I had no specific job description that it would be connected to physical exercise and digitality. It was mainly that the projects would have to encourage ECEC staff to use more digital applications or software in their everyday work. (Case 1, Municipal Specialist)

The interviewee stated that the event was unexpected (“stumbled onto it by chance”) and that the decision to attend was made at a moment’s notice. The mandate of the interviewee was broad in the sense that it made no mention of physical exercise or any specific pedagogical goals. It is notable that, in the translation processes of Case 1, problematizations had at least partly taken place prior to the arrival of the trackers. A ministry report (Ministry of Education and Culture, 2016) highlighted the lack of exercise children receive in ECEC, and since then, a growing consensus has suggested that children need more exercise. The tracker initiative also ties in with the digitalization programs of the municipality. As the specialist described, they were given freedom but encouraged to find programs that combined ECEC and digitality. The specialist had a personal interest in physical education (PE), which sparked interest in the device.

After making initial contact with the company selling the activity trackers, the interviewee sent a message to PE contact persons in the municipality’s ECEC centers suggesting they could participate in the experiment. The PE contact persons are volunteering staff members, and they arrange network meetings 2–4 times a year. These contacts are formed based on a common interest in sports and bypass a strict professional hierarchy:

. . . each group was allowed to modify what was the right way for that group, and I know that in another group, this was not at all a teacher’s or even a caretaker’s thing, but the group’s assistant started to implement it. In another group, there was a very small group of people using those bracelets. So, very different ways were found for using it. (Case 1, Teacher)

The PE contact persons introduced the trackers to the ECEC centers and asked for volunteers. Often, their own child groups were the ones volunteering; thus, the network was built up as a network of enthusiasts. If there were no volunteers, the initiative was dropped. Thus, in contrast to Cases 2 and 3, participation was not mandatory. The tracker initiative brings the different elements together and seems to capture the interest of the network actors. The actors appear to either share a common interest in PE or to have their own partly concealed interests, to which the practice also seems malleable. Moreover, the tracker initiative appears compatible with digitalization, and it also offers a chance for pedagogy on cultural geography, such as virtual travel in different countries in the application and general shared activities. Regarding problematizations, the practice seems flexible to the extent that few interviewees questioned them.

Interessement

In the interessement stage, actors are assigned to the proposed roles necessary for solving the problem. Shifting from problematization to interessement, the central questions are no longer whether the issue is important but rather how the network starts operating and whether the actors accept their suggested roles. When the network is set in motion, the actors must be convinced of its capability to solve the presented problem. Here, our analysis highlights the role of non-human actors in translations. Even when the problematizations have been accepted in the network, non-human actors may refuse to cooperate in the roles suggested in interessement. This occurred in Case 3. In the following interview, a teacher discussed storing the electronic portfolio:

How do you store those portfolios, and for how long?

Well, they are behind my user account on the computer and will remain there until I press delete. I don’t know.

So, there’s nothing agreed on that?

No, there hasn’t been any talk about it. Back when it was in an electronic workspace when a new semester began, we would delete the previous one and start over. But then someone “wonderful” went and removed the group from the workspace, and everyone’s portfolios disappeared into the ether. That must have been the last straw for some of us. Like, I’m not going to do this anymore. (Case 3, Teacher)

In the case of the electronic portfolio, the problematization leans on pedagogical goals and is reasonably well accepted. At the same time, its use requires competence that staff do not possess. Consequently, technology does not consent to cooperation or to the role given to it. Its cooperation also depends on the digital skills and motivation of the staff. One teacher said that their group had an assistant without educational responsibility who was “terribly skilled in information technology.” The assistant’s competence in technology and the extra resources generated made it possible to use the portfolio. Although the teacher did the actual compilation and discussed the documented things with the children, the assistant played an important role as an intermediary between the portfolio’s technical features and the other employees.

The next excerpt describes how bringing the device into pedagogical situations can complicate interactions between children and adults:

One of the challenges I found was that when I was singing with the children, I did not have the phone in my hand. If I am with children, having to use the phone immediately removes my presence in the activity, which is really challenging. I think it would be nice to take pictures or videos of kids while we’re doing some activity. (Case 3, Teacher)

Eventually, the non-human actors of the network greatly influenced the way the assessment tool was used. As the technical difficulties in using the electronic portfolio as intended became insurmountable, the teachers started using it more as a communication tool. All child groups in the municipality were expected to use the portfolio. Although the educators could question the use of the portfolio, the network around it was still loose enough for its users to take on their preferred roles and exploit the portfolio in the ways they wanted. The educators had relatively high autonomy in deciding how to use the portfolios. In the case of the portfolio, this led to a shift in the purpose of the assessment tool. Most of the educators described their use of the portfolio as primarily informing parents about what was done in ECEC. The portfolio therefore became a solution to the problem of how to make ECEC and its pedagogical activities visible to parents. However, there was no external control over its deployment.

An important contributing factor was that because the information was visible to the whole group, only general observations could be written. This led to the portfolio becoming “a nice way to message in pictures and words about the ECEC we’ve done here,” as one teacher stated. In the interessement stage, the network has difficulties precisely due to its generality.

Technical difficulties also played a role in Case 2, where interviewees criticized the rigidness of the assessment platform. Another example of how the interessement brought non-human actors into play was the way the assessment package’s scientific background was used as a justification for its deployment. The district director stated that the research-related justifications used to support the assessment package made them trust the process, as shown in the following excerpt: Now that I look back on it, it was a big thing that we got this. For our ECEC director and us district directors, our idea was that we trust this template and this system, and that this has been scientifically justified to us. (Case 2, District Manager)

The interviewed district manager responsible for evaluations in the municipality coordinated the rollout of the assessment package and referred to the scientificity of the assessment tool as a justification for selecting it. The research-based background of the instrument is also something on which the company depends heavily in its marketing material.

The activity bracelet differed from the other two cases due to its flexibility. As tracking was voluntary, children and adults were not required to use the assessment technology. The child groups found different ways of using the trackers, with an emphasis on the things each group found to work the best. In some groups, individual activity scores were compared daily, while others did not compare them at all. In the groups where the assessment score became a tool for influencing children’s behavior, the participants found ways of gaming the algorithm to renegotiate the situation (Paakkari et al., 2023). The activity bracelet seemed more flexible with regard to the different ways of using it in assessments.

Discussion—toward a democracy of translation?

Evaluation and assessment are globally considered self-evident parts of educational institutions. At the same time, there is a widespread idea of all Nordic countries building on a shared pedagogical tradition (e.g., Karila, 2012). Finland has also experienced, in line with international trends, a rapid and extensive rise in evaluation, implying a global trend of datafication. In this article, we examined the local evaluation and assessment practices in Finnish municipalities using ANT (Latour, 1999, 2005). Our aim was to test the assumption that evaluation in Finnish ECEC is carried out as a nationwide, uniform practice. To accomplish this, we employed the concept of translation (Callon, 1986) to indicate how assessment tools unfold in networks and how various actors come together in negotiations and contestations that seek to orient these networks.

Focusing on translations has offered the possibility of investigating the variations of assessment practices in detail. It has also offered insights into the different human and non-human entities in the networks: devices, documents, and people all play a role in how translations occur (see also Helén and Lehtimäki, 2020; Piattoeva, 2018). In Cases 1 and 3, the malleability of the device and its accompanying application enabled the users to implement the assessment practices in a multitude of ways. In Case 2, the assessment tool was considered rigid, and the usage was dictated. In the assessment, the focus was on the data, which were used, for example, to demonstrate the quality of pedagogy to and by the administration (see Paananen and Grieshaber, 2023).

Using translations as an analytic device leads us to introduce the concept of democracy of translation. We use this concept to highlight the significance of who can and who actually does participate in translation processes and the consequences of this for the network. We suggest that the translation process was successful in the case of the activity bracelet for two reasons. It became operational not only because the roles of the different actors (problematization) were clear, but also because the educators were able to utilize the knowledge and modify the bracelet to suit their own needs (interessement). However, there is a potential risk that the assessment practices can create inequalities if the networks are formed from those who are particularly enthusiastic or motivated. In the case of the activity bracelet, the network involved human actors who were particularly interested in physical education. On the other hand, from the point of view of ECEC development, joining the network could be especially advantageous for groups where PE is not considered important.

Our research question and the concept of translation draw attention to two aspects. First, our results point to the arbitrariness of assessment practices because the assessment tools were introduced without explanation, and the origin of the tools or their objectives were not necessarily known. When new tasks and claims are imposed on the administration, employees mostly play their part conscientiously. This can explain how new tools and ideas are quickly adopted, sometimes even if they are questioned. Moreover, this can also help us to understand local differences and even smaller, micro-level evaluation “regimes” and places of minor politics (see Rose, 1999): differences arise when actors comply with the translation or refuse to act according to it, not only in ECEC centers but also at the child group level. Through such practices, global evaluation trends (Bradbury, 2019; Karila, 2012) may tacitly become part of Finnish ECEC locally, or even in an individual ECEC setting. We suggest that some features of the neoliberal evaluation model, such as assessing individual children’s skills, materialize within the Nordic model and imply that the Nordic or even “Finnish” model is not as consistent as traditionally thought.

Second, the research highlights the elasticity of the networks. The results show that there is variation in the density of the networks, which affects how the network functions and the consequences of evaluation and assessment. Based on the results, the assessment tools differed depending on the origin of the networks where the translations took place. The seeds of their successes and failures are sown in different stages. If the assessment network is sidetracked or if a key stage of the translation fails, it is difficult for the tool to be successful. In part, “the key actors” were situated at the administrative level, whereas, particularly for the portfolio, the key actors were the staff working in groups and the flexible and customizable portfolio itself. Ultimately, the question is whether the tools translate into pedagogical action in a way that teachers consider meaningful.

The concept of the democracy of translation also leads us back to the ANT division between devices, documents, and drilled people (see Helén and Lehtimäki, 2020). In several instances, it seemed that while the staff superficially abided by the instructions offered from the upper levels of administration, they openly expressed doubt in the interviews. A general feeling of incredulity was present in many interviews, as the interviewees were left wondering where and why new assessment practices had been drawn up. Such upfront expressions of cynicism point to a potential lack of trust in the organization. They also show how translations fail when the actors involved stop acting in the ways in which they have been drilled. As earlier research has identified several consequences of organizational cynicism (Dahlberg and Moss, 2005; see also Archer, 2017; Bradbury, 2012; Grant et al., 2018), we are left wondering what the effects of these developments are in Finnish ECEC.

Lastly, when considering the democracy of translation, the roles of children and parents in the networks are worth discussing. There is a lack of research concerning the role of children in ECEC evaluation and assessment, and the networks around them. While children are present as the targets of these processes (and of education), they often only play an instrumental role in actual assessments. For a democracy of translation, children should be allowed and encouraged to participate in assessments in ways that re-configure and question what assessment means and who it is carried out for (Paakkari et al., 2023). The results of these three evaluation tools, in turn, are not only used for tracking and evaluating children’s individual learning outcomes or progress but are also justified as an indication of the effectiveness of the teaching and educational institution and finally as a means of improving the standards and quality of education (see e.g., Bradbury, 2011; Oosterhoff et al., 2023; Sahlberg, 2016).

Concluding remarks

The central finding of our inquiry is that, while Finland has managed to resist many of the global trends of evaluation and assessment in ECEC, considerable variation exists on a local level, including practices that explicitly promote child assessment. We hope our research provides tools for thinking about local-level variation of evaluation and assessment methods and practices of Finnish ECEC in its global context. We want to highlight the fact that evaluation, taken as such, is not a negative thing. Rather, the information provided by assessments can be highly beneficial. Indeed, information about a child’s development or individual needs can strengthen the support the child receives (see also Franck et al., 2022). Nevertheless, one must be aware of the consequences of different tools and data collection methods, in terms of both their global, national, and local backgrounds and the fact that different actors may have different motives.

The research also has limitations. The interviews were voluntary. Therefore, it can be assumed that the interviews were attended by those interested in evaluation and assessment. Further research could examine other actors in the networks more closely, for example children and/or parents, as their roles in the networks, as seen in the results, were limited. However, the concept of translation has made it possible to bring out both the arbitrariness and the flexibility associated with networks, which may contribute to explaining local variation.

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Emil Aaltonen Foundation (Grant number 200257P) and Academy of Finland (Grant number 339603).