Abstract

The purpose of this study was to identify salient features of critical thinking apps and to create an instrument that facilitates the app evaluation and selection process. Two questions guided the study: (1) What distinguishes critical thinking instructional apps from others? and (2) What design principles are essential to develop a critical thinking instructional app? The study was conducted in two phases: a synthesis of existing research in Phase I and the development of an evaluation instrument in Phase II. Three lines of research (on critical thinking, educational app design principles, and tools for evaluating educational apps) informed the development of the instrument, which includes three evaluation categories (content, pedagogy, and design). This paper presents the research synthesis used to create the instrument, along with the instrument design process, the rationale for this design, recommendations for usage, its limitations, and implications for future practice and research. The findings will enable app users to select critical thinking apps suited to their needs more wisely and will help app developers identify the salient qualities required to design apps for critical thinking. The study accordingly contributes to both software evaluation and software development, with findings beneficial to app users and developers alike.

Introduction

The ability to think critically is important to the academic, personal, and professional success of college students (Haynes et al., 2016). To cultivate students’ critical thinking skills, educators (e.g., Noddings, 2016; Tiruneh et al., 2014) recommend a variety of pedagogical approaches, such as case studies and higher order questioning. Increasingly, educational technologies are used to implement these approaches. Among technological tools, mobile software applications (apps) emerged with the promise of increased support for teaching and learning, prompting the educational community to explore the affordability, design, and effects of this relatively new innovation (e.g., Li et al., 2018; Stevenson et al., 2015). For example, Laurillard (2009), Parsons (2014), and Cochrane (2013) all offer salient teaching considerations helpful to professional practice, respectively addressing the pedagogical, educational, and social implications of collaborative technologies, including “mlearning” (i.e., mobile learning). A survey of the literature also revealed general agreement with Laurillard’s (2009: 5) assessment that while “social networking, collaborative, mobile, and user-generated-design technologies” create “exciting opportunities” for educators, these tools “are rarely developed” based on the actual professional practice and perspectives of the teachers who use them. More concerning for higher education, Cladis (2018) argued not only that digital dependence affects language expression but also that reliance on such technologies has led to a rapid decline in creative and critical thinking.

The current study addressed two challenges apparent among the “critical thinking” apps that have recently proliferated. First, although some apps are explicitly categorized as critical thinking apps and others purport to foster or “boost” critical thinking, many provide only riddles or “brain training” games (e.g., Fading Away – Critical Thinking Challenge, available on the App Store) rather than actually cultivating critical thinking. Available apps show no apparent consensus about what constitutes a critical thinking app, and the absence of a clear label or definition forces users to sort through many apps before they can pinpoint useful ones. Second, while “best apps” lists with reviews (e.g., Cole, 2016; Lynch, 2017) exist to guide user selection, these are typically based on personal preference rather than evidence-based principles. Such anecdotal recommendations, though well intended, are therefore of limited benefit. Often, the misalignment between an app’s label and its contents confuses users, and recommendations that do not build on research can misguide them.

To address these two challenges (i.e., variation in what counts as a critical thinking app, and possible confusion or misguidance in user selection), this study was designed to identify salient features essential to critical thinking instructional apps and to establish an assessment instrument that facilitates the design, evaluation, and selection process. The purpose was to help both users and developers distinguish well-designed apps from poorly designed ones. A review of existing literature on critical thinking, educational app design principles, and tools for evaluating educational apps was used to systematically answer two questions central to the study: (1) What distinguishes a critical thinking instructional app from others? and (2) What design principles are essential in developing and selecting a critical thinking instructional app? Findings from the study can help users select critical thinking apps tailored to their specific needs and help app developers identify the salient qualities required to design effective apps that teach critical thinking. The study thus contributes to both software evaluation and software development.

Literature review

The literature review synthesizes three lines of research relevant to the topic under investigation: (1) critical thinking, (2) design principles related to educational apps, and (3) tools for app evaluation. First, definitions of critical thinking are examined as they relate to the content of the apps under discussion, followed by pertinent pedagogical approaches and strategies. The review then highlights specific principles (i.e., instructional design, user interface design, and user experience design) deemed essential to developing educational apps. Next, the review analyzes research-based tools (e.g., rubrics and checklists) for app evaluation. Together, these strands inform the creation of an instrument to evaluate apps that teach critical thinking.

Critical thinking

The education literature has well documented both definitions of critical thinking and effective instructional strategies for it (Lennon, 2010; Tiruneh et al., 2014). However, although educators and scholars concur on the importance of critical thinking, they have struggled to agree on a common definition (Chenault and Duclos-Orsello, 2008; Johnson and Hamby, 2015). Several researchers (e.g., Flores et al., 2012; Rowles et al., 2013) have summarized operational definitions and noted variations ranging from the relatively narrow and specific to more general delineations. The narrow, “classic” definitions equate critical thinking with logic or formal reasoning; the broad definitions, in contrast, expand this traditional scope, with some including problem solving and creativity in the conceptualization of critical thinking (Haynes et al., 2016).

Some research studies (especially those prompting the development of critical thinking assessments) conceptualized the term as abilities, skills, and/or dispositions. For instance, Ennis’ (1987: 10) definition, “reasonable reflective thinking focused on deciding what to believe or do” has been broadly recognized in the field (Larsson, 2017). Ennis (1998) then derived a list of critical thinking abilities (e.g., identifying an issue and analyzing arguments). Aligned with Ennis’ studies, the American Philosophical Association’s (APA) Delphi report (Facione, 1990) presented the consensus definition of 46 critical thinking experts along with six core cognitive skills (interpretation, analysis, evaluation, inference, explanation, and self-regulation) and several affective dispositions (including inquisitiveness, open-mindedness, fair-mindedness, and willingness to revise views). The Delphi report further stipulated, in part, that these experts “understand critical thinking to be purposeful, self-regulatory judgment which results in interpretation, analysis, evaluation, and inference, as well as explanation of the evidential, conceptual, methodological, criteriological, or contextual considerations upon which that judgment is based” (Facione, 1990: 3). While there are many other popular definitions with new ones still emerging, the widely cited APA definition is considered the most influential and rigorous to date (Mathias, 2015; Oderda et al., 2010).

In addition to its conceptualization, a significant amount of research on critical thinking examines its instruction. Researchers have widely adopted Ennis’ (1989) typology of critical thinking instruction, which includes four pedagogical approaches: (1) a general approach, in which critical thinking is taught explicitly and separately from the content of other subject matter; (2) an infusion approach, in which critical thinking principles are made explicit and integrated into other subject matter; (3) an immersion approach, in which critical thinking is also incorporated into other subject matter but its general principles are not made explicit to students; and (4) a mixed approach, in which the general approach is combined with either the infusion or the immersion approach. Instructional strategies used to support these approaches typically include analytical frameworks, argument mapping, case studies, class/online discussion, computer-assisted reasoning, critical reflection, logic modeling, and problem-based learning (Jenkins and Andenoro, 2016; Mathias, 2015; Niu et al., 2013). However, although educators recommend and adopt strategies that apply these approaches, the strategies are not equally effective.

Further empirical studies on instructional interventions have identified more effective methods for improving critical thinking. In particular, meta-analyses of interventions related to the four approaches in Ennis’ (1989) typology found explicit instruction to be more effective than implicit instruction in improving critical thinking (e.g., Abrami et al., 2008; Tiruneh et al., 2014). When combined with practice, explicit instruction appeared to substantially improve students’ critical thinking performance (e.g., Heijltjes et al., 2014). In addition, a meta-analysis of teaching strategies by Abrami et al. (2015) found that dialogue (i.e., teacher-led class discussion and conversation among students with minimal teacher participation) combined with authentic problems or examples also enhanced students’ critical thinking. A meta-analysis by Niu et al. (2013) further revealed the relevance of subject-specific examples to improving students’ critical thinking; on this basis, the authors recommended that instruction aimed at improving critical thinking attend to context. Similarly, Bailin et al. (1999) argued that background knowledge must be provided for critical thinking to take place. Drawing on previous studies, Noddings (2016: 86) concluded that there is a general consensus in the field that “critical thinking must be about something.” In summary, the literature indicates that effective critical thinking instruction teaches critical thinking principles explicitly and applies them within a context, though the context can vary according to instructional needs (Noddings, 2016). Furthermore, effective instruction gives students opportunities to practice, engage in dialogue, and work with authentic examples or problems specific to the content studied.

Educational apps design guidelines

Principles from instructional, user interface, and user experience design illuminate how to develop and select well-designed educational apps. Instructional design is a systematic process that applies research-verified principles of learning and teaching to solve instructional problems and to create environments, activities, and materials for effective instruction (e.g., Gibbons et al., 2014; Smith and Ragan, 2004). During this process, designers and educators are expected to state learning outcomes clearly, select effective instructional strategies to attain the outcomes, identify pertinent media to support instruction, and assess whether learners have reached the outcomes (Branch and Kopcha, 2014). Correspondingly, instructional design models (for a summary, see Tracey and Richey, 2007) guide the development of an instructional plan, including the design of apps. These models address three questions: (1) “Where are we going?, (2) How will we get there?, and (3) How will we know when we have arrived?” (Smith and Ragan, 2004: 7). By anchoring strategies and assessments to anticipated outcomes, instructional design principles and models help ensure instructional effectiveness.

User interface design refers to the intended methods and elements (mostly visual, such as screens, menus, and icons, but also textual and voice elements, such as text and speech commands) through which users interact with a technological product (Interaction Design Foundation, 2019). Designers anticipate users’ goals and actions and create user-friendly elements that enable users to complete their tasks easily. Guidelines, principles, and theories are available to inform practice, including the widely adopted “eight golden rules” of interface design. These rules address consistency, universal usability, informative feedback, dialogs that yield closure, error prevention, easy reversal of actions, user control (an internal locus of control), and short-term memory load (Shneiderman et al., 2017). Applying the rules not only minimizes or prevents user frustration but also increases the likelihood that users will accept a product.

User-experience design is vital to the creation of “everyday” things (Norman, 2013), including educational apps. The International Organization for Standardization (ISO, 2010: sec. 2.15) defined user experience as a “person’s perceptions and responses resulting from the use and/or anticipated use of a product, system or service.” Aligned with this definition, user-experience design generally refers to the process of creating a product or service that leaves users satisfied with their experience. Designers ensure a satisfying user experience by developing a logical and viable design structure that considers (a) the objectives of a product, (b) user needs, and (c) limitations that might prevent the product from working successfully (Unger and Chandler, 2012). User-experience design is therefore user-centered. By focusing on users’ abilities, needs, feelings, and limitations, it aims to foster positive user attitudes toward a product, which in turn contribute to the product’s success.

Well-designed educational apps are both pedagogically sound and technologically friendly. Instructional design principles prescribe effective pedagogy so that an app can “support a learning goal,” which distinguishes an educational app from others (Hirsh-Pasek et al., 2015: 4). Given the plentiful supply of apps “self-proclaimed” to be educational (Papadakis et al., 2017: 3149), instructional design principles can contribute to both the design and the selection of apps that truly have educational value. Furthermore, educational apps that have a friendly interface and provide a positive user experience are more likely to deliver and maximize their educational value. The design and evaluation of educational apps may therefore benefit from the guidelines available in instructional design, user interface design, and user experience design.

Instruments and criteria for evaluating educational apps

The proliferation of educational apps calls for evaluative criteria and tools, most of which take the form of a checklist or rubric. A checklist presents a set of evaluation criteria as phrases, statements, or questions, and evaluators indicate whether each criterion is met (e.g., by checking “yes” or “no”). A rubric (usually in table format) also includes evaluation criteria but adds a multilevel rating scale, with descriptors depicting different levels of attainment (e.g., “adequately,” “partially,” or “not met”) for each criterion. The criteria in these instruments are sometimes grouped into categories or dimensions (e.g., “instruction” and “design”). A quick Internet search reveals a variety of such instruments for app evaluation, though few are informed by research.

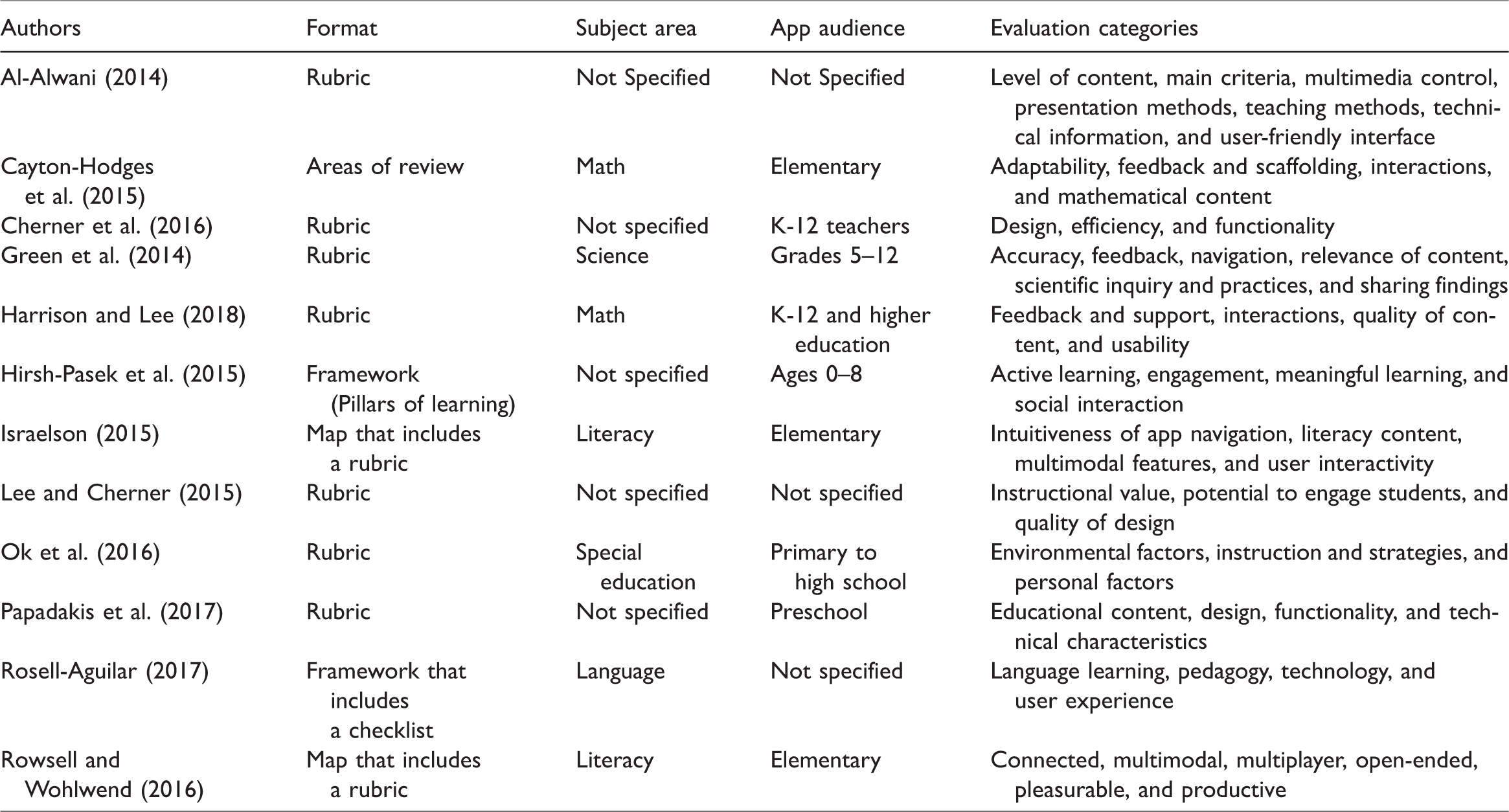

Most research-based instruments align their evaluation criteria with evidence or recommendations from scholarly studies and are published in academic journals. Relevant research can therefore guide the design and selection of educational apps and enhance the rigor of app evaluation. Recently, new research-based instruments and criteria have emerged both for the general evaluation of instructional apps (e.g., Lee and Cherner, 2015) and for selecting specific kinds of apps, such as literacy apps (e.g., Israelson, 2015), math apps (e.g., Harrison and Lee, 2018), teacher resource apps (e.g., Cherner et al., 2016), and apps for students with disabilities (e.g., Ok et al., 2016). As shown in Table 1, many of these instruments aim to facilitate the evaluation of apps for K-12 students. Many also use evaluation categories covering content, instruction, and app design, although the terms differ. Currently, no such instrument is available for selecting critical thinking apps for college students.

Research-based instruments/criteria for evaluating educational apps.

This literature review identified mainstream definitions of critical thinking, such as those presented in Ennis (1987) and Facione (1990), along with effective strategies for critical thinking instruction. It also discussed instructional and technological design principles and guidelines conducive to designing educational apps. Finally, it examined research-based criteria and instruments for app evaluation, revealing that no such instrument exists for identifying critical thinking apps. The app evaluation instrument developed in this study accordingly builds on the literature review, purposefully aligning its evaluation criteria with research findings. The following section describes how this research-supported instrument was developed.

Method: Development process and considerations

This study aimed to create an instrument to evaluate critical thinking instructional apps and was conducted in two phases. Phase I involved a synthesis of existing research that informed the development and validation of an evaluation instrument in Phase II. During the first phase, the study followed a modified version of the research synthesis process proposed by Cooper and Hedges (2009). The steps were (a) formulating a research problem, (b) conducting a literature search, (c) evaluating search results, (d) analyzing results, (e) interpreting findings, and (f) using the findings to create a set of evaluation items. The two questions outlined in the introduction guided the selection of search criteria and the identification of pertinent articles. As indicated in the literature review, Phase I examined three lines of prior research: (1) critical thinking skills, dispositions, and instruction to develop college students’ critical thinking; (2) design principles applicable to educational apps; and (3) research-based criteria and instruments (e.g., rubrics and checklists) for app evaluation. The literature review above synthesizes the resulting analysis and interpretation, and the insights it yielded informed the evaluation criteria developed in Phase II.

Phase II involved developing and validating the evaluation instrument. The literature review generated a blueprint for the instrument comprising three major categories (content, pedagogy, and design). A set of evaluation criteria was then created reflecting the essential elements that evaluators and developers should consider when selecting or creating educationally appropriate critical thinking apps. To address the need for efficiency, the design incorporated advice from McGahan et al. (2015) to ensure that the instrument was comprehensive enough to capture the essential standards yet concise enough that the evaluation process would not be laborious. A Likert-type checklist format was also adopted to enable efficient review and easy comparison during evaluation (Smets, 2017).

The research design of the current study used a procedure similar to that of Al-Alwani (2014), who adopted an expert evaluation methodology in which participants were invited to complete a survey assessment. Likewise, the evaluation instrument created in this study was reviewed by an expert panel to establish content validity. The panel consisted of six people: two college instructors experienced in teaching critical thinking classes using a general approach, three instructors who integrated critical thinking into their content areas (business administration, hospitality management, and literacy education), and a veteran computer programmer. All panelists were proficient in using technology in education. More specifically, all faculty members had extensive experience integrating technology within a traditional college format and/or teaching online, and the programmer had 15 years of experience developing interactive systems specifically for education, including educational websites and apps. Once the blueprint tool, the evaluation instrument, and a rationale document presenting the literature (with excerpts and references) supporting the inclusion of each item had been created, all three were forwarded to the experts for initial review. Panelists individually rated the appropriateness and clarity of each evaluation item and provided overall comments via a SurveyMonkey form. The form included a summary area, the three major evaluation categories (content, pedagogy, and design), and, at the end, four items for overall evaluation.

Before responding to the survey sent via SurveyMonkey, panelists were instructed to consult all the materials forwarded to them about the evaluation instrument. They were then asked to rate each item and comment on the design, language use, format, and other essential elements of the instrument, which was intended to help educators select viable critical thinking apps. Although panelists did not use the instrument to assess actual critical thinking apps, their comments guided its revision. For example, the panel review of an earlier version revealed general agreement on the clarity and suitability of the evaluation categories and items, but two panelists commented on the inclusion or exclusion of items and on the clarity of the terms used. One stated, “Overall it is clear to me but not all faculty members have [a] background in technology (esp. those in the 3rd category), so some terms may not look clear to them.” These comments, along with other feedback, were used to revise the instrument and create a new version (e.g., by clarifying the items mentioned and making the terms more intuitive). The panelists subsequently reviewed the revised instrument and reached consensus on the final version (see Appendix 1).

While the instrument’s design was based on the literature, it also adopted an interpretive approach to evaluation, drawing in particular on that approach’s three primary features: multivocality, contextualization, and interpretation (García-Villada, 2009). Regarding multivocality, the instrument enables app evaluation that incorporates multiple stakeholder perspectives, including those of designers, instructors, and students. For contextualization, the instrument relies on evaluators’ professional experience and judgment to determine whether an app under consideration is appropriate (e.g., in terms of instructional strategies) for their context. With respect to interpretation, the instrument prompts evaluators to reflect and comment on their review of each major evaluation category and, based on their own reflection and assessment, to make a subjective judgment about adopting the app. The instrument also offers research-based guidance to users searching for or designing quality critical thinking instructional apps. Because app evaluation cannot be truly context-free, users are expected to draw on their own perspectives and local knowledge when applying the instrument.

Results

The app evaluation instrument is intended to facilitate the assessment of individual apps and their suitability for specific teaching and learning needs. It is designed to help users, especially educational professionals, adequately and promptly identify well-designed instructional apps that foster critical thinking among college students. The instrument also provides criteria for mobile app developers to follow when creating critical thinking apps. It draws on previous research, incorporates essential design elements and features of quality critical thinking apps, and adopts a concise format that enables users and evaluators to make informed adoption decisions efficiently.

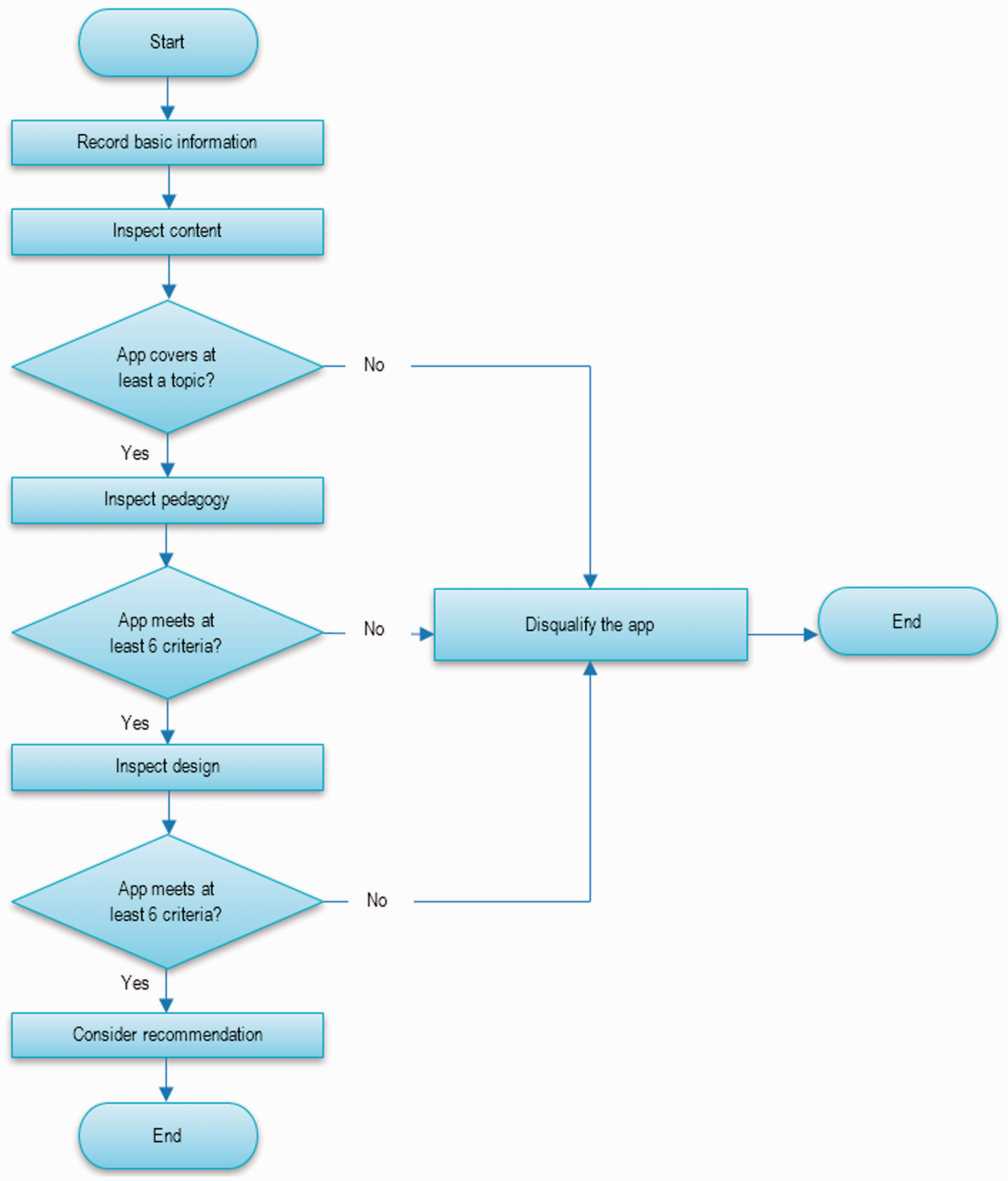

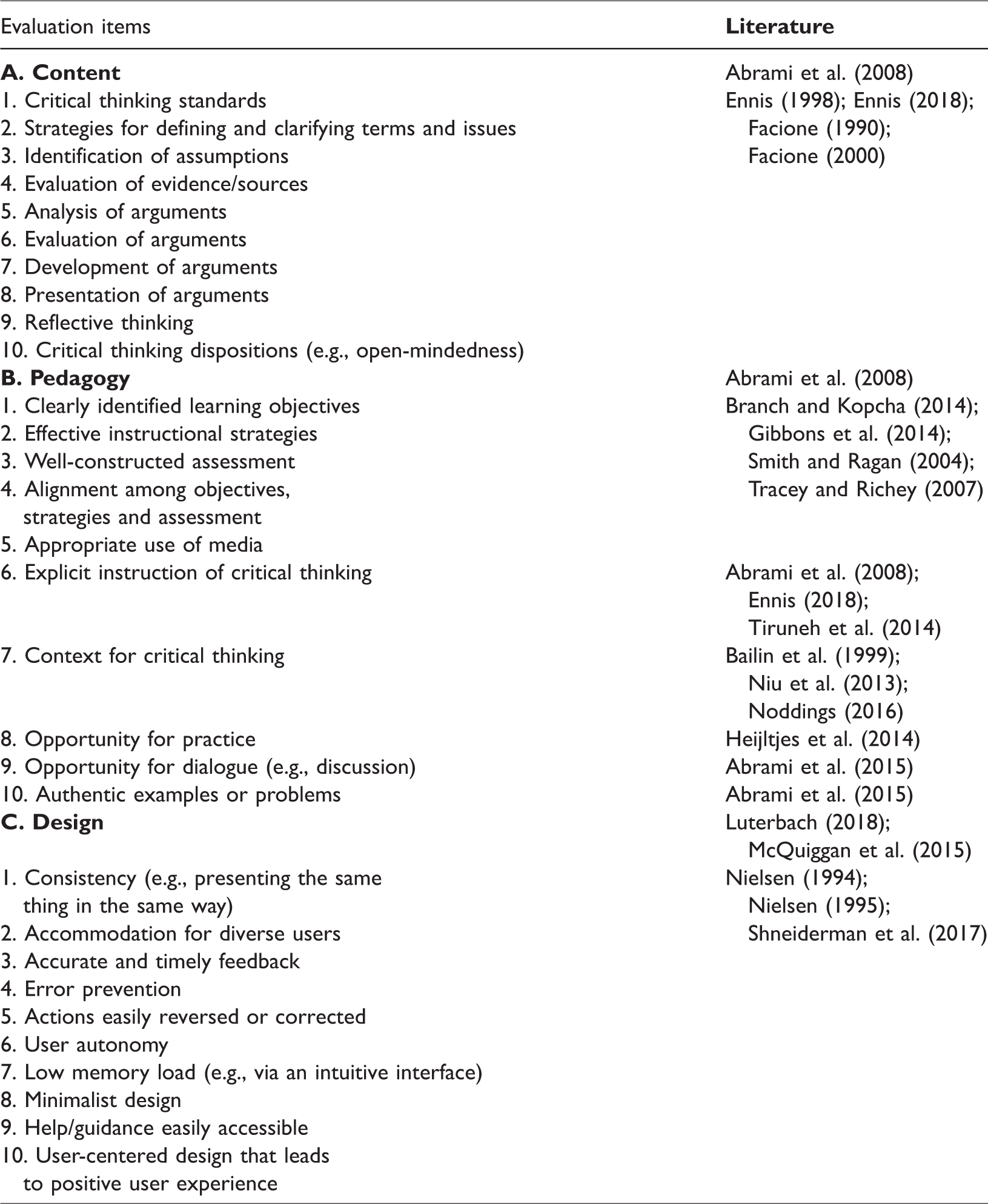

Like other tools used to evaluate software applications, this instrument (as shown in Appendix 1) includes a summary area prompting evaluators to record basic information (e.g., the title, publisher, and objectives) about the app under review. A list of evaluation items follows, grouped into three major categories (content, pedagogy, and design). These categories encourage evaluators to consider crucial aspects of a critical thinking instructional app and to rate the attainment of each item on a five-point Likert-type scale. Evaluators then proceed to the overall evaluation of each major category and ultimately determine whether the app is acceptable for adoption. The design rationale for the three major categories and their associated evaluation items is presented next. (See Figure 1 for a recommended evaluation process using the instrument and Table 2 for the alignment between the literature review and the evaluation items supporting the instrument’s design.) The apps the authors used to test the instrument during the design process included Fading Away – Critical Thinking Challenge and Critical Thinking Skills 101. Following the recommended evaluation process (Figure 1), Fading Away was not considered a critical thinking app because it did not pass the content-level review required for adoption. Critical Thinking Skills 101, by contrast, was deemed a critical thinking app but failed to display appropriate pedagogy and technology design features. (Specific findings from testing these apps, along with several others, are presented in another paper in preparation.)

Recommended evaluation process.

Alignment between the evaluation items and literature.

The instrument’s design reflects the study’s goals of helping users identify effective critical thinking instructional apps and providing salient design indicators for app developers. Because the intended users are professionals with busy schedules, the design considered both practicality and efficiency based on available research and best practices. In line with previous studies, the instrument features three major evaluation categories (content, pedagogy, and design) that researchers have deemed essential to critical thinking instruction and app design. The first category, “content,” is a precondition for “pedagogy,” which in turn is a precondition for “design.” This sequencing allows users to stop the evaluation once they find that an app lacks sufficient features in a category that is a prerequisite for the subsequent one(s).

For example, if, from the evaluator’s perspective, an app under review does not provide sufficient or accurate coverage of any of the topics in the “content” category, the evaluator may simply reject the app without reviewing the items in the two subsequent categories (i.e., pedagogy and design). The items in the “content” category are intended to help evaluators distinguish critical thinking instructional apps from others. An app that does not provide sufficient and accurate critical thinking content therefore does not merit further consideration of its pedagogy. Likewise, an app that includes accurate and sufficient content but fails to employ effective pedagogy does not warrant further evaluation of its design. A well-designed critical thinking instructional app is expected to address all three categories adequately, and because the sequencing allows an evaluation to end as soon as a precondition is unmet, it supports efficient adoption decisions.
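The sequencing logic described above behaves like a short-circuiting checklist. The Python sketch below illustrates it under stated assumptions: the function `evaluate_app`, the `EvaluationResult` record, and the representation of each category’s overall judgment as a callable returning `True` or `False` are hypothetical conveniences, not part of the published instrument. Each callable stands in for the evaluator’s own (subjective) overall judgment of a category; because a callable is invoked only when its category is reached, categories after a failed precondition are never reviewed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Order matters: each category is a precondition for the next one.
CATEGORY_ORDER = ("content", "pedagogy", "design")


@dataclass
class EvaluationResult:
    adopt: bool
    failed_category: Optional[str]  # first category judged insufficient, if any
    categories_reviewed: List[str] = field(default_factory=list)


def evaluate_app(judgments: Dict[str, Callable[[], bool]]) -> EvaluationResult:
    """Review categories in order and stop at the first unmet precondition.

    Hypothetical helper: each value is a callable returning the evaluator's
    overall judgment for that category (True = acceptable).
    """
    reviewed: List[str] = []
    for category in CATEGORY_ORDER:
        reviewed.append(category)
        # The judgment is requested only when the category is reached,
        # so later categories are skipped once a precondition fails.
        if not judgments[category]():
            return EvaluationResult(False, category, reviewed)
    return EvaluationResult(True, None, reviewed)


# Usage with a hypothetical app whose content is judged insufficient:
result = evaluate_app({
    "content": lambda: False,
    "pedagogy": lambda: True,
    "design": lambda: True,
})
print(result.adopt, result.failed_category, result.categories_reviewed)
# prints: False content ['content']
```

Returning the first failed category, rather than a bare yes/no, mirrors the instrument’s dual purpose: it tells evaluators why an app was rejected and tells developers where an app falls short.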

The meta-analysis by Abrami et al. (2008) supported including the first two major categories, content and pedagogy, as two determining factors in the success of critical thinking instruction. In their analysis of 117 empirical studies of instructional interventions, the researchers found that course content (as well as curriculum) and pedagogy contribute significantly to critical thinking skills and dispositions. The third category, design, was chosen because it plays a key role in the effectiveness and efficiency of instructional apps (Luterbach, 2018). User interface and user experience design are especially important because they help engage users and create a positive learning experience. Without good design, the educational benefits of apps can fall short (McQuiggan et al., 2015). The evaluation instrument therefore incorporates the three categories deemed essential when professionals design or select apps for critical thinking.

The evaluation items in the “content” category were primarily based on Ennis’ (1998, 2018) and Facione’s (1990) studies reflecting the mainstream conceptualization of the term “critical thinking.” These studies prescribed that critical thinking instruction provide content that enables students to develop the requisite abilities, skills and dispositions. The expert consensus statement from the APA Delphi report (Facione, 1990) further identified essential critical thinking cognitive skills and affective dispositions. Similarly, Ennis (1998, 2018) proposed a list of critical thinking abilities and dispositions that are essential for college students.

The first 10 topics in the content category of the instrument reflect the skills and dispositions explicitly stated in these studies. These topics guide students to become reasonable and reflective decision-makers. Before deciding or taking a position, students are required to define and clarify issues (including the terms used to describe them), pinpoint unexpressed assumptions (both their own and those in others’ presentations of the issues), and evaluate sources pertinent to their decision-making. Students are also required to analyze and evaluate arguments, provide a cogent rationale to support claims or positions, and construct and present their own arguments clarifying the position(s) they take. Moreover, students are required to reflect on their own thinking and, as appropriate, revise their decisions in light of the evidence, noting the reasoning behind their existing views or decisions. As Dewey (1933: 3) defined it, reflective thinking is “the kind of thinking that consists in turning a subject over in the mind and giving it serious consecutive consideration.” In the realm of critical thinking, being reflective requires people to examine their own and others’ thoughts in terms of their “reasonableness” (Norris and Ennis, 1989: 3). In addition, critical thinking instruction should guide students in examining both the process and the outcome of their own thinking. The last of the first 10 topics addresses critical thinking dispositions such as open-mindedness and inquisitiveness. Instruction on these dispositions helps nurture students’ inclination and habit to use critical thinking skills (Facione, 2000).

The list of the first 10 topics is not comprehensive. Because various definitions of critical thinking exist, consensus has not yet been reached regarding the required content of critical thinking instruction. When identifying suitable critical thinking apps, evaluators may prefer to foreground certain topics, for example, logical concepts and fallacies. Some may wish to highlight critical thinking standards, such as the intellectual standards (e.g., clarity and precision) proposed by Elder and Paul (2013). In the instrument created for the current study, these topics were subsumed under argument evaluation and construction: when students evaluate and construct arguments, they apply basic logical concepts, identify logical fallacies, and follow critical thinking standards. However, users of the instrument who opt to bring new topics to the spotlight are welcome to add these or other relevant topics to their evaluation. The item “other topic, please specify” invites evaluators to adapt the form to serve their own purposes. Because the main intended users of the instrument are educational professionals experienced in incorporating critical thinking into instruction, the authors believe these users should have a voice in the inclusion of evaluation items and an opportunity to decide which content topics to highlight or include in their evaluation. A critical thinking instructional app is expected to teach at least one of the topics in the “content” category, including any that evaluators add to the list themselves. In all cases, coverage of the topics should be sufficient and accurate from the evaluators’ point of view.

The evaluation items in the second category, pedagogy, drew upon both general instructional design models applicable across disciplines and recommendations from empirical studies specific to critical thinking instruction. As discussed previously in the literature review, instructional design models guide the design of instruction (including instructional materials, such as apps) and include the following key elements to ensure quality instruction: (a) clearly stated outcomes, (b) effective instructional strategies, (c) appropriate media, and (d) assessments aligned with the outcomes. The first five evaluation items in the category were accordingly aligned with the elements included in these models. The next five items (items 6–10 in the instrument) exclusively addressed critical thinking instruction. As previously summarized in the literature, effective critical thinking instruction explicitly teaches pertinent principles, provides context for critical thinking, engages students in dialogue, presents authentic examples or problems, and includes opportunities for practice. Although adopting these general and specific research-based recommendations cannot guarantee effectiveness, apps following these guidelines are more likely to be pedagogically sound and thus educationally valuable.

In the third major category, technology design, principles derived from user interface and user experience design informed development of the evaluation items. For the instrument, the principles synthesized in Shneiderman et al.’s (2017) eight golden rules of interface design and Nielsen’s (1994) usability heuristics were condensed and rephrased to be intuitive for app evaluators without a professional background in technology or interface design. Layman’s terms were used instead of jargon whenever possible. The principles included are applicable to the design of most interactive systems, including instructional apps.

Based on these principles, a well-designed app adopts a consistent design by using, for example, the same terminology, design patterns (e.g., for buttons and screen layouts), and sequences of actions within a lesson. To accommodate the diverse needs and capabilities of app users, the app also requires design flexibility, preferably customizable, such as shortcuts for proficient users and guidance for novices. Accurate and timely feedback is essential so that users are continuously aware of the results of their actions and of their learning progress. To minimize user anxiety, the app should prevent serious user errors (e.g., accidentally exiting the app without saving progress) while allowing users to easily resolve any issues and undo previous actions. To increase satisfaction and achievement, the app should also provide user autonomy, that is, increase users’ ability to interact freely as they navigate the app. Given the limited capacity of short-term memory, the app should minimize the memory load or recall required of users and aim for a simple, minimalist design. While it is ideal for users to navigate apps successfully without help, assistance should be easily accessible to those who need it. In brief, well-designed apps focus on app users and carefully consider their characteristics and unique needs in order to increase user satisfaction and engagement and to generate a positive experience.

The instrument includes the essential criteria that a well-designed critical thinking instructional app should meet and helps evaluators conduct effective and efficient reviews before finalizing decisions on adoption. Certainly, evaluators explore considerations other than content, pedagogy, and design (the three major categories used in the instrument) in the decision-making process. These include the app’s cost, platform compatibility, and accessibility, which are not equally important to all evaluators. Information on aspects such as cost and compatibility is readily available in the online stores offering apps for free download or purchase, so evaluators may simply consult this information and take it into consideration. To examine aspects such as accessibility, however, evaluators may need additional evaluation criteria and tools (e.g., mobile accessibility testing tools) during the app review. Regardless of the aspect under consideration, the instrument invites evaluators to provide a rationale for their decision in each category at the conclusion of the evaluation session. This rationale reflects the weight or priority that evaluators assign to criteria (including those not listed in the instrument) for app adoption. As noted earlier, the instrument is intended to facilitate evaluation, not to dictate the criteria that evaluators, who have professional and local knowledge about what works in their context, must apply.

Recommendations and limitations

The intended users of the instrument are instructors and academic leaders selecting apps that foster critical thinking. After users download a candidate app, obtain basic information (e.g., the title and publisher) from the app’s distributor (e.g., the App Store), and try out the app, they are advised to examine the app against the three major evaluation categories in the order listed in the instrument. For efficient evaluation, the categories are presented in priority order, allowing evaluators to discontinue evaluation and disqualify an app when the criteria in an earlier, more essential category are not satisfactorily met. The last item in each category also serves a gatekeeping purpose by prompting evaluators to reflect on their findings and state whether the app under review meets the minimal requirements in that category and, from their perspective, deserves further evaluation. First-time users of the instrument are advised to familiarize themselves with the recommended evaluation process illustrated in Figure 1 and with the evaluation items described previously.

For decision-making purposes, an app must meet the minimal requirements in each of the three categories to be considered for adoption. In the first category, content, the app must provide sufficient and accurate coverage of at least one topic on the instrument’s list. For example, if after inspecting the content evaluators conclude that the app does not adequately address any topic in the “content” category, the app is clearly not a critical thinking app and thus not appropriate for adoption, no matter how pedagogically effective or well designed it is. Evaluators may simply discard the app without inspecting its pedagogy or design. For pedagogy and design, the app must meet at least 60% of the evaluation criteria in each category; that is, it should receive a rating of “4” or “5” on at least six of the evaluation items in the pedagogy category and on at least six in the design category. Sixty percent is typically considered a minimum passing score in educational settings. While it is ideal for apps to display all pedagogical and design features, it is presently challenging to find a critical thinking instructional app that fulfills all the criteria. Users need not compromise on quality in their search, however; they are welcome to raise the bar in their evaluation whenever possible. Nevertheless, it is recommended that app developers strive to incorporate all indicators viewed as essential to maximize the educational benefit of the apps they release.
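The minimal requirements above amount to a short, ordered screening procedure. The following Python sketch is a hypothetical illustration, not part of the published instrument: it assumes a 1 to 5 rating scale and ten items per category (as the 60% and six-item figures imply), treats the content category as the set of topics an evaluator judged sufficiently and accurately covered, and omits the reflective gatekeeping item that closes each category. The names `AppEvaluation`, `category_passes`, and `screen_app`, as well as the outcome labels, are invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Assumed thresholds, taken from the minimal requirements described in the text:
# an item "passes" when rated 4 or 5, and a category passes with at least six such items.
PASSING_RATING = 4
MIN_PASSING_ITEMS = 6


@dataclass
class AppEvaluation:
    """Hypothetical record of one evaluator's judgments about one app."""

    # Content topics (including any "other topic") judged sufficiently and accurately covered.
    covered_topics: set[str] = field(default_factory=set)
    # One rating (1 to 5) per evaluation item in each category.
    pedagogy_ratings: list[int] = field(default_factory=list)
    design_ratings: list[int] = field(default_factory=list)


def category_passes(ratings: list[int]) -> bool:
    """Return True if at least MIN_PASSING_ITEMS items are rated 4 or 5."""
    if any(not 1 <= r <= 5 for r in ratings):
        raise ValueError("ratings must be on a 1-5 scale")
    return sum(1 for r in ratings if r >= PASSING_RATING) >= MIN_PASSING_ITEMS


def screen_app(ev: AppEvaluation) -> str:
    """Check the categories in priority order and stop at the first failure."""
    if not ev.covered_topics:
        # No topic adequately covered: not a critical thinking app, so skip the rest.
        return "rejected: content"
    if not category_passes(ev.pedagogy_ratings):
        return "rejected: pedagogy"
    if not category_passes(ev.design_ratings):
        return "rejected: design"
    return "meets minimal requirements"


if __name__ == "__main__":
    example = AppEvaluation(
        covered_topics={"analyzing arguments"},  # placeholder topic label
        pedagogy_ratings=[5, 4, 4, 3, 5, 4, 2, 4, 3, 3],  # six items rated 4 or 5
        design_ratings=[5, 5, 4, 4, 3, 2, 4, 3, 3, 2],  # only five items rated 4 or 5
    )
    print(screen_app(example))  # prints: rejected: design
```

The function deliberately returns a screening outcome rather than a summed score, in keeping with the instrument's caution against holistic tallies: an app either clears each category's minimum or is set aside at the first category it fails.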

The instrument has limitations, among them the coverage of the evaluation criteria and the usefulness of a holistic score. As mentioned earlier, the evaluation categories and items are not comprehensive. Even though they represent essential aspects required for critical thinking apps to be educationally beneficial, users may have other criteria they require or wish an app to fulfill. The instrument also does not address certain criteria whose importance might vary from one context to another. Moreover, despite the rating scale, the instrument is not intended for evaluators to tally ratings and generate a holistic score. The scale helps evaluators judge how well an app meets each criterion; a holistic score, by contrast, can neither inform decisions about adoption nor provide a fair comparison across apps. For instance, an app that addresses 10 topics in the content category might receive a higher overall score than another app that covers only one topic, yet evaluators cannot conclude from that score that the former app is more suitable for adoption simply because it covers more topics. The instrument was not designed for such quantitative comparison or evaluation, which cannot adequately capture the authentic value of educational apps (Cherner et al., 2016). Given these limitations, the instrument should be used solely for its intended purpose (i.e., to help determine whether an app under review can be considered a critical thinking instructional app and whether the app is pedagogically sound and user friendly).

Implications and conclusion

This study holds implications for both practice and research. Specific to practice, the study echoes Hirsh-Pasek et al.’s (2015) position that app evaluation and development should use an evidence-based approach. The research-based instrument generated within the study enables educators to efficiently analyze critical thinking instructional apps and select those beneficial to learning and instruction. With an easy-to-use, research-based instrument, educators can move away from popularity ratings or personal testimonies when making decisions about app adoption. Moreover, the instrument serves as an informative guide to app developers (most of whom are software engineers) as they design and develop apps that foster critical thinking. App developers tend to make design decisions influenced by the latest technological trends, their own experiences with education, and their often-flawed conceptions about learning (Hirsh-Pasek et al., 2015). With this instrument, developers can go beyond these influences and take into consideration, from initial planning to beta testing, the essential features that quality critical thinking instructional apps need to deliver. The instrument thus helps educators identify quality apps and recognize the educational benefits of the apps that developers create. Although it was not specifically formulated for designers creating critical thinking apps or for others using them, the instrument can be useful in identifying apps that cultivate critical thinking for planning purposes. Nonetheless, first-time users are advised to review the information herein to become acquainted with the evaluation process and items.

Results underscore the relevance of carefully reviewing theoretical underpinnings in the design and use of mobile apps. For example, Laurillard (2009) presented a cogent argument on the utility of collaborative technologies for professional practice based on her Conversational Framework. In doing so, she highlighted the importance of carefully considering the learning environment and context in which apps are actually used. Our findings agree with Laurillard (2009: 7) on the salience of ensuring that “pedagogy exploits technology” for educational purposes and that:

Fortunately, we can turn to the traditions of learning theory to help with this. Amid the constant change of technology and its radical effects on the nature of learning and teaching, one thing does not change: what it takes to learn; especially what it takes to learn in the context of formal education.

While the current study supports theoretical and functional concerns in the design and use of apps in education, there is still much to gain (and learn) from using new technologies for instructional purposes. We (the authors) agree with Crompton and Burke (2018: 58) that “most research studies have found positive outcomes when using mobile devices.” While most of these studies involved undergraduates, there appears to be general consensus across disciplines on the value of mlearning (e.g., Nickerson et al., 2017). However, further research would be helpful on the design and use of apps for graduate and professional education (e.g., educational leadership, law, and medicine).

Further study is also needed on applying the instrument in app evaluation and on adapting it for use in K-12. The instrument enables researchers to systematically review existing critical thinking instructional apps for college students, examine the quality of these apps, and identify patterns and trends among those labeled as “critical thinking” apps. Results from such research would shed light on the status of these apps and inform app developers about areas to which they can contribute further. In addition, adapting the instrument to assess K-12 critical thinking apps would extend the study to benefit a wider audience. It is recommended that any adaptation retain the three major evaluation categories while revising the items in each category. For instance, the present evaluation items in the “content” category build upon studies prescribing topics that a college program integrating critical thinking should address. While these topics are essential to instruction at the college level, they are not necessarily applicable to all levels in K-12. Similarly, items on pedagogy combine general instructional design principles with recommendations from studies specific to college-level instruction. Items in the design category likewise do not focus on younger learners; they derive from principles and heuristics applicable to educational apps designed for adults and audiences of all ages. Although most of the criteria are useful for evaluating K-12 apps, some revisions informed by research on younger students might be necessary to ensure that the adapted instrument addresses the specific needs of this group. A field study examining implementation of the instrument in the design and development process would also provide empirical support for its adoption in the creation of critical thinking apps.

The current study underscores the value of creating critical thinking apps that reflect what we know works best in teaching and learning. If they are designed well, apps can effectively support pedagogical practices deemed essential to students’ development of critical thinking (e.g., by providing plentiful opportunities for practice and discussion). Likewise, following the design criteria identified in this study helps ensure that apps include critical features to support student learning. This is consistent with other literature on the value of mobile learning and apps, either in general or in subject areas other than critical thinking, for increased performance (e.g., Nickerson et al., 2017), supportive feedback (e.g., Tärning, 2018), ubiquity (Pimmer et al., 2016), and increased motivation (e.g., Klímová, 2018).

The current study applied research to inform practice. After analyzing three lines of research (i.e., on critical thinking, design principles, and instruments for app evaluation), the study used the results to develop an instrument to assess critical thinking instructional apps. Among the plethora of apps categorized as critical thinking apps, this instrument can help filter out those loosely defined or mislabeled and unearth well-designed apps that truly offer educational value. Additionally, the criteria listed in the instrument can guide developers creating similar apps for critical thinking instruction. The description of the instrument design process, the rationale for the design, recommendations for use, and limitations further provide information helpful to users in applying the instrument and in selecting or designing quality critical thinking instructional apps. As educators continue to seek apps that cultivate college students’ critical thinking and developers keep generating apps to fulfill this demand, this study offers research-based support for professional practice.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.