Abstract

As per Good Pharmacovigilance Practices, pharmaceutical companies must act on potential adverse reactions to drugs. With significant increases in the number of case reports in recent years, they face pressure to raise the efficiency of their processes while maintaining data integrity and patient safety. The use of Large Language Models (LLMs) in safety case intake offers the potential to advance processes without compromising quality. In this perspective review, we highlight the potential benefits of LLMs in case intake workflows and points to consider in relation to the current research landscape, inspired by our proof-of-concept (PoC) study. Benefits include raising the consistency of data extraction, reducing bias, and enhancing efficiency. We reflect on challenges in realizing the potential of this new technology from a practical industry perspective, namely (a) measuring the Return on Investment, (b) early involvement of subject matter experts, (c) handling unclear regulatory expectations, (d) system integration, and (e) organizational readiness. We illustrate the potential and its challenges through the lens of our PoC’s insights as well as through insights from published literature. Together, these allowed us to estimate an efficiency gain from a business process perspective for data extraction and initial case reporting, demonstrating the technology’s potential and practical applicability in real-world scenarios.

Plain language summary

Pharmaceutical companies are required to respond to potential adverse reactions to drugs, as outlined in Good Pharmacovigilance Practices (GVP). With a significant rise in case reports in recent years, these companies are under pressure to improve their processes while ensuring data integrity and patient safety. Large Language Models (LLMs) offer a promising solution for enhancing safety case intake processes without sacrificing quality.

In this perspective review, we discuss the advantages of using LLMs in case intake workflows, drawing from our proof-of-concept study. The benefits of LLMs include improved consistency in data extraction, reduced bias, and increased efficiency. However, we also address the challenges of implementing this new technology from an industry standpoint. Key challenges include measuring the Return on Investment (RoI), the importance of early input from subject matter experts, navigating unclear regulatory expectations, integrating systems, and ensuring organizational readiness.

We illustrate the potential of LLMs and the associated challenges based on insights from our proof-of-concept study and existing literature. Our findings suggest that LLMs can lead to significant efficiency gains in data extraction and initial case reporting, highlighting their practical applicability in real-world settings. This review aims to provide a clearer understanding of how LLMs can transform drug safety processes while identifying the considerations necessary for successful implementation.

Introduction

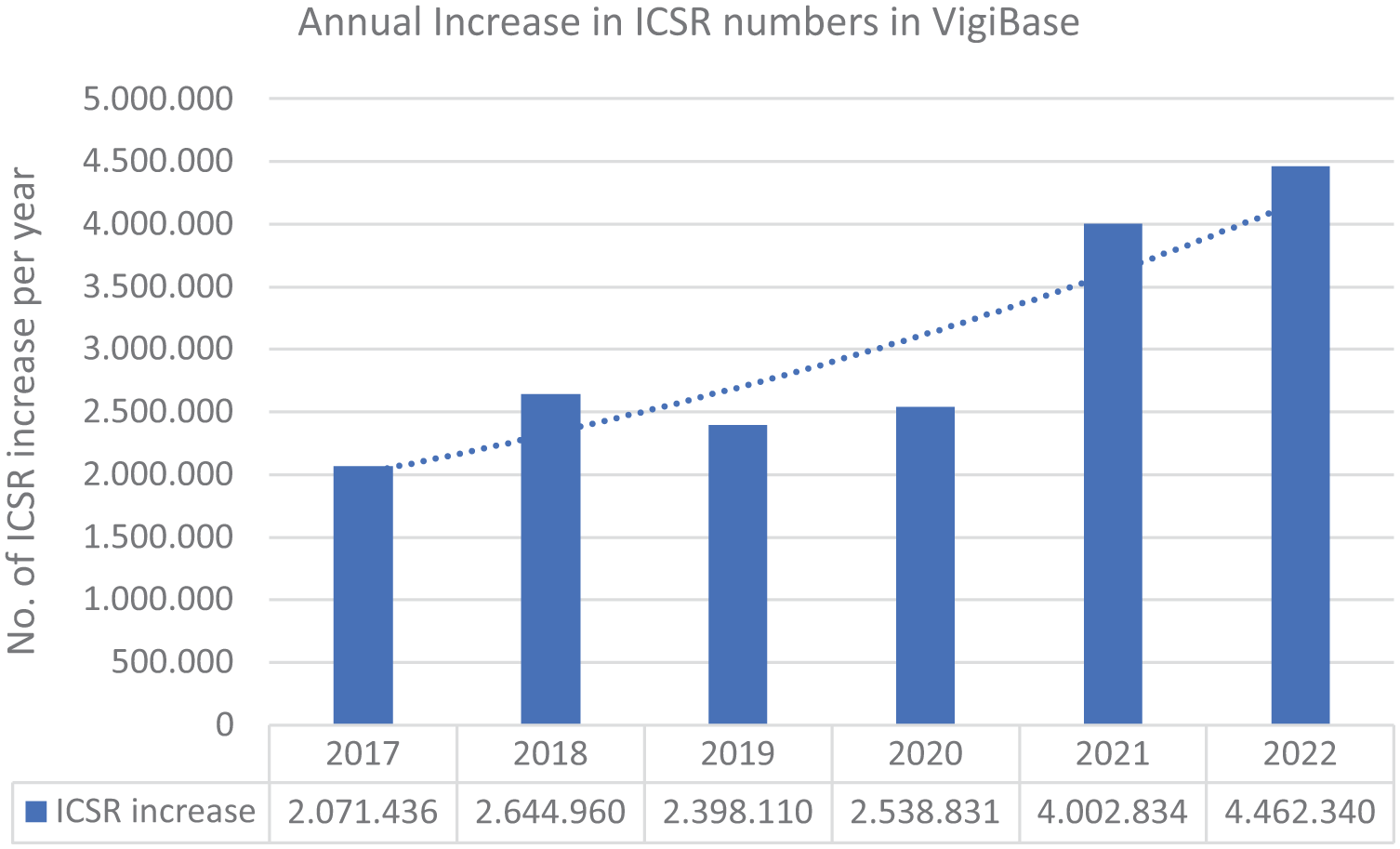

The efficient processing of large and increasing volumes of diverse types of safety data (see Figure 1) is one of the daunting challenges in pharmacovigilance. Individual case safety report (ICSR) processing entails intake, adverse event coding, narrative generation, triaging, regulatory submission, and ultimately safety interpretation. These steps can place a limited workforce under considerable time pressure, as observed particularly during the COVID-19 pandemic, while quality and regulatory compliance must still be maintained. 1

Figure 1. Increase in the number of ICSRs in VigiBase over recent years, based on numbers published by the WHO (replicated after references 2–7).

As observed in our practice, case intake operations face complex challenges beyond the number of cases, including handling diverse data sources with unstructured texts and scanned clinical report files or lab value reports, managing sudden peak inflows, and managing a finite workforce with limited ad hoc support. With the complexity of relevant information ranging from simple demographics to more structured but elaborate data, like lab values spanning multiple entries and fields, 8 simpler technology approaches, like Named Entity Recognition, were unsuccessful in consistently improving case intake operations under real-world circumstances, based on our experience. 9

At the same time, high expectations for quality and responsiveness, particularly for serious adverse events with substantial clinical and public health importance, demand more efficient solutions. Simply adding more staff is not a sustainable approach when striving for efficiency and higher quality. These challenges have also been tackled by industry groups like TransCelerate,10–12 while working groups like CIOMS XIV 13 are focusing on establishing good practices for the use of AI in regulated settings like pharmacovigilance.

In the context of these challenges, interest has been growing in leveraging advanced technologies,12,14,15 such as Large Language Models (LLMs).16,17 Advancements in computational power, particularly through cloud computing, the availability of large sets of training data, and the use of powerful graphics processing units, have enabled more sophisticated modeling approaches. 18 Among these advancements, LLMs and particularly the class of Generative Pre-trained Transformers (GPT) have received significant attention since the launch of ChatGPT in November 2022. These models generate (“G”) human-like text based on “prompts” that instruct a pre-trained (“P”) deep neural network built on the transformer (“T”) architecture, 18 which relies on attention mechanisms to weigh the relevance of each part of a sequence relative to the others. Modern iterations such as GPT-4 can already deliver robust performance through a careful choice of prompts (“prompt engineering”), while further techniques such as fine-tuning or retrieval-augmented generation may be applied where suitable in the context of use. Other commercial models like Claude by Anthropic and open-source models like Llama3 may be considered as well; however, they were not in the focus of our feasibility study. The ability to flexibly manage highly diverse input and complex instructions, to handle up to 300 pages of content, and to generate relevant human- or machine-readable outputs of 10 pages of written text highlights the potential of these models as a promising technology in pharmacovigilance, even without dedicated training of the model (as an example, GPT-4o may process up to 128,000 tokens of input and generate up to 4096 tokens in one instance). 19
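To make the relation between the page figures and the token limits concrete, the following minimal sketch derives the token density implied by the numbers quoted above and uses it for a rough fit check. The density is only an approximation, since real token counts depend on the tokenizer and the text, and the `prompt_tokens` allowance is a hypothetical value chosen for illustration.

```python
import math

# Limits quoted in the text for GPT-4o; other models will differ.
MAX_INPUT_TOKENS = 128_000
MAX_OUTPUT_TOKENS = 4_096

# Page equivalents quoted in the text.
INPUT_PAGES = 300
OUTPUT_PAGES = 10

# Implied density (approximation only; depends on tokenizer and content).
tokens_per_input_page = MAX_INPUT_TOKENS / INPUT_PAGES     # about 427
tokens_per_output_page = MAX_OUTPUT_TOKENS / OUTPUT_PAGES  # about 410


def fits_in_context(n_pages: int, prompt_tokens: int = 2_000) -> bool:
    """Rough check whether a source document plus instructions fits the input limit.

    prompt_tokens is a hypothetical allowance for the extraction instructions.
    """
    estimated = math.ceil(n_pages * tokens_per_input_page) + prompt_tokens
    return estimated <= MAX_INPUT_TOKENS
```

Under these assumptions, a 40-page source document fits comfortably, whereas a document at the 300-page ceiling leaves no room for the instructions and would need to be split.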

This perspective review is motivated by a proof-of-concept (PoC) study we performed. The objective of this PoC was to evaluate the feasibility of integrating LLMs into ICSR intake and to estimate the potential business impact. Specifically, we applied LLMs to source documents for case intake in order to extract the four minimum criteria for valid ICSRs: reporter, patient, adverse event(s), and suspected drug(s). In addition, further safety-relevant fields were extracted. The PoC covered regulatory and compliance aspects via a risk control strategy.
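The four minimum criteria can be pictured as a small data structure with a validity check. The sketch below is purely illustrative and is not the data model used in the PoC; the class name `MinimumCriteria` and its field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MinimumCriteria:
    """Hypothetical container for the four minimum criteria of a valid ICSR."""

    reporter: Optional[str] = None
    patient: Optional[str] = None
    adverse_events: List[str] = field(default_factory=list)
    suspected_drugs: List[str] = field(default_factory=list)

    def missing(self) -> List[str]:
        """Return the names of criteria for which nothing was extracted."""
        checks = {
            "reporter": bool(self.reporter and self.reporter.strip()),
            "patient": bool(self.patient and self.patient.strip()),
            "adverse_events": any(e.strip() for e in self.adverse_events),
            "suspected_drugs": any(d.strip() for d in self.suspected_drugs),
        }
        return [name for name, present in checks.items() if not present]

    def is_valid(self) -> bool:
        """A case meets the minimum criteria only if all four are present."""
        return not self.missing()


case = MinimumCriteria(
    reporter="physician",
    patient="female, 54 years",
    adverse_events=["headache"],
    suspected_drugs=[],
)
```

Here `case.missing()` lists `suspected_drugs`, so a human processor could be directed to exactly the element that prevents the case from being valid.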

The learnings of our PoC converged into five key Points to Consider (PtC), forming the backbone of our commentary. We use these PtC as a springboard for further exposition on lessons learned to support future research. Taking a practical industry perspective and relating our observations to scientific work in the field, we reflect on enabling innovative technologies. The experience we share, while preliminary, should aid others working in this space. Appendix A provides further insights into our PoC’s methodology, with a fully detailed description provided as a Supplemental File.

Five PtC when activating innovative technology

We put forward five PtC regarding the integration of LLMs for case intake processes, based on our PoC:

The Return on Investment (RoI) needs to be measurable in a business context

Early involvement of subject matter experts increases RoI

Regulatory uncertainty remains a significant hurdle

System integration needs to be contextualized in the operational environment

Organizational readiness goes beyond technology.

In the following sub-sections, we elaborate on our aforementioned five PtC, linking these to results reported in literature regarding the use of AI in safety-critical environments.

The RoI needs to be measurable in a business context

Enabling innovative technology requires an initial investment, and organizations need to decide where to invest constrained resources. In addition, the use of LLMs typically incurs costs per use. Thus, a clear business rationale should support the use of innovative technology. Importantly, we observed an overall efficiency gain potential of 39% in this PoC for our company, underscoring the substantial potential impact of these innovative approaches, though larger and more comprehensive studies are necessary for more precise estimates of this gain. Identified efficiency gains can be translated into financial benefits through time savings and optimized resource allocation.20,21 Beyond efficiency, this automation may also improve safety outcomes and staff satisfaction. Enhanced efficiency in the routine processing steps of complex reports allows human processors to refocus their attention on critical analytical assessments, which require expert human cognition the most. In addition, the shift from repetitive tasks to more meaningful work with state-of-the-art tools and technology may boost staff satisfaction and motivation.22,23 However, RoI varies by case type. Our observations indicate that LLMs exhibit enhanced performance, and thus a greater overall time benefit, in processing complex study reports, which currently require significant manual effort, as opposed to the simpler patient support program (PSP) reports, which are easier to process. This effect was also observed in other studies implementing LLMs in pharmacovigilance processes. 22 This distinction highlights the potential business value of implementing these innovative technologies.
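How an efficiency gain translates into a financial figure can be sketched with simple arithmetic. In the example below, only the 39% figure comes from our PoC; the case volume, baseline handling time, hourly cost, and LLM usage cost per case are hypothetical placeholders, and the efficiency gain is interpreted, as a simplifying assumption, as a proportional reduction in processing time.

```python
EFFICIENCY_GAIN = 0.39  # efficiency gain potential observed in the PoC


def net_annual_saving(
    cases_per_year: int,
    baseline_minutes_per_case: float,
    hourly_cost: float,
    llm_cost_per_case: float,
    efficiency_gain: float = EFFICIENCY_GAIN,
) -> dict:
    """Translate an efficiency gain into hours and money, net of per-use LLM costs.

    Assumes the gain applies uniformly as a reduction of processing time,
    which is a simplification (the text notes that RoI varies by case type).
    """
    hours_saved = cases_per_year * baseline_minutes_per_case * efficiency_gain / 60
    gross_saving = hours_saved * hourly_cost
    llm_usage_cost = cases_per_year * llm_cost_per_case
    return {
        "hours_saved": hours_saved,
        "gross_saving": gross_saving,
        "llm_usage_cost": llm_usage_cost,
        "net_saving": gross_saving - llm_usage_cost,
    }


# Hypothetical inputs, for illustration only.
estimate = net_annual_saving(
    cases_per_year=10_000,
    baseline_minutes_per_case=30,
    hourly_cost=60.0,
    llm_cost_per_case=0.50,
)
```

With these placeholder inputs, the estimate comes to roughly 1,950 hours saved per year. Running the same calculation separately per report type would reflect the observation that the benefit differs between complex study reports and simpler PSP reports.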

Early involvement of subject matter experts increases RoI

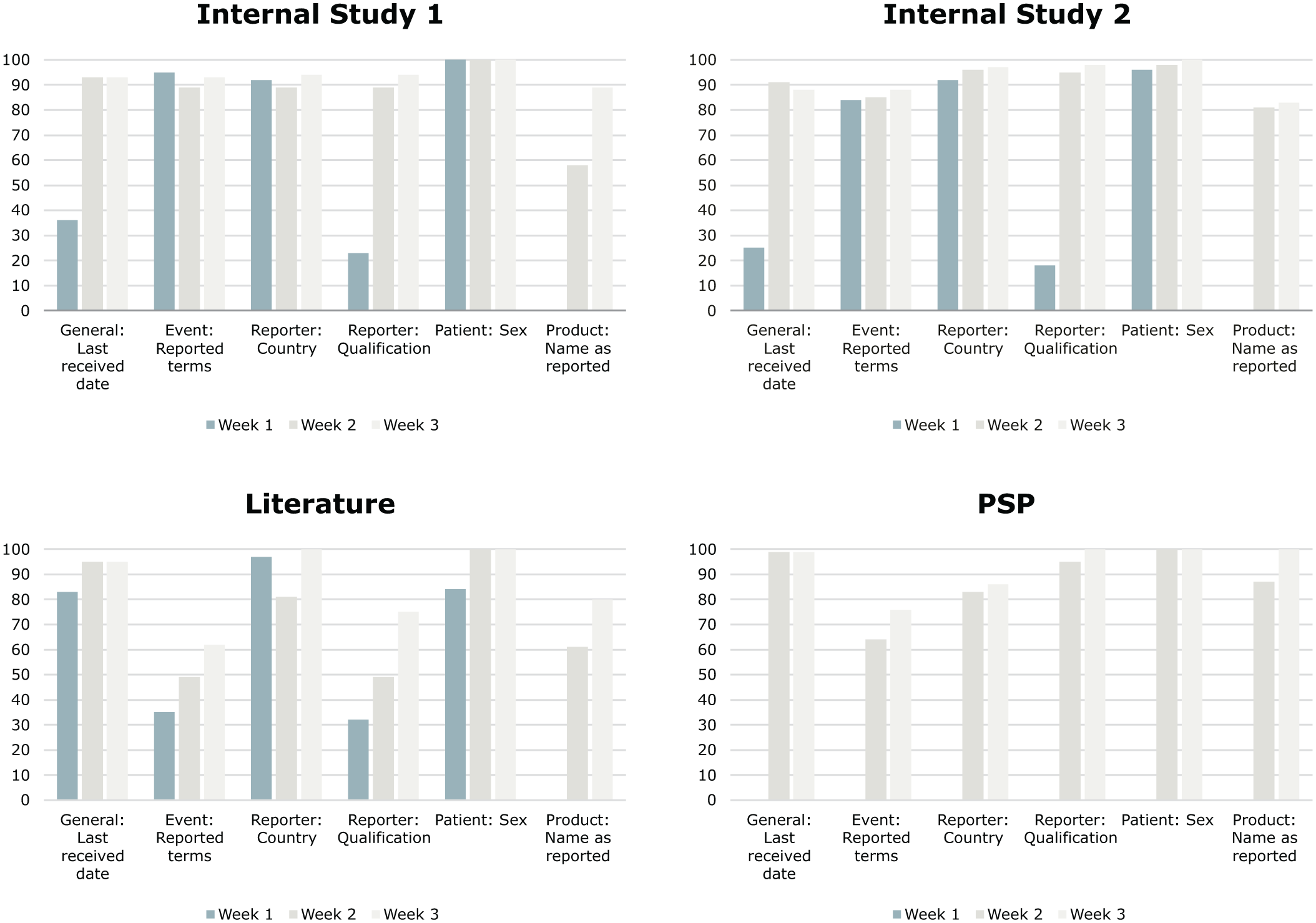

Handling the complexity of pharmacovigilance data necessitates robust modeling and testing to ensure reliability. Misunderstood queries and “hallucinated” responses are well-known issues of LLMs. Process understanding proved to be key to optimizing the efficiency of the extraction and to reaching reasonable performance for a representative set of fields. Therefore, early involvement of subject matter experts was highly relevant for deriving a comprehensive scope definition regarding relevant report types and fields. Ongoing exchange supported prompt engineering by integrating process understanding. This can also be seen in Figure 2, where input from subject matter experts enabled improvement of model performance through refined prompts. Alternating between optimizing performance for a particular report type and assessing performance across report types proved helpful in balancing specificity and generalizability. While statistical evaluation provided immediate feedback on performance during development, the business impact evaluation study provided true insights on a process level, though with more time and coordination effort. Iterations and a collaborative approach proved to be key in finding the right transition point from statistically driven optimization to the business-level impact assessment, which serves as a proof point for our PoC. This helped to use available resources efficiently with limited investment, effectively increasing the RoI. In addition, this approach helped to mitigate some limitations found in other studies, like rephrasing of the extractions, limited extraction performance for nested fields, or optimization plateaus.24–27 Still, limitations of the LLM need to be considered; therefore, we derived risk mitigation actions that included support of human oversight and verification activities.

Figure 2. Average match score in % over the first 3 weeks of the study for all investigated ICSR types.
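One simple way to compute a match score of this kind is to compare each extracted field with a reference value agreed with subject matter experts. The sketch below is an assumption for illustration and not the exact metric of the PoC: it counts a field as matched when the values agree after lowercasing and whitespace normalization, and the field names are hypothetical.

```python
def normalize(value) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences do not count."""
    return " ".join(str(value).lower().split())


def match_score(extracted: dict, reference: dict) -> float:
    """Percentage of reference fields whose extracted value matches after normalization."""
    if not reference:
        raise ValueError("reference must contain at least one field")
    hits = sum(
        1
        for name, expected in reference.items()
        if normalize(extracted.get(name, "")) == normalize(expected)
    )
    return 100.0 * hits / len(reference)


# Hypothetical reference and extraction for a single report.
reference = {
    "reporter": "Physician",
    "patient": "female, 54 years",
    "adverse_event": "headache",
    "suspected_drug": "DrugX",
}
extracted = {
    "reporter": "physician",
    "patient": "Female,  54 years",
    "adverse_event": "severe headache",  # rephrased, so it does not match
    "suspected_drug": "DrugX",
}
score = match_score(extracted, reference)
```

Averaging such scores per report type and per week would give a curve comparable in spirit to Figure 2. A strict comparison of this kind also makes rephrased extractions visible, which is one of the limitations mentioned above.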

Regulatory uncertainty remains a significant hurdle

Compliance with GxP standards is mandatory, while the regulatory landscape for AI technologies is still evolving at major health authorities such as the EMA 28 and the FDA. 29 EMA’s multi-annual AI workplan 2023–2028 30 is expected to provide further guidance. However, current uncertainties need to be managed. For instance, CIOMS workgroup XIV minutes state that “Scalability is still an issue [while] reluctance may be due to unclear regulation and guidance [. . .],” 31 while Desai mentions in her overview article that “regulations are essential to ensure validation and accuracy for application in real-world settings [. . .].” 15 These concerns about regulatory acceptance were present throughout the study; we therefore took a proactive approach and applied established risk management tools. This moved the discussion from an abstract, challenge-oriented level to a practical, solution-oriented perspective. A risk-based approach already proved its value during this study phase by influencing the design of the business impact assessment, for example by providing references to the original source data. In addition, an overview of risks and possible controls facilitated discussions to contribute to overall readiness while offering a starting point for possible interactions with health authorities.
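Providing references to the original source data can be illustrated as a small control that links each extracted value back to its location in the source text. This is a minimal sketch under stated assumptions and not the mechanism implemented in our PoC: it uses a case-insensitive literal search, and the names `SourcedValue` and `attach_source` are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class SourcedValue:
    """An extracted value together with its character span in the source, if found."""

    field_name: str
    value: str
    start: Optional[int] = None
    end: Optional[int] = None

    @property
    def verified(self) -> bool:
        # True only when the value could be located verbatim in the source text.
        return self.start is not None


def attach_source(field_name: str, value: str, source_text: str) -> SourcedValue:
    """Locate the extracted value in the source text (case-insensitive, literal)."""
    if not value:
        return SourcedValue(field_name, value)
    found = re.search(re.escape(value), source_text, flags=re.IGNORECASE)
    if found is None:
        # Not found verbatim: possibly rephrased or hallucinated, route to human review.
        return SourcedValue(field_name, value)
    return SourcedValue(field_name, value, found.start(), found.end())


source = "A 54-year-old female developed severe headache after taking DrugX 10 mg."
hit = attach_source("adverse_event", "Severe Headache", source)
miss = attach_source("adverse_event", "migraine", source)
```

A value that cannot be located, such as `miss` here, would be flagged for human verification, while a located value lets the reviewer jump directly to the relevant passage.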

System integration needs to be contextualized in the operational environment

Exploring the use of LLMs in a sandbox environment may be a starting point; still, the operational environment, including existing systems’ restrictions and user requirements, needs to be understood to derive meaningful study results. As stated by Desai, “Full automation of PV system is a double-edged sword and needs to consider two aspects—people and processes,” 15 with further considerations like hardware, software, information content, and the human–computer interface mentioned by Ball and Dal Pan. 16 While we utilized a dedicated cloud tenant as a sandbox to extract fields via LLMs, discussions on options to integrate with existing safety solutions were a constant companion. We avoided fine-tuning approaches, as this would exhibit a high complexity regarding the management of foundational and fine-tuned models. Instead, we invested more resources into a prompting strategy with lower complexity. We identified dedicated pre-processing and post-processing as a highly relevant step, which translates into desired configurability for an effective embedding in an established safety solution, or alternatively into a requirement when considering a custom extension to the present solution.
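The role of pre-processing and post-processing around a prompt-based extraction can be sketched as a small pipeline. The example below is illustrative only: the model is passed in as a plain callable so that no particular vendor API is assumed, the stand-in `fake_llm` returns a canned answer, and the field list and prompt wording are hypothetical.

```python
import json
import re
from typing import Callable, Dict

FIELDS = ["reporter", "patient", "adverse_events", "suspected_drugs"]


def preprocess(raw: str) -> str:
    """Collapse whitespace left over from OCR or PDF text extraction."""
    return re.sub(r"\s+", " ", raw).strip()


def build_prompt(text: str) -> str:
    """Assemble the extraction instructions and the report text into one prompt."""
    return (
        "Extract the following fields from the report and answer with a JSON "
        f"object using exactly these keys: {', '.join(FIELDS)}. "
        "Use null when a field is not stated.\n\nReport:\n" + text
    )


def postprocess(answer: str) -> Dict[str, object]:
    """Parse the JSON object in the model answer and keep only the expected keys."""
    found = re.search(r"\{.*\}", answer, flags=re.DOTALL)
    if found is None:
        raise ValueError("no JSON object found in model answer")
    data = json.loads(found.group(0))
    return {key: data.get(key) for key in FIELDS}


def extract(raw: str, llm: Callable[[str], str]) -> Dict[str, object]:
    """Run pre-processing, the model call, and post-processing in sequence."""
    return postprocess(llm(build_prompt(preprocess(raw))))


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; returns a fixed answer with extra chatter.
    return (
        'Here is the result: {"reporter": "physician", "patient": "female, 54", '
        '"adverse_events": ["headache"], "suspected_drugs": ["DrugX"], "note": "n/a"}'
    )


result = extract("A  54-year-old\nfemale developed headache after DrugX.", fake_llm)
```

Keeping the model behind a callable keeps the pre-processing and post-processing steps configurable independently of the model, which mirrors the configurability requirement described above for embedding into an established safety solution.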

Organizational readiness goes beyond technology

Human involvement and verification should combine sufficient trust with robust oversight when new technology is used. However, in a state of insufficient organizational readiness, rejection or abandonment is more likely. 32 To address potential inhibiting factors from the start and to support the study and its eventual adoption, we engaged in discussions with the operational team early on. This included the following:

Mindset: Stakeholder acceptance and engagement were raised by emphasizing AI as a tool for augmentation, not the replacement of human work.

Awareness and training: Users were encouraged to treat the study as a learning opportunity, as they can only oversee AI-based components if they know how these work. Expectations adjusted accordingly, slowly moving away from expecting perfection.

Process readiness: It is crucial to assess and revise the existing process to ensure it is optimized for GenAI integration; otherwise, users may continue with outdated or redundant practices rather than fully leveraging the productivity gains offered by the new technology.

Given the time saved and more room for creativity, doubts gradually reduced over time, facilitating support and effective conduct of our study.

Conclusion and outlook

Streamlining case management workflows by automating routine tasks can enhance productivity, lead to substantial cost savings, improve organizational agility, and concentrate human cognitive resources on high-impact tasks. In addition, LLMs may improve consistency and thereby reduce potential regulatory or reputational risks, provided that the operational risks of their use are managed effectively.

To evaluate this proposition, the potential benefit of LLMs was investigated in a PoC. Five PtC were derived to better understand how to optimize the development and real-world deployment of LLMs that process extensive case data across various source types and streamline case management. Initial findings indicate a potential efficiency gain of around 39%, with, on average, only 3.5% corrections required by human processors. We offer these PtC to help guide ourselves and other stakeholders in the continuation of this innovation journey: (a) measuring the RoI, (b) early involvement of subject matter experts, (c) handling unclear regulatory expectations, (d) system integration, and (e) organizational readiness. From a practical perspective, further work is required to evaluate the full potential. From an organizational readiness and governance perspective, we should leverage guidance provided by organizations such as the CIOMS workgroup XIV 13 on AI in Pharmacovigilance and the EMA, including their recent reflection paper, 28 to eventually achieve compatibility with regulatory expectations and public perception.

Implementing LLMs is not just a technical enhancement; it represents a strategic move toward improving operational efficiency and ensuring high-quality outcomes in pharmacovigilance practices.

Supplemental Material

sj-docx-1-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-docx-1-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

Supplemental Material

sj-eps-2-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-eps-2-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

Supplemental Material

sj-eps-4-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-eps-4-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

Supplemental Material

sj-eps-5-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-eps-5-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

Supplemental Material

sj-eps-6-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-eps-6-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

Supplemental Material

sj-jpg-3-taw-10.1177_20420986251386222 – Supplemental material for How LLMs can advance safety case intake—points to consider and insights from a proof of concept

Supplemental material, sj-jpg-3-taw-10.1177_20420986251386222 for How LLMs can advance safety case intake—points to consider and insights from a proof of concept by Hans-Joerg Roemming, Manfred Hauben, Wei Wannhoff, Claudia Schaffer, Irina Tihaa, Martin Heitmann and Veit Mengling in Therapeutic Advances in Drug Safety

